Abstract

Background:

Approximately 1 in 4 adults will develop hallux valgus (HV). Up to 80% of adult Internet users reference online sources for health-related information. Given the high prevalence of HV and the numerous treatment options, we believe patients are likely turning to Internet search engines with questions relevant to HV. Using Google’s people also ask (PAA) or frequently asked questions (FAQs) feature, we sought to classify these questions, categorize the sources, and assess their levels of quality and transparency.

Methods:

On October 9, 2022, we searched Google using these 4 phrases: “hallux valgus treatment,” “hallux valgus surgery,” “bunion treatment,” and “bunion surgery.” The FAQs were classified in accordance with the Rothwell Classification schema and each source was categorized. Lastly, transparency and quality of the sources’ information were evaluated with the Journal of the American Medical Association’s (JAMA) Benchmark tool and Brief DISCERN, respectively.

Results:

Once duplicates and FAQs unrelated to HV were removed, our search returned 299 unique FAQs. The most common question in our sample was related to the evaluation of treatment options (79/299, 26.4%). The most common source type was medical practices (158/299, 52.8%). Nearly two-thirds of the answer sources (184/299; 61.5%) were lacking in transparency. One-way analysis of variance revealed a significant difference in mean Brief DISCERN scores among the 5 source types, F(4) = 54.49 (P < .001), with medical practices averaging the worst score (12.1/30).

Conclusion:

Patients seeking online information concerning treatment options for HV search for questions pertaining to the evaluation of treatment options. The source type encountered most by patients is medical practices; these were found to have both poor transparency and poor quality. Publishing basic information such as the date of publication, authors or reviewers, and references would greatly improve the transparency and quality of online information regarding HV treatment.

Level of Evidence:

Level V, mechanism-based reasoning.

Introduction

Hallux valgus (HV), or a bunion, is one of the most common forefoot deformities, specifically a deformity involving the first metatarsal. 24 Approximately 1 in 4 adults will develop HV, with a higher prevalence in adult females. 24 Nonsurgical treatment options include shoe modifications, orthoses, padding, and analgesics. 27 Surgical management is indicated only after conservative treatment has failed to address pain. 26 Numerous noninvasive and surgical procedures have been reported for the correction of HV. However, no single intervention has been shown to be superior.3,10-12,18,23,27,31

Patients searching the Internet, and more specifically Google, for health-related and orthopaedic information is now a well-documented occurrence.7,14,19 Considering the high prevalence of HV along with the wide variety of nonsurgical and surgical treatment options for HV, we suspect patients have been searching the Internet for information regarding treatment options for HV. Google’s “people also ask” (PAA) feature provides questions that are directly related to one’s original search, allowing insight into what others are searching for based on similar queries. 34 This provides a unique opportunity to study commonly searched questions related to any specific condition or treatment. Previous orthopaedic investigations have used Google’s PAA box to characterize frequently asked questions (FAQs) regarding total knee and hip arthroplasty and knee osteoarthritis.35,40 Yet, no such investigation has been conducted for HV. The purpose of this study is to (1) characterize the content of FAQs regarding HV, (2) categorize the sources answering the FAQs, and (3) assess both the quality and transparency of the suggested sources. Physicians should be made aware of the common questions about HV and the nature of information patients are exposed to online to ensure patients understand the pros and cons of orthopaedic interventions for HV.

Materials and Methods

Reproducibility

This study was conducted in accordance with a previously written protocol publicly available via the Open Science Framework. 36 The methodology in the current study has been adapted and improved on from our previous works that examined FAQs regarding treatments for carpal tunnel, the COVID-19 vaccine, and osteopathic medicine.29,30,37

Systematic Search

On October 9, 2022, using a clean web browser to minimize personalized advertisements, we searched Google 15 for 4 terms: “hallux valgus treatment,” “hallux valgus surgery,” “bunion treatment,” and “bunion surgery.” We selected these terms to capture the most likely inquiries related to treatments or surgeries for HV and bunions. We used a free Chrome extension SEO Minion for each inquiry to download the FAQs and associated answer links. 33 The extension software allows for on-page search engine analysis that retrieved both the FAQs and attached links from the Google search return page. This process was repeated until reaching a minimum of 200 FAQs for each search. We used a minimum of 200 FAQs, as previous studies using similar methodology have recommended using 50 to 150 sources.30,35 One author (S.S.) screened each FAQ for relevance to the search on October 9, 2022. Duplicate FAQs were removed from the individual searches. Then, we compiled the results of the 4 searches and screened the sample for relevance pertaining to the 4 search terms. We excluded any FAQ not pertaining to the search terms. Additionally, all videos, paywall-restricted sites, and uploaded document returns were excluded.
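The merging and duplicate-removal step described above can be sketched as follows. This is only an illustration: the article does not state how duplicates were identified, so the normalized exact-match rule, the function names `normalize` and `merge_unique`, and the example questions and URLs are all assumptions, not the authors' procedure.

```python
import re

def normalize(question):
    """Lowercase, drop punctuation, and collapse whitespace (assumed matching rule)."""
    text = question.lower()
    text = re.sub(r"[^a-z0-9\s]", "", text)
    return re.sub(r"\s+", " ", text).strip()

def merge_unique(searches):
    """Combine (question, url) pairs from every search, keeping the first copy of each question."""
    seen = set()
    unique = []
    for faqs in searches.values():
        for question, url in faqs:
            key = normalize(question)
            if key not in seen:
                seen.add(key)
                unique.append((question, url))
    return unique

# Hypothetical data for illustration only; not taken from the study.
searches = {
    "bunion surgery": [
        ("Is bunion surgery painful?", "https://example.org/a"),
    ],
    "bunion treatment": [
        ("Is bunion surgery painful", "https://example.org/b"),
        ("Can bunions be corrected without surgery?", "https://example.org/c"),
    ],
}
unique_faqs = merge_unique(searches)
```

Relevance screening (excluding FAQs unrelated to HV, videos, paywalled pages, and uploaded documents) was a manual judgment in the study and is deliberately left out of this sketch.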

Data Extraction

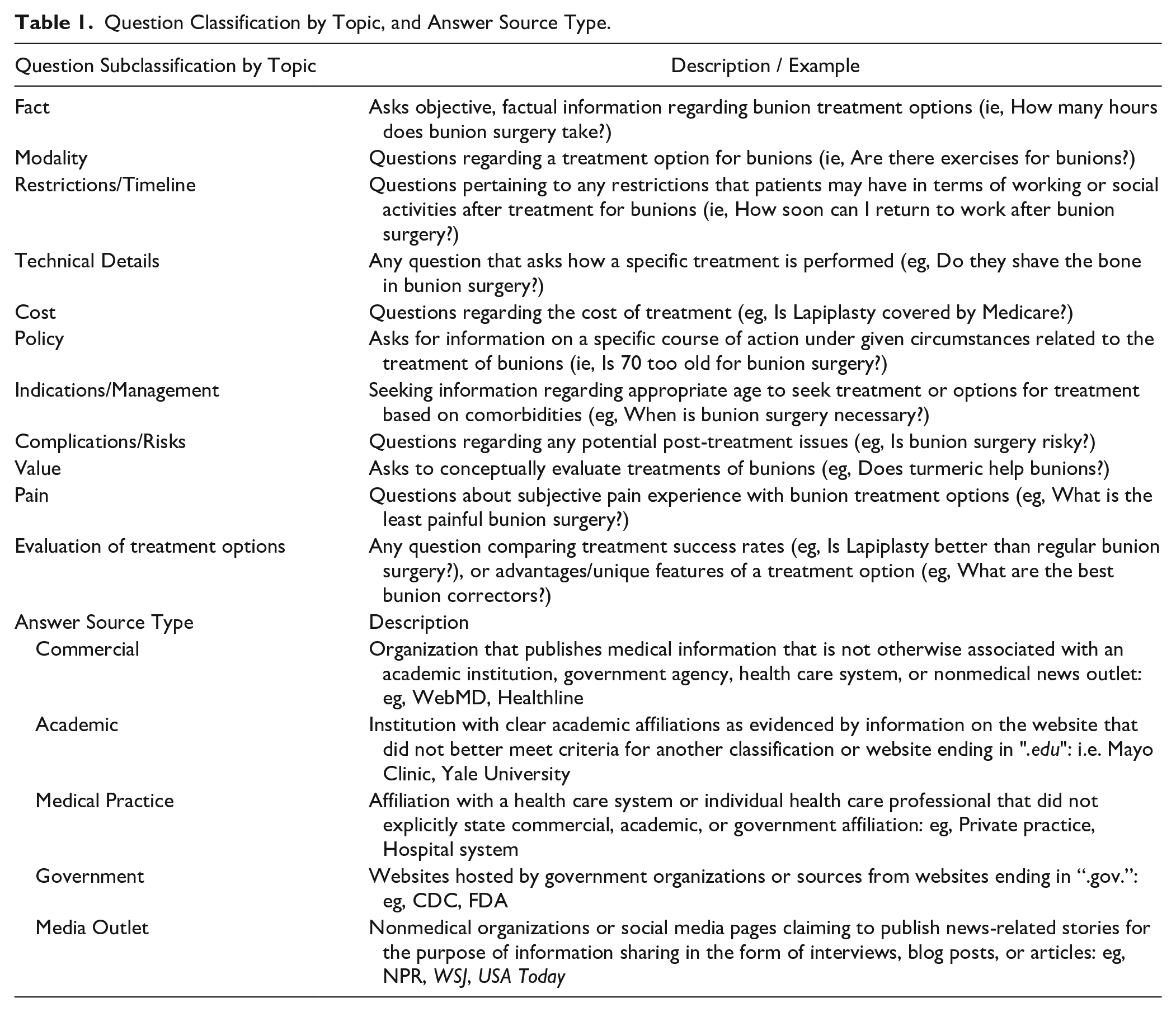

In masked and duplicate fashion using a Google Form, C.P. and S.S. recorded each FAQ and the linked source. Source types were categorized as Academic, Commercial, Government, Media Outlet, or Medical Practice according to previously established classification schemes.35,39 Applying methodology adapted from published literature,20,30,35 we classified FAQs using Rothwell’s Classification of Questions, 28 designating them as Fact, Policy, or Value questions. Fact questions were further subclassified into 4 groups: Cost, Modality, Restrictions/Timeline, and Technical Details. Policy questions were subclassified into 2 groups: Indications/Management and Complications/Risks. Value questions were subclassified into 2 groups: Pain and Evaluation of treatment options. Refer to Table 1 for Question Classification and Answer Source Type definitions. Both the JAMA Benchmark criteria and the Brief DISCERN tool were applied in a masked, duplicate fashion for each source, and author G.H. resolved any discrepancies.

Question Classification by Topic, and Answer Source Type.

Information Transparency

Each source was assessed using the Journal of the American Medical Association’s (JAMA’s) Benchmark criteria. 38 The JAMA Benchmark criteria have been used to effectively screen online information for fundamental aspects of information transparency.8,9,21,30,35,40 The items used to determine transparency were as follows: authorship, attribution, currency, and disclosure. Sources meeting 3 or more criteria are considered to have high transparency, whereas sources meeting fewer than 3 criteria are considered to have poor transparency. Refer to Table 2 for JAMA Benchmark criteria definitions.
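The threshold rule above can be written as a short sketch. The four criterion names and the cutoff of 3 come from the text; the dictionary layout and the function names `jama_score` and `transparency` are assumptions made for illustration.

```python
# The four JAMA Benchmark items named in the text.
JAMA_CRITERIA = ("authorship", "attribution", "currency", "disclosure")

def jama_score(source):
    """Count how many of the four criteria a source meets (0 to 4)."""
    return sum(bool(source.get(criterion, False)) for criterion in JAMA_CRITERIA)

def transparency(source):
    """Return 'high' for 3 or more criteria met, otherwise 'poor'."""
    return "high" if jama_score(source) >= 3 else "poor"

# Hypothetical source that is dated and discloses ownership,
# but lists neither authors nor references.
example = {
    "authorship": False,
    "attribution": False,
    "currency": True,
    "disclosure": True,
}
example_label = transparency(example)
```

Under this rule the hypothetical source meets 2 criteria and is labeled as having poor transparency.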

JAMA Benchmark Criteria.

JAMA, Journal of the American Medical Association.

Information Quality

Information quality was assessed using the Brief DISCERN information quality assessment tool. DISCERN has been used to assess the quality of Internet sources in a variety of medical fields.1,13,17,30 Khazaal et al 22 developed a 6-item version called Brief DISCERN that has comparable validity while preserving the advantages of the original tool and offering a more user-friendly format. Therefore, we used the Brief DISCERN quality assessment tool as used in other studies.2,30,42 Each of the 6 questions can be scored as 1 = no, 2 to 4 = partially, or 5 = yes, for a maximum score of 30. For this study, we scored all partial answers as 3 to increase accuracy and precision for the partial category. We considered an aggregate score of 16 or greater to indicate good quality, in line with previous recommendations. 22 For specific details of the 6 questions, see Table 3.
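As a minimal sketch of the scoring rule described above: the point values (no = 1, partially = 3, yes = 5) and the threshold of 16 are taken from the text, while the answer labels, constant names, and function names are assumptions chosen for illustration.

```python
# Point values as applied in this study: every partial answer is scored 3.
POINTS = {"no": 1, "partially": 3, "yes": 5}
GOOD_QUALITY_THRESHOLD = 16  # aggregate score of 16 or greater
NUM_QUESTIONS = 6

def brief_discern_total(answers):
    """Sum the points for the 6 Brief DISCERN answers (range 6 to 30)."""
    if len(answers) != NUM_QUESTIONS:
        raise ValueError("Brief DISCERN has exactly 6 questions")
    return sum(POINTS[answer] for answer in answers)

def is_good_quality(answers):
    """True when the aggregate score reaches the good-quality threshold."""
    return brief_discern_total(answers) >= GOOD_QUALITY_THRESHOLD

# Hypothetical ratings for one source: 1 + 1 + 3 + 3 + 5 + 3 = 16.
example_answers = ["no", "no", "partially", "partially", "yes", "partially"]
example_total = brief_discern_total(example_answers)
```

The hypothetical source above lands exactly on the threshold, so it would count as good quality; a single "partially" downgraded to "no" would drop it to 14 and below the cutoff.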

Brief DISCERN Questions and Scoring.

Analyses

Frequencies and percentages were reported for each type of FAQ. The chi-square test of independence was used to determine associations between JAMA Benchmark criteria and source type. One-way analysis of variance was used to determine whether mean Brief DISCERN scores differed by source type. Tukey’s honestly significant difference test was performed post hoc to identify which source types differed significantly in Brief DISCERN scores. Statistical significance was set at P < .001. Statistical analyses were performed in R (version 4.2.1).
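To make the two main test statistics concrete, the sketch below computes a one-way ANOVA F statistic and a chi-square statistic for a contingency table in plain Python. It is an illustration only: the authors ran their analyses in R, the function names are assumptions, the input numbers are hypothetical, and P values and the Tukey post hoc comparisons are omitted.

```python
def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a list of numeric groups."""
    k = len(groups)
    n = sum(len(g) for g in groups)
    grand_mean = sum(sum(g) for g in groups) / n
    means = [sum(g) / len(g) for g in groups]
    # Variation of group means around the grand mean, weighted by group size.
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    # Variation of individual scores around their own group mean.
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, means) for x in g)
    df_between, df_within = k - 1, n - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within

def chi_square_statistic(table):
    """Return (chi-square, df) for a table given as a list of rows of counts."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    total = sum(row_totals)
    stat = 0.0
    for row, row_total in zip(table, row_totals):
        for observed, col_total in zip(row, col_totals):
            expected = row_total * col_total / total
            stat += (observed - expected) ** 2 / expected
    df = (len(table) - 1) * (len(col_totals) - 1)
    return stat, df

# Hypothetical Brief DISCERN scores for three source types.
scores = [[20, 22, 24], [14, 16, 18], [10, 12, 14]]
f_stat, df_b, df_w = one_way_anova_f(scores)  # F is approximately 19.0

# Hypothetical counts: rows are source types, columns are met / did not meet.
counts = [[10, 20], [20, 10]]
chi2, chi2_df = chi_square_statistic(counts)
```

With 5 source types and a met/not met outcome, the contingency table is 5 by 2, giving (5 - 1)(2 - 1) = 4 degrees of freedom for the chi-square test.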

Results

Search Return

There were a total of 1281 FAQs after combining the 4 search terms: 361 from searching “hallux valgus treatment,” 324 from “hallux valgus surgery,” 348 from “bunion treatment,” and 252 from “bunion surgery.” After removing duplicates, there were 554 unique FAQs. Of these, 255 were removed because they did not pertain to HV treatments or surgeries, linked to a video resource, were restricted behind a paywall, or were uploaded documents, resulting in a final count of 299 FAQs.

Question Classification

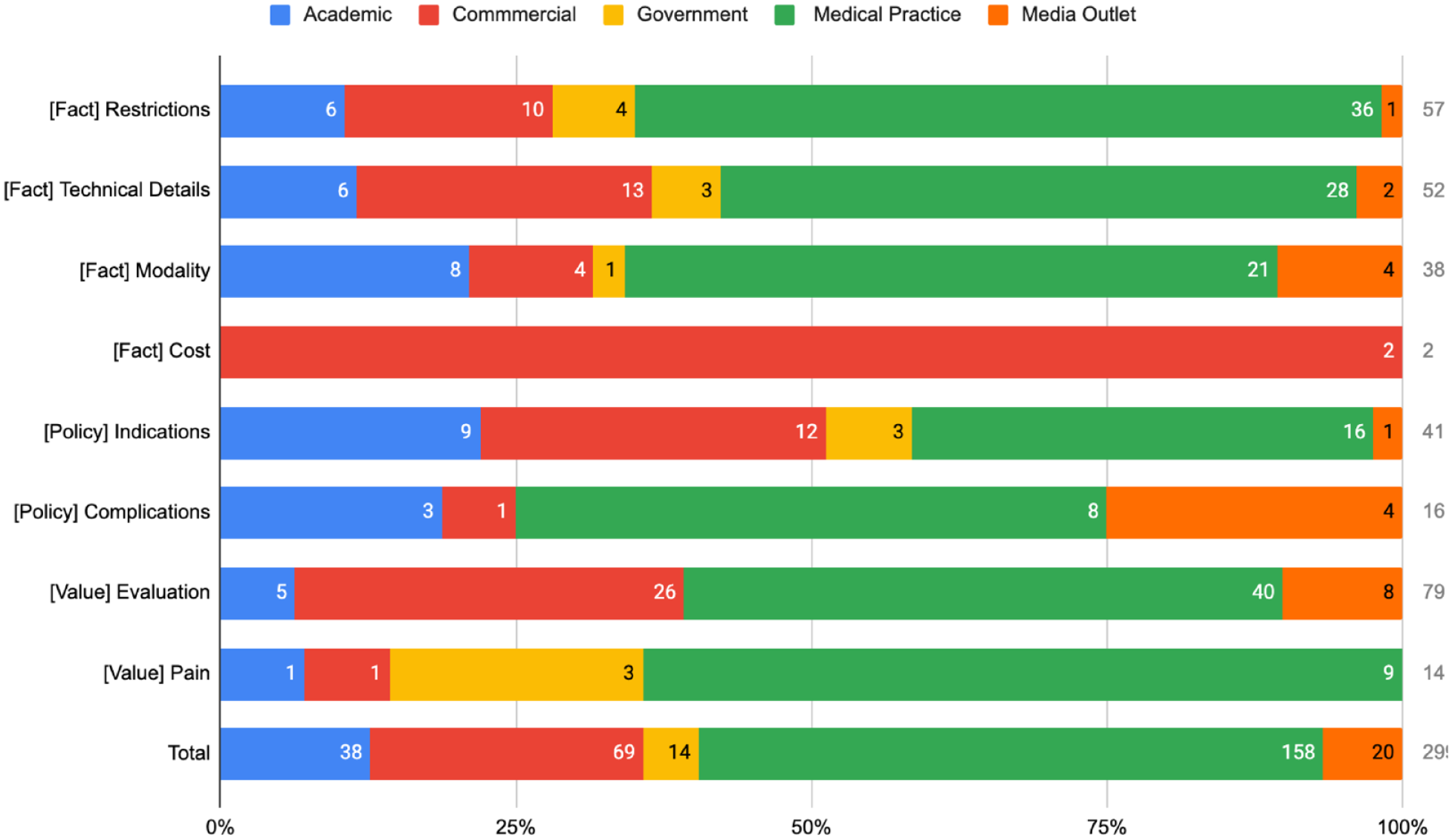

Applying the Rothwell classification to the included HV FAQs, 126 (49.8%) were fact-based questions, 93 (30.8%) were value-based questions, and 57 (19.4%) were policy-based questions. Of the 126 fact-based FAQs, the most common topic was Restrictions (57/126, 45.2%) followed by Technical Details (52/126, 41.3%), Modality (14/126, 11.1%), and Cost (2/126, 1.6%). Of the 93 value-based FAQs, the most common topic was Evaluation (79/93, 84.9%) followed by Pain (14/93, 15.1%). Of the 57 policy-based FAQs, most concerned Indications (41/57, 71.9%) followed by Complications (16/57, 28.1%).

Answer Sources

Medical practices (158/299, 52.8%) were the most frequently identified source within our sample, followed by commercial sources (69/299, 23.1%), academic (38/299, 12.3%), government (14/299, 4.7%), and media outlets (20/299, 6.7%). Medical practices were also responsible for answering the most FAQs in each individual topic, such as evaluation (40/79, 51%) and restrictions (36/57, 63.2%). The breakdown and associated answer sources are listed in Figure 1.

Question classification by source category.

Information Transparency

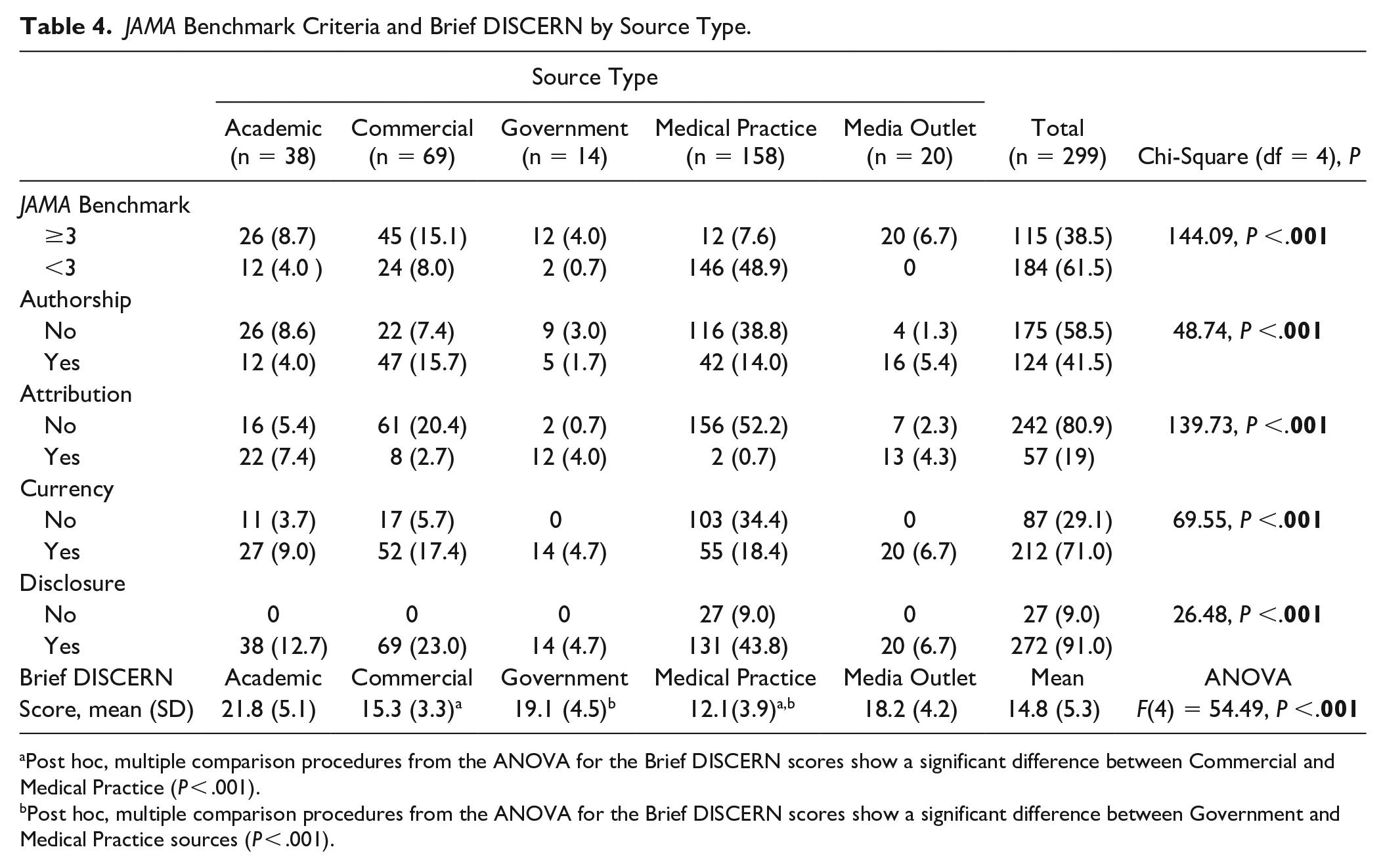

One hundred fifteen (of 299, 38.5%) sources met 3 or more JAMA Benchmark criteria. A large portion of these sources were commercial sources (45/115, 39.1%) followed by academic sources (26/115, 22.6%), media outlets (20/115, 17.4%), government (12/115, 12.4%), and medical practices (11/115, 9.6%). Both academic sources (26/38, 68.4%) and medical practices (116/158, 73.4%) failed to assign authorship in more than two-thirds of included sources. Only 8 of the 69 (11.6%) commercial sources and 2 of the 158 (1.2%) medical practice sources met the attribution criteria (ie, listing references). The difference in the likelihood of meeting 3 or more JAMA Benchmark criteria by source type was statistically significant (χ²(4) = 144.09; P ≤ .001). There were statistically significant differences in the likelihood of meeting each JAMA Benchmark criterion among source types, and the results are presented in Table 4.

JAMA Benchmark Criteria and Brief DISCERN by Source Type.

Post hoc, multiple comparison procedures from the ANOVA for the Brief DISCERN scores show a significant difference between Commercial and Medical Practice (P < .001).

Post hoc, multiple comparison procedures from the ANOVA for the Brief DISCERN scores show a significant difference between Government and Medical Practice sources (P < .001).

Information Quality

The overall average Brief DISCERN score for HV FAQs was 14.79 with an SD of 5.27. Academic sources had the highest average score at 21.8 of 30, followed by government sources with an average of 19.1, media outlet sources at 18.2, commercial at 15.3, and medical practices at 12.1. The ANOVA revealed a statistically significant difference in Brief DISCERN scores among the 5 source types, F(4) = 54.49 (P < .001). Post hoc comparisons from the ANOVA showed there was a statistically significant difference in the mean Brief DISCERN scores of medical practices compared to both commercial (P < .001) and government sources (P < .001). Academic, government, and media outlet sources averaged above the threshold of 16, indicating good-quality content, whereas commercial and medical practice sources did not; all results are presented in Table 4.

Discussion

Bunions are one of the most encountered foot conditions today. Therefore, it can be inferred that patients tend to search for online information before making an appointment with a doctor. Google’s advanced data mining and machine learning software can be leveraged to quantify the level of public interest in conditions like HV, which can then be used to inform clinicians and policy makers. Yet, for these data to be useful, it is necessary to evaluate the transparency and quality of the resources to which Google directs individuals. Our study’s aims were to analyze the content of the most commonly asked questions about HV or bunions and assess the transparency and quality of the sources of information that patients are potentially accessing.

FAQs

Our results indicate the most commonly searched questions on Google about HV are value-based questions evaluating possible treatment options, accounting for more than a fourth of all the FAQs in our sample. Questions related to restrictions from HV treatment were the second most encountered FAQs, whereas questions about the associated costs of HV treatment were the least common. These findings suggest patients have more interest in learning about their treatment options and restrictions after treatment than the cost of treatment. Patients seem uncertain about which treatment option for HV is suitable for them. This could be attributed to the lack of consensus among foot and ankle experts on the best intervention for HV, as evident from the current literature.3,11,12,18,23,27,31 Moreover, it is possible our findings reflect the high rate of dissatisfaction that persists despite attempts at both nonsurgical and surgical treatments for HV.3,32,41 Finally, the high rate of searches evaluating treatment options may be due in part to differing expectations. Baumhauer et al 4 previously found that the outcome measures physicians consider key to determining treatment success differ from those valued by patients with foot and ankle complaints. One possible strategy to address these challenges is to introduce validated patient-reported outcome measures (PROMs) 32 or to include a multimedia component to help establish patient expectations and supplement their understanding of bunion surgery. 5 Yet, ultimately the most effective strategy to address these issues will depend on individual practice settings.

Sources

Despite the fact that medical practices (ie, individual practitioners or health care systems) comprised more than half of all websites in our sample, they exhibited the lowest level of transparency and quality among all source types. These findings are reflected in prior research. For example, McCormick et al 25 when evaluating online sources for information on femoroacetabular impingement found both single-physician websites and medical practices were associated with the lowest JAMA Benchmark scores. A 2021 study reported comparable findings for single practitioner and medical practice websites. 35 Finally, our previous study examining online information for carpal tunnel syndrome demonstrated this relationship between medical practices and incomplete transparency with poor quality. 37 The lack of transparency and poor quality observed in medical practice websites is concerning, given that an increasing amount of trust is being put into online information. For instance, in France, approximately 80% of young adults consider online health information to be trustworthy. 6 As patients increasingly turn to online resources for health information and place greater trust in them, health care providers need to ensure that the content they publish online contains basic criteria—authorship, date of publication, links or references—to improve transparency as well as the quality of the information.

Transparency and Quality

Information transparency within our sample was poor, as nearly two-thirds of all sources failed to meet 3 or more JAMA Benchmark criteria. Analyzing our sample without medical practices drastically improves the overall transparency, with almost 3 of every 4 remaining sources meeting the criteria for transparency. Media outlets, surprisingly, were found to have perfect transparency, although they made up only a small portion of our sample. It is possible that nonmedical websites are motivated to provide sources of information to validate their claims, whereas physicians and hospitals may not feel compelled to do so. Additionally, the criteria evaluated for the JAMA Benchmark may have been located elsewhere on the website rather than on the page serving as the source for the FAQ, a potential limitation of the JAMA Benchmark. Although there are certain limitations to its use, the JAMA Benchmark criteria remain one of the most established and widely used tools for evaluating online health information.8,16,35

Academic, government, and media outlet sources were all considered to be of good quality, with academic sources averaging the highest Brief DISCERN score. The only sources that did not meet the threshold (16) for quality on the Brief DISCERN assessment were commercial and medical practice sources. When we examined which Brief DISCERN questions contributed most to the poor scores, we found that the first 2 questions (see Table 3) were, on average, the worst-performing questions for medical practice sources. Notably, these questions are similar to items the JAMA Benchmark also assesses: (1) Is it clear what sources of information were used to compile the publication? (2) Is it clear when the information used or reported in the publication was produced? It is apparent that medical practices, simply by providing the rudimentary information gauged by both the JAMA Benchmark and Brief DISCERN, would increase their websites' transparency and quality at the same time.
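The “almost 3 of every 4” transparency figure can be checked against the counts reported in the Results. The short calculation below uses only numbers stated in the text (299 sources, 115 meeting 3 or more criteria, 158 medical practice sources, 11 of which met 3 or more criteria); the variable names are illustrative.

```python
# Counts reported in the Results section.
total_sources = 299
total_transparent = 115       # met 3 or more JAMA Benchmark criteria
practice_sources = 158
practice_transparent = 11

# Overall share of sources with high transparency.
overall_share = total_transparent / total_sources

# Share after setting medical practice sources aside.
other_sources = total_sources - practice_sources            # 141
other_transparent = total_transparent - practice_transparent  # 104
other_share = other_transparent / other_sources
```

This gives 104 of 141 sources, or roughly 73.8%, compared with 38.5% for the full sample.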

Limitations of this methodology include the fluidity of Google’s search outputs, which could affect the reproducibility of our study. With continued searches regarding HV treatments, new FAQs may be generated, and Google may change the sources to which it directs the public for answers, thus limiting the generalizability of our study to the time when our search was performed. Both JAMA Benchmark and Brief DISCERN are considered proxies for transparency and quality of online information, respectively. Additionally, the transparency and quality assessments we used do not assess information accuracy, as this would require source-by-source comparison. Finally, the categorization of FAQs and answer sources is inherently subjective, and there is potential for overlap between categories. Nevertheless, both were adapted from previously published work.29,30,37

Conclusion

Value-based questions evaluating treatment options were the most searched questions on Google for HV or bunion treatments. Despite being the most frequent source of information, medical practices had the worst transparency and quality of all sources. We recommend medical practices optimize their online information as it is clear their websites are reaching patients searching for information regarding HV treatments.

Supplemental Material

sj-pdf-1-fao-10.1177_24730114231198837 – Supplemental material for Insights Into Patients Questions Over Bunion Treatments: A Google Study

Supplemental material, sj-pdf-1-fao-10.1177_24730114231198837 for Insights Into Patients Questions Over Bunion Treatments: A Google Study by Cole R. Phelps, Samuel Shepard, Griffin Hughes, Jon Gurule, Jared Scott, Jesse Raszewski, Safet Hatic, Bryan Hawkins and Matt Vassar in Foot & Ankle Orthopaedics

Footnotes

Ethical Approval

This protocol (IRB 2021028) was submitted to the institutional review board of Oklahoma State University Center for Health Sciences and was determined to be non–human subjects research.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article. ICMJE forms for all authors are available online.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Matt Vassar reports grant funding from the National Institutes of Health, the US Office of Research Integrity, and Oklahoma Center for the Advancement of Science and Technology, all outside the present work.

References
