Abstract

Fatigue monitoring during sports activities is crucial for optimizing athletic performance and preventing injuries. However, existing methods often lack real-time capability and accuracy in dynamic sports environments. This study presents a hybrid deep learning framework that integrates a Temporal Convolutional Network (TCN) with a Long Short-Term Memory (LSTM) network for real-time fatigue state recognition during cycling. The framework fuses synchronized electrocardiography (ECG) and electromyography (EMG) signals acquired from an intelligent garment system. EMG signals were recorded from the erector spinae and anterior deltoid muscles, and ECG signals were captured via electrodes integrated into the garment. In a study involving 15 subjects, we compared the hybrid architecture with four baseline deep learning models: TCN, Gated Recurrent Unit (GRU), Transformer, and LSTM. The subjects performed a structured cycling protocol alternating between low-intensity (5 km/h) and high-intensity (30 km/h) phases, with fatigue levels assessed using the Borg Rating of Perceived Exertion scale. Experimental results show that the proposed hybrid model achieves an average prediction accuracy of 89.20%, outperforming the four single-architecture baselines. The model’s efficacy was validated through multiple metrics, including precision, recall, F1 score, and area under the receiver operating characteristic curve (AUC). This study provides a viable approach to real-time fatigue monitoring in cycling, indicating potential value for future developments in sports science applications.

Introduction

Fatigue in sports is a complex physiological and psychological phenomenon that significantly impacts athletic performance and safety.1,2 Modern sports science has evolved from viewing fatigue as mere physical exhaustion to understanding it as a multidimensional state characterized by the interaction between performance fatigability and perceived fatigability. Performance fatigability manifests through objective indicators of declining physical function, including decreased muscle strength, reduced motor precision, and prolonged reaction times.1,3 At the physiological level, it is associated with ATP depletion, glycogen exhaustion, and altered motor unit recruitment patterns,4,5 while perceived fatigability reflects psychological factors integrated through central nervous system regulation.6,7

The critical challenges in fatigue monitoring during cycling exercise stem from both the dynamic nature of the activity and the complexity of fatigue manifestation. Traditional assessment methods exhibit inherent limitations: subjective tools such as the Borg rating of perceived exertion (RPE) scale are susceptible to psychological variations, while conventional objective measures often require controlled laboratory environments, making real-time monitoring impractical. These limitations have motivated the development of more sophisticated evaluation approaches incorporating both objective measures and subjective assessments. 1

Recent advances in machine learning have demonstrated promising results in fatigue detection. Traditional approaches such as the Support Vector Machine (SVM) with skin conductance monitoring and the Gradient Boosting Decision Tree (GBDT) with EEG analysis have achieved accuracies of 92.95% and 95% respectively.8,9 Deep learning methods have further enhanced fatigue prediction capabilities through multimodal signal analysis, with deep autoencoder models combining EEG and EOG signals achieving 85% accuracy.10,11

However, several technical challenges persist in achieving accurate and reliable fatigue state recognition. First, the inherent heterogeneity of physiological signals, including electrocardiogram (ECG) and electromyogram (EMG), necessitates sophisticated feature extraction and fusion strategies to capture their complementary information. 12 Second, the temporal dependencies in these physiological signals span multiple scales, from rapid local variations to long-term modulation patterns, making it particularly challenging for conventional deep learning architectures to model them effectively. 13 Third, significant individual variations in fatigue manifestation patterns require robust modeling approaches that can maintain consistent performance across diverse subject populations.

Existing deep learning approaches have made significant strides in addressing temporal sequence modeling challenges. Temporal Convolutional Networks (TCN) utilize dilated convolutions to establish exponentially growing receptive fields, enabling effective capture of multi-scale temporal patterns. 14 The Gated Recurrent Unit (GRU) architecture introduces a dual-gating mechanism that simplifies the traditional recurrent structure while maintaining comparable modeling capabilities. 15 Transformer models, through their self-attention mechanisms, have demonstrated remarkable capacity in capturing global dependencies across temporal sequences. 16 Long Short-Term Memory (LSTM) networks, with their sophisticated three-gate control system, excel at modeling long-range temporal dependencies while mitigating the gradient vanishing problem. 17 However, these single-architecture solutions, despite their individual strengths, often struggle to simultaneously address both local feature extraction and long-term dependency modeling, leading to suboptimal performance in fatigue state recognition tasks.

To address these challenges in modeling complex physiological time series, hybrid deep learning architectures, particularly those combining convolutional neural networks (CNNs) or Temporal Convolutional Networks (TCNs) with recurrent neural networks (RNNs) like Long Short-Term Memory (LSTM), have seen a surge in research interest and successful applications across diverse domains. These hybrid models are predicated on the synergistic advantage of leveraging CNNs/TCNs for robust local feature extraction and LSTMs for capturing long-range temporal dependencies.18-20 For instance, such architectures have demonstrated considerable success in financial forecasting for stock selection and price prediction,21-23 in industrial settings for machine fault detection and short-term load forecasting,24-27 and in various biomedical applications including EEG-based epilepsy recognition, 28 muscle force estimation, 18 and Parkinson’s disease prediction from speech signals. 29 The review by Ullah on short-term load forecasting provides a comprehensive overview of the growing adoption and efficacy of CNN-LSTM hybrid approaches, highlighting their ability to outperform traditional single-architecture models. 26

Despite this increasing adoption, the development and application of these hybrid structures are not without challenges. The optimal way to combine convolutional and recurrent layers can vary significantly depending on the specific characteristics of the time-series data. Much of the existing literature explores sequential architectures, where features extracted by CNN/TCN layers are subsequently fed into LSTM layers.20,30,31 Other approaches include ConvLSTM cells, where convolutional operations are integrated directly into the LSTM structure, which have been compared against sequential CNN–LSTM models in contexts like water stress forecasting. 32 Factors such as computational complexity, the need for large datasets for effective training, and the intricacies of hyperparameter tuning for these more complex models 29 may have historically tempered their widespread adoption compared to simpler, more established individual architectures. Furthermore, while the general concept of hybrid models is gaining prominence, their specific application and optimization for real-time fatigue monitoring using multimodal physiological signals like ECG and EMG remain less explored.

This paper proposes a hybrid deep learning framework that combines the strengths of TCN and LSTM architectures for real-time fatigue monitoring in cycling. While the TCN and LSTM components are established, the contribution of this work lies in the application and evaluation of a tailored parallel integration strategy for multimodal physiological signal analysis in fatigue monitoring. Taking the established architectures as baseline models, systematic comparative analyses are conducted to validate the effectiveness of the proposed hybrid approach. The primary contributions of this research are as follows.

A TCN–LSTM hybrid architecture featuring a parallel dual-branch structure is presented and evaluated. It integrates the local feature extraction capabilities of TCN with the long-term dependency modeling of LSTM to capture fatigue-related physiological patterns from concurrently processed ECG and EMG signals. The architecture employs a differential pooling strategy: max pooling in the LSTM branch preserves temporal features relevant to acute fatigue detection, while average pooling in the convolutional branch maintains statistical distributions relevant to gradual fatigue progression, thus enabling signal representation across the different fatigue states.

Experimental validation conducted with cycling subjects demonstrates that the TCN–LSTM hybrid framework achieves higher classification accuracy compared to baseline architectures including LSTM, TCN, GRU, and Transformer, with consistent performance across evaluation metrics and subjects, indicating effective generalization capability across individual physiological variations.

The remainder of this paper is organized as follows: the section “Materials and Systems” introduces the intelligent garment system for data acquisition; the section “Experiment and Methods” describes the experimental protocol, fatigue assessment methodology, and feature extraction techniques; the section “Deep Learning Models for Fatigue State Recognition” presents the baseline deep learning architectures and elaborates on the proposed TCN–LSTM hybrid framework; the section “Results” provides comprehensive performance evaluation and architectural analysis; and the section “Discussion” concludes the paper and outlines future research directions in real-time fatigue monitoring for sports science applications.

Materials and Systems

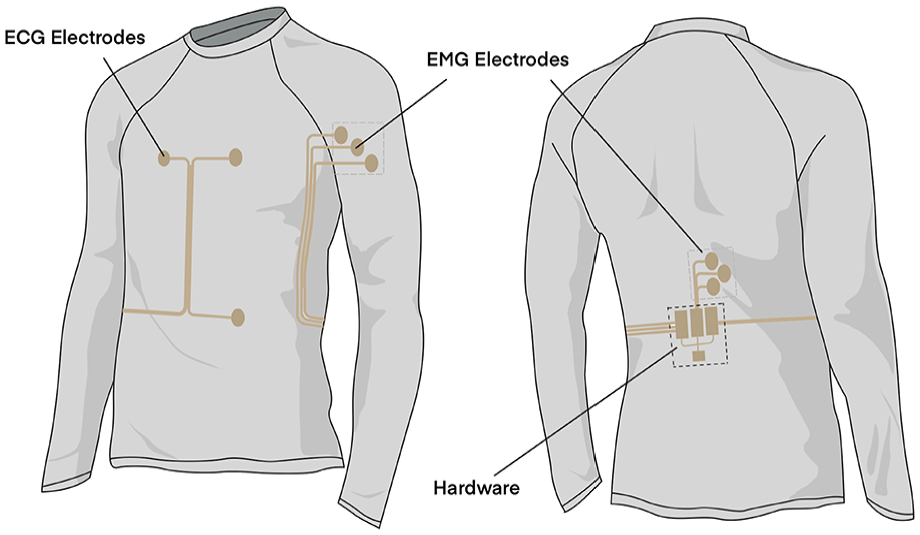

The intelligent garment system developed in our previous study provides real-time physiological monitoring during prolonged physical activity. 33 This system enables simultaneous acquisition of electrocardiography (ECG) and electromyography (EMG) signals through strategically positioned electrodes integrated into a compression garment, as illustrated in Figure 1.

Figure 1. Design of the intelligent garment system. 33

For ECG monitoring, the system employs a single-lead configuration with two electrodes positioned on the chest area and one reference electrode on the left abdomen. The EMG monitoring capability is achieved through two sets of three-electrode arrays: one set positioned on the anterior deltoid muscle and the other on the erector spinae muscle. This electrode arrangement, following the guidelines established by the Consensus for Experimental Design in Electromyography (CEDE) project, 34 enables comprehensive monitoring of muscle activation patterns during dynamic movements.

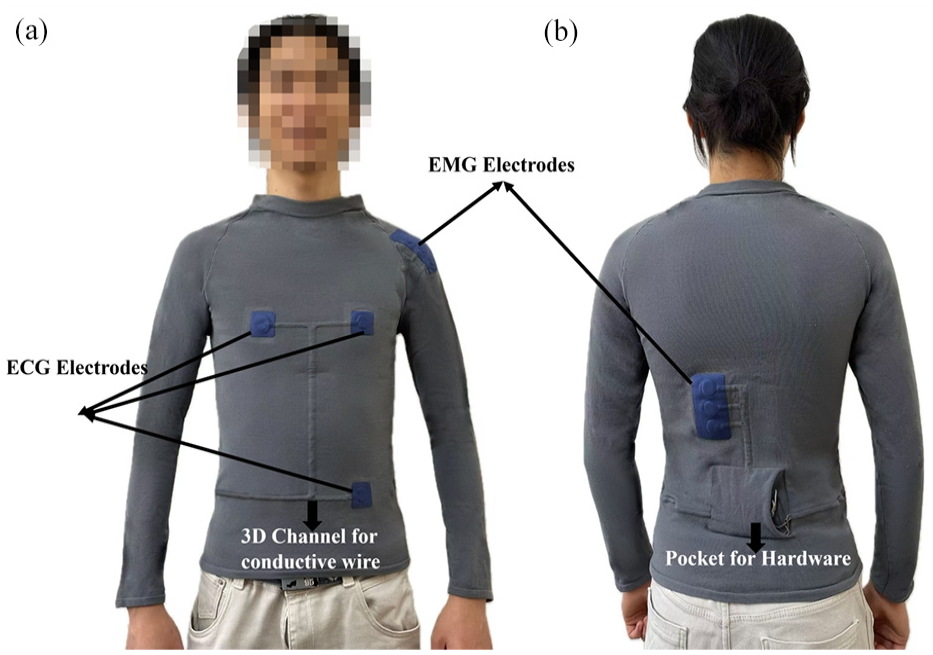

To ensure reliable signal acquisition during intense physical activities, the system incorporates adjustable Velcro straps around each electrode site, maintaining consistent electrode–skin contact pressure. As shown in Figure 2, the hardware components, including an ADS1292R chip for ECG acquisition and two SEN0240 ADCs for EMG detection, are strategically positioned on the lower back to minimize motion artifacts. The Arduino Nano 33 IoT microcontroller processes and transmits the acquired signals wirelessly via WiFi, while a 9 V battery powers the entire system. This configuration allows for high-fidelity signal capture with ECG sampling at 125 Hz and EMG sampling at 1000 Hz.

Figure 2. Structure and component distribution of the physiological signal acquisition intelligent garment system with integrated electrode configuration: (a) front view and (b) back view.

The system’s design particularly excels in long-duration sports monitoring scenarios, where traditional gel-based electrodes might deteriorate due to sweat and movement. The integration of the electrodes into a compression garment ensures comfort and stability during extended wear, while the wireless data transmission capability enables unrestricted movement during athletic activities. This combination of features makes the system especially suitable for real-time monitoring of cardiac activity and muscle fatigue during endurance sports such as cycling.

Experiment and Methods

Experimental Protocol

The experimental study involved 15 healthy male university students. Their baseline physiological characteristics were: age 24.3 ± 1.5 years, height 175.7 ± 2.1 cm, and weight 72.3 ± 2.5 kg. The participants’ body mass index (BMI) ranged from 23.1 to 23.7 kg/m², within the normal range. The narrow age range reflects recruitment from a university student population. All participants provided written informed consent, and the study protocol was approved by the Donghua University Ethics Committee (DHUEC; approval no.: DHUEC-FZ-2024-09).

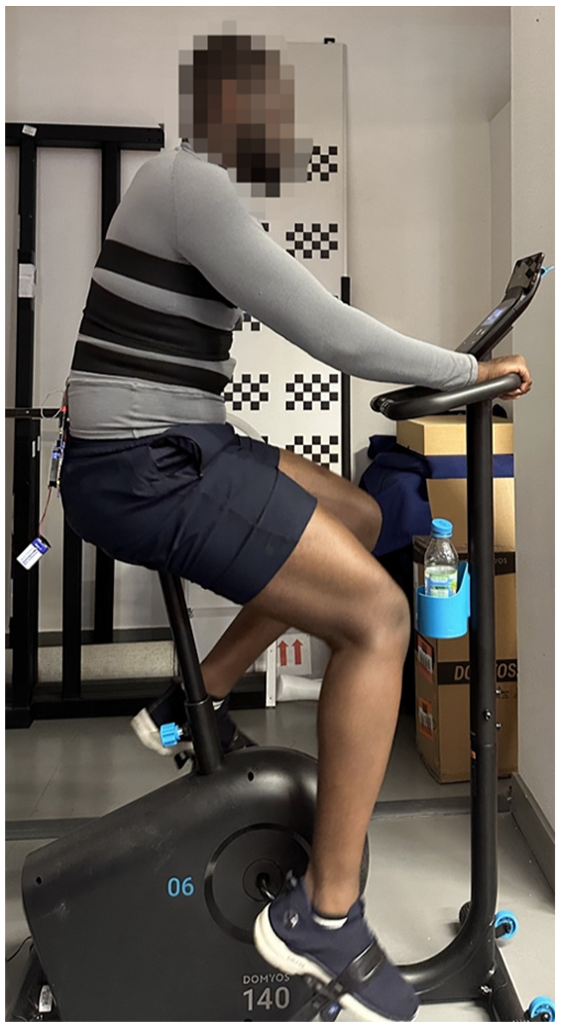

Participant recruitment adhered to strict inclusion criteria to ensure data quality and homogeneity: (1) participants’ physique was required to conform to the M size (size 50) specifications of the intelligent compression garment (chest circumference: 92–98 cm, height: 173–178 cm) to ensure accurate signal acquisition and wearing comfort; (2) all participants possessed a minimum of 6 months of regular cycling training experience, with a training frequency of no less than three times per week, ensuring their familiarity with cycling exertion and fatigue perception; (3) participants reported no history of cardiovascular diseases or musculoskeletal injuries to exclude potential health risks; and (4) participants refrained from high-intensity physical activity for 48 h prior to the experiment to ensure baseline physiological consistency. As shown in Figure 3, the experiments were conducted using an Essential Exercise Bike EB 140 (Decathlon, France) in a controlled laboratory environment (temperature: 25°C, humidity: 50%). Considering the relatively short duration (30 min) of the experiment, participants were instructed not to consume water throughout the session to maintain standardized hydration conditions across all subjects. This protocol decision eliminated hydration status as a potential confounding variable during physiological signal acquisition and fatigue assessment.

Figure 3. Laboratory cycling test configuration.

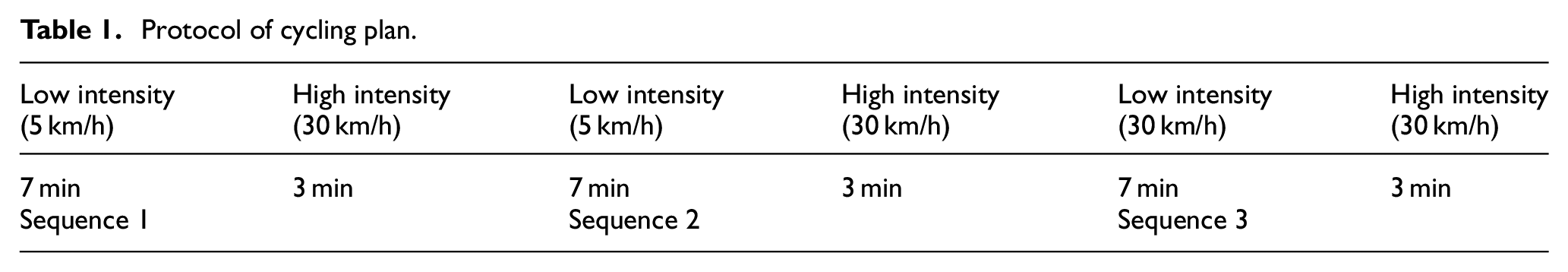

As shown in Table 1, the cycling protocol consisted of three consecutive sequences, each comprising two distinct phases: a 7 min low-intensity phase at 5 km/h followed by a 3 min high-intensity sprint phase at 30 km/h. This structured alternation between low and high intensities was designed to induce progressive fatigue while enabling the assessment of physiological responses across varying exertion levels. The total duration of each experimental session was 30 min, conducted at 10:00 AM to minimize circadian rhythm effects. Each participant completed three identical trials on separate days to ensure measurement reliability.

Table 1. Protocol of cycling plan.
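The timing of the protocol can be laid out as a short sketch. The durations and speeds are taken from the text; the function name `build_schedule` and the tuple layout are illustrative assumptions, not part of the study's software.

```python
# Protocol constants from the text; names are hypothetical.
LOW_MIN, HIGH_MIN, N_SEQUENCES = 7, 3, 3      # minutes, minutes, repetitions
LOW_SPEED_KMH, HIGH_SPEED_KMH = 5, 30


def build_schedule():
    """Return (start_min, end_min, phase, speed_kmh) tuples for one session."""
    schedule, t = [], 0
    for _ in range(N_SEQUENCES):
        schedule.append((t, t + LOW_MIN, "low", LOW_SPEED_KMH))
        t += LOW_MIN
        schedule.append((t, t + HIGH_MIN, "high", HIGH_SPEED_KMH))
        t += HIGH_MIN
    return schedule


schedule = build_schedule()
total_minutes = schedule[-1][1]  # end time of the last phase
```

Three sequences of 7 + 3 min give the stated 30 min session.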

Fatigue Assessment

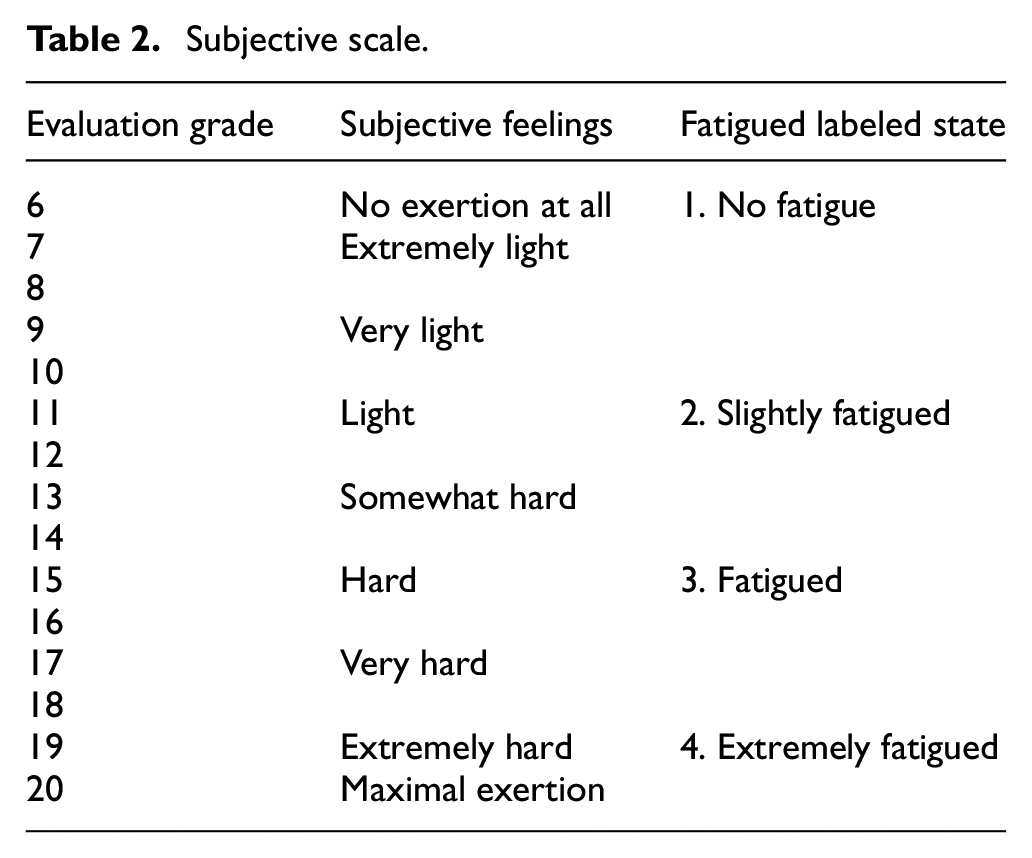

Subjective fatigue levels were evaluated using the Borg rating of perceived exertion (RPE) scale, which was grouped into four distinct fatigue states (Table 2): No fatigue (6–10 RPE), Slightly fatigued (11–14 RPE), Fatigued (15–16 RPE), and Extremely fatigued (17–20 RPE). Participants were instructed to verbally report their perceived fatigue state whenever they experienced a change in their fatigue level, allowing for real-time tracking of fatigue progression throughout the exercise session.

Table 2. Subjective scale.
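The grouping of RPE scores into four states can be written as a small lookup. The RPE ranges come from the text; the integer class indices 0 to 3 and the function name `rpe_to_state` are assumptions made for illustration, since the label encoding is not stated.

```python
# (lower RPE, upper RPE, state name), ranges as given in the text.
FATIGUE_STATES = [
    (6, 10, "No fatigue"),
    (11, 14, "Slightly fatigued"),
    (15, 16, "Fatigued"),
    (17, 20, "Extremely fatigued"),
]


def rpe_to_state(rpe):
    """Map a Borg RPE score (6-20) to (class_index, state_name).

    The class index is a hypothetical encoding for classifier targets.
    """
    for index, (low, high, name) in enumerate(FATIGUE_STATES):
        if low <= rpe <= high:
            return index, name
    raise ValueError(f"RPE must be between 6 and 20, got {rpe}")
```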

To validate the physiological relevance of the RPE-based fatigue assessment, we conducted a preliminary correlation analysis between subjective fatigue ratings and key physiological indicators during the cycling protocol. The analysis revealed consistent patterns between RPE progression and objective physiological markers of fatigue. Specifically, heart rate exhibited a progressive increase that aligned closely with RPE escalation across the four fatigue states, demonstrating correlation coefficients ranging from 0.73 to 0.82 across subjects (p < 0.01). This correspondence reflects the well-established relationship between cardiac autonomic modulation and perceived exertion during progressive exercise loading.

Similarly, EMG median frequency parameters from both anterior deltoid and erector spinae muscles demonstrated the expected downward trend characteristic of peripheral muscle fatigue, showing significant negative correlations with RPE values (r=−0.68 to −0.76, p < 0.01). The spectral compression toward lower frequencies, indicating slowed muscle fiber conduction velocity and altered motor unit recruitment patterns, occurred progressively as participants transitioned through higher RPE categories. This physiological validation confirms that the RPE-based fatigue classification captures meaningful changes in both central cardiovascular and peripheral neuromuscular systems.
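A correlation coefficient of this kind can be computed as sketched here. The text does not name the coefficient used; this sketch assumes Pearson's r, although a rank-based coefficient such as Spearman's would also be plausible for ordinal RPE values. The function name is hypothetical and no study data are reproduced.

```python
import numpy as np


def pearson_r(x, y):
    """Pearson correlation between two equal-length sequences."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    xc, yc = x - x.mean(), y - y.mean()
    return float(np.sum(xc * yc) / np.sqrt(np.sum(xc ** 2) * np.sum(yc ** 2)))
```

A positive r corresponds to the heart rate trend described above, and a negative r to the decline in median frequency with rising RPE.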

Data Collection and Feature Extraction

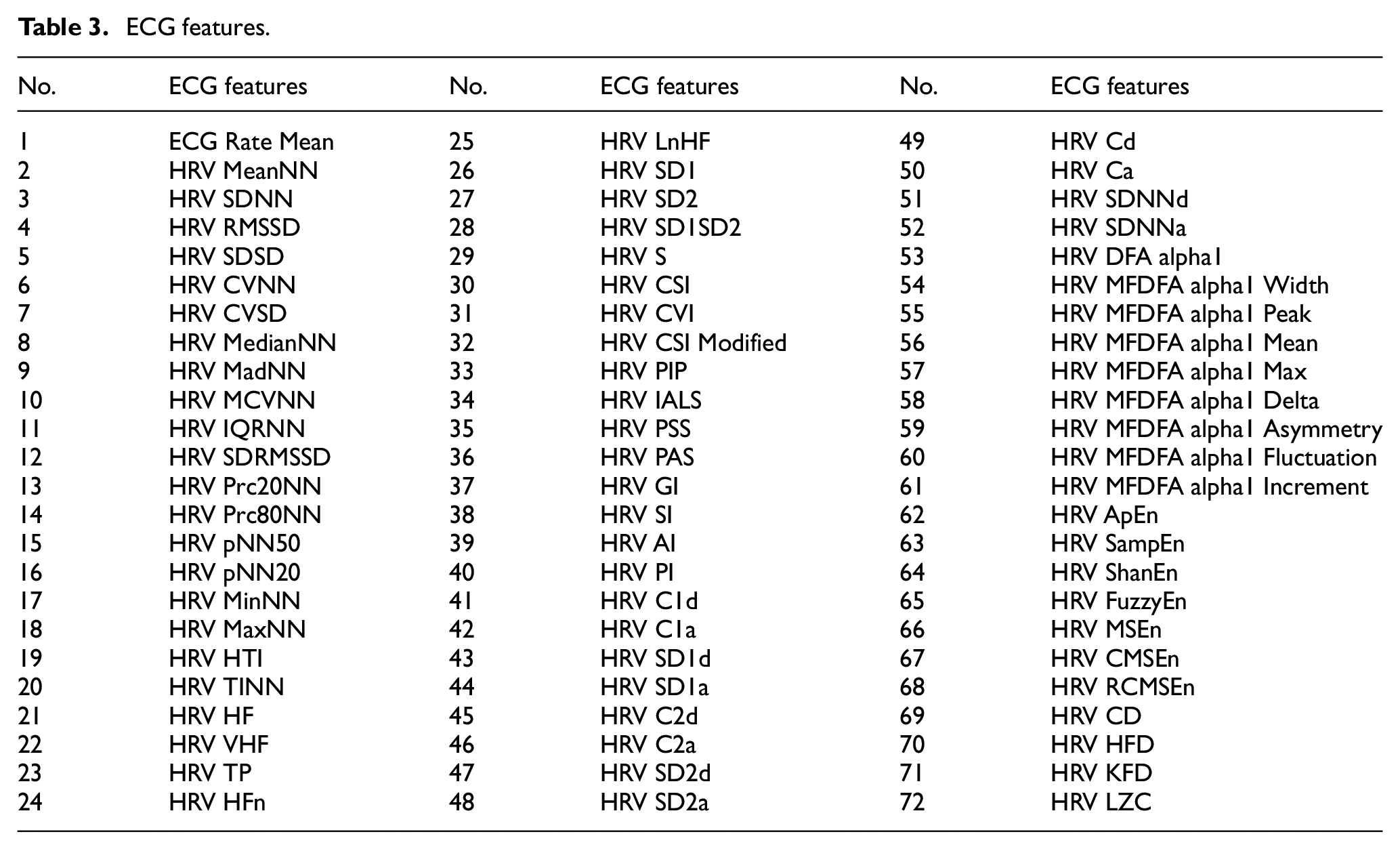

Table 3. ECG features.

ECG signals were acquired at 125 Hz and pre-processed using a bandpass filter (0.5–100 Hz) to remove baseline wander and high-frequency noise. R-peaks were subsequently detected using the Pan–Tompkins algorithm. 35 Seventy-two time-domain and frequency-domain features were then extracted using the NeuroKit2 toolbox. 36 These features encompassed heart rate variability (HRV) parameters, time-domain statistics, and frequency-domain power distributions, as detailed in Table 3.
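To illustrate what HRV parameters derived from R-peaks look like, the sketch below computes three common time-domain measures from R-peak sample indices. It is not the NeuroKit2 implementation and does not reproduce the 72-feature set of Table 3; the function name and the choice of features are assumptions for illustration only.

```python
import numpy as np

FS_ECG = 125  # Hz, ECG sampling rate stated in the text


def hrv_time_domain(r_peak_samples, fs=FS_ECG):
    """Compute mean heart rate, SDNN and RMSSD from R-peak sample indices."""
    r = np.asarray(r_peak_samples, dtype=float)
    rr_ms = np.diff(r) / fs * 1000.0          # RR intervals in milliseconds
    successive = np.diff(rr_ms)               # beat-to-beat interval changes
    return {
        "mean_hr_bpm": 60000.0 / rr_ms.mean(),
        "sdnn_ms": rr_ms.std(ddof=1),
        "rmssd_ms": float(np.sqrt(np.mean(successive ** 2))),
    }
```

With R-peaks exactly one second apart (every 125 samples), the mean heart rate is 60 bpm and both variability measures are zero.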

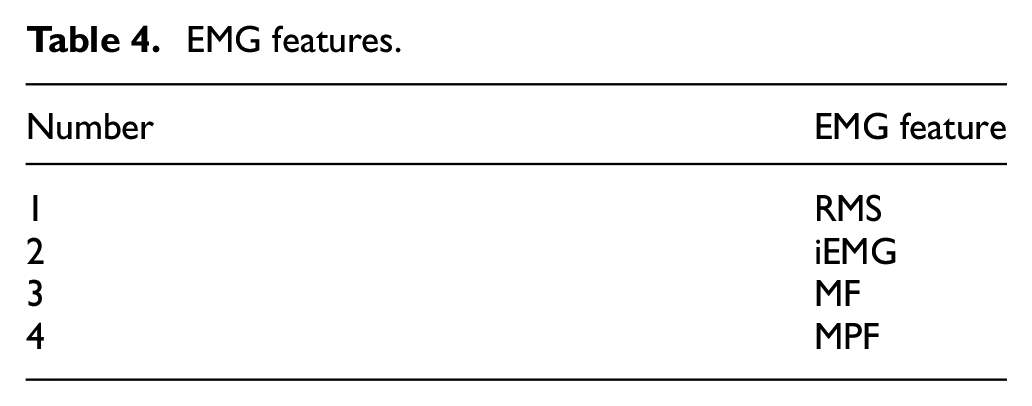

The EMG signals were acquired simultaneously from the anterior deltoid and erector spinae muscles at a sampling frequency of 1000 Hz. A comprehensive signal processing pipeline was implemented to ensure optimal signal quality and feature extraction. Initially, DC offset was eliminated through mean subtraction of the raw signals, followed by a second-order Butterworth bandpass filter with cutoff frequencies at 20 Hz and 450 Hz to remove motion artifacts and high-frequency noise, which serves as a critical preprocessing step to isolate genuine physiological EMG components. The filtered signals then underwent full-wave rectification to obtain absolute EMG values, after which a low-pass filter with a 10 Hz cutoff frequency was applied to extract the signal envelope, effectively smoothing the rectified signal to reveal the underlying muscle activation patterns. Feature extraction was performed using a sliding window technique with a window size of 2 s and a 1-s overlap, ensuring continuous monitoring of muscle activity patterns while maintaining temporal resolution. From each window, four established EMG parameters were calculated to quantify different aspects of muscle activation and fatigue (Table 4).

Table 4. EMG features.
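The EMG preprocessing steps described above can be sketched with SciPy. The cutoff frequencies, filter order of the bandpass, and window parameters come from the text; the use of zero-phase filtering (`filtfilt`), the order of the 10 Hz low-pass filter, and all function names are assumptions. Zero-phase filtering is an offline convenience, and a causal filter would be needed for true real-time use.

```python
import numpy as np
from scipy.signal import butter, filtfilt

FS_EMG = 1000  # Hz, EMG sampling rate stated in the text


def preprocess_emg(raw, fs=FS_EMG):
    """Return (band-passed signal, linear envelope) for one EMG channel."""
    x = np.asarray(raw, dtype=float)
    x = x - x.mean()                                        # remove DC offset
    b, a = butter(2, [20, 450], btype="bandpass", fs=fs)    # 20-450 Hz Butterworth
    filtered = filtfilt(b, a, x)                            # zero-phase (assumption)
    rectified = np.abs(filtered)                            # full-wave rectification
    b_lp, a_lp = butter(2, 10, btype="lowpass", fs=fs)      # order 2 is an assumption
    envelope = filtfilt(b_lp, a_lp, rectified)
    return filtered, envelope


def sliding_windows(x, fs=FS_EMG, win_s=2.0, step_s=1.0):
    """Split a 1-D signal into 2 s windows with a 1 s step (1 s overlap)."""
    win, step = int(win_s * fs), int(step_s * fs)
    starts = range(0, len(x) - win + 1, step)
    return np.stack([x[s:s + win] for s in starts])
```

A 5 s recording yields four windows of 2000 samples each.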

The four parameters are the root mean square (RMS), integrated EMG (iEMG), median frequency (MF), and mean power frequency (MPF). The RMS measures the EMG signal amplitude, reflecting the overall level of muscle activation, while the iEMG represents the EMG signal integrated over time, indicating cumulative muscle activity. Both RMS and iEMG generally increase with muscle fatigue as more motor units are recruited to maintain force output. Conversely, MF and MPF, which characterize the frequency content of the EMG signal, tend to decrease during fatigue. This shift toward lower frequencies is attributed to the slowing of muscle fiber conduction velocity and the preferential recruitment of slower, fatigue-resistant motor units.
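A minimal sketch of the four EMG parameters for a single window follows. The exact formulas of Table 4 are not reproduced in the text, so the definitions used here (iEMG as the time integral of the rectified signal, spectral features from a plain FFT power spectrum) are common conventions adopted as assumptions, and the function name is hypothetical.

```python
import numpy as np


def emg_features(window, fs=1000):
    """Compute RMS, iEMG, MF and MPF for one EMG window."""
    x = np.asarray(window, dtype=float)
    rms = np.sqrt(np.mean(x ** 2))
    iemg = np.sum(np.abs(x)) / fs                      # rectified signal integrated over time

    freqs = np.fft.rfftfreq(len(x), d=1.0 / fs)
    power = np.abs(np.fft.rfft(x)) ** 2                # unnormalized power spectrum
    mpf = np.sum(freqs * power) / np.sum(power)        # power-weighted mean frequency

    cumulative = np.cumsum(power)
    half_index = np.searchsorted(cumulative, cumulative[-1] / 2.0)
    mf = freqs[half_index]                             # frequency splitting power in half
    return {"RMS": rms, "iEMG": iemg, "MF": mf, "MPF": mpf}
```

For a pure 100 Hz sinusoid both MF and MPF equal 100 Hz; under fatigue the spectrum shifts downward and both values fall.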

Deep Learning Models for Fatigue State Recognition

Baseline Model Architectures

Our data processing pipeline underlies all model implementations, transforming raw physiological signals into structured inputs suitable for deep learning. The pipeline uses a sliding window of 2000 samples and concatenates the ECG and EMG signals into unified temporal sequences. For training and evaluation, we used five-fold cross-validation, with 80% of the data allocated for training and 20% for testing in each fold. To ensure a fair architectural comparison, all models were trained with the same hyperparameters rather than being tuned individually: the Adam optimizer with a learning rate of 0.0001, a batch size of 64, 100 training epochs, and cross-entropy loss, which suits multi-class classification. To limit overfitting, the same dropout (p = 0.2 for all baseline models) and Xavier initialization for all linear layers were applied throughout. Holding the data processing and training protocol fixed in this way isolates architectural differences as the primary variable affecting fatigue state recognition performance.
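The windowing and five-fold split can be sketched as follows. The window length and fold count come from the text. The stride, the assumption that the three channels already share a common time base, the random shuffling, and the function names are illustrative choices that the text does not specify; in particular, the text does not say whether folds are formed per window or per subject.

```python
import numpy as np

WINDOW = 2000  # samples per window, as stated in the text


def make_windows(signals, window=WINDOW, stride=WINDOW):
    """Cut a (T, channels) array into (N, window, channels) segments."""
    starts = range(0, signals.shape[0] - window + 1, stride)
    return np.stack([signals[s:s + window] for s in starts])


def five_fold_indices(n_items, seed=0):
    """Yield (train_idx, test_idx) pairs; each fold holds out about 20%."""
    order = np.random.default_rng(seed).permutation(n_items)
    folds = np.array_split(order, 5)
    for k in range(5):
        train = np.concatenate([folds[j] for j in range(5) if j != k])
        yield train, folds[k]


# Placeholder input: 20000 time steps of ECG plus two EMG channels.
windows = make_windows(np.zeros((20000, 3)))
splits = list(five_fold_indices(len(windows)))
```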

We evaluated four baseline deep learning architectures, each representing distinct approaches to temporal sequence modeling.

Temporal Convolutional Network (TCN)

As illustrated in Figure 4, the TCN architecture implements a hierarchical feature extraction strategy through dilated causal convolutions. The model processes the concatenated input tensor (2000 × 3) through three cascaded temporal blocks with progressively increasing channel dimensions (64, 128, and 256). Each temporal block contains two sequential one-dimensional convolutional layers followed by sequence truncation, ReLU activation, and dropout regularization (p = 0.2). The progressive dilation rates (1, 2, 4) across the three blocks enable exponential expansion of the receptive field, facilitating multi-scale temporal feature extraction. Residual connections with 1 × 1 convolutions are employed at channel transition points to maintain gradient flow. This architecture captures local temporal patterns while preserving computational efficiency through parameter sharing.

Figure 4. Architecture of temporal convolutional network with hierarchical dilated convolutions for multi-scale feature extraction.

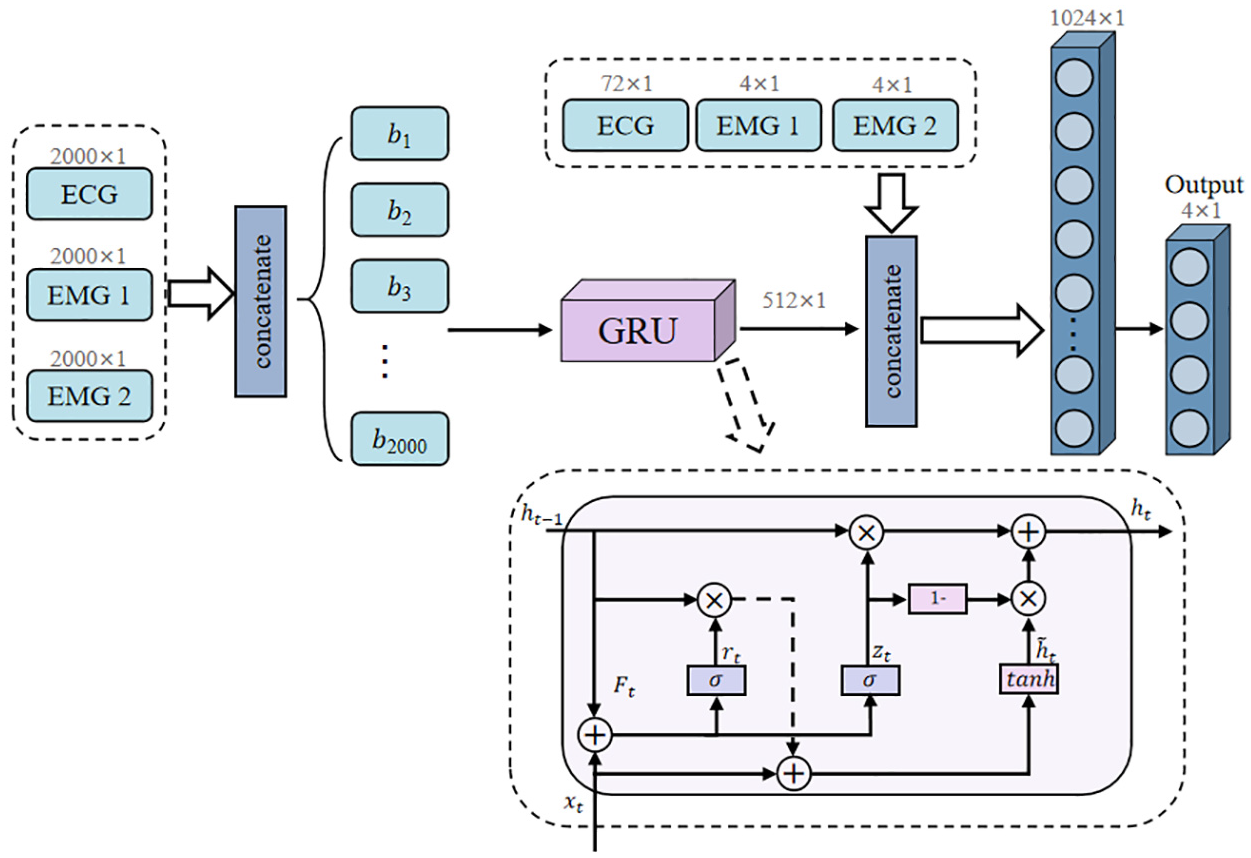
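The dilated causal convolution at the core of each temporal block can be illustrated for a single channel in NumPy. Left-padding the input, as done here, is equivalent to the symmetric padding followed by sequence truncation mentioned above. The function name and the single-channel simplification are assumptions; this is a sketch of the operation, not the model code.

```python
import numpy as np


def causal_dilated_conv1d(x, weights, dilation):
    """Single-channel causal convolution.

    y[t] = sum_j weights[j] * x[t - (K - 1 - j) * dilation], with zeros
    assumed before the start of the sequence, so y[t] never sees x[t + 1:].
    """
    x = np.asarray(x, dtype=float)
    k = len(weights)
    pad = (k - 1) * dilation
    padded = np.concatenate([np.zeros(pad), x])        # pad on the left only
    y = np.zeros(len(x))
    for j in range(k):
        y += weights[j] * padded[j * dilation: j * dilation + len(x)]
    return y


# An impulse at t = 5 influences only t = 5, 7, 9 (kernel 3, dilation 2).
impulse = np.zeros(12)
impulse[5] = 1.0
response = causal_dilated_conv1d(impulse, [1.0, 1.0, 1.0], dilation=2)
```

No output before t = 5 is affected, which is the causality property required for real-time use.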

Gated Recurrent Unit (GRU)

Figure 5 presents the GRU architecture, which employs a streamlined recurrent approach for sequence modeling. The network processes the input sequences through a four-layer GRU structure with a hidden dimension of 512 units per layer. The GRU cell implements an efficient dual-gate mechanism, combining reset gates (r_t) for selective historical information filtering and update gates (z_t) for adaptive memory retention. This mechanism enables the model to manage information flow with lower computational complexity than traditional recurrent structures. The final hidden state output (512 × 1) is concatenated with auxiliary features before classification through fully connected layers.

Figure 5. Architecture of gated recurrent unit network with sequential processing mechanism for temporal dependency modeling.

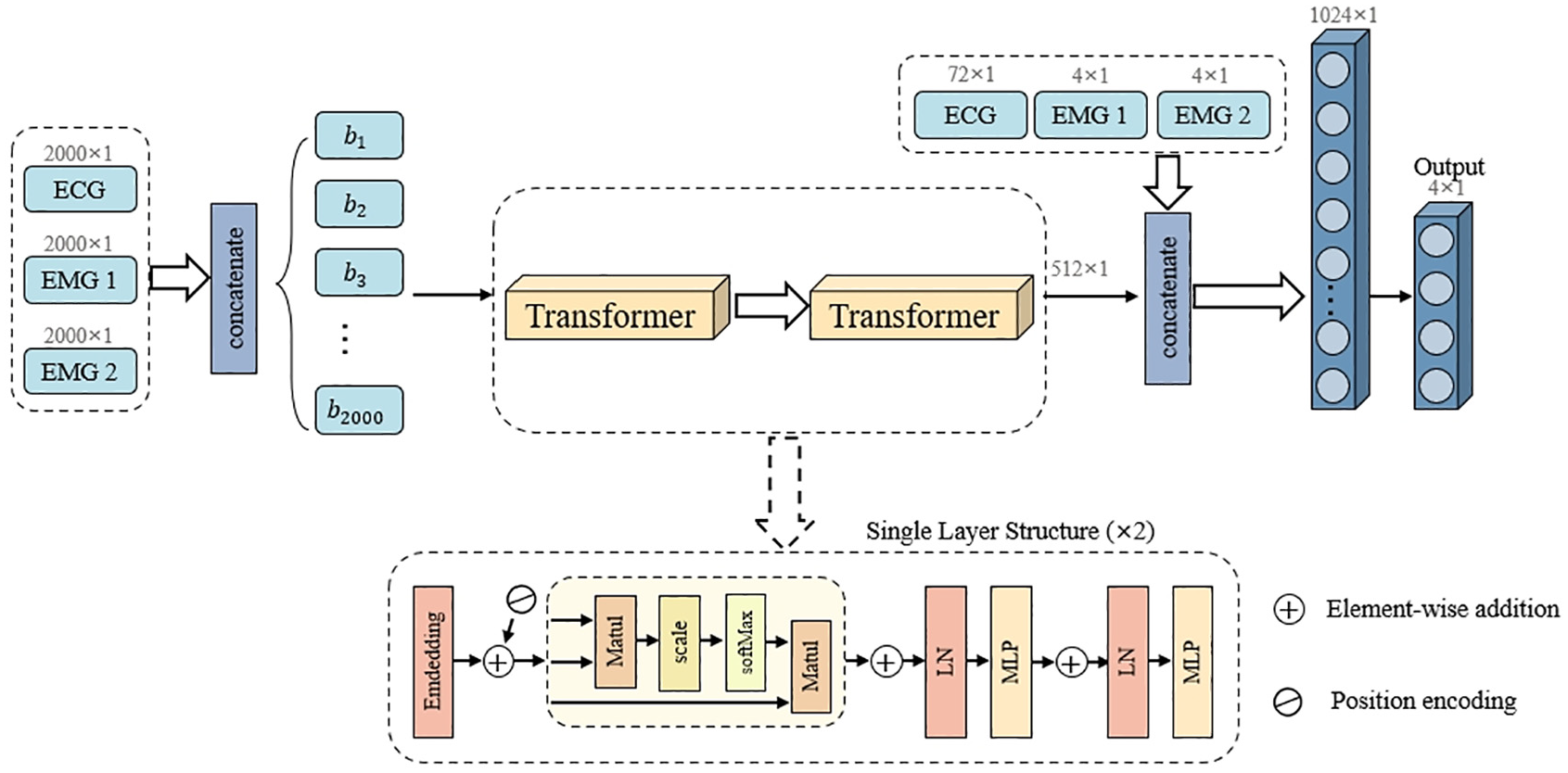
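One time step of a GRU cell, showing the reset and update gates, can be sketched in NumPy. The parameter dictionary layout and names are hypothetical, and the update rule follows one common convention (the roles of z_t and 1 − z_t are swapped in some formulations). The hidden size in the example is kept small for readability; the model described above uses 512.

```python
import numpy as np


def sigmoid(a):
    return 1.0 / (1.0 + np.exp(-a))


def gru_step(x_t, h_prev, p):
    """One GRU step. p holds weight matrices W*, U* and biases b*."""
    z_t = sigmoid(p["Wz"] @ x_t + p["Uz"] @ h_prev + p["bz"])   # update gate
    r_t = sigmoid(p["Wr"] @ x_t + p["Ur"] @ h_prev + p["br"])   # reset gate
    # Candidate state: the reset gate filters how much history is used.
    h_tilde = np.tanh(p["Wh"] @ x_t + p["Uh"] @ (r_t * h_prev) + p["bh"])
    # The update gate blends the previous state with the candidate.
    return z_t * h_prev + (1.0 - z_t) * h_tilde


def zero_params(n_in, n_hidden):
    """All-zero parameters, used only to make the example deterministic."""
    p = {}
    for gate in "zrh":
        p["W" + gate] = np.zeros((n_hidden, n_in))
        p["U" + gate] = np.zeros((n_hidden, n_hidden))
        p["b" + gate] = np.zeros(n_hidden)
    return p
```

With all-zero parameters both gates equal 0.5 and the candidate is zero, so the new state is half the previous one.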

Transformer

The transformer architecture, depicted in Figure 6, leverages self-attention mechanisms for capturing global dependencies in physiological signals. The model first projects the input features to a 64-dimensional embedding space through linear transformation, followed by positional encoding to preserve sequential information. The core processing module consists of two transformer encoder layers, each implementing a four-head self-attention mechanism that enables parallel processing of temporal information. The output features undergo layer normalization and feed-forward transformation with dimensions 64–256–64, establishing a hierarchical feature representation space. This architecture theoretically excels at modeling long-range dependencies through global attention distribution.

Figure 6. Architecture of transformer network with self-attention mechanism for global context representation.

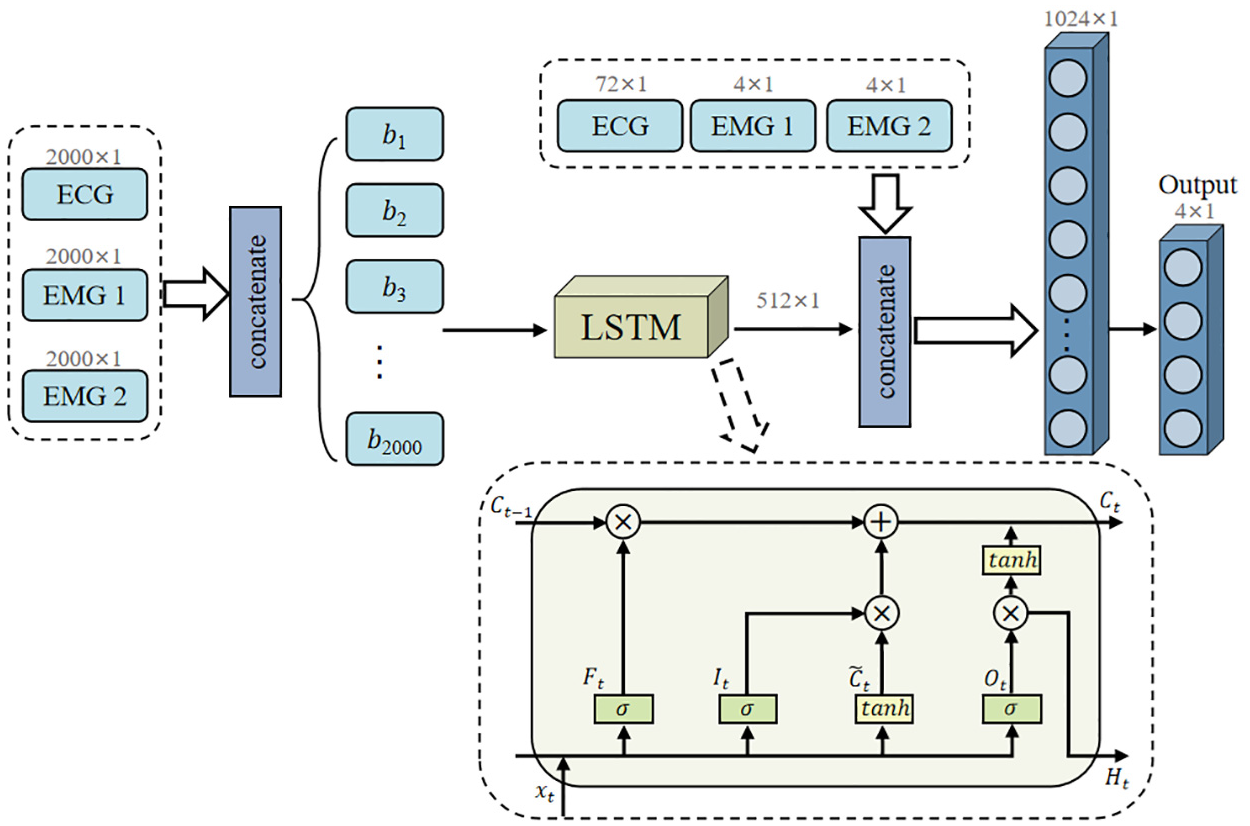
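The embedding, positional encoding, and four-head self-attention steps can be sketched in NumPy with the stated dimensions (64-dimensional embedding, four heads). The sinusoidal form of the positional encoding, the random weights, the short sequence length, and all function names are assumptions; the text does not specify the encoding type.

```python
import numpy as np

D_MODEL, N_HEADS = 64, 4  # dimensions stated in the text


def positional_encoding(length, d_model=D_MODEL):
    """Sinusoidal positional encoding (assumed form)."""
    pos = np.arange(length)[:, None]
    even = np.arange(0, d_model, 2)[None, :]
    angles = pos / np.power(10000.0, even / d_model)
    pe = np.zeros((length, d_model))
    pe[:, 0::2] = np.sin(angles)
    pe[:, 1::2] = np.cos(angles)
    return pe


def softmax(a, axis=-1):
    e = np.exp(a - a.max(axis=axis, keepdims=True))
    return e / e.sum(axis=axis, keepdims=True)


def multi_head_self_attention(x, wq, wk, wv, wo, n_heads=N_HEADS):
    """x: (T, d_model). Returns (output (T, d_model), attention (heads, T, T))."""
    length, d_model = x.shape
    d_head = d_model // n_heads

    def split(m):  # (T, d_model) -> (heads, T, d_head)
        return m.reshape(length, n_heads, d_head).transpose(1, 0, 2)

    q, k, v = split(x @ wq), split(x @ wk), split(x @ wv)
    attn = softmax(q @ k.transpose(0, 2, 1) / np.sqrt(d_head))
    merged = (attn @ v).transpose(1, 0, 2).reshape(length, d_model)
    return merged @ wo, attn


rng = np.random.default_rng(0)
signal = rng.standard_normal((10, 3))                  # 10 steps, 3 input channels
embedded = signal @ rng.standard_normal((3, D_MODEL)) + positional_encoding(10)
weights = [rng.standard_normal((D_MODEL, D_MODEL)) * 0.1 for _ in range(4)]
output, attention = multi_head_self_attention(embedded, *weights)
```

Each row of every attention matrix sums to one, so every time step forms a weighted average over all other time steps, which is the global dependency modeling referred to above.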

Long Short-Term Memory Network (LSTM)

Figure 7 illustrates the LSTM architecture, which incorporates explicit memory cells for long-term dependency modeling. The network comprises four stacked bidirectional LSTM layers with 512 hidden units per direction. The LSTM cell implements three distinct gating mechanisms: the forget gate (F_t) for historical information regulation; the input gate (I_t) for new information filtering; and the output gate (O_t) for output control. This memory management system enables fine-grained control over information flow across different time scales. The network extracts the final time-step output as a 512-dimensional feature representation, which is then concatenated with auxiliary features for classification.

Figure 7. Architecture of long short-term memory network with triple-gate control system for long-range dependency capture.

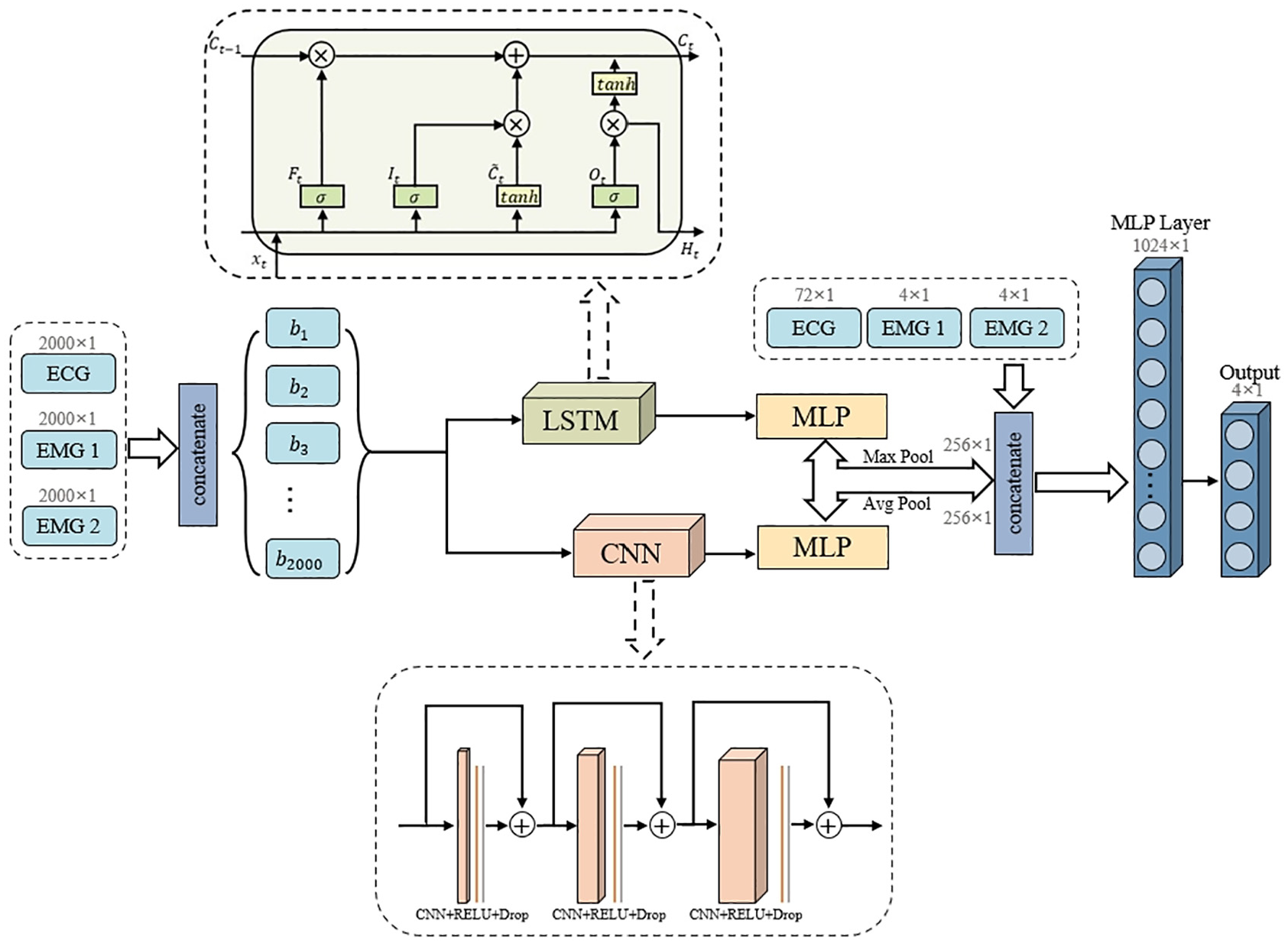
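The three-gate mechanism can be made concrete with one time step of a standard LSTM cell in NumPy. The parameter dictionary layout and names are hypothetical, and the hidden size in the example is kept small for readability rather than the 512 units used in the model.

```python
import numpy as np


def sigmoid(a):
    return 1.0 / (1.0 + np.exp(-a))


def lstm_step(x_t, h_prev, c_prev, p):
    """One LSTM step. p holds weight matrices W*, U* and biases b*."""
    f_t = sigmoid(p["Wf"] @ x_t + p["Uf"] @ h_prev + p["bf"])   # forget gate
    i_t = sigmoid(p["Wi"] @ x_t + p["Ui"] @ h_prev + p["bi"])   # input gate
    o_t = sigmoid(p["Wo"] @ x_t + p["Uo"] @ h_prev + p["bo"])   # output gate
    g_t = np.tanh(p["Wg"] @ x_t + p["Ug"] @ h_prev + p["bg"])   # candidate memory
    c_t = f_t * c_prev + i_t * g_t        # keep part of the old memory, add new content
    h_t = o_t * np.tanh(c_t)              # expose a gated view of the memory cell
    return h_t, c_t


def zero_params(n_in, n_hidden):
    """All-zero parameters, used only to make the example deterministic."""
    p = {}
    for gate in "fiog":
        p["W" + gate] = np.zeros((n_hidden, n_in))
        p["U" + gate] = np.zeros((n_hidden, n_hidden))
        p["b" + gate] = np.zeros(n_hidden)
    return p
```

With all-zero parameters every gate equals 0.5 and the candidate is zero, so the cell state is halved at each step.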

TCN–LSTM Hybrid Architecture

Through comprehensive analysis of physiological signals during fatigue progression, we identified several key characteristics that present unique challenges for computational modeling: (1) multi-scale temporal dependencies spanning from millisecond-level ECG waveforms to minute-level fatigue-induced variations; (2) heterogeneous signal modalities with ECG exhibiting strong periodicity and EMG manifesting as non-stationary burst activities; and (3) complex inter-signal relationships reflecting the physiological coupling between cardiac and muscular systems during fatigue development. These observations revealed inherent limitations in single-architecture approaches, motivating the development of our TCN–LSTM hybrid model specifically designed to address these challenges.

As discussed in the introduction, hybrid models combining convolutional and recurrent neural networks are becoming increasingly prevalent for time-series analysis due to their enhanced capability to model both local features and long-term dependencies.18,19,26 The specific manner of integration, whether sequential,20,30 parallel, or through specialized cells like ConvLSTM, 32 dictates the model’s suitability for different data types and analytical objectives. Many such hybrid models aim to first extract salient features using the convolutional component and then model their temporal evolution using the recurrent component.18,30,31 Some advanced implementations also incorporate attention mechanisms to further refine feature importance over time.22,24,37 Our proposed TCN–LSTM hybrid architecture, however, adopts a distinct parallel processing strategy. This architectural choice, contrasting with more common sequential TCN–LSTM configurations, is specifically designed to concurrently capture the multi-scale temporal dynamics and heterogeneous characteristics inherent in fused ECG and EMG signals for fatigue state recognition. The rationale for this parallel design is to allow simultaneous and independent processing of these complex signals by specialized pathways before feature fusion, rather than a hierarchical feature extraction pipeline.

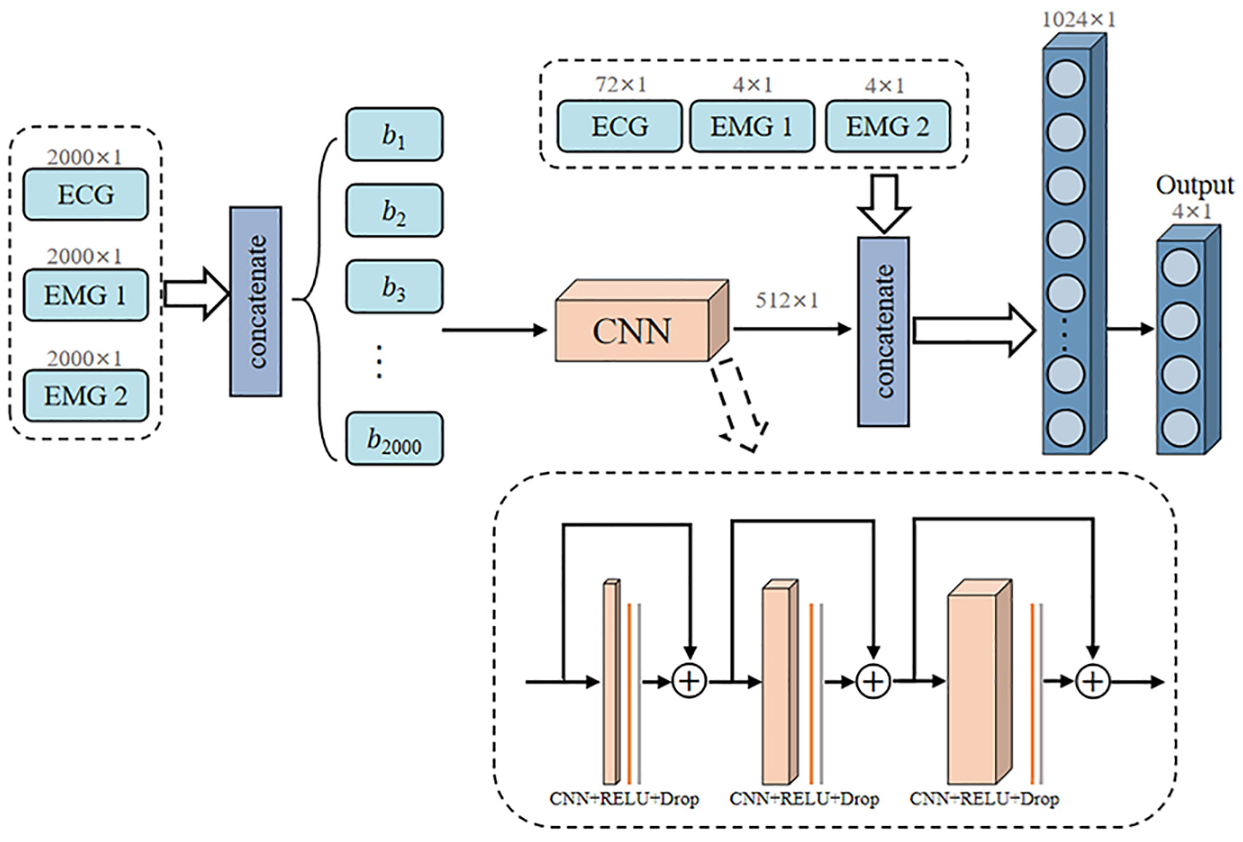

Figure 8 presents the architecture of our proposed model, which implements a dual-pathway parallel processing structure engineered to simultaneously capture different aspects of physiological signals.

Figure 8. Architecture of proposed TCN–LSTM hybrid framework with parallel pathway processing and differentiated pooling strategy.

The LSTM pathway is optimized for modeling long-term dependencies and state transitions in physiological signals. It employs a four-layer bidirectional structure with 512 hidden units, enabling comprehensive temporal dependency modeling in both forward and backward directions. This bidirectional processing overcomes the inherent limitations of unidirectional information flow, particularly crucial for capturing the complex temporal context in ECG rhythms and progressive EMG modulation patterns. The three-gate control system (forget, input, and output gates) enables precise regulation of information flow, allowing the network to selectively retain critical historical patterns while adaptively incorporating new measurements.

Simultaneously, the convolutional (TCN) pathway is engineered to extract hierarchical local features across multiple time scales. It implements three convolutional blocks with multi-scale convolutional kernels (sizes 5, 7, and 9) to match the intrinsic periodicity of physiological signals, particularly the cardiac cycle in ECG. The progressive dilation rates (1, 2, 4) enable exponential expansion of the receptive field without increasing parameter count, capturing features from rapid ECG waveform characteristics to slower EMG envelope variations. Residual connections between convolutional blocks ensure efficient gradient propagation through the network, facilitating the learning of deep feature hierarchies.
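The growth of the receptive field with kernel size and dilation can be worked out directly. Each dilated convolution with kernel size k and dilation d extends the receptive field by (k − 1)·d samples. The pairing of kernel sizes 5, 7, 9 with dilations 1, 2, 4 in block order, and the number of convolutions per block, are assumptions, since the text does not state them explicitly.

```python
def receptive_field(kernel_sizes, dilations, convs_per_block=1):
    """Receptive field (in samples) of stacked dilated convolutions."""
    field = 1
    for k, d in zip(kernel_sizes, dilations):
        field += convs_per_block * (k - 1) * d
    return field


# One convolution per block: 1 + 4*1 + 6*2 + 8*4
rf_single = receptive_field((5, 7, 9), (1, 2, 4))
# Two convolutions per block, as in the baseline TCN blocks
rf_double = receptive_field((5, 7, 9), (1, 2, 4), convs_per_block=2)
```

Under these assumptions the pathway sees 49 samples per output step with one convolution per block, or 97 with two.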

A key design element in our architecture is the differentiated pooling strategy, designed to preserve complementary aspects of physiological signals: max pooling for the LSTM branch retains salient temporal features critical for detecting acute fatigue indicators, while average pooling for the convolutional branch preserves statistical distribution characteristics essential for monitoring gradual fatigue progression patterns. This complementary approach provides feature representation across different manifestation patterns of fatigue.
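The difference between the two pooling operations can be seen on a toy feature map. The values are synthetic and chosen only to show the behavior: one channel carries a brief spike, another a sustained level.

```python
import numpy as np

T, D = 2000, 2                       # time steps, feature channels (toy sizes)
features = np.zeros((T, D))
features[1200, 0] = 5.0              # brief, isolated spike in channel 0
features[:, 1] = 0.3                 # sustained activity in channel 1

max_pooled = features.max(axis=0)    # pooling over the time axis
avg_pooled = features.mean(axis=0)
```

Max pooling keeps the spike at full strength (5.0) while average pooling dilutes it to 0.0025; for the sustained channel both give 0.3. This matches the roles described above: max pooling emphasizes transient events, average pooling summarizes the overall level.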

Both branches produce 256 × 1 dimensional feature vectors that are concatenated with auxiliary features (72 × 1 ECG features and 4 × 1 EMG features from each muscle), forming an integrated feature representation with 592 dimensions. This feature fusion approach leverages both deep-learned representations and domain-specific physiological parameters. The joint features are then processed through a 1024 × 1 fully connected layer with exponential linear unit activation and dropout regularization (p = 0.2) before final classification.
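The dimension count can be checked with a shape-only sketch: 256 + 256 + 72 + 4 + 4 = 592. The vector sizes, the 1024-unit layer, and the ELU activation come from the text; the random values, weight scaling, function names, and the four-way output layer (one logit per fatigue state) are assumptions for illustration.

```python
import numpy as np


def elu(a, alpha=1.0):
    """Exponential linear unit; np.minimum avoids overflow in exp."""
    return np.where(a > 0, a, alpha * (np.exp(np.minimum(a, 0.0)) - 1.0))


def fuse(lstm_vec, tcn_vec, ecg_feats, emg_deltoid, emg_erector):
    """Concatenate learned and hand-crafted features into one vector."""
    return np.concatenate([lstm_vec, tcn_vec, ecg_feats, emg_deltoid, emg_erector])


rng = np.random.default_rng(0)
fused = fuse(
    rng.standard_normal(256),   # LSTM branch output after max pooling
    rng.standard_normal(256),   # convolutional branch output after average pooling
    rng.standard_normal(72),    # ECG features
    rng.standard_normal(4),     # EMG features, anterior deltoid
    rng.standard_normal(4),     # EMG features, erector spinae
)

w_hidden = rng.standard_normal((1024, 592)) * 0.01
hidden = elu(w_hidden @ fused)                  # 1024-unit fully connected layer
w_out = rng.standard_normal((4, 1024)) * 0.01   # four fatigue states (assumed head)
logits = w_out @ hidden
```

Dropout is omitted because it is inactive at inference time.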

The parallel processing capabilities of this hybrid architecture enable simultaneous modeling of both rapid physiological responses and gradual fatigue development patterns, effectively addressing the multi-scale temporal dynamics inherent in physiological signals during exercise. This design specifically targets the complementary strengths of both network types while mitigating their individual limitations, providing a more robust framework for real-time fatigue monitoring.

Results

Model Performance Evaluation

To rigorously evaluate the efficacy of the proposed models for fatigue state recognition, we conducted systematic performance assessments across multiple dimensions. We first present a detailed comparative analysis of training dynamics and confusion matrices for a representative subject, followed by a comprehensive evaluation across all subjects using multiple performance metrics.

Training Dynamics and Convergence Behavior

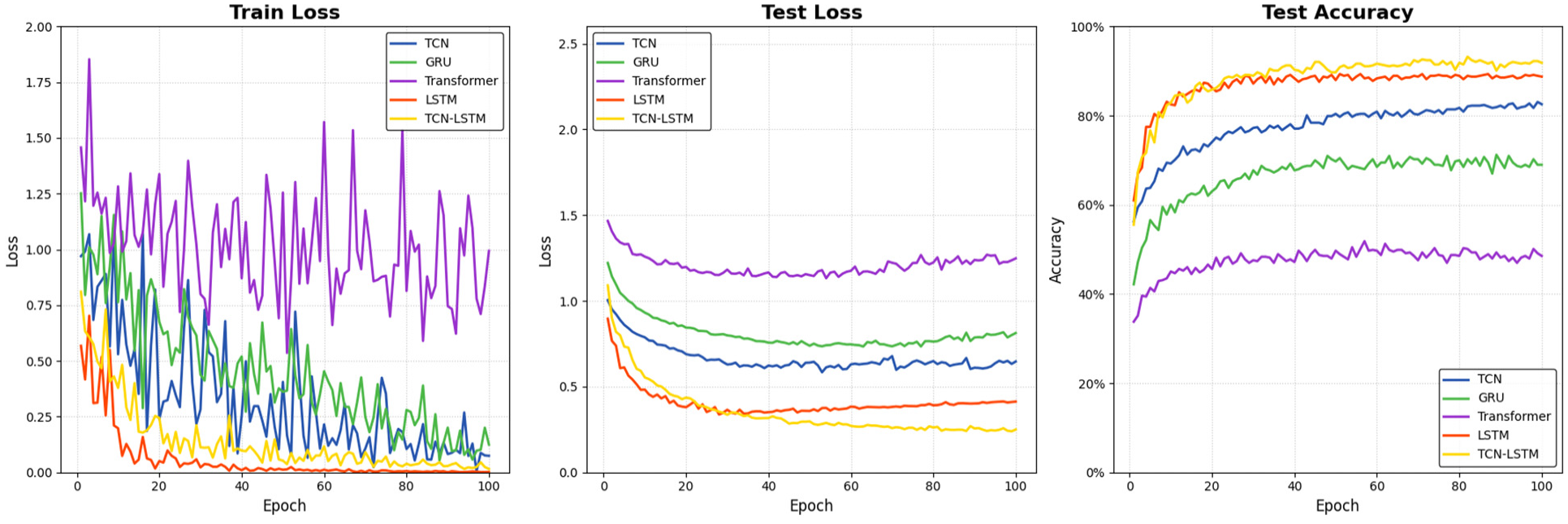

Figure 9 illustrates the training dynamics of the five deep learning architectures, depicting the evolution of training loss (Figure 9(a)), validation loss (Figure 9(b)), and validation accuracy (Figure 9(c)) throughout the 100-epoch training process. These visualizations provide crucial insights into the learning behavior and convergence properties of each model.

Figure 9. Training dynamics of five deep learning models: (a) training loss curves; (b) validation loss curves; and (c) validation accuracy curves.

The training loss curves (Figure 9(a)) reveal distinct optimization patterns across the architectures. The TCN–LSTM hybrid and LSTM models demonstrate superior convergence characteristics, rapidly decreasing to near-zero values within the first 30 epochs and maintaining stable optimization thereafter. The TCN and GRU models exhibit moderately effective but more gradual convergence trajectories, while the Transformer model displays significant oscillations throughout training, with loss values fluctuating between 0.5 and 1.75, indicating unstable parameter optimization that fails to converge effectively.

Validation loss patterns (Figure 9(b)) provide even more illuminating contrasts between model architectures. The TCN–LSTM hybrid model achieves the lowest validation loss (∼0.25), closely followed by LSTM (∼0.40), with both models exhibiting smooth, monotonic decreases that stabilize after approximately 40 epochs. The TCN and GRU models converge to intermediate validation loss values (∼0.65 and ∼0.80, respectively), showing adequate but limited generalization capability. The Transformer model, however, plateaus at a substantially higher loss (∼1.25), never dropping below 1.0 throughout training, suggesting fundamental limitations in its ability to extract meaningful patterns from physiological signals.

The validation accuracy trajectories (Figure 9(c)) most directly reflect model performance on unseen data. The TCN–LSTM hybrid and LSTM models rapidly achieve high accuracy levels (>85% and >80%, respectively) within the first 20 epochs, with the hybrid model maintaining a consistent 5–10% advantage throughout training, ultimately reaching approximately 92% accuracy. The TCN architecture demonstrates moderate performance progression, gradually climbing to approximately 82% accuracy, while GRU exhibits more limited effectiveness, plateauing around 70%. The Transformer architecture’s performance ceiling remains distinctly lower, struggling to exceed 50% accuracy even after complete training, reinforcing its incompatibility with the continuous, temporally dependent nature of physiological signals in this application domain.

These convergence patterns highlight the superior learning efficiency and generalization capability of the hybrid architecture, which effectively combines the complementary strengths of its constituent components to achieve optimal performance in fewer training iterations.

Classification Performance Analysis via Confusion Matrices

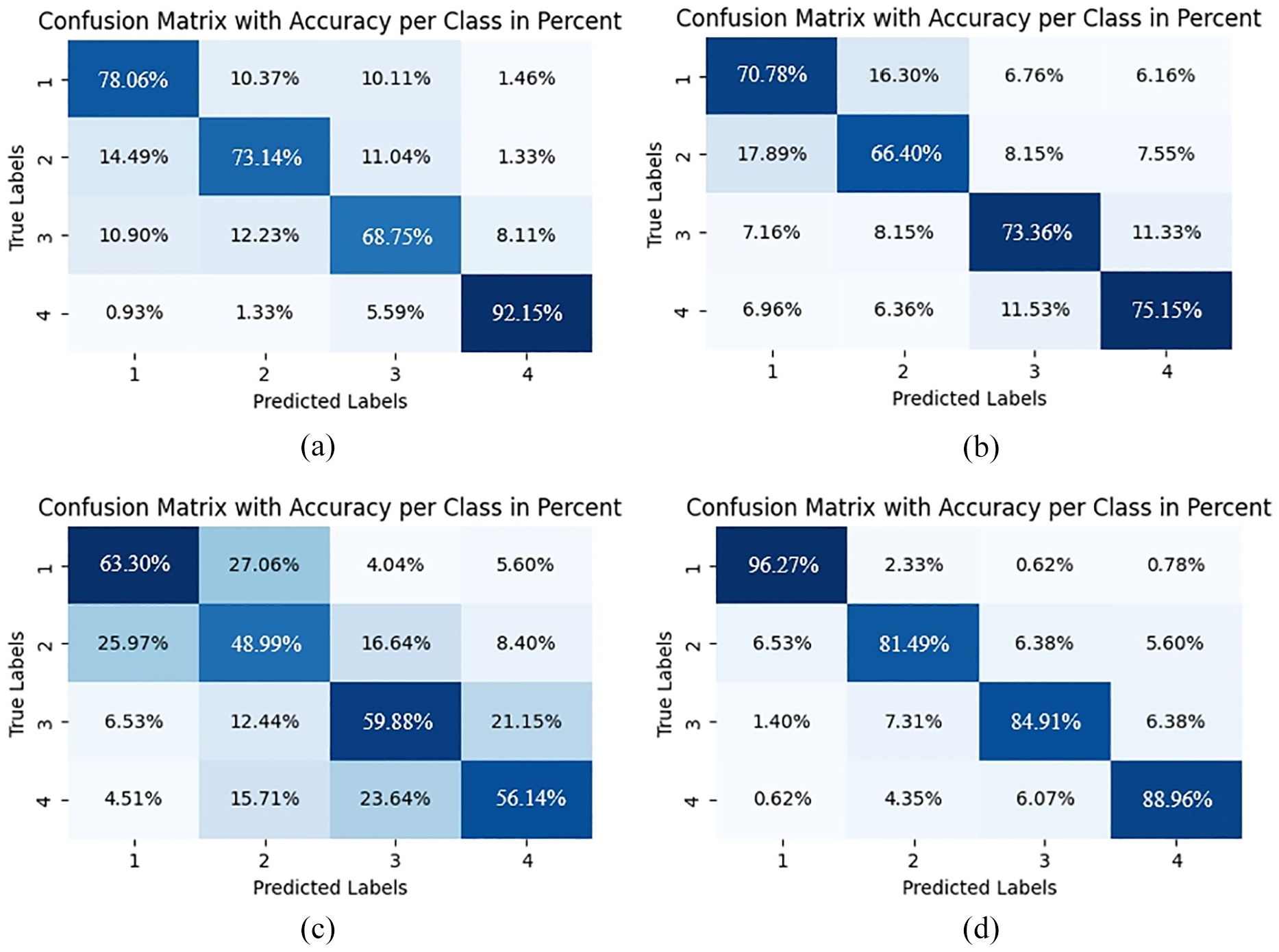

Figure 10 presents the confusion matrices for four baseline models on a representative subject, providing detailed insights into class-specific recognition performance. These visualizations reveal not only overall accuracy differences but also distinct error patterns that illuminate each architecture’s underlying capabilities and limitations.

Figure 10. Confusion matrices of fatigue state recognition on a representative subject: (a) TCN; (b) GRU; (c) Transformer; and (d) LSTM.

The TCN model (Figure 10(a)) demonstrates acceptable performance for extreme fatigue states (78.06% for level 1, 92.15% for level 4) but exhibits considerable limitations with intermediate levels (73.14% and 68.75% for levels 2 and 3), characterized by bidirectional misclassifications between adjacent states (10–15% error rates). The GRU architecture (Figure 10(b)) shows further performance deterioration, particularly for intermediate states (66.40% accuracy for level 2), with concerning misclassifications that violate the ordinal relationship between fatigue states (6.96% of level 4 cases misclassified as level 1). The Transformer model (Figure 10(c)) exhibits the most significant classification inadequacies across all fatigue states, with particularly low accuracy for intermediate levels (48.99% for level 2, 59.88% for level 3) and a disorganized error distribution pattern that indicates fundamental limitations in modeling temporal dependencies in continuous physiological signals. The LSTM architecture (Figure 10(d)) achieves substantially improved performance, particularly for baseline state recognition (96.27% for level 1), but still demonstrates moderate degradation for intermediate states (81.49% and 84.91% for levels 2 and 3) and non-negligible confusion between non-adjacent states.

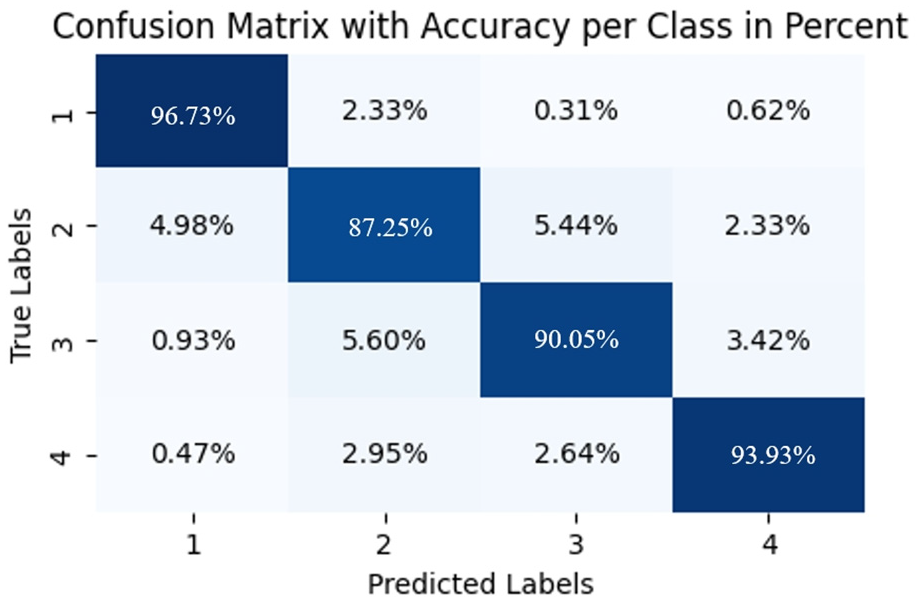

In contrast, the TCN–LSTM hybrid model (Figure 11) exhibits superior classification performance across the entire fatigue spectrum, with high accuracy for both extreme states (96.73% for level 1, 93.93% for level 4) and robust performance for the challenging intermediate states (87.25% for level 2, 90.05% for level 3). Compared to baseline models, the hybrid architecture demonstrates notable improvements, particularly in discriminating intermediate fatigue levels where single-architecture models show significant limitations. Relative to TCN, the hybrid model improves recognition by 18.67, 14.11, and 21.30 percentage points for levels 1, 2, and 3, respectively; relative to Transformer, the improvement for level 2 is 38.26 percentage points (87.25% versus 48.99%). Unlike GRU and Transformer, which frequently misclassify non-adjacent states (evidenced by GRU’s 6.96% error rate between levels 4 and 1), the hybrid model’s misclassifications are minimal (consistently below 6%) and predominantly occur between adjacent fatigue levels. This pattern of errors suggests the model’s capacity to capture the continuous progression of fatigue states. The hybrid architecture effectively integrates TCN’s local feature extraction capabilities with LSTM’s long-term dependency modeling, establishing more physiologically appropriate decision boundaries that respect the inherent ordinal relationship between fatigue states. This is a critical advantage for precise fatigue monitoring applications.
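
The distinction between adjacent and non-adjacent misclassifications can be quantified directly from a confusion matrix. The sketch below is a minimal illustration; the matrix holds hypothetical counts (not the values in the figures), rows are assumed to be true levels and columns predicted levels, and `error_breakdown` is an illustrative name.

```python
def error_breakdown(cm):
    """Split off-diagonal counts into adjacent (|i - j| == 1) and non-adjacent errors."""
    adjacent = non_adjacent = 0
    for i, row in enumerate(cm):
        for j, count in enumerate(row):
            if i == j:
                continue  # correct predictions lie on the diagonal
            if abs(i - j) == 1:
                adjacent += count
            else:
                non_adjacent += count
    return adjacent, non_adjacent

# Hypothetical counts for four ordinal fatigue levels (rows: true, columns: predicted).
cm = [
    [95, 4, 1, 0],
    [5, 88, 6, 1],
    [1, 5, 90, 4],
    [0, 1, 5, 94],
]

adjacent, non_adjacent = error_breakdown(cm)
print(adjacent, non_adjacent)  # 29 4
```

A classifier that respects the ordinal structure of the fatigue levels concentrates its errors in the first count.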

Figure 11. Confusion matrix of fatigue state recognition on a representative subject for the TCN–LSTM hybrid model.

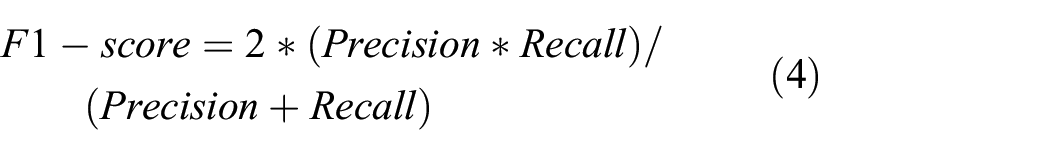

Comprehensive Multi-metric Evaluation

To systematically evaluate model performance, we employed multiple evaluation metrics to comprehensively assess classification quality across different dimensions. These metrics collectively provide a holistic perspective on each architecture’s efficacy in fatigue state recognition tasks, with each metric offering unique insights into specific performance aspects. The evaluation framework includes accuracy (overall correctness), precision (reliability of positive predictions), recall (detection capability), F1-score (balance between precision and recall), and Area Under the Receiver Operating Characteristic Curve (AUC—threshold-independent discriminative ability). The mathematical formulations of these metrics are defined as follows:

$$\text{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN}$$

$$\text{Precision} = \frac{TP}{TP + FP}$$

$$\text{Recall} = \frac{TP}{TP + FN}$$

$$\text{F1} = \frac{2 \times \text{Precision} \times \text{Recall}}{\text{Precision} + \text{Recall}}$$

where TP represents true positives (correctly identified positive cases), TN represents true negatives (correctly identified negative cases), FP represents false positives (negative cases incorrectly classified as positive), and FN represents false negatives (positive cases incorrectly classified as negative). AUC is calculated as the integral of the Receiver Operating Characteristic curve, which plots the true positive rate against the false positive rate at various threshold settings, thereby providing a threshold-independent measure of the model’s discriminative capability across the complete operating range.
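
For a multi-class problem, the TP, FP, and FN counts described above are typically obtained per class in a one-vs-rest fashion from the confusion matrix. The following sketch illustrates this; it assumes rows are true levels and columns predicted levels, uses hypothetical counts, and does not presume any particular averaging scheme (macro or weighted) for the reported means.

```python
def per_class_metrics(cm, k):
    """One-vs-rest precision, recall, and F1 for class k (rows: true, columns: predicted)."""
    tp = cm[k][k]
    fn = sum(cm[k]) - tp                  # class-k samples predicted as something else
    fp = sum(row[k] for row in cm) - tp   # other samples predicted as class k
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1

def accuracy(cm):
    """Fraction of all samples that lie on the diagonal."""
    correct = sum(cm[i][i] for i in range(len(cm)))
    return correct / sum(sum(row) for row in cm)

# Hypothetical four-level confusion matrix (not taken from the reported results).
cm = [
    [95, 4, 1, 0],
    [5, 88, 6, 1],
    [1, 5, 90, 4],
    [0, 1, 5, 94],
]

print(f"accuracy = {accuracy(cm):.4f}")  # accuracy = 0.9175
for k in range(len(cm)):
    p, r, f = per_class_metrics(cm, k)
    print(f"level {k + 1}: precision={p:.3f} recall={r:.3f} f1={f:.3f}")
```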

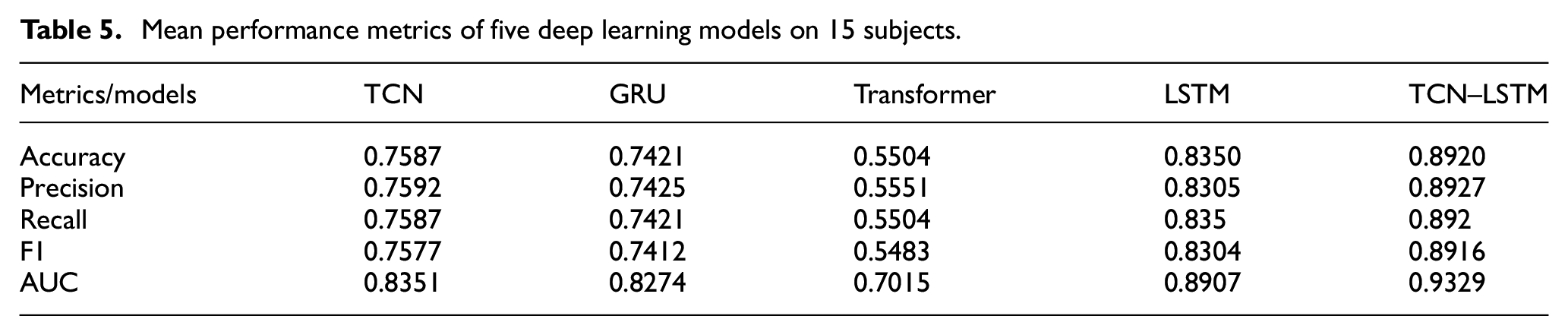

The quantitative results presented in Table 5 demonstrate the consistently superior performance of the proposed TCN–LSTM hybrid model across all evaluation metrics. With a mean accuracy of 89.20%, the hybrid architecture substantially outperforms LSTM (83.50%), TCN (75.87%), GRU (74.21%), and Transformer (55.04%). The hybrid model’s cross-metric consistency is particularly noteworthy, with precision (89.27%), recall (89.20%), and F1-score (89.16%) maintaining nearly identical values, indicating well-balanced classification performance across all fatigue states without bias toward specific classes. The AUC value of 93.29% further validates the hybrid model’s robust discriminative capability, surpassing the next best performer (LSTM) by 4.22 percentage points. This comprehensive performance advantage quantitatively confirms the theoretical hypothesis that the dual-pathway architecture effectively addresses the multi-scale temporal challenges inherent in physiological signal analysis by synergistically combining the complementary strengths of TCN’s local feature extraction and LSTM’s long-term dependency modeling capabilities.

Table 5. Mean performance metrics of five deep learning models on 15 subjects.

Architectural Analysis and Interpretability

Baseline Models Analysis

The observed performance variations across baseline architectures can be attributed to specific structural characteristics and their alignment with physiological signal properties during fatigue progression.

TCN architecture (75.87% accuracy) leverages dilated causal convolutions with progressive expansion rates (1, 2, 4) to establish an exponentially growing receptive field. This configuration enables effective extraction of multi-scale temporal features from physiological signals. While TCN excels at capturing local morphological patterns in ECG waveforms and EMG bursts, its performance exhibits limitations in modeling long-term dependencies that characterize fatigue development. The structurally fixed receptive field, despite dilation mechanisms, cannot adaptively extend beyond predetermined temporal ranges, resulting in inter-subject performance variations of up to 6.01%. This empirical finding demonstrates that while dilated convolutions numerically expand the temporal receptive field, they fundamentally lack the adaptivity necessary for comprehensive modeling of complex interdependencies in multimodal physiological signals.

LSTM architecture achieves superior baseline performance (83.50% accuracy) through its triple-gate control mechanism (forget, input, and output gates), which implements fine-grained regulation of information flow and effectively captures both rapid physiological signal variations and gradual fatigue progression patterns. This performance was achieved under the same standardized hyperparameter settings (Section 4.1) applied to all models, ensuring a fair basis for comparison. The training and validation loss curves for the LSTM model (Figure 9(a) and 9(b)) demonstrate stable convergence without clear indications of overfitting under these conditions. However, LSTM demonstrates a notable vulnerability: significant performance degradation on certain data patterns (particularly evident in the 63.07% accuracy observed for subject 11). This limitation stems from LSTM’s inherent sequential processing paradigm, which introduces optimization challenges when processing highly non-stationary signals with multi-scale temporal features. The sequential parameter update mechanism can trap the model in local optima where the gating mechanism becomes excessively selective, failing to maintain optimal balance between short-term feature detection and long-term memory retention.

GRU architecture, despite its computational efficiency advantage through the dual-gate structure, achieves only moderate classification performance (74.21% accuracy). The model exhibits substantial subject-specific variability, ranging from 78.28% accuracy for subject 9 to 69.99% for subject 2. This 8.29% performance disparity reflects a fundamental structural limitation: the consolidated gating mechanism creates optimization coupling between historical information retention and current input integration. This coupling becomes problematic when processing signals with heterogeneous temporal dynamics. When modeling multi-scale modulation patterns during fatigue progression, GRU’s parameter optimization converges toward suboptimal compromise solutions that cannot simultaneously address the diverse temporal requirements of ECG and EMG signals.

Transformer architecture, despite its theoretical advantages in modeling arbitrary dependencies through self-attention mechanisms, demonstrates the lowest performance (55.04% accuracy) in our specific application context. This unexpected result warrants careful analysis of the potential challenges when applying standard Transformer implementations to physiological signal processing. Several factors may contribute to this performance gap: (1) self-attention’s global distribution approach may not optimally align with the predominantly local correlation structure of physiological signals without appropriate modifications; (2) conventional position encoding schemes designed for discrete sequences may need adaptation to better represent the continuous temporal characteristics of ECG and EMG signals; and (3) standard multi-head attention’s feature subspace division may benefit from domain-specific adjustments to preserve coherent physiological signal patterns.

The observed performance variability (standard deviation 5.12%) suggests that standard Transformer architectures as implemented in our study face challenges with physiological signal analysis. However, this does not necessarily indicate an inherent limitation of attention-based approaches for all time-series applications. Future exploration could investigate architectural adaptations specifically designed for physiological signals, including modified positional encoding strategies and attention mechanisms that better accommodate the continuous nature of ECG and EMG data. The performance differences observed in our experiments highlight an opportunity for domain-specific architectural innovations that might better leverage the potential strengths of attention mechanisms for fatigue monitoring applications.

TCN–LSTM Hybrid Model Analysis

The proposed TCN–LSTM hybrid architecture demonstrates superior classification efficacy (89.20% accuracy) through the systematic integration of complementary architectural strengths in a dual-pathway parallel processing framework. From a structural perspective, the CNN pathway implements hierarchical feature extraction via multi-scale convolutional kernels (dimensions 5, 7, and 9) with progressive dilation rates (1, 2, 4) that facilitate exponential expansion of the receptive field without parametric increase, while residual connections ensure efficient gradient propagation for learning deep feature hierarchies. Concurrently, the LSTM pathway employs a four-layer bidirectional structure with 512 hidden units and a sophisticated three-gate control system (forget, input, and output gates) that implements precise regulation of information flow for comprehensive temporal dependency modeling. A critical design innovation lies in the differentiated pooling strategy—max pooling in the LSTM branch selectively retains salient temporal features essential for detecting acute fatigue indicators, while average pooling in the CNN branch preserves statistical distribution characteristics necessary for monitoring gradual fatigue progression patterns. The feature fusion mechanism integrates deep-learned representations (256-dimensional vectors from each pathway) with domain-specific physiological parameters (72 ECG features and 8 EMG features), establishing a comprehensive 592-dimensional feature representation processed through a 1024-unit fully connected layer with exponential linear unit activation and dropout regularization (p = 0.2). 
As evidenced by Table 5, the hybrid architecture consistently outperforms baseline models across all evaluation metrics, with close agreement among accuracy (89.20%), precision (89.27%), recall (89.20%), and F1-score (89.16%), while the AUC value of 93.29% further validates the model’s robust discriminative capability, surpassing the next best performer (LSTM with 89.07%) by 4.22 percentage points.

Model Ablation Study and Component Contribution Analysis

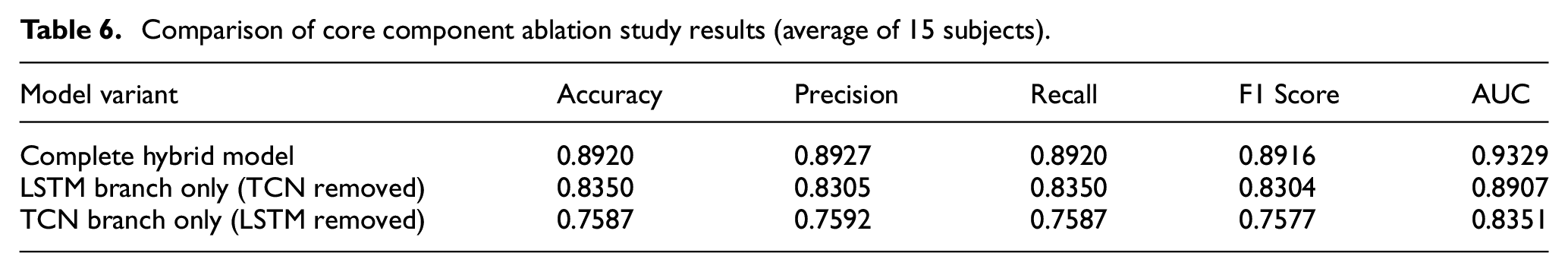

To evaluate the contribution of core components in the TCN–LSTM hybrid model to overall performance, we constructed an ablation study framework based on existing experimental data. By comparing model performance under different structural configurations, we examined the impact of the dual-pathway feature extraction architecture on fatigue state recognition capability. This ablation analysis helps validate the rationale of the hybrid architecture design while providing empirical evidence for understanding the model’s internal mechanisms.

In our core component contribution analysis, we used the complete TCN–LSTM hybrid model as the reference and examined performance changes after removing either the TCN branch or the LSTM branch. The variant without the TCN branch corresponds to the standalone LSTM model, while the variant without the LSTM branch corresponds to the standalone TCN model. By comparing the performance differences between these reduced variants and the complete hybrid model, we quantitatively assessed the contribution of each core branch.

As shown in Table 6, removing the TCN branch resulted in performance decreases across all evaluation metrics: accuracy declined from 89.20% to 83.50%, a reduction of 5.70 percentage points; precision decreased by 6.22 percentage points; F1 score dropped by 6.12 percentage points; and AUC value decreased by 4.22 percentage points. These metric changes indicate that the TCN branch plays a crucial role in capturing local time-varying features of physiological signals. When processing ECG R-wave characteristics and rapid power fluctuations in EMG signals, the TCN branch provides effective feature extraction capabilities through multi-scale receptive fields enabled by dilated convolutions.

Table 6. Comparison of core component ablation study results (average of 15 subjects).

When the LSTM branch was removed, model performance exhibited more significant decreases: accuracy declined from 89.20% to 75.87%, a reduction of 13.33 percentage points; precision decreased by 13.35 percentage points; F1 score dropped by 13.39 percentage points; and AUC value decreased by 9.78 percentage points. This validates the LSTM branch’s critical contribution in establishing long-term dependencies. In fatigue state recognition tasks, the LSTM branch captured long-term trends in physiological signals through its gating mechanism, modeling progressive changes in heart rate variability and spectral migration features during muscle fatigue processes.

Analyzing these performance decrements reveals that removing different branches impacts model performance to varying degrees, highlighting the complementary nature of the two branches in feature extraction: the TCN branch primarily captures local time-varying features, while the LSTM branch focuses on modeling long-term dependencies. Their combination provides a complete feature representation space for fatigue state recognition.
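
The accuracy decrements quoted in this ablation analysis can be reproduced from the mean accuracies reported for the three configurations. The short sketch below performs only that arithmetic; the dictionary keys and the helper `drop` are illustrative names.

```python
# Mean accuracies (%) for the three configurations, as reported in the text.
accuracy = {"hybrid": 89.20, "lstm_only": 83.50, "tcn_only": 75.87}

def drop(full, ablated):
    """Performance decrease in percentage points, rounded to two decimals."""
    return round(full - ablated, 2)

tcn_contribution = drop(accuracy["hybrid"], accuracy["lstm_only"])   # TCN branch removed
lstm_contribution = drop(accuracy["hybrid"], accuracy["tcn_only"])   # LSTM branch removed

print(tcn_contribution)   # 5.7
print(lstm_contribution)  # 13.33
```

The larger decrement when the LSTM branch is removed is what the text refers to as the asymmetric contribution of the two branches.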

In terms of performance stability, the complete hybrid model also demonstrated advantages: its accuracy standard deviation across 15 subjects was 0.89%, compared to 2.58% for the LSTM-only variant and 1.41% for the TCN-only variant. The dual-pathway parallel feature extraction architecture not only enhanced model recognition accuracy but also strengthened its adaptability to individual variations.

From an architectural design perspective, the performance of the TCN–LSTM hybrid model stems from its feature extraction structure. The TCN branch, through causal convolutions and dilation mechanisms, constructs multi-scale receptive field coverage, effectively capturing local time-varying features of physiological signals. The LSTM branch, through its gated regulatory mechanism, achieves modeling of long-term dependencies. In our hybrid architecture, dual-pathway features are fused through feature concatenation and processed using a differentiated pooling strategy that considers the statistical characteristic differences between feature branches, providing complementary feature representations.

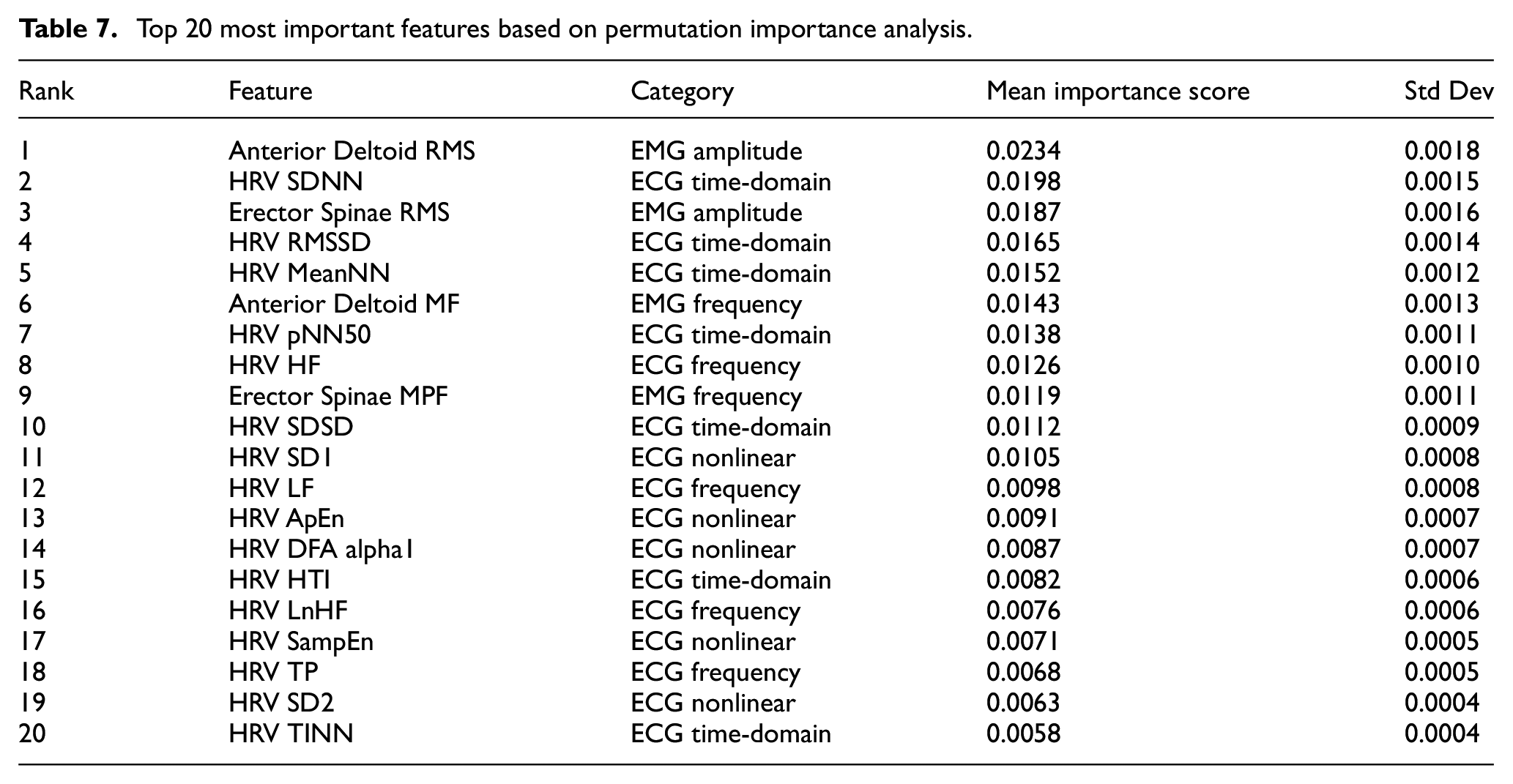

While architectural design provides the computational framework for effective feature extraction, understanding which specific physiological signals drive the model’s predictions is equally crucial for clinical applications. To complement our architectural ablation analysis, we conducted a comprehensive permutation importance analysis on all input features, providing feature-level interpretability of the TCN–LSTM hybrid model.

The permutation importance method evaluates feature contribution by measuring performance degradation when each feature’s values are randomly shuffled. For each feature, we performed 10 permutations and calculated the mean accuracy decrease across all subjects. This approach captures both linear and nonlinear feature relationships while providing directly interpretable importance scores (Table 7).
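
The permutation procedure described above can be sketched in a few lines. This is a simplified, single-dataset illustration rather than the pipeline used in the study: the toy data, the threshold "model", the fixed seeds, and the function names are all assumptions, and averaging across subjects is omitted.

```python
import random

def accuracy(model, X, y):
    """Fraction of samples for which the model's prediction matches the label."""
    return sum(model(row) == label for row, label in zip(X, y)) / len(y)

def permutation_importance(model, X, y, n_repeats=10, seed=0):
    """Mean accuracy decrease when each feature column is shuffled n_repeats times."""
    rng = random.Random(seed)
    baseline = accuracy(model, X, y)
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)  # break the link between feature j and the labels
            X_perm = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            drops.append(baseline - accuracy(model, X_perm, y))
        importances.append(sum(drops) / n_repeats)
    return importances

# Toy data: feature 0 fully determines the label, feature 1 is pure noise.
data_rng = random.Random(42)
X = [[data_rng.random(), data_rng.random()] for _ in range(200)]
y = [int(row[0] > 0.5) for row in X]

def model(row):
    """Stand-in for a trained classifier; it only looks at feature 0."""
    return int(row[0] > 0.5)

importances = permutation_importance(model, X, y)
print(importances)  # large value for feature 0, exactly 0.0 for feature 1
```

Because the stand-in model ignores feature 1, shuffling it leaves accuracy unchanged, while shuffling feature 0 reduces accuracy to roughly chance level.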

Table 7. Top 20 most important features based on permutation importance analysis.

The analysis reveals that EMG amplitude features emerge as the most influential predictors, with Anterior Deltoid RMS achieving the highest importance score. This finding validates that the TCN branch’s multi-scale convolutions effectively capture critical muscle activation patterns. Among ECG features, time-domain HRV parameters (SDNN, RMSSD, MeanNN) demonstrate consistently high importance, confirming that the LSTM branch’s temporal modeling successfully extracts autonomic regulation patterns central to fatigue assessment.

The physiological significance of these findings warrants further elaboration. The prominence of Anterior Deltoid RMS (importance score: 0.0234) as the top predictor aligns with established exercise physiology principles: during cycling, upper body muscles play a crucial compensatory role in maintaining posture and stability as lower extremity fatigue progresses. The anterior deltoid, in particular, exhibits increased activation to stabilize the torso and maintain handlebar grip when core and lower body fatigue accumulates. This compensatory recruitment pattern makes upper body EMG amplitude a sensitive early indicator of overall fatigue state.

Similarly, the high importance of HRV time-domain parameters (SDNN: 0.0198, RMSSD: 0.0165) reflects the well-documented relationship between autonomic nervous system modulation and exercise-induced fatigue. SDNN captures overall heart rate variability, which progressively decreases as sympathetic activation increases during fatigue development. RMSSD specifically reflects parasympathetic withdrawal, a hallmark of increasing exercise stress. The prominence of these features validates that our model successfully captures the interplay between peripheral muscle fatigue (EMG) and central cardiovascular adaptation (HRV) that characterizes exercise-induced fatigue progression.
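
For reference, the time-domain quantities discussed above have simple closed-form definitions. The sketch below computes them from a hypothetical series of NN (normal-to-normal) intervals in milliseconds and a hypothetical EMG window; using the sample standard deviation (n − 1 denominator) for SDNN is an assumption, as conventions differ, and the feature extraction code used in the study is not shown here.

```python
import math

def mean_nn(nn_ms):
    """Mean NN interval (ms)."""
    return sum(nn_ms) / len(nn_ms)

def sdnn(nn_ms):
    """Standard deviation of NN intervals (ms); sample form with n - 1 is assumed."""
    m = mean_nn(nn_ms)
    return math.sqrt(sum((x - m) ** 2 for x in nn_ms) / (len(nn_ms) - 1))

def rmssd(nn_ms):
    """Root mean square of successive NN-interval differences (ms)."""
    diffs = [b - a for a, b in zip(nn_ms, nn_ms[1:])]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))

def rms(samples):
    """Root-mean-square amplitude of a signal window, e.g. an EMG segment."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))

# Hypothetical values for illustration only.
nn_intervals = [800, 810, 790, 800]
emg_window = [0.02, -0.05, 0.04, -0.01]

print(f"MeanNN = {mean_nn(nn_intervals):.1f} ms")
print(f"SDNN   = {sdnn(nn_intervals):.2f} ms")
print(f"RMSSD  = {rmssd(nn_intervals):.2f} ms")
print(f"EMG RMS = {rms(emg_window):.4f}")
```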

Discussion

This study presents a hybrid TCN–LSTM framework, featuring a specific parallel dual-branch architecture and a differential pooling strategy tailored for real-time fatigue monitoring during cycling activities, demonstrating superior classification performance compared to conventional single-architecture approaches. While the TCN and LSTM components themselves are well established, the contribution of this work lies in the application and rigorous evaluation of their synergistic integration through this particular parallel design for the complex task of multimodal physiological signal analysis. Through comprehensive empirical analysis, several significant findings emerge regarding the mechanistic advantages of this specific hybrid architecture for physiological signal processing.

The fundamental principle underlying our architectural design stems from the inherent characteristics of physiological signals during fatigue progression. Electrocardiographic signals exhibit structured periodicity with well-defined waveform morphology, while electromyographic signals manifest as non-stationary burst activities with complex temporal modulation patterns. These distinct signal properties create a challenging analytical scenario that single-architecture models struggle to address comprehensively. Our parallel dual-pathway design addresses this challenge by leveraging the complementary strengths of temporal convolutional networks and recurrent neural networks, each processing the original signal simultaneously rather than sequentially.

The TCN pathway excels at extracting hierarchical local features across multiple time scales through dilated causal convolutions. This architectural mechanism establishes exponentially growing receptive fields that effectively capture physiological patterns ranging from rapid ECG waveform characteristics to slower EMG envelope variations. Concurrently, the LSTM pathway, with its sophisticated triple-gate control system, models long-term dependencies and state transitions in both forward and backward directions. This bidirectional processing is particularly crucial for capturing the complex temporal context in ECG rhythms and progressive EMG modulation patterns associated with fatigue development.

A critical innovation in our framework lies in the differentiated pooling strategy designed to preserve complementary aspects of physiological signals. Max pooling in the LSTM branch selectively retains salient temporal features essential for detecting acute fatigue indicators, such as sudden heart rate variations and EMG burst activities during high-intensity cycling phases. Meanwhile, average pooling in the CNN branch preserves statistical distribution characteristics necessary for monitoring gradual fatigue progression patterns that develop across multiple exercise sequences. This mechanistic division enables comprehensive representation of the multidimensional nature of fatigue states.

The performance advantages observed in our hybrid model can be mechanistically explained through feature representation complementarity. When processing ECG signals, the TCN branch effectively captures R-wave morphological features, QT interval variations, and temporal pattern disruptions associated with cardiac adaptations to exercise stress. Simultaneously, the LSTM branch models progressive heart rate variability changes that reflect autonomic nervous system modulation during fatigue development. For EMG signals, the TCN component extracts spectral migration features indicating muscle fiber conduction velocity changes, while the LSTM component tracks motor unit recruitment pattern evolution and muscle activation level trends across exercise sequences.

Our ablation analysis reveals asymmetric contributions from the two pathways, with the LSTM branch providing a more substantial contribution to overall performance than the TCN branch: removing the LSTM branch reduces accuracy by 13.33 percentage points, whereas removing the TCN branch reduces it by 5.70 percentage points. This finding suggests that long-term dependency modeling plays a more critical role than local feature extraction in fatigue state discrimination for this specific task. It is noteworthy that the LSTM-only baseline itself achieved a robust accuracy of 83.50% (Table 5). Nevertheless, the addition of the TCN branch in a parallel configuration, yielding the complete hybrid model, resulted in a further improvement of 5.70 percentage points in accuracy (to 89.20%). Taken together, the substantially higher performance of the complete hybrid model compared to either standalone component demonstrates that the complementary nature of these feature extraction mechanisms is essential for comprehensive fatigue assessment.

The unexpected underperformance of the Transformer architecture in our experiments merits mechanistic consideration. Despite theoretical advantages for capturing global dependencies, standard self-attention mechanisms appear fundamentally misaligned with the predominantly local correlation structure of physiological signals. The continuous nature of ECG and EMG signals contrasts with the discrete sequential data for which Transformer architectures were originally optimized. Furthermore, the absence of inherent inductive biases in pure attention-based models may prevent effective learning of the periodic patterns characteristic of physiological signals during cyclical activities like pedaling.

From a physiological perspective, fatigue during cycling activities manifests through complex interactions between central and peripheral mechanisms. Central fatigue involves altered motor unit recruitment patterns, reduced cortical drive, and modified neuromuscular junction transmission, while peripheral fatigue encompasses metabolic substrate depletion, ionic imbalances, and excitation–contraction coupling impairment. These mechanisms produce distinctive temporal signatures in physiological signals across different time scales, from millisecond-level alterations in muscle fiber action potentials to minute-level changes in cardiac autonomic modulation. The dual-pathway architecture provides a computational framework aligned with this multiscale physiological reality, enabling more comprehensive modeling of fatigue development mechanisms.

The computational complexity implications of our parallel architecture warrant consideration. While the dual-pathway design increases the number of parameters and theoretical computational cost compared to single-architecture approaches, the improved learning efficiency resulting from complementary feature extraction mechanisms partially offsets this disadvantage. The hybrid model requires fewer training epochs to achieve superior performance, suggesting that the synergistic integration of TCN and LSTM components creates a more favorable optimization landscape. This balanced approach to model design prioritizes physiological signal representation quality over computational efficiency, which aligns with the critical nature of accurate fatigue monitoring for athletic performance optimization and injury prevention.

Beyond performance metrics, our hybrid framework advances the state-of-the-art in physiological signal processing by enabling more nuanced fatigue state discrimination compared to binary classification approaches. The ability to distinguish between four distinct fatigue levels enables more precise intervention strategies that can be tailored to specific fatigue progression stages. This granularity is particularly valuable in endurance sports where optimal performance depends on maintaining appropriate physiological loading without exceeding individual fatigue thresholds.

Integration of our fatigue monitoring system with training management platforms could enable data-driven decision-making for coaches and sports scientists, facilitating evidence-based approaches to endurance sport training. The real-time capabilities of the proposed framework, coupled with the non-invasive nature of the intelligent garment system, provide practical advantages for field implementations compared to laboratory-constrained fatigue assessment methods. Additionally, the insights gained from model interpretation could advance fundamental understanding of fatigue mechanisms and individual variations in fatigue manifestation patterns, potentially informing personalized training prescription approaches.

Conclusion and Future Perspectives

In this paper, we address the challenge of real-time fatigue monitoring during cycling activities utilizing physiological signals. Conventional single-architecture approaches are inherently limited in modeling the multi-scale temporal dynamics present in ECG and EMG signals during fatigue progression. To overcome these constraints, we propose a TCN–LSTM hybrid framework that combines the strengths of convolutional and recurrent architectures through a dual-pathway parallel processing structure. The framework implements a differential pooling mechanism wherein max pooling in the LSTM branch selectively preserves salient temporal features for acute fatigue detection, while average pooling in the TCN branch maintains statistical distributions essential for monitoring gradual fatigue progression. Comprehensive experimental validation with 15 subjects demonstrates that our proposed hybrid model achieves 89.20% accuracy, significantly outperforming baseline architectures including LSTM (83.50%), TCN (75.87%), GRU (74.21%), and Transformer (55.04%). The model exhibits strong cross-metric consistency and robust generalization capability across individual physiological variations. This hybrid deep learning framework, integrated with our intelligent garment system featuring strategically positioned electrodes, provides an effective approach to real-time fatigue monitoring with substantial potential for applications in sports science, performance optimization, and injury prevention.
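The differential pooling step summarized above can be illustrated with a minimal sketch. It assumes each branch emits one feature vector per time step; the function names, the toy activations, and the plain concatenation used for fusion are hypothetical stand-ins chosen for illustration and are not taken from the trained model.

```python
from statistics import fmean


def max_pool_over_time(seq):
    """Per feature, keep the largest activation across all time steps."""
    return [max(column) for column in zip(*seq)]


def avg_pool_over_time(seq):
    """Per feature, keep the mean activation across all time steps."""
    return [fmean(column) for column in zip(*seq)]


def fuse_branches(lstm_out, tcn_out):
    """Concatenate the pooled LSTM and TCN summaries (assumed fusion rule)."""
    return max_pool_over_time(lstm_out) + avg_pool_over_time(tcn_out)


# Toy branch outputs: 4 time steps x 2 features each (made-up values).
lstm_out = [[0.1, 0.0], [0.9, 0.2], [0.2, 0.1], [0.1, 0.3]]  # brief spike at step 2
tcn_out = [[0.2, 0.4], [0.4, 0.4], [0.6, 0.4], [0.8, 0.4]]   # slow upward drift

fused = fuse_branches(lstm_out, tcn_out)
print(fused)
```

In this toy case, max pooling retains the short spike in the recurrent branch that averaging would dilute, while average pooling summarizes the overall level of the slowly drifting convolutional features.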

While our study demonstrates promising results, we acknowledge several methodological limitations that should be addressed in future research. Primarily, the laboratory setting, while essential for controlling variables and ensuring signal quality, does not fully capture the complexity of outdoor cycling environments. Outdoor cycling introduces additional variables such as varying terrain gradients, wind resistance, temperature fluctuations, and psychological factors associated with real-world cycling that may influence physiological responses and fatigue progression patterns.

Furthermore, while the Borg rating of perceived exertion (RPE) scale provides a validated measure of fatigue, future research should explore multi-modal fatigue assessment approaches that combine subjective ratings with objective physiological markers. We envision implementing a comprehensive fatigue monitoring protocol that integrates intermittent oxygen consumption measurements, blood lactate threshold analysis, heart rate recovery patterns, and near-infrared spectroscopy for muscle oxygenation assessment. This integrated approach would provide more robust ground truth data for model training and validation, potentially improving classification accuracy while reducing reliance on subjective measures. The correlation between these objective markers and our model’s predictions could further validate the physiological relevance of the extracted features and enhance the clinical applicability of the system.

Additionally, while our current ablation study provides architectural-level interpretability, more detailed feature-level importance analysis is needed to fully address the “black box” nature of deep learning models in physiological signal processing. Future work should implement advanced interpretability techniques such as permutation importance analysis, SHAP (SHapley Additive exPlanations) values, and integrated gradients to quantify the contribution of individual ECG and EMG features to fatigue state classification. Such analyses would not only enhance model transparency but also establish stronger connections between model decisions and specific physiological indicators of fatigue, potentially revealing novel biomarkers with clinical relevance.
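As an illustration of the first of these techniques, the following sketch computes permutation importance for a toy classifier. It assumes importance is measured as the mean drop in accuracy after shuffling one feature column; the threshold rule `toy_model`, the two-feature dataset, and all function names are hypothetical placeholders for the trained network and the real ECG and EMG features.

```python
import random


def accuracy(model, X, y):
    """Fraction of samples for which the model predicts the true label."""
    return sum(model(row) == label for row, label in zip(X, y)) / len(y)


def permutation_importance(model, X, y, n_repeats=20, seed=0):
    """Mean accuracy drop per feature when that feature's column is shuffled."""
    rng = random.Random(seed)
    baseline = accuracy(model, X, y)
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)  # break the link between feature j and the labels
            X_perm = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            drops.append(baseline - accuracy(model, X_perm, y))
        importances.append(sum(drops) / n_repeats)
    return importances


# Hypothetical data: feature 0 determines the label, feature 1 is pure noise.
X = [[0.1, 0.7], [0.2, 0.3], [0.3, 0.9], [0.4, 0.1],
     [0.6, 0.8], [0.7, 0.2], [0.8, 0.6], [0.9, 0.4]]
y = [0, 0, 0, 0, 1, 1, 1, 1]


def toy_model(row):
    """Stand-in classifier: thresholds feature 0 and ignores feature 1."""
    return int(row[0] > 0.5)


importances = permutation_importance(toy_model, X, y)
print(importances)
```

Shuffling the feature that the stand-in model ignores leaves accuracy unchanged, so its importance is zero, whereas shuffling the informative feature lowers accuracy. Applied to the actual model, the same procedure would rank the ECG and EMG inputs by their contribution to fatigue state classification.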

Future studies should extend this research by validating the hybrid framework in naturalistic outdoor environments to enhance ecological validity. This validation could employ a multi-stage approach: (1) controlled outdoor experiments on standardized cycling tracks to isolate specific environmental variables; (2) real-world cycling tests incorporating GPS data to correlate terrain features with physiological responses; and (3) development of environmental compensation algorithms that adjust model predictions based on contextual factors such as temperature, humidity, and elevation changes. Additionally, longitudinal studies tracking fatigue patterns across multiple sessions could provide insights into adaptation processes and individual variability factors.

Beyond validation methodologies, system optimization for practical deployment requires addressing hardware constraints. While the proposed TCN–LSTM model demonstrates superior accuracy, its computational complexity compared to simpler models necessitates careful consideration for deployment on resource-constrained wearable devices. Energy consumption studies should be conducted to ensure long-term usability, potentially incorporating power management strategies that extend operational duration during extended cycling sessions. Hardware-level improvements such as the adoption of flexible PCB technology could significantly enhance wearing comfort and reduce system bulk, making the monitoring device less obtrusive while maintaining signal quality. In addition to these hardware-level improvements, future work must also focus on model-level optimizations.

Specifically, we plan to systematically investigate model compression techniques. These include structured weight pruning to reduce the number of model parameters and quantization to decrease model size, memory footprint, and computational load, which are critical for efficient execution on microcontrollers. Knowledge distillation, where a smaller “student” model is trained to mimic the performance of our more complex “teacher” model, will also be explored. Furthermore, we will evaluate the use of efficient deep learning frameworks optimized for embedded systems, such as TensorFlow Lite for Microcontrollers (TFLM), and investigate architectural refinements, potentially including more compact TCN variants that have shown promise in low-power applications. A thorough evaluation of the trade-offs between model accuracy, computational cost (latency and power consumption), and memory footprint will be essential to determine the most viable path for practical on-device deployment in real-time wearable fatigue monitoring systems. This miniaturization and optimization approach would be particularly beneficial for competitive cycling applications where equipment weight, aerodynamics, and battery life are crucial considerations.
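To make the first two compression techniques concrete, the sketch below applies structured pruning and 8-bit quantization to a toy weight matrix. It assumes that pruning removes whole filters (rows) ranked by L2 norm and that quantization uses a simple symmetric per-tensor scale; both choices, the function names, and the weight values are illustrative assumptions and do not reproduce the TFLM toolchain or any procedure already applied to our model.

```python
import math


def prune_filters(weights, keep_ratio):
    """Structured pruning: keep only the rows (filters) with the largest L2 norm."""
    n_keep = max(1, round(len(weights) * keep_ratio))
    norms = [math.sqrt(sum(w * w for w in row)) for row in weights]
    ranked = sorted(range(len(weights)), key=lambda i: norms[i], reverse=True)
    kept_idx = sorted(ranked[:n_keep])  # preserve the original filter order
    return [weights[i] for i in kept_idx], kept_idx


def quantize_int8(values):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(v / scale))) for v in values]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float values from the stored integers."""
    return [qi * scale for qi in q]


# Toy layer with 4 filters of 2 weights each (made-up values).
weights = [[0.5, -1.0], [0.01, 0.02], [2.0, 0.3], [-0.05, 0.0]]

kept, kept_idx = prune_filters(weights, keep_ratio=0.5)
flat = [w for row in kept for w in row]
q, scale = quantize_int8(flat)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(flat, restored))
print(kept_idx, q, max_error)
```

Pruning halves the number of filters in this example, and each remaining weight is stored in one byte plus a shared scale. The rounding error per weight is bounded by half the scale, which is the kind of accuracy cost that the planned trade-off evaluation would need to quantify on the full model.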

Footnotes

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was funded by the Fundamental Research Funds for the Central Universities (Grant No. 2232025G-08), China Scholarship Council (Grant No. 202206630002), and International Cooperation Fund of Science and Technology Commission of Shanghai Municipality (Grant No. 21130750100).

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.