Abstract

Because manual defect detection in the textile industry is inefficient, inaccurate, and increasingly costly, online visual inspection for fabric defects has emerged as an essential and promising research area. However, challenges such as the lack of defective samples and issues with industrial deployment still persist. This paper presents a novel defect detection technique based on deep learning, which primarily comprises two frameworks. First, we design an improved generative adversarial network with an encoder–decoder architecture to address the paucity of requisite defective samples. We use defect-free samples as input to the generator, ensuring that the generated defect samples maintain a similar pattern. We mitigate the vanishing gradient problem by using the Wasserstein distance as the loss function. Second, we enhance the Single Shot MultiBox Detector network by introducing Inception modules and feature fusion to detect defects across different scales. The AdaBound optimizer is selected to update the model parameters. We compare the proposed approach with other methods on self-generated fabric data sets that are partially produced by our generative adversarial network model. An online defect detection system is proposed to capture fabric images and evaluate the approach in a production environment. Experiments demonstrate the superior performance of the proposed approach, which achieves 97.5% accuracy in real time and is therefore well suited for industrial application.

Introduction

Fabric surface inspection is a critical task in the textile industry, whether from the perspective of quality or cost. 1 Typically, this task is carried out manually, which is labor-intensive and costly. Moreover, it is hard to set a unified standard because defects are judged subjectively. Therefore, there is a pressing need to replace manual detection with automatic industrial methods, such as machine vision. 2

Traditional machine vision methods that use image processing rely on handcrafted features combined with machine learning techniques. 3 These can be divided into statistics-based, 4 spectrum-based, 5 and model-based 6 methods. While traditional methods can achieve good detection results, they are sensitive to the lighting environment, and their performance depends on parameter adjustment. In recent years, deep learning methods have performed well in the field of computer vision by capturing complex nonlinear relationships. 3,7 However, deep learning approaches require a large number of fabric defect samples, which are difficult to obtain from the industrial site. In addition, real-time detection should be conducted during production to identify problems promptly, whereas most detection methods are still offline.

In this paper, we present a novel approach for synthesizing defect samples using a generative adversarial network (GAN) with an encoder–decoder architecture. In contrast to traditional data augmentation methods like random cropping, rotation, and scaling, GANs 8 have shown great potential in generating high-quality synthetic data that closely resemble the original data. Our approach uses defect-free samples as input to the generator, ensuring that the generated defect samples preserve similar patterns. Next, inspired by the Single Shot MultiBox Detector 9 (SSD) model, we propose a refined framework to detect defects with greater accuracy. The refined framework fuses the feature maps from different scales, enabling the detection of defects of various sizes. Thus, the entire model primarily comprises two frameworks. Moreover, we design an online inspection system to evaluate the proposed methods in a real production environment. The system consists of several cameras, a light source, and a mechanical platform. The main contributions are summarized as follows:

An improved GAN model with an encoder–decoder architecture is proposed to expand and diversify the defect samples without corrupting the original textured pattern.

We propose a refined SSD model by introducing a feature pyramid structure to increase the interaction of information from different levels.

We construct an online defect detection system and demonstrate the performance of our methods in a production environment.

Related Work

Previously, traditional machine vision methods based on manually designed feature extractors were able to effectively detect simple fabric defects with better robustness and interpretability. Typical examples include the gray-level co-occurrence matrix, 10 gray histogram back-projection, 11 linear filters, and morphological methods. 12 Kang and Zhang 13 proposed a novel method that integrates the idea of the integral image into the Elo-rating algorithm (IIER) and can quickly detect defects in various fabric types. Liu et al. 14 proposed a fabric defect detection method based on template correction and primitive decomposition.

Traditional machine vision methods rely on features crafted manually by experts to identify defects, which is time-consuming and often requires domain-specific knowledge. Recently, with the advancement of computing power, deep learning methods have achieved excellent performance in many visual applications, such as classification 15 and detection. 16,17 Deep learning methods can automatically extract features from the input data, eliminating the need for manual feature engineering. Some researchers have begun to apply proven deep learning architectures like Faster R-CNN 18 and Cascade R-CNN 19 to fabric defect detection as well. 20,21 Jing et al. 22 used the nonlinear mapping ability of a radial basis function neural network to detect color fabric defects, which can identify more kinds of defects than traditional methods. Wu et al. 23 used a shallow neural network structure to detect single-color fabric defects, which effectively improves the speed of defect detection. Li et al. 24 proposed a novel feature-based attention network with sequential information for fabric defect detection. Li et al. 25 proposed a wide and compact convolutional neural network architecture for the detection of a few common fabric defects. Jeyaraj and Nadar 26 proposed a computer-aided fabric defect detection and classification algorithm using an advanced learning algorithm. Liu et al. 27 proposed a multistage GAN model to synthesize reasonable defects in new defect-free samples, which can deal with fabrics with unseen textures or materials. However, deep learning methods require a large number of defect samples for training, which can be a challenge, especially in textile applications.

The Proposed Method

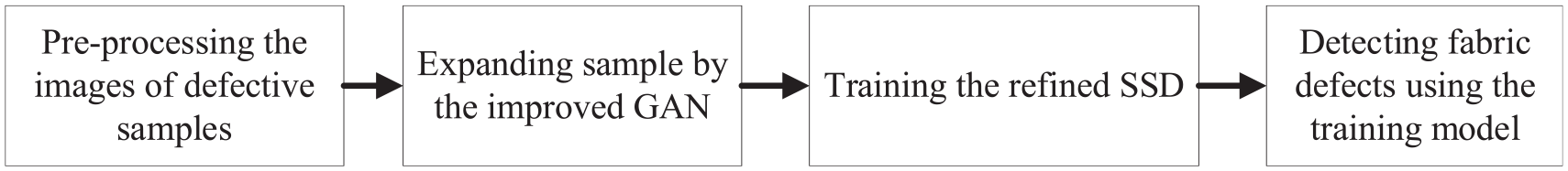

The steps of the defect detection approach are shown in Figure 1. First, the collected defect and defect-free images are pre-processed, as described in the “Experimental Settings” section under “Online Detection.” Second, the improved GAN is used to expand the defect sample set. Then, the real and generated defect samples are used to train the refined SSD model. Finally, fabric defects are detected by classifying and localizing them with the trained model.

Defect detection steps.

Improved GAN Structure

Pipeline

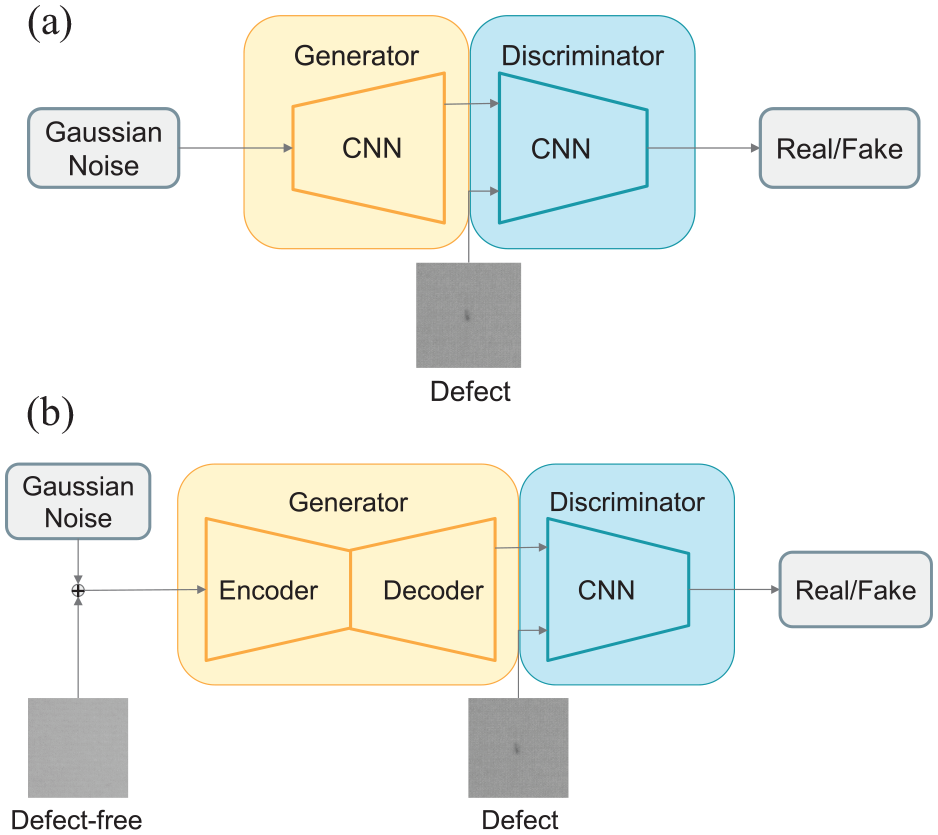

The proposed GAN framework is shown in Figure 2(b).

Pipeline of GAN framework: (a) the original GAN and (b) the modified GAN.

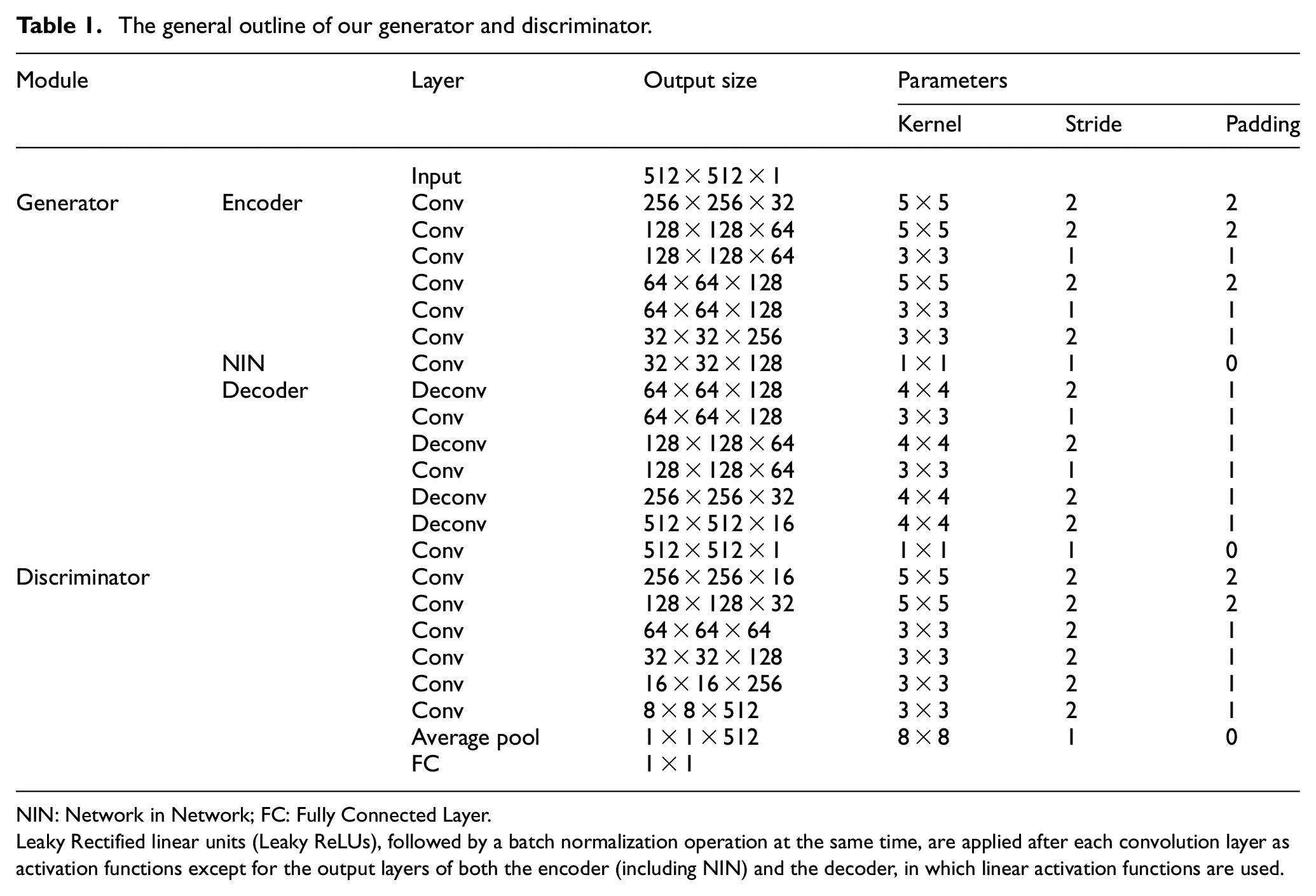

The generator of the original GAN (Figure 2(a)) converts a Gaussian distribution or other prior random distribution into the desired data distribution. Given the prominent textured pattern of the fabric, we have incorporated an additional transformation network before the original generator. Using defect-free samples as the input, the new generator has been devised as an encoder–decoder architecture that maps the defect-free sample to a latent space of the texture pattern. This enables the generated defect samples to maintain a pattern that closely resembles the actual ones. Meanwhile, the discriminator, composed of a sequence of convolution layers, classifies the input data as real or fake. These two modules are learned in an adversarial and unsupervised manner. To enhance the network’s robustness against noise and corrupted input samples, we add Gaussian noise to the input training samples of the generator. The Network in Network (NIN) module, which significantly enhances the nonlinear performance of the entire network, is introduced to address the problem of mode collapse. Furthermore, 1 × 1 convolution kernels can help reduce the network parameters, thereby accelerating the generation speed. A more detailed parameterization of our network is provided in Table 1.

The general outline of our generator and discriminator.

NIN: Network in Network; FC: Fully Connected Layer.

Leaky rectified linear units (Leaky ReLUs), each followed by a batch normalization operation, are applied as activation functions after every convolution layer, except for the output layers of both the encoder (including NIN) and the decoder, in which linear activation functions are used.
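The parameter saving attributed to 1 × 1 kernels above can be checked with simple arithmetic. The sketch below counts the learnable parameters of a single convolution layer; the channel widths are hypothetical values chosen only for illustration and are not taken from Table 1.

```python
def conv_params(kernel_size, in_channels, out_channels, bias=True):
    """Number of learnable parameters in one 2D convolution layer."""
    weights = kernel_size * kernel_size * in_channels * out_channels
    return weights + (out_channels if bias else 0)

# Hypothetical channel widths (assumption, not from the paper).
IN_CH, OUT_CH = 128, 128

params_3x3 = conv_params(3, IN_CH, OUT_CH)
params_1x1 = conv_params(1, IN_CH, OUT_CH)

print(f"3x3 conv: {params_3x3} parameters")
print(f"1x1 conv: {params_1x1} parameters")
print(f"ratio:    {params_3x3 / params_1x1:.2f}")
```

With equal channel widths, a 1 × 1 kernel needs roughly one ninth of the weights of a 3 × 3 kernel, which is the effect the text refers to.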

Loss Function

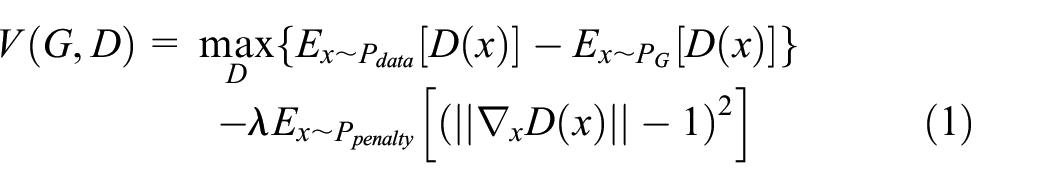

The GAN loss function is typically formulated as a minimax problem, where the generator is trained to minimize, and the discriminator to maximize, the same value function.
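For reference, the standard minimax objective of the original GAN formulation can be written as below. The notation ($G$, $D$, $V$, $p_{\text{data}}$, $p_z$) is assumed here rather than taken from this paper, and in the modified GAN the generator input is a noisy defect-free sample rather than a pure random vector $z$.

```latex
\min_{G}\,\max_{D}\; V(D, G) =
  \mathbb{E}_{x \sim p_{\text{data}}(x)}\bigl[\log D(x)\bigr]
  + \mathbb{E}_{z \sim p_{z}(z)}\bigl[\log\bigl(1 - D(G(z))\bigr)\bigr]
```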

The Jensen-Shannon (JS) divergence is commonly used in GANs. 8 However, the problem with the JS divergence is that its gradients can become very small or zero when the generated and real data distributions are very different. The Wasserstein distance measures the minimum amount of work that is required to transform one probability distribution into another. It can provide a smooth and continuous gradient, even when the distributions are very different, and has been shown to provide a more meaningful measure of distance between probability distributions. 28
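The contrast between the two distances can be seen in a toy example. The sketch below is an illustration only, not part of the proposed method: it compares two point masses on a one-dimensional grid that are `shift` cells apart. The helper names are hypothetical.

```python
import math

def js_divergence(p, q):
    """Jensen-Shannon divergence between two discrete distributions."""
    def kl(a, b):
        return sum(ai * math.log(ai / bi) for ai, bi in zip(a, b) if ai > 0)
    m = [(pi + qi) / 2 for pi, qi in zip(p, q)]
    return 0.5 * kl(p, m) + 0.5 * kl(q, m)

def wasserstein_1d(p, q):
    """Wasserstein-1 distance on a unit-spaced 1D grid (sum of |CDF_p - CDF_q|)."""
    total = cdf_p = cdf_q = 0.0
    for pi, qi in zip(p, q):
        cdf_p += pi
        cdf_q += qi
        total += abs(cdf_p - cdf_q)
    return total

def point_mass(position, size):
    """Distribution with all probability mass on one grid cell."""
    return [1.0 if i == position else 0.0 for i in range(size)]

real = point_mass(0, 10)
for shift in (1, 3, 6, 9):
    fake = point_mass(shift, 10)
    print(shift, round(js_divergence(real, fake), 4), wasserstein_1d(real, fake))
```

While the supports do not overlap, the JS divergence stays at log 2 regardless of the shift, so it gives no signal about how far apart the distributions are, whereas the Wasserstein distance grows linearly with the shift.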

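The Wasserstein distance assumes that the discriminator is Lipschitz continuous. In this paper, we introduce the Wasserstein distance with gradient penalty 29 to enforce the Lipschitz continuity constraint on the discriminator. A weighted penalty term, evaluated on samples interpolated between real and generated data, is added to the discriminator loss.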

The gradient penalty term encourages the discriminator to have a Lipschitz constant of 1, which means that its gradients are bounded by a constant factor. The gradient penalty term penalizes the discriminator if its gradients are too large or too small, which helps to ensure stable training and improve the quality of the generated samples.
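For reference, the discriminator loss of the Wasserstein GAN with gradient penalty is commonly written as below. The symbols are assumptions following the usual notation, not necessarily the paper's own: $\mathbb{P}_r$ and $\mathbb{P}_g$ are the real and generated distributions, $\hat{x}$ is a random interpolation between a real and a generated sample, and $\lambda$ is the penalty weight, whose value in this work is not stated.

```latex
L_D =
  \mathbb{E}_{\tilde{x} \sim \mathbb{P}_g}\bigl[D(\tilde{x})\bigr]
  - \mathbb{E}_{x \sim \mathbb{P}_r}\bigl[D(x)\bigr]
  + \lambda\, \mathbb{E}_{\hat{x} \sim \mathbb{P}_{\hat{x}}}
    \Bigl[\bigl(\lVert \nabla_{\hat{x}} D(\hat{x}) \rVert_2 - 1\bigr)^2\Bigr],
\qquad
\hat{x} = \epsilon x + (1 - \epsilon)\tilde{x},\quad \epsilon \sim U[0, 1]
```

The squared term is zero only when the gradient norm equals 1, which is why gradients that are either too large or too small are penalized.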

Refined SSD Structure

Overall Framework

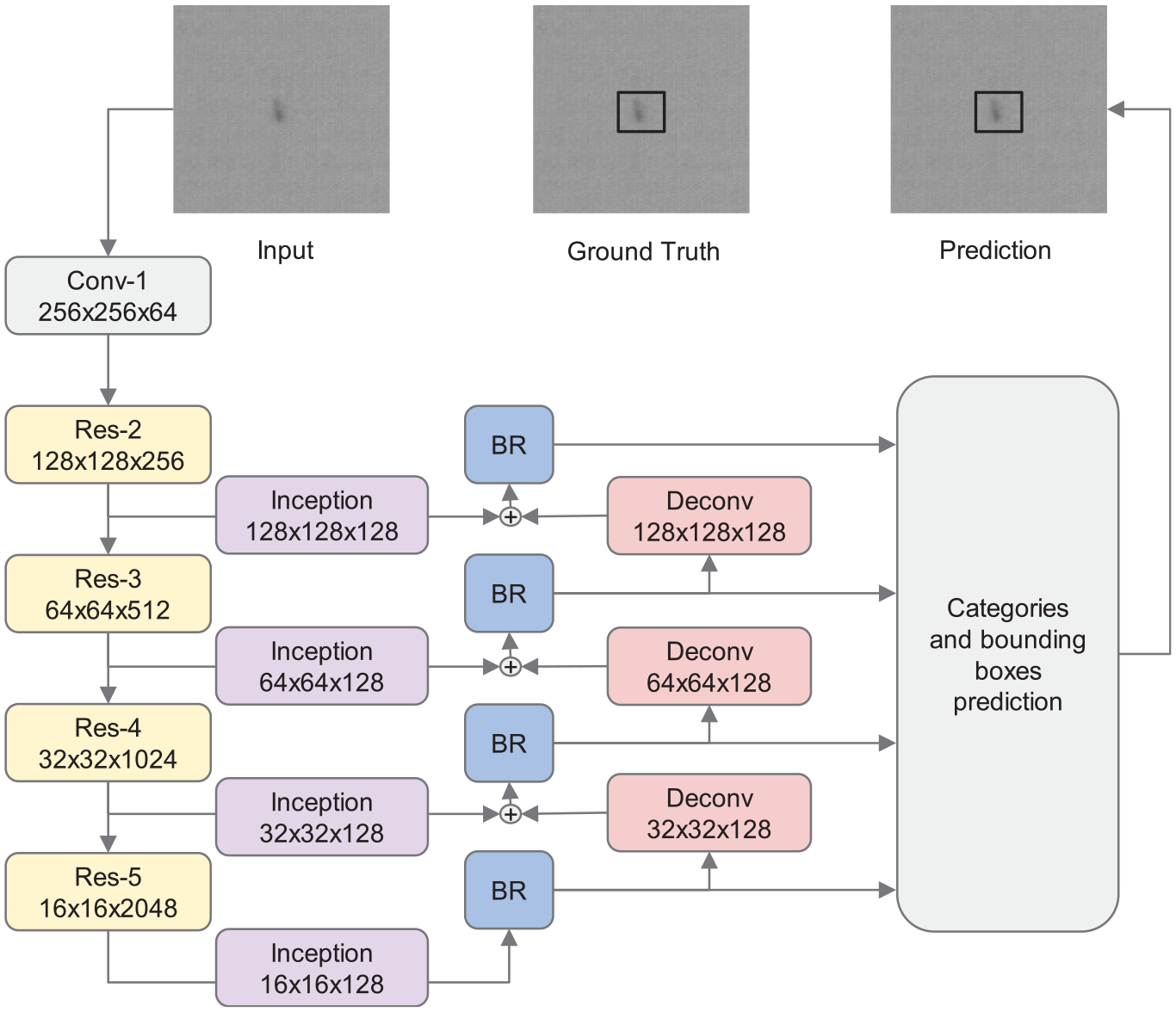

As shown in Figure 3, we propose a novel fabric defect detection model based on an improved SSD. Because SSD is a one-stage method in which bounding-box detection is completed in a single step, detection can be finished more efficiently while maintaining high accuracy. The proposed model consists of a ResNet50-based backbone, Inception modules, Boundary Refinement blocks, and an expansive path.

Overview of the proposed SSD framework.

ResNet50

We change the backbone of the original SSD from VGG16 30 to ResNet50 31 by duplicating several of its blocks and arranging them in cascade, as shown on the left of Figure 3. The purpose is to improve accuracy.

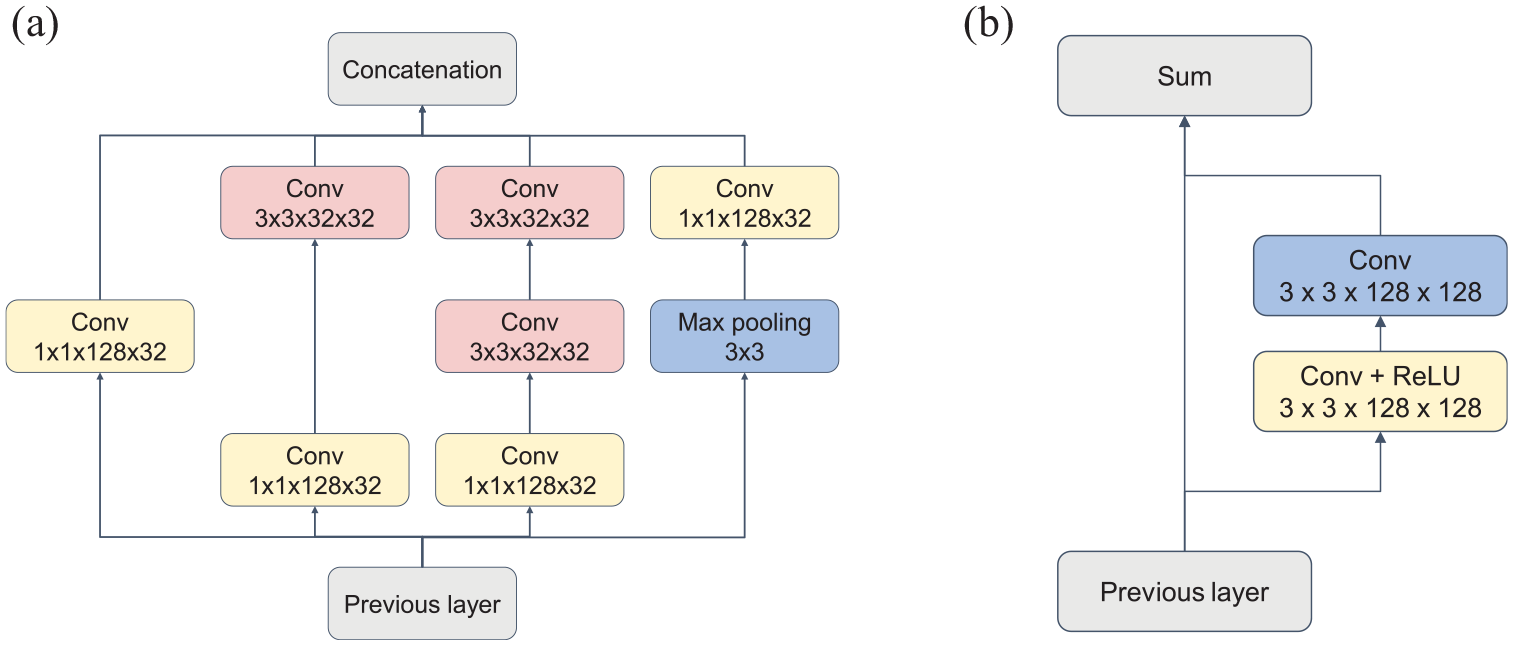

Inception

To address the issue of varying fabric defect sizes and types, we introduce Inception modules 32 into the SSD network framework. The asymmetric structure of the Inception module, illustrated in Figure 4(a), can significantly increase the nonlinearity of the network, effectively extract the fine features of defects during network training, and improve the accuracy of defect detection.

The details of the (a) Inception and (b) Boundary Refinement blocks in Figure 3.

Boundary Refinement

We also employ the Boundary Refinement block 33 as a residual structure to refine the boundary of the defect in the feature map. Details are shown in Figure 4(b).

Feature Fusion

To get multi-contextual information from detection, we adopt an expansive feature fusion path (the right part of Figure 3). This allows us to capture more semantic information from deeper layers and potentially fuse it with earlier feature maps. We employ learnable deconvolution layers and summation-based skip connections with the corresponding map filtered by Inception modules for feature fusion.

Optimization

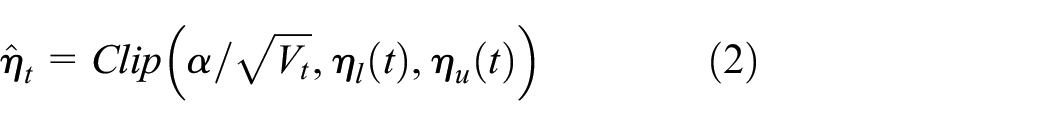

The original SSD model uses Stochastic Gradient Descent (SGD) as its optimizer, which scales the gradient uniformly in all dimensions. SGD optimizes well in the later stage of network training but converges slowly in the early stage, which makes it difficult to deal with unbalanced training samples. Another optimizer, Adam, can significantly improve the training speed of the network in the early stage, but its extreme learning rates in the later stage make it hard to converge to a good solution.

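To solve the above problems, AdaBound 34 is adopted. It clips the per-parameter learning rate of Adam between a lower and an upper bound, both of which converge to a constant final step size as training proceeds.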

In the early stage, the optimizer behaves like Adam and training is fast. In the later stage, it behaves like SGD. As a result, the optimization is stable throughout the whole training process.
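The clipping behaviour can be sketched for a single scalar parameter. This is a minimal illustration following the commonly published form of the AdaBound bounds, not the training code used in this work; the function name and all hyperparameter values (`lr`, `final_lr`, `gamma`, and so on) are assumptions.

```python
import math

def adabound_minimize(grad_fn, x0, steps=3000, lr=1e-3, final_lr=0.1,
                      beta1=0.9, beta2=0.999, gamma=1e-3, eps=1e-8):
    """Minimize a scalar function with an AdaBound-style update."""
    x, m, v = x0, 0.0, 0.0
    history = []
    for t in range(1, steps + 1):
        g = grad_fn(x)
        # Adam moment estimates.
        m = beta1 * m + (1 - beta1) * g
        v = beta2 * v + (1 - beta2) * g * g
        # Adam-style per-parameter rate with bias correction.
        adam_step = lr * math.sqrt(1 - beta2 ** t) / (1 - beta1 ** t)
        adam_rate = adam_step / (math.sqrt(v) + eps)
        # Dynamic bounds: wide at first, both approaching final_lr later.
        lower = final_lr * (1 - 1 / (gamma * t + 1))
        upper = final_lr * (1 + 1 / (gamma * t))
        rate = min(max(adam_rate, lower), upper)
        x -= rate * m
        history.append((lower, rate, upper))
    return x, history

# Toy objective f(x) = (x - 3)^2 with gradient 2 * (x - 3).
x_final, history = adabound_minimize(lambda x: 2 * (x - 3), x0=0.0)
print(round(x_final, 4))
print(history[0], history[-1])
```

At the first step the interval between the bounds is very wide, so the Adam rate is hardly constrained; by the end both bounds lie close to `final_lr`, so the update behaves like SGD with momentum.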

Experiment

In this section, we will validate the performance of the proposed detection model through a series of experiments. In addition, we also design an online inspection system to test the proposed methods in a real production environment.

Data Sets

First, we conduct contrast experiments on the Tianchi fabric defect data set 35 to better test the performance of the proposed detection model. The data set contains 9576 images with a size of 1000 × 2466 pixels, including 5913 defect images and 3663 defect-free images. There are 34 categories of defects, including holes, stains, knotted ends, rough warps, and so on.

Then, we generate sufficient samples from a small, self-built data set and validate the performance of the proposed model on an online detection system. All the images are captured by the system shown in Figure 6. The data set consists of 25 grayscale images of size 4096 × 1024 pixels captured from three different warp fabric structures, as shown in Figure 7(a). The images contain five types of common fabric defects: dirt (water stains, oil stains, etc.), spots (knots, etc.), creases, warp defects (broken yarn, loose warp, crack ends, etc.), and weft defects (lines, looped weft, etc.).

Metrics

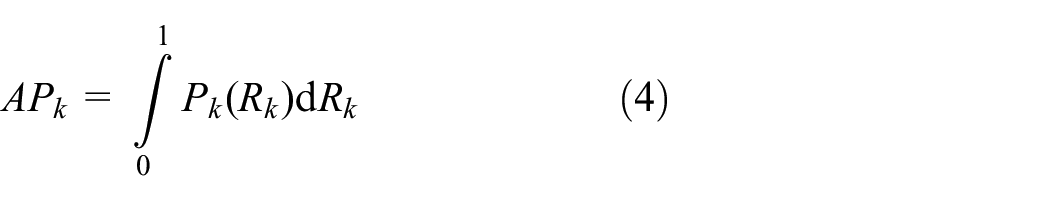

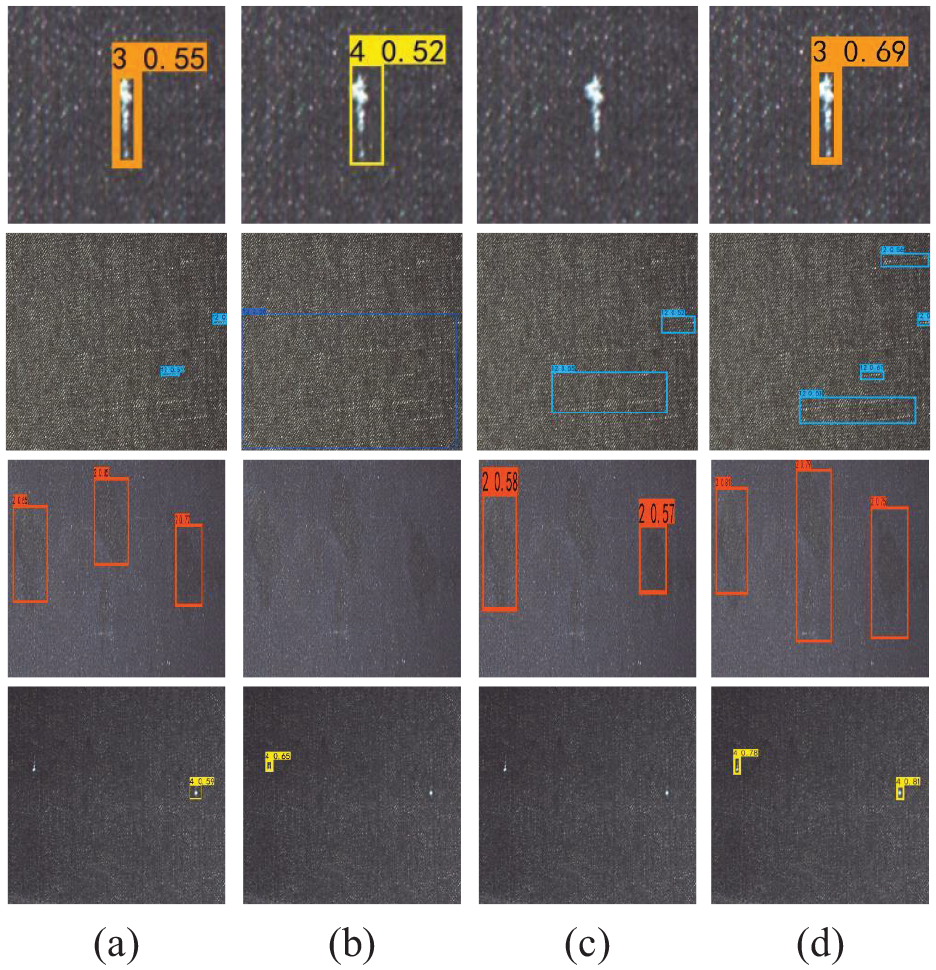

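We choose mean Average Precision (mAP) and Accuracy (ACC), which are often used in object detection, as metrics to evaluate the location accuracy of the model. In patch-wise tasks, mAP is the mean, over all defect classes, of the Average Precision (AP). The AP of a class is the area under its precision-recall curve, where precision is the fraction of detections that are correct and recall is the fraction of labeled defects that are detected.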

In cloth defect detection, we focus more on whether a defect is detected correctly than on whether the detected region perfectly overlaps the labeled region. Therefore, in the calculation of mAP, we set the Intersection over Union (IoU) threshold to 0.5; that is, a defect is considered correctly detected if the IoU between the detected region and the labeled region reaches 0.5.
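The IoU criterion and the AP of one class can be sketched as follows. This is an illustrative implementation under stated assumptions, not the evaluation code used in the experiments: boxes are given as `(x1, y1, x2, y2)`, each detection is already marked as a true or false positive at the 0.5 IoU threshold, and AP uses all-point interpolation of the precision-recall curve (the paper does not state which interpolation it uses).

```python
def iou(box_a, box_b):
    """Intersection over Union of two axis-aligned boxes (x1, y1, x2, y2)."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0

def average_precision(detections, num_ground_truth):
    """AP for one class from (score, is_true_positive) pairs."""
    if num_ground_truth == 0:
        return 0.0
    ranked = sorted(detections, key=lambda d: d[0], reverse=True)
    tp = fp = 0
    precisions, recalls = [], []
    for _, is_tp in ranked:
        if is_tp:
            tp += 1
        else:
            fp += 1
        precisions.append(tp / (tp + fp))
        recalls.append(tp / num_ground_truth)
    # Make precision monotonically non-increasing (precision envelope).
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])
    # Area under the interpolated precision-recall curve.
    ap, prev_recall = 0.0, 0.0
    for p, r in zip(precisions, recalls):
        ap += (r - prev_recall) * p
        prev_recall = r
    return ap

detected, labeled = (0, 0, 2, 2), (1, 1, 3, 3)
print(iou(detected, labeled) >= 0.5)   # counts as correct only if True
print(average_precision([(0.9, True), (0.8, False), (0.7, True)], 2))
```

mAP is then the mean of `average_precision` over all defect classes.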

In addition, we use detection time as a metric to evaluate the detection speed of the model. Frames Per Second (FPS) is used on the Tianchi data set, while the average detection time per meter is used in the online experiments, because data acquisition, transmission, and other steps also take time.

Contrast Experiments

Experimental Settings

On the Tianchi data set, we only use defect images for training, because defect images also contain a large number of defect-free regions. We split the data set into a training set and a validation set in the ratio of 9:1, that is, 5321 images for training and 592 images for validation. The models were trained and evaluated on a single NVIDIA RTX A5000 with a batch size of 16 for 300 epochs.

To show the advantages of the proposed method, we apply several common defect detection approaches, such as Jing et al.’s, 36 Liu et al.’s, 37 and Zhou et al.’s 38 works as comparison methods. The configurations are as follows:

Jing: YOLOv3 (DarkNet53 + three layers of feature pyramid + feature fusion) + SGD + NMS;

Liu: SSD (VGG16 + six layers of feature pyramid) + SGD + NMS;

Zhou: Faster RCNN (ResNet50 + FPN + RPN) + Adam + NMS;

Ours: ResNet50 + four layers of feature pyramid + Inception + boundary refinement + feature fusion + AdaBound + NMS.
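All four configurations end with non-maximum suppression (NMS), which removes duplicate boxes that cover the same defect. The sketch below shows a plain greedy NMS for a single class; the 0.5 overlap threshold and the `(score, box)` data layout are assumptions, since the settings used in the experiments are not stated.

```python
def iou(box_a, box_b):
    """Intersection over Union of two axis-aligned boxes (x1, y1, x2, y2)."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0

def nms(detections, iou_threshold=0.5):
    """Greedy NMS over (score, box) pairs; returns kept pairs, best first."""
    remaining = sorted(detections, key=lambda d: d[0], reverse=True)
    kept = []
    while remaining:
        best = remaining.pop(0)
        kept.append(best)
        # Drop every remaining box that overlaps the kept box too much.
        remaining = [d for d in remaining
                     if iou(best[1], d[1]) <= iou_threshold]
    return kept

candidates = [
    (0.90, (0, 0, 10, 10)),
    (0.80, (1, 1, 11, 11)),    # near-duplicate of the first box
    (0.70, (20, 20, 30, 30)),  # separate defect
]
for score, box in nms(candidates):
    print(score, box)
```

In a multi-class detector, this procedure is usually applied to each defect class separately.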

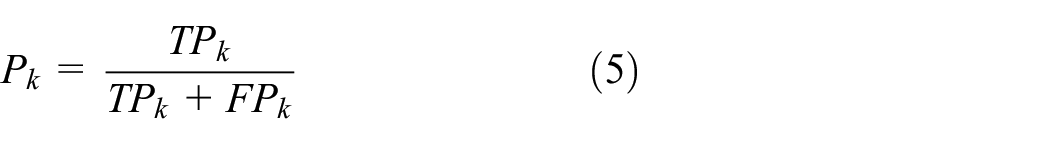

Results

The performance of the different detection methods is given in Table 2. In terms of mAP, the proposed model scores 33.21%, which indicates higher location accuracy than the other methods. In terms of detection speed, our method reaches 60 FPS, which is suitable for real-time detection. The proposed method uses multiscale feature fusion with the Inception structure, which increases the inference time but yields higher accuracy than SSD and Faster R-CNN. It also recognizes small defects better than YOLOv3 because it fuses the low-level features.

Comparison of different fabric defect detection methods on the Tianchi data set.

mAP: mean Average Precision; FPS: Frame Per Second.

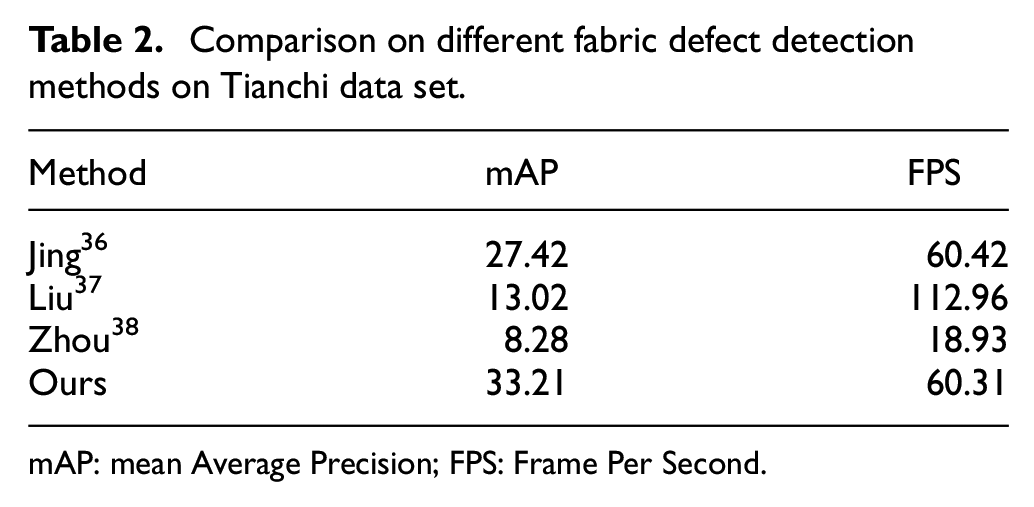

Figure 5 shows the detection results of different detection methods on the Tianchi data set. It can be seen that our method is better at detecting defects at different scales.

The detection results of different detection methods on the Tianchi data set: (a) Jing, (b) Zhou, (c) Liu, and (d) ours.

Online Detection

In order to evaluate the effectiveness of the proposed methods online, we design a fabric defect detection system.

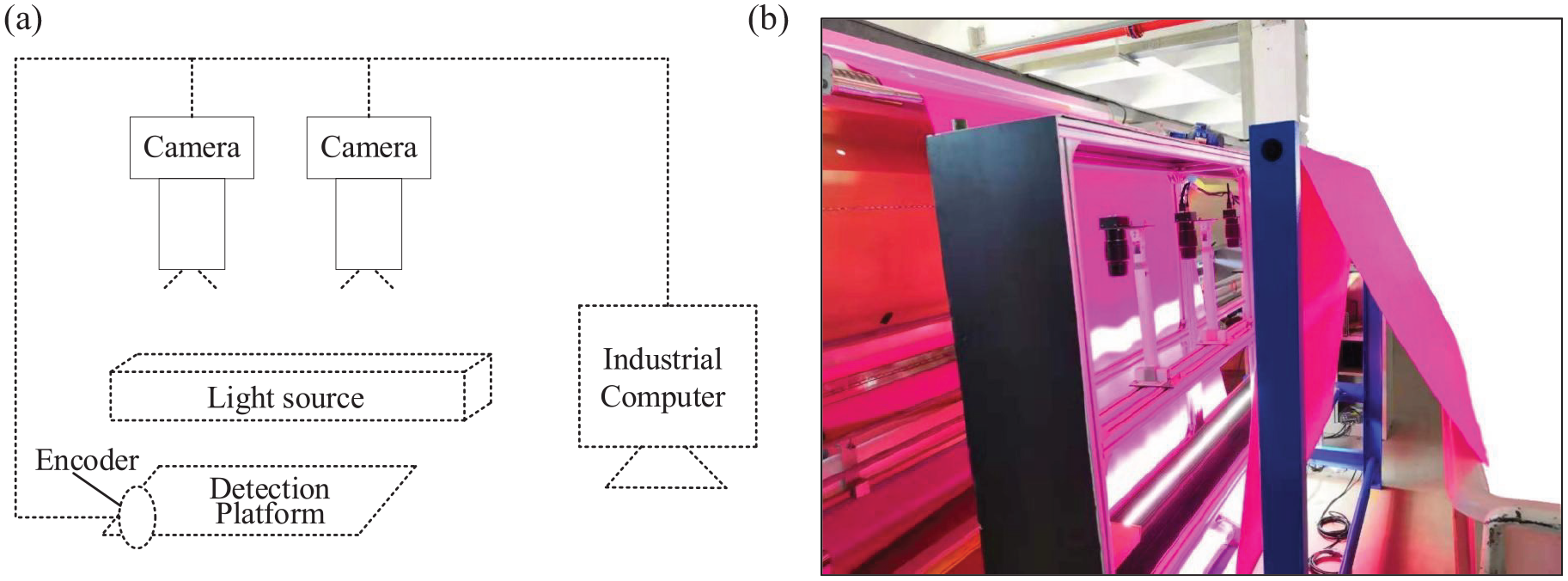

Hardware System

The components are illustrated in Figure 6. Fabric is transported mechanically on a platform. A light source mounted perpendicularly above the fabric projects uniform white light of adjustable intensity onto it. Images are captured by two 4K line-scan cameras based on CMOS sensors, and an Xtium-CL MX4 frame grabber is used for image acquisition. The total field of view of the two cameras is 2 m, while the width of the fabrics is 1.5–1.8 m. Therefore, two cameras are sufficient to cover the entire fabric. An encoder is used to trigger the image acquisition. The vertical distance between the lenses and the fabric can be adjusted according to the actual width of the fabric. Because defect detection is easily affected by image quality, the camera and light source of the detection system are installed independently of the detection platform.
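The figures above imply an approximate spatial resolution across the fabric width. The arithmetic below is a rough estimate under two assumptions that the text does not state: the 2 m field of view is split equally between the two cameras without overlap, and each scan line has 4096 pixels (consistent with the 4096-pixel image width reported for the self-built data set).

```python
TOTAL_FOV_M = 2.0        # total field of view of both cameras (from the text)
NUM_CAMERAS = 2          # from the text
PIXELS_PER_LINE = 4096   # assumed pixel count of one 4K scan line

fov_per_camera_mm = TOTAL_FOV_M / NUM_CAMERAS * 1000
mm_per_pixel = fov_per_camera_mm / PIXELS_PER_LINE
patch_width_mm = 512 * mm_per_pixel  # width covered by a 512-pixel patch

print(f"{mm_per_pixel:.3f} mm per pixel")
print(f"{patch_width_mm:.1f} mm per 512-pixel patch")
```

Under these assumptions, one pixel covers about a quarter of a millimeter of fabric in the width direction.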

Fabric detection system: (a) the components of the detection system and (b) detection system physical map.

Experimental Settings

Due to the high resolution and the small number of defective images, we randomly crop all the original images into patches of 512 × 512 pixels. We only crop in the regions with defects, which ensures that linear defects are not turned into spot defects and, at the same time, mitigates the imbalance between positive and negative samples.
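One possible way to place such crops is sketched below. The exact cropping rule is not given in the text, so this is an assumption: if the labeled defect fits inside a 512 × 512 window, the window is sampled so that it contains the whole defect; if the defect is longer than the window along an axis, the window is kept inside the defect extent along that axis so that a line still spans the patch. The function name and box format are hypothetical.

```python
import random

def crop_around_defect(image_size, defect_box, crop=512, rng=random):
    """Sample a crop x crop window that stays on the labeled defect.

    image_size: (width, height) in pixels.
    defect_box: (x1, y1, x2, y2) in integer pixel coordinates.
    Returns the window as (left, top, right, bottom).
    """
    def pick_start(box_lo, box_hi, limit):
        if box_hi - box_lo <= crop:
            # Defect fits: the window covers it and stays inside the image.
            lo, hi = max(0, box_hi - crop), min(box_lo, limit - crop)
        else:
            # Defect is longer than the window: stay within its extent.
            lo, hi = box_lo, box_hi - crop
        return rng.randint(lo, hi)

    width, height = image_size
    x1, y1, x2, y2 = defect_box
    left = pick_start(x1, x2, width)
    top = pick_start(y1, y2, height)
    return left, top, left + crop, top + crop

rng = random.Random(0)
# A small spot-like defect and a long line-like defect in a 4096 x 1024 image.
print(crop_around_defect((4096, 1024), (1000, 300, 1100, 380), rng=rng))
print(crop_around_defect((4096, 1024), (0, 500, 4096, 520), rng=rng))
```

Sampling several windows per defect gives multiple distinct patches from each of the original images.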

The data set contains 4400 defect samples, among which 4000 images are generated. We randomly divide them into two parts: 4000 for training and 400 for validation.

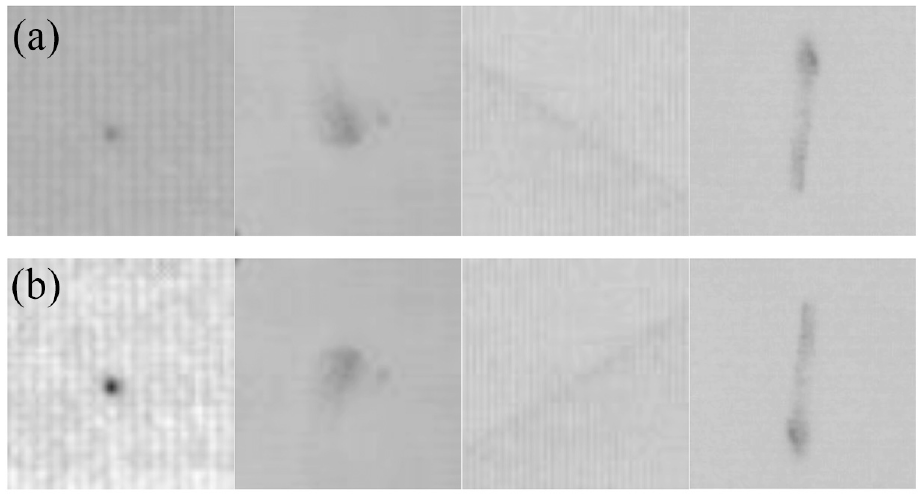

We use random horizontal flipping, random vertical flipping, random 180° rotation, and random histogram equalization as data augmentation, as the captured images are easily affected by the environment. The results are shown in Figure 7.
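The four augmentation operations can be sketched on a grayscale image stored as a nested list of 8-bit values. This is an illustrative version only: the probability of 0.5 for applying each operation is an assumption, and the histogram equalization shown is the standard global variant, which may differ from the one used in the experiments.

```python
import random

def hflip(img):
    """Mirror the image left to right."""
    return [row[::-1] for row in img]

def vflip(img):
    """Mirror the image top to bottom."""
    return [row[:] for row in img[::-1]]

def rot180(img):
    """Rotate by 180 degrees (a horizontal plus a vertical flip)."""
    return hflip(vflip(img))

def equalize_hist(img, levels=256):
    """Global histogram equalization for integer gray levels."""
    hist = [0] * levels
    for row in img:
        for value in row:
            hist[value] += 1
    cdf, running = [], 0
    for count in hist:
        running += count
        cdf.append(running)
    total = cdf[-1]
    cdf_min = next(c for c in cdf if c > 0)
    if total == cdf_min:  # constant image: nothing to stretch
        return [row[:] for row in img]
    scale = (levels - 1) / (total - cdf_min)
    return [[round((cdf[v] - cdf_min) * scale) for v in row] for row in img]

def augment(img, p=0.5, rng=random):
    """Apply each operation independently with probability p."""
    for op in (hflip, vflip, rot180, equalize_hist):
        if rng.random() < p:
            img = op(img)
    return img

patch = [[10, 20], [30, 40]]
print(hflip(patch), vflip(patch), rot180(patch))
print(equalize_hist(patch))
print(augment(patch, rng=random.Random(0)))
```

The flips and the rotation preserve the orientation of warp and weft lines, while equalization changes only the brightness distribution, which matches the stated goal of robustness to the capture environment.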

The result of data augmentation: (a) original image and (b) after augmentation.

Defect Generation Results

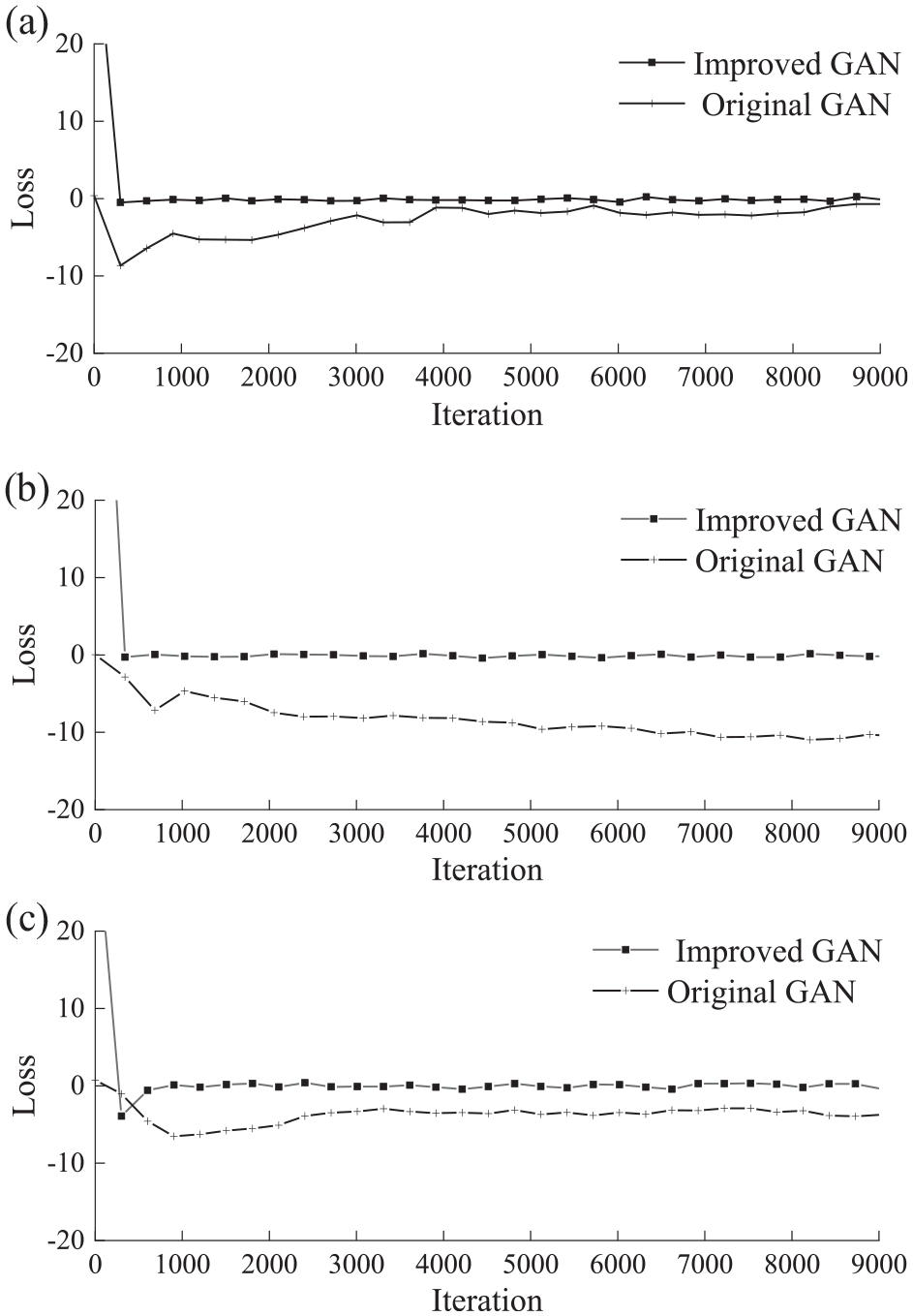

Given that the generation quality and convergence speed of images with various types of defects may be different, the improved GAN is compared to the original GAN under different kinds of defect images.

To compare the convergence speed of the improved GAN and the original GAN under different defects, spots, dirt, and creases are chosen for analysis. The performance of the generator depends on the value and stability of the discriminator’s loss function during training. With the number of iterations set to 9000, the changes in the discriminator’s loss function during model training are visualized, with 300 points sampled for plotting, as shown in Figure 8.

The convergence rate comparison of GAN algorithm: the convergence diagram of (a) spot, (b) dirt, and (c) crease.

The original GAN converges after about 4000 iterations, but its convergence is less stable than that of the improved GAN. Figure 8(b) compares the original GAN and the improved GAN on dirt defects, which have complex features. As the number of iterations increases, the discriminator loss function tends to diverge. Figure 8(c) shows the convergence of the original GAN and the improved GAN on crease defects. The improved GAN converges quickly and stably for these defects, and its loss converges to 0 after 1000 iterations.

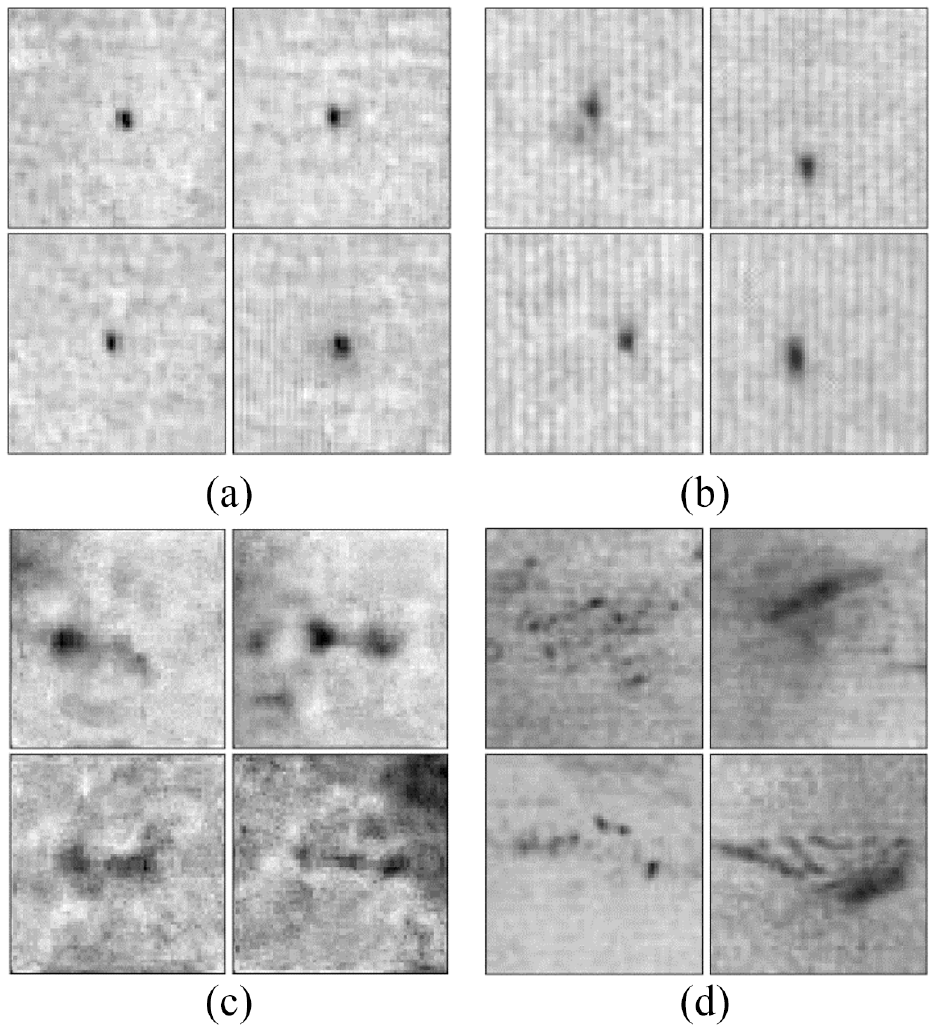

Figure 9 shows the outputs of the original and improved GAN generators after 9000 training iterations. The defect images generated by the original GAN are clearly fuzzier. In contrast, samples from our approach show more variation while the original texture pattern remains unaltered, which is consistent with the actual situation.

Image comparison between original GAN and improved GAN. (a) Spot images generated by original GAN. (b) Spot images generated by improved GAN. (c) Dirt images generated by original GAN. (d) Dirt images generated by improved GAN.

Defect Detection Results

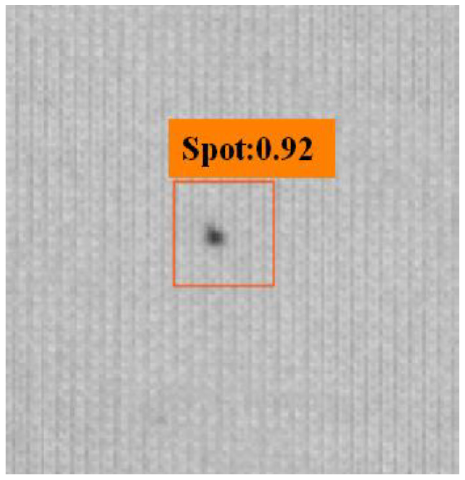

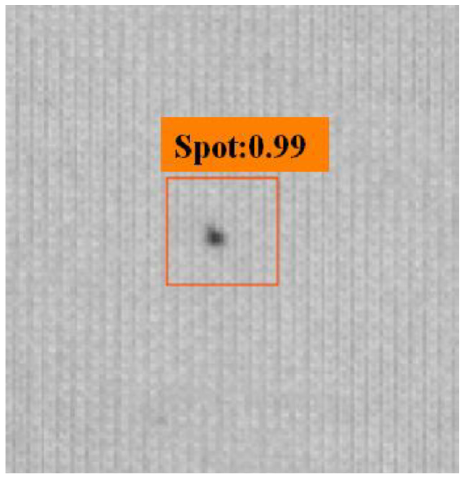

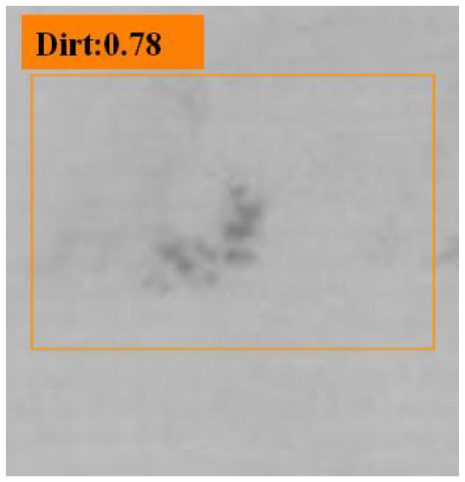

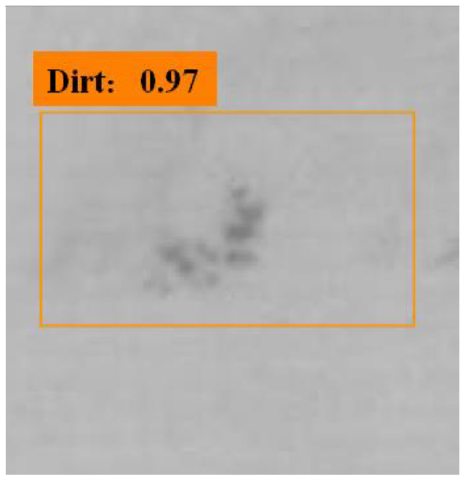

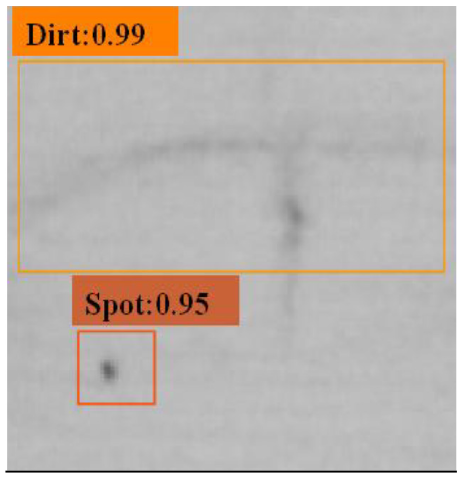

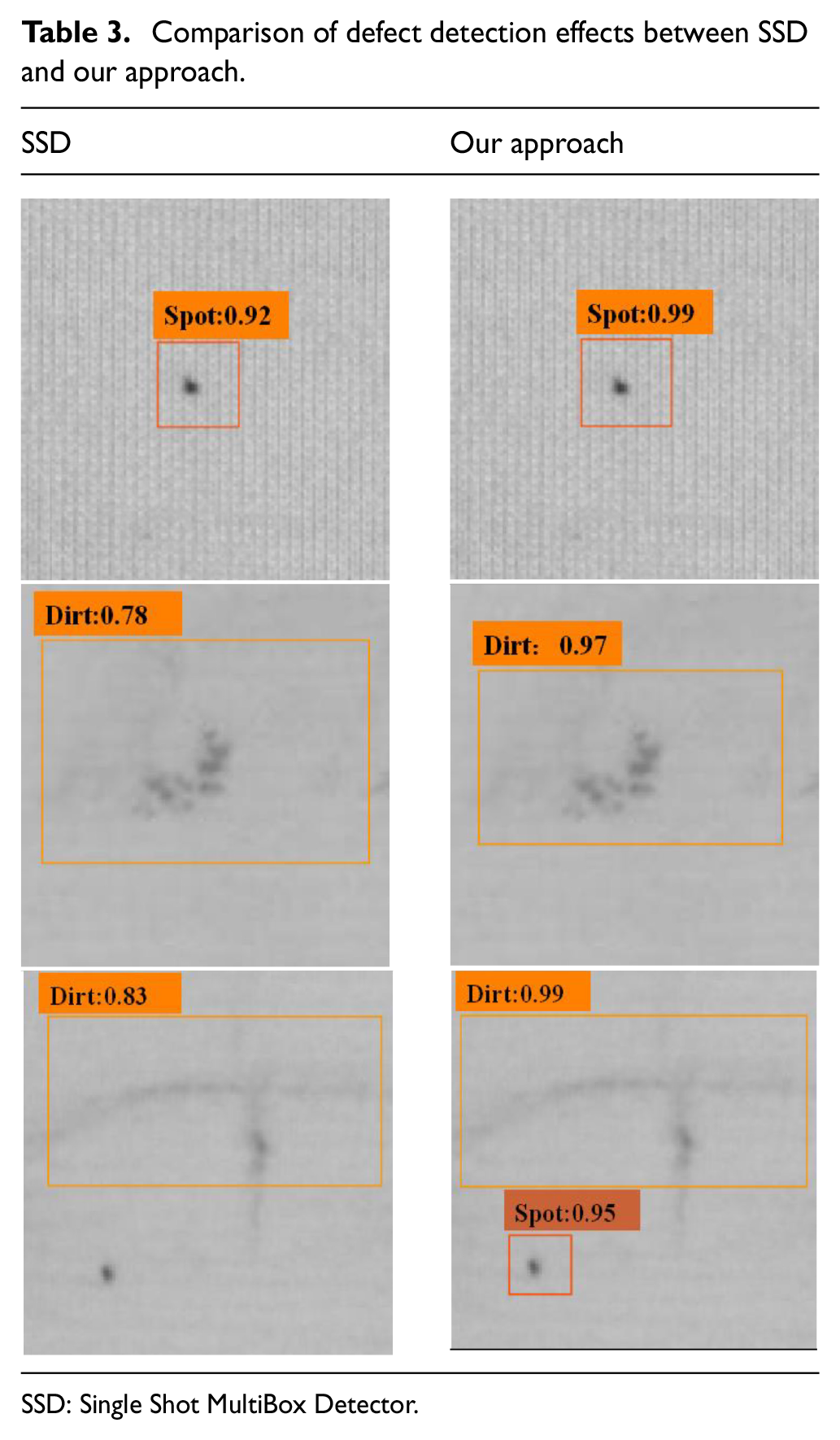

Table 3 shows a comparison of the defect detection results of SSD and our approach. When the image contains only a single type of defect or only dirt, both the original SSD and our approach detect it accurately, but the improved SSD model has a higher confidence score, which is 0.07 and 0.19 points higher, respectively. When there is a mixture of defects and dirt in the image, the original SSD can only identify the dirt, whereas our approach can identify the defects and the dirt simultaneously. Our approach can also accurately identify multiple defects in the same image, which is the more common case.

Comparison of defect detection effects between SSD and our approach.

SSD: Single Shot MultiBox Detector.

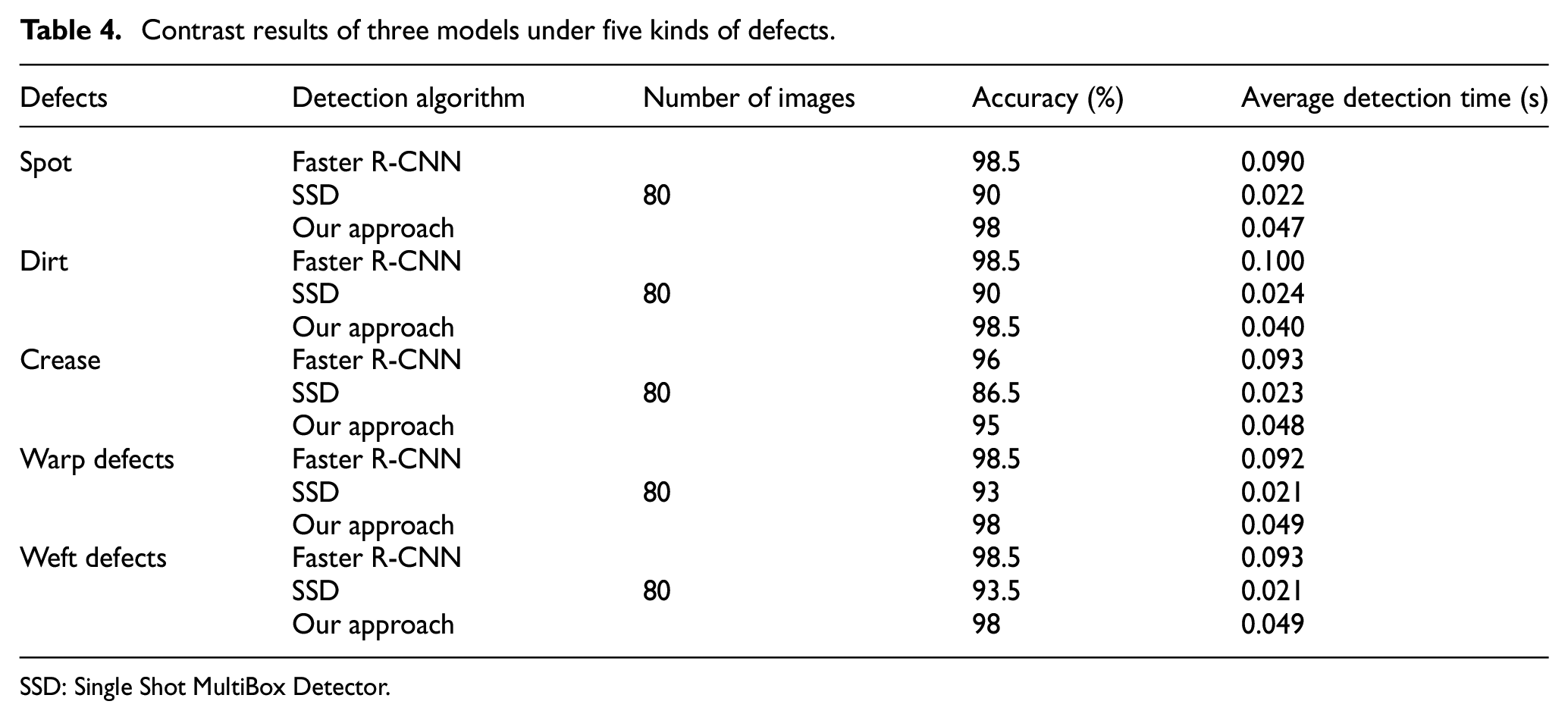

For online inspection, the defect detection time is especially important. We compare our approach with the Faster R-CNN 17 and SSD models on five types of defects: spots, dirt, creases, warp defects, and weft defects. The test images are cropped to 512 × 512 pixels, and the detection results are shown in Table 4.

Contrast results of three models under five kinds of defects.

SSD: Single Shot MultiBox Detector.

According to Table 4, our approach has higher accuracy than SSD and is close to the detection accuracy of Faster R-CNN, with an average detection accuracy of 97.5%. The detection speed is about twice that of Faster R-CNN and reaches 0.047 s/frame, which meets the requirements of online factory inspection.

Conclusion

In this paper, we present an online detection method for fabric defects based on deep learning, which mainly consists of two frameworks. First, an improved GAN model with an encoder–decoder structure, whose inputs are noisy defect-free samples so that the original texture pattern is maintained, is adopted to handle the lack of required training samples. Then, we design a novel extension of SSD with deconvolutions and Inception modules to recognize defects at multiple scales. Our experimental results show that this method is efficient while maintaining high accuracy, which makes it suitable for industrial applications.

Although the speed of detection can meet the requirements of the real detection process, the proposed approach is mainly for plain fabrics. In the future, we will concentrate on sample generation on more complex patterned fabrics, which can be combined with our method to improve detection performance.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Key Research and Development Project (grant number: 2018YFB1308800).