Abstract

Camouflage is an essential means of protection against military reconnaissance. However, traditional methods of camouflage image generation do not allow for end-to-end generation. The Cycle Generative Adversarial Network (CycleGAN) algorithm adopted in this article not only preserves the features of the original pictures but also realizes end-to-end generation, which helps to address seasonal variation. The generation model and the discrimination model are trained using the cyclic adversarial game of CycleGAN. During training, the loss function drives the background images and the camouflage images to map to each other. The generated image is fed into the recognition model for recognition, which provides feedback on the result. Finally, a camouflage image with the characteristics of the background image is output, realizing end-to-end camouflage image generation. Camouflage evaluation indexes are used to assess the color, texture, and edge quality of the output images. The generated images show a good camouflage effect in terms of color, texture, and edges, thus verifying the effectiveness of the scheme.

Introduction

With the rapid development of computer technology, intelligence and networking have been pushed to a higher level. Technologies such as artificial intelligence (AI) tailoring and intelligent design have promoted the transformation and upgrading of the industry. The effective combination of computer technology and clothing can not only improve the efficiency of clothing development but also effectively improve the function of special clothing.

Camouflage pattern is an important application of camouflage technology; it has a unique form and distinctive style. The camouflage effect is achieved by reducing the difference in optical properties such as brightness, color, and texture between the target and the surrounding environment.1,2 Camouflage is widely used in the military field because it takes effect quickly and costs little. Camouflage technology reduces the obvious characteristics of targets so that they avoid observation, detection, and attack by enemy detection equipment; it thus protects strategic targets and soldiers and effectively improves the survival probability of soldiers on the battlefield. 3 Nowadays, as necessary strategic equipment for combat and training, camouflage clothing is widely used not only in the military field but also in outdoor activities. At present, the commonly used pattern in camouflage clothing takes advantage of human visual characteristics: a computer extracts and processes the background colors, and digital camouflage is developed from them. The unique mosaic pattern formed by combining digital camouflage spots simulates the characteristics of the natural environment well. However, digital camouflage is primarily intended for a single background and state, and its capability is considerably weakened by changes of location, season, and time. Digital camouflage still relies on human perception and a single color, making it difficult to camouflage within a 150-m radius. 4 Therefore, it is necessary to develop a method that can automatically map the color and texture of the background image into the generated pattern to obtain a better camouflage effect.

The fusion of computer technology and graphic design has created digital camouflage. Although the k-means clustering algorithm, the peak point clustering algorithm, and the fuzzy C-means (FCM) clustering algorithm are effective in the design of digital camouflage, they are not well integrated with the final product design. With the continuous development of deep learning technology, 5 scholars have combined neural network technology with digital camouflage. Alfimtsev et al. 6 used deep convolutional generative adversarial networks to generate samples of different camouflage patterns based on existing camouflage patterns, and used image recognition models such as the fast region-based convolutional network method (Fast R-CNN) 7 to evaluate the generated camouflage effect; the validity of the method was demonstrated by selecting the best camouflage style. Teng 8 used densely connected convolutional networks to extract image features. In this work, we use the Cycle Generative Adversarial Network (CycleGAN) model to generate camouflage patterns automatically, as an application of deep learning to camouflage image processing. CycleGAN fuses the features of the input images and does not depend on the experimenter’s subjective judgment; it can produce high-quality, end-to-end camouflage patterns with strong anti-reconnaissance ability for different environments, so that the final camouflage clothing has a good camouflage effect.

CycleGAN

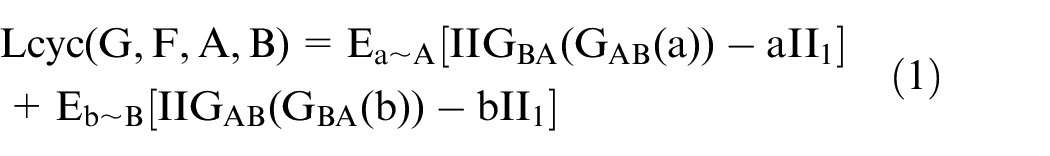

Ian Goodfellow first proposed the generative adversarial network (GAN) model in 2014. It is a deep learning model that stands out among numerous machine learning networks due to its unique adversarial idea. With continuous improvement, the GAN model has been widely used in computer vision (CV),9–11 images, 12 machine learning (ML),13,14 voice processing (AS),15–17 and so on. A GAN includes two parts: the generative model (G model) and the discriminative model (D model). The model structure of GAN is shown in Figure 1. The generator first receives a random signal, learns the distribution of the real input images, and then generates an image similar to a real image; its ultimate goal is to deceive the discriminator. The generated image and the real image are both fed into the discriminator. The discriminator estimates the probability that the input image is real, outputs this probability value, and gives feedback to the generator. In this way, the generator and the discriminator form a dynamic game. Both models are trained by stochastic gradient descent: the G model is optimized to produce images that are as realistic as possible, while the D model is optimized to distinguish real from fake inputs as well as possible. In the ideal state, the images produced by the generator get closer and closer to the real images until the discriminator can no longer judge whether an input is real, and the whole model reaches a Nash equilibrium. The generation of camouflage can be regarded as image style transfer within the data set, using a GAN to generate garment patterns. CycleGAN realizes bidirectional mapping on the basis of GAN and performs better in the field of image style transfer.

Generative adversarial network model.
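The game between the generator and the discriminator is usually written as the minimax objective of the original GAN formulation. The symbols below (the data distribution $p_{\text{data}}$, the noise prior $p_z$, and the value function $V$) are not defined in this article and are introduced here only for illustration:

$$
\min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}(x)}\left[\log D(x)\right] + \mathbb{E}_{z \sim p_z(z)}\left[\log\left(1 - D(G(z))\right)\right]
$$

Under this objective, the equilibrium state corresponds to the generated distribution matching the real one, at which point the optimal discriminator outputs 1/2 for every input.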

CycleGAN was first proposed in 2017 by Zhu et al. 18 The model builds on the original GAN model by adding a second generator and a second discriminator. CycleGAN is superior to other models in the field of image translation mainly because of the introduction of cyclic mapping. The CycleGAN network is modeled by defining two potentially related domains X and Y and two mapping functions, G: X → Y and F: Y → X. The two generative models G and F have corresponding discriminative models DY and DX. Feeding an image x from the data set X = {x1, x2, …} into generator G produces a fake image G(x) in the target domain Y; feeding G(x) into generator F then maps it back to the X domain as F(G(x)). Symmetrically, an image y from Y is mapped to F(y) in the X domain and back to G(F(y)). The generated images are sent to the corresponding discriminative networks. Because the two discriminators are able to distinguish real data from fake data, DX and DY push the generators to produce fake images as similar as possible to the target domain. The mutual transformation between the two sets of images is learned through continuous cyclic adversarial training; that is, the mutual mapping between domains X and Y is realized.

Camo Image Generation Model Based on CycleGAN

The bidirectional mapping of the CycleGAN algorithm enables the output image to retain the features of the input image to the greatest extent. In this article, we present an experiment based on CycleGAN, which differs from the original GAN in that the input is an image rather than random noise. This section describes the overall architecture of the CycleGAN model and the whole algorithm process in detail.

Experimental Model Design

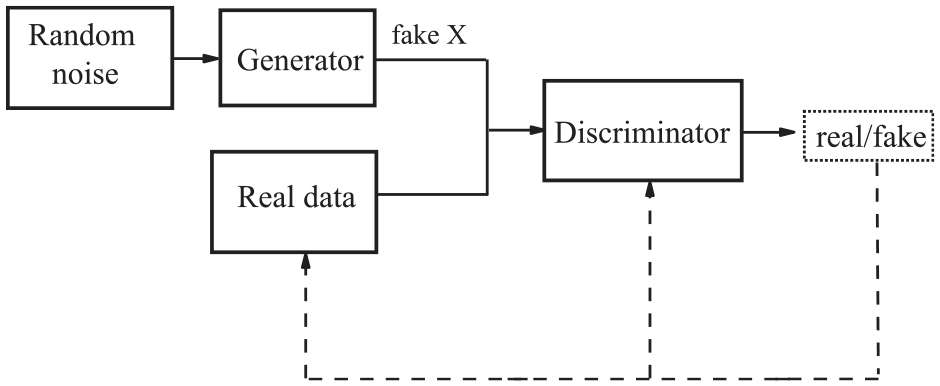

Because of its cyclic mapping, CycleGAN has clear advantages over other models in extracting texture features and transferring style between two image sets. We define the environmental image set as domain A and the camouflage image set as domain B. The generative network model is shown in Figure 2. The generative network consists of three convolution layers (downsampling), multiple ResNet blocks, two transposed convolutions with stride 1/2 (upsampling), and one convolution layer that maps features to RGB. Nine ResNet blocks are used to process input images with a resolution of 256 × 256 so that the original features of the input image are preserved in the output image. The discriminative model uses the PatchGAN structure, which judges 70 × 70 patches of the image: the discriminative network only needs to decide whether each patch is real or fake and then averages the outputs. Compared with a model that takes the whole picture as input to the discriminator, this greatly improves the speed of operation.

Generative model structure.
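The layer arithmetic behind this architecture can be checked with a short sketch. The kernel sizes, strides, and padding below are assumptions taken from the commonly used CycleGAN reference configuration (7 × 7 and 3 × 3 kernels in the generator, five 4 × 4 convolutions in the PatchGAN discriminator); the article itself does not list them.

```python
def conv_out(size, kernel, stride, pad):
    """Spatial size after a standard convolution."""
    return (size + 2 * pad - kernel) // stride + 1


def deconv_out(size, kernel, stride, pad, output_pad):
    """Spatial size after a transposed convolution."""
    return (size - 1) * stride - 2 * pad + kernel + output_pad


def generator_sizes(size=256, n_res_blocks=9):
    """Trace the feature-map width through the assumed generator layers."""
    sizes = [size]
    sizes.append(conv_out(sizes[-1], 7, 1, 3))       # 7x7 conv, stride 1
    sizes.append(conv_out(sizes[-1], 3, 2, 1))       # 3x3 conv, stride 2
    sizes.append(conv_out(sizes[-1], 3, 2, 1))       # 3x3 conv, stride 2
    for _ in range(n_res_blocks):                    # residual blocks keep the size
        sizes.append(conv_out(sizes[-1], 3, 1, 1))
    sizes.append(deconv_out(sizes[-1], 3, 2, 1, 1))  # transposed conv, doubles the size
    sizes.append(deconv_out(sizes[-1], 3, 2, 1, 1))  # transposed conv, doubles the size
    sizes.append(conv_out(sizes[-1], 7, 1, 3))       # 7x7 conv that maps to RGB
    return sizes


def receptive_field(layers):
    """Receptive field of one output unit for a stack of (kernel, stride) convs."""
    field = 1
    for kernel, stride in reversed(layers):
        field = (field - 1) * stride + kernel
    return field


# Assumed PatchGAN layout: three stride-2 and two stride-1 4x4 convolutions.
PATCHGAN_LAYERS = [(4, 2), (4, 2), (4, 2), (4, 1), (4, 1)]
```

With these assumptions, `generator_sizes()` goes from 256 down to 64 for the residual blocks and back up to 256, and `receptive_field(PATCHGAN_LAYERS)` evaluates to 70, which matches the 70 × 70 patch size mentioned above.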

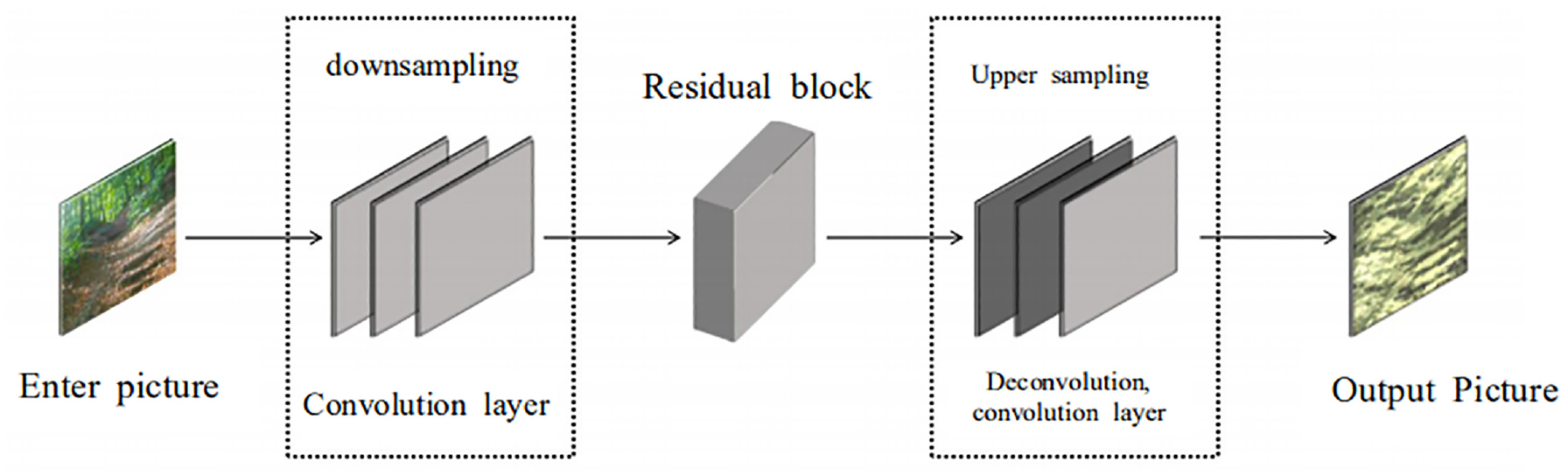

The aim of this article is to give the camouflage image the complex texture of the environment image, so we use the adversarial loss function to learn the a → b → a mapping. To make the fake images produced by the two generators as similar as possible to the real images, the cyclic loss function is shown in formula (1):

where G(·) and F(·) are the two generators, and a ∈ A and b ∈ B are samples drawn from the two image domains (where A represents the target data set, and B represents the source data set).
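For reference, the cycle-consistency loss of the original CycleGAN formulation can be written as below. This is a sketch that assumes F denotes the second generator (mapping B back to A) and that $p_{\text{data}}$ denotes the empirical distribution of each image set; it is not necessarily identical to the authors' formula (1):

$$
L_{\text{cyc}}(G, F) = \mathbb{E}_{a \sim p_{\text{data}}(a)}\left[\lVert F(G(a)) - a \rVert_1\right] + \mathbb{E}_{b \sim p_{\text{data}}(b)}\left[\lVert G(F(b)) - b \rVert_1\right]
$$

The first term penalizes the a → b → a round trip and the second term penalizes the b → a → b round trip.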

The adversarial loss is used to learn the mapping, and the loss function is used as an incentive, so that the CycleGAN network can realize a one-to-one mapping of images between the domains even for a more complex data set, which avoids major errors in the learning process. When a background image is input into the network, the multi-layer residual network in the generative model ensures the fineness and integrity of the extracted texture features. The generated image is then input into the PatchGAN discriminator to judge its authenticity, so that a camouflage pattern similar in color and texture to the environment image can be generated.
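How a cyclic loss acts as an incentive can be illustrated with toy numbers. The sketch below is not the authors' code: it assumes an L1 reconstruction penalty, and the functions `toy_g`, `toy_f`, and `lossy_f` are hypothetical stand-ins for trained generators operating on flat lists of pixel values.

```python
def l1_distance(image, other):
    """Mean absolute difference between two equally sized flat pixel lists."""
    return sum(abs(p - q) for p, q in zip(image, other)) / len(image)


def cycle_loss(batch_a, batch_b, gen_g, gen_f):
    """Average L1 reconstruction error of a -> b -> a plus b -> a -> b."""
    forward = sum(l1_distance(gen_f(gen_g(a)), a) for a in batch_a) / len(batch_a)
    backward = sum(l1_distance(gen_g(gen_f(b)), b) for b in batch_b) / len(batch_b)
    return forward + backward


# Hypothetical stand-ins for the generators, for illustration only.
def toy_g(image):
    return [p + 0.1 for p in image]   # "A -> B": brighten


def toy_f(image):
    return [p - 0.1 for p in image]   # "B -> A": exact inverse of toy_g


def lossy_f(image):
    return [p - 0.3 for p in image]   # imperfect inverse of toy_g


perfect = cycle_loss([[0.2, 0.4]], [[0.5, 0.7]], toy_g, toy_f)
imperfect = cycle_loss([[0.2, 0.4]], [[0.5, 0.7]], toy_g, lossy_f)
```

When the two mappings invert each other the loss is (numerically) zero, and it grows as the round trip drifts away from the input, which is what pushes the two generators toward a consistent one-to-one mapping.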

Algorithm Process

To generate camouflage patterns with the CycleGAN algorithm, we write the code in Python and implement the framework of the whole model to perform the experiment. The specific algorithm flow is as follows:

Step 1: Set up the running environment, install OpenCV (Python interface), scikit-image, and other libraries, and preprocess the environment and camouflage image data sets.

Step 2: Write the layer files, including the definitions of the convolution layer, the deconvolution layer, and some auxiliary functions, which provide a preliminary framework for the final complete model.

Step 3: Write the model file, which defines the generative and discriminative models, and write the training file and the main function to complete the entire CycleGAN model structure.

Step 4: Train the model on the original data set. After training, check the results and the weights.

Step 5: Train on the collected data set: place the environment data set in the train file, place the camouflage image data set in the train B folder, start the program from the main function, and set the number of training sessions to 100.

Step 6: Compare the images produced after different amounts of training so that the number of cycle iterations can be adjusted in time to output the best result.
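The data feeding implied by Step 5 can be sketched as follows. This is only an illustration of unpaired sampling: the folder names `trainA` and `trainB`, the file names, and the function `unpaired_epochs` are hypothetical, and the actual training step (generator and discriminator updates) is left out.

```python
import random


def unpaired_epochs(files_a, files_b, epochs=100, seed=0):
    """Yield (epoch, file_a, file_b) with the two sets shuffled independently."""
    rng = random.Random(seed)
    for epoch in range(1, epochs + 1):
        order_a = files_a[:]
        order_b = files_b[:]
        rng.shuffle(order_a)
        rng.shuffle(order_b)
        # zip stops at the shorter list, so each epoch visits min(len) pairs
        for file_a, file_b in zip(order_a, order_b):
            yield epoch, file_a, file_b


# Hypothetical file names; the real data set layout is not given in the article.
env_files = [f"trainA/env_{i:03d}.jpg" for i in range(4)]
camo_files = [f"trainB/camo_{i:03d}.jpg" for i in range(4)]
steps = list(unpaired_epochs(env_files, camo_files, epochs=100))
```

Because the environment and camouflage images are shuffled separately, no fixed pairing between the two sets is assumed, which is the setting CycleGAN is designed for. Saving sample outputs at selected epochs inside such a loop would support the comparison described in Step 6.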

Experiment

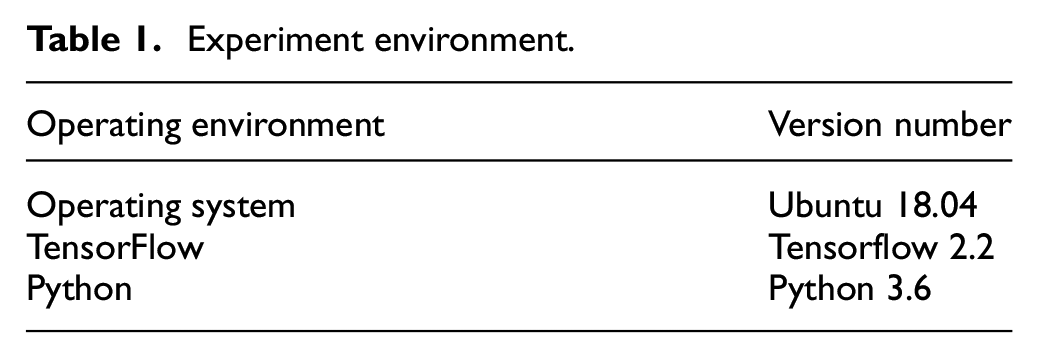

To verify the results of the present experimental scheme, a number of image generation experiments are conducted. The operating environment is shown in Table 1. The whole experiment runs on Ubuntu 18.04 with Python 3.6 and TensorFlow 2.2. In addition, the Python-based image and data processing packages OpenCV, scikit-image, tqdm, and oyamel are installed.

Experiment environment.

Data Set Preprocessing

Since the generator requires a large amount of training to produce realistic images, the classic zebra and horse data sets of GAN are used for training first, with the number of training epochs set to 100. According to the output results, the number of cycles is adjusted to determine the model parameters. Then, to determine the best input configuration for camouflage images, 40 camouflage images and 40 environment images are fed to the algorithm, and the number of input images is adjusted continuously according to the test results. However, few countries make their camouflage image data sets available to the public. Therefore, it is necessary to collect our own pictures and build the data sets as required. For the final generated pictures to be satisfactory, the size and resolution of the pictures to be collected must be chosen according to the requirements of the code, ensuring that every picture in each data set has the same size and resolution. Finally, 300 environment images and 300 camouflage images are selected from the image database, and the data set is expanded to 600 × 600 images by means of flipping, cropping, and adjusting contrast. All images are 256 × 256 pixels in JPG format.
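The three augmentation operations named above can be sketched on a tiny grayscale image stored as a list of rows. The function names and the contrast rule (scaling around the image mean) are assumptions for illustration; the article does not state how each operation was implemented or with which parameters.

```python
def flip_horizontal(image):
    """Mirror each row of a 2-D grayscale image (list of rows)."""
    return [row[::-1] for row in image]


def crop(image, top, left, height, width):
    """Cut out a height x width window starting at (top, left)."""
    return [row[left:left + width] for row in image[top:top + height]]


def adjust_contrast(image, factor):
    """Scale pixel values around the image mean and clip to [0, 255]."""
    pixels = [p for row in image for p in row]
    mean = sum(pixels) / len(pixels)
    return [[min(255, max(0, round(mean + factor * (p - mean)))) for p in row]
            for row in image]


sample = [[10, 20, 30],
          [40, 50, 60],
          [70, 80, 90]]
augmented = [flip_horizontal(sample),
             crop(sample, 0, 1, 2, 2),
             adjust_contrast(sample, 1.5)]
```

In practice a cropped image would be resized back to 256 × 256 so that every picture in the data set keeps the same size, as required above.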

Environment Picture

As mentioned above, a good camouflage effect can be achieved if the camouflage pattern retains the texture of the relevant environment picture, a similar color block composition, and so forth. The seasonal characteristics of each environment are different. According to the literature, the best hiding effect can be achieved using the middle color between the general color and the unique color of each background. 19 Therefore, in designing a camouflage clothing pattern, we first need to determine the unique color of the combat environment and then determine the scene colors that can be converted into a universal color. This article takes the design of army jungle camouflage clothing patterns as an example (here and below), with green jungle plants as the main background, and collects a series of environmental pictures containing brown, blue, and other colors that match the specific combat background. In the end, 300 environmental images are selected; some of them are shown in Figure 3.

Some of the forest environment images.

Camouflage Pictures

Camouflage is an important means of confusing enemy military reconnaissance. Camouflage technology has improved with the development of patterns. At present, the People’s Liberation Army has begun to adopt new camouflage clothing, also known as “starry sky camouflage,” which is divided into five types (ocean, city, desert, woodland, and jungle) and can adapt to the needs of different terrains and environments at home and abroad. However, due to military secrecy and other issues, no country has made public the data sets used for camouflage clothing design. Therefore, it is necessary to collect pictures and establish the data sets ourselves. To make the output pattern close to the camouflage style, pictures of camouflage clothing should be collected extensively. To adapt to the combat environment, countries have continued to optimize the pattern and color design of camouflage clothing. To learn from the camouflage clothing design experience of other countries, we also collected pictures of their actual camouflage clothing. Finally, we selected 300 camouflage images. Figure 4 shows some of the camouflage images. In addition, to reflect the diversity of camouflage clothing and the variability of the combat environment, we do not distinguish between types of camouflage pictures when inputting them.

Some of the input camouflage images.

Result Analysis

Some results of the experiment are shown in Figure 5. When the training epoch is 15, the output image has simple colors, blurred texture, and barely visible patches. When the training epoch is 45, the image texture begins to become clear, more patches can be seen although they are still blurred, and the color remains good, with no large offset. As the training epoch increases, the output camouflage image becomes closer and closer to the background image in terms of color and texture.

Some generated images after (a) 15 and (b) 45 training epochs.

Figure 6 shows some examples of images generated based on the CycleGAN model. The left-most images are the environment images, the middle images are the process images of the algorithm, and the right-most images are generated by the model.

Some images generated by the CycleGAN model.

Neuroscience research has found that when we measure the similarity between images, we pay more attention to the similarity between image structures. 20 The effect of a generated camouflage image has traditionally been evaluated by subjective analysis, with the pattern applied to clothing and taken into real environments for field evaluation. With the development of science and technology, many scholars have proposed evaluation indexes for the camouflage design process. In addition, digital camouflage pixelates the color and texture of the background image. 21 Accordingly, to verify the validity of the experimental scheme, we take the color, texture, and edge of the input and output images shown in Figure 7 as the evaluation indexes and evaluate the practical effect both objectively and subjectively. Finally, the structural similarity algorithm is used to quantitatively evaluate the images generated by different models.

The input image ((A), (B), (C)) and the corresponding output image ((A1), (B1), (C1)).

Color

Color is a basic physical characteristic of a color image and distinguishes it from a grayscale image. Under illumination, an object reflects light into the visual perception system, and the brain receives the stimulus and forms a color judgment. The camouflage principle is similar to the chameleon effect: the best camouflage effect is achieved by blending with the colors of the external environment.

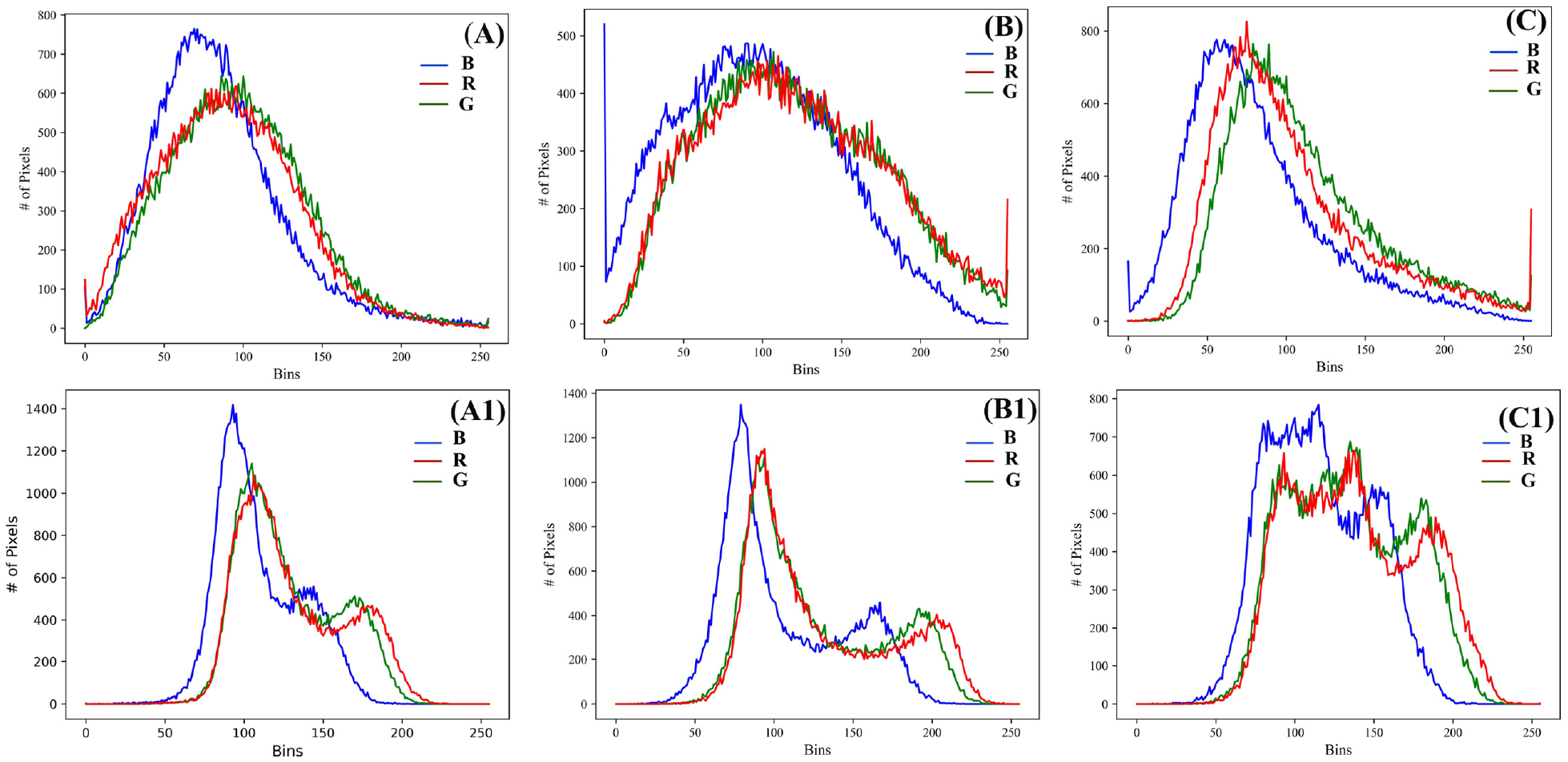

In terms of camouflage image color, the colors of the input image and its generated image are extracted as shown in Figure 8. The color card in the middle of Figure 8 shows that the input image and the generated image are roughly the same in color. Then, we process the input image and its generated image using the dominant color histogram. The result is shown in Figure 9. From Figure 9, we can see that the input image and its generated image have the same dominant color, and their primary color ranges are roughly the same. Moreover, the transition between different color blocks is not obvious, which gives a good color mixing effect. The color similarity between the camouflage image and the background image is high, and the tonal gradient of the background image is simulated well.

Color comparison between the generated camouflage image and the background image.

Dominant color histograms of the input and output images.
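A dominant color comparison of this kind can be sketched in a few lines. The quantization into 4 levels per channel, the function names, and the histogram intersection score are assumptions chosen for illustration; the article does not specify how its dominant color histogram is computed.

```python
from collections import Counter


def dominant_colors(pixels, levels=4, top=3):
    """Quantize RGB pixels to `levels` bins per channel and rank bins by share."""
    step = 256 // levels
    counts = Counter((r // step, g // step, b // step) for r, g, b in pixels)
    total = len(pixels)
    return [(bin_, count / total) for bin_, count in counts.most_common(top)]


def histogram_intersection(pixels_a, pixels_b, levels=4):
    """Overlap of two normalized color histograms: 1.0 means identical."""
    step = 256 // levels
    hist_a = Counter((r // step, g // step, b // step) for r, g, b in pixels_a)
    hist_b = Counter((r // step, g // step, b // step) for r, g, b in pixels_b)
    bins = set(hist_a) | set(hist_b)
    return sum(min(hist_a[k] / len(pixels_a), hist_b[k] / len(pixels_b))
               for k in bins)


# Made-up pixel values standing in for a background and a generated image.
background = [(30, 120, 40)] * 6 + [(90, 70, 30)] * 2
generated = [(35, 110, 50)] * 5 + [(95, 75, 20)] * 3
overlap = histogram_intersection(background, generated)
```

For these made-up pixels both images share the same dominant bin (a dark green), and the overlap score is 0.875, which is the kind of agreement the histograms in Figure 9 are meant to show.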

Texture

Texture is the visual representation of the surface of an object. Preserving the texture features of the background can reduce the difference between the camouflaged object and the surrounding environment. In his research, Wu 22 used the combination algorithm of the dot template distribution of a heuristic nesting algorithm to make a natural background contour texture, and then designed a digital camouflage pattern. Jia et al. 23 used the Markov random field model to simulate the texture features of the natural environment and combined it with the main color extraction of the natural environment to generate camouflage patterns.

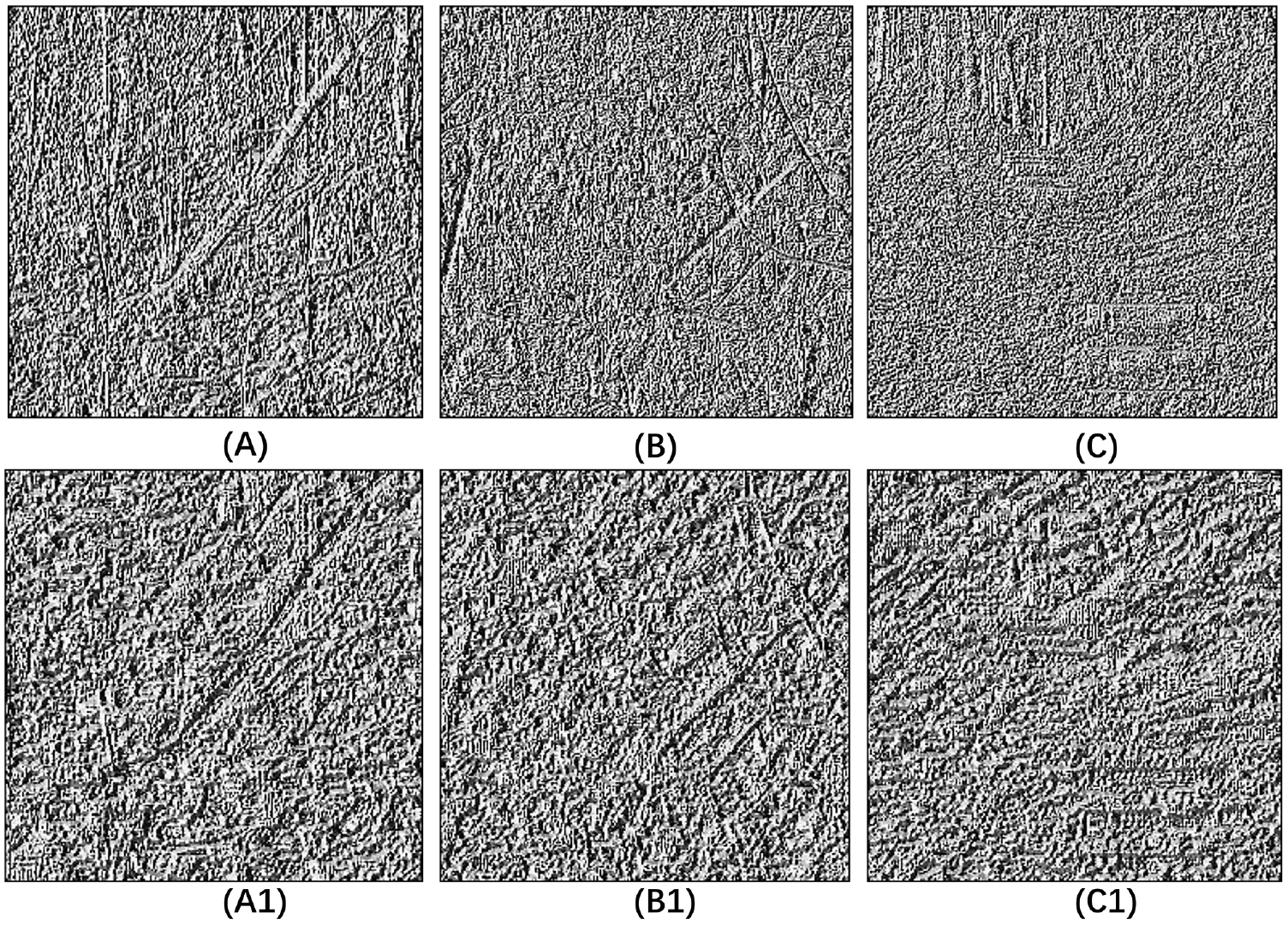

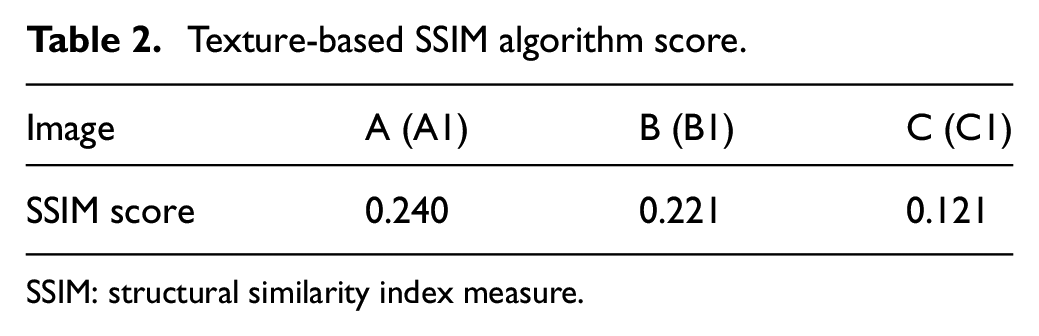

In terms of texture comparison, the local binary pattern (LBP) algorithm is used to extract the texture features of the images, as shown in Figure 10. As can be seen from the generated images shown in Figure 7, the generated image clearly retains some of the texture features of the background image. Preserving texture features reduces the difference between the contour of the clothing and the environment and gives a pronounced three-dimensional effect, which improves the camouflage effect of the clothing. In terms of patch details, the generated camouflage patches are irregularly arranged, and patches of different colors and shapes overlap each other while a large background outline is kept, so the image produces a visual three-dimensional effect. On the basis of the LBP texture extraction, the structural similarity index measure (SSIM) algorithm is used to compare the textures of the images. The experimental results are shown in Table 2. The results show that the generated image is similar to the input image in texture. The CycleGAN algorithm can keep some texture features of the environment image in the camouflage image, and the generated camouflage image is consistent with the background image in texture.

LBP algorithm for image texture extraction.

Texture-based SSIM algorithm score.

SSIM: structural similarity index measure.
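The basic LBP operator used for texture extraction can be sketched as follows. This is the plain 3 × 3, 8-neighbor variant; the bit ordering (clockwise from the top-left neighbor) and the function names are assumptions, and the article does not say which LBP variant or radius was used.

```python
def lbp_code(image, row, col):
    """8-neighbor LBP code: one bit per neighbor that is >= the center pixel."""
    center = image[row][col]
    # Clockwise from the top-left neighbor.
    offsets = [(-1, -1), (-1, 0), (-1, 1), (0, 1),
               (1, 1), (1, 0), (1, -1), (0, -1)]
    code = 0
    for bit, (dr, dc) in enumerate(offsets):
        if image[row + dr][col + dc] >= center:
            code |= 1 << bit
    return code


def lbp_image(image):
    """LBP codes for all interior pixels of a 2-D grayscale image."""
    return [[lbp_code(image, r, c) for c in range(1, len(image[0]) - 1)]
            for r in range(1, len(image) - 1)]


patch = [[6, 5, 2],
         [7, 6, 1],
         [9, 8, 7]]
codes = lbp_image(patch)
```

Each interior pixel is replaced by an 8-bit code describing which neighbors are at least as bright as it is, so the resulting map depends on local structure rather than absolute brightness. Comparing the LBP maps of the input and generated images with SSIM then measures texture agreement.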

Edge

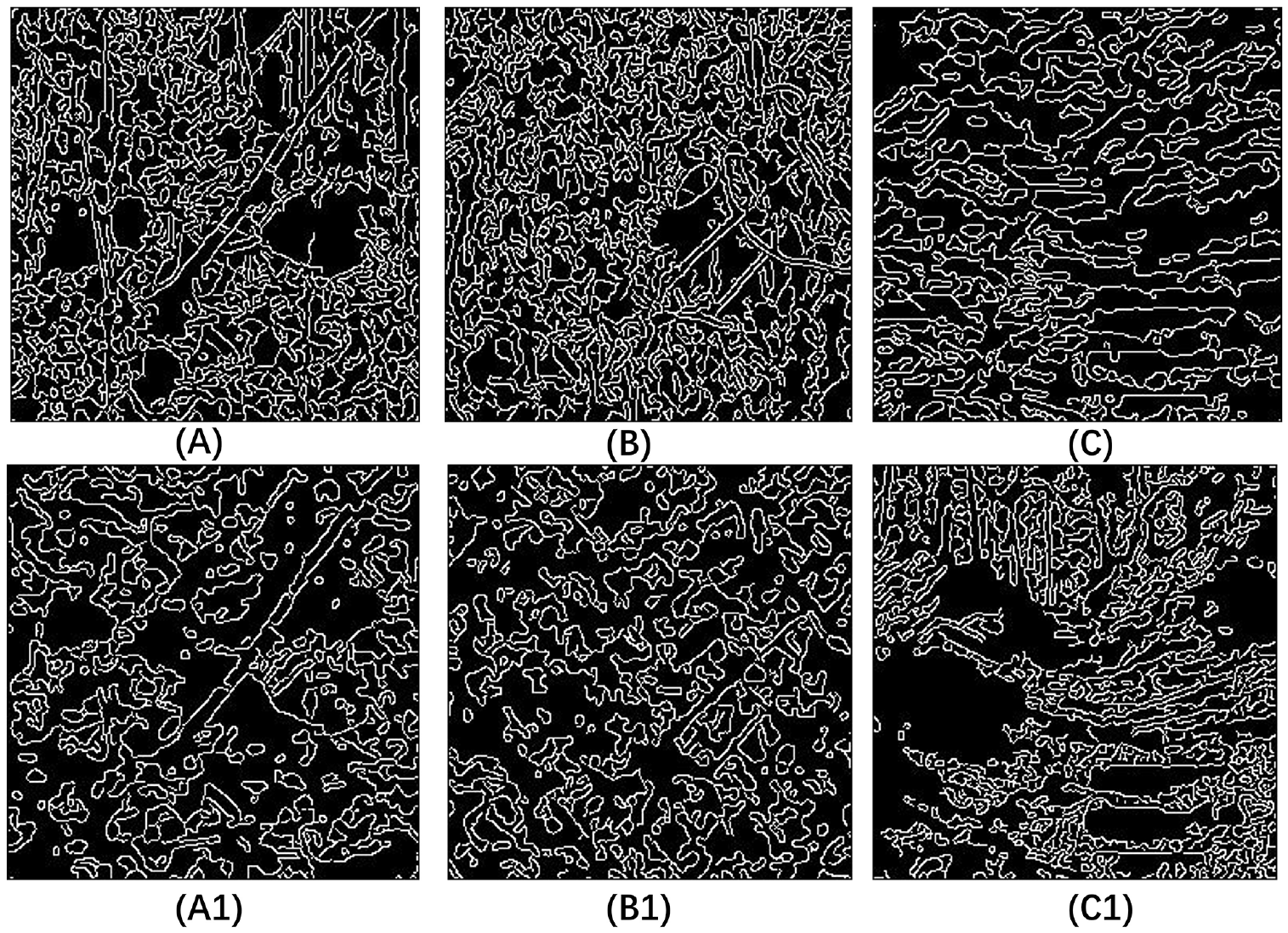

Digital camouflage, also known as mosaic camouflage, is made up of innumerable blocks of pigment arranged in a particular way. To achieve a good camouflage effect, it is necessary to break or blur the edges of adjacent pigment blocks so as to make them infiltrate each other and produce a new gradient color at the broken edge, thus making the human eye produce an optical illusion.

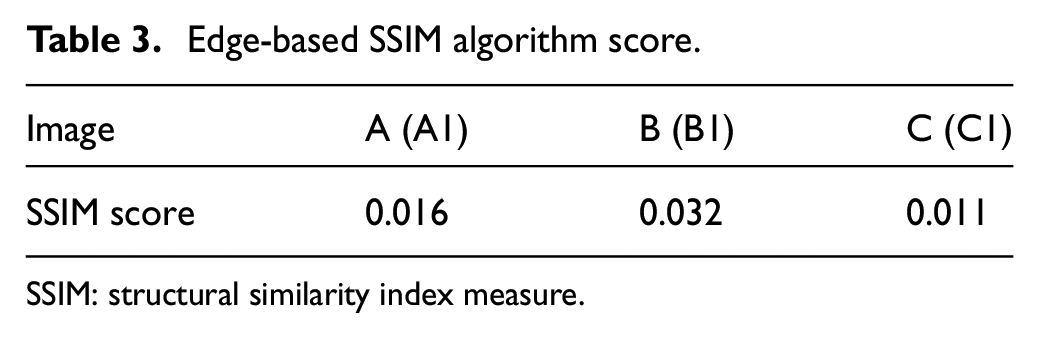

To improve the camouflage effect, we want to generate a digital camouflage that hides the edge between the target and the surrounding background as much as possible. Therefore, the Canny edge detection algorithm is used to process the images before and after camouflage, as shown in Figure 11. Then, the SSIM algorithm is used to assess the camouflage effect, and the results are shown in Table 3. As can be seen from Table 3, the SSIM scores of the input and generated images based on edge detection are low. The edges of the generated image differ from those of the input image in boundary and detail, and there is no obvious transition among the edges of the generated image blocks, which gives a better camouflage effect.

Image edge detection results.

Edge-based SSIM algorithm score.

SSIM: structural similarity index measure.
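The idea of turning an image into a binary edge map before comparison can be illustrated with a simplified stand-in. The sketch below thresholds the Sobel gradient magnitude; it is not the Canny algorithm, which additionally applies Gaussian smoothing, non-maximum suppression, and hysteresis thresholding. The function name and the threshold value are assumptions.

```python
def sobel_edges(image, threshold):
    """Binary edge map from the Sobel gradient magnitude of interior pixels."""
    kx = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]
    ky = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]
    edges = []
    for r in range(1, len(image) - 1):
        row = []
        for c in range(1, len(image[0]) - 1):
            gx = sum(kx[i][j] * image[r - 1 + i][c - 1 + j]
                     for i in range(3) for j in range(3))
            gy = sum(ky[i][j] * image[r - 1 + i][c - 1 + j]
                     for i in range(3) for j in range(3))
            row.append(1 if (gx * gx + gy * gy) ** 0.5 >= threshold else 0)
        edges.append(row)
    return edges


# A hard vertical boundary versus a flat region (made-up 4 x 4 images).
step_image = [[0, 0, 255, 255] for _ in range(4)]
flat_image = [[128, 128, 128, 128] for _ in range(4)]
step_edges = sobel_edges(step_image, threshold=100)
flat_edges = sobel_edges(flat_image, threshold=100)
```

A sharp boundary between blocks lights up the edge map, while a smooth region stays empty. Blurring or breaking the block boundaries therefore changes the edge map, which is what the edge-based SSIM comparison is sensitive to.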

In addition, we apply the generated image to a simulated garment and download an 07-style digital camouflage garment image from the Internet. The two clothing patterns are placed at the same position in the environmental image for overall observation, and the result is shown in Figure 12. Macroscopically, the color and the texture of the generated camouflage image are close to those of the background image, and the color blocks produce color mixing through irregular overlapping and juxtaposition, forming a large-spot effect. There is little difference from the environment image in color and brightness. At the same time, under the mixed color effect caused by the staggered gradient of different colors, the camouflage edge becomes blurred and difficult to distinguish, and the pattern has a high degree of integration with the environment.

The contrast between the generated image (a) and the original camouflage image (b) in the environment.

Model Effect Evaluation

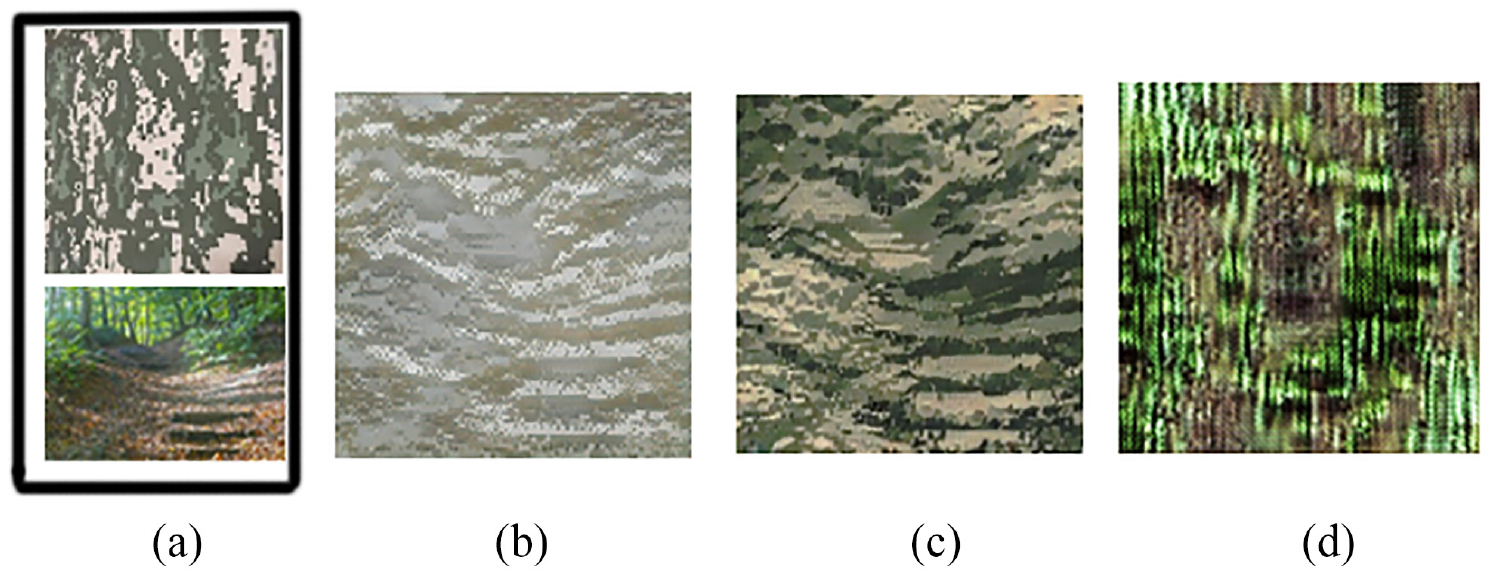

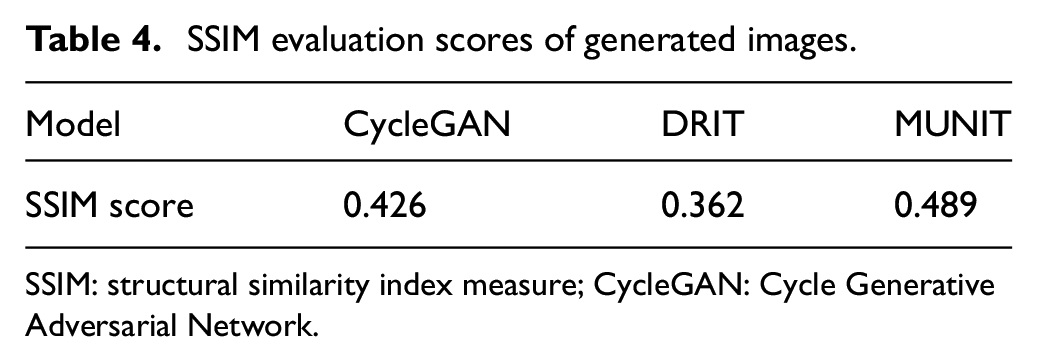

CycleGAN is an unsupervised image-to-image translation model; it is similar to multimodal unsupervised image-to-image translation (MUNIT) and diverse image-to-image translation via disentangled representations (DRIT). The two latent spaces of CycleGAN are separate. Huang et al. 24 put forward MUNIT based on unsupervised image-to-image translation (UNIT); it is a multimodal image translation model that can decompose and recombine the style and content of samples collected in the data domain to generate new images. MUNIT can better diversify image styles and output pictures of excellent quality. Similar to the MUNIT model, the DRIT model separates the style and content features of the sample and recombines the input content features with the target domain style features to generate new images. However, the images generated by the DRIT model are of poor quality. 25 To verify the effectiveness of the algorithm, we use the CycleGAN, MUNIT, and DRIT models to generate camouflage images from the same data set, and the results are shown in Figure 13. Figure 13(a) shows the environment and camouflage images input to the models, Figure 13(b) is the image generated by the DRIT model, Figure 13(c) is the image generated by the CycleGAN model, and Figure 13(d) is the image generated by the MUNIT model. As can be seen from the figure, the color of the generated camouflage image in Figure 13(b) is far from that of the input image, whereas the image in Figure 13(c) retains the color and texture features of the original image and is the best of the three. The generated image in Figure 13(d) is blurred, its colors are cloudy, and the result is poor.

Image comparison between different models: (a) the environment and camouflage pictures input to the models, (b) a picture generated using the DRIT model, (c) a picture generated using the CycleGAN model, and (d) a picture generated using the MUNIT model.

For the quantitative evaluation of the generated images, the original camouflage image shown in Figure 13 and the images generated by the three models are input into the SSIM algorithm, and the scores of the three models are shown in Table 4. The SSIM algorithm evaluates the structural differences between two images in terms of brightness, contrast, and structure. The higher the score, the smaller the difference between the generated image and the original image. According to the results shown in Figure 13 and Table 4, the camouflage images generated by the CycleGAN algorithm adopted in this article not only retain certain similarities with the original camouflage images but also incorporate some of the structure, color, and other features of the environmental images. Compared with the DRIT model, the results are clearer. Compared with the MUNIT results, the degree of fusion between the camouflage image and the environment image is higher, so the image generated by the CycleGAN model has a better camouflage effect than those of the other two algorithms.

SSIM evaluation scores of generated images.

SSIM: structural similarity index measure; CycleGAN: Cycle Generative Adversarial Network.
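The SSIM score combines the brightness (mean), contrast (variance), and structure (covariance) terms mentioned above. The sketch below evaluates the standard SSIM formula over a single window covering the whole image, with the usual constants K1 = 0.01 and K2 = 0.03; library implementations instead average the score over local sliding windows, so the values will differ from those in the tables. The function name `global_ssim` is an assumption.

```python
def global_ssim(x, y, data_range=255, k1=0.01, k2=0.03):
    """SSIM computed over one window covering both flat pixel lists."""
    n = len(x)
    mu_x = sum(x) / n
    mu_y = sum(y) / n
    var_x = sum((p - mu_x) ** 2 for p in x) / n
    var_y = sum((q - mu_y) ** 2 for q in y) / n
    cov = sum((p - mu_x) * (q - mu_y) for p, q in zip(x, y)) / n
    c1 = (k1 * data_range) ** 2   # stabilizes the luminance term
    c2 = (k2 * data_range) ** 2   # stabilizes the contrast/structure term
    return ((2 * mu_x * mu_y + c1) * (2 * cov + c2)) / (
        (mu_x ** 2 + mu_y ** 2 + c1) * (var_x + var_y + c2))


# Made-up pixel rows: an image, an identical copy, and its inverse.
original = [0, 255, 0, 255]
inverted = [255, 0, 255, 0]
same_score = global_ssim(original, original)
inverse_score = global_ssim(original, inverted)
```

Identical images score 1, and the score falls as brightness, contrast, or structure diverge; the inverted example even scores below zero because its structure is anti-correlated with the original.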

To sum up, the generated camouflage image has a good camouflage effect in terms of color, texture, and edge, so the experimental method of camouflage design based on the GAN model is effective.

Conclusion

Camouflage is one of the most commonly used and effective concealment techniques in the military field and plays a significant role in resisting detection and attack by high-tech weapons. The scope of operations on modern battlefields varies greatly, and the combat environment is changeable. However, the traditional camouflage pattern design process is complex and cannot be adjusted quickly as the combat environment changes. Combining neural networks with the military field, this article presents a method of generating camouflage patterns using CycleGAN. The features of the input image are extracted, and the desired image is generated. Throughout the camouflage process, camouflage patterns are generated automatically by neural networks. In terms of the model, the structural similarity algorithm is used to evaluate the images generated by the MUNIT, DRIT, and CycleGAN models, which verifies the validity of the model used in this article. Quantitative and qualitative analyses of the pictures generated by the CycleGAN model are also carried out.

In addition, combining deep learning networks with traditional clothing design saves paper, fuel, and other resources, in line with the current emphasis on environmental protection. It also saves time and money by making clothing design simpler and faster, and the result no longer shifts with the designer’s preferences. Furthermore, it offers new ways to improve camouflage clothing pattern design and shows that the integration of clothing design and artificial intelligence is feasible. In follow-up research, we will continue to collect a large number of high-definition images and improve the clarity and quality of model-generated images. For a constantly changing environment, pictures of two or more environments could be input into the model at the same time so that it produces camouflage patterns that adapt to multiple environments; this is one of the experimental ideas for the future. Another research idea is to use the CycleGAN algorithm to generate common landscape and flower designs to promote the applicability of GAN in the field of ordinary clothing. The development of artificial intelligence technology promotes the progress of the garment industry. The combination of GAN networks and traditional garment design can achieve more accurate and convenient garment design.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported, in part, by the National Natural Science Foundation of China (52073151), the National Natural Science Foundation of Shandong, China (ZR2019PEE022), and the Science and Technology Guidance Project of China Textile Industry Federation (2018078).