Abstract

Background and aims

Children of Deaf adults (codas) acquire both a spoken language and a signed language from birth, making them bimodal bilinguals. While the language development of typically developing codas has been well studied, little is known about bimodal bilingual development in children with autism spectrum disorder (ASD).

Methods

This study presents the first longitudinal case study of expressive spoken and signed language in an autistic bimodal bilingual child, spanning ten years of development. The participant, a hearing child with Deaf parents, was exposed to both English and American Sign Language (ASL) from birth. Expressive language samples were analyzed at ages 4;11, 6;6, 9;11, and 14;11 for syntactic complexity, lexical diversity, modality use, and echolalia.

Results

Results revealed a significant expressive language delay in both modalities, with no consistent advantage of one modality over the other. Despite lifelong exposure to ASL, the child exhibited similarly limited language development in both English and ASL, challenging assumptions that signed language may be inherently more accessible for autistic individuals. Although there were global delays in expressive language in both modalities, there was evidence of pragmatic strengths and sensitivity to the linguistic preferences of his conversation partners. Unique features of bimodal bilingualism in autism, including code-blending, whispering, and cross-modal echolalia, are described.

Conclusions

These findings highlight the need for further research into the developmental trajectories and communicative strategies of autistic bimodal bilingual children.

Bilingualism—the use of two languages in daily life—is a global norm and includes both typical and atypical language learners (Grosjean, 1999). Among bilingual populations, children of deaf adults (codas) represent a distinct case: they acquire a signed language from their deaf parents and a spoken language from the surrounding hearing community. This simultaneous acquisition of two languages in different modalities—spoken and signed—is referred to as bimodal bilingualism (Emmorey et al., 2013). Bimodal bilinguals differ from traditional bilinguals not only in how their languages are acquired and used, but also in the structural interactions that arise between modalities, such as code-blending, in which elements of both languages are expressed simultaneously (Emmorey et al., 2008; Petitto et al., 2001). Research on typically-developing codas has found that early sign development is similar to that of deaf children (van den Bogaerde, 2000), with speech typically becoming dominant as children mature (Emmorey, Borinstein et al., 2008; Emmorey, Giezen et al., 2013; MacSweeney et al., 2002; Neville et al., 1997), although young coda children sometimes show the influence of the signed language in their spoken-language productions, for example through sign-influenced word order (Johnson et al., 1992; Lillo-Martin et al., 2010). However, virtually nothing is known about the developmental trajectories of bimodal bilingual children with developmental disorders, such as autism spectrum disorder (ASD).

ASD is a neurodevelopmental condition characterized by difficulties in social communication and restricted, repetitive behaviors (APA, 2013). Although ASD is not defined by language impairment per se, language difficulties—both structural and pragmatic—are common in ASD and may affect both spoken and signed language acquisition (e.g., Shield & Meier, 2012; Shield et al., 2017; Shield, Meier et al., 2015; Shield, Pyers et al., 2016). Numerous studies exploring bilingualism in autistic children exposed to two spoken languages have concluded that bilingual exposure is not harmful to autistic children: bilingual-exposed autistic children are not delayed in their language acquisition compared to monolingual-exposed autistic children (Hambly & Fombonne, 2012; Hastedt et al., 2023; Wang et al., 2018). Additionally, some studies have found that bilingual exposure may even advantage autistic children: for example, bilingually-exposed autistic toddlers make more communicative overtures than monolingual-exposed autistic toddlers (Valicenti-McDermott et al., 2013), and several recent studies have found that bilingually-exposed autistic children outperform monolingual-exposed autistic children on theory of mind tasks (Andreou et al., 2020; Dai et al., 2018; Lund et al., 2017; Meir & Novogrodsky, 2019; Peristeri et al., 2021; Peristeri, Tsimpli et al., 2024). These findings are clinically important given the fact that clinicians often recommend that parents adopt a monolingual approach (typically, English only) with their autistic children due to fears that bilingualism could be detrimental to their children's linguistic development (e.g., Kay-Raining Bird et al., 2012; Yu, 2013).

It has been suggested that the signed modality may be more accessible to some learners, including those with autism, due to its increased iconicity (Konstantareas et al., 1982), ease of prompting and reinforcement through touch (Stull et al., 1979), relatively slow rate of production (Klima & Bellugi, 1979), and ability to hold signs in a static position over time, permitting additional processing time. Despite this important work, little is known about autistic children exposed to a signed language and a spoken language.

This study represents a first step toward addressing this gap by presenting a detailed longitudinal case study of an autistic bimodal bilingual child. Prior work on deaf children has shown that autism can affect signed language development in ways that mirror spoken language profiles in autism, including echolalia in sign (Shield et al., 2016) and pronoun avoidance (Shield et al., 2015). One prior longitudinal case study of the child described in this paper examined the development of the four parameters of sign articulation—handshape, location, movement, and palm orientation—over the course of 10 years (Shield et al., 2020). The child showed persistent but gradually improving delays in American Sign Language (ASL) phonology. In particular, he showed frequent and enduring palm orientation errors, highlighting a potentially distinctive feature of sign language development in autism. The authors concluded that his improvements in the articulation of handshapes, locations, and movements reflected improvements in motor control, while persistent palm orientation reversals likely were a result of imitative and perceptual differences.

A recent case series (Shield, 2025) described the receptive language profiles of seven hearing autistic children with deaf parents who were exposed to both ASL and English from birth, including the child described in the current study. The children showed three distinct patterns: (1) severe receptive language impairment in both languages, (2) impaired comprehension in both modalities, with a slight ASL advantage, and (3) clear English dominance in receptive language. That study adds to the literature showing parallel delays in bilingual-exposed children with developmental language disorders (e.g., Paradis, 2007), while also challenging the notion that the signed modality is inherently more accessible to autistic children.

Although the recent study contributes to our understanding of receptive language development in autistic bimodal bilingual children, little is known about expressive language development in the two modalities. Indeed, no study to date has examined how any hearing autistic child with native exposure to a signed and a spoken language produces both languages (ASL and English) over time.

The current study follows the expressive language development of a hearing bimodal bilingual child with ASD from early childhood through adolescence. We ask the following motivating questions:

(1) What is the trajectory of expressive language development in each modality?

(2) Is one modality (spoken or signed) more accessible or advanced than the other?

(3) What are the unique features of bimodal bilingualism in autism?

In addressing these questions, we aim not only to document a hearing, autistic, bimodal bilingual's expressive language profile but also to explore broader theoretical questions about language representation, processing, and social adaptation in neurodiverse bimodal bilinguals.

Methods

Participant

The participant, referred to here pseudonymously as Morgan, is a hearing, left-handed male exposed from birth to both ASL and English. Morgan has two deaf parents who communicate primarily in ASL and one hearing younger sibling who is bilingually fluent in ASL and English. He was diagnosed with ASD and intellectual disability by a licensed clinical psychologist at age 2;10 through a comprehensive evaluation that included direct behavioral observation and standardized testing. Since diagnosis, Morgan has received a range of services including occupational therapy, speech-language therapy, and classroom accommodations. These services were primarily delivered in English. Morgan attends a mainstream hearing school. Written informed consent was obtained from Morgan's mother before data collection at each time point of observation, and his parents granted informal permission via personal communication for the current case study to be published.

Morgan was observed at four time points over the course of 10 years: at ages 4;11, 6;6, 9;11, and 14;11. At each point, expressive language was sampled and analyzed across both signed and spoken modalities.

Developmental History

Developmental assessments at 30 and 34 months, administered by a licensed clinical psychologist, confirmed early and persistent delays across domains, including gross and fine motor skills, receptive and expressive language, and adaptive functioning.

A summary of relevant assessments is provided in Table 1.

Table 1. Parent-Reported Test Results.

Note. AE: age equivalent; T: T-score; SS: standard score; mo: months; GM: gross motor; PS: problem solving; RL: receptive language; EL: expressive language; ASD: autism spectrum disorder.

Standardized Assessments

Formal testing was conducted at multiple time points to assess cognitive abilities and receptive language in both modalities (Table 2). Although in this paper we analyze Morgan's expressive language in both modalities, no formal assessments of expressive ASL or English were conducted. All assessments were administered by the first author, a hearing researcher who is fluent in both English and ASL. No interpreter was used for any assessment session.

Table 2. Standardized Assessments Administered by Researchers.

Note. SS: standard score (mean = 100, SD = 15); ASL: American Sign Language.

Scores across measures were consistently at least 2 standard deviations below the mean, confirming significant language delays in both modalities.

Data Collection

Expressive language data were drawn from structured and naturalistic interactions recorded as part of larger studies on ASL development. For each age, a 12-min language sample was selected from the longer recorded session and coded using ELAN software (ELAN, 2019). Samples were taken from segments that contained active interaction with minimal interruptions or camera/microphone issues. Although we considered coding the full session at each age, we retained a uniform 12-min window so that measures would be comparable across time points.

Session contexts varied: at age 4;11, Morgan was recorded playing at home with his deaf parents and hearing sibling; at 6;6, he engaged in structured tasks with his deaf mother and the hearing researcher (the first author); at 9;11, he participated in an Autism Diagnostic Observation Schedule, Second Edition (ADOS-2) session with a hearing examiner (a licensed clinical psychologist) fluent in ASL; and at 14;11, he engaged in conversation with his deaf mother. All sessions were conducted in the afternoon. Prompting strategies varied slightly by context but included open-ended questions (e.g., “What happened next?”) and modeling during play (e.g., offering toys or suggesting a story). Prompts were tailored to Morgan's communication level and delivered in both ASL and English, depending on his interlocutor (e.g., his parents tended to sign ASL with occasional voicing of English, while the ADOS-2 assessor spoke English with frequent accompanying signs).

Coding and Transcription

Transcription was performed by the second author, a hearing master's-level student proficient in ASL and a native speaker of English, and cross-checked for accuracy by the first author. Coding categories included the following:

Expressive speech and sign: Transcriptions of all intelligible spoken and signed utterances. Measures included mean length of utterance (MLU), number of total words, number of different words (NDW), and informal analysis of parts of speech. For sign utterances, MLU was calculated based on the number of discrete signs (excluding repetitions or fillers). All signs coded were citation forms without additional morphological markings. Deictic points were counted as pronouns if used referentially (e.g., pointing to a person). Nonmanual markers were not included in MLU counts.

Modality: Each utterance was categorized as spoken-only, signed-only, or code-blended (simultaneous sign and speech). Utterance boundaries were determined by pauses in speaking or signing.

Whispering: Spoken utterances significantly lower in volume, without voicing, and alongside ASL signs were classified as whispered speech (ASL-dominant code-blends), a behavior previously documented in young typically-developing codas (Petroj et al., 2014).

Echolalia: Utterances were coded as spontaneous, elicited, or echolalic (immediate or delayed), following Prizant and Rydell (1984). Immediate echolalia was defined as repetition within two conversational turns; delayed echolalia was defined as repetition occurring later in the same session with intervening content or the use of repetitive scripted phrases (e.g., “of course you can”).
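To make the length and diversity measures concrete, the following is a minimal sketch of how MLU, total tokens, and NDW could be computed from a transcribed sample. It is not the authors' analysis script. Several details are assumptions made for illustration: the function names, the filler list, counting MLU in words or signs rather than morphemes, and applying the same exclusion of fillers and immediate repetitions to speech that the text describes for sign. The example utterances are invented.

```python
from __future__ import annotations

from typing import Iterable

# Hypothetical filler list; the paper does not enumerate which items counted as fillers.
FILLERS = {"um", "uh"}


def clean_tokens(tokens: Iterable[str]) -> list[str]:
    """Lower-case tokens, then drop fillers and immediate repetitions."""
    cleaned: list[str] = []
    for tok in tokens:
        norm = tok.lower()
        if norm in FILLERS:
            continue
        if cleaned and cleaned[-1] == norm:
            continue  # immediate repetition of the previous word or sign
        cleaned.append(norm)
    return cleaned


def sample_measures(utterances: list[list[str]]) -> dict[str, float]:
    """Return MLU, total tokens, and number of different words (NDW)."""
    cleaned = [clean_tokens(u) for u in utterances]
    cleaned = [u for u in cleaned if u]  # skip utterances left empty after cleaning
    total = sum(len(u) for u in cleaned)
    ndw = len({tok for u in cleaned for tok in u})
    mlu = total / len(cleaned) if cleaned else 0.0
    return {"mlu": mlu, "total": total, "ndw": ndw}


if __name__ == "__main__":
    # Invented sign glosses and words, one inner list per utterance.
    sample = [["MONKEY", "MONKEY"], ["WANT", "BALL"], ["um", "turtle"]]
    print(sample_measures(sample))
```

In this sketch, each utterance is a list of glosses or words already segmented by the transcriber, so the utterance-boundary decision (pauses in speaking or signing) is made before the computation.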

Reliability

A second coder, a trained undergraduate research assistant, recoded 5 min of each 12-min sample (approximately 42% of the data), with >90% agreement across coding categories.
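The reliability figures can be illustrated with a short sketch. The text reports only the level of agreement, not the formula, so simple point-by-point percent agreement is assumed here. The function name and the coded labels are hypothetical.

```python
from typing import Sequence


def percent_agreement(coder_a: Sequence[str], coder_b: Sequence[str]) -> float:
    """Point-by-point percent agreement between two coders (assumed metric)."""
    if len(coder_a) != len(coder_b):
        raise ValueError("Both coders must label the same set of utterances.")
    if not coder_a:
        raise ValueError("At least one coded utterance is required.")
    matches = sum(a == b for a, b in zip(coder_a, coder_b))
    return 100.0 * matches / len(coder_a)


# Share of each sample that was recoded: 5 of 12 minutes.
RECODED_SHARE = 100.0 * 5 / 12

if __name__ == "__main__":
    # Invented modality codes for four utterances.
    first = ["spoken", "signed", "code-blended", "spoken"]
    second = ["spoken", "signed", "code-blended", "signed"]
    print(f"Agreement: {percent_agreement(first, second):.1f}%")
    print(f"Recoded share: {RECODED_SHARE:.1f}%")
```

Percent agreement does not correct for chance agreement. A chance-corrected statistic such as Cohen's kappa would be an alternative, but the text does not indicate that one was used.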

Results

Syntactic Complexity

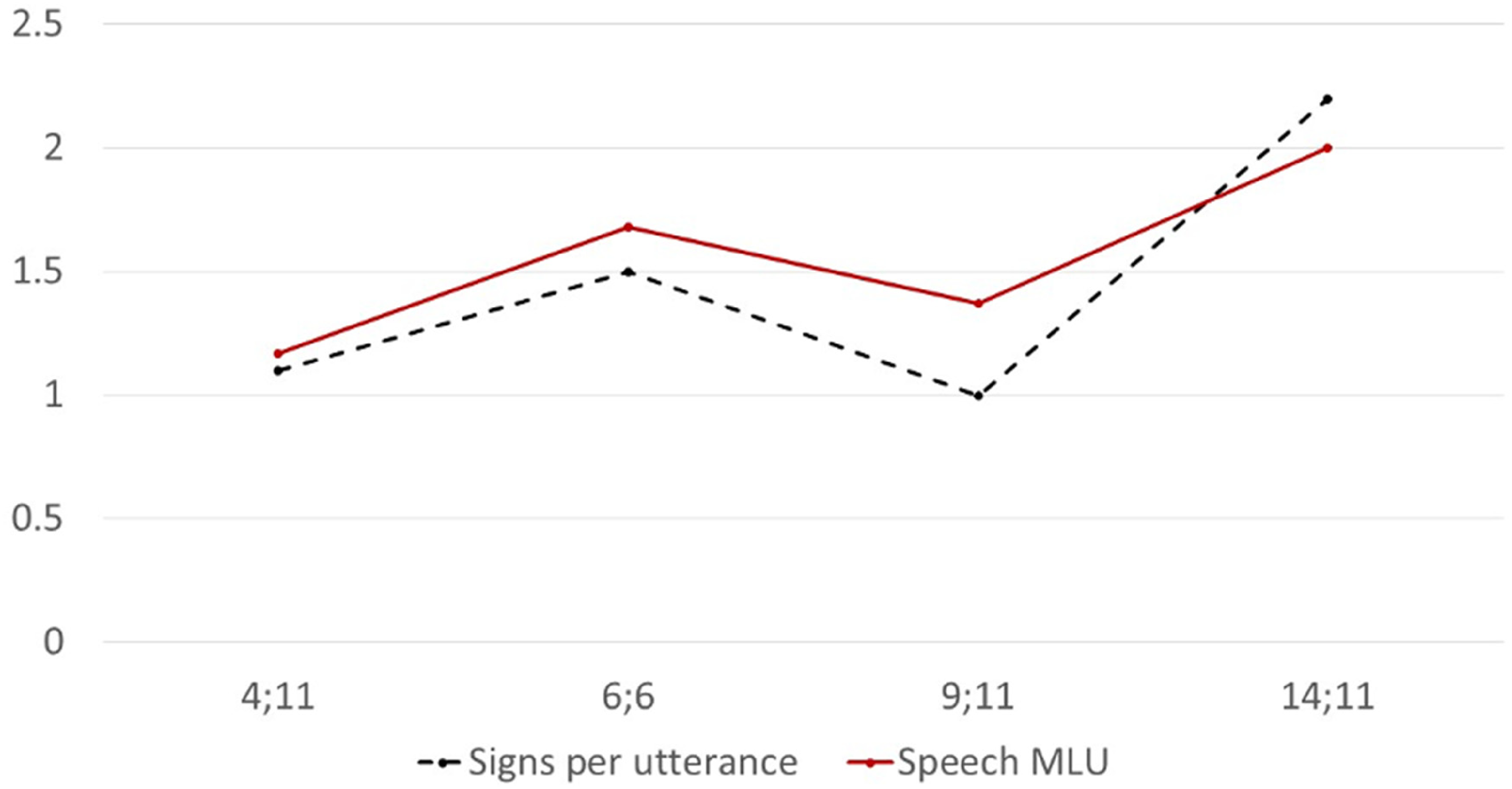

Morgan's syntactic complexity remained low across time in both modalities. MLU in speech and number of signs per utterance in ASL followed parallel trajectories, increasing modestly over time but remaining significantly below age expectations (Figure 1).

Figure 1. Mean utterance length in speech and sign across ages.

Table 3 shows representative utterances from each category (spontaneous, elicited, and echolalic) in ASL and English. By age 14;11, Morgan's longest utterances in both modalities reached four elements, though several were echolalic in nature.

Table 3. Representative Utterances in ASL and English at Each Time Point.

Note. ASL: American Sign Language.

This was a three-sign production of Morgan's name sign followed by the signs NAME and SIGN.

Lexical Quantity and Diversity

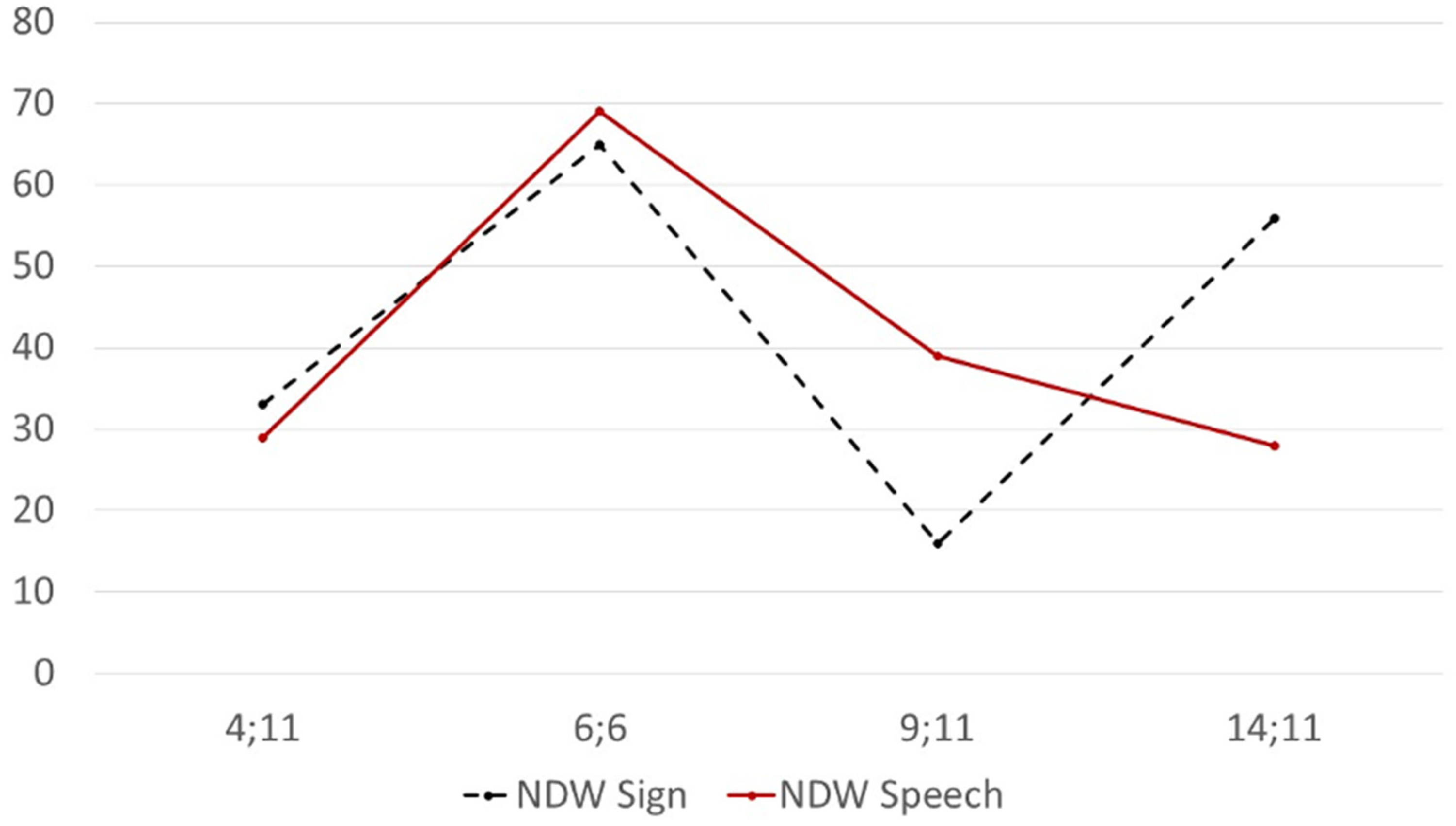

Across ages, Morgan produced 34–180 total spoken words and 32–165 total signs per 12-min sample (Figure 2). Rates varied by interaction partner and context. Lexical diversity, measured by NDW, ranged from 28 to 69 in speech and 16 to 65 in sign (Figure 3). At some time points (e.g., 14;11), Morgan used more signs than words; at others (e.g., 6;6 and 9;11), the reverse was true.

Figure 2. Number of total words (NTW) in sign and speech produced per 12-min sample.

Figure 3. Number of different words (NDW) in sign and speech per 12-min sample.

Part-of-speech analysis revealed a predominance (>50%) of nouns in both modalities across all ages, with some use of verbs in both ASL and English, determiners and interjections in English, and limited use of adjectives and adverbs in both languages. We examined cross-modality overlap in vocabulary and found that many lexical items were expressed in both ASL and English across time points, suggesting shared semantic representation. Most of these lexical items were produced as simultaneous ASL–English code-blends, discussed in the next section. However, some signs and words were unique to one modality (e.g., the scripted phrase “of course you can” was only produced in English without accompanying ASL signs).

Modality Use

Morgan used spoken-only, signed-only, and code-blended utterances at all ages (Figure 4). Modality choice appeared sensitive to interaction partner: at 6;6, when interacting with both his deaf mother and a hearing researcher, he produced the highest proportion of code-blended utterances. At 14;11, during conversation with his deaf mother, he relied more heavily on sign alone.

Figure 4. Proportion of utterances by modality at each age.
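As a rough illustration of how per-session modality proportions of this kind could be derived from the coded utterances, consider the sketch below. The label strings, the function name, and the example data are assumptions for illustration and do not reproduce Morgan's actual counts.

```python
from collections import Counter
from typing import Iterable

# The three modality categories described in the coding scheme.
MODALITIES = ("spoken", "signed", "code-blended")


def modality_proportions(labels: Iterable[str]) -> dict[str, float]:
    """Proportion of utterances in each modality for one session."""
    counts = Counter(labels)
    unknown = set(counts) - set(MODALITIES)
    if unknown:
        raise ValueError(f"Unexpected modality labels: {sorted(unknown)}")
    total = sum(counts.values())
    if total == 0:
        return {m: 0.0 for m in MODALITIES}
    return {m: counts[m] / total for m in MODALITIES}


if __name__ == "__main__":
    # Invented labels for one hypothetical session.
    session = ["spoken", "code-blended", "code-blended", "signed"]
    print(modality_proportions(session))
```

Reporting proportions rather than raw counts makes sessions comparable even when the total number of utterances differs across ages and interaction partners.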

Code-blends also sometimes reflected ASL syntax: at age 6;6 Morgan signed

Whispered Speech

Whispered utterances (i.e., ASL-dominant code-blends) were observed at ages 4;11 and 6;6, consistent with prior reports of this phenomenon in bimodal bilingual children (Petroj et al., 2014). Whispered English words were produced at the same time as the synonymous sign in ASL, indicating simultaneous activation of both languages. At 4;11, he produced seven whispered code-blends, including the words “monkey” (three times), “yeah,” “elephant,” “turtle,” and “red.” At age 6;6, he produced three whispered code-blends: “okay,” “turtle,” and “bowl.” No whispered speech was observed at later ages.

Echolalia and Utterance Type

Morgan produced echolalic utterances at all four time points in both modalities. These included both immediate and delayed echoes/scripted phrases, often embedded in otherwise elicited or spontaneous interactions. The majority of his utterances were elicited, with spontaneous productions remaining limited throughout the study (Figure 5). At age 9;11, Morgan produced more spontaneous utterances in both English and ASL than at other time points. This session, conducted using the ADOS-2 protocol with a hearing researcher fluent in ASL, may have facilitated greater spontaneous language due to its structured prompts and emphasis on social-communicative behaviors.

Figure 5. Type of utterance.
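The distinction between immediate and delayed echolalia given in the coding scheme (repetition within two conversational turns versus later in the session) can be sketched as a simple rule. This is a deliberate simplification and not the coding procedure itself: it matches only exact repetitions of another speaker's utterance, ignores scripted phrases and cross-modal echoes, and uses invented turns. The function name and the `window` parameter are hypothetical.

```python
from typing import Sequence, Tuple

Turn = Tuple[str, str]  # (speaker, utterance text or gloss)


def classify_echo(turns: Sequence[Turn], index: int, window: int = 2) -> str:
    """Label the turn at `index` as 'immediate', 'delayed', or 'not_echo'.

    Searches backward for the nearest turn by a different speaker with the
    same (case-insensitive) content and compares the distance in turns to
    `window`.
    """
    speaker, text = turns[index]
    target = text.strip().lower()
    for back in range(1, index + 1):
        prev_speaker, prev_text = turns[index - back]
        if prev_speaker != speaker and prev_text.strip().lower() == target:
            return "immediate" if back <= window else "delayed"
    return "not_echo"


if __name__ == "__main__":
    # Invented exchange for illustration only.
    session = [
        ("mother", "want cookie"),
        ("child", "want cookie"),
        ("mother", "where is the ball"),
        ("child", "ball"),
        ("mother", "good job"),
        ("child", "want cookie"),
    ]
    for i, (who, _) in enumerate(session):
        if who == "child":
            print(i, classify_echo(session, i))
```

In practice, echolalia coding relies on human judgment about partial repetitions, prosody, and context, so a rule of this kind could at most flag candidate utterances for a coder to review.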

A particularly novel finding emerged at age 6;6: Morgan produced a cross-modal echo, in which he responded to a signed utterance with a spoken echo. Specifically, after his deaf mother signed

Similarly, at age 14;11 Morgan signed

Discussion

This study presents the first known longitudinal case study of expressive language development in a bimodal bilingual (ASL–English) child with autism. Over a 10-year period, we tracked the spoken and signed language development of this hearing child with deaf parents, documenting his syntactic and lexical abilities, modality use, and echolalic behaviors. Our goal was to compare developmental trajectories across modalities and to identify phenomena unique to the intersection of bimodal bilingualism and autism.

Parallel Language Delays Across Modalities

Our first research question asked whether sign and speech would follow similar developmental trajectories. The results indicate that expressive language was markedly delayed in both modalities. Syntactic complexity, as measured by MLU, remained low across time points in both languages, and part-of-speech analyses showed a predominance of nouns with limited grammatical elaboration. These findings are consistent with prior work suggesting that bilingual children with developmental language disorders often experience parallel delays across both languages (Paradis, 2007).

Morgan's persistent language delay across modalities reinforces the interpretation that his difficulties stem from core challenges with language, rather than a modality-specific processing issue. His low receptive language scores in both ASL and English further support this conclusion, with standardized assessments confirming significant difficulties with comprehension despite lifelong exposure to both languages. These findings argue against the idea that one modality may compensate for limitations in the other in cases of broader language delays. We find evidence that language acquisition challenges in autism may originate in accessing and forming linguistic representations, rather than input limitations or modality-specific processing difficulties.

No Consistent Modality Advantage

Our second question explored whether signed language might offer an advantage over speech, as has been suggested in the context of augmentative and alternative communication (AAC) for minimally speaking children with autism (Bonvillian et al., 1981; Oxman et al., 1978). In Morgan's case, no such advantage was observed. His expressive output in ASL mirrored that of English in complexity and lexical diversity, and both were delayed relative to age expectations. Although Morgan produced more signs than words at age 14;11, this pattern appeared to reflect contextual factors (i.e., interaction with a deaf parent) rather than an underlying shift in language proficiency. However, his production of ASL-dominant code-blends (whispers) and the production of unusual English syntax in code-blended utterances (e.g., “that way camera”) could indicate dominance of ASL when both languages are activated.

The use of manual sign as a replacement for speech may be appropriate for some children with intact language systems but speech-motor challenges (e.g., apraxia), but our findings suggest that sign may not always be more accessible for children with core language (i.e., structural) difficulties. These findings have practical implications: recommendations to use sign as an AAC strategy should consider the child's broader language profile, not just speech output.

Unique Features of Bimodal Bilingualism in Autism

Our third research question examined whether Morgan displayed any phenomena unique to bimodal bilingual development in the context of autism. Several features stood out.

Code-blending, or the simultaneous production of spoken and signed elements, was present at all time points. This pattern is well-documented in typically developing codas (Emmorey et al., 2013; Lillo-Martin et al., 2014) and reflects the capacity of bimodal bilinguals to activate both languages concurrently. Interestingly, Morgan produced the highest proportion of code-blends during interactions with both a deaf and hearing interlocutor, suggesting a strategic use of both languages to navigate a dual-language environment.

Whispered speech, or ASL-dominant code-blends, was also observed at younger ages. Morgan's whispers were brief, single-word utterances that co-occurred with signing, and they declined with age, consistent with previous findings that whispering is more common in younger bimodal bilinguals (Petroj et al., 2014). We interpret whispers as additional evidence of the simultaneous activation of ASL and English during language production. However, whispers also indicate that the speaker is making an effort to suppress speech, insofar as he is not voicing while speaking. Thus, these ASL-dominant code-blends, which were produced in the context of interactions with a deaf interlocutor (both parents at 4;11 and his mother at 6;6), lend additional support to the idea that Morgan was sensitive to the preferred language modality of his conversation partners.

Most notably, we observed two cross-modal echoes—a novel and previously undocumented phenomenon. At age 6;6, Morgan responded to a signed utterance produced by his mother (

First, it suggests that Morgan was not simply repeating surface-level linguistic material but was engaging in semantic processing and modality switching, translating the meaning of the signed input into a spoken output or vice versa. This challenges long-standing assumptions that echolalia in autism is necessarily noncommunicative or devoid of meaning (Fay & Schuler, 1980; Lovaas, 1977). Instead, it supports the assertion that echolalia can be communicative and meaningful (e.g., Prizant & Rydell, 1984), especially when embedded in broader bilingual or bimodal contexts.

Second, the presence of cross-modal echoes raises new questions about how autistic children with dual-language exposure process and organize linguistic input. In Morgan's case, the ability to interpret a signed utterance and respond with an equivalent spoken phrase (or a signed echo of a spoken word) indicates a capacity for modality-independent semantic mapping, even in the presence of significant expressive language delays. Our findings are consistent with the language synthesis model (Lillo-Martin et al., 2016), which posits that bimodal bilinguals draw from a shared syntactic system to produce both unimodal and bimodal (code-blended) utterances. This rare behavior may reflect a level of linguistic integration that has been underestimated in autistic echolalia and warrants further theoretical and empirical attention.

Additionally, this observation adds to a growing literature suggesting that bimodal bilingualism may reveal novel linguistic strategies in autistic individuals—strategies that may not be accessible in monolingual or unimodal bilingual populations.

Finally, despite Morgan's relatively limited language proficiency, he showed clear sensitivity to the language preferences of his interlocutors, often selecting the appropriate modality for communication. His shifting use of ASL, English, and code-blends depended on his conversation partners, suggesting pragmatic awareness and underscoring the need to study pragmatic strengths in autistic children alongside their challenges.

Limitations and Future Directions

This study provides a unique longitudinal view of expressive language development in a bimodal bilingual child with ASD, but several limitations constrain the generalizability and interpretability of our findings.

First, the study was not originally designed as a longitudinal investigation. As a result, data collection contexts varied across time points, including differences in interaction partners, tasks, and elicitation styles. While we attempted to control for these differences by selecting representative 12-min samples, contextual variability may have influenced the quantity and modality of language produced at each age. Future studies should employ standardized, consistent elicitation protocols across time to enable more robust comparisons.

Second, this case study focuses on a single child. Although rich in detail, the findings cannot be generalized to the broader population of autistic bimodal bilingual children without additional data. Language outcomes in this population likely vary depending on multiple factors, including cognitive profile, language exposure, educational setting, and family language practices. The participant in this study presented with both intellectual disability and language delays. Future research should examine a larger cohort of autistic bimodal bilingual children, including those with typical cognition and language ability, in order to identify patterns, subgroups, and developmental trajectories.

Third, the conversational partners differed across sessions. While some interactions took place with deaf parents, others involved the first author, a hearing researcher. Given that Morgan's language output varied by communication partner, it is possible that social-pragmatic factors—such as familiarity, communication style, or perceived audience—played a role in his language modality choices and quantity. Future work should examine how interlocutor characteristics influence language use in autistic bimodal bilinguals.

Despite these limitations, this study opens several avenues for future research. Large-scale, mixed-methods studies could examine the prevalence and nature of code-blending, whispering, and cross-modal translation in autistic codas and compare them to their nonautistic peers. In addition, neurocognitive or processing-based investigations could explore how bimodal bilingual children with autism map meaning across modalities, and whether echolalia in such contexts supports language development. Finally, clinical research is needed to determine how best to support language growth in autistic children exposed to more than one language modality, with an emphasis on preserving access to both home and community languages.

Conclusion

This case study provides the first longitudinal documentation of expressive language development in a bimodal bilingual child with autism. Across 10 years of observation, Morgan demonstrated parallel delays in spoken and signed language, with no evidence of a modality-specific advantage. His expressive output in both English and ASL remained syntactically and lexically limited, despite early and consistent exposure to both languages. These findings challenge the assumption that sign language is inherently more accessible for autistic learners and suggest that expressive language difficulties may arise from deeper challenges with accessing linguistic knowledge, regardless of modality. At the same time, Morgan exhibited several phenomena unique to bimodal bilingual development, including code-blending and whispering, as well as the first documented cross-modal echoes, which suggest meaningful processing of language across modalities. These findings underscore the importance of studying bilingualism—including bimodal bilingualism—within neurodiverse populations. Moreover, Morgan's ability to shift modalities based on the language background of his interlocutor reflects pragmatic sensitivity that may not be captured by standardized assessments alone. Such adaptive behaviors deserve greater attention as strengths in autistic communication profiles. The observation of whispered speech alongside sign, and of semantically integrated cross-modal echoes, points to the possibility that bimodal bilingual autistic children may engage in internal language planning or modality translation, offering new avenues for understanding metalinguistic processing in this population.

This study highlights the importance of including bimodal bilingual children in autism research—not as exceptions, but as essential to understanding the full range of neurodiverse language development. Further research is needed to understand the full range of communicative strategies used by autistic bimodal bilinguals and to inform best practices for supporting their language development across both modalities.

Footnotes

Ethical Approval and Informed Consent Statements

The research described in this manuscript was approved by the institutional review boards of Boston University (2471e) and Miami University (01375). Written informed consent was obtained from a parent prior to data collection, including consent to publish the data collected in a de-identified format.

Author Contributions

AS conceived the study and carried out data collection, supervised data analysis, and wrote and edited the final manuscript. KC assisted with data collection, coded data, analyzed data, and wrote an initial draft of the manuscript.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Institute on Deafness and Other Communication Disorders [grant number 1F32 DC011219] and an American Speech-Language-Hearing Association (ASHA) Advancing Academic-Research Career (AARC) Award to the first author, and by a Dean's Scholar Award from the College of Arts and Sciences at Miami University to the second author.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The data that support the findings of this study are not publicly available due to the sensitive nature of the participant information and the nature of a case study, which could compromise confidentiality. The participant is a member of a small and identifiable population (hearing child of deaf adults), and despite de-identification, there remains a risk of deductive disclosure. As such, data sharing is not possible under the terms of our ethical approval.