Abstract

Background & Aims

Many young children with autism spectrum disorder (ASD) demonstrate striking delays in early vocabulary development. Experimental studies that teach the meanings of novel nonwords can determine the effects of linguistic and attentional factors. One factor that may affect novel referent selection in children with ASD is visual perceptual salience—how interesting (i.e., striking) stimuli are on the basis of their visual properties. The goal of the current study was to determine how the perceptual salience of objects affected novel referent selection in children with ASD and children who are typically developing (TD) of similar ages (mean age 3–4 years).

Methods

Using a screen-based experimental paradigm, children were taught the names of four unfamiliar objects: two high-salience objects and two low-salience objects. Their comprehension of the novel words was assessed in low-difficulty and high-difficulty trials. Gaze location was determined from video by trained research assistants.

Results

Contrary to initial predictions, findings indicated that high perceptual salience disrupted novel referent selection in the children with ASD but facilitated attention to the target object in age-matched TD peers. The children with ASD showed no significant evidence of successful novel referent selection in the high-difficulty trials. Exploratory reaction time analyses suggested that the children with autism showed “stickier” attention—had more difficulty disengaging (i.e., looking away)—from high-salience distracter images than low-salience distracter images, even though the two images were balanced in salience for any given test trial.

Conclusions and Clinical Implications

These findings add to growing evidence that high perceptual salience has the potential to disrupt novel referent selection in children with ASD. These results underscore the complexity of novel referent selection and highlight the importance of taking the immediate testing context into account. In particular, it is important to acknowledge that screen-based assessments and screen-based learning activities used with children with ASD are not immune to the effects of lower level visual features, such as perceptual salience.

As a group, young children with autism spectrum disorder (ASD) demonstrate striking delays in early vocabulary development (Charman et al., 2003; Ellis Weismer & Kover, 2015; Luyster et al., 2008). Though some individuals with ASD eventually acquire average or above average vocabulary skills (Fein et al., 2013; Kjelgaard & Tager-Flusberg, 2001; Tyson et al., 2014), many experience lifelong language impairments (Howlin et al., 2004). In recent years, autism researchers have undertaken a concentrated effort to better understand why these vocabulary delays occur and how best to facilitate vocabulary development in children with ASD (Arunachalam & Luyster, 2016, 2018). One fruitful approach has been the use of experimental studies in which children with ASD are taught the meanings of novel nonwords (e.g., labels for unfamiliar objects) in various conditions (McGregor et al., 2013; Swensen et al., 2007; Tenenbaum et al., 2017). Using nonwords ensures that children have no prior experience with the new words and their meanings. This approach is advantageous because it can determine how linguistic and attentional factors affect the earliest stages of word learning in children with ASD—something not possible in observational research.

Depending on their design, novel word studies can address various phases of word learning, from initial exposure to longer-term retention (Horst & Samuelson, 2008; McMurray et al., 2012). Some studies measure fast mapping—children's ability to identify a labeled referent after only minimal exposure to a novel word and its meaning (Carey & Bartlett, 1978; McDuffie et al., 2012; Venker et al., 2016). Other studies—often conducted with school-aged children or adolescents with ASD—measure retention, consolidation, and/or generalization of new words over longer periods of time (Hartley et al., 2019, 2020; Henderson et al., 2014; Norbury et al., 2010).

Novel word studies also differ in how they measure novel referent selection (i.e., identification of word meaning). Some studies require children to make an explicit selection—for example, by picking up or pointing to a novel object, or touching an image of a novel object on a tablet screen. This type of design can be beneficial because it elicits a single, definitive response, but it can also introduce unintended confounds. Children with ASD—particularly toddlers and preschoolers—may perform poorly on such tasks not because they failed to learn, but because they were unwilling (or unable) to produce a prompt, purposeful response to an examiner's request (Charman et al., 2003; Muller & Brady, 2016; Venker et al., 2016; Venker & Kover, 2015). As an alternative, novel referent selection can be measured by monitoring children's gaze to named versus unnamed objects on a screen (Horvath et al., 2018; Norbury, 2016; Potrzeba et al., 2015; Swensen et al., 2007; Venker, 2019). Eye-gaze studies are advantageous because they can measure recognition of spoken words in real time with limited task demands (Brady et al., 2014; Plesa Skwerer et al., 2015; Sasson & Elison, 2012; Venker & Kover, 2015). Because they examine in-the-moment attention, eye-gaze studies are particularly well suited to investigate how the visual properties of objects affect novel referent selection.

One visual property that has the potential to affect novel referent selection in children with ASD is visual perceptual salience—how interesting (i.e., striking) stimuli are on the basis of their visual properties (e.g., color, brightness, visual complexity; Parish-Morris et al., 2007; Pruden et al., 2006; Wang et al., 2015). Work by Parish-Morris et al. (2007) revealed successful novel word identification in young children with ASD when the target object had high perceptual salience, but not when the target object had low salience. Relatedly, Akechi et al. (2011) found that overlapping gaze and salience (i.e., movement) cues facilitated fast mapping in school-aged children with ASD.

Depending on the learning context, however, perceptual salience can also be disruptive. Gliga et al. (2012) found that the presence of a high-salience distracter object that changed color or had moving parts disrupted learning in infants at high familial risk for ASD. Though adults with ASD can rely on social cues to determine word meaning in the absence of conflicting salience, their performance decreases to chance levels when a distracter object moves at the same time that the gaze cue is presented (Aldaqre et al., 2015). Together, these findings suggest that perceptual salience has powerful effects on individuals with ASD—facilitating early word learning in some cases, but disrupting it in others. Though perceptual salience also affects fast mapping and referent identification in young children who are TD (typically developing; Houston-Price et al., 2006; Moore et al., 1999), the influence of perceptual salience appears to be stronger and persist for a longer developmental period in ASD than in typical development (Hollich et al., 2000; Pruden et al., 2006; Venker et al., 2018).

The mechanisms by which perceptual salience may facilitate (or disrupt) novel referent selection are not fully understood, but children's attention to and disengagement from objects have been highlighted as likely contributing factors (Akechi et al., 2011; Aldaqre et al., 2015; Gliga et al., 2012; Venker, 2017; Venker et al., 2018). Visual disengagement refers to the ability to look away from something in the current focus of attention to attend to something new (Posner & Cohen, 1984). Numerous studies have found that individuals with ASD take longer (and are less likely) than individuals without ASD to disengage from their current focus of attention, a phenomenon sometimes referred to as “sticky attention” (Landry & Bryson, 2004; Sacrey et al., 2014; but see Fischer et al., 2014, 2015). As discussed by Sacrey et al. (2014), high-salience stimuli (e.g., dynamic, colorful shapes) are more likely to elicit sticky attention (Elsabbagh et al., 2013; Landry & Bryson, 2004; Zwaigenbaum et al., 2005) than stimuli with low salience (e.g., static crosses; Kawakubo et al., 2004; Todd et al., 2009). These findings suggest that visual stimuli with high perceptual salience capture and maintain attentional engagement in individuals with ASD more strongly than stimuli with low perceptual salience (Sacrey et al., 2014), which could affect novel referent selection.

The goal of the current study was to determine how the perceptual salience of objects affects novel referent selection in children with ASD and children who are TD at a similar age. Unlike previous work investigating perceptual salience and fast mapping in preschool-aged children with ASD (Parish-Morris et al., 2007), we measured novel referent selection by monitoring children's eye movements to named versus unnamed images in real time. To rule out uncertainty about the initial labeling event, each teaching trial presented a single object-label pairing at a time. The purpose of using this unambiguous teaching context was to increase the likelihood of successful novel referent selection in the children with ASD. Given previous evidence that perceptual salience can facilitate the initial stages of word learning in individuals with ASD (Akechi et al., 2011; Parish-Morris et al., 2007), we predicted that the children with ASD would show significantly better novel referent selection in the high-salience condition than the low-salience condition. Because they were older than the developmental period during which salience exerts its strongest effects, we did not expect the TD children to be significantly affected by the salience manipulation (Hollich et al., 2000; Pruden et al., 2006), as indicated by similar (strong) performance across the low-salience and high-salience conditions.

Method

Procedure

Children and their families visited the research lab on two different days. Children completed standardized assessments of language and cognitive skills, as well as several eye-gaze tasks that measured language processing and novel referent selection. Standardized assessments and eye-gaze tasks were interspersed to maximize children's engagement. Typically, two eye-gaze tasks were completed on each day. The novel referent selection task of interest in the current study took place at the end of the second day. Receptive and expressive language skills were assessed using the Auditory Comprehension Scale and the Expressive Communication Scale of the Preschool Language Scales, 5th Edition (PLS-5; Zimmerman et al., 2011). The PLS-5 is an omnibus language assessment that measures children's understanding and expression of language concepts related to vocabulary, grammar, pragmatics, and early literacy. Nonverbal problem-solving skills were assessed using the Visual Reception Scale of the Mullen Scales of Early Learning (Mullen, 1995). The Mullen Visual Reception items measure visual discrimination, memory, and problem solving without requiring children to produce a spoken response (e.g., through picture matching).

Participants

Participants were 16 children with ASD (mean age = 41 months) and 25 children who are TD (mean age = 48 months) taking part in a broader study of language processing and novel referent selection. Participants were recruited from across the lower peninsula of Michigan. All participants were monolingual and spoke English as their first language. The TD children were not reported by their parents to have any developmental delays and showed no signs of having ASD as indicated by the Lifetime Form of the Social Communication Questionnaire (Rutter et al., 2003), an ASD screener. All children in the ASD group had existing diagnoses of ASD, per parent report. In addition, we confirmed ASD diagnoses within the study using the Autism Diagnostic Observation Schedule, 2nd Edition (Lord, Luyster, et al., 2012; Lord, Rutter, et al., 2012). The ADOS-2 also provided a measure of autism severity (ADOS-2 comparison scores; see Table 1).

Table 1. Participant descriptive information.

Note. Receptive and expressive language standard scores were from the Preschool Language Scales, 5th Edition, Auditory Comprehension and Expressive Communication scales, respectively. PLS-5 standard scores have a mean of 100 and SD of 15. The Mullen Scales of Early Learning t-score for the Visual Reception Scale has a mean of 50 and SD of 10. Comparison scores from the Autism Diagnostic Observation Schedule, 2nd Edition, represent autism severity and range from 1–10, with 10 being most severe.

indicates a significant group difference at p < .001.

Table 1 presents participant descriptive information. The ASD and TD groups did not significantly differ in chronological age (p = .17). Mean PLS-5 scores were significantly lower in the children with ASD than in the children who are TD (ps < .001). All TD children had standard scores within 1 SD of the mean on both scales of the PLS-5, indicating receptive and expressive language skills within normal limits. On average, the TD children scored at the 81st percentile on the Auditory Comprehension Scale and at the 77th percentile on the Expressive Communication Scale. The majority of children with ASD scored more than 1 SD below age expectations on both scales of the PLS-5, indicating clinically significant delays in receptive and expressive language skills. Two children with ASD scored in the normal range on the Auditory Comprehension Scale, and one child with ASD scored in the normal range on the Expressive Communication Scale. On average, the children with ASD scored at the 12th percentile on the Auditory Comprehension Scale and at the 8th percentile on the Expressive Communication Scale.

On average, Mullen Visual Reception scores were significantly lower in the ASD group than in the TD group. All TD children had t-scores within the normal range and, on average, scored at the 73rd percentile. All children with ASD had t-scores more than 1 SD below the mean on the Mullen Visual Reception Scale, indicating moderate to severe delays in nonverbal problem-solving skills. On average, the children with ASD scored at the 26th percentile.

Novel referent selection task

Using a screen-based experimental task, children were taught the names of four novel objects: two high-salience objects and two low-salience objects. To limit auditory distraction, the task was conducted in a soundproof booth. To limit visual distraction, the walls were covered in black cloth. In most cases, children sat in a chair on their parent's lap. In a few cases, older children sat independently in the chair. Visual stimuli were presented on a 55-inch screen mounted on the wall of the soundproof booth. Auditory stimuli were presented from a central speaker at the base of the screen. A video camera below the screen recorded children's faces as they participated in the tasks, which allowed for offline manual coding of gaze location. Parents wore opaque sunglasses to prevent them from seeing the visual stimuli and unintentionally affecting their children's behaviors.

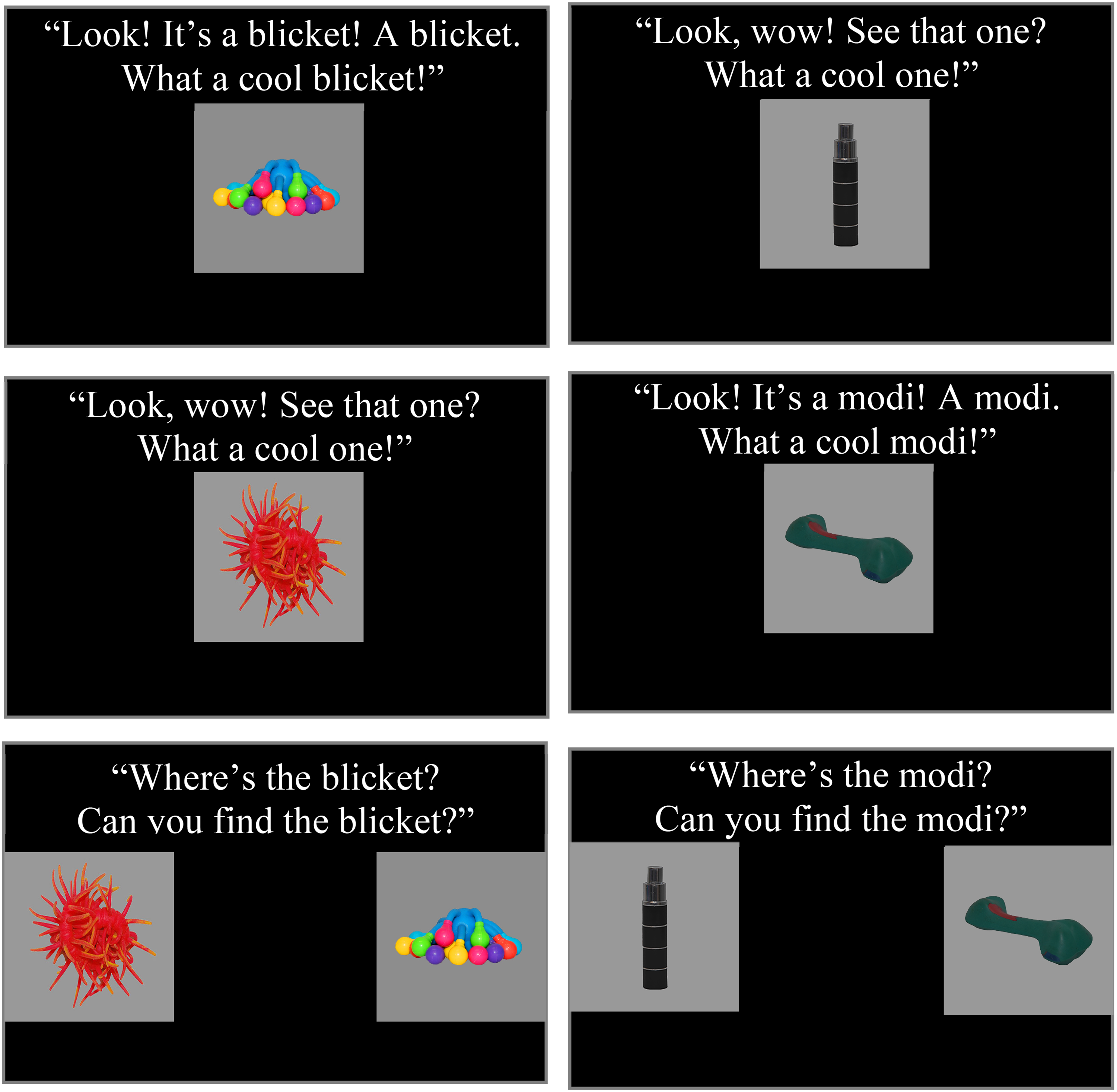

The novel referent selection task was composed of teaching trials, low-difficulty test trials, and high-difficulty test trials (see Table 2). Attention-grabbing video clips (e.g., a dynamic sunset with classical music) were interspersed to maintain engagement. Across the task, children heard a total of four novel labels: two for high-salience objects and two for low-salience objects. The full task lasted approximately 2½ min. Teaching trials presented a single object on the screen, along with auditory stimuli either labeling the object three times (e.g., Look! It's a blicket! A blicket. What a cool blicket!) or drawing attention to the object without labeling it (e.g., Look, wow! See that one? What a cool one!). The labeled object was the target in the low-difficulty test trials that immediately followed each pair of teaching trials, and the unlabeled object was the distracter. Each pair of teaching trials presented either low-salience or high-salience objects; salience levels were not mixed.

Table 2. Sequence of teaching and test trials.

Note. Teaching trials presented 1 image at a time. Test trials presented 2 images at a time. Low-difficulty test trials occurred immediately after each teaching sequence and only required children to differentiate a labeled object from an object that had been presented in one of the 2 preceding trials (“Look, wow! See that one? What a cool one”) but not labeled. High-difficulty test trials required children to differentiate two previously labeled objects. The full task lasted approximately 2½ min.

Immediately following each pair of teaching trials, a low-difficulty test trial displayed the labeled (target) object and unlabeled (distracter) object that had just been presented and asked children to find the target (e.g., Where's the blicket? Can you find the blicket?). These test trials were considered to have low difficulty because they occurred immediately after the teaching sequence and because they only required children to differentiate an object that had been labeled from an object that had not been labeled (see Figure 1).

Figure 1. Example teaching trials and low-difficulty test trials for the high-salience condition (left) and the low-salience condition (right).

Following the teaching trials and low-difficulty test trials, four high-difficulty test trials were presented. The high-difficulty test trials began 1 min 45 s into the task. Each high-difficulty test trial displayed either the two high-salience objects or the two low-salience objects that had been previously labeled and named one of them (e.g., Where's the blicket? Can you find the blicket?). Each novel label was probed once. These test trials were considered to have high difficulty because they did not occur immediately after a given teaching sequence and because they required children to differentiate two previously labeled objects (see Figure 2).

Figure 2. Examples of high-difficulty test trials for the high-salience condition (left) and low-salience condition (right).

Novel words and unfamiliar objects were obtained from the Novel Object and Unusual Name (NOUN) Database (Horst & Hout, 2016). The novel labels were blicket, gazzer, teebu, and modi. Auditory stimuli were recorded in a child-friendly voice by a female speaker native to the geographic area in which the study was conducted. Novel objects were selected to have either high salience or low salience. High-salience objects were bright, colorful, and visually complex (i.e., had multiple parts). Low-salience objects were less bright, less colorful, and did not have multiple parts. To ensure that specific aspects of the task design did not influence children's performance, two versions of the task were created, with object labels and target sides counterbalanced across the two versions.

All test trials lasted 6.790 s. The onset of the target noun occurred 1.888 s into the trial. The target noun was presented a second time at 5.286 s into the trial; however, we did not analyze the second noun presentation because our focus was on the initial recognition of the target word. We established a window of analysis that began 300 ms after the onset of the initial target noun presentation and lasted for 2,000 ms (i.e., from 2.188 s to 4.188 s into the trial). This analysis window allowed adequate time for children to hear the start of the target word and initiate a related eye movement.
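The window boundaries follow directly from the timing values reported above. The arithmetic can be sketched as follows, working in integer milliseconds to avoid rounding issues; the function and constant names are illustrative and are not taken from the study's software.

```python
# Timing values reported in the text, in milliseconds.
NOUN_ONSET_MS = 1888      # onset of the first target noun within the trial
WINDOW_DELAY_MS = 300     # window opens this long after noun onset
WINDOW_LENGTH_MS = 2000   # window duration


def analysis_window(noun_onset_ms, delay_ms=WINDOW_DELAY_MS, length_ms=WINDOW_LENGTH_MS):
    """Return (start, end) of the analysis window in ms from trial onset."""
    start = noun_onset_ms + delay_ms
    return start, start + length_ms


window_start_ms, window_end_ms = analysis_window(NOUN_ONSET_MS)
print(window_start_ms, window_end_ms)
```

With the reported noun onset, the window spans 2,188 ms to 4,188 ms from trial onset, which ends before the second noun presentation at 5,286 ms.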

Manual eye-gaze coding

Gaze location was determined by trained research assistants from video of the child's face and the known location of the images on the screen. We used manual gaze coding because it has been found to produce significantly lower rates of missing data in young children with ASD, compared to automatic eye tracking (Venker, Pomper, et al., 2020). The video had a sampling rate of 30 Hz, yielding time frames lasting approximately 33 milliseconds (ms). For each 33 ms time frame, coders recorded whether children were looking at the left image or the right image, were shifting between fixations, or were looking away from the screen. Post-processing software converted left and right looks to target and distracter looks. Approximately 15% of the data were independently coded by two trained research assistants; on average, coding agreement was 94%.

Analysis plan

The primary dependent variable was the proportion of looking to the target (looks to the target divided by looks to either the target or distracter). We refer to this variable as accuracy, as it represents the overall percentage of time children looked at the target image after it was named. Instances in which children were looking at neither object were categorized as missing data to avoid penalizing children for looks away from the screen. As a first step in characterizing children's novel referent selection, we used one-sample t-tests to determine whether mean accuracy during the analysis window was significantly higher than chance (.50, i.e., equal looking at the two images), which would provide evidence of novel referent selection.
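As a minimal sketch of how this accuracy measure and the comparison against chance could be computed from frame-by-frame gaze codes, consider the following. The code labels ("target", "distracter", "shift", "away"), the function names, and the example values are assumptions made for illustration; they are not the study's data or software, and the t statistic is shown without a p-value.

```python
from math import sqrt
from statistics import mean, stdev


def accuracy(frame_codes):
    """Proportion of target looking: target / (target + distracter).

    Frames coded as anything else (e.g., "shift", "away") are treated as
    missing and do not enter the denominator.
    """
    target = frame_codes.count("target")
    distracter = frame_codes.count("distracter")
    total = target + distracter
    return target / total if total else None


def one_sample_t(values, chance=0.5):
    """t statistic for a one-sample t-test of the mean against chance."""
    n = len(values)
    return (mean(values) - chance) / (stdev(values) / sqrt(n))


# Made-up frame codes for one trial: 30 target, 10 distracter, 8 missing frames.
example_trial = ["target"] * 30 + ["distracter"] * 10 + ["away"] * 5 + ["shift"] * 3
print(accuracy(example_trial))

# Made-up per-child accuracies, compared against chance (.50).
print(one_sample_t([0.6, 0.7, 0.8]))
```

Because off-screen and shifting frames are dropped from the denominator, a child who looks away often is not penalized, as long as looks to the images favor the target.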

Our primary analyses used mixed-effects modeling (Mirman, 2014) to determine how the perceptual salience of objects affects novel referent selection in TD children and children with ASD. We first conducted separate models for each group (TD and ASD). The dependent variable in each model was accuracy in each 33 ms time frame during the analysis window (300–2,000 ms after noun onset). We included Salience (low vs. high), Linear Time, and Quadratic Time as fixed effects, along with interaction terms for Salience and each time variable. The intercept and the slopes for both time variables were allowed to vary across individual participants. We transformed the time terms (linear and quadratic) using orthogonal polynomials, allowing us to interpret each term independently. In addition, the orthogonal intercept represented overall accuracy, which allowed us to determine the effect of salience on children's overall accuracy during the analysis window (rather than one specific y-intercept value; Mirman, 2014). The reference trial type was low-salience. Following the separate group models, we estimated a model including all participants to test the effect of Group (TD vs. ASD). This model contained an additional independent variable (Group) and several additional interaction terms. The reference group was the TD group.
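To illustrate why orthogonal time terms let the intercept reflect overall accuracy, the sketch below builds centered, mutually orthogonal linear and quadratic terms with a Gram-Schmidt step. This is an illustrative construction only: the text does not state which software generated the polynomials, and the frame count of 60 (roughly 2,000 ms at 30 Hz) is an assumption.

```python
from math import sqrt


def dot(a, b):
    """Inner product of two equal-length sequences."""
    return sum(x * y for x, y in zip(a, b))


def _center(values):
    m = sum(values) / len(values)
    return [v - m for v in values]


def _normalize(values):
    norm = sqrt(dot(values, values))
    return [v / norm for v in values]


def orthogonal_time_terms(n_frames):
    """Return (linear, quadratic) time terms that are centered and orthogonal."""
    t = list(range(n_frames))
    linear = _normalize(_center(t))
    quad_raw = _center([x ** 2 for x in t])
    # Remove the part of the quadratic term that overlaps with the linear term.
    overlap = dot(quad_raw, linear)
    quadratic = _normalize([q - overlap * l for q, l in zip(quad_raw, linear)])
    return linear, quadratic


linear, quadratic = orthogonal_time_terms(60)  # assumed number of frames in the window
print(round(sum(linear), 9), round(sum(quadratic), 9), round(dot(linear, quadratic), 9))
```

Both terms sum to zero across the window and are uncorrelated with each other, so the intercept corresponds to mean accuracy across the window rather than accuracy at the first time frame.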

Table 4. Results of analyses for all participants combined.

Note. This model contained all participants. The low-salience condition was the reference condition. The children who are typically developing were the reference group. Time terms were orthogonal. The dependent variable was accuracy during the analysis window.

Results

In the children who are TD, accuracy during the analysis window was significantly higher than chance (.50) in the high-salience and low-salience conditions for both the low-difficulty trials (ps < .006) and high-difficulty trials (ps < .016). These results indicated that the children who are TD demonstrated significant evidence of target novel referent selection across both conditions and both trial types. In the children with ASD, accuracy was significantly higher than chance in the low-difficulty trials for the low-salience condition (p = .020), but not for the high-salience condition (p = .815). In the high-difficulty test trials, accuracy in the children with ASD did not significantly differ from chance for either the high-salience condition (p = .509) or the low-salience condition (p = .389), indicating that children did not look at the target image more than would be expected by chance when they were required to differentiate the named target from a distracter that had also been labeled during the teaching trials. Because the gaze patterns in the children with ASD provided no significant evidence of target novel referent selection in the high-difficulty trials, subsequent analyses focused on the low-difficulty test trials only.

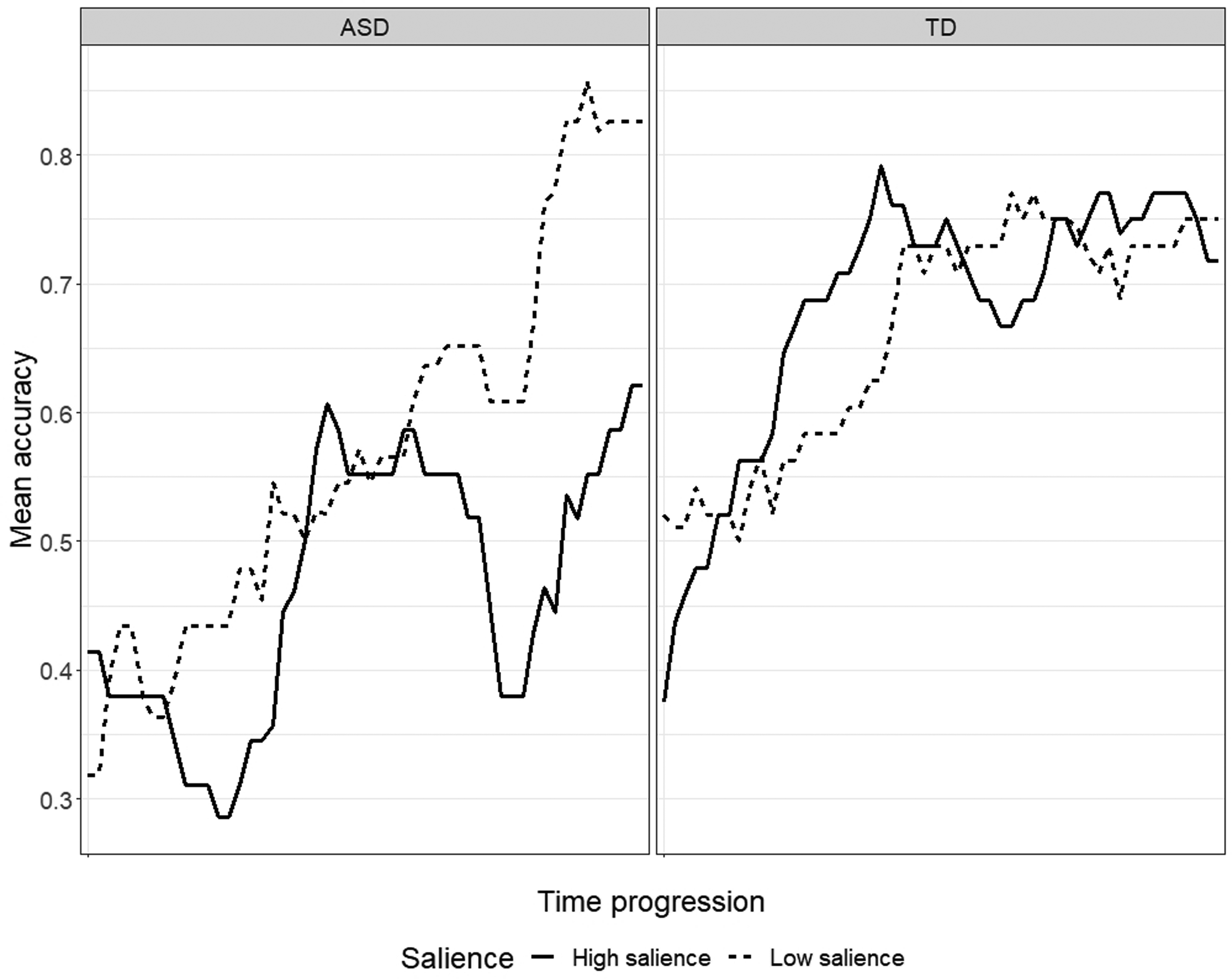

Full model results for each group are presented in Table 3. In the TD group, children's overall accuracy (represented by the orthogonal intercept term) was .67 in the low-salience condition and .69 in the high-salience condition. There was a significant fixed effect of Salience (Estimate = .02, p = .046), indicating that overall accuracy was significantly higher in the high-salience condition than in the low-salience condition. The interaction between Salience and Linear Time was not significant (Estimate = .04, p = .478). There was a significant interaction between Salience and Quadratic Time (Estimate = −.13, p = .025), indicating that the peak of the curve was significantly less sharp in the high-salience condition than in the low-salience condition.

Table 3. Results of separate analyses for each group.

Note. ASD Group = children with autism spectrum disorder. TD Group = children who are typically developing. The low-salience condition was the reference condition. Time terms were orthogonal. The dependent variable was accuracy during the analysis window.

In the ASD group, children's overall accuracy was .55 in the low-salience condition and .47 in the high-salience condition. There was a significant fixed effect of Salience (Estimate = −.08, p < .001), indicating that overall accuracy was significantly lower in high-salience trials than in low-salience trials. There was also a significant interaction between Salience and Linear Time (Estimate = −.62, p < .001), indicating that the rate of increase in accuracy over time was significantly less steep in high-salience trials than in low-salience trials. The interaction between Salience and Quadratic Time was not significant (Estimate = −.16, p = .101).

We next estimated a model including all participants to test the effect of Group (TD vs. ASD; see Table 4). There was a significant main effect of Group (Estimate = −.12, p = .009), indicating that overall accuracy in the low-salience condition was significantly lower in the ASD group than in the TD group. There was also a significant Salience × Group interaction (Estimate = −.09, p < .001), demonstrating that the difference in accuracy between the TD and ASD groups was significantly larger in the high-salience condition than in the low-salience condition. There was also a significant Linear Time × Salience × Group interaction (Estimate = −.67, p < .001), indicating that the relationship between Linear Time and Salience significantly differed between the TD and ASD groups.

Exploratory reaction time analyses

To better understand why the children with ASD performed more poorly in the high-salience condition than the low-salience condition, we conducted a set of exploratory analyses examining reaction times (RTs) in the low-difficulty trials. We used 1-tailed p-values based on the expectation that it may take children longer to initiate a look away from the distracter images when they were visually striking (i.e., the high-salience condition) than when they were less visually striking (i.e., the low-salience condition). The dependent variable was the speed of novel referent selection (i.e., RT), quantified as the amount of time after noun onset it took for children to initiate a look away from the distracter toward the target. Following standard procedures (Fernald et al., 2008), we examined only those trials in which children were looking at the distracter at noun onset and subsequently switched their attention to the named image (i.e., distracter-initial trials). We also excluded trials with RTs that were too short (< 300 ms) or too long (> 3,398 ms) to reasonably be related to the onset of the novel noun. For the ASD group, the number of valid RT trials was 8 in the low-salience condition and 10 in the high-salience condition. For the TD group, the number of valid RT trials was 13 in the low-salience condition and 16 in the high-salience condition.
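The trial selection and RT rules described above can be sketched as follows. This is one plausible reading, not the study's actual coding software: the gaze code labels, the function name, and the choice to measure RT at the first frame off the distracter are assumptions, while the 30 Hz frame rate and the 300 ms and 3,398 ms cutoffs come from the text.

```python
FRAME_RATE_HZ = 30  # video sampling rate reported in the text


def reaction_time_ms(codes, min_rt_ms=300, max_rt_ms=3398):
    """Return RT in ms for a distracter-initial trial, or None if excluded.

    codes: per-frame gaze codes starting at noun onset, each one of
    "target", "distracter", "shift", or "away" (hypothetical labels).
    """
    if not codes or codes[0] != "distracter":
        return None  # not a distracter-initial trial
    for i, code in enumerate(codes):
        if code == "distracter":
            continue
        # Frame i is the first frame off the distracter. Skip the shift
        # itself and check where the gaze lands next.
        landing = next((c for c in codes[i:] if c != "shift"), None)
        if landing != "target":
            return None  # gaze went off screen or back to the distracter
        rt_ms = i * 1000 / FRAME_RATE_HZ
        return rt_ms if min_rt_ms <= rt_ms <= max_rt_ms else None
    return None  # child never left the distracter


# Made-up trial: 24 frames on the distracter, a 2-frame shift, then the target.
print(reaction_time_ms(["distracter"] * 24 + ["shift"] * 2 + ["target"] * 10))
```

Trials that start on the target, shifts that happen too quickly to be driven by the noun, and looks that leave the screen all return `None`, so they drop out of the RT analysis rather than counting as slow responses.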

In the ASD group, it took an average of 829 ms for children to look away from the distracter in the low-salience condition (median = 634 ms), compared to 1,063 ms in high-salience trials (median = 884 ms). A Mann–Whitney U test revealed that this difference between RTs in high-salience and low-salience trials was non-significant for the children with ASD (1-tailed p = .084). In the TD group, the mean RT was 974 ms in low-salience trials (median = 633 ms) and 719 ms in high-salience trials (median = 667 ms). The difference between low-salience and high-salience trials was not significant in the TD group (1-tailed p = .184). In addition to testing the difference in RTs across low- and high-salience trials within each group, we tested the differences between the TD and ASD groups for each condition. In the low-salience condition, RTs did not significantly differ between the ASD and TD groups (1-tailed p = .268). In the high-salience condition, RTs were significantly longer in the ASD group than in the TD group (1-tailed p = .039).
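For readers unfamiliar with the Mann–Whitney U test, the statistic itself is a simple count over pairs of observations from the two samples. The sketch below computes U only, on made-up RT values that are not the study's data; it does not reproduce the reported p-values, which also depend on the reference distribution used.

```python
def mann_whitney_u(sample_a, sample_b):
    """U statistic for sample_a: pairs where a > b count 1, ties count 0.5."""
    u = 0.0
    for a in sample_a:
        for b in sample_b:
            if a > b:
                u += 1.0
            elif a == b:
                u += 0.5
    return u


# Made-up RTs in ms for two small groups (illustration only).
group_a = [900, 1100, 1300]
group_b = [600, 700, 1000]
u_a = mann_whitney_u(group_a, group_b)
u_b = mann_whitney_u(group_b, group_a)
print(u_a, u_b)
```

The two U values always sum to the number of pairs (here 3 × 3 = 9); a U near that maximum for one group indicates that its RTs tend to be longer. Because the test relies on ranks rather than raw values, it suits small samples with skewed RTs, such as the 8 to 16 valid trials per cell reported above.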

Discussion

The current study investigated the effect of perceptual salience on novel referent selection in children with ASD and TD children of similar ages. Based on previous findings (Akechi et al., 2011; Parish-Morris et al., 2007), we predicted that the children with ASD would show better novel referent selection in the high-salience condition than the low-salience condition. We also expected the TD children to show similar (strong) performance across the low-salience and high-salience conditions. Results were inconsistent with these predictions. For the low-difficulty trials, which immediately followed each pair of teaching trials, the children with ASD performed significantly worse in the high-salience condition than the low-salience condition. In fact, the eye movements of the children with ASD provided no indication of target novel referent selection in the high-salience condition; they looked at the named object only as much as would be expected by chance. In contrast, the TD children (who showed significant evidence of novel referent selection in both conditions) performed significantly better in the high-salience condition than the low-salience condition for the low-difficulty trials. These findings indicated that high perceptual salience disrupted novel referent selection in the children with ASD but facilitated attention to the target object in age-matched TD peers.

Why did the children with ASD show better performance in the low-salience condition than the high-salience condition, the opposite of the predicted effect? We based our initial prediction on the premise that children with ASD would be more interested in the high-salience target objects (see Figures 1 and 2), and, thus, more likely to associate these objects with their nonword labels. However, it is possible that the high salience of the target objects instead interfered with children's ability to encode the novel word, the unfamiliar object, and the link between them. Electrophysiological studies that examine event-related potentials (ERPs) may be beneficial in addressing this question (Key et al., 2020). The visual salience of the distracter images within the test trials themselves may also have played a bigger role than expected. In the test trials, the distracter images were purposefully selected to have the same salience level as the target: high-salience targets were presented with high-salience distracters, and low-salience targets were presented with low-salience distracters. We incorporated this design feature to ensure that the images within a given test trial were balanced in salience, to prevent children's attention from being disproportionately drawn to the more salient image (Fernald et al., 2008; Venker, Mathée, et al., 2021; Yurovsky & Frank, 2015). However, the high-salience distracters may have disrupted performance in the children with ASD (compared to the low-salience distracters), even though the target images within a given trial also had high salience (Figure 3).

ASD = children with autism spectrum disorder (left). TD = children who are typically developing (right). The x-axis represents the time course of the trials during the analysis window (300–2,000 ms after noun onset). Mean accuracy was the proportion of looking to the target (looks to the target divided by looks to either the target or distracter), averaged across trials and participants. The high-salience condition is represented by the solid line. The low-salience condition is represented by the dashed line.
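The accuracy measure described in this caption can be illustrated with a minimal sketch. It assumes frame-by-frame gaze codes stored as (time in ms, look) pairs. The function names, the data layout, the "target"/"distracter"/"away" labels, the inclusive window bounds, and the order of averaging (trials within participant, then across participants) are all illustrative assumptions, not details of the study's actual analysis pipeline.

```python
from statistics import mean

# Analysis window from the caption: 300-2,000 ms after noun onset.
# Treating both bounds as inclusive is an assumption.
WINDOW_START_MS = 300
WINDOW_END_MS = 2000


def trial_accuracy(frames):
    """Proportion of target looking for one trial.

    frames: list of (time_ms, look) pairs, where look is "target",
    "distracter", or "away" (hypothetical coding labels).
    Returns None if the child never looked at either image in the window.
    """
    in_window = [look for t, look in frames
                 if WINDOW_START_MS <= t <= WINDOW_END_MS]
    target = in_window.count("target")
    distracter = in_window.count("distracter")
    total = target + distracter
    if total == 0:
        return None
    # Looks to target divided by looks to either target or distracter.
    return target / total


def mean_accuracy(trials_by_participant):
    """Average trial accuracy within each participant, then across participants.

    trials_by_participant: dict mapping a participant ID to a list of trials,
    each trial being a list of (time_ms, look) pairs.
    """
    per_participant = []
    for trials in trials_by_participant.values():
        scores = [a for a in (trial_accuracy(f) for f in trials)
                  if a is not None]
        if scores:
            per_participant.append(mean(scores))
    return mean(per_participant) if per_participant else None
```

Under this definition, a value of 0.5 corresponds to equal looking at the target and the distracter, which is the chance level referred to in the text. Frames coded as looks away from both images do not enter the denominator.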

Difficulty with visual disengagement has been proposed as a potential explanation for the disruptive effects of perceptual salience, as novel referent selection in the presence of a high-salience distracter object requires the ability to flexibly disengage and switch attentional focus (Akechi et al., 2011; Aldaqre et al., 2015; Gliga et al., 2012; Venker, 2017; Venker et al., 2018). Visual disengagement difficulties in individuals with ASD are related to the perceptual salience of the initially attended stimulus (Sacrey et al., 2014). Thus, the children with ASD may have had an easier time looking away from the low-salience distracters to focus on the low-salience target, and a harder time looking away from the high-salience distracters to focus on the high-salience target, resulting in chance-level performance in the high-salience condition (also see Aldaqre et al., 2015; Gliga et al., 2012). In other words, the children with ASD may have shown “stickier” attention (i.e., had more difficulty disengaging) for high-salience distracters than for low-salience distracters, even though the two images were balanced in salience for any given test trial.

Consistent with this possible explanation, in the high-salience condition, children with ASD took significantly longer than the TD children to look away from the (high-salience) distracter image. In the low-salience condition (with low-salience distracter images), there was no significant difference in reaction times (RTs) between the ASD and TD groups. These results align with prior work showing difficulty disengaging from salient visual stimuli in children with ASD and point to the presence of these attentional effects during novel referent selection (Landry & Bryson, 2004; Sacrey et al., 2014; Venker et al., 2018). It should be acknowledged that the children with ASD had lower language and cognitive skills than the TD children, which could have contributed to this group difference. Interestingly, perceptual salience has been found to have similar effects on young TD children (1–4 years old) during novel referent selection in an experimental context where one image had higher salience than the other (Yurovsky & Frank, 2015).

Another notable finding was the low performance of the children with ASD in the high-difficulty test trials. Though the TD children showed significant recognition of the novel labels in the high-difficulty trials across both salience levels (as they had in the low-difficulty trials), the children with ASD did not show significant evidence of recognizing the novel words in the high-difficulty trials, regardless of salience level. This finding was somewhat surprising because the teaching trials labeled only one object at a time and, thus, did not require children to disambiguate which of two objects was the intended referent during teaching. It may be beneficial for future studies to repeat novel labels more than three times to increase the likelihood of success (Luyster & Lord, 2009). In addition, future work should include studies that present more than one object during training, which will increase ecological validity by presenting situations of referential ambiguity, in which it is not immediately clear which object is the intended referent.

In our view, it is likely that the disruption the children with ASD experienced in the high-salience condition was the product of proximal effects during the test trials. As discussed by Yurovsky and Frank (2015), even minor adjustments in object salience during test trials can mask children's learning. Eye-gaze tasks can be advantageous for measuring novel referent selection in real time, but it is important to acknowledge that they are not immune to the effects of lower-level visual features, such as perceptual salience. The current results underscore the complexity of referent selection and word-learning processes and highlight the importance of taking the immediate testing context into account. This finding applies not only to experimental tasks, but also to screen-based language assessments that take eye-gaze patterns into account (e.g., Brady et al., 2014).

The current findings also have broader implications for the effective design of electronic screen media and screen-based learning activities for individuals with ASD. As is the case for children in general, electronic screen media is a preferred leisure activity for children with ASD (Montes, 2016; Shane & Albert, 2008; Stiller et al., 2019; Stiller & Mößle, 2018). Screen-based learning activities for individuals with ASD are also increasingly common in academic settings and intervention contexts (Shane & Albert, 2008; Stiller et al., 2019; Wainer & Ingersoll, 2011). However, numerous research groups have emphasized the importance of evaluating and refining electronic screen media as a learning tool for individuals with ASD (Gwynette et al., 2018; Odom et al., 2015; Shane & Albert, 2008). For example, it has been proposed that the constrained viewing area of electronic screen media helps individuals with ASD focus their attention on the information relevant for learning (Mineo et al., 2009). However, the current findings suggest that, even within a single screen, the perceptual details of any incidental content presented can draw attention away from the target stimuli. It is also notable that disruption occurred even though the on-screen material was presented in a very simple, picture-based format; stimuli in this study differed only in their color, brightness, and complexity. The effects of salience would likely be exaggerated if movement or audio features were also involved, as in the case of animation. Given that learning in individuals with ASD appears to be particularly susceptible to disruption by salience-based features, it is critical that designers and creators of screen-based learning tasks take these perceptual features into account and acknowledge their potential effect on attention and learning.

The current study had several limitations. We did not measure long-term retention, in part because we were concerned that the children with ASD would have difficulty even with short-term referent recognition (which was, indeed, the case). Successfully investigating retention in young children with ASD will require boosting the initial stages of learning. Because we did not include a behavioral forced-choice task, we cannot rule out the possibility that the children with ASD displayed unusual looking behaviors before selecting the correct referent through a purposeful response. Future studies may benefit from combining eye-gaze measures and forced-choice tasks. The distracters in the low-difficulty test trials had been described during teaching, but not labeled. Children may have attended to the target object not because they had associated it with its label, but because it had been labeled (Axelsson et al., 2012; McDuffie et al., 2006). The exploratory analyses did not test the relationship between RT and learning accuracy, which would be informative in future work. Language and cognitive skills were significantly lower in the children with ASD than in the TD children. Because the groups differed both in diagnostic status (ASD vs. TD) and in language and cognitive skills, the current results cannot determine whether performance in the ASD group differs quantitatively or qualitatively from typical development. Future work may benefit from including both age-matched and language-matched comparison groups or testing within-group correlations in larger samples.

Our use of manual gaze coding had both strengths and limitations. We used manual gaze coding because it has been shown to produce significantly less missing data and exclude fewer participants than automatic eye tracking (Venker, Pomper, et al., 2020). Increasing usable data and participant retention maximizes the validity and generalizability of results—a strong scientific advantage. However, manual gaze coding has relatively limited spatial precision compared to automatic eye tracking. As a result, we were unable to manually code children's attention during the teaching trials. Unlike the test trials (which contained two images, one on each side of the screen), each training trial presented a single image in the center of the screen. Though coders easily differentiated looks to the left and right images during the test trials, pilot testing revealed that it was not possible to reliably distinguish looks to the center image from looks to other (non-image) areas of the screen. Relatedly, we are unaware of any published studies that have successfully used manual gaze coding to measure gaze to a single, center image. Automatic eye-tracking systems offer the spatial precision needed to measure gaze to a single image, but we considered the benefits of manual gaze coding to outweigh this potential advantage—particularly since many of the children with ASD in this study were unable to successfully calibrate using our automatic eye tracker.

Conclusion

Findings from the current study add to growing evidence that high perceptual salience has the potential to disrupt novel referent selection in children with ASD—even in situations where perceptual salience may provide a boost for their TD peers. Children with ASD appear to be particularly vulnerable to moment-by-moment distractions from irrelevant (but visually interesting) stimuli, which could have cumulative negative effects on language development (Kucker et al., 2015; Tamis-LeMonda et al., 2014; Venker et al., 2018). Intervention strategies in which adults provide linguistic input based on the child's focus of attention are likely to be beneficial in accounting for these attentional differences (McDuffie & Yoder, 2010). Integrating social and perceptual cues to maximize the salience of relevant information may also help to facilitate novel referent selection and word learning in children with ASD (Akechi et al., 2011; Hartley et al., 2020; Moore et al., 1999). Future work is needed to understand the cognitive processes that underlie attentional differences in word-learning contexts in individuals with ASD, including factors related to motivation, inhibitory control, and reward value versus information value of visual stimuli (Wang et al., 2015; Yurovsky & Frank, 2015).

The authors declare that there are no financial or professional conflicts of interest regarding the findings reported in this manuscript.

Acknowledgements

We gratefully acknowledge the children and families who took part in this study. This work would not have been possible without them. Thanks to the Lingo Lab Research Team—Ellen Brooks, Carly Clark, Kaylee Commet, Ilana Cooper, Libby Fernau, Kaitlin Gaynor, Ava Harding, Monica Holland, Rachel Houtteman, Lauren Luzbetak, Jessie Magalski, Megan Nylund, Kendra Peffers, Kelli Pfiester, Olivia Roberts, and Megan Yasick—for their invaluable assistance with stimulus preparation, recruitment, data collection, and eye-gaze coding.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Institute on Deafness and Other Communication Disorders (grant number R21DC016102).