Abstract

Introduction

Effective clinical research training is crucial for advancing medical science and improving patient care. However, current evaluation systems in China often focus on theoretical knowledge, neglecting practical skills and innovation. This study aimed to develop a comprehensive evaluation framework for clinical research training programs using the Delphi consensus method.

Method

A 2-round Delphi method was employed, involving healthcare professionals and educators from top tertiary hospitals and leading academic institutions in China. The first round included 15 participants, and the second round included 19 participants. The evaluation framework was based on the Kirkpatrick model, covering Reaction, Learning, Behavior, and Results dimensions. Indicators were evaluated using a 5-point Likert scale, with consensus defined as a mean significance score ≥3.50 and a coefficient of variation ≤0.25.

Results

In the first round, 9 indicators were excluded and 5 were added. In the second round, 26 indicators met the consensus criteria. Key indicators included “Relevance of training content” (mean = 4.89, CoV = 0.06), “Degree of knowledge mastery” (mean = 4.58, CoV = 0.13), and “Impact on career development” (mean = 4.53, CoV = 0.15). Other notable indicators were “Timeliness of training information” (mean = 4.84, CoV = 0.08) and “Success rate of applying for scientific research funds” (mean = 4.05, CoV = 0.21).

Discussion

This study developed a comprehensive evaluation framework for clinical research training in China, emphasizing the importance of relevant training content, strong learning outcomes, and long-term professional impact. This framework provides a robust tool to assess and enhance clinical research training programs, ultimately contributing to improved healthcare and medical research. Future work should focus on validating this framework through empirical studies and refining it based on ongoing feedback.

Keywords

Introduction

The rapid evolution of medical science and healthcare demands robust clinical research training to cultivate a new generation of physician-scientists capable of driving innovation and improving patient outcomes.1,2 While this need is global, it is especially urgent in China, where clinical research capacity is expanding rapidly but no standardized, multidimensional evaluation system yet exists to ensure training quality and effectiveness.

Internationally, structured approaches to evaluating clinical research training have gained traction.1,3,4 In the United States, the National Institutes of Health (NIH) supports program assessment through the Clinical and Translational Science Awards (CTSAs), which emphasize measurable outcomes across multiple domains of trainee development.5 Similarly, the European Union promotes competency-based training frameworks that integrate multidisciplinary collaboration, practical research skills, and translational impact.6,7 These initiatives highlight a shared recognition: effective training must be systematically evaluated, not just by knowledge acquisition but by changes in behavior, research output, and real-world contributions.

In contrast, evaluation of clinical research training in China remains underdeveloped.8 Most programs rely heavily on theoretical coursework and summative exam scores, offering little assessment of practical research competence, innovation capacity, or long-term professional impact.9 This narrow focus fails to capture essential dimensions of trainee development and hinders the identification and nurturing of high-potential research talent.9 Moreover, no nationally recognized evaluation framework exists that aligns with China's institutional structures, educational priorities, or healthcare goals.

To address this gap, we propose a comprehensive, contextually adapted evaluation framework grounded in the Kirkpatrick model—a well-established theory in training evaluation that assesses outcomes across 4 levels: Reaction, Learning, Behavior, and Results. This model provides a robust theoretical foundation for evaluating not only how trainees perceive and learn from programs, but also how training translates into research practice and institutional impact.10,11

To ensure that our framework was both evidence-informed and practically relevant, we began with a systematic literature review and expert interviews. We searched the PubMed, Embase, and Web of Science databases (from inception to May 2025) using keywords including “clinical research training,” “medical training,” “evaluation,” and “framework.” After screening 484 articles, we identified 35 that were most pertinent to evaluating clinical research training or similar educational programs, and these articles provided a foundational set of potential indicators. We then conducted in-depth interviews with 9 experts, specifically program directors of clinical research training programs or recognized experts in medical education, who were purposively selected for their extensive hands-on experience and demonstrated expertise in designing, implementing, or evaluating clinical research training initiatives. This dual approach ensured that our initial list of 35 indicators was not merely derived from the international literature but was actively shaped and refined by practitioners familiar with the specific challenges and opportunities of clinical research training in China, giving the Delphi process a well-rounded starting point.

Method

Study Design

A 2-round modified-Delphi method was selected as our primary research method to engage a diverse group of experts in the field of clinical practice and education.12 This method is particularly suited for achieving consensus among experts on complex issues, such as the development of an evaluation model that is both culturally sensitive and scientifically rigorous.13 The goal was to leverage the collective expertise of healthcare educators and professionals with practical experience to develop an evaluation model that is theoretically sound and practically applicable within the Chinese context. The study was conducted between November 27, 2024, and January 17, 2025. The reporting of this study conforms to the DELPHISTAR statement.14

Modified-Delphi Method

In the first round of our Delphi process, we constructed a survey to assess key elements of clinical research training evaluation using the Kirkpatrick model. This survey was based on a literature review of indicators used to evaluate clinical research training or similar courses. Participants were asked to rate each indicator using a 5-point Likert scale, where a score of 5 represented strong agreement, 4 indicated agreement, 3 signified neutrality, 2 denoted disagreement, and 1 reflected strong disagreement. The criteria for indicator deletion were a mean significance score of less than 3.50 or a coefficient of variation greater than 0.25.15–17 After completing the questionnaire, experts were encouraged to identify any additional elements not previously listed.
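For readers who want the deletion rule stated compactly, the criteria above can be written as follows. This is a sketch under stated assumptions: x_ij denotes expert i's rating (1–5) of indicator j on a panel of n experts, and the sample standard deviation (denominator n − 1) is assumed, because the text does not specify whether the sample or population form was used.

```latex
% Assumed notation: x_{ij} = rating of indicator j by expert i, n = panel size
\bar{x}_j = \frac{1}{n}\sum_{i=1}^{n} x_{ij}, \qquad
s_j = \sqrt{\frac{1}{n-1}\sum_{i=1}^{n}\left(x_{ij}-\bar{x}_j\right)^2}, \qquad
\mathrm{CoV}_j = \frac{s_j}{\bar{x}_j}

% Indicator j is deleted when either criterion fails
\text{delete } j \iff \bar{x}_j < 3.50 \;\lor\; \mathrm{CoV}_j > 0.25
```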

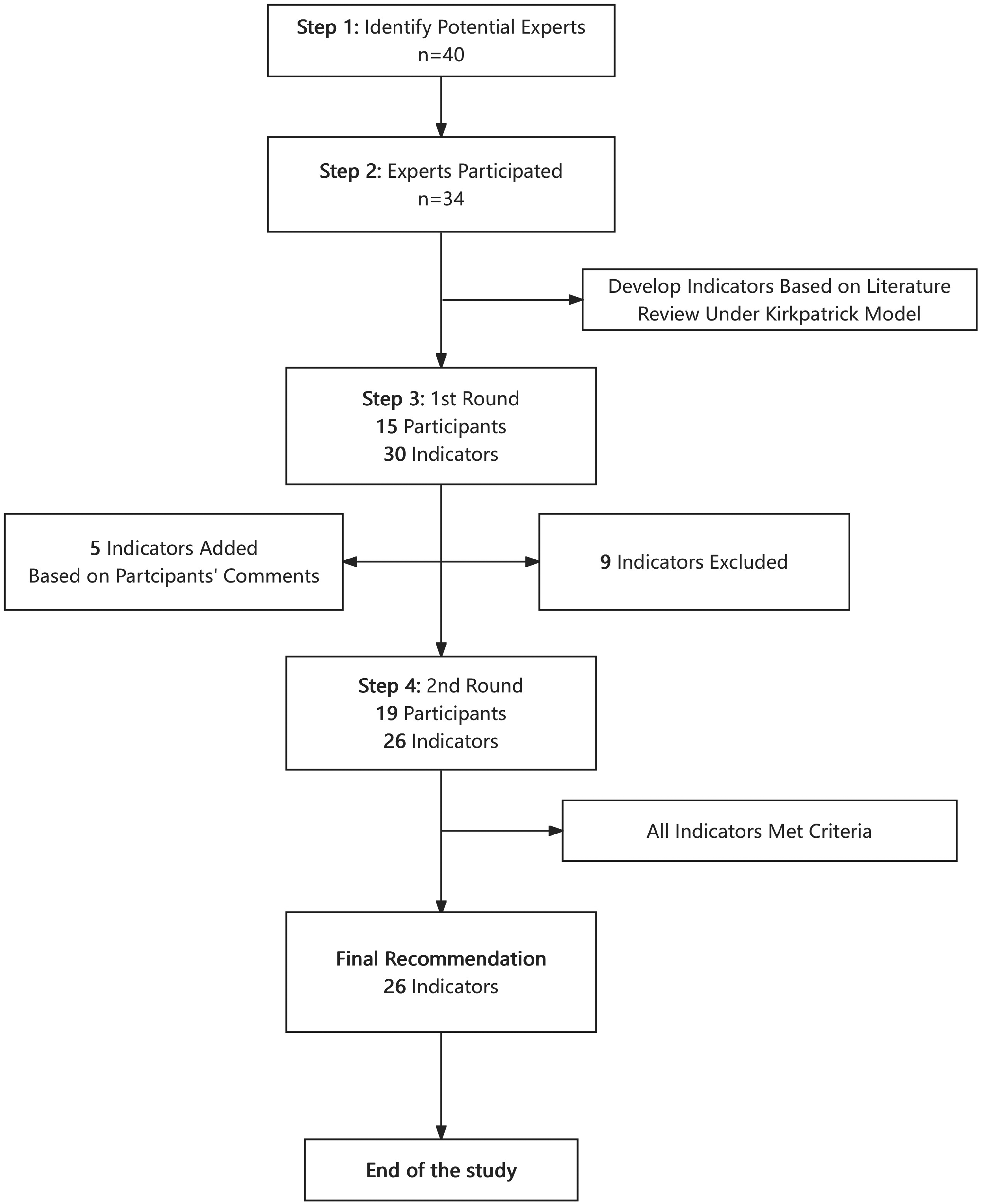

Based on the first-round results and feedback, the indicators were modified, and a second-round questionnaire was developed. To achieve a shared understanding and pinpoint the essential aspects of clinical research training, we planned at least 2 iterations, and the Delphi process was terminated once consensus was reached.18,19 The study process is shown in Figure 1.

Screening process of the clinical research training program.

Participants

In our study, we used a 2-round Delphi method with 34 participants in total (15 in the first round and 19 in the second). We recruited healthcare professionals involved in clinical research and education.

Participants were selected based on the following inclusion criteria:

Clinical Research Training Experience: Completion of at least 1 clinical research training program in China.

Professional Role: Current or past involvement as a student, instructor, program manager, or curriculum designer in clinical research training programs in China (Figure 2).

Relevant Expertise: Demonstrated experience in clinical research and/or medical education, evidenced by active participation, teaching, program leadership, or scholarly output (eg, publications or conference presentations) in the field.

Evaluation framework for clinical research training in China based on the Kirkpatrick model.

The following exclusion criteria were applied:

Less than 3 years of professional experience in clinical research or medical education.

No recent contributions to the field (defined as no publications, presentations, or program involvement in the past 5 years).

All participants were affiliated with top-tier tertiary hospitals or leading academic institutions in China to ensure expert-level input.

In the first round, there were 15 participants: 3 males (20%) and 12 females (80%). In the second round, the number of participants increased to 19, with 5 males (26.32%) and 14 females (73.68%). The age distribution differed between rounds. In the first round, the largest group was aged 31–40 years (46.67%), followed by those aged 41–50 years (40%). In the second round, the 41–50 age group had the highest representation (47.37%), with those aged 31–40 years also well represented (36.84%).

Participants had diverse areas of expertise, including clinical medicine, education, hospital management, and bioinformatics. In the first round, 9 participants (60%) were from clinical medicine, while 1 participant (6.67%) was involved in education. In the second round, all 19 participants (100%) were from clinical medicine; 1 also had involvement in hospital management (5.26%), and 1 had a background in another discipline (5.26%), reflecting a strong clinical focus.

Participants represented a diverse range of stakeholder roles within clinical research training programs, including students, instructors, program managers, and program designers. Importantly, the survey allowed participants to select all roles that applied to them, reflecting the reality that many individuals transition between these roles over time: former students often become instructors or program managers, and some go on to contribute to curriculum design. This approach captured perspectives from individuals with direct, lived experience across multiple stages of the training lifecycle. In the first round, 8 participants (53.33%) identified as students, 7 (46.67%) as instructors, and 7 (46.67%) as program managers. In the second round, participation broadened further: 10 participants (52.63%) selected “student,” 2 (10.53%) “instructor,” 8 (42.11%) “program manager,” and 12 (63.16%) “program designer.”

Participants had varying levels of involvement in clinical research training programs. In the first round, 7 participants (46.67%) had participated 1–3 times, while 6 (40%) had participated more than 6 times. In the second round, the majority of participants (63.16%) had participated 1–3 times, and only 3 (15.79%) had participated more than 6 times. For a comprehensive overview of participant demographics, please refer to Table 1.
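Because participants could select every role that applied, each role percentage is a share of the whole panel rather than a slice of a single total, so the percentages need not sum to 100%. The following is a minimal Python sketch using the second-round counts reported above (n = 19). The function name `role_percentages` is illustrative only and is not part of the study's analysis.

```python
# Second-round role selections reported in the text (n = 19).
# Participants could select every role that applied, so each percentage
# is computed against panel size and the total may exceed 100%.
PANEL_SIZE = 19
role_counts = {
    "student": 10,
    "instructor": 2,
    "program manager": 8,
    "program designer": 12,
}


def role_percentages(counts, n):
    """Return each role's share of the panel, rounded to 2 decimals."""
    return {role: round(100 * k / n, 2) for role, k in counts.items()}


percentages = role_percentages(role_counts, PANEL_SIZE)
for role, pct in percentages.items():
    print(f"{role}: {pct}%")

# Overlapping (multi-select) roles push the total above 100%.
total = round(sum(percentages.values()), 2)
print(f"sum of role percentages: {total}%")
```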

Characteristics of Expert Panelists Involved With Clinical Research Training Evaluation in China Questionnaire.

Ethical Considerations

The study protocol adhered to the ethical guidelines outlined in the Helsinki Declaration and was approved by the Clinical Research Ethics Committee of Jiahui Medical Education and Research Group (Ethics approval number: A-CR-2025003). Written informed consent was obtained from all participants.

Statistical Method

The statistical analysis was conducted using Stata SE 16 and Microsoft Excel. Descriptive statistics (mean, standard deviation, coefficient of variation, percentages) were used to summarize the data. Consensus was determined iteratively across the 2 rounds. For each indicator, the mean score and CoV were calculated after each round. An indicator was retained if it met the predefined consensus criteria (Mean ≥ 3.50 and CoV ≤ 0.25) in the second round.
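The per-indicator computation described above can be sketched in Python with the standard-library `statistics` module. This is an illustrative sketch, not the authors' Stata or Excel workflow. The ratings are hypothetical and the helper names are our own. We also assume the sample standard deviation (`statistics.stdev`, denominator n − 1), because the text does not state which form was used.

```python
import statistics

MEAN_MIN = 3.50  # retain only if mean >= 3.50
COV_MAX = 0.25   # retain only if CoV <= 0.25


def summarize(ratings):
    """Mean, sample SD, and CoV for one indicator's 1-5 Likert ratings."""
    mean = statistics.mean(ratings)
    sd = statistics.stdev(ratings)  # sample SD (n - 1); an assumption
    cov = sd / mean  # ratings are 1-5, so the mean is never zero
    return mean, sd, cov


def meets_consensus(ratings):
    """Apply the prespecified rule: mean >= 3.50 and CoV <= 0.25."""
    mean, _, cov = summarize(ratings)
    return mean >= MEAN_MIN and cov <= COV_MAX


# Hypothetical ratings from a 5-member panel (not study data).
ratings_by_indicator = {
    "Indicator A": [5, 5, 4, 5, 4],
    "Indicator B": [4, 2, 3, 5, 2],
}

for name, ratings in ratings_by_indicator.items():
    mean, sd, cov = summarize(ratings)
    status = "retain" if meets_consensus(ratings) else "delete"
    print(f"{name}: mean={mean:.2f}, SD={sd:.2f}, CoV={cov:.2f} -> {status}")
```

Both conditions must hold for retention, so a high mean cannot rescue an indicator on which experts disagree widely, and tight agreement cannot rescue a low mean.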

Result

The Delphi process successfully refined an initial set of 35 indicators (Table 2) into a final framework of 26 consensus-based indicators for evaluating clinical research training programs in China. This evolution reflects the iterative nature of expert consultation: 9 indicators were removed after Round 1 because they failed to meet the prespecified consensus criteria, and 5 new indicators were added based on expert feedback to address critical gaps.

Description of the Indicators Used in the Evaluation Model Questionnaire Based on the Kirkpatrick Model.

Indicators added in the second round of the survey.

Consensus Criteria and Indicator Evolution

Consensus for retaining an indicator was defined a priori as a mean significance score ≥3.50 and a coefficient of variation (CoV) ≤ 0.25. In Round 1, 9 indicators failed to meet these criteria and were excluded from the final model. Notably, several indicators related to behavioral outcomes, such as “Frequency of participation in academic exchanges” (mean = 3.20, CoV = 0.24) and “Number of patents” (mean = 3.27, CoV = 0.27), did not achieve consensus. Based on qualitative feedback from Round 1 participants, 5 new indicators were introduced in Round 2 to capture more nuanced aspects of trainee development, including “Innovation ability,” “Student practical ability,” and “Lecturer feedback suggestion.” All 5 new indicators met the consensus criteria in Round 2, demonstrating their perceived value to the expert panel.
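Applying the prespecified criteria to the Round 1 summaries reported above shows which criterion each excluded indicator failed. The following is a minimal Python sketch. `failed_criteria` is a hypothetical helper, and only the two excluded indicators whose values are given in the text are included.

```python
MEAN_MIN = 3.50
COV_MAX = 0.25


def failed_criteria(mean, cov):
    """List which prespecified consensus criteria a summary fails."""
    reasons = []
    if mean < MEAN_MIN:
        reasons.append(f"mean {mean:.2f} < {MEAN_MIN:.2f}")
    if cov > COV_MAX:
        reasons.append(f"CoV {cov:.2f} > {COV_MAX:.2f}")
    return reasons


# Round 1 summaries (mean, CoV) reported in the text.
round1 = {
    "Frequency of participation in academic exchanges": (3.20, 0.24),
    "Number of patents": (3.27, 0.27),
}

for name, (mean, cov) in round1.items():
    reasons = failed_criteria(mean, cov)
    verdict = "excluded: " + "; ".join(reasons) if reasons else "retained"
    print(f"{name}: {verdict}")
```

Under these criteria, “Frequency of participation in academic exchanges” fails on the mean alone (its CoV of 0.24 is within the limit), whereas “Number of patents” fails on both the mean and the CoV.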

Summary of Consensus Indicators by Kirkpatrick Dimension

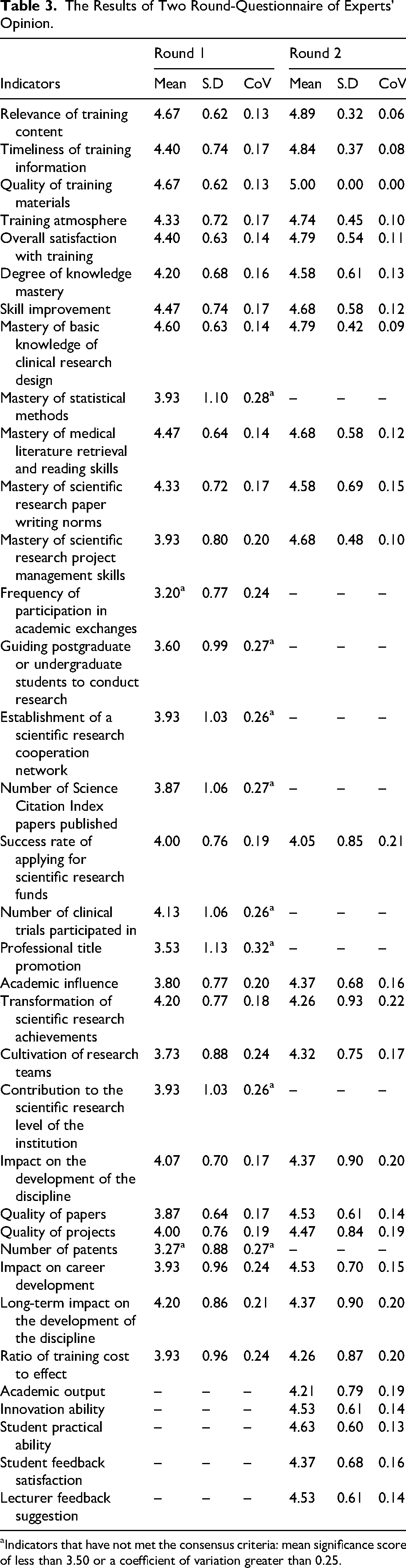

The final 26 indicators are presented below, organized by the 4 levels of the Kirkpatrick model. The results highlight strong agreement across all dimensions, with particular emphasis on the relevance of content and long-term career impact (Table 3).

Reaction Dimension: Experts strongly endorsed indicators assessing the immediate trainee experience. “Quality of training materials” achieved a perfect mean score of 5.00, indicating unanimous agreement on its importance, followed by “Relevance of training content” (mean = 4.89, CoV = 0.06) and “Timeliness of training information” (mean = 4.84, CoV = 0.08).

Learning Dimension: This dimension showed robust consensus, with high scores for core competencies. “Mastery of basic knowledge of clinical research design” (mean = 4.79, CoV = 0.09), “Skill improvement” (mean = 4.68, CoV = 0.12), and “Degree of knowledge mastery” (mean = 4.58, CoV = 0.13) were among the top-rated indicators.

Behavior Dimension: Only 1 indicator in this dimension met the consensus criteria: “Success rate of applying for scientific research funds” (mean = 4.05, CoV = 0.21). Although its CoV was relatively close to the 0.25 threshold, its inclusion underscores the panel's view that securing funding is a critical behavioral outcome of effective training.

Results Dimension: This dimension yielded the largest number of consensus indicators, reflecting a strong focus on long-term impact. Key outcomes included “Impact on career development” (mean = 4.53, CoV = 0.15), “Academic influence” (mean = 4.37, CoV = 0.16), and “Transformation of scientific research achievements” (mean = 4.26, CoV = 0.22). The newly added indicator “Innovation ability” (mean = 4.53, CoV = 0.14) was also highly rated, highlighting the panel's desire to measure creative capacity.
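Because CoV = SD / mean, the standard deviation implied by each reported summary can be recovered approximately as mean × CoV. This helps when comparing how tightly experts agreed across dimensions. The following is a Python sketch using the values reported in this section. The CoV of 0.00 for “Quality of training materials” is not reported directly. It follows from the mean of 5.00, which on a 1–5 scale is possible only if every rating was 5. The implied SDs are approximate because the reported CoVs are rounded to 2 decimals, and `implied_sd` is a hypothetical helper.

```python
# Round 2 summaries (mean, CoV) reported in the text, grouped by
# Kirkpatrick level. Since CoV = SD / mean, SD is roughly mean * CoV.
reported = {
    "Reaction": {
        "Relevance of training content": (4.89, 0.06),
        "Timeliness of training information": (4.84, 0.08),
        # A mean of 5.00 on a 1-5 scale implies every rating was 5 (SD = 0).
        "Quality of training materials": (5.00, 0.00),
    },
    "Learning": {
        "Mastery of basic knowledge of clinical research design": (4.79, 0.09),
        "Skill improvement": (4.68, 0.12),
        "Degree of knowledge mastery": (4.58, 0.13),
    },
    "Behavior": {
        "Success rate of applying for scientific research funds": (4.05, 0.21),
    },
    "Results": {
        "Impact on career development": (4.53, 0.15),
        "Innovation ability": (4.53, 0.14),
        "Academic influence": (4.37, 0.16),
        "Transformation of scientific research achievements": (4.26, 0.22),
    },
}


def implied_sd(mean, cov):
    """Approximate SD from a reported mean and (rounded) CoV."""
    return round(mean * cov, 2)


for level, indicators in reported.items():
    # Highest reported mean in this level (first one wins on ties).
    top = max(indicators, key=lambda name: indicators[name][0])
    print(f"{level}: highest mean = {top}")
    for name, (mean, cov) in indicators.items():
        sd = implied_sd(mean, cov)
        print(f"  {name}: mean={mean:.2f}, CoV={cov:.2f}, implied SD~{sd:.2f}")
```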

Results of the Two-Round Expert Opinion Questionnaire.

Indicators that have not met the consensus criteria: mean significance score of less than 3.50 or a coefficient of variation greater than 0.25.

The analysis reveals a clear prioritization of indicators that link training directly to tangible, long-term outcomes. The high ratings for “Impact on career development” and “Success rate of applying for scientific research funds” suggest that experts view training success through the lens of professional advancement and research productivity. Furthermore, the unanimous support for “Quality of training materials” and the high scores for “Relevance” and “Timeliness” indicate that the foundation of effective training lies in well-designed, current, and applicable content. The addition of, and subsequent consensus on, new indicators such as “Innovation ability” and “Student practical ability” demonstrate the panel's commitment to evolving the framework to capture modern competencies beyond traditional metrics.

Discussion

This study, employing the Delphi method, identified and refined key indicators to form an evaluation framework grounded in the Kirkpatrick model. The findings provide valuable insights into the critical elements that should be considered when assessing the effectiveness of clinical research training programs in China. While the study is grounded in the Chinese context, its implications extend globally, offering a comparative lens for international medical education communities to reflect on their own clinical research training frameworks.

Addressing the Core Deficiencies in China's Training Landscape

The most significant contribution of this framework is its explicit response to the identified shortcomings of China's current evaluation system, which remains heavily skewed toward theoretical knowledge and summative exams.8,9 Our findings demonstrate a clear expert consensus that effective evaluation must extend far beyond the “Learning” level to encompass tangible behavioral changes and long-term professional outcomes. This represents a fundamental shift from the prevailing model.

From Theory to Practice: The high consensus scores for indicators like “Success rate of applying for scientific research funds” (mean = 4.05) and “Transformation of scientific research achievements” (mean = 4.26) are particularly telling. In the Chinese context, where career advancement for physicians is often tied to securing national grants and publishing in high-impact journals, these indicators are not abstract metrics; they are direct measures of a trainee's ability to navigate the real-world research ecosystem. This framework forces programs to move beyond assessing what trainees know to evaluating what they can do and achieve. It directly counters the criticism that current programs produce researchers who are strong on paper but weak in practice.20,21

Bridging the Clinical-Research Divide: A major challenge in China is the perceived tension between clinical service and research productivity.20 The framework implicitly addresses this by including indicators that measure the practical application of skills, such as “Student practical ability” (mean = 4.63), which assesses the ability to apply theory to real clinical research design. Furthermore, the emphasis on “Impact on career development” (mean = 4.53) acknowledges that successful training must support both academic and clinical career paths, fostering physician-scientists who can thrive in integrated roles rather than being forced into silos.

Moving Beyond the “Publication Count”: While “Number of Science Citation Index papers published” was excluded for failing to meet consensus, the retention of “Quality of papers” (mean = 4.53) and “Academic influence” (mean = 4.37) suggests a sophisticated understanding among experts. They recognize that quantity alone is an inadequate metric. Instead, they prioritize the impact and quality of research output, which better reflects genuine scholarly contribution and aligns with global trends moving away from simple publication counts toward more nuanced impact assessments.21,22

How This Framework Improves Upon Existing Models

While frameworks such as the NIH's CTSA or Europe's Horizon Europe emphasize comparable domains, this Chinese model distinguishes itself through contextual prioritization and locally grounded design.23 Unlike generic competency-based approaches such as CanMEDS or ACGME milestones,24,25 which are often adapted retrospectively to fit diverse settings, our framework was built from the ground up with direct input from Chinese experts who understand local institutional pressures and incentive structures. This is exemplified by the unanimous consensus on “Quality of training materials” (mean = 5.00), which reflects a pressing local need for scientifically rigorous and practically relevant educational resources and addresses widespread concerns about outdated or poorly designed course content in many Chinese institutions.26 Moreover, the framework introduces a dynamic element often absent from traditionally top-down evaluation systems: feedback loops. Newly added indicators such as “Lecturer feedback suggestion” (mean = 4.53) and “Student feedback satisfaction” (mean = 4.37) institutionalize ongoing dialogue between trainers and trainees, transforming evaluation from a static compliance exercise into a mechanism for continuous program improvement. Finally, the framework directly confronts a well-documented weakness in Chinese medical education, the underemphasis on creative thinking, by incorporating “Innovation ability” (mean = 4.53) as a measurable outcome. This not only validates innovation as a core competency but also signals that training programs should actively cultivate originality and problem-solving alongside technical skills.

Implications for Clinical Research Training Programs in China and Beyond

This study's framework not only addresses China's unique challenges but also contributes to global discussions on optimizing clinical research training, particularly in resource-limited settings where balancing clinical and research demands is a universal challenge.27

Curriculum Design

Clinical research training programs in China should be designed to be dynamic and responsive to the latest developments in medical science. The curriculum must align with the current needs of the healthcare system and the future career aspirations of trainees, ensuring that they acquire both clinical and research competencies. The integration of basic medical sciences with clinical practice and research skills is crucial for developing well-rounded physician-scientists.20 Additionally, the curriculum should emphasize the importance of continuous learning and adaptation to new technologies and methodologies.28

Quality of Training Materials

High-quality educational resources are fundamental for effective knowledge transfer and skill development in clinical research training. Training materials should be regularly updated to reflect the latest advancements in medical research and practice. In China, the use of validated assessment tools and diverse instructional methods, such as problem-based learning (PBL) and team-based learning (TBL), is essential for enhancing the learning experience.26 However, current training programs often rely heavily on lecture-based formats, with limited emphasis on practical skills and behavioral changes.26 Future efforts should focus on diversifying instructional methods and incorporating more interactive and hands-on learning experiences.

Skill Development

Practical skills are a cornerstone of clinical research training in China. Trainees should be equipped with essential skills such as data analysis, scientific writing, and project management to navigate the complexities of clinical research. The current training model in China, particularly the “5 + 3 + X” model, aims to combine clinical subspecialty training with research capabilities. This model comprises 5 years of undergraduate medical education, followed by 3 years of standardized residency training, and an additional “X” years of specialized fellowship training (which may include research components) for those pursuing advanced clinical or academic careers.20,29 However, concerns have been raised about the adequacy of clinical practice experience in these programs, highlighting the need for a balanced approach that integrates both clinical proficiency and research competence.20 Hands-on workshops and mentorship from experienced researchers can further enhance the practical skills of trainees.

Long-Term Impact

The long-term impact of clinical research training programs should be measured through outcomes such as the transformation of research achievements into clinical practice.30 In China, the ability to secure research funding and translate scientific findings into practical applications is crucial for career advancement and institutional contributions.31 Training programs should focus on developing the skills required for successful grant applications and fostering a culture of innovation and collaboration. Additionally, ongoing evaluation and feedback mechanisms are necessary to ensure that training programs continue to meet the evolving needs of the healthcare system and research community.32

Implementation and Evaluation

The next critical phase involves implementing this Delphi-consensus evaluation framework in real-world clinical research training settings to assess its practicality and effectiveness. Pilot programs should be conducted across diverse institutions in China, including tertiary hospitals and academic medical centers, to evaluate how well the framework aligns with local training needs and operational constraints. Feedback from trainees, instructors, and program administrators will be essential to identify gaps, such as discrepancies between theoretical training and practical application, or challenges in measuring intangible outcomes like innovation capacity.

From a global perspective, the framework's adaptability should also be tested in other healthcare systems, particularly in low- and middle-income countries (LMICs), where resource limitations and varying research infrastructures may necessitate modifications.

Limitations

The present study acknowledges several limitations. First, while we aimed to recruit 40 participants, the final sample size was 34 (15 in Round 1, 19 in Round 2). This shortfall may limit the robustness of the consensus achieved and the generalizability of the findings. We mitigated this by ensuring all participants were highly experienced experts from leading institutions and by conducting a second round to refine the indicators. Second, the panel composition, while expert, was predominantly female and heavily focused on clinical medicine, with limited representation from other disciplines like public health, biostatistics, or ethics. This lack of diversity in perspectives may affect the comprehensiveness of the framework, potentially overlooking critical evaluation aspects relevant to nonclinical stakeholders. Future studies should strive for a more diverse panel. Third, the framework was developed for China; its applicability to other regions requires further validation through comparative studies. Fourth, a formal calculation and justification for the target sample size were not performed prior to data collection, which is a methodological limitation common in Delphi studies but nonetheless affects the interpretability of the consensus strength. Finally, the small sample size and potential for selection bias are inherent limitations of the Delphi method that warrant consideration when interpreting the results.

Conclusion

This study developed a comprehensive evaluation framework for clinical research training in China using the Delphi method. The findings emphasize the importance of relevant training content, measurable learning outcomes, and long-term professional impact. While focused on China, the framework aligns with global priorities in clinical research education, offering insights for international medical training programs facing similar challenges in integrating research and practice. Future studies should validate this model in diverse settings to enhance its applicability worldwide. By addressing both local needs and universal training principles, this work contributes to advancing clinical research education globally.

Supplemental Material

sj-docx-1-mde-10.1177_23821205261428939 - Supplemental material for Developing a Delphi-Consensus Evaluation Framework for Clinical Research Training: A Chinese Model With Global Implications

Supplemental material, sj-docx-1-mde-10.1177_23821205261428939 for Developing a Delphi-Consensus Evaluation Framework for Clinical Research Training: A Chinese Model With Global Implications by April Shengjie Zhu, Jeremy Haoqing Zhu, Yun Chen and Paula Pei Li in Journal of Medical Education and Curricular Development

Supplemental Material

sj-docx-2-mde-10.1177_23821205261428939 - Supplemental material for Developing a Delphi-Consensus Evaluation Framework for Clinical Research Training: A Chinese Model With Global Implications

Supplemental material, sj-docx-2-mde-10.1177_23821205261428939 for Developing a Delphi-Consensus Evaluation Framework for Clinical Research Training: A Chinese Model With Global Implications by April Shengjie Zhu, Jeremy Haoqing Zhu, Yun Chen and Paula Pei Li in Journal of Medical Education and Curricular Development

Footnotes

Acknowledgments

The authors thank all 34 expert panelists who generously contributed their time and expertise to this study. Their insights were instrumental in developing this evaluation framework.

Ethics Statement

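The study protocol was approved by the Clinical Research Ethics Committee of Jiahui Medical Education and Research Group (Ethics approval number: A-CR-2025003).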

Consent to Participate

Participation in the Delphi survey was voluntary, and informed consent was obtained electronically from all participants prior to their involvement in the study.

Author Contributions

April Shengjie Zhu: conceptualization, literature review, survey development, data collection, data analysis, manuscript writing. Jeremy Haoqing Zhu: literature review, manuscript writing. Yun Chen: literature review, manuscript writing. Paula Pei Li: project administration, participant recruitment, corresponding author responsibilities, manuscript review and editing. All authors reviewed and edited the final manuscript.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Conflict of Interest

Not applicable.

Supplemental Material

Supplemental material for this article is available online.

References
