Abstract

Objectives

To develop and evaluate a low-cost, 4-step micro-research closed-loop teaching model using Tencent Docs spreadsheets to improve polymerase chain reaction (PCR) experimental teaching in a sophomore molecular biology course.

Methods

In a single-institution, nonrandomized quasi-experimental study, 33 students from Capital Medical University were assigned to either an experimental group (n = 17) using the online model or a control group (n = 16) receiving traditional instruction. Both groups completed a 20-item PCR knowledge test and a 5-item self-confidence survey before and after the intervention. Outcomes included protocol completion time, first-pass approval rate, operational error rate, confidence, and PCR knowledge. Data were analyzed using independent-samples t-tests (with Welch correction) and Fisher's exact test (two-sided).

Results

The experimental group completed protocol design more quickly (8.04 ± 0.81 vs 12.58 ± 1.08 min; t = –13.62, P < 0.001) and showed a nonsignificant trend toward a higher first-pass approval rate (82.4% vs 50.0%; P = 0.071). They also had fewer operational errors (8.24% ± 6.85% vs 20.50% ± 6.99%; t = –5.09, P < 0.001) and greater increases in confidence (+0.89 vs −0.10; t = 5.03, P < 0.001). Both groups showed significant improvements in PCR knowledge (experimental: 78.08 ± 5.04 to 84.79 ± 3.98; control: 78.16 ± 5.59 to 80.81 ± 5.69), and the experimental group had a larger knowledge gain (6.72 ± 2.21 vs 2.65 ± 0.71; t = 7.21, P < 0.001).

Conclusion

In this small, nonrandomized cohort, use of an online spreadsheet-based micro-research closed-loop model was associated with shorter protocol design time, fewer low-level errors, higher experimental confidence, and larger short-term improvements in PCR theoretical knowledge. These preliminary findings suggest that a lightweight, readily scalable “digital laboratory” may help support PCR experimental teaching in resource-constrained settings, although more rigorous, larger-scale studies with long-term and higher-order learning outcomes are needed before drawing firm causal conclusions.

Keywords

Introduction

Scientific training relies heavily on the iterative process of “hypothesis–experiment–revision–revalidation.” This trial-and-error approach, paired with timely feedback, is essential for students to understand experimental logic and build the resilience needed to overcome failure. 1 However, in many foundational medical experimental courses, limited time and resources often allow only 1 run after the instructor's demonstration. Students therefore have too few opportunities to experiment, and corrections often come too late to be applied. As a result, students frequently make minor errors (eg, incorrect reagent amounts, wrong temperature settings, or missed procedural steps) during key experiments such as polymerase chain reaction (PCR) or gene cloning. These mistakes not only waste resources but also undermine student confidence and hinder the development of critical scientific thinking. 2

To tackle these issues, educators across fields are increasingly turning to digital and remote technologies to provide more hands-on practice and real-time feedback without needing to extend lab hours. While several online lab solutions have emerged—from advanced virtual simulations such as Labster3,4 and remote IoT-enabled labs 5 to low-cost home-based experiment kits 6 —many are limited by expensive subscriptions, high maintenance costs, or narrow functionality. This makes it difficult for smaller institutions or those with fewer resources to adopt these tools widely. 7

To address these challenges, this study proposes a “zero-development” solution: a 4-step micro-research teaching model built entirely on Tencent Docs’ online spreadsheet platform. The model integrates structured trial guidance, a controlled design space, instant online feedback, and a shared failure-case repository. The approach is intended to offer 4 advantages: (1) low cost and accessibility, requiring no software or hardware beyond a standard collaborative document; (2) rapid iteration, with each design–feedback cycle completed in 5–10 min, in line with micro-learning principles; (3) collaborative reflection, in which real-time highlights and a shared error database promote peer learning and teamwork; and (4) direct transfer to hands-on experiments, with the aim of improving first-pass success rates.

By focusing on PCR exercises in a sophomore molecular biology course, we explore whether this model is associated with reduced operational errors and higher student confidence, and how it might conceptually support scientific thinking. Given the non-randomized, quasi-experimental design and single-institution context, our goal is to provide preliminary evidence about the feasibility and potential value of this approach.

Theoretical Framework and the 4-Step “Combo-Punch” Model

Drawing on constructivist learning theory and cognitive load theory, the model rests on the premise that student-driven inquiry, combined with appropriate scaffolding and timely feedback, helps students internalize knowledge more deeply. 8 By blending Bloom's taxonomy with Kolb's experiential learning cycle, 9 we developed the 4-step “combo-punch” model, as shown in Figure 1.

Figure 1. Four-step micro-research closed loop: (1) structured trial guidance, (2) controlled design space, (3) instant feedback, (4) failure case repository. Each module feeds into the next via cyclical arrows, supporting 5–10 min micro-iterations and seamless transfer from online design to hands-on experimentation.

Structured Trial Guidance: From “Ingredient Inventory” to “Variable Cognition”

Macro Cognition (Form 1): List all PCR reagents (template DNA, buffer, deoxynucleoside triphosphates, primers, polymerase, and water) to activate prior knowledge and establish a conceptual scaffold.

Quantitative Reasoning (Form 2): Enter volumes within suggested ranges; embedded IF-formula checks immediately flag out-of-range entries, prompting students to reflect on dose–response relationships (eg, “Excess polymerase can inhibit amplification” and “over-abundant primers increase dimer formation”).

Process Planning (Form 3): Sequence the pipetting steps and receive real-time guidance (eg, “Add enzyme last to avoid heat inactivation”), making the order of operations an integral design decision rather than an afterthought.
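To make the Form 2 check concrete, the sketch below reproduces its logic in Python rather than as a spreadsheet formula. It is an illustration under stated assumptions, not the authors' sheet: the reagent keys follow Form 1, but the volume ranges in the hypothetical `SUGGESTED_RANGES_UL` table are placeholders for an assumed 25 µL reaction, and `check_volume` and `check_form2` are names introduced here for illustration.

```python
from __future__ import annotations

# Hypothetical suggested volume ranges (µL) for an assumed 25 µL reaction.
# Placeholders for illustration only; not the study's Form 2 values.
SUGGESTED_RANGES_UL = {
    "template DNA": (0.5, 2.0),
    "buffer": (2.0, 3.0),
    "dNTPs": (0.5, 1.0),
    "forward primer": (0.5, 1.5),
    "reverse primer": (0.5, 1.5),
    "polymerase": (0.1, 0.5),
}


def check_volume(reagent: str, volume_ul: float) -> str:
    """Return "✓" if the volume is inside the suggested range, else "⚠".

    Same logic as a spreadsheet cell formula of the (assumed) form
    IF(AND(x >= low, x <= high), "✓", "⚠").
    """
    low, high = SUGGESTED_RANGES_UL[reagent]
    return "✓" if low <= volume_ul <= high else "⚠"


def check_form2(entries: dict[str, float]) -> dict[str, str]:
    """Flag every entered reagent volume, one row at a time."""
    return {reagent: check_volume(reagent, vol) for reagent, vol in entries.items()}


student_entries = {
    "template DNA": 1.0,
    "buffer": 2.5,
    "dNTPs": 0.5,
    "forward primer": 3.0,  # over-abundant primer: outside the range
    "reverse primer": 1.0,
    "polymerase": 0.25,
}
flags = check_form2(student_entries)
print(flags)
```

Keeping the bounds in one lookup table mirrors how, in a typical spreadsheet, a single IF formula can be copied down a column while each row reads its own limits.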

Controlled Design Space: Rule-Bound Free Exploration

Parameter Calibration (Forms 2 & 6): Fields for volume, annealing temperature, and cycle number compare each entry with its optimal threshold and display ✓ or ⚠, so that deviations are flagged for correction without stifling creativity.

Segmented Nodes (Forms 3–7): The workflow is broken into 5 discrete nodes (pipetting order → mixing method → enzyme selection → thermal cycling parameters → product handling), so that learners can trial each step and verify its correctness immediately.
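The pipetting-order node, together with the Form 3 cue “Add enzyme last,” can be expressed as a small rule check. The following is a minimal sketch that assumes a hypothetical reagent set `REQUIRED_REAGENTS` and a hypothetical `check_pipetting_order` helper; it flags only missing steps, repeated steps, and an enzyme that is not added last, and does not enforce any other ordering.

```python
from __future__ import annotations

from collections import Counter

# Hypothetical reagent set for the pipetting-order node (names follow Form 1).
REQUIRED_REAGENTS = {"water", "buffer", "dNTPs", "primers", "template DNA", "polymerase"}


def check_pipetting_order(order: list[str]) -> list[str]:
    """Return ⚠ messages for a proposed pipetting sequence; [] means it passes."""
    warnings = []
    missing = REQUIRED_REAGENTS - set(order)
    if missing:
        warnings.append("⚠ missing step(s): " + ", ".join(sorted(missing)))
    repeated = [r for r, n in Counter(order).items() if n > 1]
    if repeated:
        warnings.append("⚠ repeated step(s): " + ", ".join(sorted(repeated)))
    # Rule cue from Form 3: the enzyme should be the final addition.
    if "polymerase" in order and order[-1] != "polymerase":
        warnings.append("⚠ Add enzyme last")
    return warnings


good = ["water", "buffer", "dNTPs", "primers", "template DNA", "polymerase"]
bad = ["water", "buffer", "polymerase", "dNTPs", "primers"]
print(check_pipetting_order(good))
print(check_pipetting_order(bad))
```

Checking rules rather than one fixed sequence leaves students free to order the non-critical steps as they choose, which matches the “rule-bound free exploration” idea.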

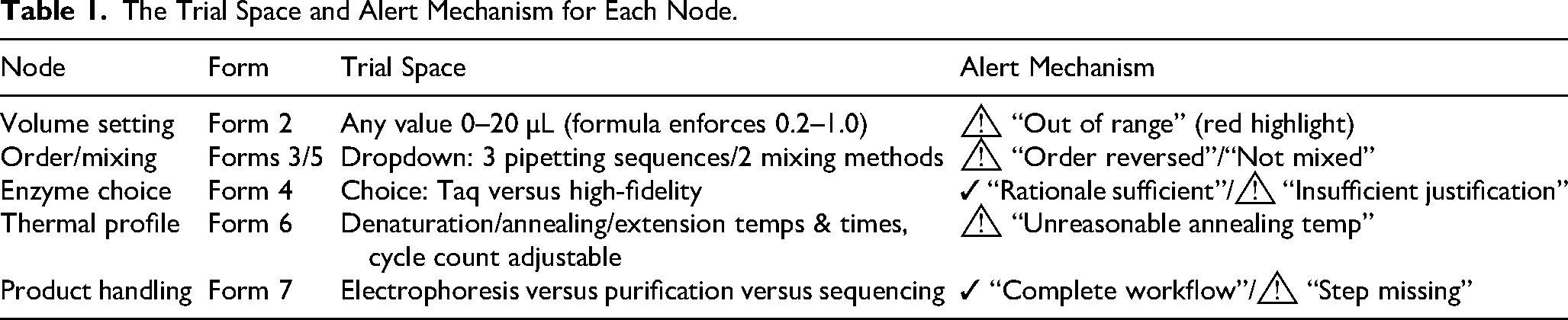

As shown in Table 1, each node is configured with a “safety range,” a “correct preset,” and an “options list,” so that students can both experiment freely and clearly understand the boundaries of their trials.

Table 1. The Trial Space and Alert Mechanism for Each Node.
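A node of the kind summarized in Table 1 can be modeled as a record holding a correct preset plus either a numeric safety range or a list of allowed options. In this hypothetical sketch, the `Node` class, the three-level ✓/⚠ labels, and all numeric values are illustrative assumptions; the settings actually used in the study are not reproduced here.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional, Union

Value = Union[float, int, str]


@dataclass(frozen=True)
class Node:
    """One workflow node: a correct preset plus either a numeric safety
    range or a tuple of allowed options (hypothetical model)."""

    name: str
    preset: Value
    safety_range: Optional[tuple[float, float]] = None
    options: tuple[str, ...] = ()

    def evaluate(self, entry: Value) -> str:
        if entry == self.preset:
            return "✓"
        if self.safety_range is not None:
            low, high = self.safety_range
            in_range = isinstance(entry, (int, float)) and low <= entry <= high
            return "✓ (within safety range)" if in_range else "⚠"
        return "✓ (allowed option)" if entry in self.options else "⚠"


# Illustrative settings only, not the study's values.
annealing = Node("annealing temperature (°C)", preset=58.0, safety_range=(50.0, 65.0))
cycles = Node("cycle number", preset=30, safety_range=(25, 35))
enzyme = Node("enzyme selection", preset="Taq polymerase",
              options=("Taq polymerase", "high-fidelity polymerase"))

print(annealing.evaluate(55.0))
print(enzyme.evaluate("reverse transcriptase"))
```

Separating the preset from the safety range is what allows free exploration in this sketch: any in-range entry is accepted, and only entries outside the boundary raise ⚠.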

Instant Feedback Mechanism: A “Real-Time, Visual, Interactive” Triad

Visual Highlights: Green ✓ and red ⚠ icons draw immediate attention to correct versus problematic entries.

Comment Alerts: In-cell @-mentions generate pop-up comments and push notifications via WeChat or email for one-on-one correction.

Summary Feedback Zone: Instructors compile all ⚠ flags into a “Peer Learning Library,” publicly sharing common errors to foster “learning through sharing.”

Each ⚠ alert prompts an immediate revision, so that a feedback–revision cycle can be completed within 5 min, creating an “instant feedback–rapid iteration” loop.
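Compiling ⚠ flags into the Peer Learning Library amounts to counting how often each error type occurs across students. The sketch below assumes a hypothetical flag log of (student, node, message) records and drops student IDs before ranking, on the assumption that the shared library should be anonymized; `build_peer_library` is an illustrative name.

```python
from collections import Counter

# Hypothetical ⚠ log: (student ID, node, message) per flag raised in the sheet.
flag_log = [
    ("S01", "volume", "primer volume out of range"),
    ("S02", "volume", "primer volume out of range"),
    ("S02", "thermal cycling", "annealing temperature too high"),
    ("S05", "pipetting order", "enzyme not added last"),
    ("S07", "volume", "primer volume out of range"),
    ("S07", "pipetting order", "enzyme not added last"),
]


def build_peer_library(log, top_n=3):
    """Rank error types by frequency; student IDs are dropped (anonymized)."""
    counts = Counter((node, message) for _student, node, message in log)
    return counts.most_common(top_n)


for (node, message), count in build_peer_library(flag_log):
    print(f"{count} x [{node}] {message}")
```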

Failure Case Repository: Turning “Failures” into “Resources”

Operational Errors: for example, missed or reversed pipetting (“Improvement: use checklists + peer verification”).

Parameter Misconfigurations: for example, incorrect enzyme concentration or temperature (“Improvement: perform gradient trials + literature review”).

Workflow Gaps: for example, poor linkage between mixing → run → analysis (“Improvement: create flowcharts + group rehearsals”).

As the semester progresses, this collapsible “Case Library” becomes a dynamic repository that students review before each session and contribute to during debriefs.
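The Case Library can be thought of as 3 buckets keyed by the error categories above, each carrying its improvement strategy. The category names and improvement texts below are taken from the text; the dictionary layout and the `add_case` and `collapsed_view` helpers are a hypothetical sketch of how debrief entries might be accumulated and summarized.

```python
from __future__ import annotations

# Category names and improvement strategies follow the text; the layout is a sketch.
case_library = {
    "Operational Errors": {
        "improvement": "use checklists + peer verification", "cases": []},
    "Parameter Misconfigurations": {
        "improvement": "perform gradient trials + literature review", "cases": []},
    "Workflow Gaps": {
        "improvement": "create flowcharts + group rehearsals", "cases": []},
}


def add_case(category: str, description: str) -> None:
    """File a debrief case under one of the three categories."""
    if category not in case_library:
        raise KeyError(f"unknown category: {category}")
    case_library[category]["cases"].append(description)


def collapsed_view() -> list[str]:
    """One summary line per category, like a collapsed spreadsheet group."""
    return [
        f"{name} [cases: {len(entry['cases'])}]: {entry['improvement']}"
        for name, entry in case_library.items()
    ]


add_case("Operational Errors", "primers and template pipetted in reversed order")
add_case("Parameter Misconfigurations", "annealing temperature set well above primer Tm")
for line in collapsed_view():
    print(line)
```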

By cycling through

Methods

Participants, Setting, and Study Design

This nonrandomized quasi-experimental study was conducted at the School of Basic Medical Sciences, Capital Medical University (Beijing, China) between June 16, 2025, and June 17, 2025. The study included sophomore students enrolled in the Molecular Biology Experimental Course. Due to logistical constraints, the experimental (n = 17) and control (n = 16) groups were formed pragmatically based on student ID numbers (first 16 for control, next 17 for experimental), rather than true randomization. This convenience sampling and nonrandom allocation may introduce selection bias and limit generalizability beyond this single institution and cohort.

Inclusion criteria were: (1) enrollment in the sophomore Molecular Biology Experimental Course during the study term, and (2) completion of both the design and hands-on PCR sessions. Exclusion criteria were: (1) absence from either teaching session; (2) incomplete pre- or post-test data; or (3) refusal to allow anonymized teaching-quality data to be used for research purposes.

The same instructor (JZ) delivered all teaching sessions and evaluated protocol approval and operational errors using a pre-specified checklist, as described below.

Ethics

The study protocol was submitted to and reviewed by the Academic Committee of the School of Basic Medical Sciences, Capital Medical University. As the study involved only anonymized, aggregated teaching-quality assessment data and posed minimal risk, the Committee granted a formal waiver of full ethics review on May 7, 2025. No waiver number is available; the waiver was granted in accordance with the guidelines for educational research involving minimal risk. All procedures were conducted in accordance with the ethical standards of the 1964 Declaration of Helsinki and its later amendments. Participation was voluntary, and completion of the anonymized online forms was considered implied consent.

Instructional Design and Implementation

During both sessions, students worked in small bench groups (3–4 students) to discuss the experimental tasks; however, each student designed and submitted an individual PCR protocol and completed the confidence surveys independently.

Intervention procedure:

Data Collection and Metrics

Statistical Analysis

Continuous outcomes (protocol completion time, low-level error rate, confidence scores, and knowledge test scores) are reported as mean ± standard deviation and were compared between groups using independent-samples t-tests. Welch's correction was applied to all independent-samples t-tests to account for potentially unequal variances. Where appropriate, within-group pre- to post-intervention changes were examined using paired t-tests.
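For readers who want to check the between-group comparisons, Welch's t statistic and its Welch–Satterthwaite degrees of freedom can be recomputed from the reported group means, standard deviations, and sample sizes. The helper below is a minimal sketch with an illustrative name; it returns t and df only, because a P-value additionally requires the t-distribution's cumulative distribution function (eg, `scipy.stats.t.sf`), and `scipy.stats.ttest_ind_from_stats(..., equal_var=False)` performs the whole calculation.

```python
import math


def welch_t_from_summary(m1, s1, n1, m2, s2, n2):
    """Welch's t and Welch-Satterthwaite df from means, SDs, and group sizes."""
    v1, v2 = s1**2 / n1, s2**2 / n2  # squared standard errors of each mean
    t = (m1 - m2) / math.sqrt(v1 + v2)
    df = (v1 + v2) ** 2 / (v1**2 / (n1 - 1) + v2**2 / (n2 - 1))
    return t, df


# Experimental (n = 17) vs control (n = 16), summary values from the Results
for label, stats in {
    "completion time (min)": (8.04, 0.81, 17, 12.58, 1.08, 16),
    "low-level error rate (%)": (8.24, 6.85, 17, 20.50, 6.99, 16),
    "knowledge gain (points)": (6.72, 2.21, 17, 2.65, 0.71, 16),
}.items():
    t, df = welch_t_from_summary(*stats)
    print(f"{label}: t({df:.2f}) = {t:.2f}")
```

With the published summary statistics, this gives t ≈ –13.60 on about 27.8 df for completion time, t ≈ –5.08 on about 30.8 df for the error rate, and t ≈ 7.21 on about 19.5 df for the knowledge gain, close to the reported values; the small gaps are consistent with rounding of the published means and SDs.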

Categorical outcomes (first-pass protocol approval) were compared between groups using Fisher's exact test (two-sided), given the small sample size and borderline expected cell counts; Pearson's χ² values were computed as a secondary check, but Fisher's exact P-values are reported as primary. Statistical significance was defined as a two-sided α = 0.05.
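The two-sided Fisher's exact P for first-pass approval can be recomputed directly from the 2 × 2 table (14 approved and 3 not approved in the experimental group; 8 and 8 in the control group). The sketch below uses the common definition of the two-sided test, summing the probabilities of all tables with the same margins that are no more probable than the observed one, and uses exact integer arithmetic; the function name is illustrative, and `scipy.stats.fisher_exact` implements the same test.

```python
from fractions import Fraction
from math import comb


def fisher_exact_two_sided(a, b, c, d):
    """Two-sided Fisher's exact P for the 2 x 2 table [[a, b], [c, d]]."""
    row1, col1, n = a + b, a + c, a + b + c + d

    def weight(k):
        # Number of tables with k in the top-left cell, given fixed margins.
        return comb(col1, k) * comb(n - col1, row1 - k)

    lo, hi = max(0, row1 - (n - col1)), min(row1, col1)
    observed = weight(a)
    extreme = sum(weight(k) for k in range(lo, hi + 1) if weight(k) <= observed)
    return Fraction(extreme, comb(n, row1))


# Rows: experimental, control; columns: approved, not approved on first pass
p = fisher_exact_two_sided(14, 3, 8, 8)
print(round(float(p), 4))
```

For these counts the function returns P ≈ 0.0707, matching the reported P = 0.071.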

The primary endpoint was the first-pass protocol approval rate, as it directly reflects the effectiveness of the model in facilitating the design process. Class enrollment fixed the sample size at 33 students (17 in the experimental group and 16 in the control group), and we did not perform a formal a priori sample size calculation. Instead, a post hoc sensitivity analysis using G*Power 3.1 indicated that, with α = 0.05 (two-sided) and N = 33, the design has adequate power to detect only large between-group differences in first-pass approval (Cohen's h of approximately 0.70, corresponding to a difference of roughly 30 percentage points or more), whereas smaller effects may go undetected.
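Cohen's h, the effect size used in the sensitivity analysis, is the difference between arcsine-square-root-transformed proportions, h = 2 arcsin √p₁ − 2 arcsin √p₂. Applied to the observed first-pass approval rates, a short calculation (function name illustrative) gives h ≈ 0.70, the same magnitude cited above.

```python
import math


def cohens_h(p1: float, p2: float) -> float:
    """Cohen's h: difference of arcsine-square-root-transformed proportions."""
    return 2 * math.asin(math.sqrt(p1)) - 2 * math.asin(math.sqrt(p2))


# Observed first-pass approval: 14/17 (experimental) vs 8/16 (control)
h = cohens_h(14 / 17, 8 / 16)
print(f"h = {h:.2f}")
```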

The reporting of this study conforms to the SQUIRE 2.0 statement 10 (SQUIRE 2.0 Checklist, Supplemental File 1).

Results

Baseline Comparison

Although the groups were not randomly assigned, a baseline comparison was conducted to assess PCR theoretical scores and experimental confidence before the intervention. No significant differences were found between the experimental group (n = 17) and the control group (n = 16) in these measures.

The experimental group had a mean theoretical score of 78.08 ± 5.04, while the control group scored 78.16 ± 5.59 (t(30.17) = –0.040, P = 0.966). For experimental confidence, the experimental group averaged 3.23 ± 0.58, compared to 3.50 ± 0.53 in the control group (t(30.95) = –1.389, P = 0.175). These results suggest that the two groups were similar at baseline on measured knowledge and confidence, although unmeasured factors such as prior laboratory experience, motivation, or attendance may still have differed between groups.

Protocol Completion Time

There was a clear difference in the time it took the 2 groups to complete the protocol design task. The experimental group took an average of 8.04 ± 0.81 min, while the control group took 12.58 ± 1.08 min. This difference was statistically significant (t(27.91) = –13.62, P < 0.001), indicating that students using the micro-research closed-loop model completed the design process faster than those using the traditional paper-based approach.

Protocol Approval Rate and Iterations

On the first submission, 14 out of 17 (82.4%) protocols in the experimental group were approved without needing revisions, compared to 8 out of 16 (50.0%) in the control group. Fisher's exact test (two-sided) indicated a nonsignificant trend toward a higher first-pass approval rate in the experimental group (P = 0.071).

Among experimental-group students, the mean number of formal submission–revision cycles required to obtain final approval was 1.24 ± 0.56. Because protocol revisions in the control group were conducted on paper and not systematically logged, iteration counts are not available for that group, and no direct statistical comparison can be made.

Low-Level Error Rate

During hands-on PCR setup, the experimental group had a significantly lower low-level error rate than the control group (8.24% ± 6.85% vs 20.50% ± 6.99%; t(30.79) = –5.09, P < 0.001). The experimental group also made fewer errors in every category: volume errors (9.24% vs 26.79%), pipetting order errors (7.56% vs 13.39%), and programming parameter errors (8.02% vs 21.02%).

Experimental Confidence Improvement

After the intervention, mean confidence rose to 4.12 ± 0.34 in the experimental group, whereas it decreased slightly to 3.40 ± 0.47 in the control group. The between-group difference was statistically significant (t(27.31) = 5.03, P < 0.001). Relative to baseline, the experimental group's mean confidence increased by 0.89 points and the control group's decreased by 0.10 points, a pattern consistent with the idea that instant feedback and iterative corrections help boost student confidence.

Theoretical Knowledge Post-Test

After the intervention, both groups showed statistically significant improvements in theoretical knowledge. In the experimental group, mean scores increased from 78.08 ± 5.04 to 84.79 ± 3.98 (t(16) = 12.54, P < 0.001). In the control group, mean scores increased from 78.16 ± 5.59 to 80.81 ± 5.69 (t(15) = 14.97, P < 0.001).

Between-group comparisons using independent-samples t-tests with Welch's correction showed that post-test scores were significantly higher in the experimental group than in the control group (84.79 ± 3.98 vs 80.81 ± 5.69; t(26.72) = 2.32, P = 0.028). When pre–post change scores were compared, the experimental group also exhibited a significantly larger gain in knowledge than the control group (6.72 ± 2.21 vs 2.65 ± 0.71; t(19.44) = 7.21, P < 0.001).
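The within-group paired t statistics can be approximately recovered from the reported change scores, because a paired t-test is a one-sample t-test on each student's post-minus-pre difference: t = mean change / (SD of change / √n), with n − 1 df. The helper name below is illustrative.

```python
import math


def paired_t_from_changes(mean_change, sd_change, n):
    """Paired t (a one-sample t on post-minus-pre changes) and its df."""
    t = mean_change / (sd_change / math.sqrt(n))
    return t, n - 1


t_exp, df_exp = paired_t_from_changes(6.72, 2.21, 17)  # experimental group
t_ctl, df_ctl = paired_t_from_changes(2.65, 0.71, 16)  # control group
print(f"experimental: t({df_exp}) = {t_exp:.2f}; control: t({df_ctl}) = {t_ctl:.2f}")
```

Using the gains 6.72 ± 2.21 (n = 17) and 2.65 ± 0.71 (n = 16) gives t(16) ≈ 12.54 and t(15) ≈ 14.93; the latter differs slightly from the reported 14.97, presumably because the published SD is rounded.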

Discussion

In this quasi-experimental study, a 4-step micro-research closed-loop model implemented via an online spreadsheet was associated with faster PCR protocol design, a trend toward higher first-pass approval, fewer low-level operational errors, and larger gains in self-reported confidence and theoretical knowledge compared with a traditional paper-based approach. At the same time, both groups showed significant pre–post improvement, indicating that the overall molecular biology experimental course effectively supported learning. The following sections discuss the main findings and their educational implications, followed by a dedicated subsection on limitations and future directions.

Design Efficiency and Protocol Quality

Students in the experimental group completed the protocol design substantially faster than those in the control group. This suggests that the structured, stepwise spreadsheet workflow helped students navigate routine design tasks more efficiently. In time-constrained laboratory sessions, where students may have only one opportunity to run a protocol after a demonstration, such efficiency gains can be meaningful because they leave more time for hands-on execution, troubleshooting, and instructor–student interaction.

Beyond speed, the experimental group also achieved a higher first-pass approval rate, indicating that the model may support students in producing more complete and internally consistent protocols on their initial attempt. Although this difference did not reach conventional statistical significance when evaluated with Fisher's exact test in this small cohort, the pattern is consistent with the intended function of the 4-step model: to make requirements explicit, provide timely cues, and reduce avoidable omissions at the design stage.

Procedural Errors and Confidence

The spreadsheet-based model was associated with a markedly lower low-level error rate during PCR setup. Students who used the 4-step workflow made fewer mistakes in pipetting volumes, reagent order, and thermal cycler programming. This alignment between better-structured design and more accurate execution is pedagogically important: when students carry out a procedure that they have planned in a clear, granular way, cognitive load at the bench is likely to be lower, leaving fewer opportunities for simple slips to undermine the experiment.

The pattern in self-reported confidence complements these behavioral findings. The experimental group reported increased confidence after the intervention, while confidence in the control group decreased slightly. Designing and revising a protocol within a transparent, feedback-rich environment may help students feel more in control of complex tasks, and may reduce anxiety about “getting everything wrong.” Confidence alone does not guarantee deeper understanding, but feeling more capable and less intimidated can be a valuable prerequisite for engaging with more challenging experimental design tasks.

Iteration Patterns and Design Process

A distinctive feature of the model is its emphasis on iterative refinement within a shared digital space. In the experimental group, most students obtained final protocol approval within 1 or 2 formal submission–revision cycles (mean, 1.24 ± 0.56 cycles). Within each cycle, the structured layout and real-time feedback icons were designed to lead students through their choices systematically: first reagent completeness, then volumes, pipetting order, and program parameters.

These iteration patterns highlight a subtle aspect of experimental learning. On the one hand, the model is designed to minimize wasted effort by helping students address multiple issues within a single revision cycle. On the other hand, much of the conceptual work may occur in the “micro-iterations” that are not captured simply by counting formal submissions—for example, when students adjust annealing temperatures, think through volume constraints, or re-order steps before clicking “submit.” Future applications of the model could take advantage of spreadsheet edit logs or other digital traces to more fully characterize how students explore and refine their designs over time.

Theoretical Knowledge Outcomes

Both groups demonstrated statistically significant gains in PCR theoretical knowledge from pre- to post-test, likely reflecting the contribution of the broader course design, which combined lectures, demonstrations, and hands-on practice. The experimental group, however, showed a higher post-test mean score and a larger mean gain than the control group. This pattern suggests that tightly integrating protocol design, immediate feedback, and subsequent execution may help students consolidate conceptual understanding by linking abstract principles directly to the parameters they must choose in a real experiment.

Importantly, the knowledge gains observed here occurred over a relatively short, 2-day PCR module. The fact that both groups improved, with a larger gain in the experimental group, points to the potential of the closed-loop model as a complement to more traditional instructional elements, rather than as a replacement. Whether such advantages persist over longer periods, extend to other techniques, or translate into improved performance on novel design tasks remains an important question for future research.

Pedagogical Implications and Theoretical Underpinnings

The design of the 4-step model is informed by several complementary educational perspectives. By combining constructivist scaffolded instruction 8 with Kolb's experiential learning cycle,9,11 the model is intended to lower the barrier to experimental design for novice learners while keeping them actively engaged in cycles of planning, acting, observing, and reflecting. It also exemplifies a “learning as research” approach,12,13 which emphasizes active, hands-on engagement with authentic problems rather than passive reception of information.

Each of the 4 phases—macro cognition, quantitative reasoning, process planning, and case reflection—provides immediate, interactive feedback that encourages students to externalize and iteratively refine their thinking. Unlike passive video demonstrations or post-class quizzes delivered through learning management systems such as Moodle,14,15 the spreadsheet-based workflow embeds real-time cues directly into students’ design activities at the moment decisions are made. Additionally, the shared failure-case repository turns individual mistakes into collective learning resources, promoting group-wide reflection and helping normalize error as a legitimate part of the learning process. Although the present study did not directly test these theoretical mechanisms, the observed patterns in design efficiency, procedural accuracy, confidence, and knowledge gains are broadly aligned with these pedagogical intentions.

Limitations and Future Directions

Several limitations should be considered when interpreting the findings of this study. First, the design was nonrandomized, and group allocation was based on student ID numbers rather than random assignment. Although baseline measures of knowledge and confidence were similar between groups, unmeasured factors such as prior laboratory experience, intrinsic motivation, or attendance may still have differed, so all between-group differences should be interpreted as associative rather than causal.

Second, the sample size was relatively small and determined by class enrollment, and no formal a priori power calculation was conducted. The study is therefore likely powered only to detect relatively large effects, and smaller differences—particularly in first-pass approval rates—may have gone undetected. Replication with larger cohorts and across multiple institutions will be important to obtain more precise effect size estimates and to assess generalizability.

Third, all feedback, protocol approval decisions, and low-level error observations were made by a single instructor using a standardized checklist, and only the experimental group had systematically logged iteration data. This ensured internal consistency but precluded assessment of interrater reliability and limited our ability to characterize the full revision process in the control condition. Future work should involve multiple trained raters, formal checks of agreement, and more comprehensive logging of design and revision steps in both groups.

Finally, the intervention was evaluated over a short, 2-day PCR module focused on a single experimental technique, and data aggregation and feedback were handled manually. Extending the model to additional experiments and courses, integrating automated analytics or learning tools interoperable with institutional systems,16,17 and incorporating delayed post-tests, measures of transfer, and broader constructs such as resilience and metacognition are important next steps for determining the long-term educational impact and scalability of this approach.

Conclusion

In this single-course, quasi-experimental study, a 4-step micro-research closed-loop model implemented via an online spreadsheet was associated with faster PCR protocol design, a trend toward higher first-pass protocol approval, fewer low-level operational errors, larger gains in self-reported experimental confidence, and greater short-term improvements in PCR theoretical knowledge compared with a traditional paper-based approach. These findings suggest that a lightweight, zero-development “digital laboratory” can help streamline design work and support both procedural performance and students’ subjective experience in resource-constrained molecular biology laboratory teaching.

At the same time, the nonrandomized design, small convenience sample, reliance on a single instructor, and asymmetric logging of revision processes mean that these advantages should be interpreted as associative rather than causal. The intervention was also limited to a short, 2-day PCR module and a single institution, and longer-term outcomes such as retention, transfer to other techniques, and broader constructs such as resilience or metacognition were not assessed. Future research should therefore employ more rigorous designs with larger and more diverse cohorts, systematically track design and revision steps in both intervention and control conditions, and incorporate delayed post-tests and higher-order outcome measures. If confirmed in such studies, the spreadsheet-based closed-loop model may offer a scalable, low-cost strategy for integrating design, feedback, and execution across a wider range of experimental teaching contexts.

Supplemental Material

sj-pdf-1-mde-10.1177_23821205261422881 - Supplemental material for A Four-Step Micro-Research Closed-Loop Model via Online Spreadsheets for Molecular Biology Experimental Teaching: A Polymerase Chain Reaction (PCR) Case Study

Supplemental material, sj-pdf-1-mde-10.1177_23821205261422881 for A Four-Step Micro-Research Closed-Loop Model via Online Spreadsheets for Molecular Biology Experimental Teaching: A Polymerase Chain Reaction (PCR) Case Study by Jing Zhang and Qiaoyun Chu in Journal of Medical Education and Curricular Development

Supplemental material, sj-xlsx-2-mde-10.1177_23821205261422881 for A Four-Step Micro-Research Closed-Loop Model via Online Spreadsheets for Molecular Biology Experimental Teaching: A Polymerase Chain Reaction (PCR) Case Study by Jing Zhang and Qiaoyun Chu in Journal of Medical Education and Curricular Development

Supplemental material, sj-xlsx-3-mde-10.1177_23821205261422881 for A Four-Step Micro-Research Closed-Loop Model via Online Spreadsheets for Molecular Biology Experimental Teaching: A Polymerase Chain Reaction (PCR) Case Study by Jing Zhang and Qiaoyun Chu in Journal of Medical Education and Curricular Development

Supplemental material, sj-xlsx-4-mde-10.1177_23821205261422881 for A Four-Step Micro-Research Closed-Loop Model via Online Spreadsheets for Molecular Biology Experimental Teaching: A Polymerase Chain Reaction (PCR) Case Study by Jing Zhang and Qiaoyun Chu in Journal of Medical Education and Curricular Development

Supplemental material, sj-xlsx-5-mde-10.1177_23821205261422881 for A Four-Step Micro-Research Closed-Loop Model via Online Spreadsheets for Molecular Biology Experimental Teaching: A Polymerase Chain Reaction (PCR) Case Study by Jing Zhang and Qiaoyun Chu in Journal of Medical Education and Curricular Development

Supplemental material, sj-xlsx-6-mde-10.1177_23821205261422881 for A Four-Step Micro-Research Closed-Loop Model via Online Spreadsheets for Molecular Biology Experimental Teaching: A Polymerase Chain Reaction (PCR) Case Study by Jing Zhang and Qiaoyun Chu in Journal of Medical Education and Curricular Development

Supplemental material, sj-xlsx-7-mde-10.1177_23821205261422881 for A Four-Step Micro-Research Closed-Loop Model via Online Spreadsheets for Molecular Biology Experimental Teaching: A Polymerase Chain Reaction (PCR) Case Study by Jing Zhang and Qiaoyun Chu in Journal of Medical Education and Curricular Development

Supplemental material, sj-docx-8-mde-10.1177_23821205261422881 for A Four-Step Micro-Research Closed-Loop Model via Online Spreadsheets for Molecular Biology Experimental Teaching: A Polymerase Chain Reaction (PCR) Case Study by Jing Zhang and Qiaoyun Chu in Journal of Medical Education and Curricular Development

Supplemental material, sj-docx-9-mde-10.1177_23821205261422881 for A Four-Step Micro-Research Closed-Loop Model via Online Spreadsheets for Molecular Biology Experimental Teaching: A Polymerase Chain Reaction (PCR) Case Study by Jing Zhang and Qiaoyun Chu in Journal of Medical Education and Curricular Development

Footnotes

Acknowledgments

We thank the participating students and instructors for their engagement and feedback.

Human Ethics and Consent to Participate Declarations

The study protocol was submitted to and reviewed by the Academic Committee of the School of Basic Medical Sciences, Capital Medical University. As the study involved only anonymized, aggregated teaching-quality assessment data and posed minimal risk, the Committee granted a formal waiver of full ethics review on May 7, 2025. All procedures were conducted in accordance with the ethical standards of the 1964 Declaration of Helsinki and its later amendments. Participants were verbally informed of the purpose and nature of the study prior to data collection. Given that no personal identifying information was collected, written consent was not required. Voluntary participation was confirmed through the completion of the Tencent Docs online spreadsheet, which served as implied consent.

Consent for Publication

Not applicable.

Author Contributions

JZ conceptualized the study, developed the teaching model, and wrote the manuscript. QC contributed to the study design, data collection, and analysis, and reviewed the manuscript. All authors approved the final manuscript.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was supported by the 2025 Annual Higher Education Scientific Research Planning Project of the China Association of Higher Education (25KC0306).

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Availability of Data and Materials

All de-identified raw datasets used for statistical analyses (protocol completion times, approval/iteration logs, error counts, and pre-/post-test confidence scores), as well as the complete 5-point self-confidence survey instrument, are included in the Supplemental Files.

Clinical Trial Number

Not applicable.

Supplemental Material

Supplemental material for this article is available online.

References
