Abstract

In the Pediatric Intensive Care Unit (PICU), most teaching occurs during bedside rounds, but technology now provides new opportunities to enhance education. Specifically, smartphone apps allow rapid communication between instructor and student. We hypothesized that using an audience response system (ARS) app can identify resident knowledge gaps, guide teaching, and enhance education in the PICU. Third-year pediatric residents rotating through the PICU participated in ARS-based education or received traditional teaching. Before rounds, experimental subjects completed an ARS quiz using the Socrative app. Concomitantly, the fellow leading rounds predicted quiz performance. Then, discussion points based on the incorrect answers were used to guide instruction. Scores on the pre-rotation test were similar between groups. On the post-rotation examination, ARS participants did not increase their scores more than controls. The fellow’s prediction of performance was poor. Residents felt that the method enhanced their education whereas fellows reported that it improved their teaching efficiency. Although there was no measurable increase in knowledge using the ARS app, it may still be a useful tool to rapidly assess learners and help instructors provide learner-centered education.

Introduction

Rotating through the Pediatric Intensive Care Unit (PICU) is an essential component of pediatric residency in which trainees acquire in-depth knowledge about life-threatening diseases. The rotation is often introduced in the second year of training, and after completing it many pediatric residents are able to recognize and assist in the management of critically ill children.

With the institution of duty hour rules, residents face new challenges in medical training. 1 Drolet et al 2 conducted a survey of more than 6000 trainees and reported that the majority felt their education was compromised by duty hour restrictions. Changes in the requirements for categorical pediatric programs also reduced the amount of time residents spent in the PICU. 1 As a result, current trainees have less PICU experience than their predecessors, further limiting the opportunity to learn from critically ill children.

As with other patient care experiences, teaching in the PICU often occurs during bedside rounds using the Socratic Method where an attending physician or fellow poses a question to the residents. 3 Although this technique has merit in teaching and motivating the learner and students find it useful, there are times when it is used with the goal of humiliating the learner.4,5 Thus, some trainees may feel embarrassed if they are unsure of the answer to the question being posed. Another disadvantage of this approach is the inability to assess learners. This is important as determining a learner’s current level of knowledge can help the educator ask appropriately challenging questions. 6 In addition, those who are already knowledgeable about the topic may find the instruction redundant and inefficient. A method to identify the resident’s knowledge deficit to focus teaching in the PICU would be helpful.

The increased availability of devices for mobile learning (m-learning) has created new opportunities for teaching and assessment, allowing users to interact with educational resources in many more locations. Although its efficacy remains to be clearly shown, learner responses to this new modality are generally favorable.7–9 With the development of audience response system (ARS) apps for smartphones, there is now the ability to rapidly assess learner knowledge. Socrative is an ARS app that allows instructors to conduct real-time question-and-answer sessions with learners using free-text responses. 10 On review of the answers, the instructor can identify gaps in knowledge and customize teaching based on the assessment. ARS has been shown to be an effective instrument to improve resident medical knowledge in the lecture setting but has not been previously evaluated as an assessment tool prior to bedside rounds.11–13

We hypothesized that an ARS app would be useful to identify knowledge gaps in PICU residents and that this information could be used to improve the educational experience. Specifically, we aimed to (1) use ARS to identify knowledge deficits in third-year residents rotating through the PICU and use this to guide fellow teaching on rounds, (2) determine if residents who participated in ARS-based education 3 times a week improved their scores on a post-rotation test by 20% when compared with a similar group of residents not exposed to ARS-based education, and (3) assess the overall value of using ARS by surveying the participating trainees.

Methods

This was a prospective study in which third-year residents on their monthly rotation through the PICU were assigned to either the ARS or the control group. Allocation occurred a priori and was based solely on the investigator’s schedule, without knowledge of resident or fellow skill level. The study was conducted from September 2013 to March 2015. The institutional review board (IRB) deemed it exempt.

The study site is a busy 18-bed PICU at an academic institution. The typical physician team comprises two attending physicians, a fellow, and 4 to 5 pediatric residents. No emergency medicine or medicine-pediatric residents rotate through the Unit and no trainee had completed another residency prior to participating in this investigation. Only third-year residents were included in the study as they rotated in the PICU for 4 weeks whereas those in their second year spent only 2 weeks. Fellows typically had little previous familiarity with resident knowledge, as the residency program is large and fellows also rotate through another PICU.

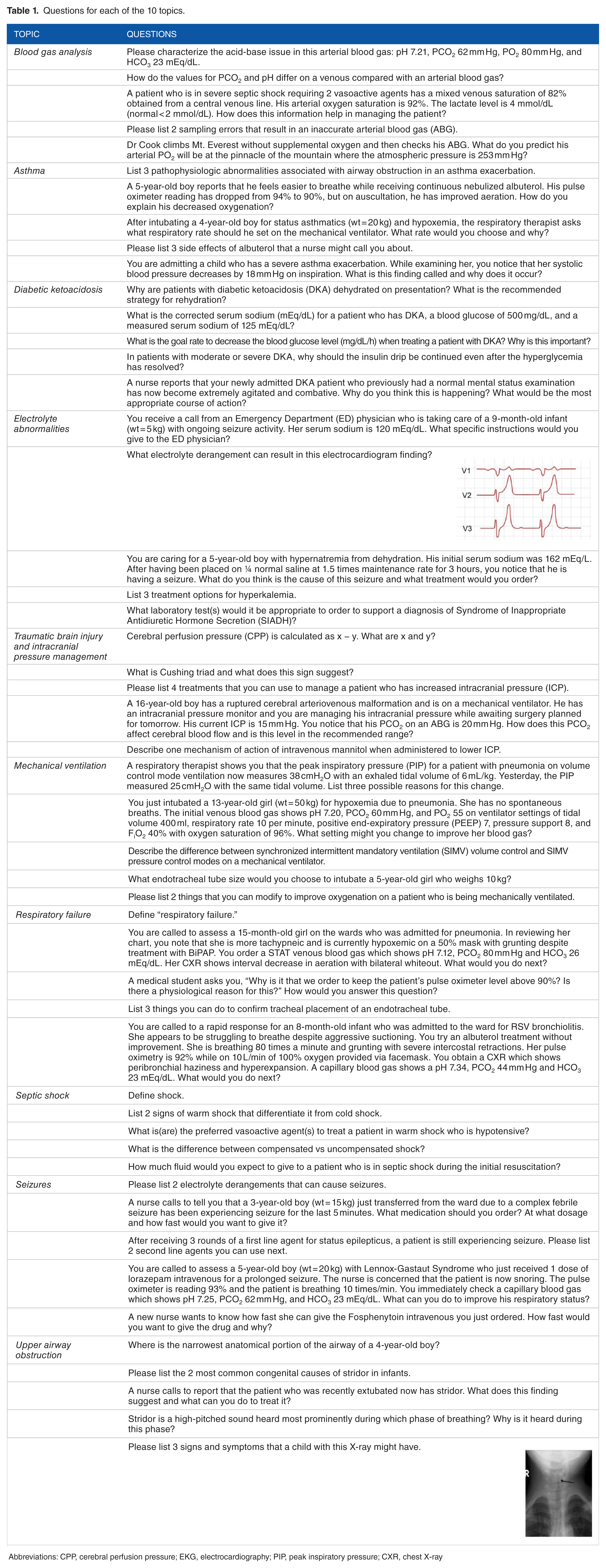

Ten ARS quiz modules of common pediatric critical care medicine topics were created by the principal investigator. Each module comprised 5 free-text questions which were reviewed by 3 expert PICU physicians with extensive experience in graduate medical education (Table 1). The questions were modified until there was agreement that a resident would be able to answer 60% to 80% of the questions in each module correctly.

Table 1. Questions for each of the 10 topics.

Abbreviations: CPP, cerebral perfusion pressure; EKG, electrocardiography; PIP, peak inspiratory pressure; CXR, chest X-ray

ARS participants installed the free Socrative app on their smartphone at the beginning of the rotation. 10 Approximately 3 times a week, the principal investigator selected a topic for teaching just before rounds based on the diagnoses of the patients in the PICU. The residents’ knowledge about this topic was then assessed using the previously developed ARS quiz by sending it to them through the app. While the residents were completing the quiz, the fellow predicted the residents’ performance. Then, just before rounds began, the investigator reviewed the responses and verbally shared the results with the fellow along with points for teaching based on the questions answered incorrectly. Residents in the control group did not use ARS. For these trainees, the fellow did not receive any specific instructions about teaching.

Aside from the performance data in the ARS group that was communicated to the fellow, teaching in both groups was unstructured and used the Socratic Method. As an integral part of their training, fellows are expected to lead PICU bedside morning rounds and to teach residents, although the attending physicians may occasionally provide supplementary instruction. Prior to the start of the investigation, fellows were instructed about how to use the performance data to guide their teaching on rounds but there was no specific education about how to teach nor was any faculty development provided.

Several outcomes were measured to assess the potential benefits of ARS-based education. The primary outcome was the improvement in scores from the test given at the start of the rotation to the test administered in the last week. The test comprised 25 multiple-choice questions selected from the Resident ICU course offered by the Society of Critical Care Medicine and the American Academy of Pediatrics Pediatrics Review and Education Program (PREP) Self-Assessment.14,15 Questions were selected to specifically evaluate the 10 topics.

Other outcomes included the agreement between the fellow’s prediction of the residents’ performance and actual performance on the quizzes. Fellows were asked to predict the overall score for the 5 questions for each trainee but were told only the average score of the percentage correct for the group of residents on the rotation. In addition, the fellows and residents completed a brief survey at the end of the rotation to evaluate the value, feasibility, and satisfaction with using ARS and were encouraged, but not required, to provide comments.

To determine sample size, 5 pediatric third-year residents (not included in the study) completed the test before their PICU rotation. These individuals had a mean (standard deviation [SD]) of 70.6% (14). Based on these data, it was determined that the number of subjects required to achieve 80% power to detect a 20% increase on the post-rotation test was 18 participants per group (α = .05). This would provide a large effect size with Cohen d of 1.03.
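The arithmetic behind a calculation of this kind can be illustrated with the standard normal-approximation formula for comparing two group means. The sketch below is not the authors' method, since the source does not state which formula or software was used; the function name `n_per_group` is hypothetical, and only the effect size, α, and power are taken from the text.

```python
from math import ceil
from statistics import NormalDist


def n_per_group(effect_size: float, alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate subjects per group for a two-sided, two-sample comparison of means.

    Uses the normal approximation n = 2 * ((z_(1-alpha/2) + z_power) / d)^2,
    where d is Cohen's d. This is an illustrative assumption, not the
    calculation reported in the article.
    """
    standard_normal = NormalDist()
    z_alpha = standard_normal.inv_cdf(1 - alpha / 2)  # critical value for two-sided alpha
    z_power = standard_normal.inv_cdf(power)          # quantile for the desired power
    return ceil(2 * ((z_alpha + z_power) / effect_size) ** 2)


# Effect size, alpha, and power as stated in the text.
print(n_per_group(1.03, alpha=0.05, power=0.80))  # prints 15
```

The normal approximation is known to run slightly below t-based calculations at small sample sizes, and here it gives a smaller number than the 18 per group reported above. The gap is not explained in the source, so the sketch should be read only as an illustration of how effect size, α, and power interact.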

Baseline test scores of the subjects in the ARS and control groups were compared with a 2-tailed Student t test, and the difference between the pre- and post-rotation examinations was analyzed with a paired t test. Correlation analysis was performed with Spearman rho, and Cohen kappa was used to evaluate the agreement between the fellow’s prediction of resident performance and the average score of the percentage correct for the group of residents. A P < .05 was considered significant. Results are reported as mean (95% confidence interval).
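For readers unfamiliar with Cohen kappa, the following minimal sketch shows how chance-corrected agreement between predicted and actual quiz scores could be computed. It assumes both are expressed in the same discrete categories (for example, percent correct on a 5-question quiz); the function name and the data are hypothetical and are not taken from the study.

```python
from collections import Counter


def cohen_kappa(predicted, actual):
    """Cohen's kappa for two equal-length sequences of categorical ratings."""
    if len(predicted) != len(actual) or len(predicted) == 0:
        raise ValueError("sequences must be non-empty and of equal length")
    n = len(predicted)
    # Observed agreement: fraction of items on which the two ratings match.
    observed = sum(p == a for p, a in zip(predicted, actual)) / n
    # Chance agreement: product of the marginal proportions, summed over categories.
    predicted_counts = Counter(predicted)
    actual_counts = Counter(actual)
    expected = sum(predicted_counts[c] * actual_counts[c] for c in predicted_counts) / n**2
    if expected == 1.0:
        raise ValueError("kappa is undefined when both raters use a single category")
    return (observed - expected) / (1 - expected)


# Hypothetical example: a fellow's predicted scores vs actual group scores (% correct).
fellow_prediction = [80, 60, 80, 100, 60]
actual_score = [80, 80, 60, 100, 40]
print(round(cohen_kappa(fellow_prediction, actual_score), 3))
```

A kappa near 0 indicates agreement no better than chance, and a value of 1 indicates perfect agreement. Kappa treats categories as unordered, so a prediction that is off by one question counts the same as one that is off by several.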

Results

A total of 39 pediatric residents were eligible to participate in the investigation but two withdrew leaving a total of 37 residents who completed the study (95% of all eligible trainees). One trainee in the ARS group voluntarily withdrew and 1 control did not complete the assessments due to medical leave. As a result, a total of 18 subjects were in the ARS group and 19 in the control group.

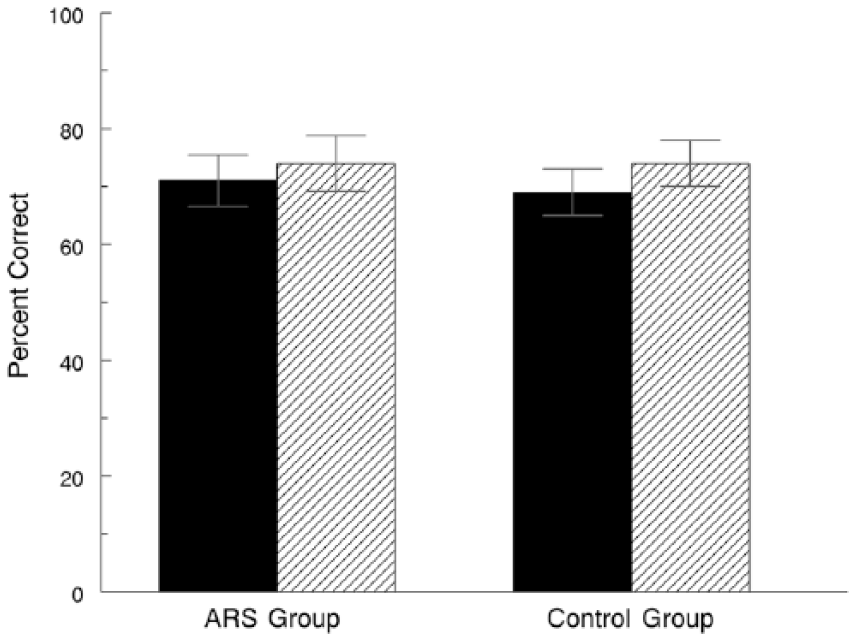

Results from pre- and post-rotation examinations are shown in Figure 1. The baseline average pre-rotation examination scores were similar (P > .05) between the two groups (71.3% [66.9-75.7] in ARS vs 69.3% [65.3-73.2] in controls). Compared with controls, those in the ARS group did not show greater improvement (P > .05) in their test scores. ARS subjects had a mean post-pre rotation examination score difference of 2.4% [–3.1 to 8] whereas in the controls, it was 4.8% [0.3-9.4]. In addition, the post-test scores in the ARS group (73.8% [69.2-78.4]) did not differ (P > .05) from those of the controls (74.1% [70.3-78.0]).

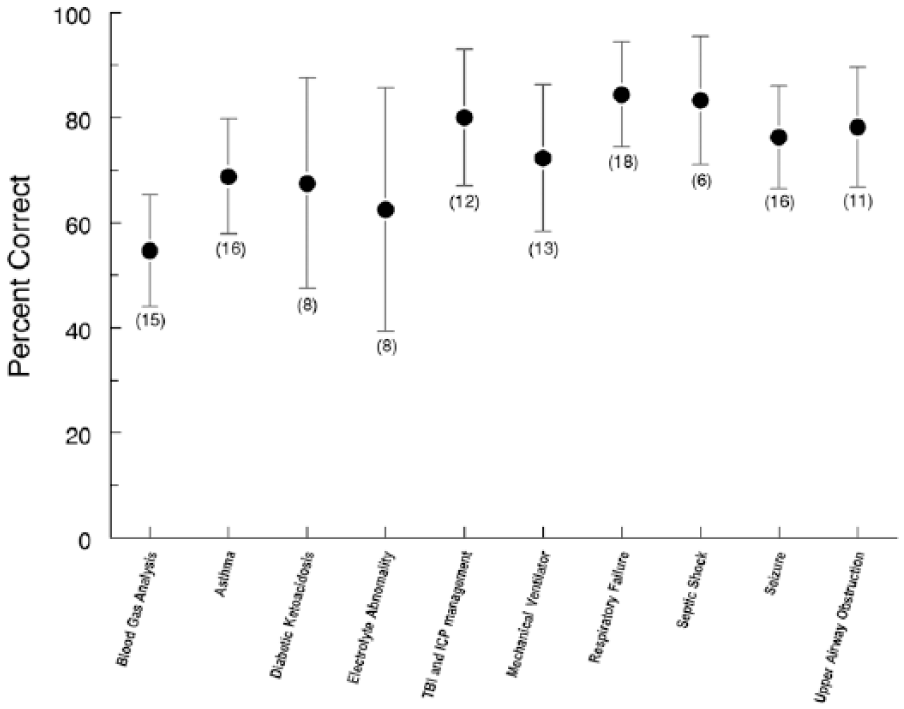

Figure 2. Resident performance on ARS modules expressed as the percentage of questions answered correctly. Dark circles represent mean scores and error bars the 95% confidence interval. Numbers in parentheses indicate the number of residents in the ARS group who participated in the quiz for that topic. Performance on blood gas analysis was lower (P < .05) than that on respiratory failure. There were no differences (P > .05) among the scores for the other topics. ARS indicates audience response system; ICP, intracranial pressure; TBI, traumatic brain injury.

The average number of ARS sessions in which the residents participated over their 4-week rotation was 6.8 (6.2-7.5). No trainee was exposed to all 10 topics. The mean scores on the 10 modules are shown in Figure 2. Trainees achieved the highest score on the respiratory failure module (84.4% [74.6-94.3]) and the lowest on arterial blood gas analysis (54.7% [44.1-65.2]; P < .05). When comparing the scores by week, there was no improvement over time (week 1: 71.7% [64.2-79.1]; week 2: 77.7% [66.4-89]; week 3: 71.7% [63.7-79.7]; week 4: 71.3% [62.5-80]; P > .05). The residents who rotated through the PICU from July through December had lower scores on the ARS quizzes (61.7% [48.4-74.9]) compared with those who rotated from January through June (78.4% [69.7-87.1], P < .05). There was no correlation between the number of ARS sessions in which residents participated and the improvement in their post-rotation examination score (P > .05).

Figure 1. Mean scores on the pre-rotation (filled bar) and post-rotation (hatched bar) examinations for the two groups. Error bars represent the 95% confidence interval. There was no difference (P > .05) between the pre and post scores for either group. ARS indicates audience response system.

Overall, the agreement between the fellows’ predictions of resident quiz scores and the actual values was low. The average percent agreement between the fellows’ predictions of resident performance and the actual results did not change over the course of the fellow’s rotation (week 1: 25%, week 2: 30%, week 3: 0%, week 4: 22%, P > .05). There was no association between the fellows’ ability to predict and their year of training (P > .05).

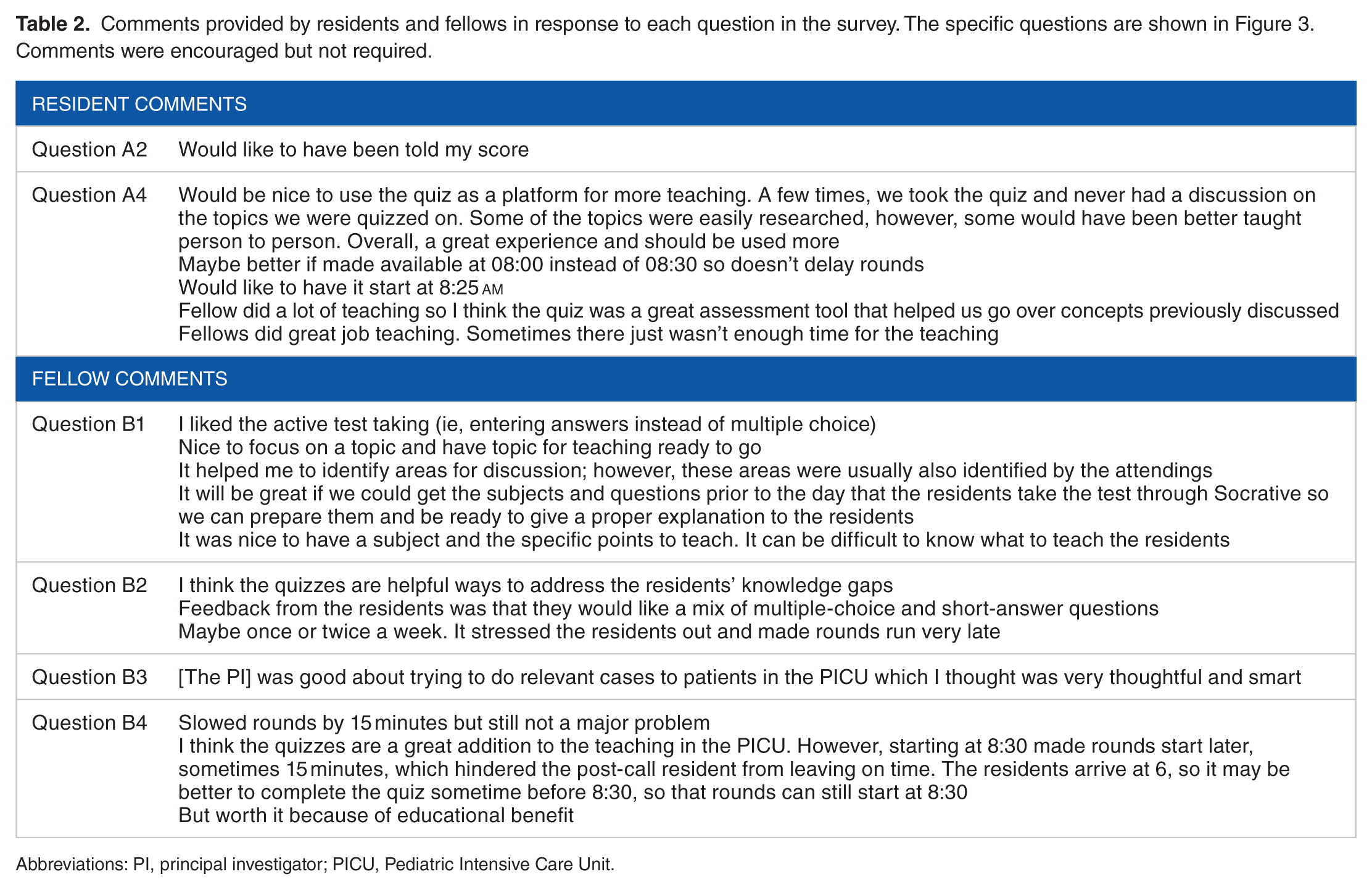

All study subjects reported satisfaction with using ARS (Figure 3). Residents felt they learned better because it identified their knowledge gaps and enhanced the fellow’s teaching. Similarly, fellows said that using the ARS was a positive experience and that it made their teaching more effective and efficient. However, their rating for how ARS affected PICU rounds was significantly lower (P < .05) than that of the other questions related to ARS satisfaction. Some fellows did comment that it was an additional task added to a busy morning but both groups felt it should be used more in the PICU. Comments provided by the residents and fellows are shown in Table 2.

Figure 3. Survey results from the residents (A) and the fellows (B). Boxes represent mean scores and the error bars the 95% confidence intervals. Numbers on the X-axis refer to a Likert-type scale with 1 = very little, 3 = neither, and 5 = very much. ARS indicates audience response system; PICU, Pediatric Intensive Care Unit.

Table 2. Comments provided by residents and fellows in response to each question in the survey. The specific questions are shown in Figure 3. Comments were encouraged but not required.

Abbreviations: PI, principal investigator; PICU, Pediatric Intensive Care Unit.

Discussion

We used an ARS smartphone app to enhance resident education in the PICU. Studies evaluating the use of technology in medical education often rely on a survey of the participants’ attitudes, but our investigation attempted to objectively measure the effectiveness of the effort. Although we did not demonstrate increased knowledge by the participants, both teachers and learners enjoyed using the ARS app and thought it was helpful. In addition, the study showed that fellows could not accurately assess the knowledge of the trainees.

Our study concept is similar to Just-in-Time Teaching, a pedagogical strategy that uses feedback between classroom activities and work that students have prepared beforehand. 16 The goal is to increase efficiency and efficacy in the classroom by allowing the instructor to fine-tune activities to best meet students’ needs. When the instructor is aware of the student’s level of knowledge, teaching can be tailored to that level.

Our utilization of a smartphone app is an example of m-learning. M-learning permits a flexible educational environment that allows for knowledge acquisition and skill development. 8 Most medical education studies evaluating m-learning have focused on knowledge acquisition by disseminating the information using text, video, or apps through the learner’s mobile device.7,8,17,18 Overall efficacy of this modality has been mixed, with some studies showing an improvement in knowledge and others reporting no effect. Nonetheless, the manner in which we used ARS extends m-learning to include drill and practice, a form of computer-assisted instruction.19–21 The ARS quizzes were constructed assuming that the residents would answer some, but not all, of the questions correctly. By presenting questions for which the residents were anticipated to know the answer, knowledge would be reinforced. For questions answered incorrectly, teaching (ie, feedback) was provided during bedside rounds to address the knowledge gap. Studies support the efficacy of drill and practice in promoting learning.20,22

The objective in our study was to improve resident knowledge or the “knows” or “knows how” of Miller’s pyramid of competence. 23 Therefore, we used a multiple-choice test as the outcome measurement, an assessment method appropriate for our intent.24–26 We did not formally assess the validity and reliability of the test but questions were selected to match the content of the ARS quizzes 25 and were obtained from respected sources. Obtaining validity evidence for a test is both time-intensive and costly and, unlike checklists or scales, once the test questions are available in the public domain, the validity of the test is easily compromised. In addition, the pre-post test design allowed us to account for experiences and educational sessions that the resident may have had previously as each participant served as his or her own control. Although it would have been ideal to show an improvement in the higher levels of clinical competence (eg, “shows” or “does”), we did not think this would be possible in a month-long rotation and with the currently available assessment methods.

The ARS quiz modules were developed to simulate questions that fellows or attendings often ask residents on rounds. They were designed to be free-text, short-answer questions because this format provides a better assessment of learners compared with multiple-choice questions and should have minimized cueing, one of the weaknesses of multiple-choice tests.25–27 Using the ARS app allowed an entire group of residents to be evaluated in real time and preserved anonymity, preventing the embarrassment of providing a wrong response in front of the health care team, the patient, and his or her family. 28

There was no increase in knowledge with ARS and there are several possible reasons. Education was provided mainly by fellows with guidance by the attendings as this is the usual practice in PICUs with pediatric critical care fellows. One of the goals of fellowship is to learn how to educate residents. If we had altered this system to require that only attendings provide the instruction, the effects of the intervention would have limited applicability to actual practice. Nonetheless, the outcome might have been different if the attending provided the education. In addition, teaching was not scripted, permitting fellows to present the information as they chose. As fellows are less experienced educators, this likely introduced more variability but it was also included in the study design so that the results would be more applicable to current practice.

There are other limitations that may have influenced the results. First, no resident completed all 10 modules because not all disease processes were encountered during the rotation or because the fellow had to attend to other patients and was not available to teach. In designing the investigation, we expected all 10 topics to be discussed in the month-long rotation, and the test was constructed assuming this would be the case. It is possible that the failure to improve test scores reflected a lack of resident exposure to all the topics. However, there was no correlation between the number of ARS sessions in which residents participated and the difference between their pre- and post-rotation examination scores. Second, it may be that the test, which comprised only 25 questions, was not sufficiently sensitive to detect an increase in knowledge. Although more questions might have improved sensitivity, the added time to complete a longer examination might have discouraged participants from completing it. It is noteworthy that residents who rotated through the PICU in the first part of the year had lower quiz scores compared with those who rotated in the second half, but this difference was not found in the overall knowledge test, raising concerns about the sensitivity of the test. However, this might also reflect that the quiz questions were predominantly factual. Third, it is possible that the information communicated during rounds was not retained. Some residents were rounding after night call and may have been too fatigued to gain new knowledge. Fourth, subjects completed the tests online without proctoring and may not have paid full attention to the questions, negatively influencing the results. On a few occasions, fellows were unable to provide teaching because they had to provide urgent patient care. Fifth, although the group allocation was made before the study was initiated, it was not randomized. Finally, there are other factors that could have influenced the results, such as differences in teaching style and overall interest of the educator in teaching.

There was a small, statistically significant increase in the post-pre test scores in the controls but not in the ARS group. As the final test scores were the same in the two groups, this difference may be due to the controls starting at a slightly lower pre-test score. Alternatively, perhaps using ARS actually limited the amount of information that was conveyed and detracted from learning.

Utilization of ARS during lectures has been shown to improve learning.11–13 In a randomized study, Pradhan et al 11 showed that obstetrics and gynecology residents who were taught in an interactive lecture format had a 21% increase in their test scores following the learning session compared with those who were taught in the traditional lecture format. Rubio et al 13 reported that the residents who were taught using ARS in the classroom had significantly higher scores on their post-lecture test and on repeat examination 3 months later compared with controls. In their investigation, students completed a short ARS quiz during their 40-minute lecture and learning was reinforced with another quiz at the end of the session. Perhaps our results would have been different if the ARS quiz was given at the bedside immediately before the patient with the particular disease was discussed and a post-quiz administered after rounds were finished.

Fellows did not accurately predict resident performance and this did not improve over time. Even though they had completed a pediatric residency, fellows had limited knowledge about what residents actually knew about pediatric critical care. Recognizing this, fellows praised ARS as being helpful for their teaching and the majority wanted it to be incorporated into resident education. One fellow commented, “It was nice to have a subject and the specific points to teach. It can be difficult to know what to teach the residents.” Nonetheless, fellows were somewhat less enthusiastic about how ARS influenced rounds.

Developing the necessary skills to be an effective teacher is one of the sub-competencies identified by the Accreditation Council for Graduate Medical Education and the American Board of Pediatrics. 29 According to the Pediatric Milestones, a novice teacher is one who “demonstrates a completely teacher-centered approach; focused on what needs to be taught rather than the learning needs of the students” whereas an expert instructor is one who adapts “learner-centered approaches” and “assesses learner needs and incorporates them to advance learners . . .” 30 The inability of the fellows to accurately predict resident performance is consistent with a “teacher-centered” approach. Utilizing ARS in this fashion may help young educators advance their teaching skills, shifting from being “teacher-centered” to “learner-centered” by helping them provide teaching that is better suited to the student. As this was not the primary focus of the investigation, future studies will need to specifically evaluate how ARS influences the fellow and his or her teaching and also take into account the potential negative effect it may have on the flow of rounds.

Although we did not demonstrate an increase in knowledge with ARS, this does not necessarily mean that the intervention is without value. Although adequately powered for a large effect size, the group of learners subjected to ARS was small and may not be representative of other learners. Future studies should include a larger sample size with true randomization and across multiple residency programs. In addition, ARS should be evaluated for use across several rotations, not just in the PICU, as it is applicable to other rotations as well. Furthermore, utilization over an extended period would allow other assessment methods to be used and better gauge its value. Studies should also evaluate the impact on fellow teaching, perhaps by examining the trajectory of the fellow’s milestone rating for the teaching competency.

Conclusions

The use of an ARS smartphone app to improve resident education in the PICU did not result in a measurable increase in knowledge in this pilot study involving a single residency program. However, it was well received by both the residents and fellows, and using it may help young instructors provide more “learner-centered” teaching. Additional studies are needed to establish its efficacy in resident education, including areas outside of the PICU.

Footnotes

Funding:

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests:

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Author Contributions

All authors made substantial contributions to the design of the study and the acquisition of data, critically reviewed the manuscript and approved its submission.