
In recent years, the assessment and appraisal of new medicines by health technology assessment (HTA) agencies and payer bodies for the purpose of pricing and reimbursement has received considerable attention. At times, this attention has arisen from negative coverage decisions for some medicines, often treatments for high-burden diseases associated with significant mortality and morbidity. As a result, intense disputes over the allocation of resources often arise, usually involving patients, clinicians, and pharmaceutical manufacturers on one hand and HTA agencies, commissioners of care, and public decision makers on the other.

To a large extent, this conflict can be attributed to the methodologies currently used for the evaluation of new medicines, as they cannot adequately capture the different notions of value that arise from diverse perspectives.1 For example, although the QALY (quality-adjusted life-year) metric successfully combines two vital dimensions of health benefit, life expectancy and health-related quality of life (HRQoL), its sole and inflexible adoption in economic evaluations for HTA can at times be regarded as blunt and insufficient, not least because it does not reflect other important value dimensions of treatments.2,3 This lack of comprehensiveness has led decision makers to use additional parameters of value in an informal and nonsystematic manner, often diminishing transparency in the evaluation procedures,4 and the resulting inconsistencies in coverage threaten the credibility of decision outcomes and their acceptance or perceived fairness by the public.

More recently, a growing number of resource-conscious health care professionals have started to publicly oppose the use in clinical practice of highly expensive new drugs that are perceived to bring marginal added clinical benefit, on the grounds of poor value for money. Escalating drug prices have catalyzed the development of numerous “value frameworks” aiming to inform payers, clinicians, and patients in the assessment of new medicines for coverage and treatment selection decisions.5–8 Although this is an important step toward a more inclusive value-based assessment approach and should be supported,9 aspects of these frameworks might be based on weak or atheoretical methodologies and could result in misleading recommendations or decisions.10

Research into and development of alternative methodologies for assessing and appraising the value of new medicines could overcome such limitations, contributing toward a more complete framework for measuring value and making resource allocation and treatment decisions. Multiple criteria decision analysis (MCDA) has recently emerged as an alternative to traditional economic evaluation techniques in HTA, with the prospect of addressing some of their limitations,11–18 and also as a method for eliciting patient preferences and facilitating treatment selection.19–21 However, empirical evidence from real-world applications with HTA decision makers is very limited.

This is a proof-of-concept case study in an HTA context applying a recently developed value framework, the Advance Value Framework (AVF),22,23 to assess the value of treatment options for metastatic castrate-resistant prostate cancer (mCRPC) following second-line chemotherapy. It is the first of a series of case studies that test the AVF in practice by engaging with, and eliciting the preferences of, decision makers from national HTA agencies and payer bodies in Europe. It continues pilot work from an earlier case study on the assessment of drugs for metastatic colorectal cancer using value judgements from a range of stakeholders in the English setting.24 A facilitated workshop was organized in the form of a decision conference,25 adopting a facilitated decision analysis modelling approach26,27 and involving an expert panel of assessors from the Swedish Dental and Pharmaceutical Benefits Agency (Tandvårds- och läkemedelsförmånsverket [TLV]). Metastatic prostate cancer was chosen as the case study topic mainly because of its high disease severity and the availability of several expensive treatment options, which together present a clear decision problem. This indication has been a popular appraisal topic for numerous HTA agencies, including TLV in Sweden and the National Institute for Health and Care Excellence (NICE) in England.

The adaptation of the AVF for the metastatic prostate cancer setting is described in the Methods section and the value-based rankings of the treatment options in the Results section; limitations of the methodology and challenges of MCDA applications in HTA are presented in the Discussion section.

Methods

Methodological Framework

The AVF was applied for assessing the value of a set of drug treatments, similarly to a previous application for another oncology indication. 24 This methodology adopts an MCDA “clean-slate” approach for HTA 12 based on multi-attribute value theory (MAVT),28,29 with the aim to derive a comprehensive value function following five iterative phases: 1) problem structuring, 2) model building, 3) model assessment, 4) model appraisal, and 5) development of action plans. 23 The AVF was operationalized using the decision support system M-MACBETH, enabling the use of visual graphics to build a model of values, acting as a facilitation tool to inform both the design phases (1 and 2) and evaluation phases (3 and 4) of the methodological process. 30 Additional information on the methodological process can be found in the Online Supplementary Appendix.

Clinical Practice and Scope of the Exercise (Problem Structuring)

In 2004, clinical evidence demonstrated the survival benefit of docetaxel chemotherapy in combination with prednisolone for patients with castrate-resistant prostate cancer (CRPC), compared with mitoxantrone in combination with prednisolone.31,32 A fruitful period in prostate cancer drug development followed, with a number of new molecules, including abiraterone, cabazitaxel, and enzalutamide, demonstrating significant clinical benefit in patients previously treated with docetaxel.33–35 Cross-resistance appears to exist between abiraterone and enzalutamide, indicating that patients are unlikely to benefit from switching from one treatment to the other;36,37 on this basis, NICE recommends the use of only one of the two treatments, not both.38,39 Although specific patient subpopulations might have contraindications to one or more of the treatments, in most cases all three new and expensive drugs could be suitable for patients; the topic of CRPC treatment coverage is therefore a suitable decision context for applying the value framework.

The analysis presented here focuses on assessing the value of third-line treatments for mCRPC following prior docetaxel-containing (i.e., second line) chemotherapy, essentially adopting the clinical practice guidelines from the European Society for Medical Oncology (ESMO) and the scopes of the respective Technology Appraisals (TAs) for each technology by NICE and TLV. In doing so, the same clinical evidence from the corresponding TAs was used to inform the respective criteria of the value tree, but this was further supplemented by additional evidence for value concerns not explicitly addressed by NICE and TLV. The scope of NICE TA255 was adopted for the case of cabazitaxel in combination with prednisone, the scope of NICE TA259 and TLV TA4774/2014 was adopted for the case of abiraterone in combination with prednisone, whereas the scope of NICE TA316 and TLV TA2775/2013 was adopted for the case of enzalutamide.38–42

Adaptation of the Advance Value Tree for Metastatic Prostate Cancer (Model Building)

Selection of evaluation criteria benefitted from a hybrid approach, which essentially combined features from “value focused thinking” 43 and “alternative focused thinking.” 44 Initially, a top-down approach was adopted for the selection of a general set of evaluation criteria by decomposing the overall value concerns of HTA decision makers into subconcerns, which took place prior to the selection of any drug options; this was followed by the adaptation of the general criteria into disease-specific criteria, which took place after comparing the characteristics of the particular drugs evaluated, as part of a bottom-up approach. 23

More precisely, following a systematic literature review and expert consultation as part of the Advance-HTA project,4,i a generic value tree was developed outlining higher level criteria that were decomposed into lower level criteria for assessing the value of new medicines in the HTA context.22 The structure of this generic value tree, the Advance Value Tree, consists of five value domains or criteria clusters, aiming to reflect all the essential value attributes of new medicines in tandem with their indication under a prescriptive decision-aid approach. These are 1) burden of disease (BoD), 2) therapeutic impact (THE), 3) safety profile (SAF), 4) innovation level (INN), and 5) socioeconomic impact (SOC), with the first value domain relating to the disease of interest and the latter four domains relating to the actual medical intervention(s), producing the following value function:

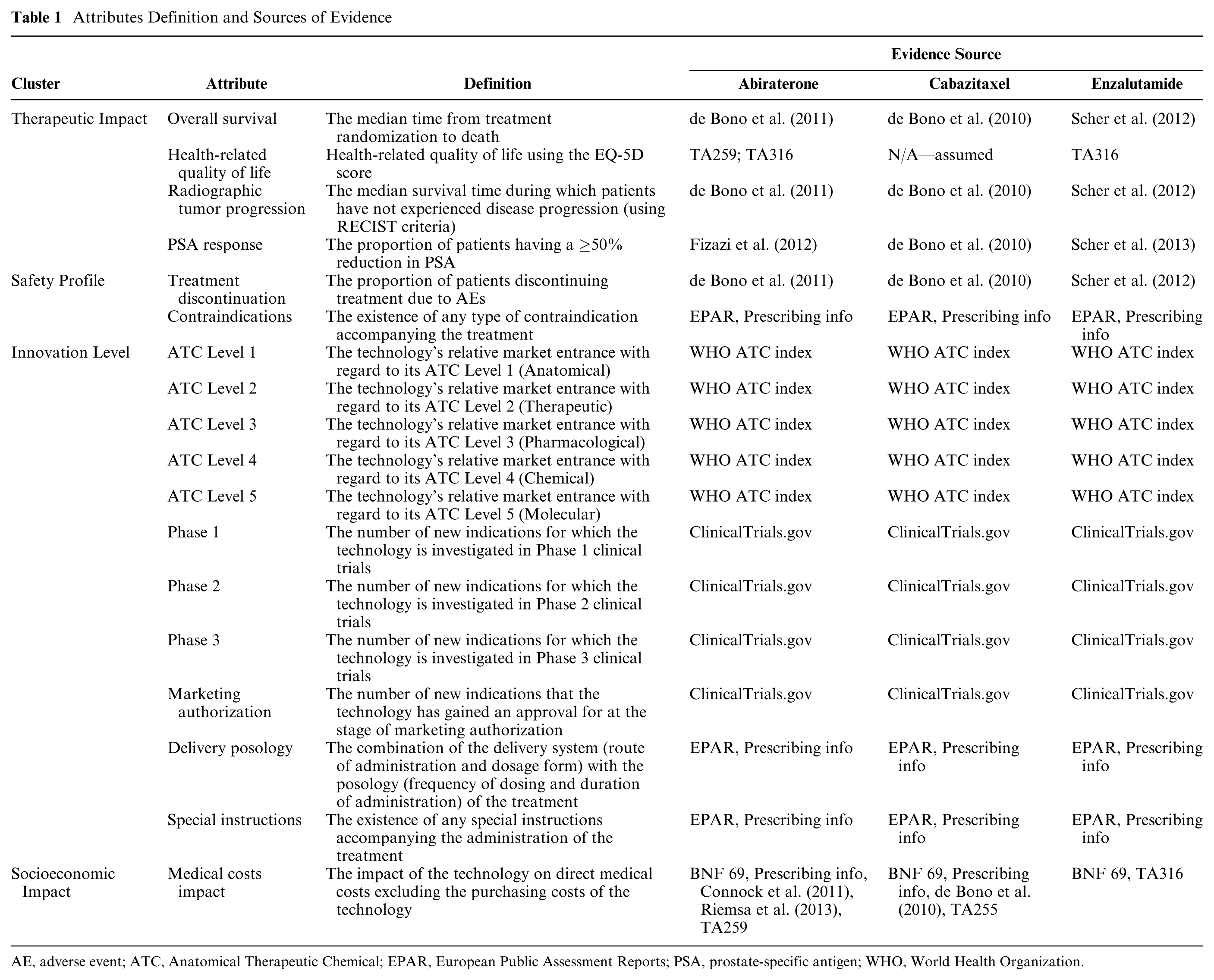

In consultation with a clinical oncologist, the generic value tree was then adapted for the case of mCRPC while striving to adhere to the ideal decision theory properties to ensure methodological robustness. As a result, a disease-specific value tree was produced with five criteria clusters decomposed into eight subcriteria clusters and a total of 18 emerging criteria attributes (Supplementary Figure A1 in the Online Appendix). The definitions of all criteria attributes are listed in Table 1.

Table 1. Attribute Definitions and Sources of Evidence

AE, adverse event; ATC, Anatomical Therapeutic Chemical; EPAR, European Public Assessment Reports; PSA, prostate-specific antigen; WHO, World Health Organization.

Subsequently, the value tree was validated with the decision conference participants as part of a more constructive decision-aid approach allowing for interactivity and enabling group learning. This “socio-technical” approach reflects another type of mixed approach adopted in the evaluation (i.e., values construction): model adaptation and structuring initially took place in consultation with a clinical expert, and the model was then collectively validated and evaluated with the group before the model building phase was completed.45

Evidence Considered and Alternative Treatments Compared (Model Building)

The alternative treatment options compared in the analysis were abiraterone (Zytiga) in combination with prednisone, cabazitaxel (Jevtana) in combination with prednisone, and enzalutamide (Xtandi) monotherapy.

The evidence sources used included the peer-reviewed publications of the pivotal clinical trials of the alternative treatment options that were considered in the appraisals of NICE and TLV,33–35,46 the NICE Evidence Review Group (ERG) reports47,48 or their relevant peer-reviewed publications,49,50 a Swedish population study on health-related quality of life,51 the Product Information sections of the European Public Assessment Reports (EPAR) from the European Medicines Agency (Annex I and III),52–54 the Anatomical Therapeutic Chemical (ATC) classification system indexes available through the portal of the WHO Collaborating Centre for Drug Statistics Methodology,55 the US National Library of Medicine database of clinical trials available through ClinicalTrials.gov,56 and the British National Formulary (BNF).57 The clinical evidence (falling under the THE and SAF criteria clusters) for each alternative treatment option was sourced from the same clinical studies that NICE and TLV evaluated (a single pivotal trial for each drug). The source of evidence used for identifying the performance of options across the evaluation criteria is shown in Table 1. Additional information on the evidence considered can be found in the Online Appendix.

Setting Attribute Ranges and Reference Levels (Model Building)

With regard to model building, the allowable range of performance across the different attributes (i.e., attribute ranges) was selected based on the performance of the alternative treatment options compared. Within the attribute ranges, “lower” (x_l) and “higher” (x_h) reference levels were defined to serve as benchmarks for preference value scores of 0 and 100, respectively, acting as value anchors for constructing criteria value functions and eliciting their relative weights.58,59,ii The “lower” reference levels denoted less preferred levels reflecting “satisfactory” performance, whereas the “higher” reference levels denoted more preferred levels reflecting “ideal” performance. The latter was defined by considering plausible changes in the best available performance, for example, by accounting for the characteristics of new products that might enter the market in the near future and outperform the current ones. The reference levels of the clinical attributes (under the THE and SAF value domains) were decided in consultation with a clinical expert (urologic oncologist). Depending on the criterion under consideration, the typical rationale adopted best supportive care (BSC) performance as the “satisfactory” reference level and a hypothetical performance 20% better than the best available option as the “ideal” reference level (or vice versa for negative attributes). The 20% hypothetical improvement was selected because it was deemed a realistically plausible clinical effect of forthcoming products.
By considering the performance of the best available option(s) among the treatments evaluated in the exercise and accounting for plausible performance improvement in the near future, the value scale reflected characteristics of both a “local” scale (benchmarks derived from the performance of the options evaluated, i.e., what is best available) and a “global” scale (benchmarks derived from the plausible performance of options not necessarily captured in the exercise, i.e., what is best plausible).60 Because the scale is anchored at v(x_l) = 0 and v(x_h) = 100, the value scores of options could in theory be negative or exceed 100; this is legitimate because the scores result from a linear transformation, which is admissible for an interval scale such as a value scale. “Lower” and “higher” reference levels for all attributes at the pre-workshop stage and the basis of their selection are outlined in Table A1 in the Online Appendix (assuming no impact of LHRH analogue). Additional information on the methodological basis adopted for setting the min-max attribute limits and reference levels is provided in the Online Appendix.
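The reference-level rule and the resulting anchored scale can be sketched as follows. This is a minimal illustration, not the author's procedure: it assumes a linear value function between the two anchors (in the exercise the value functions were elicited with MACBETH, although they turned out to be almost linear), and it reads “vice versa for negative attributes” as setting the ideal level 20% below the best available option. The function names and all numbers are hypothetical.

```python
def reference_levels(bsc, best_available, higher_is_better=True, improvement=0.20):
    """Return (x_l, x_h) under an assumed reading of the rule in the text.

    x_l: best supportive care (BSC) performance, the "satisfactory" anchor (score 0).
    x_h: best available performance improved by 20%, the "ideal" anchor (score 100).
    For attributes where lower is better (e.g. adverse event rates), the ideal
    level is assumed to be 20% below the best available option.
    """
    factor = 1 + improvement if higher_is_better else 1 - improvement
    return bsc, best_available * factor


def linear_value(x, x_l, x_h):
    """Interval-scale value with v(x_l) = 0 and v(x_h) = 100.

    Performance outside [x_l, x_h] is deliberately not clipped, so scores can
    fall below 0 or exceed 100. The same formula handles negative attributes,
    because then x_h < x_l.
    """
    return 100.0 * (x - x_l) / (x_h - x_l)


# Hypothetical overall survival data (months): BSC 10, best available option 15.
x_l, x_h = reference_levels(bsc=10.0, best_available=15.0)
print(x_l, x_h)                      # anchors at 10 and (about) 18 months
print(linear_value(12.0, x_l, x_h))  # inside the range
print(linear_value(19.0, x_l, x_h))  # beyond the "ideal" anchor: score above 100
print(linear_value(9.0, x_l, x_h))   # worse than the "satisfactory" anchor: negative
```

Because no clipping is applied, an option performing worse than the “satisfactory” anchor simply receives a negative score, which is how the negative treatment discontinuation scores reported in the Results can arise.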

Decision Conference (Model Assessment and Appraisal)

The model assessment and model appraisal phases of the exercise took place in a facilitated workshop with assessors from TLV, held as a decision conference at the head offices of TLV in Stockholm, Sweden. In total, four experts participated: one medical investigator, one pharmacist, and two health economists. Background material introducing the scope of the exercise in more detail was sent to the participants one week before the workshop.

The author acted as an impartial facilitator and assisted the group’s interaction and thinking about the decision problem, using the preliminary version of the mCRPC-specific value tree (Supplementary Figure A1 in the Online Appendix) and the relevant data as the model’s starting point, from which value judgements and preferences were elicited on the spot.27,61,62 On the day of the workshop, the preliminary model was validated with the participants by revising it cluster by cluster in real time through an open discussion, seeking group consensus through an iterative and interactive model-building process in which debate was encouraged and differences of opinion actively sought. More information on the decision conference is provided in the Online Appendix.

MCDA Technique (Model Assessment and Appraisal)

The AVF adopts a value measurement MCDA methodology using a simple additive (i.e., linear, weighted average) aggregation model that assumes preference independence between the different attributes, with the overall value V(a) of an option a defined by the following equation22:

$$V(a) = \sum_{i=1}^{m} w_i \, v_i(a), \qquad \sum_{i=1}^{m} w_i = 1, \quad w_i > 0,$$

where m is the number of evaluation criteria, w_i v_i(a) is the weighted partial value of option a on evaluation criterion i, and V(·) is a multi-attribute value function based on value theory principles.29
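The additive model V(a) = Σ w_i v_i(a) can be sketched in a few lines of Python. This is an illustrative implementation, not M-MACBETH's: the function name and the three-criterion example are hypothetical, and weights are taken as fractions summing to 1 (so a weight reported as 21.2 out of 100 enters as 0.212). The last line checks one partial weighted value against figures reported in the Results.

```python
def overall_value(weights, scores, tol=1e-9):
    """Simple additive MAVT model: V(a) = sum_i w_i * v_i(a).

    weights: {criterion: w_i}, non-negative and summing to 1.
    scores:  {criterion: v_i(a)} for a single option a, on scales anchored at
             0 ("lower" reference level) and 100 ("higher" reference level).
    """
    if set(weights) != set(scores):
        raise ValueError("each criterion needs both a weight and a score")
    if any(w < 0 for w in weights.values()):
        raise ValueError("weights must be non-negative")
    if abs(sum(weights.values()) - 1.0) > tol:
        raise ValueError("weights must sum to 1")
    return sum(w * scores[c] for c, w in weights.items())


# Hypothetical three-criterion example: 0.5*80 + 0.3*(-20) + 0.2*100.
weights = {"OS": 0.5, "AEs": 0.3, "HRQoL": 0.2}
option = {"OS": 80.0, "AEs": -20.0, "HRQoL": 100.0}
print(overall_value(weights, option))

# One partial weighted value using figures from the Results:
# Treatment Discontinuation weight 21.2/100 and abiraterone score -95.3.
print(round(0.212 * -95.3, 1))  # -20.2
```

Negative partial scores are allowed by design: an option performing worse than the “lower” reference level on a criterion loses overall value in proportion to that criterion's weight.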

Each attribute’s value function maps performance along the attribute range onto a value scale. These functions were elicited from the workshop participants using MACBETH (Measuring Attractiveness by a Categorical Based Evaluation Technique), which is based on MAVT.28 This is a pairwise qualitative comparison approach in which judgements about the difference in value between pairs of attribute levels (e.g., the difference in value between 3 and 6 months of overall survival) are expressed using seven categories (no difference, very weak difference, weak difference, moderate difference, strong difference, very strong difference, or extreme difference in value).63,64 By requiring only such semantic judgements, which are then converted into a cardinal (interval) value scale by a mathematical algorithm with a set of decision rules, MACBETH provides a simple, interactive, and constructive approach, with various real-world applications illustrating its usefulness as a decision support tool65–68; the mathematical foundations of MACBETH have been extensively described.69 A cardinal scale conveys information about the differences between its levels, in contrast to an ordinal scale, which supports only a ranking of its levels and does not allow arithmetic operations. An indirect (qualitative) swing weighting technique based on MACBETH value judgements was applied to elicit relative criteria weights,64 given that asking directly about the importance of a criterion without taking its attribute range into account is known to be one of the most common mistakes in making value trade-offs.70
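The idea behind swing weighting can be illustrated with its simplest direct-rating variant, which is not the qualitative MACBETH protocol used in the workshop: the swing from the “lower” to the “higher” reference level on each criterion is rated against the most valued swing (conventionally 100), and the ratings are normalized into weights. The criterion names and ratings below are hypothetical.

```python
def swing_weights(swing_ratings):
    """Normalize swing ratings into weights that sum to 1.

    swing_ratings: {criterion: rating}, where each rating expresses how much the
    swing from x_l to x_h on that criterion is worth relative to the most valued
    swing (rated 100). Because the swings are defined by the reference levels,
    the resulting weights depend on the attribute ranges by construction.
    """
    if any(r < 0 for r in swing_ratings.values()):
        raise ValueError("ratings must be non-negative")
    total = sum(swing_ratings.values())
    if total == 0:
        raise ValueError("at least one swing must have positive value")
    return {c: r / total for c, r in swing_ratings.items()}


# Hypothetical ratings: the OS swing is most valued; others are judged relative to it.
weights = swing_weights({"OS": 100, "Discontinuation": 90, "HRQoL": 65})
for criterion, w in weights.items():
    print(f"{criterion}: {w:.3f}")
```

Narrowing a criterion's reference-level range shrinks the swing being judged, so its rating, and therefore its weight, should fall. This is why importance questions that ignore attribute ranges are discouraged.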

In turn, the M-MACBETH software was used as a decision support system to construct the value tree, elicit criteria value functions (through which options were scored), assign relative criteria weights through the MACBETH qualitative swing weighting protocol, combine preference value scores and weights by additive aggregation to derive overall weighted preference value (WPV) scores, and perform sensitivity analysis on criteria weights.58,71 The software automatically checks the consistency of the qualitative judgements expressed; in addition, the author manually performed a second consistency check to validate the cardinality, i.e., the interval nature, of the emerging value scale. This was done by comparing the sizes of the intervals between the proposed scores and inviting participants to adjust them if necessary,72 because an interval value scale is essential for the application of simple additive value models. More information regarding the technical details of MACBETH is available in the Online Appendix.
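M-MACBETH's own consistency test relies on linear programming; the sketch below implements only a much weaker necessary condition, and does not replace either that test or the manual cardinality check. Assuming attribute levels are indexed from most to least attractive, the judged difference between two levels that enclose a third cannot be smaller than either of the enclosed differences. The integer encoding of the seven categories (0 to 6) and the function name are illustrative assumptions.

```python
CATEGORIES = ["no", "very weak", "weak", "moderate", "strong", "very strong", "extreme"]


def nested_pair_violations(judgements):
    """Return triples (i, j, k), i < j < k, that break a necessary consistency rule.

    judgements: {(i, j): c} for every pair i < j, where level i is at least as
    attractive as level j and c indexes CATEGORIES (the judged difference).
    Since v(i) - v(k) = (v(i) - v(j)) + (v(j) - v(k)), the difference for the
    outer pair (i, k) must not be judged smaller than either inner difference.
    """
    n = 1 + max(j for _, j in judgements)
    violations = []
    for i in range(n):
        for j in range(i + 1, n):
            for k in range(j + 1, n):
                outer = judgements[(i, k)]
                if outer < judgements[(i, j)] or outer < judgements[(j, k)]:
                    violations.append((i, j, k))
    return violations


# Hypothetical judgements on three overall survival levels (best to worst).
consistent = {(0, 1): 2, (1, 2): 3, (0, 2): 4}
inconsistent = {(0, 1): 2, (1, 2): 3, (0, 2): 2}  # whole span "weak", a part "moderate"
print(nested_pair_violations(consistent))
print(nested_pair_violations(inconsistent))
```

A matrix that passes this check can still be inconsistent in the cardinal sense, which is why a full linear-programming test, followed by review of the proposed interval sizes, is still needed.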

Costs Calculation (Model Assessment and Appraisal)

Drug costs were calculated according to UK prices (excluding VAT), pack sizes, and dosage strengths as found in the BNF 57 ; the recommended dosages and treatment durations were taken from the pivotal trial for the case of cabazitaxel, 33 the respective NICE technology appraisal for the case of enzalutamide, 39 and the labelling and package leaflet (EPAR—Annex III) for the case of abiraterone. 54 Vial wastage was assumed in all calculations. Drug administration costs for cabazitaxel were kept consistent with the respective NICE TA. 40 The UK perspective was adopted as a neutral benchmark, partially because of the readily available data and primarily in order to allow the measurement of cost(s) in a common unit across a series of similar case studies in different European countries, so that overall WPV scores can then be viewed against the same cost denominator to produce comparable cost-value ratios.
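The vial wastage assumption can be made concrete with a small costing function. This is a hypothetical sketch: the dosing, body surface area, vial size, price, and cycle count are invented for illustration and are not the BNF figures used in the study. Under wastage, every dose is costed in whole vials and any unused remainder is discarded.

```python
import math


def iv_course_cost(dose_mg_per_m2, bsa_m2, vial_mg, vial_price, cycles,
                   admin_cost_per_cycle=0.0, vial_wastage=True):
    """Course cost of an IV drug dosed by body surface area (illustrative only).

    With vial_wastage=True, the number of vials per dose is rounded up and the
    unused remainder is discarded; otherwise only the milligrams used are costed.
    Administration costs are added per cycle.
    """
    dose_mg = dose_mg_per_m2 * bsa_m2
    if vial_wastage:
        drug_cost_per_cycle = math.ceil(dose_mg / vial_mg) * vial_price
    else:
        drug_cost_per_cycle = dose_mg / vial_mg * vial_price
    return cycles * (drug_cost_per_cycle + admin_cost_per_cycle)


# Hypothetical inputs: 20 mg/m2 at BSA 2.1 m2 gives a 42 mg dose, supplied in 40 mg vials.
print(iv_course_cost(20, 2.1, 40, 1000.0, cycles=6))                      # two vials per cycle
print(iv_course_cost(20, 2.1, 40, 1000.0, cycles=6, vial_wastage=False))  # 42/40 of a vial
```

Assuming wastage is the conservative choice for payers: a dose just above a vial size almost doubles the drug cost per cycle.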

Results

Criteria Validation and Amended Value Tree for Metastatic Prostate Cancer

Overall, consensus was reached in terms of criteria inclusion and exclusion, with no criteria considered to be missing. The final version of the value tree, as it emerged from the open discussion with the workshop participants, is shown in Figure 1. In total, 10 of the 18 attributes were removed from the value tree because the participants judged them to be nonfundamental for the scope of the exercise. Most of these attributes were part of the innovation level cluster, with all attributes relating to the ATC classification system (five attributes) and spillover effects (four attributes) being excluded. The only other attribute removed was the PSA (prostate-specific antigen) response attribute of the therapeutic impact cluster, because it was thought to be redundant, adding no value beyond the remaining therapeutic attributes.

Figure 1. Final value tree for metastatic prostate cancer (post-workshop). Image produced using the M-MACBETH (beta) software, version 3.0.0.

An example of a MACBETH value judgement matrix, its conversion into a value scale, and the resulting scoring of the alternative treatments for the Overall Survival (OS) criterion is described in the Online Appendix. Effectively, all attributes produced almost linear value scales, whether based on quantitative or qualitative performance levels.

Options Performance, Criteria Weights, and Overall Preference Value Rankings

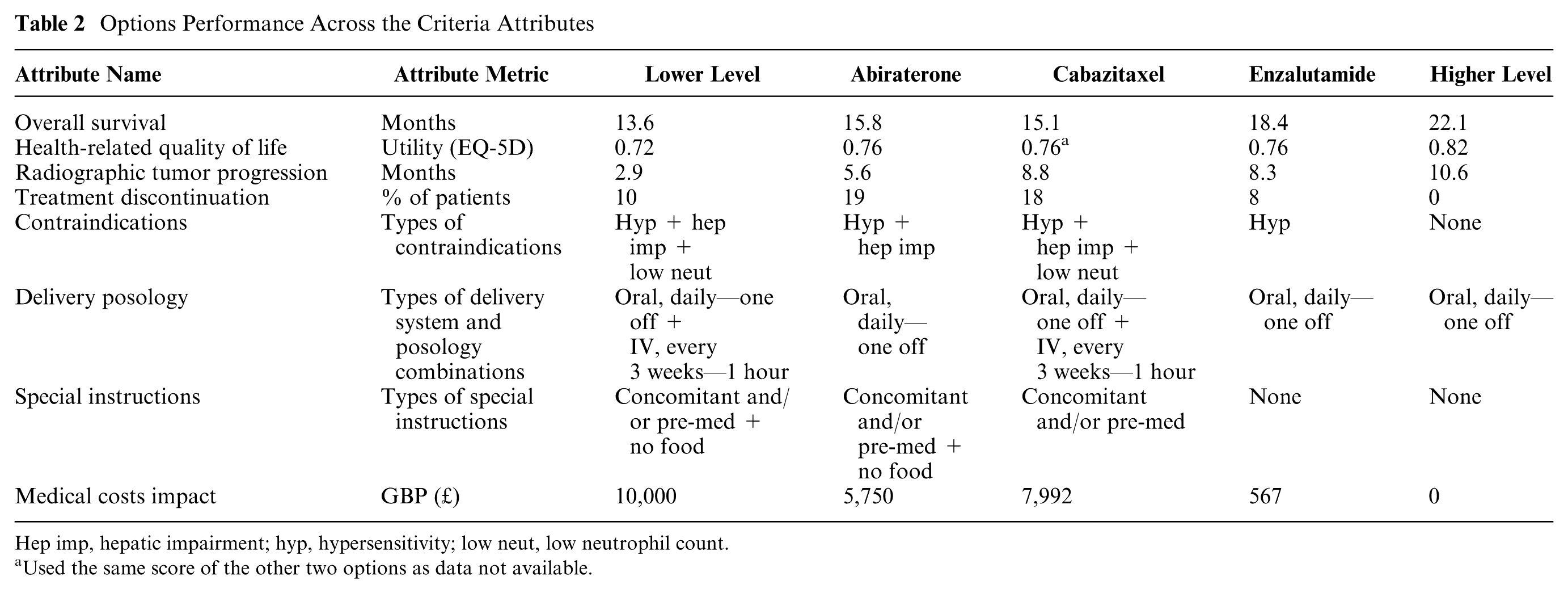

The performance of the options across the different attributes together with the “lower” and “higher” reference levels are shown in Table 2.

Table 2. Options’ Performance Across the Criteria Attributes

Hep imp, hepatic impairment; hyp, hypersensitivity; low neut, low neutrophil count.

The same score as for the other two options was used because data were not available.

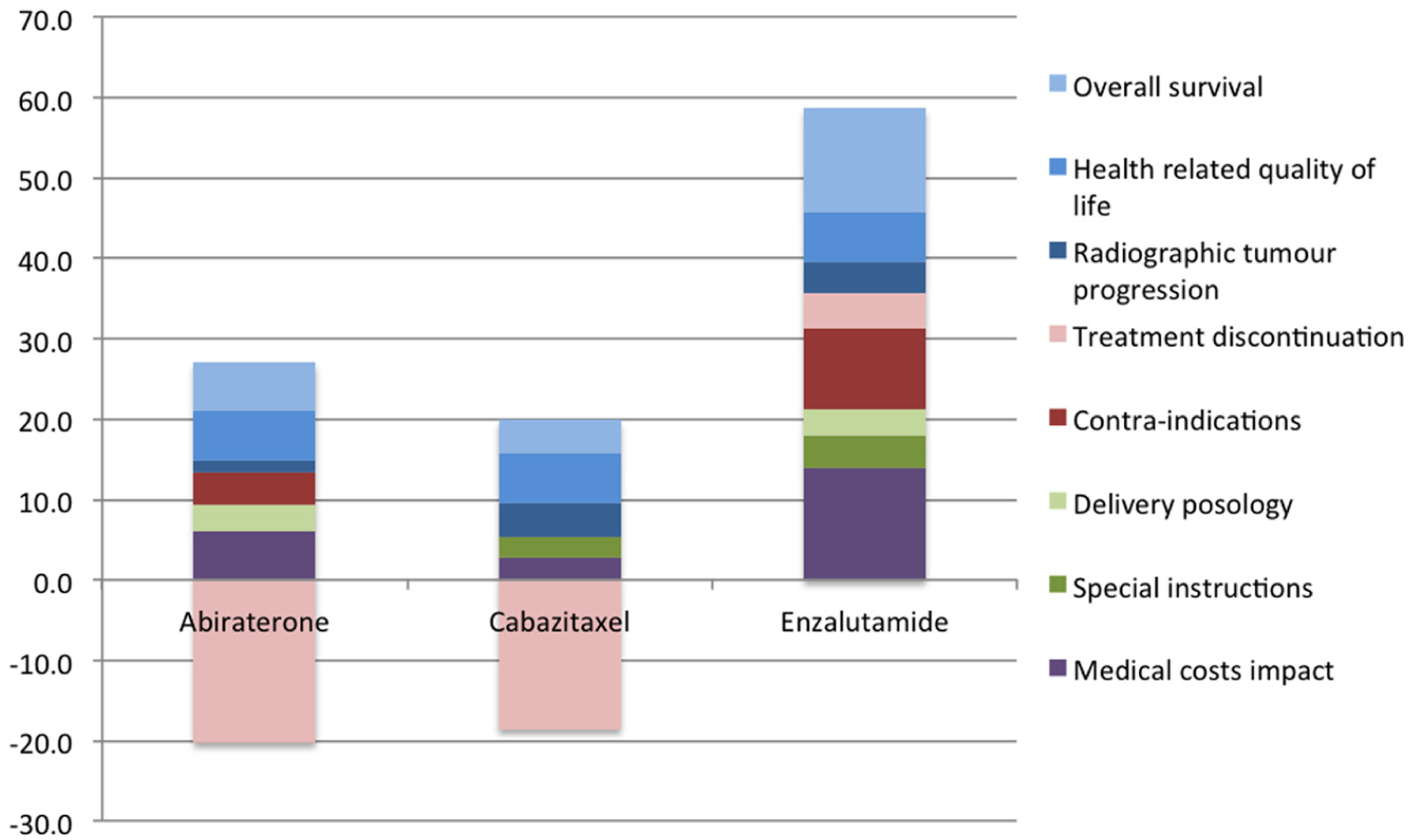

The overall WPV scores of the options and their breakdown into partial value scores across the different criteria attributes, together with the respective weights, are shown in Table 3. Enzalutamide achieved the highest overall WPV score (58.7). Abiraterone and cabazitaxel produced overall WPV scores of 6.9 and 1.4, respectively, partly because of their performance on the treatment discontinuation attribute (19% and 18%, respectively), which was worse than the lower reference level of the scale (10%). This resulted in negative preference value scores of −95.3 and −87.5, respectively, and WPV contributions of −20.2 and −18.6, respectively. A stacked bar plot of the WPV scores of the alternative treatments across the attributes is shown in Figure 2.

Table 3. Overall Weighted Preference Value (WPV) Scores, Partial Preference Value Scores, Relative Weights, Costs, and Cost per Unit of Value

Figure 2. Stacked bar plot of treatments’ weighted preference value (WPV) scores across all attributes.

The relative weights assigned to the different attributes are shown in Figure 3. Taking into account the “lower” to “higher” ranges of the attributes, the greatest relative weights were assigned to Overall Survival, Treatment Discontinuation, and Health-Related Quality of Life (23, 21, and 15 out of 100, respectively), which together accounted for approximately 60% of the total. With regard to the total weights across the criteria clusters, the Therapeutic Impact cluster totaled a relative weight of 44 (three attributes), the Safety Profile cluster 33 (two attributes), the Socioeconomic Impact cluster 15 (single attribute), and the Innovation Level cluster 7 (two attributes), out of 100.

Figure 3. Relative criteria weights (stacked bar).

Value for Money Analysis

Using rounded-up cost figures of £24,600 for enzalutamide, £21,900 for abiraterone, and £23,900 for cabazitaxel (£22,190 drug cost and £1,710 administration cost) and dividing them by the overall WPV scores, the costs per MCDA value unit were calculated to be £419, £3,173, and £17,509, respectively (Table 3). Therefore, in terms of value for money, cabazitaxel is dominated by abiraterone and comes very close to being dominated by enzalutamide (£500 difference based on the prices used). Enzalutamide, on the other hand, is associated with a higher cost (£2,500 difference) and a higher overall WPV score (51.8 difference) than abiraterone. The overall WPV scores of the alternative treatments versus their costs (purchase costs plus any administration costs) are shown as a cost-benefit plot in Figure 4.
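The cost-per-value ratios and the dominance relation can be reproduced in a few lines. The sketch uses the rounded costs and overall WPV scores quoted in the text; the function names are illustrative. With these rounded inputs the enzalutamide ratio matches the reported £419, whereas the other two come out at about £3,174 and £17,071 rather than the £3,173 and £17,509 in Table 3, presumably because the published ratios were computed from unrounded scores (a denominator as small as 1.4 makes the ratio very sensitive to rounding).

```python
def cost_per_value(cost, wpv):
    """Cost per unit of MCDA value; only meaningful for a positive overall WPV."""
    if wpv <= 0:
        raise ValueError("cost per value unit is undefined for non-positive WPV")
    return cost / wpv


def dominated(options):
    """Options beaten by another on cost (<=) and value (>=), strictly on at least one."""
    out = set()
    for a, (cost_a, v_a) in options.items():
        for b, (cost_b, v_b) in options.items():
            if a != b and cost_b <= cost_a and v_b >= v_a and (cost_b < cost_a or v_b > v_a):
                out.add(a)
    return out


# Rounded costs (GBP) and overall WPV scores as reported in the text.
options = {
    "enzalutamide": (24_600, 58.7),
    "abiraterone": (21_900, 6.9),
    "cabazitaxel": (23_900, 1.4),
}
for name, (cost, wpv) in options.items():
    print(f"{name}: £{cost_per_value(cost, wpv):,.0f} per value unit")
print(dominated(options))  # {'cabazitaxel'}
```

Abiraterone is both cheaper and more valuable than cabazitaxel, so cabazitaxel is dominated. Enzalutamide is not dominated because it buys its higher value at a higher cost.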

Figure 4. Cost-benefit plot of overall weighted preference value (WPV) scores versus costs. Image produced using the M-MACBETH (beta) software, version 3.0.0.

Sensitivity and Robustness Analysis

Parameter uncertainty relating to the estimation of weights was addressed by conducting deterministic sensitivity analysis. At the end of the workshop, the impact of changes in the baseline weights on the options’ rankings was explored using one-way sensitivity analysis. For cabazitaxel to rank above abiraterone, the relative weight of PFS would have to change from 5.8 to 15.6, that of Treatment Discontinuation from 21.2 to 53.9, or that of Special Instructions from 4.1 to 12.2. For cabazitaxel to rank above enzalutamide, the relative weight of PFS would have to change from 5.8 to more than 89.5. Under no scenario could abiraterone have been ranked above enzalutamide. The conclusions were therefore robust, as the ranking of the treatments was not sensitive to single variations of up to at least 100% along the attribute weight ranges. The robustness of the results was also validated by conducting a seven-way sensitivity analysis on the reference levels of the quantitative attributes, using the respective function of the M-MACBETH software (“Robustness analysis”). A simultaneous change of up to ±10% across all of the attribute reference levels would not affect the ranking of the alternative treatments; in other words, a change of 20 points on the value scale between the highest and the lowest scores for every attribute (i.e., the allowable range of eligible scores) did not affect the treatments’ ranking. In terms of weighting, a simultaneous change of ±3% would be needed for a change in the rankings of the options to be observed (cabazitaxel becoming second and abiraterone third), with no change in the best-ranked option plausible.
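A one-way weight sensitivity analysis of this kind has a closed form if one assumes, as is common in such tools but not stated in the text, that when one weight changes the remaining weights are rescaled proportionally so that all weights still sum to 1. Each option's overall value is then linear in the varied weight, and the weight at which two options swap ranks can be solved directly. The function name and the two-criterion data are hypothetical.

```python
def crossover_weight(weights, scores_a, scores_b, criterion, tol=1e-12):
    """Weight on `criterion` at which options a and b tie, or None if no tie in [0, 1].

    With the other weights rescaled proportionally, for a weight w:
        V(w) = w * s_c + (1 - w) * R,   R = sum_{j != c} w_j * s_j / (1 - w_c),
    which is linear in w. Solving V_a(w) = V_b(w) gives w* = dR / (dR - ds).
    """
    w0 = weights[criterion]
    if not 0.0 < w0 < 1.0:
        raise ValueError("baseline weight must lie strictly between 0 and 1")

    def rest(scores):
        # Value from all other criteria, renormalized to weights summing to 1.
        return sum(w * scores[c] for c, w in weights.items() if c != criterion) / (1.0 - w0)

    d_s = scores_a[criterion] - scores_b[criterion]
    d_r = rest(scores_a) - rest(scores_b)
    if abs(d_r - d_s) < tol:
        return None  # parallel lines: the pair never swaps (or is always tied)
    w_star = d_r / (d_r - d_s)
    return w_star if 0.0 <= w_star <= 1.0 else None


# Hypothetical two-criterion example: X leads on "A", Y leads on "B".
weights = {"A": 0.6, "B": 0.4}
x = {"A": 100.0, "B": 0.0}
y = {"A": 0.0, "B": 100.0}
print(crossover_weight(weights, x, y, "A"))  # X and Y tie when w_A falls to 0.5
```

A result of None means that no admissible weight on that criterion reverses the pair, which corresponds to the statement above that under no scenario could abiraterone have been ranked above enzalutamide.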

Other types of uncertainty also exist, including stochastic uncertainty, structural uncertainty, and heterogeneity, which could be addressed with other approaches (or combinations thereof), such as probabilistic sensitivity analysis, Bayesian frameworks, fuzzy set theory, or grey theory.73 For instance, if significant uncertainty exists with regard to the options’ performance due to sampling variation in clinical trials, or with regard to criteria weights due to a failure to agree on them, the use of point estimates might be inappropriate, in which case stochastic multi-criteria acceptability analysis (SMAA) could be used.74
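The core idea of SMAA can be illustrated with a Monte Carlo estimate of rank acceptability indices, that is, the share of plausible weight vectors under which each option attains each rank. This sketch covers weight uncertainty only: the partial value scores are held fixed, whereas a full SMAA would also sample performance. Weights are drawn uniformly from the simplex by normalizing independent Exponential(1) draws, which is equivalent to a flat Dirichlet distribution. The function name and scores are hypothetical.

```python
import random


def rank_acceptability(scores, n_samples=10_000, seed=0):
    """Monte Carlo rank acceptability indices under weight uncertainty only.

    scores: {option: [v_1, ..., v_m]} partial value scores (held fixed).
    Returns {option: [P(rank 1), ..., P(rank n)]}.
    """
    rng = random.Random(seed)
    options = list(scores)
    m = len(scores[options[0]])
    counts = {o: [0] * len(options) for o in options}
    for _ in range(n_samples):
        # Uniform weights on the simplex: normalized Exponential(1) draws.
        draws = [rng.expovariate(1.0) for _ in range(m)]
        total = sum(draws)
        w = [d / total for d in draws]
        value = {o: sum(wi * vi for wi, vi in zip(w, scores[o])) for o in options}
        for rank, o in enumerate(sorted(options, key=value.get, reverse=True)):
            counts[o][rank] += 1
    return {o: [c / n_samples for c in counts[o]] for o in options}


# Hypothetical partial value scores on two criteria for three options.
result = rank_acceptability({"A": [90.0, 10.0], "B": [40.0, 60.0], "C": [20.0, 30.0]})
for option, acc in result.items():
    print(option, [round(p, 2) for p in acc])
```

When the group can agree on partial constraints, for example that one criterion outweighs another, the sampler would instead draw only weight vectors satisfying those constraints.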

Discussion

This case study demonstrates the application of a new value framework, the AVF,22 adopting an MCDA methodological process in practice23 for a set of alternative mCRPC treatments from the perspective of an HTA agency. Generally, MCDA methods could be applied in the context of HTA in two different ways, either as part of a supplementary/incremental approach or as a pure/clean-slate approach.12,23 The former could in theory be used in combination with economic evaluation techniques to adjust the incremental cost-effectiveness ratio (ICER) through the incorporation of additional parameters of value (or benefit criteria), essentially “tweaking” the QALY or any other relevant outcome measure. The latter refers to deriving value anew, not necessarily involving the QALY or any other value metric from an economic evaluation. The current application of the AVF in this case study focuses on the latter.

In terms of the design, implementation, and review of the analysis, the process adopted is effectively aligned with the recent ISPOR good practice guidelines on the use of MCDA for health care decisions.75 Through a decision conference, the alternative options were assessed and ranked based on their overall WPV scores, which reflect their performance against a set of evaluation criteria weighted for relative importance according to the preferences of the group, yielding a holistic benefit component. In doing so, MACBETH was used as an MCDA modelling technique to operationalize the AVF in terms of scoring the treatment options and weighting the relative importance of the evaluation criteria. Subsequent consideration of drug costs (purchase and administration costs) enabled the estimation of “cost per unit of value” ratios, which showed that the third-ranked option (cabazitaxel) was dominated by the second-ranked option (abiraterone). Assuming well-defined budgets, this approach could be used to derive MCDA-based cost-benefit ratios, prioritizing the most efficient funding options, those with the lowest cost-benefit ratios, until the available budget is exhausted.76
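The budget rule described above can be sketched as a simple league table. This is a hypothetical illustration: the option names, total costs, overall WPV scores, and budget are invented, and the rule stops at the first option that no longer fits, which is one literal reading of funding “until the available budget is exhausted”.

```python
def prioritise(options, budget):
    """Fund options in ascending order of cost per value unit until the budget runs out.

    options: {name: (total_cost, overall_wpv)}. Options with a non-positive WPV
    are excluded, since a cost-per-value ratio is not meaningful for them.
    Stops at the first option that does not fit in the remaining budget.
    """
    eligible = [n for n, (_, v) in options.items() if v > 0]
    ranked = sorted(eligible, key=lambda n: options[n][0] / options[n][1])
    funded, remaining = [], budget
    for name in ranked:
        cost = options[name][0]
        if cost > remaining:
            break
        funded.append(name)
        remaining -= cost
    return funded, remaining


# Hypothetical programmes: cost per value unit is 10 for P, 15 for Q, and 25 for R.
print(prioritise({"P": (100, 10.0), "Q": (300, 20.0), "R": (50, 2.0)}, budget=420))
```

Other rules are possible: skipping an option that does not fit and continuing down the list, or solving a knapsack problem, can extract more total value from the same budget.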

The TLV assessors who participated in the workshop gave positive feedback about the potential usefulness of the value framework as a decision support tool in the evaluation of new medicines. The main advantages cited were the ability to assess transparently the performance of the options across a number of explicit evaluation criteria while eliciting the value trade-offs between them (i.e., their relative importance), as well as the framework’s overall facilitative nature in constructing and analyzing the group’s value preferences.

It should be highlighted that the decision context addressed in this case study is a one-off evaluation problem within a particular disease indication, in contrast to the typical remit of TLV, which involves repeated decisions on the reimbursement of drugs across different disease areas, considering their opportunity cost through the use of flexible or adjusted willingness-to-pay thresholds. Consequently, even the most valuable or efficient treatment in the exercise could prove to be non–cost-effective on traditional economic evaluation grounds. This is further supported by the fact that the breadth of the value model captured aspects that go beyond the formal remit of TLV. Therefore, the results aim to illustrate the methodology’s feasibility rather than to inform policy making.

Even in the more restricted context of feasibility testing, the findings should be interpreted in light of the specified MCDA characteristics of the exercise, namely, the particular decision context defined and the value model developed. The latter consists of the alternative options assessed, the evaluation criteria incorporated, the relevant value scales defined, the respective value functions elicited, and the relative weights elicited. Although more challenging, the value framework could also be applied at broader inter-indication levels, assessing treatments for different indications; this might require more generic clinical evaluation criteria and fewer disease-specific endpoints, for example, the QALY, which allows the comparison of health gain across different populations.

The spillover effect attributes under the innovation level cluster were removed on the grounds that they “currently go beyond the agency’s remit” and therefore should not be considered in the first place. However, the view was expressed that in real practice this information could act as a supplementary type of evidence. It would not influence the coverage of the respective treatments, but it could serve other secondary purposes, for example, awarding reimbursement extensions or internally communicating possible new indications in the near future.

The following section discusses some limitations of the case study and then focuses on challenges faced by MCDA applications in HTA settings and how they could be addressed. A more extensive discussion of the strengths and opportunities possibly offered by the AVF is provided elsewhere, covering practice and policy implications as part of a recent case study on another oncology indication 24 and a more theoretical perspective. 22

Limitations of the Case Study

The results from the case study should be interpreted with caution. They are the outcome of a simulation exercise that aimed to test the AVF in practice by illustrating its application, rather than to inform real coverage decision making with respect to the particular drugs under consideration. No head-to-head clinical trials directly compared all treatments of interest, and relative treatment effects were lacking across the clinical attributes of interest. Therefore, un-synthesized evidence from the respective single pivotal trials of the alternative treatments was used. However, using un-synthesized evidence from different clinical trials to directly compare alternative treatments is not accurate, even if the patient populations across the different trials are similar (in terms of disease severity and treatment history). Ideally, an indirect treatment comparison should be conducted first, using the relative effects of each of two treatments versus a common comparator to estimate their effect relative to each other. Alternatively, a network meta-analysis could combine the direct and indirect evidence available through a mixed treatment comparison. 77 Therefore, an important limitation of this study is the lack of relative effects in the clinical evidence, together with the use of absolute effects from different clinical trials under the assumption that they can be directly compared. In real-world evaluations, evidence synthesis would need to take place together with evidence collection as part of the model building phase. Examples include the application of an SMAA approach to assess the comparative benefit-risk of alternative statins in primary prevention, 19 using comparative effects from three meta-analyses,78–80 and the combination of SMAA with a network meta-analysis to assess the comparative benefit-risk of second-generation antidepressants. 81
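The common-comparator approach mentioned above can be illustrated with one widely used method, the Bucher adjusted indirect comparison, sketched below in Python for a time-to-event outcome. It is not the approach used in this case study. The sketch assumes that treatments A and B were each compared with the same comparator C in separate trials, that effects are reported as hazard ratios with 95% confidence intervals, and that the trials are similar in potential effect modifiers. The function name and all input figures are hypothetical.

```python
import math

Z_95 = 1.959963984540054  # standard normal quantile for a two-sided 95% interval


def bucher_indirect(hr_ac, ci_ac, hr_bc, ci_bc):
    """Adjusted indirect comparison of A vs B through a common comparator C (hypothetical helper).

    hr_ac, hr_bc: hazard ratios of A vs C and of B vs C.
    ci_ac, ci_bc: (lower, upper) 95% confidence limits of those hazard ratios.
    Returns the indirect hazard ratio of A vs B and its 95% confidence interval.
    """
    # Relative effects are combined on the log scale.
    log_ac, log_bc = math.log(hr_ac), math.log(hr_bc)
    # Back out standard errors from the width of each 95% CI on the log scale.
    se_ac = (math.log(ci_ac[1]) - math.log(ci_ac[0])) / (2 * Z_95)
    se_bc = (math.log(ci_bc[1]) - math.log(ci_bc[0])) / (2 * Z_95)
    # The common comparator C cancels out: log HR(A vs B) = log HR(A vs C) - log HR(B vs C).
    log_ab = log_ac - log_bc
    # Variances add because the two trials are independent.
    se_ab = math.sqrt(se_ac ** 2 + se_bc ** 2)
    ci_ab = (math.exp(log_ab - Z_95 * se_ab), math.exp(log_ab + Z_95 * se_ab))
    return math.exp(log_ab), ci_ab


# Hypothetical figures, not taken from any of the trials discussed here.
hr_ab, ci_ab = bucher_indirect(0.70, (0.60, 0.82), 0.80, (0.68, 0.94))
print(f"HR A vs B = {hr_ab:.3f}, 95% CI {ci_ab[0]:.3f} to {ci_ab[1]:.3f}")
```

The indirect estimate is less precise than either trial-level estimate because the two variances add.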

The relatively small number of participants could be perceived as another limitation, because it may not adequately represent the range of perspectives needed to inform policy making. However, capturing an all-round set of preferences was not among the primary aims of the exercise. Ideally, a group size of 7 to 15 participants should be aimed for. Groups of this size allow efficient group processes to emerge while preserving individuality, as they are large enough to represent all major perspectives but small enough to work toward agreement. 26 It should also be noted that in reality final TLV decisions are made by a separate expert board/committee within the agency (the Pharmaceuticals Benefit Board), which consists of only seven members and whose overall expertise is well aligned with that of the exercise participants. 82

Another issue was that only the HRQoL of the stable disease state was assessed, because none of the treatments was assumed to have any effect during the progressive disease state. 39 In other disease indications this might not hold true. In that case, the relevant HRQoL attribute would need to capture both the stable and progressive disease states, possibly in the form of an aggregated OS-HRQoL attribute (given their possible preference dependency, discussed below), thereby producing a metric similar to QALYs.
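The arithmetic behind such a QALY-like aggregate is straightforward: the time spent in each health state is weighted by the HRQoL (utility) of that state, and the results are summed. Below is a minimal, undiscounted Python sketch. The function name, durations, and utility values are hypothetical and are not the case study's data.

```python
def qalys(durations_years, utilities):
    """Undiscounted QALYs: sum over health states of time in state x HRQoL utility of that state."""
    if len(durations_years) != len(utilities):
        raise ValueError("each health state needs both a duration and a utility")
    return sum(t * u for t, u in zip(durations_years, utilities))


# Hypothetical patient course: 1.0 year in stable disease, then 0.5 years after progression.
stable_only = qalys([1.0], [0.80])              # only the stable state is valued
both_states = qalys([1.0, 0.5], [0.80, 0.60])   # stable and progressive states are both valued
print(stable_only, both_states)
```

Valuing only the stable state is unproblematic only if, as assumed in this case study, the treatments do not differ in their effects during the progressive state.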

Another potential limitation was the definition of the “lower” reference level in the treatment discontinuation attribute and its impact on scoring. This definition could influence the negative partial value scores observed for two of the treatments and, consequently, their overall WPV scores. The lower reference level of 10% was based on Best Supportive Care (BSC) performance and was sourced from the placebo comparator arm of enzalutamide’s pivotal clinical trial (AFFIRM). The placebo arm of the AFFIRM trial was chosen to proxy BSC performance, rather than the comparator arm of either of the other two treatments’ pivotal trials, because it better resembled BSC. In abiraterone’s pivotal trial (COU-AA-301), all patients in the placebo comparator arm were also administered steroids (prednisone). In cabazitaxel’s pivotal trial (TROPIC), all patients in the comparator arm were administered steroids (prednisone or prednisolone) on top of chemotherapy (mitoxantrone). As a result, abiraterone and cabazitaxel produced negative partial value scores in treatment discontinuation, as their performance lay below the lower reference level. The basis adopted for setting all clinical attribute reference levels is extensively described in the Methods section. Although the levels were set in consultation with a clinical expert, others might have chosen different ones. However, any such differences would most probably have had a minor impact, not necessarily affecting the overall valuation or ranking of the treatments.
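A linear interval scale anchored at v(lower) = 0 and v(higher) = 100, with values extrapolated beyond the anchors, shows why performance worse than the “lower” reference level yields a negative partial value. In the Python sketch below, only the 10% lower reference level comes from the text. The linear shape, the 0% higher reference level, and the option performances are hypothetical. The case study's value functions were elicited from participants and need not be linear.

```python
def interval_value(x, lower_ref, higher_ref):
    """Partial value on an interval scale with v(lower_ref) = 0 and v(higher_ref) = 100.

    Linear interpolation between the two reference levels, extrapolated outside them,
    so performance worse than lower_ref scores below 0 and better than higher_ref above 100.
    Also works for 'less is better' attributes, where higher_ref < lower_ref.
    """
    if lower_ref == higher_ref:
        raise ValueError("reference levels must differ")
    return 100.0 * (x - lower_ref) / (higher_ref - lower_ref)


# Treatment discontinuation (%), a 'less is better' attribute.
LOWER_REF = 10.0   # from the text: placebo arm of AFFIRM used as a BSC proxy
HIGHER_REF = 0.0   # hypothetical higher reference level

print(interval_value(5.0, LOWER_REF, HIGHER_REF))   # hypothetical option better than BSC: 50.0
print(interval_value(15.0, LOWER_REF, HIGHER_REF))  # hypothetical option worse than BSC: -50.0
```

Changing either reference level rescales every option's partial value on this attribute, but it does not change the options' order on that attribute.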

Challenges of MCDA Applications in HTA

Among the main challenges of MCDA applications in HTA evaluations is the technical requirement to ensure that all attributes possess the necessary theoretical properties.83,84

Because a simple additive (i.e., weighted average aggregation) model is used, violation of preference independence is of particular relevance, as it might undermine the validity of such models. 83 There are two types of preferential independence, at the ordinal and cardinal levels. The former is a precondition for the latter, but not vice versa. Intuitively, ordinal preference independence means that alternative options can be ranked on one dimension (e.g., attribute) independently of their performance on another dimension. In contrast, cardinal preference independence, also referred to as “difference independence,” 85 means the following: the difference in attractiveness (or added value of an improvement) between two performance levels on one criterion (e.g., attribute levels) does not depend on, and can be measured independently of, the levels on another criterion. 86 Such “difference consistency” between (the values of the) attributes is often taken as a working hypothesis, given that it is “so intuitively appealing that it could simply be assumed to hold in most practical applications.”87(p284)
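In the simple additive model, an option's overall value is the weighted sum of its partial value scores, with weights normalized to sum to 1. The Python sketch below is a minimal illustration. The attribute names follow the text, but the weights and partial value scores are hypothetical. A negative partial score on one attribute lowers the overall score. The model also builds in difference independence, because each attribute's contribution does not depend on the levels of the others.

```python
def additive_value(weights, partial_values):
    """Overall value under a simple additive model: sum of normalized weight x partial value."""
    if set(weights) != set(partial_values):
        raise ValueError("weights and partial values must cover the same attributes")
    total_weight = sum(weights.values())
    return sum(weights[a] / total_weight * partial_values[a] for a in weights)


# Hypothetical relative weights (they need not sum to 100; they are normalized above).
weights = {"OS": 50, "HRQoL": 30, "treatment_discontinuation": 20}

# Hypothetical partial value scores on 0-100 interval scales (negative = worse than the lower reference).
option = {"OS": 80, "HRQoL": 50, "treatment_discontinuation": -50}

print(additive_value(weights, option))
```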

However, evidence suggests potential preference dependencies between health gain and disease severity, 88 or between overall survival and HRQoL. 22 The value model had no disease severity criterion, and all options performed equally on HRQoL. To avoid potential preference dependence between the OS and HRQoL attributes, the OS value functions were therefore effectively derived conditional on the range of the HRQoL attribute at a cardinal level. More precisely, the “lower” (x_l) and “higher” (x_h) reference levels of the plausibly dependent HRQoL attribute (EQ-5D index scores) were revealed to participants, that is, v(x_l = 0.72) = 0 and v(x_h = 0.82) = 100. Participants were instructed to assume an identical performance of the options on the dependent attribute (since all three options had identical performance), without being told the exact figure. However, such “conditionally cardinal value functions” might in theory be incompatible with an additive model and therefore should be used only with caution, for example, within descriptors of performance (i.e., attributes). 89 Alternatively, a multiplicative model could be used; see the example of the Thai essential list. 90 In any case, a simple test combining Reasoning Maps with MACBETH, taking the form of a multilinear evaluation model structure, has been proposed as a way to capture potential value interactions between criteria and therefore cardinal preference dependencies. 91
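Cardinal preference dependence can be made concrete with two attributes by comparing an additive model with a multilinear model that adds an interaction term. In the Python sketch below, partial values are rescaled to 0–1. For HRQoL, 0 and 1 would correspond to the EQ-5D anchors 0.72 and 0.82 mentioned in the text. The model coefficients are hypothetical, and the multilinear form is a generic illustration, not the specific model proposed in the cited work. Under the additive model, a given OS improvement has the same value at every HRQoL level. Under the multilinear model, its value depends on the HRQoL level, which is exactly what difference independence rules out.

```python
def additive(v_os, v_q, w_os=0.6, w_q=0.4):
    """Additive two-attribute model on 0-1 partial value scales (hypothetical weights)."""
    return w_os * v_os + w_q * v_q


def multilinear(v_os, v_q, w_os=0.4, w_q=0.2, w_int=0.4):
    """Two-attribute multilinear model with an OS x HRQoL interaction term (hypothetical coefficients).

    The coefficients sum to 1, so the best option on both attributes scores 1 and the worst scores 0.
    A positive w_int means an OS improvement is worth more when HRQoL is better.
    """
    return w_os * v_os + w_q * v_q + w_int * v_os * v_q


def os_gain(model, v_q, v_os_from=0.0, v_os_to=1.0):
    """Value added by improving OS from v_os_from to v_os_to while HRQoL is held at v_q."""
    return model(v_os_to, v_q) - model(v_os_from, v_q)


for v_q in (0.0, 0.5, 1.0):  # HRQoL held at its lower reference, midpoint, and higher reference
    print(f"HRQoL={v_q}: additive gain={os_gain(additive, v_q):.2f}, "
          f"multilinear gain={os_gain(multilinear, v_q):.2f}")
```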

Other technical challenges include a possible lack of connection between attribute ranges (i.e., criteria scales) and weights, and the incorporation of economic evaluation–related considerations in the MCDA. 84 First, assigning weights of “generic importance” without considering the performance of the alternative decision options being analyzed is known to be one of the most common mistakes when making value trade-offs.43,70 In the value framework adopted, elicitation of weights takes place following the selection of the alternative treatments and the specification of their performance as part of the adaptation of the generic Advance Value Tree into a disease-specific value tree. 22 Second, considering the costs and/or the cost-effectiveness of the options can lead to theoretical challenges as costs do not represent attributes of benefit whereas the effectiveness component would have already been captured as part of the relevant criteria, therefore possibly leading to double-counting. 92 Combining both benefits and costs together can also cause practical hurdles when trying to link the MCDA results with policy recommendations, as prioritizing resource allocation would not be a clear task given the absence of a cost-benefit (or value) ratio that reflects efficiency. Instead, the value framework applied only considers impact on resources without accounting for the purchasing cost of the options themselves, 22 therefore providing a more comprehensive unit of benefit. Such benefit units could then be considered in alignment with the respective costs of the options to derive appropriate cost-value ratios, which could be used to inform the allocation of resources by understanding the opportunity cost of different decisions assuming a defined budget, 76 possibly as part of a portfolio decision analysis exercise.
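A cost-value ratio divides each option's cost by its overall value score, so a lower ratio means less cost per unit of benefit. In the minimal Python sketch below, the option names, costs, and value scores are hypothetical. The ratio is computed only for positive value scores, because a cost per unit of value is undefined for a zero score and misleading for a negative one.

```python
def cost_value_ratios(options):
    """Cost per unit of overall value for each option, sorted from lowest to highest ratio.

    options maps an option name to a (cost, overall_value) pair.
    """
    ratios = {}
    for name, (cost, value) in options.items():
        if value <= 0:
            raise ValueError(f"{name}: cost-value ratio requires a positive value score")
        ratios[name] = cost / value
    return dict(sorted(ratios.items(), key=lambda item: item[1]))


# Hypothetical costs (currency units per patient) and overall value scores.
options = {"A": (50_000, 40.0), "B": (60_000, 60.0), "C": (45_000, 30.0)}
for name, ratio in cost_value_ratios(options).items():
    print(f"{name}: {ratio:.0f} per value point")
```

Such a ranking is only one input. Allocating a defined budget would also require considering the opportunity cost of each decision.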

Future research could investigate the opportunity cost associated with disinvestment. This could inform the development of incremental cost-value ratio thresholds that act as potential cutoff points for efficiency, similar to the incremental cost-effectiveness ratio (ICER) thresholds currently used in economic evaluations. However, this would face all the current technical difficulties of estimating sound ICER thresholds that reflect opportunity cost.93,94
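By analogy with the ICER, an incremental cost-value ratio (ICVR) for option A compared with option B could be written as below. Here C denotes cost, Q QALYs, V the overall MCDA value score, and λ a hypothetical cutoff expressed in cost per value point. The notation is assumed for illustration.

```latex
\begin{aligned}
\mathrm{ICER}_{A,B} &= \frac{C_A - C_B}{Q_A - Q_B}, \\
\mathrm{ICVR}_{A,B} &= \frac{C_A - C_B}{V_A - V_B}, \qquad V_A > V_B, \\
\text{A is deemed efficient relative to B if } \mathrm{ICVR}_{A,B} &\le \lambda .
\end{aligned}
```

Because V is measured on an interval scale, the difference V_A − V_B does not depend on where the zero point of the value scale lies. It does depend on the scale's unit, which is set by the chosen reference levels and weights. Any threshold λ would therefore need to be tied to a fixed value scale.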

Conclusion

In health care systems facing significant budgetary pressures, HTA challenges relating to the evaluation of and resource allocation for innovative treatments require novel value-based assessment methodologies that can facilitate preference elicitation and decision making in a transparent way. Such methodologies should be based on robust theoretical principles so the results can lead to credible decisions. Future research could help validate the robustness and usefulness of the current value framework by conducting similar case studies with other HTA and insurer bodies across different European countries.

To catalyze the possible implementation of any such MCDA-based value framework, even at a testing phase, both the development of the model and the application of the methodology should be aligned with the formal remit of the decision makers’ institutions. Establishing clear goals for the role of the results would be beneficial for the initial exploration of the methodology. Some settings have strict guidelines and requirements around the application of specific HTA methods, as in Sweden with the use of economic evaluation. There, a supplementary or “incremental” MCDA mode alongside cost-effectiveness analysis might be a more realistic option for decision makers. The results could then be more readily adapted to their current needs and more easily implemented in daily practice. Alternatively, a pure or “clean-slate” mode could still be viable. However, it would require more concise value trees that restrict the criteria to the formal value concerns currently in explicit use. This would capture the primary value concerns specified by the evaluation guidelines. It would also retain the added benefits of assessing value through a decomposition approach, which facilitates decision making through the stepwise elicitation and transparent construction of value preferences. Following such a testing phase, decision makers could acquire important experience and confidence, enabling them to explore incorporating any additional value concerns judged relevant to their decisions.

Supplemental Material

DS_10.1177_2381468318796218 – Supplemental material for Evaluating the Benefits of New Drugs in Health Technology Assessment Using Multiple Criteria Decision Analysis: A Case Study on Metastatic Prostate Cancer With the Dental and Pharmaceuticals Benefits Agency (TLV) in Sweden

Supplemental material, DS_10.1177_2381468318796218 for Evaluating the Benefits of New Drugs in Health Technology Assessment Using Multiple Criteria Decision Analysis: A Case Study on Metastatic Prostate Cancer With the Dental and Pharmaceuticals Benefits Agency (TLV) in Sweden by Aris Angelis in MDM Policy & Practice

Footnotes

Acknowledgements

The author is grateful to Prof. Carlos Bana e Costa (University of Lisbon) for critically appraising this work and recommending ways of improving it. Constructive feedback and suggestions were also received from Dr. Monica Oliveira (University of Lisbon), Dr. Matteo Galizzi (London School of Economics), Prof. Larry Phillips (London School of Economics), Dr. Huseyin Naci (London School of Economics), Dr. Themistocles Resvanis (Massachusetts Institute of Technology), and Prof. Gilberto Montibeller (University of Loughborough), whose input is much appreciated. Special thanks go to Dr. Mark Linch (University College London Hospitals) for valuable clinical insights into current clinical practice in England and Europe. These insights informed the preliminary version of the model and an earlier draft of the respective section. The author is thankful to the staff of the Dental and Pharmaceutical Benefits Agency in Sweden (TLV) who participated in two half-day workshops in Stockholm and whose elicited preferences formed the basis of the analysis: Loudin Daoura (medical investigator), Niklas Hedberg (chief pharmacist), Daniel Högberg (health economist), and Douglas Lundin (chief economist). Special thanks go to Karl Arnberg (head of unit) and Douglas Lundin for helping organize the workshop. Olina Efthymiadou and Erica Visintin (London School of Economics) provided valuable research assistance with the calculation of drug costs and innovation spillover effects. Finally, the author thanks the participants at the PPRI conference of the WHO Collaborating Centre for Pharmaceutical Pricing and Reimbursement Policies in Vienna (October 2015) and the HTAi conference in Oslo (June 2015) for useful feedback. The vision of this research was originally proposed by Dr. Panos Kanavos (London School of Economics) as part of the Advance-HTA project. All remaining errors are the author’s own.

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article. Financial support for this study was provided entirely by a grant from the European Commission, DG Research (Advance HTA project, Grant Number 305983).

The funding agreement ensured the author’s independence in designing the study, interpreting the data, writing, and publishing the report. The views represented in the article do not necessarily reflect the views of the European Commission.


i

Advance-HTA was a research project funded by the European Commission’s Seventh Research Framework Programme (FP7). It comprised several complementary streams of research that aimed to advance and strengthen the methodological tools and practices relating to the application and implementation of HTA. It was a partnership of 13 consortium members led by the London School of Economics (LSE Health).

ii

These are interval scales and therefore it is important to set up clear bounds/limits for each attribute.

References
