Abstract

Psychological and neuroscience research reveals how motivational systems in the brain interact with the structure of social media platforms to enhance the propagation of misinformation. Features such as likes activate the brain’s reward system, encouraging people to share more emotionally engaging content, especially content that provokes outrage against opposing groups. False information is more likely to evoke outrage than is truthful information. Furthermore, exposure to misinformation increases its perceived credibility, and later corrections often fail to override its influence. To reduce the impact of false information, regulation of social media platforms should focus partly on the algorithms that prioritize content. Additionally, investing in media literacy interventions and grassroots efforts can bolster people’s motivation and ability to resist misleading content.

Misinformation, defined as “any information that is demonstrably false or otherwise misleading, regardless of its source or intention,” 1 is a critical global problem today because of its role in distorting reality among the public. False and misleading information is not new and has long been used in political contests, warfare, and economic competition. But social media, currently estimated to reach 5.4 billion users, 2 allows misinformation to spread much further and faster.

Research on misinformation has expanded rapidly since 2017, likely because of the assumed role of false information in the 2016 U.S. presidential election and in the Brexit referendum the same year. 3 Opinion polls suggest that sizable shares of voters from both major U.S. political parties believe conspiracy theories that spread via social media. For instance, a 2024 poll showed that 34% of Democratic voters believed the implausible conspiracy theory that Donald Trump staged his own attempted assassination. 4 Still, partisan asymmetries make the problem more severe among conservatives. Since 2016, Republican elites have spread far more false information than Democratic elites. 5 Furthermore, Republican voters are more likely to believe false information about consequential matters like public health. A January 2025 poll found that 40% of Republicans believed that it is probably or definitely true that more people have died from COVID-19 vaccines than from the virus itself, up from 25% in 2023. 6 Similarly, 35% of Republicans in an April 2025 poll reported believing that the MMR vaccine causes autism. 7 More problematic still, these beliefs align with Republican policymaking that contradicts expert consensus. Recent examples in U.S. federal policy include limiting access to COVID-19 vaccines, funding research on the already-discredited belief linking vaccines to autism, and impeding development of future mRNA vaccines. 8 Similar changes are also occurring at the state level, where legislation weakening longstanding evidence-based public health protections on vaccines, milk pasteurization, and water fluoridation has been enacted in 12 U.S. states. 9

At the same time, researchers’ ability to study the content flowing through actual social media platforms has been hampered in the last few years by changes such as the 2023 price increase that made the application programming interface (API) for Twitter, now known as X, unaffordable for most academic users 10 and by Meta’s shutdown of its CrowdTangle tool in 2024. 11 Platform transparency can certainly yield important insights, 12 and we do not dispute the value of such real-world data. However, many of the studies reviewed here were laboratory based and relied on simulated social media environments. This approach does not require the cooperation of social media platforms, allows greater experimental control, and makes it possible to use neuroimaging methods to identify brain mechanisms. Data from simulated environments can thus complement real-world social media data to improve understanding of the neural, social, and cognitive mechanisms that underlie people’s vulnerability to false information. We review some of these insights next and use them to inform actionable recommendations for combatting false information.

Reward Processing

Liking on Social Media

The brain system that processes reward and motivates behavior, via interconnected regions such as the ventral striatum and ventromedial prefrontal cortex, plays a key role in processing positive feedback on social media. A series of studies showed this brain response using a simulated version of Instagram.13,14 Participants provided some of their own Instagram posts, which were then presented in an MRI scanner alongside other images chosen by the experimenters. Participants were told that a group of their peers had chosen whether to like each image. In reality, the like count for each image was randomly assigned to be either relatively high or relatively low.

When one’s own images had a high number of likes, there was a broad increase in neural activity in the reward system, as well as in brain regions involved in self-relevance and processing social information. Providing likes to posts ostensibly from peers in the same task paradigm also led to reward system activation. 15 Overlap between giving and receiving likes was apparent in core reward-processing regions of the brain, further emphasizing that in the social media environment, the reward system similarly motivates both providing and receiving positive feedback.

Sharing

Reward mechanisms also play a central role when people decide to share online content. One key study in this domain demonstrated that New York Times news articles most likely to be shared among the general population engaged the reward system more strongly in a small group of participants tested in an MRI scanner. 16 Relationships were also observed between real-world sharing and activity in brain systems that process both one’s own and others’ mental states, but these activations were statistically mediated by reward processing. In other words, articles that stimulated either thinking about what other people would be thinking (mentalizing) or thinking about oneself led to sharing to the extent that this activation led to increased activity in the brain’s reward circuit. These findings suggest that the neural reward response is most directly related to virality of content.

Novelty

There are various pathways by which misinformation could lead to a greater reward signal than true information, thereby motivating sharing of false content. One seminal study found that misinformation, especially in the political domain, spreads more quickly and to more people on social media than true content, based on a large sampling of Twitter data up to 2017. 17 The false content also tended to be more novel, salient, and surprising than the true content. These results can be considered alongside human and animal neuroscience research indicating that the brain’s reward system is sensitive to novelty.18,19 Thus, novelty may contribute to the spread of false information via its tendency to stimulate a reward response in the brain.

Reinforcement Learning and Habit Learning

Other mechanisms by which reward motivates behavior, specifically reinforcement learning and habit learning, also predict sharing on social media. Real-world data from Instagram show that getting more positive feedback on one post is associated with making a new post more quickly. 20 This relationship is consistent with reinforcement learning, where positive or negative feedback affects motivation for subsequent actions. In the brain, reinforcement learning is known to depend on dopamine-releasing neurons in the reward system. 21 However, this relationship does not hold for individuals with higher posting frequencies and more habitual posting styles. 22 Here, posting appears to be a habit, that is, a learned response to cues, initially motivated by reward, that persists even when the cues no longer consistently predict reward. 23 Sharing becomes habitual when repeated decisions to share content, and receiving positive social feedback for doing so, lead people to share even without positive feedback.
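The contrast between reinforcement learning and habit learning can be made concrete with a toy model. The sketch below is illustrative only and is not the model used in the cited studies: it assumes a standard delta-rule (Rescorla–Wagner-style) update for the expected reward of posting, a "value-free" habit strength that grows with each repetition regardless of feedback, feedback coded as 1.0 (liked) or 0.0 (ignored), and arbitrarily chosen learning rates `ALPHA_VALUE` and `ALPHA_HABIT` (both hypothetical).

```python
# Toy model contrasting reward-driven (reinforcement) and habit-driven posting.
# Illustrative assumptions: feedback coded as 1.0 (liked) or 0.0 (ignored),
# learning rates ALPHA_VALUE and ALPHA_HABIT chosen arbitrarily, and the user
# posts on every step. None of these values come from the cited studies.

ALPHA_VALUE = 0.2  # hypothetical learning rate for expected reward
ALPHA_HABIT = 0.1  # hypothetical learning rate for habit strength


def update_value(value: float, reward: float, alpha: float = ALPHA_VALUE) -> float:
    """Delta-rule update: move expected reward toward the feedback just received."""
    prediction_error = reward - value
    return value + alpha * prediction_error


def update_habit(habit: float, acted: bool, alpha: float = ALPHA_HABIT) -> float:
    """Value-free habit update: strength grows with repetition, ignoring reward."""
    target = 1.0 if acted else 0.0
    return habit + alpha * (target - habit)


def simulate(feedback: list[float]) -> tuple[list[float], list[float]]:
    """Return expected-reward and habit-strength traces after each post."""
    value, habit = 0.0, 0.0
    values, habits = [], []
    for reward in feedback:
        value = update_value(value, reward)
        habit = update_habit(habit, acted=True)
        values.append(value)
        habits.append(habit)
    return values, habits


# Ten posts that receive likes, followed by ten posts that receive none.
feedback = [1.0] * 10 + [0.0] * 10
values, habits = simulate(feedback)
print(f"expected reward after rewarded posts:   {values[9]:.2f}")
print(f"expected reward after unrewarded posts: {values[-1]:.2f}")
print(f"habit strength after 20 posts:          {habits[-1]:.2f}")
```

In this sketch, expected reward climbs while likes arrive and decays once they stop, whereas habit strength keeps rising with every post. A model in which posting depends partly on habit therefore predicts continued posting after feedback dries up, which is the pattern described above for habitual posters.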

A series of studies using a simulated social media ecosystem further tied habitual sharing to the sharing of misinformation. 24 Specifically, people who posted more frequently and/or habitually on their real Facebook accounts shared more stories overall in the simulated environment. Moreover, a higher proportion of the stories that they chose to share were false. However, when participants were given monetary incentives for sharing accurate information, the ratio of true to false stories among habitual sharers improved. Creating a new, healthier habit seemed to help people unlearn the indiscriminate sharing habits that, at least for some users, had been trained by real social media platforms.

Motivating Accuracy

The reward system has also been invoked in suggesting that motivation to be accurate competes with other motivations such as group identity. 25 Indeed, attention to accuracy can be improved using subtle accuracy nudges, leading people to share a higher proportion of true content. 26 Other changes in the design of simulated social media environments can also motivate a focus on accuracy. For instance, in one study, people given feedback indicating trust or distrust rather than like or dislike tended to share more accurate content in future posts. 27 Feedback from others indicating that a post is misleading can also make people less likely to share such posts, even for individuals and topics that are highly politically polarized. 28 Thus, social media platforms could be modified from their current structure to increase the motivation to be accurate.

Distinguishing Wanting From Liking

A key takeaway from this body of work is that when social media algorithms are designed to maximize engagement, such as likes and shares, they probably optimize the degree to which the social media environment stimulates the brain’s reward system. These signals motivate behavior in a way that goes beyond any reasoned choices a person might make. In fact, neuroscience research has documented a distinction between wanting and liking. The mesolimbic dopamine system, often considered the core reward system, motivates animals to approach or want a certain object or response option even when they have no experience with it being pleasurable. 29 The liking system that encodes pleasure relies on a partially overlapping but neurobiologically distinct system mediated by opioid neurotransmitters. 30

The finding that wanting (but not necessarily liking) drives behavior is considered a model for addictive behavior in both humans and animals, and indeed, this neural mechanism has been directly associated with social media addiction. 31 Here, we propose that wanting drives engagement on social media, and thus is reinforced by typical social media algorithms. Posts that generate positive emotions (liking) would only drive engagement and algorithmic prioritization to the extent that they also stimulate wanting. This distinction may help explain why accurate, uplifting, and thoughtful content that people believe should go viral (which hypothetically stimulates mostly liking) is disadvantaged relative to the provocative and incendiary content, including misinformation, that people correctly believe actually does go viral, likely by stimulating wanting. 32 How social media engages these core motivational systems of the brain is therefore likely to play a central role in the propagation of misinformation.

Socioemotional and Moral Motivations

Psychological drivers of the spread of misinformation also include moral motivations and group identity. These factors plausibly contribute to the motivational force that activates the reward system, as discussed in the previous section.

Morality

References to moral topics are associated with the spread of content on social media. One key early study found that posts on Twitter about contentious political topics were more likely to be shared when they contained more moral–emotional language: words with both moral and emotional connotations. 33 Another study found that when people were shown headlines about contentious topics, they were biased toward sharing the headlines that agreed with their own prior point of view (dubbed myside bias). This effect was stronger for topics that the person regarded as being of absolute moral importance and for more extreme attitudes, for both true and false headlines. 34

Other work has shown that the moral framing of a headline similarly affects sharing decisions. Specifically, participants were more likely to share headlines when the framings of those headlines matched their own values with regard to moral foundations, and this effect was particularly robust for false headlines. 35 This study primarily relied on a carefully crafted set of headline framings that the authors could vary systematically, presented in a simulated social media environment. They were then able to largely replicate key findings (though with less conceptual precision) in an accompanying analysis of real social media data. 35 Together, these studies suggest that people are highly motivated to share content that reinforces their moral values, even when it is false.

Outrage

Outrage is one moral–emotional response that appears particularly relevant to the spread of misinformation. Research has shown that content from internet domains that tend to contain misinformation, including those associated with the Russian government’s Internet Research Agency disinformation shop, elicited more angry reactions on Facebook and more outraged language in responses on Twitter. 36 Importantly, such expressions of outrage were associated with increased sharing of both true and false content, with the effect being even stronger for false content.

Another study found that positive social feedback on Twitter in response to moral outrage increased the level of outrage in subsequent posts, consistent with reinforcement learning. 37 Individuals were also more likely to express outrage when other members of their social networks did so, consistent with a separate mechanism known as norm learning. The same authors also obtained causal evidence for norm learning using a simulated social media paradigm. Participants were randomly assigned to a network in which others’ posts either frequently expressed outrage or did not, and were then asked to choose between sharing a highly outraged post and a more neutral one. Those in the high-outrage network were more likely to choose the outraged post. Together, these findings strongly suggest that social media algorithms that are maximally optimized for engagement are likely to amplify outrage and, in turn, increase the spread of misinformation.

Group Identity

A related dimension that motivates sharing is the reinforcement of one’s group identity. This motive often combines with outrage, creating a powerful driver of content sharing. One analysis of real social media posts by partisan news sources and U.S. politicians found that references to political outgroups (notably, the opposition party) were linked to increased sharing on Twitter and Facebook among both Republicans and Democrats. 38 These posts generally expressed negative emotions toward the opposing side, possibly including outrage. Another study linking individual survey data with sharing behavior on Twitter found that people who were more strongly partisan showed a greater preference for sharing news supporting their side, regardless of whether the news was true or false. 39

Neural Mechanisms of Moral Conflict

While the above studies did not directly examine brain mechanisms, there is neuroscience research showing that the reward system is stimulated when people envision making aggressive responses motivated by strong moral disagreement. One particularly interesting example is a neuroimaging study of supporters of a terrorist group affiliated with al-Qaeda, who were asked to evaluate their willingness to fight and die for a range of political causes. Higher ratings of willingness to fight and die for sacred causes were associated with increased activity in the ventromedial prefrontal cortex, a core region for reward-motivated behavior that encodes subjective value. 40 Other work has shown that even among people in the United States who were asked to consider hypothetical violent protests, activity in the reward system predicted ratings of appropriateness and moral relevance more strongly for protests on one’s own side. 41

The reward system does not seem to process the evaluation of moral issues in all contexts, however. Another recent study asked participants to choose which of two groups of (peaceful) protesters they supported more. Reward system activation tracked average support for the two groups but not the moral relevance of the decision. 42 These findings suggest that it is specifically moral outrage against opposing groups, rather than moral content in general, that activates a reward response.

Algorithmic Prioritization

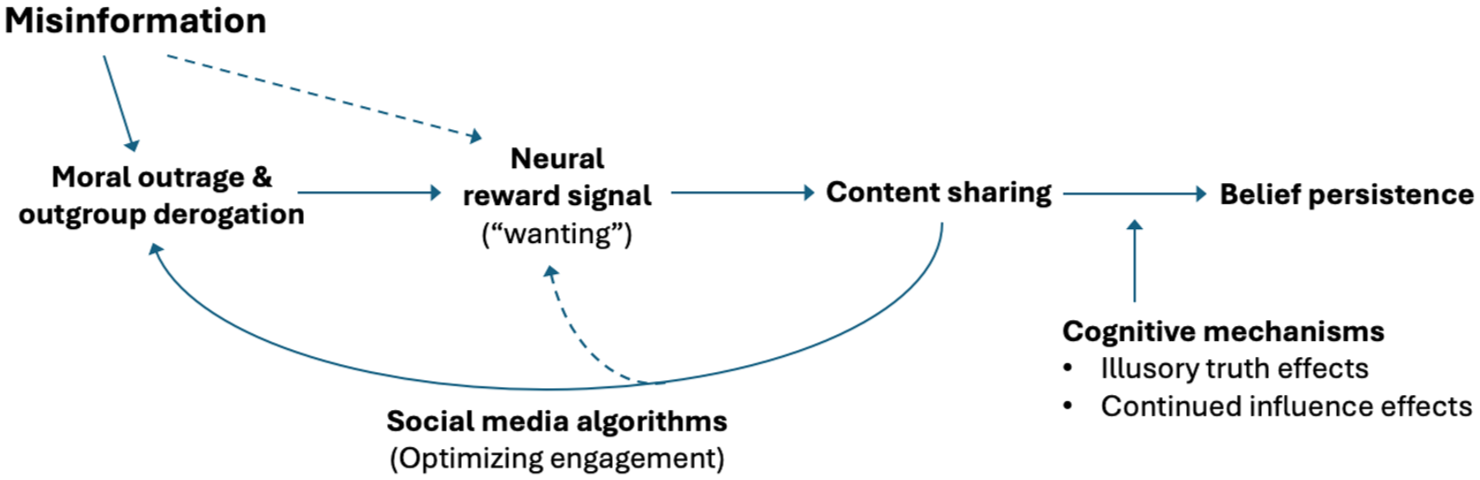

The findings reviewed in this section suggest a potential feedback loop whereby content that evokes moral outrage (especially false content) activates the reward system, prompting greater user engagement. This engagement drives algorithmic prioritization, which in turn reinforces the posting of similar outrage-inducing material (see Figure 1). These dynamics likely amplify the spread of misinformation and contribute to polarization more broadly. This suggests that social media platforms may need to consider reducing the reach of content that provokes moralized outrage against opposing groups. Of course, the benefits of reducing the reach of inflammatory content should be balanced against the beneficial role that this type of content can play in motivating social change and against users’ apparent desire to engage with such content. Still, the risks posed by its unchecked circulation are too significant to simply ignore.

Figure 1. Hypothesized model by which social media algorithms reinforce false and polarizing content.
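The compounding nature of the feedback loop in Figure 1 can be illustrated with a deliberately simple simulation. This is a hypothetical sketch, not a model of any real platform's ranking system: it assumes two content types (outrage-evoking and neutral) with fixed, invented per-impression engagement rates, and a ranker that allocates the next round's exposure in proportion to the engagement each type earned in the previous round. The function names `next_share` and `run_loop` are illustrative.

```python
# Toy feedback loop between engagement and engagement-optimized ranking.
# Hypothetical assumptions: two content types with fixed per-impression
# engagement rates, and a ranker that allocates next round's exposure in
# proportion to the engagement each type earned in the previous round.
# The rates used below are invented for illustration.


def next_share(share: float, rate_outrage: float, rate_neutral: float) -> float:
    """Exposure share of outrage content after one ranking update."""
    engaged_outrage = share * rate_outrage
    engaged_neutral = (1.0 - share) * rate_neutral
    return engaged_outrage / (engaged_outrage + engaged_neutral)


def run_loop(initial_share: float, rate_outrage: float,
             rate_neutral: float, rounds: int) -> list[float]:
    """Iterate the engagement -> ranking -> exposure loop for a number of rounds."""
    shares = [initial_share]
    for _ in range(rounds):
        shares.append(next_share(shares[-1], rate_outrage, rate_neutral))
    return shares


# Outrage posts start at 10% of the feed and are engaged with slightly more often.
trajectory = run_loop(initial_share=0.10, rate_outrage=0.06,
                      rate_neutral=0.04, rounds=10)
for t, share in enumerate(trajectory):
    print(f"round {t:2d}: outrage share of feed = {share:.2f}")
```

Because each round multiplies the odds of outrage content by the ratio of the two engagement rates (here 0.06 / 0.04 = 1.5), a modest engagement advantage compounds, and the outrage share climbs from 10% to well over half of the feed within ten rounds. Setting the two rates equal leaves the share unchanged, which isolates the engagement gap as the driver of amplification in this toy model.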

Cognitive Mechanisms

Illusory Truth

A separate body of research from cognitive psychology helps explain why the effects of false information are so persistent and highlights the importance of reducing initial exposure. A well-known quote often attributed to Nazi propagandist Joseph Goebbels is: “repeat a lie often enough and it becomes the truth.” 43 This phenomenon has been studied in cognitive psychology experiments since the 1970s, 44 and in this literature it is referred to as the illusory truth effect. The initial work examined the perceived truth of trivia statements for which people had no preexisting knowledge of the correct answers. However, illusory truth effects occur even for statements that participants can later identify as incorrect. 45

Prior exposure also reduces the ability to detect false political news headlines, showing the pervasiveness of illusory truth effects across various contexts. In one study that used actual fake news headlines seen on Facebook, even a single exposure increased subsequent perceptions of accuracy, both within the same session and after a week. The illusory truth effect held even for stories labeled as contested by fact-checkers or that were inconsistent with the reader’s political ideology. 46

Another study set in a simulated social media environment found that people were more likely to share false content after being previously exposed to it. 47 A statistical mediation analysis showed that prior exposure led to an increase in perceived accuracy and that this increase fully accounted for the subsequent increase in content sharing after prior exposure. 47
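The mediation logic described above can be written in the standard single-mediator, product-of-coefficients form. This is a generic textbook sketch, not the cited study's exact specification (which may have used, for example, mixed-effects models or bootstrapped confidence intervals); here X denotes prior exposure, M perceived accuracy, and Y sharing.

```latex
\begin{aligned}
Y &= i_1 + c\,X + \varepsilon_1 && \text{(total effect of exposure on sharing)}\\
M &= i_2 + a\,X + \varepsilon_2 && \text{(exposure raises perceived accuracy)}\\
Y &= i_3 + c'\,X + b\,M + \varepsilon_3 && \text{(accuracy predicts sharing, holding exposure fixed)}\\
c &= c' + ab && \text{(exact for OLS estimates in linear models)}
\end{aligned}
```

In this notation, the indirect effect is ab, and full mediation corresponds to a reliable indirect effect ab together with a direct effect c' that is indistinguishable from zero, so that essentially all of the total effect c runs through perceived accuracy.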

These findings indicate that illusory truth effects likely contribute to the belief in and spread of misinformation both inside and outside the laboratory. However, some research has shown that making people aware in advance that certain statements may be false can reduce illusory truth effects. 48 Thus, educating people on how to approach media content more skeptically may help reduce the impact of false information.

Continued Influence Effects

Continued influence effects (CIEs) are another cognitive phenomenon that makes people vulnerable to misinformation. CIEs describe the difficulty of fully correcting an initial impression formed by false information, even when a clear factual refutation is provided. One classic paradigm for studying CIEs involves telling participants that flammable chemicals contributed to the spread of a fire and later telling them that this information was false. Nevertheless, participants who received the incorrect information are much more likely to cite flammable chemicals as contributing to the fire than are participants who never received it. 49

More recent work has shown that CIEs are similarly robust when negative information about political candidates is presented and then factually refuted. 50 Emotional arousal triggered by accusations also appears to lead to prioritized processing of the accusations relative to factual refutations. This emotional prioritization may give accusations more influence than less-arousing refutations on decisions about candidates. As with illusory truth, corrections do appear to be more effective if people are suspicious of the false information before they are ever exposed to it. 51 Still, once false information has spread, factual corrections are unlikely to eliminate its effects on subsequent beliefs.

Importance of Preemptive Refutation

The studies reviewed in this section suggest that once misinformation takes hold, it is difficult to fully rebut its influence, even in neutral contexts such as trivia questions or causal reasoning narratives. This persistence shows how deeply vulnerability to false information is grounded in cognition. It is crucial for the media to recognize that false narratives can exert powerful and pervasive negative effects even when accurate information is presented alongside them. News organizations should use this knowledge to be more deliberate about which narratives they prioritize. When likely false stories are newsworthy and cannot be ignored entirely, these organizations must make clear in their own voice, and before conveying the story, that there is reason to be skeptical of what is being presented. Overall, this body of research makes clear that the spread of false content via either mass media or social media can be harmful, even when the truth is readily accessible.

Overall Policy Recommendations and Conclusions

If policymakers aim to reduce the spread and impact of misinformation, social media platforms cannot be left unregulated. Evidence suggests that social media executives are aware of this challenge. For example, both Facebook and Twitter/X previously implemented content moderation policies and algorithmic adjustments to label or limit the reach of false and polarizing content, but the platforms have since reversed these measures.52,53 There is evidence that such policies can be effective; for instance, deplatforming influencers who posted extremist content on Twitter reduced harmful posts from those who remained. 54 Nevertheless, Meta CEO Mark Zuckerberg justified his company’s policy change primarily by his belief that society currently values the right to free expression over the regulation of harmful content. 55

Regulation of Content Algorithms

A major theme that emerged from our model (see Figure 1) is the urgent need to regulate social media algorithms. Other reviews have drawn similar conclusions based on research from social psychology,56,57 but our review is unique in highlighting the role of the neural reward system. That is, social media algorithms tend to prioritize content that people “want” (see Reference 29), even if it makes them unhappy and/or harms society.

Based on our framework, future research could more precisely characterize the content (especially false content) that motivates wanting in the brain despite harming individuals and/or society. Regulatory bodies could then require that such content be deprioritized in algorithmic recommendations. Recent laws, particularly the European Union’s Digital Services Act, provide a good starting point for such regulation. Articles 34–35 of this law require very large online platforms to assess systemic risks to society, including risks arising from their algorithmic systems, and to take measures to mitigate those risks. The law also provides legal mechanisms to compel cooperation from platforms.

In contrast to Europe, the United States seems unlikely to enact federal regulations addressing these issues in the near term. Republican politicians and voters consistently express concern that regulation against misinformation targets them asymmetrically.58,59 However, a study that applied systematic and unbiased criteria for identifying problematic content found that supporters of Donald Trump shared more such content, indicating that even unbiased enforcement would produce asymmetric outcomes. 60 Still, the perception of bias is a hurdle that must be overcome.

U.S. regulations against false information will also need to account for the First Amendment’s strong protections against government regulation of speech. The U.S. Supreme Court has allowed regulation of some harmful speech, such as incitement to “imminent lawless action,”61,62 but not of speech that is simply false. 63 It is unclear whether the contribution of false social media content to extreme political polarization constitutes sufficient harm to permit regulation. One advantage of regulating algorithmic prioritization is that it would likely face fewer First Amendment obstacles than regulating specific false content.

Another difficult issue is the current U.S. government’s efforts to impede other countries’ enforcement of laws regulating social media platforms.64–66 We believe the scientific evidence reviewed here supports the premise that social media can create systemic risks, and we urge European regulators not to be deterred from enforcing their laws. Despite the considerable power wielded when U.S.-based social media companies align with U.S. governmental resistance to regulation, democracies cannot afford to allow unchecked exploitation of humans’ psychological vulnerabilities.

Strengthening Individual Resistance

Beyond government regulation, policymakers can also support efforts to increase citizens’ capacity and motivation to resist false and manipulative content. One promising approach toward this goal is psychological inoculation. 67 These interventions include games and videos that expose people to manipulation techniques in an exaggerated, humorous context. The goal is to strengthen psychological defenses against such content in the real world. Other work has reviewed a wider range of interventions beyond inoculation that can improve resilience to misinformation on an individual level. 68

Another approach to motivating resistance is the use of emotional appeals. One recent example is the case of Paloma Shemirani, a young British woman who refused conventional cancer treatment and later died. Paloma’s mother, an avid conspiracy theorist, persuaded her daughter to pursue ineffective alternative treatments rather than the chemotherapy that doctors estimated would have had an 80% chance of success. 69 Grassroots activism could bring together the loved ones of people harmed by disinformation and encourage them to share such stories publicly.

Concluding Comments

The psychological vulnerabilities we discussed here are not inherently harmful. In many contexts, they promote prosocial behavior and cooperation. The danger arises when they interact with the modern social media environment, which tends to sway people toward beliefs that can pose serious personal risks as well as risks to others.

In a world facing a complex set of serious threats, including climate change, pandemic diseases, terrorism, and the growing influence of autocratic regimes, leaders must be able to come together to solve problems. However, solutions become more difficult when social media amplifies false and polarizing information, because such environments can help false beliefs take hold among wide swaths of the electorate. Given that shifts in public opinion tend to influence policymaking, 70 representatives may feel pressure to act on these false beliefs and to resist the compromises between groups that are necessary to solve problems.

To limit these harmful impacts, we recommend that governments implement and enforce regulations of social media platforms in ways that are informed by psychological science. At the same time, efforts must be made to enhance people’s recognition of and resistance to manipulative content. Without such measures, societies remain highly vulnerable to destabilization.

Summary of Major Scientific Points & Policy Recommendations

Scientific Points

Liking and sharing content on social media is associated with activation of the brain’s reward system.

Misinformation and polarizing content may stimulate a greater reward response by activating outrage, novelty, moral values, and group identity.

Content that stimulates a reward response motivates behavior but is not necessarily pleasurable.

These dynamics lead people to engage more with false and polarizing content, which would cause algorithms optimized purely for engagement to prioritize such content.

Once people are exposed to false information, this information can have subtle but persistent impacts even when they are unaware that it is still affecting their beliefs.

Policy Recommendations

Limiting the spread of false information preemptively is important.

Social media algorithms should be subject to regulation to limit the spread of false and polarizing content. The European Union’s Digital Services Act provides a useful model for this type of regulation.

Investing in public service messaging to enhance citizens’ motivation and capacity to resist manipulation by online content is also important.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.