Abstract

We did something unthinkable: we used an oral exam in our undergraduate business analytics course where course enrollment exceeded 600 students. ConVOEs, Concurrent Video-Based Oral Exams, are assessments conducted through a learning management system whereby all students simultaneously engage in an exam and submit a video of themselves responding to each question. By limiting the time frame over which the assessment can be completed, ConVOEs resemble a sort of conversation, as students must respond in real time to each question and demonstrate their understanding of course material in their own words. The conception of this format, though initially motivated by the shift to online learning during the pandemic, is particularly relevant as richer forms of communication are highly sought by employers yet absent from many business courses, and serves to highlight the old adage, “Everything old is new again.”

Oral exams are making a comeback. Instructors have been re-introducing this assessment method as a way to strengthen connections and engagement with their students during the COVID-19 pandemic (Belkin, 2023; Dumbaugh, 2020), but more recently, educators are advocating for verbally administered exams to combat the perils of generative artificial intelligence (AI), like ChatGPT (Dobson, 2023; Donnelly, 2023). While these are valid reasons to implement oral examinations, there is another: preparing students for postgraduate careers. Based on text-mining analysis of over 3,500 analytics-related job postings and course descriptions from nearly 50 graduate programs in analytics, Seal et al. (2020) identified several soft-skill and analytical competencies that were lost opportunities; in other words, these competencies are highly sought by employers but not emphasized (i.e., taught explicitly) in program curricula. Among these lost opportunities were communication skills. Stanton and Stanton (2020) reported similar findings based on their study of business analytics careers, which revealed that communication skills (verbal and written) were in the top five most frequently mentioned skills required for entry-level positions in this field. Those authors recommend incorporating opportunities to develop communication skills into analytics-focused assignments.

While a communications course would provide ample opportunities for students to practice a variety of communication styles, what tends to happen in business analytics courses is that these skills are secondary to other learning objectives. For example, a group project’s main focus is technical analysis, with some (small) portion of grades allocated to a class presentation. Brink and Costigan (2015) argue that this one-way style of communication is fine but two-way communication would provide richer context and is what is most valued in the workplace. This means not just speaking to an audience but also listening and responding. The act of conversing is what most naturally happens in business settings, where thoughtful but unrehearsed and quick responses can set individuals apart. While the case method of teaching spurs much discussion in a group setting, individual oral exams can dig deeper to test a student’s command of the course material.

Burke-Smalley (2014) advocates for the inclusion of oral exams in business programs, noting their documented effectiveness across fields like pharmacy and foreign language instruction but their lack of application in academic business literature. To aid and encourage instructors to adopt this evaluation format, Burke-Smalley (2014) provides helpful guidelines, such as ensuring consistency in the number and wording of questions and in exam duration, tips that are echoed by Akimov and Malin (2020), who implemented oral exams in an online setting.

Both Burke-Smalley (2014) and Akimov and Malin (2020) stress, however, that administering oral assessments in courses with large enrollments presents challenges. The sheer number of students leads to logistical difficulties, as more time is needed to administer individual exams sequentially, and this extended time frame could provide an opportunity for students who have completed the exam to share questions and answers with those who have yet to be evaluated. Furthermore, students tend to be concerned about consistency in question difficulty and wonder whether their individual experience was different from (i.e., more difficult than) that of their peers. In multi-section introductory courses (MICs), conducting oral exams in traditional one-on-one or small group formats would be unmanageable. Achieving consistent assessment across different sections, potentially taught by different instructors, adds another layer of complexity. These same challenges contributed to the gradual movement away from oral exams beginning in the 1700s and explain why, by the early 1900s, written exams had become favored for their standardization and efficient grading process (Spolsky, 1990; Stray, 2001). The open question is how to bring the standardization and scalability of written exams to the verbal assessment setting.

To transfer those desired and scalable characteristics to a verbal assessment setting, we propose ConVOEs: Concurrent Video-based Oral Exams. This virtual oral exam format, whose development in Fall 2020 was prompted by the COVID-19 pandemic, provides many benefits of a traditional viva, ensures that each student’s assessment experience is consistent, and is administered concurrently to decrease the likelihood of academic integrity violations. In the following sections, we describe our design of ConVOEs and our experience implementing it in a MIC on business analytics with over 600 students.

Method

ConVOEs mimic an in-person oral exam experience in that students must verbally respond to a set of questions posed. Rather than interact directly with the instructor in a face-to-face environment, students complete ConVOEs as online quizzes via the course’s learning management system (LMS), recording their verbal responses using a personal webcam or smartphone. Students must answer one question at a time and cannot navigate to a previous question once it has been answered. To simulate the conversational style of an oral exam, we set an overall time limit by which all questions must be answered. This time limit is restrictive enough to discourage students from spending too much time developing overly polished and highly edited recordings of their responses. Rather than conducting these oral exams sequentially (which would be logistically challenging for courses with high enrollments), we implicitly require students to take the quiz simultaneously.

ConVOEs differ from a traditional oral exam in two ways. First, administering the assessment via an LMS allows students to take the assessment in parallel rather than serially. Second, the questions posed are independent of one another, which does not permit instructors to delve into a student’s answer for clarification or to test the boundaries of the student’s knowledge. While this latter feature may diminish a desired attribute of a traditional oral exam, its impact can be minimized with cleverly chosen questions.

Implementation

Building a ConVOE

In Fall 2020, we implemented ConVOEs as an assignment in a mandatory third-year introductory business analytics course. Total enrollment was approximately 600 students, split into eight sections of 75 to 80 students each, with four faculty members each assigned to lead two sections. This course, due to the pandemic, was conducted entirely virtually with synchronous class sessions hosted on Zoom; however, in-person courses can also benefit from this online assessment format. We describe our experience with the Canvas LMS, though the features we employed are common across other LMS platforms.

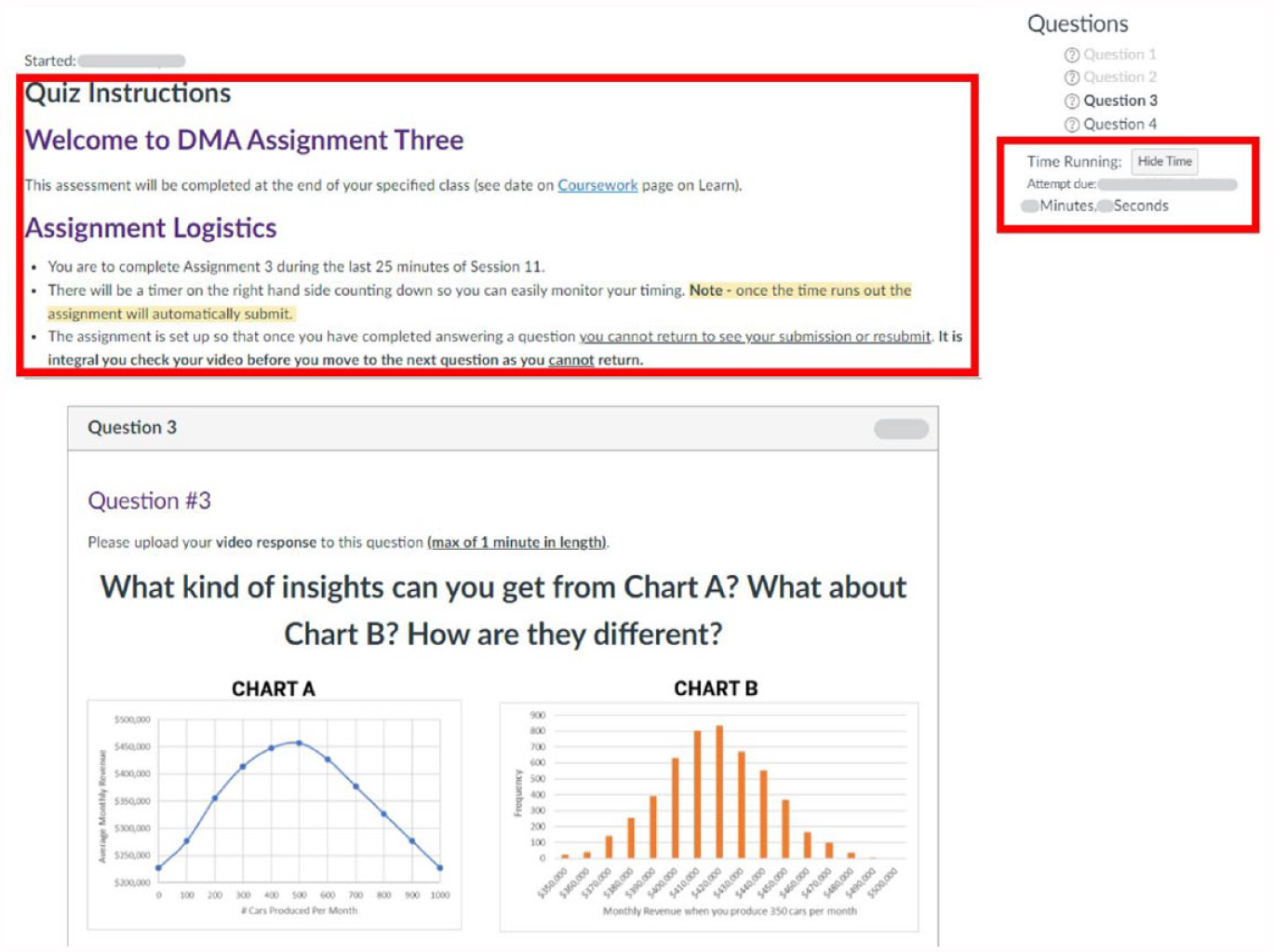

The ConVOE was created as a Canvas Classic Quiz consisting of four questions, with a 25-minute overall time limit. Every instructor developed a unique set of four questions for each of their sections. The quiz availability (time window during which students can access the quiz) and due date were identical for all students in a section, and to avoid individual scheduling conflicts, this availability window overlapped with the last 30 minutes of a class session so that students could complete the assessment during their regular class time. An example of the student interface is provided in Figure 1, and sample questions are provided in Appendix A.
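The quiz-level settings described above can be collected in one place before they are entered into the LMS. The following is a minimal sketch, not the configuration we actually used: the function name `build_convoe_settings` is hypothetical, the field names follow our understanding of the Canvas Classic Quizzes API and should be checked against the current Canvas documentation, and the single permitted attempt and the due date equal to the window close are assumptions. The 25-minute availability window default reflects the original window described in the collaboration incident later in this paper. The sketch builds a plain dictionary only and makes no network calls.

```python
from datetime import datetime, timedelta, timezone


def build_convoe_settings(window_start, time_limit_minutes=25,
                          window_minutes=25, title="ConVOE"):
    """Return a dict of quiz settings mirroring the ConVOE design.

    Field names are assumed to match Canvas Classic Quiz parameters;
    verify them against the LMS documentation before use.
    """
    if time_limit_minutes > window_minutes:
        raise ValueError("time limit cannot exceed the availability window")
    window_end = window_start + timedelta(minutes=window_minutes)
    return {
        "title": title,
        "quiz_type": "assignment",        # graded quiz
        "time_limit": time_limit_minutes,  # overall limit, in minutes
        "one_question_at_a_time": True,    # answer questions one by one
        "cant_go_back": True,              # no backtracking
        "allowed_attempts": 1,             # assumption: single attempt
        "unlock_at": window_start.isoformat(),
        "due_at": window_end.isoformat(),  # assumption: due when window closes
        "lock_at": window_end.isoformat(),
    }


if __name__ == "__main__":
    # Hypothetical start time for one section's availability window.
    start = datetime(2020, 11, 2, 10, 0, tzinfo=timezone.utc)
    for key, value in build_convoe_settings(start).items():
        print(f"{key}: {value}")
```

Keeping the availability window equal to the time limit means that every student in a section must start at essentially the same moment, which is what makes the exam concurrent.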

Figure 1. Student View of Interface.

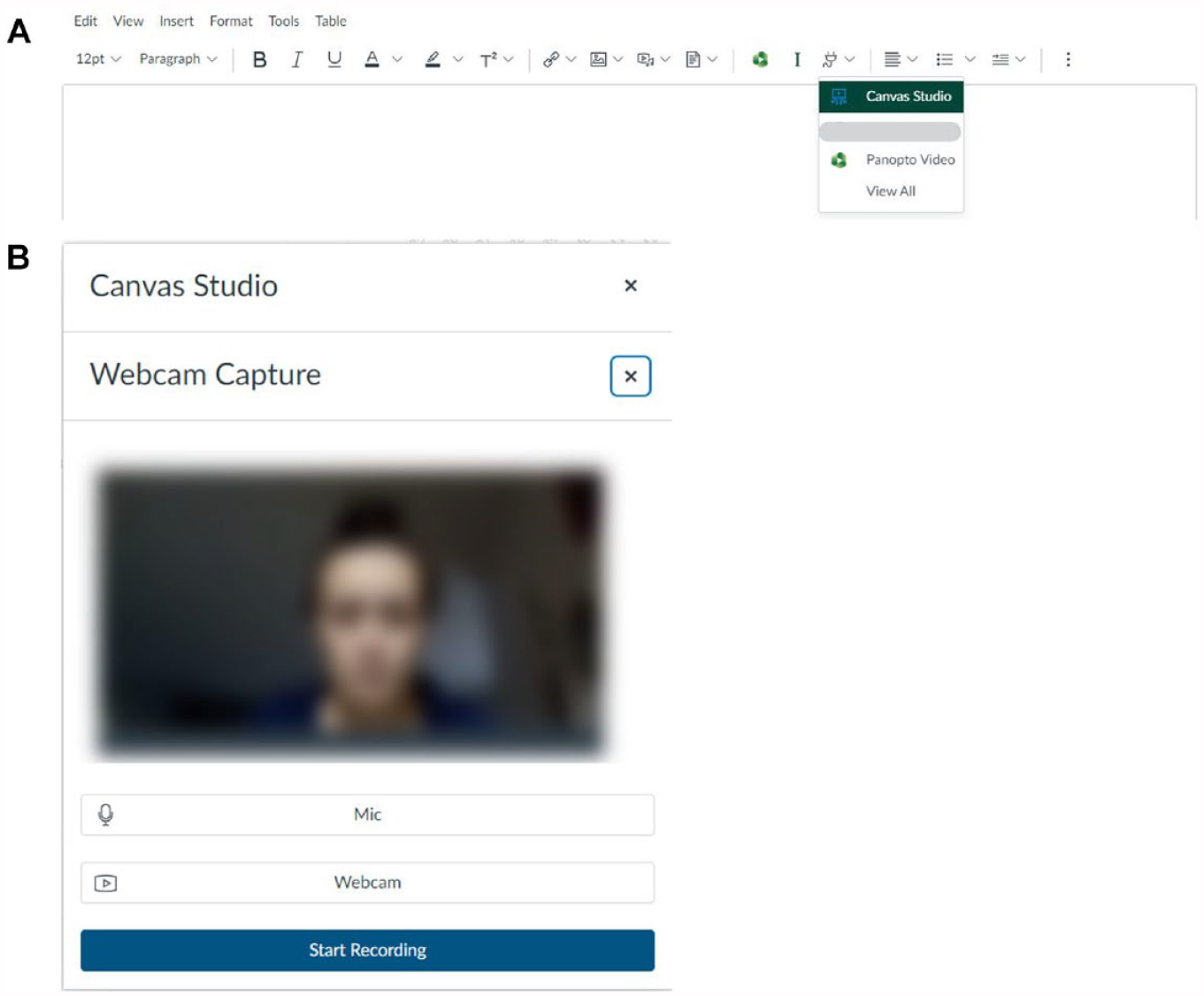

To populate the quiz, we used Essay-type questions. The question text and any accompanying graphics were input, and student responses could be uploaded using a video or audio tool integrated into the Rich Text Editor within the quiz interface. We harnessed Canvas’ native video tool, Studio, to facilitate this process (Figure 2). We advised students that only the first 60 seconds of each of their responses would be graded, which encouraged concise and direct answers to each question.

Figure 2. Recording Process: (A) Canvas Studio Ability to Embed Videos and (B) Student Webcam Capture Options.

To minimize technological hiccups during the actual assessment period, we created a practice version of a ConVOE and made it available to students 1 week prior to the graded ConVOE. This mock assignment allowed students to familiarize themselves with the quiz interface and structure, test their equipment (e.g., microphone and video functionality), and seek assistance from our IT team if needed. Faculty members encouraged students to take advantage of this opportunity, and approximately 75% of students engaged with the practice ConVOE. Our IT team was also able to lend laptops to students if they did not own a laptop or their device was not working.

ConVOE Evaluation Process

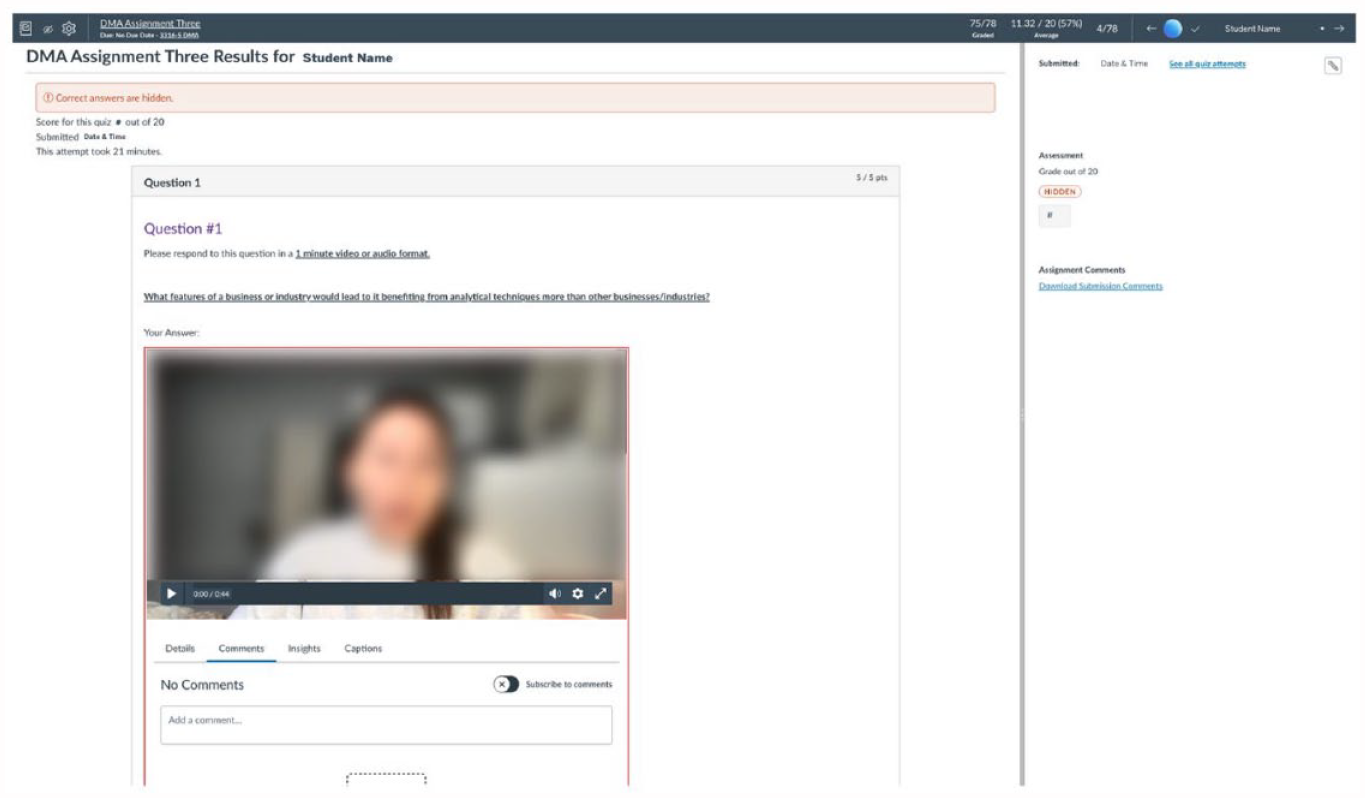

A major benefit of ConVOEs is the ability to deploy and grade an oral exam at scale. To operationalize grading, each section’s submissions were marked by a single grader who was instructed to adopt a parallel evaluation approach, as described in Maclean and Bayley (2023); for example, Question 1 would be graded for all students, followed by grading of Question 2 for all students, and so on. We assumed that this process would ensure consistent application of the grading rubric within a question. Our graders used Canvas’ integrated grading tool, SpeedGrader, which serves as a comprehensive interface that streamlines assessment and feedback through its consolidation of various grading functions. Graders accessed students’ submissions, viewed video responses, and assigned grades to each question within a single window (Figure 3), with the LMS automatically updating the ConVOE’s cumulative score for each student.
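The parallel evaluation order can be illustrated with a short sketch. This is only an illustration of the ordering, assuming hypothetical student identifiers and a hypothetical helper named `parallel_grading_order`; it is not part of SpeedGrader or any Canvas tool.

```python
def parallel_grading_order(student_ids, num_questions):
    """Return (question, student) pairs in question-by-question order.

    Every student's response to Question 1 is graded before any
    response to Question 2, and so on.
    """
    return [
        (question, student)
        for question in range(1, num_questions + 1)
        for student in student_ids
    ]


if __name__ == "__main__":
    # Hypothetical three-student section with a four-question ConVOE.
    for question, student in parallel_grading_order(["s01", "s02", "s03"], 4):
        print(f"Grade Question {question} for student {student}")
```

Grading in this order keeps a single rubric item in the grader's mind at a time, which is the rationale for expecting more consistent marks within a question.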

Figure 3. Canvas SpeedGrader.

Results and Effectiveness

One unexpected benefit of using video/audio responses is that graders could pause and rewind responses or view submissions at 1.5× or 2× normal speed. Graders indicated that these speeds were slow enough to understand speech while also significantly increasing grading throughput. This was feasible because our questions were designed to test students’ understanding of concepts through explanations in plain language rather than having students recount detailed technical facts or calculations. Graders could thus watch and mark videos quickly and use the pause and rewind functions to listen for specific keywords provided in the grading rubric.
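To give a sense of scale, the effect of playback speed on viewing time can be estimated with simple arithmetic. The sketch below is illustrative only and does not report measured grading times: it assumes that every response uses the full 60 graded seconds, and it ignores pauses, rewinds, and the time needed to enter scores. The function name `max_playback_minutes` is hypothetical.

```python
def max_playback_minutes(num_students, num_questions=4,
                         graded_seconds=60, speed=1.0):
    """Upper bound on playback time, in minutes, for one grader.

    Assumes each response runs for the full graded duration and
    excludes pausing, rewinding, and score entry.
    """
    total_seconds = num_students * num_questions * graded_seconds
    return total_seconds / speed / 60


if __name__ == "__main__":
    # A section of 80 students, the upper end of the section sizes reported.
    for speed in (1.0, 1.5, 2.0):
        minutes = max_playback_minutes(80, speed=speed)
        print(f"{speed}x playback: at most {minutes:.0f} minutes")
```

Under these assumptions, a section of 80 students requires at most 320 minutes of playback at normal speed and at most 160 minutes at 2× speed.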

We experienced two challenges in running this assessment type. The first was that some students reported technological setbacks during the assessment. For instance, a student asked for extra time due to losing internet connection at their residence. In another case, a student recorded and uploaded their video responses, but realized only after submitting that their audio had not been recorded. These issues were handled in real time by faculty members on a case-by-case basis using Quiz Moderation tools in Canvas, either by extending the time limit or allowing additional attempts for the ConVOE itself. While these issues were unfortunate, they also seemed similar in number to situations that arise during other time-limited assessments at our institution (e.g., issues with lockdown browsers on exams). The other reassuring indication is that the technology issues appeared to be idiosyncratic. In a debrief after the assessment, the teaching team concluded that further training or information provided to students would not have prevented these occurrences, as the issues were due to factors outside our control.

The other challenge we faced was student collaboration, which was prohibited as per faculty instructions. In one section, the faculty member extended the scheduled availability of the ConVOE from 25 to 40 minutes to ease student anxiety. Some students who began the ConVOE near the beginning of the availability window posted ConVOE questions to an online “group chat,” ostensibly to give other students (who had not yet accessed the platform) advance notice of the assessment content. In response, the faculty member had students from that section retake the ConVOE under the original 25-minute availability window and with different questions.

Finally, we gathered feedback from students and faculty members on their experiences. Students generally noted the increased sense of stress from this assessment format; however, some also recognized the benefits. One student wrote, “The advantage was that it was a lot less tiring than a traditional test because the duration of time to complete it was a lot shorter.” On the administrative side, faculty members reiterated the importance of providing students with a method to practice the platform technology. One faculty member noted, “We had to deal with a few cases that required resubmission. Some videos were muted for example and some questions were not submitted. Some tech issues need to be thought about in advance.” Another said, “I believe giving students the chance to practice [the ConVOE format] beforehand is key.” Appendix B lists practical advice for instructors wishing to adopt ConVOEs in their courses.

Discussion and Conclusion

We used ConVOEs to administer and assess oral exams for an undergraduate MIC on business analytics, a subject and class enrollment size for which oral assessments have not traditionally been viewed as feasible. This format facilitated evaluation of communication skills, a competency that is highly sought for business analytics careers but not generally incorporated as a learning outcome for technically focused business courses.

ConVOEs can also easily extend to other business disciplines. The essence of management courses, regardless of their specialization, is to cultivate decision-making, problem-solving, and communication skills. While group projects and presentations do encourage these softer skills to a certain degree, ConVOEs build on them by requiring students to analyze and articulate their reasoning concisely and in real time, which mimics workplace interactions they will experience in meetings with colleagues and superiors. Because of this unique requirement for real-time reasoning, we posit that ConVOEs, while tested only in the context of business analytics, are likely to foster enhanced learning outcomes across a broader spectrum of management courses.

Consider, for example, the strategy course offered to the same student cohort as described in our Fall 2020 implementation. Learning objectives in this course challenge students to navigate organizations’ value creation processes, align resources to support organizational strategy, and grow and reimagine strategies. There is hardly a single correct answer in a course of this nature; rather, students must analyze and assess competitive environments using frameworks (e.g., Porter’s Five Forces [Porter, 2008], Diamond-E [Crossan et al., 2016], and Value Chain [Porter, 1998]) and articulate, propose, justify, and defend their recommendations. By employing ConVOEs, instructors could present students with real-world scenarios requiring them to apply these frameworks thoughtfully and then communicate their analysis and strategic recommendations in real time. This method would test not only their understanding of the theoretical concepts but also their ability to synthesize these concepts in practical, often complex, business situations. The immediate nature of ConVOEs thus provides a robust platform for students to demonstrate their strategic thinking and decision-making skills, reflecting the practical realities of business professionals.

This evaluation format was motivated by the COVID-19 pandemic when a major shift to online delivery of assessments was necessary. Our decision in Fall 2020 to implement a time limit, restrict the availability window, and disable backtracking was purposeful with the intention to discourage question-sharing between students that could occur via online channels. Coincidentally, even as many courses are now in-person again, there is a new threat to academic integrity: the development and popularization of large language models (LLMs) such as ChatGPT. Much has been written about the threats and opportunities that LLMs create (Cotton et al., 2023; Perkins, 2023); however, it is clear that assessments must be adapted in response. This has arguably made oral assessments even more valuable than they were in 2020, and we believe that instructors should be prepared with this evaluation format in their repertoire. By offering a structured, scalable method for deploying and grading oral assessments, we hope to enhance students’ learning experience in business and management education, particularly for large student groups, and better prepare them with skills they will inevitably require in their future careers.

Appendix A

Appendix B

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.