Abstract

The authors examine how people interpret expert claims when they do not share experts’ technical understanding. The authors review sociological research on the cultural boundaries of science and expertise, which suggests that scientific disciplines are heuristics nonspecialists use to evaluate experts’ credibility. To test this idea, the authors examine judicial decisions about contested expert evidence in U.S. district courts (n = 575). Multinomial logistic regression results show that judges favor evidence from natural scientists compared with social scientists, even after adjusting for other differences among experts, judges, and court cases. Judges also favor evidence from medical and health experts compared with social scientists. These results help illustrate the assumptions held by judges about the credibility of some disciplines relative to others. They also suggest that judges may rely on tacit assumptions about the merits of different areas of science, which may reflect broadly shared cultural beliefs about expertise and credibility.

The expansive authority of scientific and expert knowledge is a hallmark of modern societies (Beck 1992; Habermas 1990; Weber [1905] 2002). Firms, legislatures, courts, and other organizations consult frequently with technical experts to make decisions related to management, legislation, litigation, and more (Gorman and Sandefur 2011). Yet decision makers usually evaluate expert advice from the position of a knowledge deficit. Nonspecialists’ disadvantage is amplified when experts’ claims are ambiguous or contested by other experts. With incomplete technical understanding, how do decision makers know whether experts are credible?

Research on knowledge asymmetries in economic decisions offers one set of answers to this question. These studies frame the problem as unevenly distributed information between actors, such as between a firm’s shareholders and its executives (Brodbeck et al. 2007; Peysakhovich and Karmarkar 2016; Zhu and Weyant 2003). In many exchanges, information asymmetries can be minimized and knowledge claims can be verified because actors have similar technical capacity (Sharma 1997). However, in exchanges between clients and experts, vast knowledge disparities undermine clients’ ability to verify experts’ claims. This client-expert problem surfaces in many organizations that rely on professional services, including courts that use expert witnesses. Consistent with the research on economic decision making, research on courts suggests that nonspecialists, such as judges and jurors, must overcome information deficits to know whether experts are credible (Dixon and Gill 2002; Lesciotto 2015; Penrod et al. 1995; Schauer and Spellman 2013). In other words, the assumption is that nonspecialists must identify the essential qualities of knowledge claims that signal whether they are genuine expertise or junk science.

These studies highlight a common problem in modern systems, but they say little about the role of culture in how people resolve it. Yet perceptions of experts are not reducible to an understanding of technical information (Gauchat and Andrews 2018). Instead, people interpret experts on the basis of symbols associated with the cultural boundaries of science, such as their credentials and the places they work (Abbott 1988; Gieryn 2018). Disciplines are especially important markers of credibility (Gieryn 1999). Research on the cultural boundaries of science also shows that scientific authority is relational and that people interpret disciplines’ credibility relative to other sources of authority, such as other disciplines (Gauchat and Andrews 2018). Accordingly, some experts are seen as more scientific than others by virtue of their discipline.

Disciplines are important to public perceptions of experts, but their heuristic value may be limited to passive, abstract exchanges, such as when survey respondents are asked to rate different kinds of scientists. In contrast, actors who seek out expert advice to solve concrete, organizational problems may be more attuned to experts’ instrumental value. Organizational rules may further depersonalize decisions and level decision makers’ personal biases. Yet without shared technical understanding, decision makers may implicitly judge experts on the basis of cultural symbols of science that either override organizational rules or anchor their interpretation.

In this article we examine the cultural frameworks used to recognize credible experts. To do so, we analyze judicial decisions about contested expert evidence in courts. Our interest is not in whether experts have specialized knowledge but in how decision makers use cues to draw conclusions about expert claims when faced with technical uncertainty. Our focus on the cultural schemas used to sort and rank experts sets this research apart from studies of the concrete knowledge and skill that constitute expertise (Collins 2018; Evans and Collins 2007; Eyal 2013). Yet our attention to experts in action reflects a wider current in the sociology of expertise, which is to understand the normative intervention of experts in society (Eyal and Buchholz 2010). Our approach also builds on research into the cultural schemas used by publics to locate actors in the field of science (Gauchat and Andrews 2018).

To study the cultural frames used to interpret experts’ credibility, we examine judicial decisions about motions to exclude expert evidence from court. The data come from a probability sample of judicial decisions contained in Daubert Tracker (n = 575), a database of judicial decisions about the admissibility of expert evidence. When a party in a court case files a motion to exclude evidence from an opposing expert witness, the judge must decide whether to allow or exclude the evidence. Although judges are bound by the rules of evidence, evaluating specialized knowledge may require them to apply their own tacit understanding of credible expertise. In this article we examine whether, drawing on the same explicit rules of evidence, there are patterns to the disciplines judges exclude from court. Multinomial logistic regression results suggest that judges are substantially more likely to allow evidence from natural scientists than from social scientists. Judges also favor evidence from medical and health experts compared with social scientists. The results help illustrate the assumptions held by judges about the credibility of some disciplines relative to others. They also suggest that courts may have tacit expectations about the merits of different types of science, which may reflect broadly shared cultural beliefs about expertise and credibility.

Background

Cultural Boundaries of Science

A long tradition of research in the sociology of science examines the creation and maintenance of boundaries between science and other fields of cultural production (Gieryn 1983). During credibility contests, actors vie to situate their claims within the cultural boundaries of science and to deny their opponents’ claims to that terrain. Winners are allowed to make authoritative statements about the world and losers are stripped of their scientific legitimacy. This constructionist view of science does not deny the utility of scientific knowledge or the presence of knowledge deficits between specialists and nonspecialists. It simply recognizes that the distinction between science and nonscience reflects social forces rather than essential qualities of knowledge. The purpose of inquiry is therefore not to demarcate true from false but to identify the schemas people use to navigate social life.

In addition to separating science from nonscience, cultural boundaries distinguish disciplines within science. Although disciplines are often thought of as areas of specialized knowledge, they are also sites of identity and competition (Cambrosio and Keating 1983; Whitley 1976). Nested within a wider field of science, disciplines compete to define the practices that constitute science (Bourdieu 1975). By creating new disciplines, actors can create new rules for legitimation and avoid subjugation to the field as a whole (Fligstein and McAdam 2012). Yet within the wider field of science, disciplines are judged against its dominant practices, which are determined by its dominant actors. As a result, disciplines such as physics and biology set the standards by which other disciplines are evaluated. In turn, disciplines that adopt the practices of dominant actors, such as quantification and experimentation, are more scientific, and therefore more credible, than disciplines that do not.

Jurisdictional disputes throughout the history of science have created a cultural map that helps people appraise the value of knowledge claims from different parts of the field (Gieryn 1999). The result is a durable yet pliable understanding of which disciplines are more scientific and credible than others. Not every person agrees that natural sciences are more scientific than social sciences, but most do. And although the map is occasionally revised through boundary work, it is mostly stable. We add to this scholarship by studying whether judges’ decisions about expert evidence reflect broader contours in the cultural map of science.

By many measures, natural sciences are more successful than social sciences as disciplinary projects. In the United States, nearly everyone thinks that physics is scientific, but fewer than half think that sociology is (Gauchat and Andrews 2018). People also think that natural scientists should have more influence than social scientists over public policy (O’Brien 2013). These differences in public opinion track with wide differences in funding for natural and social scientific research and education, which favor natural sciences (NCSES 2022). When Congress debated establishing the National Science Foundation (NSF) in the late 1940s, many lawmakers balked at including social sciences, arguing they were too ill defined to be considered scientific (Gieryn 1999). Social sciences nonetheless won entry into the NSF at its inception, but their credibility was on the line again in the 1960s, this time put there by lawmakers who sought to expel them for their alleged political biases. Social sciences prevailed again and remain a part of the NSF, but these episodes illustrate their tenuous place in the field of science and the boundary work performed to keep them there.

Overall, research on boundary work shows how audiences use disciplines to interpret the scientific-ness and legitimacy of knowledge claims. What researchers have yet to examine is how the tacit hierarchy of disciplines produced through boundary work interacts with formal organizational rules to help nonspecialists interpret technical information. Based on the research reviewed so far, we anticipate that disciplines guide nonexperts’ evaluations of technical information in ways that, at the aggregate level, prioritize natural sciences over social sciences.

Sociology of Expertise

Our interest in how experts are deployed in organizations aligns with other sociological research on experts in action (for reviews, see Azocar and Ferree 2016; Eyal and Buchholz 2010). These studies of experts and expertise have roots in twentieth-century research on professions, which focused primarily on the institutionalization and professionalization of fields of work (Abbott 1988; Freidson 1988). Like the research on boundary work in science, these studies lean heavily on historical jurisdictional disputes to observe the production of cultural categories. Often equating professions with expertise, research on classical professions like science, law, and medicine focused closely on how fields of work establish autonomy and monopoly over areas of knowledge and practice.

The specialization of knowledge work and the rise of the professional services sector in the late twentieth century blurred distinctions between professions and other occupations, which challenged earlier understandings of expertise (Gorman and Sandefur 2011). Research on knowledge work has since expanded to consider claims-making more broadly. Attention also shifted away from institutional analyses to studies of experts’ intervention in institutions (Eyal and Buchholz 2010). Rather than seeing expertise as an attribute of actors, these studies often approach it as a system of material, cognitive, and social relationships (Latour 1987). Prioritizing the historical processes that create expertise and legitimate experts, this view defies the traditional expert-layperson hierarchy by making patients, consumers, activists, and other stakeholders nodes in networks of expertise (Epstein 1996). By drawing attention to the role of nonexperts in assembling expertise, these studies further underscore the importance of audiences to the boundary work performed to legitimate disciplines’ knowledge claims.

Although these studies shed light on the material and symbolic content of expertise, they say little about the cultural schemas that help audiences interpret expert claims. This study builds on research into experts and expertise by examining how the boundary work used to differentiate scientific disciplines relates to experts’ credibility in courts. If, as we expect, disciplines are heuristics that nonspecialists use to gauge the legitimacy of expert claims, then there should be overall differences in how judges rule on expert evidence from different disciplines.

The Judicial Gatekeeping of Expert Evidence

Like many organizations, courts commonly rely on outside experts to perform knowledge-intensive work. Litigants from individual plaintiffs and defendants to the federal government hire expert witnesses to assist in virtually every area of criminal and civil law. Litigants also try to exclude opposing experts from testifying. When they do, trial judges must decide whether to allow the contested evidence or bar it from the case. These rulings can be pivotal, because admitting expert evidence into court imbues it with credibility and makes it more persuasive (Schweitzer and Saks 2009). Once expert evidence is admitted, it can affect litigation in a variety of ways, including having a direct impact on jurors’, juries’, and judges’ subsequent decisions about the case (Woody et al. 2018).

Research on judicial evaluations of expert evidence largely comes from a jurisprudential perspective and focuses on the legal framework for admissible evidence. In 1993, the Supreme Court’s ruling in Daubert v. Merrell Dow Pharmaceuticals, Inc. established trial judges as the “gatekeepers” of scientific knowledge in federal courts and codified guidelines to help them evaluate the reliability of expert evidence (Gianelli 1993). Although Daubert identified several indicators of reliability for judges to consider, such as whether the evidence was produced through a method with a known error rate, the criteria are not mandatory, and the ruling gave judges substantial discretion to decide whether expert evidence is admissible. Daubert also clarified that expert evidence must be relevant through a “valid scientific connection to the pertinent inquiry” (Daubert v. Merrell Dow Pharmaceuticals, Inc. 1993:580) but once again gave judges ample leeway to decide what constitutes a valid scientific connection. We argue that these rules provide judges not only a legal context to evaluate expert evidence but also a cultural one. By conditioning the admissibility of expert evidence on judges’ beliefs about science, Daubert may create a tacit advantage for disciplines that are seen as more scientific than others, despite the discipline-neutral language of the rules of evidence.

Researchers have studied the legal framework for expert admissibility carefully, but the role of culture in these decisions is overlooked almost entirely. This blind spot in the literature reflects its dominant conceptual approach to expertise as a real, underlying quality that corresponds to specialized knowledge. A realist perspective such as this suggests that credibility comes from underlying qualities of knowledge claims, which judges can identify by applying the rules of evidence. The view of expertise as an essential quality of knowledge is reflected by the centrality of scientific reliability in the jurisprudence and scholarship on expert evidence. Yet sociological research on symbolic boundaries suggests that this is not how expert claims are evaluated, especially when there are extreme knowledge asymmetries. We therefore extend research on the judicial gatekeeping of expert evidence by examining whether the cultural schemas that organize public perceptions of science are also evident in courts.

Hypotheses

In this article we advance research on the cultural boundaries of science by investigating how they correspond to judicial decision making. Public perceptions of scientific disciplines are based on cognitive maps people use to organize their impressions of science. On the basis of the foregoing discussion, we anticipate that judicial rulings on the admissibility of expert evidence reflect a similar map. Specifically, we expect judges to see natural scientists as more authoritative than social scientists and therefore to exclude evidence from social scientists more often than evidence from natural scientists. Engineering’s close association with science suggests that judges may also exclude social scientific evidence more often than engineering evidence. And medicine’s high status as a field of science and a profession may make medical experts seem more credible than social scientists (Whooley 2013). If so, then judges may also be more likely to exclude evidence from social scientists than from medical experts.

Hypothesis 1: Judges are more likely to exclude evidence from social scientists than from natural scientists, engineers, and medical experts.

Within social science, economics wields unusual authority. The discipline’s unique trajectory of professionalization and expansive jurisdiction set it apart from other areas of social science (Fourcade 2006). Economics, especially in the United States, institutionalized quantification and formal modeling more thoroughly than many other areas of social science. By adopting the dominant practices of natural sciences so fully, economics has amassed disproportionate cultural authority among the social sciences. Economics’ clout among the social sciences is reflected in economists’ greater visibility in nonacademic institutions and superior remuneration compared with other social scientists (Fourcade, Ollion, and Algan 2015). Given the greater cultural authority of economics compared with other areas of social science, we expect that economists may seem more credible than other social scientists and may be more likely than other social scientists to withstand judicial scrutiny.

Hypothesis 2: Judges are less likely to exclude evidence from economists than from other social scientists.

Data

To study the cultural schemas that organize perceptions of experts, we use data from judicial decisions about the admissibility of expert evidence. In trial courts, judges issue written decisions to resolve issues raised by parties at various points during the case, such as whether the case should be dismissed or whether certain pieces of evidence should be allowed. The lack of a uniform purpose, style, or reporting mechanism for trial court decisions makes them challenging sources of data for statistical comparisons. Yet trial judges’ substantial discretion over motions to exclude or limit expert evidence provides a valuable chance to study how decision makers interpret the credibility of expert claims when they do not share experts’ technical understanding.

Existing studies of expert admissibility are typically limited in scope, focusing on one or a few kinds of evidence. In contrast, we analyze a large probability sample of judicial decisions selected from the most comprehensive sampling frame available, Daubert Tracker, a subscription-based service that compiles expert admissibility decisions in federal and state courts. Conventionally, research on expert admissibility uses nonprobability samples of decisions reported to databases such as LexisNexis and Westlaw. However, not all decisions are reported. E-filing has substantially improved the coverage of conventional databases, yet they may contain only the “tip of the iceberg” of expert admissibility decisions (Boyd, Kim, and Schlanger 2020). In addition to all reported admissibility decisions, Daubert Tracker contains many unreported admissibility decisions gathered from docket sheets, litigation reports, jury verdict reports, court Web sites, and other sources. At the time of data collection, the database held more than 219,000 decisions on expert admissibility. More than 130,000 of these were unreported to LexisNexis and Westlaw.

Daubert Tracker has important advantages over the conventional approach to studying judicial gatekeeping. Because Daubert Tracker includes unreported decisions, it provides much more information about the population of interest compared with other case law databases. And given its substantial coverage, Daubert Tracker allows us to use probability sampling, which allows us to generalize results to the population of written admissibility decisions. The database was designed to help legal professionals recruit and deploy expert witnesses, and it is rarely used in academic research (although see Page, Taylor, and Blenkin 2011a, 2011b for exceptions). However, its unmatched scope offers a unique chance to study national patterns in judicial decisions about contested expert evidence. Because of state-level variation in rules of evidence and because evidentiary decisions are made during the trial phase, we focus on U.S. district courts. In total, the sampling frame included 11,095 decisions about expert witness admissibility in federal courts between 2015 and 2018.
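The gain from probability sampling can be made concrete with a short calculation. The Python sketch below assumes that the 575 coded decisions were drawn as a simple random sample without replacement from the 11,095-decision frame, and it computes the standard error of a sample proportion with the finite population correction, using the 23 percent exclusion rate reported for the full sample as the example proportion. The function names are illustrative, and the calculation ignores the clustering of decisions within judges, which the regression analysis handles separately.

```python
import math
import random

# Frame and sample sizes reported in the text.
FRAME_SIZE = 11_095
SAMPLE_SIZE = 575


def draw_simple_random_sample(frame_ids, n, seed=None):
    """Draw n IDs without replacement; every subset of size n is equally likely."""
    rng = random.Random(seed)
    return rng.sample(frame_ids, n)


def proportion_standard_error(p, n, N):
    """Standard error of a sample proportion under simple random sampling
    without replacement, including the finite population correction."""
    fpc = (N - n) / (N - 1)
    return math.sqrt(p * (1 - p) / n * fpc)


# Stand-in integer IDs for the decisions in the sampling frame.
frame = list(range(FRAME_SIZE))
sample = draw_simple_random_sample(frame, SAMPLE_SIZE, seed=2018)
se = proportion_standard_error(0.23, SAMPLE_SIZE, FRAME_SIZE)
print(f"n = {len(sample)}, SE = {se:.4f}, 95% margin = +/-{1.96 * se:.3f}")
```

Under these assumptions the standard error is a little under two percentage points; because the sample is about 5 percent of the frame, the finite population correction shrinks it only slightly.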

Five researchers content-coded a randomly selected 575 of these admissibility decisions. Admissibility decisions were coded for characteristics of judges, expert witnesses, and court cases. Before beginning the study, coders completed a training course that included methodological and study-specific instruction as well as practice coding and norming sessions. During training, the researchers refined the code sheet and practiced applying it until interrater agreement reached 90 percent and Cohen’s κ reached 0.80.
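The two reliability benchmarks measure different things. Percent agreement counts matching codes, whereas Cohen’s κ discounts the agreement two coders would reach by chance given how often each uses each code. The Python sketch below computes both for two coders rating the same items; the coder data are hypothetical, and because Cohen’s κ is a two-rater statistic, with five coders it was presumably computed pairwise or replaced by a multi-rater extension (the text does not say which).

```python
from collections import Counter


def percent_agreement(coder_a, coder_b):
    """Share of items to which two coders assigned the same code."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("coders must rate the same non-empty set of items")
    matches = sum(a == b for a, b in zip(coder_a, coder_b))
    return matches / len(coder_a)


def cohens_kappa(coder_a, coder_b):
    """Observed agreement corrected for chance agreement implied by each
    coder's marginal code frequencies: (p_o - p_e) / (1 - p_e)."""
    n = len(coder_a)
    p_observed = percent_agreement(coder_a, coder_b)
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    p_expected = sum(freq_a[code] * freq_b[code] for code in freq_a) / n**2
    if p_expected == 1:
        # Both coders used one identical code; kappa is undefined, 1.0 by convention here.
        return 1.0
    return (p_observed - p_expected) / (1 - p_expected)


# Hypothetical codes from two coders for ten rulings.
coder_a = ["admit"] * 5 + ["limit"] * 2 + ["exclude"] * 3
coder_b = ["admit"] * 4 + ["limit"] * 2 + ["exclude"] * 4
print(f"agreement={percent_agreement(coder_a, coder_b):.2f}, "
      f"kappa={cohens_kappa(coder_a, coder_b):.4f}")
# prints: agreement=0.80, kappa=0.6875
```

In the toy data the coders agree on 8 of 10 items, but chance alone would produce agreement of 0.36, so κ is noticeably lower than raw agreement. For this reason the text reports both figures.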

A potential limitation of these data is that the sampling frame, although substantially larger than those used in previous research, may still be unrepresentative of the population of admissibility decisions. Although it is not possible to assess representativeness directly, Daubert Tracker provides far more information about the population than LexisNexis or Westlaw, which are the two most likely alternative data sources. Moreover, reported judicial decisions are especially important because they codify doctrine that influences future cases.

Methods

The purpose of our analysis is to determine whether judges’ decisions about expert evidence are associated with expert witnesses’ disciplines. We begin by examining the bivariate relationship between experts’ disciplines and judges’ decisions to exclude, limit, or admit their evidence. Then, we use multinomial logistic regression models to control for other characteristics of courts, judges, and experts that may be associated with judges’ decisions. Despite the intuitive ordering of our dependent variable (judges’ decisions to exclude, limit, or admit evidence), we use the multinomial logistic model because a Brant test indicates that the parallel regression assumption is not met and that the ordinal logistic model should not be used (χ² = 43.77, df = 29, p < .05). A total of 346 judges authored decisions in these data, with a mean of 1.67 decisions per judge. We therefore cluster standard errors in the regression model by judge. To account for potential regional variation in judges’ decisions associated with variation in the composition of judges across districts, we conducted additional analyses that clustered standard errors by judicial district and found similar results. To interpret regression results and to illustrate discipline differences in judges’ decisions, we compute predicted probabilities and average marginal differences.
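The quantities used to interpret the regression can be illustrated with a small sketch. It assumes a multinomial logit with admission as the base outcome (the text does not state which category serves as the base), so each of the other two outcomes has its own coefficient vector, and predicted probabilities follow from a softmax over the linear predictors. The average marginal difference for a dichotomous indicator, here a hypothetical natural-science dummy, is computed by setting the indicator to 1 and then to 0 for every observation and averaging the change in predicted probability. The coefficients and observations below are invented for illustration and are not the study’s estimates. In practice they would come from a fitted model such as statsmodels’ `MNLogit`, and the judge-clustered standard errors are not reproduced here.

```python
import math

# Hypothetical coefficients (NOT estimates from this study), with "admit"
# as the base outcome whose linear predictor is fixed at zero.
COEFS = {
    "limit":   {"const": -0.9, "natural_science": -0.8, "doctorate": 0.1},
    "exclude": {"const": -0.7, "natural_science": -0.6, "doctorate": -0.3},
}
OUTCOMES = ("admit", "limit", "exclude")


def predicted_probabilities(x):
    """Multinomial logit: P(k) = exp(x'b_k) / (1 + sum_j exp(x'b_j))."""
    scores = {"admit": 0.0}
    for outcome, b in COEFS.items():
        scores[outcome] = b["const"] + sum(b[name] * value for name, value in x.items())
    denom = sum(math.exp(s) for s in scores.values())
    return {k: math.exp(scores[k]) / denom for k in OUTCOMES}


def average_marginal_difference(rows, indicator):
    """Mean change in each outcome's predicted probability when a 0/1
    indicator is switched from 0 to 1 for every observation, holding the
    other covariates at their observed values."""
    diffs = {k: 0.0 for k in OUTCOMES}
    for x in rows:
        p1 = predicted_probabilities({**x, indicator: 1})
        p0 = predicted_probabilities({**x, indicator: 0})
        for k in OUTCOMES:
            diffs[k] += (p1[k] - p0[k]) / len(rows)
    return diffs


# Hypothetical observations, one dict of covariates per decision.
rows = [
    {"natural_science": 1, "doctorate": 1},
    {"natural_science": 0, "doctorate": 1},
    {"natural_science": 0, "doctorate": 0},
]
print(average_marginal_difference(rows, "natural_science"))
```

Because the three predicted probabilities always sum to one, the marginal differences across outcomes sum to zero. An advantage in admission for one discipline therefore has to be offset by lower probabilities of limiting or excluding its evidence.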

Measures

Dependent Variable

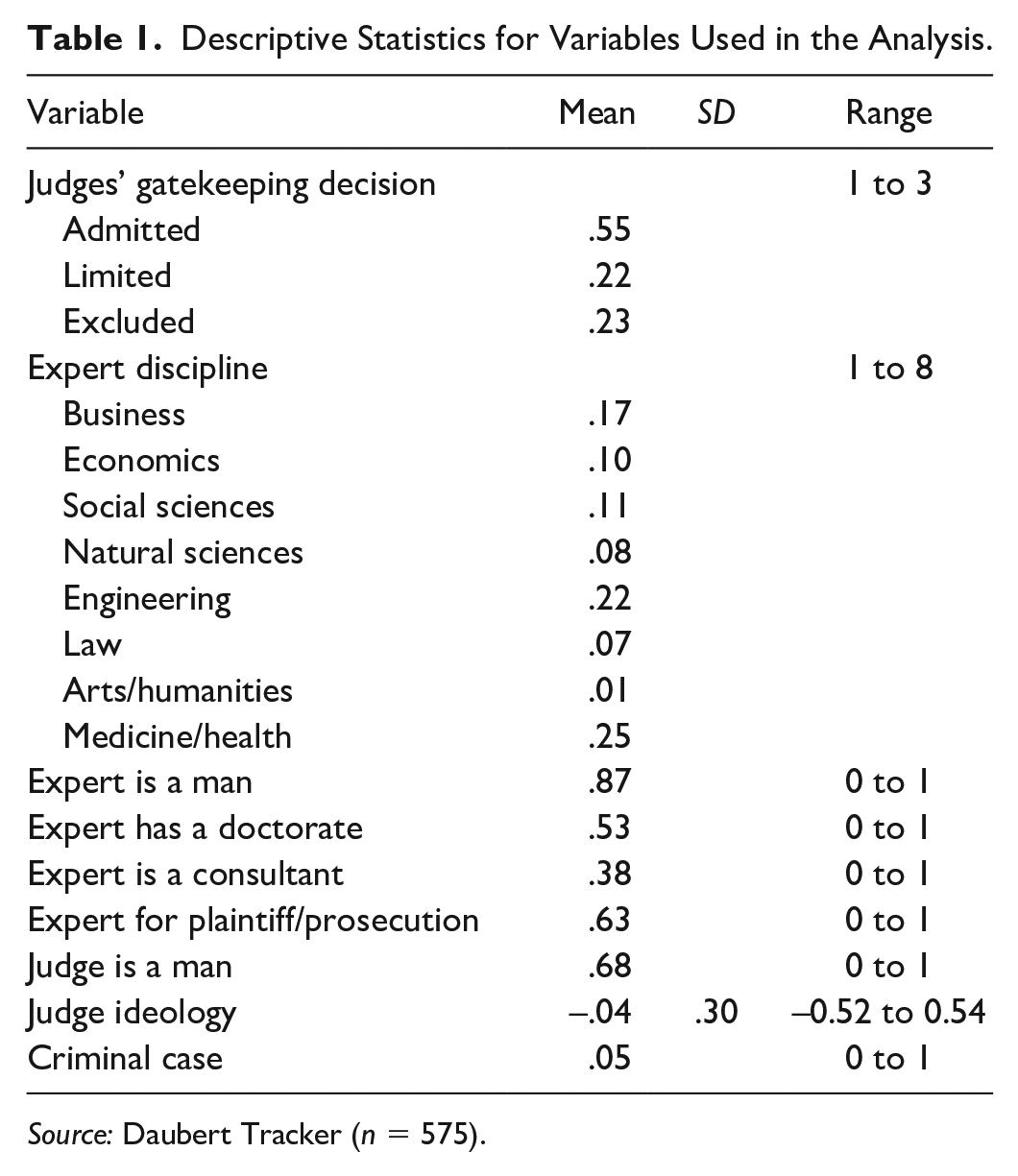

The dependent variable is judges’ decisions about motions to exclude expert evidence. When a party in a court case files a motion to exclude opposing evidence, judges may grant the motion and exclude the evidence from being used, grant the motion in part and exclude some but not all of the evidence, or deny the motion and admit the evidence into court. We measure these rulings using mutually exclusive categories for decisions to exclude, to limit, and to admit evidence. Table 1 contains summary statistics for the dependent variable. Overall, judges admitted evidence in 55 percent of these cases, limited evidence in 22 percent of these cases, and excluded evidence in 23 percent of these cases.
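The three-category outcome can be expressed as a simple coding rule. The sketch below maps each ruling on a motion to exclude to exactly one mutually exclusive category and tabulates shares. The ruling labels (`granted`, `granted_in_part`, `denied`) are hypothetical stand-ins for however the coders recorded rulings, and the example rulings are toy data.

```python
from collections import Counter

# Hypothetical ruling labels mapped to the three outcome categories.
RULING_TO_OUTCOME = {
    "granted": "exclude",          # motion granted: evidence excluded
    "granted_in_part": "limit",    # some but not all evidence excluded
    "denied": "admit",             # motion denied: evidence admitted
}


def code_outcome(ruling):
    """Map a ruling to exactly one outcome category."""
    try:
        return RULING_TO_OUTCOME[ruling]
    except KeyError:
        raise ValueError(f"unrecognized ruling: {ruling!r}") from None


def outcome_shares(rulings):
    """Share of decisions in each outcome category."""
    counts = Counter(code_outcome(r) for r in rulings)
    total = sum(counts.values())
    return {k: counts[k] / total for k in ("admit", "limit", "exclude")}


print(outcome_shares(["denied", "denied", "granted_in_part", "granted"]))
```

Rejecting unrecognized labels, rather than silently dropping them, keeps every coded decision in exactly one category.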

Table 1. Descriptive Statistics for Variables Used in the Analysis.

Source: Daubert Tracker (n = 575).

As we described, judges have substantial discretion in these rulings, and each decision category includes a wide variety of evidentiary circumstances. For example, a limiting decision may strike nearly all of an expert’s deposition testimony or it may remove a single sentence from their forensic report. Despite the heterogeneity within decision categories, their shared focus on contested expert evidence provides a unique chance to study how powerful organizational leaders interpret specialized knowledge claims from the position of a deficit in technical understanding.

Independent Variable

The independent variable of interest is expert witnesses’ discipline, which we measure as the field of their highest degree. Experts are sorted into eight mutually exclusive categories for arts and humanities, business, economics, engineering, law, medical and health sciences, natural sciences, and social sciences.¹ Because our interest is in the cultural boundaries of science, the discussion of results focuses on economics, engineering, medical and health sciences, natural sciences, and social sciences. Information about experts’ education came from sources such as ProQuest Dissertations and Theses, public profiles on social media, company and personal Web pages, and databases of service providers.

We see disciplines as flexible analytical categories, not immutable properties of knowledge or practice. This approach is consistent with a research tradition in science and technology studies (Cambrosio and Keating 1983). The disciplines we examine align roughly with scientific and professional training programs and with the disciplines contained on national surveys of science attitudes. The distinction between social and natural sciences is especially important to the analysis and relatively easy to identify. The decision to separate medical and health sciences from natural sciences reflects their autonomy, esteem, and distinctive professional jurisdiction (Whooley 2013). The decision to separate economists from other social scientists reflects economics’ high standing among social sciences, its unique history as a profession, and its insularity from other social sciences (Fourcade et al. 2015). There are many more economists (n = 55) than any other kind of social scientist in the data set, which is indicative of the importance of financial damages in these cases. The next largest group of social scientists, and the only other one with more than 10 experts, is psychologists (n = 22). Supplemental analyses found that judges’ decisions about psychologists resemble their decisions about other social scientists. Psychologists are therefore included with other social scientists in the analysis.

Although most experts in these data fit clearly into the coding scheme, some boundary cases could reasonably be classified differently. For example, nursing administration is coded as a business degree but could arguably be included with medical and health sciences. And toxicology is included among the medical and health sciences although it may reasonably be considered a natural science. Although boundary cases introduce a degree of ambiguity into the analysis, there are too few of them to substantially affect our main conclusions. For instance, there are only three toxicologists and one nursing administrator in the data. And analyses of alternative discipline categories verify that conclusions do not depend on boundary cases. Although other coding schemes may provide other insights about the relationship between scientific disciplines and credibility, this article’s operationalization of disciplines aligns with existing theory and measurement and provides a baseline to compare against alternative measures of disciplines used in future research.
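The coding rule, including the boundary-case decisions and the sensitivity checks on alternative categories, can be sketched as a lookup table with optional overrides. The field names below are hypothetical examples rather than the study’s code sheet. Only the placement of psychology, toxicology, and nursing administration follows choices stated in the text.

```python
# The eight mutually exclusive categories described in the text.
DISCIPLINES = frozenset({
    "arts and humanities", "business", "economics", "engineering", "law",
    "medical and health sciences", "natural sciences", "social sciences",
})

# Hypothetical lookup from field of highest degree to category.
FIELD_TO_DISCIPLINE = {
    "physics": "natural sciences",
    "chemistry": "natural sciences",
    "mechanical engineering": "engineering",
    "medicine": "medical and health sciences",
    "toxicology": "medical and health sciences",   # boundary case
    "nursing administration": "business",           # boundary case
    "economics": "economics",
    "psychology": "social sciences",                # pooled with other social sciences
    "sociology": "social sciences",
    "law": "law",
    "history": "arts and humanities",
}


def code_discipline(field, overrides=None):
    """Return the single category for a degree field. `overrides` (lowercase
    field -> category) lets an analyst recode boundary cases for a
    sensitivity analysis without editing the base table."""
    lookup = {**FIELD_TO_DISCIPLINE, **(overrides or {})}
    category = lookup[field.strip().lower()]
    if category not in DISCIPLINES:
        raise ValueError(f"unknown category: {category!r}")
    return category


print(code_discipline("Toxicology"))
print(code_discipline("toxicology", overrides={"toxicology": "natural sciences"}))
```

Keeping recodes in an override table makes an alternative scheme a one-line change, which is convenient when checking that conclusions do not depend on boundary cases.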

Control Variables

The regression model controls for several characteristics of experts, judges, and court cases that past studies suggest may be associated with judges’ decisions about expert evidence. Educational credentials are easily recognizable symbols of legitimacy, which connote status and authority (Abbott 1988). The regression model therefore controls for experts’ credentials with a dichotomous measure that indicates whether the expert held a doctoral degree, including doctor of medicine, doctor of philosophy, or another doctorate such as doctor of science, doctor of psychology, or doctor of education.

Expert witnesses who testify repeatedly may be better than those with less experience at providing evidence that meets the legal standards for admissibility. To control for experts’ litigation experience, we include a dichotomous control variable that equals one for consultants who specialize in expert witnessing services. The persistent underrepresentation of women in science, technology, engineering, and mathematics (STEM) fields coupled with gender disparities in perceived task competence suggest that judges may tacitly assume that men are more credible expert witnesses than women (Correll 2001; Kahn and Ginther 2018). Indeed, a study of civil courts found that judges were more likely to exclude expert evidence from women than from men, although the gender gap disappeared among experts with advanced credentials (O’Brien 2016). Our analysis therefore includes a dichotomous variable for expert witnesses’ gender, which equals one for men and zero for women. Experts’ gender was identified using their first names and the pronouns used to describe them.

Research on trial judging suggests that women and men judges rule differently in some contexts, including when gender is salient, such as in discrimination and sexual harassment cases (Boldt et al. 2021; Boyd, Epstein, and Martin 2010; O’Brien 2018). Regression models therefore control for judges’ gender using a dichotomous variable retrieved from the Federal Judicial Center, which equals one for men and zero for women. There is also evidence that judicial behavior may reflect judges’ political ideologies (Harris 2008; Segal and Spaeth 2002). The regression model therefore controls for judges’ ideology using common space scores, which are based on the parties of judges’ nominating president and confirming senators (Boyd 2015; Epstein et al. 2007; Giles, Hettinger, and Peppers 2001). Because magistrate judges are appointed by the district judges of their district rather than nominated by the president and confirmed by the Senate, they are assigned the mean common space score for their judicial district.
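The assignment rule for magistrate judges amounts to district-level mean imputation. The text does not say which judges enter the district mean. The sketch below assumes it is the mean over judges in the same district who have their own common space scores (that is, non-magistrate judges), and the record fields, names, and scores are hypothetical.

```python
from statistics import mean


def assign_common_space(judges):
    """Return copies of judge records in which each magistrate judge's score
    is replaced by the mean score of the scored, non-magistrate judges in the
    same district (None if the district has no such judges). Assumption:
    this is how "the mean common space score for their judicial district" is
    formed."""
    scores_by_district = {}
    for judge in judges:
        if not judge["magistrate"] and judge["score"] is not None:
            scores_by_district.setdefault(judge["district"], []).append(judge["score"])
    district_means = {d: mean(s) for d, s in scores_by_district.items()}

    assigned = []
    for judge in judges:
        if judge["magistrate"]:
            judge = {**judge, "score": district_means.get(judge["district"])}
        assigned.append(judge)
    return assigned


# Hypothetical records: two district judges and one magistrate judge in "D1".
judges = [
    {"name": "A", "district": "D1", "magistrate": False, "score": 0.4},
    {"name": "B", "district": "D1", "magistrate": False, "score": -0.2},
    {"name": "C", "district": "D1", "magistrate": True, "score": None},
]
print(assign_common_space(judges)[2]["score"])
```

Returning copies rather than mutating the input keeps the original records available for comparison with alternative imputation rules.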

Judges tend to be more receptive to evidence from criminal prosecutors than from civil plaintiffs (Dioso-Villa 2016), which is consistent with powerful litigants’ more general advantages in court (Galanter 1974). The regression model therefore includes a dichotomous measure that equals one for experts who represent plaintiffs or prosecutors and zero otherwise, a dichotomous measure that equals one for criminal cases and zero for civil cases, and a product term for the interaction of these two variables. The interaction allows us to distinguish among judges’ decisions about evidence from criminal prosecutors, criminal defendants, civil plaintiffs, and civil defendants. Finally, the regression model includes fixed effects for judicial circuit to account for differences in judicial precedent across appellate circuits as well as for decision year to account for differences in judicial behavior associated with time.
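A direct check shows why two dummies and their product separate four litigant types. Each combination of party and case type yields a distinct set of values for the three regressors, and civil defendants are the reference group (all zeros). The function and label names below are illustrative.

```python
from itertools import product

# Labels for the four combinations of the two dichotomous measures.
LITIGANT_TYPES = {
    (1, 1): "criminal prosecutor",
    (0, 1): "criminal defendant",
    (1, 0): "civil plaintiff",
    (0, 0): "civil defendant",  # reference group: all three terms equal zero
}


def litigant_terms(plaintiff_or_prosecutor, criminal):
    """Return the two main-effect dummies and their product term."""
    return (plaintiff_or_prosecutor, criminal, plaintiff_or_prosecutor * criminal)


for side, case_type in product((0, 1), repeat=2):
    print(LITIGANT_TYPES[(side, case_type)], litigant_terms(side, case_type))
```

With coefficients b1, b2, and b3 on the three terms, the contrast for criminal prosecutors relative to civil defendants on a given outcome’s log-odds (relative to the base outcome) is b1 + b2 + b3. For civil plaintiffs it is b1 alone, and for criminal defendants it is b2 alone.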

Results

Descriptive Statistics

To find out whether judges’ admissibility decisions are associated with experts’ disciplines, we begin by examining descriptive statistics for the bivariate relationship. Table 2 contains the percentage of experts from each discipline that judges decided to admit, to limit, and to exclude. It shows that judges admitted a larger share of evidence from natural scientists than from any other discipline (67 percent). It also shows that judges limited less natural science evidence than evidence from any other discipline (9 percent). Judges admitted evidence from medical and health experts nearly as often as from natural scientists (62 percent). However, judges limited medical and health evidence more than twice as often as natural science evidence. Judges also admitted economic and business evidence more often than average (60 percent and 56 percent, respectively), although, as with medical and health evidence, judges limited economic and business evidence more than twice as often as natural science evidence. In contrast, judges admitted evidence from engineering, social science, law, and arts and humanities at below-average rates. Judges were especially likely to exclude social science (31 percent), legal (34 percent), and arts and humanities (38 percent) evidence.

Table 2. Judicial Gatekeeping Decision by Expert Discipline.

Source: Daubert Tracker (n = 575).

Note: The table contains row percentages. Rows may not sum to 100, because of rounding.
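A short sketch illustrates the table note’s caveat about rounding. The counts are hypothetical, chosen only to show the effect, and are not drawn from Daubert Tracker. When a row splits evenly three ways, each cell rounds to 33 percent, so the row sums to 99.

```python
def row_percentages(counts: dict[str, int]) -> dict[str, float]:
    """Convert one row of decision counts into row percentages."""
    total = sum(counts.values())
    return {decision: 100 * n / total for decision, n in counts.items()}


def rounded_row(counts: dict[str, int]) -> dict[str, int]:
    """Round each row percentage to a whole number, as a printed table would.

    Python's round() sends exact halves to the nearest even integer, so
    software that rounds halves up can differ by one point in such cases.
    """
    return {decision: round(pct) for decision, pct in row_percentages(counts).items()}


# Hypothetical row: one admit, one limit, one exclude.
example = {"admit": 1, "limit": 1, "exclude": 1}
print(rounded_row(example))                # {'admit': 33, 'limit': 33, 'exclude': 33}
print(sum(rounded_row(example).values()))  # 99
```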

Several of these findings align with our expectations. The relative success of natural sciences, medical and health sciences, and economics compared with other social sciences is consistent with the hierarchy of disciplines found in public opinion (Gauchat and Andrews 2018). More surprising is the low percentage of engineering evidence admitted. One explanation for this may be that professional engineers are less likely than scientists, medical and health experts, and economists to hold terminal degrees, and that the link between disciplines and admissibility is confounded by experts’ credentials, which are important symbols of their credibility. If so, then judges’ decisions about engineering evidence should look more like their decisions about natural science and medical evidence after adjusting for experts’ credentials.

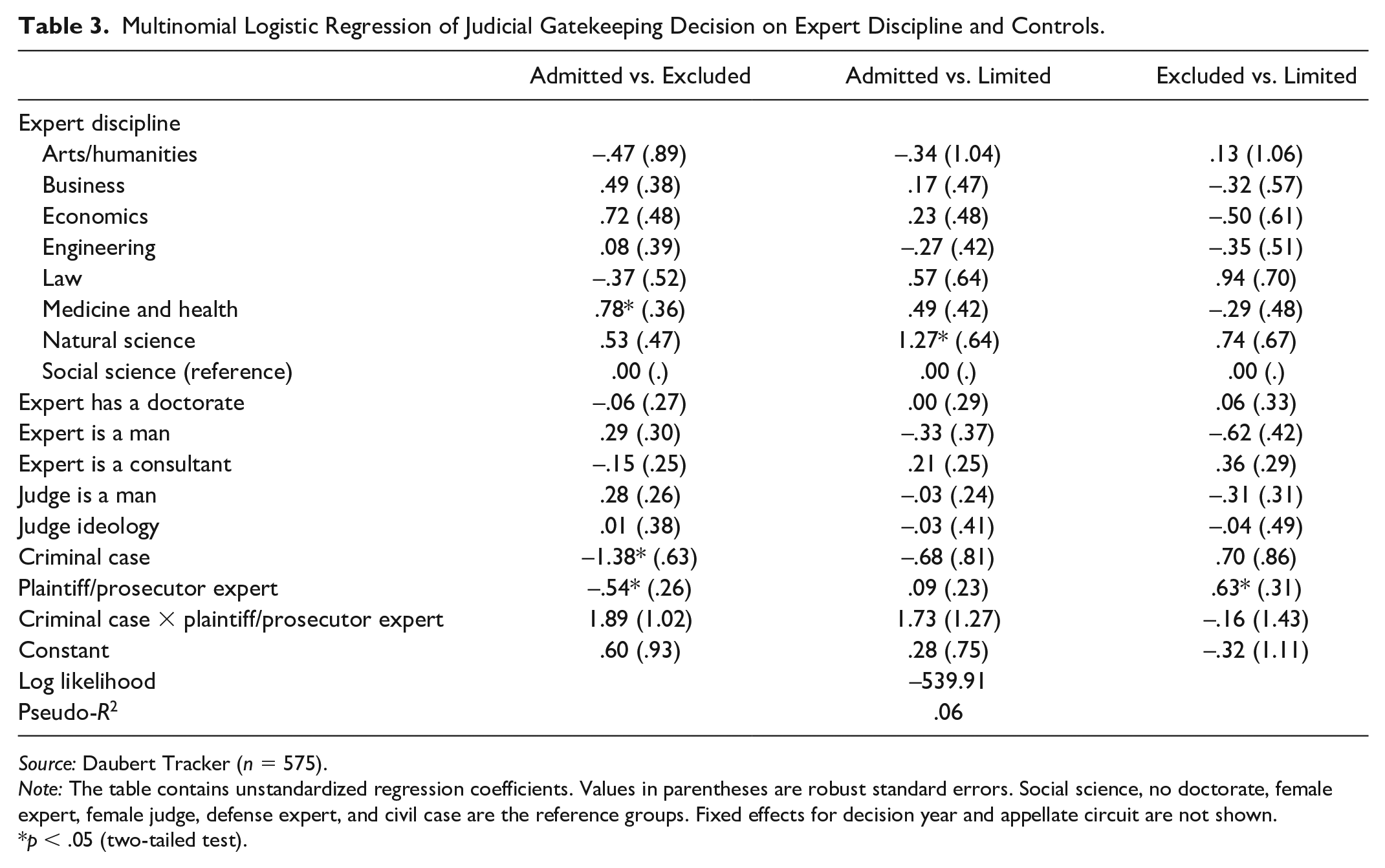

Multinomial Logistic Regression

Next, we examine these descriptive patterns in a regression context where we can control for other relevant factors and conduct significance tests. Table 3 contains estimates from a multinomial logistic regression of judges’ decisions on experts’ disciplines and controls for characteristics of experts, judges, and court cases. Like the descriptive findings, the regression results suggest that judges favor evidence from natural sciences and medical and health sciences compared with social sciences. All else equal, judges are more likely to admit rather than limit evidence from natural sciences compared with social sciences, the reference group (b = 1.27, p < .05). And judges are more likely to admit than exclude evidence from medical and health experts compared with social scientists (b = 0.78, p < .05).
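Multinomial logit coefficients are on the log-odds scale, so exponentiating them gives a quantity that is easier to read, often called a relative risk ratio. The minimal sketch below uses only the two coefficients reported above. Under the usual interpretation, the odds that a judge admits rather than limits evidence are roughly 3.6 times as high for natural scientists as for social scientists. Likewise, the odds of admission rather than exclusion are roughly 2.2 times as high for medical and health experts as for social scientists, holding the controls constant. The function name is ours, not the authors’.

```python
import math


def relative_risk_ratio(b: float) -> float:
    """exp(b): multiplicative change in the odds of one outcome versus another."""
    return math.exp(b)


# Coefficients reported in the text (Table 3); contrasts are relative to social science.
REPORTED = {
    "natural vs. social science, admit rather than limit": 1.27,
    "medical/health vs. social science, admit rather than exclude": 0.78,
}

for contrast, b in REPORTED.items():
    print(f"{contrast}: b = {b:.2f}, exp(b) = {relative_risk_ratio(b):.2f}")
```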

Table 3. Multinomial Logistic Regression of Judicial Gatekeeping Decision on Expert Discipline and Controls.

Source: Daubert Tracker (n = 575).

Note: The table contains unstandardized regression coefficients. Values in parentheses are robust standard errors. Social science, no doctorate, female expert, female judge, defense expert, and civil case are the reference groups. Fixed effects for decision year and appellate circuit are not shown.

p < .05 (two-tailed test).

Table 3 also shows that experts’ credentials and gender and judges’ ideology and gender do not have statistically significant associations with judges’ decisions, net of other differences. This is notable because each of these factors is associated with judicial behavior in other contexts, including decisions about expert evidence (Boyd et al. 2010; Harris 2008; O’Brien 2016). Parties’ resources are, however, associated with significant differences in judges’ decisions, all else equal. Because the regression coefficients in Table 3 are expressed in log odds, the interaction and its components are difficult to interpret directly. However, predicted probabilities based on the regression results indicate that criminal prosecutors are more successful than other parties in these cases. Specifically, judges are more than twice as likely to admit evidence from a criminal prosecutor (0.69) as from a criminal defendant (0.34). Judges are also more likely to admit evidence from prosecutors than from civil plaintiffs (0.53). It is beyond the scope of this article to investigate how litigant resources relate to judicial gatekeeping, but this initial finding suggests that parties’ success may differ substantially depending on their access to legal experience and resources.
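The “more than twice as likely” comparison is a ratio of predicted probabilities, which is easy to confuse with an odds ratio. A short sketch using the three predicted probabilities of admission reported above shows the difference. Treating “not admitted” (limited or excluded) as the complement of admission is our simplification for illustration.

```python
# Predicted probabilities of admission reported in the text.
P_ADMIT = {
    "criminal prosecutor": 0.69,
    "criminal defendant": 0.34,
    "civil plaintiff": 0.53,
}


def _odds(p: float) -> float:
    """Odds of admission versus nonadmission (limit or exclude)."""
    return p / (1 - p)


def probability_ratio(a: str, b: str) -> float:
    """How many times as likely admission is for party a as for party b."""
    return P_ADMIT[a] / P_ADMIT[b]


def odds_ratio(a: str, b: str) -> float:
    """Ratio of the odds of admission for party a to those for party b."""
    return _odds(P_ADMIT[a]) / _odds(P_ADMIT[b])


for other in ("criminal defendant", "civil plaintiff"):
    print(
        f"prosecutor vs. {other}: "
        f"probability ratio = {probability_ratio('criminal prosecutor', other):.2f}, "
        f"odds ratio = {odds_ratio('criminal prosecutor', other):.2f}"
    )
```

For prosecutors versus criminal defendants, the probability ratio is about 2.03, which supports the “more than twice as likely” wording. The corresponding odds ratio is about 4.3, so the same gap looks considerably larger on the odds scale.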

For a more concrete illustration of discipline differences in judicial gatekeeping, Figure 1 shows predicted probabilities of judges’ decisions for the average expert from each discipline. The predictions are based on the regression results in Table 3. Consistent with the descriptive analysis, the predicted probabilities show that judges are most likely to admit (0.67) and least likely to limit (0.10) evidence from natural scientists. Evidence from medical and health experts and from economists has the next best chances of being admitted (0.63 and 0.59, respectively). Also consistent with the descriptive analysis is judges’ relatively low probability of admitting (0.48) and relatively high probability of excluding (0.29) social science evidence.
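Predicted probabilities like those in Figure 1 come from the multinomial logit’s softmax form. Each outcome gets a linear predictor, with the base outcome’s predictor fixed at zero, and each probability is that outcome’s exponentiated predictor divided by the sum over all outcomes. The sketch below assumes admission as the base outcome. It evaluates a hypothetical “average expert” by holding a single control at its mean while switching the discipline dummy. The coefficients are invented placeholders, not the Table 3 estimates, and the text does not say exactly how the remaining covariates were averaged.

```python
import math

# Placeholder coefficients (NOT the Table 3 estimates).
# Each non-base outcome: (intercept, b_natural_science, b_control).
COEFS = {
    "limit": (-1.0, -1.3, 0.2),
    "exclude": (-0.5, -0.6, 0.1),
}
BASE = "admit"  # base outcome: linear predictor fixed at 0


def mnl_probabilities(natural_science: int, control: float,
                      coefs=COEFS, base=BASE) -> dict[str, float]:
    """Multinomial logit probabilities for one covariate profile."""
    eta = {base: 0.0}
    for outcome, (b0, b_nat, b_ctl) in coefs.items():
        eta[outcome] = b0 + b_nat * natural_science + b_ctl * control
    top = max(eta.values())  # subtract the max before exponentiating, for stability
    expd = {k: math.exp(v - top) for k, v in eta.items()}
    total = sum(expd.values())
    return {k: v / total for k, v in expd.items()}


control_mean = 0.5  # hypothetical sample mean of the control variable
for label, nat in (("social science", 0), ("natural science", 1)):
    probs = mnl_probabilities(nat, control_mean)
    print(label, {k: round(p, 2) for k, p in probs.items()})
```

An alternative convention averages each expert’s predicted probabilities over the whole sample rather than plugging in means. In a nonlinear model the two can differ somewhat.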

Figure 1. Predicted probabilities of judicial gatekeeping decisions for select disciplines.

Perhaps the most surprising element of Figure 1 is judges’ low probability of admitting evidence from engineers. Judges are more likely to limit engineering evidence than evidence from any other discipline in the figure (0.29), and they are more likely to exclude engineering evidence than evidence from any discipline except social sciences (0.25). Engineering subfields are too small to explore individually, but this suggests at minimum that judges’ rulings on engineering evidence are not commensurate with engineers’ relatively high scientific status among the public (Gauchat and Andrews 2018).

For a more direct look at discipline differences, Figure 2 shows the average marginal differences between judges’ decisions about evidence from each discipline and their decisions about social science evidence. The bars in the figure represent how much more or less likely a decision is for evidence from a given discipline than for social science evidence. Negative values mean a decision is less likely than it is for social science, and positive values mean a decision is more likely than it is for social science. The error bars are 95 percent confidence intervals. When an interval crosses zero, the difference from social science is not statistically significant at the .05 level.

Figure 2. Average marginal differences in predicted gatekeeping decisions between social science and select disciplines.

For example, the top left panel shows that judges are significantly more likely to admit evidence from natural scientists and from medical and health experts than evidence from social scientists. The differences are substantively large, amounting to advantages of 19 probability points for natural sciences and 15 points for medical and health sciences. The top left panel also shows that the probability of a decision to admit does not differ significantly between social science evidence and either engineering or economics evidence.
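These advantages can be checked against the Figure 1 predicted probabilities of admission reported earlier: 0.67 for natural science, 0.63 for medical and health science, and 0.48 for social science. In a nonlinear model, a difference evaluated at the average expert need not equal an average marginal difference. The agreement here is therefore a useful consistency check rather than an identity.

```python
# Predicted probabilities of admission for the average expert (Figure 1, as reported in the text).
P_ADMIT = {"natural science": 0.67, "medical and health": 0.63, "social science": 0.48}


def points_vs_social_science(discipline: str) -> int:
    """Difference from social science in probability points, rounded to a whole point."""
    return round(100 * (P_ADMIT[discipline] - P_ADMIT["social science"]))


for d in ("natural science", "medical and health"):
    print(d, points_vs_social_science(d))  # 19 and 15 points, matching Figure 2
```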

The top right panel shows that natural scientists’ advantage over social scientists extends to judges’ limiting decisions. Compared with social science, judges are 14 points less likely to limit natural science evidence (p < .05). And the figure’s lower panel shows that judges are 10 points less likely to exclude medical evidence than social science evidence, although the difference is not significant at the .05 level (p = .07). Although judges’ rulings on engineering or economic evidence do not differ significantly from their rulings on social science evidence, Figure 2 shows several advantages for natural sciences and medical and health sciences relative to social sciences.

In a final set of comparisons (not shown), we examined the average marginal differences in judges’ decisions about natural science evidence compared with medical and health evidence and with economic evidence. The results suggest that judges’ decisions about medical and health experts do not differ significantly from their decisions about natural science. However, judges are significantly more likely to limit economics evidence compared with natural science evidence (p < .05).
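Comparisons against a different reference group, such as natural science versus medical and health science, can be recovered from the contrasts with social science. Because both contrasts are averages over the same experts, the point estimate is simply the difference between the two average marginal differences. Its standard error, however, depends on the covariance between the two estimates, which the article does not report. The sketch below uses the 19- and 15-point admission advantages reported above. The variances and covariance are hypothetical placeholders that only show the mechanics; they are not estimates from the authors’ model.

```python
import math


def rebased_difference(amd_a_vs_ref: float, amd_b_vs_ref: float) -> float:
    """AMD of discipline a versus b, from each discipline's AMD versus a shared reference."""
    return amd_a_vs_ref - amd_b_vs_ref


def rebased_se(var_a: float, var_b: float, cov_ab: float) -> float:
    """Standard error of a difference: sqrt(Var(a) + Var(b) - 2 Cov(a, b))."""
    return math.sqrt(var_a + var_b - 2.0 * cov_ab)


diff = rebased_difference(0.19, 0.15)  # natural vs. medical, probability of admission
se = rebased_se(0.06**2, 0.06**2, 0.5 * 0.06**2)  # hypothetical (co)variances, illustration only
print(f"difference = {diff:.2f}, 95% CI = [{diff - 1.96 * se:.2f}, {diff + 1.96 * se:.2f}]")
```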

To summarize, judicial decisions about contested expert evidence mirror broader patterns in the cultural boundaries of science in several ways. Most notably, we found that judges’ rulings favor natural sciences compared with social sciences. Likewise, medical and health sciences are more likely than social sciences to withstand judicial scrutiny. Yet judges’ decisions also stand apart from other research on the cultural authority of science. In particular, engineering evidence was no more successful than social science evidence, which does not reflect the status disparity between these fields (Gauchat and Andrews 2018). And although the descriptive analysis found the anticipated distinction between economic and other social science evidence, economic evidence was not significantly more successful than other social science evidence in the direct comparisons (see Figure 2). Still, unlike economic evidence, other social science evidence is significantly less likely than natural science evidence to be admitted. So, although economic and other social science evidence are each more likely than natural science evidence to be limited, economics evidence nevertheless seems to have an edge over other social sciences in judges’ decisions to admit contested expert evidence.

Discussion

In this study we asked how people interpret complex, specialized information when faced with technical uncertainty, a common problem in organizations that rely on outside experts to perform knowledge-intensive work. Building on recent research on symbolic boundaries and status hierarchies, we argued that disciplines help audiences locate knowledge claims within the field of science, which allows audiences to assess their credibility. Our approach contrasts with research that assumes that credibility comes from essential qualities of knowledge, such as internal consistency, instrumental utility, or predictive ability. Instead, we use disputes about experts’ credibility as sites to observe how nonexperts make sense of competing forms of knowledge and, in doing so, reproduce the cultural map of science.

Our analysis of judicial decisions about contested expert evidence found a hierarchy of disciplines like the one found by studies of public perceptions of science (Gauchat and Andrews 2018; O’Brien 2013; Scheitle 2018). Our findings suggest that judges may use similar cues to interpret specialized knowledge even when constrained by rules of evidence. Much the way that publics prioritize natural sciences over social sciences, judges’ decisions about the admissibility of expert evidence favor natural sciences and medical and health sciences compared with social sciences.

Differences in the judicial success of these disciplines are consistent with a more general gap in their cultural authority. Elite discourse and public opinion each illustrate social sciences’ precarious position in the field of science (Gauchat and Andrews 2018; Gieryn 1999). Our results suggest that social sciences are also marginal to science as it is deployed in the judicial system. Case studies of jurisdictional disputes show how boundaries between natural and social science are constructed differently in different contexts. In courts, reliability may be one vehicle that translates disciplines into differential judicial success, because the legal framework for evaluating reliability is best suited to scientific practices such as quantification, hypothesis testing, and experimentation. For example, judges are instructed to consider whether experts’ methods have been tested, whether they have been peer reviewed, and whether they have a known error rate. Although many social scientists use methods amenable to these reliability tests, these techniques are ubiquitous in the physical and life sciences. In contrast, a long tradition of social research disavows reliability as a useful property of inquiry (Becker 1996). Disagreement among social scientists about the field’s relationship to science may add to the perception that social scientists are less rigorous, less reliable, and less scientific than other experts. The perception of social sciences as “soft” may also undermine social scientists’ ability to advise organizational leaders in other settings.

We found the anticipated judicial success for natural sciences and medical and health sciences compared with (noneconomics) social sciences, but the relationship of economics to other social sciences is less clear. The descriptive analysis found the expected advantage for economics over other social sciences, and in contrast to other social sciences, economics evidence was no less likely than natural science to be admitted in the regression model. Yet the direct comparisons between economics and other social sciences were not statistically significant (see Table 3 and Figure 2). This ambiguity is consistent with research showing that publics distinguish between sociology and economics on the basis of their political meaning rather than their scientific prestige (Gauchat and Andrews 2018; Scheitle 2018). The diversity of political interests represented in these cases may help explain why the distinction between economics and other social sciences is less stark than the one between natural and social sciences.

The large share of engineers excluded through judicial gatekeeping is perplexing. The prestige of engineering is evident in its inclusion as a STEM field and in public opinion about engineering, which resembles physics and medicine more so than sociology and economics (Gauchat and Andrews 2018). Yet judges were significantly and substantially less likely to admit and more likely to limit evidence from engineers than from natural scientists (comparisons not shown). The pattern does not appear to be driven by a subset of engineers. More data on engineers are needed to investigate this finding, but on the basis of these results, it seems that engineers’ expert opinions are no more likely than social scientists’ to survive judicial review.

We have suggested the judicial success of some disciplines relative to others reflects the legal meaning of reliability, which corresponds closely to the methods used in fields like physics, biology, and medicine. However, judges must also ensure that evidence is of “scientific” relevance to the case (Daubert v. Merrell Dow Pharmaceuticals, Inc. 1993:580), and social science evidence may seem less useful than natural science evidence because it seems less scientific. It may also be that experts from more prestigious fields are selected by more powerful parties and receive superior preparation. As the regression results showed, criminal prosecutors are more successful than other parties in these cases, as they are elsewhere in the legal system (Galanter 1974). More research on the role of litigant resources in judicial gatekeeping is needed to understand the reasons for prosecutors’ success. For now, however, this article shows that judges’ gatekeeping decisions are associated with experts’ disciplines independently of prosecutors’ advantage.

Whereas past studies have shown that judges’ gender and ideology and experts’ gender and credentials are important to judges’ rulings on expert witnesses, we found no evidence of a significant association between these variables and judges’ gatekeeping decisions. The discrepancy between our findings and previous studies may reflect the diversity of cases in our sample compared with the more limited samples used by others. Specifically, past studies have found that social and political identities are predictive of judicial behavior when those identities are salient, such as in civil rights cases, but not when they are not salient, such as in administrative law cases (Boyd et al. 2010; Kastellec 2013). The lack of average gender and political differences in our results may reflect the wide variety of political and social interests represented by the parties in the cases we study. A more targeted analysis may reveal clearer associations between particular social or political interests and judicial behavior.

Apart from its contributions to research on the cultural authority of science and judicial behavior, this study has practical implications for the use of expert evidence in courts. It suggests that expert witnesses from some disciplines are more likely than others to overcome challenges to their admissibility. Of course, there are limits on the kinds of experts that can plausibly testify on a given issue. But adjacent disciplines may share jurisdiction over certain topics and in those cases, the cultural authority of science may be worth considering. Although recruiting and preparing expert witnesses requires attention to many details, these results highlight scientific disciplines as one feature that may contribute to their credibility in court.

It is important to reiterate that this study is limited to experts whose admissibility is challenged. These data cannot determine what proportion of expert witnesses faces an admissibility challenge. The statistical conclusions generalize to the population of federal gatekeeping cases in the Daubert Tracker database. Daubert Tracker’s inclusion of unreported decisions makes it far more complete than the databases typically used to study expert admissibility. Yet it does not contain oral decisions made by judges from the bench and it offers no information about the number of decisions not included in the database. Despite these limitations, this investigation advances sociological and legal literatures by developing and testing a framework for the role of culture in the judicial gatekeeping of expert evidence. This article also provides clues to guide future research on the use of expert knowledge in organizational decisions.

Expert witnesses in courts, like expert advisers in other organizations, help decision makers process technical information and plan and justify their behavior. In this article we have argued that disciplines help audiences evaluate the credibility of expert knowledge claims because they signal claims’ location within the field of science. The association we found between scientific disciplines and judicial decisions about contested evidence in court suggests that disciplines may have similar effects in other organizations where decision makers must evaluate specialized knowledge claims without shared technical understanding.

Footnotes

Acknowledgements

We thank Myles Levin for access to Daubert Tracker data.

Authors’ Note

An earlier version of this article was presented at the 2021 American Sociological Association meetings.

Funding

This research is supported by the NSF (award 1946236) and an Advancing Research and Creativity Grant from the University of Wisconsin–Milwaukee (project ID AAJ1551).

1

Arts and humanities include arts, English, humanities, music, philosophy, religion, and performance. Business includes accounting, finance, business administration, business management, strategy, marketing, health care administration, human resources, human services administration, industrial administration, industrial hygiene, leadership, management, nursing administration, occupational safety and health management, organizational leadership, organizational and interpersonal communication, product design and development, production and operations management, real estate, and systems management. Economics includes agricultural economics, applied economics, food and resource economics, and health economics. Engineering includes aeronautical, aviation, biomedical, biomechanical, chemical, civil, computer, electrical, environmental, industrial, geological, marine, mechanical, material, nuclear, metallurgical, petroleum, structural, and transportation engineering. This category also includes experts with degrees in architecture, technology, industrial design, mechanics, operations, computer applications, computer science, and materials science. Law includes law and prelaw. Medical and health sciences include medicine, nursing, optometry, orthopedics, osteopathic medicine, pharmacology, pharmaceutical sciences, toxicology, physician assistant studies, podiatry, rehabilitation counseling, veterinary medicine, and vocational rehabilitation.
Natural sciences include anatomic pathology, animal sciences, biology, chemistry, polymer science, earth and planetary science, environmental health, exercise physiology, forensic DNA and serology, forensic sciences, genetics, geology, health science, horticulture, psychophysiology, hydrology, inorganic chemistry, life sciences, mathematics, metallurgy, microbiology, molecular biology, molecular biophysics, neuropsychology, oceanography, pathology, physics, physiology, cognitive neuroscience, and soil science. Social sciences include anthropology, clinical psychology, communications, criminal justice, media studies, education, criminology, psychology, ethnomusicology, geography, health policy, international relations, journalism, social policy, juvenile justice, law enforcement, linguistics, political economy, political science, psychotherapy, public administration, public health, rehabilitation psychology, social studies, social welfare, social work, and sociology.