Abstract

The author uses risk society theory to develop hypotheses about sources of confidence in science. The focus is on perceptions that science creates harms, scientific knowledge is uncertain, and scientists pursue self-interests. Controls are included for political and religious identity and ideology, science knowledge, and other variables. Data are from two cross-sectional waves of the General Social Survey. Analyses using additional cross-sectional and panel samples from the General Social Survey are used to evaluate the robustness of the findings and to test the direction of causality; these are presented in the Appendix. The results indicate that perceptions of harmful consequences, scientific uncertainty, and self-interested scientists are associated with lower confidence. The results are robust across a variety of topics and types of scientists. The findings suggest that some strategies intended to increase public confidence in science, such as greater transparency or political mobilization in defense of science, might have the opposite effect.

Scholars have examined public attitudes toward science for many years (see Bauer, Allum, and Miller 2007 for a review of trends in this literature). Most research attributes differences in attitudes to levels of scientific knowledge or to religious and political identity and ideology. New research on science attitudes during the pandemic rightly shares this focus (Cowan, Mark, and Reich 2021; Evans and Hargittai 2020; Scheitle and Corcoran 2021). Risk society theory (Beck 1992; Giddens 1990) also offers an important perspective on public confidence in science. Surprisingly, however, scholars have neglected it in previous research (for exceptions, see Gauchat 2011; Price and Peterson 2016). The purpose of this research is to use risk society theory to identify additional concepts that may help us better understand public confidence in science. I examine whether people’s beliefs about the balance of beneficial and harmful results, levels of uncertainty in scientific knowledge, and the pursuit of self-interests by scientists are associated with confidence in the scientific community.

Confidence in science is an important research topic for many reasons. As we have seen over the past several years, distrust of the scientific community appears to be associated with noncompliance toward public safety measures, the rise of conspiracy theories and alternative treatments, political polarization, and higher death rates. Beyond what we have witnessed, research confirms that confidence in science is associated with individual decision making that supports the public good, such as vaccination (Cowan et al. 2021; Gauchat and Andrews 2018; Jamison, Quinna, and Freimuth 2019; Yaqub et al. 2014). In addition, better understanding the sources of trust might help scientists better defend their research against those that discredit sound science to push narrow interests (Dunlap and McCright 2015; Motta 2018a). The climate crisis and, more recently, the pandemic have only highlighted the need for more research in this area.

Public Confidence in Science: Science Literacy, Religion, and Politics

Science Literacy

Much existing research on public attitudes toward science focuses on science literacy. Those who are more knowledgeable about science are expected to have more positive attitudes toward it (see Laugksch 1999 for a detailed overview of the science literacy concept and Bauer et al. 2007 and Yearley 2005 for reviews of the literature). Allum et al.’s (2008) meta-analysis indicates that there is a weak relationship between science knowledge and positive attitudes toward science as well as considerable variability in the relationship between knowledge and attitudes toward specific issues, such as genetically modified food or nuclear power. The mechanism linking science literacy and attitudes is not understood well, and many are critical of this “deficit model” because, among other things, it values scientific knowledge over other types of knowledge (Bauer et al. 2007; Gauchat 2011). Some critics also contend that people develop science attitudes to demonstrate their commitment to an identity group and that science knowledge provides them with more tools for explaining away facts that are not in line with their identity commitments (Kahan et al. 2012).

Religious and Political Identity and Ideology

Scholars have also focused on religious and political identity and ideology to explain public attitudes toward science (Rutjens et al. 2018). Some argue that differences in confidence in science by religiosity and political ideology are rooted in competition and conflict for cultural capital (Sherkat 2017). Others, however, are critical of the conflict model, especially as it pertains to religion and science (Ecklund and Scheitle 2018; Evans and Evans 2008; O’Brien and Noy 2015). Research suggests that a conflict narrative oversimplifies the relationship between science and religion. O’Brien and Noy (2015), for example, demonstrated that about one fifth of Americans blend religious and scientific beliefs in a “post-secular worldview” (p. 95). Although a large majority of people have more favorable views of religion or science, postsecularists use a mix of religious and scientific ideas to make sense of the world, especially on certain topics, such as the big bang, evolution, and stem cells (O’Brien and Noy 2015).

Gauchat (2012) demonstrated there has been a decline in confidence in science among political conservatives and those who more frequently attend religious services. The relationship between political ideology and science attitudes, however, is complex. More recent research indicates that a growing political gap is as much from increases in confidence among moderate and liberal Democrats as it is from decreases in confidence among conservative Republicans (Lee 2021). Also, Sherkat (2017) demonstrated that the relationships between political and religious identification and ideology with trust in science are contingent on the larger context; that is, the effects can change over time as political and religious ideologies are redefined. Attitudes toward science are also complex because they depend on the topic in question, such as the climate crisis, vaccine hesitancy, or nuclear power (Campbell and Kay 2014; Lewandowsky, Gignac, and Oberauer 2013; Motta 2018a; Rutjens et al. 2018; Scheitle and Corcoran 2021).

Risk Society Theory and Confidence in Science

Science literacy, politics, and religiosity are certainly important for understanding public attitudes toward science. Risk society theory, however, also holds promise for better understanding science attitudes. With a few exceptions (Gauchat 2011; Price and Peterson 2016), those examining public attitudes toward science have largely overlooked this theory. In this section, I identify and describe concepts central to risk society theory and use them to develop the hypotheses that guide the analysis.

Trust in Expert Systems

Life in late modernity is characterized by a “sense of foreboding” that is based on being exposed to many dangers that are caused by humans, global in scope, and beyond our control (Giddens 1990:131). Although there is disagreement about whether people are safer now or in the past, most agree that the public is highly focused on risk (Ropeik and Gray 2002; Slovic 1987). According to Giddens (1990), we make it through the day despite feelings of dread because of routine and trust in experts and expert systems.

Expert systems are “systems of technical accomplishment or professional expertise that organize large areas of the material and social environments in which we live today” (Giddens 1990:27). Examples include water treatment and distribution systems, systems that evaluate food safety or disease control, and road transportation systems. Giddens (1990) argued that we rely on these systems even though we may have little understanding of how they work and that these systems influence us continuously, even though our contact with specific people in them (i.e., experts) is limited and intermittent.

According to Giddens (1990), trust in experts and expert systems is due partly to the fact that experts limit access to what they do. Limited access and having experts act in a “business-as-usual” way (Giddens 1990:85) help maintain public trust in expert systems. Trust is eroded when the public becomes aware of ignorance among experts. This happens when they observe harmful consequences, such as an accident, or instability in scientific knowledge, such as a change in what is believed to be true (Giddens 1990). Wynne’s (1995) research provides a classic example of the consequences of this “lifting of the veil”: farmers lost faith in scientists after observing them trying to measure radioactive elements on sheep following the Chernobyl disaster.

Harmful Consequences of Science

Risk society theory focuses on ecological risks arising from nuclear, chemical, and biological sciences. A central tenet of risk society theory is that science is often blamed for many of the threats that we face today (Arnoldi 2009; Lupton 2013). According to Beck (1992), “the sciences . . . are targeted not only as a source of solutions to problems, but also as a cause of problems” (p. 156). There are several aspects to the argument that science is a source of risk. First, when science is applied to solve problems, it opens itself up to criticism and blame if it fails. In the premodern world, people might attribute a flood that leads to death and destruction to fate or to the will of a vengeful god. A flood that leads to death and destruction today, by contrast, is the result of multiple failures of experts and expert systems, for example, in failing to accurately predict rainfall totals or a faulty flood control system (see Arnoldi 2009; Smith and Schwartz 2019).

Second, science creates new problems, which are consequences of new technology. Some argue that this has become more prevalent after World War II, when “the dominant mode of science was oriented toward providing knowledge that generated innovative technologies that increased industrial capitalist production” (McCright and Dunlap 2010:104). Harmful consequences can appear as dramatic accidents, such as the Chernobyl disaster, or unfold more slowly, such as the realization that the herbicide Roundup is a likely cause of cancer. It is also important to note that harmful consequences need not be unintended. Some people may have less confidence in science because of harmful consequences that are not unintended, such as segregation that limits access to jobs in science fields or the exploitation of disadvantaged groups in medical research (Ecklund and Scheitle 2018; Noy and O’Brien 2018).

Hypothesis 1: Harmful consequences: Those who believe that science creates more harms than benefits have less confidence in the scientific community.

Uncertainty: The Instability of Knowledge

Uncertainty also leads to the realization of ignorance and shattering of trust in experts (Giddens 1990). Douglas and Wildavsky (1982) argued that science increases uncertainty in several ways. First, its tools allow us to envision things that we cannot detect with our senses alone. Improvements in measurement increase uncertainty because the new information may change what we know, but also because it may raise additional questions (Merton 1987). Second, they argued that scientists create uncertainty because of disagreements, such as over methodology or the interpretation of data: “Scientists disagree on whether there are problems, what solutions to propose, and if intervention will make things better or worse. . . . No wonder the ordinary lay person has difficulty in following the argument” (Douglas and Wildavsky 1982:63). Beck (1992) attributed uncertainty to the application of science onto itself (referred to as “reflexive scientization”) as well as to increasing specialization among scientists and the rise of inconclusive and conditional results. According to Giddens (1990:39), the revision of knowledge has become “radicalised” in the period of late modernity. People are now forced to confront risk on a daily basis, for example, in deciding whether it is safe to consume grilled meat or wear sunscreen (Egan 2019; Smyth 2019). The basic problem is that the answer to these questions seems to be constantly changing.

Additional scholarship on climate change skepticism also highlights the importance of perceptions of scientific uncertainty (Brulle 2014, 2021; Jacques, Dunlap, and Freeman 2008). It argues, however, that a climate change countermovement promotes perceptions of scientific uncertainty in order to further its own narrow interests, a process that McCright and Dunlap (2010) labeled “antireflexivity.” Dunlap and McCright (2015), for example, demonstrated that special-interest groups seek to undermine the authority of climate scientists by establishing think tanks, supporting contrarian scientists, and funding conferences to create the appearance of disagreements within science: “By creating the appearance of controversy within the public realm, denialists are able to appeal to values such as freedom of speech, fairness to both sides, and respecting minority viewpoints to add legitimacy to their claims” (p. 309).

Hypothesis 2: Uncertainty: Those who perceive greater uncertainty in science have less confidence in the scientific community.

The Pursuit of Self-Interest

Within Beck’s (1992) version of risk society theory, science plays a prominent role in its loss of authority (see also Douglas and Wildavsky 1982). Experts initially apply scientific skepticism to the external world but eventually apply it to science itself as they evaluate the risks research has created. Internal conflicts within science over these issues eventually become external conflicts between science and society. At the same time, with greater specialization within fields of research, knowledge becomes “hypercomplex,” and uncertainty increases (Beck 1992:157). Ultimately, skepticism and complexity erode scientists’ authority in making truth statements. Other actors, then, step in to fill the void (often in the guise of science), leading to the rise of special interests in statements of truth. Through this process of “demonopolization of scientific knowledge” (Beck 1992:156), those in business, politics, and the public become active coproducers of knowledge, and we see the rise of “alternative expertise” and “public-oriented scientific experts” (Beck 1992:161).

Many contributors to the more general literature on public attitudes toward the scientific community (i.e., beyond risk society theorists) have also addressed issues of bias, self-interest, and politicization in science. Rutjens et al. (2018), for example, discussed racial, gender, and political bias in science fields, data fraud, and conflicts of interest in research funding and their relationships with attitudes toward science. They argued that perceptions of bias lead to the acceptance of conspiracy theories (e.g., pertaining to vaccines and climate change; see also Lewandowsky et al. 2013). Douglas and Wildavsky (1982) argued that scientists are increasingly becoming politically active given the seriousness of the issues that we face today. According to Jacques et al. (2008), however, “Sceptics allege that environmental science has become corrupted by political agendas that lead it to unintentionally or maliciously fabricate or grossly exaggerate these global problems” (p. 353). The rise of “production science” (McCright and Dunlap 2010), politicization of government science, and growing reliance of academic scientists on industry might also fuel perceptions that scientists are pursuing self-interests rather than the common good (Marshall and Picou 2008). Supporting this view, Funk and Kennedy (2016) demonstrated that a larger percentage of the public believes that climate scientists’ career aspirations, political biases, and links to industry influence their research findings than concern for what is best for the public.

Hypothesis 3: Self-interest: Those who believe that scientists pursue self-interests have less confidence in the scientific community.

Methodology

Data for the primary analyses are from subsamples within the 2006 and 2010 cross-sectional waves of the General Social Survey (GSS; Smith et al. 2019). Although a question measuring confidence in the scientific community is available in nearly every cross-sectional wave of the GSS since 1973, the questions needed to measure the risk society variables are available only for subsets of cases from 2006 and 2010 (unfortunately, they are not part of the repeating core). Among 6,554 respondents, 1,154 were asked the confidence question as well as the set of science questions in 2006 and 2010 (928 in 2006 and 226 in 2010). The maximum sample size for the main analysis is therefore 1,154. I used multiple imputation to deal with all missing data among the 1,154 cases with possible data (additional details are available in the Appendix).

To evaluate the robustness of findings, I also analyze data from three other GSS samples. Two of these are additional cross-sectional subsamples within the 2006 data (n = 936) and 2010 data (n = 221). The third additional sample is a three-wave panel sample in which the same respondents were surveyed in 2006, 2008, and 2010 (they were asked the science questions in both 2006 and 2010). These data make it possible to test the direction of causation. I describe the data from these three other samples and their results in the Appendix.

Basic information on question wording for the main independent variables as well as confidence in the scientific community is provided in Table 1. Additional measurement details can be found in the Appendix, including measurement models testing for the independence of these concepts (i.e., whether a single- or multifactor model is a better fit to the data). The results suggest that these are in fact distinct attitudes. Control variables include political party identification, political ideology, religious identity, religious attendance, biblical literalism, applies religious values (“I try hard to carry my religious beliefs over into all my other dealings in life”), science knowledge, propensity to guess on science knowledge questions (i.e., the number of “don’t know” responses), education, sex, having children, and social class. Religious identity is measured by Lehman and Sherkat’s (2018) religious identity measure. Religious attendance is an ordinal variable with nine categories, but it is treated as a ratio variable in the analyses. Education is measured in years.

Table 1. Summary of Measurement.

Although confidence in the scientific community is measured at the ordinal level, I use multinomial logistic regression for the analyses. Analyses from preliminary ordinal logistic regression models indicated that the proportional odds assumption is not valid (see the Appendix for more information). Analyses use a supplied design weight, WTSSNR (see GSS Appendix A on sampling design and weighting for more information).
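As a minimal illustration of how a multinomial logistic model with a base outcome turns linear predictors into outcome probabilities, consider the Python sketch below. The function name and the numeric linear predictors are hypothetical and chosen only for illustration; they are not estimates from the analysis, and the sketch omits weighting and multiple imputation.

```python
import math

BASE_OUTCOME = "only some confidence"  # base outcome used in the analysis


def multinomial_probs(log_odds_vs_base):
    """Convert log odds (each versus the base outcome) into probabilities.

    `log_odds_vs_base` maps every non-base outcome to its linear predictor.
    The base outcome implicitly has a linear predictor of 0.
    """
    exps = {outcome: math.exp(value) for outcome, value in log_odds_vs_base.items()}
    denominator = 1.0 + sum(exps.values())  # the 1.0 is exp(0) for the base outcome
    probs = {outcome: e / denominator for outcome, e in exps.items()}
    probs[BASE_OUTCOME] = 1.0 / denominator
    return probs


# Hypothetical linear predictors for one respondent (illustration only).
example = multinomial_probs({
    "hardly any confidence": -2.0,
    "a great deal of confidence": 0.2,
})
for outcome, p in example.items():
    print(f"{outcome}: {p:.3f}")
```

Because every outcome is compared with the same base category, each predictor receives a separate coefficient for each non-base outcome. This is what allows a predictor's association to differ across outcomes when the proportional odds assumption does not hold.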

Results

The first hypothesis predicts that those who believe that science creates more harms than benefits have less confidence in the scientific community. Results in Table 2 support this hypothesis. The log odds of answering “hardly any confidence” (compared with answering “only some confidence”) are 0.982 higher for those who believe that science creates more harms (relative to those answering more benefits; odds ratio = $e^{0.982}$ = 2.670). Also, the log odds of answering “a great deal of confidence” (compared with answering “only some confidence”) are 0.526 lower for those who believe that science creates about as many harms as benefits (relative to those answering more benefits; odds ratio = 0.591). In other words, people who perceive negative, harmful consequences from science are less likely to have a great deal of confidence in the scientific community.
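The conversion from a log odds coefficient to an odds ratio is simple exponentiation. The short Python sketch below reproduces the two odds ratios reported above; the helper name and dictionary labels are illustrative assumptions rather than part of the original analysis.

```python
import math


def odds_ratio(log_odds_coefficient):
    """Exponentiate a log odds coefficient to obtain an odds ratio."""
    return math.exp(log_odds_coefficient)


# Coefficients as reported in the text (each versus "only some confidence").
reported = {
    "more harms -> hardly any confidence": 0.982,
    "about equal -> a great deal of confidence": -0.526,
}
for label, coefficient in reported.items():
    print(f"{label}: odds ratio = {odds_ratio(coefficient):.3f}")
```

A positive coefficient yields an odds ratio above 1 (higher odds relative to the base outcome), and a negative coefficient yields an odds ratio below 1.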

Table 2. Confidence in the Scientific Community Using Pooled Cross-Sectional Data from 2006 and 2010.

Note: The base outcome for the multinomial logistic regression is “only some confidence.”

p < .05.

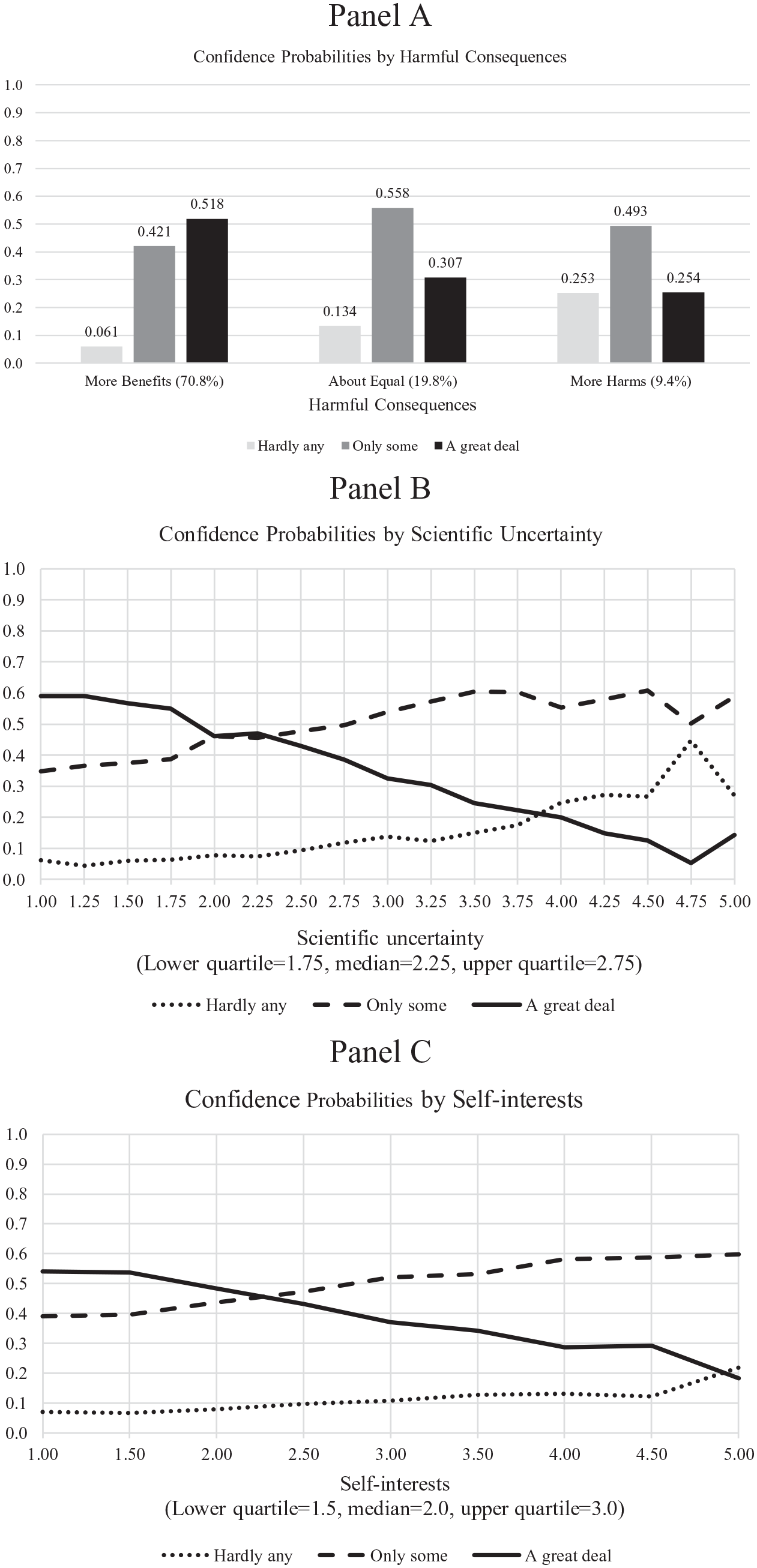

Figure 1A shows the predicted probabilities of answering “hardly any confidence,” “only some confidence,” and “a great deal of confidence” by response on the harmful consequences variable. The overwhelming majority of people believe that science creates more benefits (70.8 percent). For them, the most probable response is “a great deal of confidence”; the probabilities of answering “a great deal of confidence,” “only some confidence,” and “hardly any confidence” are 0.518, 0.421, and 0.061, respectively. This pattern changes for those who believe that science brings about equal amounts of benefits and harmful results (19.8 percent of respondents). For them, the most probable response is “only some confidence”; the probabilities of answering “a great deal of confidence,” “only some confidence,” and “hardly any confidence” are 0.307, 0.558, and 0.134, respectively. Only 9.4 percent of respondents believe that science creates more harmful results. The most likely response for them is “only some confidence” (the probability is 0.493), while the probabilities of answering “hardly any confidence” and “a great deal of confidence” are much lower (0.253 and 0.254). The probability of answering “a great deal of confidence,” then, is lowest for those who perceive the greatest harmful consequences.

Figure 1. Confidence in the scientific community using pooled cross-sectional data from 2006 and 2010: predicted probabilities.

The second hypothesis predicts that those who perceive greater uncertainty in science have less confidence in the scientific community. Results support the second hypothesis. With each one-unit increase in perceptions of scientific uncertainty, the log odds of answering “a great deal of confidence” decrease by 0.322 (odds ratio = 0.725). I present predicted probabilities in Figure 1B. The scientific uncertainty variable ranges from 1 to 5 and has a mean of 2.2. The lower, middle, and upper quartiles are 1.75, 2.25, and 2.75, and most cases (91.2 percent) have scores of 3.25 or less. For those with uncertainty scores less than 2, the most probable response is “a great deal of confidence.” Responses of “a great deal of confidence” and “only some confidence” are just about equally likely for those scoring between 2 and 2.25 on scientific uncertainty. “Only some confidence” becomes the most probable response for those with uncertainty scores greater than about 2.25. For the middle 50 percent of respondents on uncertainty (i.e., those scoring from 1.75 to 2.75), the probability of answering “a great deal of confidence” ranges from 0.549 to 0.385. In sum, those who perceive greater scientific uncertainty tend to be less confident in the scientific community.

The third and final hypothesis predicts that those who believe scientists pursue self-interests have less confidence in the scientific community. Results support the third hypothesis. Each one-unit increase in perceived self-interest decreases the log odds of answering “a great deal of confidence” by 0.277 (odds ratio = 0.758). Self-interest ranges from 1 to 5 and has a mean of 2.2. The lower, middle, and upper quartiles are 1.5, 2.0, and 3.0, respectively. Overall, the pattern for self-interest is very similar to the patterns for harmful consequences and uncertainty. For those with lower scores on self-interest (e.g., 2.0 and below), the most probable response is “a great deal of confidence.” For those with higher scores (e.g., 2.5 and above), the most probable response is “only some confidence.” For the middle 50 percent of respondents on self-interest (i.e., those scoring from 1.5 to 3.0), the probability of answering “a great deal of confidence” ranges from 0.556 to 0.380. In sum, those who perceive the greatest self-interest tend to be less confident in the scientific community.
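Because the uncertainty and self-interest scales enter the model linearly in the log odds, the effect of a multi-unit change compounds multiplicatively on the odds scale. The Python sketch below applies this to the interquartile ranges reported above. It assumes the reported coefficients are contrasts with the base outcome (“only some confidence”) and that all other variables are held constant; the function name is a hypothetical helper.

```python
import math


def odds_multiplier(coefficient, change):
    """Multiplicative change in the odds for a `change`-unit shift in a predictor."""
    return math.exp(coefficient * change)


# Reported coefficients for answering "a great deal of confidence".
UNCERTAINTY_COEF = -0.322
SELF_INTEREST_COEF = -0.277

# Move from the lower to the upper quartile on each scale.
uncertainty_iqr = odds_multiplier(UNCERTAINTY_COEF, 2.75 - 1.75)
self_interest_iqr = odds_multiplier(SELF_INTEREST_COEF, 3.0 - 1.5)

print(f"uncertainty, lower to upper quartile: {uncertainty_iqr:.3f}")
print(f"self-interest, lower to upper quartile: {self_interest_iqr:.3f}")
```

Under these assumptions, moving across the interquartile range of self-interest (1.5 units) reduces the odds of answering “a great deal of confidence” to roughly two-thirds of their starting value, a somewhat larger shift than the one-unit interquartile move on uncertainty. These are changes in odds, not in the predicted probabilities shown in Figure 1, which also depend on the values of the other variables.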

In addition to the risk society variables, several controls are associated with confidence in science in the main analysis: political party identity, religiosity, education, sex, and social class. Results suggest that those who self-identify as members of the upper class and Republicans are more likely to have a great deal of confidence in science compared with those in the working class and political independents, respectively (a separate model, not shown, indicates that Republicans do not differ from Democrats). Women and those who apply religious values in their life are less likely to have a great deal of confidence. Finally, those with more years of education are less likely to have hardly any confidence in science. Unlike the results for the risk society variables, the results for these control variables are not robust across samples. Only the sex (in the genetically modified organisms sample) and applies religious values (in the nuclear power sample) variables are significant in other samples.

Discussion and Conclusion

Results from this analysis indicate that perceptions of harmful consequences, uncertainty, and self-interest are associated with lower confidence in the scientific community in the United States. Additional models presented in the Appendix demonstrate that these findings are robust across a variety of science topics (e.g., genetically modified food and nuclear power in addition to global warming and stem cells) and types of scientists (nuclear in addition to environmental and medical scientists). Results from the panel analyses, also available in the Appendix, indicate that confidence in science is influenced by earlier perceptions of harmful consequences and scientific uncertainty. Confidence in science (measured earlier in time), however, does not influence any of the risk-society attitudes.

Risk society theory has largely been overlooked in previous research on the public understanding of science. Although some have examined single concepts, such as trust, no existing studies have sought to simultaneously incorporate and test multiple concepts from this theory. This analysis suggests that risk society theory may hold promise for providing a larger frame within which to situate future research. Perceptions of harmful consequences, uncertainty, and self-interest seem to be critical concepts for understanding confidence in science, and future research should explore connections between these various attitudes as well as their connections with science knowledge and political and religious identity.

Researchers focused on the public understanding of science have been critical of the deficit model for many years. It is evident that better educating the public will not be sufficient to change public opinion if the outreach fails to address concerns of harm, bias, self-interest, and the like. This analysis suggests that several strategies may be critical for improving risk communication, especially under conditions of high uncertainty and time constraint, such as the current pandemic. These may include emphasizing that disagreements between scientists are normal and push knowledge forward, that research takes time and requires sufficient resources, and that safeguards are in place to protect human subjects and human populations more generally. Just reporting these assurances, however, will likely not be enough. Creativity in presentation with an emphasis on repetition and accessibility will be important. Drawing insights from risk society theory into risk communication, such as the social amplification of risk framework (see Kasperson et al. 2010), could only improve best practices in risk communication.

Several comments are in order. First, we have clearly entered a new era with respect to the public understanding of science. Data for this analysis are from the period 2006 to 2010. Since then, the worsening climate crisis and pandemic have further thrust scientists into political and social conflicts. Although the model should be retested with more recent data, this analysis provides a useful baseline with which to compare results from more recent data. It seems likely that the basic patterns identified in the period 2006 to 2010 have only intensified.

Second, although many concepts central to risk society theory are associated with confidence in science, more recent scholarship suggests some important revisions to risk society theory. Risk society theory blames science itself for decreases in trust. It focuses on the double-edged nature of science (i.e., in creating both benefits and harmful results), the creation of uncertainty through scientific research, and the pursuit of self-interest by scientists. More recently, scholarship on climate change skepticism indicates that special-interest groups actively seek to increase perceptions of uncertainty and self-interests to further their own goals (e.g., to delay action on climate change that would be bad for business). Although core concepts, such as harmful consequences, uncertainty, and self-interests, are closely related to confidence in science, more research should be done to examine additional sources of these perceptions, especially those that are external to science. Exploratory models using the data from 2006 through 2010, in fact, indicate that political party identity and political ideology influence perceptions of harmful consequences, uncertainty, and self-interest (not shown). Recent research also suggests that political and religious identity are related to both perceptions of uncertainty (i.e., scientists’ understanding of the pandemic) and of sharing values with scientists (Evans and Hargittai 2020).

Third, it is important to remember that the public has a relatively high level of confidence in the scientific community; for example, in the pooled data from 2006 and 2010, 44.0 percent of respondents have a great deal of confidence, 49.5 percent have only some confidence, and 6.6 percent have hardly any confidence. Of the 13 institutions included in the confidence question set (the scientific community, medicine, major companies, banks and financial institutions, organized religion, education, organized labor, the press, television, the executive branch, Congress, the Supreme Court, and the military), the military is the only institution with greater confidence (50 percent of respondents have a great deal of confidence in the military). For most institutions, the percentage of respondents having a great deal of confidence falls between 10 percent and 25 percent. Since 2010, the level of confidence in science has remained stable. More recent data from the 2018 GSS, for example, suggest that 45.2 percent of respondents have a great deal of confidence in the scientific community, while only 6.6 percent have hardly any confidence. The relatively high levels of confidence should be somewhat reassuring for those who are worried about the status of science.

Fourth, the results do not suggest any easy solutions for those hoping to improve the public standing of science. Perceptions of uncertainty, self-interested scientists, and harmful consequences seem a near certainty given existing trends. A few examples include increasing corporate ties to science, reliance on a model of applied science that prioritizes short-term economic growth, financial incentives to push new technology to market, the complex nature of the problems being addressed today, the politicization of science, and organized efforts to create confusion in the public (Dunlap and McCright 2015; Marshall and Picou 2008). The incentives for the pursuit of self-interest are not going away, and it seems unlikely that science will become less politicized as issues such as climate change and vaccinations take center stage within national politics around the globe. If anything, science appears to be becoming more rather than less politicized (see Frickel 2018; MacKendrick 2017; Ruane 2018). How science becomes political and how it is portrayed as doing so may affect public perceptions of self-interest among scientists, which could work against improving the status of science.

Calls for greater transparency in research are warranted given what is at stake, for example, by the establishment of rigorous guidelines in data sharing and for pursuing greater reproducibility of research (Freese and Peterson 2017). Greater transparency may backfire, however, if it is done in a way that increases public perceptions of uncertainty. Osman, Heath, and Löfstedt (2018) concluded that “it is not enough to advocate transparency without a clear idea of why and how it should be made transparent and what the public understanding of such information is” (p. 136).

Efforts to improve public confidence in the scientific community should probably focus on science education (including better explaining the process of science and explaining the distinction between science for production and impact science), better communication with the media (Slovic 2000), strengthening local, national, and international organizations that play important roles in oversight (e.g., institutional review boards, disciplinary organizations), and working to incorporate larger communities into the research process. Many of these initiatives are consistent with the push for postnormal science, with its emphasis on values in addition to facts, recognition of uncertainty, enhanced public participation, and application of the precautionary principle (Funtowicz and Ravetz 1993; Marshall and Picou 2008). Although science may have an imperfect voice, it continues to be our best hope as long as independence, oversight, and public engagement exist.

Supplemental Material

Supplemental material, sj-docx-1-srd-10.1177_23780231221093162 for Confidence in Science: Perceptions of Harmful Consequences, Scientific Uncertainty, and the Pursuit of Self-Interest in Scientific Research by Robert M. Kunovich in Socius

