Abstract

Hand gestures are a potent aid in foreign language learning. The present experiment sought to enhance their utility using transcranial direct current stimulation (tDCS). Specifically, we investigated whether stimulation over the left inferior frontal gyrus (IFG), an area implicated in multimodal integration, increases gesture’s beneficial role in foreign vocabulary learning. Replicating previous research, we found that participants remembered words learned with iconic gestures better than words learned with speech alone. However, when tDCS was applied over the scalp region above the left IFG, this benefit of gesture was absent. Despite the recent enthusiasm for neuromodulation techniques, the present results provide one case in which neural stimulation may disrupt, rather than help, learning.

Keywords

Learning a new language as an adult poses challenges on many fronts, from mastering novel phonemes to learning new morphology to grasping a different syntax. Before taking on these challenges, however, the first step for a typical adult learner in the classroom is to begin acquiring a new set of vocabulary items. Previous research has shown that hand gestures can greatly facilitate these initial stages of word learning (for a review, see Macedonia & von Kriegstein, 2012), and the neural mechanisms of this process are increasingly well understood. The present study draws on these neuroscience findings to explore whether electrical stimulation over the left inferior frontal gyrus (IFG), an area implicated in multimodal integration, enhances the role that gesture plays in the earliest stages of vocabulary learning in a foreign language (FL).

Facilitating Vocabulary Learning

For an adult student of an FL, learning the basics of conversation (saying hello, ordering a beer, asking where the “biblioteca” is) is often the most urgent priority. Indeed, introductory oral language courses and textbooks, not to mention the growing number of online language programs, make basic conversational vocabulary the first goal of the learning endeavor. This initial stage of vocabulary learning poses a dual cognitive challenge: Learners must remember not only numerous new words but also the arbitrary mappings between these new sound combinations and their meanings. For example, an adult learner of Japanese not only has to remember new words, such as “taberu” and “nomu,” but also has to remember that these words refer to the actions of eating and drinking, respectively. To make matters worse, these new words are likely to suffer strong interference from the thoroughly mastered English words “eat” and “drink.”

One way to help learners make these arbitrary mappings is to associate new vocabulary items with nonarbitrary information. For example, teaching strategies, textbooks, and computer programs liberally use all sorts of imagery to represent the meaning of new words visually, and there is clear evidence that such imagery aids learning (Jones, 2013; McNeil, Alibali, & Evans, 2000; Yeh & Wang, 2013). In face-to-face teaching, one of the most common ways to add visual richness to new vocabulary is for a teacher to enact the meaning physically. One type of enactment uses physical props, for example, using chopsticks to link taberu to the act of eating. Another very common type of enactment, gesture, occurs in the absence of props, for example, gesturing the use of chopsticks to link taberu to the act of eating. This is an example of an “iconic” gesture (McNeill, 1992), which is the focus of the present study. Iconic gestures may be particularly helpful for foreign vocabulary learning because they depict meaning visually in a direct and nonarbitrary fashion. Not only are hand gestures very convenient, because they require no physical props, but they are also particularly good at helping learners attach new symbols to old meanings (Macedonia & von Kriegstein, 2012).

In the past 15 years, interest has grown in the role that hand gestures play in the classroom (Goldin-Meadow, 2005; Nathan & Alibali, 2011; Roth, 2001). It is now well established across a number of educational domains that hand gestures are not only a pervasive part of the classroom but also actually facilitate teaching and learning in that context. With regard to the FL classroom, experts on second-language learning have shown renewed interest in gesture’s important role in the learning process (Gullberg, 2006; Hardison, 2010; McCafferty & Stam, 2009). 1

These claims about gesture in the FL classroom are supported by empirical studies in the laboratory. For example, controlled experiments have shown that instruction with hand gestures improves recall of novel foreign vocabulary words and expressions in novice adult and child learners (Kelly, McDevitt, & Esch, 2009; Macedonia & von Kriegstein, 2012; Quinn-Allen, 1995; Tellier, 2008). This enhancement goes beyond mere attention capture, because meaningless hand waving does not produce similar learning effects. Moreover, the beneficial effects of gesture are observed only when the gesture is semantically congruent with the spoken information, as in the chopsticks example (Kelly et al., 2009; Macedonia & von Kriegstein, 2012).

In sum, gesture serves as a natural bridge in FL learning, adding a layer of meaning to arbitrary foreign words and connecting them to known meanings, which is beneficial in the daunting task of learning new vocabulary. Building on these behavioral findings, the goal of the present study is to draw upon recent research in neuroscience to enhance gesture’s positive effects on the earliest stages of foreign vocabulary learning.

Neural Mechanisms for Gesture Processing

In the years following the discovery of mirror neurons in monkeys, which are involved in both the production and comprehension of manual actions and oral communication (Rizzolatti & Arbib, 1998; Rizzolatti & Craighero, 2004), researchers theorized that humans might also have shared neural mechanisms for action and language (Bates & Dick, 2002; Bernardis & Gentilucci, 2006; Kelly et al., 2002; Nishitani, Schürmann, Amunts, & Hari, 2005). Following this lead, a stream of empirical research examined how gesture and speech are integrated in the brain during language comprehension. Early studies using event-related potentials (ERPs; Holle & Gunter, 2007; Kelly, Kravitz, & Hopkins, 2004; Özyürek, Willems, Kita, & Hagoort, 2007; Wu & Coulson, 2007) and later research using functional magnetic resonance imaging (fMRI; Green et al., 2009; Holle, Gunter, Rüschemeyer, Hennenlotter, & Iacoboni, 2008; Straube, Green, Weis, & Kircher, 2012; Willems, Özyürek, & Hagoort, 2007) have shown that gestures are integrated with speech during semantic stages of processing and that the integration sites are located in traditional language areas, for example, the left IFG and superior temporal sulcus (STS). The left IFG, in particular, has received much attention as a potential “general unification site” that integrates gesture and speech during conceptual stages of processing (for a review, see Özyürek, 2014).

In addition to indirect neuroimaging evidence identifying the IFG as a site of gesture–speech integration, a handful of studies have used more direct techniques, such as transcranial magnetic stimulation (TMS) and transcranial direct current stimulation (tDCS). Broadly speaking, these techniques actively modulate the electrical activity of brain regions from outside the skull, which lets researchers test more directly whether a particular brain region is causally involved in a particular cognitive process or behavior. For example, Gentilucci and colleagues (2006) found that temporarily inhibiting the left IFG with repetitive TMS disrupted people’s ability to translate hand gestures into words, further suggesting that the left IFG is a site where speech and hand gestures are linked during language comprehension.

Building on these neuroimaging and neuromodulatory studies of native language processing, researchers have begun to explore the regions and mechanisms through which gestures facilitate FL learning. For example, Kelly and colleagues (2009) found not only that observing congruent iconic gestures helps people learn foreign vocabulary words better than no gesture, but also, using ERPs, that words learned with gesture produced deeper imagistic memory traces than words learned without gesture (as measured by the Late Positive Complex [LPC]). In addition, researchers have used fMRI to demonstrate that the left IFG is implicated in strengthening these memory traces (Macedonia, Müller, & Friederici, 2011; Macedonia & von Kriegstein, 2012; Mayer, Yildiz, Macedonia, & von Kriegstein, 2015). Thus, it appears that the IFG is involved not only in integrating gesture and speech in native language comprehension but also in FL learning.

The Present Study

Given that gesture facilitates foreign vocabulary learning, and that the left IFG is implicated in this facilitation, the present study investigates whether neuromodulation of the left IFG can enhance gesture’s positive effects beyond their usual benefits. We chose tDCS as the neuromodulation technique to explore this question. tDCS can reliably increase or decrease the excitability of particular cortical regions by delivering a weak direct current that produces minimal discomfort (Gandiga, Hummel, & Cohen, 2006). Anodal tDCS is thought to increase cortical excitability by depolarizing neurons and bringing them closer to threshold, which increases the likelihood of spontaneous firing and ultimately facilitates task performance (Brunoni et al., 2012; Holland et al., 2011). In particular, anodal tDCS has been shown to facilitate motor memory formation and learning (Nitsche et al., 2003; Reis et al., 2008) as well as linguistic processing (Flöel, Rosser, Michka, Knecht, & Breitenstein, 2008; Monti et al., 2013).

We sought to investigate gesture’s benefits in the early stages of foreign vocabulary learning in adults while targeting the left IFG area, a region proposed to be part of the neural network responsible for processing and integrating gesture and speech. Based on the research reviewed above, we made three predictions. First, we predicted that we would replicate previous research: FL instruction with iconic gesture would produce better learning outcomes than instruction without gesture. Second, we predicted that, compared to a sham control (in which no stimulation is given), tDCS over the left IFG area would enhance overall performance on vocabulary tests. Third, if the left IFG is indeed involved in integrating gesture and speech, we predicted that the advantage of instruction with gesture over instruction without gesture would be greater in the stimulation condition than in the sham condition.

Method

Participants

Twenty-four native English-speaking students were recruited from introductory classes at a liberal arts school in the northeastern United States (ages 18–23; mean = 19.71, SD = 1.73; 17 right-handed). Participants filled out safety screening questionnaires to determine their eligibility to undergo tDCS and completed language and musical background questionnaires. Those reporting eight or more years of second-language or musical experience were excluded, because bilingualism and extensive musical experience have been shown to alter brain structure and plasticity (Kraus & Chandrasekaran, 2010; Mechelli et al., 2004). Signed consent was obtained, and students received research credit or compensation.

Materials and Instructor

Video training

Participants viewed the training videos on a 30-in. Mac desktop computer in QuickTime Player at full screen, full brightness, and full volume, seated approximately 3 ft from the screen.

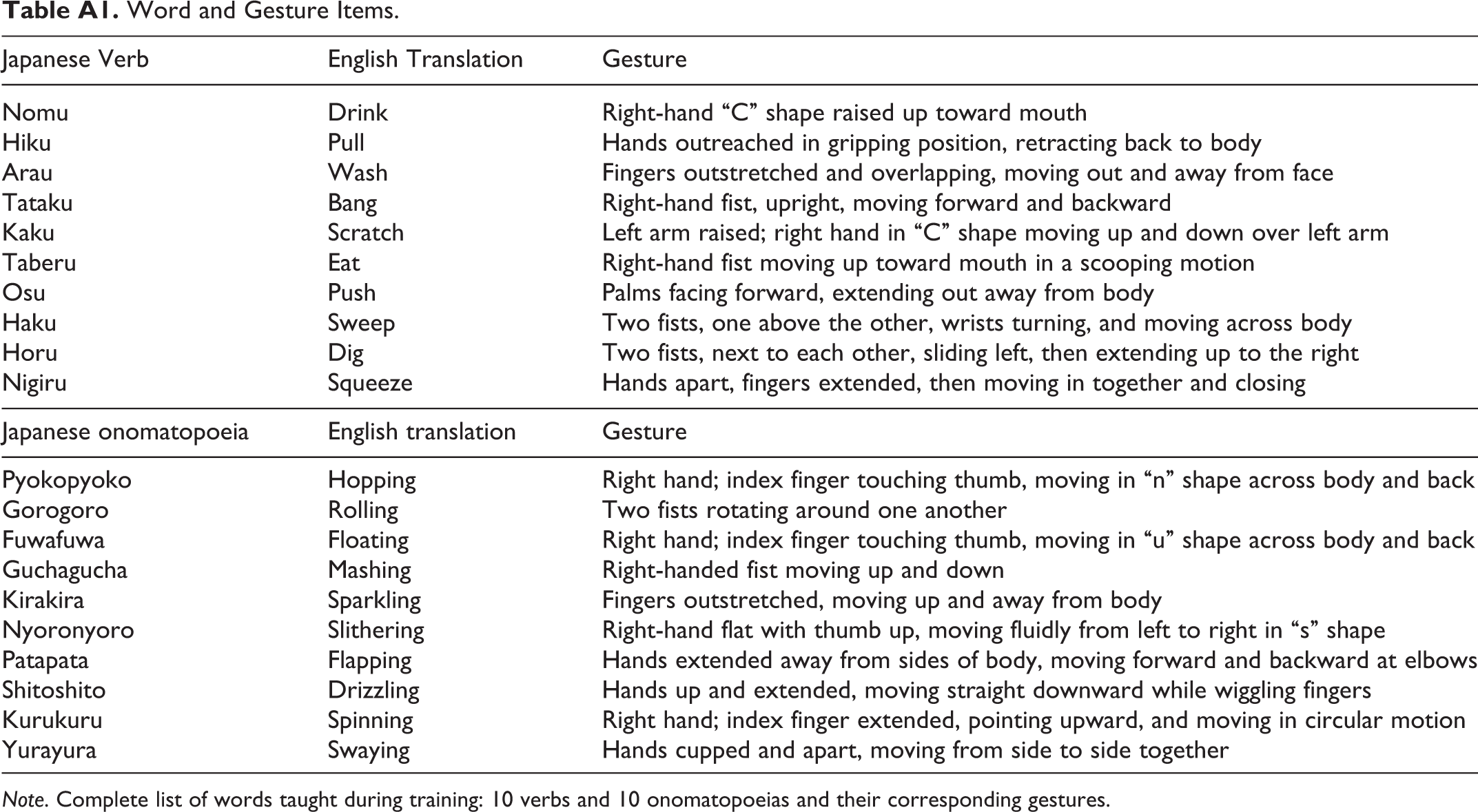

The instructor was a Japanese department intern, a 23-year-old female native speaker of Japanese who had been living in the United States for less than 1 year. She had studied English for 10 years in school and spoke it fairly fluently, with an accent. There were eight versions of the training videos, four with verb instruction and four with onomatopoeia instruction, each comprising 10 Japanese words (for all words and gestures, see Appendix A). We opted to include onomatopoeias because they phonetically resemble the sounds they describe, making them less arbitrary than other word types. Verbs were adapted from Kelly, McDevitt, and Esch (2009), and onomatopoeias were bisyllabic and commonly used in everyday Japanese. The gestures accompanying the verbs were those employed by Kelly et al. (2009), and new gestures were chosen to accompany the onomatopoeias. Prior to data collection, a group of American and Japanese native speakers viewed the onomatopoeia gestures to ensure that the meaning of each gesture was unambiguous.

The instructor read at a slow, deliberate pace and briefly introduced herself before each session began to allow participants to acclimate to her speech: “Hello, my name is _____. I am the Japanese intern and I am so excited to teach you some Japanese words today. Nice to meet you. Let’s start.” She would then say, “The first word is ‘Japanese word.’ ‘Japanese word’ means ‘English translation.’ ‘Japanese word’ means ‘English translation’” (for stimulus examples, see Figure 1; for a script example, see Appendix B). Each session consisted of 30 trials (10 words, each presented 3 times), and the Japanese word was spoken 3 times within each trial.

Stimulus examples. Left panel: Gesture + Onomatopoeia. The instructor said, “Gorogoro means rolling,” with “rolling” accompanied by two fists rotating around one another. Right panel: Gesture + Verb. The instructor said, “Nomu means drink,” with “drink” accompanied by a right-handed gesture in a “C” shape raised to the mouth.

In each of a participant’s two training sessions, half of the words (five) were taught with gesture and the other half without gesture (see Appendix B). For words accompanied by a gesture, the instructor performed the gesture only with the English translation, so that participants could hear the Japanese word without distraction. For words without gesture, the instructor kept her hands at her sides. Word order and gesture presence were randomized, and training videos were counterbalanced across participants.
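The trial structure of a training video can be sketched in code. The following Python sketch is a hypothetical illustration, not the script used to produce the videos: the function name `build_training_schedule` and the placeholder word labels are ours, and we assume that the three repetitions are three freshly shuffled passes through all 10 words, which the Method does not specify.

```python
import random


def build_training_schedule(words, n_gesture=5, n_passes=3, seed=None):
    """Return a list of (word, with_gesture) trials for one training video.

    Hypothetical sketch: each pass presents every word once in a freshly
    shuffled order, so 10 words x 3 passes gives 30 trials.
    """
    rng = random.Random(seed)
    # Randomly pick which words are taught with gesture; a word keeps the
    # same gesture status on every repetition.
    gesture_words = set(rng.sample(words, n_gesture))
    trials = []
    for _ in range(n_passes):
        order = list(words)
        rng.shuffle(order)
        trials.extend((word, word in gesture_words) for word in order)
    return trials


# Placeholder labels stand in for the Japanese items listed in Appendix A.
words = [f"word{i:02d}" for i in range(1, 11)]
schedule = build_training_schedule(words, seed=1)
```

Keeping gesture status fixed per word mirrors the description above, in which each word is either a gesture word or a no-gesture word within a session.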

Vocabulary tests

The prerecorded auditory vocabulary tests consisted of the 10 words taught in each session. Participants were informed that the test was not self-paced, that each word would be repeated 3 times, and that their task was to write down the English translation of each Japanese word. They were discouraged from going back and changing previous answers and were instructed to write a question mark (?) if they did not know an answer rather than leave any question blank. The instructor on the recording said the item number and then repeated the Japanese word 3 times (e.g., “One: ‘Nomu,’ ‘Nomu,’ ‘Nomu’”). There was 1 s of silence between the item number and the first iteration of the Japanese word, and 5 s of silence between successive iterations. Participants could write their response at any point during an item.

tDCS device

The Chattanooga Ionto Device for tDCS is a small box (about the size of a cell phone) that delivers a constant current; the anode and cathode wires connect to electrodes that are covered by potassium chloride (KCl)-soaked sponge pads. tDCS can reliably increase or decrease excitability in cortical regions by delivering a weak direct current—producing minimal discomfort—that affects neuronal firing in the region under the electrode (Gandiga et al., 2006). Anodal tDCS is thought to increase cortical excitability by depolarizing neurons and bringing them closer to threshold, facilitating task performance (Brunoni et al., 2012; Holland et al., 2011).

Post-training surveys

After each session, two surveys were administered: a post-tDCS side effect checklist and a posttraining survey. The survey items included questions about what was most helpful in the instruction, which words were most difficult, and how distracting the tDCS was. At the end of the second session, participants were also asked whether they could correctly identify which session had been sham and which had been tDCS.

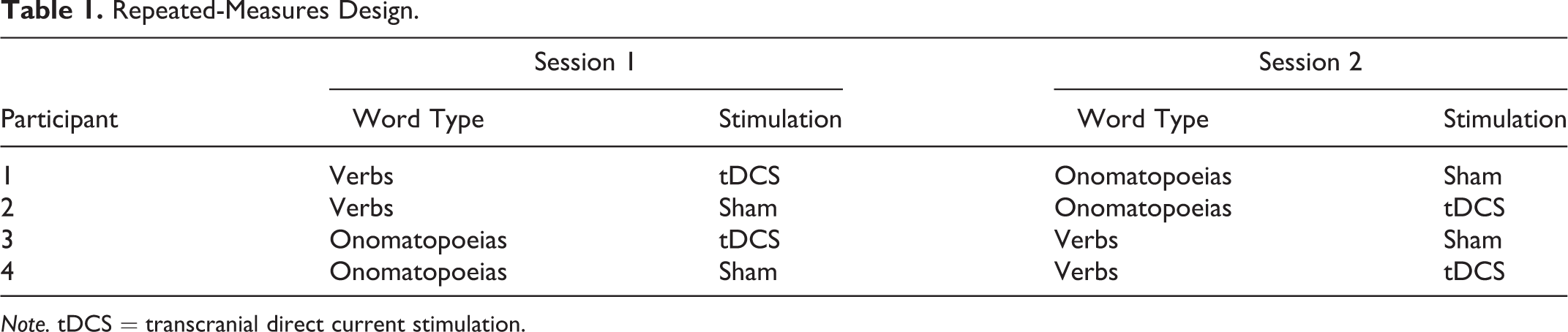

Training Procedure

The study consisted of two separate 30-min sessions conducted 3 days apart. Participants were randomly assigned to receive either sham or tDCS first, and either onomatopoeias or verbs first, with word order and gesture assignment varied across participants through different script versions. In the first session, participants were taught one word type (either verbs or onomatopoeias) under one stimulation condition (either sham or tDCS); in the second session, they were taught the other word type under the other stimulation condition (see Table 1). 2 Participants filled out safety screening questionnaires upon arrival at the lab and then began the experimental procedure.
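The two-session structure amounts to four possible session orders (2 stimulation orders × 2 word-type orders). The Python sketch below enumerates them. The rotation in the hypothetical `assign` function is one way to keep the four order groups equal in size (6 participants each for 24 participants); it is an illustration, not the random assignment procedure reported above.

```python
from itertools import product

STIMULATION = ("sham", "tDCS")
WORD_TYPES = ("verbs", "onomatopoeias")


def other(options, chosen):
    """Return the level of a two-level factor that was not chosen."""
    return options[1 - options.index(chosen)]


def session_orders():
    """Enumerate the four counterbalanced (session 1, session 2) orders.

    Session 2 always uses the stimulation condition and word type that
    session 1 did not use, so every participant experiences both of each.
    """
    return [
        ((stim, words), (other(STIMULATION, stim), other(WORD_TYPES, words)))
        for stim, words in product(STIMULATION, WORD_TYPES)
    ]


def assign(participant_ids):
    """Hypothetical balanced rotation: the k-th participant gets order k mod 4."""
    orders = session_orders()
    return {pid: orders[k % len(orders)] for k, pid in enumerate(participant_ids)}


groups = assign(range(1, 25))  # 24 participants, 6 per session order
```

Because each participant contributes one session per stimulation condition, stimulation is a within-subjects factor, while word type is paired with stimulation differently across the order groups.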

Repeated-Measures Design.

Note. tDCS = transcranial direct current stimulation.

Electrode pads were prepared according to the manual for the Chattanooga Ionto Device for tDCS (Version 1.0). To locate the vertex, we measured the halfway points between the nasion and inion and between the two tragi, and used this point to center a 128-electrode Geodesic electroencephalogram (EEG) practice net.

The stimulation region was centered over F7 of the international 10–20 system (De Vries et al., 2010; Marangolo et al., 2011; Wakita, 2013). After the stimulation region was marked, the EEG net, used solely for localization, was removed. Sponge-covered electrodes were placed on dry, nonirritated portions of the scalp, and a large band fastened around the head held them in place. The stimulation electrode (35 cm²) was placed over the marked stimulation region, and the reference electrode over the contralateral supraorbital region. Because tDCS can be painful at higher dosages, a conservative dosage was chosen. The device dosage was set to 12 (1 mA × 12 min = 12 mA·min; current density = 1 mA/35 cm² ≈ .029 mA/cm²), as research has shown these parameters to be effective: Nitsche et al. (2003) found that a current of 1 mA for 15 min was associated with improved implicit motor learning in primary motor, premotor, and prefrontal cortices, and Fregni et al. (2005) demonstrated that stimulation (1 mA for 10 min) over the left dorsolateral prefrontal cortex increased performance on a working memory task.
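As a check on the dosage arithmetic, the following minimal Python sketch recomputes the dose and current density from the reported parameters (1 mA, 12 min, 35 cm² electrode). The total charge and charge density are derived quantities we add for illustration; they are not reported above, and the sketch ignores the ramp-up and ramp-down periods.

```python
def tdcs_dose(current_ma, duration_min, electrode_area_cm2):
    """Summarize a constant-current tDCS dose (ramp periods ignored)."""
    charge_c = (current_ma / 1000.0) * (duration_min * 60.0)  # amperes x seconds
    return {
        "dose_mA_min": current_ma * duration_min,               # matches the reported setting of 12
        "density_mA_per_cm2": current_ma / electrode_area_cm2,  # 1 mA / 35 cm^2
        "charge_C": charge_c,
        "charge_density_C_per_cm2": charge_c / electrode_area_cm2,
    }


dose = tdcs_dose(current_ma=1.0, duration_min=12, electrode_area_cm2=35)
print(round(dose["density_mA_per_cm2"], 3))  # 0.029
```

Under these assumptions the session delivers 0.72 C in total, spread over the 35 cm² stimulation electrode.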

Participants receiving sham stimulation underwent identical tDCS preparation and heard the same instructions as in the active tDCS condition, except that they received no dosage: The device went through the same initial 30-s ramp-up period to produce the same initial tDCS sensation but was then discreetly powered off. 3 It was important that participants underwent identical setup and treatment regardless of the actual dosage received, in order to control for any expectations about stimulation. 4

Following the 30-s ramp-up, the training video was played. Participants were instructed neither to mouth the words nor to perform any gestures themselves while watching the training video. After the training video ended, the vocabulary test was administered; for participants receiving tDCS, stimulation continued during testing. When training and testing were complete, the device ramped down for those receiving tDCS, and the electrodes were removed. After each session’s training and testing, participants completed a post-tDCS side effect checklist and a posttest survey.

Scoring criteria

Correct vocabulary test answers received a score of 1 and incorrect answers a score of 0. Onomatopoeias were taught in the present progressive form (e.g., swaying), whereas verbs were taught in their base form (e.g., eat). Therefore, answers with the correct word in an incorrect form, as well as semantically similar words, were marked correct (e.g., “pulling” was accepted for “pull,” and “swinging” for “swaying”), so as not to discount successful learning or recall that was obscured by spelling mistakes or variation in word form. In addition, we recorded the total number of unanswered (not attempted) questions as an additional measure of how well participants learned (or did not learn) the words.
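The scoring rule can be expressed as a small lookup. In this hypothetical Python sketch, each item has a set of accepted answers (the correct word in any form plus semantically similar words judged acceptable by a human scorer), and we assume that a question mark or an empty response counts as unanswered. The answer sets shown are illustrative, not the study’s scoring key, and the lenient judgments about spelling and word form still have to be made by whoever builds those sets.

```python
UNANSWERED = {"", "?"}  # assumption: "?" and blanks count as unanswered


def score_response(response, accepted):
    """Score one answer: returns (points, unanswered_flag).

    `accepted` is a hypothetical set of lowercase answers a human scorer
    judged acceptable for this item (word-form variants, near synonyms).
    """
    cleaned = response.strip().lower()
    if cleaned in UNANSWERED:
        return 0, True
    return (1 if cleaned in accepted else 0), False


def score_test(responses, answer_key):
    """Return (number correct, number unanswered) for one test."""
    correct = unanswered = 0
    for response, accepted in zip(responses, answer_key):
        points, blank = score_response(response, accepted)
        correct += points
        unanswered += int(blank)
    return correct, unanswered


# Illustrative three-item key built from the examples in the text.
key = [{"pull", "pulling"}, {"swaying", "sway", "swinging"}, {"drink", "drinking"}]
result = score_test(["Pulling", "?", "eat"], key)  # -> (1, 1)
```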

Design

The study employed a repeated-measures design with two within-subjects independent variables: type of instruction (gesture vs. no-gesture) and stimulation (tDCS vs. sham). The dependent measures were two types of vocabulary responses: correct responses and unanswered questions. To account for potentially nonnormal distributions, the percentages were arcsine transformed before being entered into the analysis of variance (ANOVA). Multiple pairwise t-tests were adjusted with the Bonferroni correction. Word order and stimulation order were not treated as factors because they were counterbalanced across participants. Moreover, for the reasons stated in Note 2, word type (verb vs. onomatopoeia) was not treated as a factor either. However, although our design precluded a meaningful analysis of word type as a factor in the overall ANOVA, we conducted paired-samples t-tests to compare verb and onomatopoeia scores overall as well as gesture and no-gesture scores within each combination of stimulation condition and word type (sham verb, sham onomatopoeia, tDCS verb, tDCS onomatopoeia). Finally, there were two types of survey responses, meta-judgments about ease of learning and post-tDCS side effect ratings. These were compared across stimulation conditions using two-tailed paired t-tests.
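The Method does not state which arcsine transform was used; a common choice for proportions is the arcsine square-root transform, y = arcsin(√p). The Python sketch below assumes that transform and applies it to the six proportions a participant can obtain in a cell of five words (0/5 to 5/5), together with a standard Bonferroni adjustment of a list of p values.

```python
import math


def arcsine_sqrt(p):
    """Arcsine square-root transform of a proportion p in [0, 1] (radians)."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be a proportion between 0 and 1")
    return math.asin(math.sqrt(p))


def bonferroni(p_values):
    """Bonferroni adjustment: multiply each p by the number of tests, cap at 1."""
    m = len(p_values)
    return [min(1.0, p * m) for p in p_values]


# Five words per cell, so a participant's proportion correct is k / 5.
for k in range(6):
    print(f"{k}/5 -> {arcsine_sqrt(k / 5):.3f}")
```

The transform stretches proportions near 0 and 1, where the variance of a binomial proportion shrinks, which is why it is often applied before an ANOVA on percentage scores.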

Results

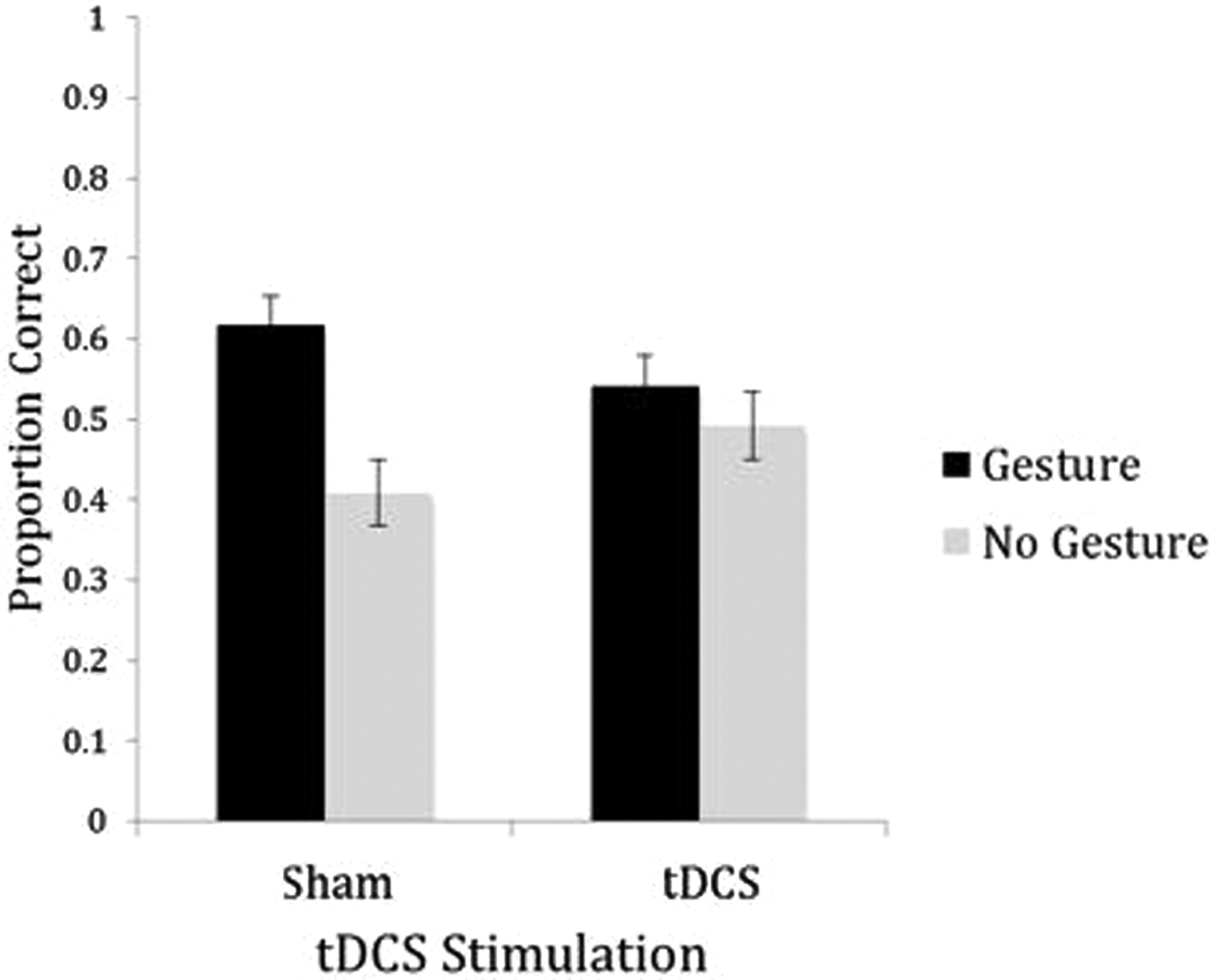

For the correctly recalled vocabulary items, there was no main effect of stimulation, F(1, 23) = .16, ns, but there was a main effect of gesture instruction, F(1, 23) = 7.83, p = .01, and a significant interaction between stimulation and gesture, F(1, 23) = 5.32, p = .03.

Transcranial direct current stimulation (tDCS) and gesture effects on vocabulary test scores. Students performed significantly better on words presented with gesture than on no-gesture words, F(1, 23) = 7.83, p = .01, and there was an interaction between stimulation and gesture presence, with students performing better on gesture words in the sham condition, F(1, 23) = 5.32, p = .03. The interaction was driven by the sham condition, in which participants scored higher on gesture words than on no-gesture words, t(23) = 3.78, p < .001; gesture and no-gesture words did not differ significantly in the tDCS condition, t(23) = .26, ns.

Moreover, comparing across stimulation conditions, there was no difference between sham and stimulation for gesture items, t(23) = 1.52, ns, nor for no-gesture items, t(23) = 1.11, ns. Thus, stimulation did not make performance on gesture or no-gesture items reliably better or worse than in the sham condition; it simply eliminated the difference between them.

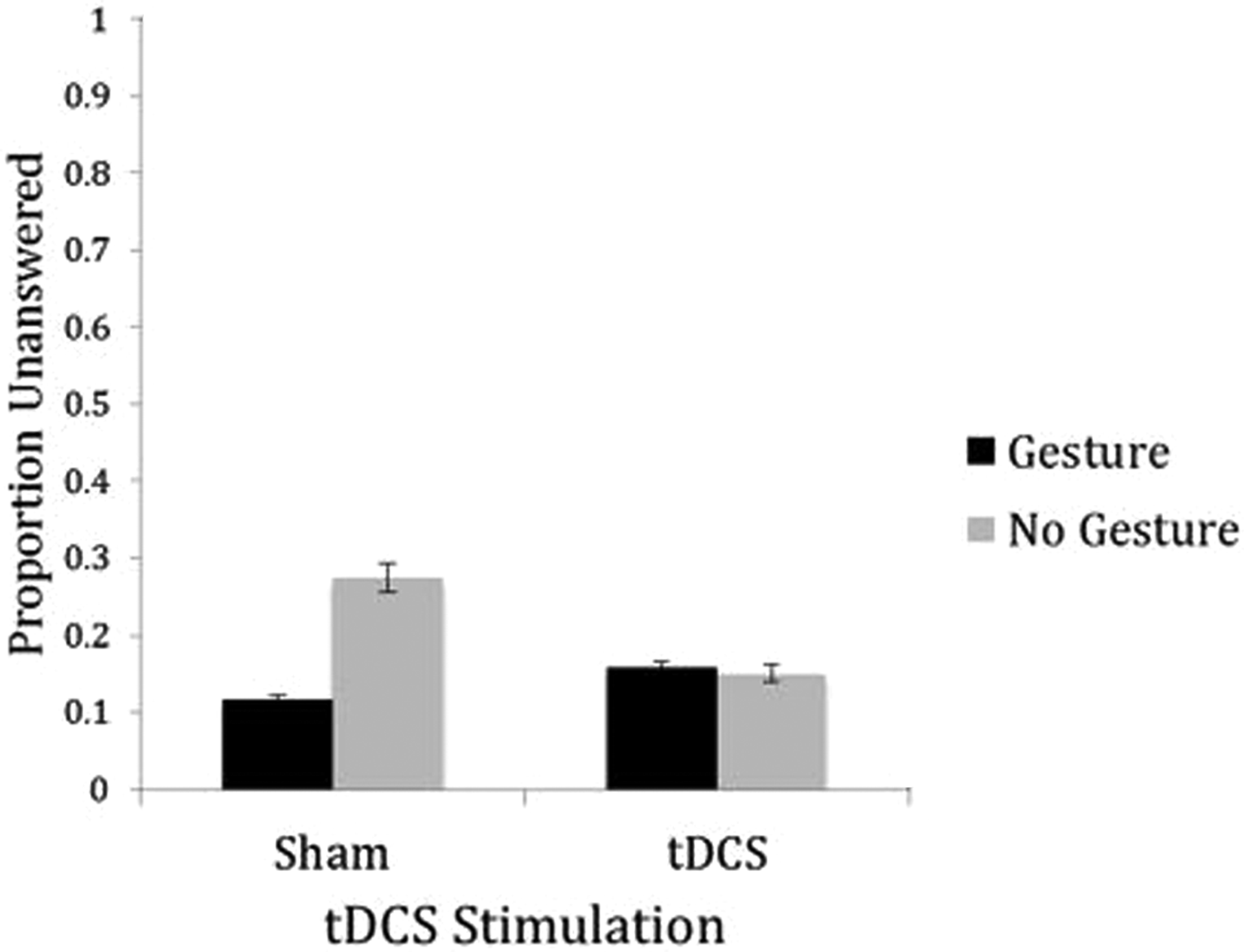

We additionally examined how many questions participants left unattempted on their answer sheets. Although there was no main effect of stimulation, F(1, 23) = 1.43, ns, participants left more no-gesture words than gesture words unanswered overall, F(1, 23) = 7.16, p = .014, and there was a significant interaction between stimulation and gesture, F(1, 23) = 4.55, p = .044.

Transcranial direct current stimulation (tDCS) and gesture effects on unanswered questions. There was a significant difference between gesture and no-gesture words, with more no-gesture words left unanswered, F(1, 23) = 7.16, p = .014, along with a significant interaction between stimulation and gesture, F(1, 23) = 4.55, p = .044. Paired-samples t-tests revealed that the numbers of unanswered gesture and no-gesture items differed significantly within the sham condition, t(23) = −3.16, p = .004, with more no-gesture words left unanswered. Unanswered gesture and no-gesture words did not differ significantly in the tDCS condition, t(23) = .09, ns.

For our word type analysis collapsing across stimulation conditions, paired samples t-tests revealed that when onomatopoeia and verb scores overall were compared, there were no significant differences between total number correct, gesture number correct, or no-gesture number correct. When looking at paired samples t-tests on gesture and no-gesture training within each stimulation and word type condition (sham verb: gesture vs. no-gesture; sham onomatopoeia: gesture vs. no-gesture; tDCS verb: gesture vs. no-gesture; tDCS onomatopoeia: gesture vs. no-gesture), all but the tDCS onomatopoeia condition, t(11) = 1.00, ns, yielded significantly better learning for gesture compared to no gesture instruction (sham verb: t(11) = 2.28, p = .022; sham onomatopoeia: t(11) = 4.11, p = .002; tDCS verb: t(11) = 3.22, p = .008).

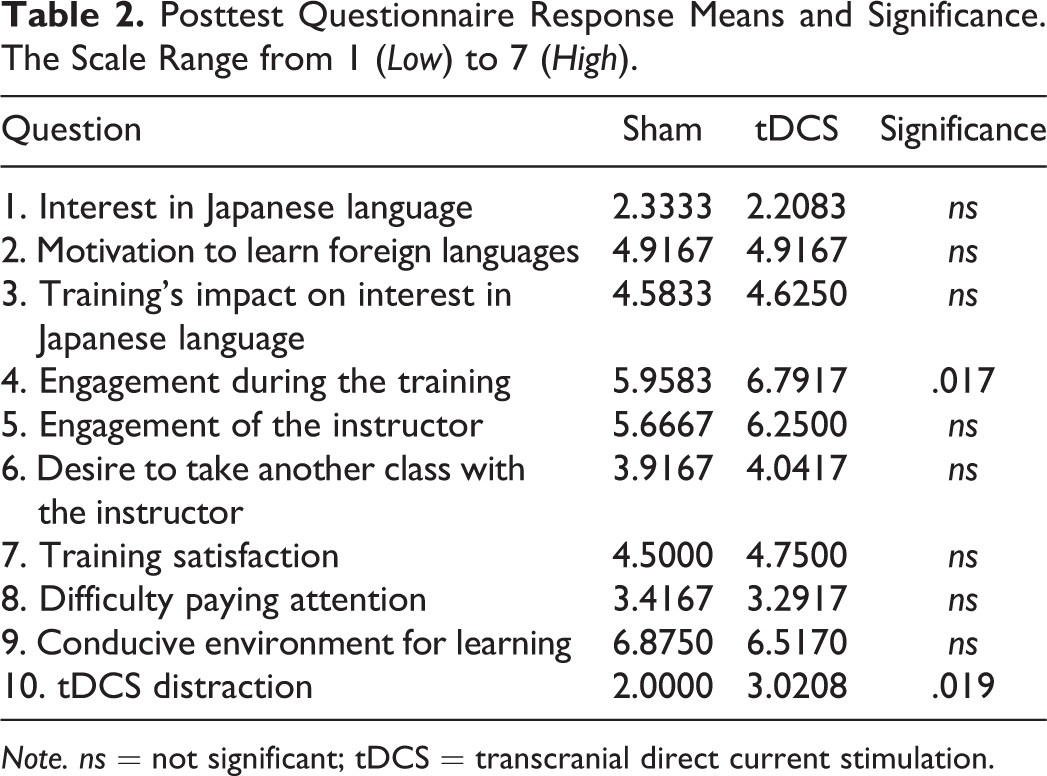

Posttest Surveys

Meta questions

Of the 10 paired t-tests conducted, two questions differed significantly between the tDCS and sham conditions in the posttest surveys. Participants reported feeling more engaged during the tDCS condition, t(23) = 2.59, p = .017 (two-tailed),

Posttest Questionnaire Response Means and Significance. The scale ranged from 1 (low) to 7 (high).

Note. ns = not significant; tDCS = transcranial direct current stimulation.

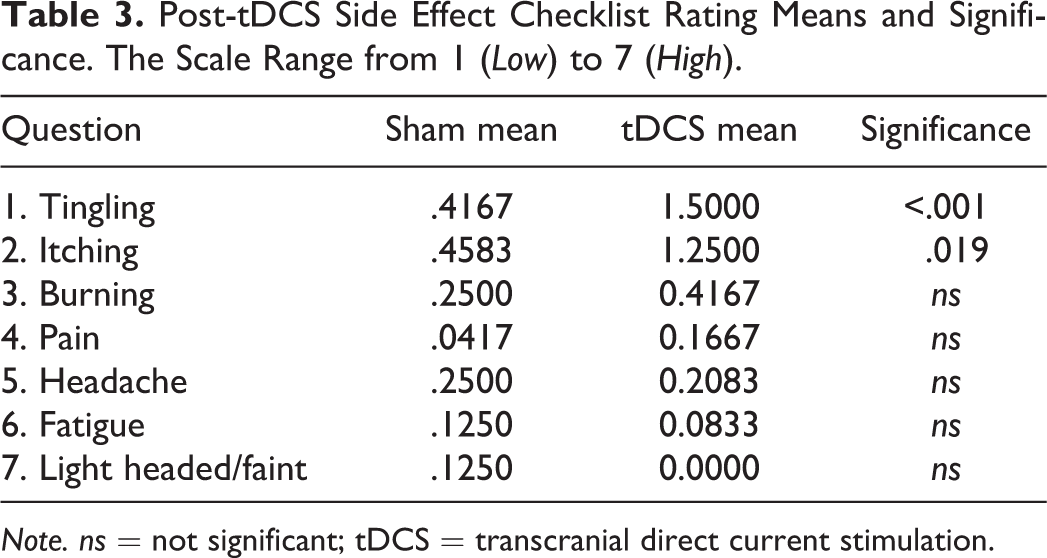

Stimulation side effects

Analysis of the tDCS side effects checklist ratings revealed that participants reported significantly more tingling, t(23) = 4.38, p < .001,

Post-tDCS Side Effect Checklist Rating Means and Significance. The scale ranged from 1 (low) to 7 (high).

Note. ns = not significant; tDCS = transcranial direct current stimulation.

Discussion

We found support for the first prediction that FL instruction with iconic gestures produced better learning outcomes than instruction without gesture. However, the results did not support the second prediction that tDCS stimulation should produce greater overall learning outcomes than a sham control, and to our surprise, the results concerning the third prediction went contrary to expectations: Instruction with gesture produced positive benefits for the sham control, but those positive effects vanished in the stimulation condition. Thus, it appears that although gestures help with vocabulary learning, stimulating the area above the left IFG does not increase gesture’s effectiveness—in fact, it detracts from it.

By confirming our first prediction, we have replicated previous studies demonstrating that iconic gestures facilitate vocabulary learning in novice adult and child FL learners (Kelly & Lee, 2012; Kelly et al., 2009; Macedonia & von Kriegstein, 2012; Tellier, 2008). This lends further empirical support to long-standing claims that the body is a highly effective tool in teaching FLs (Asher, 1969; Moscowitz, 1976).

Regarding our second prediction, despite well-established research demonstrating beneficial effects of anodal tDCS on learning and memory (Flöel et al., 2008; Monti et al., 2013; Nitsche et al., 2003; Reis et al., 2008), we found no such enhancement of FL vocabulary learning. There are several possible reasons for this. One possibility is that our stimulation was not intense enough or long enough (Boggio et al., 2006). However, our intensity and duration were consistent with other studies in the literature: Although 1 mA for 12 min is a relatively conservative current and duration, other studies have reported enhancement effects with similar parameters (Fregni et al., 2005; Nitsche et al., 2003). Another possibility is that we did not stimulate the right location. This seems unlikely, because we took our electrode location from several studies that found significant stimulation effects (De Vries et al., 2010; Marangolo et al., 2011; Wakita, 2013). Still another possibility is that our dependent measure was not sensitive enough. For example, researchers have found that tDCS can affect response times more than accuracy (Holland et al., 2011), so because brain stimulation effects are often weak and variable, response time measures in future studies might reveal an effect. However, this explanation is hard to reconcile with the fact that our vocabulary scores did reveal an effect of stimulation; it was just not the predicted one.

Why Does Stimulation Disrupt Gesture?

The lack of support for our second prediction is interesting in light of the unexpected finding regarding our third prediction: The fact that gesture was highly effective in the sham condition, boosting performance by 50%, but lost its effectiveness in the stimulation condition suggests that tDCS did have an effect. Below, we outline three possible explanations for what tDCS might have altered to eliminate the effectiveness of gesture.

Temporal misalignment

A timing disruption could explain the lack of a gesture effect in the stimulation condition. Given that the left IFG is implicated in processing both spoken language and manual actions (Nishitani et al., 2005; Rizzolatti & Arbib, 1998; Rizzolatti & Craighero, 2004; Rizzolatti, Fadiga, Gallese, & Fogassi, 1996; Willems et al., 2007), tDCS may have affected one of these processes more than the other. During language comprehension, speech and gesture are most successfully integrated when the two modalities are closely coupled in time (Habets, Kita, Shao, Özyürek, & Hagoort, 2011). Even though gesture and speech were presented simultaneously in the present study, our tDCS stimulation may have artificially sped the processing of one modality relative to the other. For example, if tDCS sped gesture processing, this boost might have disrupted the timing with which the brain typically connects that information to the accompanying speech. Indeed, Obermeier and Gunter (2014) showed that gestures processed much more than 200 ms before speech begin to lose their communicative effectiveness. Thus, stimulation in our study might have temporally separated gesture from speech, which could explain why gesture did not appear to help word learning in the tDCS condition.

An increased error signal in IFG

A second possibility concerns a relatively new interpretation of what increasing activity in the left IFG should do for gesture–speech processing. Unlike most standard views of IFG function, Skipper’s (2014) natural organization of language and the brain (NOLB) model predicts that increased activity in the left IFG may actually cause problems for learning. According to Skipper’s model, the auditory cortex (AC) sends information to and receives information from higher brain regions such as the left IFG (in addition to posterior regions) in order to confirm predictions about what the auditory system is hearing. When helpful visual input precedes a word (e.g., seeing an “eating” gesture and then hearing the word “eat”), activity in the left IFG and AC decreases, because congruent iconic gestures minimize the potential for error when identifying the word: The gesture greatly constrains the set of words it might accompany, so the network needs less metabolic activity to get it right. In contrast, when there is no helpful visual input (or, worse, misleading input), activity in the left IFG and AC increases, because the system is on alert for making a mistake, which requires more metabolic energy in these brain regions. In this way, according to Skipper’s model, decreases and increases of activity in the left IFG and AC network signal two very different things: Decreases reflect a low likelihood of error, whereas increases reflect a high likelihood.

This model may help make sense of our results. When we artificially stimulated the "gesture" part of the network (left IFG), the auditory portion of the network (AC) may have interpreted the increased activity as an error signal, prompting the allocation of more resources to the network. Deploying these additional neural resources may have disrupted the subsequent connection between the English translations (and associated gestures) and the to-be-remembered new Japanese words. 5 Although this interpretation remains speculative without additional data, it could be tested by delivering cathodal tDCS, which should decrease activity in left IFG, and examining whether this decrease enhances the effect of gesture.

Cognitive load

A third possibility offers a more straightforward explanation: Perhaps tDCS simply distracted participants. Participants were already exposed to a great deal of novel information (foreign words, accented speech, visual gestures), and many learners commented that the task was difficult. 6 This flood of information, combined with the distracting sensations of stimulation that participants reported on the questionnaires (itching and tingling), could have created a state of cognitive load that negated the positive effects of gesture. Notably, there was no main effect of stimulation, suggesting that tDCS over left IFG did not make the task harder overall but rather made it harder to use gesture to help with the task. This possibility is consistent with recent research showing that gesture is less effective under cognitive load (Kelly & Lee, 2012; Post, Van Gog, Paas, & Zwaan, 2013). Participants may already have been near their processing capacity, and the addition of tDCS may have been enough to eliminate any beneficial effects of gesture.

Finally, although we did not predict it a priori, further support for the cognitive load interpretation comes from the effect of stimulation on onomatopoeia words trained with gesture. Recall that in the tDCS condition, onomatopoeias trained with gesture were learned no better than onomatopoeias trained without gesture (in fact, the trend was for gesture instruction to produce worse learning than no-gesture instruction). Because onomatopoeias already have high levels of auditory "iconicity" (that is, they sound like what they mean), adding iconic gestures to them may have provided too much information under stimulation, which could have resulted in greater cognitive overload and, in turn, lower performance on the memory task.

Implications for FL Instruction

The goal of the present experiment was to explore whether a neuromodulation technique could enhance gesture's already positive role in facilitating learning and memory of an FL. We were optimistic because neuromodulation has been applied successfully in many settings: It has been shown to improve rule-based knowledge in healthy adults (de Vries et al., 2010; Fregni et al., 2005), working memory in both healthy adults and patients with Parkinson's disease (Boggio et al., 2006), and long-term recovery from speech apraxia in people with aphasia (Baker, Rorden, & Fridriksson, 2010; Fridriksson, Richardson, Baker, & Rorden, 2011; Marangolo et al., 2011).

Despite these successful applications, we found no evidence of such positive effects in the present study. In fact, tDCS not only failed to increase the overall amount of vocabulary learned but actually compromised the benefits of gesture. Regardless of the cause of this disruption, these findings are significant: If tDCS disrupts learning in the controlled environment of the laboratory, it may be even less helpful in the uncontrolled context of an actual classroom. Classrooms already have their share of distractions, and these may pose particular problems for FL learning because of the phonetic, morphological, and syntactic novelty of the material. As Hirata and Kelly (2010) point out, despite the intuition that more input is better, adding ever more layers of instruction (or distraction) for novice FL learners may be overwhelming and ultimately counterproductive.

To our knowledge, there are no published studies demonstrating that tDCS facilitates gesture's role in FL learning, and for that matter, we know of no studies using neuromodulation to boost FL learning more generally. Nevertheless, there is much excitement among scientists, educators, and the press about the promise of these techniques (for a recent news article, see Geddes, 2015), and a few high-profile studies have reported benefits with potential classroom applications (e.g., Snowball et al., 2013). Given how little has been published, it is important to proceed cautiously. In addition to sharing results that demonstrate beneficial effects of neuromodulation, the neuroscience and education communities should publish inconclusive or even negative results (as in the present study) regarding the effectiveness of the technique. Only by presenting the whole range of findings (the good, the bad, and the neutral) will educators and policy makers have the information they need to make sound decisions about how, or even whether, to apply neuromodulation techniques in the future.

Going forward, we hope that the excitement about neuromodulation techniques does not overshadow important research on less high-tech aids to FL learning. After all, unlike electronic brain stimulation devices, tried-and-true teaching tools such as hand gestures come as standard operating equipment for most FL instructors. This means that language teachers already have the potential to stimulate the brain the old-fashioned way: just by moving their hands.

Footnotes

Appendix A

Word and Gesture Items.

| Japanese Verb | English Translation | Gesture |
|---|---|---|
| Nomu | Drink | Right-hand "C" shape raised up toward mouth |
| Hiku | Pull | Hands outreached in gripping position, retracting back to body |
| Arau | Wash | Fingers outstretched and overlapping, moving out and away from face |
| Tataku | Bang | Right-hand fist, upright, moving forward and backward |
| Kaku | Scratch | Left arm raised; right hand in "C" shape moving up and down over left arm |
| Taberu | Eat | Right-hand fist moving up toward mouth in a scooping motion |
| Osu | Push | Palms facing forward, extending out away from body |
| Haku | Sweep | Two fists, one above the other, wrists turning, and moving across body |
| Horu | Dig | Two fists, next to each other, sliding left, then extending up to the right |
| Nigiru | Squeeze | Hands apart, fingers extended, then moving in together and closing |

| Japanese Onomatopoeia | English Translation | Gesture |
|---|---|---|
| Pyokopyoko | Hopping | Right hand; index finger touching thumb, moving in "n" shape across body and back |
| Gorogoro | Rolling | Two fists rotating around one another |
| Fuwafuwa | Floating | Right hand; index finger touching thumb, moving in "u" shape across body and back |
| Guchagucha | Mashing | Right-handed fist moving up and down |
| Kirakira | Sparkling | Fingers outstretched, moving up and away from body |
| Nyoronyoro | Slithering | Right-hand flat with thumb up, moving fluidly from left to right in "s" shape |
| Patapata | Flapping | Hands extended away from sides of body, moving forward and backward at elbows |
| Shitoshito | Drizzling | Hands up and extended, moving straight downward while wiggling fingers |
| Kurukuru | Spinning | Right hand; index finger extended, pointing upward, and moving in circular motion |
| Yurayura | Swaying | Hands cupped and apart, moving from side to side together |

Note. Complete list of words taught during training: 10 verbs and 10 onomatopoeias and their corresponding gestures.

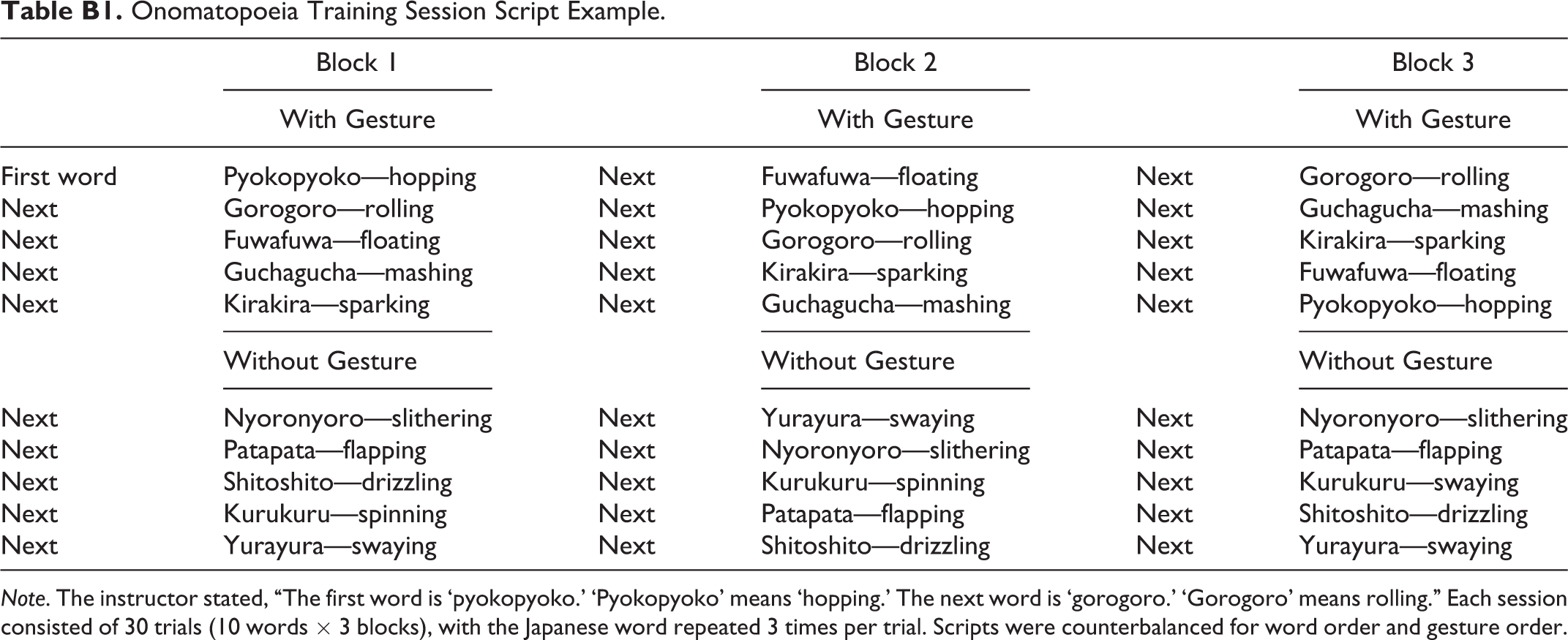

Appendix B

Onomatopoeia Training Session Script Example.

| Block 1 | | Block 2 | | Block 3 | |
|---|---|---|---|---|---|
| With Gesture | | With Gesture | | With Gesture | |
| First word | Pyokopyoko—hopping | Next | Fuwafuwa—floating | Next | Gorogoro—rolling |
| Next | Gorogoro—rolling | Next | Pyokopyoko—hopping | Next | Guchagucha—mashing |
| Next | Fuwafuwa—floating | Next | Gorogoro—rolling | Next | Kirakira—sparkling |
| Next | Guchagucha—mashing | Next | Kirakira—sparkling | Next | Fuwafuwa—floating |
| Next | Kirakira—sparkling | Next | Guchagucha—mashing | Next | Pyokopyoko—hopping |
| Without Gesture | | Without Gesture | | Without Gesture | |
| Next | Nyoronyoro—slithering | Next | Yurayura—swaying | Next | Nyoronyoro—slithering |
| Next | Patapata—flapping | Next | Nyoronyoro—slithering | Next | Patapata—flapping |
| Next | Shitoshito—drizzling | Next | Kurukuru—spinning | Next | Kurukuru—spinning |
| Next | Kurukuru—spinning | Next | Patapata—flapping | Next | Shitoshito—drizzling |
| Next | Yurayura—swaying | Next | Shitoshito—drizzling | Next | Yurayura—swaying |

Note. The instructor stated, "The first word is 'pyokopyoko.' 'Pyokopyoko' means 'hopping.' The next word is 'gorogoro.' 'Gorogoro' means 'rolling.'" Each session consisted of 30 trials (10 words × 3 blocks), with the Japanese word repeated 3 times per trial. Scripts were counterbalanced for word order and gesture order.
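The blocked structure described in the note above can be sketched in code. The following Python is a hypothetical illustration, not the authors' materials or software: the helper names `build_script` and `instructor_lines` are invented here, and we assume that counterbalancing word order simply means reshuffling the words within the gesture half and the no-gesture half of each block, with a `gesture_first` flag standing in for gesture order. The rendered cue reproduces only the two sentences quoted in the note, not all three repetitions of each word.

```python
import random
from typing import List, Optional, Tuple

# A training item is (Japanese word, English meaning); a trial adds a flag
# recording whether the item was presented with its gesture.
Item = Tuple[str, str]
Trial = Tuple[str, str, bool]


def build_script(
    with_gesture: List[Item],
    without_gesture: List[Item],
    n_blocks: int = 3,
    gesture_first: bool = True,
    seed: Optional[int] = None,
) -> List[List[Trial]]:
    """Hypothetical helper: build a blocked training script.

    Every word appears exactly once per block. Each block is split into a
    gesture half and a no-gesture half, and the order of words within each
    half is reshuffled independently for every block.
    """
    rng = random.Random(seed)
    blocks: List[List[Trial]] = []
    for _ in range(n_blocks):
        g = [(w, m, True) for w, m in rng.sample(with_gesture, len(with_gesture))]
        ng = [(w, m, False) for w, m in rng.sample(without_gesture, len(without_gesture))]
        blocks.append(g + ng if gesture_first else ng + g)
    return blocks


def instructor_lines(blocks: List[List[Trial]]) -> List[str]:
    """Hypothetical helper: render each trial in the style of the example script."""
    lines: List[str] = []
    for b, block in enumerate(blocks):
        for t, (word, meaning, _) in enumerate(block):
            # Only the very first trial of the session uses "first"; all others use "next".
            cue = "The first word is" if b == 0 and t == 0 else "The next word is"
            lines.append(f"{cue} '{word}.' '{word.capitalize()}' means '{meaning}.'")
    return lines


if __name__ == "__main__":
    gesture_items = [("pyokopyoko", "hopping"), ("gorogoro", "rolling"),
                     ("fuwafuwa", "floating"), ("guchagucha", "mashing"),
                     ("kirakira", "sparkling")]
    plain_items = [("nyoronyoro", "slithering"), ("patapata", "flapping"),
                   ("shitoshito", "drizzling"), ("kurukuru", "spinning"),
                   ("yurayura", "swaying")]
    script = build_script(gesture_items, plain_items, seed=1)
    assert sum(len(block) for block in script) == 30  # 10 words x 3 blocks
    for line in instructor_lines(script)[:3]:
        print(line)
```

Fixing `seed` makes any one script reproducible, so each counterbalanced version could be regenerated on demand; how the actual scripts were assigned to participants is not specified here.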

Acknowledgments

We would like to thank Julie Falotico and Rachel Neal for data collection assistance, Suzumi Sanu for volunteering her time to provide our Japanese instruction, Nicholas Grunden and Dr. Bruce Hansen for technical tDCS instruction and support, Michael Manansala and Jessica Huang for experimental setup and materials, and our participants, all of whom made this study possible.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.