Abstract

Limited English proficiency (LEP) poses a significant barrier to effective communication and patient-centered care. Despite regulatory mandates, documentation of preferred language and interpreter use in electronic health records (EHRs) remains inconsistent, especially in urgent care settings where time and staffing constraints limit workflow interventions. This study evaluates a pragmatic, low-cost quality improvement initiative implemented at a tertiary-affiliated urgent care center (UCC) in New York City, serving a highly multilingual, predominantly non-White population, to improve documentation accuracy for LEP patients. The observation period ran from January to June 2023; beginning April 1, 2023, triage nurses were trained to apply pink flag stickers on the doors of patients requiring interpreter services and to document language needs in the EPIC EHR system. Weekly error rates, defined as the proportion of patients labeled as “English-speaking” with documented interpreter use, were tracked using time-series and segmented regression analysis. Among 6604 encounters, the baseline error rate was 79.3%. One week postintervention, this rate dropped by 21.1%, although the change was not statistically significant (P = .55). The trend remained variable, underscoring the need for sustained education and engagement. While the intervention was not technology-based, it was designed to overcome practical EHR integration barriers unique to the UCC setting. The approach highlights how modest workflow adjustments can drive frontline awareness and prompt action. Despite its limitations, including a single-site design, short duration, and data inconsistencies, this study contributes a replicable model for improving communication equity for LEP patients. Broader implementation may inform system-level strategies that enhance patient experience and documentation quality.

Introduction

The U.S. healthcare system serves a diverse and multilingual population. In 2019, approximately 67.8 million individuals in the United States reported speaking a language other than English at home, and 27.1 million (about 40%) were classified as having Limited English Proficiency (LEP). 1 In New York City, this proportion is even higher, with 1.8 million residents (roughly 25% of the population) considered LEP. 2 Despite these demographics, documentation of preferred language and interpreter use remains inadequate across healthcare settings. Prior studies have found up to 20% error rates in preferred language documentation compared to patient self-reported language.3–5 Moreover, front desk staff correctly identify LEP patients only 60% to 80% of the time. 6

The consequences of inaccurate documentation are dire in emergency and urgent care settings. Interpreter services are used inconsistently in such environments, with reported utilization rates ranging from 11% to 89%, leading to communication failures and worsening health disparities.7–10 LEP patients are more likely to experience lower quality of care, misunderstanding of clinical instructions, and increased risk of adverse events during hospitalization.11–13

A few efforts have been made to improve documentation of preferred language and interpreter use for LEP patients. Quality improvement projects within emergency departments have implemented an LEP icon on the ED dashboard, a language interpreter use survey in the electronic health record (EHR), and font changes in the EHR when the survey was completed, which improved the frequency of preferred language and interpreter use documentation.6,14 Other strategies include provider training and dual-headset telephones in patient rooms, which enhanced both language interpreter service (LIS) usage and awareness. 15

Though these studies showed an increase in recorded language interpreter use and documentation on EHRs, these methods are challenging to implement in the context of an urgent care center (UCC). This study aimed to improve documentation of preferred language and interpreter use at a UCC using a low-cost “door-flag” method.

Method

Theoretical/Conceptual Framework

The Toyota 8-Step Problem-Solving Approach underpinned our intervention strategy, applied through the A-3 problem-solving framework (Mohd Saad et al, 2013). This systematic methodology, which included shadowing registration staff and providers at the UCC, helped us understand the specific challenges in depth. We chose to address two underlying issues: the lack of documentation of language preference and the inability of physicians to identify LEP patients before entering their rooms. Additionally, we employed process mapping to visually document and analyze each phase of the patient care process, focusing on interactions involving LEP patients (see Supplemental Figure S1). This approach facilitated a targeted intervention aimed at enhancing the quality of care by ensuring that patients' communication needs are met.

Study Setting and Population

The study was conducted at a UCC affiliated with a tertiary care center and situated on the grounds of a main teaching hospital in New York City, from April 1, 2023, to June 30, 2023. The clinic serves a locality where 86% of residents identify as non-White and nearly half speak a language other than English at home. Given this demographic diversity and the inherent challenges of documenting language preference for LEP patients, the UCC mirrored the complexities faced by many emergency departments nationwide. We chose this site because its patient volume is smaller than that of the main teaching hospital, while its patient demographic is similar owing to its proximity. Such a setting was considered ideal for piloting an intervention that, if successful, could be extended to larger emergency and urgent care settings.

Measurement and Intervention Strategy

We implemented a flag-based intervention to address the gap in consistent documentation of language preferences and interpreter use within the EPIC® EHR system. This strategy involved comprehensive training for triage nurses on three points: the need for accurate documentation of patients’ language preferences, the placement of one flag on the door of each patient needing an interpreter, and where to document language information in the EHR.

Triage nurses received an initial one-time training session during implementation, with informal reminders given during weekly huddles throughout the intervention period. After this training, these nurses were assigned to mark the doors of patients needing an interpreter with a pink flag sticker and simultaneously log the patient's preferred language and interpreter usage in the EPIC system, specifically under the “Language Interpreter Used” section. A new sticker was used for each new patient. We chose to involve triage nurses directly because of their unique position: they interact with every patient entering the urgent care site and have the necessary access to input data into EPIC, unlike the registration team, which uses the Cerner system.

During the intervention period, which lasted from April 1 to June 30, 2023, we initially provided a set number of door flags, replenishing them as needed. We calculated the difference between the initial and final flag counts each week to determine the weekly influx of LEP patients. This data was then compared to the “Language Interpreter Used” entries in EPIC to evaluate the intervention's effectiveness. Additionally, we assessed documentation accuracy by comparing these EPIC entries with the actual language preferences recorded for patients. The primary goal was to closely monitor interpreter utilization and identify any discrepancies in documentation over these 6 months, analyzing both before and after the intervention's implementation.
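The weekly flag tally described above can be sketched as follows. This is a minimal illustration, assuming that replenished flags are added to the starting count before the end-of-week count is subtracted; the function names and the example numbers are hypothetical and are not taken from the study data.

```python
def flags_used(start_count, end_count, replenished=0):
    """Flags consumed in one week: starting stock plus any restock, minus what is left."""
    used = start_count + replenished - end_count
    if used < 0:
        # More flags at the end than were ever available points to a counting error.
        raise ValueError("end count exceeds available flags")
    return used


def documentation_gap(flag_count, interpreter_entries):
    """Flagged patients without a matching 'Language Interpreter Used' entry.

    A positive value means more doors were flagged than interpreter entries
    were logged; a negative value means the opposite.
    """
    return flag_count - interpreter_entries


# Hypothetical week: 50 flags on Monday, 20 added midweek, 45 left on Sunday.
week_flags = flags_used(50, 45, replenished=20)
week_gap = documentation_gap(week_flags, interpreter_entries=22)
```

Comparing the two counts week by week is one way to surface lapses in either flag placement or EHR entry, which is the discrepancy the comparison in the text is meant to detect.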

Error Rate Computation

We defined the error rate as the proportion of patients who were labeled as “English-speaking” in the EHR but for whom interpreter services were documented. This reflects potential gaps in documentation accuracy or triage identification of LEP status. The error rate for any given week was calculated with the formula:
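One reading of this definition that is consistent with the counts reported in the Results takes the denominator to be all encounters with documented interpreter use in that week, so that error rate = 100 × (English-labeled encounters with interpreter use) / (all encounters with interpreter use). The choice of denominator is an assumption here, and the function name below is hypothetical. The sketch applies that reading to the pre- and postintervention totals given in the Results.

```python
def error_rate(english_labeled_with_interpreter, non_english_labeled_with_interpreter):
    """Percentage of interpreter encounters whose EHR language label was English.

    Assumed denominator: all encounters with documented interpreter use.
    Returns None when no interpreter use was documented, since the rate is undefined.
    """
    total = english_labeled_with_interpreter + non_english_labeled_with_interpreter
    if total == 0:
        return None
    return 100.0 * english_labeled_with_interpreter / total


# Period totals reported in the Results (aggregated over weeks, not weekly values).
pre_rate = error_rate(164, 35)    # preintervention: 164 English-labeled, 35 non-English-labeled
post_rate = error_rate(197, 43)   # postintervention: 197 English-labeled, 43 non-English-labeled
```

Under this reading the period-level rates come to roughly 82% both before and after the intervention, which is in the same range as the regression baseline of 79.3%; the weekly values analyzed in the study would be computed the same way from each week's counts.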

Time Series Analysis

To assess the impact of the flag-based intervention, we conducted a time-series analysis. The analysis tracked the variation in error rates before and after the intervention, providing insights into both the immediate effects and the enduring influence of our actions.

We gathered weekly error rate data from January 1, 2023, to June 30, 2023. This range included 3-month periods before and after the intervention commenced on April 1, 2023, giving us a detailed picture of error rate fluctuations over time. Following data collection, we plotted these figures chronologically to pinpoint any direct consequences of the intervention and to identify persistent trends over the analysis period.
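Grouping encounters into weeks requires a binning convention, which is not specified here. A minimal sketch, assuming consecutive 7-day blocks counted from January 1, 2023 (week 0), is shown below; the function name and the convention itself are assumptions.

```python
from datetime import date

STUDY_START = date(2023, 1, 1)
INTERVENTION_START = date(2023, 4, 1)
STUDY_END = date(2023, 6, 30)


def week_index(encounter_date, start=STUDY_START):
    """Zero-based index of the 7-day block, counted from the study start, containing the date."""
    days = (encounter_date - start).days
    if days < 0:
        raise ValueError("date precedes the study start")
    return days // 7


first_week = week_index(STUDY_START)
intervention_week = week_index(INTERVENTION_START)
last_week = week_index(STUDY_END)
```

Under this convention the intervention date falls inside a block that begins a few days earlier, so an analysis would need to decide whether that block counts as pre- or postintervention.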

Segmented Regression Analysis

A segmented regression analysis was conducted to analyze trends preintervention, 1 week postintervention, and long-term postintervention. The model is based on the following equation:

where β0 is the baseline error rate at the start of the study, β1 is the rate of change in error rate over time before the intervention, β2 is the 1-week change in error rate following the intervention, and β3 is the rate of change in error rate over time after the intervention.
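A common segmented regression form that matches these four coefficients is: error rate in week t = β0 + β1 × t + β2 × post(t) + β3 × weeks since intervention(t), where post(t) is 0 before the intervention and 1 afterward. Whether the study used exactly this parameterization is an assumption; under it, β3 is the change in slope relative to the preintervention trend. The sketch below fits this form by ordinary least squares in plain Python on a synthetic, noise-free series. All names, the breakpoint, and the coefficient values are hypothetical and are not study data.

```python
def design_row(week, intervention_week):
    """Regressors for one week: intercept, time, post indicator, weeks since intervention."""
    post = 1.0 if week >= intervention_week else 0.0
    since = float(week - intervention_week) if post else 0.0
    return [1.0, float(week), post, since]


def solve_linear_system(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):  # back substitution
        tail = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x


def fit_segmented(weeks, rates, intervention_week):
    """Least-squares estimates [b0, b1, b2, b3] via the normal equations."""
    rows = [design_row(w, intervention_week) for w in weeks]
    k = 4
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * y for r, y in zip(rows, rates)) for i in range(k)]
    return solve_linear_system(xtx, xty)


# Synthetic series generated from known coefficients (illustration only).
weeks = list(range(26))
breakpoint_week = 13  # hypothetical breakpoint
true_beta = [80.0, 1.0, -20.0, -1.5]
rates = [sum(b * x for b, x in zip(true_beta, design_row(w, breakpoint_week)))
         for w in weeks]
beta = fit_segmented(weeks, rates, breakpoint_week)
```

Because the synthetic series has no noise, the fit recovers the generating coefficients up to floating-point error. With real weekly data the estimates would carry standard errors and P values, which this sketch does not compute.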

Results

Our study included 6604 participants, with a mean age of 46.0 years (SD = 17.8 years).

Age distribution was consistent across pre- and postintervention groups. Overall, 64.2% of participants identified as female, and 35.8% as male.

The most common language preference was English (91.9%), followed by Spanish (6.2%). Other languages, such as Bengali, French, and Arabic, each represented less than 1% of the sample. Detailed racial, ethnic, and religious breakdowns are provided in Table 1.

Table 1. Demographics.

*Pearson's chi-square test (P ≤ .05).

**Two-sample t-test (P ≤ .05).

Interpreter Use by Language Label Before and After Intervention

By documented language preference, English speakers were the dominant group, accounting for 91.9% of the total, followed by Spanish speakers at 6.2%. Less common languages included Bengali, French, Arabic, Chinese (Cantonese), Italian, Portuguese, and others, comprising less than 1% of the total.

A comparative analysis was conducted on the number of patients who used interpreter services, differentiating between those labeled as English speakers and those labeled as non-English speakers, during both the pre- and postintervention periods (Table 2). Preintervention, 164 patients labeled as English-speaking and 35 labeled as non-English-speaking accessed interpreter services. Postintervention, the number of English-labeled patients who used interpreter assistance rose to 197, while 43 patients labeled as non-English-speaking used interpreter services. Overall, 361 English-labeled patients and 78 non-English-labeled patients used interpreter services during the entire study period.

Table 2. Breakdown of Patients With Language Interpreter During the Visit.

Interpreter Usage and Associated Error Rates

Between January 1, 2023, and June 30, 2023, we tracked weekly interpreter usage and error rates. As defined in the Methods, the error rate represented the proportion of patients labeled as “English-speaking” in the EHR who nonetheless received documented interpreter services. A higher error rate therefore reflects greater misclassification of LEP patients at triage, while a lower rate indicates improved accuracy of language preference documentation.

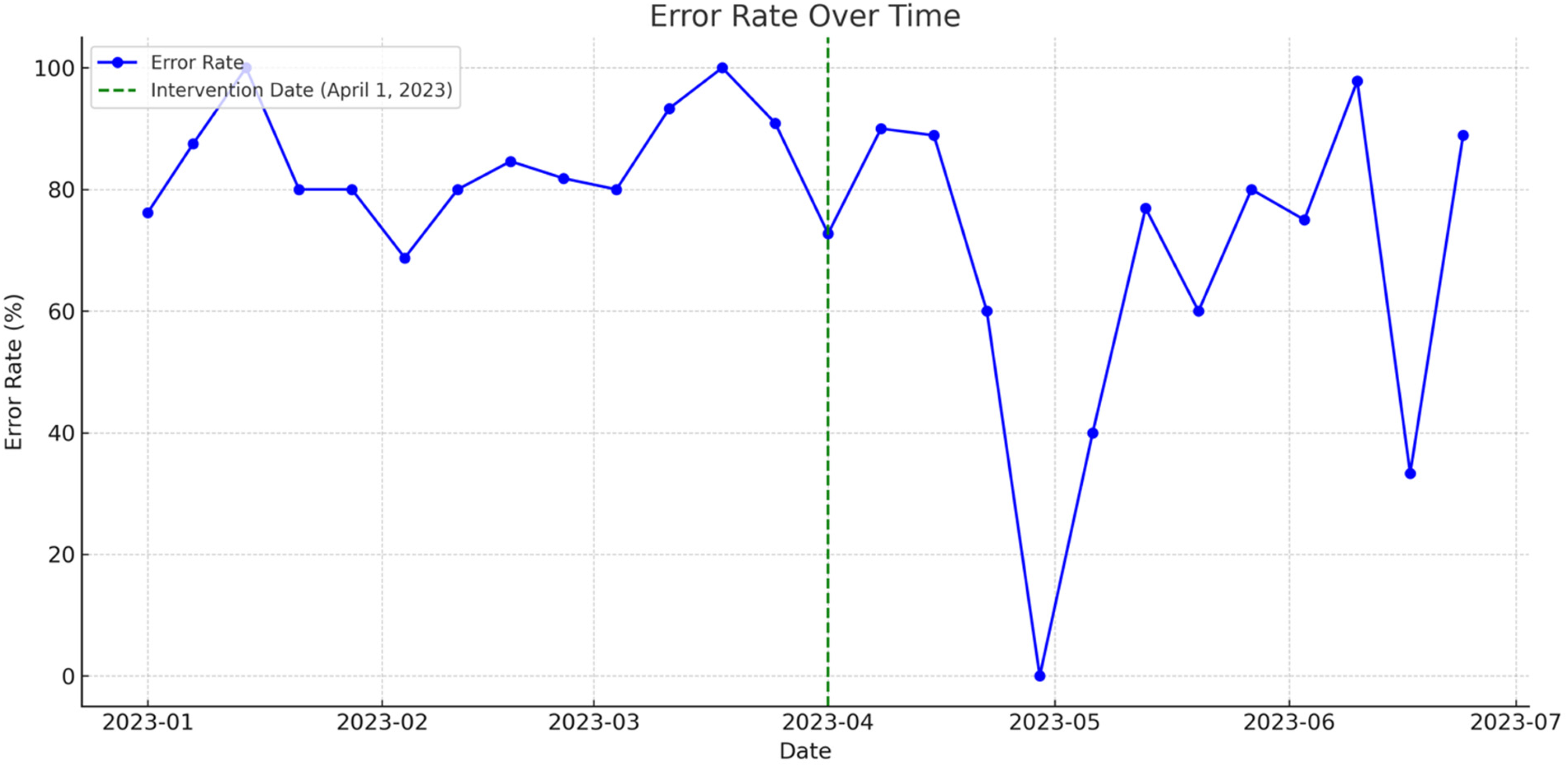

As seen in Figure 1, during this 6-month observation period, interpreter usage patterns fluctuated considerably across weeks, as summarized in Supplemental Table S1. Prior to the intervention, error rates were consistently high, ranging from

Figure 1. Time series of error rate over time.

Segmented Regression of Interpreter Error Rate Over Time

Complementing the time series and tabular analyses, a segmented regression model was employed to quantitatively assess the impact of the intervention on the error rate from January to June 2023. Figure 2 juxtaposes actual error rates (blue, solid line) against the predicted error rates (red, dashed line). Notably, the green dashed line demarcates the intervention date.

Figure 2. Actual versus predicted error rate over time.

The segmented regression yielded the following estimates. The baseline error rate, meaning the proportion of misclassified LEP patients, was 79.3%, indicating substantial discordance between documented language preference and actual interpreter use. Before the intervention, the error rate rose marginally, by about 0.8% per week (P = .62). Immediately after the intervention was implemented, the error rate dropped by approximately 21.1% (P = .55). Postintervention, the weekly trend in the error rate showed a decrease of roughly 0.4% (P = .87). None of these estimates reached statistical significance.

Discussion

Our study revealed substantial variation in the documentation of language preference and interpreter use within an urban UCC setting. The observed preintervention error rates underscore the systemic inconsistencies in identifying and supporting patients with LEP, echoing prior literature on health communication disparities.7,10,16 Our findings suggest that even low-cost, analog interventions, such as pink door-flag indicators and staff training, may influence frontline behavior, particularly when integrated into existing triage workflows.

Staff feedback indicated that the pink flag system was easy to implement and helped prompt real-time awareness of LEP needs. However, some nurses expressed concern about sticker supply and the need for clearer documentation protocols, suggesting that sustained improvements would benefit from stronger system-level support.

Although the segmented regression did not demonstrate statistically significant improvements, the initial 21.1% drop in error rate may represent a clinically relevant change. This aligns with past research indicating that even small interventions can have tangible effects on documentation and equity in interpreter service use.6,9 The immediate drop in error rate after intervention rollout was not sustained across the study period. As summarized in Supplemental Table S1, initial gains gave way to substantial variability, likely due to inconsistent flag implementation, documentation lapses, and competing clinical demands in the urgent care environment. These fluctuating postintervention trends likely reflect challenges common in dynamic care environments: high staff turnover, competing clinical priorities, and variable adherence to new protocols. They suggest that point-of-care interventions require ongoing reinforcement to maintain improvements in LEP documentation.

Another important observation was the discrepancy between flag counts and interpreter documentation, suggesting potential lapses in consistent implementation. Similar to findings in other care settings, this points to the importance of continuous reinforcement and system-level integration—lessons also emphasized in national studies of interpreter reimbursement and access.16,17

One major limitation was that our study was a single-site pilot study conducted in East Harlem, limiting generalizability to other UCCs or outpatient settings. In addition, we could not account for undocumented interpreter use or situations where family members served as ad hoc interpreters—both known issues that complicate documentation accuracy.8,18 The intervention's reliance on physical flag usage introduces variability in tracking and implementation fidelity. Lastly, the facility's closure precluded long-term analysis.

Conclusion

In conclusion, our study suggests that a low-cost, flag-based intervention may improve documentation of preferred language and interpreter usage in urgent care settings, although the observed changes did not reach statistical significance and were not sustained without reinforcement. By enhancing visibility of LEP patients at the point of care and standardizing EHR documentation practices, this quality improvement effort offers a replicable model to support linguistic equity in similar clinical environments. Continued investment in language access infrastructure remains essential to addressing structural barriers that LEP patients face throughout the healthcare system.

As supported by large-scale LIS claims data studies, reimbursement policies alone are insufficient; consistent identification, documentation, and education remain critical levers to ensure equity. 16 Our findings add practical insight into bridging LIS access gaps for LEP patients, particularly in high-volume urban urgent care settings.

Supplemental Material

sj-docx-1-jpx-10.1177_23743735261418003 - Supplemental material for Improving Accurate Documentation of Limited English Proficiency Patients at a Tertiary Affiliated Urgent Care Center in New York City

Supplemental material, sj-docx-1-jpx-10.1177_23743735261418003 for Improving Accurate Documentation of Limited English Proficiency Patients at a Tertiary Affiliated Urgent Care Center in New York City by Jaskiran Dhinsa, Vibhor Mahajan and Ka Ming Ngai in Journal of Patient Experience

Supplemental Material

sj-jpg-2-jpx-10.1177_23743735261418003 - Supplemental material for Improving Accurate Documentation of Limited English Proficiency Patients at a Tertiary Affiliated Urgent Care Center in New York City

Supplemental material, sj-jpg-2-jpx-10.1177_23743735261418003 for Improving Accurate Documentation of Limited English Proficiency Patients at a Tertiary Affiliated Urgent Care Center in New York City by Jaskiran Dhinsa, Vibhor Mahajan and Ka Ming Ngai in Journal of Patient Experience

Footnotes

Acknowledgments

We acknowledge the Student High Value Care Curriculum at the Icahn School of Medicine for its significant contribution to this study's framework, providing essential insights and methodologies that shaped our research approach. We would also like to acknowledge Tamanna Obyed, MHA, MPH, for her valuable assistance in the initial design of our study.

Author Contributions

JD and VM contributed equally to the conception and design of the study, collection and analysis of data, interpretation of results, and manuscript drafting. VM and KMN provided expertise in data analysis and contributed to interpreting results. JD and VM contributed to the conception of the study and provided critical intellectual input throughout the drafting process. KMN took responsibility for the manuscript and supervised the research process. All authors reviewed and approved the final version of the manuscript for submission.

Availability of Data and Materials

All relevant data are available as supplemental materials.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethics Statement

The Mount Sinai IRB office has determined that this study qualifies as EXEMPT human research under the DHHS regulations, specifically 45 CFR 46.104(d), as all data were provided in aggregate without any identifiers.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.

References
