Abstract

Person-centered care focuses on the needs of the individual receiving care, and involves cooperation between patients and health professionals to develop and monitor care. This research demonstrates that online patient reviews provide a rich, real-time, and detailed source of patient experience that can be used for this purpose. This study also shows that unstructured online data can be quantified using machine learning and natural language processing to automatically flag and rate patient reviews. We describe a supervised learning approach, training a model on a large dataset of manually annotated patient reviews. We report model scores of 99% accuracy in predicting overall score, and 93% to 99% in predicting relevance to seven domains of patient experience, such as Effective Treatment, Fast Access, and Emotional Support. Furthermore, we show statistically significant alignment between these aggregated online patient reviews and HCAHPS star ratings—a “gold-standard” measure of care quality for hospitals in the United States. This approach enables benchmarking between health systems and evaluating the impact of interventions on patient experience, while quantifying and enhancing the patient-centeredness of care.

Introduction

Patient-Centered Care

Person-centered care sees patients “as equal partners in planning, developing and monitoring care to make sure it meets their needs,” 1 and emphasizes the importance of patient preferences, values, and experiences in their treatment. The term “Patient-centeredness” was first defined in the Institute of Medicine report, “Crossing the Quality Chasm” as “providing care that is respectful of and responsive to individual patient preferences, needs, and values and ensuring that patient values guide all clinical decisions.” 2 This remains the gold-standard definition of patient-centeredness, although multiple variations have been offered. 3 The Beryl Institute defines Patient Experience as “the sum of all interactions […] that influence patient perceptions across the continuum of care.”4,5

The Picker Principles are the foundational approach to delivering patient-centered care; 6 they define quality dimensions for measuring and understanding it. The principles include emotional support, effective treatment, fast access to reliable services, communication and involvement, continuity of care, attention to physical and environmental needs, and involvement in decisions. 7

Patient-centered, or person-centered, care is essential to delivering high-quality care and supporting patient experience. 3 Patient-centered encounters result in better patient and physician satisfaction, fewer malpractice complaints, improved health status, and increased efficiency. 8 In the Beryl Institute's Consumer Perspectives on Patient Experience, over 90% of patients said it is important to have a good experience as a patient, with 54% stating that this contributes to healing and good healthcare outcomes. 9

The Need for Patient-Centered Feedback

The move in the United States toward person-centric care necessitates a better understanding of patient experience to codify, measure, and report insights in a meaningful, actionable, and timely way to improve quality. All health systems in the United States currently solicit patient experience data through surveys, which can provide interpretable findings that can be used to stimulate and guide quality improvement efforts. 10 These include HCAHPS (Hospital Consumer Assessment of Healthcare Providers and Systems), commissioned by the Centers for Medicare and Medicaid Services (CMS) to capture patient experience across the United States. HCAHPS was designed to allow transparency, accountability, and meaningful comparison, 11 and as such is the “gold-standard” metric for comparing hospital quality. Standardized surveys like HCAHPS enable large-scale analyses, showing, for instance, that lower patient experience scores in hospitals with more Black and Hispanic patients are partly offset in those serving higher proportions of these groups. 12

However, surveys have limitations. They are expensive to administer 13 and suffer from extensive time lag; HCAHPS results cover a 12-month period that ends around nine months before publication. 11 They are also constrained by format; patients can only respond to the specific questions asked. Surveys commissioned by health systems typically only cover users of the system in question, preventing their usage for benchmarking, or competitor insight. Responses to surveys such as CAHPS skew extremely positive. 6 Healthcare surveys may also influence responses, through wording or acquiescent responding,13,14 and under-represent demographic groups, including people who are non-white, less well educated, or uninsured (in the USA). 14 Patient surveys also exclude the experiences of the patient's family and friends, and that of potential patients who refused treatment, or left before treatment. HCAHPS may suffer from nonresponse error bias, bias toward smaller hospitals, and against hospitals that treat high acuity patients. 15

These limitations necessitate an alternative resource to understand patient experience. This is provided by online patient reviews. In contrast with surveys, these are unsolicited, 16 unstructured, real-time, 17 low burden for patients, 16 and contain rich qualitative insights. These factors enable patient-centered feedback, where patients discuss the issues that matter most to them.

However, the use of online reviews faces two key challenges: collation from disparate sources, and insight extraction from a single unstructured text field. Automation through web scraping and natural language processing (NLP) provides a promising way to collect and analyze these disparate, unstructured volumes of text and to identify key themes. 18 The use of multiple sources enables a “wisdom of the crowds” effect, which mitigates the biases of any single source and enables a comprehensive and accurate view of patient experience. 17 The unstructured format allows patients to express what is important to them, without being led by survey fields.

Patient-Centered Feedback and VBH

Patient-centered feedback is essential for healthcare providers operating under value-based healthcare (VBH) models of payment, which hold systems accountable for delivering on the Triple Aim: Outcome, Cost, and Patient Experience. 19 VBH aligns the needs of patients, providers, and health plans, and aligns care with how patients experience their health. 20

Payers and providers have data to track the first two components of the Triple Aim, but a historical lack of timely and quantifiable patient experience data has prevented accurate tracking of its delivery. Patient experience is not “just” part of quality; rather, a majority of patients cite quality, safety, and service as the key components of experience. 9 Patient experience, whether positive or negative, is associated with profitability, 21 patient loyalty and reduced risk of malpractice. 22 Therefore, effective monitoring and interventions around patient experience not only lead to better health outcomes but also contribute to reduced readmission rates, higher patient retention, and lower operational costs.

Research Questions

This work seeks to answer the following:

Can patient experience be captured from online patient reviews, using automated methods? Does the resulting “patient-centered feedback” align with a gold-standard measure such as HCAHPS?

This contrasts with existing work 23,24 that analyses the text responses to HCAHPS survey questions; instead, the present study analyses unsolicited comments and uses HCAHPS scores as a gold standard against which to compare them. It expands on previous work 25 that compared online reviews to HCAHPS ratings by looking at multiple online sources, by greatly increasing the number of hospitals and reviews considered, and by using NLP to extract and quantify themes.

Method

Identifying Data Sources

Patient reviews were collected from multiple sources to gain the most representative dataset possible. These included the most widely-used accessible sites within social media (Facebook, Twitter), general reviews (Google, Yelp) and healthcare reviews (Healthgrades, WebMD, RateMDs, CareDash, Vitals). Healthcare locations were mapped to their review pages by a fuzzy-matching process, using Levenshtein distance as a similarity metric, with manual intervention for lower-confidence matches.
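The matching step can be illustrated with a minimal sketch. It assumes a similarity score normalised by the longer string's length, and two hypothetical thresholds (`auto_threshold`, `review_threshold`) that separate automatic matches from those sent for manual review. The source does not state the thresholds or the normalisation actually used, so these are illustrative only.

```python
def levenshtein(a: str, b: str) -> int:
    """Edit distance: minimum insertions, deletions and substitutions."""
    if len(a) < len(b):
        a, b = b, a
    previous = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        current = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            current.append(min(previous[j] + 1,          # deletion
                               current[j - 1] + 1,       # insertion
                               previous[j - 1] + cost))  # substitution
        previous = current
    return previous[-1]


def similarity(a: str, b: str) -> float:
    """Similarity in [0, 1]; 1.0 means identical after lowercasing."""
    a, b = a.lower().strip(), b.lower().strip()
    longest = max(len(a), len(b))
    return 1.0 if longest == 0 else 1.0 - levenshtein(a, b) / longest


def match_location(name, candidates, auto_threshold=0.9, review_threshold=0.7):
    """Return (best_candidate, score, status) for one location name.

    Thresholds are hypothetical; lower-confidence matches are routed
    to manual review rather than accepted automatically.
    """
    best = max(candidates, key=lambda c: similarity(name, c))
    score = similarity(name, best)
    if score >= auto_threshold:
        status = "auto"
    elif score >= review_threshold:
        status = "manual_review"
    else:
        status = "no_match"
    return best, score, status
```

In practice, a match would likely also use address or geographic information; the sketch compares names only.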

Data Collection

Custom Python scripts were run daily, using web scraping and APIs to collect reviews posted in the preceding 24 hours. An additional one-off collection process gathered earlier data. Duplicate comments were removed, and spam comments were filtered out using keywords. Reviews were stored in an AWS-hosted PostgreSQL database with metadata including URL and posted date. In total, over 30 million reviews were collected for healthcare locations in the United States, of which 1.31 million were posted about hospitals in the 12-month period July 2022 to June 2023 (inclusive). After collection, all reviews were processed through the same source-agnostic pipeline.
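The de-duplication and keyword-based spam filtering could look roughly like the sketch below. The keyword list, the record fields (`location_id`, `text`), and the choice to treat reviews as duplicates only within the same location are all assumptions made for illustration; the source does not specify them.

```python
# Hypothetical spam keywords; the list used in the study is not given.
SPAM_KEYWORDS = {"promo code", "click here", "free gift"}


def normalise(text: str) -> str:
    """Lowercase and collapse whitespace so trivial variants compare equal."""
    return " ".join(text.lower().split())


def clean_reviews(reviews):
    """Drop duplicate and spam reviews, preserving the original order.

    `reviews` is a list of dicts with (assumed) keys 'location_id' and 'text'.
    """
    seen = set()
    kept = []
    for review in reviews:
        text = normalise(review["text"])
        key = (review["location_id"], text)
        if key in seen:
            continue  # duplicate of an earlier review for this location
        seen.add(key)
        if any(keyword in text for keyword in SPAM_KEYWORDS):
            continue  # matches a spam keyword
        kept.append(review)
    return kept
```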

Overall Score

All sources except Facebook and Twitter require the reviewer to leave a numerical 1 to 5 “star” rating of the entity being reviewed, such as a healthcare system or location. Where available, this rating was used as the Overall score, to reflect patient sentiment. For sources without a user-submitted Overall score, the Overall score was calculated for each comment as the average of its domain scores. Over 80% of user-submitted Overall scores were positive, contrary to expectations of a negative bias from dissatisfied patients.
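The fallback rule can be sketched as follows. The function name and the convention of marking non-relevant domains with `None` are assumptions for illustration; the sketch also assumes that only domains flagged as relevant contribute to the average.

```python
from statistics import mean


def overall_score(star_rating, domain_scores):
    """Return the Overall score for one comment.

    star_rating: user-submitted 1-5 rating, or None if the source has none.
    domain_scores: dict of domain name -> 1-5 score, or None if not relevant.
    """
    if star_rating is not None:
        return float(star_rating)
    relevant = [s for s in domain_scores.values() if s is not None]
    # A comment with no relevant domain has no derivable Overall score.
    return mean(relevant) if relevant else None
```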

Defining Care Domains

Care domains are topics which capture distinct, actionable themes of patient experience. The purpose of defining care domains is to allow healthcare providers to target and appraise interventions in particular areas.

Preliminary work used eight care domains, corresponding to the eight Picker principles. To better represent the topics that patients actually discuss, two of these principles which were rarely mentioned (“Involvement and support for family and carers” and “Involvement in decisions and respect for preferences”) were merged with “Clear information, communication, and support for self-care” to form the “Communication and Involvement” domain. An additional domain, “Billing and Administration” was also added, resulting in seven care domains.

To quantify these qualitative patient feedback domains, all reviews were assigned a numerical score for each domain, in a two-stage process. First, a subset of patient comments were assigned domain scores through manual annotation. Then, the resulting labelled data was used to train a machine learning model, to automate the domain scoring for the rest of the data.

Manual Annotation of Data

A team of five annotators manually labelled over 100,000 comments. For each of the seven care domains, every comment was flagged for relevance and, if relevant, assigned a Likert scale score from 1 (strongly negative) to 5 (strongly positive). To ensure consistent annotation, the care domains were defined in detailed documentation that outlined the criteria for relevance and scoring. Inter-annotator agreement was calculated as follows: if the majority of annotators assigned the same score to a comment, the comment was flagged as incorrect for those who assigned a different score. If every annotator assigned a different score, the annotators discussed the comment, and if they reached a consensus, the original annotations matching the consensus were marked as correct. Individual annotator accuracy ranged between 85% and 96%.
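A minimal sketch of this agreement procedure is given below. It treats any comment without a strict majority as needing a discussed consensus, which is a slight generalisation of the all-different case described above. Comments with no majority and no recorded consensus are skipped. The data layout is hypothetical.

```python
from collections import Counter, defaultdict


def majority_label(scores):
    """Return the score held by a strict majority of annotators, else None."""
    label, count = Counter(scores.values()).most_common(1)[0]
    return label if count > len(scores) / 2 else None


def annotator_accuracy(annotations, consensus=None):
    """Per-annotator accuracy against the majority or consensus score.

    annotations: dict comment_id -> {annotator: score}
    consensus:   dict comment_id -> score agreed after discussion (optional)
    """
    consensus = consensus or {}
    correct = defaultdict(int)
    total = defaultdict(int)
    for comment_id, scores in annotations.items():
        reference = majority_label(scores)
        if reference is None:
            reference = consensus.get(comment_id)
        if reference is None:
            continue  # unresolved comment: excluded from accuracy
        for annotator, score in scores.items():
            total[annotator] += 1
            correct[annotator] += int(score == reference)
    return {annotator: correct[annotator] / total[annotator] for annotator in total}
```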

Modelling Domains

The manually labelled comments were used to train models, which were then used to annotate the remaining, unlabeled, data. Open-source large language models (LLMs) are pre-trained on a large text corpus to understand language patterns, 26 before being fine-tuned to perform specific tasks. Fifteen models were trained in total; two per domain, to flag relevance and Likert score, plus one additional model to predict Overall score.

Hyperparameter optimization was performed by using a Bayesian approach. Model fine-tuning and optimization were performed on AWS Sagemaker, using cross-validation to optimize model accuracy. Of the annotated data, 90% was used in this training process; the remaining 10% was held-out as a test set, to assess performance by comparing model outputs to manual annotations.
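The 90%/10% hold-out can be illustrated with a small sketch. The shuffling, the fixed seed, and the function name are assumptions; the source does not say whether the split was random or stratified.

```python
import random


def train_test_split(items, test_fraction=0.1, seed=0):
    """Shuffle the annotated items and hold out a fraction as a test set."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)  # seeded for reproducibility
    n_test = round(len(shuffled) * test_fraction)
    return shuffled[n_test:], shuffled[:n_test]
```

Cross-validation and hyperparameter search would then operate only on the training portion, so that the held-out 10% remains unseen until final evaluation.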

HCAHPS Validation

Overall Score

To verify that online reviews reflect real-world ground truths of patient experience, review scores were compared against HCAHPS Summary Star Ratings. HCAHPS scores are calculated quarterly, based on a rolling four-quarter period. Summary Star Ratings are calculated for hospitals with at least 100 surveys in this period, and are intended to summarize survey responses simply, “making it easier to use the information and spotlight excellence in healthcare quality”. 10

To assess agreement between the two metrics, hospitals were divided into groups according to their HCAHPS Summary Star Rating. The distribution of 1-, 2-, 3-, 4- and 5-star online patient reviews was then compared between each group. All hospitals receive a mixture of positive and negative reviews; this experiment determined whether this distribution varies between hospitals of different Summary Star Ratings. If hospitals which are positively rated by HCAHPS have a more positive set of online reviews than those which are negatively rated, this would demonstrate agreement between the two metrics. As online scores are collected in real-time, agreement would enable online reviews to be a leading indicator of HCAHPS performance. This is of particular utility given the lag between the HCAHPS survey and publication.
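The grouping described above can be sketched as follows: each review inherits the HCAHPS Summary Star Rating of its hospital, and the share of 1- to 5-star reviews is computed per group. The input shapes are hypothetical.

```python
from collections import Counter, defaultdict


def star_distribution_by_group(reviews, hcahps_rating):
    """Distribution of online review stars within each HCAHPS rating group.

    reviews:       iterable of (hospital_id, online_star) pairs
    hcahps_rating: dict hospital_id -> HCAHPS Summary Star Rating (1-5)
    """
    counts = defaultdict(Counter)
    for hospital_id, star in reviews:
        group = hcahps_rating.get(hospital_id)
        if group is None:
            continue  # hospital has no Summary Star Rating
        counts[group][star] += 1

    distributions = {}
    for group, counter in counts.items():
        total = sum(counter.values())
        distributions[group] = {s: counter[s] / total for s in range(1, 6)}
    return distributions
```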

Measures

HCAHPS provides scores for specific “measures” of health system performance, which are analogous to our patient experience care domains. Measures were mapped to one or more corresponding domains, to allow comparison. For example, the “Cleanliness” and “Quietness” measures each correspond to the “Attention to Physical and Environmental Needs” domain, and the “Doctor communication” measure corresponds to “Emotional Support”, “Effective Treatment”, and “Communication and Involvement” domains. This mapping is shown in Table 2. A similar approach was then employed to compare score distributions between groups of hospitals with different measure scores, as was described for Overall scores.
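A one-to-many mapping of this kind can be represented as a simple dictionary. The sketch below includes only the three measures named in the text; the remaining entries of the full mapping are not reproduced here.

```python
# Partial mapping: only the examples given in the text are included.
MEASURE_TO_DOMAINS = {
    "Cleanliness": ["Attention to Physical and Environmental Needs"],
    "Quietness": ["Attention to Physical and Environmental Needs"],
    "Doctor communication": [
        "Emotional Support",
        "Effective Treatment",
        "Communication and Involvement",
    ],
}


def measure_domain_pairs(mapping):
    """Expand the mapping into (measure, domain) pairs for comparison."""
    return [(measure, domain)
            for measure, domains in mapping.items()
            for domain in domains]
```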

Table 1. Model Performance for Prediction of Relevance to Each Domain, and Score for Each Domain.

Table 2. Mapping From HCAHPS Measures to Corresponding Patient Experience Domain, and Significance of Difference in Distribution.

Results

Model Performance

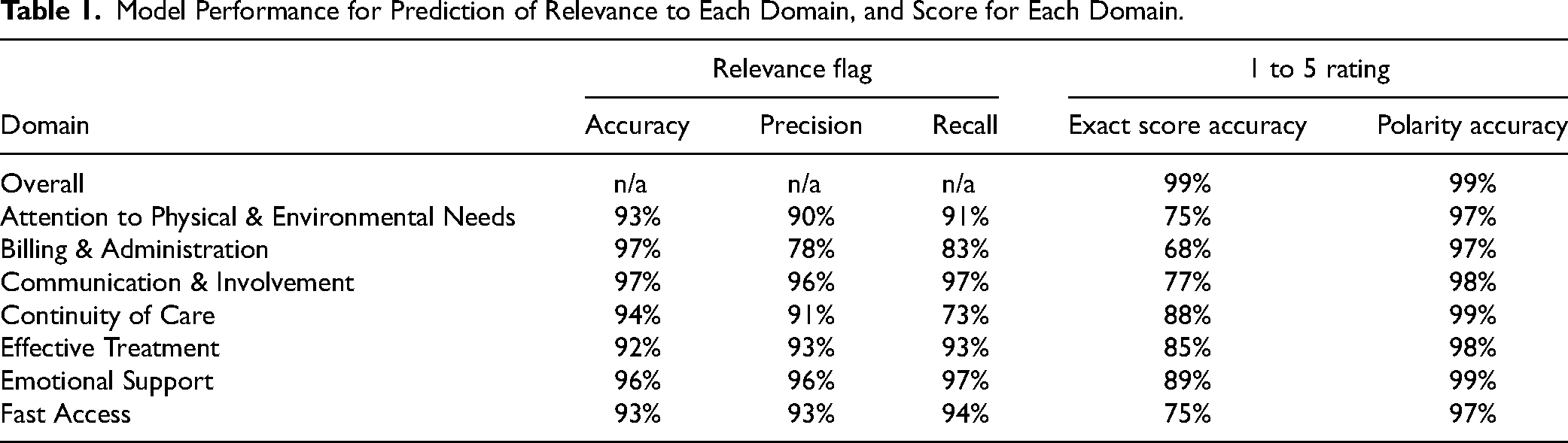

The predictive models for domains and Overall score show strong accuracy when compared to human annotation (summarized in Table 1). The 1 to 5 domain rating was assessed via two metrics: how often it was predicted exactly, and how often its polarity was correctly assigned as positive or negative. The small number of 3-star reviews (<1% of data in each domain) were removed to calculate this latter measure.
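The two metrics can be sketched as below. One detail is an assumption: comments whose annotated score is 3 are dropped from the polarity measure, and a predicted 3 for a non-neutral comment is counted as an error.

```python
def exact_accuracy(y_true, y_pred):
    """Share of comments whose 1-5 score was predicted exactly."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def polarity(score):
    """Map a 1-5 score to 'positive', 'negative', or None for neutral."""
    if score == 3:
        return None
    return "positive" if score > 3 else "negative"


def polarity_accuracy(y_true, y_pred):
    """Share of non-neutral comments whose polarity was predicted correctly."""
    pairs = [(t, p) for t, p in zip(y_true, y_pred) if t != 3]
    return sum(polarity(t) == polarity(p) for t, p in pairs) / len(pairs)
```

For example, an annotated 4 predicted as 5 counts as an exact-score error but a polarity success, which is why polarity accuracy is the less stringent of the two measures.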

Overall score was predicted exactly in over 99% of cases. The sentiment polarity scores were predicted with 97% to 99% accuracy across all domains. The more stringent measure of exact 1 to 5 accuracy ranged from 68% (for Billing & Administration) to 89% (for Emotional Support). This metric is strongly impacted by training data volume; the lowest-scoring domain is the least-mentioned, which suggests that it could improve with additional manually labelled data.

HCAHPS Validation

Online patient scores strongly align with HCAHPS Summary Star Ratings (shown in Figure 1). Hospitals with 5-star HCAHPS Summary Star Ratings had the highest average online scores, the most positive online patient reviews (87.5% were 5-star or 4-star), and the fewest negative online reviews (11.4% were 1-star or 2-star). Conversely, hospitals with 1-star HCAHPS ratings had the lowest average score, the fewest positive reviews (69.8%) and the most negative reviews (27.7%). Hospitals which received 4-star, 3-star, and 2-star HCAHPS scores followed in the same pattern. The Mann–Whitney U Test was chosen as a suitable test 27 to establish whether these differences in 5-point Likert score distributions were significant. For each pairwise comparison of scores (5-star vs 4-star, 4-star vs 3-star, etc.), the P-value was <.01, indicating a significant difference in the distribution of patient scores between each pair of groups. This shows agreement between the Overall online review scores, and HCAHPS scores.
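For illustration, the pairwise test can be sketched in pure Python using the normal approximation with a tie correction, which matters for 5-point scores because ties are very common. This is a sketch rather than the study's implementation: it omits the continuity correction that some statistical packages apply by default, and it assumes two non-empty samples large enough for the approximation to be reasonable.

```python
import math
from collections import Counter


def mann_whitney_u(x, y):
    """Two-sided Mann-Whitney U test (normal approximation, tie-corrected).

    Returns (U statistic for x, p-value).
    """
    x, y = list(x), list(y)
    n1, n2 = len(x), len(y)
    n = n1 + n2
    counts = Counter(x + y)

    # Assign each distinct value the average (mid) rank of its tied block.
    rank_of = {}
    position = 0
    for value in sorted(counts):
        c = counts[value]
        rank_of[value] = position + (c + 1) / 2
        position += c

    rank_sum_x = sum(rank_of[v] for v in x)
    u = rank_sum_x - n1 * (n1 + 1) / 2

    # Variance of U under the null hypothesis, corrected for ties.
    tie_term = sum(c ** 3 - c for c in counts.values())
    variance = n1 * n2 / 12 * ((n + 1) - tie_term / (n * (n - 1)))
    if variance == 0:
        return u, 1.0  # all observations identical
    z = (u - n1 * n2 / 2) / math.sqrt(variance)
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided
    return u, p_value
```

Applied to the present setting, `x` and `y` would be the online review scores for hospitals in two adjacent HCAHPS star groups.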

Figure 1. (a) Average score of online hospital reviews, across all hospitals with a given HCAHPS Summary Star Rating (left), and (b) distribution of online patient score for hospitals, broken down by HCAHPS Summary Star Rating (right). Based on data from July 2022 to June 2023 (inclusive), for all hospitals with HCAHPS Summary Star Rating.

Looking more granularly, the mapping from specific HCAHPS measures to corresponding care domains resulted in 13 measure-domain pairings. The distribution of domain scores was compared between hospitals with negative HCAHPS scores (1- and 2-star) for that HCAHPS measure, and hospitals with positive HCAHPS scores (4- and 5-star) for that measure. For all 13 pairings, the hospitals with positive HCAHPS measure scores had higher average scores in the corresponding domain than those with a negative HCAHPS measure score (shown in Figure 2). There was a significant difference in score distributions for all 13 pairings. That is, hospitals with a positive rating for a given HCAHPS measure had significantly better scores for the corresponding domain (summarized in Table 2).
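The positive-versus-negative grouping for a single measure-domain pairing can be sketched as below. Hospitals rated 3 stars on the measure fall into neither group, following the definition above; the input shapes and function name are hypothetical.

```python
from statistics import mean


def split_by_measure_rating(domain_scores, measure_rating):
    """Group online domain scores by the hospital's HCAHPS measure rating.

    domain_scores:  iterable of (hospital_id, domain_score) pairs
    measure_rating: dict hospital_id -> HCAHPS star rating for one measure
    """
    groups = {"positive": [], "negative": []}
    for hospital_id, score in domain_scores:
        rating = measure_rating.get(hospital_id)
        if rating in (4, 5):
            groups["positive"].append(score)
        elif rating in (1, 2):
            groups["negative"].append(score)
        # 3-star or unrated hospitals are excluded from the comparison
    return groups


def group_means(groups):
    """Average domain score per group (None for an empty group)."""
    return {name: (mean(scores) if scores else None)
            for name, scores in groups.items()}
```

The two score lists returned by `split_by_measure_rating` are the samples whose distributions would then be compared for significance.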

Figure 2. Average score for online patient experience domain, for positive (green) and negative (red) HCAHPS scores.

Discussion

Strengths

This work has shown that online, publicly accessible data provides a quantifiable measure of patient experience, which can be captured through automated collection and analysis, and validated against HCAHPS.

This approach has a number of unique advantages over traditional surveys:

Timely: Automation enables daily collection and analysis of patient reviews, enabling near real-time monitoring, timely intervention, and continual improvement.

Cost-effective: Online review analysis massively reduces the administrative cost of collecting experience data, and requires no additional patient effort.

Representative: Collecting reviews from diverse sources, with distinct user demographics, ensures a representative pool; this includes caregivers, family, and potential patients denied care, who are all excluded from traditional surveying.

Unsolicited: Online patient reviews are unstructured, without specific prompts, empowering patients to talk about whatever matters the most to them in a “bottom-up” approach.

Scalable and consistent: Automated annotation enables patient experience analysis at a scale unachievable with manual processing, allowing consistent methodology nationwide. This facilitates “like-for-like” comparisons for benchmarking and quality assessment at local, system, and regional levels.

Uses

This work has three key potential user groups. Healthcare systems can monitor performance in real time, allowing early intervention to improve patient outcomes and enabling them to learn from well-regarded patient care. Administrators such as insurance companies can benchmark locations and systems across a network. Regulators can utilize real-time data to assess performance and target timely inspections or interventions.

Future Work

This work opens the door to deeper analysis. Firstly, each of the domains can be divided into more granular categories to facilitate targeted improvement, and new domains can be added. The impact of each domain as a driver of Overall score should also be analyzed. Secondly, this approach should be expanded to analyze comments from different languages to gain a more holistic understanding of patient experience, and to investigate differences in experience between language groups. Preliminary work on Spanish-language comments suggests that automated translation into English allows existing models to achieve similar levels of accuracy when compared to native Spanish-language manual scoring. Thirdly, this approach can be expanded to additional sources, and different types of data, such as conversational analysis. Lastly, there is opportunity for further validation with other data sources such as the HINTS survey, and with quality metrics such as outcomes data, to triangulate VBH.

Limitations

Firstly, online survey data “can only identify those most likely to be performing poorly.” 13 Secondly, because reviews are unverified, they may include responses to public events or news. However, this effect is balanced by the inclusion of relevant reviews from non-patients. A third limitation is digital exclusion; some patients lack access to internet reviews. However, this is also mitigated by the inclusion of non-patients such as carers and family members. Lastly, patients who feel strongly about their care may be more likely to leave reviews, causing a potential bias towards polarized 1- and 5-star reviews.

Conclusion

These results validate NLP analysis of online patient experience sources on two levels. First, a single patient review can be accurately assessed for relevance and sentiment across several healthcare-specific themes. Second, multiple patient reviews processed in this way can, in aggregate, predict the performance of a “gold-standard” metric of patient outcomes.

On the first level, this work demonstrates that qualitative patient feedback can be quantified at scale, transforming in-depth and subjective feedback into measurable value. This allows benchmarking within and between health systems and geographic regions. Compared to previous work analyzing patient experience, this work improves in four areas: the volume of data being collected and analyzed, the level of detail in themes extracted from comments, the timeliness of analysis, and the accuracy with which these themes are extracted.12,13,20

This validation work has shown significant alignment between the HCAHPS overall hospital rating and the overall online score, and between HCAHPS measure ratings and their corresponding online domain scores. These results validate the performance of online data as a measure of patient experience which reflects real-world ground truths about hospitals. This indicates that aggregated online patient reviews offer a near real-time assessment of hospital performance, enabling the early identification of risks for declining patient experience and allowing for prompt corrective actions.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical Statement

This article describes a novel methodology to measure patient experience, using publicly available data collected from around the web. The data did not contain personally identifiable information. Since the data was anonymized and accessible in the public domain, ethical approval was not required in accordance with institutional and national ethical guidelines. No interventions or direct interactions with patients occurred during the data collection process. One potential risk is the presentation of harmful or inflammatory content to healthcare providers; this can be handled by keyword-based filtering and by automated redaction.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.