Abstract

All Accreditation Council for Graduate Medical Education-accredited pathology residency training programs are now required to evaluate residents using the new Pathology Milestones assessment tool. As with the implementation of the 6 Accreditation Council for Graduate Medical Education competencies a decade ago, implementing the new milestones has been challenging for many residency programs. The pathology department at the University of Iowa has implemented a process that divides the labor of rating residents while maintaining consistency in the process. The process is described in detail, and some initial trends in milestone evaluation are described and discussed. Our experience indicates that thoughtful implementation of the Pathology Milestones can provide programs with valuable information that can inform curricular changes.

Pathology residency training is diverse and includes options for anatomic pathology (AP) only, clinical pathology (CP) only, and combined AP/CP. There is significant variation across pathology residency training programs. For example, some programs may frequently train AP- or CP-only residents while others do so only infrequently. For AP/CP training, curricular structure as well as extent and timing of exposure to subspecialties within AP and/or CP may vary greatly between programs.

The Pathology Milestones provide a uniform framework for the assessment of pathology resident physicians in the context of their progression in Accreditation Council for Graduate Medical Education (ACGME)-accredited residency programs. 1 The Pathology Milestones, published in 2014, encompass 27 individual milestones, of which 21 apply to both AP and CP, 5 only to AP, and 1 only to CP. An AP/CP resident is assessed on all 27 milestones, while an AP-only resident is assessed on 26 milestones and a CP-only resident is assessed on 22 milestones. 1
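The per-track totals quoted above follow directly from the 21/5/1 split. The short Python sketch below simply re-derives them; the function name `applicable_milestones` and the track labels are illustrative choices, not part of the Pathology Milestones document.

```python
# Milestone counts as stated in the text: 21 shared, 5 AP-only, 1 CP-only.
SHARED = 21
AP_ONLY = 5
CP_ONLY = 1


def applicable_milestones(track: str) -> int:
    """Return the number of milestones that apply to a training track.

    `track` is one of "AP/CP", "AP", or "CP" (labels chosen for this sketch).
    """
    counts = {
        "AP/CP": SHARED + AP_ONLY + CP_ONLY,  # combined residents: every milestone
        "AP": SHARED + AP_ONLY,               # AP-only: shared plus AP-specific
        "CP": SHARED + CP_ONLY,               # CP-only: shared plus CP-specific
    }
    return counts[track]


for track in ("AP/CP", "AP", "CP"):
    print(track, applicable_milestones(track))
```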

It is expected that residency training programs establish a clinical competency committee (CCC) that evaluates each resident on all the applicable milestones on at least a semiannual basis. The ACGME recommends 3 to 5 faculty members on the CCC 1; however, guidelines from the Pathology Milestones Working Group suggested that it may be more appropriate to include as many as 8 to 10 individuals with supervisory roles in areas of pathology such as autopsy, chemical pathology, cytopathology, hematopathology, laboratory administration, microbiology, pathology informatics, surgical pathology, and transfusion medicine. 2 The CCC could include board-certified pathologists, clinical PhDs, and pathology assistants. Ultimately, the composition of the CCC is up to the department to determine. Regardless, especially for larger programs, evaluation of every trainee for each applicable milestone requires a significant amount of time and effort. Further, consistency in the evaluation process across all of the resident classes and longitudinally over time is essential for a successful evaluation system.

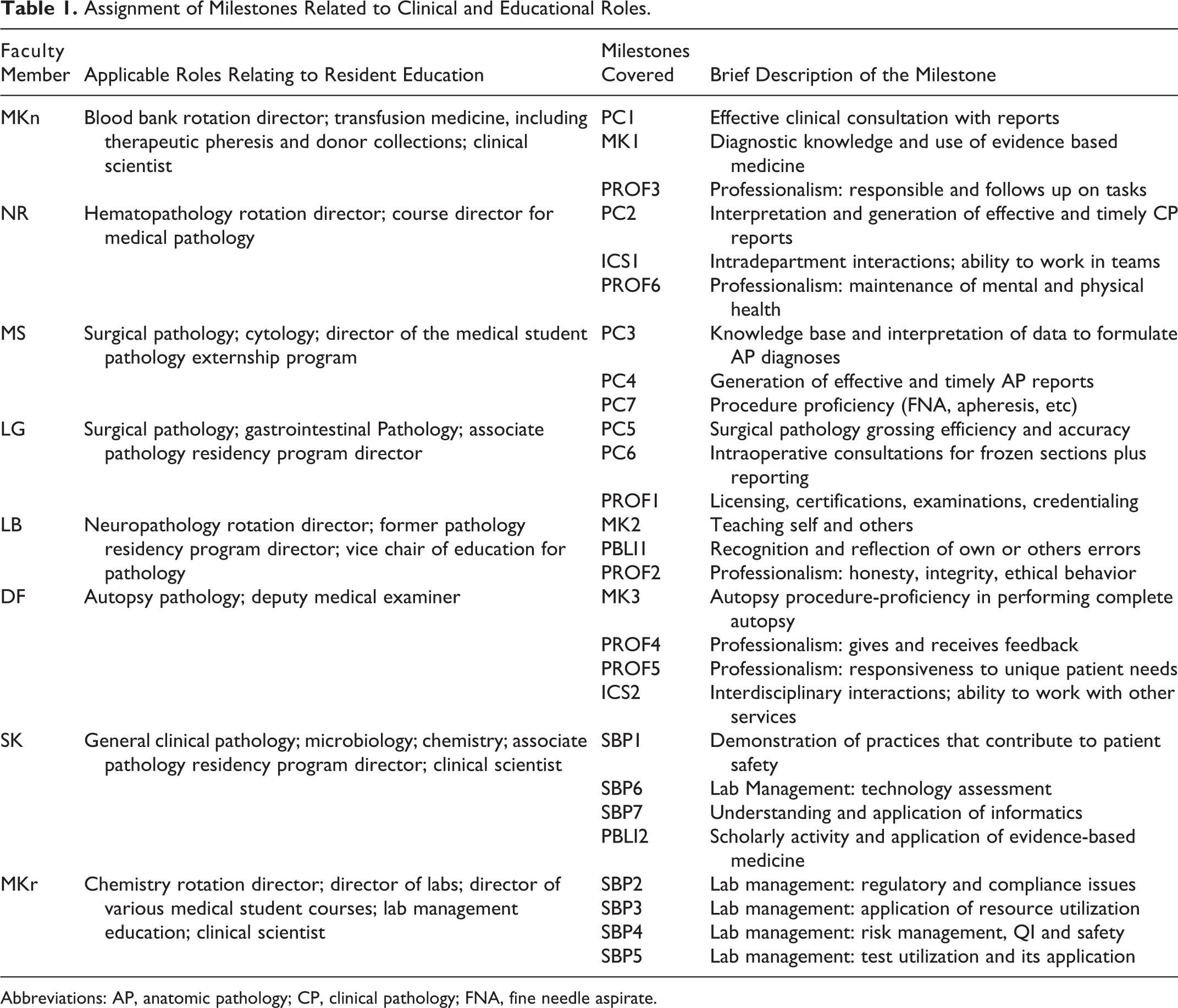

At the University of Iowa, we have developed a system that allows for the division of labor in formulating the evaluations for the milestones. Faculty were asked to assess all residents in the areas in which they have particular expertise and/or the most familiarity with resident performance. Table 1 outlines the specific areas of expertise for each faculty member along with the milestones each was asked to evaluate. For many of the milestones, we have been able to include a CCC member uniquely qualified to evaluate the residents. Accordingly, the committee consists of a mixture of AP- and CP-oriented faculty members. Having each member of the CCC responsible for only a few milestones allows him or her to gain experience with those milestones and develop a strategy to evaluate them.

Table 1. Assignment of Milestones Related to Clinical and Educational Roles.

Abbreviations: AP, anatomic pathology; CP, clinical pathology; FNA, fine needle aspirate.

Once the CCC was formed, a chair of the committee was identified. It is the role of this person to ensure that all milestones are evaluated before the deadlines and to oversee the CCC meetings. For each 6-month cycle, the CCC members are asked to evaluate their assigned milestones for each resident. Typically, this is done for 2 resident classes at a time, based on resident class size in our department. The CCC members rely on their own experiences with the residents plus a number of supporting documents. These include rotation evaluations from the past 6 months, previous milestone ratings for each trainee (both CCC ratings and resident self-ratings), the most recent test scores if available (resident in-service examinations, rotation examinations, etc), currently available aggregated resident portfolios, and the 360° evaluations that are submitted by nonfaculty staff (such as laboratory managers, supervisory staff, gross room staff, or fellows). All of the documents are placed into an internal, secured folder on a shared drive for CCC faculty to access. If necessary, evaluators may also contact other faculty or staff who might have particular insight on a trainee to complete their ratings.

The CCC members are given several weeks to review the documents and derive their “draft” milestone evaluations for 2 resident classes. A 90-minute meeting with all CCC members and the residency program director (nonvoting member) is then held to discuss all of the milestones for the 2 resident classes (usually pairing the R1s and R2s). The Pathology Milestone document is projected in the meeting to remind everyone of the specific language for each milestone. 1 Each proposed milestone rating is discussed in the order listed in the Pathology Milestone document 1 for all residents in one particular class. After each preliminary rating is deliberated, a final evaluation rating is agreed upon for each resident in that class before moving on to the next milestone. The process is repeated for the second class being discussed, with all final evaluations recorded onto a spreadsheet by the program coordinator, who is also present. This entire process is repeated for the remaining 2 resident classes (eg, R3s and R4s) in a separate 90-minute meeting at a later date.

In total, it typically takes each faculty member 1 to 2 hours to develop their preliminary evaluations for all 4 classes of residents (20 total residents × 2-4 milestones assigned per member). Combining preparation time with meeting times results in a total of 4 to 5 hours every 6 months for each faculty member on the committee. To this point, we have had reliable participation from members of this committee with very little turnover in membership, which has resulted in consistency in the milestone ratings within and between cycles. The program director also met with both faculty and residents to describe each milestone, and there was general consensus on our interpretation of each one. This early clarity was also key for consistency in the ratings.
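The workload figures above can be reproduced with simple arithmetic: two 90-minute meetings plus 1 to 2 hours of preparation, and 20 residents rated on 2 to 4 assigned milestones. The Python sketch below is only a check of those stated numbers; the function names and default arguments are assumptions made for illustration.

```python
def hours_per_cycle(prep_hours: float, meetings: int = 2, meeting_minutes: int = 90) -> float:
    """Total faculty time per 6-month cycle: preparation plus CCC meetings."""
    return prep_hours + meetings * meeting_minutes / 60


def draft_ratings(residents: int, assigned_milestones: int) -> int:
    """Number of preliminary ratings one CCC member prepares per cycle."""
    return residents * assigned_milestones


# Preparation time of 1 to 2 hours, as reported in the text.
print(hours_per_cycle(1), hours_per_cycle(2))

# 20 residents, 2 to 4 milestones assigned per member.
print(draft_ratings(20, 2), draft_ratings(20, 4))
```

Under these figures, each member prepares between 40 and 80 draft ratings per cycle within the 4 to 5 hours described.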

As noted earlier, one of the supporting pieces of information available to the CCC members is the residents' milestone self-ratings. We have had 1 “practice” cycle and our most recent official cycle of ratings in which these were not yet available to the CCC during the meeting. In our experience, these ratings do not have any significant influence on the CCC ratings. However, this could vary among programs, depending on the makeup of the committee. Each individual CCC needs to decide whether these ratings are available to the group at the time the assessments are made.

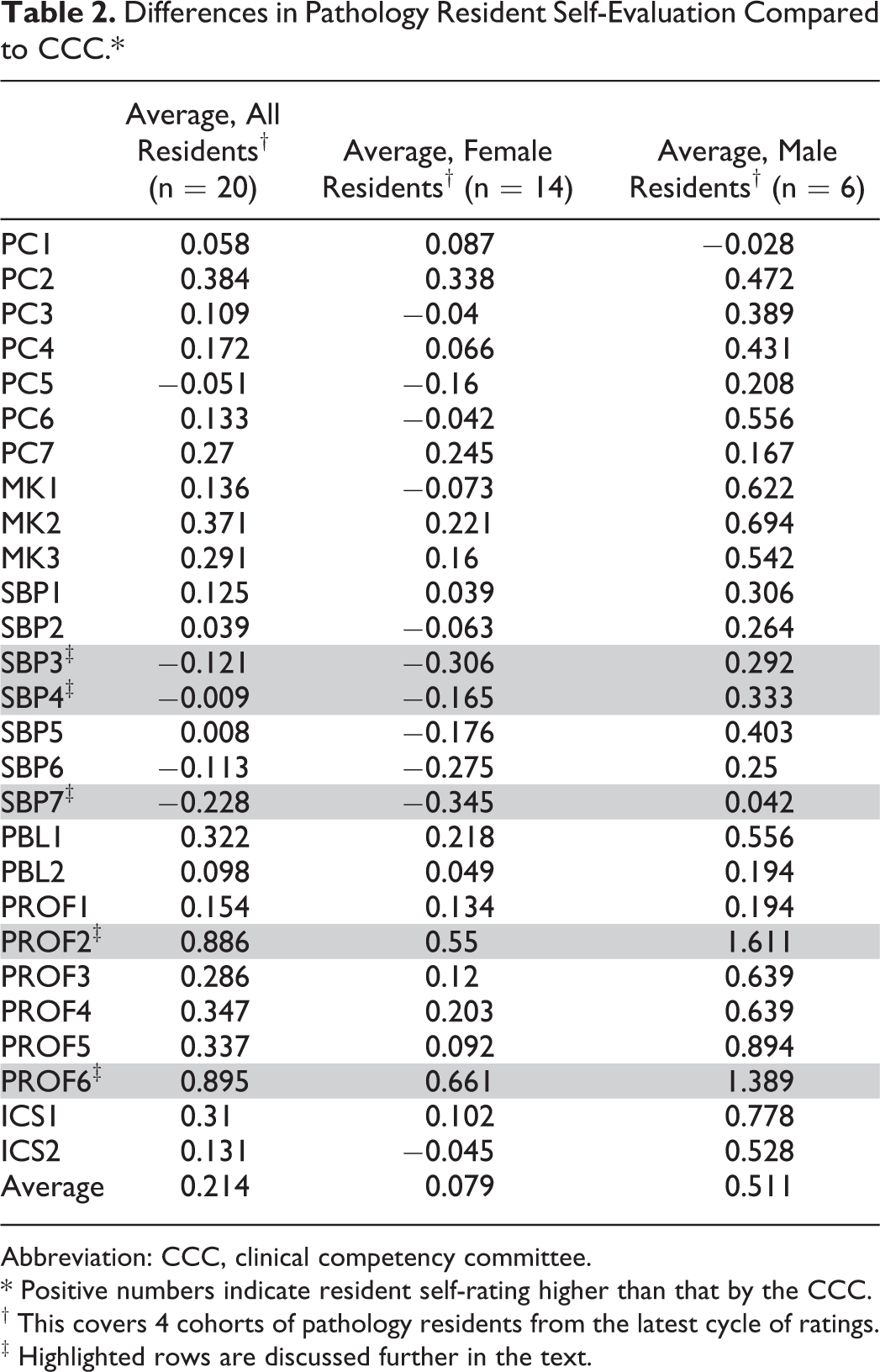

Also related to resident self-assessments, we have seen some interesting patterns. There are residents who consistently underrate themselves, presumably as a result of lower self-confidence. Feedback using the milestone data has proven to be a useful tool for the program director to bolster the confidence of these trainees. Conversely, we also have a cohort of residents who trend toward overrating themselves. In these situations, the milestone ratings by the CCC have been used as a feedback mechanism for a trainee who might be a little overconfident in their abilities in a particular area.

Other interesting observations with regard to resident self-ratings are highlighted in Table 2, which shows the differences between CCC and self-ratings for each milestone in aggregate. An initial observation suggests that there may be differences in self-ratings by gender for some milestones. At this point, the numbers for this observation are insufficient to analyze, but this will be an area for further evaluation.

The general, non-gender-related differences between CCC ratings and the self-ratings could be secondary to several factors. First, there could be language in a milestone that is creating some confusion and inconsistency in how the ratings are being applied. We feel that this is particularly the case for PROF6, which describes the handling of responsibility in the maintenance of physical and mental health. It appears that our residents are interpreting this milestone simply on an “Am I healthy?” scale. However, the CCC has tended to read all of the milestone-level descriptors before assigning ratings. For this particular milestone, the language could be interpreted to suggest that there needs to be some kind of problem that was addressed appropriately for a resident to progress beyond level 3. Further, some of the level descriptors in this particular milestone describe private physical and mental health matters for trainees that the CCC has trouble assessing. PROF2, which describes elements of professionalism relating to integrity, honesty, and ethical behavior, also showed a significant discrepancy between CCC and self-ratings. As with PROF6, the language in this milestone can be challenging for residents and faculty to interpret.

Table 2. Differences in Pathology Resident Self-Evaluation Compared to CCC.*

Abbreviation: CCC, clinical competency committee.

* Positive numbers indicate resident self-rating higher than that by the CCC.

† This covers 4 cohorts of pathology residents from the latest cycle of ratings.

‡ Highlighted rows are discussed further in the text.
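The sign convention in the table footnote (self-rating minus CCC rating, so positive means the resident rated higher) can be sketched as follows. This is a minimal illustration only: the rating values are hypothetical and are not taken from Table 2, and it assumes the per-milestone aggregate is a simple mean, which the text does not specify.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical records for illustration: (milestone, resident self-rating, CCC rating).
ratings = [
    ("SBP3", 2.0, 3.0),
    ("SBP3", 2.5, 3.0),
    ("PROF6", 4.0, 3.0),
    ("PROF6", 4.5, 3.5),
]


def mean_differences(records):
    """Average (self-rating - CCC rating) per milestone.

    A positive value means residents rated themselves higher than the CCC did.
    """
    diffs = defaultdict(list)
    for milestone, self_rating, ccc_rating in records:
        diffs[milestone].append(self_rating - ccc_rating)
    return {milestone: mean(values) for milestone, values in diffs.items()}


for milestone, diff in mean_differences(ratings).items():
    print(f"{milestone}: {diff:+.2f}")
```

Tracking these differences per cycle is one way a program could flag milestones where resident and committee perceptions diverge.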

A second reason for a discrepancy between CCC and self-ratings could be the perceptions the trainees have of their training. An example from Table 2 where our residents have consistently rated themselves lower than the CCC rated them is SBP3, which describes elements of laboratory management. Management was indeed being covered consistently, but in a rather fragmented manner throughout the resident curriculum. The residents therefore did not perceive it as a focus of our curriculum and did not rate themselves as being as competent as they were. As a result, we altered the content and title of one of the CP rotations to formally include curricular elements of laboratory management in one identifiable place. This improvement was made as a direct result of our experiences with implementing and evaluating the milestones and has helped our trainees recognize the training they receive in management.

Finally, discrepancies could be due to true curriculum deficits. The best example is SBP7, which covers elements of informatics. We were aware that this was not a particular strength of our program, but we were surprised by how little informatics training and exposure the residents perceived they had obtained. As with laboratory management, the exposure was often fragmented and not explicitly framed as “informatics training.” We have a new faculty member whose main focus is informatics, so we are confident that this will improve over time, using the published proposed curriculum on informatics as a guide. 3 Another example of a programmatic change made after our experience of implementing and evaluating a milestone was the recognition that the curriculum could more fully address aspects of SBP4 (laboratory management as it relates to quality and safety) by having pathology residents attend hospital Safety Oversight Team meetings that included root-cause analysis of serious events within the medical center. Prior to this, resident experiences in this area were highly variable and inconsistent. In sum, these examples highlight how the milestone assessment process can identify deficiencies in a curriculum and suggest potential solutions.

In conclusion, having the milestone data generated by a consistent cohort of faculty, as opposed to just 3 or 4 faculty members or a transient committee, lends significantly more weight to these data when used in discussions with residents. Dividing the labor of formulating the milestone ratings among a large core group of faculty also invests them in the resident education and evaluation process. This division protects any one faculty member from being inundated with all or a large number of milestone assessments. The group effort to formulate the milestone evaluations also lessens the potential that personality conflicts or favoritism between a single faculty member and a particular resident will affect the final ratings. Finally, it is important to follow milestone rating trends, especially differences between CCC and self-evaluations, as these can inform a program about issues that may need to be addressed in the curriculum.

Footnotes

Authors’ Note

The University of Iowa Institutional Review Board (IRB) has approved this project (IRB #201507745; approved 16 July, 2015).

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.