Abstract

Health sciences instructors hold a wide range of opinions about generative artificial intelligence (GenAI) such as ChatGPT, Bing, and Bard; however, many are uncertain about guiding students on how to use technology for assigned writing. Our survey of 62 public health instructors at a single institution highlighted their perceived benefits, limitations, and concerns about student use of GenAI for assigned writing. Perceived benefits included completing tasks unrelated to meaningful learning, such as spellchecking and reference formatting, and supporting certain writing activities such as brainstorming. Several instructors identified preparation for future workplace activities as a meaningful benefit. Important limitations and concerns included the worry that GenAI would inhibit learning, as well as ethical and equity-related concerns. Nearly half of instructors expressed concerns about whether using GenAI tools constitutes plagiarism or violates academic integrity. Nearly half also indicated concern about being able to detect whether a student completed an assignment with GenAI tools. Developing thoughtful guidance on technology use for assigned writing is important as it sets standards for academic integrity and supports learning. We used the survey data and applied backward design principles to develop the Brave New Words framework and three-step process described in this paper. This framework is intended to help instructors think through and ultimately develop guidelines for students on whether and how they should use technology for assigned writing. An example assignment and activity are used to demonstrate the framework.

Health sciences instructors hold a wide range of opinions on generative artificial intelligence (GenAI) technologies like ChatGPT, Bing, or Bard for student writing. While some feel that using this type of technology is inappropriate, unethical, or unhelpful for student writing (Baron, 2023), others are enthusiastic about bringing it into their classroom (Beck & Levine, 2023; Elliot, 2023; P. Greene, 2023). Still others have mixed feelings about such technology (Bruder, 2023; Moore, 2023).

Regardless of their stance, many instructors are uncertain about how to develop technology guidelines for their assignments (Robin, 2023). Such guidance is important as it sets standards for academic integrity and supports learning (Marche, 2022). We wrote this paper to help instructors think through and ultimately create guidelines for students on whether and how they should use technology for assigned writing. Our paper presents a framework with a three-step process for instructors to identify and articulate the most appropriate technology guidelines for their writing assignments and context. Survey data collected at one institution that informed this framework are also presented.

Data Informing the Brave New Words Framework

In the months after ChatGPT was released, two of the three authors, a writing specialist and a pedagogy specialist, began preparing a “Brave New Words” workshop for University of Michigan School of Public Health instructors. Our goal for the workshop was to help instructors consider whether and how they wanted students to incorporate technology in their assigned writing. To better understand the needs and concerns of these instructors, all three authors (the third specializing in the scholarship of teaching and learning in public health education) administered a survey focused on the perceived benefits and limitations of, and concerns about, student GenAI technology use for writing assignments. The study was determined exempt by the university’s Institutional Review Board (HUM00233651). To analyze the open-ended responses, we used a data-led thematic analysis (Braun et al., 2019) and identified emergent themes. More information about the study methodology can be found in Appendix 1.

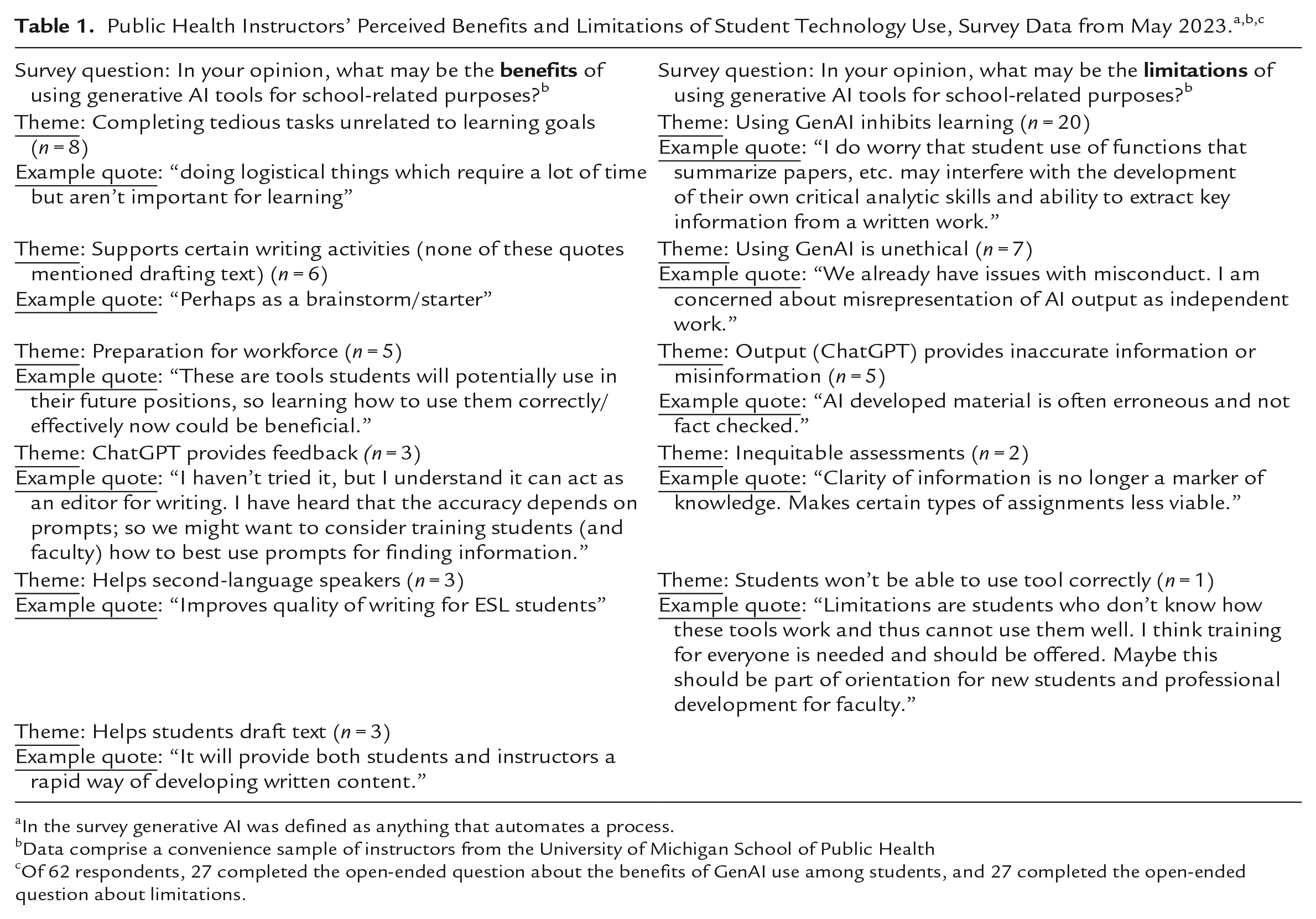

In our survey, instructors identified their perceived benefits of student use of GenAI for school-related purposes. These included students’ ability to use GenAI to complete tedious tasks unrelated to meaningful learning, but also to brainstorm, compose text, and edit writing (Table 1). These activities have been identified as benefits elsewhere (Beck & Levine, 2023). Several respondents to our survey indicated that asking students to use new technologies will help them prepare for the future workforce (Tables 1 and 2). Workforce preparation has been cited as an advantage by others (Villasenor, 2023), and the utility of AI for public health activities has been described (Ayers et al., 2023; Baclic et al., 2020; Benke & Benke, 2018).

In the survey, generative AI was defined as anything that automates a process.

Data comprise a convenience sample of instructors from the University of Michigan School of Public Health.

Of 62 respondents, 27 completed the open-ended question about the benefits of GenAI use among students, and 27 completed the open-ended question about limitations.

Responses to the “check all that apply” survey question

In our survey, instructors articulated several limitations of student use of GenAI for school-related purposes; many of these focused on ChatGPT (Table 1). A common theme in these data was the belief that using such technology would inhibit learning (Table 2), which is echoed in scholarly discourse (Baron, 2023; Barrot, 2023).

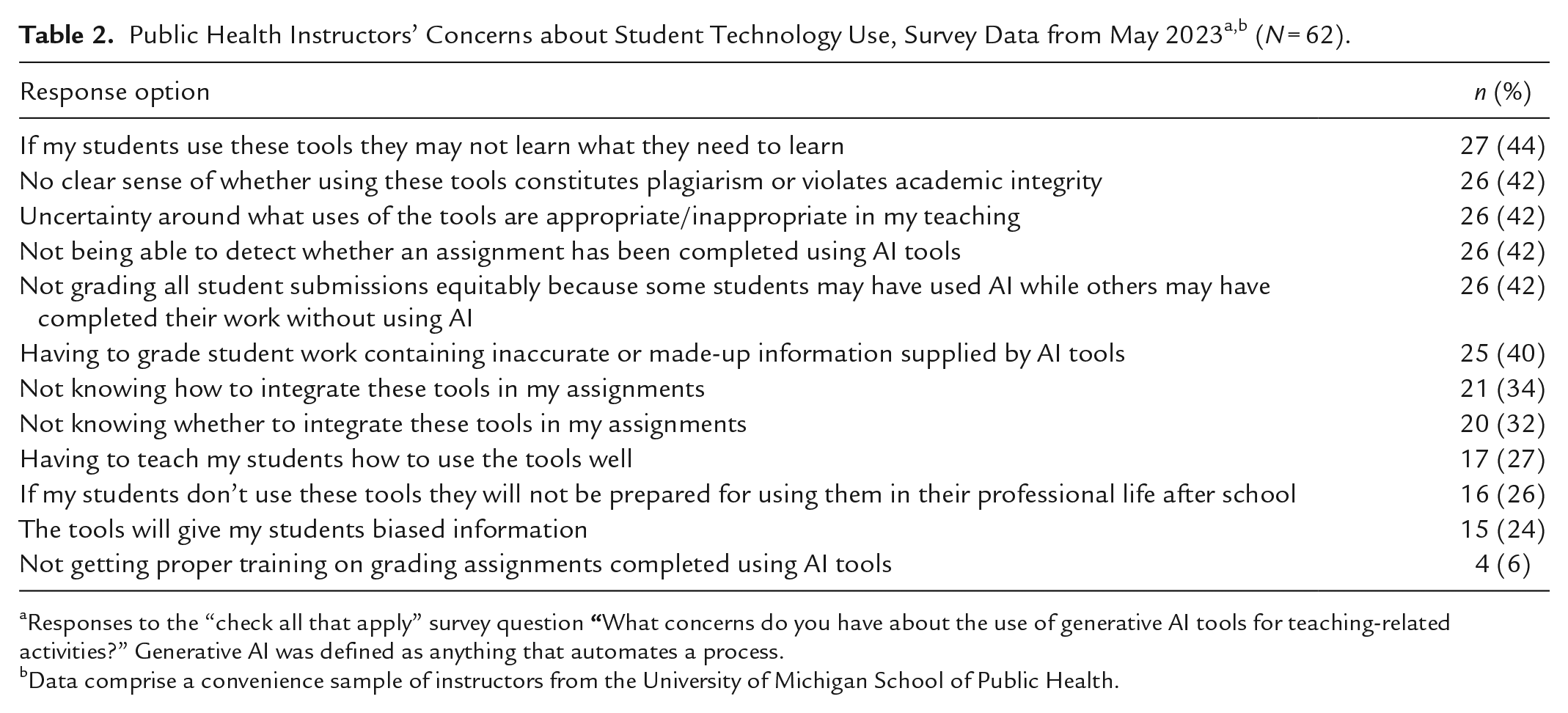

Several respondents stated concerns that student use of GenAI for schoolwork would be unethical or inequitable, and such concerns are highlighted in Table 2. For example, 42% of instructors expressed a concern about grading equity when only some students completed an assignment with GenAI. Others indicated concerns that using GenAI would result in inaccuracies. In fact, AI models are not a reliable source of accurate information, and ChatGPT in particular acknowledges this on its host website (OpenAI, n.d.). GenAI platforms are also known to provide biased information (Baum & Villasenor, n.d.; Schwartz et al., 2022).

Prominent instructor concerns included uncertainty about whether using GenAI tools constitutes plagiarism or violates academic integrity, and whether it is possible to detect GenAI tool use. These concerns arose in our data (Table 2) as well as through informal discussion during our training workshops, and they are intertwined. First, it is not possible to identify with certainty whether a student used GenAI. Software such as ChatGPT generates a new output each time a prompt is given. That is, repeatedly entering the same prompt (e.g., “Write a paper about the prevalence of malaria in Ecuadorian children”) yields different text each time, preventing an instructor from comparing the student’s writing to an “original text” (Bodnick, 2023).

Plagiarism involves a person using and taking credit for words other than their own. Prior to the introduction of ChatGPT, it was, at least theoretically, possible to access an original text and compare it to the text in question to assess plagiarism. “Plagiarism” per se is no longer clear cut in the age of ChatGPT (Barnett, n.d.; Vynck, 2023). Additionally, while some detection software applications exist, at this time there is no way to definitively assess whether ChatGPT was used to generate text. Finally, universities are not uniform in the way they conceptualize the relationships between GenAI, academic integrity, and plagiarism (Barnett, n.d.), which can create uncertainty. A recent survey of 100 US institutions (Caulfield, 2023a) showed that eight out of ten universities offer no clear policy guidelines on AI writing tools, leaving instructors to make their own determinations about what constitutes plagiarism or academic dishonesty in their courses.

Summary of Our Approach to Designing the Framework

To guide the development of our framework, we used a backward design approach (Wiggins & McTighe, 2018). This approach asks an instructor to begin by clarifying their desired learning outcomes and then develop content to help students meet those outcomes. For example, an instructor developing a writing assignment would first clarify and reflect on their desired learning outcomes and then craft the assignment to help students reach those outcomes. In our framework, described below, we similarly ask instructors to start by focusing on their learning objectives for an assignment, and then to create technology policies that help students reach those objectives.

Our survey results and published discourse further informed our framework. First, it is clear from our data that many instructors feel uncertain about technology as it relates to assigned writing and our framework provides a tool to help them create technology guidelines. We also integrate consideration of ethical factors such as data privacy, accuracy concerns, equity, and transparency about technology use because these factors emerged as areas of uncertainty in our data. We have also used discussion of these issues in published scientific discourse to inform our framework. While the concepts of plagiarism and academic integrity arose in our data and have been the focus of debate since the emergence of ChatGPT, we do not directly integrate these concepts. This is partly because of the complexity of this topic in the context of GenAI and partly because institutions will have their own policies on academic integrity and plagiarism guiding instructors and students alongside our framework.

Framework: A Three-Step Process to Develop Technology Guidelines for Public Health Writing Assignments

While our survey and framework were inspired by the introduction of GenAI technologies, our framework asks instructors to consider technology broadly as anything that automates a process; for example, large language models which are sometimes referred to as GenAI (ChatGPT, etc.), search engines (Google, PubMed, etc.), JANE (Journal Author Name Estimator), data analysis software, Excel, DALL-E, grammar check, spell check, and reference formatting software. This broader definition of technology helps instructors reflect on why they are using a given technology and encourages them to compare technologies such as spellcheck and ChatGPT that may have different impacts on learning. We define writing as any activity that helps bring a written product to fruition, including reading, annotating and synthesizing information, brainstorming, writing draft text, creating visual elements (e.g., tables, figures), revising, editing, and formatting. Breaking writing down into such activities can help instructors specify the benefits of writing for students, and how technology might appropriately support or hinder learning for a given writing activity.

Writing offers numerous benefits for health professional students (Anderson & August, 2022; Bean & Melzer, 2021; Carillo, 2020; Dwyer et al., 2014; Facione, 1990; L. Greene, 2010; Kurfiss, 1988; National Research Council, 2001; Pace, 2004; Quitadamo & Kurtz, 2007; University of Michigan Sweetland Center for Writing, 2015). Taking these benefits into consideration helps instructors identify their learning objectives for student writing and parse how technology may support or undermine such learning. Below, we focus on two of many skills that students may gain through writing: critically evaluating problems and addressing such problems using critical thinking skills. Using the context of population health, we summarize these activities below and give examples of how technology may affect their development.

Using GenAI to Promote Critical Thinking Through Writing in Population Health

Students can learn about population health issues through writing assignments (Anderson & August, 2022). To develop a written product such as a fact sheet or research paper that addresses a population health issue, students first need to understand the problem by identifying key readings, reading the material, and making judgments about the arguments presented (Bean et al., 2013; Carillo, 2020). Students must synthesize the information they consume and decide which is important to convey in their written product (Bean et al., 2013; Carillo, 2020). A large language model like ChatGPT may be a starting point to help students understand unfamiliar terminology or concepts; however, the ability of ChatGPT to provide prose for a specific readership could potentially reduce the motivation for students to read primary source material. If students avoid reading these materials, they will be deprived of the opportunity to synthesize information and make their own judgments, therefore dampening the benefits of critically evaluating a population health issue (Bean et al., 2013; Carillo, 2020). Likewise, if a student relies on ChatGPT to generate text for their draft, they will be deprived of the chance to build their critical thinking skills.

Alternatively, technology may be helpful given the appropriate strategy or guidance. For example, students could enter their draft text into ChatGPT and receive suggestions on how to improve their arguments (see example activity asking students to do this in Appendix 2). In addition to receiving potentially helpful feedback on their own ideas and text that inspires revision, this activity helps students learn to use ChatGPT effectively. It can also help them learn to critically evaluate ChatGPT’s output, deciding which feedback is helpful and which is not.

Three-Step Process to Help Instructors Clarify Their Thinking and Craft Guidelines

Our framework and process can be used to help instructors clarify the learning objectives of an assignment, identify how technology might help or hinder students in reaching these objectives (or have no material impact), and create guidelines for students for a particular writing assignment.

We use an example writing assignment and in-class activity (Appendix 2) to explain the framework and demonstrate the process. The example assignment and activity are for Master of Public Health students in an epidemiology course. Students are asked to write a draft of an introduction section as part of a scaffolded series of assignments that build toward a research paper. The assignment builds on previous activities such as reading and annotating and choosing a target journal (which defines the readership). The example activity is intended to serve as a model for how instructors may incorporate ChatGPT into their class to promote learning. It asks students to enter their introduction section draft text into ChatGPT and prompt it for writing feedback. Importantly, while the framework is described as a three-step process, in practice it may not be linear and may not include all three steps. For example, an instructor who is using learning objectives prescribed by an accreditation body (such as the Council on Education for Public Health) may skip step 1.

Step 1: Articulate the Assignment’s Learning Objectives

Learning objectives are the foundation on which assignments are built. A learning objective is a clear and concise statement that describes the intended outcome for learning (Wiggins & McTighe, 2018). A well-written learning objective outlines the specific knowledge or skills the students will acquire and demonstrate and uses behavioral verbs that are observable and measurable. Bloom’s Taxonomy (https://bloomstaxonomy.net/), a hierarchical framework for classifying learning objectives, provides a list of such verbs categorized according to the cognitive level at which students should be performing. For example, the student learning objectives for the example assignment are to: (1) apply what they have learned about the structure, tone, and style of academic writing to their introduction section draft; and (2) develop effective arguments about why their research is important, where the research gap is, and how their study addresses the research gap. The learning objectives for the ChatGPT activity are to: (1) write effective prompts that will elicit the most informative output from ChatGPT; and (2) identify which part(s) of the ChatGPT output provides helpful advice about the draft introduction and which advice should be ignored because it is not helpful or not correct. Instructors should align their assignment learning objectives with their course objectives.

Step 2: Identify How Students Could Use Technology for the Assignment, and Impacts on the Learning Objectives

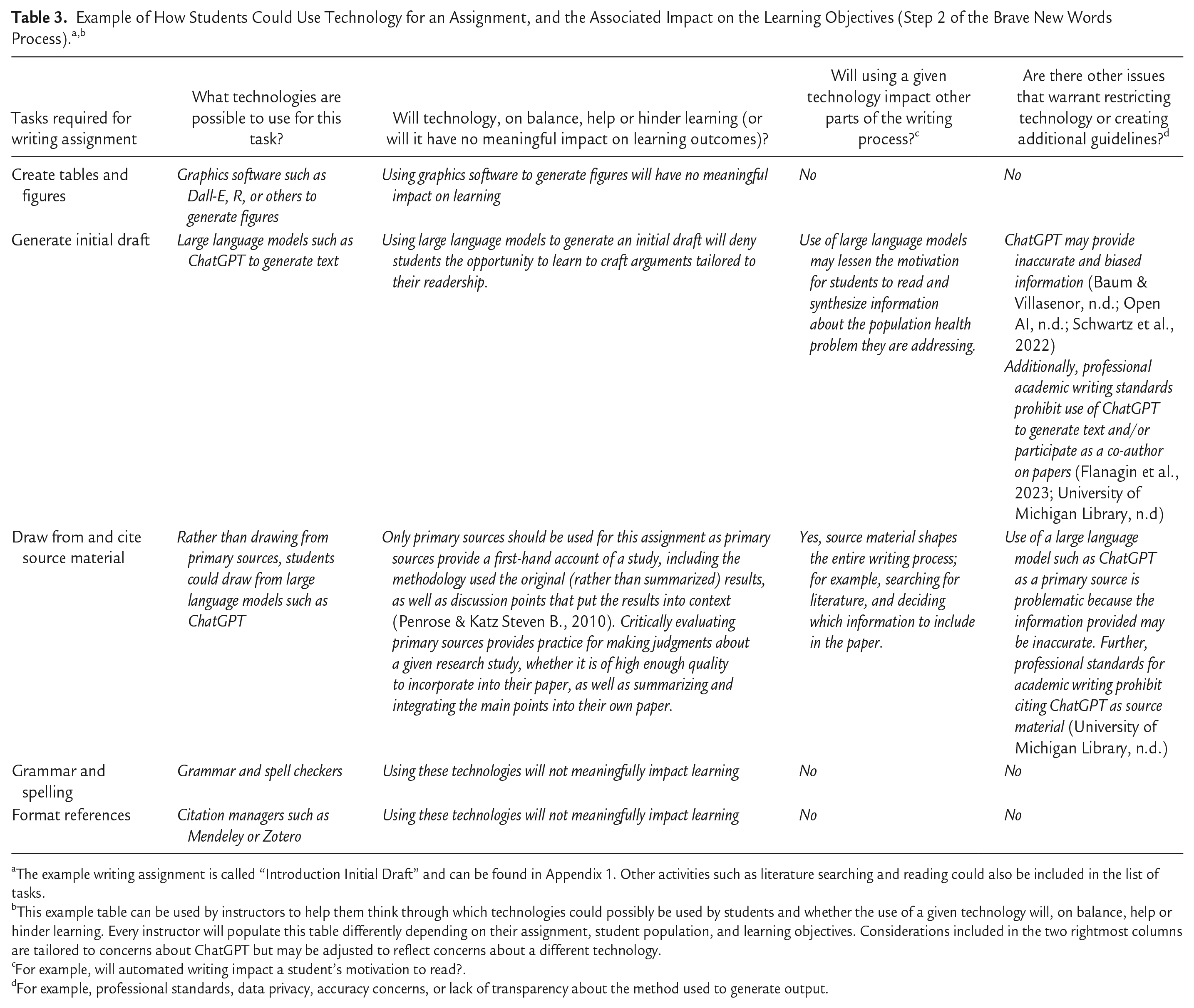

Step 2 involves consideration of what technologies could be used for an assignment, and the associated benefits or drawbacks to learning. First, an instructor should create a list of tasks that students need to do to complete the writing assignment. For the example assignment (the Introduction Initial Draft) in Appendix 2, students need to complete several tasks, such as searching for and identifying source material, reading and annotating this material, developing their research question, and other activities. Example tasks are shown in Table 3.

The example writing assignment is called “Introduction Initial Draft” and can be found in Appendix 2. Other activities such as literature searching and reading could also be included in the list of tasks.

This example table can be used by instructors to help them think through which technologies could possibly be used by students and whether the use of a given technology will, on balance, help or hinder learning. Every instructor will populate this table differently depending on their assignment, student population, and learning objectives. Considerations included in the two rightmost columns are tailored to concerns about ChatGPT but may be adjusted to reflect concerns about a different technology.

For example, will automated writing impact a student’s motivation to read?

For example, professional standards, data privacy, accuracy concerns, or lack of transparency about the method used to generate output.

The next step is deciding which technologies could possibly be used by students, and whether a given technology will be allowed, prohibited, encouraged, or required for each task. To make this decision, instructors should consider the impact of a technology on their learning objectives. Using a spell checker will probably not impact an assignment’s learning objectives but using ChatGPT to write an introduction draft may significantly hinder students from learning. It is important to note that in some cases, students may both benefit and lose out on learning opportunities, and an instructor may need to decide, given the tradeoffs, which is the best option for the assignment and context.

A second consideration is whether a particular technology used in one part of the writing process might impact other parts of the process. For example, the search engine students use to identify their sources will shape the type of information they retrieve, ultimately shaping the content of their paper.

Additionally, instructors should consider the potential impact of accuracy, bias, or equity-related issues that may not have arisen in the initial assessment of a technology’s impact on learning. A final consideration is data privacy: information entered into ChatGPT is not kept private (as stated on the OpenAI website as of August 19, 2023). This lack of privacy may be particularly relevant for some students, for example those who are writing a paper they intend to publish.

Step 3: Develop Your Guidelines

The final step is to develop clear guidelines for students (example guidelines for technology use are provided in the example assignment and activity in Appendix 2). A guideline like “AI is not permitted for this class” needs more specificity to let students know what “AI” means in the context of a particular class, to what assignments and activities the guideline applies, and whether disclosures are required (i.e., what or how technologies were used). Instructors should build from the work they did in Step 2, specifying whether technology is required, permitted, or not permitted for each task, and why.

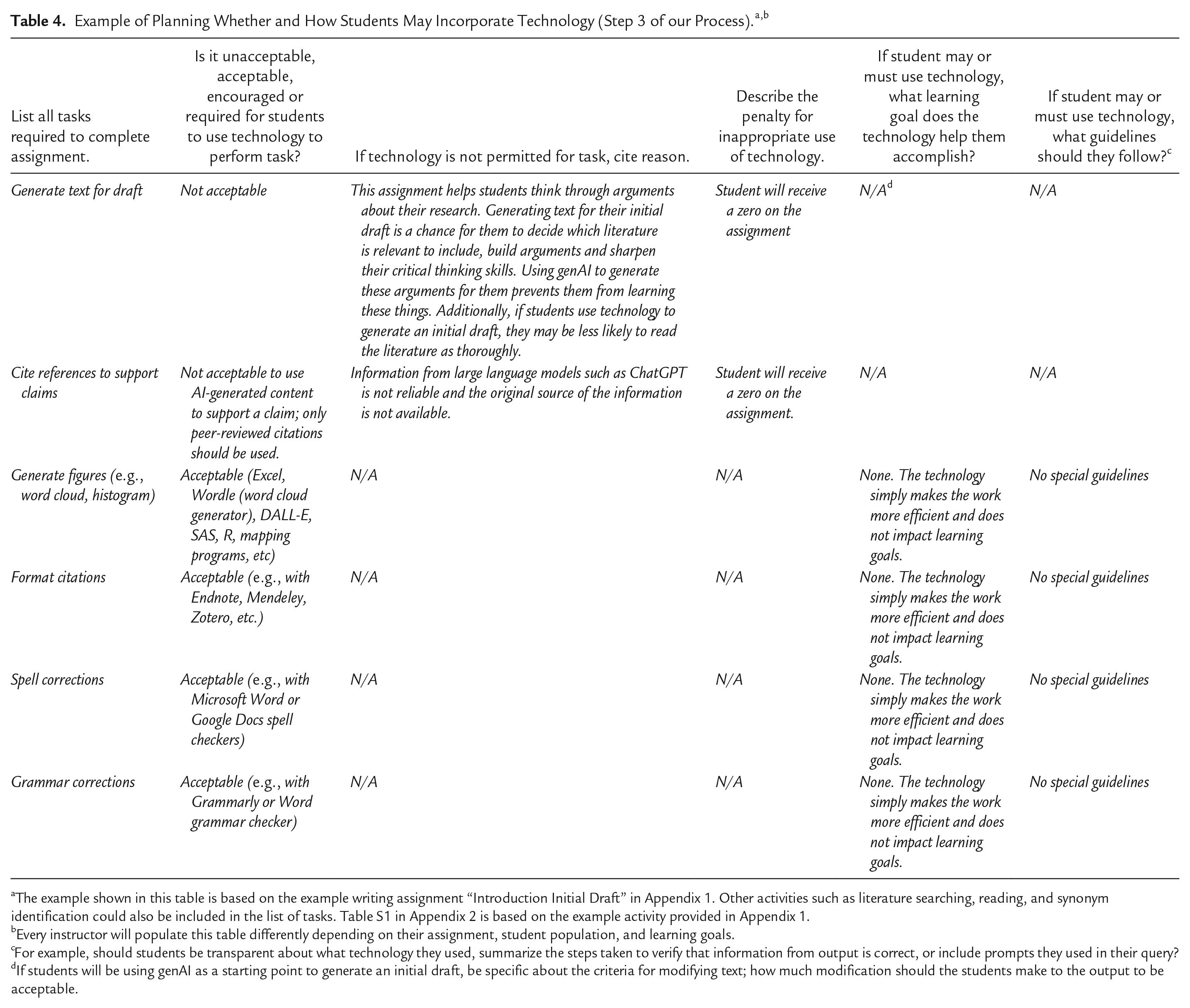

Table 4 shows an example of how an instructor might plan whether and how students may incorporate technology into their assigned writing. The goal of step 3 is to identify whether a given technology is unacceptable, acceptable, encouraged, or required for each task (this is sometimes referred to as prohibitive use, guided use, and unlimited use, respectively, in academic and publishing realms). For permitted or required technologies, instructors should specify what learning objective the technology helps students accomplish or whether the technology simply makes the work more efficient or easier in a way that does not meaningfully impact the learning objective. For prohibited technologies, instructors should specify how each compromises learning. If students will be required to be transparent about which technologies they used, they should be given instructions about how to do this. For example, they may be required to summarize the steps they took to verify that the information from their ChatGPT output is correct, and/or to include the prompts they used in their query.

The example shown in this table is based on the example writing assignment “Introduction Initial Draft” in Appendix 2. Other activities such as literature searching, reading, and synonym identification could also be included in the list of tasks. Table S1 in Appendix 2 is based on the example activity, which is also provided in Appendix 2.

Every instructor will populate this table differently depending on their assignment, student population, and learning goals.

For example, should students be transparent about what technology they used, summarize the steps taken to verify that information from output is correct, or include prompts they used in their query?

If students will be using GenAI as a starting point to generate an initial draft, be specific about the criteria for modifying text, that is, how much students must modify the output for it to be acceptable.
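For instructors who keep their planning table in a spreadsheet or script, the Step 3 decisions can be sketched as a small data structure. The following Python example is only an illustration under stated assumptions: the names `Status`, `TaskGuideline`, `missing_rationales`, and `render_guidelines` are hypothetical, and the three example rows are illustrative entries loosely based on the examples discussed in this paper, not the contents of Table 4. The sketch records a decision and rationale for each task, flags rows that lack a rationale, and renders student-facing guideline text.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    """Decision categories named in Step 3 (labels are illustrative)."""
    NOT_PERMITTED = "not permitted"
    PERMITTED = "permitted"
    ENCOURAGED = "encouraged"
    REQUIRED = "required"


@dataclass
class TaskGuideline:
    """One row of a hypothetical planning table."""
    task: str
    technology: str
    status: Status
    rationale: str        # how the technology affects the learning objective
    disclosure: str = ""  # what students must report, if anything


def missing_rationales(rows):
    """Return the tasks whose guideline has no stated rationale."""
    return [row.task for row in rows if not row.rationale.strip()]


def render_guidelines(rows):
    """Turn the planning rows into one guideline sentence per task."""
    lines = []
    for row in rows:
        line = (f"{row.task}: {row.technology} is {row.status.value}. "
                f"Why: {row.rationale}")
        if row.disclosure:
            line += f" Disclosure: {row.disclosure}"
        lines.append(line)
    return "\n".join(lines)


# Hypothetical rows for illustration only; every instructor will
# populate these differently.
EXAMPLE_ROWS = [
    TaskGuideline(
        "Editing the draft", "spell check", Status.PERMITTED,
        "It does not meaningfully affect the learning objectives."),
    TaskGuideline(
        "Writing the introduction draft", "ChatGPT", Status.NOT_PERMITTED,
        "Generating the draft removes practice in developing arguments."),
    TaskGuideline(
        "Getting feedback on the draft", "ChatGPT", Status.REQUIRED,
        "Students practice writing prompts and evaluating the output.",
        "Include the prompts you used."),
]

if __name__ == "__main__":
    print(render_guidelines(EXAMPLE_ROWS))
```

Requiring a rationale for every row mirrors the recommendation that instructors explain why a technology is required, permitted, or not permitted, so that students see the link to the learning objectives.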

When crafting guidelines and discussing them with students, instructors should consider the impact of technology use on equity. For example, in the scenario in which some students use technology to improve their assignment and some do not use technology—because it is not allowed, because they choose not to, or because they can’t afford to purchase the technology—it might not be equitable to allow it.

Part of training students to write and publish papers is helping them understand relevant professional standards such as authorship standards. These can be integrated into assignment policies and rationale where appropriate. For example, in the health sciences, several professional organizations and journals have stated that AI technologies such as ChatGPT cannot ethically be considered authors. This is because under standard authorship criteria, authors should be accountable for all parts of work performed, should be able to identify which co-authors are responsible for specific other parts of the work, and should have confidence in the integrity of their co-authors’ contributions. Artificial intelligence technologies cannot be held accountable for their contributions and AI technologies should therefore not be included in an author list (Flanagin et al., 2023; Nature, 2023; Thorp, 2023). Additionally, JAMA (the Journal of the American Medical Association) citation styles do not recognize citations for ChatGPT or other large language models. JAMA citation styles only recognize content created by humans and exclude content created by artificial intelligence (University of Michigan Library, n.d.). Finally, instructors should check their university, school, and departmental guidelines and ensure that they are in line with these policies.

Challenges and Lessons Learned

It is important to acknowledge to students that AI technology is new and rapidly changing. Instructors might consider including a disclaimer in their syllabus, such as: “Technology is changing rapidly and the guidance provided in this syllabus may need to change during the course. This change may impact the evaluation of your assignments.” Likewise, we recognize that some of the issues we present in this paper may become irrelevant within years, months, or even weeks. One particularly challenging issue is the inability of instructors to ascertain whether students used certain technologies like ChatGPT, and for this reason we did not describe penalties for use in our guidance examples. This is an evolving issue that may change in the future.

Discussing and even co-creating technology use guidelines with students can help instructors learn from the student perspective and may enhance student support and compliance. Some universities have developed guidance directed to students (e.g., https://genai.umich.edu/guidance/students) and pointing students toward such resources may be helpful.

Final Considerations

Finally, the benefits that assigned writing can provide are only as good as the writing assignments themselves. In our previous work, we have created a set of recommendations for creating effective writing assignments in public health (Anderson & August, 2022; August et al., 2019; August & Trostle, 2018). Some of the key recommendations include presenting a real-world disciplinary problem to be addressed in the written product through critical thinking, describing the purpose of the writing, requiring a document type used in the workplace, identifying the intended readers, allowing for a process to support writing through specific tasks, and explaining the assignment’s requirements and criteria for evaluation. More recommendations, rationale, and examples can be found in our previous work.

Guiding students to use technology in a way that supports their learning will enhance their critical thinking and communication skills, leading to more effective professional practice upon graduation. Ultimately, this will give rise to better population health and preventative healthcare.

Supplemental Material

Supplemental material, sj-docx-1-php-10.1177_23733799241235119 for Brave New Words: A Framework and Process for Developing Technology-Use Guidelines for Student Writing by Ella T. August, Olivia S. Anderson and Frederique A. Laubepin in Pedagogy in Health Promotion

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The University of Michigan School of Public Health provided financial support for the publication of this article.
