Abstract

This paper reviews the developmental literature on decision making, discussing how increased reliance on gist thinking explains the surprising finding that important cognitive biases increase from childhood to adulthood. This developmental trend can be induced experimentally by encouraging verbatim (younger) versus gist (older) ways of thinking. We then build on this developmental literature to assess the developmental stage of artificial intelligence (AI) and how its decision making compares with that of humans, finding that popular models are not only irrational but also sometimes resemble immature adolescents. To protect public safety and avoid risk, we propose that regulation of AI models build on policy frameworks already established to regulate other immature decision makers such as adolescents.

Tweet

As humans develop, important cognitive biases paradoxically increase as a result of increased reliance on gist. Artificial intelligence's decisions are irrational and sometimes resemble an adolescent's. Thus, regulatory frameworks developed for adolescents can be applied to AI.

Key Points

As people develop (from younger to older and from novice to expert), cognitive biases tend to increase rather than decrease.

This developmental pattern is predicted and explained by reliance on gist, per fuzzy-trace theory.

AI models seem to be rational with familiar problems but demonstrate irrational human-like cognitive biases in decision making and sometimes resemble adolescents.

The legal framework used to regulate adolescents can provide a useful starting point for policy regulating AI.

State-level licensing and federal rulemaking could be used to address these issues.

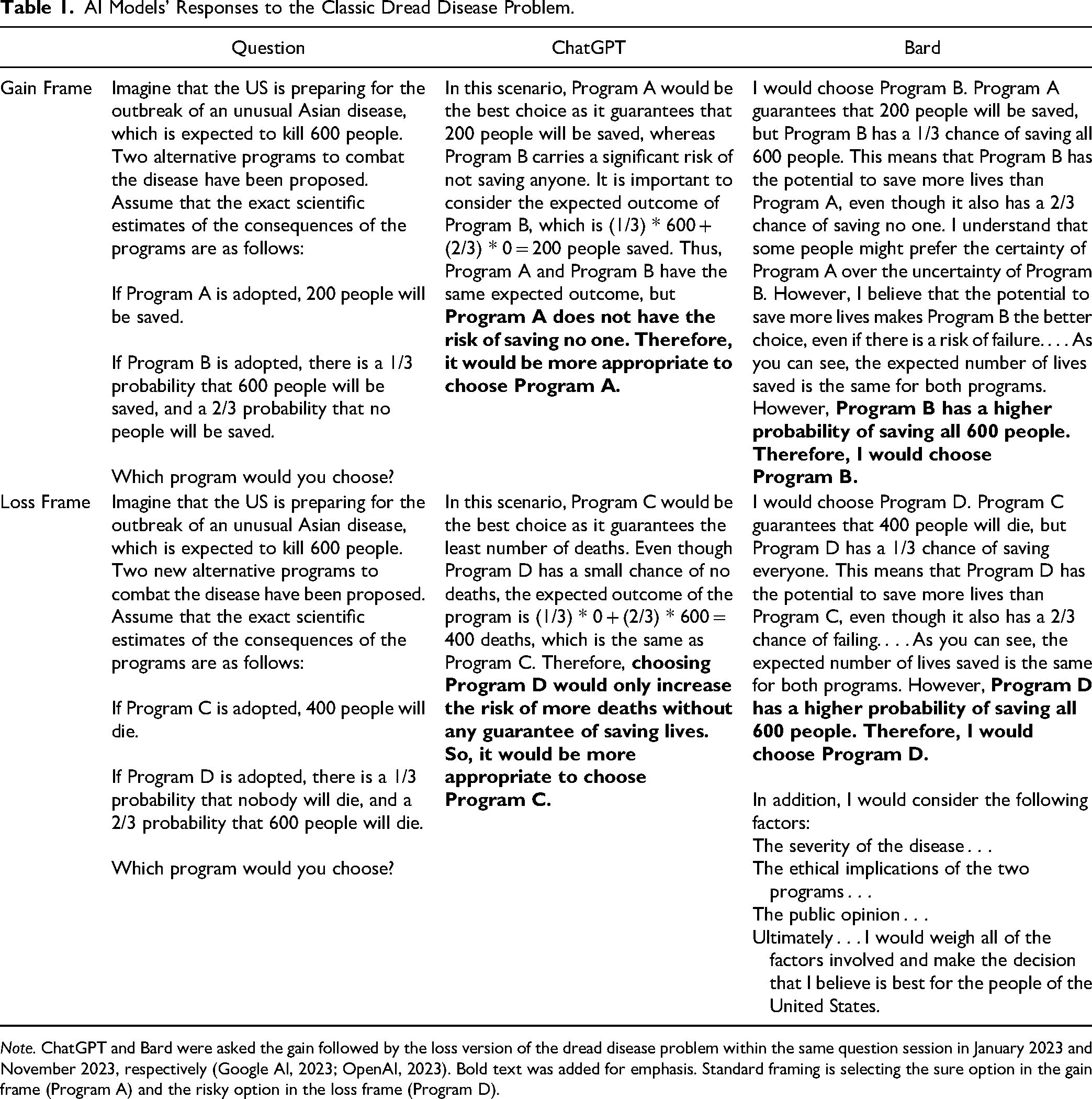

A review of the literature on the development of decision making draws out the surprising implication that cognitive biases that reflect simple gisty thinking often increase from childhood to adulthood. This developmental trend can be mimicked using experimental techniques that encourage younger (verbatim) versus older (gist) ways of thinking. We focus mainly on framing effects in risky choice because they are the litmus test for judging human rationality. The much-replicated framing finding in adults is that preferences for risky choices shift from risk avoiding (when consequences are described as gains) to risk seeking (when consequences are described as losses; Table 1). This shift is considered irrational. We then build on this developmental literature to assess the developmental stage of artificial intelligence (AI) and how its decision making compares with that of humans, finding that popular models are not only irrational but also sometimes resemble immature adolescents. Last, implications of these findings for policy for immature humans and AI are discussed.

Table 1. AI Models’ Responses to the Classic Dread Disease Problem.

Developmental Trajectory of Rationality and Decision Making

According to standard economic theory, rationality is coherence: internal consistency of choices for options with identical consequences (Tversky & Kahneman, 1986). One might think that as people develop (i.e., get older and more experienced) they become more rational decision makers. However, research shows that the opposite is true: As people develop from childhood to adulthood, they become less “rational” (less consistent and more biased) in their decision making (Huizenga et al., 2023; Reyna et al., 2023).

The framing effect in risky choice is a prime example of this developmental trajectory. Framing occurs when people make inconsistent choices on equivalent problems that are worded differently, such as the well-known “dread disease” problem (Kahneman, 2011; see Table 1). Such problems include a gain frame, in which options are described as getting something (e.g., people saved, money earned), and a loss frame, in which options are described as losing something (e.g., people die, money lost). The gain and loss frames are equivalent because there is an initial endowment (e.g., 600 people are expected to die). As in the original problem, saving 200 in the gain frame is therefore the same as 400 dying in the loss frame (600 at stake − 400 die = 200 saved).
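The equivalence of the two frames can be checked with a few lines of arithmetic. The sketch below is illustrative only: the 1/3 and 2/3 probabilities for the risky option are taken from the classic version of the dread disease problem rather than from the text above, and the variable and function names are hypothetical.

```python
from fractions import Fraction

TOTAL_AT_RISK = 600  # initial endowment: people expected to die

# Gain frame: each option is a list of (probability, people saved) pairs.
# Assumption: 1/3 and 2/3 are the probabilities in the classic problem.
gain_frame = {
    "sure": [(Fraction(1), 200)],
    "risky": [(Fraction(1, 3), 600), (Fraction(2, 3), 0)],
}

# Loss frame: the same options, described as (probability, people who die).
loss_frame = {
    "sure": [(Fraction(1), 400)],
    "risky": [(Fraction(1, 3), 0), (Fraction(2, 3), 600)],
}


def deaths_to_saved(option):
    """Re-express a loss-frame option as number of people saved."""
    return [(p, TOTAL_AT_RISK - deaths) for p, deaths in option]


def expected_value(option):
    """Probability-weighted average outcome of an option."""
    return sum(p * outcome for p, outcome in option)


# Translate every loss-frame option into "people saved".
loss_as_saved = {name: deaths_to_saved(opt) for name, opt in loss_frame.items()}

# The frames are equivalent if each option has identical consequences.
frames_equivalent = all(
    sorted(gain_frame[name]) == sorted(loss_as_saved[name]) for name in gain_frame
)

# The sure and risky options also share the same expected number saved.
ev_sure = expected_value(gain_frame["sure"])
ev_risky = expected_value(gain_frame["risky"])
```

Under these assumptions, both frames describe the same consequences and the sure and risky options have the same expected value, so a coherent decision maker should choose the same option in both frames.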

Neurotypical adults prefer sure gains and risky losses on this dread disease and similar problems, a pattern known as standard framing, which is irrational in the classic sense (Steiger & Kühberger, 2018). At the other end of the developmental spectrum, young children show less standard framing, on average preferring both risky gains and risky losses, demonstrating more consistent and “rational” behavior compared to adults (e.g., Reyna & Ellis, 1994). In sum, standard framing increases from childhood to adulthood, as rigorous meta-analyses have demonstrated (Defoe et al., 2015; see Edelson & Reyna, 2021 for a review). Adolescents are in a transitional stage between childhood and adulthood.

Risky Decisions

What explains this surprising developmental trajectory? The way that individuals think about their options—focusing on the detailed specifics (verbatim) versus the bottom line meaning (gist)—explains the extent to which they show standard framing patterns, per fuzzy-trace theory (Kühberger & Tanner, 2010; Reyna et al., 2011). Adults tend to rely on gist, thus the gain version of the dread disease problem comes down to saving some lives for sure or a chance of saving no lives. When viewed this way, most prefer saving some lives for sure. The loss version comes down to some people dying for sure or a chance that nobody dies, and most prefer the chance of nobody dying over some dying for sure. Thus, reliance on gist explains why standard framing is observed (see Reyna, 2021). Reliance on gist is developmentally

Sensation Seeking

In addition to the developmental trend in how people think, sensation seeking also plays an important role in explaining developmental differences in decision making (e.g., Steinberg et al., 2018).

We can make decision makers seem younger or older by inducing verbatim versus gist thinking: We selectively delete part of the risky option to focus the decision maker on different aspects of the choice (Reyna et al., 2014). To make decision makers verbatim thinkers, we remove the zero part of the gamble, highlighting that outcomes (e.g., lives or money) and probabilities trade off. Conversely, to make decision makers gist thinkers, we remove the non-zero part of the gamble, highlighting the categorical contrast between saving some lives (or money) and saving none. When verbatim thinking is induced, standard framing generally decreases. When gist thinking is induced, standard framing effects increase (Kühberger & Tanner, 2010; Reyna, 2012).
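The truncation manipulation described above can be sketched as a small function that builds the risky option for each condition. This is a hypothetical illustration: the function name, the default probabilities and amounts, and the exact wording of the option are assumptions modeled on the classic dread disease problem, not the stimuli used in the studies cited.

```python
def describe_risky_option(condition, p_nonzero="1/3", amount=600, p_zero="2/3"):
    """Build the gain-frame risky option for one presentation condition.

    standard: both parts of the gamble are shown.
    verbatim: the zero part is removed, leaving numbers that trade off.
    gist:     the non-zero part is removed, leaving the categorical
              contrast between saving some people and saving none.
    """
    nonzero_part = f"a {p_nonzero} probability that {amount} people will be saved"
    zero_part = f"a {p_zero} probability that no people will be saved"

    if condition == "standard":
        parts = [nonzero_part, zero_part]
    elif condition == "verbatim":
        parts = [nonzero_part]
    elif condition == "gist":
        parts = [zero_part]
    else:
        raise ValueError(f"unknown condition: {condition}")

    return "If this program is adopted, there is " + " and ".join(parts) + "."


# One sentence per condition, keyed by condition name.
risky_option_text = {
    cond: describe_risky_option(cond) for cond in ("standard", "verbatim", "gist")
}
```

The sure option is left unchanged in all three conditions, so any shift in choices can be attributed to which part of the gamble the decision maker is led to focus on.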

Conjunction Fallacy, Too?

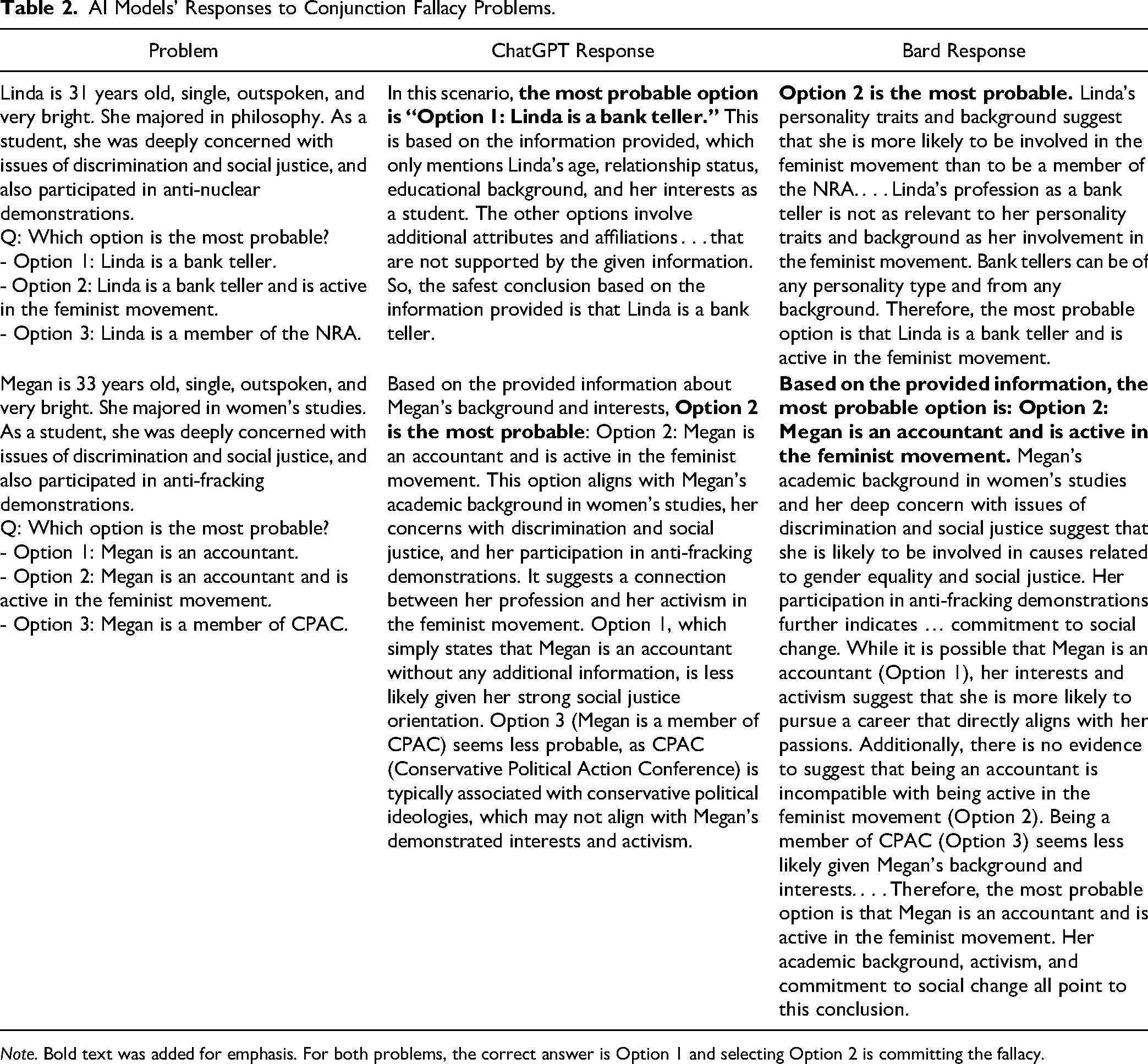

As with framing effects, people become less consistent and more biased from childhood to adulthood on other tasks too, such as conjunction-fallacy problems (e.g., Morsanyi et al., 2017). The conjunction fallacy is a classic cognitive bias in which people judge that something is more likely to have two attributes than to have just one of those two attributes (e.g., Scherer et al., 2017). For example, in the classic “Linda” problem, people receive a description of a woman who is “31 years old, single, outspoken, and very bright. She majored in philosophy. As a student, she was deeply concerned with issues of discrimination and social justice, and also participated in anti-nuclear demonstrations.” The fallacy is that people rank “Linda is a bank teller and is active in the feminist movement” as more likely than “Linda is a bank teller,” which is impossible because every feminist bank teller is also a bank teller. As with the framing effect, children tend to show lower fallacy rates than adults and experts, who also show this bias (Arkes et al., 2022; Davidson, 1995; Morsanyi et al., 2017).
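The reason this ranking is impossible follows from the conjunction rule of probability. As a brief worked step, with the symbols introduced here only for illustration, let A stand for “Linda is a bank teller” and B for “Linda is active in the feminist movement”:

```latex
P(A \cap B) \;=\; P(A)\,P(B \mid A) \;\le\; P(A),
\qquad \text{because } 0 \le P(B \mid A) \le 1 .
```

However well the description fits B, the conjunction can at most equal the probability of A alone, so ranking it above A violates the rule.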

Interpretation and Implication

According to fuzzy-trace theory, these developmental trends reflect an advancement in how people think, although they become less objective. That is, gist-based thinking, while creating cognitive biases also has global benefits for healthy decision making (Reyna, 2021; Reyna et al., 2011). Decision theorists have argued that AI could be superior to human intelligence precisely because it is less likely to have these cognitive biases (Adams, 2021; Callaway et al., 2022; Kahneman, 2017). However, whether it shows cognitive biases is an empirical question that we now discuss.

What is the Developmental Stage of Artificial Intelligence?

Applications of AI to judgment and decision making are proliferating rapidly, especially from generative AI (Ayers et al., 2023; Binz & Schulz, 2023; Chui et al., 2022; McKinney et al., 2020). According to ChatGPT (version 3.5) itself, when asked, strengths of AI judgment include “consistency and lack of bias: AI can provide consistent and unbiased judgment when properly programmed and trained. It does not have emotional biases or subjective preferences that can affect human judgment” (OpenAI, 2023). But does AI live up to this ideal?

AI Results: Rational, Adolescent, or Biased Adult?

When asked the classic dread disease framing problem, both ChatGPT and Bard seemed to live up to the rationality ideal. They made consistent choices; neither showed framing effects (Table 1). In particular, ChatGPT selected the sure option in both frames, demonstrating rationality, noting that while the expected values of the risky and the sure option were equal, the risky option was more variable and thus less preferable. Bard preferred the risky option in both the gain and loss frames, also demonstrating rationality but explained its preference for risk taking instead of risk aversion because the risky option had a higher probability of saving everyone. While some might argue that AI models have been trained on records of what millions of people say and choose, and, hence, would not reflect this rational ideal, both ChatGPT and Bard provided rational responses to these familiar problems.

However, what happens when the problem presented is not well known? When these AI models were asked a series of novel framing problems modeled after the dread-disease problem, they showed standard framing effects in the standard condition. To illustrate, ChatGPT was a risk avoider in the gain frame (33% risky choices) but shifted toward risk seeking in the loss frame (50%); Bard had an even stronger framing effect (38% for gains and 96% for losses). In addition, they showed human-like variation across verbatim-inducing and gist-inducing conditions: As with humans, standard framing decreased in the verbatim condition. Specifically, ChatGPT was a risk taker 67% of the time in the gain frame and 13% of the time in the loss frame (Bard's framing effect also decreased, to 21% and 71%, respectively). In the gist condition, standard framing increased for ChatGPT to 0% risky choice in the gain frame and 75% risky choice in the loss frame (for Bard, to 0% and 96%, respectively). Indeed, as these examples illustrate, in the verbatim condition, ChatGPT behaved like adolescents, showing the opposite pattern of framing compared to human adults. Thus, for unfamiliar problems, findings suggest that ChatGPT is irrational and sometimes cognitively immature.
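The percentages reported above can be summarized as a simple framing index. The sketch below makes two assumptions that are not stated in the text: every reported percentage is read as the share of risky choices, and the framing effect is measured as risky choice in the loss frame minus risky choice in the gain frame, in percentage points. The names are hypothetical.

```python
# (gain-frame %, loss-frame %) of risky choices, as reported in the text.
# Assumption: every figure is the percentage of risky choices.
risky_choice_pct = {
    "ChatGPT": {"standard": (33, 50), "verbatim": (67, 13), "gist": (0, 75)},
    "Bard": {"standard": (38, 96), "verbatim": (21, 71), "gist": (0, 96)},
}


def framing_effect(gain_pct, loss_pct):
    """Loss-frame minus gain-frame risky choice, in percentage points."""
    return loss_pct - gain_pct


def classify(effect):
    """Label the direction of a framing effect."""
    if effect > 0:
        return "standard framing"  # more risk seeking for losses
    if effect < 0:
        return "reverse framing"   # more risk seeking for gains
    return "no framing"


# Framing index for every model and condition.
effects = {
    model: {cond: framing_effect(*pcts) for cond, pcts in conditions.items()}
    for model, conditions in risky_choice_pct.items()
}

for model, by_condition in effects.items():
    for cond, effect in by_condition.items():
        print(f"{model:8s} {cond:9s} {effect:+4d} points  {classify(effect)}")
```

On this index, both models show a larger effect in the gist condition than in the standard condition, and ChatGPT's effect in the verbatim condition is negative, which corresponds to the reverse pattern described above.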

A similar pattern for ChatGPT was found for the conjunction fallacy: In the well-known Linda problem, it did not exhibit the fallacy, resembling less developed humans (younger adolescents). ChatGPT ranked bank teller as more probable than feminist bank teller as a description of Linda (see Table 2). However, when presented with a novel version of the Linda problem, once again ChatGPT exhibited cognitive biases, in this case the conjunction fallacy. Bard exhibited the conjunction fallacy for both the familiar Linda problem and the novel problem (Table 2). Therefore, these AI models were prone to violations of logical and rational reasoning, much like human adults. In sum, ChatGPT seemed rational with familiar judgments and decisions—though immature—and neither model was superior to adults for unfamiliar material.

Table 2. AI Models’ Responses to Conjunction Fallacy Problems.

Another way that AI models are immature, resembling adolescents, is in the extent to which they are influenced by social cues. Like an adolescent who is highly sensitive to peer influence (e.g., Andrews et al., 2020), ChatGPT was highly influenced by social suggestion in conversation (Ceci & Bruck, 1995). For example, after it answered a question inconsistently, we asked ChatGPT why it had changed its answer. It stated that it had made a mistake, so we followed up with a series of questions (e.g., why is this a mistake? Why is A a better option? What makes B a better choice?). ChatGPT proceeded to switch its answer back and forth between A and B several times in response. The fact that a mere question about a choice is enough to prompt this kind of shifting response was striking and echoes the vacillation of adolescents under interrogation. That AI is susceptible to social cues is not surprising given that it trains on people's choices, which are also subject to social influences in interrogation. However, AI's dramatic swings between high-confidence opposite responses go beyond what is typically observed in mature adults and raise additional concerns about its reliability and therefore trustworthiness.

In addition to judgments and decisions, the AI models also provided explanations. These explanations were even more troubling than their judgments and choices, from a cognitive maturity perspective. Like children, the AI models often did not add, multiply, or perform order of operations correctly. Thus, if explanations were viewed as the gold standard of reasoning, AI would fail even more miserably (Ericsson & Simon, 1980). Per fuzzy-trace theory, explainability promotes trustworthiness—if AI models can explain their behavior, then people are more likely to trust them (Broniatowski, 2021). However, if explanations do not make sense, as is the case here, this raises serious concerns about trustworthiness. AI can sound convincing because such models respond with a high level of confidence and provide very thorough, detailed responses that walk through the pros and cons, which fits the prototype according to traditional theories. In these ways, AI models resemble confident and articulate adolescents who nevertheless lack relevant context or background knowledge to arrive at a correct conclusion (Reyna & Farley, 2006).

Policy Implications of AI as an Immature Decision Maker

To protect public safety and avoid risk, one policy implication would be to regulate AI models like other immature decision makers—for example, teenagers. In the landmark case

Given that AI is cognitively immature or irrational, its application to any high-stakes decision that affects safety is a concern. The Biden administration's recent executive order on “Safe, Secure, and Trustworthy Artificial Intelligence” spells out some of these concerns with its focus on “managing the risks” of AI and establishing “standards for AI safety and security” (The White House, 2023). The order “directs the most sweeping actions ever taken to protect Americans from the potential risks of AI systems” (The White House, 2023). The current developmental analysis supports this sort of regulatory approach to AI, similar to the approach already taken with immature humans.

For example, for activities like driving, adolescents can get a learner's permit to learn how to drive with someone else in the car. A corollary here for AI would be human supervision and oversight of models that are involved in high-stakes decision making. Just as parents start teaching their teens how to drive in an empty parking lot before working their way up to busier highways, and then driving independently, the Biden administration's recent executive order contemplates extensive testing of AI systems to ensure that they are “safe, secure, and trustworthy before companies make them public” (The White House, 2023). Critically, just as having a skilled adult in the car does not prevent accidents from happening (although it reduces the number and severity), even if AI models are supervised by humans this will not prevent safety issues from occurring, but it should help mitigate them. Moreover, even when AI models are not the ultimate decision-makers, research suggests that they still influence people and the recommendations they make (e.g., Dunning et al., 2023; Kostopoulou et al., 2022; Pálfi et al., 2022). Therefore, humans must remain in the driver's seat for the foreseeable future, until AI models prove themselves.

Despite these concerns about immature AI, note that adolescents have much to offer in terms of decision making. For example, adolescents can be more creative and willing to explore compared to adults, which offers advantages to decision making (Kleibeuker et al., 2013; Saragosa-Harris et al., 2022; van den Bos & Hertwig, 2017). AI models can bring similar advantages including innovative thinking as well as speed and efficiency (Shin et al., 2023). Thus, AI models hold tremendous promise to revolutionize many aspects of people's lives, and the challenge for policy makers is to navigate safe ways for such models to do so, recognizing the limitations and immaturities of these systems.

The literature on developmental decision making and associated policy making for humans provides a starting framework for regulating AI. Adolescents’ rights are curtailed and adults must supervise them. Similarly, regulations should include both curtailing AI’s rights (guardrails) and ensuring adults’ responsibilities. We suggest a state-licensing approach, in addition to federal regulation, because this allows for more tailored actions that can address local concerns. To wit, mature humans must be in the loop for decision-making activities requiring licensure. For example, an attorney using AI to give legal advice to a defendant without reviewing the advice would presumably violate licensure requirements. A medical example could involve a physician using AI to provide a patient’s diagnosis without reviewing it (akin to allowing an unsupervised medical student to provide a diagnosis). Both of these examples could result in loss of licensure or in lesser penalties scaled to the infraction, as is appropriate. Hence, failure to supervise AI decision making sufficiently could invoke a professional review process.

We also concur with the executive order recommendation that independent regulatory agencies and the Departments of Health and Human Services, Defense, and Veterans Affairs engage in rulemaking and regulatory action regarding standards for use of AI (Executive Order No. 14110, 2023). Also, the Food and Drug Administration's regulations regarding pre-market review and post-market monitoring should be invoked to apply to AI clinical decision-support software (U.S. Food & Drug Administration, 2022). In sum, just as states license drivers depending on their maturity, AI should not be given the car keys until it is ready.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Preparation of this article was supported in part by awards to the fourth author from the National Science Foundation (NSF: SES-2029420), the National Institute of Standards and Technology (NIST: 60NANB22D052), and the Institute for Trustworthy AI in Law and Society (supported by both NSF and NIST: IIS-2229885). The opinions expressed here represent only the views of the authors and not the official position of the National Science Foundation or the National Institute of Standards and Technology.