Abstract

To examine the psychometric properties of the Multifactorial Memory Questionnaire (MMQ) in older adults with subjective memory complaints. The three MMQ subscales (Satisfaction, Ability, and Strategy) were administered twice, with a 3-month interval. The test

Keywords

Introduction

Subjective memory complaints (SMC) reflect an individual’s dissatisfaction with his or her memory function and are common in community-dwelling older adults (Matthews et al., 2020; Mitchell et al., 2014). SMC appears to be associated with poor outcomes, including reduced social participation, increased emotional symptoms (e.g., depression and anxiety), and low quality of life (Mogle et al., 2017; Montejo et al., 2011). Moreover, SMC is reportedly a risk factor for further functional decline, including cognitive decline and increased difficulty in performing activities of daily living (ADLs; Cordier et al., 2019). Subjective memory assessment can help clinicians detect early stages of cognitive decline in older adults with SMC. Interpreting change is a requisite component of clinical decision-making. Moreover, to interpret the measurement results, clinicians have to decide whether the change scores in the subjective memory measures reflect true change beyond measurement error (Seamon et al., 2022). Therefore, a reliable and clinically applicable SMC assessment tool that allows the assessor to effectively distinguish whether the subject has SMC is essential.

The Multifactorial Memory Questionnaire (MMQ; Troyer & Rich, 2002) is among the most commonly used measures for assessing self-perceived memory function in older adults (Troyer et al., 2019). It appears to be a promising measure for two reasons. First, the MMQ includes an independent dimension (i.e., the satisfaction subscale) to assess examinees’ subjective feelings about memory (Troyer & Rich, 2002). SMC is linked to subjective feelings about memory (Hanninen et al., 1994; Lee, 2016); specifically, SMC may have a direct negative impact on emotional symptoms in older adults (Kawagoe et al., 2019; Schweizer et al., 2018). Thus, the MMQ provides more information for assessing SMC in addition to the “ability” (e.g., the frequency of making memory mistakes) and/or “strategy” (e.g., frequency of using memory strategies and aids) dimensions included in most measures. Second, in healthy older adults, the MMQ has shown preliminary good psychometric properties, including test

Reliability refers to the stability of the scores provided by a measure. It is considered a basic psychometric property of all measures; thus, reliability validation is a prerequisite of all psychometric validations. The reliability validation generally includes test

However, the MMQ has not been validated in older adults with SMC. In particular, because psychometric properties are sample dependent (Hobart & Cano, 2009), the previous psychometric evidence from healthy older adults (Troyer & Rich, 2002) cannot be directly generalized to the SMC population. This lack of psychometric evidence may interfere with the interpretation of MMQ scores in older adults with SMC. Furthermore, according to a previous review of the psychometric properties of the MMQ (Troyer et al., 2019), the minimal detectable change (MDC95) of the MMQ has not been examined, which may limit the clinical applicability of this tool (Seamon et al., 2022).

Accordingly, validation of the psychometric properties of the MMQ is essential to determine the utility of the MMQ in the SMC population. The present study aimed to investigate additional psychometric properties of the MMQ in older adults with SMC: test

Methods

This study used a quantitative psychometric, repeated-assessments design (with a 3-month interval) and adhered to the STROBE guideline.

Study Design and Participants

The data were extracted from a research project that investigated the effect of cognitive function training in older adults with SMC; some data have been published in a previous study (Yang et al., 2019). We recruited older adults (age ≥65 years) by convenience sampling, mainly from senior centers and homes. The inclusion criteria were as follows: (1) SMC, defined as reports of at least two memory failures (e.g., forgetting an appointment or someone’s name; Silva et al., 2014); (2) Montreal Cognitive Assessment (MoCA) score >17 points; (3) ability to perform ADL independently (assessed using the Barthel Index and Lawton’s instrumental activities of daily living [IADL] scale; Jekel et al., 2015); and (4) ability to understand and follow written instructions. Participants were excluded if they had major neurocognitive disorders (e.g., dementia) or a rapid decline in cognitive or physical function. The study was approved by the Joint Institutional Review Board (approval no.: 201301045). Informed consent was obtained from the participants.

Procedure

The MMQ was tested in three senior centers and homes throughout Taiwan from 2015 to 2017. Specifically, MMQ data were extracted at two time points during the follow-up period of the previous study (i.e., 3- and 6-month follow-up; Yang et al., 2019). In addition, the data on individual demographics, ADL (i.e., Barthel Index and IADL scales), MoCA, and MMQ (baseline data) scores were extracted. To limit the influence of external factors on the participants’ judgments, assessments were performed in a quiet room under the same conditions and environment throughout the study. The MMQ was filled in by the participants or with the help of a research assistant. All other information was provided by the participants.

Measures

The MMQ (Troyer & Rich, 2002) consists of 57 items that measure the individuals’ self-perceived problems of memory function over the past 2 weeks using a 5-point Likert-type scale (0–4). These items are categorized into three subscales (i.e., Satisfaction, Ability, and Strategy). The Satisfaction subscale (formerly called

The MoCA is a screening tool for cognitive impairment that assesses multiple cognitive domains (e.g., visuospatial/executive function and memory). The scores range from 0 to 30, with higher scores indicating greater cognitive function. A score of ≤17 indicates cognitive impairment (Tsai et al., 2016). The MoCA takes approximately 10 minutes to complete (Nasreddine et al., 2005).

ADL assessment was performed using the Barthel Index and Lawton’s IADL scale (Bouwstra et al., 2019; Lawton & Brody, 1969). The Barthel Index includes 10 items assessing basic self-maintenance skills, such as bathing, dressing, or eating. The scores range from 0 to 100, with higher scores indicating greater independence. Lawton’s IADL scale consists of eight items for assessing more complex activities, such as using public transportation, managing finances, or shopping. The scores range from 0 to 8, with higher scores indicating greater independence.

Data Analysis

Test-Retest Reliability

We calculated intraclass correlation coefficients (ICCs) based on a two-way random-effects model with absolute agreement (ICC[2,1]; Qin et al., 2019; Weir, 2005). An ICC value of ≥.90 was considered to indicate excellent reliability; .75 to .90, good reliability; .50 to .75, moderate reliability; and <.50, poor reliability (Koo & Li, 2016).
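As an illustration of the ICC(2,1) model named above, the following is a minimal sketch that computes the coefficient from the mean squares of a two-way ANOVA without replication and labels it with the cited cutoffs. The function names `icc_2_1` and `interpret_icc` are hypothetical, and the sketch is not the SPSS routine used in the study.

```python
import numpy as np


def icc_2_1(scores):
    """ICC(2,1): two-way random effects, absolute agreement, single measurement.

    scores: array-like of shape (n_participants, k_assessments).
    """
    x = np.asarray(scores, dtype=float)
    n, k = x.shape
    grand = x.mean()
    row_means = x.mean(axis=1)  # one mean per participant
    col_means = x.mean(axis=0)  # one mean per assessment occasion

    # Mean squares for participants, occasions, and residual error
    ms_rows = k * np.sum((row_means - grand) ** 2) / (n - 1)
    ms_cols = n * np.sum((col_means - grand) ** 2) / (k - 1)
    resid = x - row_means[:, None] - col_means[None, :] + grand
    ms_err = np.sum(resid ** 2) / ((n - 1) * (k - 1))

    return (ms_rows - ms_err) / (
        ms_rows + (k - 1) * ms_err + k * (ms_cols - ms_err) / n
    )


def interpret_icc(icc):
    """Label an ICC with the cutoffs cited in the text (Koo & Li, 2016)."""
    if icc >= 0.90:
        return "excellent"
    if icc >= 0.75:
        return "good"
    if icc >= 0.50:
        return "moderate"
    return "poor"
```

Because absolute agreement is used, a systematic shift between the two assessments lowers the coefficient, which a consistency-type ICC would ignore.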

Random Measurement Error

We examined the MMQ’s random measurement error using the standard error of measurement (SEM). Moreover, to improve the subscale scores’ interpretability given the influence of random measurement error, the MDC95 was also calculated.

The SEM is a measure of the degree to which observed scores are spread around a true score, which can be calculated based on the ICC value. Because the SEM is dependent on the unit of measurement, it was expressed as a percentage of the mean (i.e., SEM%) to produce a unitless indicator and allow for comparison across different measures (Hars et al., 2013). The SEM and SEM% formulas are as follows:

In these formulas,

The MDC95 is a threshold for determining, at the 95% confidence level, whether the change score between consecutive assessments exceeds random measurement error and can therefore be regarded as a true change. The MDC95 values were calculated based on the SEM value using the following formula (Haley & Fragala-Pinkham, 2006):

$$\mathrm{MDC}_{95} = 1.96 \times \sqrt{2} \times \mathrm{SEM}$$

In this formula, the value of 1.96 was the z-score corresponding to the 95% confidence interval. The square root of 2 was used to adjust for sampling from repeated assessments. A smaller MDC95 indicates greater reliability (Haley & Fragala-Pinkham, 2006).
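The quantities described in this subsection can be sketched as follows. The SEM formula used here, SD × √(1 − ICC), is the conventional ICC-based definition and is an assumption, because the study's own formula and its choice of SD are not legible in this text. The SEM% and MDC95 computations follow the description above. All function names and the input values in the usage lines are hypothetical.

```python
import math


def sem_from_icc(sd, icc):
    """Standard error of measurement.

    Assumption: conventional formula SEM = SD * sqrt(1 - ICC).
    """
    return sd * math.sqrt(1.0 - icc)


def sem_percent(sem, mean):
    """SEM expressed as a percentage of the mean score (unitless)."""
    return sem / mean * 100.0


def mdc95(sem):
    """Minimal detectable change at the 95% confidence level.

    1.96 is the z-score for 95% confidence; sqrt(2) accounts for the
    error contributed by each of the two assessments.
    """
    return 1.96 * math.sqrt(2.0) * sem


# Hypothetical inputs, not values from the study
sem = sem_from_icc(sd=10.0, icc=0.75)
print(round(sem, 2), round(sem_percent(sem, mean=40.0), 1), round(mdc95(sem), 2))
```

Under these formulas, the MDC95 is a fixed multiple (about 2.77) of the SEM, so any gain in reliability narrows the change threshold proportionally.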

Heteroscedasticity

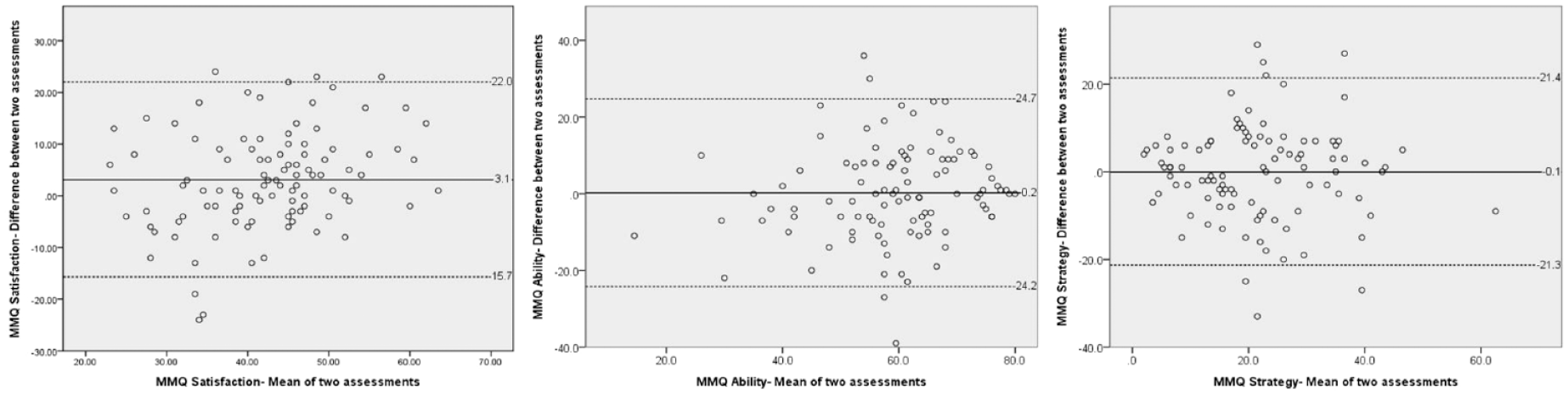

Heteroscedasticity was examined by Bland–Altman plot and Pearson’s correlation coefficient (

The Bland–Altman plot was used to reveal whether the discrepancies between the two assessments were distributed randomly and equally across individuals with diverse levels of self-perceived memory function (i.e., heteroscedasticity). This plot is constructed by plotting the means of

Systematic Bias

Systematic bias was examined by paired

Data were analyzed using the IBM Statistical Package for the Social Sciences (SPSS) version 22.0 software (SPSS Inc., Chicago, IL, USA).

Results

Altogether, 101 participants with a mean age close to 80 years were recruited and completed all study assessments. More than two-thirds of the participants were female. The participants’ characteristics are listed in Table 1.

Demographic Characteristics of the Participants (

Table 2 shows the test

Test–Retest Reliability and Random Measurement Error of the MMQ (Raw Scores;

Total score range of the MMQ Satisfaction subscale, 0 to 72 points; Total score range of the MMQ Ability subscale, 0 to 80 points; Total score range of the MMQ Strategy subscale, 0 to 76 points.

The Bland–Altman plots (Figure 1) showed that the discrepancies between the two assessments were generally randomly distributed for all three subscales. Moreover, the amounts of discrepancy were comparable across individuals with diverse levels of self-perceived memory function. The lower and upper limits of agreement (LOAs) were −15.7 and 22.0 points, respectively, for the MMQ Satisfaction subscale; −24.2 and 24.7 points for the MMQ Ability subscale; and −21.4 and 21.3 points for the MMQ Strategy subscale.
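For readers who want to reproduce limits of agreement like those reported above, here is a minimal sketch. It assumes the conventional definition (mean difference ± 1.96 × SD of the differences) and a second-minus-first sign convention. It also includes one common heteroscedasticity check, the Pearson correlation between the absolute differences and the pair means. Whether the study used exactly these definitions is an assumption, and `bland_altman` is a hypothetical name.

```python
import numpy as np


def bland_altman(first, second):
    """Bias, 95% limits of agreement, and a simple heteroscedasticity check."""
    a = np.asarray(first, dtype=float)
    b = np.asarray(second, dtype=float)
    diff = b - a               # assumed convention: second minus first
    pair_mean = (a + b) / 2.0  # x-axis of the Bland-Altman plot

    bias = diff.mean()
    sd_diff = diff.std(ddof=1)  # sample SD of the differences
    lower = bias - 1.96 * sd_diff
    upper = bias + 1.96 * sd_diff

    # Pearson r between |difference| and pair mean; values near 0 suggest
    # the size of the discrepancy does not depend on the score level.
    r = np.corrcoef(np.abs(diff), pair_mean)[0, 1]
    return {"bias": bias, "lower": lower, "upper": upper, "r": r}


# Hypothetical scores, not study data
result = bland_altman([10, 20, 30, 40], [12, 19, 33, 40])
print({key: round(float(value), 2) for key, value in result.items()})
```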

Bland–Altman plots showing the means (

Pearson’s

Regarding the systematic bias (Table 2), scores of two MMQ subscales were not significantly different across the two assessments (ES = 0.01 and −0.02,

Discussion

Test-Retest Reliability

We found that the ICC values of the three MMQ subscales ranged from .72 to .77, indicating acceptable test

Random Measurement Error

The SEM% of two MMQ subscales was slightly higher than the acceptance criterion of 10% (SEM% of the Satisfaction and Ability subscales were 11.7% and 11.2%, respectively), but that of the Strategy subscale (28.2%) was much higher. These findings indicate that the random measurement errors of the MMQ may not be satisfactory, which may partly explain the modest ICCs mentioned earlier (i.e., scores had been affected by random measurement errors, resulting in lowered test

We found that the MDC95 values of the three MMQ subscales were 13.2 (Satisfaction), 18.4 (Ability), and 16.9 (Strategy) points, indicating the smallest change score that can be regarded as a true change in each domain. Such values are useful for both clinicians and researchers to interpret individuals’ changes in self-reported memory functions. For clinicians, the MDC95 can be used to determine whether the individual changes are beyond random measurement errors (Atkinson & Nevill, 1998; Mellenbergh, 2019). For instance, a Satisfaction subscale score change exceeding 13.2 points (i.e., 14 points or more, because scores are whole numbers) between two assessments can be regarded as a true change. For researchers, the percentage of individuals whose change scores exceed the MDC95 can be calculated as an index of the effectiveness of the intervention (i.e., the higher the percentage, the larger the effects). Accordingly, the MDC95 can be helpful in interpreting the MMQ’s change scores, given the influences of random measurement error on scoring in both clinical and research settings.
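The two uses of the MDC95 described above can be sketched as follows. The thresholds are the subscale values reported in this study. The change scores in the usage lines are hypothetical, as are the function names. Treating change in either direction as potentially true (via the absolute value) is an assumption of this sketch.

```python
# MDC95 thresholds reported in this study (points)
MDC95 = {"Satisfaction": 13.2, "Ability": 18.4, "Strategy": 16.9}


def is_true_change(change, subscale):
    """Clinical use: does one person's change exceed the MDC95?

    Assumption: improvement and decline are both counted (absolute value).
    """
    return abs(change) > MDC95[subscale]


def proportion_true_change(changes, subscale):
    """Research use: share of participants whose change exceeds the MDC95."""
    flags = [is_true_change(c, subscale) for c in changes]
    return sum(flags) / len(flags)


# Hypothetical Satisfaction change scores, not study data
changes = [14, -5, 13, 20, -15]
print(is_true_change(14, "Satisfaction"))               # True
print(proportion_true_change(changes, "Satisfaction"))  # 0.6
```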

Heteroscedasticity

Regarding heteroscedasticity, the Bland–Altman plot showed that the distributions of the discrepancies between two assessments of the three subscales were generally random. Moreover, the Pearson’s

Systematic Bias

Our results showed no statistically significant differences between the test–retest assessments in either the MMQ Ability (

Study Limitations

This study has two limitations. First, convenience sampling was used. Second, the interval between repeated assessments was long (i.e., 3 months). These two issues may limit the findings’ generalizability. Future studies are needed to confirm the MMQ’s validity in older adults with SMC.

Conclusions and Implications

The MMQ appears to be a reliable measure with acceptable test

Clinical Implication and Future Studies

Despite some uncertainty about the psychometric properties of the MMQ subscales, we believe that the results of this study are reliable enough to recommend that researchers use the MMQ in memory training for older adults to assess self-reported memory complaints during the interventions. The multidimensional assessment features of the MMQ can be used to quantify the experience of older adults with SMC in terms of strategies, ability, and satisfaction. Theoretically, the MMQ could provide insights into participants’ perceptions of the progression of their self-perceived memory changes, which could be a starting point for shared decision-making and goal-setting in memory-related interventions.

To some extent, the assessment of SMC can be used as a predictor of decline in cognitive function or ADL and can provide a clinically valuable reference range. Therefore, the use of the MMQ can help clinical professionals identify barriers that may hinder the cognitive function of older adults, enhance the sensitivity of clinical care, and thereby maintain or improve the cognitive function of older adults, providing the most effective individualized care. Additionally, the MMQ was originally developed and validated for use with community-dwelling middle-aged and older adults, but it may also be useful for older adults in other settings or those with memory or cognitive impairments. Further research examining the psychometric properties of the MMQ in other populations with cognitive impairment is recommended.

Footnotes

Acknowledgements

We thank all the older adults who participated in this study and the institutions for their cooperation.

Author Note

This manuscript has not been published before and is not under consideration for publication elsewhere.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

This study was supported by research grants from the Ministry of Science and Technology, Taiwan [NSC102-2628-B-038-006-MY3].