Abstract

We used principal component analysis (PCA) to examine the component structure of a neuropsychological test battery administered to 943 cognitively-normal adults enrolled in the Southern Illinois University (SIU) Longitudinal Cognitive Aging Study (LCAS). Four components explaining the most variance (63.9%) in the dataset were identified: speed/cognitive flexibility, visuospatial skills, word-list learning/memory, and story memory. Regression analyses confirmed that increased age was associated with decreased component scores after controlling for gender and education. Our identified components differ slightly from previous studies using PCA on similar test batteries. Factors such as the demographic characteristics of the study sample, the inclusion of mixed patient and control samples, the inclusion of different test measures in previous studies, and the fact that many neuropsychological test measures assess multiple cognitive processes simultaneously, may help to explain these inconsistencies.

Introduction

Individual cognitive test measures are not typically sensitive enough to distinguish between normal age-related cognitive decline and pathological cognitive decline, especially in the preclinical phase of mild cognitive impairment (MCI) and Alzheimer’s dementia (AD) (Collie et al., 1999; Hayat et al., 2021; Lu et al., 2019; Mansbach et al., 2014; Riordan, 2017; Rivera-Fernández et al., 2021; Wessels et al., 2015). In addition, when scores from multiple individual measures are used in analyses, the likelihood of a Type 1 error increases due to increasing multiplicity in the dataset (Riordan, 2017). Individual measures may also have ceiling/floor effects when administered to older adults experiencing normal cognitive decline (Hoogendam et al., 2014).

To reduce the possibility of a Type 1 error, as well as to increase the sensitivity of diagnostic tools to subtle cognitive change during the preclinical phase of dementia, researchers have begun to employ cognitive component scores (CCS) to measure changes in cognition over time (Collie et al., 1999; Hayat et al., 2021; Hoogendam et al., 2014; Mansbach et al., 2014; Riordan, 2017; Schneider & Goldberg, 2020; Wessels et al., 2015). CCS reduce the possibility of a Type 1 error by reducing multiplicity in the dataset, reduce the ceiling/floor effects of individual measures (Riordan, 2017), and are more highly correlated with biomarkers in many neurological and psychiatric conditions than individual measures (Malek-Ahmadi et al., 2018). Because of the higher sensitivity of CCS to disease states and treatment effects (Millan et al., 2012; Riordan, 2017), these measures have been prioritized in clinical trials, where treatment-associated changes are assessed with multiple measures (Hoogendam et al., 2014). For example, the U.S. Food and Drug Administration (FDA) has endorsed the use of CCS as a primary efficacy endpoint in prodromal AD clinical trials as long as they combine both cognitive and functional measures (Hoogendam et al., 2014). CCS derived from neuropsychological test batteries consist of statistically related measures within that battery (Alavi et al., 2020; Salih Hasan & Abdulazeez, 2021; Schneider & Goldberg, 2020). The CCS are then given names that represent specific cognitive processes or domains, such as learning and memory, visuospatial abilities, executive function (EF), language, attention and processing speed (which are sometimes included within EF), and intelligence (Arango-Lasprilla et al., 2017; Baek et al., 2012; Harp et al., 2021; Harrison, 2019; Harvey, 2019; Hayat et al., 2021; Hubley, 2010; Lambert et al., 2018; Millan et al., 2012; Perry et al., 2017; Riordan, 2017; Schumacher et al., 2019; Smits et al., 2015; Vigliecca, 2021; Wessels et al., 2015).
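The multiplicity argument can be made concrete with a small calculation. The sketch below is illustrative only: it assumes that the tests are statistically independent and that each uses α = .05, neither of which is claimed by the studies cited above, and it borrows the counts of 21 individual measures and 4 component scores from the present battery purely as an example.

```python
def familywise_error_rate(n_tests: int, alpha: float = 0.05) -> float:
    """Probability of at least one false positive across n independent tests.

    Assumes independence between tests, which real neuropsychological
    measures generally violate; treat the result as a rough illustration.
    """
    return 1.0 - (1.0 - alpha) ** n_tests


# Compare a single test, four component scores, and 21 individual measures.
for n in (1, 4, 21):
    print(f"{n:>2} tests: familywise error rate = {familywise_error_rate(n):.3f}")
```

Under these assumptions, analyzing 21 measures separately gives a familywise error rate of roughly .66, whereas four component scores give roughly .19.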

Principal component analysis (PCA) is one of the most common data reduction techniques used to identify CCS (Alavi et al., 2020; Salih Hasan & Abdulazeez, 2021). The primary goal of PCA is to reduce a large dataset into the fewest possible components in order to reduce the dimensionality of the data while maximizing the possible information and variation in the original dataset (Alavi et al., 2020; Salih Hasan & Abdulazeez, 2021). In the present study, we used PCA as a data-driven approach to determine the cognitive component structure of a neuropsychological test battery administered to cognitively-normal (predominantly) older adults in the Southern Illinois University (SIU) Longitudinal Cognitive Aging Study (LCAS) (Pyo et al., 2006).

The choice between PCA and exploratory factor analysis (EFA) has been the subject of debate for several years (Bandalos & Boehm-Kaufman, 2009). In the present study, PCA was chosen over EFA because the underlying factor structure of the test battery was not known (Santos et al., 2015), and because PCA retains the maximum possible amount of variance whereas EFA accounts only for the common variance in the data. After CCS were identified, we sought to confirm previous findings in the literature regarding the association between increasing age and cognitive decline after controlling for gender and education, two demographic variables that are known to influence neuropsychological functioning (An et al., 2018; Cohen et al., 2016; Lezak et al., 2012; Riordan, 2017; Sachs et al., 2020; Salthouse, 1996, 2011; Zimmerman et al., 2021).

Methods

Setting

The present study was embedded within the SIU LCAS, a longitudinal community-based study of (predominantly) older adults living in central and southern Illinois, which began in 1984. The study has enrolled over 1,700 participants who have completed at least one study visit, lasting approximately 2.5 to 3 hours. Every attempt is made to see participants annually. The Springfield Committee for Research Involving Human Subjects (Institutional Review Board for SIU School of Medicine) initially approved the study (IRB # 12-416) and reviews it annually. Participants provide written informed consent at the time of their visit.

Participants

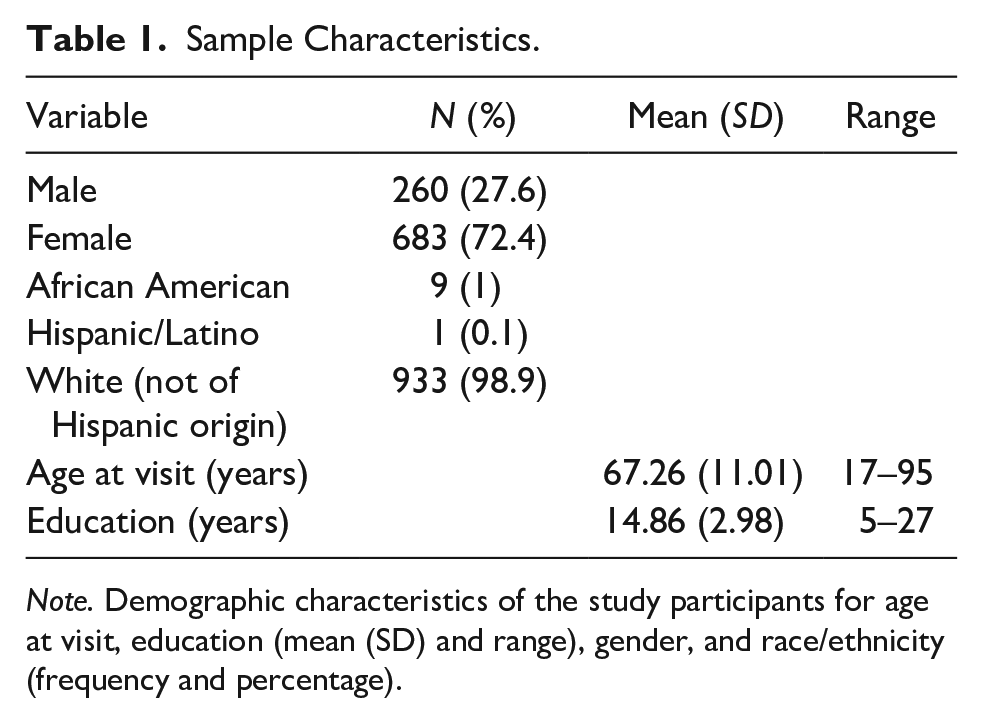

In the current cross-sectional analysis, we used the neuropsychological test data from the first visit for 943 participants who ranged in age from 17 to 95 years old, with a mean age of 67.26 years and a standard deviation of 11.01 years. Although most of the data captured were from older adults, younger participants enrolled in the study were included in order to examine the influence of age on the component scores after adjusting for gender and education. Total years of educational attainment ranged from 5 to 27 years, with a mean of 14.86 years and a standard deviation of 2.98 years. Nine hundred thirty-three participants were White/Non-Hispanic (98.9%), 9 participants were African American (1%), and <5 participants were of Hispanic/Latino descent (0.1%). The sample predominantly identified as female, with 683 (72.4%) women and 260 (27.6%) men included (see Table 1). All study visits included in the present analysis were conducted between 08/08/1995 and 10/09/2020.

Table 1. Sample Characteristics.

Note. Demographic characteristics of the study participants for age at visit, education (mean (SD) and range), gender, and race/ethnicity (frequency and percentage).

Participants were selected for the present study retrospectively from a full sample of N = 1,700. Participants were recruited through a variety of methods, including caregivers, spouses, and children of memory clinic patients, newspaper advertisements, and community presentations. The goals of the study and the test measures to be administered at each clinic visit were explained to participants. Participants with incomplete data (N = 316) were excluded from statistical analyses. We also excluded participants who met the established criteria for late-stage MCI or AD at baseline (N = 174) or developed these or any other neurological or psychiatric conditions within three subsequent visits (N = 267) when this information was available. The latter exclusion criterion was limited to participants who completed at least four study visits. There were N = 676 participants (72%) included in the PCA who had fewer than four consecutive visits (see Figure 1).

Figure 1. Flow chart of sample selection for the PCA.
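The sample flow can be checked with simple arithmetic. The short sketch below only restates the counts reported in the text; the variable names are illustrative and do not come from the study database.

```python
# Counts as reported in the Participants section.
full_sample = 1700
excluded = {
    "incomplete data": 316,
    "late-stage MCI or AD at baseline": 174,
    "condition developed within three subsequent visits": 267,
}

# Participants remaining after all exclusions.
analytic_sample = full_sample - sum(excluded.values())

# Share of the analytic sample with fewer than four visits.
fewer_than_four_visits = 676
share = fewer_than_four_visits / analytic_sample

print(analytic_sample, f"{share:.1%}")  # prints: 943 71.7%
```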

Late-stage MCI was defined by Rey Auditory Verbal Learning Test (RAVLT) total and delayed recall scores that were at least 1.4 standard deviations below their age-normed mean in combination with a deficit on at least one other memory measure in the battery. This conservative approach was used because of the high false positive rate for individuals with early-stage MCI (Edmonds et al., 2018, 2019). AD was diagnosed using established NINCDS-ADRDA criteria (Blacker et al., 1994).
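The late-stage MCI rule can be sketched as a simple decision function. This is a hypothetical illustration rather than the study's actual procedure: the function and argument names are invented, a single delayed recall z-score is assumed, and because the text does not state the threshold that defines a "deficit" on the other memory measures, the same 1.4 SD cutoff is assumed for them here.

```python
from typing import Iterable


def meets_late_stage_mci_rule(
    ravlt_total_z: float,
    ravlt_delayed_z: float,
    other_memory_z: Iterable[float],
    cutoff: float = -1.4,
) -> bool:
    """Return True when age-normed z-scores satisfy the rule described above.

    Assumption: a 'deficit' on another memory measure uses the same cutoff
    as the RAVLT scores; the source does not specify this threshold.
    """
    # Both RAVLT scores must fall at or below the cutoff.
    ravlt_impaired = ravlt_total_z <= cutoff and ravlt_delayed_z <= cutoff
    # At least one additional memory measure must also show a deficit.
    other_impaired = any(z <= cutoff for z in other_memory_z)
    return ravlt_impaired and other_impaired


# Example: both RAVLT scores and one story recall score are low.
print(meets_late_stage_mci_rule(-1.6, -1.5, [-0.3, -1.8]))  # True
# Example: RAVLT scores are low but no other memory measure is impaired.
print(meets_late_stage_mci_rule(-1.6, -1.5, [-0.3, -0.9]))  # False
```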

Test Measures

Participants were administered a medical history questionnaire to determine health status, as well as a comprehensive battery of neuropsychological tests including measures of language, learning/memory, processing speed, visuospatial skills, and EF. A team of trained neuropsychologists, psychometricians, research assistants, and students conducted the assessments.

The 21 measures from 13 tests included in the PCA were as follows: a modified Stroop Test (word reading speed, color naming speed, and color-word interference) (Stroop, 1935), SIU Story Recall Test (immediate and delayed recall) adapted from the WMS-R Logical Memory subtest (Wechsler, 1987), Boston Naming Test (BNT) (Kaplan et al., 1983), Phonemic Word Fluency (PWF; sum of FAS), Semantic Word Fluency (SWF; sum of animals, boys' names, and states of the United States) (Lezak et al., 2012), Alternating Word Fluency (AWF; sum of occupation/color, animals/states of the United States, and the letters C/P) similar to the Delis-Kaplan Executive Function System (D-KEFS) category switching subtest (Delis et al., 2001), an 8-trial version of the RAVLT (total recall across trials, 5- and 30-minute delayed recall) (Rey, 1964), Rey-Osterrieth Complex Figure Test (RO-CFT) (copy, immediate recall, and 30-minute delayed recall) (Osterrieth, 1944), Trail Making Test A & B (TMT-A/B) (Reitan, 1958), Hooper Visual Organization Test (HVOT) (Hooper, 1983), Raven’s Progressive Coloured Matrices (RPCM) (Raven, 1995), WAIS-R Block Design, and WAIS-R Digit Symbol (Wechsler, 1981) (see Table 2). A detailed description of all test measures, including instructions, is available upon request.

Table 2. Mean (SD) for Cognitive Measures Included in the PCA.

Note. RAVLT = Rey Auditory Verbal Learning Test; RO-CFT = Rey-Osterrieth Complex Figure Test; WAIS-R = Wechsler Adult Intelligence Scale-Revised.

Statistical Analysis

A preliminary examination of the data revealed that the variables were normally distributed. The Kaiser-Meyer-Olkin Measure of Sampling Adequacy and Bartlett’s Test of Sphericity indicated that the data were suitable for PCA. Timed measures (TMT-A/B and Stroop) were reverse coded to match the other measures, so that higher scores indicated better performance on every measure. Scores were standardized using a z transformation. Varimax rotation was used to extract uncorrelated components from the variables. As a form of sensitivity analysis, oblimin rotation was also run to extract correlated components. Components were extracted according to eigenvalues of ≥1 and total variance explained. Linear regressions were then run to examine the influence of age on component scores after controlling for gender and education. All analyses were performed using SPSS software (Statistical Package for Social Sciences; SPSS, Inc., Chicago IL., USA).
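The main steps of this pipeline can be sketched in code. The analyses in this study were run in SPSS; the NumPy version below is an illustrative stand-in, not the authors' procedure. It runs on synthetic data with two simulated latent abilities, all function and variable names are hypothetical, and the suitability checks (Kaiser-Meyer-Olkin, Bartlett) and the oblimin sensitivity analysis are omitted.

```python
import numpy as np


def varimax(loadings, max_iter=100, tol=1e-6):
    """Orthogonal varimax rotation of a (variables x components) loading matrix."""
    p, k = loadings.shape
    rotation = np.eye(k)
    criterion = 0.0
    for _ in range(max_iter):
        rotated = loadings @ rotation
        gradient = loadings.T @ (
            rotated**3 - rotated @ np.diag((rotated**2).sum(axis=0)) / p
        )
        u, s, vt = np.linalg.svd(gradient)
        rotation = u @ vt
        new_criterion = s.sum()
        if new_criterion < criterion * (1 + tol):  # no meaningful improvement
            break
        criterion = new_criterion
    return loadings @ rotation


def pca_kaiser_varimax(scores, timed_columns=()):
    """Reverse-code timed measures, z-standardize, retain eigenvalues >= 1, rotate."""
    data = np.asarray(scores, dtype=float).copy()
    cols = np.asarray(timed_columns, dtype=int)
    data[:, cols] *= -1.0  # higher now means better on every measure
    z = (data - data.mean(axis=0)) / data.std(axis=0, ddof=1)
    corr = np.corrcoef(z, rowvar=False)
    eigenvalues, eigenvectors = np.linalg.eigh(corr)
    order = np.argsort(eigenvalues)[::-1]  # sort from largest to smallest
    eigenvalues, eigenvectors = eigenvalues[order], eigenvectors[:, order]
    keep = eigenvalues >= 1.0  # eigenvalue >= 1 retention rule
    loadings = eigenvectors[:, keep] * np.sqrt(eigenvalues[keep])
    explained = eigenvalues[keep].sum() / eigenvalues.sum()
    return varimax(loadings), eigenvalues, explained


# Synthetic example: six measures driven by two latent abilities.
# Column 0 mimics a timed measure, where a lower raw score is better.
rng = np.random.default_rng(0)
n = 500
f1, f2 = rng.normal(size=(2, n))
noise = 0.5 * rng.normal(size=(n, 6))
scores = np.column_stack([-f1, f1, f1, f2, f2, f2]) + noise

rotated, eigenvalues, explained = pca_kaiser_varimax(scores, timed_columns=[0])
print("components retained:", rotated.shape[1])
print("proportion of variance explained:", round(float(explained), 2))
print(np.round(rotated, 2))
```

In this toy setting the retention rule keeps two components, and after rotation each simulated measure loads mainly on one of them, which is the kind of simple structure that varimax is designed to favor.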

Results

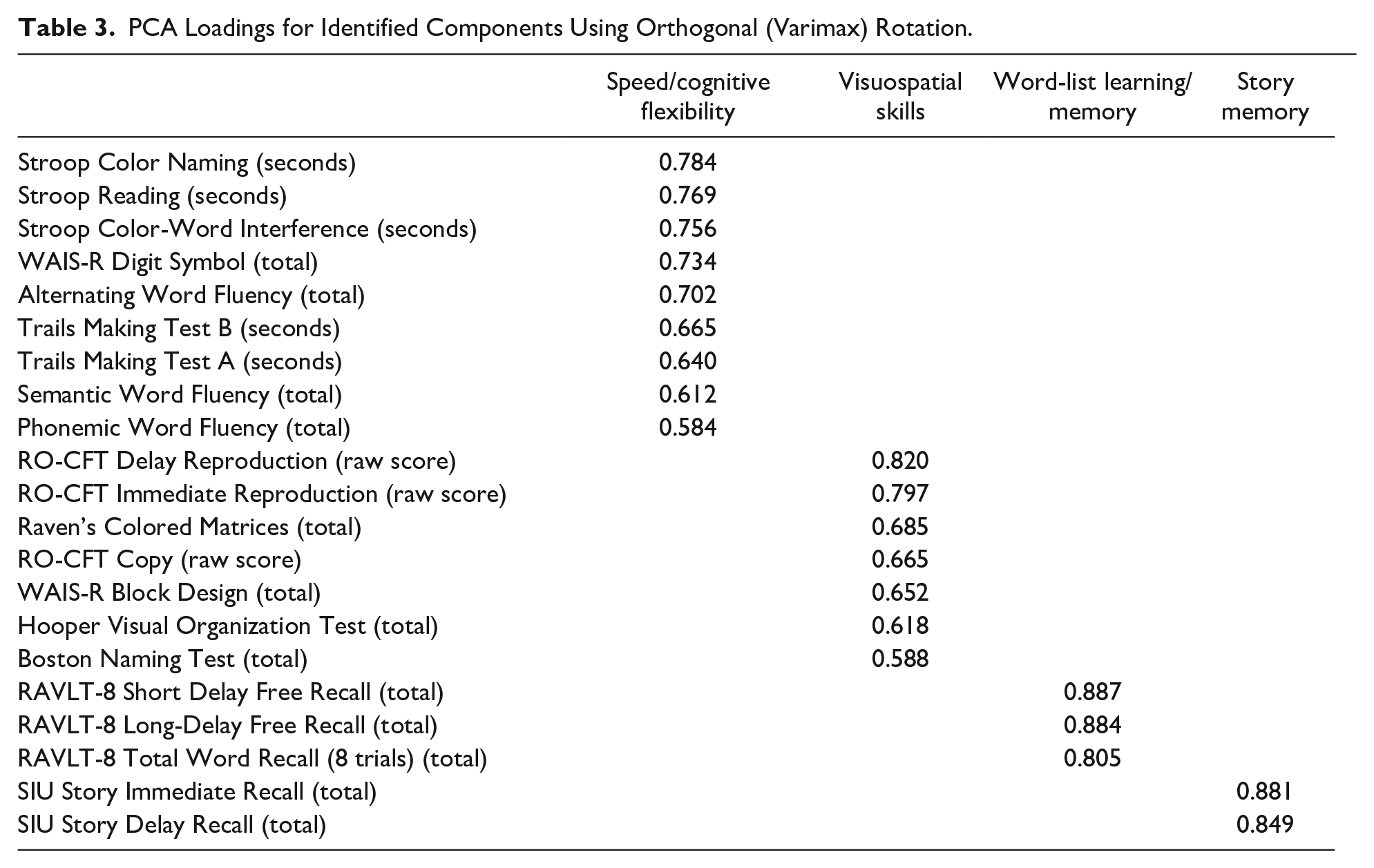

The varimax rotation produced a four-component model that explained 63% of the variance in the dataset. The four components were labeled as: speed/cognitive flexibility, visuospatial skills, word-list learning/memory, and story memory (see Table 3). The variable loadings on the components ranged between 0.58 and 0.88. Consistent with the logic of PCA, most variable loadings occurred on the first two components (speed/cognitive flexibility and visuospatial skills) rather than on the latter two (word-list learning/memory and story memory). Sensitivity analysis using oblimin rotation resulted in the same four components (see Supplemental Table), with the same test variables loading onto each component in both rotations, though the exact loading values were not identical.

Table 3. PCA Loadings for Identified Components Using Orthogonal (Varimax) Rotation.

Linear regression analysis demonstrated that increasing age was associated with decreased scores for all four components after controlling for gender and education (b = 0.44, b = 0.29, b = 0.16, b = 0.11, p < .001) (see Figure 2). The strongest relationship was between age and the speed/cognitive flexibility component. The percentage of variance in component scores explained by gender and education (i.e., adjusted R²) was 51%, 11%, 3%, and 6% for the speed/cognitive flexibility, visuospatial skills, word-list learning/memory, and story memory components, respectively. After controlling for gender and education, increasing age explained 24%, 18%, 5%, and 7% of the variation in those four component scores according to adjusted R².
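One way to read the two sets of percentages is as a two-step regression: covariates first, then age. The sketch below illustrates that reading on simulated data and is not a reproduction of the study's SPSS models. The effect sizes, the variable names, and the choice to express the age contribution as the gain in adjusted R² between the two models are all assumptions.

```python
import numpy as np


def fit_ols(y, predictors):
    """Ordinary least squares with an intercept; returns coefficients and adjusted R^2."""
    X = np.column_stack([np.ones(len(y)), predictors])
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    residuals = y - X @ coef
    r2 = 1.0 - (residuals @ residuals) / np.sum((y - y.mean()) ** 2)
    n, p = len(y), X.shape[1] - 1  # p counts predictors, excluding the intercept
    adj_r2 = 1.0 - (1.0 - r2) * (n - 1) / (n - p - 1)
    return coef, adj_r2


# Simulated participants; none of these values are study data.
rng = np.random.default_rng(1)
n = 943
age = rng.normal(67, 11, n)
education = rng.normal(15, 3, n)
gender = rng.integers(0, 2, n).astype(float)
score = -0.04 * (age - 67) + 0.1 * (education - 15) + rng.normal(size=n)

# Step 1: covariates only. Step 2: covariates plus age.
_, adj_covariates = fit_ols(score, np.column_stack([gender, education]))
coef_full, adj_full = fit_ols(score, np.column_stack([gender, education, age]))

print(f"adjusted R2, gender + education: {adj_covariates:.3f}")
print(f"adjusted R2 gain from adding age: {adj_full - adj_covariates:.3f}")
print(f"age slope in the full model: {coef_full[3]:.3f}")
```

In the simulated data the age slope is negative by construction, so adding age to the covariate-only model raises adjusted R².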

Figure 2. Age effects on (a) speed/cognitive flexibility component, (b) visuospatial skills component, (c) word-list learning/memory component, and (d) story memory component. The x-axis represents age and the y-axis represents the component scores. Estimates are adjusted for gender and education.

Discussion

The current study examined the cognitive component structure of a neuropsychological test battery administered in the SIU LCAS. The PCA revealed four components, which best represented the cognitive processes of speed/cognitive flexibility, visuospatial skills, word-list learning/memory, and story memory. The same four components were identified in the varimax and oblimin rotations, though the exact values of the variable loadings differed slightly.

We also found that age predicted all four cognitive components after controlling for gender and education; as expected, the strongest relationship was between age and the speed/cognitive flexibility component. Further, we found that the variables of gender and education together explained more than 50% of the variation in the speed/cognitive flexibility component score; gender and education accounted for only 11% of the variation in the visuospatial skills component and even less variation in the memory component scores (i.e., 3%–6%).

Our findings support the existing literature that increasing age has a significant negative effect on various aspects of cognition, especially processing speed (An et al., 2018; Salthouse, 1996, 2011). However, the exact mechanism by which age affects cognition is not well understood. Previous studies have demonstrated that normal age-related deterioration of the brain negatively affects cognitive processes, such as processing speed, explicit memory, and verbal fluency (An et al., 2018). In addition, age-related declines in processing speed may account for most of the age-related declines that are present for higher-order cognitive processes such as EF and episodic memory (Salthouse, 1996, 2011). Age-related declines in processing speed may be attributable to anatomical changes in the brain, although there is uncertainty as to if, and which, brain structures or physiological properties are associated with cognitive aging (Raz & Rodrigue, 2006; Salthouse, 2011; Van Petten, 2004). In a review of the literature, Salthouse (2011) observed that multiple longitudinal and cross-sectional studies have found a significant relationship between white matter hyperintensities (WMH), a marker of white matter damage, and age-related changes in cognitive function. WMH are commonly found in adults over age 60 but may be found as early as age 30, and may impact cognition by interrupting or slowing the speed of signal transmission across brain regions (Bartzokis, 2004; Hu, Ou et al., 2021; Salthouse, 2011).

An interesting finding in this study is that all three RO-CFT measures loaded onto the visuospatial component, rather than only the copy trial. As tests of visuospatial memory (Arango-Lasprilla et al., 2017), the immediate and delayed recall trial measures could be expected to load with the other memory measures such as the word list or story recall. However, the RO-CFT assesses incidental visual memory while all the other memory measures assessed intentional verbal memory. These two types of memory and their underlying cognitive processes differ in substantive ways; examinees are not told to remember the figure, but they are told to learn and remember the word list and story. Thus, any information recalled from the RO-CFT would have been encoded unintentionally (Kontaxopoulou et al., 2017; Vigliecca, 2021). This hypothesis is supported by Rivera-Fernández et al. (2021), who found that the RO-CFT copy and recall measures loaded onto the same component. Previous research has also indicated that the RAVLT is superior to the RO-CFT at predicting conversion of MCI to AD (Zhao et al., 2015). More research is necessary to understand the usefulness of the RO-CFT in assessing memory functioning in MCI and AD.

Another intriguing finding is that the word-list learning/memory measures and story memory measures did not load onto the same component in our final four-component model, which explained the most variance. Previous studies have shown low correlations between word list memory and story memory (Hoogendam et al., 2014), as well as inconsistent findings regarding the sensitivity of both types of tasks to cognitive decline (Baek et al., 2012; Mansbach et al., 2014). For example, Baek et al. (2012) found that the word list recall task was more sensitive to cognitive decline than the story recall task, even in individuals with dementia. Conversely, Mansbach et al. (2014) found that in both cognitively-normal older adults and individuals with dementia and MCI, all four verbal memory measures (delayed recall and recognition of a story and delayed recall of two different word lists) independently predicted cognitive diagnosis. However, when the data analyses were restricted to only cognitively-normal older adults and individuals with MCI, only one of the delayed word list recall measures predicted cognitive function. This finding may suggest that the word list recall and story recall tasks capture different cognitive functions for cognitively-normal older adults versus those with cognitive impairment. In general, word list recall is thought to be more difficult than story recall, as it requires patients to engage in effortful encoding strategies because word lists lack inherent meaning (Mansbach et al., 2014).

Lastly, we did not expect to find that the BNT would group with the other visuospatial measures, as the BNT is a common measure of semantic language (Garcia et al., 2008; Lin et al., 2014; Sachs et al., 2020; Tracy & Boswell, 2008). Instead, we hypothesized that the BNT and verbal fluency measures (PWF, SWF, AWF) would group together to form a language component. One possible explanation for this discrepant finding is the strong visuospatial element of the BNT (i.e., participants are asked to name each line drawing). Supporting this interpretation, a previous study demonstrated that visual degradation impairs performance on the BNT (Ferraro et al., 2002). Naming errors on the BNT may also be attributed to impairment in visual interpretation or word retrieval difficulties (Lin et al., 2014). Thus, normal age-related degradation in visual acuity or word retrieval may have a negative impact on BNT performance. However, this finding could also imply that there are not enough measures of language in the battery to form a component.

Upon comparing our CCS to those of previous studies, we noticed a pattern of inconsistencies regarding the definitions of CCS and subsequently cognitive domains, as well as which cognitive tests are being used to measure these domains. Researchers disagree as to which cognitive processes represent unique cognitive domains and which cognitive processes should be grouped together into a broader cognitive domain (Harp et al., 2021; Harrison, 2019; Harvey, 2019; Lambert et al., 2018; Perry et al., 2017; Schumacher et al., 2019; Smits et al., 2015). For example, processing speed is sometimes defined as its own cognitive domain, but has also been grouped with EF (Arango-Lasprilla et al., 2017; Baek et al., 2012; Cohen et al., 2016; Harvey, 2019; Hayat et al., 2021; Kontaxopoulou et al., 2017; Lambert et al., 2018; Perry et al., 2017; Schumacher et al., 2019; Wessels et al., 2015). Inconsistencies regarding the component structure of neuropsychological batteries identified by PCA may contribute to this problem. However, not all cognitive domains are inconsistently defined. Memory is listed as a cognitive domain in most studies, potentially because it is a fundamental process of human cognition (Lambert et al., 2018; Luzzi et al., 2011). As an illustration of the discrepancies in component structure, a PCA performed by Rivera-Fernández et al. (2021) identified cognitive components of processing speed, memory, visuoconstructional skills, verbal fluency, and EF across a combined sample of cognitively healthy older adults, individuals with subjective cognitive decline (SCD), and individuals with MCI. These components are greater in number and differ in composition from the ones identified in the present study; for instance, we found that verbal fluency measures grouped onto a speed/cognitive flexibility component rather than forming their own component (Rivera-Fernández et al., 2021). In addition, in the Wisconsin Registry for Alzheimer’s Prevention (WRAP) study, Clark et al. (2016) found that immediate and delayed memory measures loaded onto separate cognitive components, whereas we found that they grouped onto the same component. Previous studies have also identified EF components (Harrison, 2019; Harvey, 2019; Lambert et al., 2018; Rivera-Fernández et al., 2021; Schumacher et al., 2019), which contain many of the measures included in the speed/cognitive flexibility component identified in the present study (i.e., verbal fluency measures, TMT-B) (Harrison, 2019; Lambert et al., 2018).

The variability in findings from previous studies may be attributed to a number of different factors including: differences in the demographic characteristics of the study sample (Lambert et al., 2018), the inclusion of mixed patient and control samples (Garcia et al., 2008; Rivera-Fernández et al., 2021), the inclusion of different test measures in the PCA (Harrison, 2019), and the fact that many neuropsychological test measures assess multiple cognitive processes at the same time (e.g., EF and processing speed measures) (Harvey, 2019; Lambert et al., 2018). Future studies examining data reduction techniques in large samples with consistent test measures are needed to improve the consistency of CCS across studies. For example, Agelink van Rentergem et al. (2020) argue that large-scale factor analyses can create more robust findings than comparing multiple small-scale studies using PCA. Across 52 previous exploratory and confirmatory factor analysis studies (N = 60,398), they found cognitive factors of acquired knowledge/crystallized ability, processing speed, long-term memory encoding and retrieval, working memory, and word fluency. However, using such a technique required the grouping of different tests together as a single variable, such as the RAVLT, California Verbal Learning Test, and the Hopkins Verbal Learning Test (Agelink van Rentergem et al., 2020).

Efforts to standardize definitions of cognitive domains and establish best practice in their measurement would help to address the aforementioned inconsistencies in the literature by reducing variability in test selection and improving interpretation of CCS (Harp et al., 2021; Lambert et al., 2018). Additionally, investigators would have better guidance as to the optimal composition of neuropsychological test batteries for specific clinical populations, as the functionality of certain cognitive domains may be more or less important for different populations. Inconsistencies regarding the component structure of neuropsychological test batteries need to be addressed in future studies, as they could potentially lead to the development of unreliable diagnostic tools that require extensive validation and re-development efforts.

A major limitation of the present study is that, of the participants included in the analyses, 676 had fewer than four visits; therefore, some participants may have developed MCI or AD after their participation in the study ended. The implication is that their data may be contaminated due to prodromal cognitive decline, even though they were still considered cognitively normal at the time. The cohort was also not representative of the overall U.S. population in terms of gender and race, with a significantly higher number of female and non-Hispanic/White participants than the general U.S. population (U.S. Census Bureau, 2022). Further data collection in more diverse participants as well as both internal and external validation of the PCA components are necessary to improve the generalizability of our findings. Community outreach efforts are underway to diversify the SIU LCAS cohort with respect to gender, race/ethnicity, and geography.

While the present study has a number of limitations as described above, it also has several strengths that are worth noting. First, the sample size of the present study was much larger than those of previous studies that have used PCA to examine the component structure of neuropsychological test measures. Second, the present study may help to further reduce an existing gap in the current literature regarding the usefulness of PCA of neuropsychological test data for the diagnosis of cognitive impairment in community-based longitudinal cohort studies of cognitive aging and dementia. For example, previous studies using PCA have used data from mixed samples of normal controls and individuals with MCI and/or AD (Garcia et al., 2008; Levin et al., 2013; Rivera-Fernández et al., 2021), which limits the generalizability of these findings to individuals with no cognitive impairment. In the present study, we included baseline data from participants who were considered cognitively-normal at baseline and for three subsequent visits when this information was available to reduce (but not eliminate) the possibility that we would include individuals with incipient cognitive impairment. Finally, because we restricted our analysis to baseline data, we also eliminated any potential for practice effects.

Conclusion

The present study used PCA to identify the underlying cognitive component structure of neuropsychological test measures administered to participants enrolled in the SIU LCAS. The four components identified broadly corresponded to speed/cognitive flexibility, visuospatial skills, word-list learning/memory, and story memory. Consistent with previous findings in the literature, all of our components were negatively associated with increasing age. More studies in larger, more diverse populations are needed to determine if the components identified in the present study are sensitive to the pre-dementia phase of AD.

Supplemental Material

Supplemental material, sj-docx-1-ggm-10.1177_23337214221130157 for Cognitive Component Structure of a Neuropsychological Battery Administered to Cognitively-Normal Adults in the SIU Longitudinal Cognitive Aging Study by Madison G. Hollinshead, Albert Botchway, Kathleen E. Schmidt, Gabriella L. Weybright, Ronald F. Zec, Thomas A. Ala, Stephanie R. Kohlrus, M. Rebecca Hoffman, Amber S. Fifer, Erin R. Hascup and Mehul A. Trivedi in Gerontology and Geriatric Medicine

Footnotes

Acknowledgements

We would like to thank all of the individuals who have participated in the SIU Longitudinal Cognitive Aging Study.

Author Contributions

All authors (MGH, AB, KES, GLW, RFZ, TAA, SRK, MRH, ASF, ERH, MAT) meet the following criteria:

1. Substantial contributions to the conception or design of the work; or the acquisition, analysis, or interpretation of data for the work (see below); AND

2. Drafting the work or revising it critically for important intellectual content (see below); AND

3. Final approval of the version to be published; AND

4. Agreement to be accountable for all aspects of the work in ensuring that questions related to the accuracy or integrity of any part of the work are appropriately investigated and resolved.

MGH (design, acquisition, analysis and interpretation of data for the work; drafting and revising of the work), AB (analysis of data for the work; revising of the work), KES (interpretation of data for the work; revising of the work), GLW (design and acquisition of data for the work; revising of the work), RFZ (design, acquisition, and interpretation of data for the work; revising of the work), TAA (interpretation of data for the work; revising of the work), SRK (acquisition of data for the work; revising of the work), MRH (interpretation of data for the work; revising of the work), ASF (interpretation of data for the work; revising of the work), ERH (interpretation of data for the work; revising of the work), MAT (design, acquisition, analysis and interpretation of data for the work; drafting and revising of the work).

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: There are no current funding sources to report. This work was previously supported by the Illinois State funded Dale and Deborah Smith Center for Alzheimer’s Research and Treatment (formerly the Center for Alzheimer’s Disease and Related Disorders).

Ethical Approval/Patient Consent

The present study was approved by and conducted in accordance with the Southern Illinois University Institutional Review Board (Springfield Committee for Research Involving Human Subjects), approval number 12-416. The study undergoes annual continuing reviews. All study participants have provided written informed consent.

Supplemental Material

Supplemental material for this article is available online.
