Abstract

This study investigated the impact of ChatGPT on task motivation in advanced Chinese as a Foreign Language (CFL) learners, guided by Self-Determination Theory (SDT) and Self-Regulated Learning (SRL). Seventy-two undergraduates were assigned to either an AI-assisted writing intervention or a peer-collaborative control group. Quantitative results showed a large immediate increase in motivation for the intervention group (p < .001, Cohen’s d ≈ 1.03), with sustained gains at delayed post-test (p < .01, Cohen’s d ≈ 0.70). Qualitative analysis of eight participants revealed enhanced writing confidence (100%), increased enjoyment (75%), over-reliance on AI (50%), and frustration with misaligned outputs (50%). Interactions primarily targeted vocabulary and sentence-level refinement rather than broader content. Findings suggest AI scaffolding can improve perceived competence, autonomy, and self-regulatory strategies, although over-reliance may limit deeper engagement. Instructional recommendations include draft-first approaches, reflective prompts, and teacher mediation. Overall, AI integration can meaningfully enhance multidimensional task motivation in Mandarin writing.

Keywords

Introduction

Motivation has long been recognized as a central construct in second language acquisition (SLA), influencing both the effort learners invest and the persistence they demonstrate over time (Azar & Tanggaraju, 2020; Dörnyei & Ushioda, 2021). It is defined as the driving force behind choices, behaviors, and goal pursuit, and is highly dynamic, shaped by individual differences, instructional context, and task-specific factors (Dörnyei et al., 2015; Ushioda, 2008). A particular focus of interest is task motivation, which refers to learners’ engagement, emotional responses, and sustained effort within specific learning tasks (Hiver & Dao, 2025; Zhao, 2024). Essay writing is one such task, often presenting considerable challenges for L2 learners due to its cognitive complexity, linguistic demands, and requirement for sustained engagement (Abdi Tabari & Lee, 2025). In this study, task motivation is operationalized in terms of multiple dimensions, including perceived competence, anxiety, intrinsic interest, cognitive effort, and persistence, as learners engage in Mandarin argumentative essay writing.

Mandarin Chinese, as a morphosyllabic language, poses unique challenges for second language learners, particularly in writing. Compared with alphabetic languages such as English, writing in Chinese involves not only vocabulary and grammar but also character memorization, syntactic structuring, and contextually appropriate expression. These additional layers of complexity often reduce learners’ willingness to write in Chinese and contribute to higher levels of writing anxiety and reduced motivation (X. Huang et al., 2024). Despite growing interest in integrating digital tools to support Chinese language instruction, relatively few studies have examined how generative AI influences learners’ task motivation in L2 Chinese writing, particularly for essay production. Most AI research in SLA has focused on English and on performance outcomes, leaving motivational processes in Mandarin writing underexplored.

While tools such as Grammarly, Write & Improve, and QuillBot provide grammar correction and vocabulary enhancement, they tend to offer static feedback that may not fully engage learners or support metacognitive growth (Abdullah, 2025; Zhou, 2025). In contrast, ChatGPT, developed by OpenAI, offers a dialogic, context-aware interface that allows learners to ask questions, receive immediate feedback, and iteratively refine their writing. These dialogic affordances align with the principles of learner-centered instruction, emphasizing autonomy, responsiveness to individual learner needs, and active engagement in the learning process (Barrot, 2024; Renandya et al., 2024).

Although studies have begun to examine ChatGPT’s pedagogical potential in L2 writing contexts (Guo et al., 2022; Su et al., 2023), empirical research on its motivational impact remains sparse, particularly in the context of Mandarin Chinese as a second language. Existing studies largely focus on English learners or general attitudes toward AI tools, overlooking how these technologies dynamically influence learner motivation throughout the writing process. Comparative studies of AI writing tools also rarely address learners’ behavioral and affective responses beyond surface-level preferences (Bhattacharya et al., 2024; Zhang et al., 2025). These gaps highlight the need to investigate not only whether ChatGPT enhances motivation but also how learners’ motivation evolves during AI-supported writing tasks.

This study was designed to bridge these gaps by examining the impact of ChatGPT-4o on L2 learners’ motivation to write essays in Mandarin Chinese. Specifically, it investigated whether, to what extent, and in what ways interaction with ChatGPT shaped learners’ task motivation over time. In addition, the study aimed to justify the pedagogical integration of ChatGPT by comparing its affordances with those of other commonly used AI platforms. Unlike rule-based or correction-focused tools, ChatGPT’s adaptive and dialogic feedback aligns closely with learner-centered instruction: It supports autonomy through learner choice in prompts, competence through scaffolded feedback, and relatedness through dialogic interaction. These affordances also map onto Self-Determination Theory (SDT) and Self-Regulated Learning (SRL), which together provide a theoretical foundation for understanding how ChatGPT may influence motivational processes in L2 writing.

Employing an experimental mixed-methods design, the study combined quantitative measures of motivational change with qualitative insights derived from learners’ reflections and experiences. In doing so, it contributes to the expanding literature on generative AI in L2 education and offers pedagogical guidance for the effective integration of tools such as ChatGPT. The findings are particularly significant for the underexplored field of Chinese as a second language, offering practical implications for fostering sustained motivation and learner autonomy in academic writing.

Literature Review

Task Motivation in Second Language Acquisition

Motivation occupies a central role in SLA, influencing both the intensity and persistence of learners’ efforts over time (Dörnyei & Ushioda, 2021). It is widely recognized as a key determinant of language learning success. Within this broader construct, task motivation refers to learners’ momentary engagement, interest, and effort during specific language activities, encompassing intrinsic, extrinsic, and task-specific dimensions (Jendli & Albarakati, 2024). This situational form of motivation is shaped by learners’ perceptions of task difficulty, relevance, novelty, and the extent to which tasks afford autonomy.

Task motivation emerges from the dynamic interaction of individual learner characteristics, classroom context, and task design (Hiver & Dao, 2025). Learner-related factors, such as perceived competence, anxiety, and cognitive or learning styles, significantly influence motivational responses. At the contextual level, teacher support, peer collaboration, and overall classroom climate shape engagement. At the task level, features including authenticity, clearly defined purposes, and opportunities for personal expression consistently enhance motivation (Barcomb & Iwashita, 2024; Jin, 2025; Lamb, 2017).

Research shows that task motivation is strengthened when learners are afforded ownership over tasks through opportunities for personalization and choice (Mozgalina, 2015; Zare & Aghajani Delavar, 2024). However, balance is crucial: Excessive freedom can lead to confusion or off-task behavior. Task difficulty also plays a pivotal role: Tasks that are too easy or overly demanding can reduce motivation, whereas optimally challenging tasks sustain engagement (Kormos & Préfontaine, 2017).

SDT provides a useful framework for understanding how learning environments can support motivation by satisfying three basic psychological needs: autonomy, competence, and relatedness (Y. C. Huang et al., 2019; Ryan & Deci, 2017). This theoretical lens directly informed our methodological design: The Task Motivation Questionnaire (TMQ) was selected to measure changes across these dimensions, ensuring alignment between theory and measurement. In SLA contexts, tasks that enable learners to make choices, experience progress, and collaborate with peers are more likely to foster sustained motivation, whereas overly prescriptive tasks or those lacking meaningful interaction can result in disengagement.

Complementing this perspective, SRL theory highlights the role of learners’ metacognitive and behavioral strategies in shaping motivation and performance (Zeidner & Stoeger, 2019). SRL involves planning, monitoring, and evaluating one’s own learning processes (Alonso-Mencía et al., 2020). Self-regulated learners set clear goals, seek feedback, revise their work, and reflect on progress (Xiao & Yang, 2019). In this study, SRL principles guided the design of semi-structured interviews and AI-supported writing tasks, which scaffolded iterative engagement and reflective practice. This alignment enabled the study to capture both motivational outcomes and the underlying self-regulatory processes.

Finally, principles of learner-centered instruction emphasize designing tasks that respond to learners’ diverse backgrounds, needs, and aspirations (Alshraah et al., 2023). This approach advocates flexible task design and differentiated feedback to support autonomy and engagement, treating motivation not as a secondary outcome but as a central pedagogical goal.

Argumentative Writing and Motivation in L2 Chinese with ChatGPT

Essay writing, particularly in academic contexts, is a complex skill that poses multiple challenges for L2 learners. Argumentative writing demands both linguistic accuracy and higher-order cognitive skills, including reasoning, organization, and the ability to present nuanced positions (Pandya & Saiyad, 2025). For learners of Mandarin Chinese, these demands are intensified by the logographic writing system, topic-prominent sentence structures, and culturally specific rhetorical conventions, all of which require additional cognitive and affective effort (Fang, 2021).

Consequently, many L2 Chinese learners experience elevated writing anxiety, reduced self-confidence, and lower motivation (Wang et al., 2023; Zhou & Goh, 2025). Teachers often face constraints in providing individualized support due to large class sizes, limited instructional time, and scarce resources. As a result, learners frequently receive generalized feedback that may not meet their specific linguistic or cognitive needs, potentially undermining motivation and engagement (Guo et al., 2022).

Research highlights the importance of personalized feedback, which not only identifies linguistic and structural weaknesses but also supports metacognitive development, goal setting, and increased task motivation (Olsen & Hunnes, 2024). Delivering such feedback at scale, however, is challenging, particularly in L2 Chinese essay writing classrooms.

In response, AI-based tools have emerged as promising solutions. Among these, ChatGPT stands out for its advanced language modeling and ability to generate human-like, contextually sensitive text in multiple languages, including Mandarin. Unlike tools such as Grammarly or Write & Improve, which primarily offer surface-level corrections, ChatGPT provides conversational, iterative, and adaptive support. It can assist learners with brainstorming, revising, and evaluating argument structure, thereby addressing both form and content.

Recent studies indicate that AI chatbots can improve writing fluency, accuracy, and learner satisfaction (Apriani et al., 2024; Barrot, 2023). However, research in Mandarin Chinese remains limited, and motivational outcomes are particularly underexplored. Most studies focus on performance metrics rather than on how AI tools influence learners’ willingness to engage with challenging tasks, such as argumentative essay writing.

Integrating SDT and SRL frameworks helps illuminate these motivational dynamics. From an SDT perspective, AI-supported writing environments can satisfy learners’ needs for autonomy (through choice and control over AI use), competence (by providing targeted, scaffolded support), and relatedness (via dialogic interactions), thereby enhancing intrinsic motivation (Ryan & Deci, 2017). From an SRL perspective, ChatGPT scaffolds iterative planning, monitoring, and reflection, enabling learners to engage in self-regulated writing practices, track their progress, and adjust strategies in real time (Xiao & Yang, 2019; Zeidner & Stoeger, 2019).

Nevertheless, the potential for over-reliance or surface-level engagement remains a concern. Passive use of AI outputs may reduce cognitive effort and limit the development of self-regulatory skills, highlighting the importance of instructional design that encourages active, reflective, and strategic engagement.

Guided by these theoretical lenses, the present study investigates how interaction with ChatGPT-4o influences L2 learners’ task motivation during Mandarin argumentative essay writing, examining not only motivational outcomes but also the strategies learners employ to engage with AI throughout the writing process.

Accordingly, the research addresses the following questions:

RQ1: To what extent does engagement with ChatGPT-4o influence L2 learners’ task motivation in writing argumentative essays in Mandarin Chinese?

RQ2: How do L2 learners perceive their experiences of using ChatGPT-4o throughout the essay writing process?

Using a mixed-methods approach, the study examines both changes in learners’ motivational states and their perceptions of AI-assisted writing. By analyzing learner engagement across different stages of the writing cycle, the research provides a nuanced understanding of ChatGPT’s pedagogical affordances and limitations in L2 Chinese writing.

Research Method

Context and Design

This study was conducted at the Mandarin Chinese Department of University M, a leading comprehensive university in West Malaysia, where Mandarin is taught as a foreign language and primarily used in academic and culturally immersive contexts. Such an environment provides an authentic setting for investigating CFL learning dynamics.

A sequential explanatory mixed-methods design was adopted, integrating quantitative (motivation questionnaires) and qualitative (semi-structured interviews) approaches. The quantitative phase addressed RQ1 by examining the effects of ChatGPT-4o on learners’ motivation, while the qualitative phase complemented these findings by exploring learners’ experiences in depth, thereby enhancing interpretation and contextualization in relation to RQ2.

To strengthen causal inference, a pre-test/post-test control group experiment was implemented. Participants were randomly assigned to either a control group, which completed conventional pair-based writing tasks, or an experimental group, which used ChatGPT-4o to support argumentative essay composition. Motivation was measured at three points (pre-intervention, immediately post-intervention, and one month post-intervention) to capture both immediate and sustained effects of the AI-assisted writing intervention (Zare et al., 2025).

This design enabled clear comparisons between groups while controlling for external variables. The repeated-measures framework allowed for the analysis of motivational changes over time, providing a comprehensive perspective on the impact of AI-supported writing.

Participants

The study involved 72 undergraduate students enrolled in a compulsory advanced Mandarin course at University M during Semester II, 2024. Most participants majored in Chinese Language and Culture or East Asian Studies, while a smaller proportion were from other humanities programs. Learners’ primary reasons for studying Chinese included academic interest, career development, and cultural engagement. For all participants, Mandarin study was a required component of their degree, providing a clear basis for understanding task motivation and engagement with AI-supported writing activities.

Eligibility criteria included voluntary participation, comparable Mandarin proficiency (approximately HSK Level 5), similar writing competence, and willingness to engage in language learning tasks (Hsiao & Broeder, 2013; Lu & Song, 2017). HSK Level 5 was deliberately selected because it reflects an advanced proficiency stage at which learners can read complex texts and produce extended essays (Yang & Jirawit, 2025). At this stage, linguistic knowledge is sufficiently developed to allow the study to focus on the motivational and self-regulatory processes involved in completing cognitively demanding tasks, such as argumentative essay writing (Yang & Jirawit, 2025; Zhou & Goh, 2025). In line with SDT, this proficiency level is particularly relevant because learners’ needs for autonomy and competence are salient, making motivational fluctuations more observable. This rationale ensured that motivation could be investigated beyond the basic challenges of vocabulary or grammar acquisition (Yang & Jirawit, 2025).

A stratified random sampling procedure was used to select participants. The larger student population was first stratified by academic year, gender, and age, and participants were randomly chosen within each stratum to ensure a balanced and representative sample. Baseline assessments of Mandarin proficiency, writing ability, and task-related motivation were conducted prior to the intervention (as described in Section 3.1) to establish equivalence between experimental and control groups.
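The stratification logic can be illustrated with a short Python sketch. It assumes a hypothetical participant record with `id`, `year`, and `gender` fields (an age band could be added as a further key) and shows stratified random assignment to the two arms; the exact allocation procedure is not reported in detail here, so this is an illustration under stated assumptions rather than the authors’ method.

```python
import random
from collections import defaultdict

ARMS = ("intervention", "control")


def stratified_assign(participants, strata_keys, seed=2024):
    """Assign participants to two arms at random, balancing within strata.

    participants: list of dicts, e.g. {"id": "P01", "year": 3, "gender": "F"}
                  (field names are hypothetical).
    strata_keys:  the fields that jointly define a stratum.
    """
    rng = random.Random(seed)  # fixed seed makes the allocation reproducible
    strata = defaultdict(list)
    for person in participants:
        stratum = tuple(person[key] for key in strata_keys)
        strata[stratum].append(person["id"])

    allocation = {}
    for stratum in sorted(strata):
        ids = list(strata[stratum])
        rng.shuffle(ids)
        # Alternate arms through the shuffled list; a random starting arm
        # decides who receives the extra member of an odd-sized stratum.
        start = rng.randrange(len(ARMS))
        for position, pid in enumerate(ids):
            allocation[pid] = ARMS[(start + position) % len(ARMS)]
    return allocation
```

Within every stratum the two arms differ by at most one participant, so the groups are matched on the stratifying variables by construction; overall group sizes can still differ slightly when several strata have odd sizes.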

The final sample comprised 16 males (22.22%) and 56 females (77.78%). Regarding academic level, 12.5% were second-year (n = 9), 33.33% were third-year (n = 24), and 54.17% were final-year students (n = 39).

All participants provided informed consent and were briefed on the study’s objectives, procedures, confidentiality protections, and their right to withdraw at any time (Esposito & Clum, 2002).

Measures

Standardized Mandarin Proficiency Test (Hanyu Shuiping Kaoshi, HSK)

To establish participants’ baseline language proficiency and ensure comparability between groups, the HSK was administered. The HSK is an internationally recognized standardized assessment that evaluates learners’ Mandarin vocabulary, grammar, reading, writing, and listening comprehension. Its proficiency levels correspond to the Common European Framework of Reference for Languages (CEFR), ranging from Pre-A1 to C2 (Lu & Song, 2017). In this study, the HSK Level 5 test (CEFR equivalent: B1+/B2) was used to verify that general language proficiency differences would not confound the investigation of learners’ task motivation and writing performance. The full test paper is available in online

Independent Writing Tasks in Mandarin

Participants’ baseline writing proficiency was further assessed using the standardized HSK Level 5 independent writing tasks, which comprise Sentence Completion and Short Essay Writing. The Short Essay Writing section required participants either (a) to compose a short essay of approximately 80 characters using a set of given words or (b) to write a short essay of approximately 80 characters based on a picture. These tasks were used to establish general proficiency in vocabulary use, grammatical accuracy, and written coherence. The tasks, which participants completed within 40 minutes, are widely recognized as valid, authoritative, and reliable measures of Mandarin writing ability (Yang & Jirawit, 2025; Zhou & Goh, 2025). The baseline writing prompt is provided in

Following baseline assessment, researcher-developed independent writing tasks were used to examine learners’ task motivation during the intervention. These prompts were designed to be authentic, personally meaningful, and contemporary (e.g., topics related to language policy and educational technology). They were aligned with the argumentative and expository demands of advanced Chinese writing at HSK Levels 5–6, although they did not replicate the exact HSK test format.

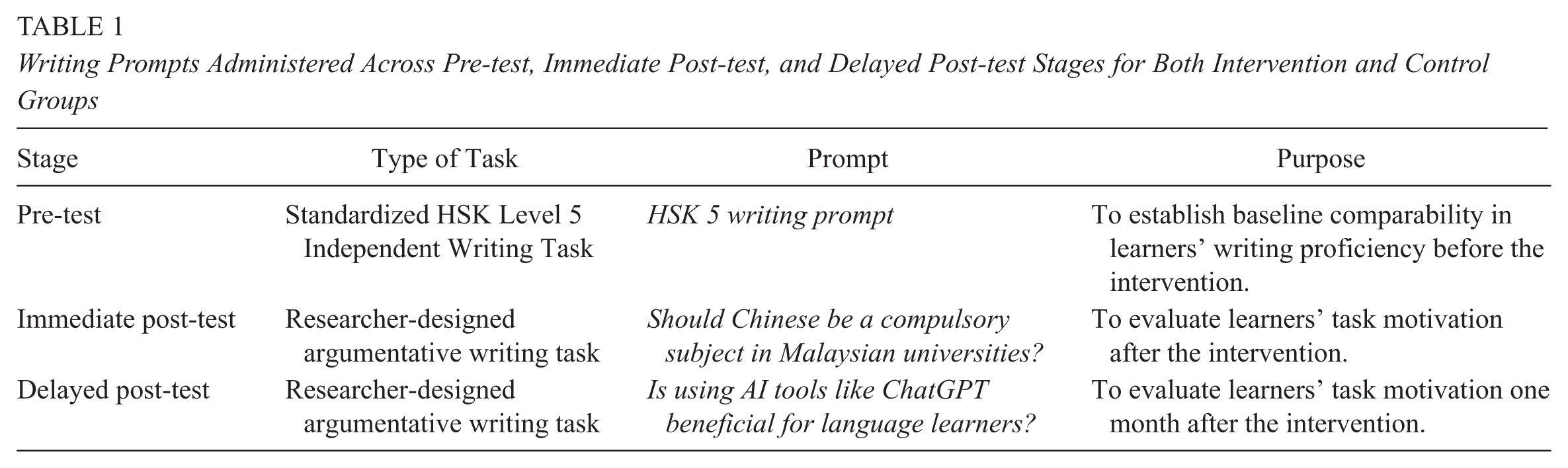

To ensure clarity and transparency, Table 1 summarizes the writing prompts administered at each testing stage. These tasks served as reference points for evaluating learners’ engagement with argumentative writing and for tracking changes in task motivation over time.

Table 1. Writing Prompts Administered Across Pre-test, Immediate Post-test, and Delayed Post-test Stages for Both Intervention and Control Groups

All participants in both the intervention and control groups responded to the same prompts at each stage, ensuring methodological comparability. The inclusion of the ChatGPT-related prompt was intentionally pedagogical, as it aligned with the study’s central research aim and encouraged learners to critically reflect on a current and authentic educational issue. We acknowledge that participants in the intervention group may have been influenced by prior engagement with ChatGPT, potentially enhancing initial motivation. To maintain methodological consistency, prompts were fixed across testing stages rather than rotated or counterbalanced. This design choice allowed for a controlled examination of task motivation while highlighting potential novelty effects associated with AI-mediated writing.

Task Motivation Questionnaire

To assess learners’ motivation in relation to specific writing tasks, this study employed the TMQ originally developed by Ma (2009). As outlined in

The TMQ scale encompasses eight distinct dimensions: Perceived Choice (Items 5, 23, 24), Perceived Competence (Items 1, 9, 14), Relatedness (Items 2, 12, 17), Intrinsic Motivation (Items 7, 15, 20), Identified Regulation (Items 6, 13, 19), External Regulation (Items 3, 11, 16), Amotivation (Items 4, 8, 21), and Intentions to Persist (Items 10, 18, 22). Each dimension is represented by three items, allowing for a nuanced analysis of motivational constructs (Zare et al., 2025).
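Because each of the eight dimensions is a fixed set of three items, subscale scores are simple to derive. The Python sketch below assumes each response is stored as a numeric Likert rating keyed by item number; the rating range and any reverse-keying (for example of the Amotivation items) are not specified here, so the sketch only averages raw ratings and checks that all 24 items are assigned exactly once.

```python
# Item-to-subscale map for the 24-item TMQ, as listed in the text.
TMQ_SUBSCALES = {
    "Perceived Choice": (5, 23, 24),
    "Perceived Competence": (1, 9, 14),
    "Relatedness": (2, 12, 17),
    "Intrinsic Motivation": (7, 15, 20),
    "Identified Regulation": (6, 13, 19),
    "External Regulation": (3, 11, 16),
    "Amotivation": (4, 8, 21),
    "Intentions to Persist": (10, 18, 22),
}


def check_item_map(subscales, n_items=24):
    """Raise if the map does not assign every item 1..n_items exactly once."""
    items = sorted(item for group in subscales.values() for item in group)
    if items != list(range(1, n_items + 1)):
        raise ValueError("each TMQ item must belong to exactly one subscale")


def score_tmq(responses):
    """Return the mean rating per subscale for one participant.

    responses: dict mapping item number (1-24) to a numeric Likert rating.
    No items are reverse-keyed here; whether any should be is an
    assumption to verify against the original instrument.
    """
    check_item_map(TMQ_SUBSCALES)
    return {
        name: sum(responses[item] for item in items) / len(items)
        for name, items in TMQ_SUBSCALES.items()
    }
```

Scoring each administration (pre-test, immediate post-test, delayed post-test) in this way yields the per-dimension values that the later group comparisons operate on.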

To ensure that the TMQ was culturally and contextually appropriate for Malaysian CFL learners, all items were carefully reviewed and, where necessary, slightly reworded in English to enhance clarity for participants whose primary medium of instruction is English. A panel of three bilingual experts in Chinese language education evaluated each item for linguistic clarity, cultural relevance, and alignment with intrinsic, extrinsic, and task-specific motivational constructs. Minor adjustments were made based on expert feedback to ensure that the items were fully comprehensible and applicable to Malaysian learners’ experiences with AI-assisted writing.

A pilot test with 10 Malaysian CFL learners at HSK Level 5 confirmed that the adapted items were easily understood and interpreted as intended. Despite these contextual adjustments, the TMQ retained strong psychometric properties. Principal component analysis with varimax rotation confirmed its construct validity (KMO = 0.813; Bartlett’s Test of Sphericity,

The TMQ was administered electronically via Google Forms at three critical stages: prior to the intervention, immediately after, and one month following completion. This design enabled a longitudinal analysis of motivational changes within and between the control and experimental groups, while maintaining theoretical alignment with SDT and SRL principles.

Follow-up Interviews

To complement the quantitative data and provide deeper insights into learners’ motivational experiences, follow-up semi-structured interviews were conducted with a purposively selected subgroup of participants from both conditions. A total of 16 students (eight from the intervention group and eight from the comparison group) participated voluntarily in these post-intervention interviews.

Participants were selected using a transparent, stepwise approach to ensure a rich and representative qualitative sample. The selection was guided by four criteria:

(1)

(2)

(3)

(4)

In practice, participants were first stratified according to motivation change and engagement level, and then selected within these strata to maximize heterogeneity in experiences. This procedure ensured that the qualitative sample reflected a range of motivational trajectories and interaction patterns, avoiding bias toward any single subgroup. Descriptive statistics for motivation change and engagement guided the final selection, supporting transparency and rigor.
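The two-step procedure (stratify, then select within strata) can be sketched in Python. The four criteria themselves are not listed in the text, so the sketch reconstructs none of them; it uses only the two stratifiers named in this paragraph, with hypothetical `mot_change` and `engagement` fields, median splits to form four cells, and random sampling of two people per cell (2 × 4 = 8 per group). The cut-points and per-cell quota are illustrative assumptions.

```python
import random
from statistics import median


def select_interviewees(people, per_cell=2, seed=7):
    """Pick interviewees across motivation-change x engagement strata.

    people: list of dicts with hypothetical keys 'id', 'mot_change'
            (e.g. post-test minus pre-test TMQ mean) and 'engagement'.
    Each person falls into one of four cells defined by median splits;
    up to per_cell people are sampled at random from every cell.
    """
    rng = random.Random(seed)
    mot_cut = median(p["mot_change"] for p in people)
    eng_cut = median(p["engagement"] for p in people)
    cells = {}
    for p in people:
        cell = (p["mot_change"] > mot_cut, p["engagement"] > eng_cut)
        cells.setdefault(cell, []).append(p["id"])

    chosen = []
    for cell in sorted(cells):
        ids = sorted(cells[cell])  # sort for a reproducible draw
        chosen.extend(rng.sample(ids, min(per_cell, len(ids))))
    return chosen
```

Drawing the same number from every cell is one way to keep the subsample from clustering in a single motivational trajectory.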

Semi-structured interviews were conducted online in either Malay or English, depending on participants’ preferences, to ensure clarity and comfort of expression. The interview protocol (see

perceived changes in motivation following the writing activities

aspects of the ChatGPT interaction, where applicable, that influenced motivation

perceived advantages and challenges of AI-assisted and traditional writing approaches

recommendations for future use of AI tools in Chinese language learning

For the comparison group, interview questions were designed to explore analogous areas without reference to AI, including perceived changes in motivation after the writing tasks, experiences with peer collaboration or other instructional supports, advantages and challenges of conventional writing approaches, and suggestions for improving writing instruction or support.

Each interview was conducted after the intervention phase, lasted approximately 30–40 minutes, and was audio-recorded with informed consent. Transcripts were produced verbatim and subjected to rigorous thematic analysis. Although both groups (16 participants) were interviewed, the present study analyzes only the intervention group data, as this directly addressed the research questions. The comparison group data were retained but not analyzed for the purposes of this paper, as they pertained to traditional writing experiences beyond the study’s central focus. This qualitative component enriched the quantitative findings by providing deeper insight into how ChatGPT influenced learners’ motivational dynamics in Mandarin essay writing.

AI Writing Support Tool: ChatGPT-4o

The experimental group employed ChatGPT-4o, a large language model developed by OpenAI, as the AI-supported writing assistant. ChatGPT-4o was officially released in May 2024 as an advanced iteration within the GPT-4 model family, featuring enhanced response speed, improved contextual reasoning, and strengthened multilingual performance, including Mandarin Chinese. Its transformer-based architecture enables the generation of coherent, contextually appropriate, and linguistically fluent text, rendering it suitable for L2 writing support.

Participants in the experimental group used ChatGPT as a writing assistant during their Mandarin Chinese essay tasks. The tool provided immediate feedback, vocabulary enhancement, and suggestions for sentence restructuring based on user input. It also supported idea development, paragraph organization, and verification of appropriate usage of Chinese expressions, addressing common challenges faced by non-native writers.

Access to ChatGPT was granted via a secure web interface (https://start.chatgot.io/), and students received a brief orientation to familiarize themselves with its functions before the writing tasks began. The design aimed to simulate an autonomous learning environment in which students could independently interact with the AI tool, reflecting real-life applications in CFL classrooms.

The integration of ChatGPT was intended not only to improve writing performance but also to explore its potential in enhancing learner motivation. The tool’s adaptive and interactive nature aligned with learner-centered pedagogical principles, offering individualized support that traditional classroom instruction may not consistently provide.

Instructional Context and Procedures

The study was conducted within an advanced Mandarin writing course that met twice weekly for 90 minutes and spanned eight weeks (16 sessions in total). The course emphasized argumentative essay writing and was delivered by a senior lecturer specializing in CFL education, experienced in both language teaching and academic writing pedagogy. Instruction integrated explicit teaching of rhetorical structures with scaffolded opportunities for practice, reflection, feedback, and revision. This human-led instructional foundation ensured that AI support was positioned as a supplementary scaffold rather than a substitute for pedagogical interaction.

The pre-test phase consisted of three components: the HSK Level 5 test, the HSK Level 5 independent writing tasks, and the task motivation questionnaire. These measures were administered to identify and control for potential differences in participants’ Mandarin proficiency, writing ability, and initial motivation that could otherwise influence their subsequent performance. Baseline writing proficiency was assessed using a rubric from the HSK Level 5 Independent Writing Scoring Criteria (see

Based on pre-test results, 72 students with comparable Mandarin proficiency, writing ability, and motivation were selected through stratified sampling to ensure balanced representation of demographic and academic characteristics such as gender, age, and year of study across the intervention and comparison groups.

Instructional delivery followed the widely adopted Presentation–Practice–Production (PPP) model, with both groups receiving identical instructional content and teacher guidance. In the presentation stage, the instructor introduced the structure of a Chinese argumentative essay, including thesis development, stance-taking, cohesion, supporting evidence, and rhetorical strategies, using model texts and exemplars to illustrate key features. In the practice stage, students engaged in guided exercises designed to reinforce their understanding. Activities included analyzing sample essays, identifying argumentative features, and completing structured writing tasks emphasizing cohesion, progression, and the effective use of Mandarin vocabulary and grammar.

The instructor maintained a human-mediated learning environment through weekly rubric feedback, small-group discussions, and in-class conferences on rhetorical choices and writing strategies, while peer review fostered collaboration and metacognitive reflection. These interactions provided the framework into which AI support was integrated.

In the production stage, students composed their own argumentative essays. The key distinction between groups lay in the type of support provided. The comparison group collaborated in pairs, offering peer feedback on idea development, draft revisions, and the use of vocabulary, grammar, and textual devices. The intervention group, in contrast, used ChatGPT-4o on their smartphones (https://start.chatgot.io/) to obtain comparable forms of support. Learners were given clear guidance that ChatGPT could be used (1) to refine ideas, (2) to provide feedback on drafts, and (3) to suggest corrections to vocabulary, grammar, and textual devices. Crucially, students were prohibited from using ChatGPT to generate their final essays. To ensure appropriate use, the instructor monitored interactions through in-class observations and debriefings. Because students were new to ChatGPT, three model prompts were also provided to scaffold their engagement with the tool.

Following the intervention, both groups completed the task motivation questionnaire twice: immediately after the instructional phase and again one month later during the delayed post-test.

Data Analysis

All statistical analyses, including participants’ baseline writing proficiency and task motivation scores, were conducted using SPSS Version 26. Although subsequent writing samples were collected during the study, they were not analyzed for the purposes of this paper. The present analysis focuses exclusively on learners’ task motivation throughout the essay-writing process rather than changes in writing proficiency. A two-phase analytic strategy was employed, combining quantitative statistical testing with qualitative thematic exploration to provide a comprehensive understanding of learners’ motivational trajectories.

Quantitative Analysis

Before conducting parametric tests, the key statistical assumptions were systematically examined. First, the normality of motivational scores at each time point (pre-test, immediate post-test, delayed post-test) was evaluated using the Shapiro–Wilk test. The results indicated no significant departure from normality, with all p-values exceeding the .05 threshold (pre-test, p = .108; immediate post-test, p = .245; delayed post-test, p = .323). Second, homogeneity of variances between the intervention and comparison groups was assessed using Levene’s test, which yielded non-significant results at each testing stage, thereby supporting the assumption of equal variances. Finally, the study design ensured the independence of observations.
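As an illustration of the homogeneity-of-variance check described above, the following minimal sketch computes the classic (mean-centered) Levene statistic W for two or more groups using only Python's standard library. It is not the SPSS routine used in the study; the function name `levene_w` and the example scores are hypothetical, and the p-value step is omitted because it requires the F(k - 1, N - k) distribution, which the standard library does not provide.

```python
from statistics import mean


def levene_w(*groups):
    """Mean-centered Levene statistic W for k independent groups.

    A p-value would come from comparing W with an F(k - 1, N - k)
    distribution; that step is omitted here.
    """
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    centres = [mean(g) for g in groups]
    # Absolute deviation of each score from its own group mean
    devs = [[abs(x - c) for x in g] for g, c in zip(groups, centres)]
    dev_means = [mean(d) for d in devs]
    grand_dev_mean = sum(sum(d) for d in devs) / n_total
    # Between-group spread of the mean absolute deviations
    between = sum(len(d) * (m - grand_dev_mean) ** 2 for d, m in zip(devs, dev_means))
    # Within-group spread of the absolute deviations
    within = sum((z - m) ** 2 for d, m in zip(devs, dev_means) for z in d)
    return ((n_total - k) / (k - 1)) * between / within


# Hypothetical scores: identical spread around different centers gives W = 0
print(levene_w([1, 2, 3], [11, 12, 13]))
```

Where SciPy is available, `scipy.stats.levene` returns both the statistic and its p-value; its `center` argument defaults to `'median'` (the Brown–Forsythe variant), so `center='mean'` corresponds to the mean-centered form sketched here.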

To examine between-group differences, independent-samples t-tests were conducted at each of the three measurement points. This procedure allowed for the evaluation of whether learners in the intervention group (ChatGPT-supported writing) reported significantly higher levels of motivation than those in the comparison group (traditional peer collaboration). In addition to p-values, effect sizes (Cohen’s d) were calculated to gauge the magnitude of observed differences.
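The between-group comparison and its effect size can be sketched from summary statistics alone. The code below assumes Student's pooled-variance t test (consistent with the equal-variance assumption supported by Levene's test) and the pooled-SD form of Cohen's d; the section does not state which variant of d was computed, so this is one common choice rather than the study's confirmed formula. The function names are hypothetical.

```python
import math


def pooled_sd(sd1, n1, sd2, n2):
    """Pooled standard deviation of two independent groups."""
    return math.sqrt(((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / (n1 + n2 - 2))


def student_t(m1, sd1, n1, m2, sd2, n2):
    """Pooled-variance (Student) t statistic and its degrees of freedom."""
    sp = pooled_sd(sd1, n1, sd2, n2)
    se = sp * math.sqrt(1 / n1 + 1 / n2)
    return (m1 - m2) / se, n1 + n2 - 2


def cohens_d(m1, sd1, n1, m2, sd2, n2):
    """Cohen's d with the pooled standard deviation as the standardizer."""
    return (m1 - m2) / pooled_sd(sd1, n1, sd2, n2)


# Baseline motivation (mean item scores) from the pre-test equivalence check:
# intervention n = 34, comparison n = 38
t, df = student_t(3.84, 0.52, 34, 3.77, 0.49, 38)
d = cohens_d(3.84, 0.52, 34, 3.77, 0.49, 38)
print(f"t({df}) = {t:.2f}, d = {d:.2f}")  # prints t(70) = 0.59, d = 0.14
```

Run on the baseline motivation values reported under Pre-test Equivalence, this reproduces the reported t(70) = 0.59. Converting t to a p-value additionally requires the t distribution (for example, via `scipy.stats.ttest_ind_from_stats`).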

To investigate within-group changes over time, a separate repeated measures ANOVA was conducted for each group. These analyses tested for significant variations in motivation across the three assessment stages: baseline (pre-test), immediately following the instructional phase (post-test), and one month later (delayed post-test). The sphericity assumption was evaluated with Mauchly’s test; where it was violated, the Greenhouse–Geisser correction was applied to adjust the degrees of freedom accordingly. For each significant finding, partial eta squared (ηp²) was reported as a measure of effect size. Pairwise comparisons with Bonferroni adjustments were then conducted to locate specific differences between time points.
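The three adjustments named above can be sketched in standard-library Python. This minimal sketch assumes a complete subjects-by-time score matrix. It estimates the Greenhouse–Geisser epsilon from the double-centered covariance matrix of the repeated measures, scales both degrees of freedom by epsilon, and recovers partial eta squared from an F ratio via ηp² = F·df1 / (F·df1 + df2). It also applies the Bonferroni adjustment by multiplying each pairwise p-value by the number of comparisons, capped at 1. All function names and the example data are hypothetical; the values reported in the study come from SPSS, not from this code.

```python
from statistics import mean


def gg_epsilon(scores):
    """Greenhouse-Geisser epsilon for a subjects x conditions matrix (list of rows)."""
    n, k = len(scores), len(scores[0])
    col_means = [mean(row[j] for row in scores) for j in range(k)]
    # Sample covariance matrix of the k repeated measures (n - 1 denominator)
    cov = [[sum((row[a] - col_means[a]) * (row[b] - col_means[b]) for row in scores) / (n - 1)
            for b in range(k)] for a in range(k)]
    # Double-center the covariance matrix: remove row and column means, add grand mean
    r_means = [mean(r) for r in cov]
    c_means = [mean(cov[a][b] for a in range(k)) for b in range(k)]
    g_mean = mean(r_means)
    dc = [[cov[a][b] - r_means[a] - c_means[b] + g_mean for b in range(k)] for a in range(k)]
    trace = sum(dc[a][a] for a in range(k))
    sum_sq = sum(v * v for r in dc for v in r)
    # Epsilon lies between 1 / (k - 1) (maximal violation) and 1 (sphericity holds)
    return trace ** 2 / ((k - 1) * sum_sq)


def corrected_dfs(eps, k, n):
    """Greenhouse-Geisser-adjusted (df_effect, df_error) for a one-way RM ANOVA."""
    return eps * (k - 1), eps * (k - 1) * (n - 1)


def partial_eta_squared(f, df_effect, df_error):
    """Partial eta squared recovered from an F ratio and its degrees of freedom."""
    return f * df_effect / (f * df_effect + df_error)


def bonferroni(p_values):
    """Bonferroni-adjusted p-values: multiply by the number of tests, cap at 1."""
    m = len(p_values)
    return [min(1.0, p * m) for p in p_values]


# Hypothetical scores for four learners at three time points
eps = gg_epsilon([[1, 2, 4], [2, 2, 3], [3, 5, 4], [4, 3, 6]])
print(corrected_dfs(eps, k=3, n=4))
print(bonferroni([0.004, 0.020, 0.300]))
```

For a one-way design with three time points and n = 34, the uncorrected degrees of freedom are (2, 66). Multiplying both by epsilon changes the degrees of freedom but not ηp², because epsilon cancels in the ratio. Applied to the intervention group's reported F(1.74, 56.42) = 7.93, the identity gives ηp² of roughly .20.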

Qualitative Analysis

To complement and extend the quantitative findings, semi-structured online interviews were conducted with a purposive subsample of 16 participants (eight from the intervention group and eight from the comparison group). The interviews explored learners’ experiences with Mandarin Chinese essay writing, focusing on their engagement with ChatGPT, perceived changes in motivation, and evolving writing behaviors. Only the eight intervention-group interviews were analyzed, because the research questions specifically concerned learners’ experiences with ChatGPT and its influence on task motivation; the comparison-group interviews fell outside this scope. This decision ensured a focused examination of the motivational effects of ChatGPT-assisted writing.

All interview transcripts were translated into English when necessary and subjected to thematic analysis, guided by Braun and Clarke’s (2006) six-phase framework. An inductive coding approach was adopted: Transcripts were carefully read and segmented into meaningful units, which were then categorized and refined into overarching themes.

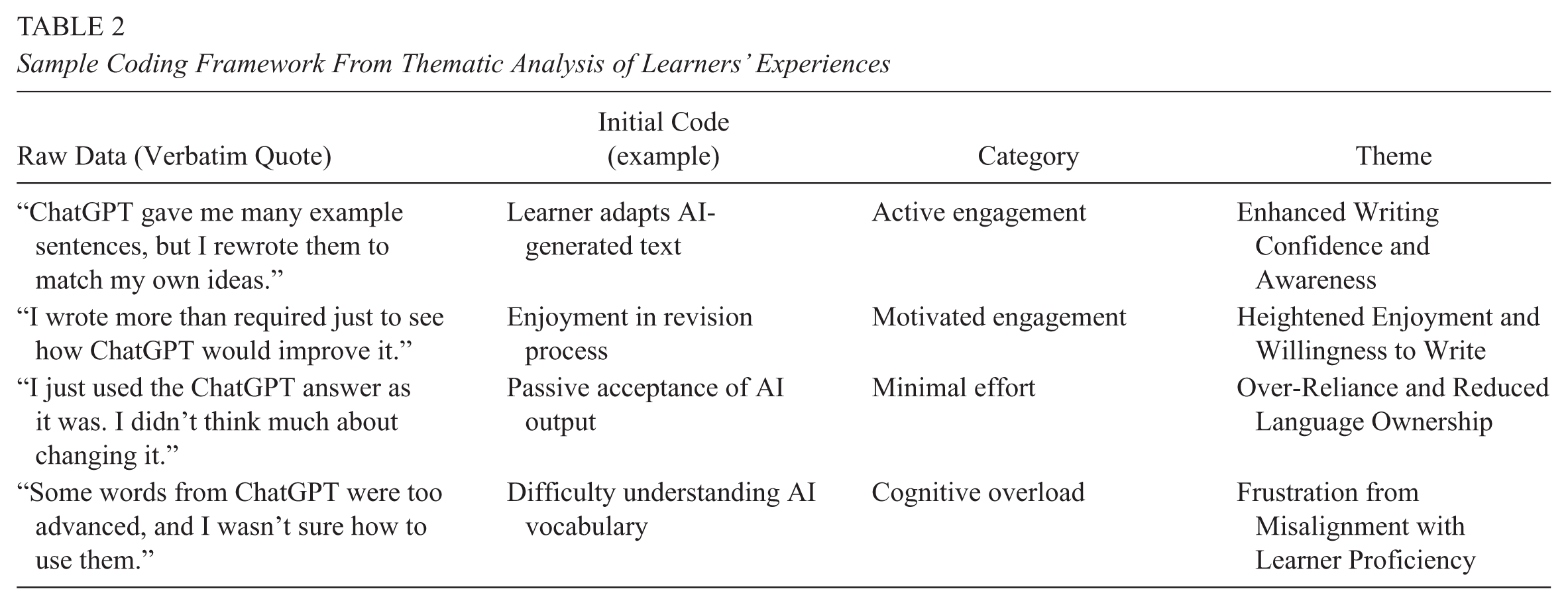

NVivo 15 software was used to support data management and ensure transparency in the analytic process. A codebook was developed iteratively, containing operational definitions, inclusion and exclusion criteria, and representative quotations for each code. Table 2 provides the coding framework used to consolidate raw interview data into broader categories and illustrates the four central themes identified in this study.

Sample Coding Framework From Thematic Analysis of Learners’ Experiences

From this process, four central themes emerged: Enhanced Writing Confidence and Awareness, Heightened Enjoyment and Willingness to Write, Over-Reliance and Reduced Language Ownership, and Frustration from Misalignment with Learner Proficiency.

To ensure the trustworthiness of the analysis, the study adhered to Lincoln and Guba’s (1985) four criteria:

1) Credibility was established through methodological triangulation, integrating both qualitative and quantitative data sources (Naidoo, 2025).

2) Transferability was supported by providing detailed descriptions of the research context, participant demographics, and procedural steps, allowing readers to assess the applicability of findings to similar settings.

3) Confirmability was strengthened through independent coding conducted by two researchers, yielding high inter-coder reliability (Cronbach’s α = .87). To further enhance objectivity, an external bilingual coder with expertise in second language acquisition and qualitative research independently analyzed a subset of transcripts, resulting in a reliability coefficient of α = .85. This external validation contributed an etic perspective and helped mitigate potential researcher bias.

4) Dependability was ensured through member checking: Five participants reviewed the preliminary themes and confirmed that the interpretations accurately represented their experiences (Doyle et al., 2016). Only minor modifications were made to improve clarity based on their feedback.

The integration of qualitative insights with quantitative trends provided a richer, multidimensional understanding of how ChatGPT-assisted writing tasks shaped learners’ motivation in Mandarin Chinese essay writing.

Results

Pre-test Equivalence

Prior to examining the impact of ChatGPT-4o on learners’ motivation, it was essential to confirm that the intervention and comparison groups were comparable at baseline. Independent-samples t tests were conducted on Mandarin proficiency (HSK Level 5 scores), writing proficiency (HSK Level 5 rubric), and task motivation.

The analyses revealed no significant differences between the two groups in HSK Level 5 scores (Intervention: M = 192.45, SD = 21.38; Comparison: M = 189.72, SD = 23.11), t(70) = 0.49, p = .627; baseline writing proficiency (Intervention: M = 15.61, SD = 2.87; Comparison: M = 15.04, SD = 3.02), t(70) = 0.78, p = .439; or motivation scores (Intervention: M = 3.84, SD = 0.52; Comparison: M = 3.77, SD = 0.49), t(70) = 0.59, p = .556.

These results confirm that the two groups did not differ significantly in language proficiency, baseline writing ability, or initial motivation. Establishing this equivalence reduces the likelihood that subsequent differences in motivation can be attributed to pre-existing disparities, thereby strengthening the internal validity of the study.

Quantitative Analysis of Motivation Scores

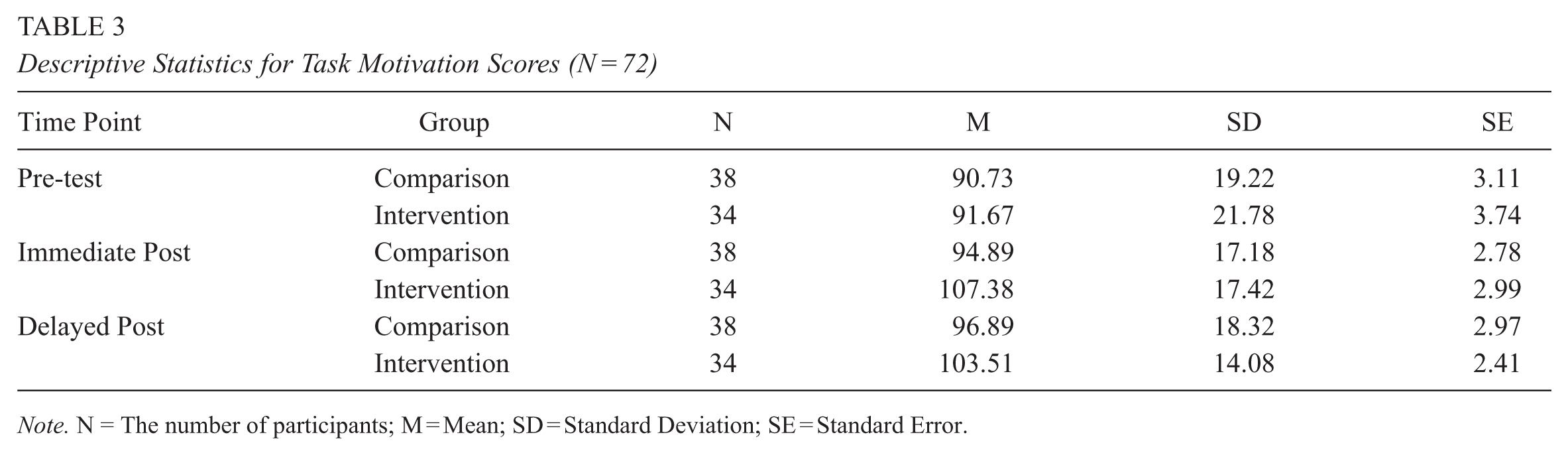

Having established baseline equivalence, the analysis turned to RQ1. Descriptive statistics for both groups across the three measurement points (pre-test, immediate post-test, and delayed post-test) are presented in Table 3.

Descriptive Statistics for Task Motivation Scores (N = 72)

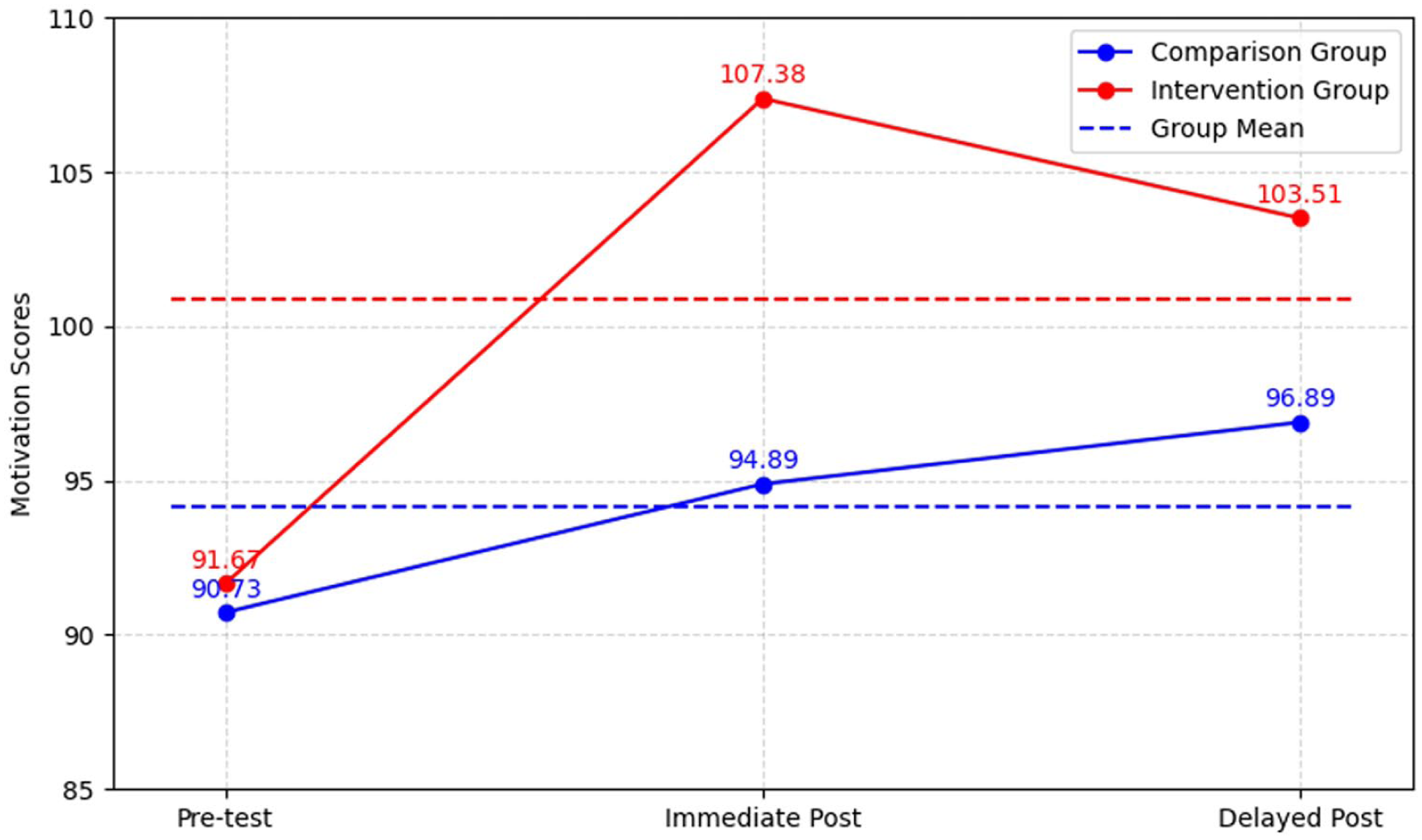

As shown in Table 3, initial motivation levels were nearly identical between groups (Intervention M = 91.67, SD = 21.78; Comparison M = 90.73, SD = 19.22), confirming equivalence at baseline. Following the intervention, the intervention group exhibited a marked increase in motivation, with their mean score rising to 107.38 (SD = 17.42). In contrast, the comparison group showed only a modest gain to 94.89 (SD = 17.18).

At the delayed post-test, the intervention group sustained relatively high motivation (M = 103.51, SD = 14.08), despite a slight decline from the immediate post-test. This pattern suggests that the motivational benefits of ChatGPT-4o were not limited to the short term but persisted for at least one month. By comparison, the control group continued on a gradual upward trend, reaching 96.89 (SD = 18.32) at the delayed post-test, but their improvement remained modest in magnitude.

These descriptive findings provide preliminary evidence that integrating ChatGPT into essay writing tasks was associated with a marked and relatively durable increase in learners’ motivation. The overall progression of scores for both groups across the three testing phases is illustrated in Figure 1.

Motivation Score Trends over Time for the Comparison and Intervention Groups

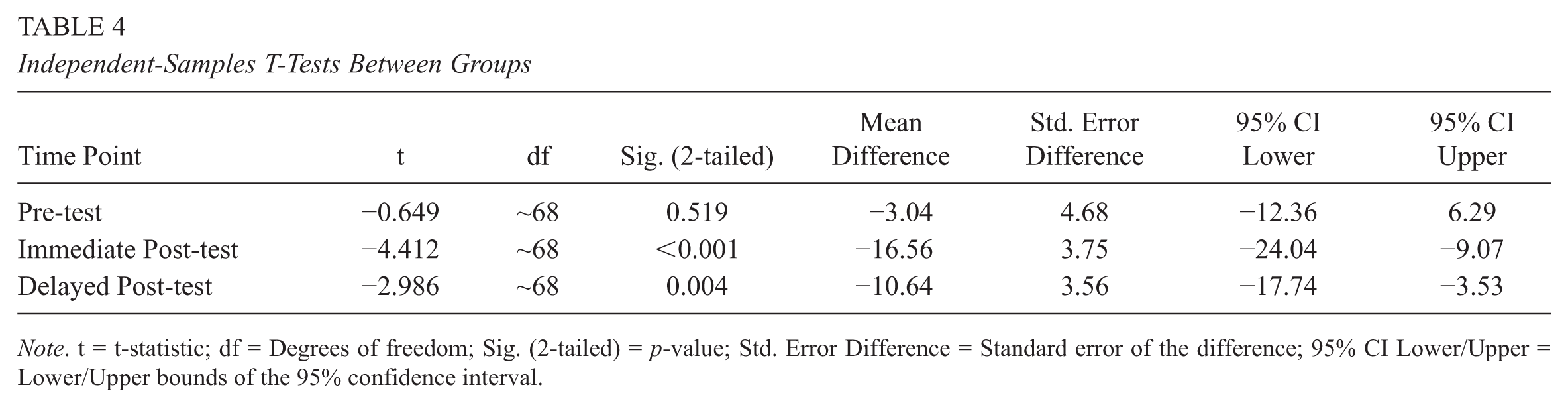

To determine whether these observed differences were statistically meaningful, independent-samples t tests were conducted at each measurement point. Table 4 summarizes the results. These analyses enabled a direct evaluation of whether ChatGPT-supported writing tasks produced significantly higher motivation compared to traditional peer-collaborative writing.

Independent-Samples T-Tests Between Groups

At the pre-test stage, no statistically significant differences were observed between the comparison and intervention groups,

Following the instructional phase, however, a significant divergence emerged during the immediate post-test,

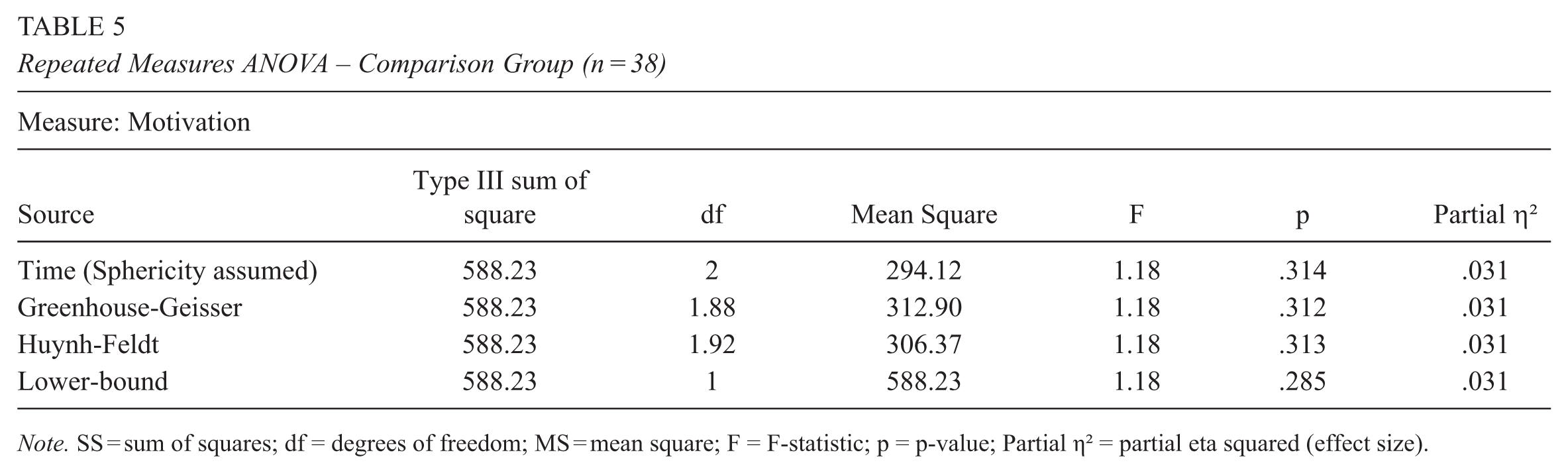

To gain further insight into motivational trajectories within each group, separate repeated measures ANOVAs were conducted. For the comparison group, no significant variation in motivation was observed across the three assessment points—pre-test, immediate post-test, and delayed post-test, F(1.88, 70.56) = 1.18,

Repeated Measures ANOVA – Comparison Group (n = 38)

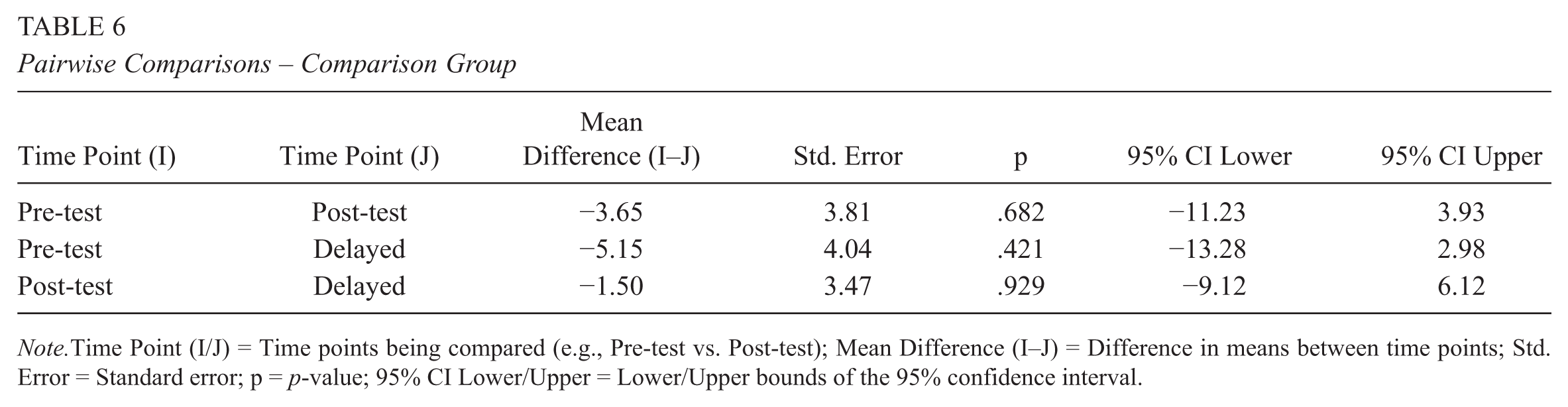

Pairwise comparisons (Table 6) confirmed the absence of significant changes between any two time points (all ps > .40), suggesting that learners in the comparison group maintained relatively stable motivation throughout the study period.

Pairwise Comparisons – Comparison Group

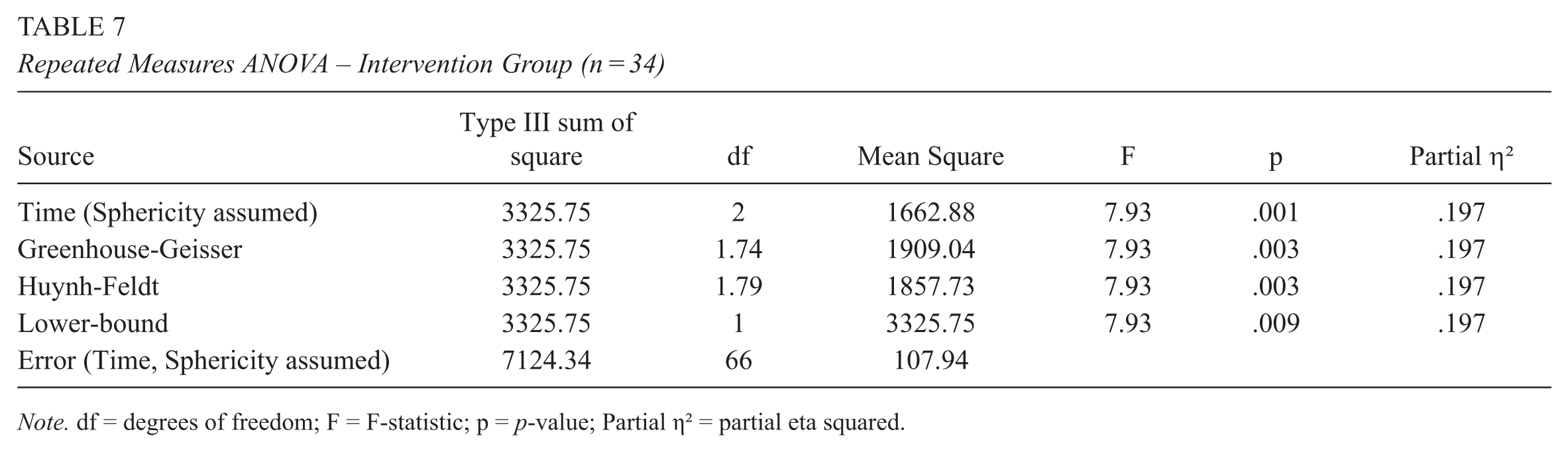

In contrast, the intervention group’s motivation rose markedly after the intervention and remained elevated. The repeated measures ANOVA revealed a statistically significant change across the three assessment points, F(1.74, 56.42) = 7.93,

Repeated Measures ANOVA – Intervention Group (n = 34)

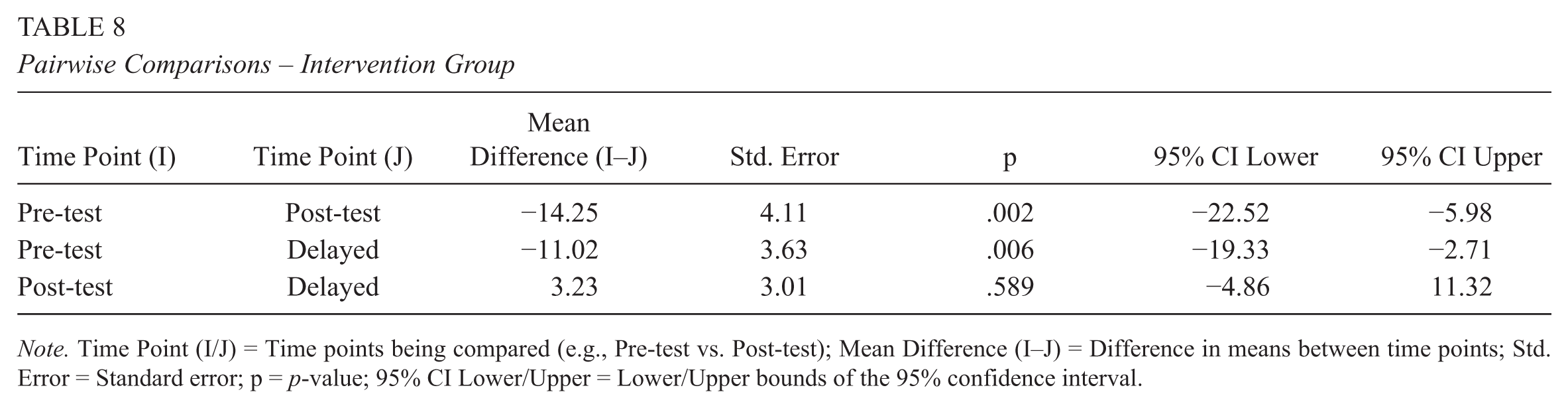

Pairwise comparisons (Table 8) revealed that learners’ motivation in the intervention group increased significantly from pre-test to immediate post-test (

Pairwise Comparisons – Intervention Group

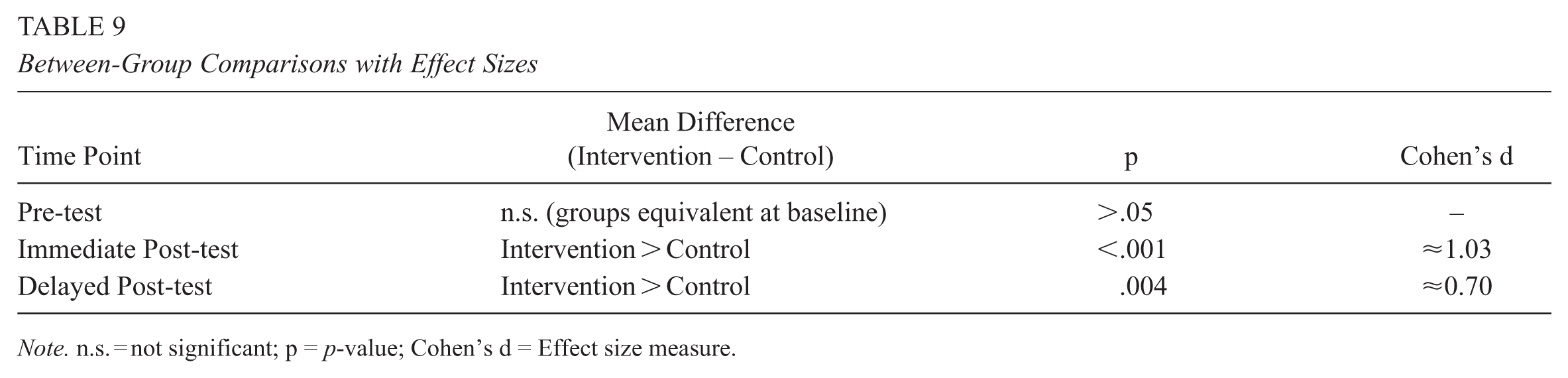

Complementary between-group tests further compared the intervention and control groups at each time point. As shown in Table 9, the intervention group demonstrated significantly higher task motivation than the control group at both the immediate post-test (

Between-Group Comparisons with Effect Sizes

Taken together, these findings highlight the effectiveness of ChatGPT-supported writing activities in promoting and maintaining learners’ motivation to write Mandarin Chinese essays. Whereas the control group showed no notable changes in motivation, the intervention group demonstrated both immediate and lasting motivational improvements.

Qualitative Results

To address RQ2 qualitatively, we conducted a thematic analysis of eight post-intervention interviews with students in the ChatGPT group. Four themes emerged, each aligned with constructs from SDT and SRL:

(1) Enhanced Writing Confidence and Awareness

Learners reported greater awareness of writing structures, vocabulary use, and stylistic expression. Many adapted AI-generated suggestions to better reflect their intended meaning, demonstrating autonomy and metacognitive regulation. This theme reflects SDT’s competence construct and SRL’s metacognitive engagement, showing how AI-supported writing can strengthen learners’ confidence in managing their work.

(2) Heightened Enjoyment and Willingness to Write

Participants described a renewed enjoyment of writing, fostered by ChatGPT’s immediate feedback and dialogic support. This shift from anxiety to active engagement highlights increased intrinsic motivation and SRL-driven engagement, aligning with SDT’s emphasis on autonomy-supportive learning environments.

(3) Over-Reliance and Reduced Language Ownership

Some learners acknowledged directly copying AI-generated text, bypassing critical thinking and reducing ownership of their work. This reflects SRL strategy misalignment, where reliance on AI weakened autonomy and limited deeper cognitive processing.

(4) Frustration from Misalignment with Learner Proficiency

A subset of learners found ChatGPT’s suggestions too complex, leading to confusion, reduced motivation, and eventual avoidance of the tool. This undermined their sense of competence, as perceived task difficulty eroded confidence and engagement.

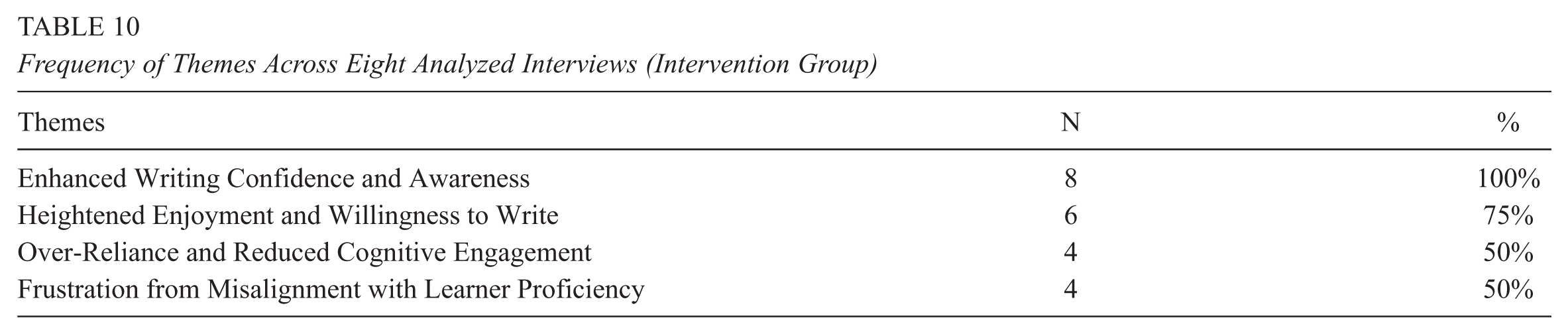

Taken together, these themes extend the quantitative findings by illustrating the lived experiences underlying motivational changes, showing how AI-mediated writing both supports and challenges motivation and self-regulation. Table 10 summarizes the frequency of each theme across the eight interviews.

Frequency of Themes Across Eight Analyzed Interviews (Intervention Group)

In the following subsections, each theme is explored in more detail with illustrative excerpts.

Enhanced Writing Confidence and Awareness

All eight participants (100%) reported feeling more confident in their ability to write in Mandarin after using ChatGPT. Learners consistently highlighted that the tool not only provided linguistic suggestions but also helped them better understand structural and rhetorical choices. For example, Xiaojun (24) explained: “

Similarly, Mei (21) emphasized how ChatGPT raised her awareness of stylistic differences: “

These reflections suggest that ChatGPT functioned as a scaffold that promoted metacognitive awareness and strengthened learners’ perceived competence—a key factor in sustaining task motivation. Learners moved from seeing writing as guesswork to viewing it as a manageable process supported by explicit models.

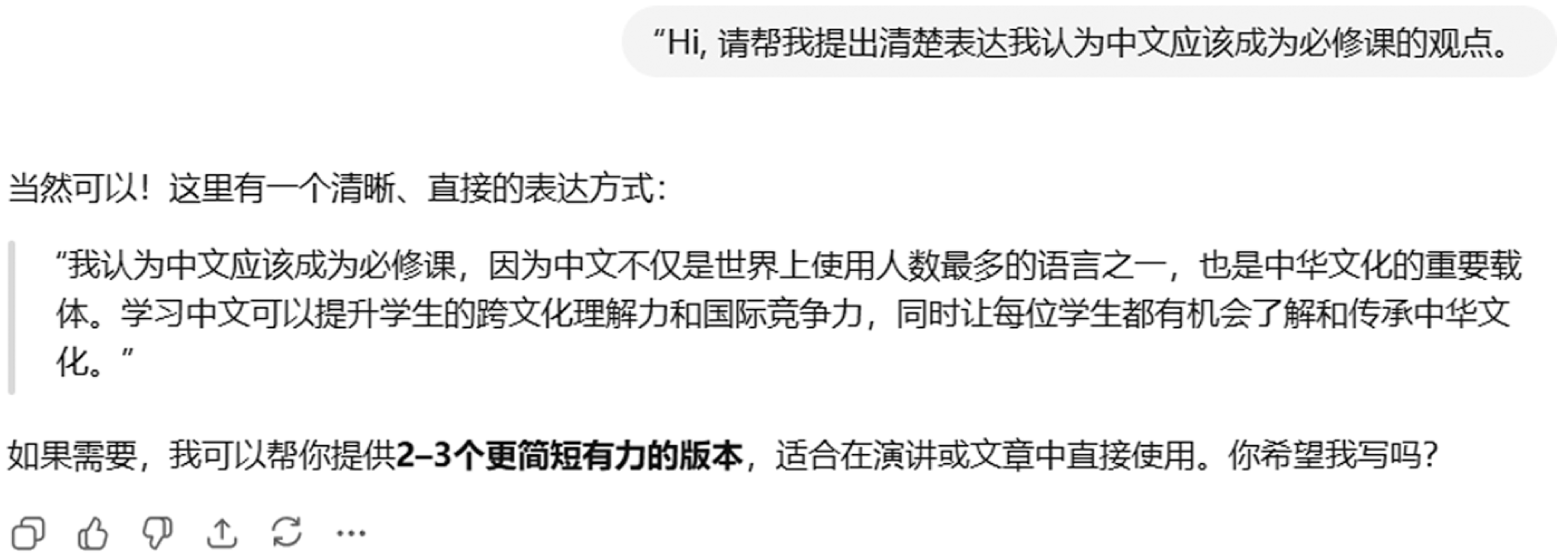

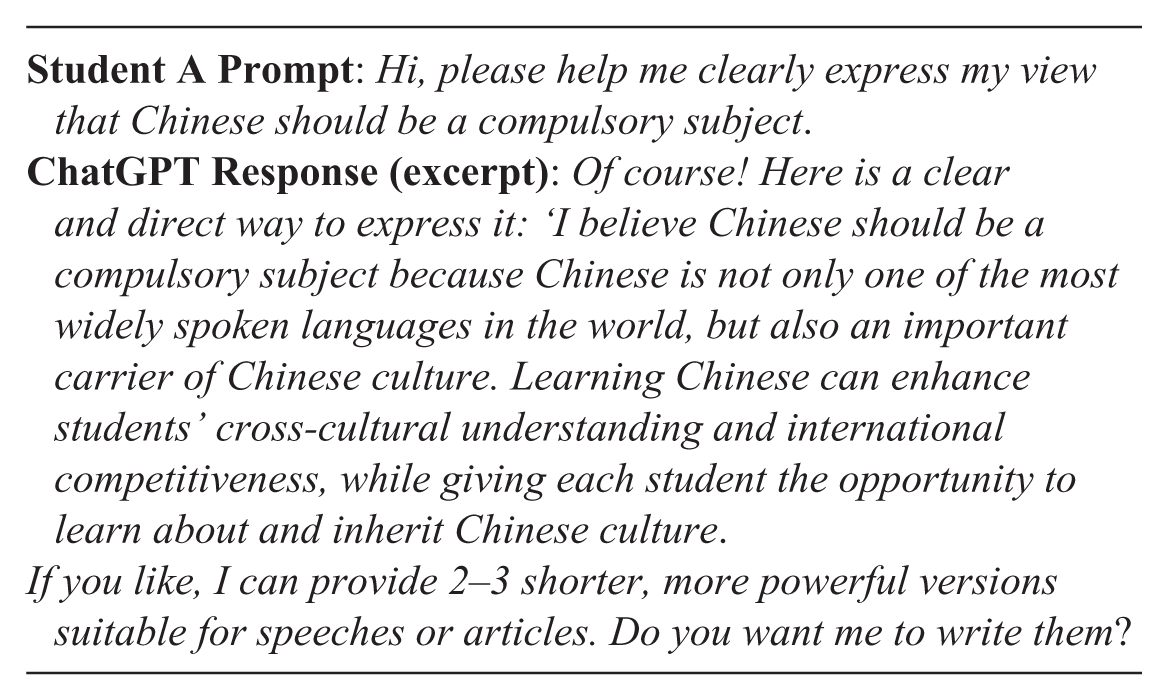

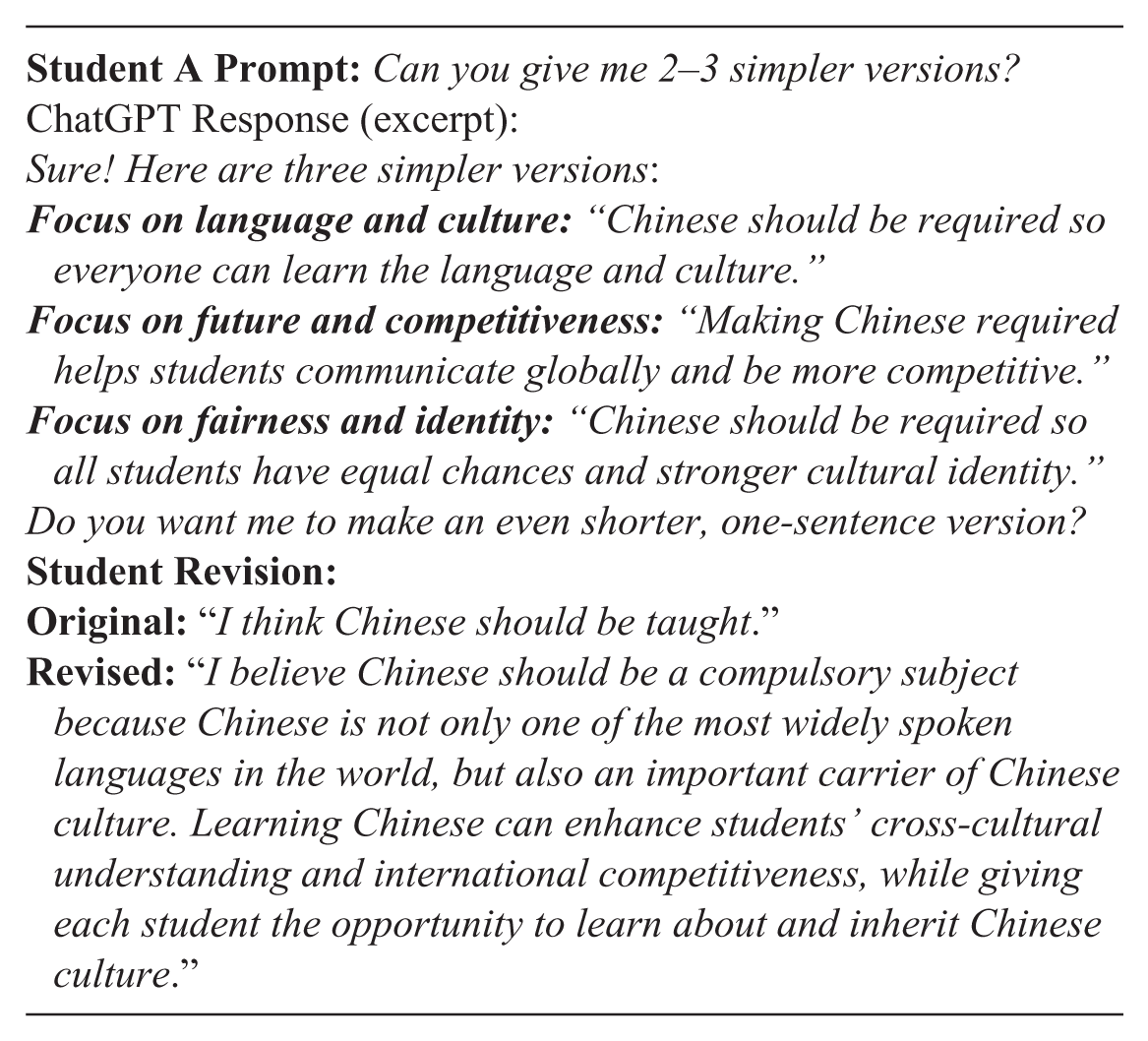

To illustrate learner–AI interactions, Figure 2 presents an anonymized screenshot of a student–ChatGPT exchange from the intervention phase. Students used ChatGPT to refine ideas, check coherence, and receive feedback on draft paragraphs. Additional examples are provided in

Student–ChatGPT Interaction (Immediate Post-Test)

This example shows a student, here given the pseudonym “Student A,” prompting ChatGPT to articulate their stance that Chinese should be a compulsory subject in Malaysian universities. ChatGPT suggested multiple ways to structure the argument, and the student revised their thesis accordingly.

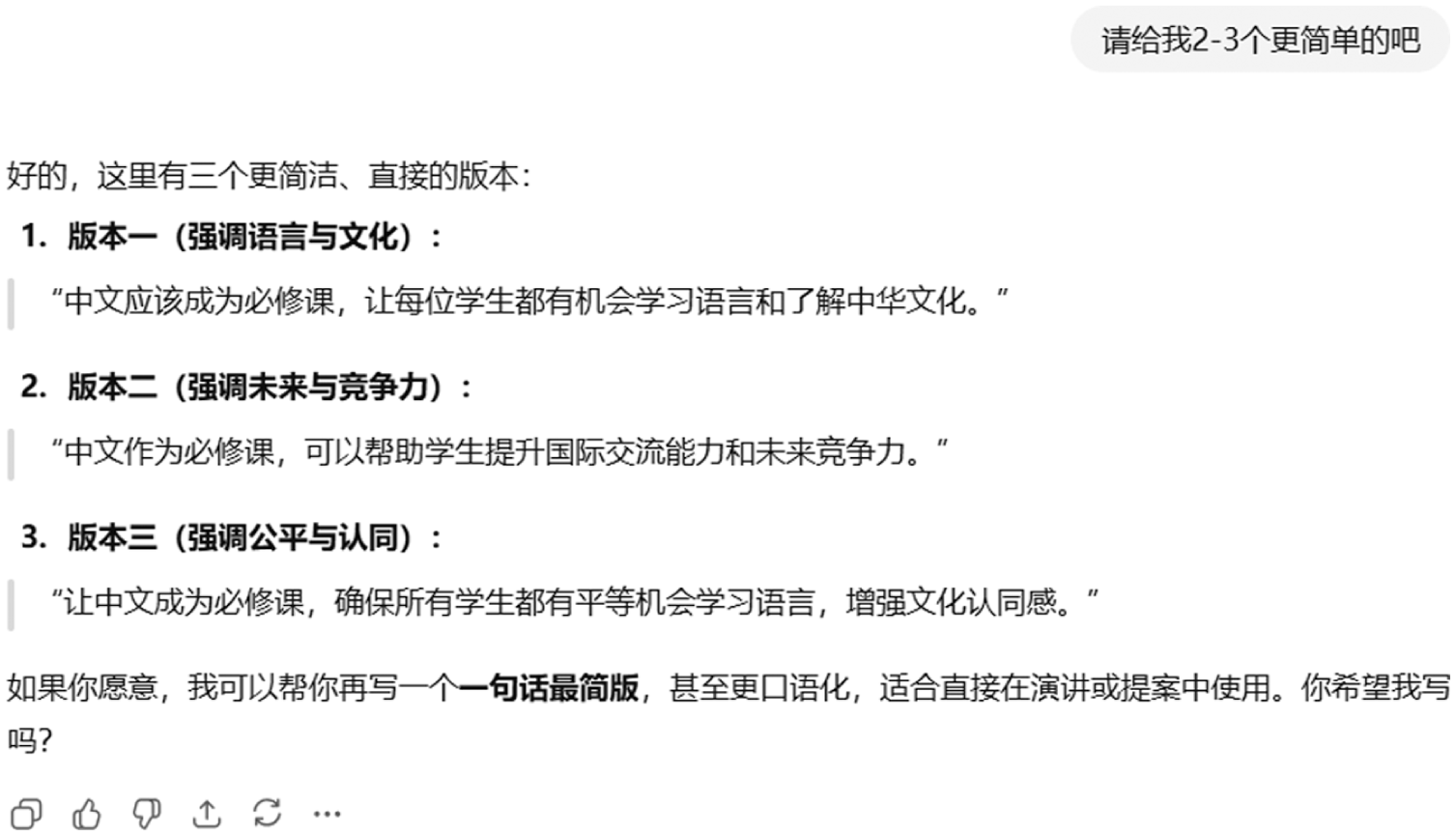

Student A continued to interact with ChatGPT, as illustrated in Figure 3.

Student A’s Follow-Up Interaction with GPT.

Heightened Enjoyment and Willingness to Write

Six participants (75%) described increased enjoyment and willingness to engage in essay writing after incorporating ChatGPT. Writing, which was previously experienced as a burdensome academic task, was reframed as a more dynamic and interactive activity. Haoran (22) captured this shift: “

Yue (23) similarly noted: “

These accounts indicate a movement toward intrinsic motivation, in which the process itself (experimenting, receiving feedback, and refining ideas) became rewarding. For most participants, ChatGPT’s immediacy and interactivity helped reduce writing anxiety and increase their willingness to practice voluntarily.

Over-Reliance and Reduced Cognitive Engagement

At the same time, four participants (50%) acknowledged that their reliance on ChatGPT sometimes reduced their own cognitive effort. What began as a helpful support occasionally turned into a shortcut that replaced active problem-solving. Longfei (25) admitted: “

Wei (22) echoed this concern: “

This theme illustrates a motivational paradox: While ChatGPT increased competence and enjoyment for many, it also risked undermining learners’ autonomy and engagement when used passively. From a task motivation perspective, the tool can both energize participation and inadvertently reduce self-regulated learning if students defer too much to AI output.

Frustration from Misalignment with Learner Proficiency

Finally, four participants (50%) expressed frustration when ChatGPT’s output exceeded their proficiency level or failed to align with their needs. Learners noted that overly advanced vocabulary, idiomatic expressions, or culturally specific references could increase their workload and decrease motivation. Ling (21) described this challenge: “

Similarly, Bin (23) explained:

Such experiences highlight the importance of calibration. Although scaffolding can empower learners, mismatched or overly complex AI output risks overwhelming them, undermining perceived competence, and reducing motivational benefits.

In sum, these qualitative insights complement and enrich the quantitative findings. The statistically significant gains observed in motivation scores for the intervention group align with participants’ reported increases in writing confidence, awareness, and enjoyment. At the same time, the mixed accounts of over-reliance and frustration provide an explanatory layer for why improvements were not uniformly distributed across all motivational dimensions in the survey results. Together, the two strands of evidence suggest that ChatGPT can effectively enhance L2 learners’ task motivation in Mandarin essay writing, but the extent of its impact depends on how learners balance AI support with their own cognitive engagement and on the appropriateness of the AI-generated output relative to their proficiency level.

Discussion

This study examined how interaction with ChatGPT-4o influenced L2 learners’ task motivation for Mandarin argumentative essay writing by combining repeated quantitative measures with qualitative interviews. Overall, the intervention produced a substantial and largely sustained increase in task motivation. The intervention group showed a large immediate effect (immediate post-test vs. control,

Qualitative analysis of the eight intervention interviews revealed four central themes, which correspond to the coding framework presented in Table 2. These insights help explain the quantitative trends, pointing to potential benefits alongside observed constraints. Learners reported increased confidence in structuring sentences, applying linking words, and managing tone appropriately; many also experienced greater enjoyment and motivation to engage with writing tasks. Conversely, some learners demonstrated over-reliance on ChatGPT and occasional frustration when AI output exceeded their proficiency. These patterns suggest that AI-mediated support can facilitate engagement while also posing challenges for some learners.

A closer examination of motivational trajectories indicates that the short-term increases observed immediately post-intervention likely reflect both the novelty of using ChatGPT and the supportive feedback it provided (Abdullah, 2025). However, the modest decline in motivation at the delayed post-test suggests that some learners may have become over-reliant on AI, which reduced opportunities for autonomous engagement and generative effort. This pattern underscores the conditional nature of motivational gains and the importance of designing interventions that balance AI support with strategies that foster learner autonomy and sustained engagement (Pratiwi et al., 2025; Zare et al., 2025).

From an SDT perspective, these experiences reflect enhanced perceived competence, a primary driver of intrinsic motivation (Y. C. Huang et al., 2019; Ryan & Deci, 2017). By providing intelligible models of sentence structure, transitions, and register, ChatGPT reduced task anxiety and clarified writing strategies, potentially contributing to the large immediate motivational increase. In SRL terms, rapid feedback supported goal setting, strategy use, and self-monitoring, facilitating engagement with writing tasks.

An important consideration emerging from this study concerns the design of the writing prompts, particularly the inclusion of the ChatGPT-related task (“Is using AI tools like ChatGPT beneficial for language learners?”). Having recently used ChatGPT, the intervention group may have found this task more engaging than the comparison group did, which may partly account for their higher post-test scores. Qualitative evidence indicates that over-reliance on ChatGPT may have limited generative effort for some learners, while misaligned outputs occasionally challenged learners’ sense of competence; together, these factors may help explain the slight decline in motivation at the delayed post-test. These findings suggest potential benefits while also revealing important constraints (Pratiwi et al., 2025; Zare et al., 2025).

Another key observation relates to the type of feedback learners predominantly received. Learners mainly used ChatGPT to refine vocabulary and sentence structures rather than to generate new content. This likely reflects both the prompts, which required prior knowledge, and the instructional emphasis on linguistic refinement. Even when focused on sentence-level scaffolding, AI support appeared to enhance perceived competence and writing confidence for many learners, although the benefits varied across individuals. For the HSK Level 5 learners in this study, AI support for higher-order content development may become more relevant as they progress toward HSK Levels 7–8, suggesting opportunities for future interventions to broaden cognitive and motivational benefits.

The Malaysian context also shaped learners’ experiences and motivation. CFL learners in Malaysia often navigate a multilingual environment, where Mandarin learning competes with Malay and English as societal and educational languages. Participants frequently reported drawing on prior cultural knowledge from family or community settings when interacting with ChatGPT, which facilitated engagement and confidence. For instance, several learners connected AI-suggested phrases to expressions commonly used at home or in Malaysian Chinese community media, enhancing perceived relevance and enjoyment. This sociocultural embedding likely contributed to the increased enjoyment reported by six of the eight interviewees (75%), consistent with the elevated immediate post-test motivation observed in the quantitative data. Awareness of local language practices and culturally familiar expressions may have made feedback more accessible, supporting both perceived competence and sustained motivation in ways that might differ in other CFL contexts.

The qualitative evidence of internalized modeling behaviors (e.g., noticing linking words, attending to tone) also helps explain why motivation remained elevated at the delayed post-test despite the slight decline. These processes suggest some transfer from external scaffold to internal strategy, although not uniformly across learners (Pratiwi et al., 2025; Zhai et al., 2024).

Beyond pedagogical insights, ethical, equity, and cultural-linguistic dimensions of AI use warrant careful attention. AI-generated output can reflect biases in language or cultural content, particularly when models are English-dominant, potentially misrepresenting Chinese rhetorical norms such as indirectness, collectivism, and stance, and reinforcing stereotypes (Ifenthaler et al., 2024; Nguyen et al., 2023). Unequal access to AI tools may exacerbate disparities among learners, especially in contexts with differing availability of devices or internet connectivity (Ifenthaler et al., 2024). Additionally, privacy concerns arise when learners’ writing is processed or stored on external platforms. Such biases and structural limitations could influence motivation and engagement, indicating that potential benefits are conditional rather than universal. To mitigate these risks, educators can implement responsible AI practices, including critically reviewing AI suggestions, ensuring equitable access, and safeguarding learner data.

Furthermore, we acknowledge concerns regarding conversational AI personalization and potential psychological effects. In this study, ChatGPT interactions were deliberately bounded to task-focused prompts, students were instructed not to anthropomorphize or emotionally rely on the AI, and teacher oversight with guided prompting was provided to prevent inappropriate personalization. These safeguards help contextualize our findings, situating observed motivational gains within a framework that considers both affordances and potential risks of AI-mediated learning (Bhattacharya et al., 2024).

A central insight from our mixed-methods data is the “motivational paradox”: the same features of ChatGPT that increased competence and enjoyment for many learners also created conditions for decreased cognitive engagement for others. This duality aligns with SRL theory: supports that reduce cognitive effort too strongly can interrupt the iterative cycle of planning–monitoring–reflection that fosters durable learning. Practically, if learners accept AI output without critical evaluation or prior generative attempt, they lose opportunities for retrieval practice and deeper processing, processes known to underpin long-term skill acquisition.

Our results align with broader reports that AI-assisted writing can increase engagement and reduce writing anxiety while sometimes promoting dependency or mismatches with learner needs (Alshraah et al., 2023; Pandya & Saiyad, 2025). The present study advances this literature in two important ways. First, the mixed-methods, longitudinal design links statistically robust, sustained motivational gains to concrete learner experiences, moving beyond cross-sectional self-reports. Second, the focus on task motivation as it unfolds across the writing process (not just end-state attitudes) reveals process-level mechanisms (e.g., modeling → competence → willingness to attempt complex moves; immediate feedback → enjoyment → voluntary practice) and boundary conditions (over-reliance; miscalibration) that previous single-method studies have not fully articulated. Where prior studies have reported largely positive group effects, our qualitative data clarify why benefits vary across learners and across time.

The findings suggest several instructional recommendations to maximize motivational and learning benefits while mitigating potential risks. First, implementing a draft-first approach, in which learners produce an initial essay independently before consulting ChatGPT, can preserve generative effort and ensure that AI serves as a tool for revision rather than replacement. Second, teaching output calibration strategies, such as requesting simpler explanations, graded alternatives, or stepwise rewrites tailored to learners’ proficiency, can prevent cognitive overload and enhance alignment between AI suggestions and learners’ capabilities. Third, embedding reflective prompts, where learners annotate the reasons for accepting or rejecting AI feedback, can foster metacognitive awareness and counteract passive adoption of AI outputs. Finally, teacher mediation through guided debriefs on common AI-generated suggestions can correct misalignments, provide culturally contextualized guidance, and support deeper understanding. Collectively, these practices align with SDT by promoting autonomy (through learner choice in AI use), competence (through scaffolded skill development), and relatedness (via teacher feedback), and they are consistent with SRL principles by preserving essential phases of planning, monitoring, and reflection in learners’ writing processes (Alonso-Mencía et al., 2020; Zeidner & Stoeger, 2019).

In sum, ChatGPT-4o can meaningfully enhance L2 learners’ task motivation for Mandarin essay writing by increasing perceived competence and making writing more enjoyable and interactive. However, our findings also show that novelty effects, over-reliance, misalignment of output, and sociocultural and ethical factors can temper long-term retention, indicating that motivational benefits are conditional rather than automatic. Thoughtfully designed instructional procedures, aimed at preserving generative effort, calibrating AI output, and ensuring equitable and culturally sensitive implementation, are essential to realize the full potential of AI-mediated writing support.

Conclusion

This research explored how interaction with ChatGPT-4o affected the motivation of L2 learners engaged in writing Mandarin Chinese essays. The results suggest potential short-term motivational benefits, although gains were not uniform across learners. Participants attributed this improvement to various factors, such as noticeable gains in their writing proficiency, the encouraging and responsive learning environment fostered by the AI tool, its interactive design, and the increased metacognitive awareness it promoted regarding their writing difficulties.

While quantitative results demonstrated motivational gains, qualitative narratives revealed nuanced patterns, including over-reliance on AI, mixed emotional responses, and variability in engagement. Together, these data suggest that AI-mediated writing support can enhance motivation for many learners but may also introduce challenges for others, emphasizing the conditional and context-dependent nature of these benefits.

At the same time, the ChatGPT-related delayed post-test prompt may have introduced a topic-specific novelty effect. Learners in the intervention group, having recently interacted with ChatGPT, appeared more motivated when responding to this AI-focused prompt than their peers in the control group. Even so, the intervention group’s motivation at the delayed post-test fell below its immediate post-test level, suggesting that AI-related prompts can generate short-term enthusiasm but may not ensure long-term persistence without continuous scaffolding. These findings highlight the importance of balancing AI support with the cultivation of learner autonomy to sustain motivation over time (Bhattacharya et al., 2024; Zare et al., 2025).

Future instructional designs should incorporate reinforcement strategies to mitigate over-reliance on AI and sustain long-term motivation. Such strategies may include requiring learners to draft essays independently before consulting AI, embedding reflective prompts to promote critical engagement, and implementing scaffolded feedback techniques that encourage deeper processing.

Several limitations should be acknowledged. First, the relatively small sample size and focus on intermediate-level Mandarin learners from a single institution limit the generalizability of the findings. Individual differences in cognitive and affective characteristics were not systematically controlled, which may have influenced outcomes. Importantly, writing performance was not directly assessed, preventing a clear link between motivational gains and improvements in linguistic accuracy, coherence, or higher-order essay quality. Future research should integrate performance-based measures alongside motivational assessments and employ complementary data sources such as stimulated recall protocols, learning analytics, and classroom observations.

Second, the short duration of the intervention (eight weeks) may restrict the extent to which findings reflect sustained motivational change. Although pre-test, immediate post-test, and delayed post-test measures captured both immediate gains and short-term retention, they cannot fully account for long-term motivational processes. Some improvements may reflect novelty-driven effects rather than stable shifts. Consistent with SDT, initial engagement may be sparked by novelty, whereas sustained motivation depends on competence and autonomy. Future studies should adopt longer, longitudinal designs and implement strategies to mitigate novelty effects.

Finally, ethical and equity-related considerations warrant attention. Unequal access to AI-enabled devices, internet connectivity, algorithmic biases, and privacy concerns may constrain equitable adoption of AI in language education. Future research should explicitly address these dimensions to ensure responsible and inclusive integration. Comparative studies of AI-mediated versus teacher-mediated feedback, as well as multi-semester designs, could provide stronger evidence of long-term benefits and the sustainability of motivational outcomes.

A key methodological implication of this study is that prompt design itself can shape learner motivation. The use of AI-related essay prompts may have amplified motivational differences between groups. Future research should therefore consider rotating or topic-neutral prompts to disentangle tool-related effects from topic familiarity.

Despite these limitations, this study contributes to the field of second language acquisition by offering empirical evidence on the motivational affordances of AI tools such as ChatGPT. The findings suggest that, when grounded in sound pedagogy, AI-mediated instruction can enhance learner autonomy, competence, and engagement. Educators and curriculum designers are encouraged to consider how AI can be meaningfully embedded into writing instruction to personalize learning and sustain motivation. By offering concrete pedagogical recommendations and identifying directions for future inquiry, including performance assessment, longitudinal monitoring, and ethical safeguards, this study provides a foundation for the responsible and effective use of AI in L2 writing pedagogy.

Supplemental Material

Supplemental material for Investigating L2 Learners’ Task Motivation in Mandarin Chinese Essay Writing: The Role of ChatGPT in AI-Mediated Learning by Xiaosheng Zhou in AERA Open is provided in four files:

sj-docx-1-ero-10.1177_23328584261435952

sj-docx-2-ero-10.1177_23328584261435952

sj-docx-3-ero-10.1177_23328584261435952

sj-docx-4-ero-10.1177_23328584261435952

Footnotes

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by the Ministry of Education’s 14th Five-Year Plan National Key Project for Educational Research (Project No. JKY6431972KT).

Ethical Considerations

This study was conducted in accordance with the recommendations of the Research Ethics Committee at Universiti Teknologi MARA (UiTM). The research protocol was reviewed and approved by the committee under protocol number 600-TNCPI (5/1/6). Prior to participation, all individuals were provided with detailed information about the study’s purpose, procedures, potential risks, and benefits.

Open Practices

The dataset for this study is not publicly available due to institutional data-sharing restrictions. However, all research instruments, coding guides, and analysis files necessary to reproduce the results reported in this article are available through openICPSR. Researchers interested in accessing the restricted dataset may contact the corresponding author to request access, subject to institutional approval.


Author

XIAOSHENG ZHOU is a lecturer at Wenzhou University of Technology, Wenzhou, China; email: