Abstract

One of the key goals of meta-analyses is to provide educators with relevant evidence to guide their decisions in practice. This meta-review examined the extent to which education meta-analysts have used strategies that likely increase the relevance, applicability, and accessibility of research to practitioners. We reviewed 103 meta-analyses of school-based academic interventions, coding for: (a) stakeholder engagement in review phases; (b) reporting of study population, setting, and intervention characteristics and testing them as moderators; and (c) accessibility of the findings to a wide audience using effect size metrics and visualizations. We found limited stakeholder involvement. Certain characteristics such as grade level were commonly reported and used to explore heterogeneity, while others, like material and training costs, were rarely considered. Effect size transformations were not common, and traditional forest plots were the most prevalent visualization method. We propose future research directions to improve the relevance, applicability, and accessibility of meta-analysis findings for educational practice.

Keywords

Researchers, policymakers, and educators have made great strides in recent decades to better connect research, policy, and practice (Oliver et al., 2014). This is especially true in the field of K–12 education across the globe due to increased recognition of the complexity of the decision-making process, awareness of the mismatch between researcher and educator professional cultures, and emphasis on the value of involving educators in the research process to help overcome this mismatch (Farley-Ripple, 2021; Organization for Economic Co-operation and Development [OECD], 2025). While the gap between research and practice may be narrowing, more work still needs to be done, particularly in the field of evidence synthesis research (Maynard, 2024).

Evidence syntheses, such as systematic reviews with or without meta-analysis, are an important yet understudied area in the field of research on research use, which is surprising given the exponential growth of evidence syntheses in recent decades and the uptick in financial investment in them (Hoffmann et al., 2021; Maynard, 2024). Further, meta-analyses, which statistically synthesize effects across multiple studies, allow estimation and exploration of the sources of variability of effects across contexts, and can offer insights into uncertainties that may arise when individual studies present conflicting results (Yuan & Hunt, 2009). Despite the potential benefits of these findings from meta-analyses, prior studies offer little evidence that they are regularly used in educational decision-making (Slavin, 2020).

To help explain this gap, scholars have posited that meta-analyses fall short in their relevance, applicability, and accessibility to educational audiences, but few studies have systematically mapped these shortcomings onto recent literature (Day et al., 2025a; Maynard, 2024). Detailing how and in what ways evidence synthesis researchers currently approach strategies for making findings relevant, applicable, and accessible can help guide future efforts to better connect research, practice, and policy.

Conceptual Frameworks

This study was guided by frameworks centered on connecting research and educational practice, with a particular focus on making findings from meta-analyses more likely to be used by K–12 educators (Farley-Ripple et al., 2018; Maynard, 2024). Educators’ “use of evidence” has been defined in various ways but is often categorized in the research literature as instrumental, conceptual, tactical, and mandated uses (Tseng, 2012; Weiss, 1977). “Instrumental use” refers to research used directly to inform a decision. One example occurs when educators use research evidence to select which program to fund. “Conceptual use” refers to research that shapes beliefs, understandings, and broader contexts surrounding an issue. Conceptual use is less concrete than other types, as it centers around changing mindsets and understandings of an issue. For example, if educators notice that a subgroup of students is struggling with feeling connected, conceptual use occurs when the educators turn to the research literature to learn more about causes and correlates of school connectedness. “Tactical use” refers to evidence used to support a pre-existing position, such as when an educational decision-maker needs to justify an item in a budget. “Mandated use” refers to research imposed on a decision-making process, as with federal or national mandates for evidence-based programs. We use this definition of use, as a multifaceted construct, in the present study.

Researchers have suggested various reasons that explain the gap between evidence synthesis production and the use of research evidence. Maynard (2024) broadly posits that a lack of relevance and accessibility of findings from meta-analyses drives the gap, particularly in the fields of education, workforce development, and social services. More specifically, Maynard recommends that meta-analysts pay close attention to the “focus, format, and accessibility of evidence” to impact its use and usefulness (2024, p. 12). This includes attention to which questions are of particular interest to stakeholders (i.e., anyone who has a vested interest in using research but is not a traditional producer of research), to how effect sizes are presented, and to what supplemental descriptive information is top priority for the end user. Similarly, Farley-Ripple et al. (2018) describe factors that may drive the gap between research and educational decision-making, including (a) a lack of exchange between researchers and education stakeholders; (b) a mismatch in what qualities or characteristics of a study are prioritized and reported (e.g., internal vs. external validity); and (c) different preferences for approaches to disseminating findings. Taken together, these facets of the research-to-practice gap proposed by both Maynard (2024) and Farley-Ripple et al. (2018) are the focus of the present study: researcher-stakeholder engagement (which may improve research relevance), reporting priorities (which may improve research applicability), and dissemination strategies (which may improve research accessibility). We describe each in turn.

Stakeholder engagement

Educational researchers have long recognized the importance of involving educators in the research process, as exemplified by the growing implementation of research-practice partnerships (Farrell et al., 2021). Far less is known, though, about educational stakeholder engagement in evidence syntheses specifically. Recent literature highlights the importance of involving non-researchers in the systematic review process (e.g., Harris et al., 2016) as an important way to improve review relevance, such as by identifying the evidence needs of the stakeholders and presenting results in a way that is practically useful to educators (Neal et al., 2018).

While a dearth of information exists for the education space, a more robust literature exists in the field of healthcare research. Approaches to involvement have centered around incorporating patients’ and medical professionals’ perspectives into the evidence synthesis process (Cochrane Methods Equity, n.d.; Cottrell et al., 2014; Harris et al., 2016; Khangura et al., 2012; Pollock et al., 2018). Prior research, including the ACTIVE Framework (Authors and Consumers Together Impacting eVidencE), has described involvement in different ways, including iterative feedback throughout the review process, stakeholders offering feedback at one timepoint, or stakeholders involved at the “top and tail” (beginning and end) of the review (Kreis et al., 2013; Pollock et al., 2019). In a 2018 scoping review of the stakeholder engagement literature in healthcare evidence syntheses, for example, researchers identified 291 papers that discussed any aspect of stakeholder involvement in the systematic review process (Pollock et al., 2018). Of these papers, just 30 reported enough information for the authors to code a more detailed description of the stakeholder involvement. Most papers reported involving stakeholders throughout the review process (15 papers) or after the review was completed to help interpret results (10 papers). Findings also showed that most studies included stakeholders as “contributors” who provided views, thoughts, and feedback to indirectly inform the review process (19 papers) or as “influencers” who commented, ranked, or reached consensus to provide data that directly influenced the research process (Pollock et al., 2018).

To explore the benefits of stakeholder engagement, researchers have presented case studies of examples of stakeholder engagement or provided commentaries that reflect personal experiences engaging stakeholders (Keown et al., 2008; Merner et al., 2021). These, too, are largely in the healthcare field. Researchers have found that stakeholder involvement helps patients and the public stay informed, increases confidence in findings because they are validated by non-researchers, ensures transparency and accountability in the research process, helps researchers anticipate controversy on the topic of study, and empowers patients (Agyei-Manu et al., 2023; Cottrell et al., 2014; Merner et al., 2021; Oliver et al., 2015). Understanding when and how stakeholders have been involved in education evidence synthesis research is a critical first step given the dearth of extant research.

Reporting priorities

Even when researchers are not able to directly involve stakeholders in the research process, there are ways to make findings more relevant for stakeholders and applicable to school contexts. These approaches include describing Participants, Interventions, Comparisons, Outcomes, Times, and Settings (PICOTS) characteristics so that educational decision-makers can assess how well the intervention might fit with their local context (Farley-Ripple et al., 2018). Studies on the use of research in educational decision-making have highlighted the importance of “fit” for educators deciding which programs to implement, as educators tend to specifically seek out information on how programs will work in their context (Day et al., 2025b; Fitzgerald & Tipton, 2023; Moullin et al., 2019). Characteristics of fit might include student demographics often reported in meta-analyses, but also intervention characteristics, such as resources at the school (e.g., financial constraints) and staffing considerations (Brown, 2018; Lyon & Bruns, 2019; Richter et al., 2022) that are not usually considered by meta-analysts in describing the primary studies of their reviews. Evidence synthesis scholars have recognized that although there are challenges to analyzing school, student, and program characteristics given insufficient information in primary studies, they are a necessary piece of information for meeting educators’ needs (Maynard, 2024).

This is also connected to the generalizability of intervention effects to the students, schools, and districts decision-makers serve. For decades, experimental research has primarily focused on determining whether an effect exists, often with limited attention to external validity and variations in effect. However, in the social sciences, it is unrealistic to assume that interventions produce uniform effects across different contexts and populations (Littell, 2024). Understanding for whom and under what conditions a program is effective has become a central focus in both experiments and meta-analyses (Jones et al., 2025; Littell, 2024). In the context of experimental studies, for example, Tipton and Olsen (2022) proposed methods for designing experiments that yield results generalizable to the target population. Meta-analyses, which synthesize findings from primary studies conducted in diverse contexts and populations, may lack a clear target population for generalization, especially since intervention effects are often heterogeneous across several PICOTS characteristics. This further complicates the question: To whom do the findings apply? As a result, the primary goal of meta-analysis has shifted from estimating an average treatment effect to understanding the variation in effects and the exploration of potential reasons (Littell, 2024; Pigott & Polanin, 2020; Tipton et al., 2023). Clearly reporting variations in effects has the potential to improve the applicability of findings for decision-makers, given their focus on determining “fit” with their population of interest (Day et al., 2025b).

Dissemination strategies

Prior research has also emphasized the importance of meeting educators’ needs around dissemination to support the accessibility of research to non-research audiences, particularly when presenting advanced statistical estimates and data visualizations (Farley-Ripple et al., 2018; Fitzgerald & Tipton, 2022; Maynard, 2024; Valentine et al., 2015). Studies exploring how non-research audiences interpret effect sizes can offer evidence synthesis researchers concrete tools for better translating their results into accessible formats for educators (Carrasco-Labra et al., 2016; Fitzgerald & Tipton, 2022; Peters et al., 2007; Valentine et al., 2015). For example, in a study of teachers in the United Kingdom, researchers measured teachers’ ratings of perceived informativeness and effectiveness of different effect sizes, finding that months of progress, threshold scores, and test scores were the most effective in maximizing stakeholder engagement (Lortie-Forgues et al., 2021). In contrast, Baird and Pane (2019), who analyzed the strengths and weaknesses of various metrics, identified years of progress as the least effective measure due to its undesirable properties, while percentile gains performed the best. Other studies, focused specifically on data visualizations for meta-analytic effects, also found that presentations of findings were related to educators’ interpretations of effects; compared to other data visualizations, the Meta-Analytic RainCloud Plot was more effective in helping educators correctly interpret meta-analytic evidence (Fitzgerald & Tipton, 2022; Fitzgerald et al., 2025). Across these studies, findings suggest that what might be the “status quo” for reporting findings (e.g., with standardized mean differences and forest plots) may fall short in providing educators with information they can understand.

Lastly, studies on the use of research evidence have noted the importance of making findings clear and actionable for stakeholders to promote the accessibility of evidence throughout the decision-making process. This may be especially important in the field of education, given the tight timelines under which educational decision-makers operate, and the vast amount of research on intervention and program effectiveness (Chu et al., 2023). To better meet the needs of educators, the onus is on researchers to be sure that the “message sent” is the “message received” (Fitzgerald & Tipton, 2023, p. 8). To do so, researchers can clearly signal the implications of their findings for non-research audiences, including descriptions around the uncertainty of findings or suggested ways for findings to be used (Fitzgerald & Tipton, 2023).

The Current Study

This study is part of a larger project that aims to evaluate the methodological quality of education intervention meta-analyses focused on K–12 student achievement (Pellegrini et al., 2025a). In this paper we focus on how meta-analysts both directly (via stakeholder engagement) and indirectly (via data visualization and reporting of study characteristics and findings) incorporate strategies for making findings useful for non-research audiences. Specifically, we sought to answer the research question: To what extent and in which ways have education meta-analysts used strategies that have the potential to improve the relevance, applicability, and accessibility of meta-analytic findings? We specifically focused on the strategies of (a) engaging educational stakeholders in the research process, (b) reporting study and intervention characteristics that increase the applicability of findings for educators, and (c) presenting findings in ways that make them more accessible to non-research audiences.

Given the large number of recent meta-analyses in education, we needed to narrow our scope. We focused our study on education meta-analyses evaluating the impact of educational interventions on student academic achievement. Intervention meta-analyses may be more likely than correlational meta-analyses to clearly signal actionable insights into interventions that can be directly applied in practice. We focused on academic achievement because it is the most frequently assessed outcome in school-based interventions.

Methods

We pre-registered the protocol to conduct this meta-review in the Nordic Journal of Systematic Reviews in Education (Pellegrini et al., 2024).

Inclusion Criteria

Meta-analyses were included if they met the following criteria:

The meta-analysis includes general populations of students in K–12. We include pre-K or post-secondary only when other K–12 grades are included. We exclude meta-analyses solely focused on special education populations (including learning disabilities).

The meta-analysis focuses solely on school-based academic interventions. We exclude interventions that may happen in school but are not directly related to learning an academic subject, such as health interventions, after-school programs, physical activities, school structure, and social-emotional interventions. We include motivation interventions if they are focused on academic achievement.

The meta-analysis reports a summary effect size for student academic achievement. We exclude other education-related outcomes, such as socio-emotional skills, attendance, dropout rates, computational thinking, and teacher outcomes.

The meta-analysis includes studies using group designs (i.e., randomized controlled trials, quasi-experimental designs). We exclude meta-analyses of correlational, single-group pre-post, and single-subject designs, as well as meta-analyses that combine different designs if the analyses (i.e., average effect size and model for heterogeneity) are not conducted separately for studies with group designs. We exclude these other design types because we are most interested in meta-analyses that include study designs that can best support a causal inference about the effectiveness of the academic intervention.

The meta-analysis is published in English between January 2021 and September 2023. We were interested in the recent reviews since the interest in research use has grown in recent years amidst the onset of the COVID-19 pandemic and its disruption of education systems around the world (United Nations, 2020).

The meta-analysis is published in a peer-reviewed journal. We restrict our search to peer-reviewed papers, excluding gray literature, for two reasons. First, peer-reviewed syntheses have already passed an expert evaluation of their quality. Second, some unpublished syntheses (e.g., conference papers) may not report full method sections. For a similar reason of equitable comparison, we exclude dissertations and reports because they are typically longer than journal articles.

Search Strategy and Information Sources

As described in the protocol, we identified reviews for the current meta-review using three search strategies. First, we searched databases including Academic Search Ultimate, APA PsycINFO, ERIC, Teacher Reference Center via EBSCOhost, Social Sciences Citation Index, and Science Direct. We used the search strings decided in advance in the protocol (Pellegrini et al., 2024), including keywords related to meta-analysis, intervention study, and participants, and adapted them as needed depending on the database. The publication year was limited to 2011 through 2023 because the search was conducted for the larger project on the methodological quality of meta-analyses. Second, we hand searched the tables of contents of journals devoted to research syntheses or impact evaluation using Paperfetcher (Pallath & Zhang, 2023): Review of Educational Research, Educational Research Review, Review of Research in Education, Campbell Systematic Reviews, and Journal of Research on Educational Effectiveness. Third, we screened the reference list of all meta-analyses included in previous meta-reviews (see Pellegrini et al., 2024) with the aim of analyzing the quality of methodological features. Compared to the list provided in the protocol, we added Nordström et al. (2023), which was found later in the process.

Selection Process

A two-stage process was used for selecting relevant reviews. First, the title and abstract of the located reviews were single screened and only records that were meta-analyses in K–12 education were retained for full-text review. Next, the full text of the retained reviews was reviewed against the inclusion criteria. Reviews were screened by two independent reviewers to avoid the exclusion of potentially eligible reviews. The team met regularly to jointly resolve conflicts, with the reviewers involved in the conflicts and at least a third experienced reviewer taking part. The initial inter-coder agreement was 81%, reaching 100% after discussing the conflicts. The selection process was carried out using Covidence (https://www.covidence.org/), an online platform for reviews that supports a systematic and transparent process. Before the two stages, guidelines for selection were provided to the review team, and they were updated continually during the process. Training was conducted with review team members to practice screening on a weekly basis. Guidelines for the selection process alongside the list of excluded reviews with reasons are accessible at Pellegrini et al. (2025b).

Data Extraction

Coding for study characteristics

A draft codebook was developed and published in the pre-registered protocol. We specifically extracted information to assess the extent to which authors (a) explicitly engaged stakeholders in the research process (two items), (b) reported study characteristics and findings that support the application of the interventions in similar contexts (14 items), and (c) used dissemination strategies with potential to make the findings accessible to stakeholders (six items). In developing our codebook for Section B, we used the PICOTS framework to code which characteristics of participants, interventions, comparisons, outcomes, times, and settings were described by the authors and were tested as potential moderators of the effect. Thus, the same PICOTS elements were coded two times. The first one focuses on the characteristics of primary studies described at the beginning of the results section. This may include the type of population involved in the included studies or the duration of the intervention under evaluation. We then coded whether the same elements were tested as potential moderators of the effects. We coded characteristics and moderators separately, because the primary study characteristics provide a sample representation and contextual information about the studies that can help stakeholders make informed decisions, and moderator analysis gives information about the differential effects of the intervention. We also coded if the review considered the sampling method used in the included primary studies.

For Section C we coded whether the authors provided data visualizations and effect size transformations to support stakeholders’ understanding of findings (e.g., Baird & Pane, 2019; Fitzgerald & Tipton, 2023), as well as open-text coding of implications sections (described in more detail below). Data visualizations included the type of tables used for reporting primary studies’ characteristics and graphical visualizations. We distinguished between a detailed table, which reports the characteristics for each primary study, and an aggregated table reporting the overall characteristics of the primary studies. We also considered whether the paper explicitly mentioned how the findings could be used by educators or policymakers (e.g., implications for practice).

Coders (ED, HS, MP) pilot-tested the draft codebook on 10 reviews and revised it as necessary. This step was also used to align coders and reach a high level of agreement. After reaching 95% agreement on average, ED and HS single coded the reviews and MP double coded 25% of the reviews. For the double-coded reviews we calculated an average agreement of 93% (range: 72%–100%). Disagreements were reconciled by the coder who was not involved in them. Data extraction was conducted in MetaReviewer (Polanin et al., 2023), free software for data extraction in reviews. A complete list of items coded with agreement rate, the codebook, and the dataset are available at Pellegrini et al. (2025b).
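To illustrate how an agreement rate of this kind can be computed, the sketch below assumes simple percent agreement, that is, the share of double-coded reviews on which two coders assigned the same code to an item. The source does not state the exact formula used, and the function name and the example codes are hypothetical.

```python
def percent_agreement(coder_a, coder_b):
    """Percentage of items on which two coders assigned the same code.

    Assumes simple percent agreement (no chance correction).
    """
    if len(coder_a) != len(coder_b):
        raise ValueError("Both coders must code the same set of reviews")
    if not coder_a:
        raise ValueError("At least one coded review is required")
    matches = sum(a == b for a, b in zip(coder_a, coder_b))
    return 100.0 * matches / len(coder_a)


# Hypothetical codes for one codebook item across five double-coded reviews
coder_1 = ["yes", "no", "yes", "yes", "no"]
coder_2 = ["yes", "no", "no", "yes", "no"]

# The coders match on 4 of the 5 reviews
item_agreement = percent_agreement(coder_1, coder_2)
print(f"Agreement on this item: {item_agreement:.0f}%")
```

Averaging such item-level values across all coded items would give an overall agreement rate, and the minimum and maximum item-level values would give a range.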

Coding open-text responses

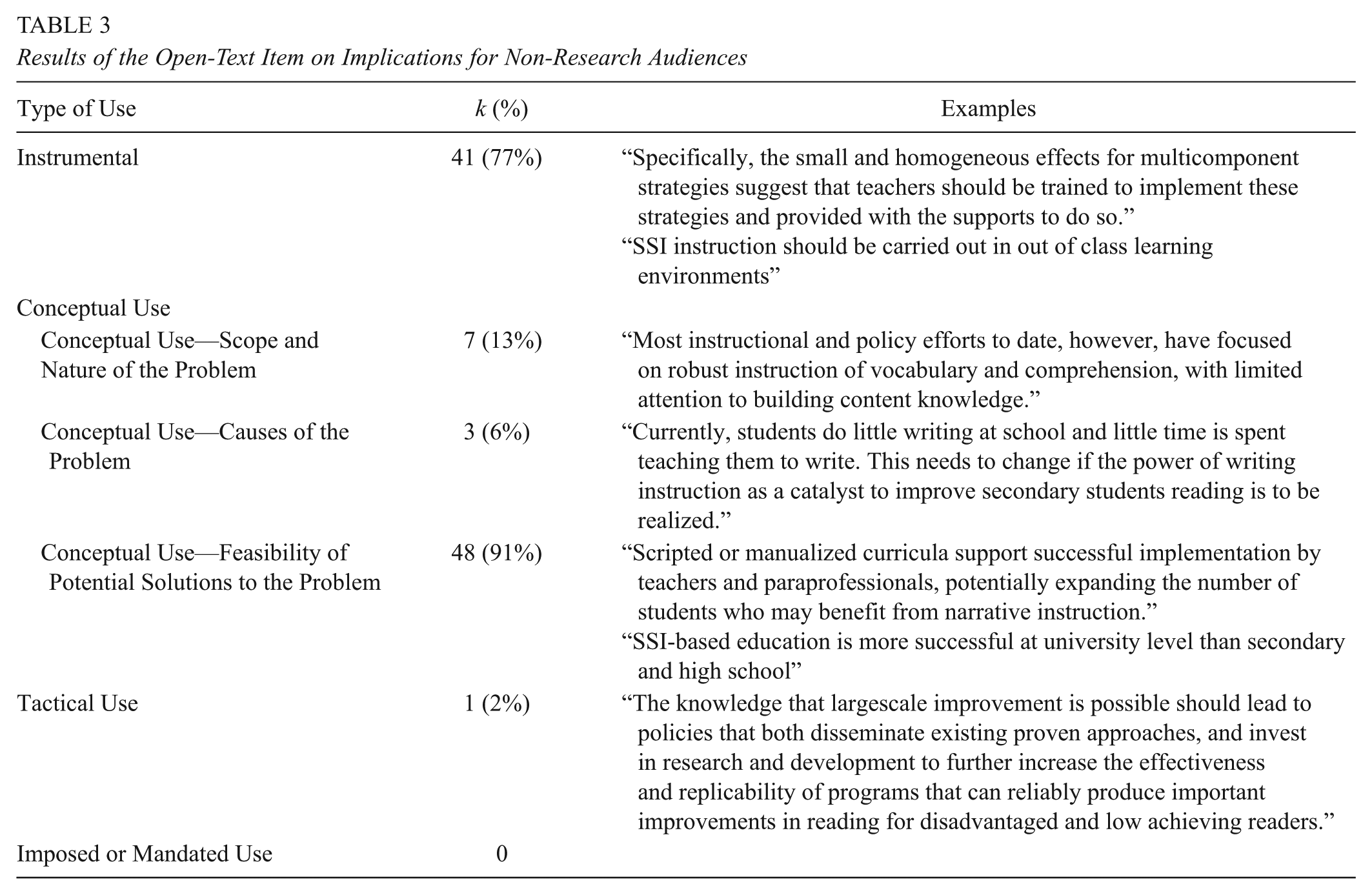

Once the initial round of coding was complete, a second round of coding involved analyzing the open text responses for reviews that included implications for non-research audiences. Codes for this process were adapted from an established coding tool for assessing use of research evidence in four categories: instrumental use, conceptual use, mandated use, and tactical use (Yanovitzky & Weber, 2020). Conceptual use involved three sub-categories: understanding the scope and nature of the problem, understanding causes of the problem, and understanding the feasibility of the solutions (Table 1). Each category was coded as 1 if the text included that category and 0 if not. Coders also noted the target audience (e.g., teachers, school administrators) and the format in which implications were presented (e.g., explicit paragraph with implications or not). Coders (ED, HS, MP) pilot-tested the codebook on eight reviews and revised it as necessary. Then, all reviews were double-coded, with an average agreement rate of 95% across all codes. Disagreements were reconciled by the coder who was not involved in them.

Table 1. Coding Descriptors for the Open Text Question

Data Analysis

We analyzed results by providing descriptive statistics for the characteristics coded. All items were categorical characteristics for which we calculated the frequency and percentage across the options. If a review used multiple options, we counted the frequency for each option. Thus, the percentages for some characteristics sum to more than 100%. We examined whether reviews published in journals devoted to research syntheses or impact evaluations (i.e., Review of Educational Research, Educational Research Review, Review of Research in Education, Campbell Systematic Reviews, and Journal of Research on Educational Effectiveness) included more elements related to the significance of research use than other content-related journals. Descriptive analyses, figures, and tables were produced using R Statistical Software (Version 4.4.2; R Core Team, 2024).
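The counting rule for items that allow multiple options can be sketched as follows. This is an illustrative Python example rather than the R code used for the analyses, and the function name and data are hypothetical. Each review contributes at most once to each option it used, and percentages are taken over the number of reviews, which is why they can sum to more than 100%.

```python
from collections import Counter


def option_percentages(reviews):
    """Return {option: (frequency, percentage of reviews)} for a multi-option item."""
    n_reviews = len(reviews)
    if n_reviews == 0:
        raise ValueError("At least one review is required")
    # set() ensures a review is counted once per option even if it is listed twice
    counts = Counter(option for options in reviews for option in set(options))
    return {opt: (k, 100.0 * k / n_reviews) for opt, k in counts.items()}


# Hypothetical coding of table types for four reviews
reviews = [
    ["detailed"],
    ["detailed", "aggregated"],
    ["aggregated"],
    ["detailed"],
]

summary = option_percentages(reviews)
for option, (k, pct) in sorted(summary.items()):
    print(f"{option}: k = {k}, {pct:.0f}%")

# Percentages are over reviews, not over codes, so they need not sum to 100
total_pct = sum(pct for _, pct in summary.values())
```

In this hypothetical example, one review uses both table types, so the option-level percentages add up to more than 100%.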

Results

We identified 2,003 potentially relevant records from database searches and 291 from handsearching and retrospective citation chasing. After removing 637 duplicates, titles and abstracts of 1,657 records were screened. We retained and independently reviewed 486 records in full text. We excluded 239 reviews for different reasons, with the most common ones being ineligible outcomes (k = 84), intervention (k = 79), research design (such as a narrative review; k = 54), and student population (k = 20). A total of 247 were included in the larger project, but many were excluded from this review because they were published before 2021. Finally, 103 reviews were included in this review (see the list of included and excluded reviews at Pellegrini et al., 2025b). Figure 1 shows a PRISMA flowchart documenting the search and selection process.

Figure 1. Selection Process.

Across the included reviews, 30 (29%) were published in 2021, 45 (44%) in 2022, and 28 (27%) in 2023. Most reviews were published in journals that were not devoted to research syntheses (k = 83, 81%).

Stakeholder Engagement in the Review Process

Most of the reviews did not mention the involvement of stakeholders in the review process (83%). Among the reviews reporting the stage of stakeholder involvement in the text, two reviews consulted them at the initial stage of the review (i.e., research design), three reviews in data collection, and two reviews in either data analysis or interpretation of results. In 14 reviews, stakeholders were listed as authors and identified by their institutional affiliation (e.g., a school district), with no other indication of their involvement. There was slight variation across journal types, with a higher percentage of reviews including stakeholders in non-specialized journals (19%) than in specialized journals (10%).

Reporting Study Characteristics

Table 2 provides an overview of reported study characteristics and potential tested moderators. No studies reported more than 59% of the information included in our coding scheme, with the majority reporting 36% or less. Most of the meta-analyses (81%) reported grade levels included in primary studies but few reported other participant characteristics, such as gender (13%), ethnicity (13%), and socioeconomic status (16%). Reviews reported the types of interventions (62%) and the academic subject or topic of the interventions (52%) of the primary studies, but none reported the cost of materials or teacher training. In all, 17% and 22% of the reviews provided no information about participants and intervention, respectively.

Table 2. Study Characteristics and Potential Moderators Included in the Reviews

Additionally, 29% of the meta-analyses reported the nature of the comparison groups included in the primary studies and 20% had inclusion criteria that specified only one type of comparison group could be included in the meta-analysis (e.g., “business as usual”). Half of the reviews (51%) did not report the nature of the comparison groups. Nearly half (42%) of the included meta-analyses did not report characteristics related to primary study outcomes. This could be due to only including a particular type of outcome as specified in their inclusion criteria (e.g., only mathematics outcomes or only standardized test outcomes). Most meta-analyses (57%) reported the duration of the interventions, but few reported the intensity (23%), timing of the outcome measure (10%), or intensity of teacher training (4%). One-third (34%) of the reviews reported the countries where the studies were conducted. Several meta-analyses only included primary studies from one country (e.g., only the United States or only Turkey). Few reviews reported other setting characteristics, such as urbanicity (4%), and none reported whether schools were public or private. In all, 39% and 60% of the reviews did not report any characteristics of time and setting, respectively.

Testing potential moderators

Moving to the moderator analysis based on PICOTS framework, most reviews included grade level as a potential moderator (73%) and a few included at-risk status (13%). Very few included other participant characteristics including gender (5%), special education needs (9%), ethnicity (7%), and socioeconomic status (9%). Many meta-analyses (44%) reported the subject or topic of the intervention as a potential moderator. Some (23%) meta-analyses included the instructional setting of the primary study, and 19% included the interventionist as potential moderators. Additionally, none included the cost of materials or teacher training as a potential moderator.

Most reviews (81%) did not include the comparison group type as a potential moderator, including those that had inclusion criteria related to a specific type of comparison group. Nearly half (49%) of reviews did not include any outcome characteristics as potential moderators. One-fourth (25%) reported the type of measure (standardized, researcher developed, etc.) and 35% reported the domain of the outcome as potential moderators. Many of the included meta-analyses (45%) tested the intervention duration as a potential moderator and 13% tested the intervention intensity. Only one meta-analysis included the teacher training intensity as a potential moderator. Few reviews included setting characteristics as potential moderators, such as country (17%) or urbanicity (2%).

Overall, most reviews reported many characteristics of the primary studies but did not include them all as potential moderators, possibly due to power limitations. For example, 81% of reviews reported descriptive characteristics of the grade levels included in primary studies and 73% of those included grade level as a potential moderator in the analysis. Similarly, 28% of reviews reported the outcome type of the primary studies and nearly all (25%) included this as a potential moderator. In contrast, 34% of reviews reported the country or region of the primary studies, but only 17% included location as a potential moderator (Table 2).

Interestingly, several meta-analyses reported the results for the moderator analysis without describing the characteristics of primary studies. For example, 64% tested intervention type as a potential moderator but only 62% reported the intervention type as one of the characteristics mentioned earlier in the description of the primary included studies. Finally, very few (7%) reviews in our analysis reported the sampling method used in the primary study design.

Dissemination Strategies

Effect size transformations

Only six reviews provided Cohen’s U3, the percentage of participants in the treatment group who scored above the control group’s mean. No other effect size transformations (i.e., CLE, Intent to Treat, Years of Learning, Benchmarking, Percentiles, Thresholds) were used.
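As an illustration of how such a transformation works, the sketch below converts a standardized mean difference into Cohen’s U3 and into a percentile gain. It is a minimal example, not the procedure used by any of the included reviews: it assumes normally distributed outcomes with equal variances in both groups, and the function names `cohens_u3` and `percentile_gain` are hypothetical.

```python
from statistics import NormalDist


def cohens_u3(d: float) -> float:
    """Share of the treatment group scoring above the control-group mean.

    Assumes normal outcomes with equal variances in both groups,
    in which case U3 is the standard normal CDF evaluated at d.
    """
    return NormalDist().cdf(d)


def percentile_gain(d: float) -> float:
    """Percentile-point change for a student starting at the control-group median."""
    return 100 * (cohens_u3(d) - 0.5)


# Illustrative effect sizes only; these are not values from the reviewed studies.
for d in (0.10, 0.25, 0.50):
    print(f"d = {d:.2f}: U3 = {cohens_u3(d):.1%}, "
          f"percentile gain = {percentile_gain(d):+.1f} points")
```

Under these assumptions, an effect size of 0.25 corresponds to a U3 of roughly 60%, meaning that about 60% of treated students would score above the control-group mean, compared with 50% if the intervention had no effect.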

Data visualizations

Most reviews (59%) reported a detailed table that shows in rows the reference of each primary study and in columns selected study characteristics. Some reviews (14%) reported an aggregated table showing the overall characteristics of the primary studies. Very few (7%) reported both types of tables, and 20% did not include a table. Reviews published in specialized journals more frequently published aggregated tables (30%) than those published in non-specialized journals. Additionally, reviews in specialized journals more frequently published tables at all (95%), while studies in non-specialized journals included tables less frequently (76%).

More than half of the reviews (56%) reported a forest plot that displays estimates of the effect sizes from the included studies along with their confidence intervals. Five reviews included a bar plot, box plot, or scatterplot for representing the relationships between specific variables. The review by Williams et al. (2022) used a graph to display the average effect sizes for the three intervention categories coded and the distributions of the studies, including the link to an interactive Shiny app. No reviews reported newly developed types of plots, such as Rainforest Plots and the Meta-Analytic Rain Cloud Plot (Fitzgerald & Tipton, 2022). In addition, 39% of the reviews did not provide any visualizations at all.

Study implications for stakeholder audiences

Out of 103 reviews, 53 included text addressing implications for non-research audiences, with 38 describing these in a dedicated section. In three reviews, the recommendations were presented as lists. Inclusion of this type of text varied slightly across journal types: more reviews published in specialized journals (65%) included this type of text while only 51% of the reviews published in other journals did.

By analyzing the open-text responses using the framework adapted from Yanovitsky and Weber (2020), we found that most reviews reported information related to the instrumental use (k = 41; 77%) and the feasibility of potential solutions to the problem (k = 48; 91%). The other categories—Scope and nature of the problem (k = 7; 13%), Causes of the problem (k = 3; 6%), Tactical use (k = 1; 2%)—were mentioned by a few reviews, with Imposed or Mandated use never mentioned. In most reviews (k = 42; 79%) the target audience was teachers. Table 3 shows the results of the analysis with examples of sentences from the included papers.

Table 3. Results of the Open-Text Item on Implications for Non-Research Audiences

Figure 2 provides a visual representation of the results. The heat map shows how the study characteristics (participants, intervention, and setting) and the strategies to make the findings accessible to stakeholders (i.e., use of visualizations, suggested use) have been reported in the studies. Figure 2 includes the studies that reported more items than the overall average across all studies in the review. Overall, studies that included more characteristics also addressed implications for practice and suggested uses. Interestingly, of the four studies that effectively described the interventions, settings, and participants by reporting 59% of the items, only two discussed the implications for practice, and only one provided a visualization.

Figure 2. Heat Map of the Reported Study Characteristics and Strategies to Make the Findings Accessible to Stakeholders

Discussion

This paper examined to what extent strategies to make findings more relevant, applicable, and accessible for non-research audiences have been integrated in education meta-analyses. We conducted a meta-review including 103 recent meta-analyses on K–12 education interventions and focused on: (a) engaging educational stakeholders; (b) reporting comprehensive study and intervention characteristics; and (c) disseminating findings using data visualizations, transformed effect sizes, and clear suggestions for practice. In what follows, we briefly summarize the most relevant results on the three strategies, and we propose possible approaches and related challenges to foster the integration of these strategies in meta-analysis practice.

Stakeholder Engagement Is Not Implemented

Very few reviews included stakeholders, and those that did were often unclear about the specific roles that stakeholders played. Engaging stakeholders at various points supports research relevance and accessibility in several ways. Including stakeholders in the design and question development stages helps ensure the work is useful for educators and decision-makers, while including them in later stages supports communication and dissemination efforts (Neal et al., 2018; Pollock et al., 2019). Only two reviews reported inclusion of stakeholders at each of these stages.

Some Study Characteristics Are Always Missing

As for reporting priorities, meta-analyses often failed to report details of the samples used in primary studies. While some characteristics were commonly coded (e.g., grade level, intervention type, subject area), others were rarely or never considered despite their importance for increasing the applicability of findings for decision-making (e.g., cost for materials, cost for teacher training, teacher training intensity, urbanicity; see Day et al., 2025b). Without these key study characteristics, decision-makers may be limited in understanding how results can apply to their own contexts, which may substantially decrease the accessibility of findings.

Dissemination Strategies May Make Findings Inaccessible to Non-Research Audiences

Most reviews included tables and visuals, although not necessarily in ways that are easily accessible to a broad audience. To show descriptive study information (e.g., grade level, intervention characteristics) reviews often included a detailed table, organized by primary study, which makes it difficult to extrapolate commonalities or differences across studies. Aggregate tables, which show the number of studies/effect sizes for each descriptive information, may support the generalizability of the results by making it easier to see the big picture of the school contexts included within the review (Alexander, 2020; Pigott & Polanin, 2020).
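To make the difference between the two table types concrete, the following sketch collapses a detailed, study-by-study table into an aggregate table of counts and percentages. The study rows, the field names `grade_band` and `comparison`, and the helper `aggregate` are all hypothetical and serve only to illustrate the idea.

```python
from collections import Counter

# Hypothetical detailed table: one row per primary study (all values are made up).
studies = [
    {"study": "Study A", "grade_band": "elementary", "comparison": "business as usual"},
    {"study": "Study B", "grade_band": "elementary", "comparison": "alternative program"},
    {"study": "Study C", "grade_band": "middle", "comparison": "business as usual"},
    {"study": "Study D", "grade_band": "high", "comparison": None},  # not reported
]


def aggregate(rows, characteristic):
    """Count studies per category of one characteristic.

    Missing values are kept as an explicit 'not reported' category so that
    gaps in primary-study reporting stay visible in the aggregate table.
    """
    counts = Counter(row[characteristic] or "not reported" for row in rows)
    total = len(rows)
    return [(category, k, round(100 * k / total))
            for category, k in counts.most_common()]


for characteristic in ("grade_band", "comparison"):
    print(characteristic)
    for category, k, pct in aggregate(studies, characteristic):
        print(f"  {category:<20} k = {k}  ({pct}%)")
```

Keeping “not reported” as its own row is a design choice made for this sketch: it lets readers see at a glance which contexts are represented in the evidence base and where information is missing.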

Further, forest plots were by far the most common data visualization despite evidence that they are difficult for non-researchers to understand (Fitzgerald & Tipton, 2022). Additionally, very few reviews transformed effect sizes into alternative measures (such as months of progress, threshold scores, percentile gains, and Cohen’s U3) that are typically more informative for a non-research audience when looking at the impact of an intervention (Baird & Pane, 2019; Lortie-Forgues et al., 2021). Lastly, our results showed that only half of the reviews discussed the implications for non-research audiences, with about one-third reporting them in a dedicated section. Most reviews addressed conceptual use of results, and specifically the feasibility of solutions, within their implications. This is helpful as it addresses how educators would likely use the information in a conceptual way; as Farrell and Coburn (2016) explain, conceptual reporting of results helps decision-makers see new ways of thinking and offers frameworks to change the way they think about policy. Claims about the feasibility of solutions can be bolstered by thorough testing of moderators to explore the various contexts within which the results apply (Tipton et al., 2019).

Not surprisingly, many reviews included text related to the instrumental use of results. This was expected given that the included reviews looked at education interventions and there is a clear connection between the intervention and a policy or use decision (e.g., adopting a program or not). However, without the information needed to inform decision-making, it is unclear how useful the recommendations would be; while the authors make claims that the interventions are effective, it would be difficult for educators to know whether an intervention is a good fit (a critical factor that educators consider) without information on the population, resource needs, and intensity (March et al., 2022).

Challenges and future directions

The findings of our meta-review highlight three key points: engaging stakeholders as a way to increase the relevance of the evidence produced for practice; reporting study characteristics in ways that may increase the applicability of review results; and disseminating findings in ways that may increase the accessibility of findings, including translating effect sizes, presenting data visualizations, and offering clear implications for practice that show both the intervention’s impact and the associated uncertainty.

Improving the Relevance of Evidence

Engaging with stakeholders has the potential to enhance the relevance of findings and ease of understanding for non-research audiences. Further research is needed to determine the optimal stages for stakeholder involvement as they relate to researchers’ intended purposes, particularly in fields outside of healthcare (Merner et al., 2021). For example, involving stakeholders in formulating the research questions could increase the relevance of the research by addressing practical challenges faced by educators in their everyday practice. Similarly, engaging stakeholders in identifying key characteristics to examine as moderators of the effect could help provide clearer guidance on when, how, and with whom an intervention is most effective (Tanner-Smith & Grant, 2018). Involving stakeholders in the selection of visualizations and effect size metrics could improve the accessibility and usability of the results. While current research is limited on the best stages to include stakeholders, there are some resources that provide guidance (Cochrane Methods Equity, n.d.; Pollock et al., 2022).

Improving the Applicability of Evidence

Educators and decision-makers need to be able to see how well the results of the review might “fit” within their own context (Fitzgerald & Tipton, 2023; Moullin et al., 2019). In our meta-review, we examined the extent to which descriptive PICOTS characteristics are reported and whether potential moderators of the effects are explored. There are two main reasons why certain intervention and contextual features are often not reported in reviews: either they are not coded by meta-analysts or the information was unavailable in primary studies. When possible, we suggest coding a broader range of characteristics related to contexts and samples to provide a clear representation of the conditions under which an intervention is likely to be effective (Alexander, 2020). Educators also base their decisions on factors such as the cost of intervention materials, training requirements, and the duration of the intervention—often underreported in meta-analyses. This information is essential for educators to understand if a program aligns with their goals (Day et al., 2025b). To enhance reporting, stakeholders could be involved in selecting which features to extract from primary studies. Frameworks like PICOTS and previous reviews on similar topics could serve as valuable guides for determining what to code. In terms of primary studies, further research is needed to develop frameworks for reporting the most relevant items for stakeholders in education impact evaluations (Hill et al., 2025). This would, in turn, promote more comprehensive reporting at the meta-analysis level.

To understand for whom and in which conditions an intervention is effective, meta-analyses should provide information on the variation in effects and explore the moderators that can explain this variation (Pigott & Polanin, 2020; Tipton et al., 2023). Although we examined which categories of characteristics were more common in explaining heterogeneity, in our meta-review we did not examine whether the tested moderators were driven by a theory of how the intervention should work in which context. Meta-analysts are encouraged to preregister a review protocol in order to justify the rationale for selecting the moderators to test (Pigott & Polanin, 2020).
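As a rough illustration of what reporting variation and exploring a moderator can involve, the sketch below computes a random-effects summary with common heterogeneity statistics (Q, tau-squared, and I-squared) and then repeats the calculation within levels of one categorical moderator. It uses the DerSimonian-Laird estimator as one common choice, not as the method of any included review; the data are invented, and the function name `random_effects` is hypothetical. A full moderator analysis would typically rely on meta-regression in dedicated software.

```python
import math


def random_effects(effects, variances):
    """DerSimonian-Laird random-effects summary for two or more effect sizes."""
    if len(effects) < 2:
        raise ValueError("at least two effect sizes are needed")
    w = [1 / v for v in variances]  # inverse-variance (fixed-effect) weights
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sum(w)
    # Cochran's Q: weighted squared deviations from the fixed-effect mean
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))
    df = len(effects) - 1
    c = sum(w) - sum(wi ** 2 for wi in w) / sum(w)
    tau2 = max(0.0, (q - df) / c)  # between-study variance, truncated at zero
    w_star = [1 / (v + tau2) for v in variances]  # random-effects weights
    mean = sum(wi * yi for wi, yi in zip(w_star, effects)) / sum(w_star)
    se = math.sqrt(1 / sum(w_star))
    i2 = max(0.0, (q - df) / q) if q > 0 else 0.0  # share of variation beyond chance
    return {"mean": mean, "se": se, "tau2": tau2, "Q": q, "I2": i2}


# Hypothetical data: (effect size, sampling variance, grade band)
data = [
    (0.45, 0.02, "elementary"), (0.38, 0.03, "elementary"), (0.52, 0.04, "elementary"),
    (0.10, 0.02, "secondary"), (0.05, 0.03, "secondary"), (0.18, 0.05, "secondary"),
]

overall = random_effects([d[0] for d in data], [d[1] for d in data])
print(f"overall: mean = {overall['mean']:.2f}, I2 = {overall['I2']:.0%}")

# Subgroup summaries by the moderator, so readers can see for whom effects differ
for level in sorted({d[2] for d in data}):
    subset = [d for d in data if d[2] == level]
    res = random_effects([d[0] for d in subset], [d[1] for d in subset])
    print(f"{level}: mean = {res['mean']:.2f} (SE = {res['se']:.2f})")
```

Reporting the subgroup means alongside the overall heterogeneity statistics, rather than the pooled average alone, is what lets a reader judge whether an effect is likely to hold in a context like their own.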

In discussing the results and implications for practice, we suggest that meta-analysts make clear the level of uncertainty of the findings and the factors that may influence an intervention’s effectiveness in specific settings. This approach ensures that educators and decision-makers can make informed decisions, understanding both the potential benefits and limitations of the intervention in their specific context.

Improving the Accessibility of Evidence

To better meet the needs of educators, researchers must ensure that the “message sent” aligns with the “message received” (Fitzgerald & Tipton, 2023, p. 8). We recommend using effect size transformations and visualizations that are more easily interpreted by stakeholders and including a dedicated section on the implications for practice that offers clear, structured recommendations (Pigott & Polanin, 2020). Previous studies have examined the strengths and limitations of effect size transformations, as well as stakeholders’ perceptions (e.g., Baird & Pane, 2019; Lortie-Forgues et al., 2021). However, despite this existing research, a critical gap remains in identifying which effect size measure, among the available options or potential new ones, best balances ease of understanding, teacher engagement, and the establishment of realistic expectations about intervention effectiveness, while also maintaining strong methodological rigor.

Research by Fitzgerald and Tipton (2022) has shown that certain forms of visualizing meta-analysis results are more effective than others. Based on their results, we recommend moving away from the traditional forest plot and adopting newer alternatives, such as the Meta-Analytic Rain Cloud Plot (Fitzgerald et al., 2025). It is essential to communicate that, in addition to the average effect, considerable variability exists, influenced by factors such as the intervention, the context, and the methodological quality of the studies. In addition, aggregate tables are valuable for providing an overview of the contexts and populations studied, offering a broader perspective (Alexander, 2020). We also advocate for sharing the full data, as this allows stakeholders to explore specific features of interest and researchers to re-use the data. This approach is consistent with the open science movement, which aims to enhance transparency and reproducibility of research (Grant et al., 2023). An additional strategy we suggest that meta-analysts use is disseminating the results through other outlets, such as journals and conferences with high participation from educators and policymakers, as well as through informal communications with schools and districts (Penuel et al., 2017).

Limitations of this review

We focused this review on meta-analyses that examined the effectiveness of interventions for improving academic achievement. We acknowledge that this sample of meta-analyses is not representative of other education meta-analyses, which are valuable sources to examine. These include reviews addressing non-academic outcomes, different types of interventions, or a wider range of study designs and questions. Additionally, we did not contact the authors of the meta-analyses to determine whether they engaged with stakeholders at any stage of their review. While stakeholder engagement has gained increasing attention in research, it is not required information in education journals. As a result, some authors may have involved stakeholders without explicitly documenting it. We also recognize that the original intent of the included meta-analyses may not have been to communicate findings to non-research audiences. The absence of certain information relevant to educators and policymakers may reflect the authors’ research goals rather than a lack of consideration for these audiences.

Conclusions

Education meta-analyses often remain inaccessible to stakeholders due to their technical and language complexity. As meta-analyses are primarily intended to inform the decisions of educators and policymakers, it is essential to find effective ways to make review results relevant, applicable, and accessible for stakeholders. Our meta-review shows that key strategies that likely increase the relevance, applicability, and accessibility of research to practitioners are not yet widely adopted. With this paper, we aim to encourage meta-analysts to consider those strategies when designing, conducting, and reporting education meta-analyses. Stakeholders’ involvement at various review stages can ensure that the research addresses real-world concerns and needs; presenting effect sizes and data visualizations may further increase both the relevance and applicability of findings. The “Implications for Practice” section in a meta-analysis paper is an ideal space to provide stakeholders with information and visuals on the variation in the effect and the level of uncertainty in the findings to make clear the conditions and context in which the intervention is expected to have a certain effect. Since stakeholders rarely read academic papers, we encourage the dissemination of findings beyond academic journals through outlets that reach educators and policymakers.

Footnotes

Declaration of Conflicting Interests

The authors declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: Marta Pellegrini and Therese Pigott are authors of three reviews included in our meta-review, and as such they were not involved in the screening and coding of these three studies.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was partially supported by the Young Researchers Mobility Program 2023 [Programma Mobilità Giovani Ricercatori (MGR)] of the University of Cagliari.

Authors

MARTA PELLEGRINI is an associate professor at the Department of Education, Psychology, Philosophy, University of Cagliari, Via Is Mirrionis 1, Cagliari 09127, Italy.

ELIZABETH DAY is a research assistant professor at the HEDCO Institute for Evidence-Based Educational Practice, University of Oregon, 6247 University of Oregon, Eugene, OR 97403.

HANNAH F. SCARBROUGH is a PhD candidate in education policy studies and a graduate research assistant in the Educational Policy Studies department in the College of Education and Human Development, Georgia State University, 30 Pryor St. SW, Suite 450, Atlanta, GA 30303.

THERESE D. PIGOTT is a distinguished university professor in the College of Education and Human Development, Georgia State University, 30 Pryor St. SW, Suite 300, Atlanta, GA 30303.