Abstract

Though scores of teacher education programs use mixed reality simulations as platforms for preservice teacher candidates’ (“candidates”) low-stakes practice, we know little about how customizable features of these simulations shape candidates’ perceptions. To build such evidence, we analyze post-simulation survey data from 192 candidates, each of whom completed two simulation sessions with varying tasks, supports, and modes of delivery. Using within-candidate estimation, random assignment, and standardized simulation procedures, we mitigate selection bias to make more precise empirical claims about how shifting simulation features might influence candidates’ perceptions. We find that changing the task and support is associated with changes in candidates’ perceptions. The mode of delivery may also influence candidates’ perceptions for some, but not all, tasks. We conclude with recommendations for implementing simulations in teacher education to more fully realize the potential of this pedagogical approach.

Candidates’ practice opportunities occur mostly during student teaching, where they are supported by mentor teachers as they take on increasing responsibility in K–12 classrooms. However, clinical environments are inherently high-stakes, unpredictable, and rightly focused on students rather than candidates (Ronfeldt, 2015). Simulations as a pedagogy of teacher preparation can complement clinical experience by allowing novices to explore components of teaching with lower risk and more support than is typical in real classrooms (Dieker et al., 2014; Grossman et al., 2009).

Simulations are a broadly defined category of practice opportunities, including role plays, standardized student interactions, and technology-based classroom experiences (Kaufman & Ireland, 2016). Here, we focus on mixed reality simulations (MRS), a type of digitally mediated simulation used in scores of teacher education programs (TEPs; AACTE, 2020). In mixed reality platforms such as Mursion (the platform used in this study), live actors (“simulation specialists”) control digital avatars in real time from a remote location. The presence of a human specialist allows MRS to feel responsive and naturalistic while also being highly standardizable.

Using these platforms is time- and resource-intensive for TEPs but potentially very useful in candidates’ arc of development. Several studies, from our own research and others’, document candidates’ positive knowledge, skill, and belief shifts following simulated practice (e.g., Bondie et al., 2023; Cohen et al., 2020; Cohen, Anglin, & Wiseman, 2024a; Cohen, Wong, et al., 2024b; Ledger et al., 2022; Luke et al., 2024). A major advantage of simulations is that they can be differentiated to meet candidates’ needs. Candidates can simulate a range of “tasks,” which we define as the teaching and/or student learning scenarios to which candidates respond (Bondie et al., 2021). Candidates can also iterate on tasks with different types of “supports,” such as coaching, pausing, or reflecting, before trying again (Cohen et al., 2020). Simulations can also be delivered via different “modes,” such as in person with peers or online from a private location (Bondie et al., 2021). Existing research on simulations in teacher education reflects this variety of features (Cohen et al., in press; Ireland, 2021). Across many types of simulated experiences, candidates report finding simulations useful, realistic, and relevant (e.g., Dalinger et al., 2020; Larson et al., 2020; Walters et al., 2021), suggesting their broad utility in teacher education.

A major limitation of the current evidence base is that existing studies of perceptions of simulations often rely on cross-sectional data, capturing participant responses to a single simulation opportunity (e.g., Hirsch et al., 2023). Teacher candidates likely respond differently to learning experiences based on a host of traits, such as prior knowledge and beliefs (Cohen, Anglin, & Wiseman, 2024a; Horn et al., 2008). Although statistical methods can account for some readily observable traits, unobserved candidate characteristics may confound relationships between perceptions and simulation features. Limited prior research empirically disentangles features of the learning opportunity from the characteristics of the respondents that might influence their perceptions. This limits the practical guidance that TEPs can draw from existing research, as insights about candidates’ perceptions of simulation features cannot be precisely distinguished from unobserved differences between the perceivers.

To address this methodological challenge, we use a within-candidate estimation strategy paired with standardized simulation features and random assignment for some simulation features. We draw on a large sample of candidates (N = 192), each of whom completed two distinct simulation sessions that varied systematically in task type, support, and delivery mode. This design enables us to examine how specific simulation features relate to candidates’ perceptions, while controlling for unobserved individual differences. Although we do not aim to make causal claims, this approach allows us to illuminate features that may enhance—or hinder—candidates’ experiences. Do candidates appreciate the chance to practice a particular task? Or do those same candidates find opportunities for practice valuable regardless of task? Is feedback crucial for a positive experience, as Bondie et al. (2023) suggest? There is much more to learn about how and why simulated practice feels valuable—and why it sometimes may not.

Importantly, these data were collected as part of a larger set of experimental studies that tested the impact of different supports during simulated practice on candidates’ teaching skills and beliefs about students (see Cohen et al., 2020, 2025; Cohen, Anglin, & Wiseman, 2024a; Cohen, Wong, et al., 2024b). Those studies consistently identify the positive causal impact of these simulations on candidates’ development, especially when augmented with coaching. However, candidates’ time in their preparation programs is brief and their preparatory learning opportunities are myriad. What candidates take with them from TEPs to classrooms likely depends, to some extent, on the value they ascribe to these many experiences. Given the affective dimensions of teacher learning (Korthagen, 2017), we hypothesize that improving candidates’ experiences in simulations might enhance their retention of and reliance on their learning in these platforms down the road (Wigfield & Eccles, 2000). As such, this research moves beyond analysis of what candidates “do” in simulations to build understanding of how they feel about and what they want from this pedagogy of preparation.

To do so, we capitalize on post-simulation survey data from 192 candidates at Lambeth University, a public university in the Southeastern United States. Candidates in two Lambeth cohorts completed 384 total simulation sessions (two sessions per candidate). Across these 384 sessions, specific features, including task, support, and mode of delivery, were systematically varied. Task and support varied randomly within candidates (that is, all candidates experienced two different, randomly assigned support and task combinations), while mode of delivery varied by cohort. Besides these features, all sessions followed standardized procedures. This enables us to leverage within-candidate comparisons to understand the influence of shifting tasks and supports, while using the standardized implementation to facilitate broader comparisons to understand the influence of mode of delivery. Together, this design allows us to more readily disentangle the influence of shifting features from stable attributes of the perceiver and the simulation format (Boyce, 2010; Quintana, 2021). We investigate the following research question: what are candidates’ perceptions of simulated practice and to what extent do those perceptions vary by the simulation’s task, supports, and/or mode of delivery?
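The assignment structure described above can be made concrete with a short sketch. The Python below is illustrative only and is not the study's actual procedure: the task labels, the cohort-to-mode mapping, and the function name `assign_sessions` are hypothetical, and the sketch assumes each candidate's two task and support combinations are drawn uniformly at random without replacement.

```python
import random
from itertools import product

TASKS = ["task_a", "task_b"]                    # hypothetical labels for the two tasks
SUPPORTS = ["coaching", "self_reflection"]      # the two supports named in the text
MODE_BY_COHORT = {1: "in_person", 2: "remote"}  # assumed mapping, for illustration only


def assign_sessions(cohort_by_candidate, seed=0):
    """Return one row per session, with two distinct task/support combos per candidate."""
    rng = random.Random(seed)
    combos = list(product(TASKS, SUPPORTS))
    schedule = []
    for candidate, cohort in cohort_by_candidate.items():
        # Sample without replacement so the two sessions differ in task and/or support.
        for session, (task, support) in enumerate(rng.sample(combos, 2), start=1):
            schedule.append({
                "candidate": candidate,
                "session": session,
                "task": task,
                "support": support,
                "mode": MODE_BY_COHORT[cohort],  # mode is constant within a cohort
            })
    return schedule
```

Under these assumptions, task and support vary within each candidate, whereas mode never does. This is why the within-candidate comparisons speak to task and support, and mode of delivery must be examined through between-cohort comparisons.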

We highlight the implications of our findings for TEPs seeking to design simulated practice experiences that candidates value, which we see as a helpful—though not required—precondition for professional learning. We also lay the foundation for continued investigation into how simulations are best leveraged in teacher preparation—decisions that are currently often guided by teacher educators’ intuition (Cohen et al., in press; Ireland, 2021).

Background Literature and Framework

We draw on Grossman et al.’s (2009) pedagogy of approximations, Korthagen’s (2017) “professional development 3.0” framework, and Wolf’s (1978) theory of social validity to motivate and ground our study. Although Grossman and colleagues highlight the benefits of low-stakes “approximations of teaching” for enhanced professional learning, Wolf’s theory and Korthagen’s framework suggest a connection between how participants feel about a learning opportunity, what they want from the opportunity, and what they take away from it. Understanding the degree to which and ways in which different features make simulated practice feel more or less useful can help TEPs implement simulations in ways that maximize the likelihood of positive experiences. These insights are especially crucial as more teachers are being prepared in contracted time frames and without extensive preservice clinical experiences (Yin & Partelow, 2020). Although candidates in this study were required to complete simulations as part of their preservice preparation, we can imagine a world where novice teachers gain practice experience by opting into simulated practice when they are not required to. In this situation, believing that the opportunity is useful and relevant is likely important if candidates are to choose these platforms for preparation and learning.

We hypothesize that candidates’ perceptions of simulations will vary according to the features of the learning opportunity, including the tasks candidates enact, the supports they receive during the experience, and the simulation’s mode of delivery. MRS are a useful platform for studying candidates’ responses to particular features of the learning opportunity because of their flexibility compared to other contexts in which candidates learn to teach, such as college and K–12 classrooms. In simulations, some features of an experience can be held constant while others are systematically varied. Candidates’ perceptions of simulations are likely influenced by many factors, including their individual characteristics and the context in which they are learning to teach. We advance the literature on this topic by more effectively addressing issues of selection in our models that might bias observed relationships. To do so, we employ standardized simulation procedures, random assignment of the support feature, and a within-candidate estimation strategy. This design allows us to make more precise claims about the relationship between simulation features (task, support, and mode of delivery) and candidates’ perceptions of simulations. In the following sections, we detail each aspect of our framework, along with the relevant literature about the features under study.

Understanding Candidates’ Perceptions

Mounting empirical evidence suggests that candidates can productively shift their knowledge, beliefs, and skills during simulated learning opportunities (e.g., Cohen et al., in press; Ersozlu et al., 2021; Luke et al., 2024). However, theory suggests that the degree to which candidates retain and use these shifts during their work in real classrooms likely depends, at least in part, on the value they ascribe to this pedagogy of preparation. According to Korthagen (2017), approaches to teacher learning have often focused mostly on developing candidates’ theories (thinking and knowledge) and practices (behavior) with little attention to the teachers themselves. Such approaches overlook the role of feeling and motivation in teacher development. Korthagen argues for seeing teachers’ behavior as a joint result of their thinking, feeling, and motivation. As such, any attempts to shift teacher behavior should also consider teachers’ needs, goals, desires, preferences, and feelings.

To understand candidates’ affective responses to simulated learning opportunities, we turn to social validation. Social validation (Wolf, 1978) involves seeking and applying evaluative feedback from participants to determine if and how simulated practice meets their needs. This feedback can then guide the planning and evaluation of future implementations. Assessing learning outcomes—if and how targeted skills develop or beliefs shift—can indicate whether a pedagogy “works” in terms of supporting candidate development. The affordance of social validation is that it clarifies whether such opportunities and their associated outcomes feel worthwhile to participants. These understandings are useful because learning opportunities that feel valuable might be more easily integrated with candidates’ developing theories about good teaching and, most importantly, later taken up in real classrooms (Brouwer & Korthagen, 2005).

Extant research suggests that candidates tend to value simulated learning opportunities, find them useful, and even prefer them to other practice opportunities, such as role plays with peers (e.g., Larson et al., 2020; McKown et al., 2022; Mikeska et al., 2023; Walters et al., 2021). For example, McKown et al. (2022) find that candidates who completed a student behavior-focused task in MRS found the experience more relevant, realistic, and beneficial for their development than candidates who completed a similar task in a live rehearsal with peers. These and other studies (e.g., Bautista & Boone, 2015; Dalinger et al., 2020; Mikeska et al., 2023) also highlight candidates’ heightened nervousness, discomfort, and/or uncertainty during simulated practice. Therefore, many authors recommend ways to mitigate candidates’ anxiety, for instance by increasing candidates’ exposure to the simulator (e.g., Larson et al., 2020; McKown et al., 2022). However, the sources of and remedies for candidates’ nervousness in simulations are not well understood empirically. We identify a tension between candidates’ perception of the utility of simulations and their strong affective reactions to them, which could detract from their learning if not properly scaffolded (Parong & Mayer, 2021). With a better understanding of when and why candidates value simulated practice (or do not), TEPs might be better able to structure these opportunities in ways that are both positive and productive.

Although prior studies have explored the social validity of simulations, most analytic approaches have not accounted for unobserved heterogeneity between candidates that may confound understanding of candidates’ perceptions. Teacher candidates bring a host of varying characteristics, including beliefs, prior knowledge, and personalities, to their learning experiences (Cohen, Anglin, & Wiseman, 2024a; Horn et al., 2008). Some readily observable characteristics can be controlled through statistical methods to generate more precise estimates of the relationship between candidates’ perceptions and the experience under study. However, unobserved characteristics can also influence how candidates respond to a pedagogical opportunity such as simulated teaching. This methodological issue is mitigated when assignment to features is randomized and/or data are collected at multiple time points, allowing for comparison of average outcomes for the same individuals across different simulation conditions. Such an approach yields more precise empirical evidence of the relationship between particular features and candidates’ perceptions by controlling for between-participant heterogeneity.
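To illustrate why comparing the same individuals across conditions removes stable between-candidate differences, consider the following minimal Python sketch on synthetic data. It is not the analytic model used in this study. The rating scale, the effect size, the noise level, and the function names are all hypothetical, and the sketch assumes for simplicity that every candidate experiences both supports once. With two observations per person, averaging within-person differences is equivalent to a candidate fixed-effects estimate of the support contrast.

```python
import random
from statistics import mean


def simulate_candidates(n, effect, seed=0):
    """Generate synthetic ratings: two sessions per candidate, one per support."""
    rng = random.Random(seed)
    rows = []
    for candidate in range(n):
        trait = rng.gauss(0, 1)  # unobserved, stable candidate characteristic
        supports = ["coaching", "self_reflection"]
        rng.shuffle(supports)    # random order of the two supports
        for session, support in enumerate(supports, start=1):
            bump = effect if support == "coaching" else 0.0
            rating = 3.0 + trait + bump + rng.gauss(0, 0.1)
            rows.append({"candidate": candidate, "session": session,
                         "support": support, "rating": rating})
    return rows


def within_candidate_estimate(rows):
    """Average each candidate's (coaching - self_reflection) rating difference."""
    by_candidate = {}
    for row in rows:
        by_candidate.setdefault(row["candidate"], {})[row["support"]] = row["rating"]
    # The stable trait appears in both ratings, so it cancels in the difference.
    diffs = [r["coaching"] - r["self_reflection"] for r in by_candidate.values()]
    return mean(diffs)
```

Calling `within_candidate_estimate(simulate_candidates(192, effect=0.5))` should recover a value close to the simulated effect even though the candidate trait is never observed, because the trait contributes equally to both of a candidate's ratings and drops out of the difference.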

Importantly, learning opportunities can be valuable even when they are not enjoyable. In fact, progress is often accompanied by a certain level of productive discomfort (Ericsson & Pool, 2016; Hiebert & Grouws, 2007). Moreover, candidates may harbor incomplete perceptions of what teaching entails, making it difficult for them to evaluate the relevance or utility of their preservice learning opportunities (Dicke et al., 2015; Evertson & Weinstein, 2006). We do not argue that candidates’ preferences and perceptions alone should drive curricular choices. Rather, we hope to provide more precise empirical evidence of which opportunities candidates appreciate and how these preferences might be integrated or adapted to enhance existing evidence of candidates’ learning in simulations (e.g., Bondie et al., 2023; Cohen, Anglin, & Wiseman, 2024a). When candidates see value in simulated practice, they may be more likely to engage deeply with the opportunity, retain what they learn, and flexibly extend this learning later in real classrooms (Korthagen, 2017; Wigfield & Eccles, 2000).

Practice Opportunities in Teacher Education

Most traditionally prepared teacher candidates engage in protracted and authentic practice opportunities, or “approximations of teaching,” during their clinical teaching placements, where they practice teaching alongside a more experienced mentor (Grossman et al., 2009). However, classrooms are inherently high-stakes and highly variable (Matsko et al., 2020; Ronfeldt, 2012). K–12 classrooms present rich diversity, but what a novice teacher has the opportunity to see and do in classrooms can vary tremendously by school, classroom, and time of day. During student teaching, candidates’ opportunities for experimentation and refinement can be limited given the presence of and focus on K–12 students. Student teachers are not often able to teach the same lesson twice, and their mentors may not have time to provide detailed feedback (Kavanagh et al., 2022; Matsko et al., 2020).

Given these structural limitations of clinical practice, many TEPs supplement clinical experiences with less authentic approximations that occur in the absence of real children. These might include simulations or rehearsals of particular teaching scenarios (Grossman et al., 2009). During these low-stakes opportunities, candidates can try their hands at instructive and developmentally appropriate aspects of teaching with more support and oversight than they might receive in more authentic clinical approximations.

Mixed Reality Simulations as Platforms for Candidates’ Practice

Mixed reality platforms offer numerous affordances as spaces for candidates’ practice opportunities. These platforms are widely used in teacher education and other fields for professional preparation (Kaufman & Ireland, 2016). In mixed reality simulations (MRS), candidates interact with virtual student avatars on a screen, who are remotely controlled by a live human actor. This blend of real and virtual elements creates a “mixed” reality space for practicing teaching (see Appendix Figures A1–A2 for examples of the user interface). In MRS, candidates might try their hand at skills they are unlikely to lead in their clinical placements, such as facilitating a caregiver conference (Sebastian et al., 2023), as well as skills they may not see modeled well, such as providing high-quality text-focused instruction (Cazden & Beck, 2003). Instructors can also standardize simulation tasks, match their difficulty to candidates’ needs, and/or offer varying levels of support during these learning opportunities (Bondie et al., 2021). Because avatars are controlled by a live actor, it is relatively straightforward to make such adjustments.

Nearly a hundred TEPs are investing in MRS as platforms for candidates’ practice (AACTE, 2020). A growing body of research suggests that simulated practice promotes an array of positive learning outcomes, including improved confidence (e.g., Hirsch et al., 2023), perceived classroom management abilities (e.g., Hudson et al., 2019), and skills for a range of instructional practices (e.g., Cohen et al., 2020; Cohen, Wong, et al., 2024b; Luke et al., 2024). However, as evidenced in these examples, existing research describes simulations with widely varying features, including a mixture of tasks, supports, and delivery modes. Simulation technology is an investment for TEPs. Knowing more precisely whether and how these features influence candidates’ perceptions of simulated practice can help resource-constrained programs more strategically allocate resources in ways that are likelier to enhance outcomes.

Features of Simulated Learning Opportunities

Extant research on novice teachers’ simulated learning highlights the flexibility of this pedagogical approach. In simulations, candidates can practice a variety of teaching tasks with differentiated supports, and they can do so live in classrooms or online from a location of their choosing. However, little is known about how to optimize this flexibility to promote positive experiences for candidates—likely a helpful precondition for enhanced professional learning (Korthagen, 2017). The major contribution of this study is our exploration of how candidates respond to particular features of simulated learning opportunities. We focus on three flexible features of simulated learning opportunities: what candidates do (task), how they are supported (supports), and whether candidates complete simulations in person or online (mode of delivery). These features are easy for TEPs to adjust, and exploring them empirically can yield guidance about whether and how particular combinations enhance candidates’ perceptions of simulations.

Task

Candidates can benefit from approximating many components of teaching before beginning their work in real classrooms (Grossman et al., 2009). Fortunately, the simulator can be used to approximate a range of teaching tasks (Bondie et al., 2021; Ledger et al., 2022), from collecting behavioral data (Hirsch et al., 2023) to using productive mathematical talk moves (Aguilar & Flores, 2022). The task is the scenario related to teaching and/or student learning to which candidates respond in the simulator. TEPs can customize simulated tasks and avatar behaviors to be instructive and developmentally appropriate for their candidates (Bondie & Dede, 2021). Through a combination of standardized and adaptable avatar interactions, designers ensure that candidates encounter desired teaching challenges while still engaging in interactions that feel authentic. These opportunities might gradually increase in complexity and authenticity over a candidate’s course of preparation (Grossman et al., 2009). For example, candidates just starting their program could practice launching a lesson in a simulation, while candidates at later stages of a TEP might simulate the implementation of longer and more multifaceted portions of instruction.

As candidates develop their understanding of real classrooms, some tasks may challenge or discomfit them more than others. Novices’ early teaching experiences often prompt “reality shock,” especially in the area of navigating classroom behavior (Dicke et al., 2015; Evertson & Weinstein, 2006). Simulations are potentially useful spaces for grappling with the distance between candidates’ expectations and classroom realities, but candidates understandably may not enjoy the experience. Understanding candidates’ reactions to different simulated tasks can help TEPs design and implement them in ways that feel positive and useful, potentially encouraging their uptake down the road (Korthagen, 2017).

Supports

Studies of simulations often include opportunities for repetition coupled with other supports designed to enhance candidates’ learning in/from practice (Cohen et al., in press). By virtue of the flexibility of simulated learning experiences, extant literature describes a wide range of supports. A teacher educator can pause simulations on-demand (Spencer et al., 2019), prompting opportunities for self-reflection (Cohen et al., 2020) and/or group debriefing (Mikeska et al., 2023). Peers can also observe each other’s practice (Ely et al., 2018) and provide feedback to one another. Such feedback can also come from an expert, such as the teacher educator or an outside coach (Bosch & Ellis, 2021). Within simulations, the student avatars themselves can also provide feedback to candidates. Since student avatars are controlled by a human simulation specialist, they often deliver a mixture of standardized and improvised lines (Bondie et al., 2021). By responding to candidates using If-Then logic, avatars can reveal potential implications of candidates’ instructional choices (Bondie & Dede, 2021). For example, most contemporary classroom management literature recommends a responsive approach that affirms students’ place in classrooms and avoids subjective judgments (Tigert et al., 2022). TEPs can design simulations to cue candidates toward this approach: if candidates respond punitively to off-task comments, then avatars will put their heads down and become disengaged. Conversely, if candidates redirect avatars responsively and calmly, then avatars will reengage with the activity.

The availability and type of supports (or a lack thereof) likely shape candidates’ perceptions of simulations (Bondie et al., 2023), as well as the productivity of the learning opportunity (Cohen et al., 2020; Cohen, Wong, et al., 2024b). Knowing which instructional supports candidates appreciate for which tasks might improve their perceptions of simulations and, more distally, their productivity. Although it is clear coaching can help candidates improve more quickly (Bondie et al., 2023; Cohen et al., 2020; Mikeska et al., 2023), it is less clear how candidates feel about this support or others. Using random assignment, Bondie et al. (2023) find that receiving coaching during simulated practice improves preservice candidates’ sense of task value compared to self-reflecting. However, more research with larger samples is needed to determine whether this finding is influenced by the particular features of the simulation under study, such as the task. With a sense of how candidates feel about different supports during different tasks, TEPs will be better positioned to design practice opportunities candidates value. For example, candidates may feel judged while receiving coaching for particular tasks and, as such, they may be resistant to returning to the simulator or seeking out future coaching, despite its proven effectiveness. Relatedly, candidates may appreciate coaching for some tasks more than others. Studies leveraging within-person designs to control for unobserved aspects of the participants can generate these sorts of precise understandings about simulation features.

Mode of Delivery

A major but understudied affordance of MRS is the ability to deliver simulations live in physical classrooms or remotely via video conferencing software (see Appendix Figures A1–A2 for examples of each). This feature holds particular promise as growing numbers of TEPs are offering hybrid and online courses of study (Yin & Partelow, 2020). Many studies of simulations do not report how the simulations were delivered (Bondie et al., 2021). Among those that do, researchers report a diverse range of approaches, without extensive discussion of the rationale underlying the chosen mode of delivery. Some researchers have suggested completing simulations in person in real classrooms may promote suspension of disbelief (Dieker et al., 2014). Although candidates do seem to find mixed reality simulations more realistic than live role plays with humans (e.g., McKown et al., 2022), limited research has deeply explored the nature of authenticity in MRS (Howell & Mikeska, 2021). There may well be benefits to candidates completing simulations remotely from locations of their choosing, even if it feels less realistic. For example, in private, candidates may feel less surveilled and, accordingly, more able to set aside self-consciousness.

Altering the mode of delivery could also be a way to mitigate impediments to candidates’ experiences related to their reactions to the platform. Candidates often have strong initial reactions to simulations (Bautista & Boone, 2015; Dalinger et al., 2020; Mikeska et al., 2023; Parong & Mayer, 2021), including surprise, excitement, confusion, and/or anxiety. Emerging research suggests that these affective responses can have an unproductive impact on learning and attention (Parong & Mayer, 2021). More immersive environments such as live classrooms may exacerbate candidates’ reactions in ways that detract from their perceptions of simulated practice. Completing simulations privately could help candidates focus on the teaching tasks rather than the novelty of the platform. However, no studies to our knowledge have explored this empirically.

Methods

To examine the influence of features of a simulated learning opportunity on candidates’ perceptions, we used post-simulation survey data from candidates (N = 192) who engaged in 384 MRS sessions over two academic years as part of a curricular requirement of their TEP at Lambeth University. These data were collected as part of a larger investigation into the impact of simulated practice on candidates’ development (Cohen et al., 2020; Cohen, Wong, et al., 2024b). These studies identified the positive causal impact of these simulations on candidate development, especially when augmented with coaching.

Candidates completed simulations either in person in a simulation lab or remotely via Zoom. All simulation sessions followed a standardized format of two five-minute rounds of a simulated task with five minutes of support in the form of directive coaching or independent, survey-based self-reflection between rounds. At the conclusion of each session, candidates completed a post-simulation survey about their perceptions of the session. These surveys are the primary data source for this study. Using these survey responses, we identify associations between systematically varied features of simulations (task, support, and mode of delivery) and candidates’ survey responses. The simulation procedures are summarized in Figure 1. We describe these procedures and our analytic approach in more detail below.

Figure 1. Simulation Procedures Flowchart.

Participants and Setting

All data were collected at Lambeth University, a research-intensive, public university in the Southeastern United States. Although many TEPs use simulations in specific courses (AACTE, 2020), Lambeth is a useful study site due to its sustained, program-wide integration of simulations across cohorts. Participants (N = 192) were enrolled in one of two cohorts of a graduate TEP that culminated in elementary, secondary, or special education licensure. Our sample is generally reflective of the current population of entering teachers (National Center for Education Statistics [NCES], 2023): mostly white (74.5%), female (85.9%), under 23 years of age (76.6%), and having at least one college-educated parent (78.2%).

All participants completed two sessions in MRS as a compulsory component of the final two semesters of the TEP. Candidates completed the first simulation session in the fall and the second in the spring. Before each simulation window, candidates learned about concepts related to the simulation tasks in their general methods coursework. In particular, candidates saw tasks modeled in video representations (i.e., examples of teaching relevant to the simulation scenarios); the models were then analyzed, or decomposed, to illuminate the key features of high-quality approaches (Grossman et al., 2009).

Simulation Procedures

Before candidates’ first session, the research team collected demographic information about participants, and participants were oriented to the simulator in a brief demonstration by the research team. The purpose of the demonstration was to familiarize candidates with the platform and help them understand how they would interact with the student avatars.

A simulation session consisted of two rounds of a five-minute teaching task with five minutes of a randomly assigned support between rounds. Both tasks required candidates to provide instruction in a virtual classroom to a group of five students who appeared to be in late elementary or early middle school. The tasks were aligned with frameworks used in local K–12 clinical placements and were developed collaboratively by members of the research team, the simulation specialists, and TEP faculty.

Facilitators, who were undergraduate research assistants, joined each session to provide introductions, instructions, and transitions for self-reflection sessions. One of two simulation specialists controlled student avatars during all 384 sessions; these specialists were not randomly assigned but were scheduled in such a way across course sections, tasks, and support assignments as to minimize specialist effects. Simulation specialists attended a two-hour intensive training and multiple practice sessions with members of the research team, in which they learned to respond to candidates using a mixture of improvised and standardized lines for both tasks. This ensured that participants completed “parallel” versions of each task, meaning that avatar responses remained consistent in terms of their number, type, timing, and intensity across sessions, though their substance could vary. To maintain fidelity of implementation, our research team used an observational rubric to code specialist performance in a random subset of simulations throughout data collection (see Appendix B for a Sample Simulation Specialist Fidelity Rubric). Based on the results of this coding, researchers provided ongoing feedback and calibration to simulation specialists. To further assess the influence of simulation specialists on our results, we conducted an additional robustness check by adding a specialist fixed effect to the analytic model for a subsample of simulation sessions and found the magnitude, direction, and statistical significance of the task and support outcomes remained stable (see Appendix Tables B1–B2 for complete model outputs with and without the specialist fixed effect).

Systematically Varied Simulation Feature: Task

Each candidate practiced a different task in each of their two simulation sessions. In the fall, all candidates completed a text-focused instruction task, in which candidates aimed to improve their skill providing feedback to student contributions during a text-focused discussion (for details, see Cohen, Anglin, & Wiseman, 2024a). Before this task, candidates received a copy of the fictional 6th-grade text “A Dangerous Game” and three text-based questions. They were asked to lead a discussion of these questions with a small group of student avatars (termed “students”) in the simulator. In the simulator, candidates asked the questions and probed and provided feedback on students’ responses. Student responses included a standardized mix of exemplary answers, partial answers, and unsupportable claims. Simulation specialists were trained to respond to candidate instruction using If-Then logic: if a candidate did not ask follow-up questions, students would not elaborate their thinking or correct misunderstandings. If a candidate asked follow-up questions or scaffolded students’ engagement with the text, students provided additional information and/or revised their thinking.

In the spring, all candidates completed a behavioral redirection task, in which they aimed to develop their skill redirecting students’ off-task behavior. Before the simulation, candidates were told it was students’ first day of school and tasked to co-create classroom norms with students. In the simulator, candidates led a conversation on classroom norms while concurrently attempting to redirect students’ off-task behaviors. Examples of off-task behaviors included making noises, talking to peers, and texting. Simulation specialists responded to candidate instruction using an If-Then logic: if candidates did not specifically redirect the off-task behavior, students remained off-task until the next scripted behavior (roughly 40 seconds). If candidates redirected the behavior, students immediately re-engaged with the conversation.

Systematically Varied Simulation Feature: Support

For each session, candidates were randomly assigned to receive either five minutes of coaching or spend five minutes self-reflecting between rounds of the task. Coaches were doctoral students with K–12 teaching experience who underwent over 20 hours of intensive training in a researcher-developed protocol. Their training involved observing recorded exemplar sessions and live role-play practice with a member of the research team. Coaches’ fidelity to the protocol was monitored by a member of the research team throughout the study; coaches also attended calibration meetings each month (for more information about coaches’ adherence to and replicability of the protocol, see Anglin et al., 2021). During sessions with coaching, coaches observed participants’ first round of practice. Using these observations, coaches located candidates on a skill sequence for each task. Coaches then followed a four-step protocol to coach the participant on the identified focal skill. The protocol involved eliciting participant reflection, identifying and providing feedback on a focal skill, discussing the new approach with the candidate, and finally practicing that skill in a brief role-play (for more details on the coaching protocol, see Cohen et al., 2020). The candidate then completed another round of the task.

During sessions supported by self-reflection, a session facilitator shared a link to an online self-reflection survey after candidates completed the task. Candidates then spent five minutes responding to the survey. The facilitator encouraged candidates to use the full five minutes. The survey included three open-ended questions: What are some ways you think you were able to support students in making text-based claims (or staying on task) during this discussion? In what ways could you improve your responses to students during this discussion so they would be better able to provide text-based claims (or stay on task)? What are you going to try to do differently in your next session? After five minutes, the facilitator asked the candidate to submit the survey. The candidate then completed another round of the task.
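The per-session random assignment of supports described above can be sketched in a few lines. This is only an illustration under stated assumptions: it treats assignment as a simple, independent coin flip for each candidate-session, and the function name `assign_supports`, the integer candidate IDs, and the seed are hypothetical. The study's actual randomization procedure may have differed (for example, by blocking within course sections).

```python
import random

SUPPORTS = ("coaching", "self-reflection")


def assign_supports(candidate_ids, sessions=("fall", "spring"), seed=None):
    """Independently assign one support to every candidate-session pair.

    Assumption: simple randomization with equal probability for each support.
    """
    rng = random.Random(seed)  # a seeded generator makes the draw reproducible
    return {
        (candidate, session): rng.choice(SUPPORTS)
        for candidate in candidate_ids
        for session in sessions
    }


# Hypothetical IDs 0..191 stand in for the 192 candidates.
assignments = assign_supports(range(192), seed=0)
```

Because each session is assigned separately, a candidate may receive the same support in both sessions or a different one in each, which is the within-candidate variation in support that the analysis draws on.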

Systematically Varied Simulation Feature: Mode of Delivery

In the first year of data collection, simulations occurred in a university-based simulation lab in the same building as participants’ other coursework. Candidates met a facilitator in the lab, where the simulation was already visible on a large television screen. The simulation specialist controlled the avatars remotely. The facilitator introduced the simulation, reminded participants of the task details, and facilitated transitions. In coached sessions, the coach served as the facilitator.

In the second year of data collection, simulations occurred remotely via Zoom. This change allowed candidates to complete simulations from a location of their choosing, so long as they had a reliable internet connection. Facilitators, coaches, and simulation specialists joined sessions via Zoom. As with in-person delivery, facilitators or coaches conducted introductions, instructions, and transitions, while also troubleshooting technology issues.

Data Sources

All candidates completed an online post-simulation survey immediately following the conclusion of each simulation session. The survey consisted of eight Likert questions that asked about candidates’ perceptions of the simulator, including their preparation, willingness to do it again or recommend it to a friend, and its relevance and utility. For each question, participants rated their response on a scale of one (strongly disagree) to five (strongly agree). Similar surveys have been used in prior research on candidates’ experiences of simulations (e.g., Bondie et al., 2023; Larson et al., 2020). We examined the scale’s validity for our sample and for our purpose of understanding experiences during simulated practice. Confirmatory factor analysis (CFA) models (Brown, 2015) were fitted using the questions on the survey (see Appendix Table C1 for additional details on the CFA models). Given the poor model fit and lack of measurement invariance over tasks and years, we do not present a composite experience score. Instead, we completed the subsequent analyses on item-level responses.

Analyses

To understand participants’ perceptions across the full sample, we begin by analyzing descriptive statistics for each item. Next, to tease out the influence of simulation features on candidates’ perceptions, we use a candidate random effects estimator to compare survey responses across different simulation conditions. The estimation strategy leverages variation in the features of simulation sessions within candidates and across cohorts to estimate the influence of task, support, and mode of delivery on candidates’ survey responses. We estimate observation i for participant j as

$$
Y_{ij} = \beta_0 + \beta_1\,\mathrm{task}_{ij} + \beta_2\,\mathrm{support}_{ij} + \beta_3\,\mathrm{delivery}_{j} + \beta_4\,(\mathrm{delivery}_{j} \times \mathrm{task}_{ij}) + \beta_5\,(\mathrm{delivery}_{j} \times \mathrm{support}_{ij}) + \mu_{j} + \varepsilon_{ij} \tag{1}
$$

where $Y_{ij}$ is observation i for participant j. The variable $\mathrm{task}_{ij}$ is binary, taking a value of 0 if observation i for participant j is a response after the text-focused task and a value of 1 if it is a response after the behavioral redirection task. The variable $\mathrm{support}_{ij}$ is binary, taking a value of 0 if the response follows self-reflection and a value of 1 if it follows coaching. The variable $\mathrm{delivery}_{j}$ is binary, taking a value of 0 if participant j was in the program during in-person delivery (cohort 1) and a value of 1 if participant j was in the program during online delivery (cohort 2). The interaction $\mathrm{delivery}_{j} \times \mathrm{task}_{ij}$ allows the task coefficient to vary by delivery mode, and $\mathrm{delivery}_{j} \times \mathrm{support}_{ij}$ allows the support coefficient to vary by delivery mode. Finally, $\mu_{j}$ is a person random intercept, and $\varepsilon_{ij}$ is a within-person random error.

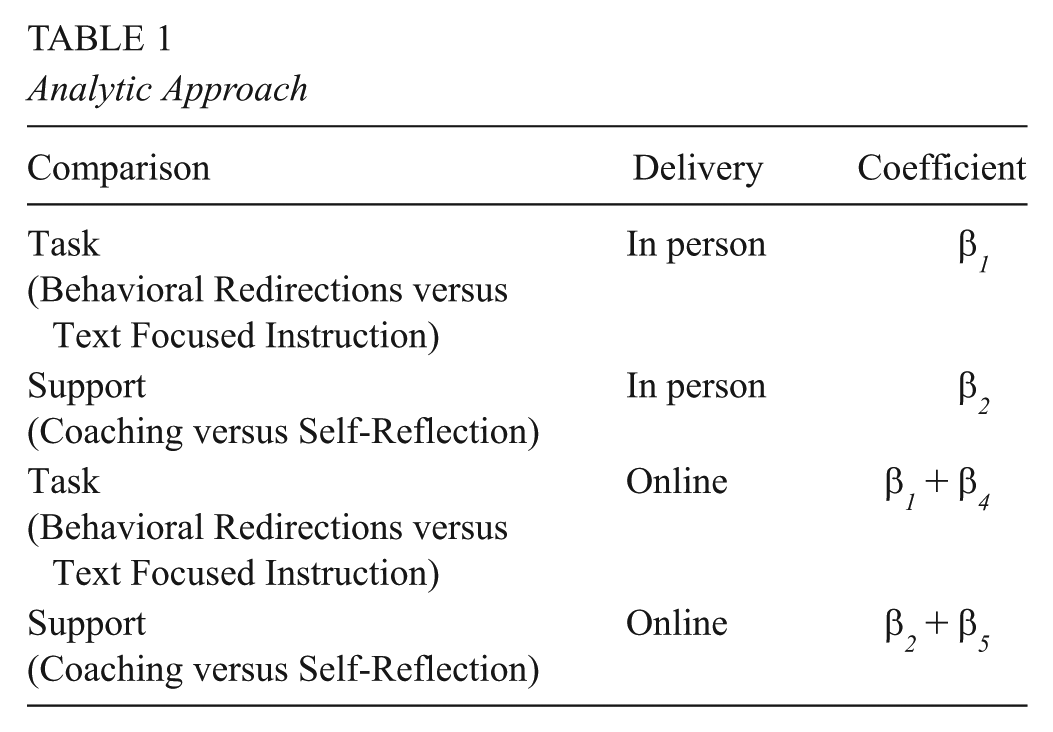

This model compares each candidate’s perceptions across tasks and supports. These comparisons rely on two cohorts of survey data in which simulations were delivered via different modes (in-person or online). For the in-person delivery cohort, the task comparison is captured by the coefficient β1 and the support comparison is captured by β2. For the online delivery cohort, the task comparison is represented by β1 + β4 and the support comparison is represented by β2 + β5. Here, β4 and β5 represent the changes in task and support outcomes for the online delivery cohort compared to the in-person delivery cohort.
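These cohort-specific comparisons can be read off by substituting the two values of the delivery indicator into the model. As a sketch, assuming the model is linear in the terms defined above, with β0 denoting the constant and β3 the coefficient on delivery (labels inferred from the surrounding text rather than stated in it), the expected responses are:

$$
\begin{aligned}
\text{In person } (\mathrm{delivery}_{j} = 0):\quad & E[Y_{ij}] = \beta_0 + \beta_1\,\mathrm{task}_{ij} + \beta_2\,\mathrm{support}_{ij} \\
\text{Online } (\mathrm{delivery}_{j} = 1):\quad & E[Y_{ij}] = (\beta_0 + \beta_3) + (\beta_1 + \beta_4)\,\mathrm{task}_{ij} + (\beta_2 + \beta_5)\,\mathrm{support}_{ij}
\end{aligned}
$$

Moving from the text-focused task (task = 0) to the behavioral redirection task (task = 1) therefore shifts the expected response by β1 in person and by β1 + β4 online. A positive β4 that offsets a negative β1 would indicate a smaller gap between tasks under online delivery.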

An intuitive way to think about the model is as two parallel regressions, one for the online cohort and one for the in-person cohort, with comparisons made between the results. We use person-level random effects to test for average differences in perceptions between the online and in-person cohorts. The random effects model separates individual-level (e.g., mode of delivery) and time-varying (e.g., task and support) characteristics. This is accomplished by treating individual characteristics as random variables, uncorrelated with the simulation features of interest (Boyce, 2010; Quintana, 2021). This assumption is plausible given random assignment of supports within each cohort. Conversely, including an individual fixed effect would remove all heterogeneity between participants, meaning that mode of delivery, which was fixed within cohorts and individuals, would be absorbed alongside other between-participant differences. We pool the samples into a single model to improve statistical power and allow for formal hypothesis testing as to whether the task and support outcomes differ across cohorts.
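The logic of comparing each candidate with themselves can be illustrated with a small simulation. The sketch below is not the random effects estimator used in the study; it only shows, under the model's assumptions, why a stable person-level intercept drops out of a within-candidate comparison. All coefficient values are hypothetical and chosen for illustration, and the noise term is set to zero so the arithmetic is exact.

```python
import random
from statistics import mean

# Hypothetical coefficients for illustration only; NOT the study's estimates.
BETA = {
    "const": 4.0,
    "task": -0.5,               # beta1: behavioral redirection vs. text-focused
    "support": 0.3,             # beta2: coaching vs. self-reflection
    "delivery": 0.1,            # beta3: online vs. in person
    "delivery_x_task": 0.4,     # beta4
    "delivery_x_support": 0.0,  # beta5
}


def response(task, support, delivery, person_intercept, noise=0.0):
    """Response implied by the random-intercept model for one session."""
    return (
        BETA["const"]
        + BETA["task"] * task
        + BETA["support"] * support
        + BETA["delivery"] * delivery
        + BETA["delivery_x_task"] * delivery * task
        + BETA["delivery_x_support"] * delivery * support
        + person_intercept
        + noise
    )


def simulate_cohort(n, delivery, rng, noise_sd=0.0):
    """Simulate n candidates with a fall (task=0) and a spring (task=1) session."""
    rows = []
    for _ in range(n):
        mu = rng.gauss(0.0, 0.8)  # stable person-specific intercept
        fall_support = rng.randint(0, 1)
        spring_support = rng.randint(0, 1)
        fall = response(0, fall_support, delivery, mu, rng.gauss(0.0, noise_sd))
        spring = response(1, spring_support, delivery, mu, rng.gauss(0.0, noise_sd))
        # The person intercept mu appears in both sessions and cancels here.
        rows.append((fall_support, spring_support, spring - fall))
    return rows


def task_gap(rows):
    """Mean spring-minus-fall difference for candidates whose support was unchanged."""
    return mean(diff for fall_s, spring_s, diff in rows if fall_s == spring_s)


rng = random.Random(0)
in_person_gap = task_gap(simulate_cohort(200, delivery=0, rng=rng))
online_gap = task_gap(simulate_cohort(200, delivery=1, rng=rng))
```

With these made-up values, the in-person gap recovers beta1 and the online gap recovers beta1 + beta4, regardless of how much candidates differ from one another in their intercepts. The delivery coefficient itself cannot be recovered this way because it never varies within a candidate, which is why the study relies on random rather than fixed effects.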

Table 1 maps each coefficient to the corresponding comparison across the two delivery modes. For transparency, we also provide disaggregated results by delivery mode in the Appendix (see Tables D1–D2). The coefficients are similar for the disaggregated results and pooled results.

Analytic Approach

Limitations

Our study is not without limitations. Although estimates for support and task are unconfounded by unobserved between-participant heterogeneity, the delivery estimates are not. Unobserved differences between the two cohorts might bias our estimates of the influence of delivery. Because the mode of delivery was consistent within each participant and varied only between cohorts, those results should be interpreted with caution. We do not intend to identify causal relationships but rather aim to point to promising potential levers for improving candidates’ perceptions of simulations. More research is needed to understand how different tasks, supports, and modes of delivery impact candidates’ experiences and learning in simulations. The field can also benefit from expanding this research to explore other design choices, such as completing simulations in group formats.

Additionally, all candidates in this study completed simulations as a required component of a shared TEP curriculum. Therefore, the sequencing and timing of simulations, relative to one another and relative to other preparatory opportunities, were consistent across the sample. As such, we are not able to disentangle the role of context or task order on candidates’ perceptions of simulations. As discussed in our framework, candidates’ perceptions of simulations are likely a function of several factors—their characteristics, the context in which they are learning, and the features of the simulation itself—that interact in complex ways (Bondie et al., 2023; Bosch & Ellis, 2021; Cohen, Anglin, & Wiseman, 2024a; Cohen, Wong, et al., 2024b; Pankowski & Walker, 2016). More research is needed to understand how our findings generalize to other populations of teacher candidates at different stages of their preparatory experience, as well as how candidates’ perceptions may be influenced by repeated simulation opportunities. In ongoing work, we are randomly assigning participants to different task orders to better understand the most effective sequences for practicing the myriad skills prospective teachers need.

Findings

What Are Candidates’ Perceptions of Simulated Practice?

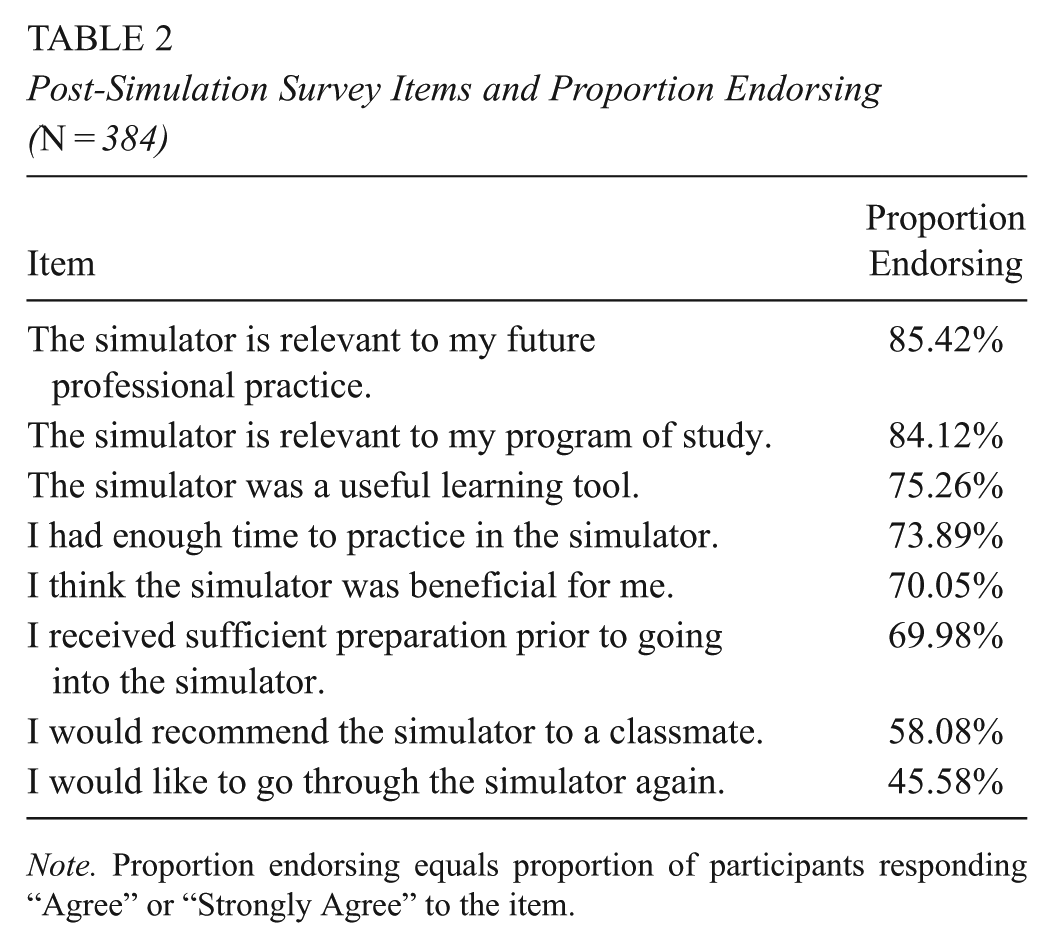

Table 2 shows the proportion of respondents (N = 192) who endorsed each item after all simulation sessions (N = 384). The proportion endorsing each item is equal to the proportion of participants who responded “Agree” or “Strongly Agree” for each item. Across both years of data collection and all simulation feature variations, candidates were highly likely to endorse questions related to the simulation’s relevance and utility. They were less likely to endorse going through the simulator again or recommending it to a friend. Subsequent analyses clarify features that might improve or detract from candidates’ simulation perceptions.

Table 2. Post-Simulation Survey Items and Proportion Endorsing (N = 384)

Note. Proportion endorsing equals proportion of participants responding “Agree” or “Strongly Agree” to the item.
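The endorsement rule in the note can be expressed as a short calculation. The sketch below assumes responses are coded 1 (strongly disagree) through 5 (strongly agree), so that "Agree" and "Strongly Agree" correspond to 4 and 5; the function name and the example responses are hypothetical.

```python
def proportion_endorsing(responses, threshold=4):
    """Share of Likert responses (coded 1-5) at or above the endorsement threshold."""
    if not responses:
        raise ValueError("at least one response is required")
    if any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("responses must be integers from 1 to 5")
    return sum(r >= threshold for r in responses) / len(responses)


# Six hypothetical responses to a single item: three are 4 or higher.
example = proportion_endorsing([5, 4, 3, 2, 1, 4])
```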

To What Extent Do Candidates’ Perceptions of Simulated Learning Opportunities Vary by the Task, Support, and/or Mode of Delivery?

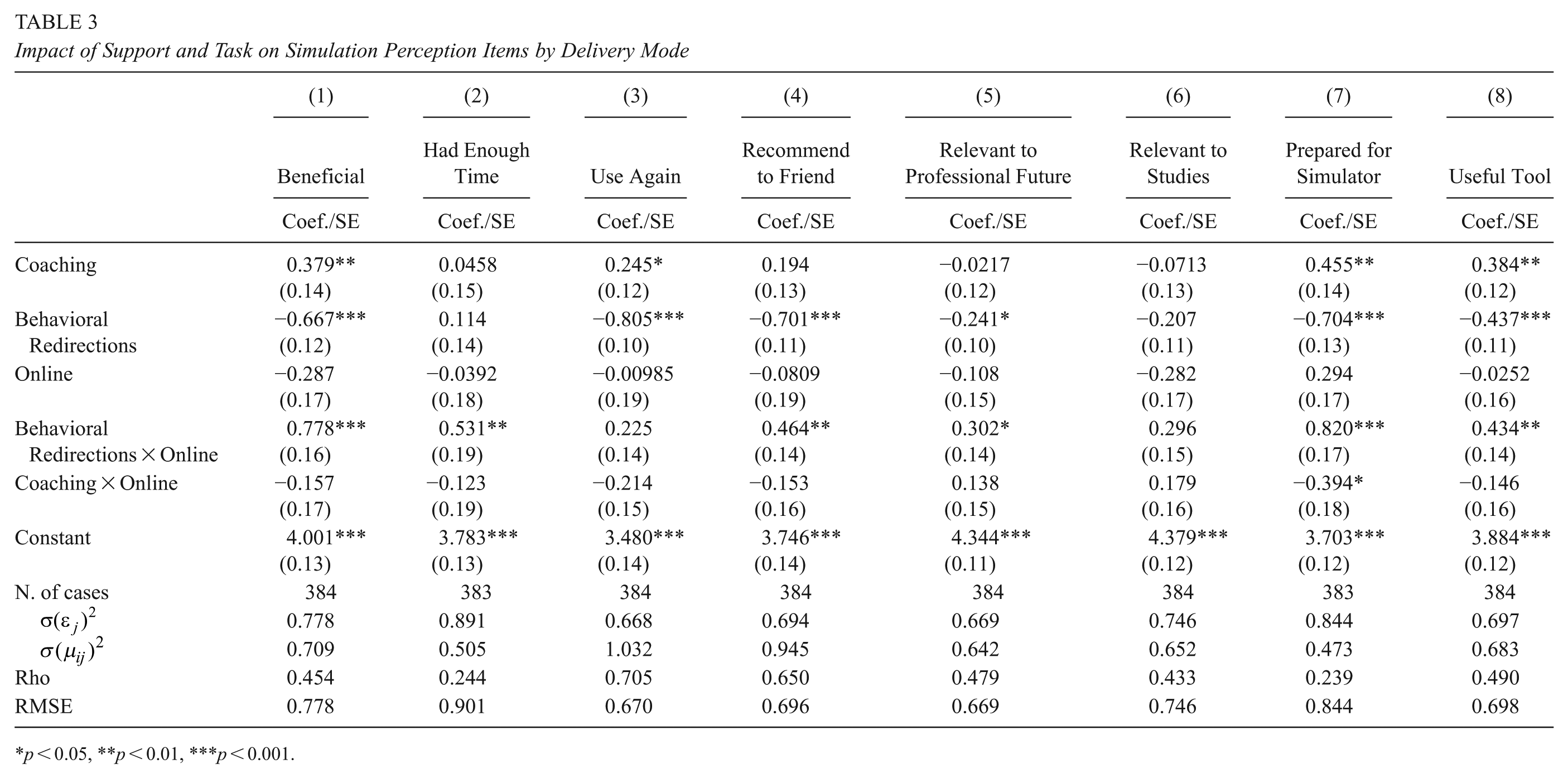

Results from equation (1) for each survey item are presented in Table 3. Results are reported using raw scale scores (1–5), and the baseline group (constant) is candidates who self-reflected following the text-focused instruction task with in-person delivery. The coefficients are interpreted as the average change in experience compared to the reference group. The results indicate considerable heterogeneity in reported experiences across items by task, support, and delivery mode. Differences between participants account for 20% to 71% of observed variance in item responses, indicating candidates seem to experience simulations differently from each other, regardless of the features. This is important to note, as for some items, the shifts in experiences associated with varying simulation features are smaller than the impact of unobserved differences between candidates. For example, 71% of the variance in the “use again” item is attributable to between-candidate differences. This result suggests differences in candidates’ interest in using the simulator again are mostly driven by unobserved candidate characteristics that we are not able to unpack in these data. This finding aligns with our theoretical framework, as it suggests that individual candidates have different responses to opportunities to learn about teaching (Korthagen, 2017). Without sufficiently adjusting for those individual-level differences, we risk making inappropriate inferences about those opportunities. It also reinforces the value of our chosen methodological approach, which accounts for these unobserved candidate differences to identify if and how simulation features influence candidates’ perceptions of simulated learning experiences.
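The share of variance attributed to differences between participants can be computed from the two variance components of a random-intercept model. The sketch below assumes the reported percentages correspond to the intraclass correlation, that is, the variance of the person intercept divided by the sum of that variance and the within-person error variance. The function name and the variance values are hypothetical and serve only to show the arithmetic.

```python
def between_person_share(var_between, var_within):
    """Fraction of total variance attributable to stable differences between people."""
    if var_between < 0 or var_within < 0:
        raise ValueError("variance components must be non-negative")
    total = var_between + var_within
    if total == 0:
        raise ValueError("total variance must be positive")
    return var_between / total


# Hypothetical variance components: person intercept 0.71, within-person error 0.29.
share = between_person_share(0.71, 0.29)
```

A share near 0.71 would mean that most of the spread in an item's responses reflects who the candidate is rather than which task, support, or delivery mode they experienced.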

Table 3. Impact of Support and Task on Simulation Perception Items by Delivery Mode

*p < 0.05, **p < 0.01, ***p < 0.001.

The Influence of Task on Candidates’ Perceptions

Changing simulation features does seem to significantly shift candidates’ responses to many items. In particular, candidates’ responses became more negative when the task changed from text-focused instruction, the reference category, to behavioral redirection. Relative to the text-focused instruction task, the behavioral redirection task was associated with significantly lower ratings on all survey items except those about having enough time and finding the simulation relevant to their studies, which remained stable across both tasks. On a Likert scale of one to five, β1 coefficients range from −0.241 (p < 0.005) for candidates’ perception of the simulation’s relevance to their future profession to −0.805 (p < 0.001) for their willingness to use it again following the behavioral redirection task.

The Influence of Support on Candidates’ Perceptions

Changing the supports also influenced participants’ reported experiences. When participants received coaching, they generally reported better experiences, although not across the board. For example, coached participants found the simulator more beneficial

The Influence of Mode of Delivery on Candidates’ Perceptions

Next, we investigated whether mode of delivery (Zoom versus in person) was related to candidates’ experience and whether delivery is a potential moderator of the impact of task and support on their experience. We found that shifting simulations to online delivery moderated the influence of the different tasks, but it did not seem to moderate the relationship between the simulation’s supports and candidates’ perceptions. In other words, participants who completed simulations with coaching online reported similar experiences to those who received coaching in person. On the other hand, online delivery moderated the influence of task, as suggested by the large and statistically significant positive interaction coefficients (Behavioral Redirections × Online) for six of eight items. Participants tended to report worse experiences during the behavioral redirection task compared to the text-focused instruction task when they completed simulations in person. However, when they completed simulations online, their experience did not seem to change for the behavioral redirection task versus the text-focused instruction task. Online delivery seems to reduce the differences in reported experiences between the two tasks.

Discussion and Implications

Mixed reality simulations are increasingly common platforms for approximations of teaching (Grossman et al., 2009) that are used in scores of teacher preparation programs across the country (AACTE, 2020). Given promising evidence about candidates’ growth following simulated practice, TEPs are taking advantage of this technology to provide candidates with wide-ranging practice opportunities. In simulated environments, novice teachers can practice the many skills required for successful teaching that they are learning about in their coursework and seeing in their clinical placements, making simulations a helpful bridge and complement to practicing teaching in real classrooms (Grossman et al., 2009). Candidates can receive differentiated supports that are adjusted to meet their needs as they progress. Moreover, candidates can complete these opportunities remotely or live in classrooms, a promising affordance as online and asynchronous pathways into teaching proliferate (Yin & Partelow, 2020).

Despite this widespread use, there is a lack of evidence about the particular simulation features that promote positive experiences for those learning to teach. In this study, we add empirical precision to a growing body of research about candidates’ perceptions of MRS across tasks, supports, and delivery formats (e.g., Bondie et al., 2023; Dalinger et al., 2020; Larson et al., 2020). By identifying particular features of simulations that make simulations feel more valuable to candidates during the crucial preservice period, we extend the knowledge base in novel and important ways. In particular, we offer new evidence by systematically testing candidates’ responses to different features of the simulated learning opportunities while more robustly addressing issues of selection that might influence those estimates. With these findings, we hope to support TEPs in structuring practice opportunities that feel valuable to candidates. When these learning opportunities feel valuable, candidates might be more likely to integrate them with their developing ideas about good teaching and, more importantly, take them up down the road in real classrooms (Korthagen, 2017). Below, we describe the implications of our findings for each feature under study.

Tasks

Candidates reported significantly better simulation experiences in the text-focused instruction task compared to the behavioral redirection task. Little work has compared the ways candidates respond to different tasks, although we might logically assume simulations lend themselves to some teaching tasks more than others (Cohen, Wong, et al., 2024b). The tasks’ differing If-Then logics could explain candidates’ varying experiences. In both tasks, avatar responses provide candidates with live “feedback” on their instruction, but the technology facilitates more discernible feedback for some skills than others. During the instructional task, student avatars signaled the quality of candidates’ feedback by offering incorrect or unrevised answers to text-based questions. Unless candidates probed student responses, students echoed the same misconceptions. Candidates likely needed some pedagogical content knowledge to take up this less-obvious avatar feedback, as they would have to recognize the misconception to see avatar responses as problematic and meriting teacher action (Bondie et al., 2023). In the behavioral redirection task, students offered similarly immediate—but arguably more obvious—feedback: until candidates redirected the off-task behavior, the behavior persisted. This meant students might continue drumming, humming, or talking to a neighbor for up to a minute as the candidate tried to instruct.

A student who does not know the right answer likely does not prompt the same sort of disruption or teacher discomfort as one who is off task. Whereas candidates might “move on” after receiving wrong answers during the instructional task, avatar disruptions in the behavioral task made it virtually impossible to continue teaching unless they redirected the behavior. As such, candidates may have developed a stronger awareness of their skill gaps during the behavioral task. This could explain why candidates reported less preparedness and less willingness to recommend or repeat the task. This “reality shock” phenomenon is well-documented in the literature on novice teachers’ growth (Dicke et al., 2015; Evertson & Weinstein, 2006; Tschannen-Moran et al., 1998): recognizing one’s unpreparedness—and feeling negatively about it—is a frequent and disheartening experience for beginning teachers.

We do not suggest that candidates should not complete challenging tasks like the behavioral one in this study. Establishing and maintaining positive learning environments is an important component of successful teaching—and an area where novice teachers often need support (Bartanen et al., 2023; Dicke et al., 2015). Candidates will likely receive the same sort of hard-to-ignore feedback from their real students if they struggle to respond to behavioral disruptions in the classroom. And the stakes are high: although teachers may hone their redirection skills “on the job,” early-career difficulties with classroom management are associated with attrition (Bartanen et al., 2023).

Practicing in simulations could be a way to curb their reality shock in a lower-stakes context. However, considering candidates’ affective responses to simulations is likely an important first step to developing these sorts of understandings (Dalinger et al., 2020; Korthagen, 2017). Notably, candidates ranked the behavioral task, their second opportunity in the simulator, as less relevant to their professional future than the instructional task and expressed less preparation for it. This is concerning given the research-backed importance of classroom management for beginning teacher development (Bartanen et al., 2023). A host of prior research suggests that candidates might become more confident in and receptive to the simulator with exposure (e.g., Dalinger et al., 2020; Larson et al., 2020). Here, we find the opposite: participants felt less prepared and appreciated the opportunity less in their second session. We see this as suggestive evidence that the nature of the task being practiced is a salient consideration when designing and implementing simulations.

Given candidates’ more negative response to the behavioral redirection task, TEPs should consider how to introduce, situate, and support such opportunities. For instance, candidates might find a behaviorally focused simulation more valuable after viewing high-quality models of the task and analyzing those models to highlight key attributes of strong instantiations (Grossman et al., 2009; Kelley-Petersen et al., 2018). This sort of preparation before the simulation might curb disheartening reality shock in the simulator (Cohen et al., in press; Dicke et al., 2015; Evertson & Weinstein, 2006). To improve candidates’ sense of the value and relevance of this task, TEPs might also equip candidates with more knowledge about the realities of classrooms and student behavior, developing explicit links between those realities and the simulation. Such framing might improve candidates’ sense that they need to practice enacting strong behavioral redirections (Korthagen, 2017; Wolf, 1978). We hope candidates who ascribe high value to their simulation experiences may be more likely to utilize the lessons learned in simulations later in their real classrooms, although that is also an important area for future research.

It follows, then, that candidates may have been less able to accurately evaluate their performance in the text-focused instructional task, which featured less obvious avatar cues. If so, they may have evaded the “reality shock” described above. As teachers, we cannot “feel” students’ difficulties comprehending a text in the same way we experience their level of engagement. It is important to consider if candidates’ more positive experiences in the instructional task may belie inaccurate assumptions about readiness (Tschannen-Moran et al., 1998) or signal that the task was not challenging enough (Ericsson & Pool, 2016). As such, future research might explore how candidate perceptions interact with performance. Fortunately, supports and tasks can be easily differentiated and adjusted in simulated environments to meet candidates’ needs. However, more research is needed to determine which supports work best for which tasks at which stages of candidates’ development (Cohen, Wong, et al., 2024b; Cohen et al., 2025).

Supports

Candidates reported significantly better simulation experiences when they received coaching compared to when they self-reflected between rounds. Coaching made simulations feel more beneficial and enhanced candidates’ sense of preparedness, perhaps because it supported their understanding of and skills for the task. The positive impact of coaching on candidates’ experience persisted for both tasks. For some items, the positive effect of coaching made headway in offsetting the negative impact of the behavioral redirection task. Coupled with previous research showing the benefits of directive coaching on candidates’ skill development across multiple tasks (Bondie et al., 2023; Cohen et al., 2020; Cohen, Wong, et al., 2024b), we see this as convincing evidence in favor of pairing simulated practice with directive feedback from coaches or teacher educators. We hypothesize that coaching may be a way to help candidates overcome the “reality shock” of challenging tasks with hard-to-ignore avatar feedback.

The nature of feedback seems like an important lever for improving candidates’ experience. Candidates seem to appreciate explicit feedback from a coach. However, our results suggest that candidates may not value (or even fully realize) more implicit feedback, such as cues from avatars or self-reflection prompts. Coaching, as it is presented here, is more time- and resource-intensive than self-reflection or baking avatar feedback into tasks. However, it may not have to be. For instance, candidates may equally value and benefit from observing MRS in a fishbowl format with group coaching (e.g., Ely et al., 2018; Levin & Flavian, 2020). Relatedly, Demszky et al. (2023) and Littenberg-Tobias et al. (2021) show positive outcomes when using natural language processing to provide teachers with automated feedback on their instruction. Such technological advancements might be layered onto existing simulation platforms to enhance candidates’ perceptions in resource-effective ways. Understanding how candidates respond to a wider array of supports during simulated practice is an important direction for future research.

Mode of Delivery

The option to deliver simulations online or in person in classrooms offers TEPs more flexibility around simulation implementation. However, up to this point, the field has lacked empirical guidance for how to make such decisions. We find that moving simulations online seems to improve candidates’ experience for the behavioral redirection task. In fact, completing the task online seems to cancel out the negative influence of the behavioral task compared to the instructional task. In other words, candidates who completed the behavioral redirection task online reported similar experiences to candidates who completed the instructional task under similar conditions. On the other hand, candidates’ more positive experiences in the instructional task did not seem to change whether the simulation was delivered online or in person.

Our findings about mode of delivery underscore the importance of attending to candidates’ preferences and needs as a means of optimizing TEPs’ time and money investments in simulated learning opportunities (Korthagen, 2017; Wolf, 1978). Prior studies have recommended delivering simulations in person, in either lab settings or real classrooms, because completing simulations in person may support candidates’ suspension of disbelief or create opportunities for peers to learn vicariously through observation (Dalinger et al., 2020; Dieker et al., 2014). However, our findings suggest a complex relationship between mode of delivery and candidates’ perceptions of simulated opportunities. Specifically, mode of delivery seems to matter less to candidates during instructional tasks, whereas, for more demanding tasks, candidates may appreciate the privacy, security, and convenience of completing simulations remotely. TEPs might, accordingly, schedule opportunities to simulate behavioral tasks with attention to candidates’ preferences and desires. In contrast, for instructionally focused tasks candidates perceive as necessitating less vulnerability, TEPs might make decisions about mode of delivery based on learning objectives or economy of time and resources. If candidates can complete some simulations remotely and still find the experience valuable, TEP course time might be used for introducing and decomposing strong models and discussing the theoretical underpinnings of the skill to be simulated (Grossman et al., 2009).

Conclusion

The flexibility of simulations makes them a promising pedagogy of teacher education. However, the current variety in what TEPs are simulating and how researchers are studying these platforms may inhibit their optimal use. We offer more precise empirical evidence relating features of simulated learning opportunities to candidates’ perceptions so that TEPs can design simulations that candidates see as valuable components of their preparation. In our study, participants reported low willingness to repeat simulations or recommend them to others under certain conditions. This is unfortunate given simulations’ cited potential as spaces for growth and development. These participants completed simulations as required curricular components, but we can imagine a future where candidates opt into simulated practice. Adjusting features of simulations to improve candidates’ experiences might help them see simulations as exciting, rigorous spaces where they want to learn and that they would recommend to their peers.

The field of teacher education needs more evidence of the types of preservice experiences that prepare candidates to deliver high-quality teaching from the outset of their careers. Research suggests rapidly advancing simulation technology may offer one such experience. However, intuition, inertia, or preferences should not drive how simulations are implemented in TEPs. Here, we precisely identify simulated experiences that candidates find valuable—and those that feel less so—in service of building an evidence base of tested approaches for TEPs to draw on as they make decisions about program design. We hope this work and other ongoing research help TEPs capitalize on MRS as a rigorous and positive pedagogy of teacher education.

Supplemental Material

Supplemental material, sj-docx-1-ero-10.1177_23328584251375064 for Teacher Candidates’ Perceptions of Mixed Reality Simulations: Variations by Task, Support, and Mode of Delivery by Kristyn Wilson, Julie Cohen and Steffen Erickson in AERA Open

Footnotes

Acknowledgements

We wish to thank the past and present members of the TeachSIM lab at the University of Virginia for their support collecting the data presented here. All errors are those of the authors.

Author Note

The opinions expressed are those of the authors and do not represent views of the Institute or the U.S. Department of Education.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The research reported here was supported by the Institute of Education Sciences, U.S. Department of Education, through Grant #R305B140026 and Grant #R305D190043 to the Rector and Visitors of the University of Virginia, the National Academy of Education/Spencer Foundation post-doctoral fellowship, the Jefferson Trust through Grant #DR02951, and the Bankard Fund through Grant #ER00562.

Authors

KRISTYN WILSON is a postdoctoral researcher at the University of Virginia School of Education and Human Development, 405 Emmet Street, Charlottesville, VA 22903.

JULIE COHEN is the Charles S. Robb Associate Professor of Curriculum and Instruction at the University of Virginia School of Education and Human Development, 405 Emmet Street, Charlottesville, VA 22903.

STEFFEN ERICKSON is an IES pre-doctoral fellow at the University of Virginia School of Education and Human Development, 405 Emmet Street, Charlottesville, VA 22903.
