Abstract

This article presents the first psychometric validation of the Culturally Responsive Assessment of Indigenous Schooling.

The Culturally Responsive Assessment of Indigenous Schooling (CRAIS) tool was developed to support the assessment of culturally responsive principles in schools serving Indigenous students. Collaborative learning, professional development, and research across a diverse group of stakeholders led to the development of the CRAIS tool and to this article’s argument that methodological complexity is necessary for projects seeking to advance cultural responsiveness with and in Indigenous contexts. Specifically, we discuss the results of an exploratory factor analysis of the CRAIS tool alongside the decolonizing methodologies and conversations engaged by the research team. This complexity, while not easily captured in written form, ultimately enriches our learning, strengthens the tool we have developed, and offers a path forward in our ongoing work with teachers and other educators across Indian Country.

The exploratory factor analysis of the CRAIS tool presented in this article builds on prior work through the Diné Institute for Navajo Nation Educators (DINÉ). Additional details about DINÉ appear below, but it is important to note at the outset that DINÉ is a partnership with the Diné Nation focused on professional development to strengthen teaching through a culturally responsive approach (Castagno et al., 2022). The original introduction of the CRAIS tool provides the historical context and rationale for its development (Castagno et al., 2022). The tool is designed to address a long-standing gap in curriculum planning, which has often lacked culturally responsive components and perpetuated traditional paradigms of Western pedagogy, leaving out the stories, ways of knowing, and knowledge systems of Indigenous communities. The CRAIS tool does not provide guidance on the appropriate integration of Western academic curricular standards; rather, it focuses on core principles that are crucial for making schooling culturally responsive for Indigenous students. The tool offers teachers and administrators a framework for planning, designing, and implementing curriculum in schools and communities serving Indigenous students (Castagno et al., 2022).

Members of the research team initially used the CRAIS tool to assess the integration of culturally responsive schooling principles within curriculum units written by K–12 teachers in the DINÉ program. This original team included two Indigenous scholars, a White scholar, and an Indian American scholar. After collating the numeric findings from the CRAIS tool, the team retained three of the four original members and invited two additional members, who contributed to this article. Because we claim to apply decolonizing methods, we provide brief positionality statements for each member to emphasize the experiences, colearning, and relationality that shaped the development of this article. The two co–first authors are both Indigenous and from federally recognized tribes located in the Southwest. Darold H. Joseph is Hopi and has over 25 years of experience in educational settings, including PK–12 Indigenous-serving schools, social service settings for adolescents and adults with developmental disabilities, and as an assistant professor of special education at a predominantly White and Hispanic-serving institution. He draws on his worldview and experiences as a Hopi educator to use qualitative research, including Indigenous research methodologies, to broaden perspectives on the intersection of sociocultural differences for American Indian and Alaska Native (AI/AN) youth with and without disabilities. His work aims to advance opportunities for Indigenous youth to persist in education, health, wellness, and cultural well-being. Chesleigh A. Keene is Diné/Navajo and an interdisciplinary population health researcher who has collaborated with educators for 10 years on work that has improved learning environments and outcomes for children. She was trained as a counseling psychologist, with attention to how the environments where clients spend the most time can affect their wellness.
She now practices as a community-involved, collaborative researcher. In this role, she has spent considerable time in classrooms considering the resources available to support psychological recommendations for students diagnosed with psychological disorders. Her clinical work and collaborative research focus on using cultural models and predictors of wellness to improve educational and health outcomes. Angelina E. Castagno is a White woman who has lived and worked in the southwestern United States for almost two decades. She has engaged in qualitative research and educational partnerships with and in Indigenous communities for roughly 25 years, including with the DINÉ for over 6 years. As the director of the Institute for Native-serving Educators (INE), which includes the DINÉ, she practices collaborative leadership with PK–12 teacher leaders and often copresents and coauthors with Indigenous educators, scholars, and community leaders. Pradeep M. Dass is a male native of India who has lived and worked in the southwestern United States for 10 years. He has conducted qualitative research for the past 28 years, beginning in his doctoral program. He has worked with DINÉ teachers for the past 6 years in an in-service teacher professional development project. As the co–principal investigator of the National Science Foundation (NSF)–funded DINÉ project, he engages with teacher leaders and participating teachers in the DINÉ project, most of whom are Native American or teach in Native American-serving schools. Crystal Macias is a Hispanic woman and a graduate assistant with the INE who is pursuing an EdS degree in school psychology. Before beginning her master's program, she was an elementary and middle school teacher for 8 years. During her teaching career, she focused on implementing culturally responsive practices in math and science curriculum for preschool through eighth grade.
Through the INE program and her work just outside the Navajo Nation, she supports and empowers Indigenous-serving educators to take a both/and approach that integrates culturally responsive practices and pedagogies while adhering to state standards.

Our team is composed of a diverse group of experts who participated in the development of the CRAIS and includes people who identify as Indigenous and non-Indigenous; men and women; faculty at various ranks and graduate students; and those with teaching, counseling, and leadership experience in schools. As such, it was important for our team to understand how our professional and lived experiences shaped our conceptions of diversity and our critical perspectives, so that we could engage intentionally in this work.

This article describes our initial psychometric validation of the CRAIS tool, alongside our insights about the need for methodological complexity when engaged in research with and in Indigenous contexts.

Background and Literature

The robust body of literature on culturally responsive pedagogy and praxis consistently identifies community contexts, value systems, knowledge systems, and learning ecosystems as essential considerations in the effective schooling of culturally and linguistically diverse students (e.g., Abrams et al., 2014; Barnhardt & Kawagley, 2005; Cajete, 2015). This is true for students from Indigenous Nations in K–12 settings, just as it is for any student in rural, suburban, and urban settings across the United States. In addition, the schooling of Indigenous students must take into account the sociopolitical context (i.e., treaties and federal Indian law) and the relationships between federally recognized, state-only recognized, and nonrecognized Tribes and the U.S. federal government. For example, the Indian Self-Determination and Education Assistance Act of 1975 funds, in theory, educational initiatives that include training teachers to work with Native American students, meeting the special needs of students, providing academic and vocational instruction, and ensuring accountability for bilingual instruction (Strommer & Osborn, 2014; Whiteman, 1986). In practice, however, many educational programs do not receive full funding from the federal government, and some programs are categorized under discretionary funding streams. Although this and other legislation exist to redress centuries of harm imposed on Native Nations by federal schooling, disparities persist between Indigenous students and their peers in disability identification, graduation rates, discipline referrals, and mental health, all of which affect enrollment in and completion of K–12 and postsecondary education.
Notwithstanding these realities within our Indigenous communities, it is necessary to adopt asset-based approaches that place the strengths of Indigenous communities, and their interrelated and interconnected relationships to history, language, ceremony, and place (Holm et al., 2003), within educational practice. As an act of decolonization, the deficit language that traditional Western education has directed toward Indigenous communities must be countered and replaced with language that affirms, validates, and celebrates the diversity and knowledge systems of Indigenous communities (Craig & Craig, 2022). Culturally responsive schooling promises a different kind of educational experience, one that honors, centers, and leverages the cultural knowledge, prior experiences, frames of reference, and performance styles of diverse students to make schooling more relevant and effective. School-based material is more relevant and meaningful when students’ “home” communities, stories, and histories are valued and leveraged as tools for students to make connections between home and school contexts, knowledges, and expectations (Joseph & Windchief, 2015). While much has been studied and written about culturally responsive approaches, tools for educators and researchers to assess the use of these approaches in schools serving Indigenous students are limited. One reason for this gap is the unique languages, knowledges, and heritages of tribal communities across the United States (Kūkea Shultz & Englert, 2021). Existing assessments are typically designed for students to demonstrate their knowledge of a particular content area, but concerns emerge about how well they address the cultural contexts, priorities, and practices of students from Indigenous communities (Ball, 2021; Kūkea Shultz & Englert, 2021; Trumbull & Nelson-Barber, 2019).
In particular, some assessments have been deemed culturally responsive merely because the language of the instrument was translated from English into the heritage language, without any examination of the content to ensure it reflects the cultural contexts of Indigenous students (Kūkea Shultz & Englert, 2021). Assessment development must also address cultural validity for the communities a tool is intended to serve (Kane, 2006, 2012).

Like culturally responsive schooling, good research partnerships with and in Indigenous communities include decolonizing research methodologies that account for both Indigenous and Western methods, theory, and results. Decolonizing research centers Indigenous voices and epistemologies while respecting Indigenous values and following Indigenous protocols (Brayboy et al., 2012; Smith, 2012). In decolonizing research, Western methods and theories should be integrated only if and when they serve the local community, and they may need to be adapted to fit Native American contexts. Historically, what has passed for Indigenous research has been data collection and analysis conducted and represented by Western researchers; such work claims the label of Indigenous scholarship via Indigenous methodologies but essentially perpetuates Western methodologies (Smith, 2012). This practice has generally assumed the position of external analysis or diagnosis with minimal or no input of local, culturally specific knowledge, and it has solely benefited individuals rather than communities as a whole. Researchers whose values and goals diverge from those of the Indigenous communities they work with have not gone unnoticed. For example, in 2010, the Havasupai Tribe reached a settlement with an institution of higher education in Arizona over the absence of informed consent and misrepresentation (Drabiak-Syed, 2010). Historical examples like this make it necessary to frame decolonizing approaches to research in ways that accentuate an Indigenous research agenda emphasizing self-determination (Smith, 2012) and, relatedly, sovereignty. As a starting point, recognizing the epistemological pluralism (Windchief & Cummins, 2022) that exists among Tribal nations and Indigenous communities globally offers a lens for understanding that there are many ways of knowing and thus guards against epistemological overgeneralization.

The CRAIS tool was developed and informed by its originators’ knowledge of both culturally responsive schooling principles and decolonizing research methodologies (Castagno et al., 2022). As part of a professional development partnership between Northern Arizona University and the Navajo Nation, CRAIS was developed as a tool for teachers, school leaders, and researchers to assess the integration of culturally responsive principles in schools, classrooms, and curriculum serving Indigenous students. The formative development of the CRAIS tool drew on the experiences and expertise of faculty and teachers serving Indigenous students, which is critically important within a decolonizing methodological framework intended to disrupt and counter essentializing narratives. After developing the first iteration of the CRAIS tool, the research team applied quantitative methods to further investigate its validity and reliability. Our intention in employing quantitative methods was not to determine whether the 23 principles were meaningful constructs according to scientific assessment, but rather to learn how the principles might organize themselves. The principles necessarily situate concepts of culture, knowledge, history, and power within educational contexts for Indigenous students, and they are widely represented in the literature as significant and valid (Castagno & Brayboy, 2008; Grande, 2000; McCarty & Brayboy, 2021; McCarty & Lee, 2014). Therefore, we applied statistical methods to the CRAIS tool to gain insight into its psychometric properties (as a step toward validating the measure) and its construct validity (whether scores on the CRAIS align with the original theoretical intent).

Implementing qualitative methods followed by quantitative methods to confirm the culturally responsive principles in the CRAIS tool was an intentional process. Quantitative methods often reduce data to measurable units, which can lead to misinterpreted or oversimplified findings and a limited understanding of the observed experience. This is particularly likely with dimensions of culture (Spoon, 2014). Our team used a connected process that engaged both qualitative and quantitative methods, which rigorously and collectively pushed us to examine validity beyond the traditional scope of the methods selected. Just as researchers have intentionally honored the role of relationality and responsibility in their use of Indigenous research methodologies (Lopez, 2021; Walter & Suina, 2019), we too incorporated a process to carefully consider our arguments for the validity of the CRAIS tool. We sought to use our combined methodologies to answer the call for educational research to be more dynamic in asking and answering research questions:

There is very little literature that addresses how to get Western scientists and educators to understand Native worldviews. We have to come at these issues on a two-way street, rather than view the problem as a one-way challenge to get Native people to buy into the Western system. (Kawagley & Barnhardt, 1999, p. 117)

By engaging a qualitative lens first, we were able to approach our results with both knowledge and humility as we applied quantitative methods to the work.

The next sections present the methods and discussion regarding validating the CRAIS tool. We conclude by advancing the need for methodological complexity when engaged in projects with and in Indigenous communities.

Methods

Measure

The CRAIS is a tool that was developed to “assess the degree to which culturally responsive principles are or are not present in schools serving Indigenous youth” (Castagno et al., 2022, p. 138). The CRAIS was modeled after five established tools used for curricular, classroom, and school-wide observations (Bryan-Gooden et al., 2019; Martinez et al., 2012; Piburn et al., 2000; Powell et al., 2017; Skelton et al., 2017). These instruments have advanced our understanding of culturally responsive classrooms. We sought to build on existing resources to develop an assessment specifically for evaluating culturally responsive principles in schools that serve Indigenous students. We then sought psychometric validation to determine the construct validity of our newly developed tool.

A diverse group of experts participated in the development of the CRAIS; this team includes people who identify as Indigenous and non-Indigenous; men and women; faculty at various ranks and graduate students; and those with teaching, counseling, and leadership experience in diverse schools.

The CRAIS is a 23-item assessment with questions about five areas: (a) relationality, relationships, and communities; (b) Indigenous knowledge systems and language; (c) sociopolitical context and concepts, and specifically sovereignty, self-determination, and nationhood; (d) representation of Indigenous peoples; and (e) critical understandings of diversity and specifically race (Castagno et al., 2022). These five areas reflect underlying principles identified qualitatively across a robust body of published research on Indigenous education broadly. The development of the CRAIS relied on the expertise and insights of teachers and faculty who had experience working with Indigenous students. Examples of items include “Indigenous people are represented as contemporary (not only historical)” and “Traditional and/or cultural knowledge is included.” Items are scored on a 7-point Likert-type scale ranging from −3 (indicating high contradiction with culturally responsive principles) to +3 (indicating high alignment with culturally responsive principles). A score of 0 indicates a complete absence of culturally responsive principles, and a rating of “not applicable” is used if the document, context, or process being assessed could not reasonably include the particular rubric item.
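The scale treats 0 and “not applicable” differently: 0 is a substantive score meaning the principles are absent, whereas “not applicable” means the item could not reasonably apply at all. When ratings are entered for analysis, conflating the two would pull any summary toward zero. The Python sketch below shows one way to encode the scale while keeping that distinction; the function names, the accepted N/A spellings, and the averaging step are hypothetical illustrations, not a CRAIS scoring procedure described in this article.

```python
from typing import Optional, Sequence, Union

# CRAIS items are rated from -3 (high contradiction with culturally responsive
# principles) to +3 (high alignment); 0 means the principles are absent.
VALID_SCORES = range(-3, 4)

# Hypothetical spellings accepted for "not applicable".
NA_LABELS = {"N/A", "NA", "NOT APPLICABLE"}

Rating = Union[int, str]


def parse_score(raw: Rating) -> Optional[int]:
    """Return the integer score, or None when the item is not applicable."""
    if isinstance(raw, str) and raw.strip().upper() in NA_LABELS:
        return None  # N/A is missing information, not a score of 0
    score = int(raw)
    if score not in VALID_SCORES:
        raise ValueError(f"score {score} is outside the -3..+3 range")
    return score


def mean_applicable(ratings: Sequence[Rating]) -> Optional[float]:
    """Hypothetical summary: average only the items that were actually scored."""
    scores = [s for s in map(parse_score, ratings) if s is not None]
    if not scores:
        return None
    return sum(scores) / len(scores)


# One unit rated on five items, one of which is not applicable.
print(mean_applicable([3, 2, "N/A", 0, -1]))  # N/A is excluded, not counted as 0
```

Here the unit averages 1.0 over its four applicable items; had “not applicable” been coded as 0, the same unit would average 0.8 and appear less aligned than it is.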

This is the first study to offer psychometric validation of the CRAIS. We want to be clear that psychometric validation serves a particular purpose and is useful in certain contexts, but we do not position it as primary or superior to the qualitative, experience-based process that informed the development of the CRAIS and that continues to provide validation, trustworthiness, and accountability for the CRAIS principles. Those methods captured the relational endeavors of the INE in meeting the educational needs of the Navajo Nation, and the psychometric validation offers an additional angle of interpretation.

Data Analysis

As a first step, we examined the dimensions underlying the newly created tool using exploratory approaches in a sample of curriculum units produced by teachers in the 2019 DINÉ program (Sample 1; N = 19). When the research team completes the CRAIS assessment of the 2020 and 2021 curriculum units, we will use confirmatory approaches on those independent samples to cross-validate the model proposed in this first sample.

An exploratory factor analysis using principal axis factoring (PAF) was conducted on the Sample 1 data at the item level. This sample of 19 curriculum units came from a professional development institute called the DINÉ. Because this professional development program was a partnership with the Navajo Nation, teachers were required to be employed at a school (i.e., public, tribally controlled, Bureau of Indian Education [BIE], or private) located on the Navajo Nation. Participating teachers were those who responded to an open call and invitation to apply for DINÉ professional development seminars. Each unit was written by a single K–12 teacher who participated in the 8-month-long DINÉ in 2019. As described elsewhere (Castagno et al., 2022), the DINÉ offers a cohort-based professional development experience that focuses on increasing teachers’ content knowledge, ability to develop culturally responsive curriculum, and leadership capacity. All of the teachers were full-time, certified teachers in schools on or bordering the Navajo Nation, and in 2019 they came from 16 distinct schools. Of these teachers, 18 were women, and 17 identified as Diné/Navajo.
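Principal axis factoring differs from principal components analysis in that it models only the variance the items share: the 1s on the diagonal of the correlation matrix are replaced with communality estimates, which are refined iteratively. The article does not report the software or convergence settings used, so the Python sketch below (NumPy) is a generic textbook version of iterated PAF, not the authors’ implementation. It assumes an invertible correlation matrix, starts from squared multiple correlations as initial communalities, and omits the Promax rotation; the function name and default settings are hypothetical.

```python
import numpy as np


def principal_axis_factoring(R, n_factors, max_iter=200, tol=1e-6):
    """Iterated principal axis factoring (PAF) of a correlation matrix R.

    Returns unrotated loadings (items x factors) and final communalities.
    """
    R = np.asarray(R, dtype=float)
    # Initial communalities: squared multiple correlations, 1 - 1 / diag(R^-1).
    h2 = 1.0 - 1.0 / np.diag(np.linalg.inv(R))
    for _ in range(max_iter):
        # Reduced correlation matrix: communalities replace the 1s on the diagonal.
        reduced = R.copy()
        np.fill_diagonal(reduced, h2)
        eigvals, eigvecs = np.linalg.eigh(reduced)
        top = np.argsort(eigvals)[::-1][:n_factors]  # largest eigenvalues first
        loadings = eigvecs[:, top] * np.sqrt(np.clip(eigvals[top], 0.0, None))
        new_h2 = np.sum(loadings**2, axis=1)  # communality = row sum of squared loadings
        converged = np.max(np.abs(new_h2 - h2)) < tol
        h2 = new_h2
        if converged:
            break
    return loadings, h2


# Example: a correlation matrix generated exactly by one factor.
true_loadings = np.array([0.8, 0.7, 0.6, 0.5])
R = np.outer(true_loadings, true_loadings)
np.fill_diagonal(R, 1.0)
loadings, h2 = principal_axis_factoring(R, n_factors=1)
print(np.round(np.abs(loadings[:, 0]), 3))  # approximately the generating loadings
```

The absolute value is taken because the sign of an eigenvector, and therefore of a factor, is arbitrary.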

We used a combination of empirical and substantive criteria, in line with best practices outlined by Watkins (2018), to determine the dimensions underlying the CRAIS items. In this study, exploratory factor analysis was used to determine the number of factors influencing responses to the CRAIS tool. First, we reviewed scree plots and the percentage of variance accounted for (VAF), which provided preliminary insight into the potential number of underlying factors. Next, we examined the Kaiser-Meyer-Olkin (KMO) statistic and Bartlett’s Test of Sphericity to determine whether the CRAIS data were suitable for factor extraction. The KMO statistic measures sampling adequacy and ranges from 0.00 to 1.00, with .50 as the minimum acceptable value, while Bartlett’s Test of Sphericity tests whether the correlation matrix differs significantly from an identity matrix, that is, whether the items are intercorrelated at all (Watkins, 2018). In our study, KMO = .680, and Bartlett’s Test of Sphericity was significant (χ² = 914.333, p < .001). These results indicated that the data were suitable for factor analysis. To robustly evaluate the factor structure of the initial 23 items, we used several criteria (Pituch & Stevens, 2016): eigenvalues greater than one, inspection of the eigenvalue scree plot, and eigenvalues larger than expected by chance in a parallel analysis comparing the actual data with simulated data (Patil et al., 2008, 2017).
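Both suitability checks can be computed directly from the item correlation matrix R. For p items and n rated units, Bartlett’s statistic is χ² = -[(n - 1) - (2p + 5)/6] ln|R| with p(p - 1)/2 degrees of freedom, and the overall KMO is the sum of squared off-diagonal correlations divided by that same sum plus the sum of squared off-diagonal partial correlations. The article does not say which software produced its values, so the Python sketch below (NumPy and SciPy) is an illustrative implementation of these standard formulas, not the authors’ code, and the function names are hypothetical. Both statistics require an invertible correlation matrix, which in turn requires more rated units than items; the sketch raises an error otherwise.

```python
import numpy as np
from scipy import stats


def _correlation(data):
    """Item correlation matrix; rejects singular matrices (e.g., fewer units than items)."""
    R = np.corrcoef(np.asarray(data, dtype=float), rowvar=False)
    if np.linalg.matrix_rank(R) < R.shape[0]:
        raise ValueError("correlation matrix is singular; KMO and Bartlett's test are undefined")
    return R


def bartlett_sphericity(data):
    """Bartlett's test of H0: the population correlation matrix is an identity matrix."""
    n, p = np.shape(data)
    R = _correlation(data)
    _, logdet = np.linalg.slogdet(R)  # ln|R|, computed stably
    chi2 = -((n - 1) - (2 * p + 5) / 6) * logdet
    df = p * (p - 1) // 2
    return chi2, df, stats.chi2.sf(chi2, df)


def kmo(data):
    """Overall Kaiser-Meyer-Olkin measure of sampling adequacy."""
    R = _correlation(data)
    R_inv = np.linalg.inv(R)
    scale = np.sqrt(np.diag(R_inv))
    partial = -R_inv / np.outer(scale, scale)  # partial correlations
    off_diag = ~np.eye(R.shape[0], dtype=bool)
    r2 = np.sum(R[off_diag] ** 2)
    q2 = np.sum(partial[off_diag] ** 2)
    return r2 / (r2 + q2)


# Example: 200 simulated units rating 6 items that share one common factor.
rng = np.random.default_rng(0)
common = rng.normal(size=(200, 1))
items = common + 0.5 * rng.normal(size=(200, 6))
chi2, df, p_value = bartlett_sphericity(items)
print(f"Bartlett chi2 = {chi2:.1f}, df = {df}, p = {p_value:.3g}; KMO = {kmo(items):.3f}")
```

The simulated items are strongly intercorrelated, so Bartlett’s test rejects the identity hypothesis and the KMO lands well above the .50 minimum.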

Results

The scree plot and percentage of VAF suggested that two to three factors were present in the data. The first four eigenvalues of the unrotated factors were (with percentage of VAF in parentheses): 10.401 (45.22), 2.823 (12.27), 2.212 (9.616), and 1.496 (6.506). The results of a parallel analysis (Glorfeld, 1995; Horn, 1965) were used to decide how many factors to extract. Parallel analysis is one of the most accurate methods for selecting the number of factors to retain in a factor analysis (e.g., Hayton et al., 2004). We generated 500 random permutations of the data and compared the 95th percentile of the eigenvalues computed from these random data with the eigenvalues obtained from the actual data. Only the first two eigenvalues from the actual data exceeded the corresponding eigenvalues from the simulated data. Thus, we extracted a two-factor solution using PAF and applied an oblique (Promax) rotation. The resulting two eigenvalues were (with %VAF in parentheses): 10.401 (45.222) and 2.823 (12.272). The two factors correlated at .390. The scree plot (Figure 1) supports a two-factor solution, consistent with the number of factors identified by the eigenvalue-based methods (Table 1).
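The retention rule described above can be sketched in Python with NumPy. Two details are assumptions, because the article does not report them: the sketch uses eigenvalues of the full (unreduced) correlation matrix, as in Horn’s original procedure (variants based on the reduced PAF matrix also exist), and it creates each random data set by shuffling every item column independently, which preserves each item’s score distribution while breaking the correlations between items. The threshold is the 95th percentile of the random eigenvalues, following Glorfeld (1995). The sketch also shows that %VAF is each eigenvalue divided by the number of items, so 10.401 / 23 corresponds to about 45.22%. All function names are hypothetical.

```python
import numpy as np


def sorted_eigenvalues(data):
    """Eigenvalues of the item correlation matrix, largest first."""
    return np.linalg.eigvalsh(np.corrcoef(data, rowvar=False))[::-1]


def parallel_analysis(data, n_perm=500, percentile=95, seed=0):
    """Horn's parallel analysis with a percentile threshold (Glorfeld, 1995).

    Returns the observed eigenvalues, the percentile thresholds from permuted
    data, and the number of leading factors to retain.
    """
    data = np.asarray(data, dtype=float)
    rng = np.random.default_rng(seed)
    n_items = data.shape[1]
    observed = sorted_eigenvalues(data)
    random_eigs = np.empty((n_perm, n_items))
    for i in range(n_perm):
        # Shuffle each item independently: same distributions, no shared structure.
        shuffled = np.column_stack([rng.permutation(col) for col in data.T])
        random_eigs[i] = sorted_eigenvalues(shuffled)
    threshold = np.percentile(random_eigs, percentile, axis=0)
    exceeds = observed > threshold
    # Retain factors up to the first observed eigenvalue that fails to exceed chance.
    n_retain = n_items if exceeds.all() else int(np.argmin(exceeds))
    return observed, threshold, n_retain


def percent_vaf(eigenvalues, n_items):
    """Percentage of total variance accounted for by each eigenvalue."""
    return 100 * np.asarray(eigenvalues, dtype=float) / n_items


# Reported first two eigenvalues for the 23 CRAIS items.
print(np.round(percent_vaf([10.401, 2.823], 23), 2))  # about 45.22 and 12.27
```

Calling `parallel_analysis(data)` on a units-by-items score matrix returns the count of factors whose observed eigenvalues beat chance; small differences from published %VAF values in the third decimal reflect rounding of the reported eigenvalues.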

Figure 1. Scree plot of the factor analysis demonstrating the two-factor solution.

Table 1. Parallel Analysis of the Eigenvalues: Actual and Simulated Data Comparison

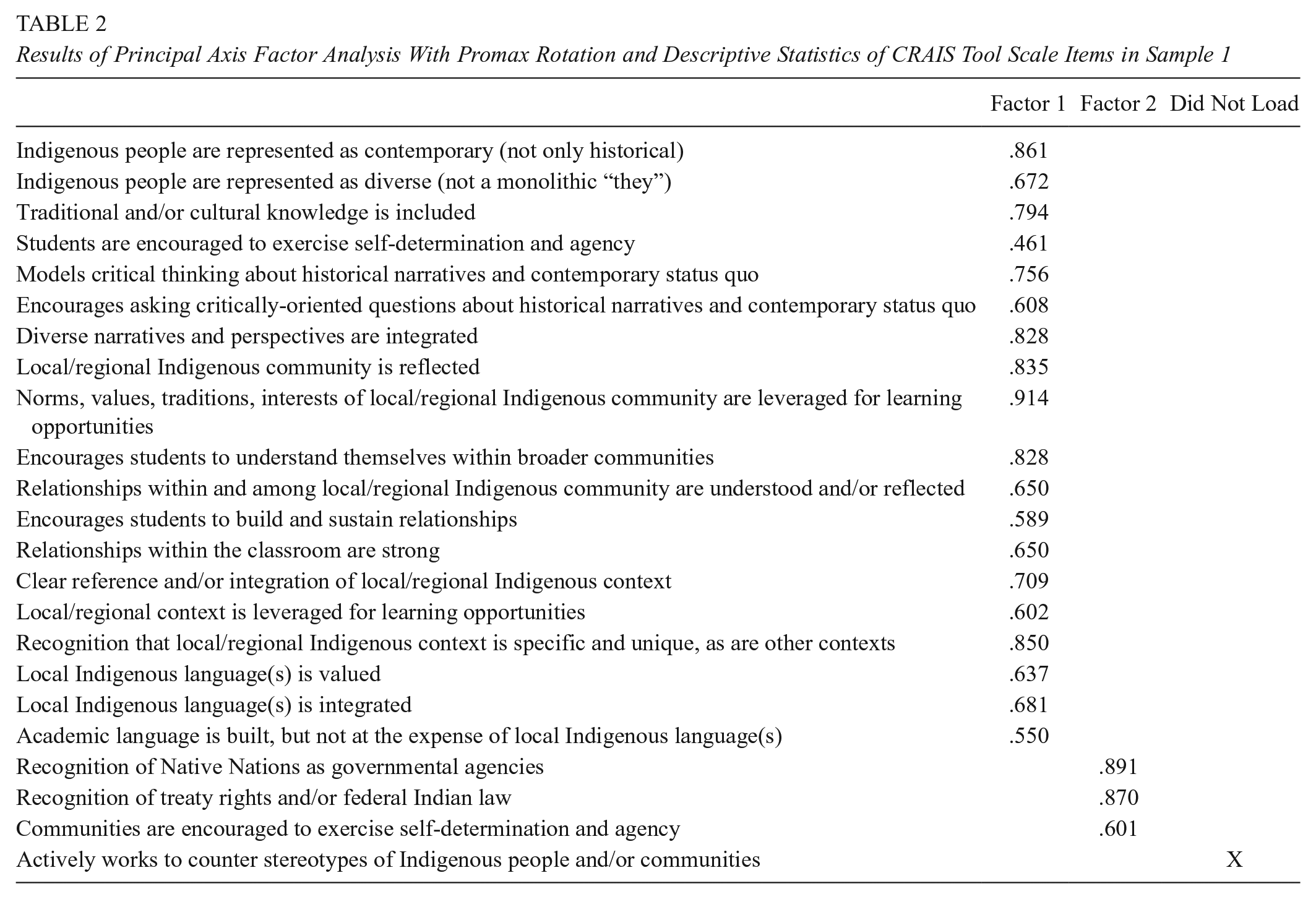

The team made initial interpretations of the two factors based on inspection of the structure matrix. Initial labels for these factors were (a) Indigenous peoplehood and (b) organizational cultural knowledge. After team meetings centered on the core elements of the curriculum design and the populations of students and educators, we reached consensus on the final subscale labels: (a) Indigenous peoples and cultures and (b) Indigenous sociopolitical concepts. Table 2 shows the pattern coefficient matrix, descriptive statistics, and communality estimates of the 23 CRAIS items. One item did not load: “Actively works to counter stereotypes of Indigenous people and/or communities.” As a team, we discussed our original intention in including this item, the importance of the construct behind it, and the complexity of determining the qualitative validity of this statement. Our discussions centered on the value of teachers countering stereotypes of Indigenous people and/or communities in their curriculum. Each of us therefore submitted a revised phrasing of this item to best capture how teachers might do this across units. The research team then reached consensus to retain the construct but revise the language slightly. The item was revised to “Stereotypes of Indigenous peoples and/or communities are addressed.” In a future study, we will cross-validate the two-dimensional structure of the CRAIS tool with confirmatory factor analysis (CFA) using data from another sample and will include the revised version of the item that did not load.

Table 2. Results of Principal Axis Factor Analysis With Promax Rotation and Descriptive Statistics of CRAIS Tool Scale Items in Sample 1

Discussion

The results from the exploratory factor analysis of the 23 items in the CRAIS tool raised important considerations about the role of a mixed methods approach in addressing the validity and reliability of the tool. First, the exploratory analysis yielded two correlated dimensions encompassing all but 1 of the 23 principles. Second, the 22 items that loaded provide initial evidence that users can apply the tool with confidence. Finally, we had to navigate the middle ground between quantitative and qualitative methods to determine what to do with the item that did not load.

Equally important are the team’s discussions about the two factors and the one outlier item that did not fit the factor solution, “Actively works to counter stereotypes,” and about how to interpret this finding through quantitative and qualitative lenses. Quantitatively, examining the dimensionality of the CRAIS in this first sample is only one step in establishing the construct validity of the measure. The psychometric validation of the CRAIS advances the assessment of culturally responsive schools serving Indigenous students by identifying the dimensions that underlie the broader construct of Indigenous schooling. The findings from this study can inform the development of future assessments of Indigenous-serving schools, or of any school that enrolls students from federally recognized tribes in the United States. While the CRAIS focuses on cultural responsiveness, our results suggest that the content domains of (a) Indigenous peoples and cultures and (b) Indigenous sociopolitical concepts are important to consider when assessing cultural responsiveness. Before this exploration of the CRAIS dimensions, empirically supported assessments of teachers’ pedagogical practices for promoting culturally responsive classrooms serving Indigenous students were limited. We hope this work will prompt additional research on the CRAIS, which will further our understanding of culturally responsive practices in Indigenous schools.

In their initial presentation of the CRAIS, the authors reported that the scores on all 23 items were above zero, indicating that the units aligned positively with each principle in the CRAIS tool. They also noted that the 23 items were qualitatively organized into five categories: (a) relationality, relationships, and communities; (b) Indigenous knowledge systems and language; (c) sociopolitical context and concepts, and specifically sovereignty, self-determination, and nationhood; (d) representation of Indigenous peoples; and (e) critical understandings of diversity, and specifically race (Castagno et al., 2022).

These findings are significant because they raised important considerations for how the team would engage with questions of reliability and validity in relation to the purpose of the CRAIS tool and the communities with whom it is intended to be used. Specifically, we asked ourselves, How much do we rely on quantitative methods to validate elements of culturally responsive schooling for Indigenous students? And how do we reframe the use of methodological pathways (qualitative and quantitative) to demonstrate that we are not privileging one over the other? To address these questions, we considered the first factor (Indigenous peoples and cultures) and found that, of the 23 culturally responsive schooling (CRS) principles, 19 aligned with this factor, strongly supporting the relevance of representing Indigenous lifeways, or the multiple contexts and lived experiences interdependent and interconnected with language, history, ceremony, and land (Holm et al., 2003). Three CRS principles loaded well on the second factor (Indigenous sociopolitical concepts), supporting curricular elements that connect students with the macro dimensions of Tribal Nations as sovereign nations, center sociopolitical concepts such as tribal sovereignty, self-determination, and treaties, and thereby support theoretical constructs such as Nation building as part of curricular planning. Our use of factor analysis was intentional, and the results showed that an overwhelming majority of the 23 CRS principles aligned with the two factors.

Of equal value, and critically important for our team, was discussing the following questions: What should we do with the one outlier? Do we drop it and reduce the CRAIS tool to 22 CRS principles? Or do we rephrase the language so that it loads onto a factor in a CFA? Our discussions returned our team to the intended use of this tool, which is to assess the degree to which culturally responsive principles are or are not present in schools serving Indigenous youth (Castagno et al., 2022). Given the extant literature on culturally responsive schooling (Barnhardt & Kawagley, 2005; Castagno & Brayboy, 2008; McCarty & Brayboy, 2021), it was important for our team to evaluate the semantics of this item and determine its relevance to the purpose of the CRAIS tool. Additionally, we revisited conversations about decolonizing research methodologies and drew on Tuck and Yang’s (2014) concept of “refusal” to justify retaining the item rather than excluding it because the quantitative analysis invalidated it. Ultimately, the team came to consensus on a revised wording of the item: “Stereotypes about Indigenous peoples and/or communities are addressed.” Qualitative and quantitative arguments can be made for and against keeping this item. From a quantitative lens, it makes the most sense to delete this item from the list of 23 principles, since it did not load in the factor analysis. However, the statement “Actively works to counter stereotypes of Indigenous people and/or communities” intentionally grounds the historical contexts and experiences of Indigenous populations, rooted in colonization and assimilation, to actively address misrepresentation as a form of agency (Tuck & Yang, 2014). From a qualitative perspective, the team deemed it important to recognize the value of this statement for youth and educators as it relates to culturally sustaining and responsive schooling.
The team engaged decolonizing methodological approaches (Brayboy et al., 2012; Grande, 2000; Paris & Alim, 2017; Smith, 2012; Tuck & Yang, 2014) by reaching consensus to keep the principle in the CRAIS tool, as it is concerned with the lived realities of Indigenous students. For example, it was important to redress the history of stereotypes toward Indigenous communities and the ways they continue to exist in curriculum and instruction. Removing this item would create a strong potential for educators to perpetuate such stereotypes. Further conversations among our collaborators continued to bring us back to the intended use of the tool, which is, in part, to facilitate action among educators to engage their students and collectively contribute to tribal nation building and to the principles of sovereignty and self-determination. Keeping this item would, in theory, prompt educators to avoid pathologizing students through misinformed and unwarranted stereotypes predicated on hegemonic structures. Including this principle will engage an “inward gaze” among educators that situates the funds of knowledge and cultures of our local communities and informs asset-based pedagogies (Alim & Paris, 2017; González et al., 2006).

Toward Methodological Complexity When Assessing Culturally Responsive Schooling in Indigenous Communities

While the structural validity of the CRAIS was supported, these results must be considered in light of the limitations of this study and the recognition that future research may be conducted with different Indigenous groups, in different regions, and by different researchers. Thus, if our research is used to model future studies, we hope researchers will consider the many factors that yielded our results and thoughtfully engage with their participant communities or nations. Such work will have implications for teacher training and professional development, curricular design and implementation for students, and policies and practices intended to support educational and developmental issues facing Indigenous students.

The limitations of this analysis of the CRAIS center on analytical issues and sample considerations. First, examining test-retest reliability is necessary to provide additional support for the subscales identified in this study. Second, we will conduct a confirmatory analysis with an independent sample; given the limits of the current sample, using diverse samples in future work will help extend the tool’s application to the broadest possible Indigenous population. Finally, future studies should examine other aspects of construct validity, such as convergent and criterion-related evidence.

Methodological complexity also pushes us to think alongside these traditionally quantitative priorities in order to center Indigenous communities, epistemologies, methodologies, and priorities. While we have alluded to this throughout, we want to close by being even more transparent about the ways our work was enhanced by methodological complexity. We offer two specific examples.

The first example relates to the decision about whether and how to address the “outlying” item from the exploratory factor analysis. Statistics should always be informed by theory and practice. From a purely quantitative perspective, we might delete the item altogether because it did not load on any factor in the analysis. But we know from the published research on Indigenous education that stereotypes are prevalent across curricula and lived experiences. We also know from our own experiences as educators, Indigenous people, and allies that stereotypes perpetuate harm for many Indigenous students. Finally, from the perspective of users of the CRAIS tool, intentionally considering stereotypes in the schooling of Indigenous youth is necessary.

The second example relates to the identification and naming of the factors. Prior to conducting the exploratory factor analysis, the research team conducted qualitative content analyses to group the 23 CRAIS items into five categories. We relied heavily on the extant literature on culturally responsive schooling, our experiences in Indigenous-serving schools, and our work with Indigenous teachers to develop the five categories. The exploratory factor analysis resulted in two factors, with most of the CRAIS items loading on just one of them. The research team engaged in dialogue about these findings: we listened to different interpretations, collectively explored possible explanations, educated each other about our divergent sets of expertise, and ultimately built consensus on a path forward. We came to realize that it was only through this complex, sometimes time-consuming process that we could confidently embrace a both/and approach. This approach means that we maintained the five categories, as they center Indigenous lived experiences and generations of knowledge and are relevant for educators working in schools with Indigenous youth. We also maintained the two factors, as they were strongly supported by the statistical analysis and are relevant for researchers who may use the CRAIS tool in other projects.

In the end, we offer this discussion to advocate for methodological complexity and decolonizing conversations whenever we work with and in Indigenous communities. Without them, our work risks being misinterpreted and only partially useful to the communities it is intended to serve.

Footnotes

Author Contributions

The authors Chesleigh N. Keene and Darold H. Joseph have contributed equally to the development of this article and share the role as co–first authors.

Ethical Approval

This research was approved by the Northern Arizona University IRB and by the Navajo Nation Human Research Review Board.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project is funded through NSF Grant #1908464.

Open Practices Statement

Authors

DAROLD H. JOSEPH (Hopi) is an assistant professor and director at Northern Arizona University, Box 5774, Flagstaff, AZ 86011; e-mail:

CHESLEIGH N. KEENE is an assistant professor at Northern Arizona University, Box 5774, Flagstaff, AZ 86011; e-mail:

ANGELINA E. CASTAGNO is a professor and chair in the Department of Educational Leadership, College of Education at Northern Arizona University, PO Box 5774, Flagstaff, AZ 86001; e-mail:

PRADEEP M. DASS is a professor and dean of the College of Education and Psychology at the University of Texas at Tyler, 3900 University Blvd., Tyler, TX 75799; e-mail:

CRYSTAL MACIAS is a graduate student in the EdS School Psychology program in the College of Education at Northern Arizona University, Box 5774, Flagstaff, AZ 86011; e-mail: