Abstract

Digital reading is ubiquitous, yet understanding digital reading processes and links to comprehension remains underdeveloped. Guided by new literacies and active reading theories, this study explored the reading behaviors and comprehension of thirteen fifth graders who read static digital texts. We coded for the quantity and quality of digital reading behaviors and employed action path diagrams to connect behaviors to comprehension. We used timescape analyses to visualize how behaviors were orchestrated differently across readers. Findings showed no single behavior was related directly to comprehension, indicating varying pathways to digital reading success. Occasional rereading seemed to support active reading and improved comprehension. We also observed instances of students subverting the expected uses of digital tools. Minor distractions like mind-wandering did not link to poor performance. This research deepens our understanding of self-monitoring and active reading in static digital contexts, offering insights for future study of more complex digital reading contexts like reading on the internet.

Digital reading is ubiquitous in our society, yet our understanding of digital reading processes and comprehension remains underdeveloped (Coiro, 2021). While “a vast body of research” (Alexander, 2020, p. S91) has been built regarding digital literacies, the scale of change, use, and complexity of digital reading means that we lack the deep knowledge developed across a century of research with print texts. Although many studies suggest that paper reading is superior to screen reading in terms of comprehension (Clinton, 2019; Delgado et al., 2018; Furenes et al., 2021; Kong et al., 2018; Öztop & Nayci, 2021; Singer & Alexander, 2017), others have found that factors such as genre, text length, and interactive features in digital texts lead to contrary or mixed results (Clinton, 2019; Clinton-Lisell et al., 2023; Delgado et al., 2018; Schwabe et al., 2022). Given the complexity of reading, we explore static digital reading to establish a baseline understanding of how digital reading processes occur when the medium is designed to be as similar as possible to traditional paper reading. As such, static digital reading provides potential insights into why the field is finding mixed comprehension results across mediums and acts as a foundation for future work on other unbounded forms of digital reading, such as web-based reading.

More than two decades ago, Reinking (1998) emphasized that “electronic and printed texts are qualitatively different” (p. xxiv) and that “the new technologies of electronic reading and writing are slowly but steadily transforming classrooms, schools, and instruction” (p. xxv). Since then, Leu et al.’s (2013) theory of new literacies has called for “a collaborative approach to theory building . . . because both old and new elements of literacy are layered in complex ways” (p. 1157). Coiro (2021) has extended our understanding of the range of digital reading activities, describing “a spectrum between the reading of single digital texts and reading in highly interactive environments” (p. 10) and the need to consider variables like reader, task, text, and context. Additionally, researchers have emphasized the need to move beyond analyzing reading products (i.e., reading comprehension outcomes) to understand reading processes. As Singer and Alexander (2017) write, “future studies need to focus on capturing processing data . . . what is occurring while reading in print and digitally will offer critical information regarding the processing of the text” (p. 1034).

Uniting these points, the current study explores how fifth-grade students approach a specific digital reading task historically associated with standardized testing traditionally delivered via paper. This task involves reading a static passage digitally and then answering comprehension questions to demonstrate understanding. Here, we define static digital text as a text that is presented digitally but does not require an internet connection, nor does it include interactive elements such as hyperlinks or videos. Investigating this specific digital reading process and product can provide a foundation from which to build future comparisons to other digital tasks (e.g., multiple document reading, internet searches) and paper tasks (e.g., paper standardized tests). Rather than comparing paper and digital reading, we focus on digital reading, paying attention to the ways in which skills and strategies associated with paper reading are used in concert with digital tools during static digital reading. Our focus on digital reading acknowledges the historic move to digital that we are experiencing. We see this shift in our daily digital reading, in our experiences during COVID-19, when academic reading by and large occurred digitally, and in standardized testing, where digital reading has become the default mode in most states and where even the National Assessment of Educational Progress (NAEP) assesses reading digitally.

This study comes at an important point in time, when theory has identified the need to understand digital reading behaviors and when assessment and analysis tools exist that can honor the complex nature of even static digital reading and the multimodal nature of observable reading behavior data. Because reading occurs in the brain and cannot be observed directly, understanding the reading process involves considering as many observable data sources as possible (e.g., video of participant faces and screens, audio, and eye-gaze data). These observable behaviors can reveal the processes that Duke and Cartwright’s (2021) active model of reading comprehension suggests might be particularly important and instructionally relevant. They write, “In addition to acquiring necessary word-reading and language comprehension knowledge and skills, readers must learn to regulate themselves, actively coordinate the various processes and text elements necessary for successful reading, deploy strategies to ensure reading processes go smoothly, maintain motivation, and actively engage with text” (Duke & Cartwright, 2021, p. S30). Our study explores how such behaviors are observed in static digital reading. In the following sections, we discuss what is known about digital reading. While research tends to frame findings as comparisons (e.g., paper versus digital), we use these findings to inform our understanding of digital reading specifically.

Digital and Paper Reading Outcomes

The shift from page to screen has led many to investigate how reading medium impacts comprehension. Spanning a wide range of ages and populations, reviews and meta-analyses compare digital and paper reading outcomes (i.e., comprehension), finding screen-reading (i.e., digital reading) may be inferior to print reading in terms of comprehension outcomes (Clinton, 2019; Delgado et al., 2018; Furenes et al., 2021; Öztop & Nayci, 2021). Specifically, one systematic review found benefits for paper over digital reading of long texts (Singer & Alexander, 2017) and six meta-analyses found significant and meaningful effect sizes in comprehension benefits to reading paper versus digital texts (e.g., Clinton, 2019; Delgado et al., 2018; Furenes et al., 2021; Kong et al., 2018; Öztop & Nayci, 2021; Singer & Alexander, 2017). These comparisons tend to involve static digital texts compared to paper texts.

With that said, close examination of these studies suggests that effect sizes are variable and impacted by factors such as genre (Clinton, 2019; Schwabe et al., 2022), text length (Delgado et al., 2018), and interactive text features sometimes available in digital texts (Schwabe et al., 2022). For instance, conducting a meta-analysis of studies across eleven countries, Schwabe et al. (2022) found no differences in outcomes between screen and print reading when considering only the narrative genre. In fact, two meta-analyses concluded that interactive features, such as dictionaries and question prompts available in digital texts, positively supported comprehension when compared to paper texts without such interactive features (Clinton-Lisell et al., 2023; Schwabe et al., 2022). These findings highlight the potential of digital texts to scaffold students’ reading through their interactive features. Our study adds to the current literature by beginning to unravel the complex behaviors students engage in when reading a static digital text, which may help explain differences found across mediums and provide a baseline from which the field can build when considering more complicated digital reading (e.g., websites, hyperlinked texts).

Multifaceted Digital Reading Behaviors

A second key finding that emerges from the digital reading literature is that digital reading is multifaceted and not identical to paper reading. We refer readers to helpful commentaries like Coiro (2021) and Goldman et al. (2016) for additional detail. Generally, research suggests that across the wide range of contexts, digital reading overlaps with paper reading but differs in important and complex ways, particularly in the greater need to be strategic, critical, and able to synthesize across multiple multimodal sources in a goal-directed manner (see Coiro, 2021). Even within a single static digital text, students must decide whether and how to scroll, zoom, highlight, track text with a mouse, or use digital tools like dictionaries or word readers. These findings align with Duke and Cartwright’s (2021) recent active view of reading, which, as mentioned previously, emphasizes how readers strategically coordinate tools and reading behaviors to build comprehension. Research indicates that students are aware of the different strategies and tool strengths associated with digital versus paper mediums. For example, adolescents in Turner et al.’s (2020) study described digital highlighting tools as easy to use, where “you can tap a word, and you can highlight it” (p. 302), and as both permanent (i.e., highlights can be saved) and changeable (i.e., accidental marks can be erased, unlike those made with traditional highlighters). Turner et al.’s work also indicates that adolescents are aware of how digital reading processes differ, consistent with the shallowing hypothesis, which suggests that quick interactions via screens, like those experienced on social media, lead to attention challenges when screen-based activities require more complex and time-consuming engagement.

While research suggests adolescents’ diminished digital reading comprehension is related to less effortful processing/active self-regulation/active reading (note, we use these terms interchangeably) when reading on screens (e.g., Dahan Golan et al., 2018; Halamish & Elbaz, 2020; Ronconi et al., 2022), the field has yet to clarify how these differences occur. The active model of reading (Duke & Cartwright, 2021) guides us to consider active self-regulation, including executive function skills and strategy use as observed through digital reading behaviors, and how these vary across texts and individuals.

Active Reading in Digital Contexts

Correlational research suggests the importance of active reading behaviors related to executive function and self-regulation, like cognitive flexibility, inhibitory control, working memory, planning, and attentional control (see Duke & Cartwright, 2021, for an overview). Beyond general measures of self-regulation, observable behaviors like highlighting, scrolling, mouse movements, mind-wandering or lack thereof, rereading, and playful movements (e.g., gesturing, facial expressions, body movements) provide evidence of active reading and self-regulation during the reading process. For example, in a study with 371 American middle schoolers, Goodwin et al. (2020) found that students highlighted more on paper, but that less frequent, more effortful highlighting (i.e., aligning a cursor with text to highlight) during screen reading predicted comprehension. Here, a greater proportion of digital readers’ highlights than paper readers’ highlights fell in areas of interest, suggesting that the active self-regulation needed to cognitively engage and digitally highlight was important for supporting comprehension. Generally, research and surveys on digital reading suggest digital highlighting is less frequent than paper highlighting, possibly because it is harder or because digital text is processed more shallowly, making highlighting less likely. With that said, results are mixed in terms of the quality of digital highlights (Liu, 2005; Schugar et al., 2011; Turner et al., 2020). While Goodwin et al. (2020) reported that more digital highlights than paper highlights involved important text content, Ben-Yehudah and Eshet-Alkalai (2014), studying university students, reported no differences in highlight content between digital and paper highlighting conditions. Hence, more work is needed to understand how active reading behaviors like highlighting are involved in the digital reading process.

Actions such as scrolling, zooming, and mouse movements are additional digital reading behaviors that may reflect active reading, although research is quite limited. In their meta-analysis, Delgado et al. (2018) found that when screen reading required scrolling, paper-based reading had an advantage. They concluded this might indicate that “scrolling may add a cognitive load to the reading task by making spatial orientation to the text more difficult for readers than learning from printed text” (p. 35). Generally, studies have suggested that scrolling can present challenges for participants (Piolat et al., 1997; Sanchez & Wiley, 2009), although Brady et al. (2018) found scrolling affected reading comprehension for some students (i.e., students with no preference between reading on paper or digitally) but not for others.

Lastly, other reading behaviors like rereading, playful movements, mind-wandering, and refocusing can provide further evidence of active reading. Exploring digital and paper reading with college students, Singer Trakhman et al. (2018) analyzed rereading (defined as looking back rather than proceeding in the direction of the text) and other processing behaviors (i.e., repositioning, questioning, skimming) to identify reader profiles when reading on paper and on screens and their links to comprehension. Analysis resulted in four profiles: regulators who routinely reread and repositioned, plodders who read slowly and linearly without many deep processing behaviors, gliders who also did little deep processing but moved quickly through the text, and samplers who mostly read straight through with targeted moments of rereading. In line with active reading, those who engaged in deep processing behaviors such as rereading (i.e., regulators) comprehended better in both mediums. Interestingly, reader profiles were not constant between mediums. For instance, over a third of students who were gliders on paper shifted to the regulator profile when reading on a screen. “This ability to adapt one’s processing behaviors as a consequence of medium is a noteworthy aberration from the majority of participants [plodders, samplers, regulators] who did not adjust” (Singer Trakhman et al., 2018, p. 15) and an indication of how active reading strategies may be even more essential for readers in the digital environment.

This finding aligns with the larger literature on rereading, which links rereading with effortful processing and comprehension success (Chevet et al., 2022; Masson, 1983; Schotter et al., 2014). Generally, rereading often occurs in response to confusion, indicating the reader is actively self-regulating their reading process. For example, Bicknell and Levy (2011) analyzed eye movements of 10 adults reading articles on computer screens and noted rereading correlated with “when a new word fits relatively poorly with what the reader believed the prior context to be . . .” (p. 932). Additional gaze-tracking studies with adults (Levy et al., 2009; Schotter et al., 2014) suggest rereading is prompted by high-level language processing, where readers actively correct for faltering comprehension. Rereading seems less common with digital texts, as Jian (2022) and Singer Trakhman and colleagues (2018) both found undergraduates were less likely to reread on screens than on print texts, but school-age samples remain understudied.

One behavior that comes up often, especially when discussing digital reading, is mind-wandering. Delgado and Salmerón (2021) and Jian (2022) found that college students showed more mind-wandering in digital or screen reading compared to print reading. In contrast to active reading, adult and college-aged readers have been shown to zone out about 20–50% of the time while completing reading and comprehension tasks (D’Mello & Mills, 2021; Mooneyham & Schooler, 2013). Usually portrayed as negative, mind-wandering harms readers’ retention, model building, and comprehension-task performance, but it can also have benefits like support for creative thinking and relief from boredom (Mooneyham & Schooler, 2013). In an active reading model (Duke & Cartwright, 2021), a reader might strategically engage in short mind-wandering sessions with refocusing as an element of active self-regulation. For instance, a study by D’Mello and Mills (2021) showed that readers who appeared to zone out could be nudged back on task, resulting in less frequent and shorter incidents of mind-wandering. These nudges were correlated with higher performance on comprehension tasks. Considering the reader’s self-regulatory efforts, Varao-Sousa and colleagues (2018) found that college readers’ behaviors immediately after mind-wandering, specifically whether they reread, impacted their performance on comprehension assessments. Together, this work suggests mind-wandering, as well as less studied off-task behaviors such as play, may fit into a model of active reading.

Task and Reader Differences

Coiro’s work (i.e., multifaceted heuristic for digital reading; Coiro, 2021) extends the work of the RAND report (RAND Reading Study Group, 2002) to show the importance of attending to differences in readers, texts, contexts, and tasks when considering digital reading. With tasks in mind, considering types of questions can help us better understand modality effects. For instance, in their study with over 4,000 fourth graders, Neugebauer and colleagues (2022) reported that after reading on paper, students performed better on questions designed to connect to more surface-level understanding, such as recall/retrieve tasks, than they did with ones made to elicit deeper comprehension, such as interpretation and evaluation questions. When reading and answering questions digitally, however, students’ relative performance on these tasks was reversed.

Task features also shape reading behaviors. For example, Brishtel and colleagues (2020) reported increases in mind-wandering when adults read easier texts. Links between reading processes and comprehension may also be mediated by mode. Ben-Yehudah and Eshet-Alkalai (2014) showed the effect of highlighting on comprehension was positive only for paper reading and specifically for questions about higher-level inferencing and processing, not lower-level factual recall. In contrast, Goodwin et al. (2020) showed that digital highlighting, but not paper highlighting, contributed to comprehension. Such research confirms that further consideration of task specifications is important, as Coiro’s (2021) heuristic suggests.

Individual differences have also been shown to mediate mode effects on comprehension and digital reading processes. Vidal-Abarca and colleagues (2010) found that seventh and eighth graders more skilled at reading comprehension appear more able than their grade-level peers to self-regulate the search process when they look back to a text after reading a question. While more skilled comprehenders did not spend more time on relevant sections of the passage during their initial cold read, they were more likely to return to these sections while test taking and then to answer the questions correctly. Also, D’Mello’s team (2017) found less skilled adult readers experienced more mind-wandering, and Nguyen et al. (2014) correlated increased mind-wandering in second graders with poor comprehension performance. Together, this work emphasizes the importance of considering what different types of readers are doing to respond to the specific characteristics of the text and questions involved.

Current Study

This study is part of a larger study (Goodwin et al., 2020) that collected data from 371 fifth- through eighth-grade students while they engaged in both digital and paper reading. However, for this study, our goal was to move beyond the paper versus digital debate and enhance the field’s nascent understanding of digital reading by examining how readers effectively use tools, strategies, and behaviors to comprehend static digital texts, in order to develop a baseline understanding of digital reading processes when the medium is designed to be highly congruent with paper reading. First, we explore students’ reading behaviors while digitally reading and answering questions, taking a broad descriptive lens. Second, we consider how students’ digital reading and question-answering behaviors connect to their comprehension outcomes. Then, we examine how students put these behaviors together, with the goal of illustrating pathways students took as they orchestrated strategies and behaviors toward comprehension. Specifically, our three research questions were:

RQ1—What behaviors do readers exhibit during digital reading and question answering?

RQ2—How do digital reading and question-answering behaviors connect to comprehension?

RQ3—How do readers orchestrate digital reading and question-answering behaviors?

Methods

Participants

Thirteen students from the data set of 371 fifth- through eighth-grade students (see Goodwin et al., 2020) are the focus of this study. Purposive sampling (Patton, 2024) was used to choose students for qualitative analysis. We focused on fifth graders (N = 87) to minimize variability associated with age and reading ability and because fifth grade marks a developmental time point where reading comprehension standards begin to identify digital text reading as a specific goal (i.e., CCSS.ELA-LITERACY.RI.5.7). Next, we focused on students from condition B (N = 45), who read the digital section of the text first, to minimize the impact of reading fatigue.

The 45 fifth-grade students were grouped by their reading ability, defined by performance on the district literacy measure, which was the Northwest Evaluation Association (NWEA) Measures of Academic Progress (MAP) reading test. The NWEA-MAP is a standards-aligned, computer-adaptive assessment of reading that is used in the school district in which the study was performed to inform decisions around school ranking, student retention, program placement, intervention, and instruction. Three groups were formed, consisting of students whose percentile ranks were low (i.e., 30th percentile or lower, N = 7), mid (i.e., 31st–70th percentile, N = 15), and high (i.e., 71st percentile or higher, N = 21); the two students who had no NWEA data were excluded. We further constrained the groups by excluding students (N = 12) whose previous ranking contradicted their current ranking. This included a student from the low group whose prior performance was at the 49th percentile; three students from the mid-scoring group who were ranked five percentile points or more above the 70th percentile threshold on their previous test; and eight students from the high-scoring group who were at the 65th percentile or lower on the prior test.
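To make the grouping rule concrete, the following Python sketch applies the percentile bands and exclusions described above. It is an illustration under stated assumptions, not the procedure the team ran: the `Student` record and the function names are hypothetical, students without a prior score are assumed to be retained, and because only one excluded low-group student is reported (prior rank at the 49th percentile), the low-group cutoff of 35 is an assumed mirror of the mid-group rule.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional

@dataclass
class Student:
    student_id: str             # hypothetical identifier
    current_pct: Optional[int]  # most recent NWEA-MAP reading percentile (None = no data)
    prior_pct: Optional[int]    # percentile on the previous administration

def ability_group(pct: int) -> str:
    """Bands from the text: low <= 30, mid 31-70, high >= 71."""
    if pct <= 30:
        return "low"
    if pct <= 70:
        return "mid"
    return "high"

def contradicts_prior(group: str, prior: Optional[int]) -> bool:
    """True when the prior percentile conflicts with the current band.

    The mid rule (prior >= 75) and high rule (prior <= 65) follow the text;
    the low rule (prior >= 35) is an ASSUMED mirror of the mid rule.
    """
    if prior is None:
        return False  # assumption: a missing prior score cannot contradict
    if group == "low":
        return prior >= 35
    if group == "mid":
        return prior >= 75
    return prior <= 65

def select_groups(students: List[Student]) -> Dict[str, List[str]]:
    """Assign students to bands, dropping missing data and contradictory priors."""
    groups: Dict[str, List[str]] = {"low": [], "mid": [], "high": []}
    for s in students:
        if s.current_pct is None:
            continue  # students without NWEA data were excluded
        group = ability_group(s.current_pct)
        if not contradicts_prior(group, s.prior_pct):
            groups[group].append(s.student_id)
    return groups
```

In this sketch, a student at the 25th percentile whose prior rank was at the 49th percentile would be dropped from the low group, matching the one case the text reports.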

Nine students were originally selected for preliminary qualitative analysis—three from each of the reading ability groups—with the goal of including students identified with different genders, home languages, socioeconomic statuses, and racial and ethnic identities in each group. Preliminary analysis found striking differences between the students in the low and mid groups. To further explore those differences, we selected four additional students, two from the low-ability group and two from the mid-ability group. The final 13 students selected for qualitative analysis are listed in Table 1.

Student Reading Ability, Demographics, and Posttest Digital Reading Performance

Despite our goal to attain demographically diverse samples, all of the fifth graders in condition B who scored below the 30th percentile (N = 7) were identified by the district as Black or African American. This showcases the likely racially and culturally biased nature of this measure. Yet, since we grounded this study in partnership work with the district, we continued to use it for grouping because it was the measure the district used to guide instructional choices. This was the information conveyed to teachers, and therefore, we used it as a way of bridging research to practice. As with many standardized literacy tests, NWEA relies on assumptions of background knowledge that disadvantage culturally minoritized students and valorizes standard academic English over other dialects (Baker-Bell, 2020). These features of the test, along with broader educational opportunity gaps (Milner, 2022), contribute to overrepresentation of racialized students in lower-scoring categories (Au, 2016). Like any test, NWEA does not give a complete or unbiased picture of students’ literacy abilities or—as our analysis reaffirms—the complexity and particularities of their reading practices. However, these scores are consequential for students, teachers, and schools, so we chose to include them with the hope that our study could add a more complete picture of these students as readers.

Procedures

Students read in a quiet school area, usually a corner of the library or a coach’s room. Participants read a 2011 eighth-grade NAEP social studies selection on women’s suffrage (see appendix A). We chose this text because the study occurred at the end of fifth grade, and the Lexile stretch levels for fifth and sixth grade associated with the Common Core aligned closely with the eighth-grade NAEP text. To confirm fit, we piloted this passage with 15 questions, along with a fourth-grade NAEP passage on mummies with 13 questions, with 77 fifth-grade participants to make sure the reading level would be appropriate. In item response theory (IRT), any two tests can be considered parallel “when for each item in one test there is an item in the other test with the same item response function” (e.g., Kim, 2012, p. 157; Lord, 1983, p. 242). IRT differential item functioning (DIF) analyses of these data (e.g., likelihood ratio tests) suggested item characteristics were similar across the passages, so we allowed teachers to inform our choice of content (i.e., women’s suffrage).
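The parallel-test definition and the likelihood ratio DIF test can be illustrated briefly. The sketch below assumes a Rasch model, under which an item's response function is fully determined by its difficulty b, so two items share a response function exactly when their difficulties match. The likelihood ratio statistic compares a constrained model (item parameters held equal across passages or groups) with a freer model; the log-likelihoods would come from fitted IRT software. The specific models and software used in the pilot are not described in this section, so the function names and inputs here are hypothetical.

```python
import math
from typing import Iterable, Tuple

def rasch_prob(theta: float, b: float) -> float:
    """Rasch item response function: probability of a correct answer
    for a reader of ability theta on an item of difficulty b."""
    return 1.0 / (1.0 + math.exp(-(theta - b)))

def same_irf(b1: float, b2: float, thetas: Iterable[float], tol: float = 1e-12) -> bool:
    """Check whether two Rasch items have the same response function over a grid of abilities."""
    return all(abs(rasch_prob(t, b1) - rasch_prob(t, b2)) <= tol for t in thetas)

def lr_dif_test(loglik_constrained: float, loglik_free: float, df: int = 1) -> Tuple[float, float]:
    """Likelihood ratio test for nested models: G2 = 2 * (LL_free - LL_constrained).

    The p-value uses closed-form chi-square tail probabilities, available
    here only for df = 1 or df = 2 to keep the sketch dependency-free.
    """
    g2 = max(0.0, 2.0 * (loglik_free - loglik_constrained))
    if df == 1:
        p = math.erfc(math.sqrt(g2 / 2.0))  # P(chi2_1 > g2) = P(|Z| > sqrt(g2))
    elif df == 2:
        p = math.exp(-g2 / 2.0)             # chi2_2 tail is exponential
    else:
        raise ValueError("this sketch handles only df = 1 or df = 2")
    return g2, p
```

For example, a G² of about 3.84 on one degree of freedom corresponds to p ≈ .05, the conventional threshold for flagging an item as functioning differently.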

We analyzed participants’ reading of the first half of the passage, which is the section they read digitally. This section was 409 words with a mean sentence length of 19.48 words and a Lexile range of 1200–1300, and it consisted of the original NAEP text plus a sentence of nonwords and a sentence that had been adjusted to be more complex syntactically as a way of designing potential areas of confusion (which are not a focus of this study). Participants were directed to read as they would to complete schoolwork and were told they would be answering questions about the reading. Digital highlighting, annotating, and dictionary tools were provided and modeled for students. For each tool, students dragged the cursor over the part of the text they wanted to highlight, annotate, or define. Reading sessions were recorded with iMotions. Prior to reading, students took a content knowledge pretest, and after reading, students answered a comprehension posttest (see the following description). For the posttest, the digital text appeared to the left of the questions so students could return to or scroll through the text if desired.
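The mean sentence length reported above is a ratio of words to sentences. A minimal sketch, assuming sentences end in periods, exclamation points, or question marks and that words are whitespace-separated tokens (readability tools such as Lexile analyzers tokenize differently, so their figures may not match this simplified count):

```python
import re

def mean_sentence_length(text: str) -> float:
    """Average words per sentence.

    Assumes sentences end with '.', '!' or '?' and that words are
    whitespace-separated tokens.
    """
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    if not sentences:
        raise ValueError("text contains no sentences")
    return len(text.split()) / len(sentences)

print(mean_sentence_length("Women wanted the vote. They marched for years!"))  # 4.0
```

As a consistency check on the figures above, 409 words at 19.48 words per sentence implies roughly 21 sentences in the digitally read section (409 / 21 ≈ 19.48).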

Data Sources

Video Recordings

This study primarily used video recordings captured by iMotions of students’ digital reading and question answering. The videos consisted of side-by-side videos of the students’ faces recorded by forward-facing laptop cameras (left side of Figure 1) and videos of the screen with which students were engaging (right side of Figure 1). The video of the screen was overlaid with a gaze-tracking notation that approximated where the student was looking throughout the reading task (yellow dot on the right side of Figure 1).

iMotions screenshot.

Demographic and Standardized Test Information

Demographic data and NWEA-MAP test scores were collected from our partnering school district. This included information about students’ gender (i.e., male or female), ethnicity (i.e., Hispanic or not), race, socioeconomic status (i.e., below the poverty line or not), and home language (e.g., English, Spanish, Amharic). Percentile ranks from the most recent spring and winter administrations of the NWEA-MAP reading subtest were used. As described previously, these data were used to guide participant selection.

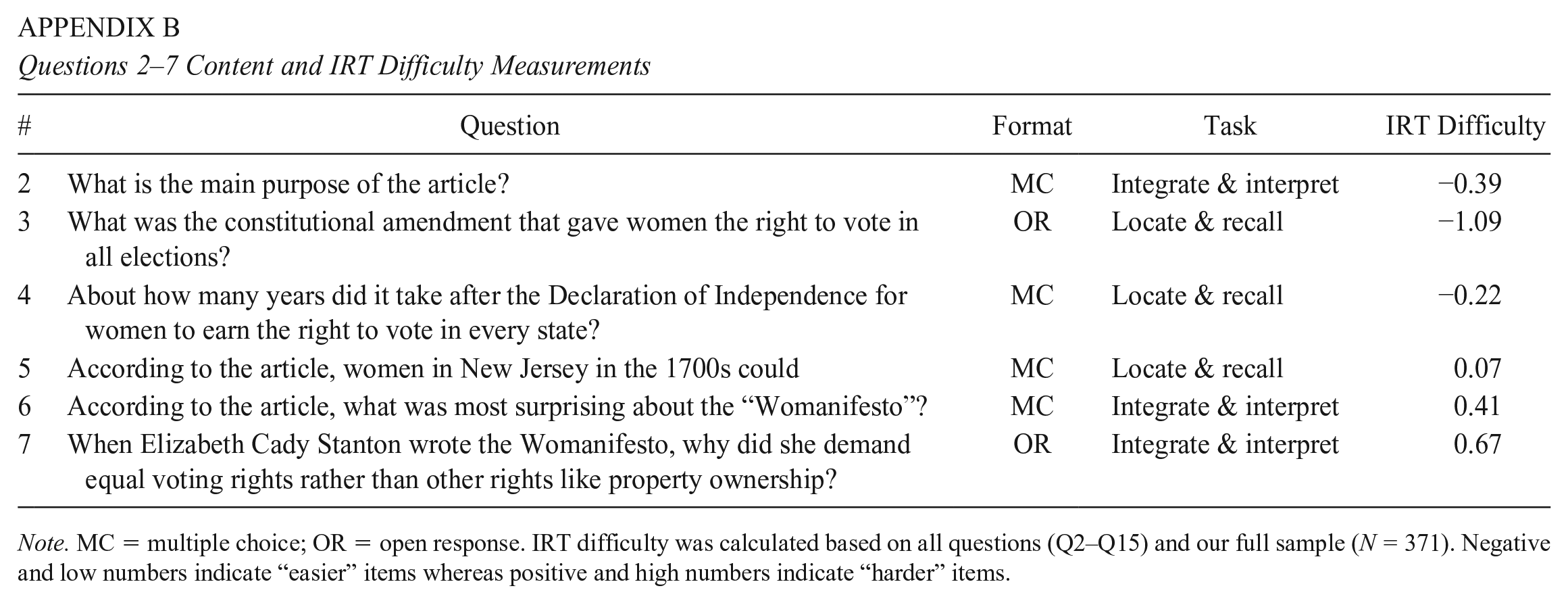

Posttest Comprehension

Performance on the posttest was also analyzed. The full posttest consisted of 14 questions with multiple-choice and open-response formats, although we only use data from questions 2–7 in this analysis because these questions relate to the section of the text read digitally (see appendix B for questions and IRT difficulties). According to the NAEP framework, four items measured students’ ability to locate and recall information, and two asked students to integrate and interpret information from the text. Two items had open-response formats, one locate-and-recall item and one integrate-and-interpret item. The remaining questions were multiple choice. Questions related to local comprehension and required comprehension of more than a single phrase. These questions included items taken from NAEP and items written by research team members who were former middle school teachers. The full question set was shown to be reliable, with a marginal IRT reliability (Green et al., 1984) of .8 under a Rasch model for our full sample of students. Also, open-response items were coded by two researchers with 91.6% agreement, and discrepancies were discussed and resolved.
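Both statistics above can be sketched in a few lines. The text does not specify how the 91.6% figure was computed, so the sketch assumes simple percent agreement (matching codes divided by coded responses). For marginal reliability, it assumes the common formulation associated with Green et al. (1984): one minus the average error variance of the ability estimates divided by the ability variance. The function names are hypothetical, and IRT packages may compute this quantity somewhat differently.

```python
from statistics import fmean
from typing import Sequence

def percent_agreement(coder_a: Sequence[str], coder_b: Sequence[str]) -> float:
    """Percentage of responses to which two coders assigned the same code."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must code the same nonempty set of responses")
    matches = sum(a == b for a, b in zip(coder_a, coder_b))
    return 100.0 * matches / len(coder_a)

def marginal_reliability(theta_variance: float, standard_errors: Sequence[float]) -> float:
    """ASSUMED formulation: 1 - mean(SE^2) / var(theta).

    theta_variance: variance of the ability distribution (e.g., 1.0 for a standard normal scale)
    standard_errors: standard errors of the individual ability estimates
    """
    mean_error_variance = fmean([se ** 2 for se in standard_errors])
    return 1.0 - mean_error_variance / theta_variance
```

Under this formulation, a reliability of .8 on a unit-variance ability scale would correspond to an average error variance of about .2 across students.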

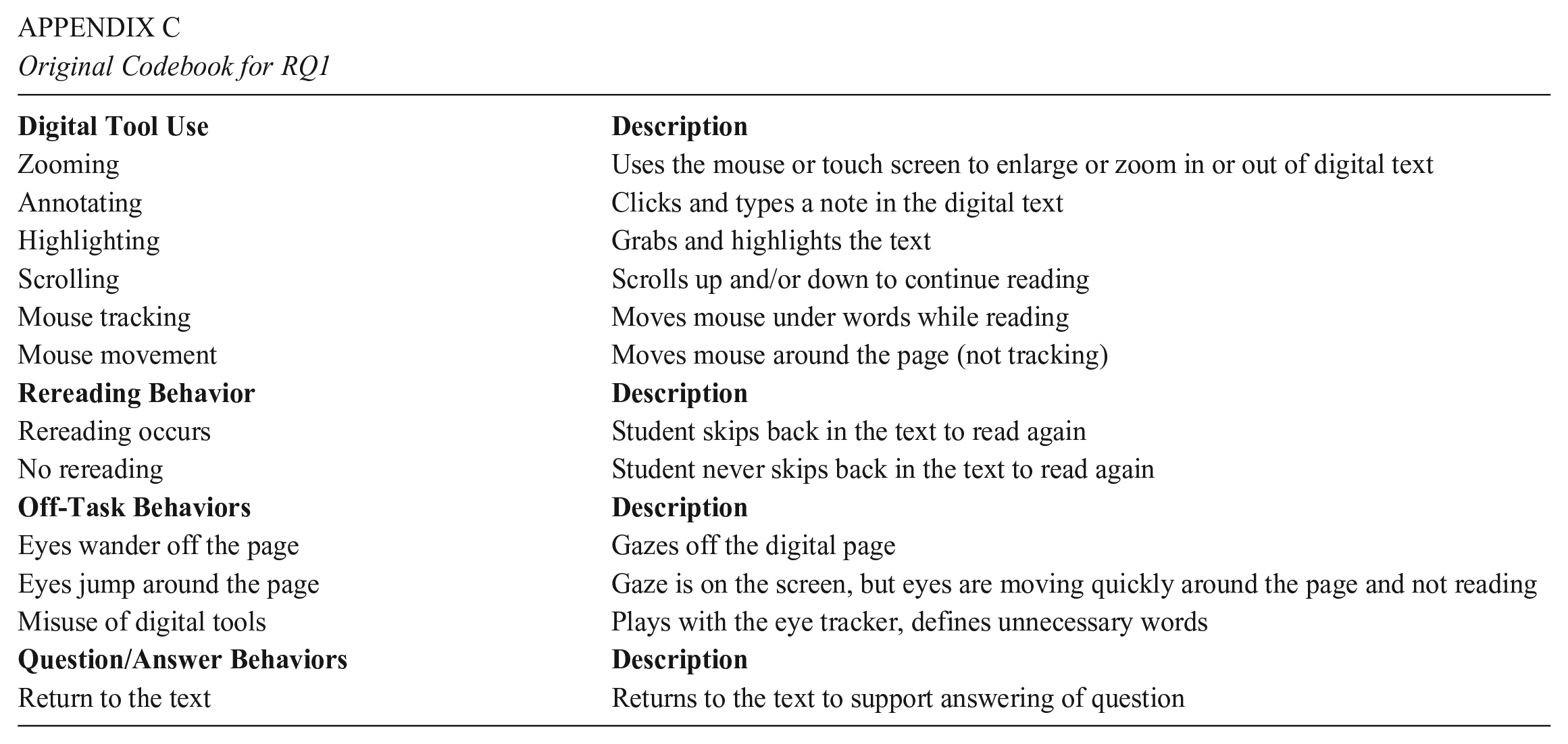

Data Analysis

Qualitative analysis was used to provide a deep understanding of reading behaviors. Prior to this analysis, we engaged in open coding (Strauss & Corbin, 1998) of videos from a larger sample (N = 40) to establish a codebook of digital reading and question-answering behaviors (appendix C).

Coding of Reading Behaviors (RQ1)

While we initially used the codebook (see appendix C) to analyze the videos for the 13 students in our sample, in this paper we also analyzed the quality of the digital reading behaviors, which we defined here as the nature of the behaviors. To do so, we triangulated students’ screen actions, gaze-tracking, facial expressions, audio (when available), and general context to generate descriptive statistics and analytic memos to understand behaviors. Summaries of each category of code follow, while more detailed descriptions of codes for rereading, tool use, and scrolling behaviors can be found in appendix D.

Rereading Behaviors

Given the literature that links rereading with effortful processing and comprehension success (Chevet et al., 2022; Masson, 1983; Schotter et al., 2014), rereading was of interest. Via coding, we identified three rereading behaviors: linear reading (i.e., straight through with no pausing or backtracking), nonlinear reading (i.e., jumping around the text in no particular way), and occasional rereading (i.e., mostly linear with some moments of backtracking). See appendix D for further information.
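Although rereading was coded qualitatively from the triangulated video data, a rough computational analogue can clarify how the three codes differ. The sketch below is a hypothetical heuristic, not the study's coding procedure: it takes a sequence of text positions visited in order (for example, line indices estimated from gaze or mouse data), counts backward moves, and applies an assumed 10% threshold to separate occasional from nonlinear rereading. It ignores pauses and forward jumps, both of which human coders could weigh.

```python
from typing import Sequence

def count_regressions(positions: Sequence[int]) -> int:
    """Count backward moves (a position earlier in the text than the one just before it)."""
    return sum(1 for prev, cur in zip(positions, positions[1:]) if cur < prev)

def rereading_code(positions: Sequence[int], occasional_max: float = 0.10) -> str:
    """Map a position trace to one of the three rereading codes.

    HYPOTHETICAL thresholds: no backward moves -> linear; backward moves on at
    most `occasional_max` of transitions -> occasional rereading; more -> nonlinear.
    """
    transitions = max(len(positions) - 1, 1)  # avoid dividing by zero for tiny traces
    share = count_regressions(positions) / transitions
    if share == 0:
        return "linear"
    if share <= occasional_max:
        return "occasional rereading"
    return "nonlinear"

# Usage: one look-back (line 9 -> line 5) in an otherwise straight read
trace = list(range(10)) + list(range(5, 16))
print(rereading_code(trace))  # occasional rereading
```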

Tool Use Behaviors

The quality of tool use was also of interest, given the literature’s suggestion that digital reading may require more strategic use of tools. Highlighting was coded as strategic when students’ highlights could reasonably aid them when looking back at the text during the question-answering section. Similarly, use of the define tool was considered strategic when students defined words that were plausibly unfamiliar to them and relevant to their comprehension of the text. See appendix D for further explanation of strategic and nonstrategic tool use.

Scrolling Behaviors

Even simple behaviors like scrolling functioned differently across students. Some students scrolled only as a function of their reading (i.e., they scrolled down to continue reading the passage or scrolled up to reread), whereas others engaged in nonfunctional (i.e., unnecessary) scrolling. See appendix D for further information.

Embodied Tracking

Students tracked their digital reading in two ways: by mouthing or whispering the words as they read them, and by dragging the mouse under the words while reading. The team coded both behaviors as embodied tracking if they lasted for approximately a paragraph or more. A paragraph was selected as the unit of analysis because the lack of audio in some videos made brief behaviors difficult to code reliably, whereas behaviors that lasted a paragraph or more were easily validated.

Off-Task Behaviors

Students’ off-task behaviors during the digital reading and question-answering portions of the task were coded for duration: off-task behaviors were considered extended when they lasted longer than 10 s and brief when they lasted less than 10 s.
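
The duration rule can be expressed as a small classification function. This is a minimal sketch, assuming each off-task episode is logged with start and end times in seconds; the class and function names are hypothetical. Because the rule does not say how an episode of exactly 10 s is classified, the sketch treats that boundary case as brief, which is an assumption.

```python
from dataclasses import dataclass

THRESHOLD_S = 10.0  # brief vs. extended cut-off described in the text


@dataclass
class OffTaskEpisode:
    start_s: float  # hypothetical field: episode start, seconds from session start
    end_s: float    # hypothetical field: episode end, seconds from session start

    @property
    def duration_s(self) -> float:
        return self.end_s - self.start_s


def classify(episode: OffTaskEpisode) -> str:
    """Label an episode 'extended' if it lasts longer than 10 s, otherwise 'brief'.

    Assumption: an episode of exactly 10 s is labeled 'brief', because the
    coding rule leaves that boundary unspecified.
    """
    return "extended" if episode.duration_s > THRESHOLD_S else "brief"


# Hypothetical episodes lasting 4.5 s and 25 s
episodes = [OffTaskEpisode(30.0, 34.5), OffTaskEpisode(250.0, 275.0)]
print([classify(e) for e in episodes])  # ['brief', 'extended']
```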

Question-Answering Behaviors

Lastly, students’ question-answering behaviors were coded for the questions related to the digital text (i.e., questions 2–7). Specifically, researchers coded the quality of students’ returns to the text: a return was coded as strategic when the student looked back at the area of interest (AOI) in the text that corresponded with the question.
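
One way to summarize the quality of returns is the share of look-backs that land in the question’s AOI, the kind of percentage reported for individual students in the findings. The sketch below is hypothetical: it assumes each look-back is recorded as a (question, text location) pair and simplifies AOIs to whole paragraphs, whereas the study’s coding drew on video, gaze, and screen data.

```python
from typing import Dict, List, Optional, Tuple


def aoi_return_accuracy(
    returns: List[Tuple[int, str]], aoi_by_question: Dict[int, str]
) -> Optional[float]:
    """Percentage of look-backs whose location matches the question's AOI.

    returns: one (question_number, text_location) pair per look-back
    aoi_by_question: maps each question number to its AOI location
    Returns None when a student never looked back (accuracy undefined).
    """
    if not returns:
        return None
    hits = sum(location == aoi_by_question[q] for q, location in returns)
    return 100.0 * hits / len(returns)


# Hypothetical AOI map and look-backs (illustration only, not study data)
aoi_by_question = {5: "paragraph 3", 6: "paragraph 4"}
look_backs = [(5, "pictures"), (5, "paragraph 3"), (6, "paragraph 4"), (6, "paragraph 1")]
print(aoi_return_accuracy(look_backs, aoi_by_question))  # 50.0
```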

Analysis of How Behaviors Link to Comprehension (RQ2)

To connect students’ reading behaviors to comprehension, we created action path diagrams (Figure 2). These diagrams drew on students’ reading abilities as gauged by NWEA-MAP scores, their reading behaviors (i.e., rereading, tool use, scrolling, embodied tracking, and off-task behaviors), how often they referred back to relevant AOIs while answering questions, and their performance on the relevant questions. For a more detailed description, see appendix E.

Action paths for tool use, scrolling, embodied tracking, off-task behaviors, and rereading are colored by cold-read reading type.

Analysis of Orchestration of Behaviors (RQ3)

Using the findings from the action path diagrams in RQ2, the team selected six focal students for microanalysis of their digital reading and question-answering behaviors to illustrate how students’ orchestration of behaviors did or did not support their comprehension. To represent a range of reading abilities as measured by NWEA, two students from each ability group were selected as contrasting cases of rereading behaviors, digital tool use, and outcomes (students K, L, C, E, H, and J).

After selecting these six focal students, we created a time-stamped log of each student’s cold read and question-answering behaviors (see appendix F for further information). Each log consisted of 5-s intervals noting off-task or on-task behavior, screen actions (i.e., highlighting, mouse movements, and scrolling), eye gaze (i.e., rereading, off text, jumping around, or focused on a specific section of text), and general text location. Visual data reduction (Miles et al., 1994) was then applied to the logs to create visual timescapes (Smith, 2017) of students’ varied orchestration of behaviors, which show screen actions on the bottom row, gaze actions in the middle, and text location on top. The 5-s intervals run horizontally across each timescape, and a key shows the behaviors (see Figures 3–5). For example, highlighting is represented by a yellow bar in the screen-action row. Above each highlight bar are the word(s) highlighted at that moment; lengthy highlights are denoted by their first and last words with an ellipsis between (see appendix A to match them to the text).
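
The interval structure of these logs can be sketched as a simple grouping step. This is a minimal sketch, not the authors’ procedure: it assumes each coded observation has a timestamp in seconds, one of three channels mirroring the timescape rows (screen action, gaze, text location), and a label. All names and example events are hypothetical.

```python
from collections import defaultdict

INTERVAL_S = 5  # width of each log interval, as in the time-stamped logs

# Hypothetical channel names mirroring the timescape rows (bottom to top)
CHANNELS = ("screen", "gaze", "location")


def build_log(events, interval_s=INTERVAL_S):
    """Group (time_s, channel, label) events into fixed-width intervals.

    Returns a dict mapping each interval's start time (s) to
    {channel: [labels coded in that interval]}, ordered by time.
    """
    log = defaultdict(lambda: {ch: [] for ch in CHANNELS})
    for time_s, channel, label in events:
        if channel not in CHANNELS:
            raise ValueError(f"unknown channel: {channel}")
        start = int(time_s // interval_s) * interval_s  # floor to interval start
        log[start][channel].append(label)
    return dict(sorted(log.items()))


# Hypothetical coded events for one reader (illustration only)
events = [
    (1.0, "location", "paragraph 1"),
    (2.5, "gaze", "reading"),
    (6.0, "screen", "highlight"),
    (7.2, "gaze", "rereading"),
    (12.0, "gaze", "off text"),
]
for start, row in build_log(events).items():
    print(f"{start}-{start + INTERVAL_S} s", row)
```

Each key is the start of a 5-s interval, so an empty list means nothing was coded in that channel during that interval; intervals with no events at all are simply absent from the dictionary.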

Timescapes of student E and student C cold read and question answering.

Timescapes of student J and student H cold read and question answering.

Timescapes of student L and student K cold read and question answering.

Findings

Reading Behaviors (RQ1)

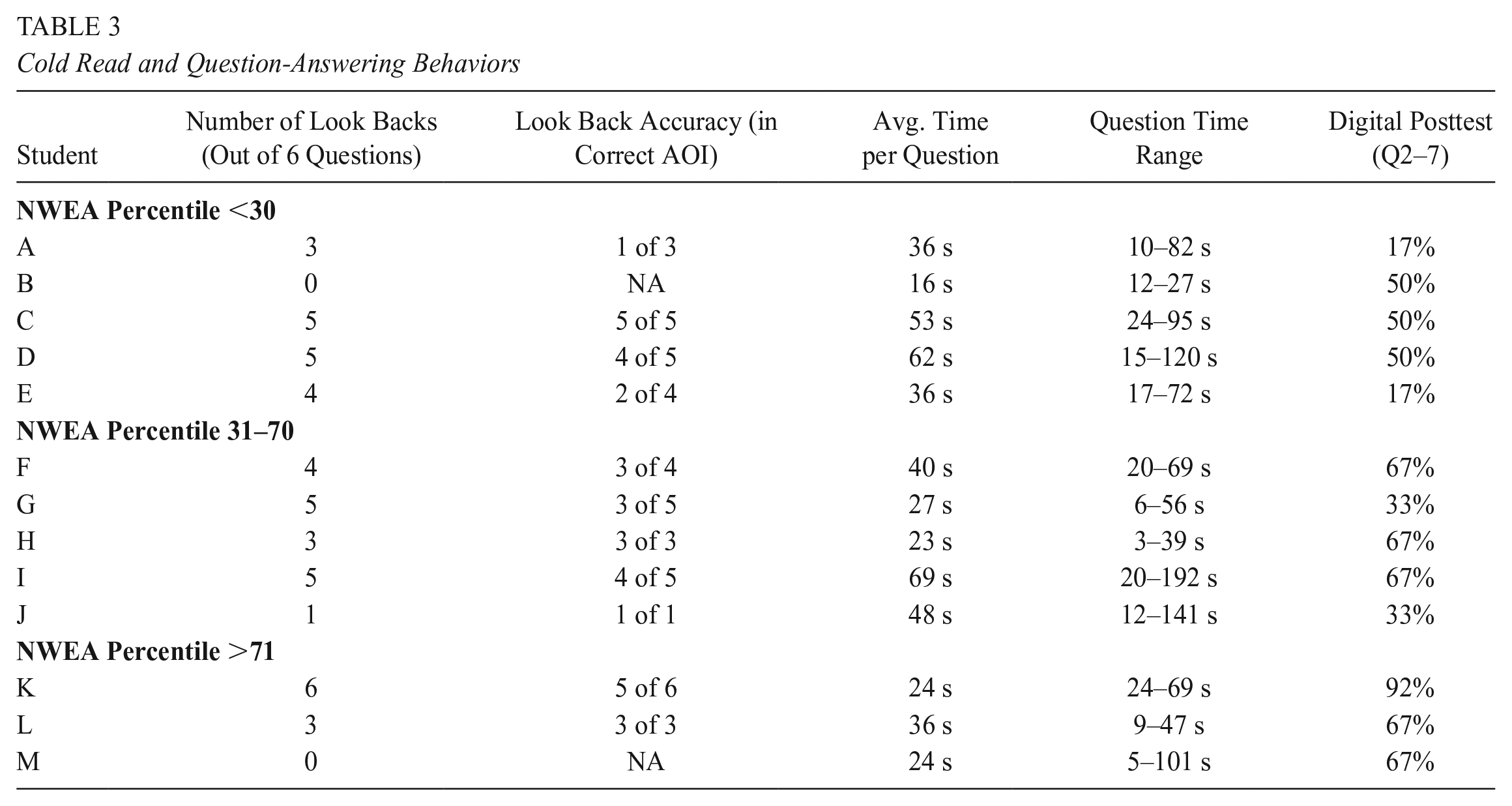

Students displayed a variety of reading and question-answering behaviors within and between ability groups. We describe each behavior and then show a fuller picture of behavior use in Tables 2 and 3.

Cold-Read Behaviors

Cold Read and Question-Answering Behaviors

Rereading Behaviors

Five students read straight through the text with no evidence of rereads. Five others occasionally reread. Four of them (students C, I, F, and K) reread phrases or sentences 2–5 times during the cold read, whereas student H quickly reread at least 16 times. The contexts of these rereads varied. For instance, all of these readers except student K (who had the highest NWEA-MAP percentile ranking of this group) reread the text preceding the string of nonwords. Two rereads seemed to be prompted by other text features: after beginning a quote, student C returned to the words preceding it, while student I looked back and forth between a caption and the corresponding illustrations. Others appeared rooted in context, as when student F went back after a prolonged period of looking offscreen. Still other rereads were tied to functions specific to the digital mode: students C, H, and I reread before using the highlight or define tools, while students C and K reread immediately before or after scrolling.

Three students (A, D, and E) were classified as nonlinear readers. Students A and E frequently scrolled and looked around the text, skipping whole paragraphs and not reading in order. Student D took many long pauses from reading and then returned far back in the text. The occasional rereaders and linear readers included students from all ability groups according to NWEA, while the nonlinear readers had three of the lowest scores.

Tool Use Behaviors

Key facets of any digital reading event are the type and quality of the tools available and how the reader takes them up. Here, none of the 13 students used the annotation or zoom (i.e., magnify screen) tools embedded in the platform. Four students used the highlighter, and two used the define function. Student C highlighted once, marking a paragraph’s topic sentence that was in an AOI. Student L highlighted several names and dates in the first half of the digital passage. Student G highlighted proper noun phrases (e.g., The Declaration of Independence), dates, and the first of the nonwords. We coded these uses of the tool as strategic. Student H, however, highlighted over half of the words in the text in phrase- and sentence-long chunks, which we coded as nonstrategic highlighting. Notably, all of these students except student H appeared to struggle with the highlighting tool to some extent, grabbing larger chunks of text with their cursor before going back to select more precisely. They also highlighted more frequently in the first half of the digital passage (8 of 11 highlights).

Only students A and I used the define tool. Student A mostly looked up relatively low-frequency terms that supported local comprehension (e.g., “propaganda,” “rescinded”) but also occasionally used the tool nonstrategically (e.g., looking up “the” or “Susan”). Student I, on the other hand, clicked on words after rapidly scrolling or moving her mouse, seemingly without enough time to read and strategically select the word she looked up, and often moved on before the definitions loaded. While the efficiency of the digital define function could make it more accessible to students than a print dictionary (e.g., it does not require physically locating a word), these cases illustrate how it can be used for both on- and off-task purposes.

Scrolling Behaviors

Analysis of scrolling indicated that most students treated the functional scroll like a page turn: the majority of students (10/13) scrolled only once or twice as their gaze approached the bottom of the screen. Three of these students made a single large scroll up to reread, perhaps similar to turning back to a prior page. The other three students scrolled more frequently and in seemingly nonfunctional ways. Students A and I appeared to pause their reading and make small scrolls, fidgeting with their fingers or mice and moving the text seemingly too fast to read. Student E made larger scrolls to the beginning and end of the text but also did not appear to read between scrolls.

Embodied Tracking

Four students with varied reading abilities used embodied tracking for more than one paragraph. Student E, from the low-NWEA group, and student L, from the high-NWEA group, moved the mouse pointer to track words as they read. This is reminiscent of using one’s finger as a pointer when reading paper text, yet it is less tactile, as the hand holding the mouse is physically separated from the cursor on the screen. Students G and H, both from the mid-NWEA group, appeared to mouth the words as they read. For all four students, tracking coincided with task engagement and linear reading.

Off-Task Behaviors

Prior research suggests that screens may increase mind-wandering and distraction. In our study, all but three students veered off task at some point during the digital cold reads or question-answering sessions. They looked around the room, tried to read the proctor’s screen, made faces, played with their accessories, fidgeted, defined scandalous words, and tried to manipulate the eye tracker, possibly to spur a reaction from the proctor. These behaviors varied not only qualitatively but also in length, frequency, and the task and time at which they occurred. For instance, students F and J, who had similar reading abilities as measured by NWEA, behaved quite differently. Student F paused reading twice during the cold read to glance around the room or at the proctor’s screen for a few seconds before reengaging with the text, and then remained focused for the rest of the session. Student J, on the other hand, read the digital text without pause but stopped for over four minutes while answering questions to manipulate the eye tracker, make faces, play with his hat, and repeatedly look at the proctor. Overall, seven students engaged in brief breaks (<10 s) and three in extended breaks (>10 s). Students tended to take more breaks later in the passage (three veered off task while reading the first half, five during the second) and during question answering at the end of the session (nine students). Table 3 includes additional details.

Question-Answering Behaviors

Students again varied in their question-answering behaviors. Two students, one from the low-NWEA group and one from the high-NWEA group, answered the questions without looking back at the text; these students also moved through the questions at the fastest rates in the sample. The remaining students returned to the text at some point to search for information to help them answer a question. However, the quality of these returns varied across students. For example, student K looked back at the digital text for every question and returned accurately to an AOI on 83% of those returns, whereas student E looked back four times and was accurately in an AOI on only 50% of his look-backs. Furthermore, some students’ returns were brief: they spent under 30 s on the entire process of answering a question. Others were more extended, with some students spending over a minute, and in one case over three minutes, engaging with the given question, returning to the text, and answering.

How students engaged with the questions also depended on the format and nature of the question. On average, students spent less time (<45 s) on locate-and-recall multiple-choice questions, whose answers could be found directly in the text, than on open-response questions that required more inferential thinking (>60 s). Interestingly, when questions were at similar levels of difficulty (appendix B), students returned to the text at similar rates for inferential and literal questions, whether the questions were multiple choice or open response. For example, question two was an integrate-and-interpret multiple-choice question that asked students to identify the main purpose of the article, while question three was a locate-and-recall open-response question about which constitutional amendment gave women the right to vote in all elections. Both were determined to be easier questions. Despite the differences in format and question type, four students returned to the text for both questions. Yet the outcomes were quite different: only five students answered the integrate-and-interpret multiple-choice question correctly, compared with 12 for the locate-and-recall open-response question.

For more challenging questions, whether locate-and-recall multiple-choice (Q6) or integrate-and-interpret open-response (Q7), students returned to the text at higher rates than for the easier questions, with 9 looking back for question six and 10 for question seven. Intriguingly, students spent almost twice as long on average looking for and attempting to answer the integrate-and-interpret open-response question (Q7; 68 s) as they did on the locate-and-recall multiple-choice question (Q6; 36 s). However, reflecting the difficulty of the questions, only 5 of the 13 students correctly answered question six, and just one received full credit on question seven, which required students to gather information across two sentences and compose a response in their own words.

Connecting Behaviors to Comprehension (RQ2)

Action path diagrams focus on single types of behavior during the cold read (e.g., digital tool use or scrolling) and illustrate the variety of paths students took through this task, within and between ability groups. As shown in Figure 2, most of these diagrams do not suggest a clear benefit of any single behavior. The paths in the tool use, scrolling, embodied tracking, and off-task behavior diagrams tangle together without apparent pattern, showing no link between any specific behavior and increased comprehension. The one exception is reading type, where occasional rereading does appear to be associated with posttest performance; it is therefore discussed separately.

Generally, students appeared to chart different paths to similar levels of comprehension. For instance, student G strategically highlighted dates and proper nouns, student H highlighted over half of the text, and student F, from the same ability group, did not use any digital tools during the cold read; yet all three looked back to the correct AOIs with similar accuracy and answered the same number of questions correctly. Similarly, while student A defined several words and student E did not use the define function at all, both struggled on the posttest. Also, students achieved relative success whether they scrolled only functionally or sometimes nonfunctionally (student H; student I), read with or without embodied tracking (student E; student A), and engaged in extended or brief off-task behaviors or remained engaged the whole time (student E; student A and student I; student H). No single reading behavior, not even rereading (described later), explains students’ comprehension. To understand their performance, we must therefore examine how students orchestrate multiple behaviors while reading and answering questions, and how these moves interact with their overall reading ability and other individual factors.

A different trend emerged when we considered students’ cold-read reading type (see Figure 2). We coded five students as occasional rereaders because they generally read through the text linearly but made some brief regressions in the process. Although these students came from all three ability groups, they all looked back to the correct AOI with high accuracy during question answering and scored as high as or higher than their ability group peers on the relevant questions. Nonlinear reading, on the other hand, was associated with low performance on the assessment. However, because all three nonlinear readers in this sample were from the low-ability group, it is difficult to say whether their cold-read reading behavior influenced their performance or reflected their reading ability and struggle with the text. Also, while many of these instances of rereading could reasonably be expected in paper reading, such as when students went back after encountering the string of nonwords, others were either made possible by the digital platform (e.g., when students scrolled a paragraph down and then reread from the top of the screen, something not possible after turning a page) or occurred in tandem with use of the digital highlighter or dictionary. Hence, the digital mode seemed to play a role in many rereading occurrences and the associated comprehension outcomes.

Combining Behaviors Toward Comprehension (RQ3)

In studying how readers orchestrate multiple behaviors, our findings emphasize the multimodal complexity of digital reading and its links to comprehension. To illustrate this, we discuss the varied behaviors of six readers (see timescapes in Figures 3–5), contrasting, within each reading ability group, two readers who orchestrated their digital reading and question-answering behaviors differently, with varied success.

Low-NWEA Group: Nonlinear Reader With No Tool Use vs. Rereader With Minimal Tool Use

Three of the five students who scored at or below the 30th percentile on the NWEA-MAP were nonlinear readers, meaning they jumped around the text while reading, often skipping paragraphs. Two of these students engaged in extended off-task behaviors and ultimately did not perform well on the posttest. For example, although student E began his reading by tracking the text with his mouse, a quick glance at his timescape in Figure 3 tells the story of a frequently off-task reader, as evidenced by the pink and red segments, which occupy 25% of his cold-reading timescape. Some of these off-task moments were brief looks away from the page; others were extended. At minute 2:20, for instance, student E kept his gaze on the screen but in the margin of the text while intermittently scrolling up and down nonfunctionally at a very fast pace. His gaze reached paragraph five. He then skipped his gaze back through the text, frequently looking at the proctor’s monitor to see how his eye gaze was being picked up. Here, student E played with the eye-tracking technology and did not engage in reading (see minutes 4:10–4:55).

In contrast, student C, who also scored below the 30th percentile on the NWEA-MAP, engaged in very few and only brief off-task behaviors and performed better than expected on this digital reading task (i.e., similarly to one of the high-ability students, 64% vs. 71%). During his cold read, he engaged in rereading and minimal highlighting, the latter scaffolded by the proctor. Student C’s timescape in Figure 3 shows that he remained engaged (even though he read leaning forward with his head resting in his hands); his few off-task moments were brief looks away from the text, after which he quickly refocused and continued his linear progression through the text. Early in paragraph one, student C briefly reread the words “foment a rebellion,” which can be considered central to the text’s main idea that women were fighting for equal voting rights.

The digital nature of the text came to the fore at 2:20, when student C indicated he was done with the digital reading and the proctor scrolled the text to show him the remaining passage. About 30 s later, student C asked the proctor for help with highlighting. The proctor modeled highlighting one word (convention) in paragraph four, and student C then reread and simultaneously highlighted the topic sentence of paragraph four, which is why he was coded as a strategic highlighter.

The differences between students E and C continued in the microanalysis of their question-answering behaviors (Figure 3). Student E, the nonlinear reader, did look back at the digital text when answering questions but did so with little accuracy. For question five, a locate-and-recall multiple-choice question, he looked back at the pictures, paragraph one, and paragraph four, when he needed to look at paragraph three to find the AOI. Student E’s nonlinear reading did not support him enough to return accurately to the relevant information in the digital text while answering questions.

In contrast, student C’s rereading and highlighting did help him return to the text more accurately. For instance, when attempting to answer question six, a challenging multiple-choice question, he quickly read the question and scrolled down right away to paragraph four, which contained the AOI for the question. Once there, his eyes fixated on the AOI. At this point, he did engage in a brief, playful off-task behavior similar to behaviors exhibited by student E during the cold read. However, unlike student E, student C quickly reengaged, selected his answer, and then checked it, moving his gaze from the text back to the answer choices before proceeding to the next question. Although he did not answer this question correctly, he efficiently returned to the correct AOI, which he had reread and highlighted during the cold read.

Mid-NWEA Group: Linear Reader With No Tool Use vs. Rereader With Maximal Tool Use

Variability in digital reading behaviors continued among mid-ability students (Figure 4). For example, during the cold read, student J read the digital text linearly in under 3 min. During his reading he was rarely off task, made a single functional scroll, and treated the task like paper reading, using no digital tools during the cold read. Student H, another mid-ability reader as measured by NWEA, also showed no off-task behaviors during the digital read. However, he used the available digital tools to support active reading throughout a cold read lasting over 5 min. Review of the eye-tracking and screen-action data showed that he used both his mouse and the highlight tool to engage actively in reading and rereading throughout. For example, student H was coded as a nonstrategic highlighter because he highlighted 206 words of the 409-word text (about 50%, including nonwords; see the multiple yellow bars across the timescape in Figure 4), potentially overusing the tool if marking important content was the goal. Yet his embodied tracking with the mouse and his highlighting aligned with moments of rereading. At minute 1:35, for instance, student H highlighted the word abridged in paragraph two and continued reading, tracking the text with his mouse as he moved to paragraph three. He then reread paragraph three as he highlighted its entirety. Next, he returned to paragraph two (minute 2:10) to reread the second-to-last sentence as he selected and highlighted it. He then returned toward the beginning of paragraph two, again rereading and highlighting the text that read “It would take another 144 years before the U.S. Constitution was amended,” before scrolling down to continue reading. As his timescape shows, he continued this pattern of tracking the text with his mouse, rereading, and highlighting for the remainder of his digital reading.

The differences between students J and H continued into the question-answering portion of the task. For instance, student J’s timescape in Figure 4 shows that he was off task for almost 2 min while answering question five, a locate-and-recall multiple-choice question. He spent this time playing with the eye-tracking technology and with his makeshift Krispy Kreme hat, which started out atop his head and ended up broken in his lap. Ultimately, student J markedly underperformed on the comprehension posttest: although his cold read was efficient, he scored 33% on the digital-text questions, the lowest score in the mid-NWEA group and lower than three students in the low-NWEA group.

Conversely, student H, who engaged in mouse tracking, highlighting, and rereading, fared better on the comprehension posttest. On question five, for example, he returned accurately to the AOI in the text, albeit not in the most efficient manner. The timescape in Figure 4 shows that he first returned to both the paper and digital texts in locations that did not contain the AOI. He then scrolled functionally to paragraph three, which contained the AOI, at around the 0:37 mark. He had highlighted the entire paragraph rather than just the AOI sentence. Still, he spent nearly 10 s looking directly at the AOI and then selected the correct answer. Student H even continued to shift his gaze multiple times between the answer choices and the AOI in the digital text, double-checking his answer.

High-NWEA Group: Linear Reader With Prolonged Mouse Tracking vs. Rereader With No Tool Use

Student L read the text linearly, made two functional scrolls, and made four short highlights. She was coded as a strategic highlighter, as she seemed to focus on important names (John Adams, Abigail Adams) and dates (1776). She also highlighted the nonsense words. Her most notable digital reading behavior was embodied tracking with the mouse. As her timescape in Figure 5 shows, she tracked nearly the entire text (up to the end of paragraph four) with her mouse. At that point she seemed fatigued, leaning all the way forward to read paragraph five, the final and longest paragraph, in a mere 20 s. Overall, student L performed adequately on the questions related to the digital text (4/6). However, for question seven, an open-response integrate-and-interpret question whose AOI was in paragraph five, which she had read very quickly without tracking, student L did not return to the text and answered the question incorrectly.

While student L actively used the mouse to track her digital reading, student K did not use any digital tools or even move her mouse. Much like turning a page, she made a single functional scroll, and she took one brief moment to look around before quickly reengaging in the reading task. She actively reread twice. The first reread occurred at the end of paragraph three, around the text “allowed women property owners to vote,” a sentence that may have seemed in tension with the article’s main idea of women fighting for the right to vote. The second occurred at the beginning of paragraph four as she entered a new section with a new heading. Both rereads functioned similarly to paper-reading behaviors.

Student K’s question answering was both efficient and accurate (92% on the digital portion). As seen in Figure 5, student K read question five, scrolled functionally to return to the AOI, and answered the question correctly in under 30 s. During the cold read, she had reread at the end of paragraph three, where the AOI for question five was located.

Discussion

The integration of digital technology into our daily lives has led researchers to ask how the reading medium (i.e., digital screen or paper) shapes reading. We found that even within digital reading, and among readers of similarly measured reading abilities reading a static digital text, there is great variability in reading behaviors and in the quality of those behaviors, both during reading and when answering questions. Additionally, there appears to be no single path to efficient digital-text comprehension, as no single behavior or combination of behaviors was consistently related to comprehension. Rereading, a behavior reflective of effortful processing and active self-regulation, was found to support comprehension, but this finding illustrates the importance of applying an established strategy effectively in a new medium: static digital text. Generally, some students treated the digital text like a paper text, while others took advantage of its digital nature and seemed to experiment with digital tools and practices to support their comprehension. Hence, Leu et al.’s (2013) theory of new literacies, which emphasizes the emerging use and hybridization of multiple literacies and reading practices, was embodied by these fifth graders as they comprehended this digital text. For researchers, practitioners, and theorists, our findings emphasize that there are many paths to comprehension success and that fifth-grade students lean on established (paper) reading skills and new (digital) reading skills to different degrees when reading static digital texts.

Linearity and Rereading

A key question in the literature is whether digital text changes the nature of reading, especially its linearity, given that many digital tasks, from social media posts to more open digital reading tasks, include hyperlinks and hence involve moving among sections of different texts. The concern is that students may bring these practices into digital-text reading even when the text is static and contains no hyperlinks, thereby affecting processing and comprehension (Halamish & Elbaz, 2020; Ronconi et al., 2022). Our study shows that fifth graders read digital text in different ways, including linearly, linearly with occasional rereading, and nonlinearly. For static digital reading, we found that linear reading, with or without occasional rereading, appeared most supportive of reading comprehension and was more typical of students with proficient reading abilities, as the nonlinear readers were low-ability readers who generally seemed to struggle with this digital reading task.

In line with prior work (e.g., Chevet et al., 2022; Singer Trakhman et al., 2018), we found rereading to be beneficial for students’ comprehension during static digital reading. Importantly, regardless of students’ initial reading ability as measured by standardized tests, rereading seemed to help students both comprehend the text and return more accurately to its essential parts when answering text-dependent questions after reading (see students C, F, H, I, and K in Table 2). This suggests that rereading may be an important part of students’ active reading (Duke & Cartwright, 2021). Additionally, students can enact rereading of a digital text in different ways. Among our six focal students, one rereader used no tools or mouse tracking (student K), another used the tools and mouse tracking minimally (student C), and a third engaged in nearly continuous mouse tracking and highlighting to support his rereading (student H). That said, rereading is not a prerequisite for success, as students M and L read linearly without rereading and performed rather well (4/6 questions correct). Teachers may want to encourage rereading as an active self-regulation strategy for moments when comprehension is less clear. For example, student K, a strong reader, reread and performed the best on the posttest (5.5/6). Her rereading occurred where the text stated that women property owners previously did have the right to vote, a potentially confusing passage in a text mainly about women fighting for the right to vote. Also, the various forms of digital rereading suggest that researchers and teachers may want to investigate and support rereading in ways that match students’ technical and reading abilities. Consider student C, who needed proctor assistance with both scrolling and highlighting: asking him to highlight more frequently might impose an undue cognitive load that could impair his comprehension. By contrast, student H seemed to have stronger technical skills and was able to highlight frequently while rereading without apparent cost to his comprehension. Moreover, strong reading ability, such as student K’s, may remove the need to use any tools at all to support active (re)reading of digital texts.

Strategic Reading and Expected and Subverted Tool Use

Our study advances understanding of tool use by investigating strategic, expected, and subverted tool use. First, our close review of the literature indicated that digital reading may require readers to be more strategic in their reading behaviors to compensate for the increased difficulty of reading digitally (on screens, including static texts) compared with reading on paper (Coiro, 2021). Our analysis did not connect strategic use of behaviors with comprehension of the static digital text because there seemed to be many different paths to comprehension, including paths that looked similar to paper reading and paths that involved digital tool use. Generally, performance on the static digital reading task was highly correlated with students’ scores on the reading test used by our partner district, suggesting that students need only be as strategic as the static digital reading task requires. For some strong readers, such as student M, who used no tools or embodied tracking yet scored 71% overall, reading a text at a Lexile level challenging for fifth graders may require fewer strategic behaviors than more open tasks such as reading on the internet or reading digital texts with hyperlinks. Hence, the availability of a screen and digital tools did not by itself mean that the fifth graders engaged in strategic actions or that strategic actions were connected to comprehension.

Our analysis of tool use reinforces Coiro’s (2021) call to consider task, text, reader, and context, as our qualitative analysis showed that students used or did not use various tools and strategies depending on their needs and on each tool’s constraints. There was variability in how the tools were used. Importantly, no single tool was linked to comprehension success (see first action path of Figure 2). Two meta-analyses have concluded that digital tools can support readers’ comprehension, giving digital reading an advantage over paper (Clinton-Lisell et al., 2023; Schwabe et al., 2022). However, tool use can be complicated: digital dictionaries or highlighters could scaffold student success but could also distract students or be difficult to use. Highlighters, in particular, could be used either to color a page or to identify key text content. Instances of students selecting large portions of text with the highlighter and then adjusting the selection suggest that the tool may have been cumbersome. Relatedly, the lower frequency of highlights in the second half of the digital passage (3 of 11 highlights) may reflect the fifth graders abandoning the difficult tool. Meanwhile, zooming or scrolling could make it harder for students to map the text or could serve as active reading support, perhaps helping students focus on important sections. A major finding of this study was how minimally the digital tools were used, with the zoom, dictionary, and annotation tools used sparingly or not at all.

In line with the new literacies perspective (Leu et al., 2013), most of what we observed seemed similar to what we might observe with paper reading, as few students took advantage of the affordances of the digital text: most students scrolled only functionally, when they needed to access more text; few used the highlighter, the dictionary, or the mouse (to track); and most took quick breaks and then promptly returned to the task at hand. This may be because reading static digital texts to answer questions resembles paper tasks the students routinely complete, so they tend to apply paper-reading strategies. Alternatively, the digital tools may have been less efficient than their paper equivalents: the dictionary took time to load definitions, and students showed frustration with the highlighting tool because selecting exactly the text they wanted was difficult. As many students repeatedly tried to grab text, their effortful processing seemed to focus on the tool rather than on the content being highlighted. Hence, for some of these fifth graders, the digital affordances in our study did not seem to provide effective scaffolds, perhaps because the tools themselves seemed distracting or effortful, as prior work has also found. Educators likely need to provide explicit instruction on how to use digital tools purposefully to support comprehension while reading a digital text, even for young readers who have grown up in the digital age.

Moreover, when the team began to code for the quality of students’ tool use, we started with preconceived definitions of how readers would enact strategic tool use. For highlighting, we drew on prior work that considered the content and amount of highlighting and identified effortful, less frequent highlights as predictors of comprehension (Goodwin et al., 2020); accordingly, we defined strategic highlighting as brief highlights of central ideas or important facts that readers could use to assist synthesis and recall. Using this operationalization, we found that two students did use the highlighting tool in this way, and, consistent with prior work, it seemed to support their comprehension. For instance, one high-ability reader (student L) highlighted names and dates in the digital text and ultimately answered most of the questions related to the digital text correctly. Similarly, as student C’s timescape shows, he highlighted the topic sentence of the fourth paragraph, which helped him return accurately to the text when answering a question related to that AOI.

However, when we shifted our focus from the content and amount of highlighting to the process of highlighting, we found that other students subverted the expected use of the tools to support their active reading. For instance, student H was originally coded as a nonstrategic highlighter because he highlighted a large percentage of the digital text. It seemed, however, that he used the highlighting tool to reread the text strategically and actively. Although this behavior produced highlights that could not reasonably aid him in targeted recall, it did seem to support his overall comprehension of the text. Student H performed as well as the high-ability readers on the questions related to the digital text. This suggests that his subverted, “nonstrategic” use of the highlighting tool helped him actively read and construct meaning from the text. Taken together, neither expected nor subverted tool use during digital reading appears inherently better or worse; rather, each seems to function differently for different readers.

For researchers studying digital reading behaviors, this suggests a need to reconsider how the use of digital reading tools is conceptualized. The field may need to revise and expand its definitions of digital tool use to include nonstandard uses that support the active digital reading process, such as those seen with student H. Similarly, for teachers, there may not be a single best practice for how students should use digital reading tools. Rather, teachers may want to explicitly model multiple uses of a single digital reading tool. Teachers could then have students actively reflect on their own reading process, so that students consider whether certain tools are useful for them and how those uses may depend on the digital text and task. For instance, if students have substantial background knowledge on a topic, using the highlighter to emphasize new, important words may be helpful, whereas if they are new to the topic, using the highlighter to read and reread actively may be more helpful as they first engage with the text.

Mind-Wandering and Off-Task Behaviors

In the literature, straying off-task or letting one’s mind wander has been found to occur more often during digital reading than paper reading and can harm students’ ability to retain and comprehend what they have read (Delgado & Salmerón, 2021; Jian, 2022). We did observe that most students engaged in off-task behaviors during the digital reading task. These behaviors differed in kind, length, and frequency, as well as in the task and time at which they occurred, all of which are important considerations for digital reading. The two students who engaged in no off-task or mind-wandering behaviors fared well in comprehension, scoring 71% and 79%. Scores for four students who engaged in brief off-task behaviors ranged from 64% to 89%. In contrast, students who engaged in extended mind-wandering or off-task behaviors fared poorly, with most scoring around or below 50%.

Critically, though, our study highlights the importance of the nature of off-task behaviors. Aligning with literature suggesting that short mind-wandering episodes might be facilitative (Mills et al., 2021), we found that students seemed able to nudge themselves back on task after brief off-task behaviors, and for more than half of the students who engaged in brief off-task behaviors, these episodes did not seem to harm their overall comprehension or assessment performance. It may be that in the digital domain, for some readers, mind-wandering and refocusing are part of active reading (Duke & Cartwright, 2021). Hence, practitioners should attempt to prevent prolonged off-task behaviors, as these co-occurred with poorer comprehension in our study. However, practitioners may want to model active reading strategies for nudging oneself back on task, thereby normalizing brief mind-wandering as part of the active reading process.

Limitations and Future Directions

Reading behaviors have been explored in various ways, including through correlational, observational, and think-aloud or questioning protocols. Because we emphasized natural reading and hence observation of behaviors (rather than asking students to explain their behaviors), our findings stemmed from inferences the research team made about students’ behaviors after triangulating the multimodal sources of data. At times, students who wore glasses or who did not face the camera directly while reading (i.e., students who rested their head on their hand or leaned significantly forward) had incomplete eye-tracking data. At other times, the gaze tracking seemed inconsistent, requiring the research team to watch the video together and use other contextual information to approximate where the student was looking in the digital text. Future studies should explore alternative methods of gathering data on reading behaviors. These could include newer eye-tracking tools, such as Tobii eye-tracking glasses, which are worn by the reader and can therefore capture students’ gaze more reliably regardless of body position. Alternatively, researchers could add a reflective think-aloud interview at the end of the session to capture what students were doing and why.

Another important avenue for future work is mixed-methods analyses that combine the behaviors noticed in this qualitative analysis with quantitative coding at scale. Machine learning and advanced psychometric techniques informed by qualitative analyses like ours could enable investigation of reading behaviors such as rereading or digital tool use at scale (e.g., in our full sample of N = 371; see Goodwin et al., 2020). Such mixed-methods and multimodal inquiry can allow researchers to make scalable inferences about digital reading processes without losing sight of the complex behaviors, tools, and strategies students orchestrate in the moment. Here, future work needs to consider the broader digital continuum of reading (i.e., bound to free; Coiro, 2021), as well as how students’ digital reading processes are influenced by different genres (e.g., nonfiction, persuasive), kinds of tasks (e.g., multimodal essay responses), and settings (e.g., at scale in classrooms or when students collaborate). This is essential because our findings suggest that students’ digital reading processes vary across readers, even when they engage with a static digital text and clear-cut comprehension questions. This work adds to the growing digital reading literature (Alexander, 2020; Coiro, 2021) by showing the varied pathways to comprehension among fifth graders reading a static digital text and by adding a multimodal lens that considers multiple behaviors and tools. These nuances inform theory, research, assessment, and instructional practice as we continue to navigate the digital era.

Appendix

Questions 2–7 Content and IRT Difficulty Measurements

| # | Question | Format | Task | IRT Difficulty |
|---|---|---|---|---|
| 2 | What is the main purpose of the article? | MC | Integrate & interpret | −0.39 |
| 3 | What was the constitutional amendment that gave women the right to vote in all elections? | OR | Locate & recall | −1.09 |
| 4 | About how many years did it take after the Declaration of Independence for women to earn the right to vote in every state? | MC | Locate & recall | −0.22 |
| 5 | According to the article, women in New Jersey in the 1700s could | MC | Locate & recall | 0.07 |
| 6 | According to the article, what was most surprising about the “Womanifesto”? | MC | Integrate & interpret | 0.41 |
| 7 | When Elizabeth Cady Stanton wrote the Womanifesto, why did she demand equal voting rights rather than other rights like property ownership? | OR | Integrate & interpret | 0.67 |

Note. MC = multiple choice; OR = open response; IRT = item response theory. IRT difficulty was calculated based on all questions (Q2–Q15) and our full sample (N = 371). Lower (negative) values indicate easier items, whereas higher (positive) values indicate harder items.
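To make the difficulty scale more concrete, the sketch below converts each difficulty into the probability that a reader of average ability answers the item correctly. This is an illustration under stated assumptions, not the study's scoring procedure: the appendix does not name the IRT model that was fit, so the sketch assumes a one-parameter logistic (Rasch) model, treats every item as dichotomous (even though open-response items may have received partial credit), and uses a hypothetical ability value of theta = 0 on the same logit scale as the difficulties. The names `ITEM_DIFFICULTY` and `p_correct` are illustrative only.

```python
import math

# Hypothetical illustration: assumes a one-parameter logistic (Rasch) model,
# which the appendix does not confirm. Difficulties (b) are copied from the table.
ITEM_DIFFICULTY = {
    "Q2": -0.39,
    "Q3": -1.09,
    "Q4": -0.22,
    "Q5": 0.07,
    "Q6": 0.41,
    "Q7": 0.67,
}


def p_correct(theta: float, b: float) -> float:
    """Rasch probability of a correct (dichotomous) response.

    theta: reader ability on the logit scale (a hypothetical value here)
    b: item difficulty on the same logit scale
    """
    return 1.0 / (1.0 + math.exp(-(theta - b)))


if __name__ == "__main__":
    theta_average = 0.0  # assumed reader of average ability
    # List items from easiest (most negative b) to hardest (most positive b).
    for item, b in sorted(ITEM_DIFFICULTY.items(), key=lambda kv: kv[1]):
        p = p_correct(theta_average, b)
        print(f"{item}: b = {b:+.2f}, P(correct | theta = 0) = {p:.2f}")
```

Under these assumptions, an item whose difficulty equals the reader's ability is answered correctly half the time, items with negative difficulty are answered correctly more than half the time by an average reader (about 0.75 for Q3), and items with positive difficulty less than half the time (about 0.34 for Q7), which matches the note's reading of low values as "easier" and high values as "harder."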

Appendix

Original Codebook for RQ1

| Code | Description |
|---|---|
| Zooming | Uses the mouse or touch screen to enlarge or zoom in or out of digital text |
| Annotating | Clicks and types a note in the digital text |
| Highlighting | Grabs and highlights the text |
| Scrolling | Scrolls up and/or down to continue reading |
| Mouse tracking | Moves mouse under words while reading |
| Mouse movement | Moves mouse around the page (not tracking) |
| Rereading occurs | Student skips back in the text to read again |
| No rereading | Student never skips back in the text to read again |