Abstract

We tested a specific theoretical assumption of a learning trajectories (LTs) approach to curriculum and teaching in the domain of early length measurement. Participating kindergartners (

Keywords

Learning trajectories (LTs) in early mathematics curriculum development and teaching have received increasing attention (Baroody et al., 2019; Clements, 2007; Clements & Sarama, 2021; Maloney et al., 2014; Sarama & Clements, 2009). For example, LTs were a core construct in the National Research Council (2009) report on early mathematics education (subtitled “Paths toward excellence and equity”) and the notion of levels of thinking was a key first step in the writing of the Common Core State Standards—Mathematics (National Governors Association Center for Best Practices, Council of Chief State School Officers, 2010). However, little research has directly tested the specific contributions of LTs to teaching compared with instruction provided without LTs (Frye et al., 2013). The goal of the present study was to compare the learning of kindergarteners who received instruction on length measurement following an empirically validated LT to those who received an equal amount of time on the same instructional activities that were not sequenced along the LT’s developmental progression.

Theoretical Framework and Background

Learning Trajectories: Definition and Assumptions

Our theoretical framework is hierarchic interactionalism (Sarama & Clements, 2009). This term reflects the influence and interaction of global and local (domain specific) cognitive levels and the interactions of innate competencies, internal resources, and experience (e.g., cultural tools and teaching). LTs synthesizing these interactions stand at the core of this theory. Different fields name similar constructs differently, such as the use of “learning progressions” in science education.

The structure of LTs is built on the assumption that to be optimally useful to educators, LTs must include and integrate educational standards, empirical research on how children think and learn, as well as teaching strategies (Baroody et al., 2004; Carnine et al., 1997; National Research Council, 2007; Steedle & Shavelson, 2009). Therefore, we define an LT as having three components: a goal, a developmental progression that describes levels of thinking, and instructional activities (including curricular tasks and pedagogical strategies) designed explicitly to promote the development of each level (Clements & Sarama, 2004; Maloney et al., 2014; National Research Council, 2009; Sarama & Clements, 2009). Definitions of LTs and learning progressions can differ, with some including only developmental progressions and others also including a sequence of instructional activities. That is, the uniqueness of this view of the LTs construct stems from the inextricable interconnection between the components.

Goals are based on the structure of mathematics, societal needs, and research on children’s thinking about and learning of mathematics and require input from those with expertise in mathematics, policy, and psychology as well as educators (Clements, Sarama, & DiBiase, 2004; Fuson, 2004; Sarama & Clements, 2009; Wu, 2011). Descriptions of the other two components of LTs require more details about hierarchic interactionalism (Sarama & Clements, 2009). Consistent with Vygotsky’s (1935/1978) construction of the zone of proximal development (ZPD), hierarchic interactionalism posits that most content knowledge is acquired along developmental progressions, or levels of thinking within a specific topic that are consistent with children’s informal knowledge and patterns of thinking and learning. Each level is more sophisticated than the last and is characterized by specific concepts (e.g., mental objects) and processes (mental “actions-on-objects”) that underlie mathematical thinking at level n and serve as a foundation to support successful learning of subsequent levels. However, these levels are not stages but probabilistic patterns of thinking through which most children develop (e.g., an individual may learn multiple levels simultaneously; Sarama & Clements, 2009).

Hierarchic interactionalism also posits that teaching based on those developmental progressions is more effective, efficient, and generative for most children than learning that does not follow these paths. Thus, each LT includes a third component, recommended instructional activities corresponding to each level of thinking. That is, based on the hypothesized, specific, mental constructions (mental actions-on-objects) and patterns of thinking that constitute children’s thinking, LTs include instructional tasks explicitly designed to include external objects and actions that mirror the hypothesized mathematical behavior of children as closely as possible. These tasks are sequenced, with each corresponding to a level of the developmental progression, to complete the hypothesized learning trajectory. Such tasks will theoretically constitute a particularly efficacious educational program. However, there is no implication that the task sequence is the only path for learning and teaching; only that it is hypothesized to be one fecund route. In sum: LTs are descriptions of children’s thinking and learning in a specific mathematical domain, and a related, conjectured route through a set of instructional tasks designed to engender those mental processes or actions hypothesized to move children through a developmental progression of levels of thinking. (Clements & Sarama, 2004, p. 83; Sarama & Clements, 2009, provides a complete description of hierarchic interactionalism’s 12 tenets.)

The goals and developmental progressions for many topics have been supported and validated by theoretical and empirical work describing consistent sequences of thinking levels. However, the amount of empirical support differs for different topics and ages (Confrey, 2019; Daro et al., 2011; Gravemeijer, 1994; Maloney et al., 2014; National Research Council, 2009), especially in domains such as the approximate number system and subitizing (e.g., Clements, Sarama, & MacDonald, 2019; vanMarle et al., 2018; J. J. Wang et al., 2016), counting (e.g., Fuson, 1988; Purpura et al., 2013; Spaepen et al., 2018), and arithmetic (e.g., Hickendorff et al., 2010). Furthermore, developmental progressions as curricular guides (e.g., D. M. Clarke et al., 2001) and complete LTs (i.e., Clements et al., 2011; Clements & Sarama, 2008) have been successfully applied in early mathematics intervention projects, with significant effects on teachers’ professional development (B. A. Clarke, 2008; Kutaka et al., 2016; Wilson et al., 2013) and children’s achievement (D. M. Clarke et al., 2001; Clements & Sarama, 2008; Kutaka et al., 2017; Murata, 2004; Wright et al., 2006).

Learning Trajectories: Empirical Evidence

Despite this research foundation, there are few studies that directly test the theoretical assumptions and specific educational contributions of LTs. That is, most studies showing positive results of LTs confound the use of LTs with other factors (Baroody & Purpura, 2017; Frye et al., 2013), thus suggesting the use of LTs yields benefits, but without identifying their unique contribution (D. M. Clarke et al., 2001; Clements et al., 2011; Clements & Sarama, 2007; Fantuzzo et al., 2011; Gravemeijer, 1999; Jordan et al., 2012). For example, preschoolers who experienced a curriculum specifically designed on LTs demonstrated (a) significantly greater growth in mathematics competencies than those in a business-as-usual (BAU) control group (effect size = 1.07) as well as (b) greater growth than those who experienced an intervention using a research-based curriculum that followed a sequence of mathematically rational topical units (effect size = 0.47; Clements & Sarama, 2008). Given that the contents of the two curricula were closely matched, the latter difference may be due to the use of LTs (e.g., the developmental progressions of the LTs provided benchmarks for formative assessments, especially useful for children who enter with less knowledge). However, the two curricula also differed in organization (e.g., interwoven counting, arithmetic, geometry, and patterning LTs vs. separate units on these topics) and in specific activities. Therefore, again, several factors were confounded with the use of LTs and thus the specific effects of LTs could not be distinguished (Clements & Sarama, 2008).

Testing the Theoretical Assumptions of a Learning Trajectories Approach

The present study is one of multiple experiments rigorously evaluating whether instruction based on LTs for early mathematics (Baroody et al., 2019; Clements & Sarama, 2021; Frye et al., 2013; National Research Council, 2009; Sarama & Clements, 2009) is significantly more efficacious than alternatives. To do so, we need to avoid confounding the essential elements of a learning trajectory with the myriad characteristics of curricula based on LTs. Therefore, we distilled two of the main characteristics of LTs that distinguish their application to curriculum and teaching from alternative pedagogical approaches and designed experimental conditions to rigorously test the efficacy of those two unstated assumptions.

The first assumption is that instruction should move children from their present level to the next higher level and continue in this manner until the instructional goal is reached. A competing approach posits that it is more efficient and mathematically rigorous to teach the target level immediately by providing accurate definitions and demonstrating accurate mathematical procedures (see Bereiter, 1986; Wu, 2011), potentially obviating the need for slower movement through each level. There is evidence supporting this approach to learning (Borman et al., 2003; Carnine et al., 1997; Clark et al., 2012; Gersten, 1985; Heasty et al., 2012), although the research designs often do not include other research-validated approaches. In contrast, LT approaches justify the assumption that each contiguous level be taught consecutively because LTs’ developmental progressions are more than linear sequences based on accretion of numerous facts and skills. Each is based on a progression of levels of thinking characterized by specific mental actions-on-objects that serve as a foundation for successful learning of all subsequent levels. We have tested this assumption in a series of studies that support the LT approach, with children exhibiting significantly greater learning than those taught at the target level for the same amount of time, including performing higher on target-level items, particularly those with low entry knowledge (Clements, Sarama, Baroody, & Joswick, 2020; Clements, Sarama, Baroody, Kutaka, et al., 2020; Clements, Sarama, Baroody, et al., 2019).

The second assumption of an LT approach is that there is a definite sequence of such levels of learning and teaching that is determined by research-based developmental progressions and that instruction is more efficacious if it builds each level in turn. Postulating that each level of thinking builds hierarchically on the concepts and processes of the previous levels stands in contrast to some traditional early childhood curricular organizations: theme, project, and emergent approaches (Broderick & Hong, 2020; Edwards et al., 1993; Helm & Katz, 2016; Hendrick, 1997; Katz & Chard, 2000; Tullis, 2011). In these approaches, it is the classroom theme (e.g., “colors”), or a project (visiting an apple orchard and making applesauce or building a bus when children expressed interest in buses spontaneously, reflecting the emergent curriculum approach) that determines the ordering of activities. For example, if the theme is colors, children are asked to sort by color; if it involves apples, children might count the seeds in an apple or cut them and talk about “halves.” Thus, the activity is chosen for its fit to the classroom work, which is ostensibly more meaningful and connected for the child and thus will lead to greater learning. The critical question of “Which approach, LT, mathematical-relational, or traditional, results in better mathematical outcomes for preschool children?” has yet to be answered causally. We believe the benefits of LTs’ sequencing of levels of thinking outweigh the benefits of this type of “integration” of math with other themes (and the general philosophy that all early education events should emerge from children’s choices). The present study is the first experiment to focus on the second assumption.

Present Study

To evaluate the second, instructional sequence-of-levels assumption, with controlled conditions, we selected the length measurement learning trajectory. Early length measurement is important in itself and serves as a critical bridge between geometry and number concepts (Clements & Sarama, 2021; Sarama & Clements, 2009). Research on the development of length-measurement knowledge suggests that young children enter school with a basic idea of how to use rulers and can verbally list or draw their attributes (e.g., lines and numbers; MacDonald & Lowrie, 2011). However, aligning an object at zero and reading the measure on a ruler does not mean that children understand how or why a ruler works. In fact, young children have trouble understanding the relationship between units and how iteration (accurate, repeated placement of a single unit) of discrete standard and nonstandard units produces measures (Lehrer, 2003; National Research Council, 2007). Thus, early measurement is important, but often taught badly, indicating a need for practice-based evidence (Bryk, 2015; Clements & Sarama, 2021).

Instructional Conditions

To test the significance of the instructional sequence-of-levels assumption in the context of an experimental study, the primary experimental group (LT) received one-on-one instruction that followed an empirically validated learning trajectory for length (Barrett et al., 2011, 2017; Sarama et al., 2011, 2021; Szilagyi et al., 2013). We compared growth in their length-measurement knowledge to two counterfactual groups. The secondary experimental condition, reverse-order (REV) condition, provided one-on-one instruction that did not follow the order of the developmental progression. Instruction in this condition instead consisted of the same activities as the LT group, which covered all levels of the trajectory but sequenced in the reverse order of the learning trajectory. This order stood in for the aforementioned traditional approaches for three reasons. First, the traditional approaches would take place over a full year, well beyond the study’s time frame, and still would not necessarily include all of the LT activities, which a rigorous test required. Second, the design also required a consistent, well-specified order (a random order would likely mix LT- and non-LT-sequences, as well as cover multiple topic strands). Third, studies suggest that attempting to solve challenging problems first is beneficial, as productive failure can help children make sense of the goals of subsequent instructional sessions (see discussions in Kapur, 2010; Loehr et al., 2014), providing a rationale for the efficacy of the REV condition and thus its usefulness as a counterfactual (albeit direct instruction following failure was not provided). The BAU condition did not receive any one-on-one instruction; of course, they continued with their classroom curriculum as did all children in all groups. This curriculum did not teach length during the semester of the study, so the BAU group served as a nontreatment control for statistical purposes.

What Can We Learn From These Comparisons?

Comparing BAU growth to that of the LT and REV groups enables us to draw two categories of conclusions. First, we can confirm that “no harm” was done to student learning through pull-out participation in the LT and REV instructional sessions. Second, we can observe whether the LT length-measurement instructional activities benefit learning in the fall of kindergarten, which necessitated the no-treatment group comparison. This is a valid question, especially because, even with instruction, children progress slowly across grades (Barrett et al., 2017).

Comparing the two experimental conditions addresses the main research question, which is to clarify which characteristics of the LTs are “active ingredients” (vs. “inert,” Bell et al., 2013) to more clearly describe their contributions to teaching and learning. To this end, we use a dismantling design. In such studies, a full “treatment package” is compared with a (dismantled) treatment condition with the hypothesized active component removed. If the full treatment (instruction for the LT condition) is found to be more effective than the dismantled treatment (instruction for the REV condition), the component that was removed (the order of the LT sequence) can be described as an active ingredient of treatment (Bell et al., 2013). Because the children in the REV group received the same activities (unlike our previous studies addressing the first assumption of LTs), we can attribute any differences in learning to the second assumption: instruction is more efficacious if it supports children to learn each LT level in sequence. Thus, we examine the following research question: Does instruction aligned with an LT’s sequence result in greater learning than instruction that uses an LT’s sequence in reverse order?

Method

We used a randomized controlled trial to compare the three experimental groups: the intervention LT group, the REV group, and the BAU group.

Sample

The intervention took place in a large, urban school district in a Mountain Range state. This school district is racially/ethnically diverse: 53.8% Latinx, 24.7% White, 13.2% African American, 3.2% Asian, 0.7% American Indian, 0.4% Native Hawaiian or Other Pacific Islander, and 4.1% respondents who identified as having two or more races. Additionally, 65% of students qualify for free-/reduced-lunch and 36.3% are English language learners. Preassessments were administered to 187 students; two students moved before the posttest, so 185 students were administered the postassessment.

Recruitment Process

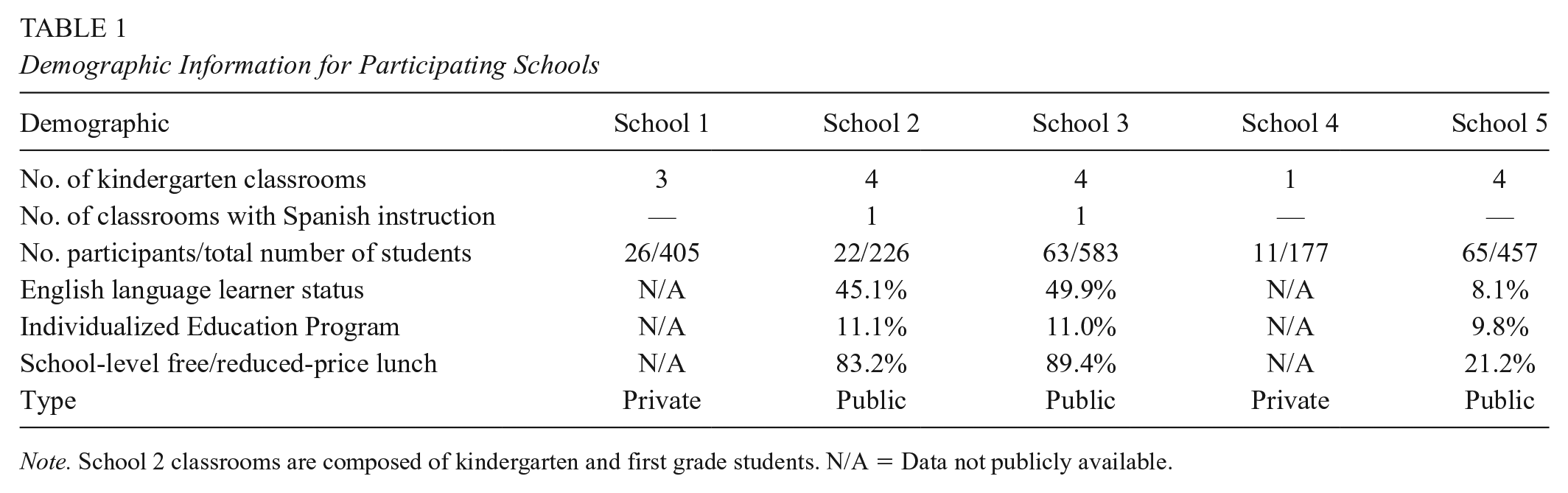

Prior to our recruitment, the proposed study was submitted to the district external research review board. On approval, we reached out to multiple elementary schools that had more than one kindergarten classroom. Five school principals and their kindergarten teams agreed to host the study. Participating schools were offered professional development on early mathematics by the principal investigators of the project, as well as given the instructional materials at the end of the intervention. Table 1 contains basic demographic information of each site.

Demographic Information for Participating Schools

Note. School 2 classrooms are composed of kindergarten and first grade students. N/A = Data not publicly available.

Prior to the study, graduate student instructors volunteered on at least two separate occasions in the participating teachers’ classrooms for two reasons. First, we wanted to be seen as friendly adults by students. Second, it was the beginning of the academic year and teachers were still in the process of establishing classroom norms, expectations, and routines. We wanted to make sure that we set and communicated behavior expectations consistent with each classroom teacher.

Randomization and Assignment to Experimental Condition

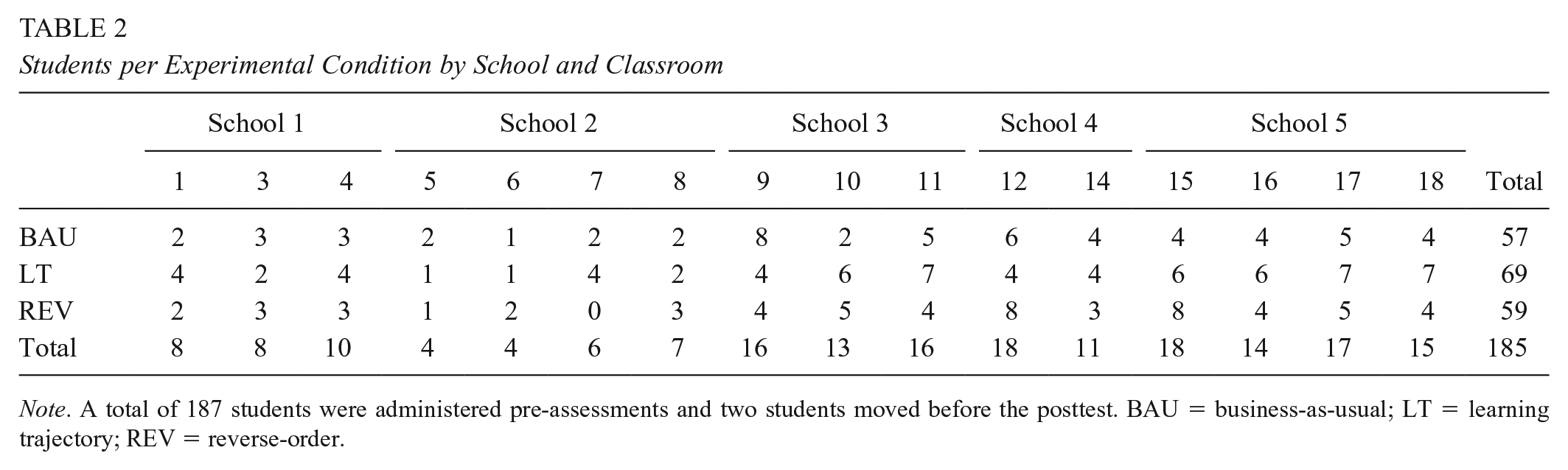

The randomization process began in August 2019 and was completed in September 2019. Within each school, teachers and the research leadership team agreed to the following recruitment and randomization plan. Teachers and the instructional team members worked together to collect as many parental consent forms as possible. Once the teacher confirmed that we had as many signed permission forms as we could reasonably expect, we created a list of these children. We used a random number generator to assign each student a number from 0 to 1,000 to reorder the class list. Next, using the reordered class list, we used another random number generator, which assigned a 1, 2, or 3 to each student. Students who were assigned a 1 were put in the LT group, 2 in the REV group, and 3 in the BAU group. Frequency analyses were run to ensure that baseline levels of knowledge at preassessment (see details in “Assigning Starting Points for Instruction”) were distributed evenly among conditions for the whole sample. Table 2 lists the number of students per experimental condition by classroom and by school.
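The two-step procedure described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `randomize_class`, the placeholder child labels, and the seed are hypothetical, and the study does not report which random number generator was used.

```python
import random

# Condition codes as described in the text: 1 = LT, 2 = REV, 3 = BAU.
CONDITIONS = {1: "LT", 2: "REV", 3: "BAU"}


def randomize_class(students, seed=None):
    """Reorder a consented class list by random keys, then draw a condition per child.

    Hypothetical helper for illustration; not the authors' actual tooling.
    """
    rng = random.Random(seed)
    # Step 1: give every child a random number from 0 to 1,000 and sort by it.
    keyed = [(rng.randint(0, 1000), name) for name in students]
    reordered = [name for _, name in sorted(keyed)]
    # Step 2: draw a 1, 2, or 3 for each child in the reordered list.
    return [(name, CONDITIONS[rng.randint(1, 3)]) for name in reordered]


class_list = ["child_a", "child_b", "child_c", "child_d", "child_e", "child_f"]
assignments = randomize_class(class_list, seed=2019)
```

Because each child receives an independent draw, group sizes are not forced to be equal, which is consistent with the unequal condition sizes reported for the sample.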

Students per Experimental Condition by School and Classroom

Note. A total of 187 students were administered preassessments and two students moved before the posttest. BAU = business-as-usual; LT = learning trajectory; REV = reverse-order.

English Language Learners

As can be seen in Table 1, there were two classrooms (one in School 2 and another in School 3) where Spanish was the primary language of instruction. In these classrooms, assessment and instruction were administered by two bilingual instructors and one assessor proficient in Spanish. Additionally, there were three Mandarin-speaking students at School 5 who were new to the country. For these students, assessment and instruction were administered by one bilingual instructor. The assessment and instructional materials for the Mandarin speakers were translated in advance but did not go through the translation and back-translation process like the Spanish assessment materials.

Experimental Conditions

Students in the LT and REV experimental conditions received ten 12-minute instructional sessions (120 minutes), while the BAU did not receive one-on-one instruction. The data collection period began the first week of September and ended the first week of December. Each LT and REV student had, at minimum, one instructional session per week. However, the pull schedule varied by classroom and reflected the preferences of the teacher. For example, one teacher requested that her students be pulled no more than one time per week, while another teacher in the same school wanted us to pull her students at least twice per week. As per our agreement with school leadership and teachers, we did not remove students from recess, lunch, math blocks, or literacy instruction.

Our rationale for implementing 10 instructional sessions for each group is based on findings from the small-scale pilot study conducted in a single classroom prior to the larger scale study reported here. During the pilot, we trained instructors and assessors to fidelity in situ, evaluated our assessments for sensitivity, and examined the effectiveness of the instructional activities (e.g., added or deleted recommended scaffolding questions). Importantly, we also learned that 10 instructional sessions were necessary to develop a warm and productive relationship with students and to observe student growth, as indicated by a student’s transition to n + 1 on the developmental progression. Furthermore, the ten 12-minute instructional sessions were negotiated to accommodate the request of participating classroom teachers and school leadership that the intervention minimize the amount of time students were removed from classroom instruction. The following describes each condition and instructor training.

Learning Trajectories Approach

There were 71 students in the LT condition (37 girls). This condition was composed of 10 one-on-one instructional sessions. Each instructor had access to a set of instructional activities that aligned with each level of the developmental progression and selected activities based on the child’s present level of thinking and the competences needed to master a particular level. Ultimately, the developmental sequence of each activity prepares the student for the following level.

During the one-on-one sessions, the instructors selected activities based on the student’s preassessment performance. The instructors documented and tracked the students’ progression throughout the instructional sessions, which informed the selection of subsequent activities in later sessions. As children demonstrated higher levels of thinking, they were encouraged to use more sophisticated strategies, such as iterating with a single unit (instead of using multiple units). The instructors provided scaffolds and differentiation throughout instruction based on what was most appropriate for each child, including (but not limited to) providing feedback on correctness of solution and instructor modeling of strategies. Unique to this condition, as necessary, instructors could modify an activity so that it required only the preceding level of thinking in the LT, then return to the original activity structure.

Reverse Order Group

There were 59 students in the REV condition (31 girls). This active control condition was composed of 10 one-on-one instructional sessions. Unlike the LT condition, 10 length activities were selected from each level of the developmental progression, which are listed in Table 3 and can be found on the [LT]2 website (LearningTrajectories.org) for in-depth review. Instructors presented these activities in the reverse of the length LT’s developmental sequence. Thus, students were exposed to similar activities as the LT condition but began with the most sophisticated level: Level 5 (conceptual ruler measurer).

Instructional Sequence for the Reverse Order Experimental Condition

Note. Children in the LT condition started one level above their initial developmental level as determined by the pretest and were then taught at successively higher levels. LT = learning trajectory.

REV instructors provided feedback about the correctness of children’s solutions to questions but did not modify activities structurally to accommodate less sophisticated levels of thinking, as that would have broken the REV sequence. In the event that the child shared an incorrect solution, the instructor would gently let them know they did not get the right answer and would proceed to show them how to solve the problem (e.g., “Hmm, that is not quite right. Here is how I would solve the problem . . .”). The child would then solve the problem themselves before the instructor progressed to the next part of the activity. Children sometimes finished activities before the end of the session.

Business-as-Usual

There were 57 students in the BAU condition (31 girls). Students in this condition did not participate in any one-on-one instructional sessions, nor was the topic of length-measurement covered in the general curriculum. However, if one of the students expressed disappointment in not being “picked” to play math games, the instructor asked the teacher for permission to play a 10-minute subitizing or shape composition math game. Each of the instructors at the schools confirmed that kindergarten teachers were not exposed to LT curriculum prior to or during the study.

Instructor Training

The instructional team was composed of nine graduate research assistants (GRAs) from programs within the College of Education (others, including the senior authors, taught when needed). All of the GRAs had experience working with young children, and two were certified high school teachers for English literature. The rest of the instructional team members were students from the counseling or research methods and statistics programs. Each of the GRAs was trained by the co–principal investigators (co-PIs) and the project director. The GRAs were trained to implement instruction for the LT and REV conditions.

The training included description of the study design, the theoretical foundations of LTs for length-measurement, and how children advance along the developmental progression. After instructors became familiar with the length-measurement LT, the focus of training shifted to observing and interpreting children’s thinking. Additionally, instructors were trained on how to provide appropriate instruction based on their interpretation of the child’s thinking. As such, instructors were trained on selecting and implementing appropriate instructional tasks for each child (e.g., modifying activities between sessions to match instructional tasks to developmental levels of individual children) during weekly professional development sessions. In addition, the co-PIs and project directors observed the recorded instructional sessions weekly for each instructor and provided constructive feedback.

Each of the instructors participated in weekly team meetings where the co-PIs and project directors provided consultation on student cases. Furthermore, co-PIs and project directors were available every day, as a form of peer debriefing, to answer questions and recommend next steps. Finally, each of the LT instructors had an in-person midpoint check-in (at or around the fifth instructional session) to determine if any midcourse corrections needed to occur. During this meeting, the graduate instructor consulted with the project director for each individual case.

Although we did not have the resources to measure fidelity of implementation in every session, we worked to ensure adequate implementation. Part of the LT instructor debrief included watching videos of instruction (see instructor training). This daily debrief served as an opportunity for the project lead to watch videos of sessions with instructors to provide feedback; for example, a reminder to use a scaffold listed in the activity sheet. Moreover, a fidelity evaluator reviewed the videos of two randomly selected students from each instructional team each week and documented whether the instructor Never (0%–25% of the time), Sometimes (26%–50%), Often (51%–75%), or Always (76%–100%): (a) taught in a teaching space that was conducive to student learning, (b) used the [LT]2 write-up to clearly explain the activity directions, (c) positively engaged the student, (d) correctly set up the activity materials outlined in the [LT]2 write-up, and (e) provided feedback about whether student responses were correct or incorrect (and for LT, moved in the developmental progression if indicated; for the REV, did not do so). Fidelity, defined as equal to or greater than 90%, was achieved for all instructors each week of the experiment.

Instrument

The length measurement assessment was composed of 28 items adapted from the Research-Based Early Mathematics Assessment (Clements et al., 2008, 2021) and Cognitively Based Assessment (Battista, 2012) designed to assess length measurement learning for kindergarten students. Items assessed competences from four levels of the length LT, beginning with direct length comparing (e.g., compare the lengths of two objects, presented without alignment) up to length measurer (e.g., measure a 34.5-inch ribbon with a 10-inch ruler). Each item was scored for correctness and strategy sophistication (where 1 = low, 2 = medium, and 3 = high). Rasch scores were constructed (mean of 0, standard deviation of 1) and difficulty parameters confirmed that beginning items are less difficult compared with the items near the end of the assessment.

Preassessment

Past work with kindergarteners (not in the study) indicated that they could not respond successfully to most of the higher level items. Therefore, to avoid undue frustration and possibly attrition, the preassessment adopted a stop rule: administration ended when a student made three consecutive mistakes. Consequently, the pre-Rasch scores were constructed on a subset of the earliest items that compose the full length measurement assessment. Because items were sequenced according to Rasch difficulty, there was a very low probability that a child would answer any subsequent items correctly after three consecutive incorrect responses. Meanwhile, information (an analog of reliability) generated by the item response theory scores was 3.6 at the sample mean, equivalent to a reliability score of .78.
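As an illustration of the stop rule, the following Python sketch truncates a sequence of item responses after three consecutive errors. The function name and the example response pattern are hypothetical; the sketch only mirrors the rule as stated and says nothing about how the assessment was actually administered or recorded.

```python
def administer_with_stop_rule(responses, max_consecutive_errors=3):
    """Return the responses collected before the stop rule ends administration.

    `responses` is an ordered list of booleans (True = correct), with items
    assumed to be ordered from least to most difficult.
    """
    collected = []
    streak = 0  # current run of consecutive incorrect responses
    for correct in responses:
        collected.append(correct)
        streak = 0 if correct else streak + 1
        if streak == max_consecutive_errors:
            break  # stop rule reached; later items are never administered
    return collected


# Hypothetical child: the third consecutive error occurs on item 7.
example = [True, False, False, True, False, False, False, True, True]
administered = administer_with_stop_rule(example)
```

In this example only the first seven items are administered, so the two later items contribute nothing to the child's pre-Rasch score.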

Postassessment

The postassessment was composed of the same items as the preassessment. All items were administered (because analyses were not on pre- to post-change, and administrations were the same for all groups, such differences in administration did not affect the rigor of the assessments and data). As with the preassessment, Rasch scores were constructed, and difficulty parameters indicated that beginning items were less difficult than items near the end of the assessment. Additionally, information (an analog of reliability) generated by the item response theory scores was 8.8 at the sample mean, which is equivalent to a reliability score of .90.
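The reported information values can be related to reliability with one common conversion, reliability = I / (I + 1), which assumes a latent trait with unit variance. This formula is an assumption on our part; it is shown because it reproduces both reported values, not because the source states which conversion was used.

```python
def reliability_from_information(information):
    """Convert test information to a reliability coefficient.

    Assumes a latent trait with variance 1, so that
    reliability = information / (information + 1).
    """
    return information / (information + 1.0)


# Information values reported at the sample mean.
pre_reliability = reliability_from_information(3.6)
post_reliability = reliability_from_information(8.8)
print(f"pre: {pre_reliability:.2f}, post: {post_reliability:.2f}")
```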

Assigning Starting Points for Instruction

An initial preinstruction level of thinking in length measurement was assigned to each student. A student's preinstruction level was the highest level n at which the student correctly answered at least 75% of the items at level n and at all earlier levels. Table 4 contains the students’ preinstruction level by condition.
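The assignment rule can be sketched as follows. The data layout (one list of item scores per level, in developmental order), the function name, and the choice to return Level 0 when the criterion is not met at the first assessed level are illustrative assumptions rather than details given in the text.

```python
def preinstruction_level(items_by_level, threshold=0.75):
    """Return the highest level n meeting the criterion at n and all earlier levels.

    `items_by_level` is a list of lists of booleans (True = correct),
    one inner list per level, ordered from the earliest level upward.
    Returns 0 if the criterion is not met at the first assessed level.
    """
    level = 0
    for n, items in enumerate(items_by_level, start=1):
        if not items or sum(items) / len(items) < threshold:
            break  # criterion fails here, so later levels cannot count
        level = n
    return level


# Hypothetical child: meets the criterion at levels 1 and 2, not at level 3.
child = [
    [True, True, True, True],     # level 1: 100% correct
    [True, True, True, False],    # level 2: 75% correct
    [True, False, False, False],  # level 3: 25% correct
    [True, True, True, True],     # level 4: ignored, level 3 was not met
]
print(preinstruction_level(child))
```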

Preinstruction Levels by Experimental Condition

Note. Level 0 is “length quantity recognizer” in which children recognize length as an attribute, possibly as an absolute descriptor rather than comparative, and distinguish it from other measurable attributes (area, volume). BAU = business-as-usual; LT = learning trajectory; REV = reverse-order.

Covariates

We tested two child-level covariates for the full sample. Child gender (0 = boy) and school type (public/private, where 0 = public) are coded as binary. During the intervention, no children transferred from one school type to another.

Analytic Approach

The research question was examined within a Bayesian hierarchical linear modeling (HLM) framework using the brms package (Bürkner, 2018) in R 3.6.2 (R Core Team, 2019). Bayesian models more accurately quantify and propagate uncertainty (e.g., Kruschke, 2014) and can be more reliable in cases where traditional HLM methods typically fail (Eager & Roy, 2017).

The baseline model was specified to include preassessment ability, the effect of treatment, and a random intercept for classroom. The covariates we tested included child gender and whether the child attended a public or private school. We did not include a random intercept for the five participating schools because Snijders and Bosker (1993) advise against estimating multilevel models with fewer than 10 clusters.
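Written out, a model with these components might take the following form. The notation (the β coefficients, the classroom effect u_j, the residual ε_ij, and the indicator names) and the choice of BAU as the reference category are assumptions made for illustration, not necessarily the authors' exact specification.

```latex
\begin{aligned}
\text{Post}_{ij} &= \beta_0 + \beta_1\,\text{Pre}_{ij}
                  + \beta_2\,\text{LT}_{ij} + \beta_3\,\text{REV}_{ij}
                  + u_j + \varepsilon_{ij}, \\
u_j &\sim \mathcal{N}(0, \sigma_u^2), \qquad
\varepsilon_{ij} \sim \mathcal{N}(0, \sigma^2),
\end{aligned}
```

Here i indexes students and j indexes classrooms, Pre and Post are the Rasch scores, LT and REV are 0/1 condition indicators, and u_j is the random intercept for classroom.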

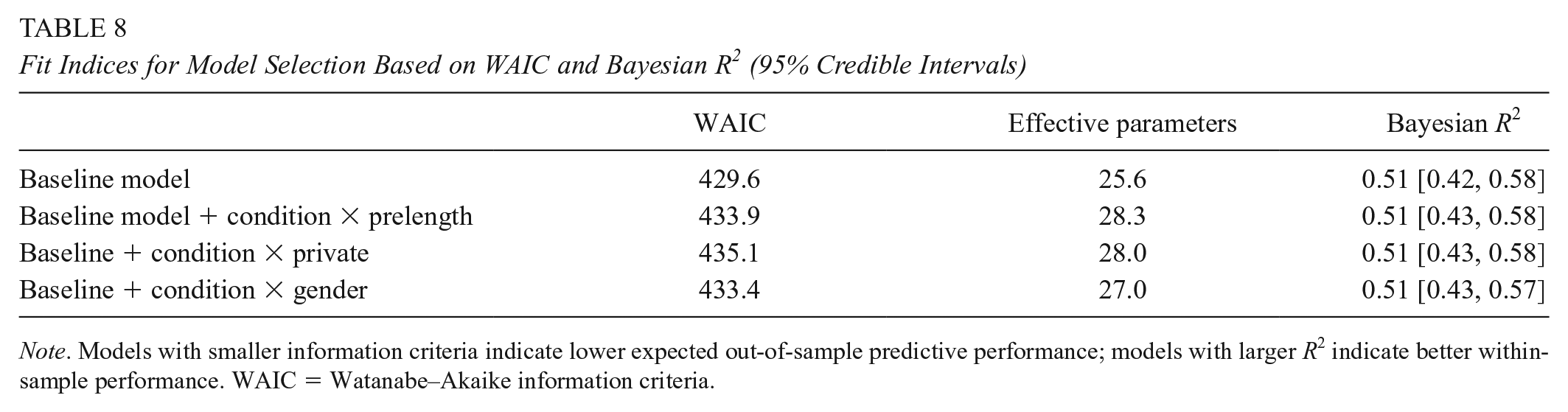

The final model for each research question was selected using the Watanabe–Akaike information criterion (WAIC; Watanabe, 2010). Each covariate was added sequentially and evaluated based on its contribution to model fit (as measured by the WAIC) relative to the previous, less complex model. We favored parsimonious solutions with smaller WAIC values, which we used to select for robustness and better expected out-of-sample predictive performance. Because we had only a single quantitative covariate (preassessment Rasch score), we also tested a model with random slopes that allowed the effect of the preassessment Rasch score to differ across classrooms. This model was not selected based on a comparison of information criteria.
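For readers unfamiliar with the criterion, the sketch below computes WAIC from a matrix of pointwise log-likelihoods using the standard definition, WAIC = −2 (lppd − p_WAIC). It is a plain-Python illustration of that formula, not the brms implementation, and the log-likelihood values are made up for the example.

```python
import math
from statistics import variance


def log_mean_exp(values):
    """Numerically stable log of the mean of exp(values)."""
    m = max(values)
    return m + math.log(sum(math.exp(v - m) for v in values) / len(values))


def waic(log_lik):
    """Compute WAIC from log_lik[s][i]: draw s, observation i."""
    n_obs = len(log_lik[0])
    lppd = 0.0    # log pointwise predictive density
    p_waic = 0.0  # effective number of parameters
    for i in range(n_obs):
        column = [draw[i] for draw in log_lik]
        lppd += log_mean_exp(column)
        p_waic += variance(column)  # sample variance across posterior draws
    return -2.0 * (lppd - p_waic)


# Hypothetical log-likelihoods: 3 posterior draws x 2 observations per model.
simpler_model = [[-1.0, -1.2], [-1.1, -1.1], [-0.9, -1.3]]
larger_model = [[-1.4, -1.9], [-0.8, -1.0], [-1.6, -2.2]]
print(waic(simpler_model), waic(larger_model))
```

The model with the smaller WAIC is preferred; the variance term penalizes models whose pointwise fit varies widely across posterior draws.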

Results

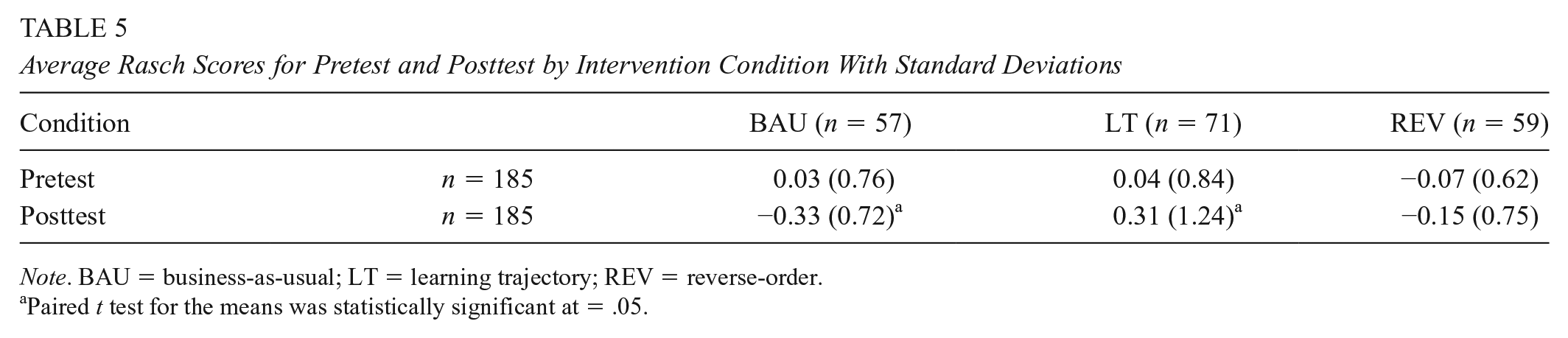

Descriptive Statistics

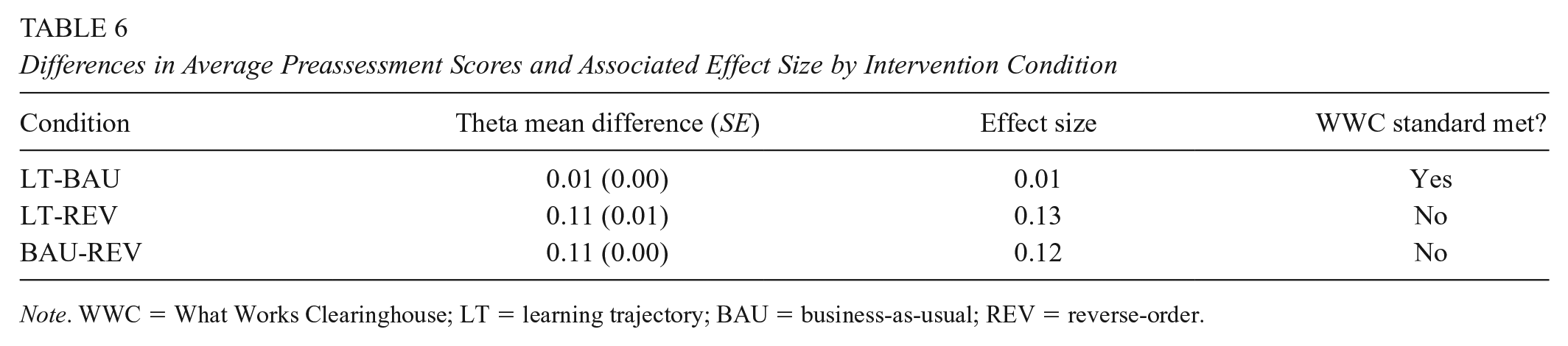

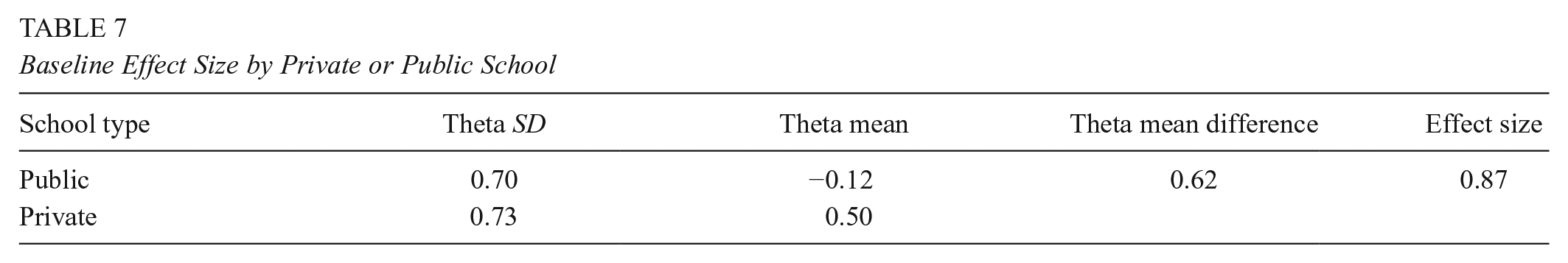

Table 5 contains the pre- and postassessment Rasch scores for each experimental condition. Baseline equivalence was examined, and Table 6 contains the differences in preassessment performance and associated effect sizes and notes whether these effect sizes meet the What Works Clearinghouse (2020) baseline equivalence standard of 0.05 or less in absolute value. The difference in the preassessment scores for the LT and BAU groups meets Institute of Education Sciences baseline equivalence standards. However, there are statistically nonsignificant initial differences between the LT and REV groups, as well as between the BAU and REV groups (Table 5). In accordance with What Works Clearinghouse standards, we include child gender as a covariate, given the slight advantage of girls at preassessment (effect size = 0.13). Additionally, there were initial differences between student performance in public (n = 52 students across three schools) versus private schools (n = 135 students across two schools; see Table 7; Hedges' g effect size = 0.87).
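The baseline check can be sketched as below, assuming the usual small-sample-corrected Hedges' g and the What Works Clearinghouse thresholds (0.05 or less satisfies equivalence; above 0.05 and up to 0.25 requires statistical adjustment; above 0.25 does not satisfy equivalence). The function names and group summaries are hypothetical and are not the study's data.

```python
import math


def hedges_g(mean1, sd1, n1, mean2, sd2, n2):
    """Standardized mean difference with small-sample correction."""
    pooled_sd = math.sqrt(
        ((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / (n1 + n2 - 2)
    )
    correction = 1 - 3 / (4 * (n1 + n2) - 9)
    return correction * (mean1 - mean2) / pooled_sd


def wwc_baseline_category(g):
    """Classify a baseline effect size against WWC thresholds."""
    size = abs(g)
    if size <= 0.05:
        return "satisfies baseline equivalence"
    if size <= 0.25:
        return "requires statistical adjustment"
    return "does not satisfy baseline equivalence"


# Hypothetical preassessment summaries for two groups (not study data).
g = hedges_g(mean1=0.10, sd1=1.0, n1=60, mean2=0.00, sd2=1.0, n2=60)
print(round(g, 3), wwc_baseline_category(g))
```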

Average Rasch Scores for Pretest and Posttest by Intervention Condition With Standard Deviations

Note. BAU = business-as-usual; LT = learning trajectory; REV = reverse-order.

Paired t test for the means was statistically significant at α = .05.

Differences in Average Preassessment Scores and Associated Effect Size by Intervention Condition

Note. WWC = What Works Clearinghouse; LT = learning trajectory; BAU = business-as-usual; REV = reverse-order.

Baseline Effect Size by Private or Public School

Impact of the Learning Trajectories Approach

The final model was the parsimonious baseline model (see Table 8). For the ith student in the jth classroom, the postassessment Rasch score (

Fit Indices for Model Selection Based on WAIC and Bayesian R² (95% Credible Intervals)

Note. Models with smaller information criteria indicate better expected out-of-sample predictive performance; models with larger R² indicate better within-sample performance. WAIC = Watanabe–Akaike information criterion.

In the equation above,

HLM parameters are presented in Table 9, and differences between experimental conditions are shown in Figure 1. Students in the LT and REV conditions outperformed their peers in the BAU condition. Contrasts further reveal that students in the LT group also outperformed their REV peers (contrast estimate = 0.32, 95% credible interval [0.07, 0.57]). The 95% credible intervals were also estimated for child gender and for whether the child attended a public or private school. However, these intervals include zero, and the effects were therefore deemed statistically nonsignificant.
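A contrast between two conditions can be summarized directly from posterior draws by differencing the draws and taking the median and central 95% interval. The sketch below uses simulated draws with made-up means and spreads, and a simple order-statistic quantile rather than the interpolation rule of any particular package; none of the numbers come from the study.

```python
import random
from statistics import median


def credible_interval(draws, prob=0.95):
    """Central credible interval from posterior draws (order statistics)."""
    ordered = sorted(draws)
    tail = (1 - prob) / 2
    lower_idx = int(round(tail * (len(ordered) - 1)))
    upper_idx = int(round((1 - tail) * (len(ordered) - 1)))
    return ordered[lower_idx], ordered[upper_idx]


random.seed(1)
# Hypothetical posterior draws of each condition's effect relative to BAU.
lt_draws = [random.gauss(0.8, 0.08) for _ in range(4000)]
rev_draws = [random.gauss(0.5, 0.08) for _ in range(4000)]

# Draw-by-draw difference gives the posterior of the LT minus REV contrast.
contrast = [lt - rev for lt, rev in zip(lt_draws, rev_draws)]
low, high = credible_interval(contrast)
print(f"median = {median(contrast):.2f}, 95% CrI = [{low:.2f}, {high:.2f}]")
```

An interval that excludes zero corresponds to the decision rule described above; an interval that includes zero would be read as statistically nonsignificant.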

Model Parameter Estimates (Posterior Medians) with 95% Credible Intervals for Postlength With Random Effect of Classroom

Note. Bayesian R² is computed using methods specified in Gelman et al. (2019). LT = learning trajectory; BAU = business-as-usual; REV = reverse-order.

Estimated growth scores (posterior medians with 95% credible intervals) for three experimental conditions.

Discussion

As one of a set of experiments rigorously testing the efficacy of the educational application of LTs, this study focused on the second assumption of an LT approach: there is a sequence of learning and teaching that is determined by a research-based developmental progression. The topic of length was selected as being both important to early mathematics learning and amenable to the LT and counterfactual conditions. The first counterfactual (REV) reversed the sequence of the LT activities to directly test the assumption. The second counterfactual, BAU, served as a passive control.

Students in the LT group outperformed their BAU and their REV peers. The latter contrast, especially, supports the hypothesis that following the developmental progression of an empirically validated LT promotes learning more than the same activities not in that order.

We acknowledge there is a possible alternative explanation for the difference in LT versus REV postassessment performance: the instructor response to student error differed between the LT and REV conditions. Instructors in both the LT and REV conditions provided feedback about the correctness of the solution and modeled how to solve the problem. However, scaffolds provided in response to incorrect answers differed between conditions. Take, for example, the game “Which Bike Path Is Shorter?” In this game, students measure and compare two lengths that cannot be physically compared (length direct comparer level in Table 3) by iterating one or two units, a common cognitive knot.

If a REV student did not endorse the correct answer, the instructor would say, “You’re working very hard and I like how you’re thinking. But, that’s not quite right. Here is how I would do it.” The instructor would then provide a demonstration of how to leapfrog two units for the correct answer, narrating their actions in child-friendly language. The child would then be given a turn. If an LT student made an error, the instructor would ask probing questions for diagnostic purposes. A response that was productive for most students was to have the student share their unitized answer and then check their work against the same length, but this time using as many units as they need to cover the distance. The rationale behind the differences in response is that providing an LT-aligned response to REV student errors would have been incompatible with the nature of the REV condition. To properly address REV student thinking, instructors would have to build skills promoted at a different level of the developmental progression than the intended daily activity.

Students in the REV condition also outperformed their peers in the BAU condition. This indicates that the activities, even when implemented in an order other than that of the LT’s developmental progression, are still effective. This result is similar to that of the studies testing the first LT assumption, those that had a “teach-to-target” counterfactual (Clements, Sarama, Baroody, & Joswick, 2020; Clements, Sarama, Baroody, Kutaka, et al., 2020; Clements, Sarama, Baroody, et al., 2019). That is, these and the present study suggest teaching each contiguous level in developmental order of a LT is more efficacious and thus more useful than alternatives, but not necessary to facilitate learning in all cases: children experiencing the active counterfactuals also learned, but they learned less. However, note that in previous studies using a teach-to-target approach to test assumption 1 (Clements, Sarama, Baroody, & Joswick, 2020; Clements, Sarama, Baroody, Kutaka, et al., 2020; Clements, Sarama, Baroody, et al., 2019), instruction was at levels n + 2 or n + 3, avoiding instruction at n + 1. In the present study, children in the REV condition experienced activities at each level considered in this study (Table 3) and thus the activities eventually crossed over the child’s present level of thinking (including n − 1, n, n + 1, etc.).

We also note an alternative explanation for the findings regarding comparisons to the BAU group: LT and REV students received one-on-one instructional sessions, whereas the BAU students did not. It may be that any one-on-one attention yields greater length-measurement performance at postassessment. Indeed, time spent in domain-specific, rather than general, instruction is associated with higher scores in targeted domains, including mathematics, in children from low-income preschool and kindergarten backgrounds (Votruba-Drzal & Miller, 2016; A. H. Wang, 2010).

Another caveat is that comparisons to the BAU condition necessarily confounded the additional one-on-one sessions with the instructional activities. These comparisons were relevant to an evaluation of the activities’ efficacy, but did not address our main question. Finally, the comparison between the LT and REV groups, which differed mainly on the sequence they embodied, is confounded by necessity with the added scaffolding provided children in the LT condition that modified activities at level n to an n − 1 structure temporarily.

Implications for Theory, Research, and Practice

Instruction using activities sequenced according to the levels of an empirically validated learning trajectory was more efficacious than instruction using the same activities for the same amount of time, but not so ordered. This supports the LT assumption that each level builds hierarchically on the concepts and processes of the previous levels (e.g., Goodson, 1982; Sarama & Clements, 2009; van Hiele, 1986). That is, each level is characterized by specific concepts (e.g., mental objects) and processes (mental “actions-on-objects”; Clements, Wilson, & Sarama, 2004; Steffe & Cobb, 1988) that underlie mathematical thinking at level n and serve as a foundation to support successful learning of subsequent levels (Sarama & Clements, 2009). However, the learning process is not intermittent and step-like, but rather incremental and gradually integrative. A critical mass of ideas from each level must be constructed before thinking characteristic of the subsequent level becomes ascendant in the child’s thinking and behavior (Clements et al., 2001).

Findings are consistent with Vygotsky’s construction of the ZPD (Vygotsky, 1935/1978) but add required theoretical, empirical, and practical knowledge. For example, application of ZPD must confront the question of whether any particular competence or activity stands within a child’s ZPD (vs. already interiorized or beyond the zone) and must clarify the role and nature of adult guidance for that activity (Wertsch, 1984). The three components of a learning trajectory, instantiated in the theory of hierarchic interactionalism (Sarama & Clements, 2009), provide a research-based structure for children’s mathematical knowledge as well as pedagogical tools that enable us to work in congruity with the ZPD theory. That is, goals elucidate the mathematical content, and developmental progressions specify and arrange increasingly sophisticated levels of thinking. These enable us to identify the child’s current level of thinking and the following level, which is exactly the ZPD (the “upper threshold of instruction”; Wertsch, 1984). The instruction for that level provides the teacher, Vygotsky’s More Knowledgeable Other, with specific teaching activities, as well as a theoretical rationale for why the activity will activate the mental actions-on-objects constituting thinking at that level. LTs also posit the mechanisms that tie a developmental progression’s levels of thinking to the instructional tasks through the specification of “actions-on-objects.”

Also consistent with the ZPD construct (Vygotsky, 1935/1978), the LT approach involves using formative assessment (National Mathematics Advisory Panel, 2008; Shepard & Pellegrino, 2018) to provide instructional activities aligned with such empirically validated developmental progressions (D. M. Clarke et al., 2001; Fantuzzo et al., 2011; Gravemeijer, 1999; Jordan et al., 2012) and using teaching strategies that evoke children’s natural patterns of thinking at each level, as posited by hierarchic interactionalism (Sarama & Clements, 2009). This approach appears particularly productive for those with the lowest levels of entry competencies. This similarly indicates the importance of supporting children’s learning of each level of the LT in order, as children may not be able to make sense of tasks from higher levels if they have not built the concepts and procedures that constitute prior levels of thinking. Children with low entering competencies may be especially at risk of learning only to apply rote, prescribed procedures (“reduction of level” according to van Hiele, 1986).

Consistent with previous research (Clements, Sarama, Baroody, & Joswick, 2020; Clements, Sarama, Baroody, Kutaka, et al., 2020; Clements, Sarama, Baroody, et al., 2019), teaching each contiguous level in developmental order of a LT is more efficacious and thus more useful than alternatives, but not necessary to facilitate learning in all cases: children experiencing the active counterfactuals also learned, just not as much. Thus, selecting activities with traditional approaches (reminiscent of the sequence of instructional activities that defined the REV condition or a random order) has the potential to teach young children mathematics, but not as effectively as selecting activities based on children’s present level of thinking.

A caveat regarding implications for practice is that the potential pedagogical power of the traditional approaches, that is, integration with other activities and domains, could not be realized in the REV condition due to logistical and research design constraints. Furthermore, the REV condition outperformed the BAU condition, showing that the activities were effective even when not aligned with the developmental progression (the relative efficacy of the LT condition may have been attenuated by the recency effect). Therefore, the results support the efficacy of following the developmental progression, but should not be interpreted, for example, as an evaluation of the traditional approaches, which would require full-year research with different curriculum structures. However, the study does imply that theme approaches that consider LTs in their planning would be more effective than those that do not.

Footnotes

Acknowledgements

Researchers from an independent institution oversaw the research design, data collection, and analysis and confirmed procedures and findings. The authors wish to express appreciation to the school districts, teachers, and children who participated in this research.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by the Institute of Education Sciences, U.S. Department of Education through Grant No. R305A150243. The opinions expressed are those of the authors and do not represent views of the U.S. Department of Education.

Notes

Authors

JULIE SARAMA, Kennedy Endowed Chair and Distinguished University Professor, has taught high school mathematics, computer science, middle school gifted mathematics and early childhood mathematics. She directs six projects funded by the National Science Foundation, Institute of Education Sciences, and others (30 total) and has authored over 80 refereed articles, seven books, 60 chapters, and over 100 additional publications.

DOUGLAS H. CLEMENTS is Distinguished University Professor and Kennedy Endowed Chair at the University of Denver, Colorado. Clements has published over 166 refereed research studies, 27 books, 100 chapters, and 300 additional works on the learning and teaching of early mathematics, computer applications, research-based curricula, and taking interventions to scale.

ARTHUR J. BAROODY is a professor emeritus of curriculum and instruction, College of Education, University of Illinois at Urbana-Champaign. His research focuses on the teaching and learning of early number, counting, and arithmetic concepts and skills.

TRACI S. KUTAKA is a research associate at the Marsico Institute for Early Learning at the University of Denver. Her research interests center on early childhood care and education, with an emphasis on mathematics teaching and learning.

PAVEL CHERNYAVSKIY is an assistant professor of biostatistics at the University of Virginia in the Department of Public Health Sciences. His research interests include methods for the analysis of correlated data, as well as Bayesian computational methods.

JACKIE SHI is a PhD candidate in research methods and statistics at the University of Denver. Her research interests include applying advanced models in institutional research and public opinions.

MENGLONG CONG is a PhD candidate in the Department of Research Methods and Statistics, Morgridge College of Education, University of Denver. His research interests include child development and mixed-method research.