Abstract

Tinkering is often viewed as an arbitrary practice that should be avoided. However, tinkering can be performed as part of a sound reasoning process. In this ethnomethodological study, we investigated tinkering as a reasoning process that construes logical inferences. This is a new asset-based approach that can be applied in computer science education. We analyzed artifact-based interviews, video observations, reflections, and scaffolding entries from three pairs of early childhood teacher candidates to document how they engaged in reasoning while tinkering. Abductive reasoning observed during tinkering is discussed in detail.

Keywords

Tinkering can be defined as playful experimentation that involves a series of changes incrementally applied without systematic planning (Beckwith et al., 2006; Berland et al., 2013; Blikstein et al., 2014). To improve computer science education for learners from underrepresented populations in STEM (science, technology, engineering, and mathematics), it is important to acknowledge tinkering as a productive and even necessary process (Berland et al., 2013; Searle et al., 2014). Since the seminal work by Turkle and Papert (1990), discussions on tinkering have mainly focused on the quality of tinkering outcomes, especially as compared with those of structured programming (e.g., Rose, 2016). But little is known about

Given this gap, we examined conversations and actions of early childhood teacher candidates during debugging to understand their reasoning while tinkering. The research question was, “How do early childhood education (ECE) teacher candidates reason while tinkering?” Because we assume that such reasoning is not an additive process of two individuals’ cognitions, but rather a synergistic product of interaction, we used an ethnomethodological approach (Belland et al., 2016; Garfinkel, 1967; Ingram, 2018) to analyze the data. Specifically, we sought to uncover the methods by which teammates sought to achieve order within their interactions directed toward debugging. From this analysis, we uncovered rules that guided tinkering—rules that were endemic to interaction. The focus of conversation analysis within the ethnomethodological approach was on tinkering “actions rather than the content of talk itself” (Ingram, 2018, p. 1068). This was not only to understand how the participants made sense of bugs and debugging but also to identify particular “features of the interaction that precede particular outcomes” from tinkering (Ingram, 2018, p. 1069).

Relevant Literature

Teacher Learning of Debugging

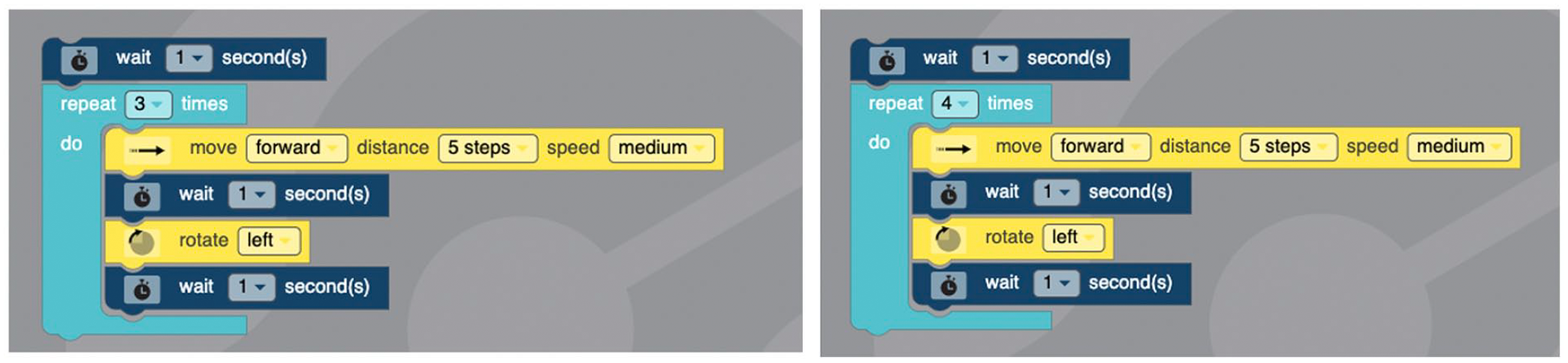

Few ECE teachers are prepared to teach computer science in the United States and globally (Bers et al., 2013; Çetin & Demircan, 2020). Within ECE, block-based programming platforms are often used (Bers, 2018; Bers et al., 2014; Kim et al., 2015; Kim et al., 2017; Kim et al., 2020). While research shows that novice learners are more attracted to block-based programming than text-based programming (Akcaoglu, 2014; Bers, 2018; Bers et al., 2014; Lye & Koh, 2014), actual learning of fundamental computer science concepts and programming through block-based platforms has been criticized (Brennan & Resnick, 2012; Grover et al., 2015). Still, learning to debug could improve incomplete understanding of programming (Kim et al., 2018). For example, when debugging to make a robot travel on a square, sequence and repetition control structures need to be understood to change 3 to 4 in the repeat block (turquoise in Figure 1).

Block-based programming examples of buggy code (left) and correct code (right).

Tinkering as an Asset

In computer science education, it has long been argued that tinkering is a culture of programming that can lead to positive outcomes (Berland et al., 2013; Rose, 2016; Turkle & Papert, 1992). Still, tinkering is often viewed as a practice to be avoided (Grigoreanu et al., 2006; Murphy et al., 2008). In a recent study, an intervention structured the debugging process to help students avoid “rapid cycles of editing and testing without deeply understanding their code” (Ko et al., 2019, p. 474). Others acknowledge that

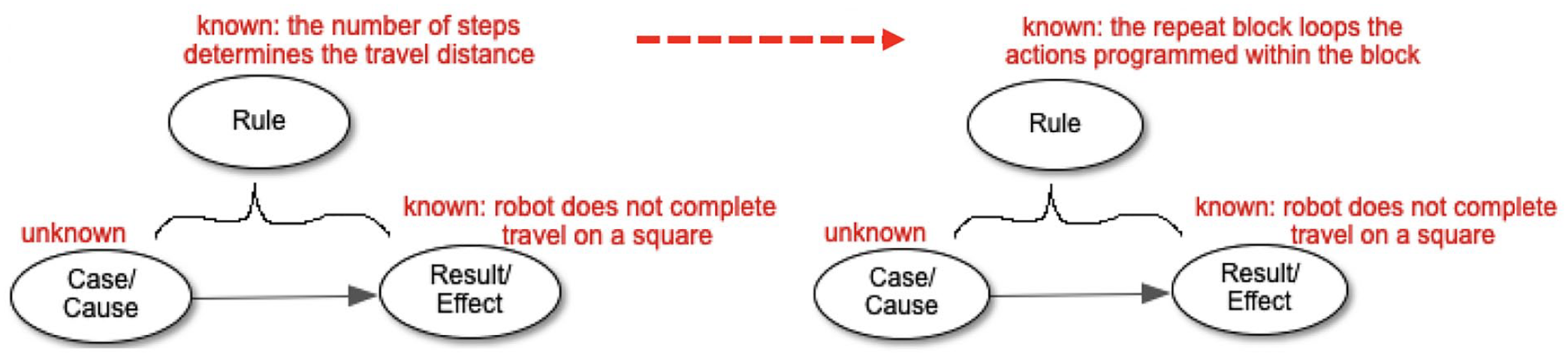

We argue that tinkering during debugging involves

Simplified illustration of reasoning while tinkering during debugging.

Reasoning Types

The debugging example above depicts abductive reasoning, defined as a reasoning process used to explain a phenomenon with incomplete information at hand (Abe, 2003; Aliseda, 2006; Leake, 1995; Magnani, 2009, 2015; Peirce, 1931-1935). Through abduction, one reasons probable cases/causes that seem to have resulted in the phenomenon. The most probable case at the moment is tested to see if it explains the phenomenon. In the example above, the first probable case (i.e., the bug is in the move block) does not explain the robot’s failure to complete the square, which leads to the second probable case (i.e., the bug is in the repeat block). Other types of reasoning are often used in programming. Deductive reasoning and inductive reasoning are mainly discussed in the debugging literature (e.g., Dershowitz & Lee, 1993; Metzger, 2003; Zeller, 2009). The three types of reasoning are explained in Figure 3 with a task of coding a robot to repeat an action

Simplified illustration of reasoning types.
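The abductive pattern described above, in which the most probable case is tested first and replaced when it fails to explain the result, can be sketched in a few lines of Python. This is only an illustrative sketch: the robot is modeled as an idealized turtle that moves and then turns, and the names `trace`, `closes_shape`, and `abduce`, as well as the specific distances, are hypothetical and are not taken from the study or from any robot platform.

```python
import math

def trace(distance, turn_deg, repeats):
    """Return the final (x, y, heading) after repeating move-then-turn."""
    x = y = 0.0
    heading = 0.0
    for _ in range(repeats):
        x += distance * math.cos(math.radians(heading))
        y += distance * math.sin(math.radians(heading))
        heading = (heading + turn_deg) % 360
    return x, y, heading

def closes_shape(program):
    """Observed result: does the robot end where it started, facing the same way?"""
    x, y, heading = trace(**program)
    back_home = math.hypot(x, y) < 1e-9
    same_heading = min(heading, 360 - heading) < 1e-9
    return back_home and same_heading

def abduce(buggy, hypotheses):
    """Test probable cases one at a time, in order of current plausibility.

    Each hypothesis is a (label, change) pair; the change is applied to the
    buggy program and kept only if it explains the result.
    """
    log = []
    for label, change in hypotheses:
        candidate = {**buggy, **change}
        explained = closes_shape(candidate)
        log.append((label, explained))
        if explained:
            return candidate, log
    return None, log

# Assumed buggy square program: the repeat value is 3 instead of 4.
buggy_square = {"distance": 5, "turn_deg": 90, "repeats": 3}
hypotheses = [
    ("bug is in the move block", {"distance": 10}),
    ("bug is in the repeat block", {"repeats": 4}),
]

fixed, log = abduce(buggy_square, hypotheses)
for label, explained in log:
    print(f"{label}: {'explains the result' if explained else 'eliminated'}")
```

In this sketch the second case is considered only after the first has been tested and eliminated, which mirrors the sequence in the square example.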

Inductive debugging involves enumerating all cases and studying their relationships with the results. To induce general rules from specific cases, cases of code that function correctly and incorrectly should be reviewed (Myers et al., 2004). To use deduction in debugging, a rich body of general knowledge drawn from extensive programming experience as well as a full understanding of the current program is necessary (Metzger, 2003). Deduction is commonly considered a strong method of debugging (Myers et al., 2004; Zeller, 2009). However, deductive debugging is usually not recommended to novice programmers (Metzger, 2003). This appears to leave a large role for abduction in the study of novices’ debugging. Debugging by abduction is scarcely discussed outside machine learning development. To our knowledge, the present research is the first to analyze abductive debugging in a block-based coding context.

Method

Participants and Setting

The setting was an undergraduate class on play in early childhood education at a large, public university in the eastern United States. Of the 19 who consented to participate in the overall study, we focused on three pairs of participants (all female; see Table 1) in this qualitative case study. All three pairs self-reported having little to no prior programming knowledge and no prior robot programming experience. Amelia, Barbara, and Charlotte reported having low prior programming knowledge on a closed-ended item in a presurvey. In reviewing other data (i.e., classroom recordings, interviews, reflections, scaffolding entries), we found no detail about, or evidence of the participants referring to, such prior programming knowledge. Two of the three pairs were homogeneous in academic standing.

Participant Demographic Information

Robot Programming Unit

Participants worked in pairs to explore how to (a) integrate block-based coding into children’s play and learning and (b) debug errors that impeded successful execution of block-based code. For example, participants were asked to debug code that had two bugs (in the repeat while and rotate blocks) that impeded the Ozobot from tracing an octagon shape (Figure 4). The unit lasted 3 weeks with a 2.5-hour class each week (see Table 2). Participants used OzoBlockly to instruct Ozobot Evo to make desired movements. OzoBlockly is a block-based programming platform with five levels to support coding from prereader to advanced learners.

A Summary of the Unit Activities

Buggy code (left) and an example of correct code (right) for the octagon tracing task.

Data Collection

Classroom Recordings

The three pairs were video recorded for about 6 hours in three classes. The video recordings were transcribed by advanced speech recognition software. Two researchers edited the transcripts to improve accuracy.

Interviews

Individual interviews were conducted after the unit (mean interview length = 28.3 minutes). Participants’ responses to the scaffold were used to prompt recollection. Participants were also asked to debug code that instructed an Ozobot to trace a hexagon.

Reflections

Participants wrote (a) how they might support children’s learning in play, (b) how their play scenario was designed with Ozobots, (c) how they engaged in debugging, (d) how they would apply their debugging experience to children’s learning, and (e) how they would support children’s problem-solving.

Scaffolding Entries

Debugging scaffolding was provided for listing block categories, generating and testing hypotheses, and reflecting on hypotheses. Specifically, prompts invited students to (a) list block categories in the buggy code; (b) generate two or three hypotheses along with their reasons; (c) record changes they made in the code, the reason for those changes, and the change outcomes for each hypothesis, and the list of blocks after changes were made; (d) summarize and reflect on the hypotheses they tested by writing down the actual problem in the buggy code, how they fixed the problem, and whether any of the hypotheses was correct (if not, they were encouraged to generate another hypothesis); and (e) list block categories used in the final code. The scaffold included an example hypothesis: “The numeric value that I entered in the Loops block was too small. It should have been 4 instead of 3 for my Ozobot to get to the finish line.”

Data Analysis

The initial coding scheme was developed based on the programming, debugging, and problem-solving reasoning literatures (Abrahamson, 2012; Aliseda, 2006; Berland et al., 2013; Kim et al., 2018; Magnani, 2015; McCauley et al., 2008; Perkins et al., 1986; Rivera & Becker, 2007; Turkle & Papert, 1990). To refine the initial coding scheme, two researchers conducted preliminary coding of data of two participants from different video-recorded pairs. Then, the project team discussed the coded data and revised the coding scheme. Next, five researchers coded the two participants’ data independently and peer-reviewed each other’s coded data. The project team met again to discuss the recoded data and finalized the coding scheme. Using the refined coding scheme, three researchers independently coded the transcript of videos of the two participants (Cohen’s kappa ranged from .84 to .87) and then met to discuss their analysis with another researcher in order to reach consensus. Next, three researchers coded the remaining data and went through multiple rounds of peer reviews and revisions with another researcher. After finishing coding, the three researchers independently created a sensemaking table of their coded data to find relations among coded data under different nodes and to identify salient observations. Four researchers then (a) peer-reviewed, discussed, and revised the sensemaking tables and (b) repeated the process to finalize the sensemaking tables. Throughout this process, one of the key sticking points was how to use the coding node

Findings and Discussion

Abduction was often observed in debugging episodes in that participants (a) determined plausible cases (causes) that explained malfunctioning code (the result) (b) by applying the best rule at the moment that connected the case to the result, and (c) eliminated irrelevant rules when realizing a disconnect between the case and the result through seeing reprogrammed robot behaviors. This process of abduction seemed to have necessitated tinkering. Tinkering observed in the study was also rather cautious (Berland et al., 2013; Perkins et al., 1986). Considering that sociocognitive processes can mediate abductive reasoning (Abrahamson, 2012), scaffolded pair debugging seemed to have guided participants to track code, defined as reading code closely to get a sense of what it does (Perkins et al., 1986). During interviews, participants noted that responding to scaffolding prompts made them look back at their thinking process and the results (i.e., reprogrammed robot behaviors) of their actions (i.e., code revision) taken based on the thinking process.

Our findings can be summarized by the following themes:

Theme 1: Tacit rules were applied in search for best explanations.

Theme 2: Rules were eliminated based on perceptual observations.

Theme 3: Reflective abstraction was seldom observed.

Theme 4: Generating multiple hypotheses in advance was an unnatural requirement.

The themes are sequenced this way because each theme builds on the previous one. Our presentation of Theme 1 reports the process of abduction as a whole in which tacit rules were used to abduce the case (code) that led to the result (robot behavior). When presenting Theme 2, we report perceptual observations that were immediately available during robot debugging, and how such perceptual observations contributed to rule elimination during abduction. In our presentation of Theme 3, we report that scaffolding largely served to facilitate reflective debugging rather than reflective abstraction. Our reporting of Theme 4 centers on participants’ perceptions of the scaffolding requirement to generate multiple hypotheses in advance and reports how they used the scaffolding.

Theme 1: Tacit Rules Were Applied in Search for Best Explanations

Use of Tacit Rules in Abducing the Case Leading to the Result

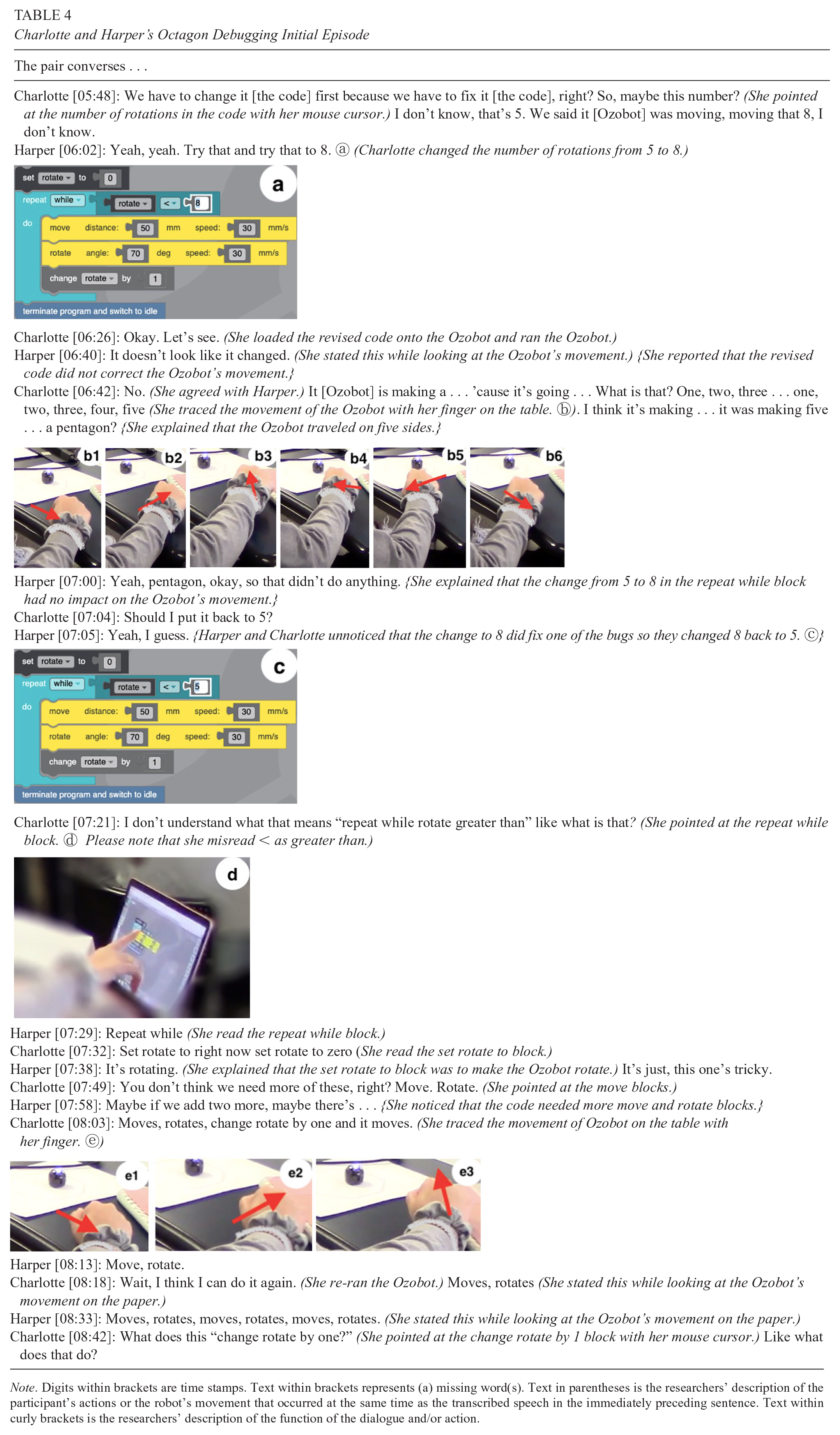

The rules participants used in search of a best explanation for the malfunctioning code were rarely articulated. For example, when Charlotte and Harper talked about the angle value, 70, in the rotate block (see Figure 4), they did not state a rule that made them think that the angle was too big. See Table 3 for the dialogue of one debugging episode in which they changed (a) the angle value from 70, to 110, 50, and 45 in the rotate block and (b) the number in the repeat while block from 5 to 8. The episode is segmented into a series of scenes when a new change in the buggy code was mentioned. In the reasoning analysis column, the

Charlotte and Harper’s Octagon Debugging Episode

An example of abductive reasoning looking for the best explanation for too-wide rotations.

Role of Perceptual Senses in Using Tacit Rules

Without stating the rule, the pair looked at and traced the robot movement and the octagon on the paper. Instead of trying to describe or justify the rule that was being applied, they seemed to use their perceptual senses. This makes sense in that the rule could be checked immediately by revising the code. Through changes made in the code, they collected “perceptual facts” and dialogued about “the evidence of the senses” with each other (Peirce, 1931-1935, para. 141). The use of tacit rules seems necessary in abductive reasoning considering that while “discovering through doing . . . new and still unexpressed information is codified by means of manipulations of some external objects” (Magnani, 2009, p. 11). Abductive inferences and perceptual judgments go together (Hanson, 1958).

Some “naïve perceptual judgment” (Abrahamson, 2012, p. 636) led to unsound explanations that had to be eliminated later. This is a natural part of abduction and the reason why abductive reasoning was needed and applicable. Unlike deductive inferences, abductive inferences can work with “an incomplete knowledge base” (Abe, 2003, p. 233). For example, knowing that A → B

Tacit rules used when formulating unsound explanations were also based on perceptual senses. Some rules were not relevant or conflicted with each other but later were eliminated in the process of tinkering by abduction. For instance, as illustrated in Scene 2 of the debugging episode in Table 3, Harper disapproved of her partner’s and her own previously reasoned case (see Scene 1 in Table 3). This time, she paid more attention to the octagon that the robot should

In Scene 3, Charlotte and Harper went back to the initial rule used in Scene 1 and the initial explanation: 70° was too big. Then, they tried 50°. They did not converse about another applicable rule: The rotate angle value should be equal to the exterior angle of a regular octagon (∴ 360°/8 = 45°). It is probable that the pair knew the rule about exterior and interior angles in an octagon, but they were too occupied with unfamiliar programming activities to notice the relevance of the rule to the present debugging task. Had this rule been used, the tinkering process would have been shorter: One change from 70° to 45° could have been made instead of changes from 70° to 110°, 50°, and 45°.
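The exterior angle rule can be checked numerically against the sequence of angle values the pair tried. The following is a minimal sketch under stated assumptions: the robot is treated as an idealized turtle that moves one unit and then rotates by the given angle, the loop runs eight times, and "closing the path" is used only as a proxy for tracing the octagon. The function name `gap_after_trace` is hypothetical, and the actual behavior of the Ozobot rotate block may differ from this model.

```python
import math

def gap_after_trace(turn_deg, repeats, distance=1.0):
    """Distance between start and end points after repeating move-then-turn."""
    x = y = 0.0
    heading = 0.0
    for _ in range(repeats):
        x += distance * math.cos(math.radians(heading))
        y += distance * math.sin(math.radians(heading))
        heading += turn_deg
    return math.hypot(x, y)

SIDES = 8
exterior_angle = 360 / SIDES  # the rule: 360 / 8 = 45 degrees

# Angle values tried in the debugging episode, in order.
for angle in (70, 110, 50, 45):
    gap = gap_after_trace(angle, SIDES)
    status = "closes" if gap < 1e-9 else "does not close"
    print(f"{angle:>3} degrees: path {status}")
```

Under this model only 45° returns the robot to its starting point after eight sides, which is the value the rule yields directly.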

Theme 2: Rules Were Eliminated Based on Perceptual Observations

Rule Elimination Grounded in Ongoing Observation

Abductive reasoning involves eliminating rules, and it is often done with incomplete information. Rule eliminations in the present study were mostly based on ongoing observations while

Charlotte and Harper’s Octagon Debugging Initial Episode

This erroneous elimination of a relevant rule seems to have stemmed from

Sensemaking While Doing

Barbara and Lillian eliminated rules mostly based on observations while

Barbara and Lillian’s Octagon Debugging Episode

While trying to make sense of why the increased distance did not fix the bug, Barbara and Lillian noticed that the robot veered off the octagon, especially at the angles (Scene 4). Then they decided to try the exterior angle rule (Scene 4). Their attempt at

Amelia and Elena’s Octagon Debugging Episode

Theme 3: Reflective Abstraction Was Seldom Observed

Scaffolding Reflective Debugging But Not Reflective Abstraction

When the code was debugged, participants seldom attempted to generalize explanations for why some code revisions worked and some did not. For example, when changing the angle value to 110° did not fix the code, Charlotte and Harper explained why they changed the angle in their response in the debugging scaffold: “Because the 110 degrees was making our Ozobot take even sharper turns than the 70-degree angle it was taking before, we thought we should make the angle less than 70.” However, while tinkering from 70°, to 110°, 50°, and 45°, a collection of specific explanations for multiple changes in the angle value did not lead to a generalizable explanation for why bigger angles made sharper turns or why 45° worked but 50° did not. As shown in Table 3, Scene 5, when debugging was completed, the pair was inquisitive about why using 8 for the number of rotations did not work until the turn angle was fixed, but their sensemaking attempt did not reach an explanation that could account for relationships among multiple cases and results. This finding does not seem unnatural considering that mostly tacit rules were used (see Theme 1).

The current participants did not have enough experience programming and debugging to engage in inductive reasoning. Still, our findings prompted us to reexamine the scaffold design intended for reflective debugging. In the scaffold, participants were invited to describe and reflect on what

What was the real problem that made your Ozobot behave unexpectedly?

The angle and the amount of turns it was making was off.

How was the problem fixed?

We changed the angle to 45 and changed the repeat while rotate < 8.

In the scaffold, participants were also asked

Case 1 (repeat while rotate < 5 & angle 70 degrees) → Result 1 (the robot did not work properly)

Case 2 (repeat while rotate < 8 & angle 70 degrees) → Result 2 (the robot did not work properly)

Case 3 (repeat while rotate < 8 & angle 45 degrees) → Result 3 (the robot worked properly)

Our scaffolding is not the only tool to invite students learning computer science to ask why questions; for example, another such tool is Whyline, which invites students to ask why questions and points to areas of the code that could provide answers (Ko & Myers, 2008). In the current study, it appears that the scaffolding served as a sociocultural mediator (Belland & Drake, 2013) of the pairs’ work, thereby fostering abductive reasoning and, by extension, cautious tinkering. Scaffolding for participants to review the relationship What changes you made and then what happened after the changes were made, writing that down helped. I think completely writing down the code was a lot [of help] . . . I think because [while] writing down what you changed and then writing down what the change did, you could see that [the change] did the opposite of what we were supposed to do. So let’s try the other way.

Similarly, Amelia considered such scaffolding helpful as noted in her interview: “I think having the hypothesis and then writing the reason why kind of helped in debugging it ’cause you were thinking more of how do I fix it when it’s on there.” In the interview, Elena also explained the role of hypotheses: I think the hypotheses part to write it down [was the most helpful], to really see what my process of thinking was. It was good to understand where my line of thinking was starting to go and it was good to have a second hypothesis and a third if that didn’t work out either.

Data suggest that reflective debugging was scaffolded but reflective abstraction was not. Reflective abstraction is the process of arriving at new knowledge through reflection on current and earlier actions, operations, construction, and reconstruction (Abrahamson, 2012; Cetin & Dubinsky, 2017; Piaget & Inhelder, 1969). Reflection on multiple, isolated relationships between each case

Rule X: The rotate angle value should be equal to the exterior angle of a regular octagon.

∴ 360°/8 = 45°

Rule Y: The number of rotations should be the number of sides of a regular octagon.

∴ 8

Rule Z: To rotate 8 times, the numeric value in the “repeat while

∴ repeat while rotate < 8
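Rules X, Y, and Z can be read as one general rule for any regular polygon. The sketch below derives the three values from the number of sides and counts the passes through a "repeat while rotate < bound" loop. It rests on an assumption that is not confirmed by the text: the loop counter is taken to start at 0 and to increase by 1 on each pass. The function names `polygon_parameters` and `count_iterations` are hypothetical.

```python
def polygon_parameters(sides):
    """Derive block values for a regular polygon from Rules X, Y, and Z."""
    if sides < 3:
        raise ValueError("a polygon needs at least 3 sides")
    return {
        "rotate_angle": 360 / sides,  # Rule X: exterior angle
        "rotations": sides,           # Rule Y: one rotation per side
        "loop_bound": sides,          # Rule Z: repeat while rotate < sides
    }

def count_iterations(loop_bound):
    """Count passes through 'repeat while rotate < loop_bound'.

    Assumes the counter starts at 0 and increases by 1 per pass.
    """
    rotate = 0
    passes = 0
    while rotate < loop_bound:
        passes += 1
        rotate += 1
    return passes

octagon = polygon_parameters(8)
print(octagon)
print(count_iterations(octagon["loop_bound"]))
```

Stated this way, the same rules transfer to other shapes; for a regular hexagon they give a rotate angle of 360°/6 = 60° and six rotations.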

Actual Use of the Scaffold Limited Sensemaking

Participants often used the scaffold to report what they had done. For example, Barbara and Lillian completed the octagon debugging task and then answered scaffold prompts that were designed to be used during the debugging process. Charlotte and Harper listed only some changes they made in the buggy code; they listed 70° to 110°, and then to 45°, instead of listing all the angle changes from 70°, to 110°, 50°, and 45°. Barbara and Lillian made an error in their final specification of the bug location and resolution by including the change in the robot travel distance that they ended up excluding in their final code. Amelia and Elena responded to the scaffold as they engaged in debugging more often than others, as exemplified in Scene 1 of Table 6, but they only recorded the last change they made (i.e., 40°) rather than listing all changes made in Scenes 2 through 5. Although seemingly trivial, these cases may have also discouraged reflective abstraction. While the scaffold helped participants think back on what they had done, using the scaffold as a retrospective tool may have partially resulted in little sensemaking

I remember doing something like this. I remember we at first tried to first change this [the number in the repeat while block] to . . . well, hexagon is six sides, right? Yeah. So, we changed that to eight [in the octagon debugging]. But it still wouldn’t work because of the angles were off. So I think I would just change one of these, I guess this angle [in the hexagon code]. I don’t know what I would change it [the angle value] to. I would do trial and error and see, and then change the sides to six to see if it could make a hexagon.

Why do you think this angle could be a problem?

When we did it before [in the octagon debugging] the angle was too sharp and so before [Ozobot] can even get to eight sides, it already closed itself off.

Do you think if you change to six and then run it, do you think the angle should be smaller or bigger than the number that is there?

Smaller? Hmm. I think.

Why do you think so?

Because when I did this [in the octagon debugging], I remember we changed it to like 110 or something like that cause we thought it would be an obtuse angle but it did the opposite. It [made] even sharper turns.

Turkle and Papert (1990) argued that tinkerers may become decisive when they work on familiar programming tasks, which they regarded as “the benefits of their long explorations” (p. 141). However, Harper’s sensemaking of the repeat while block seems isolated from sensemaking related to the angle and distance. Reasoning that the angle should be less than 70° in the hexagon task just because 70° in the octagon task had to be reduced is evidence of a lack of reflective abstraction. There was a bug in the distance, but Harper did not mention making any change in the distance, probably because no change was needed in the distance in the octagon task. Had sensemaking been completed during and after debugging (see Theme 2), rules about the angle might have been applied to justify the smaller angle value in fixing the hexagon code.

Theme 4: Generating Multiple Hypotheses in Advance Was an Unnatural Requirement

Scaffold Design Intent Misaligned With Abductive Reasoning

The scaffold design was grounded in the literature highlighting the critical role of hypothesis formulation in debugging (Araki et al., 1991; Kim et al., 2018). Along with prompts for generating hypotheses, the scaffold guided participants’ attention to specific structures of the code, blocks, and numeric or descriptive value in blocks. For example, the following questions were given during hypothesis generation: Is the sequence of blocks arranged correctly? Is the numeric value in blocks aligned with what you want your Ozobot to do? Is the logic block used correctly? Scaffolding design suggested in Kim et al. (2018) included use of common errors as part of scaffolding for debugging block-based code, but not as a checklist for step-by-step execution. Thus, these questions were intended to draw participants’ attention to common errors. Lillian noted that the questions prompted her and her partner, Barbara, to pay attention to the code in a productive way: The questions that were asked [were helpful]

Regardless of the design intent for hypothesis generation, the scaffold seems to have asked for an unnatural course of activities in that two or three hypotheses had to be written down altogether before testing any. Considering the salient use of abductive reasoning among our participants, this part of generating multiple hypotheses in advance seemed misaligned with their reasoning process. During reasoning through abduction, a new hypothesis is realized when there is new information that demotes the probability of the old hypothesis (Magnani, 2009). That is, it is natural that the second probable cause (Hypothesis 2) explaining the robot’s malfunctioning could not be guessed without testing the first probable cause (Hypothesis 1). Thus, the requirement of generating multiple hypotheses in advance may have been misaligned with the participants’ reasoning approaches:

Which parts of the scaffold most conflicted with your thinking processes or were not aligned with your thinking processes?

I feel like it was hard to list out specific hypotheses ’cause we sort of did a bunch of trial and error. So to specifically look at what the problem was there. It could have been a lot of things, which I guess is why it said reason for multiple hypotheses . . . I also think that we are anxious to just start moving.

Charlotte noted that her partner, Harper, and she responded to the scaffold retrospectively because they wanted to see what a change would do first before listing multiple hypotheses:

I guess that hypothesis [was most conflicted] ’cause . . . I think I’m a more visual person. . . . I’m better at just like trial and error . . . I would have just like tried a bunch of things and see what worked instead of writing something out.

How did you handle with that process of writing hypotheses?

’Cause my partner I think was kind of the same way, we actually would kind of change something and then . . . write a hypothesis for that. We would kind of do it a little bit backwards actually. We would change something and then I’d be like, okay, we have to write a hypothesis for what we changed and then do it.

Amelia and Elena exhibited more concurrent engagement with the scaffold and debugging than other pairs, as discussed earlier (Theme 3). Still, they did not write multiple hypotheses in advance. They wrote one and tested it prior to writing another one, as shown in Table 6. Moreover, when their change to 120° did not work, they decided not to write another hypothesis yet. That is, they chose to see what happened with their new change (20°) before writing their third hypothesis related to the change. Their first hypothesis was about the number of rotations (Scene 1). Their second hypothesis was about the angle change from 70° to 120° (Scene 2). Amelia explained to Elena that she was not writing down their third hypothesis related to the new angle value, 20°, because they may need to abandon the hypothesis of the moment if 20° does not fix the bug (Scene 3).

Natural Process of Abductive Thinking Leading to Tinkering

Charlotte defined her problem-solving during debugging as a process of trial and error: I think it [debugging] is very similar [to other problem-solving] ’cause a lot of things are trial and error. I don’t fix much like cars, fridge, refrigerators. But even the fridge . . . in my apartment, there’s like 7 and 1, but I didn’t know which one was cold[est] and which one was the warmest. So you just try 7 and wait a few hours and see if it gets colder or warmer. So it’s just like trial and error.

Positive notions were often expressed about trial and error used in debugging and problem-solving in general. Harper wrote in her reflection: We thought about what we thought the solution would be, tried it out, failed, and kept trying until we solved it. This is how a lot of things in life work, and many math problems as well. If something is wrong with my phone, I think “maybe if I reset it, it will work,” then if it doesn’t, I try other things, and if that doesn’t work, I keep trying things until I find the solution that fixes my phone.

She also wrote that she would apply her debugging experience to teaching young children as follows: “I would teach them how failing is not a bad thing, it takes many tries to solve something and that is okay.” Participants The approach we took was more trial and error so see if it worked and not. And, in my real life I try to plan things out more specifically, but to visualize it, it was easier to make the changes as we were going.

These findings are similar to those of Ko et al. (2019), in which most participants still chose to tinker even though the intervention was designed to help novice programming learners debug step-by-step in structured ways. Now that we know our participants commonly practiced abductive reasoning, the design feature that required them to come up with multiple hypotheses before any exploration seems far from our intentions.

As mentioned earlier, tinkering in the present study was cautious (Berland et al., 2013; Perkins et al., 1986) and intentional (Fitzgerald et al., 2010). For example, as Amelia and Elena explained in their interviews, their incremental changes were grounded in tangible observations of the robot’s behaviors: Usually, I would look through the code and see what I didn’t want to do or what I had planned it to do and then based off of what it actually did, I would add something or try to reprogram it to make it better. (Amelia) When I would program it one way for example, like turning and going straight along the line, and it wouldn’t work the way I expected it to, it wouldn’t turn enough or whatever. I would have to readjust that . . . We went over and double-checked everything and kind of correlated where the code was with the movement that we saw it doing. So we would play it again, play the code again, let the Ozobot move again. We would check on the code, everything that it was doing and want to stop doing what we wanted it to. We would figure out where we were in the code and use that. (Elena)

The function of the hypothesis-driven scaffolding was not misaligned with our participants’ dominant reasoning (abductive reasoning) possibly because they ended up formulating multiple hypotheses while tinkering, instead of formulating them all at once. Their hypotheses (Table 7) had explanatory value. Abduction is a “process of reasoning in which explanatory hypotheses are formed and evaluated” (Magnani, 2009, p. 8). Considering that abductive reasoning “never reaches the status of best hypothesis” and that hypotheses can be partial and elementary as long as there is some plausibility (Magnani, 2009, p. 11), all these hypotheses are reasonable explanatory hypotheses. As shown in Table 7, Barbara and Lillian reported their observation of the robot’s current movement as hypotheses. Charlotte and Harper as well as Amelia and Elena wrote their plan for change in the code as hypotheses. Considering that “explanation deals with the current or past problems, while prediction deals with future problems” (Abe, 2003, p. 232), Amelia and Elena may have attempted to generate a predictive hypothesis even though the scaffold asked for hypotheses for why the robot

Responses to Multiple Hypothesis Generation Prompts in the Debugging Scaffold

Conclusion and Implications for Computing Education

The unique contribution of this article is the finding of abductive reasoning used in tinkering. Unlike many existing studies that attempt to identify deficits that lead to tinkering, we investigated how novice programmers tinkered so that we could understand their

Another implication is that the study findings point to a way to include further instruction on reasoning in ECE contexts. A common criticism is that little reasoning is learned in early learning curricula, even in mathematics education, in which reasoning is essential (Stylianides et al., 2013). This criticism applies not only to ECE but also to preservice ECE teacher education. For example, inductive and deductive reasoning are covered in the ECE teacher mathematics education curriculum, but abductive reasoning often is not (Rivera & Becker, 2007). Yet the relationship between abduction and induction is critical to generalization (Rivera & Becker, 2007). If teachers do not recognize abductive thinking, the potential path from abduction to induction and generalization could be discarded (Abrahamson, 2012).

Specifically related to scaffolding design for computing education, this study suggests that hypothesis-driven scaffolds can be instrumental to reflective debugging, but it is important to consider the reasoning processes of computing learners. After reviewing data from the present study, we redesigned our scaffold to support reasoning by abduction and prompt for reflective generation of rules during abductive reasoning processes. This redesign is meant to scaffold our teacher candidates to see relationships not only

Acknowledgements

This research is supported by Grants 1927595 and 1906059 from the National Science Foundation (United States of America). Any opinions, findings, or conclusions are those of the authors and do not necessarily represent official positions of the National Science Foundation.

Authors

CHANMIN KIM is associate professor of Learning, Design, and Technology, and Educational Psychology at Pennsylvania State University, University Park. She researches teacher learning of STEM that contributes to the creation of equitable learning environments. Her current focus is on using asset-based approaches to study diverse ways of reasoning during debugging.

BRIAN R. BELLAND is associate professor of Educational Psychology at Pennsylvania State University, University Park. He uses a variety of quantitative, qualitative, and mixed methods approaches to study scaffolding in K–12 and higher education settings. He also conducts meta-analyses on STEM education.

AFAF BAABDULLAH is a doctoral candidate in the Learning, Design, and Technology program at Pennsylvania State University, University Park. Her research interests are in metacognitive collaborative learning and computing education. She is faculty with the Department of Curriculum and Instruction, King Saud University, Riyadh, Saudi Arabia.

EUNSEO LEE is a doctoral student in the Educational Psychology program at Pennsylvania State University, University Park. She is interested in measurement in problem-based learning contexts.

EMRE DINÇ is a doctoral student in the Learning, Design, and Technology program at Pennsylvania State University, University Park. His research interests are in teacher education for computing education.

ANNA Y. ZHANG is a doctoral student in the Educational Psychology program at Pennsylvania State University, University Park. Her research interests are in scaffolding design and machine learning.