Abstract

This study focuses on the threat of retention associated with test-based promotion in Grade 3. Analyzing data from the Early Childhood Longitudinal Study, Kindergarten Class of 1998–1999, we found that schools with such a policy apparently increased math instructional time, but not reading instructional time, in Grade 3. On average, the policy did not produce significant differences in third graders’ reading and math learning. However, there appeared to be a notable increase in the proportion of students who reached an at- or above-average proficiency level in Grade 3 math. In both reading and math, test-based promotion seemingly benefited students at average or below-average ability levels. In contrast, there was no evidence that the policy affected students at the two ends of the ability distribution. We discuss the implications of these findings for the current design and implementation of test-based promotion in the early grades.

Since the late 1990s, a number of states (e.g., Florida, Georgia, Louisiana, North Carolina, and Texas) and large school districts (e.g., Chicago, New York City) have enacted promotional gates that require students to score above a minimum level on standardized tests, typically in reading and/or math, before being promoted to the next grade (Huddleston, 2014). This practice, known as test-based promotion, continues to receive endorsement from policy makers, with particular emphasis on reading proficiency in third grade. For example, 16 states plus D.C. have mandated retention for third graders who do not demonstrate proficiency on state reading tests, and eight additional states have similar practices at the discretion of local schools or school districts (National Conference of State Legislatures [NCSL], 2019). Proponents of test-based promotion have argued that students would likely respond to the promotion standard by working harder and that retained students could benefit from an additional year of schooling. Opponents, however, have claimed that such a policy would violate professional standards for fair and appropriate test use and that retained students might suffer from reduced expectations of teachers and parents, difficulties in adjusting to a younger peer group, and an increased likelihood of dropping out of school (see reviews by Huddleston, 2014, and Penfield, 2010).

Past research on test-based promotion has often focused on the effectiveness of grade repetition, attending mostly to retained students and their performance in post-retention years (e.g., Eren et al., 2017; Roderick & Nagaoka, 2005; Schwerdt et al., 2017; Winters & Greene, 2012). However, in addition to holding back low-performing students, test-based promotion is also expected to influence teaching and learning through the threat of retention. For example, it may direct more attention and resources to low-performing students prior to or at promotional gate grades, and such students may exert more effort under pressure (Thomas, 2005). Research on the Ending Social Promotion reforms in New York City and Chicago (Allensworth & Nagaoka, 2010; McCombs et al., 2009) suggested that introducing test-based promotion may change teacher behavior, increase the time allocated to core subjects or skills, and shift resources toward test preparation. The policy was accompanied by gains in reading and math test scores in both promotional and nonpromotional gate grades. Moreover, the policy seemed to have differential effects on student test performance, with benefits particularly for students at below-average ability levels or students at moderate or high risk of retention. However, the effects on teaching and learning were mostly observed in upper grades. In addition, because high-stakes consequences were attached to the test scores that also served as study outcomes, the observed score gains might not reflect actual improvement in student learning (B. A. Jacob, 2003, 2005). Finally, because these studies were limited to two large urban school districts, the generalizability of their findings remains uncertain.

The goal of this study is to examine how early-grade teachers and students responded to the threat of retention when a test-based promotion policy was present in their schools. Building on existing research, we analyze data from the Early Childhood Longitudinal Study Kindergarten (ECLS-K) and use a propensity score-based approach, marginal mean weighting through stratification (MMW-S; Hong, 2010, 2012, 2015), to compare schools with and without test-based promotion in the amount of time teachers spent on the core subjects in Grade 3 (i.e., reading and math) and in students’ learning in these subjects as measured by the ECLS direct assessments. We ask the following questions: (a) Did the presence of test-based promotion increase the amount of time allocated to reading and math instruction? (b) Did it affect students’ reading and math learning? (c) Did the effects on learning differ by students’ prior academic ability levels?

Although ECLS has collected data from two kindergarten cohorts, we use data collected in 2002 from the first cohort (1998–1999), which corresponds to the beginning of the No Child Left Behind (NCLB) era. At that time, test-based promotion had been introduced in a few places (e.g., Louisiana and Chicago) and was starting to roll out at scale in other states and large school districts (e.g., Florida, Georgia, Texas, Wisconsin, and New York City; see the review by Huddleston, 2014). A battery of items measuring school retention practices was included in the ECLS school administrator questionnaire. Despite variation across states and districts and changes in promotion standards and practices over time (Huddleston, 2014; Marsh et al., 2009; NCSL, 2019), Grade 3 has generally been considered an early checkpoint for student progress and a critical milestone of student learning, especially in reading (Hernandez, 2011). By focusing on all Grade 3 students and their teachers in the nationally representative ECLS sample, and by examining student performance on low-stakes assessments that are not prone to score inflation from inappropriate test preparation, this study adds to a small body of literature on the effects of the retention threat associated with test-based promotion. Specifically, we aim to provide a historical picture of whether and how the policy influenced teaching and learning in the early grades.

Teachers’ Behavioral Changes Under Test-Based Promotion

Test-based promotion holds students accountable for their own performance and imposes no explicit sanctions or rewards on teachers. However, because a teacher’s success is often defined by student performance (Cohen, 1996; Finnigan & Gross, 2007; Johnson, 1986), test-based promotion may change teachers’ instructional decision making (Allensworth & Nagaoka, 2010). Past literature on standards-based reforms has suggested that the standards used in test-driven accountability serve as instructional targets for teachers and may influence the content and emphasis of instruction. Researchers (e.g., Au, 2007; Koretz et al., 1996; Taylor et al., 2001) have found that, in investing more time in aligning instruction with grade-specific standards so that more students meet the testing standards, teachers may significantly narrow the curriculum and focus less on intellectually challenging work. Polikoff (2012) showed that, once NCLB was in effect, the introduction of standards and assessments was associated with improved instructional alignment with state standardized tests, with the largest and most consistent increases in alignment occurring in mathematics across all grades.

Similarly, in a study of the Ending Social Promotion reform in Chicago, R. T. Jacob et al. (2004) found that after the district adopted test-based promotion, teachers invested significantly more time in teaching math, especially grade-level math skills, in the seventh and eighth grades. The increase in math instructional time was over one half of a standard deviation above the prepolicy level. A small but statistically nonsignificant upward trend was observed for teaching reading comprehension over the postpolicy years. In addition, teachers tended to provide more instructional support to low-achieving students after the introduction of the Chicago Public Schools test-based promotion policy, although these changes were mostly found in upper grades. In Grade 3, the researchers observed only a decline in the introduction of new math topics. Diamond’s (2007) observation of second- and fifth-grade classrooms revealed that while the Chicago policy affected teacher behaviors, the changes seemed to be limited to alignment with test content and format, without adding complexity or depth to instruction. Summarizing this evidence, we expect test-based promotion to influence teaching mainly by shifting instructional time and mobilizing resources toward tested subjects and grade-level skills.

Third-Grade Learning Under Test-Based Promotion

Evidence from qualitative studies has suggested that test-based promotion may affect student learning by changing student motivation (e.g., Roderick & Engel, 2001). However, this may not be the case for primary graders, who tend to make little distinction between ability and effort and tend to be optimistic about their own academic competence (Dweck, 2001; Stipek, 2002). Even in Grade 3, rather than motivating students directly, test-based promotion is more likely to affect student learning indirectly by changing teachers’ instructional practices, which in turn shape students’ learning opportunities at school (Nye et al., 2004; Palardy & Rumberger, 2008; Rowan et al., 1997).

Teachers’ behavioral changes under test-based promotion, as Allensworth and Nagaoka (2010) pointed out, could be a double-edged sword for student learning. While students at risk of retention may benefit from increased attention from teachers, high-achieving students who are at no risk of retention are unlikely to receive a similar level of support (R. T. Jacob et al., 2004). In addition, aligning instruction with promotion standards may create differential learning patterns among students at different ability levels. Past studies of reading and math learning in the early grades have shown that students with low academic skills benefit from exposure to basic content while their peers with high academic skills benefit from more advanced content (Engel et al., 2013; Wonder-McDowell et al., 2011; Xue & Meisels, 2004). Aligning instructional content with promotion standards that emphasize mastery of grade-level skills may therefore benefit only students whose proficiency is near the grade benchmark, while failing to meet the learning needs of students far above or far below that level.

Existing studies on the relationship between test-based promotion and student learning prior to or at promotional gate grades were mostly conducted in the context of the Ending Social Promotion reforms in New York City (NYC) or Chicago. Researchers studying NYC’s policy (Mariano et al., 2009) compared all fifth graders in the promotional gate year with a same-grade cohort that had not been exposed to the policy and found a significant improvement in students’ test performance in English language arts and math. The researchers then grouped students into four proficiency levels, from Level 1 (lowest) to Level 4 (highest), based on their fourth-grade assessments. Aiming to remove the potential confounding of concurrent reform initiatives, they subtracted the observed gain of Level 3 students from that of Level 1 and Level 2 students. This difference-in-differences strategy assumed that test-based promotion affected only students at risk of being retained, an assumption that requires empirical verification. The researchers reported that in English language arts, the average effect directly attributable to test-based promotion was almost zero for students at the lower end of Level 1 but ranged from 0.10 to 0.21 standard deviations for Level 2 students and for other Level 1 students close to the retention cutoff; in math, the average adjusted effect was indistinguishable from zero for all students at Levels 1 and 2.
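The difference-in-differences logic described above can be illustrated with a small sketch. The cohort means below are hypothetical values in standard deviation units, not figures from Mariano et al. (2009); the function nets out the Level 3 cohort change from a Level 1 or Level 2 change, which yields a policy effect only under the assumption that Level 3 students were unaffected by the policy.

```python
def did_estimate(post_risk, pre_risk, post_ref, pre_ref):
    """Difference-in-differences: change for an at-risk level minus change for a reference level.

    post_* are mean scores of the policy-exposed cohort; pre_* are those of the unexposed cohort.
    Reading the result as a policy effect assumes the reference level (here, Level 3) was not
    affected by the policy and shares the at-risk level's counterfactual trend.
    """
    return (post_risk - pre_risk) - (post_ref - pre_ref)


# Hypothetical cohort means (SD units), for illustration only
level2 = {"pre": 0.25, "post": 0.55}
level3 = {"pre": 0.28, "post": 0.40}

effect = did_estimate(level2["post"], level2["pre"], level3["post"], level3["pre"])
print(f"Adjusted Level 2 effect: {effect:.2f} SD")
```

If Level 3 students also benefited from the policy, subtracting their change would understate the effect on Level 1 and Level 2 students, which is why the assumption needs empirical support.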

Chicago researchers similarly found that students experienced dramatic gains in test performance from the third to eighth grades right after the introduction of the promotion policy, with the highest gains occurring at the promotional gate grades, that is, Grades 3, 6, and 8 (Bryk, 2003; B. A. Jacob, 2003, 2005; Roderick et al., 2002). By grouping students into retention risk levels on the basis of the learning gain each student would need to reach the test cutoff, Roderick et al. (2002) showed that for third graders in reading, the threat of retention appeared to have a positive effect on the performance of high- or moderate-risk students but a negative effect on that of no-risk students; in math, it appeared to benefit all students, with the largest effect on high- or moderate-risk students and the smallest on no-risk students. B. A. Jacob (2003, 2005) argued that the observed test scores were unlikely to be a valid measure of student learning because the gains were not sustained over time despite the continuation of the policy initiatives and were not found on other low- or moderate-stakes tests. He pointed out that, as in many studies of test-based accountability (e.g., Krieg, 2008; Ladd & Lauen, 2010; Neal & Schanzenbach, 2010; Reback, 2008; Springer, 2008), the standardized test scores were also used for promotion and other high-stakes decisions and were therefore subject to score inflation from inappropriate test preparation. Hence, it remains an open question whether the threat associated with test-based promotion can actually trigger large-scale changes in student learning, and whether the distributional effects observed on high-stakes test scores in previous studies also appear on low-stakes learning measures.

Method

Data and Measures

This study uses the first five waves of ECLS-K data collected by the U.S. National Center for Education Statistics (NCES) from fall 1998 to spring 2002. The ECLS-K study sampled 18 students per school on average at kindergarten entry in fall 1998 and followed them over time. By spring 2002, most sampled students were nearing the end of Grade 3, the grade that often serves as the lowest promotional gate grade. Standardized testing was prevalent that year: only 7% of the sampled schools did not report having standardized testing in place. These schools tended to have smaller enrollments, larger proportions of disadvantaged students (e.g., students with limited English proficiency, minority students, or students qualified for the free or reduced-price lunch program), and a greater likelihood of providing gifted program services. This study focuses on 1,498 schools whose administrators reported having standardized testing and provided information about grade repetition practices. The total study sample includes 3,324 Grade 3 classrooms and 9,488 students.¹

Test-Based Promotion

The treatment of interest in this study is the threat of retention associated with test-based promotion. In 2002, administrators of 278 schools (18.56%) reported that students could be retained if they failed a school-wide standardized test; these schools constitute the treatment group. The remaining 1,220 schools (81.44%) did not report such a practice and constitute the comparison group.

Instructional Time

Our first research question is whether the presence of test-based promotion increased the amount of time allocated to reading and math instruction. We computed the amount of instructional time that third-grade teachers allocated to each subject based on teachers’ self-reported frequency and duration of reading and math instruction in spring 2002. On average, the teachers spent about 385 minutes (

Student Academic Learning

Our second research question asks whether the threat of test-based retention affected students’ reading and math learning in Grade 3. Student learning was measured by the ECLS third-grade direct assessments in spring 2002. The assessments were designed based on the National Assessment of Educational Progress (NAEP) framework and consisted of test batteries in the core subjects of each sampled grade (NCES, 2002). The test results, reported for each subject, were not used by school districts or schools for accountability-related decision making. Hence, unlike the assessment data analyzed in previous studies, which were susceptible to score inflation because high-stakes consequences were attached, the ECLS assessment data are expected to provide uncontaminated information about the effects of test-based promotion on student learning.

We used two types of ECLS assessment scores to measure student achievement at the end of Grade 3. One is the

Reading and Math Proficiency Levels

Student Prior Academic Ability

Our third research question asks whether the threat of test-based retention affected students differently depending on their prior academic ability. The ECLS-K 98 study did not survey or assess students in Grades 2 and 4. Although we know the proportion of students in each Grade 3 class who were already repeating the grade in spring 2002, no data were collected on the promotion standards adopted by schools or school districts or on students’ retention status in Grades 3 and 4. As a result, we were unable to determine each student’s risk of being retained at the end of Grade 3. Following the studies in NYC and Chicago (Allensworth & Nagaoka, 2010; McCombs et al., 2009), we instead used each student’s predicted prior reading ability at the end of Grade 2 as a moderator. The ECLS data contain repeated measures of student reading performance in the fall and spring of kindergarten and Grade 1 and in the fall of Grade 3, vertically equated on the same metric. Using an empirical Bayes estimation approach, we estimated a nonlinear reading growth trajectory over kindergarten and Grade 1 for each student and extrapolated it to the end of Grade 2, assuming that a student would likely stay on the same growth trend prior to Grade 3 (see online Supplemental Material A). Based on the distribution of the predicted scores, we classified the sampled students into five equal-sized ability groups, from 1 (lowest) to 5 (highest).
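The growth-based moderator can be sketched as follows. This is a simplified empirical Bayes approach assumed for illustration, not the estimation reported in Supplemental Material A: each student’s reading scores are fit with a quadratic in time by ordinary least squares, the coefficients are shrunk toward the sample average using method-of-moments variance estimates, the shrunken curve is extrapolated to an end-of-Grade-2 time point, and the predictions are cut at their quintiles. The balanced design (all students observed at the same occasions), the quadratic form, the month values, and the simulated data are all hypothetical.

```python
import numpy as np


def eb_growth_predict(scores, times, t_new):
    """Shrink per-student quadratic growth curves toward the average curve, then extrapolate.

    scores: (n_students, n_occasions) array; assumes all students share the same `times`.
    """
    X = np.column_stack([np.ones_like(times), times, times ** 2])  # quadratic basis
    XtX_inv = np.linalg.inv(X.T @ X)
    beta_ols = scores @ X @ XtX_inv                       # per-student OLS coefficients
    n, T = scores.shape
    resid = scores - beta_ols @ X.T
    sigma2 = np.sum(resid ** 2) / (n * (T - X.shape[1]))  # pooled within-student variance
    V = sigma2 * XtX_inv                                  # sampling covariance of one OLS fit
    gamma = beta_ols.mean(axis=0)                         # average growth curve
    tau = np.cov(beta_ols, rowvar=False) - V              # between-student covariance (moments)
    vals, vecs = np.linalg.eigh(tau)
    tau = vecs @ np.diag(np.clip(vals, 0.0, None)) @ vecs.T  # clip to positive semi-definite
    shrink = tau @ np.linalg.inv(tau + V)                 # reliability-type shrinkage matrix
    beta_eb = gamma + (beta_ols - gamma) @ shrink.T       # empirical Bayes coefficients
    return beta_eb @ np.array([1.0, t_new, t_new ** 2])


def ability_quintiles(pred):
    """Label students 1 (lowest) to 5 (highest) by quintile of the predicted score."""
    cuts = np.quantile(pred, [0.2, 0.4, 0.6, 0.8])
    return np.digitize(pred, cuts) + 1


# Simulated data; occasion times are hypothetical months since fall of kindergarten.
rng = np.random.default_rng(0)
times = np.array([0.0, 7.0, 12.0, 19.0])   # fall K, spring K, fall G1, spring G1
n = 500
true_beta = np.column_stack([
    rng.normal(25.0, 5.0, n),      # initial status
    rng.normal(2.0, 0.4, n),       # linear growth rate
    rng.normal(-0.02, 0.005, n),   # curvature (deceleration)
])
basis = np.column_stack([np.ones_like(times), times, times ** 2])
scores = true_beta @ basis.T + rng.normal(0.0, 3.0, (n, times.size))

pred = eb_growth_predict(scores, times, t_new=32.0)  # hypothetical end of Grade 2
groups = ability_quintiles(pred)
print(np.bincount(groups)[1:])   # number of students per ability group
```

Shrinkage pulls noisy individual trajectories toward the average curve, which matters most when a curve fitted to four occasions is extrapolated beyond them.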

Pretreatment Covariates

The ECLS-K study did not collect information on the exact year when test-based promotion was first introduced into each treated school. We found that the proportion of students who were already repeating Grade 3 in spring 2002 was twice as high in the treatment schools (

Analytic Strategies

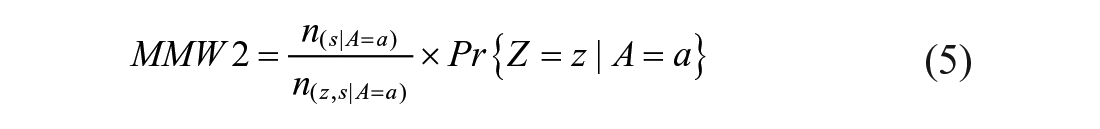

Corresponding to the research questions, we conducted three sets of quasi-experimental analyses. The first examined the overall effects of test-based promotion on instructional time allocation; the second considered the effects of the threat of test-based retention on student learning; and the third investigated whether the effects on learning depended on students’ prior ability levels. Because assignment to the two treatment conditions was unlikely to be random, we employed a semiparametric propensity score-based strategy, MMW-S (Hong, 2010, 2012, 2015), to reduce selection bias associated with the rich set of pretreatment covariates in the ECLS-K data. MMW-S has shown promise for evaluating various types of treatments and for investigating moderated treatment effects in educational studies (Garrett & Hong, 2016; Hong et al., 2012; Hong & Hong, 2009). Because each unit is assigned a weight based on propensity stratification, the pretreatment composition of each weighted treatment group resembles that of the whole population or of a subpopulation.
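A compact sketch of propensity stratification with marginal mean weights follows. In its commonly cited form (Hong, 2010), the MMW-S weight for a unit in stratum s assigned to condition z is n_s × Pr(Z = z) / n_{z,s}, where n_s is the stratum size and n_{z,s} is the number of stratum-s units in condition z. The logistic model here is fit by plain Newton-Raphson; the five simulated covariates (in place of the study’s 81), the use of quartiles of the estimated logit as the four strata, and the overlap rule in `common_support` are illustrative assumptions.

```python
import numpy as np


def fit_logit(X, z, n_iter=25):
    """Logistic regression by Newton-Raphson; X must include an intercept column."""
    beta = np.zeros(X.shape[1])
    for _ in range(n_iter):
        p = 1.0 / (1.0 + np.exp(-(X @ beta)))
        H = X.T @ (X * (p * (1.0 - p))[:, None])   # information matrix X'WX
        beta = beta + np.linalg.solve(H, X.T @ (z - p))
    return beta


def common_support(logit_ps, z):
    """Keep units whose estimated logit lies inside both groups' observed ranges."""
    lo = max(logit_ps[z == 1].min(), logit_ps[z == 0].min())
    hi = min(logit_ps[z == 1].max(), logit_ps[z == 0].max())
    return (logit_ps >= lo) & (logit_ps <= hi)


def mmws_weights(logit_ps, z, n_strata=4):
    """Marginal mean weights: n_s * Pr(Z=z) / n_{z,s} within propensity strata."""
    cuts = np.quantile(logit_ps, np.linspace(0, 1, n_strata + 1)[1:-1])
    stratum = np.digitize(logit_ps, cuts)          # stratum labels 0 .. n_strata - 1
    w = np.zeros(len(z))
    for s in range(n_strata):
        in_s = stratum == s
        for t in (0, 1):
            cell = in_s & (z == t)
            if cell.any():
                w[cell] = in_s.sum() * np.mean(z == t) / cell.sum()
    return w, stratum


# Simulated schools: treatment is more likely for some covariate profiles.
rng = np.random.default_rng(1)
n = 1500
covs = rng.normal(size=(n, 5))
X = np.column_stack([np.ones(n), covs])
true_logit = -1.5 + covs @ np.array([0.6, -0.4, 0.3, 0.0, 0.2])
z = (rng.random(n) < 1.0 / (1.0 + np.exp(-true_logit))).astype(int)

logit_ps = X @ fit_logit(X, z)
keep = common_support(logit_ps, z)
w, stratum = mmws_weights(logit_ps[keep], z[keep])
```

Within each stratum, the weighted share of treated units equals the overall treated share, which is what lets the weighted groups mimic a completely randomized split on the observed covariates.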

Effects on Instructional Time Allocation

For the first set of analyses, we used MMW-S to approximate a simple randomized design in which schools were “as if” randomly assigned to either the “test-based promotion” group or the comparison group. We estimated each school’s propensity to adopt test-based promotion using a logistic regression model; a school’s propensity score is the estimated conditional probability of adoption as a function of the 81 school-level covariates. Based on the propensity score, we then subdivided the school sample into four strata and computed a weight for each treated school (

After weighting, the two treatment groups were expected to become comparable in the distribution of the observed school-level propensity scores. A further analysis revealed that the two weighted treatment groups no longer differed in the distribution of about 96% of the school-level covariates.
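One common way to check covariate balance before and after weighting is the standardized mean difference (SMD). The text does not say which balance criterion was used, so the |SMD| < 0.1 threshold below is an assumed rule of thumb. The toy data have two strata with opposite treatment imbalance, and the weights follow the n_s × Pr(Z = z) / n_{z,s} form with n_s = 4 and Pr(Z = 1) = 0.5.

```python
import numpy as np


def weighted_mean(x, w):
    return np.sum(w * x) / np.sum(w)


def smd(x, z, w=None):
    """Standardized mean difference (treated minus comparison).

    The denominator is the unweighted pooled SD, so values before and after weighting
    are on the same scale.
    """
    if w is None:
        w = np.ones_like(x, dtype=float)
    diff = weighted_mean(x[z == 1], w[z == 1]) - weighted_mean(x[z == 0], w[z == 0])
    pooled_sd = np.sqrt((x[z == 1].var(ddof=1) + x[z == 0].var(ddof=1)) / 2)
    return diff / pooled_sd


# Toy data: stratum A (x = 0) has 1 treated and 3 comparison units; stratum B (x = 1) the reverse.
x = np.array([0, 0, 0, 0, 1, 1, 1, 1], dtype=float)
z = np.array([1, 0, 0, 0, 1, 1, 1, 0])
w = np.array([2, 2 / 3, 2 / 3, 2 / 3, 2 / 3, 2 / 3, 2 / 3, 2])  # MMW-S weights

before, after = smd(x, z), smd(x, z, w)
balanced = abs(after) < 0.1   # assumed rule of thumb
print(f"SMD before: {before:.2f}, after: {after:.2f}, balanced: {balanced}")
```

Here the covariate is perfectly confounded with the stratum, so weighting removes the imbalance entirely; with real covariates it only reduces it.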

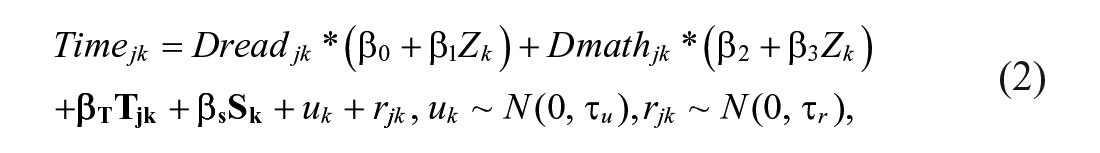

To estimate the effects on instructional time allocated to reading and math, we ran a weighted two-level multivariate model with teachers nested within schools and with the marginal mean weight applied at the school level. For teacher

The outcome “

Effects on Student Learning

To examine the effects of test-based promotion on student learning, we used either standardized

We additionally adjusted for a vector of student outcome predictors

When examining proficiency level as an outcome, we ran a weighted multinomial logit model for each subject (i.e., reading or math); the structural model is specified as

With the Grade 3 below-average proficiency as the reference level,

Effects on Academic Performance by Student Ability Levels

In the last set of analyses, we investigated whether the treatment effects differed by student prior ability levels. Here, we intended to approximate a block randomized design in which five subpopulations of students defined by their prior ability level were viewed as blocks. Within each subpopulation, the schools that students attended in Grade 3 were “as if” randomized to either test-based promotion or the comparison condition. On the basis of the four school-level strata previously obtained, for a student from subpopulation

We applied the student-level weight to a three-level univariate model for estimating the moderated effects of test-based promotion on student learning in each of the two subjects as measured by the standardized

Here,

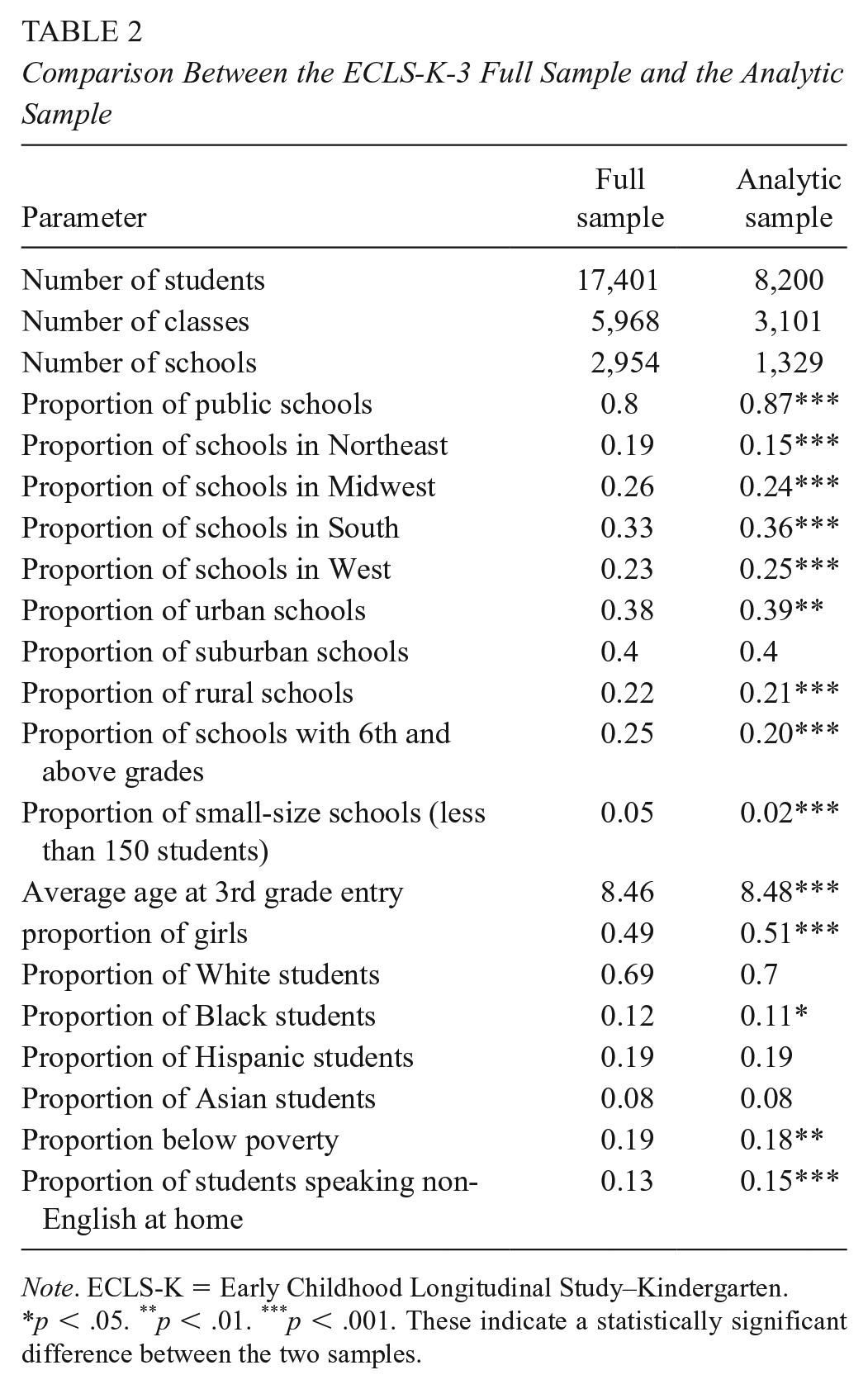
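For the block-randomized approximation, one natural extension, assumed here rather than taken from the text, computes the marginal mean weight separately within each ability group a: w = n_{a,s} × Pr(Z = z | A = a) / n_{a,z,s}, where each student inherits the propensity stratum s of the school attended in Grade 3. The simulated ability groups, strata, and treatment probabilities are hypothetical.

```python
import numpy as np


def block_mmws_weights(ability, stratum, z):
    """MMW-S weights computed within each ability block a:
    w = n_{a,s} * Pr(Z=z | A=a) / n_{a,z,s}."""
    w = np.zeros(len(z), dtype=float)
    for a in np.unique(ability):
        in_a = ability == a
        for t in (0, 1):
            p_t = np.mean(z[in_a] == t)            # marginal treatment share within block a
            for s in np.unique(stratum[in_a]):
                cell_s = in_a & (stratum == s)
                cell = cell_s & (z == t)
                if cell.any():
                    w[cell] = cell_s.sum() * p_t / cell.sum()
    return w


rng = np.random.default_rng(2)
n = 2000
ability = rng.integers(1, 6, n)      # hypothetical ability groups 1..5
stratum = rng.integers(0, 4, n)      # hypothetical school-level strata 0..3
z = (rng.random(n) < 0.1 + 0.05 * stratum).astype(int)  # treatment likelier in higher strata
w = block_mmws_weights(ability, stratum, z)
```

Because the weights are built block by block, each ability group is balanced on the school-level strata separately, so treatment effects can be compared across groups without the groups borrowing information from one another.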

To allow for meaningful comparisons, every school in the analyses should have a nonzero probability of adopting or not adopting test-based promotion. For this reason, we excluded 169 schools that did not have any counterparts in the alternative treatment group on the basis of their estimated propensity scores. Our final analytic sample included 8,200 students from 3,101 classes in 1,329 schools. Table 2 compares the analytic sample with the ECLS-K-3 full sample (

Comparison Between the ECLS-K-3 Full Sample and the Analytic Sample

Results

We began by examining the school characteristics that predicted selection into test-based promotion. With adjustment for the observed school-level pretreatment covariates, we then compared schools with and without the threat of retention in terms of the instructional time allocated to reading and math and in students’ academic learning. We further investigated whether test-based promotion exerted differential influences on students at different ability levels.

Characteristics of Schools With Test-Based Promotion

We found that the practice of retaining students based on standardized test results was more prevalent in suburban schools and in schools in the West or Midwest. At that time, it was also more often found in private or Catholic schools. These schools usually also enrolled students in Grade 6 and above and hence had larger enrollments and larger proportions of regular teachers. In addition, compared with schools without test-based promotion, schools with the policy in place had higher concentrations of minority students (especially Black and Hispanic students), students eligible for the free or reduced-price lunch program, students with limited English proficiency, and students with lower general knowledge scores at kindergarten entry. The treated schools tended to suffer more from limited educational resources (e.g., inadequate space and no services for students with special needs), student mobility, and/or serious violence and crime within and around the school. Hence, we reason that, had the test-based promotion policy been absent in the treated schools, their students would likely have shown lower average reading and math performance than their counterparts in the comparison schools.

Effects of Test-Based Promotion on Instructional Time Allocation

We first evaluated the effects of test-based promotion on the time allocated to reading and math instruction. The second panel of Table 3 summarizes the distribution of instructional time by treatment condition. On average, Grade 3 teachers allocated more instructional time to reading than to math, a pattern that appeared consistent across the two treatment conditions. With adjustment for the observed pretreatment covariates, results from Model 2 suggest that the presence of test-based promotion did not produce a statistically significant change in the amount of time allocated to reading instruction. However, having such a policy apparently increased math instructional time by 15.13 minutes per week (

Outcome Distributions by Treatment Condition

Effects of Test-Based Promotion on Student Learning

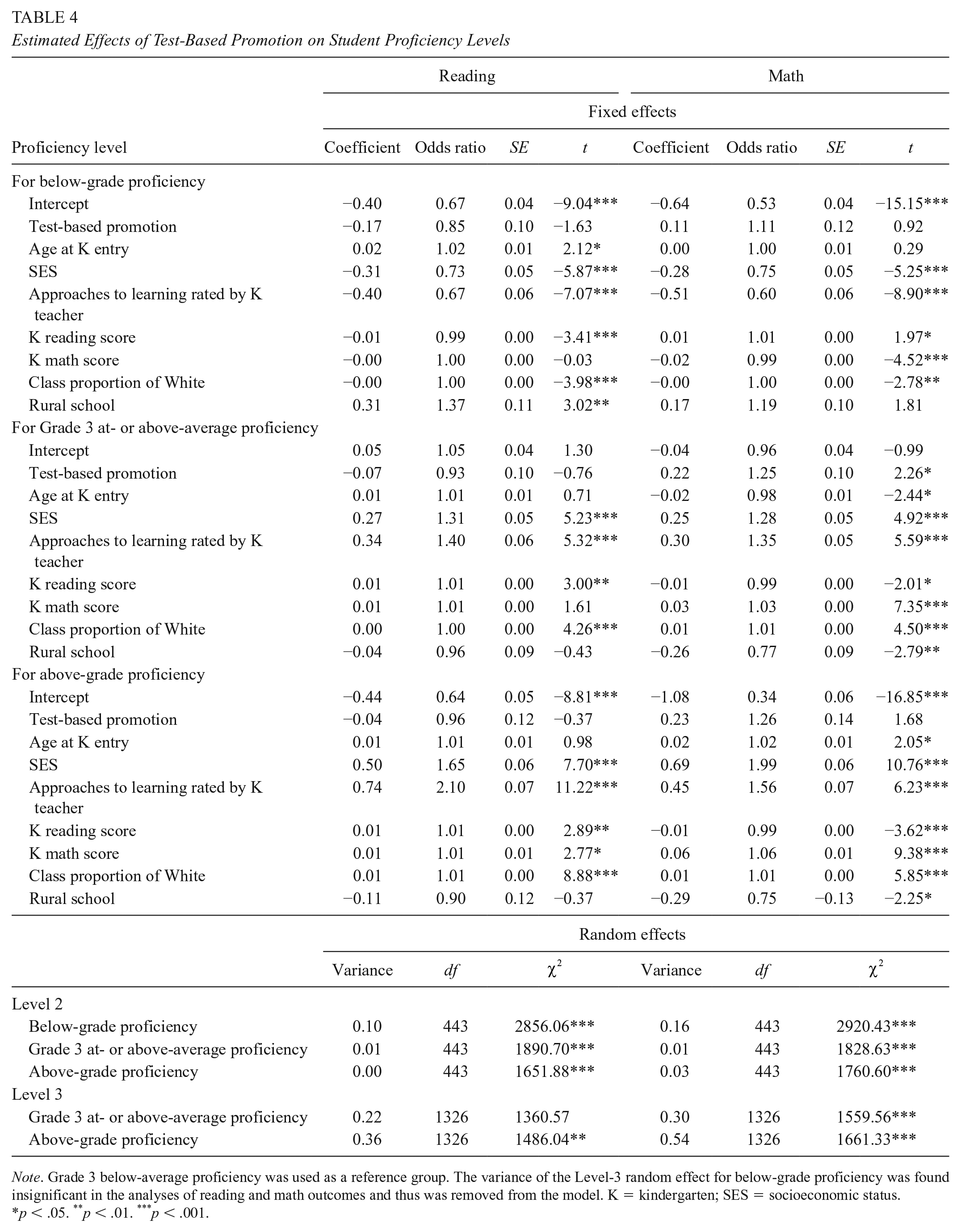

To evaluate the policy effects on student learning in reading and math, we examined both standardized

The

The

Estimated Effects of Test-Based Promotion on Student Proficiency Levels

Effects of Test-Based Promotion on Student Learning by Ability Levels

We hypothesized that the effects of test-based promotion might depend on whether a student was near the margin of failing the grade because such a student might receive special attention from the teacher. The analysis of the overall average effects of test-based promotion on the standardized

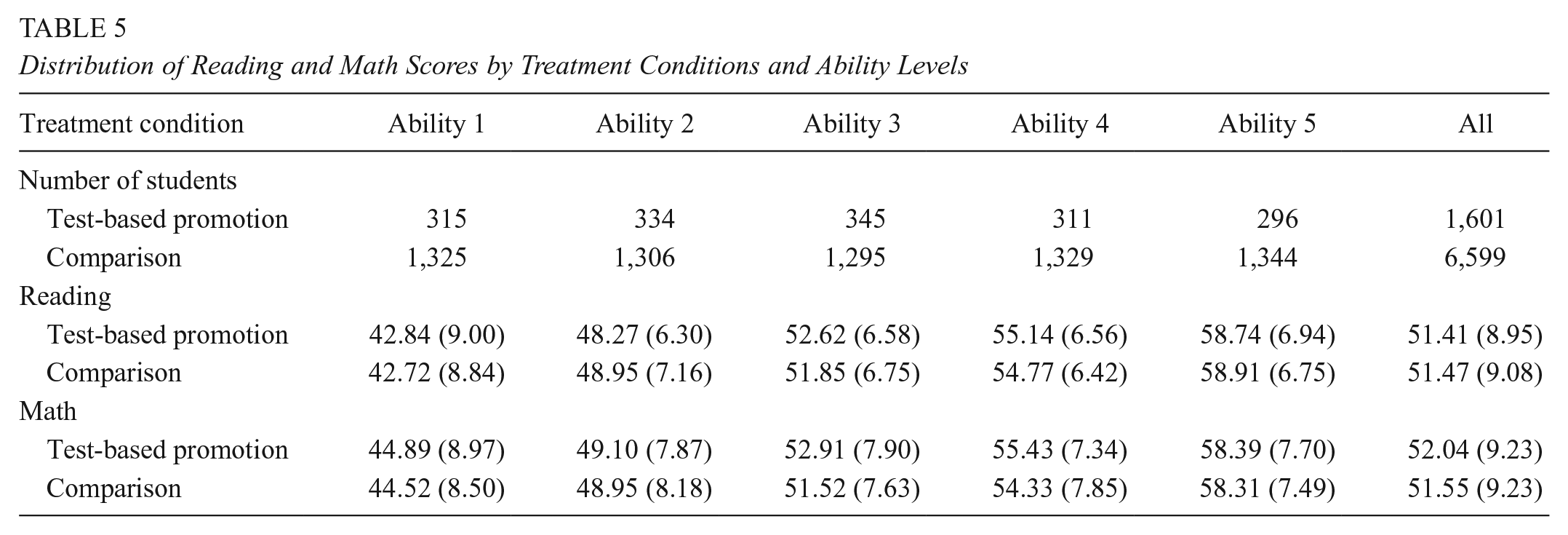

Distribution of Reading and Math Scores by Treatment Conditions and Ability Levels

We then proceeded to examine the treatment effects by ability levels. As shown in Table 6, the presence of test-based promotion had no effects on the average standardized

Estimated Effects of Test-Based Promotion on Student Learning by Prior Ability Levels

Discussion and Conclusion

Analyzing a national dataset collected at the dawn of the NCLB era, this study contributes to the limited literature on the effects of the retention threat associated with test-based promotion. We compared schools with and without retention consequences attached to standardized test results and examined their teachers’ instructional time allocation and their third graders’ reading and math learning. We examined the policy effects on learning using ECLS direct assessment results that were not used by the sampled schools and school districts for accountability-related decision making and hence were unlikely to be contaminated by inappropriate test preparation and school gaming strategies (B. A. Jacob, 2003, 2005). The results reveal the likely impacts on teaching and learning when schools set about implementing the new policy.

Summary of Findings

Effects on Instructional Time Allocation

To examine potential changes in teacher behavior, we focused on the time teachers allocated to reading and math instruction. Our results were consistent with past findings from Chicago showing increased investment in math instruction but limited changes in reading instructional time after the introduction of test-based promotion (R. T. Jacob et al., 2004). We found that teachers in schools with test-based promotion on average spent about 15 more minutes per week teaching math than their counterparts in the comparison schools, yet the policy did not appear to generate a difference in reading instructional time. The average impact of the policy on math instructional time amounted to approximately 6% of the average weekly math instructional time when test-based promotion was not in place (

Subject differences in early-grade instruction are well documented (Spillane, 2005). Schools tend to spend a large amount of time on reading instruction but considerably less on math in the early grades, because literacy is considered a subject that pervades all disciplines and has established dominance in the daily schedule of elementary schools, whereas math is conventionally treated as a stand-alone subject of secondary importance. This pattern continued into Grade 3, as the comparison of reading and math instructional time in Table 3 shows. Our findings suggest that when there was extra pressure to measure student performance against academic standards in both reading and math, teachers and schools might respond by increasing instructional resources for math while leaving reading instructional time intact.

Effects on Student Learning

We analyzed two types of ECLS assessment measures: standardized

To sum up, our analyses provide evidence suggesting some potential benefits of test-based promotion in the early days of the NCLB reform. Specifically, the policy might have promoted mastery of Grade 3 math proficiency and improved the learning of students at some, but not severe, risk of retention. However, our results also suggest that the policy’s influence on teaching and learning was likely limited. Although teachers under test-based promotion might have seen an opportunity to strengthen math instruction by increasing its instructional time, the time allocated to reading instruction had likely already reached its maximum. In addition, the policy did not seem to meet the learning needs of students at the two ends of the ability distribution and therefore failed to generate a large-scale improvement in student learning. These findings may have implications for the design and implementation of current test-based promotion policies that emphasize reading proficiency in the early grades (NCSL, 2019). Test-based promotion alone is likely insufficient to bring about meaningful changes in teaching and learning that would benefit all students, especially those who tend to suffer the greatest learning disadvantage.

Study Limitations and Future Research Agenda

Like most secondary data analyses, our work is limited by the available data. Our measures of test-based promotion and instructional time were constructed from the self-reports of school administrators and teachers and may contain an unknown amount of measurement error. Additional data collection approaches, such as classroom observation or reports from multiple sources, could be employed in the future to further validate the findings.

Moreover, although the ECLS-K 98 data allowed us to examine the outcomes of test-based promotion with low-stakes assessments, the promotion policies introduced nearly 20 years ago might differ from those implemented in more recent years. In addition, ECLS-K provides no information on the year in which the policy was first introduced in the sampled schools or on the grades in which promotion decisions were actually tied to student test performance. For example, we found indications in the data that some treated schools might have adopted test-based promotion even before NCLB started to roll out. That said, this study does not intend to make inferences about the impacts of implementing test-based promotion in specific grades. Rather, we are interested in the threat of retention associated with test-based promotion in general. At a minimum, our empirical evidence shows how third graders and their teachers responded to the threat of retention when such a policy was present in their schools.

We employed a propensity-score-based causal inference approach, the MMW-S method, to reduce selection bias associated with 81 observed pretreatment covariates. The propensity score approach assumes that treatment assignment is independent of unobserved confounders given the observed covariates (Rosenbaum & Rubin, 1983, 1984). This assumption may not hold because, lacking information about when test-based promotion started in each school, we were conservative in selecting pretreatment covariates. As a result, we considered only student, class, and school demographic characteristics and students' learning and behaviors measured at kindergarten entry. Adjusting for this set of covariates may not be adequate to remove selection bias. For example, if test-based promotion was coupled with other reform initiatives, such as professional development that might have improved teacher behavior and student learning, we would likely have overestimated the policy effects.
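To make the weighting step concrete, the sketch below shows the general form of marginal mean weighting through stratification: units are stratified on an estimated propensity score, strata lacking either treated or comparison units are dropped, and a unit with treatment status z in stratum s receives the weight n_s × Pr(Z = z) / n_{z,s}. This is a minimal single-level illustration under stated assumptions, not the authors' multilevel implementation: the propensity scores are assumed to be already estimated, strata are assumed to be equal-sized rank groups, Pr(Z = z) is assumed to be computed over the retained units, and the function names (`quantile_strata`, `mmws_weights`) are hypothetical.

```python
from collections import Counter
from typing import List, Optional


def quantile_strata(scores: List[float], n_strata: int) -> List[int]:
    """Assign each unit to one of n_strata roughly equal-sized strata by propensity-score rank."""
    order = sorted(range(len(scores)), key=lambda i: scores[i])
    strata = [0] * len(scores)
    for rank, i in enumerate(order):
        strata[i] = rank * n_strata // len(scores)
    return strata


def mmws_weights(scores: List[float], treated: List[int],
                 n_strata: int = 5) -> List[Optional[float]]:
    """Marginal mean weights through stratification (simplified, single-level sketch).

    Weight for a unit with treatment z in stratum s: n_s * Pr(Z = z) / n_{z,s}.
    Units in strata without both treated and comparison units get None (dropped).
    """
    strata = quantile_strata(scores, n_strata)
    cell = Counter(zip(strata, treated))   # n_{z,s}: units with treatment z in stratum s
    stratum_size = Counter(strata)         # n_s: units in stratum s
    # Keep only strata where both groups are represented (common support).
    kept = {s for s in stratum_size if cell[(s, 0)] > 0 and cell[(s, 1)] > 0}
    kept_idx = [i for i, s in enumerate(strata) if s in kept]
    n_kept = len(kept_idx)
    marginal = Counter(treated[i] for i in kept_idx)  # n_z among retained units

    weights: List[Optional[float]] = []
    for s, z in zip(strata, treated):
        if s not in kept:
            weights.append(None)
        else:
            pr_z = marginal[z] / n_kept
            weights.append(stratum_size[s] * pr_z / cell[(s, z)])
    return weights
```

One property worth noting: within the retained sample, the weights in each treatment group sum to that group's observed size, so the weighted sample keeps the marginal treatment distribution while balancing the groups within strata on the propensity score.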

We therefore assessed the extent to which our conclusions would likely be altered by the omission of potentially important unobserved confounders through a sensitivity analysis that made a further adjustment for a hypothetical confounding effect. Assuming that the bias introduced by any unobserved confounder could be no more severe than that of the strongest observed confounder, we used the bias associated with the pretest scores measured at kindergarten entry as the referent value for the hypothetical confounding (see the procedure described in Hong & Hong, 2009). This sensitivity analysis focused on the initial estimates that were statistically significant (i.e., the treatment effects on the reading performance of Ability 2 and Ability 3 students and the treatment effect on the math performance of Ability 2 students; see Table 6). Because students enrolled in the treated schools tended to be more disadvantaged, and in the absence of the policy would likely have displayed lower average academic performance than those enrolled in the comparison schools, we reasoned that our initial results were more likely to underestimate than to overestimate the policy benefit. In reading, after removing the hypothetical negative confounding, the adjusted estimates were 1.27 (95% CI [0.19, 2.35]) for Ability 2 students and 1.51 (95% CI [0.46, 2.58]) for Ability 3 students. In math, the adjusted estimate was 2.01 (95% CI [0.74, 3.28]) for Ability 2 students. All three 95% confidence intervals remained above zero. Hence, our initial conclusion about the potential benefits of test-based promotion for selected subpopulations of students appeared to be robust to the potential omission of confounders.
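The logic of the adjustment and of the robustness check can be illustrated with a short sketch. It does not reproduce the Hong and Hong (2009) procedure; as a simplification, it assumes the hypothetical confounding acts as an additive bias on the effect estimate while the standard error stays fixed, and that the reported intervals are symmetric normal-approximation (Wald) intervals. The names `Effect` and `remove_additive_bias` are hypothetical; only the three adjusted estimates and intervals come from the text.

```python
from dataclasses import dataclass
from statistics import NormalDist

Z_975 = NormalDist().inv_cdf(0.975)  # about 1.96; assumes normal-approximation 95% intervals


@dataclass(frozen=True)
class Effect:
    label: str
    point: float
    lower: float
    upper: float

    def implied_se(self) -> float:
        """Standard error implied by a symmetric Wald-type 95% interval (an assumption)."""
        return (self.upper - self.lower) / (2 * Z_975)

    def excludes_zero(self) -> bool:
        return self.lower > 0 or self.upper < 0


def remove_additive_bias(effect: Effect, bias: float) -> Effect:
    """Subtract a hypothetical additive bias, keeping the standard error unchanged.

    A negative bias (confounding that pulls the estimate down) raises the
    adjusted estimate; a positive bias lowers it.
    """
    return Effect(effect.label, effect.point - bias,
                  effect.lower - bias, effect.upper - bias)


# Bias-adjusted estimates as reported in the sensitivity analysis.
reported = [
    Effect("Reading, Ability 2", 1.27, 0.19, 2.35),
    Effect("Reading, Ability 3", 1.51, 0.46, 2.58),
    Effect("Math, Ability 2", 2.01, 0.74, 3.28),
]

for e in reported:
    print(f"{e.label}: implied SE {e.implied_se():.3f}, excludes zero: {e.excludes_zero()}")
```

Under this simplification, removing a negative bias shifts the whole interval upward, which is why an estimate that was significant before the adjustment stays significant after it; the actual procedure may also account for uncertainty in the referent bias.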

Although this study employed a national data set, our analytic sample excluded schools that did not provide information on either retention or testing practices, as well as schools that had no counterparts under the alternative treatment condition. The analytic sample also excluded students who had no K–3 assessment data. As Table 2 shows, the analytic sample differed from the full ECLS-K K–3 sample in school size and student composition. Hence, readers should use caution when generalizing the findings to other populations.

Last, the current analysis did not examine whether the effects of test-based promotion differed across classrooms or teachers. Teachers may calibrate instruction according to their perception of students' learning potential (Cohen et al., 2003). Future research may examine whether the effects of test-based promotion depend on teachers' growth mindset. One may further investigate the mediation process by asking whether test-based promotion affects student learning through changes in instructional practices.

Supplemental Material

sj-docx-1-ero-10.1177_2332858420979167 – Supplemental material for Schools With Test-Based Promotion: Effects on Instructional Time Allocation and Student Learning in Grade 3

Supplemental material, sj-docx-1-ero-10.1177_2332858420979167 for Schools With Test-Based Promotion: Effects on Instructional Time Allocation and Student Learning in Grade 3 by Yihua Hong and Guanglei Hong in AERA Open

Acknowledgements

This research was supported by a dissertation grant from the American Educational Research Association (which receives funds for its “AERA Grants Program” from the National Science Foundation under Grant #DRL-0941014) and by a William T. Grant Foundation Supplementary Mentoring Award for Junior Scholars of Color. Additional support came from the Spencer Foundation and the Social Sciences and Humanities Research Council of Canada. Opinions reflect those of the authors and do not necessarily reflect those of the granting agencies.

Authors

YIHUA HONG is a researcher at the Center for Research, Evaluation and Equity in Education of RTI International. Her research interests include test-based accountability, new teacher support, and instructional effectiveness.

GUANGLEI HONG is a professor in the Department of Comparative Human Development and the College of the University of Chicago. She has focused her research on developing causal inference theories and methods for evaluating educational and social policies and programs in multilevel, longitudinal settings.

References
