Abstract

One of the major challenges in educational reform is supporting teachers and the profession in the continual improvement of instruction. Research-practice partnerships and particularly networked improvement communities are well-suited for such knowledge-building work. This article examines how a networked improvement community with eight school-based professional learning communities—comprised of secondary science teachers, science and emergent bilingual coaches, and researchers—launched into improvement work within schools and across the district. We used data from professional learning communities to analyze pathways into improvement work and reflective data to understand practitioners’ perspectives. We describe three improvement launch patterns: (1) Local Practice Development, (2) Spread and Local Adaptation, and (3) Integrating New Practices. We raise questions about what is lost and gained in the transfer of tools and practices across schools and theorize about how research-practice partnerships find footholds into joint improvement work.

Introduction

Moving away from teaching science as a set of decontextualized facts and toward deep engagement with real-world, puzzling phenomena in ways that are authentic and relevant to students remains a central challenge for science education reform (Banilower et al., 2018). In multilingual schools, there are additional opportunities and challenges to continually improve the development of reform-oriented teaching practices to deepen engagement and diversify participation, particularly for emergent bilingual (EB) students¹ (Hopkins, Lowenhaupt, & Sweet, 2015; National Academies of Sciences, Engineering, and Medicine, 2018).

Some models of science education and emergent bilingual reform lean on research-practice partnerships (RPPs; as defined by Coburn & Penuel, 2016) to improve classroom instruction; most often these are opt-in models in which researchers partner with district leaders and select teachers to reconstruct curriculum and classroom assessments (e.g., DeBarger et al., 2016; Moorthy, 2018). The RPP described in this study had two distinct features: (1) over time all secondary science teachers from eight schools in the same school district opted to participate and (2) the focus of the improvement work was directly on classroom teaching practices. The RPP began in two schools with a focus on science instruction in professional learning communities (PLCs). However, after the first year, district leaders pressed the partnership to invite all secondary science teachers and to focus on the improvement of both science and EB instruction as a way to attend to equity. This press meant that the partnership had to attend to scaling and merging two types of instructional expertise.

Similar to most RPPs, mechanisms were put in place to support a culture of collaborative inquiry and sharing across the partnership. Specifically, we borrowed principles and processes from the literature on networked improvement communities (NICs; Bryk, Gomez, & Grunow, 2011; Bryk, Gomez, Grunow, & LeMahieu, 2015; Engelbart, 1992, 2003) to develop district-based infrastructure that supported experimentation with classroom practices and school-to-school learning. For this article, we examine how an “all-comers” NIC in one school district launched into improvement work with science and EB teaching practices. We investigate the varied ways PLCs got started on improvement work and specifically introduce the idea of “footholds.” We examined how footholds (e.g., teaching practices, classroom and PLC tools, and specialized roles), akin to the starting places climbers use to anchor their ascent, oriented teams for collaborative work to advance science and EB teaching practices. We examine the internal workings of network initiation (Cannata, Redding, Brown, Joshi, & Rutledge, 2017; Redding, Cannata, & Miller, 2017; Russell, Bryk, Dolle, Gomez, LeMahieu, & Grunow, 2017) attending specifically to the various ways PLCs use practices and tools as footholds into improvement work. Moreover, rather than simply examining initial phases of the partnership work, we retrospectively analyzed footholds by studying PLCs’ engagement in long-term lines of inquiry, providing insights into footholds that proved to be generative and potential “look-fors” in the work of other intradistrict NICs. We also considered how the footholds supported school-to-school learning and the development of practices and tools across the network (Cannata et al., 2017; Stein & Coburn, 2008).

Literature Review

Launching Into Improvement Work With One Form of RPPs: Networked Improvement Communities

Important to the literature on RPPs and NICs is clarifying how research-practice partners collaboratively “launch into improvement work” by taking up and working for sustained periods of time on problems of practice that are meaningful to practitioners (Russell et al., 2017). Some RPP studies describe how partners first negotiate shared areas of interest (see Furtak, Henson, & Buell, 2016; Johnson, Severance, Penuel, & Leary, 2016) and others describe outcomes of systematic partnership work (DeBarger et al., 2016; Farrell et al., 2018). The work between these two ends represents the “messy middle” as partners continually negotiate what and how they learn together and build multileveled infrastructure to support joint work (Penuel, 2019). For this article, we documented a retrospective case of how PLCs in an RPP made sense of and launched into sustained collaborative improvement work; this case could be one of many that inform RPPs about early design decisions and potential trade-offs for these decisions (Coburn & Penuel, 2016).

As RPP NICs are initiated, a team of partners acts as the network hub that engages in making design decisions as they name a problem of practice, articulate a working theory of improvement, build measurement and analytic methods, and develop organizational structures (Bryk et al., 2015; Russell et al., 2017). Below, we describe some early design decisions/principles that helped launch the Ambitious Science Teaching (AST) NIC in one school district. Our principles, however, emphasized a decentralized approach to launching the network to foster PLC (and specifically teachers’) ownership of the improvement work (Cohen-Vogel, Cannata, Rutledge, & Rose Socol, 2016).

The first design principle we applied was mapping a complex problem from multiple perspectives and developing a common improvement aim. A general aim emerged from discussion with PLCs and then across role actors (the district leadership team, STEM [science, technology, engineering, mathematics] and EB directors, principals, and science education researchers) as the district wrestled with attending to the Next Generation Science Standards (NGSS; NGSS Lead States, 2013) adopted by the state in 2013; we agreed that the partnership would focus on the improvement of all students’ written and spoken scientific models, explanations and arguments, with a particular focus on EB students. To support the mapping of the problem, we used a driver diagram, a NIC tool used to articulate a working theory of improvement with causal language about how actions might support a particular end goal (Bryk et al., 2011). The diagram is often constructed by partners as they hypothesize links among an improvement aim, primary drivers (ideas that directly advance the improvement aim), and secondary drivers (small-scale, doable actions linked to primary drivers). In our case, school PLCs (composed of all secondary science teachers, science and EB coaches and researchers, and in some cases school administrators) developed related and more specific aims based on their interests and local contexts, constructed initial driver diagrams, and hypothesized and tested links between their aims and named drivers. This process meant there was variability in the improvement work across the AST NIC. In contrast, other NICs typically use a single network-wide driver diagram (Bryk et al., 2015).

The second design principle was using common practical measurements as a central activity in the NIC. Often NICs invest in the upfront development of a network-level practical measure that provides an easy-to-use gauge for system improvements (Yeager, Bryk, Muhich, Hausman, & Morales, 2013). However, in this study, PLCs developed and iterated on their own practical measures so that they could take ownership of the inquiry process.

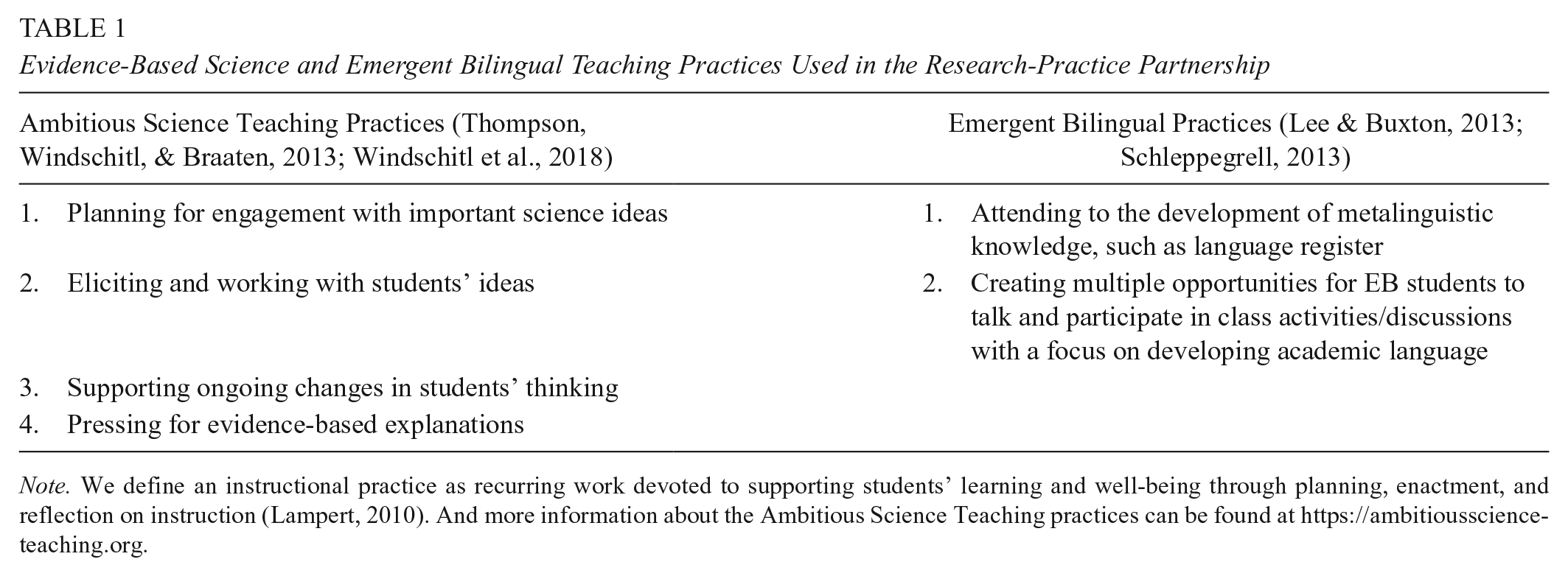

Third, we applied the principle of using common protocols for inquiry to support a continuous improvement ethic across the network. Each school launched into improvement work with a PLC comprised of similar role actors and similar professional learning structures for bringing PLCs together to investigate teaching and learning, called Studios. The science Studio model engaged PLCs in repeated improvement cycles, whereby teams iterated with specific teaching practices, tools, and practical measures during Studios over time (also see Thompson, Hagenah, McDonald, & Barchenger, 2019). Studios are similar to Lesson Study (Lewis, Perry, & Murata, 2006) in that there is a focus on high-quality teaching in the context of a design experiment in a focal classroom. Yet important to our Studio model was inquiry into a starter set of science and EB teaching practices (Table 1) and how they supported student learning.

Table 1. Evidence-Based Science and Emergent Bilingual Teaching Practices Used in the Research-Practice Partnership

Note. We define an instructional practice as recurring work devoted to supporting students’ learning and well-being through planning, enactment, and reflection on instruction (Lampert, 2010). More information about the Ambitious Science Teaching practices can be found at https://ambitiousscienceteaching.org.

Last, we applied the design principle of focusing improvement work on the generation of practice-based knowledge. Improvement cycles within Studios were designed from the outset to support PLCs in inquiring into questions about “Which practices work?” “Under which conditions?” and “For whom?” This is a core component of improvement science research. Rather than asking questions of general efficacy, PLCs inquired into the conditions that supported learning with a focus on understanding variation among classrooms. However, the network did not start from scratch; many partners began with knowledge about sets of evidence-based science and EB teaching practices. The coaches, researchers, and about 19% of the teachers initially brought to bear an understanding of the science teaching or EB practices named in Table 1 (percentage described in Table 2). The science teaching practices—also known as ambitious teaching practices since they are designed to engage students in meaningful and authentic disciplinary work—can, but do not always, support rigorous and equitable discourse in classrooms (Thompson et al., 2016; Thompson et al., 2013). The EB practices—which were selected by EB coaches and researchers—are known to support the development of academic discourse and language, and metalinguistic knowledge (Lee & Buxton, 2013; Schleppegrell, 2013).

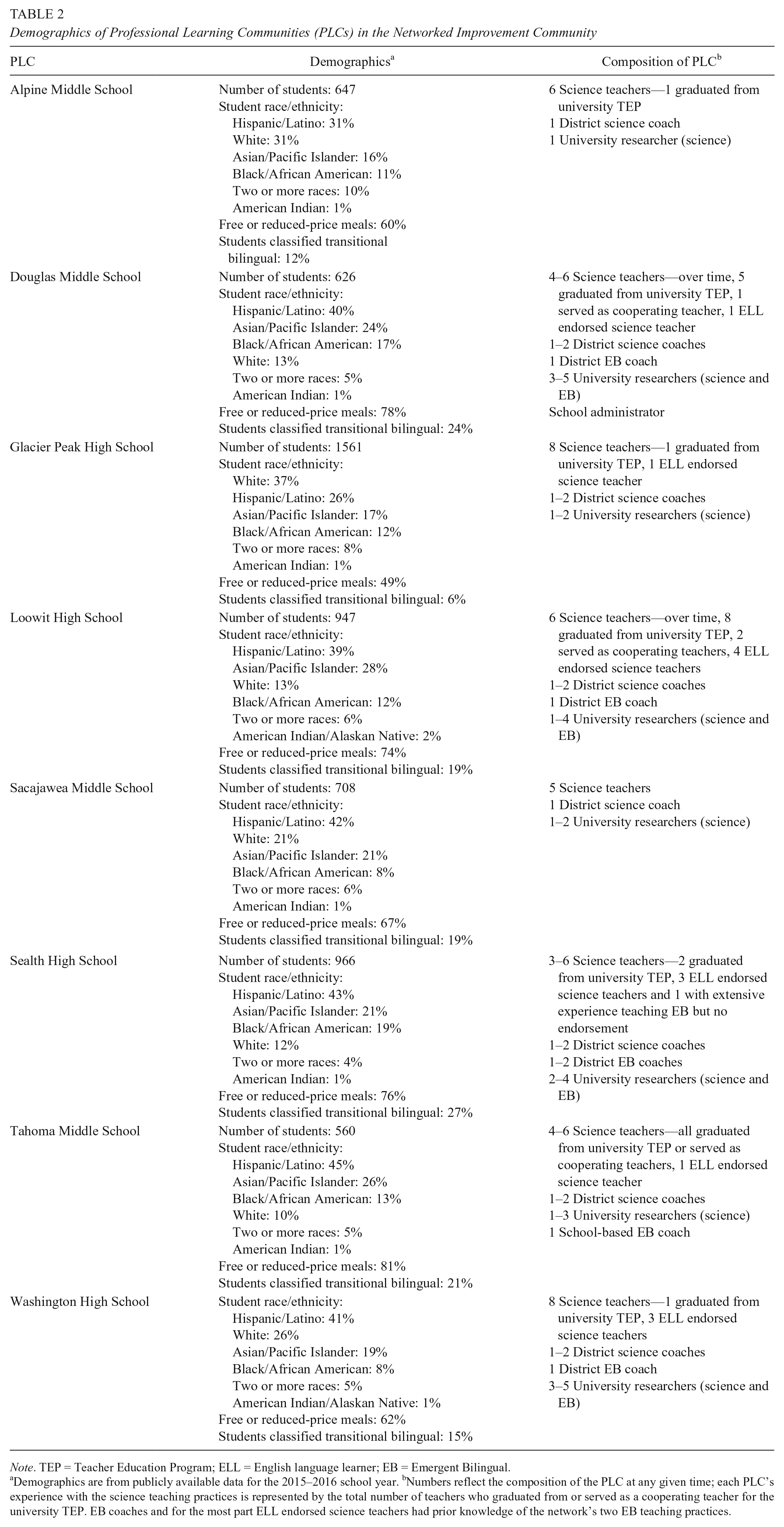

Table 2. Demographics of Professional Learning Communities (PLCs) in the Networked Improvement Community

Note. TEP = Teacher Education Program; ELL = English language learner; EB = Emergent Bilingual.

ᵃDemographics are from publicly available data for the 2015–2016 school year. ᵇNumbers reflect the composition of the PLC at any given time; each PLC’s experience with the science teaching practices is represented by the total number of teachers who graduated from or served as a cooperating teacher for the university TEP. EB coaches and, for the most part, ELL-endorsed science teachers had prior knowledge of the network’s two EB teaching practices.

Each of these practices had existing tools that supported classroom learning, such as talk protocols or sentence starter scaffolds for students to articulate explanations. These “starter” tools and practices were objects of negotiation and knowledge-building for PLCs during Studios. Of interest for this article were the ways that PLCs used these as footholds into improvement work.

School-to-School Learning in NICs

The literature on NICs emphasizes the importance of launching improvement work concurrently at multiple levels in systems. This was particularly important for the AST NIC as we engaged in improvement work linking multiple schools within a single district. Much of the literature on educational reform (NICs and otherwise) tends to describe improvement work in classrooms and in PLCs, but there are fewer descriptions of learning across schools in networks (Bryk et al., 2011; Cannata et al., 2017; Redding et al., 2017). For this article, we focused on school and intradistrict learning; as such we review what is known about how PLCs generate knowledge to be shared in networks.

Talk, Tools, and Practices in PLCs

In the AST NIC, PLCs and classrooms were the nodes of the improvement network, tasked not only with testing practices and tools they created but also with evaluating ideas from other members of the NIC. We drew on the literature about high-functioning PLCs with strong learning cultures engaging in continual improvement to support our design and investigation of this work (Horn, Garner, Kane, & Brasel, 2017; Stoll, Bolam, McMahon, Wallace, & Thomas, 2006; Vescio, Ross, & Adams, 2008; Wei, Darling-Hammond, Andree, Richardson, & Orphanos, 2009; Woodland, 2016). High-functioning PLCs systematically pursue questions of learning goals and instructional practices, reason with student data, and connect general teaching principles and theoretical understandings to specific classroom instances (Feldman, 1996; Slavit & Nelson, 2010). As they do so, PLCs move beyond conversations about “sharing activities, information, and student anecdotes” (Nelson, Deuel, Slavit, & Kennedy, 2010, p. 176) to engaged dialogue about (1) working theories of teaching and student learning, (2) teaching practices and classroom tools, and (3) practical measurements using students’ experiences as data. Bryk and colleagues (2015) describe the interrelationships among these critical elements as a “learning loop for quality improvement” (p. 90). The literature on PLCs suggests that engaging in professional dialogue about the relationships among these elements is important for both launching and sustaining improvement work within and across PLCs (Horn & Little, 2010).

Networked PLCs

While PLCs are important structures for knowledge-building, if they stay isolated, their improvement will stagnate and the influence of their expertise stays limited. Jackson and Temperley (2006) claim that a network of PLCs can strengthen the internal learning culture of these PLCs. Studies have shown that networking educators and schools across jurisdictions/districts generally results in growth in both teacher and student learning (e.g., Chapman & Muijs, 2014; Jackson & Temperley, 2006) and a strong shift in culture toward inquiry and improvement work (Glaze, 2013). Yet going across jurisdictions can be challenging. Prenger, Poortman, and Handelzalts (2018), for example, studied a network of 23 PLCs in 11 different regions in the Netherlands and identified two challenge areas: on the organizational side, a lack of time and high workload for members, varying levels of active participation, and low satisfaction with individual PLC leaders; on the knowledge creation side, variable implementation of developed materials in practice. Teachers found it difficult to apply materials in their own teaching and to broker improvement in their schools. Aware of these challenges, and the opportunity to design for an all-comers model within the boundaries of a single school district, we focused our design and research efforts on understanding school and intradistrict learning.

Our overarching questions were “How did PLCs launch into lines of joint, ongoing instructional improvement work?” and “How did launches across the network vary?” Specifically, we asked, (1) “What footholds (tools, practices, processes, and partnership roles) supported teams in launching into improvement work within and across schools?” and (2) “How were elements of the learning loop (practice, data, and theory) used in PLCs’ discourse as they launched into improvement work?”

RPP Context and Development

Our study draws on data from the professional development activities of a developing intradistrict RPP. The school district is midsized with 18,000 students and 101 languages spoken. Relative to the state, students underperform on K–12 science, math, and literacy assessments, and there is a relatively high rate of teacher and administrator turnover. In 2012, two of the eight schools were labeled as low-performing and received School Improvement Grants from the U.S. Department of Education. Table 2 describes each school-based PLC’s context and composition.

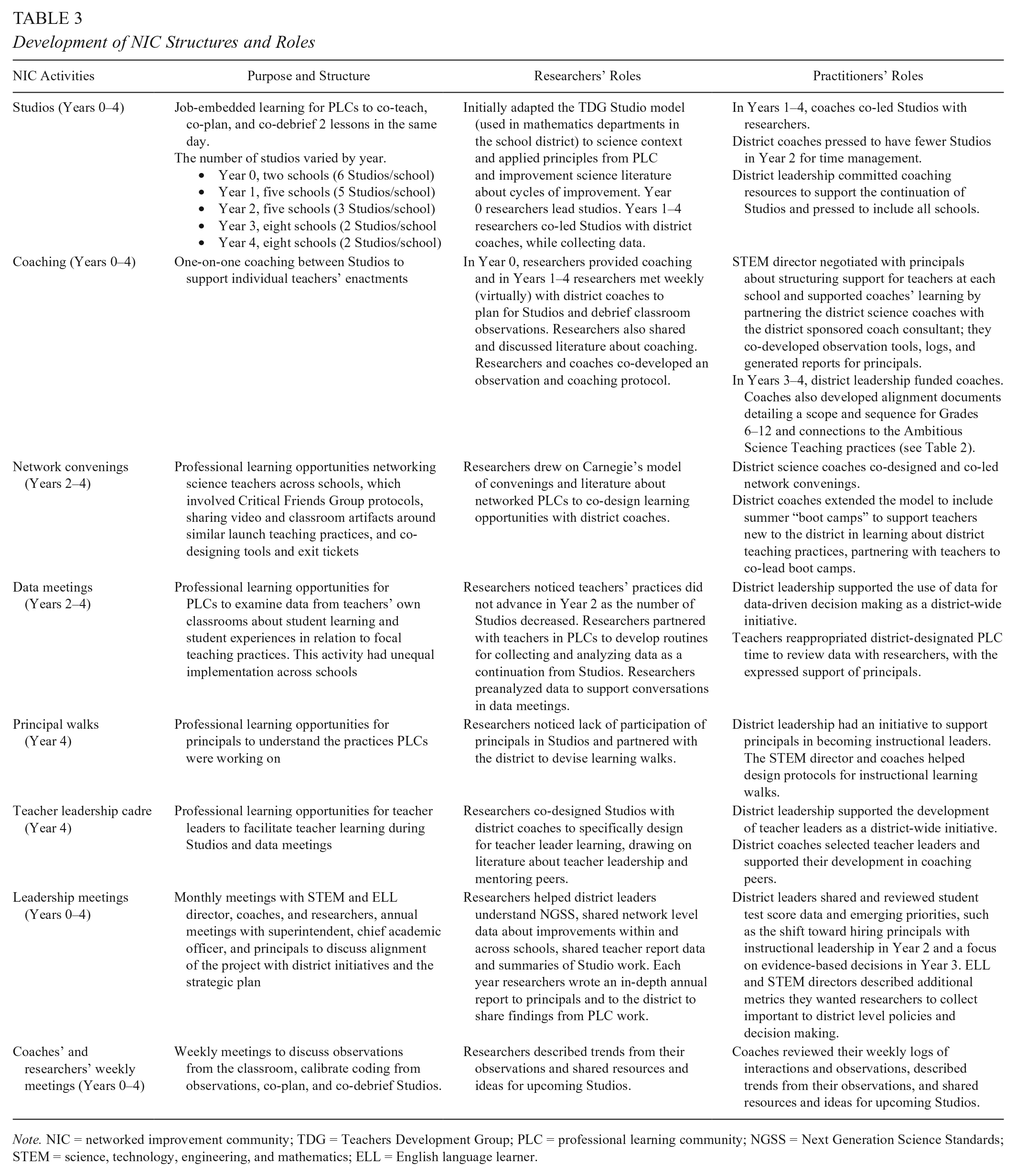

For the past decade, the school district and university partnered on various projects to improve science instruction. The AST NIC evolved to include (1) Studios, full-day, job-embedded professional development opportunities for PLCs, (2) district science coaches who provided one-on-one coaching and facilitated Studios, (3) network-wide convenings where teams examined common problems of practice and shared learnings and artifacts, (4) data meetings (in between Studios) where teams iterated on practices in relation to classroom data, and (5) instructional walks with principals (see Table 3 for a description of how these structures evolved in the RPP). In Year 4, a teacher leader cadre was developed to drive school-based improvement work and support sustainability. By this time, grant funding had ended and the school district assumed responsibility for funding Studios and the science coaching positions.

Table 3. Development of NIC Structures and Roles

Note. NIC = networked improvement community; TDG = Teachers Development Group; PLC = professional learning community; NGSS = Next Generation Science Standards; STEM = science, technology, engineering, and mathematics; ELL = English language learner.

There were several district conditions that supported the work. For instance, the district allotted 90 minutes monthly for teacher-directed collaborative work focused on building or district priorities. Departments were held accountable for meeting, but how and what they worked on was largely unregulated, and PLCs could marshal this time for data meetings. Furthermore, Studios were a new structure to science departments, but not to the district. We adapted a Studio model used in math, from the Teachers Development Group (2010), in which teams co-plan, co-teach, and co-debrief lessons twice during the day.

Data and Method

Data sources spanned levels of the RPP and the eight schools in the network.² From 2012 to 2017, we conducted 62 PLC-based Studios; from each, we collected video records, artifacts (e.g., lesson plans, student work), field notes, and short exit surveys from individual teachers reflecting on the Studio and their next steps. We also conducted annual network surveys across this period, which we used to inform our understanding of teachers’ experience with the practices in Table 2 and their perceptions of the teaching practice(s) they were working to improve. At the classroom level, we conducted 145 classroom observations from 2013 to 2016 with 96 teachers and recorded whether teachers implemented teaching practices being worked on in the network. Additionally, at the network level, we collected artifacts and field notes from other AST NIC activities (e.g., convenings). District, state, and national policy contexts were not part of the analysis.

We focused our study on improvement launches, which we operationalized as a series of conversations and actions in which a PLC specified and started testing a focal teaching practice that was then iterated on over time (at minimum half of a school year). Often teams would try a practice or tool once during a Studio but not continue with it; for this study, we only examined practices that were iterated on over time, with evidence that multiple team members continued to shape the practice on subsequent Studios or lessons in their own classrooms. All improvement launches discussed in this article demonstrate such evidence triangulated across data sources (e.g., Studios, notes from classroom observations, teachers’ annual surveys).

To address our research questions, we qualitatively analyzed teams’ activities and discourse just prior to and during identified improvement launches to look for patterns in how launches unfolded across the network. Specifically, we identified resources (e.g., tools, processes) teams drew on just prior to and as they began to specify a teaching practice, considering these resources as candidates for “footholds.” We also focused on where these resources came from (at times tracing to other NIC activities, like convenings). Discursively, we used Studiocode to code video data from Studios for elements of the “learning loop” (Bryk et al., 2015)—practice, theory, and data as described in Table 4. Importantly, we did not just code when teams were talking about these foci, but we also briefly recorded what teams were discussing. This allowed us to further specify the different lines of improvement work. Inductively, we identified two other discursive emphases that were common in teams’ discourse and connected to learning loop conversations (see Table 4) and coded for tools the team used in their work. Once we identified inductive components, we recoded earlier videos for these components (Corbin & Strauss, 2008). (See online Supplemental Material A for an example of our coding.)

Table 4. Codes for PLCs’ Talk During Planning and Debriefing on Studios

Note. PLC = professional learning community.

Examining similarities and differences in potential footholds and discourse across all 17 improvement launches across the eight schools in the AST NIC led to our characterization of three launch patterns with varied footholds and entries into learning loop discourse.

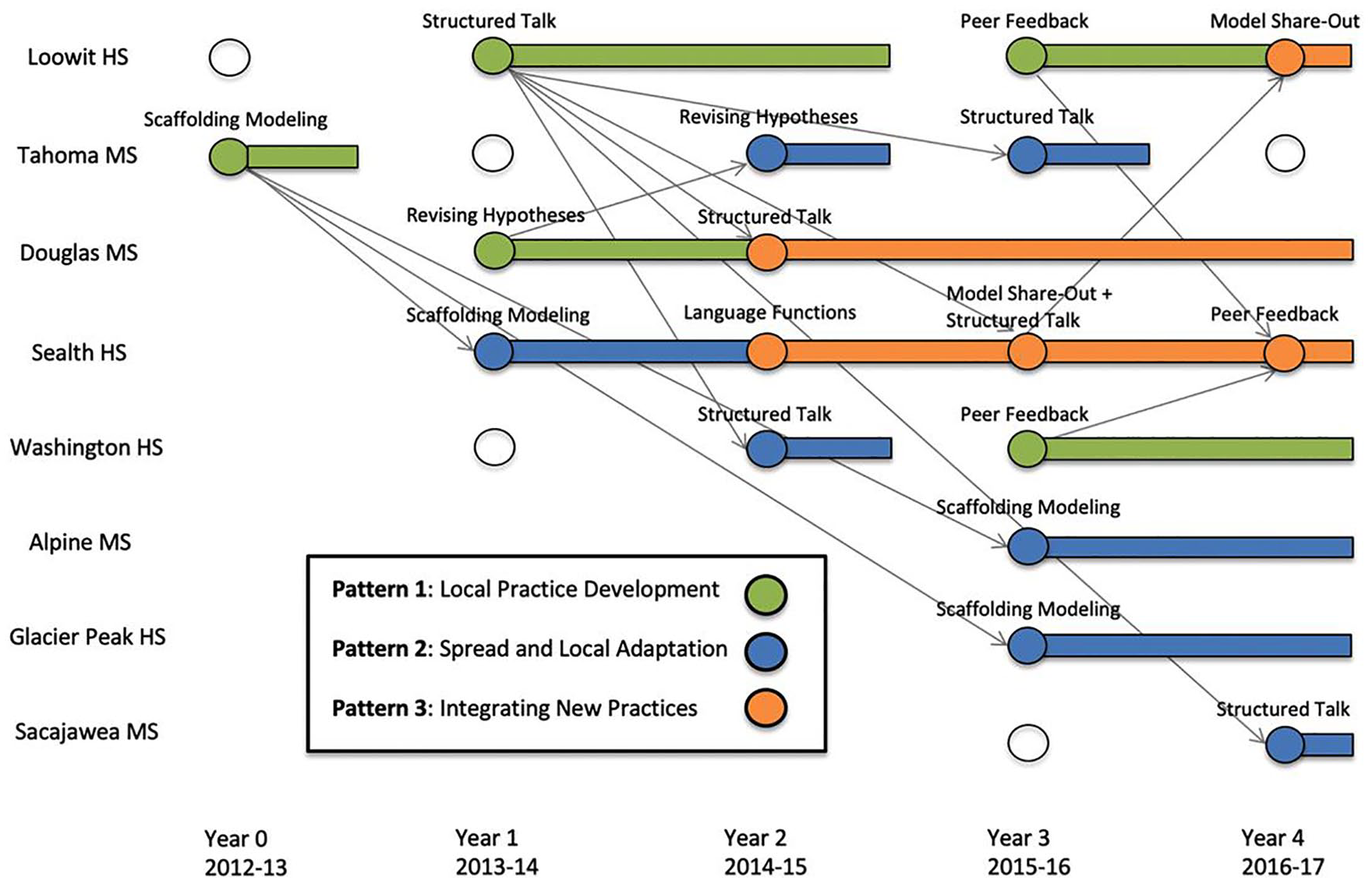

Findings: Improvement Launches

In analyzing improvement launches across the eight schools in the AST NIC, we identified three distinct patterns that recurred and accounted for all examples: Local Practice Development, Spread and Local Adaptation, and Integrating New Practices. These are color-coded in Figure 1, with time on the X-axis and PLCs on the Y-axis.

Figure 1. Improvement launches across the networked improvement community (NIC), showing how work on practices launched, persisted, and spread. Circles represent a launch that occurred in a given year, with the focal practice named above; empty circles mean that professional learning communities (PLCs) were part of the NIC but not collectively working on a focal practice. Rectangles show the persistence of lines of improvement for teams; practices often continued to be implemented in classrooms beyond the end of rectangles but were no longer focal objects of inquiry for the team. Arrows indicate spread as PLCs picked up and worked on practices from other schools.

As evident in Figure 1, teams had different rates and approaches to launching into improvement work, sometimes developing practices and tools in house and sometimes adopting and iterating on practices and tools from other schools. Figure 1 also highlights that the network as a whole developed seven distinct instructional practices between 2012 and 2017. In line with the network’s overall focus on science and EB learning, many practices attended to both; for instance, “Scaffolding Modeling” integrated the science practice of modeling with an EB practice of supporting comprehensible input with visuals (Echevarria, Vogt, & Short, 2013), and the “Science Explanations With Language Functions” practice focused on composing complex sentences in the context of scientific modeling and explanation (Lee, Quinn, & Valdés, 2013).

Below, we organize the findings by the three improvement launch patterns. For each pattern, we first provide a high-level description of the pattern then illustrate each pattern with an in-depth example from a PLC, highlighting footholds and distinct ways PLCs entered into practice-theory-data learning loop conversations associated with each launch pattern. We also provide additional but abbreviated examples for each pattern; additional discourse excerpts can be found in online Supplemental Material B. (Note that by foregrounding the launch patterns across the networked PLCs, our findings do not deeply explore or preserve the chronology of improvement for particular schools.)

Improvement Launch Pattern 1: Local Practice Development

Of 17 improvement launches from 2012 to 2017 across the AST NIC, five fit the pattern of Local Practice Development. For this pattern, PLCs identified a common improvement aim for student learning, often using a driver diagram as a foothold; co-developed a teaching practice and classroom tools in support of the identified aim, drawing on the expertise of diverse role actors (including support from coaches and researchers as a foothold); and tested and improved the practice and tools in interaction with data and theory (Figure 2).

Figure 2. Pattern 1, Local Practice Development (five improvement launches).

We use Loowit High School’s (HS) launch of “Structured Talk” during Year 1 of the AST NIC as our in-depth example for Pattern 1.

Identifying a Common Improvement Aim

Developing a common aim for joint work in the PLC was an important first step in launches where teams developed a local practice related to the AST NIC aims. One improvement science tool described in the literature review, the driver diagram, functioned as a foothold into conversations about common aims. During Studios at the beginning of the partnership, coaches and researchers introduced the driver diagram as a tool that could help specify and drive learning across the network by connecting PLCs’ work to the common network improvement aim. PLCs were invited to articulate specific team aims in support of the network aim.

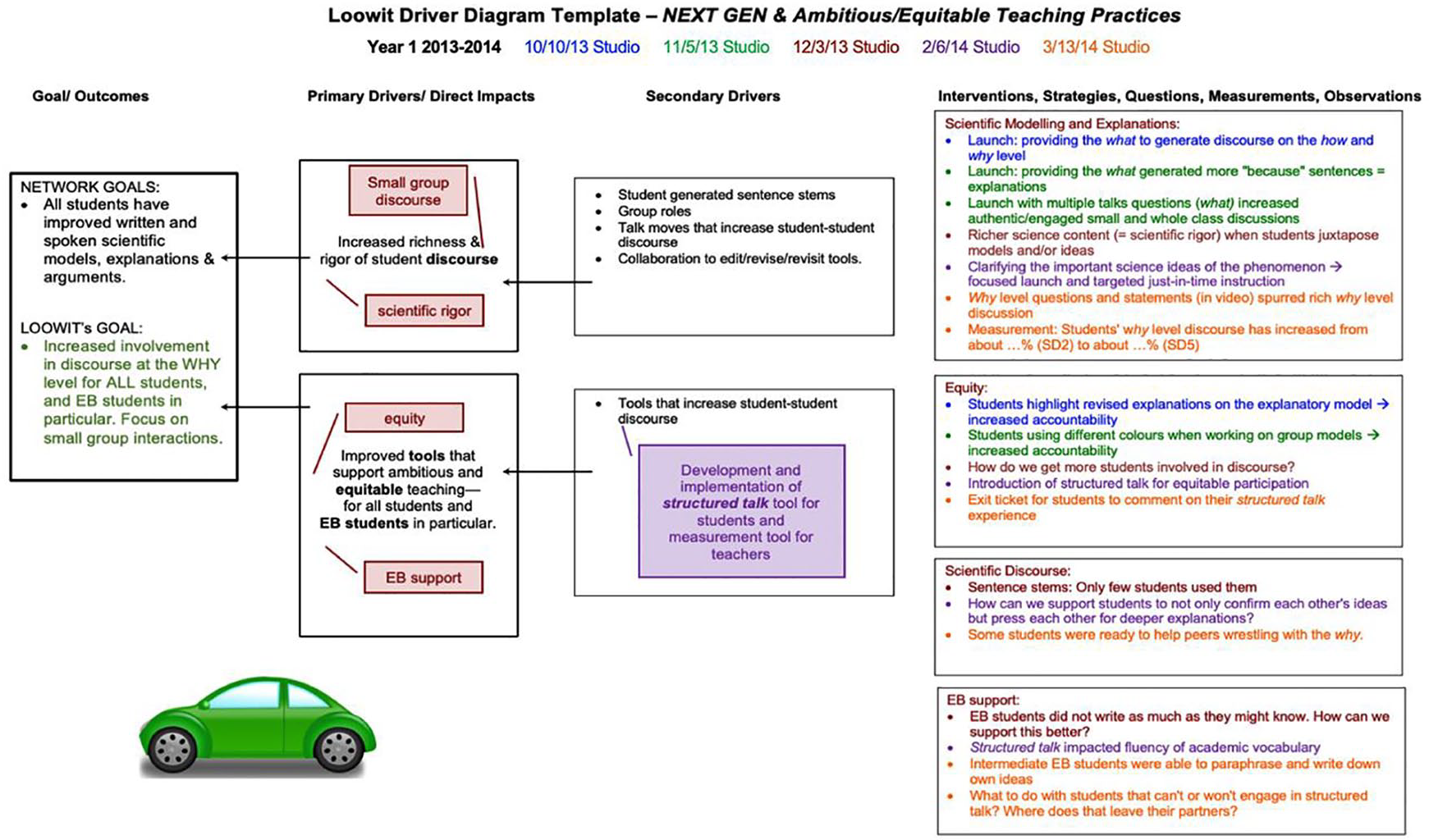

During an initial driver diagram conversation at Loowit at a Studio on 11/5/13, teachers shared ideas about what they would like to work on as a team. The team ultimately articulated an aim of increasing opportunities for sustained and evidence-based student talk about how and why scientific phenomena occur. They constructed an initial driver diagram that operated as a place to record hunches, data, questions, and successful practice ideas for subsequent Studios. Driver diagrams for each school had four general network drivers named as important for supporting the overall goal: scientific modeling/explanation, scientific discourse, equity, and EB supports (see Figure 3).

Figure 3. Loowit professional learning community’s (PLC’s) working driver diagram at end of Year 1. The diagram is read left to right with network and PLC goals on the left. The team identified a goal by their 11/5/13 Studio. General primary drivers were identified by the 12/3/13 Studio (red boxes), actionable secondary drivers were identified by the 2/6/14 Studio, and a list of questions and tested strategies from each Studio appeared on the right. PLC learnings are color-coded by Studio.

When the Loowit PLC examined their driver diagram at their next Studio (12/3/13), the team realized that they had generated questions, but not created or tested any ideas related to EB supports. This noticing prompted them to clarify what they meant by “EB supports,” which they framed as “empowering EBs to share their prior knowledge and experiences.” The discussion pushed the PLC to be intentional about gathering observational data on EBs’ participation during the Studio and seeking ways of supporting EBs’ equitable participation in discourse in subsequent Studios. This expansion of their collective aim helped set the stage for the team’s development of the Structured Talk practice.

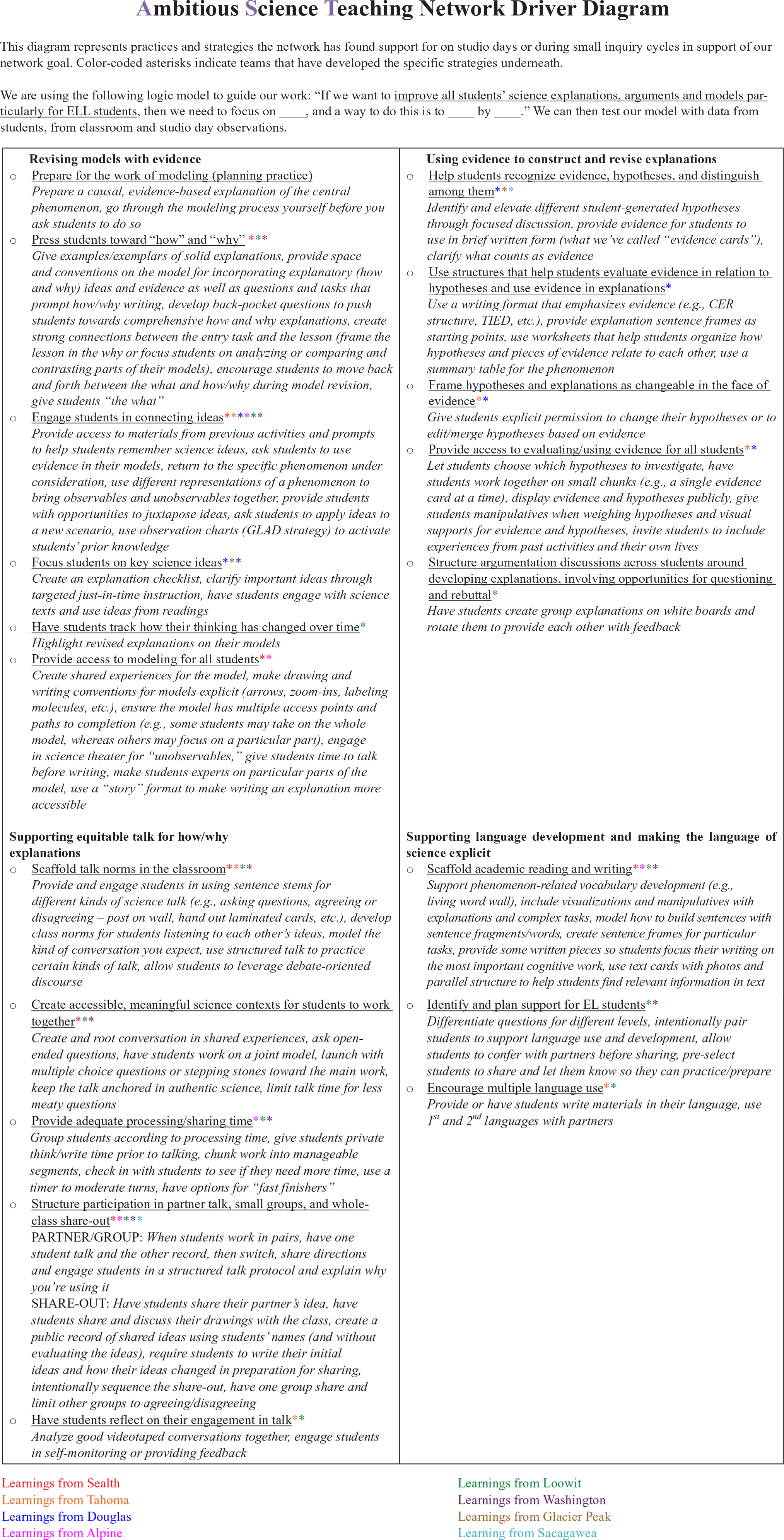

Co-Developing an Initial Practice and Tools

Figure 4 shows the Structured Talk protocol tool developed and piloted at Loowit’s Studio on 2/6/14 with the aid of EB and science coaches and researchers. We conceptualize the support of coaches and researchers in this process as a foothold since they actively contributed ideas and starter resources on which the team collectively built. Structured Talk was originally an EB practice (suggested by an EB researcher and coach) that provided protected opportunities for EB students to engage in academic discourse and practice fluency (Gibbons, 2007), which the team then shaped to a science-specific version focusing on engagement in deeper levels of scientific explanation. As seen in Figure 4, the adapted protocol supported pairs of students in taking turns to share their reasoning and evidence in relation to a focal question and to listen and clarify their understanding of their partner’s response.

Figure 4. Structured Talk protocol developed at Loowit High School.

Learning Loop Discourse: Testing and Improving Practice and Tools With Data and Theory

For this improvement launch pattern, testing initial practices and tools provided a foothold for PLCs to engage discursively in all three components of the learning loop—connecting teaching practices/tools, classroom data, and theories of how students learn. During the 2/6/14 Studio, EB coaches and researchers supported the PLC in conducting a small test to compare unstructured “turn-and-talk” and structured talk opportunities in the lesson, with a goal of inquiring into EB student learning in these different talk opportunities. Teachers noted differences in student participation during this test of the Structured Talk protocol and started to reason theoretically about their observations:

In the unstructured entry task [practice], Jeff was talking a bunch and I had to push Peter to talk [data]. But then in the structured talk [practice], Peter was the one that was asked to share first and he ended up taking on his role of teacher and Jeff was just clarifying what he was saying [data]. It was interesting to compare . . .

Something that I was thinking as I was doing it was there is a cognitive demand of learning a new procedure . . . and that is an important thing to consider with, how much space is left when you are thinking about, “What is this thing that I am doing right now?” [theory]. . . .

Having a “what-level” question might make sense when kids are learning the process [practice]. . . .

I am thinking about breaking the questions and do each one with structured turn and talk [practice].

Here, teachers discussed the results of their test, elevating both data and theoretical considerations and considering implications for practice. Later, teachers also considered how EB students participated in writing but not speaking during Structured Talk, and in the second lesson on the 2/6/14 Studio, the PLC experimented with breaking down the questions and helping students distinguish among “gathering, processing, and applying” questions to support EB students in particular. At the end of the day, the EB coach reflected to the PLC that she believed the team had evidence that their questioning scaffolds supported EBs in “increased fluency throughout the course of the conversation.” Again, with the testing of the practice and physical components on classroom protocols (tools), the PLC discursively made connections among practice, theory, and data.

The Loowit PLC continued to examine Structured Talk as a part of Studios and in their own classrooms for the remainder of the academic year. In the following years, the PLC launched a new, more pedagogically complex focal practice to better address their aim, but we have evidence from classroom observations that most Loowit teachers continued to iterate on Structured Talk in their own classrooms.

Additional Examples of Pattern 1

We provide two additional (but abbreviated) cases of Pattern 1 launches from Douglas Middle School (MS) in Year 1 and Washington HS in Year 3. As at Loowit, driver diagrams, support from a coach, and testing classroom tools and practices provided footholds into improvement. However, in contrast to Loowit, these examples did not focus on EB learning as explicitly, which is a point we return to in the discussion.

Douglas MS’ Year 1 launch

A driver diagram conversation at Douglas MS quickly elucidated a common improvement aim of supporting students in “developing and revising evidence-based and cognitively-demanding explanations throughout instructional units” (11/13/13 Studio). All teachers’ individual reflections at the end of this Studio highlighted this common aim. In preparation for the next Studio, a science coach and several Douglas teachers began to specify a teaching practice and classroom tools that helped students consider whether and why evidence from class activities supported, refuted, or did not apply to hypotheses students had generated. The PLC tested their initial version of the classroom tool during their 1/16/14 Studio (see Figure 5) and began improving aspects of the developing practice (e.g., how many pieces of evidence students work with at a time, changing the task at the start of class to let students practice using evidence) in relation to data (e.g., student reasoning with evidence) and theoretical considerations (e.g., ideas about information processing capacities or students taking “ownership” of particular hypotheses). Focused work on the locally developed practice of “Revising Hypotheses” continued for the remainder of the school year.

Classroom tools and protocols (being) developed at Douglas Middle School (left) and Washington High School (right).

Washington HS’ Year 3 launch

In Year 3, researchers compiled learnings from across schools into a network driver diagram to support schools in learning from one another (Figure 6). This driver diagram provided a foothold for Washington HS’s identification of a common improvement aim. During the team’s 9/29/15 Studio, the PLC drew on the network driver diagram, their perceptions of students’ strengths and needs, and a school-wide goal of “writing to explain” to identify a common aim of improving students’ construction and revision of written scientific explanations. The team designed an initial practice and accompanying classroom tools during the Studio to support students in providing feedback on each other’s developing written explanations, building on a resource shared by a science coach. They then tested the practice and tools in the Studio classroom and improved their feedback protocol (see Figure 5 for the PLC’s work-in-progress protocol) based on insights from data (e.g., changes students made in their use of scientific language, students’ confusion about the process) and working theory of what “counts” as an explanation in science. Numerous teachers worked with the revised protocol in their individual classes, and the team continued to refine the “Peer Feedback” practice during their other Year 3 Studio and into the following school year.

Network driver diagram shared toward the beginning of Year 3, representing learnings to date from each school-based professional learning community (PLC).

However, the Washington HS PLC struggled to launch a line of improvement work in earlier years. Their first launch in Year 2 is our in-depth example for the next launch pattern.

Improvement Launch Pattern 2: Spread and Local Adaptation

As the NIC developed, practices such as Structured Talk began to spread to and function as footholds in schools struggling to anchor their vision for improving science instruction. In this launch pattern, coaches and researchers intentionally shared practices and tools from other PLCs in the network (sharing tools both for use in the classroom and for data collection). As teams tested practices and tools borrowed from other schools, they identified localized problems of practice to work on. Specifically, through analysis of student data collected via practical measures like exit tickets, teams began to devise lines of sustained improvement work involving iteration on practice and consideration of theory (see Figure 7). Seven of the 17 improvement launches across the NIC fit the pattern of Spread and Local Adaptation.

Pattern 2, Spread and Local Adaptation (seven improvement launches).

Yet in this launch pattern, as teams identified localized problems of practice, explicit discussion and support of EBs were often backgrounded—despite teams working with borrowed data tools that centered on EBs’ learning and participation.

We use Washington HS’s adoption of Structured Talk during Year 2 of the NIC as our in-depth example for Pattern 2. The Washington PLC spent their first year unpacking and developing their understanding of the network’s improvement aim, as teachers were largely unfamiliar with the NGSS and starter teaching practices (Table 1). Teachers also had a strong press to align their PLC goal with their school goal of teaching reading comprehension skills. Teachers tried to layer this emphasis during Studios and often ended up discussing multiple disconnected instructional strategies.

Strategic Sharing of Existing Practices and Tools

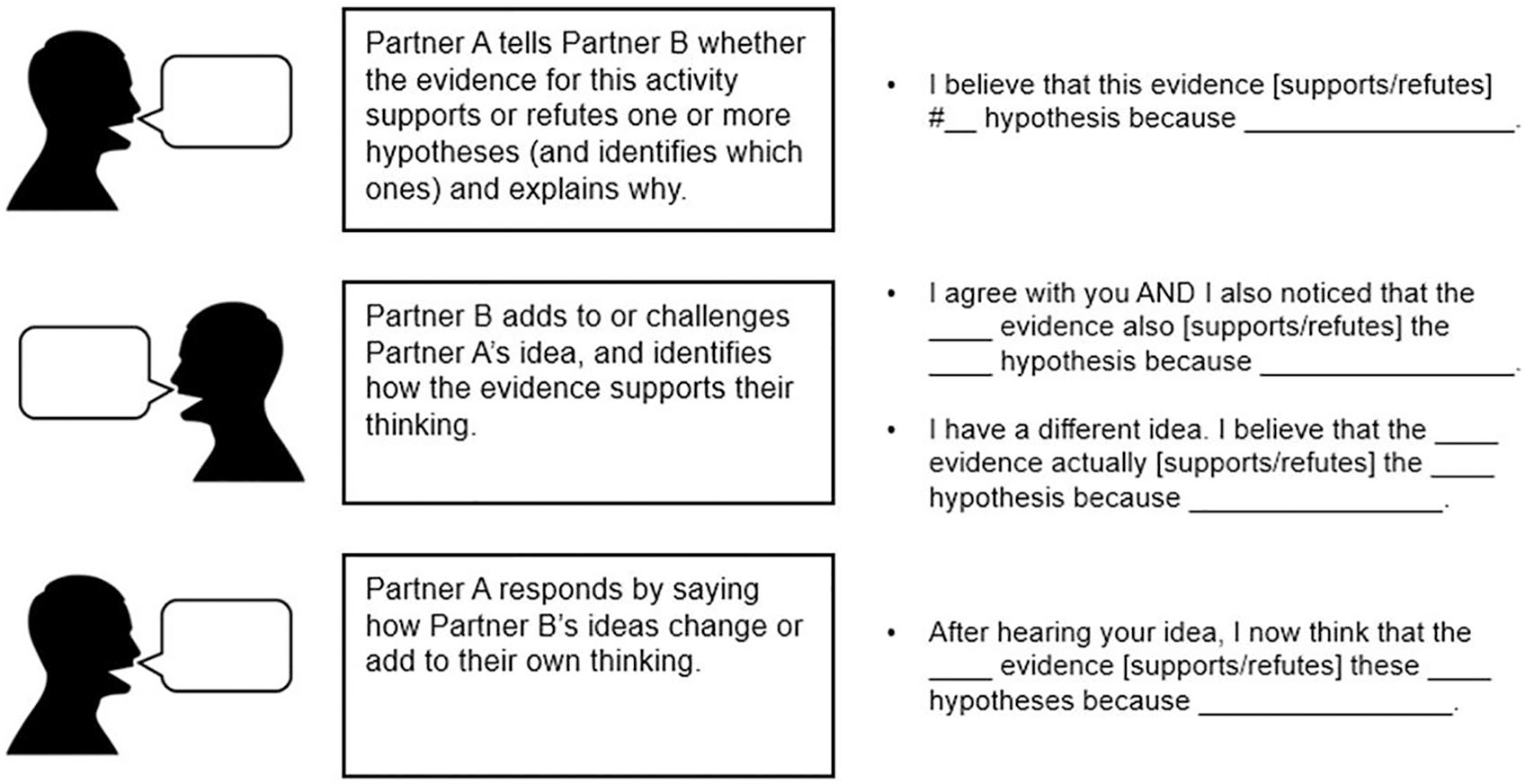

On the recommendation of their science coach, Washington tried Structured Talk at the beginning of Year 2 on their 10/16/14 Studio. The team made small revisions to the existing protocol, adding sentence stems (see Figure 8) and role-playing examples for students.

Structured Talk classroom tool revised by teachers at Washington High School.

Collecting Data on Initial Tests With Practical Measures

Central to the spread of practices and tools was the development and sharing of exit tickets as practical measures, which allowed teachers to learn from students’ experiences with specific practices. These were used widely across the network in part because teachers helped design them and in part because using exit tickets was a familiar classroom routine. However, teachers had previously used exit tickets to assess content understanding, not as an indicator of students’ experiences. The exit tickets gave students opportunities to weigh in on how practices were working from their perspectives and gave PLCs opportunities to carefully consider data from students (vs. data about students; see Figure 9).

Teacher-developed Structured Talk exit ticket for students from Loowit High School.

After their 10/16/14 Studio on which they piloted Structured Talk, Washington teachers opted to pilot Structured Talk widely across their classrooms and survey their students (1,200 in total) about their participation.

Learning Loop Discourse: Specifying Local Problems of Practice With Data

Examining student data from exit tickets seemed to function as a foothold for specifying local challenges and engaging in learning loop conversations. After surveying their students, Washington teachers elevated a common noticing from the exit ticket data on their 2/10/15 Studio—that students reported low engagement in disagreeing with one another and changing their ideas (Nov-14 in Figure 10).

Pre and post student exit ticket data representing percentage of students who reported engaging in each action during Structured Talk at Washington High School. Red circles indicate targeted areas for improvement.

We share one comment from a larger conversation in which teachers started to theorize about their observations:

[pointing out two low bars in the graph] “I disagreed with my partner’s idea” and “I changed my ideas from the beginning of class” [data]. . . . I thought those were related in that . . . in order for those things to happen, you might need a richer prompt [practice]. . . . Maybe it is a little bit taboo to say “I disagree with” [theory]. . . . That is what I think, those are relatively low bars [data], and those can be reasons that we can work on for increasing those.

Teachers also reasoned that disagreeing is more challenging than agreeing and pitched several possible ideas for practice. During the 2/10/15 Studio, they revised the talk protocol to explicitly encourage disagreeing with others based on evidence and noted increases in the targeted areas (Feb-15 in Figure 10). Again, examining student data from exit tickets seemed to support learning loop connections.

Washington teachers continued the exploration of this practice for the remainder of the year. Then, similar to Loowit, the Washington PLC launched a more complex practice (“Peer Feedback”) the following year as an object of PLC investigation. Observational data showed that individual teachers continued to use Structured Talk in their own classrooms.

Additional Examples of Pattern 2

We briefly share an additional example of how Structured Talk spread to another school.

Tahoma MS’ Year 3 launch of Structured Talk

At Tahoma MS, the team followed researchers’ recommendation to start Year 3 working on Structured Talk. By the first Studio of the year on 10/2/15, teachers had practiced an initial version of Structured Talk with their classes (see Figure 11, similar to Figure 4 from Loowit and Figure 8 from Washington), but had not yet collected systematic data. During the Studio, they engaged in a concerted test of the practice and used exit tickets as a practical measure. Similar to Washington, Tahoma teachers examined data from the exit tickets and identified a local problem of practice: Students reported low engagement in repeating their partners’ ideas (Figure 11). This identification sparked changes in the practice (e.g., beginning the practice by showing students a PowerPoint slide highlighting each step of the protocol, partnering students who reported “repeating ideas” with students who did not) and consideration of theoretical purposes and implications (e.g., repeating as connected to “processing” others’ ideas, and as helpful for picking up new ideas for use in writing). Teachers’ use of and work on Structured Talk continued after the Studio for the majority of the school year.

Tahoma Middle School’s Structured Talk protocol (left) and student exit ticket data (right). The red circle on the right indicates the targeted area for improvement.

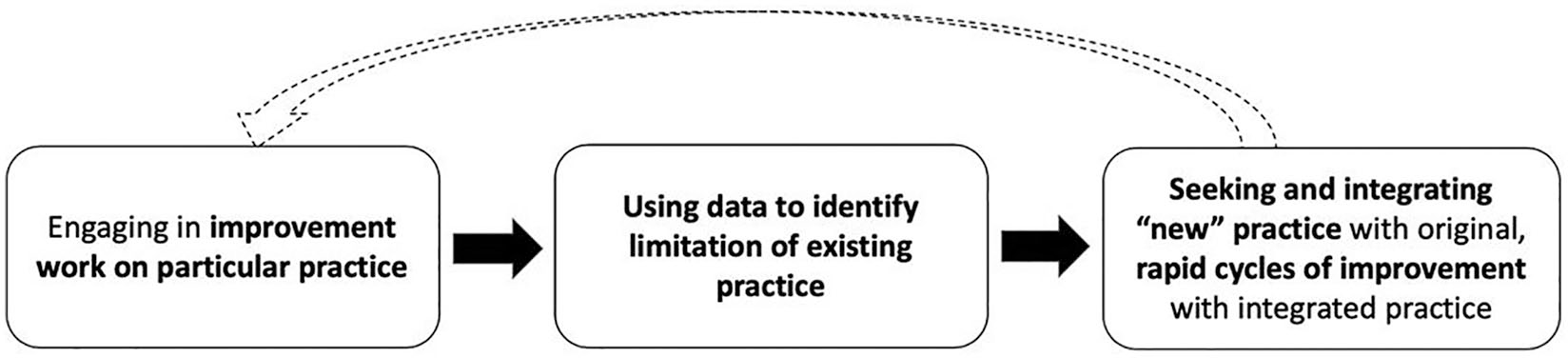

Improvement Launch Pattern 3: Integrating New Practices

Finally, five improvement launches across the NIC fit the pattern of Integrating New Practices. This pattern occurred when PLCs integrated “new” (to them) practices with previously established practices. Pattern 3 launches progressed from ongoing improvement work on a particular practice, to identifying a data-driven limitation of that practice, to integrating a “new” practice with, and in service of, the original practice (see Figure 12).

Pattern 3, Integrating New Practices (five improvement launches).

This “new” practice was developed or brought in from elsewhere in the network. Because these PLCs had prior experience with development and improvement cycles, this progression tended to occur quickly, at times in the course of a single Studio.

We use Douglas MS’s integration of Structured Talk with their prior focus on Revising Hypotheses in Year 2 as an example of Pattern 3.

Ongoing Improvement Work on a Practice and Identification of Limitation

As briefly described in Launch Pattern 1, Douglas MS spent their first year developing a practice of Revising Hypotheses with evidence. At the start of Year 2, the team noted, based on their observations of students in class, that while students often worked together, they did not always discuss how they were using evidence.

Integrating a “New” Practice

At Douglas MS, researchers introduced Structured Talk, and teachers immediately recognized how it could be used to support evidence-based discourse. During a Studio on 2/12/15, they added structured talk opportunities to sections of the lesson where students discussed patterns in data and evaluated hypotheses. They also planned to administer a modified exit ticket to collect data on student participation. This imagining of what was possible with tools prior to teaching lessons was characteristic of this launch pattern. (Similar to Pattern 2, however, the EB focus of the original Structured Talk practice was backgrounded.)

Learning Loop Discourse: Rapid Cycles of Improvement With Data and Tools

For this pattern, PLCs engaged in seamless learning loop conversations as they rapidly examined data and designed tools. For example, following the lesson on the 2/12/15 Studio, Douglas teachers discussed data from exit tickets about students’ use of evidence during Structured Talk:

What stands out to you?

75% of the kids said that they used evidence. [multiple team members agree or nod] [data]

That’s what I saw . . . We don’t know what they were saying as evidence, but they thought that they used evidence. [data] . . . Maybe partner A is the one who shares their idea, and partner B is the questioner, and like—“Well, what do you mean by that? Can you explain more? What evidence supports that?” And they have to ask. [practice]

Where they can either clarify or extend [practice]. . . .

And repeat back what they hear. I think is important to get them actually listening [theory].

Prior to the next Studio, the team formalized a Structured Talk protocol to support students in coordinating evidence with hypotheses (Figure 13).

Modified Structured Talk protocol focused on connecting evidence and hypotheses at Douglas Middle School.

At the beginning of the next Studio on 4/30/15, each teacher had iterated with the protocol at least once, and they reported highlights and questions that came up as they used the protocol in their classes. Together they tested the protocol again and after the first lesson, they noted that students did not use the entire protocol [data] and hypothesized that it was a “heavy load” for students to process [theory]. The team revised their protocol for the following class period, deciding to break down the task with partners first discussing the meaning of a piece of data before coordinating it with hypotheses. We observed four of the six science teachers integrate these practices the year they launched the practice and then again in the following year. As with other PLCs integrating new practices, new versions of tools were rapidly and purposefully constructed, accounting for practice-based evidence PLCs had gathered.

Additional Examples of Pattern 3

We briefly share an additional example of Pattern 3 in another PLC.

Sealth HS’ integration of Language Functions in Year 2

Sealth PLC members worked on Scaffolding Modeling in Year 1 and developed scaffolds to help students draw scientific models and write explanations for phenomena. By the end of the year, Sealth identified a data-based problem of practice: Student work from EB students suggested that they needed support with the structures and functions of scientific academic language. The team hypothesized that direct instruction about language functions (e.g., “cause and effect,” “compare and contrast”) might help students’ construction of models and explanations. With this hypothesis, in Year 2, the PLC developed a “Language Functions” practice where they explicitly discussed specific language functions with students and helped students practice using the functions in their models, integrating the functions with their prior work on modeling scaffolds. (Figure 14 shows integrated scaffold examples and some of Sealth’s noticings from testing them.)

Sealth High School professional learning community’s (PLC’s) integration of Language Functions (new practice) and Scaffolding Modeling (existing practice). The scaffold supported students in practicing the language function of “cause and effect” and reasoning about causal relationships as they modeled breast cancer (top). Team’s initial noticings from testing scaffolds during a Studio (bottom).

Discussion

This study aimed to understand variation in how teams of teachers, coaches, and researchers launched into improvement work within a single district-based NIC that included all secondary science teachers. Our aim was to describe elements of the “messy middle” as researchers and practitioners launched into improvement work across three levels of practice: classroom teaching practices and tools, school PLCs, and the network level. Here, we examine the varied PLC improvement launches, highlight common “footholds” that oriented teams to inquire into teaching practices, and consider the role of school-to-school learning (Redding et al., 2017).

Launches and PLC Learning Loop Discourse

Our discourse analysis suggests that PLCs launched into improvement work by engaging in conversations that specified and iterated on practices and tools, examined evidence from students about their learning, and developed practice-based theories for how students learned (referred to as the learning loop). The literature on PLCs overwhelmingly concurs that working with these elements over extended periods of time supports teacher learning (Horn & Little, 2010; Wei et al., 2009). Yet the varied ways PLCs dive into this work in RPP NICs have not been well-documented in the network initiation literature. This study of networked PLCs suggests three possible launch patterns: Local Practice Development, Spread and Local Adaptation, and Integrating New Practices. The patterns represent different entry points for PLCs, with the learning loop playing a slightly different role in relation to identifying a common aim or problem of practice in each. PLCs with a common aim began with drafting and testing practices and tools (Local Practice Development). PLCs without a joint aim borrowed and localized others’ practices and tools and, through examining data from students, developed a specific problem of practice worth investigating (Spread and Local Adaptation). Some PLCs kept their baseline practice as a focus of inquiry, yet through examining data from students and developing theories of how students learned, they became dissatisfied with aspects of the practice and developed or adopted other network practices to address identified limitations (Integrating New Practices). These teams, in particular, benefitted from having a history of collaborative inquiry, making rapid iterations with tools, practices, theory, and data more plausible. 
Similarly, Horn and Little (2010) found that histories of collective inquiry into a problem of practice can accelerate PLC learning, and that, in particular, engaging in learning loop discourse supported teams in developing a “shared frame of reference” about problems of practice (with common language about practices, principles, and terms), bringing teams closer to students’ ideas and supporting instructional improvement. This study suggests that there are at least three pathways to developing such language and a longitudinal stance toward inquiry into localized problems of practice; why teams take particular pathways at particular times remains an open question for investigation. To understand how PLCs launched into these patterns of improvement work, we next examine the footholds supporting all launch patterns.

Boundary Spanners as Footholds Supporting Improvement Launches

Similar to other studies, the data suggest that the local and network improvement work was supported by research-practice partners spanning multiple settings and levels of the NIC (Cannata et al., 2017; Cohen-Vogel et al., 2016; Redding et al., 2017). Coaches and researchers were well-positioned to promote a network-level common vision of instructional practice and collaboration, contribute to cycles of reification and experimentation, and share practices and tools across teams; in fact, the second launch pattern (Spread and Local Adaptation) began with spanners’ intentional sharing of resources. Similar to other research on teacher networks, this study also suggests that knowledge can accumulate in networks when coaches and researchers are strategically positioned to work with teams of teachers (Frank, Penuel, & Krause, 2015; Sun, Wilhelm, Larson, & Frank, 2014). The boundary spanners in the AST NIC had an important role in reducing variation and leveling the playing field across schools with different histories of working together, both in sharing instructional practices and in locally supporting PLCs in inquiring into those practices (Cohen-Vogel et al., 2016). While studies have found that spanners are important for the spread of ideas, these studies have largely not explored how this spread is linked to language and tools (with the exception of Redding et al., 2017), which we describe next.

Foothold Practices and Tools Supporting Launch Patterns and School-to-School Learning

Our findings suggest that improvement work was, in part, supported by the development and revision of foothold tools and practices. Below, we describe how various foothold tools and practices supported PLCs in developing shared frames of reference and highlight the utility of framing these tools and practices as emergent components of network-level work.

In the literature on network initiation, tools such as driver diagrams are generally described as concrete artifacts that help ground actors in common improvement aims and common language (Bryk et al., 2015; Russell et al., 2017). We found that the driver diagram functioned at the school level as a foothold tool to help PLCs identify improvement aims and related measures; design instructional practices and tools to test during Studios; anticipate changes and possibilities; theorize about how the practices worked, under which conditions, and for whom; and develop culturally shared visions of what was possible (Cole, 1996; Wartofsky, 1979). Moreover, the driver diagram provided a written record of change ideas that PLCs continually revisited as they revised practices, supporting continual improvement. Yet this was only the case for the first improvement launch pattern. The driver diagram tool and associated discussions were not enough to support launches into improvement work for all PLCs.

For some PLCs, particular instructional practices and accompanying classroom tools designed in collaboration with teachers elsewhere in the network functioned as important footholds into improvement work. Structured Talk, for example, was developed with one PLC and then spread to, and grounded improvement work in, five additional PLCs over time via the work of boundary spanners and school-to-school discussions at network-level convenings, thus mediating individual PLC and collective NIC learning (Redding et al., 2017; Toiviainen & Kerosuo, 2013). Importantly, classroom tools and tools supporting PLC inquiry traveled alongside the foothold practices (also described by Knorr-Cetina, 2001). We conjecture that some practices and tools became footholds and supported PLCs in launching into improvement work because (1) the classroom practices helped address familiar and similar problems of practice across schools (as also seen and described by Redding et al., 2017), (2) they were small grain-sized practices, meaning that they could be implemented daily and did not require a large shift from teachers’ everyday classroom practice, and (3) the coupling of classroom tools (i.e., talk protocols focused on practice) with inquiry tools (i.e., exit tickets focused on data) fueled curiosity and innovation, and gave teams shared objects for launching joint work. In effect, the foothold practices and tools contributed to PLC alignment by reducing variation across classrooms and enhancing alignment with network goals (Redding et al., 2017) while sparking the development of more complex practices across schools.

However, similar to studies of object-based work (e.g., Engeström & Blackler, 2005), we note that tools and practices did not always retain their original emphasis as they moved. For instance, Structured Talk tools were developed with particular attention to EBs’ participation and needs, yet the focus often shifted to other aspects of participation as the tools moved (e.g., disagreeing or use of evidence). In this way, practices originally designed with attention to supporting and noticing EBs in science were co-opted into generalized practices supporting “all students” and lost their power as a foothold into equitable instruction for EB students in particular. As such, there was a tension and possible trade-off between the two forms of equity we sought to design for: equity of inclusion in the network and equity in classroom practice. PLCs were able to define their own foci, which helped broaden participation, but equitable opportunities in classrooms were at times challenged by such flexibility.

Implications

As school districts and policymakers consider how to support the system-wide improvement of teaching in RPPs, they will want to design for multileveled learning across schools. This study suggests that launching into improvement work within and across schools is complex work that takes place over time and that there are multiple ways PLCs launch into improvement, which are shaped by the support of boundary spanners, access to generative and often locally developed tools and practices, and opportunities for PLCs to engage in conversations about classroom practices, tools, and data. Attention to the on-the-ground work of PLCs as networks initiate improvement work is of paramount importance, both for creating buy-in and for identifying practices and tools that will press the system to consider which practices work, under which conditions, and for whom.

Supplemental Material

Supplemental material, DS_10.1177_2332858419875718 for Launching Networked PLCs: Footholds Into Creating and Improving Knowledge of Ambitious and Equitable Teaching Practices in an RPP by Jessica Thompson, Jennifer Richards, Soo-Yean Shim, Karin Lohwasser, Kerry Soo Von Esch, Christine Chew, Bethany Sjoberg and Ann Morris in AERA Open

Footnotes

Authors’ Note

First authorship is shared between the first two authors, who made equal contributions to this publication.

Notes

Authors

JESSICA THOMPSON is an associate professor at the University of Washington, College of Education. Her scholarship focuses on building and studying K–12 networks that support the improvement of ambitious and equitable teaching practices in partnership with teachers, coaches, principals, and district leaders.

JENNIFER RICHARDS is an assistant research professor at Northwestern University in the College of Education. Her research focuses on understanding and promoting equitable knowledge building within educational communities, including but not limited to classrooms and research-practice partnerships.

SOO-YEAN SHIM is a postdoctoral research associate in teaching, learning, and curriculum at the University of Washington, College of Education. Her areas of expertise are in examining students’ disciplinary learning and studying professional learning communities over time.

KARIN LOHWASSER is a principal lecturer at the Gevirtz School of Education at the University of California, Santa Barbara. Her area of expertise is in developing and studying professional learning communities.

KERRY SOO VON ESCH is an assistant professor in educating nonnative English speakers in the College of Education at Seattle University. Her research focuses on examining what rigorous teaching and learning looks like for EL students in mainstream classrooms as more complex than “best practices,” and how teachers learn to become culturally and linguistically responsive educators.

CHRISTINE CHEW serves on the Bellevue School Board and recently completed her doctorate in science education and school leadership. Christine’s interests are in the enactment of educational reform that authentically engages each and every student in active and collaborative learning that leads to a more STEM-proficient population of individuals who not only create and apply STEM but also consume it in meaningful and conscientious ways.

BETHANY SJOBERG is currently an assistant principal in Seattle Public Schools. Formerly, she was a district-based secondary science specialist with expertise in developing ambitious science teaching practices, coaching, and supporting professional learning communities over time.

ANN MORRIS is currently a middle and high school science teacher at Cedar Park Christian. Formerly, she was a district-based secondary science specialist and a school-based STEM coach with expertise in developing ambitious science teaching tools and practices and supporting professional learning.

References
