Abstract

Persons with hearing aids or cochlear implants often have difficulty understanding speech well, especially in noisy environments. Auditory perceptual training can help improve an individual's ability to discriminate and identify sound. The current study aimed to determine the efficacy of the ALICE (Assistant for Listening and Communication Enhancement) program, a self-guided home-based hearing care program including monitoring, training and counseling. A multicentric study was carried out, including hearing aid centers and a cochlear implant center in Flanders (Belgium). Adult participants were randomly assigned to an intervention (n = 65) or a control (n = 65) group. Participants in the intervention group received a tailored flow of exercises that could be streamed to the device or presented in a sound field. All participants were tested before and after 8 weeks using sentences in noise and different self-report questionnaires. Participants in the intervention group were compliant during the 8-week training period. Significant on-task improvements were observed, along with improved speech-in-noise understanding for the intervention group only. The self-report data did not reveal changes following the intervention. Our clinical trial demonstrates that the self-guided ALICE training program is effective at improving the auditory system's ability to parse untrained speech in noise. This enhancement in speech-in-noise performance is specific to the training group, as the control group did not show any improvement. The results of the clinical trial imply that ALICE can be used as a scalable, accessible, and safe hearing care intervention.

Keywords

Introduction

According to the World Health Organization (WHO), over 1.5 billion people globally live with some degree of hearing loss (HL), and around 430 million require rehabilitation services. This calls for efficient and cost-effective treatment (WHO, 2025). Without appropriate follow-up, a person with HL will experience increased listening effort and difficulties with communication, learning, social-emotional functioning, employment, and quality of life (Davis et al., 2016; Denham et al., 2024; Pichora-Fuller et al., 2016).

Regular care in hearing centers involves providing and fitting hearing aids, as well as offering guidance on their maintenance. While auditory training is a cornerstone of hearing health care (Boothroyd, 2007, 2010), it is not routinely offered in hearing clinics. Auditory perceptual training consists of a structured set of exercises designed to improve an individual's ability to discriminate and identify sounds. Persons with hearing aids (HAs) or cochlear implants (CIs) often have difficulty understanding speech well, especially in noisy environments (Shehorn et al., 2018; van Wieringen et al., 2021). Auditory training can help their brain relearn how to process sound effectively. Additionally, when hearing sensitivity is good but auditory processing is sub-optimal despite audibility, auditory training can help improve listening skills, spatial processing, binaural integration, and auditory memory (Schafer et al., 2024).

Counseling on the emotional and psychological challenges related to HL and providing strategies for effective communication (Johnson et al., 2018) are also very important (Contrera et al., 2016; Timmer et al., 2024). However, time constraints, lack of knowledge, and limited reimbursement by health insurance, among other factors, prevent their implementation in hearing health care for persons with HAs. Regular care for persons with CIs often differs from that for persons with HAs: most persons with a CI receive intensive rehabilitation during the first 6 months after implantation, including auditory perceptual training and counseling in the clinic.

Over the past years, advancements in telehealth have led to accessible and tailored services for individuals with communication difficulties (Swanepoel & Hall, 2010; Swanepoel & Hall, 2020; van der Mescht et al., 2022). Developments in remote hearing health care accelerated during the COVID-19 pandemic. Self-guided home-based auditory training programs emerged as a practical solution for those seeking to practice and refine their listening skills at their own pace (Frisby et al., 2022; Sweetow & Sabes, 2007). The efficacy of several programs was researched, such as LACE (Saunders et al., 2016; Sweetow & Sabes, 2006; Lai et al., 2023), Brain Fitness cognitive training (Anderson et al., 2013), ReadMyQuips (Abrams et al., 2015; Rao et al., 2017; Rishiq et al., 2016), a serious game scenario (Reynard et al., 2022), and LUISTER (Magits et al., 2023; Van Wilderode et al., 2023).

Despite a multitude of studies, no clear recommendations could be made yet on the functional benefit of auditory training, partly due to limited evidence and external validity (cf. the systematic reviews by Henshaw & Ferguson, 2013, and Lawrence et al., 2018, for persons with HAs, and by Dornhoffer et al., 2024, for CI users). As a result, many hearing healthcare professionals hesitate to promote auditory training. Many studies in these systematic reviews did not include a control condition or had a limited sample size. Auditory training aims to transfer skills to improve daily life communication and build confidence (Ferguson & Henshaw, 2015; Sweetow & Sabes, 2006), but the transfer to untrained (or real-life) conditions is very difficult to capture.

LUISTER was the first self-guided (home-based) hearing care program designed for the Dutch/Flemish language and operated on a tablet (Magits et al., 2023). It contained a monitoring module with the digits-in-noise (DiN) task and the vowel and consonant identification tasks (van Wieringen et al., 2021) and a training module with more than 500 Flemish/Dutch exercises. Bottom-up exercises focused on sound discrimination and identification, while top-down, synthetic exercises were designed to develop higher-order skills using realistic materials such as sentences. The speech reception thresholds (SRTs) derived from the DiN task determined the starting level of the exercises for the individual, and the training exercises were tailored to address the client's specific challenges. LUISTER was validated in adults with CIs (Magits et al., 2023), as well as in individuals with HAs (Van Wilderode et al., 2023). The results of these studies provided scientific evidence supporting the added value of auditory perceptual training for people with HL. Notably, improvements to untrained measures were observed with the LUISTER training and an alternative program used by the active control group (Magits et al., 2023).

Building on this foundation, the monitoring and training modules were integrated into a comprehensive hearing care app called ALICE (Assistant for Listening and Communication Enhancement), supplemented with counseling and a dashboard for the hearing care professional (HCP). ALICE runs on smartphones (Android or iOS) and transmits data to a clinician's dashboard via a web browser. Given the promising results of the LUISTER program, it is essential to evaluate the effectiveness of its successor, ALICE, in a real-world context. As a more comprehensive and accessible tool integrating monitoring, training, and counseling, ALICE can potentially enhance listening skills and empower persons with a HL. However, a randomized controlled trial is needed to assess its added value in routine audiological care. The training tasks and the tailored (personalized) flow chart were the same as those assessed with LUISTER (Magits et al., 2023; Van Wilderode et al., 2023). Unlike previous clinical trials, which were conducted at a single site, the present study was carried out across various HA centers distributed throughout Flanders (Belgium), alongside the university hospital of Leuven. Furthermore, this study uniquely included an evaluation of the counseling module. Participants had a HA or a CI and received either regular care (control) or regular care supplemented with the ALICE program. We expected that the tailored auditory training program would be engaging and promote adherence, and that it would enhance daily life listening and/or communication strategies by demonstrating improved speech-in-noise performance and reduced self-perceived listening effort. We also expected that the intervention group would be more knowledgeable about their HL and recognize the emotional consequences of their HL than the group without an intervention through the counseling module.

Materials and Methods

An a priori power analysis was conducted using G*Power (Erdfelder et al., 2009) based on a repeated-measures ANOVA (α = .05). Given that G*Power does not support power calculations for linear mixed-effects models, this approach served as a reasonable estimate. It indicated that a total sample of at least 76 persons was needed to detect differences in speech understanding in noise between the two treatment groups with a low to medium effect size (d = 0.25). The data were subsequently analyzed using linear mixed-effects modeling to account for intra-subject variability, providing a more accurate representation of the experimental design.

Participants

The HCP at the local investigation site recruited adult clients with at least 6 months of HA experience, and the audiologist at the university hospital recruited CI users with at least 6 months of experience. All participants were Dutch-speaking, were able to use a smartphone or tablet, and had sufficient eyesight to read the screen.

Procedure

Randomization and study data were managed using Research Electronic Data Capture (REDCap) hosted at KU Leuven, the main investigator. REDCap is a secure, web-based software platform to support data capture for research studies (Harris et al., 2009, 2019). After being stratified by age (18–40, 41–60, and 61–80), participants were assigned randomly by REDCap to the intervention or the control group. Two sessions were planned, one before and one after 8 weeks. After obtaining consent to participate in the study, the speech threshold for sentences in noise was determined twice. The questionnaires were then completed by scanning a QR code and responding to the questions online (Session 1). Subsequently, the HCP would send the participant's age and gender to the main investigator for randomization in REDCap. Participants in the intervention group were shown how to download and use the ALICE program. All participants received a new appointment for sentence recognition in noise and self-report assessment after 8 weeks (Session 2). Participants in the control group were given the chance to do the training tasks afterwards.
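To illustrate the allocation step, the following Python sketch shows one common way to randomize within age strata, namely permuted blocks. The age strata are taken from the text; the block size, the class name `StratifiedRandomizer`, and the use of permuted blocks are assumptions for illustration and are not a description of the REDCap configuration used in the trial.

```python
import random

AGE_STRATA = [(18, 40), (41, 60), (61, 80)]  # strata reported in the text


def stratum_of(age):
    """Return the index of the age stratum, or raise if the age is out of range."""
    for i, (lo, hi) in enumerate(AGE_STRATA):
        if lo <= age <= hi:
            return i
    raise ValueError(f"age {age} is outside the recruited range")


class StratifiedRandomizer:
    """Permuted-block allocation within each stratum (hypothetical helper)."""

    def __init__(self, block_size=4, seed=None):
        if block_size % 2:
            raise ValueError("block_size must be even")
        self.block_size = block_size  # assumed value, not from the paper
        self.rng = random.Random(seed)
        self.pending = {i: [] for i in range(len(AGE_STRATA))}

    def assign(self, age):
        queue = self.pending[stratum_of(age)]
        if not queue:
            # Start a new balanced block and shuffle its order.
            half = self.block_size // 2
            queue.extend(["intervention"] * half + ["control"] * half)
            self.rng.shuffle(queue)
        return queue.pop()
```

Within each stratum, every completed block contains equal numbers of intervention and control allocations, which keeps the two arms balanced on age.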

Ethical approval was granted by the Federal Agency for Medicines and Health Products (Eudamed number: CIV-22-06-039801-SM03). Participants were not paid. The study protocol is registered on ClinicalTrials.gov (NCT05329922). This study adheres to the Consolidated Standards of Reporting Trials extension for nonpharmacologic treatments (Boutron et al., 2017).

Demographics

Initially, 68 participants were included in the ALICE intervention group. Three participants wished to stop (one due to persistent technical difficulties, one due to task difficulty increasing too quickly, and one due to persistent tinnitus before the start of the clinical trial). Sixty-nine persons were assigned to the control group. Four people decided to stop participation (three due to adverse events [an ear infection, reimplantation of CI, and fungal infection so that the participant could not wear HAs for a while], and one without reason).

The demographics of the participants who completed the clinical trial are listed in Table 1. Each group contained 65 participants. CI users had more than 6 months of experience with their device and had completed their clinical rehabilitation. All participants presented with a postlingually acquired profound HL, and they communicated through spoken language in their daily lives. The number of CI and HA users was evenly distributed over the two groups. The two treatment groups did not differ in terms of chronological age, gender, type of hearing device, and other relevant factors (Table 1). Speech materials could be streamed to the device or presented in sound field. Participants with bilateral devices chose the side where they wished to stream the speech materials.

Demographics of the Participants in the Two Groups (ALICE and Control).

The table lists their hearing device (CI, HA), gender, age distribution, working or not, and severity of unaided HL (mild: PTA < 40 dB HL, moderate: PTA between 40 dB HL and 70 dB HL, and severe: PTA > 70 dB HL). ALICE = Assistant for Listening and Communication Enhancement; CI = cochlear implant; HA = hearing aid; HL = hearing loss; PTA = pure tone average.

The ALICE Program

The ALICE program contains two parts: (1) a client application that can be downloaded onto a personal device (smartphone or tablet) and (2) a web-based dashboard for the HCP with data and analytics for their clients. The client application comprises three modules: a performance monitoring module, a listening training module, and a counseling module. The monitoring tasks are the DiN task and the phoneme discrimination task described in Magits et al. (2023). The SRT of the DiN task is used to determine the starting level or signal-to-noise ratio of a training task. The DiN task was administered at the beginning of each week, followed by either the vowel or the consonant identification task in quiet (without feedback). The phoneme pairs that a participant found most difficult to discriminate in this task were assigned a greater weight in the training tasks. The results of both tasks are displayed on the HCP's dashboard.

The listening training exercises are divided into five categories: vowels, consonants, themes (e.g., bathroom, kitchen, …), suprasegmental (including voice recognition and prosody), and sentences (clock reading, identifying similar-sounding words in sentences, realistic sentences). Exercises are performed in quiet and in various types and levels of background noise in a closed-response set (3, 6, or 9 alternatives). They are varied so that participants do not perform the same exercise for more than 4–5 min. Feedback is provided after each item, and the speech sound can be replayed if desired, providing additional opportunities for learning. A predefined set of rules determines whether the participant needs to repeat a similar task or is referred to an easier or more challenging one (Magits et al., 2023). Although participants received feedback after each trial, they were not shown absolute scores. Task progress was tracked via the dashboard. Participants began training in quiet, and when they passed the target score on these tasks, similar tasks were presented in noise, specifically speech-weighted noise (SWN), then babble noise (BN). This approach resulted in a tailored selection of tasks and difficulty levels for each participant.
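The rule-based flow described above can be sketched as a small decision function. This is a minimal illustration only: the order quiet, SWN, BN is taken from the text, whereas the function name `next_step` and the pass and fail thresholds (`target`, `floor`) are hypothetical; the actual rules are those of Magits et al. (2023).

```python
NOISE_STAGES = ["quiet", "SWN", "BN"]  # order described in the text


def next_step(stage, score, target=0.8, floor=0.5):
    """Decide what follows a completed exercise.

    stage  -- current listening condition, one of NOISE_STAGES
    score  -- proportion correct on the exercise (0..1)
    target -- hypothetical pass threshold (assumption)
    floor  -- hypothetical threshold below which an easier task is offered
    Returns a tuple (next_stage, action).
    """
    idx = NOISE_STAGES.index(stage)
    if score >= target:
        if idx + 1 < len(NOISE_STAGES):
            # Target reached: present a similar task in the next condition.
            return NOISE_STAGES[idx + 1], "advance"
        # Already in babble noise: move to a more challenging task.
        return stage, "harder"
    if score < floor:
        return stage, "easier"
    return stage, "repeat"
```

For example, `next_step("quiet", 0.9)` moves the participant to a similar task in speech-weighted noise, whereas a score between the two thresholds leads to a repetition of a similar task in the same condition.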

With the counseling module, the participant is requested to respond to different questions about their listening experience and difficulties. These questions are based on validated questionnaires such as the Speech, Spatial, and Qualities of Hearing Scale (Gatehouse & Noble, 2004), the Hearing Handicap Inventory for Adults/the Elderly (Ventry & Weinstein, 1982), and the Fatigue Assessment Scale (De Vries et al., 2004).

At the beginning of the training program, the participants in the intervention group indicated difficult listening conditions from a closed set of responses (difficulties at home, at work, during cultural activities, group conversations, leisure, music, one-on-one conversations, outdoors, sports, telephone, and/or television). Subsequently, up to three questions linked to these conditions were presented daily to assess their listening experience. Questions were answered on a 5-point Likert scale. Responses were categorized according to “ability” (“I can follow a conversation during dinner”), “feeling” (“Because of my hearing loss, I feel stressed”), and “participation” (“I avoid groups because of my hearing loss”). Results are sent to the HCP dashboard.

Outcomes Used in the Randomized Clinical Trial

The primary outcome was a validated measure of sentence recognition in noise. This main outcome was complemented with questionnaires to evaluate effectiveness in a broader context of functioning and participation.

Sentence Recognition in Noise

Sentence recognition in noise was assessed with the LIST sentences (van Wieringen & Wouters, 2008). Sentences were presented in SWN, and the noise level was kept constant at 65 dB SPL. Starting at 55 dB SPL, the level of the first sentence was increased in steps of 2 dB until the sentence was identified correctly. Subsequently, the speech level varied adaptively during the following nine sentences with a one-down-one-up procedure to target the 50% point on the psychometric function (SRT). The SRT was calculated from the signal-to-noise ratios (SNRs) of the last five sentences plus the SNR that would have been tested if one more sentence had been presented. The test was presented twice to the listener, both before and after the 8-week period, to control for procedural effects. Sentences were presented in sound field via a loudspeaker. Lists of sentences were counterbalanced and never presented twice.
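The adaptive track described above can be sketched in Python as follows. The noise level, starting level, first-sentence rule, and number of sentences are taken from the text. Two points are assumptions: that the 2 dB step also applies to the one-down-one-up phase, and that the SRT is the arithmetic mean of the last five presented levels and the level of the hypothetical next sentence. The callback `is_correct` is a hypothetical stand-in for the listener's response.

```python
NOISE_LEVEL = 65.0  # dB SPL, fixed (from the text)
START_LEVEL = 55.0  # dB SPL, level of the first sentence (from the text)
STEP = 2.0          # dB; assumed to apply to the adaptive phase as well


def run_list(is_correct, n_sentences=10):
    """Run one adaptive list and return the SRT in dB SNR.

    is_correct(sentence_index, speech_level) -> bool models the listener.
    """
    level = START_LEVEL
    # Sentence 1: repeat it at increasing levels until it is identified.
    while not is_correct(0, level):
        level += STEP
    levels = [level]
    correct = True
    # Sentences 2..n: one-down-one-up rule targeting the 50% point.
    for i in range(1, n_sentences):
        level += -STEP if correct else STEP
        levels.append(level)
        correct = is_correct(i, level)
    # Level at which one further sentence would have been presented.
    next_level = level + (-STEP if correct else STEP)
    tail = levels[-5:] + [next_level]
    # Assumed scoring rule: mean of these six levels, expressed as SNR.
    return sum(tail) / len(tail) - NOISE_LEVEL
```

With a deterministic listener who is correct whenever the speech level is at least 61 dB SPL, the track oscillates between 59 and 61 dB SPL, and the sketch returns an SRT of −5 dB SNR.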

Questionnaires

The SSQ12 is a short version of the validated Speech, Spatial, and Qualities of Hearing Scale (SSQ, Noble et al., 2013). The SSQ assesses a person's hearing ability in everyday life, particularly in complex listening environments. In the 12-item questionnaire, the first five questions belong to the subscale “speech” (speech in noise, multiple speech streams, speech in speech). Items 6, 7, and 8 capture self-perceived benefit on localization, distance, and movement, and the last four questions refer to segregation, identification of sound (musical instrument), quality, and listening effort. The higher the score, the better the self-perceived performance. Participants scored each question on a visual analog scale of 0–10. We expected that these domains would be rated higher after the training intervention.

The CAS (Communication and Acceptance Scale) is a validated scale comprising 18 items (Öberg et al., 2021). It was developed to detect clinical changes in “communication strategies and the emotional consequences, knowledge and acceptance of HL”. Each item is rated on a 5-point scale: completely agree (4), agree (3), neutral (2), disagree (1), and completely disagree (0). The original scale casts responses into four subscales: emotional consequences, verbal communication strategies, confirmation strategies, and hearing knowledge and acceptance. Higher scores indicate better functioning (Öberg et al., 2021). In the present study, the statements are divided into five subscales, with hearing knowledge and acceptance reported separately: Confirmation strategies (Conf, three questions), Emotional consequences (Emo, eight questions), Hearing Knowledge (HKnow, one question), HL and acceptance (HAccep, four questions), and Verbal communication strategies (VerbalC, two questions). This questionnaire has been translated from Swedish to Dutch (and back-translated to ensure correct translation). It was anticipated that the more knowledge (subscale 4) participants obtained through counseling and learning communication strategies, the better prepared they would be to use the strategies in real life (subscales 2 and 3).
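As an illustration of how subscale scores on the 0–4 item scale can be expressed on a 0–10 scale, the following Python sketch applies a linear rescaling of the subscale mean. The subscale sizes are taken from the text; the linear rescaling rule, the function names, and the absence of reverse-scored items are assumptions, and the mapping of individual items to subscales is not reproduced here.

```python
# Number of items per subscale, as listed in the text.
SUBSCALE_SIZES = {"Conf": 3, "Emo": 8, "HKnow": 1, "HAccep": 4, "VerbalC": 2}


def rescale_subscale(item_scores, max_item_score=4):
    """Map the mean of 0..4 item scores linearly onto a 0..10 scale."""
    if not item_scores:
        raise ValueError("no item scores")
    if any(not 0 <= s <= max_item_score for s in item_scores):
        raise ValueError("item score out of range")
    return 10.0 * sum(item_scores) / (len(item_scores) * max_item_score)


def score_cas(responses):
    """responses: dict mapping subscale name -> list of item scores (0..4)."""
    scores = {}
    for name, size in SUBSCALE_SIZES.items():
        items = responses[name]
        if len(items) != size:
            raise ValueError(f"{name} expects {size} items")
        scores[name] = rescale_subscale(items)
    return scores
```

Under this assumed rule, a “neutral” response (2) on the single knowledge item corresponds to a rescaled score of 5.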

EAS (Effort Assessment Scale) is a validated self-report questionnaire on listening effort (Alhanbali et al., 2017). It captures the extent of mental energy and cognitive effort required for a listening task. Six questions are presented on various listening situations. Participants rate their effort on a visual analog scale, from 0 (no effort) to 10 (extreme effort). The EAS questions were translated into Dutch for this study.

The IOI-HA (International Outcome Inventory for Hearing Aids) assesses the daily use of HAs (or CIs), as well as the perceived benefit and satisfaction. The IOI-HA consists of seven questions rated on a 5-point Likert scale and has been validated in Dutch (Kramer et al., 2002). Each question addresses a different aspect of HA use (daily use, benefit in different listening situations, activity limitation, satisfaction, participation restrictions, impact on relationships, and quality of life). The higher the value, the more positive the respondent is about a situation.

Data Analysis

Statistical analyses were performed using the R programming language and statistical environment (Posit Software, PBC, 2024). Potential improvements between the first and second sessions were assessed using linear mixed models (LMMs). The participant was considered a random factor, and session and treatment group (intervention vs. control) were fixed factors.
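To make concrete what the session-by-treatment term in such a model captures, the following Python sketch (not the R code used in the study) computes a difference in mean pre-to-post change between the two groups. Under the assumptions of complete data for every participant in both sessions and a random intercept per participant, the interaction coefficient of a model of the assumed form `srt ~ session * group + (1 | participant)` equals this difference in differences; its standard error and p value still require the fitted model. The function name and argument layout are hypothetical.

```python
from statistics import mean


def interaction_estimate(pre_int, post_int, pre_ctl, post_ctl):
    """Session x group interaction as a difference in mean change scores.

    Each argument is a list of SRTs (dB SNR), one value per participant,
    paired by position within a group. A negative result means that the
    SRT of the intervention group decreased (improved) more than that of
    the control group.
    """
    if len(pre_int) != len(post_int) or len(pre_ctl) != len(post_ctl):
        raise ValueError("pre and post lists must be paired")
    change_int = mean(b - a for a, b in zip(pre_int, post_int))
    change_ctl = mean(b - a for a, b in zip(pre_ctl, post_ctl))
    return change_int - change_ctl
```

With missing sessions, as can occur in practice, the mixed model uses all available observations and its estimate can deviate from this simple contrast.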

Results

The current study aimed to determine the efficacy of the ALICE program. Below, we present the results for the CI users and HA users separately. Progress on exercises, the DiN task, and vowel and consonant identification was derived from the HCP dashboard. The clinical trial outcomes were documented in REDCap.

Adherence and Exercises

Figure 1 illustrates the total practice time per participant for the nine CI participants (left) and the 56 HA participants (right). The black horizontal line indicates the recommended total practice time during the 8 weeks. Most participants adhered to their schedule (or practiced more than the recommended amount), and approximately five participants practiced for less than half of the recommended time.

Adherence to Training and Time Spent on Exercises for CI (n = 9) and HA (n = 56) Users. The Black Horizontal Line Indicates the Recommended Total Practice Time During the 8 Weeks.

Figure 1 also illustrates the amount of time spent per exercise type. Most time was spent on theme exercises (e.g., kitchen utensils, bathroom, animals), but also on minimal pairs (consonants and vowels), followed by suprasegmental tasks (voice recognition and emphasis), and sentences. The figure does not depict the task sequence.

On-Task Improvements for the ALICE Group

At the beginning of each training week, participants first performed a DiN task, followed by either a vowel or a consonant identification task in alternating weeks. These data were logged in the HCP dashboard. Figure 2a illustrates the SRTs of the CI and HA users for the 8 weeks of training. Error bars depict twice the standard error between participants. As expected, the average SRTs of the CI users were higher than those of the HA users, although some CI participants performed in line with HA users. Both groups improved across sessions (β = −.32, SE = 0.11, t = −2.8, p = .005). The interaction between group and device was not significant (β = −.03, SE = 0.12, t = 0.26, p = .792).

(a) Average SRT (and Standard Error) with the DiN Task During the 8 Weeks of Training for the CI (n = 9) and HA (n = 56) Users. The Number of Participants in the Final Weeks Is Slightly Lower Due to Diminished Adherence. (b) Percentage Correct Phoneme Identification for CI (n = 9) and HA (n = 56) Participants for Four Periods (8 Weeks) During the Intervention. The Number of Participants in the Final Weeks Is Slightly Lower Due to Diminished Adherence.

Figure 2b shows the percentage correct scores for vowel and consonant identification tasks for CI and HA participants over four periods (8 weeks). Although scores are high, they are not at ceiling, indicating room for improvement. CI users scored lower than HA users for both vowel (β = 16.2, SE = 3.1, t = 5.2, p < .001) and consonant identification (β = 19.4, SE = 5.6, t = 3.5, p < .001). Both groups improved over time for vowel (β = 0.63, SE = 0.29, t = 2.2, p = .03) and consonant identification (β = 1.3, SE = 0.32, t = 3.8, p < .001).

Transfer to Untrained Materials

Speech-in-Noise Understanding

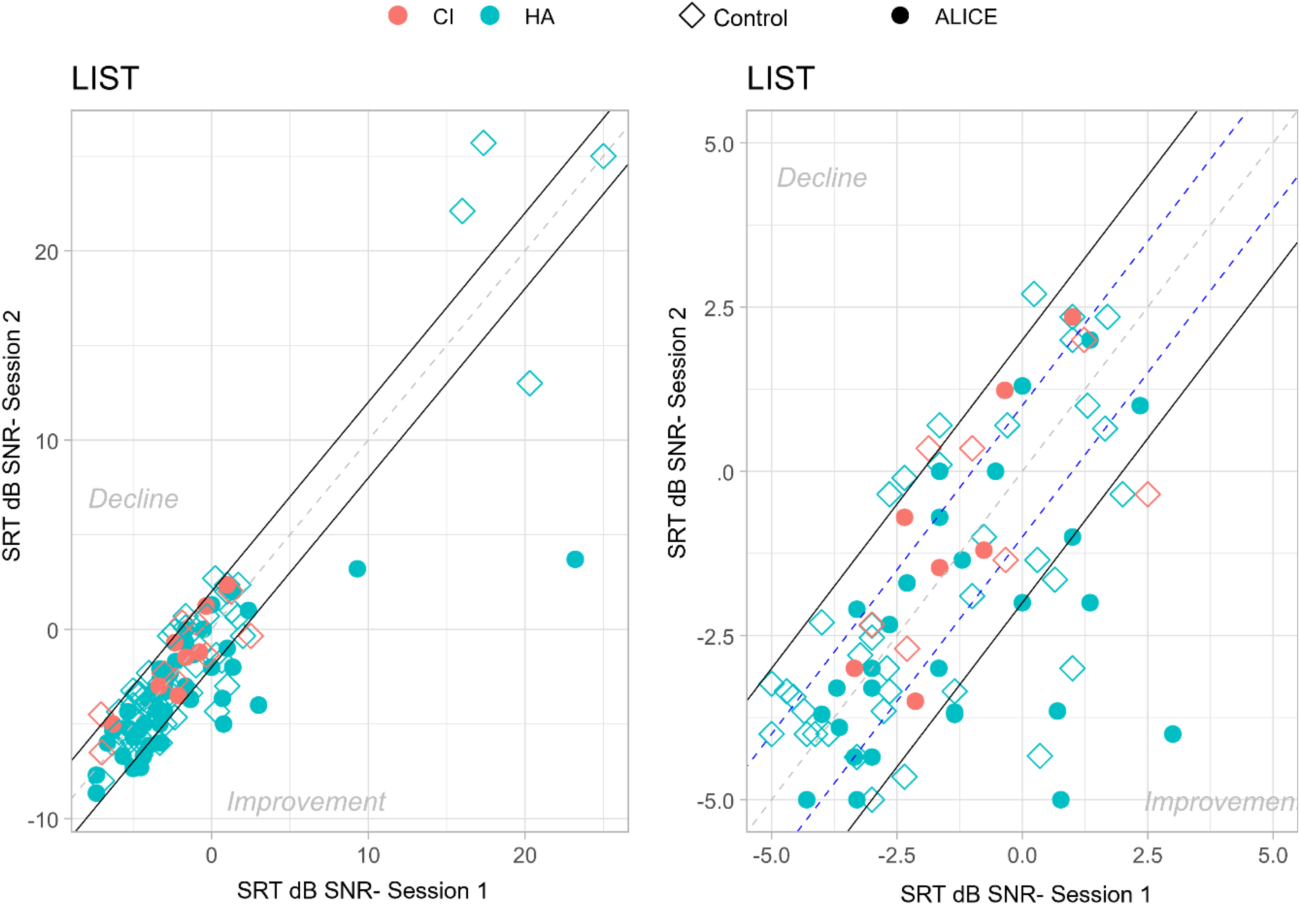

A key question is whether the training gains transfer to functional benefits in real-world listening and whether these improvements are specific to the intervention group. These functional benefits can be improvements in (untrained) speech-in-noise recognition, memory, attention, and self-perceived benefits. Figure 3 illustrates the performance on speech recognition in noise before and after auditory training for each participant separately. The area between the full lines represents changes in speech recognition in noise that fall within −2 and +2 dB SNR; changes outside this range indicate a clinically relevant improvement or decrement.

Speech Understanding in Noise Before (Session 1) and After (Session 2) Auditory Training for Each Participant Separately. The Area Between the Full Lines Represents Changes in Speech Understanding in Noise That Fall Within a Range of −2 to +2 dB SNR. The Plot on the Right Shows a Zoomed-in Portion of the Plot on the Left, Without the Outliers in the Top Right Corner.

An LMM analysis on the SRTs of the LIST sentences, with treatment group and session as fixed factors and participant as random factor, yielded a significant effect of treatment (β = −1.98, SE = 0.93, t = −2.12, p = .03) and a significant effect of session (β = −.51, SE = 0.19, t = 2.67, p = .008). Moreover, the two-way interaction of session and treatment was significant (β = −1.12, SE = 0.38, t = −2.92, p = .004). Post-hoc tests following the two-way interaction with Bonferroni corrections showed that Session 1 was not statistically different for the two treatments, ΔM = 1.31 dB SNR, t(121) = 1.36, p = .17, and that the difference in sentence understanding in noise between the control group and the ALICE intervention was significant after training, ΔM = 2.51 dB SNR, t(115) = 2.50, p = .01.

Further analysis revealed no significant link between time spent on training and speech recognition in noise improvements (Spearman ρ = 0.03, p = .89). However, individuals with better unaided pure tone average (PTA) improved more than those with worse PTA in the intervention group. This correlation remained significant even after adjusting for age (partial correlation): r = .32, p = .02, t(53) = 2.41 for the ALICE training group.
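For readers who wish to reproduce this type of analysis, the following Python sketch computes a first-order partial correlation and the associated test statistic from standard formulas. The function names are hypothetical, and the assignment of variables (x: unaided PTA, y: change in SRT, z: age) is an assumption based on the description above, not the code used in the study.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation coefficient of two equally long sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def partial_corr(x, y, z):
    """Correlation of x and y after removing the linear effect of z."""
    rxy, rxz, ryz = pearson(x, y), pearson(x, z), pearson(y, z)
    return (rxy - rxz * ryz) / sqrt((1 - rxz ** 2) * (1 - ryz ** 2))


def t_statistic(r, df):
    """t value for testing a correlation r against zero with df degrees of freedom."""
    return r * sqrt(df / (1 - r ** 2))
```

As a consistency check, `t_statistic(0.32, 53)` evaluates to approximately 2.46, which is close to the reported value of 2.41 given that r is rounded to two decimals.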

Self-Perceived Benefit

Questionnaires were administered to assess whether use of the ALICE program would lead to improved self-perceived communication skills and quality of life. Below, we report the data from the four questionnaires. The number of responses per questionnaire may vary. To alleviate the workload of HCPs, participants could fill out the questionnaire by scanning a QR code. However, this method also resulted in some missing values. Only fully completed questionnaires from the same participant for both Session 1 and Session 2 were taken into account. Each figure indicates the number of responses per questionnaire.

Figure 4 illustrates the average performance scores (and standard errors) for Session 1 and Session 2 for the intervention and the control group for the Speech, Spatial, and Qualities questionnaire. An LMM analysis on the performance scores with treatment group and session as fixed factors and participant as a random factor showed significant differences between different questions, because they tap into different domains. For example, SSQ3 (“speech”) is significantly different from SSQ11 (“quality”) in both the ALICE intervention group and the control group. However, neither the two groups (treatment vs. control; β = −.06, SE = 0.28, t = −0.24, p = .809) nor the sessions, F(1) = 3.55, p = .433, were significantly different. There were no significant interaction effects.

Average Response Scores for Each Question of the Speech, Spatial, and Qualities of Hearing Scale (SSQ) Are Shown for the Intervention and Control Groups: Session 1—CI (n = 8), HA (n = 56); Session 2—CI (n = 8), HA (n = 56).

Although this questionnaire does not show improvements after training, it is noteworthy that the scores are relatively low, indicating that individuals are aware of their listening difficulties. This is especially the case for Question 3 (when you have a conversation with one person in a room where there are many other people talking. Can you follow what the person you are talking to is saying?).

Communication and Acceptance Scale

The CAS is intended to capture changes in communication strategies and the emotional consequences, knowledge, and acceptance of HL (Figure 5).

Average Performance (and Standard Error) for Each of the Five Subscales of the Communication and Acceptance Scale (CAS) for the Intervention and Control Groups: Session 1—CI (n = 4), HA (n = 42); Session 2—CI (n = 7), HA (n = 44). Scores of the 5-Point Likert Scale Are Rescaled from 0 to 10.

An LMM analysis of the performance scores with treatment group and session as fixed factors and participant as a random factor showed significant differences between different subscales, but not between groups (ALICE vs. control) or between sessions (pre vs. post, β = .3, SE = 0.03, t = 1.11, p = .26). There were no significant interactions.

It is notable that the knowledge question (HKnow), “I feel like I have a good understanding of my hearing loss,” received a low rating (<5 on the rescaled 0–10 scale) despite counseling and practical advice. This may indicate that limited knowledge affects the ratings of verbal communication strategies and acceptance. Confirmation (Conf) pertains to two items representing verbal and psychosocial acknowledgment of HL. Interestingly, only the subscale for emotional aspects of HL was rated relatively higher, perhaps because the participants were volunteers keen to participate in the study.

Effort Assessment Scale

The EAS consists of six items, each targeting different listening situations (in quiet, in noise, during conversation). Higher scores indicate greater perceived listening effort. We anticipated that training would reduce listening effort. Our results do not support this hypothesis, as illustrated in Figure 6. Ratings are similar for Session 1 and Session 2 for both groups (β = −.71, SE = 0.34, t = 2.043, p = .044). Participants in both groups indicated high listening effort on most questions, especially Question 4 (Do you have to put in a lot of effort to follow discussion in a class, a meeting, or a lecture?) and Question 5 (Do you have to put in a lot of effort to follow the conversation in a noisy environment [e.g., in a restaurant, at family gatherings]?). Listening effort in conversation with others (Question 1), the amount of concentration when listening (Question 2), the ability to ignore other sounds (Question 3), and listening on the telephone (Question 6) appear somewhat less effortful.

Average Performance (and Standard Error) on the Scores of the Effort Assessment Scale (EAS) for the Intervention and Control Groups: Session 1—CI (n = 4), HA (n = 44); Session 2—CI (n = 7), HA (n = 42).

International Outcome Inventory for Hearing Aids

We expected that some perspectives on HA use might change after the intervention. However, the LMM analyses showed significant differences between questions, but no significant main effect of group or session (β = −.17, SE = 0.08, t = −1.85, p = .06; Figure 7).

Average Performance Scores (and Standard Error) on the Six Questions of the International Outcome Inventory for Hearing Aids (IOI-HA) for the Intervention and Control Groups: Session 1—CI (n = 7), HA (n = 51); Session 2—CI (n = 4), HA (n = 50).

Counseling (Only for the ALICE Group)

Before the training with ALICE, participants indicated where and during which activities they encountered difficulties. Table 2 summarizes the difficulties noted by participants at the start (n = 65). Most participants indicated encountering difficulties at home (note that only a few participants were professionally active) and mentioned struggling with conversations (both one-on-one and in groups) and watching television. Subsequently, participants received questions on these topics throughout the training period. These questions referred to their ability, feelings, or participation.

Counseling Locations and Number of Participants Who Indicated Having Problems.

Note. Only a few participants are still working.

During the clinical trial, participants received up to three questions per day related to either “participation,” “ability,” or “feeling.” Figure 8 shows the average ratings for these activities across the three categories during the 8 weeks of the trial. Higher scores indicate better self-reported values. Ratings did not differ significantly for the three different categories, nor as a function of time (β = .04, SE = 0.06, t = 0.65, p = .516).

Average Ratings (and Standard Error) on Counseling Questions for the CI and HA Users During the 8-Week Intervention Period. Counseling Questions Reflected on “Ability,” “Feeling,” and “Participation.”

Discussion

Auditory training programs are designed to improve a person's ability to understand and interpret (speech) sounds. They are created on the premise that attention-driven stimulation improves performance through brain plasticity. Repeated exposure to sounds or speech at a tailored level or signal-to-noise ratio reinforces sound patterns. Through practice, the brain refines its neural pathways, which improves the ability to distinguish subtle differences in sound, improves speech recognition (especially in noisy environments), and enhances auditory memory and attention (Anderson & Kraus, 2013; Russo et al., 2005; Ying et al., 2025).

Our clinical trial shows that the self-guided, tailored ALICE training program enhances DiN and phoneme perception, indicating that auditory training strengthens sensory processing. The training program is also effective at improving the auditory system's ability to parse untrained speech in noisy conditions, a common and challenging real-world problem for individuals with HL. This enhancement in speech-in-noise performance is specific to the training group, as the control group did not improve. However, the ratings on the counseling questions (ability, feeling, participation) did not change with time. Contrary to our expectations, the intervention group showed neither a reduction in self-perceived listening effort nor an improvement in self-perceived benefit. Compared with the control group, the intervention group did not report increased knowledge about their HL or greater recognition of its emotional consequences after using ALICE. Although improvements were noted in speech tasks, participants as a group did not report feeling less effort or experiencing greater benefits in their daily lives.

The discrepancy between behavioral performance gains and subjective experiences is well-known (Cox & Alexander, 1999; Sweetow & Sabes, 2006). Questionnaires provide insight into how listening difficulties affect quality of life and confidence, but they can also be influenced by personality, situational factors, and expectations (Patry, 2011). Moreover, participants may not be aware of the beneficial changes that occur.

Bridging the disparity between behavioral gains and subjective experiences is crucial in hearing health care. To increase the likelihood of self-reported benefits, real-life communication simulations could be incorporated in the training (e.g., conversations in cafés, group chats), or participants could customize listening scenarios based on their everyday challenges. If the training feels more like real-life listening, improvements may transfer more directly to daily communication. Additionally, functional benefits could be evaluated using virtual reality to mimic real-world listening scenarios for a more nuanced assessment (e.g., Devesse et al., 2020).

Another option would be to teach participants how to notice and use their improvements (e.g., communication strategies provided by the counseling module) by including reflective components. People may not perceive improvements if they do not recognize when and how they are doing better. Integrating self-monitoring and reflection into auditory training can influence outcomes based on how well listeners are trained to recognize and adapt to their performance (Imhof, 2001). Currently, counseling questions about participation, feelings, and abilities are not connected to performance on the ALICE exercises. Introducing regular feedback check-ins could be valuable. In the future, individuals might be encouraged to reflect on their abilities, emotions, and level of participation after completing an exercise, helping to increase self-awareness.

Finally, more sensitive self-report measures are required. Many of the current self-report measures are not sensitive to changes in performance and may not be the right ones to capture subjective improvements after training. Instead of using questionnaires to capture subjective experiences, it may be more effective to use ecological momentary assessment (EMA; Schinkel-Bielefeld et al., 2024). EMA captures subjective experience in the moment, avoiding recall by asking short questions throughout the day (e.g., “How hard was it to understand someone at work today?”). EMA may capture small day-to-day shifts in perceived effort or confidence that traditional questionnaires might miss.

Our current trial data show that adherence to the self-guided ALICE training is good. However, variability in adherence and dropouts remains a concern, and more research is needed to understand the factors underlying motivation and engagement. Engagement with and adherence to computer-based auditory training are influenced by intrinsic motivation (e.g., hearing difficulties) and extrinsic motivation (e.g., the desire to help others with HL). Self-management of HL requires motivation and dedication, and, as a result, people become aware of their hearing difficulties (Henshaw et al., 2015). Adherence to and uptake of HA technology might be higher with additional hearing care services (low-friction, easy to access, remote monitoring, training, and follow-up), which would also mitigate demands placed on the current rehabilitation centers (Lesica, 2018).

Extending the training duration or adding a maintenance phase could also enhance performance. A recent review by Chae and Bahng (2023) on training duration found larger effect sizes for training periods longer than 600 min than for shorter periods. The brain might need more time for neural modifications to impact subjective experience. Long-term engagement could solidify benefits and improve generalization to untrained materials. Overall, participants adhered to the daily requested practice time, with some even willing to extend it. In the current study, training time was at least 600 min (8 weeks, 15 min/day), while in our previous studies, it was 1200 min (16 weeks, 15 min/day, Magits et al., 2023) and 900 min (12 weeks, 15 min/day, Van Wilderode et al., 2023). All three studies failed to show an improvement in subjective experience.
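The training doses quoted above can be checked with a short calculation. The Python sketch below assumes five training days per week. That schedule is not stated in this section; it is only an assumption that happens to reproduce the reported totals, and the function name `total_training_minutes` is illustrative.

```python
def total_training_minutes(weeks, minutes_per_day, days_per_week=5):
    """Return the total requested training time in minutes.

    days_per_week=5 is an ASSUMPTION (not stated in the text); it is chosen
    because it reproduces the totals reported for the three studies.
    """
    return weeks * days_per_week * minutes_per_day


# Study labels and (weeks, minutes per day) are taken from the paragraph above.
studies = {
    "current study": (8, 15),
    "Magits et al., 2023": (16, 15),
    "Van Wilderode et al., 2023": (12, 15),
}

for label, (weeks, minutes) in studies.items():
    print(label, total_training_minutes(weeks, minutes), "min")
```

Under this assumption the three studies come to 600, 1200, and 900 min, so the current study sits at the 600-min boundary discussed by Chae and Bahng (2023), whereas the two earlier studies lie above it.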

Regardless of the training gains, ALICE provides a service that can be broadly utilized, particularly in settings where access to traditional hearing healthcare is limited. Self-guided programs serve as a low-barrier supplement or alternative to traditional care. Monitoring performance through DiN and phoneme identification in quiet can be done remotely, allowing hearing healthcare providers to follow up on progress without additional workload. Performance on the DiN task is highly correlated with sentence understanding in noise (van Wieringen et al., 2021), so the remote DiN test gives an indication of speech understanding in noise without the need to travel. In the current study, we presented the percentage correct scores for vowels and consonants. More detailed analyses of the confusion between phonemes are provided in the dashboard of the HCP. Information transmission analysis (Miller & Nicely, 1955) applied to the data of the four most recent stimulus-response matrices yields detailed insight into difficult-to-perceive speech features (van Wieringen et al., 2021). The HCP can track adherence to monitoring and training, generate alerts about clients with decreasing listening performance, and identify clients experiencing problems.
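To illustrate what an information transmission analysis of a stimulus-response matrix involves, the following Python sketch computes the transmitted information in bits and its ratio to the stimulus entropy, in the spirit of Miller and Nicely (1955). It is a minimal sketch and not the implementation used in the ALICE dashboard. The function names, the four-consonant set, the voicing labels, and the response counts are all hypothetical.

```python
import math


def transmitted_information(matrix):
    """Return (T, H_x, T / H_x) for a stimulus-response count matrix.

    Rows are stimuli and columns are responses. T is the transmitted
    (mutual) information in bits and H_x is the stimulus entropy in bits.
    """
    total = sum(sum(row) for row in matrix)
    row_p = [sum(row) / total for row in matrix]
    col_p = [sum(col) / total for col in zip(*matrix)]
    t = 0.0
    for i, row in enumerate(matrix):
        for j, count in enumerate(row):
            if count:  # empty cells contribute nothing
                p = count / total
                t += p * math.log2(p / (row_p[i] * col_p[j]))
    h_x = -sum(p * math.log2(p) for p in row_p if p > 0)
    return t, h_x, (t / h_x if h_x > 0 else 0.0)


def collapse_by_feature(matrix, labels, feature):
    """Pool a phoneme confusion matrix into classes of one speech feature."""
    classes = sorted(set(feature[label] for label in labels))
    index = {c: k for k, c in enumerate(classes)}
    pooled = [[0] * len(classes) for _ in classes]
    for i, stim in enumerate(labels):
        for j, resp in enumerate(labels):
            pooled[index[feature[stim]]][index[feature[resp]]] += matrix[i][j]
    return pooled


# Hypothetical counts: place of articulation is confused, voicing is not.
labels = ["p", "b", "t", "d"]
counts = [
    [8, 0, 2, 0],  # stimulus /p/
    [0, 9, 0, 1],  # stimulus /b/
    [3, 0, 7, 0],  # stimulus /t/
    [0, 2, 0, 8],  # stimulus /d/
]
voicing = {"p": "voiceless", "b": "voiced", "t": "voiceless", "d": "voiced"}

_, _, rel_phoneme = transmitted_information(counts)
_, _, rel_voicing = transmitted_information(
    collapse_by_feature(counts, labels, voicing)
)
print(f"relative transmission, phonemes: {rel_phoneme:.2f}")
print(f"relative transmission, voicing:  {rel_voicing:.2f}")
```

In this made-up example, all errors occur within a voicing class, so voicing is transmitted completely while the full phoneme matrix is not. A feature with low relative transmission would mark a speech cue that the listener finds difficult to perceive.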

Regarding training, the dashboard provides information about the client's performance on specific exercises and exercise types. The counseling module offers insights into the client's perceived listening ability and satisfaction in daily life. This additional information helps healthcare providers deliver optimal care, enhance daily listening experiences, and improve the ability to manage challenging listening situations.

The ALICE app proved safe during the multicenter clinical trial: no data leaks occurred, and strong data privacy and security measures were in place. No issues were reported regarding inaccurate sound levels. Despite a few initial bugs, the ALICE interface and the dashboard were user-friendly and easy to navigate. Participants could report issues as needed, and the app was regularly updated with the latest technology and security enhancements.

Although significant challenges remain in optimizing training programs, outcomes, and other factors, self-guided training programs offer greater personalization and interactivity. These programs promote self-management of listening problems and contribute to more equitable hearing care by increasing access to services. In the current study, hearing-impaired participants used HAs and/or CIs. In the future, the efficacy of ALICE can also be evaluated in individuals without hearing interventions who experience difficulty listening in noisy environments. Additionally, streaming speech sounds directly to the ear via a HA or CI may help improve hearing in the deaf ear, particularly in cases of single-sided deafness. Practicing solely with the deaf ear cannot be easily done in the clinic or free-field unless the hearing ear is blocked. ALICE is available in the Dutch and French languages.

Conclusion

Our clinical trial shows that the self-guided ALICE training program enhances DiN and phoneme perception, and is effective at improving the auditory system's ability to parse untrained speech in noise. This enhancement in speech-in-noise performance is specific to the training group, as the control group did not show improvement. Participants were compliant during the 8-week training period. The results of the clinical trial imply that ALICE can be used as a scalable, accessible, and safe hearing care intervention.

Footnotes

Acknowledgments

We sincerely thank the hearing centers Aerts, Amplifon, Audika, Jolien Desmet, Charlotte Marinus, and the University Hospital Leuven for their time and effort to recruit and test participants. We also thank Dr Andrea Bussé for her assistance with preparing the clinical trial and training the investigators. Stefanie Krijger, Dr Tilde Van Hirtum, and Dr Laura Leyssens are thanked for their assistance with the regulatory work in the first phase of the project. The study protocol is registered on ClinicalTrials.gov ID: NCT05329922.

Author Contributions

AvW and MVW contributed to the study design and performed the data analyses. LDR programmed the ALICE app. AvW, MVW, LDR, TF, and JW provided critical revision and feedback.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by a C3-grant project from the KU Leuven (C3/21/046) and by the FWO-TBM Grant No. T000823N (CLINIC, principal investigator: AvW).

Declaration of Conflicting Interests

The authors declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: LDR is co-founder of CELES (founded after the clinical trial, to market ALICE). AvW, TF, and JW have a relationship with CELES concerning consultancy and equity.