Abstract

The reduction in spectral resolution by cochlear implants oftentimes requires complementary visual speech cues to facilitate understanding. Despite substantial clinical characterization of auditory-only speech measures, relatively little is known about the audiovisual (AV) integrative abilities that most cochlear implant (CI) users rely on for daily speech comprehension. In this study, we tested AV integration in 63 CI users and 69 normal-hearing (NH) controls using the McGurk and sound-induced flash illusions. To our knowledge, this study is the largest to date measuring the McGurk effect in this population and the first to test the sound-induced flash illusion (SIFI). When presented with conflicting AV speech stimuli (i.e., the phoneme “ba” dubbed onto the viseme “ga”), we found that 55 CI users (87%) reported a fused percept of “da” or “tha” on at least one trial. After applying an error correction based on unisensory responses, we found that among those susceptible to the illusion, CI users experienced lower fusion than controls. This result was concordant with results from the SIFI, where the pairing of a single circle flashing on the screen with multiple beeps resulted in fewer illusory flashes for CI users. While illusion perception in these two tasks appears to be uncorrelated among CI users, we identified a negative correlation in the NH group. Because neither illusion appears to provide further explanation of variability in CI outcome measures, further research is needed to determine how these findings relate to CI users’ speech understanding, particularly in ecological listening conditions that are naturally multisensory.

Introduction

Naturalistic oral communication is typically an audiovisual (AV) experience that reveals striking perceptual benefits in speech comprehension when visual cues are present (Sumby & Pollack, 1954). In contrast, for cochlear implant (CI) users, talking on the phone is a difficult, and oftentimes insurmountable, challenge—one that requires parsing auditory-only speech signals that are conveyed by the CI at a greatly reduced spectral resolution. Fortunately, visual input can improve listening thresholds (Barone and Deguine, 2011; Barone et al., 2010; Grant and Seitz, 2000), and for many CI users, AV conversations are a necessity.

AV integration is the process of filtering and combining information from the different senses, like auditory speech and visual articulations, in order to create a more accurate percept than either sense could on its own. Each sensory modality encodes complementary information that is increasingly useful when the saliency/interpretability of the individual signals is relatively low. While typical, acoustic listeners may only experience the benefits of AV integration in, for example, a very noisy restaurant, electrical hearing through a CI may in itself introduce enough “noise” to necessitate the integration of visual cues as a critical compensatory strategy. In effect, many CI users rely on AV integration to successfully understand speech in most listening environments, not just particularly noisy ones. As a result, visual orofacial articulations play a crucial role in verbal communication both before and after cochlear implantation, and in order to fully describe aural speech recovery following implant surgery, characterization of both unisensory and multisensory processing is necessary.

Despite a daily reliance on the multisensory integration of speech signals, AV testing is not a part of routine audiological exams. Furthermore, as a clinical population, there are substantial individual differences (e.g., onset of hearing loss, etiology, duration of deafness, and age of implantation) that contribute to variable auditory outcome measures (Blamey et al., 2013) and may also have cascading effects on AV integration.

Arguably, the index of multisensory processing that we know the most about in CI users is the McGurk illusion (McGurk & MacDonald, 1976). First described over 40 years ago, this illusion results from hearing a bilabial syllable (e.g., “ba” or “pa”) while seeing the visually ambiguous articulation of a velar syllable (“ga” or “ka”). Together, these elicit a novel, fused percept such as “da”, “tha”, or “ta” (Tiippana, 2014). This effect is both robust and persistent for individuals who experience it, although it is well documented that a subset of individuals fails to perceive the illusion (Mallick et al., 2015). Many studies of CI users have focused on the responses of these “non-perceivers,” because they provide insight into sensory biases in the absence of fused percepts.

One very consistent finding in all studies evaluating the McGurk illusion in CI users is that those who do not perceive the illusion are biased toward the visual component (see Table 1 for brief summaries and references). This finding is in contrast to NH controls who typically report the auditory component when not experiencing the illusion (Massaro et al., 1986; McGurk & MacDonald, 1976). This discrepancy makes intuitive sense, because many CI users struggle to discriminate auditory-only syllables in their everyday lives, and may be perceptually “weighting” visual information more highly in order to improve AV estimates (Huyse et al., 2013). Indeed, adding noise or otherwise altering the saliency of one sensory modality is well-known to effectively and rapidly alter sensory weights in typical populations (Ernst & Bülthoff, 2004). Though there are shared networks involving the perception of congruent AV speech and incongruent speech like the McGurk effect, it should be noted that there are known differences in their processing mechanisms as well (Erickson et al., 2014). As such, McGurk results present a challenge for interpreting how illusory “da” percepts may (or may not) relate to naturally congruent speech, especially in the context of greater semantic information such as full sentences (Van Engen et al., 2017). Attention is another factor to consider with CI users since they typically report high listening effort (Davis et al., 2021), and greater attentional demands can also influence sensory weights (Kok et al., 2012).

An Overview of McGurk Studies with Cochlear Implant Users.

All studies report a visual bias in CI users, while Schorr, Desai, and Tremblay also found various correlations to clinical measures.

*Only auditory bilabial stimuli and visual velar stimuli are listed, though it should be noted that four studies (Rouger, Stropahl, Tona, and Yamamoto) also tested several other syllables in up to 12 different combinations. NED = noisy encoding of disparity (Magnotti & Beauchamp, 2015). CI = cochlear implant, NH = normal hearing, n/a = not applicable.

Despite the consensus in the literature regarding visual bias among CI users in the context of McGurk stimuli, how their rate of fusion (e.g., “da” percepts) compares to NH controls is less apparent. Some studies indicate similar reports of fused percepts in CI users (Huyse et al., 2013; Rouger et al., 2008; Tremblay et al., 2010), while others find both lower (Schorr et al., 2005) and higher (Desai et al., 2008; Stropahl et al., 2017; Tona et al., 2015) reports of McGurk percepts in CI users than controls (Table 1). Given that auditory saliency is lower in CI users (i.e., less reliable), direct comparisons to NH individuals require further calculations, and the absence of such calculations may account for some discrepancies in the literature. Take, for example, an auditory error in unisensory trials like mistaking the sound of “ba” for “da”. Because this response could also mimic a McGurk percept in the incongruent trials, an adjustment is necessary to better quantify illusion perception per se (Grant et al., 1998; Stevenson et al., 2012). This need may be particularly acute for patient populations with hearing impairments. Desai et al. (2008), for instance, found much higher fusion response rates in CI users than controls (60% v. 24%, respectively) that were actually not significantly different after applying an error correction. Further investigations are needed to address this issue, particularly in larger samples that also capture the clinical diversity of CI users today.

In addition to this outstanding question of whether McGurk perception truly differs between CI and NH listeners, it is also unknown how these results directly relate to other metrics of AV integration (Grant & Seitz, 1998; Stevenson et al., 2017). The sound-induced flash illusion (SIFI)—sometimes referred to as the flashbeep or double flash illusion—was discovered more recently and involves simple, non-speech stimuli (Shams et al., 2000). Unlike the McGurk effect, the sound-induced flash illusion, or SIFI, asks participants to ignore what they hear and only report what they saw. Specifically, participants are asked to count the number of rings that rapidly flash on a screen while they also hear a varying number of beeps. The greater the number of beeps, the more likely that multiple, illusory flashes are perceived when only a single flash is presented. Given that deafness has been linked to perceptual enhancements in the visual periphery (Bavelier et al., 2006) and this illusion is perceived more strongly in the parafoveal visual fields of typical listeners (Shams et al., 2002), employing this task in CI users may provide new context for underlying differences in AV integration extending beyond speech stimuli.

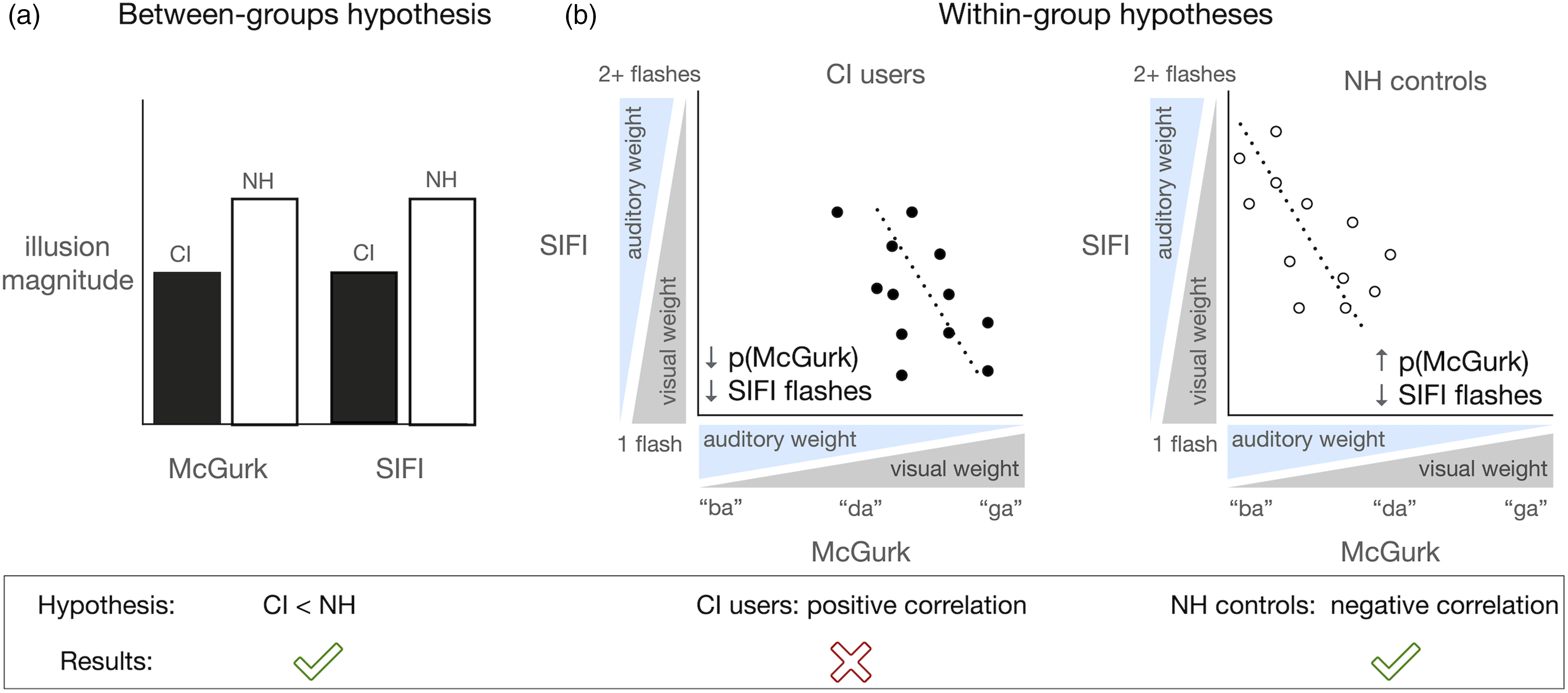

We hypothesized that the known visual biases in CI users would lead to more veridical visual perceptions, which would correspond to a decreased likelihood of perceiving the non-existent flashes in a SIFI task or the fused “da” percept in a McGurk task (see Figure 1a schematic). Furthermore, because low McGurk fusion is effectively a metric of visual bias for CI users, we predicted a positive correlation between these two tasks; that is, when simple visual percepts are more easily biased by sound, we predicted a higher rate of fusion of auditory and visual speech signals leading CI users to perceive the McGurk illusion (Figure 1b). By the same logic, lower McGurk fusion in NH controls typically corresponds to an auditory-dominant percept, which could, in turn, influence a greater number of perceived flashes in the SIFI experiment (i.e., result in a negative correlation between the two tasks).

Schematic of main hypotheses and results. Data from this study supported our hypothesis that CI users experience the McGurk and sound-induced flash illusions less often than controls (a). We did not find the positive correlation between these two illusions in CI users that we expected (b), nor did the illusions predict clinical speech outcomes beyond known metrics in the CI group (not pictured). We did, however, find a negative correlation between the two tasks among the NH control group, in support of our reasoning that their higher perceptual “weighting” of auditory information results in perceiving more illusory flashes (b).

Our aim was to investigate how these tasks relate both to one another and to clinical outcome measures for CI users. Such work is necessary to characterize AV integration more completely in this cohort of individuals for whom it may be exceedingly important to “bind” auditory and visual information into more reliable multisensory percepts. The aforementioned hypotheses (see Figure 1) allowed us to address several outstanding questions in the literature: how proficient CI users are with integrating speech sounds compared to simple flash/beep pairings and whether these tasks have clinical implications for predicting other speech measures.

Our results indicate that CI users perceived both of these illusions less often than their NH counterparts. Within the CI group we did not find a correlation between the two illusions; however, two summary metrics [i.e., SIFI susceptibility index and p(McGurk)] correlated significantly in the NH group such that lower McGurk fusion (i.e., more auditory-dominant percepts) corresponded with more illusory flashes (SIFI). Given the absence of a correlation between these variables in the CI group, it is also possible that these two illusions may be mediated by different mechanisms of multisensory (i.e., AV) integration that are disproportionally influenced by other demographic or clinical factors in CI users. Furthermore, the lack of predictive ability of these illusions in explaining clinical variability within the CI group highlights the need for more extensive clinical characterization and perceptual modeling [e.g., (Braida, 1991; Grant et al., 2007)] to describe, and perhaps predict, AV proficiency among a population of individuals who critically rely on AV integration for speech comprehension.

Methods

Participants

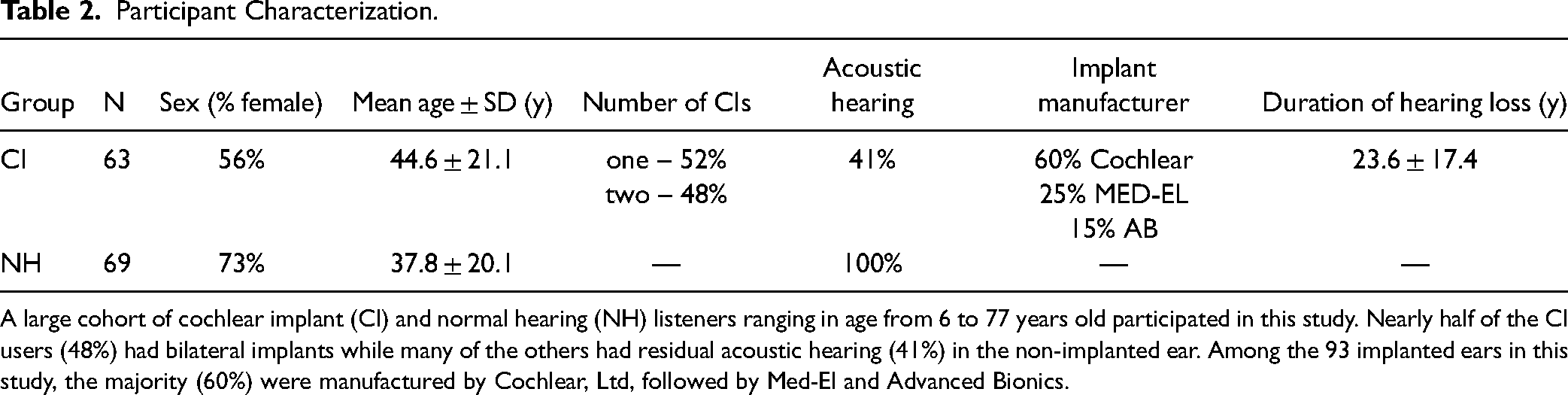

We recruited 63 CI users and 69 NH controls (Table 2). There was no significant difference between groups for age (t(130) = 1.9, p = 0.06, d = 0.33). At least 3 months of experience with their implants was an inclusion criterion for CI users, and the average was 4.6 ± 3.7 years post activation (range = 3 mo – 14 y). The duration of hearing loss as defined from the known onset was 23.6 ± 17.4 years on average (range = 1.5–75 y). We also calculated an approximate “duration of deafness,” which we defined as the date of cochlear implantation minus the onset of severe hearing loss. We used a standard definition of severe hearing loss as either the date when pure tone detection thresholds exceeded 70 dB HL or else the closest estimate from clinical records. The average duration of deafness was 2.4 ± 4.2 years (range 0–27 y). The majority of CI users (n = 53) were postlingually deafened; however, a subset was prelingually deafened (n = 10). These individuals received their implants at an average age of 3.5 ± 1.8 years (range = 1.3–6.5 y; see black circles in Figure 2). Although prelingual deafness (i.e., occurring prior to language acquisition and during critical periods of development) can negatively impact speech outcomes (Fitzpatrick, 2015; Miyamoto et al., 1994), all of these 10 individuals achieved high speech proficiency (Figure 2).

Clinical outcomes for the CI group. Age of implantation ranged from 1.3-75 years old (left), and all users had at least 3 months of CI use prior to participation. Clinical speech testing had a wide range of outcomes (right). We see higher than average performance in prelingually deafened individuals (filled circles) who were all implanted in early childhood (i.e., between 1.3 and 6.5 years of age). Bars indicate group means and error bars are 95% confidence intervals of the mean.

Participant Characterization.

A large cohort of cochlear implant (CI) and normal hearing (NH) listeners ranging in age from 6 to 77 years old participated in this study. Nearly half of the CI users (48%) had bilateral implants while many of the others had residual acoustic hearing (41%) in the non-implanted ear. Among the 93 implanted ears in this study, the majority (60%) were manufactured by Cochlear, Ltd, followed by Med-El and Advanced Bionics.

All CI users completed testing in their “best-aided” hearing condition, which included hearing aids in the non-implanted ear for 41% of the sample. All participants wore corrective lenses as needed and were screened for visual acuity using either a Snellen eye chart or verbal report. Speech perception in the CI group was either tested at the study visit or recorded from recent medical records from the last 6 months. This measure was not available for four individuals, and for the remaining 59 CI users, testing was carried out via best-aided, monosyllabic CNC word lists scored out of a possible 100% correct. Results indicate a wide range of proficiency from 0%-98% correct (67.1% ± 22.7%) (Figure 2), and this variability is consistent with other reports in the literature (Gifford et al., 2018).

Stimuli

Visual stimuli were displayed using MATLAB 2008a and Psychophysics Toolbox extensions (Brainard, 1997). These stimuli were presented on a CRT monitor positioned approximately 60 cm from participants. Visual stimuli were white circles (10 ms in duration at a 13° visual angle) on a black background and 2 s videos of a female speaker articulating the syllables “ba” and “ga” (Stevenson et al., 2012). Auditory stimuli were 3.5 kHz tones (7 ms in duration) in the SIFI task and utterances of the syllables “ba” and “ga” in the McGurk task. When multiple flashes were presented in control conditions, they were separated by 43 ms intervals. For example, the congruent 4 flash and 4 beep trial is 169 ms in duration while a 1 flash, 1 beep trial is 10 ms. We aligned the onset of visual and auditory stimuli using a Hameg 507 oscilloscope via inputs from a photovoltaic cell and a microphone. Because it is well documented that CI users have normal auditory gap detection thresholds, generally in the range of 2–10 ms (Shannon, 1989), the 46 ms gaps between auditory stimuli in the SIFI experiment should have been easily perceptible as individual beeps for both groups. All auditory stimuli were delivered at a comfortably loud level (approximately 65 dB SPL) through a mono speaker.
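The timing figures quoted above can be checked with a short calculation. The sketch below is illustrative only: the durations come from the text, while the function names and the assumption that each beep starts at the same moment as its paired flash (consistent with the onset alignment described above) are ours.

```python
FLASH_MS = 10      # duration of one flash (from the text)
BEEP_MS = 7        # duration of one beep (from the text)
FLASH_GAP_MS = 43  # blank interval between successive flashes (from the text)


def train_duration_ms(n_events, event_ms, gap_ms):
    """Total duration of n events separated by n - 1 gaps."""
    if n_events < 1:
        raise ValueError("need at least one event")
    return n_events * event_ms + (n_events - 1) * gap_ms


def beep_gap_ms(flash_ms=FLASH_MS, flash_gap_ms=FLASH_GAP_MS, beep_ms=BEEP_MS):
    """Silent gap between beeps, ASSUMING each beep onset matches a flash onset."""
    onset_to_onset = flash_ms + flash_gap_ms  # 53 ms between successive onsets
    return onset_to_onset - beep_ms


if __name__ == "__main__":
    print(train_duration_ms(4, FLASH_MS, FLASH_GAP_MS))  # 4 flashes: 169 ms
    print(train_duration_ms(1, FLASH_MS, FLASH_GAP_MS))  # 1 flash: 10 ms
    print(beep_gap_ms())                                 # 46 ms between beeps
```

Under that onset-alignment assumption, the 43 ms flash interval and the 46 ms beep gap describe the same 53 ms onset-to-onset spacing.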

Experimental Design and Analysis

The McGurk task has two blocks. The first block is unisensory testing of auditory-only and visual-only presentations of “ba” and “ga” (28 trials total). The next block is all AV presentations of both syllables (20 trials each) and the incongruent pairing of auditory “ba” with the visual articulations of “ga” (20 trials). Participants responded to each trial with one of four letters corresponding to the sounds: “ba”, “ga”, “da”, or “tha” (Figure 3). Illusory responses were considered either “da” or “tha”, which we will simply refer to as “da” throughout. Because misidentifying unisensory stimuli as one of these novel syllables could overinflate the apparent magnitude of the illusion, we made a correction using the formula:

p(McGurk) = p(“da” | McGurk trial) × [1 − p(“da” | Unisensory trial)]

Experiment details of the McGurk illusion and the sound-induced flash illusion (SIFI). These two tasks have both congruent control trials and incongruent illusory trials where participants are asked to either report what the woman said (McGurk) or the number of flashes that they saw (SIFI).

If a participant perceives a unisensory component as “da,” that obfuscates whether a subsequent AV perception of “da” is illusory and thereby indicative of integration. This formula adjusts for those occasions by calculating the proportion of “da” responses out of total trials in the incongruent AV condition and multiplying by (1 - proportion of “da” responses in unisensory trials). This effectively lowers the rate of fusion metric—p(McGurk)—for those who struggle to distinguish the component syllables on their own. Though this correction has the benefits of being a simple adjustment relative to a unisensory baseline, we should note that it assumes “da” errors occur at the same rate in incongruent AV conditions as they do in unisensory trials. For this reason, the p(McGurk) metric could be considered a conservative estimate of AV fusion. Even so, it is a common adjustment to make in McGurk studies such as these (Stevenson et al., 2012) and was applied here a priori. In circumstances where unisensory components elicit highly accurate responses, no such correction is needed. Instead, the raw proportion of fused percepts can be directly compared between groups [see, for example, (Grant et al., 1998)].
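As a minimal sketch of this correction, the function below multiplies the proportion of “da” responses on incongruent AV trials by one minus the proportion of “da” responses on unisensory trials. The function name, argument names, and the example participant are hypothetical; only the formula and the trial counts (20 incongruent and 28 unisensory trials) come from the text.

```python
def p_mcgurk(da_incongruent, n_incongruent, da_unisensory, n_unisensory):
    """Corrected fusion rate: p("da" | McGurk) * (1 - p("da" | unisensory))."""
    if n_incongruent <= 0 or n_unisensory <= 0:
        raise ValueError("trial counts must be positive")
    p_da_av = da_incongruent / n_incongruent    # raw fusion proportion
    p_da_uni = da_unisensory / n_unisensory     # unisensory "da" error rate
    return p_da_av * (1.0 - p_da_uni)


if __name__ == "__main__":
    # Hypothetical participant: 12 of 20 incongruent trials reported as "da",
    # and 7 of 28 unisensory trials misreported as "da".
    print(p_mcgurk(12, 20, 7, 28))  # 0.6 * 0.75
    # With no unisensory "da" errors the raw proportion is left unchanged.
    print(p_mcgurk(12, 20, 0, 28))
```

A participant with no unisensory “da” errors keeps the raw fusion proportion, which is why the correction matters mainly for listeners who struggle with the component syllables.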

In the SIFI task, participants fixated on a white cross in the middle of the screen while a white ring flashed on a black background (Figure 3). They were asked to report the number of flashes while ignoring the beeps. Trials consisted of 1–4 flashes without beeps, congruent numbers of flashes and beeps (up to 4), and incongruent pairings of just one flash with 2–4 beeps. Each of these 11 conditions was tested with 25 trials, and responses were scored as the average number of flashes reported. Additionally, we calculated a susceptibility index (SI; Stevenson et al., 2012) using the following formula where Rn is the average number of flashes for n beeps:

This index collapses across all incongruent trials in order to derive a single metric for the average number of illusory flashes that are experienced per added beep. A value of 0 means that no illusion was perceived, and a value of 1, for instance, indicates that each additional beep increased the perceived number of flashes by 1.
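The exact SI formula is not reproduced in this text, so the following is only a sketch of one form that is consistent with the verbal description: the number of illusory flashes per added beep, averaged over the incongruent conditions. The baseline of one veridical flash, the function name, and the input layout are all assumptions and should not be read as the published definition.

```python
def susceptibility_index(mean_flashes_by_beeps, true_flashes=1):
    """ASSUMED form of the index: mean over conditions of
    (R_n - true_flashes) / (n - true_flashes), where R_n is the average
    number of flashes reported when one flash is paired with n beeps."""
    if not mean_flashes_by_beeps:
        raise ValueError("need at least one incongruent condition")
    per_added_beep = []
    for n_beeps, mean_flashes in mean_flashes_by_beeps.items():
        added_beeps = n_beeps - true_flashes
        if added_beeps <= 0:
            raise ValueError("incongruent conditions need more beeps than flashes")
        per_added_beep.append((mean_flashes - true_flashes) / added_beeps)
    return sum(per_added_beep) / len(per_added_beep)


if __name__ == "__main__":
    # No illusion: one flash is always reported, whatever the beep count.
    print(susceptibility_index({2: 1.0, 3: 1.0, 4: 1.0}))
    # Full illusion: every added beep adds one perceived flash.
    print(susceptibility_index({2: 2.0, 3: 3.0, 4: 4.0}))
```

This form reproduces the two anchor points given above (0 for no illusion, 1 for one extra flash per added beep), but other formulas share that property.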

Procedures

All protocols and procedures were approved by Vanderbilt University Medical Center's Institutional Review Board, and all subjects provided informed consent prior to participation. Experiments took place in a dimly lit, sound-attenuated room with an experimenter seated nearby. For the SIFI task, subjects were instructed to maintain fixation on the centrally located fixation cross. All responses were collected using a standard keyboard. These experiments were part of a larger testing battery in which both trial and task order were pseudorandomized (Butera et al., 2018). Because some individuals ran out of time at the end of testing, subject numbers are included on figures to indicate those who were able to complete each task. In the McGurk experiment, one CI user and two NH controls did not have sufficient time to complete the unisensory testing necessary for p(McGurk) calculations. In lieu of imputation, we used the uncorrected proportion of “da” responses on incongruent AV trials as a proxy for p(McGurk) in these three individuals.

Statistical Approach

Between-group differences in the McGurk task were tested using resampling methods on account of several highly skewed variables that are incompatible with parametric tests even after standard transformations (e.g., log10). This approach was selected because it makes minimal assumptions about the distribution of the data in each group. It is based on 30,000 reshuffles, where each shuffle randomly reassigns data points to the two groups. A two-tailed Welch's t-test is then used as the comparison metric. We selected this metric instead of a difference in means, for instance, because it has the advantage of capturing both central tendency and variability. Significance is expressed as the proportion of the total number of shuffles in which the shuffled sample produces a result at least as extreme as the one found in the actual sample. T scores and degrees of freedom are reported from the Welch's t-test of the observed data. Control McGurk trials, the p(McGurk) index, and SI were compared between groups using this resampling method in R (R Core Team, 2013). After narrowing our comparisons of p(McGurk) measures to individuals who perceived the illusion on at least one trial, we tested whether proportionally more individuals were excluded from one group than the other using a chi-square test in SPSS (IBM).
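The resampling procedure can be sketched as follows. This is an illustrative Python version and not the R code used in the study: the function names, the seed, and the small example data are hypothetical, and we assume the usual convention of counting shuffles whose absolute Welch's t is at least as large as the observed one.

```python
import math
import random


def welch_t(a, b):
    """Welch's t statistic for two independent samples (each needs >= 2 values)."""
    na, nb = len(a), len(b)
    mean_a, mean_b = sum(a) / na, sum(b) / nb
    var_a = sum((x - mean_a) ** 2 for x in a) / (na - 1)
    var_b = sum((x - mean_b) ** 2 for x in b) / (nb - 1)
    se = math.sqrt(var_a / na + var_b / nb)
    if se == 0.0:  # both samples constant
        return 0.0 if mean_a == mean_b else math.copysign(math.inf, mean_a - mean_b)
    return (mean_a - mean_b) / se


def permutation_p(a, b, n_shuffles=30000, seed=0):
    """Two-tailed p value: share of shuffles with |t| >= observed |t|."""
    rng = random.Random(seed)
    observed = abs(welch_t(a, b))
    pooled = list(a) + list(b)
    hits = 0
    for _ in range(n_shuffles):
        rng.shuffle(pooled)  # randomly reassign data points to the two groups
        shuffled_t = abs(welch_t(pooled[:len(a)], pooled[len(a):]))
        if shuffled_t >= observed - 1e-12:  # tolerance for float rounding
            hits += 1
    return hits / n_shuffles


if __name__ == "__main__":
    group_a = [0.90, 0.80, 1.00, 0.95, 0.85, 0.90]  # hypothetical scores
    group_b = [0.10, 0.20, 0.00, 0.15, 0.05, 0.10]
    print(permutation_p(group_a, group_b, n_shuffles=2000))
```

Using the t statistic rather than a raw difference in means lets each shuffle account for unequal variances between the reshuffled groups, in line with the rationale given above.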

Data from the SIFI task were less skewed, and a square root transformation corrected for small deviations from normality. Congruent and incongruent conditions were both compared via a mixed-model ANOVA in SPSS. The repeated measure was the number of added beeps, and group (CI or NH) was the between-subjects factor. Greenhouse-Geisser corrections for violations of sphericity were applied as needed, and significant interactions were followed up with pairwise t-tests.

Correlations between task metrics were computed using Spearman's rank-order correlation in SPSS. Lastly, for a linear regression within the CI group, we included uncorrelated clinical variables with log transformations as needed to correct for deviations from normality.
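For readers unfamiliar with the rank-order correlation, the sketch below shows the standard computation: convert each variable to ranks (ties receive the average rank) and take the Pearson correlation of the ranks. It is a plain Python illustration with hypothetical function names and data, not the SPSS procedure itself.

```python
import math


def average_ranks(values):
    """1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared_rank = (i + j) / 2 + 1  # mean of positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = shared_rank
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for constant input")
    return sxy / math.sqrt(sxx * syy)


def spearman_rs(x, y):
    """Spearman's rank-order correlation between paired measurements."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two paired sequences of length >= 2")
    return pearson(average_ranks(x), average_ranks(y))


if __name__ == "__main__":
    # Hypothetical p(McGurk) and SI values for five participants.
    p_mcgurk_values = [0.10, 0.35, 0.60, 0.80, 0.95]
    si_values = [0.90, 0.70, 0.75, 0.30, 0.20]
    print(spearman_rs(p_mcgurk_values, si_values))
```

Because only ranks enter the calculation, the coefficient is insensitive to the skew that motivated the resampling approach for the McGurk measures.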

Because individuals with prelingual deafness and subsequent pediatric implantation are frequently considered a distinct subgroup of CI users with a unique developmental time course, we also conducted all analyses excluding data from these 10 individuals. Since none of the main findings were affected by their inclusion, all statistical results and plots include all data collected, from both pre- and postlingually deafened CI users. Significance for all statistical tests was defined as p < 0.05 (α = 0.05). Throughout, effect sizes are reported where applicable, and means are reported ± standard deviations. De-identified data from this study are available from the corresponding author (I.M.B.) upon reasonable request.

Results

McGurk Illusion

In both the unisensory and AV control (i.e., congruent) trials, CI users had lower speech perception accuracy for both “ba” and “ga” stimuli (Figure 4). Not surprisingly, the group differences were the largest for the auditory-only conditions (Table 3). Additionally, for identifying “ga,” CI users had lower accuracy in lipreading (CI mean = 53%, NH mean = 68%) and AV listening (CI mean = 83%, NH mean = 99%) (Figure 5). Though AV identification of “ba” was also statistically lower for CI users, both groups scored close to ceiling (CI mean = 97.7% and NH mean = 99.3%). Group responses for the control conditions indicated that incorrect responses mostly corresponded to the fused, illusory stimulus. In fact, for every control condition tested, CI users had more “da” responses than controls (Figure 4). The largest group differences were in auditory only testing, though even for congruent AV presentations of “ga,” the CI group responded incorrectly with “da” on 16% of trials compared to just 4% of these errors in the NH group (Figure 4b). This finding highlights the need for a correction factor in order to better equate groups on illusion perception.

Auditory, visual, and congruent audiovisual responses to McGurk control trials. The distribution of responses is shown for (a) the "ba" stimulus and (b) the "ga" stimulus. The disproportionate amount of "da" errors in the CI group prompted us to apply a correction factor for group comparisons with incongruent AV stimuli.

McGurk experiment results. (a) Mean accuracy of perceiving syllables in both unisensory and AVc (congruent audiovisual) trials is shown for “ba” and “ga.” (b) Mean responses to AV incongruent, “McGurk” trials are reported as the proportion of responses out of the total trials for this condition with no further adjustments. (c) Individual data for each response type are shown for AV incongruent trials. Error bars indicate 95% confidence interval of the mean. Chance performance is at 25%. * p < 0.05, ** p < 0.01, *** p < 0.001.

Statistical Results from Unisensory, Audiovisual Congruent, and Audiovisual Incongruent Trials of the McGurk Experiment.

Comparisons are made between CI and NH groups using a resampling method and Welch's t-test. Significant values (p < 0.05) are bolded.

In the incongruent AV (i.e., McGurk) trials (Figure 5b), we analyzed the raw data of response proportions and found no significant difference in average “da” responses between groups (Table 3). However, when CI users did not fuse the syllables, they were much more likely to report the visual component “ga.” Conversely, non-fusing NH controls were most likely to report the auditory component “ba.” Individual data from these McGurk trials illustrate these biases as well as the high proportion of NH controls who did not perceive the illusion (Figure 5c, white circles at or near 0% responses). In NH populations, prior studies have described the McGurk illusion occurring as an “all-or-nothing” effect such that the majority of individuals either experience the illusion almost always or else rarely ever (Mallick et al., 2015). In the present study, we found that 72% of the NH group fell within the two extremes of perceiving the illusion either ≥ 90% of the time or ≤ 10%. In contrast, the CI group had many more intermediate responses with only 35% of individuals having very high or very low fused responses (see “da” panel in Figure 5c).

Sound-Induced Flash Illusion (SIFI)

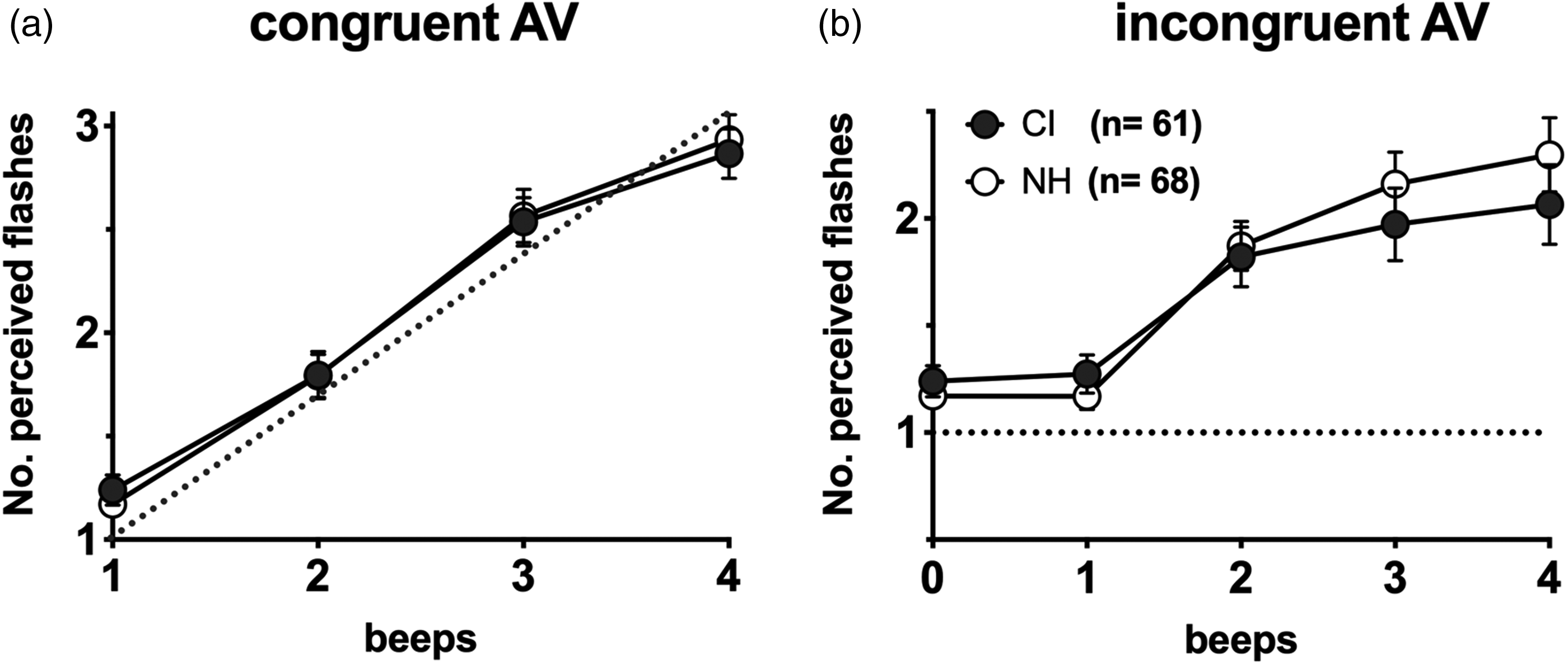

In control trials where the number of flashes and beeps is matched (Figure 6a), there are no between-group differences in the perceived flashes (F(3,127) = 0.002, p = 0.97, partial η² = 0). In the incongruent pairings of just one flash with multiple beeps (Figure 6b), there is also no between-subjects effect (F(4,127) = 0.55, p = 0.46, partial η² = 0.004). However, there is a beep × group interaction (F(1.4,127) = 7.6, p = 0.003, partial η² = 0.056). Follow-up tests did not identify any group differences at specific conditions, though several approached significance (Table 4). Most notably, CI users reported slightly fewer flashes in the three-beep (avg flashes = 1.97) and four-beep conditions (avg flashes = 2.06) compared to controls (2.16 and 2.30, respectively).

Sound-induced flash illusion results. Average reports of the number of perceived flashes are plotted for each group. Trials were either congruent pairings of one or more flashes and beeps (a) or 0-4 beeps paired with only one flash (b). Dotted lines represent 100% accuracy and error bars are 95% confidence intervals of the mean. Note that the 1 beep, 1 flash condition from (a) is replotted in (b) for reference.

SIFI Results.

Though within-subjects effects from a mixed-model ANOVA indicated a significant condition × group interaction, no follow-up t-tests reached the p = 0.05 significance threshold.

*Fractional degrees of freedom result from unequal variances as identified by a Levene's test.

Illusion Metrics

In order to compare group differences in illusion perception between these two tasks, we calculated p(McGurk) and SI metrics (Figure 7). The p(McGurk) calculation corresponded to a unisensory baseline adjustment that lowered the probability of “da” responses by 0.161 for CI users and by 0.057 for NH controls. In an initial comparison of p(McGurk) between groups, there was no significant difference (t(109.6) = −1.7, p = 0.097, d = 0.33). However, we also conducted a post hoc test of whether the magnitude of the illusion differed strictly among individuals who perceived the illusion on at least one trial. Thus, we excluded all “non-perceivers,” who made up 13% of the CI group (n = 8) and 30% of the NH group (n = 20). To test whether this proportion of non-perceivers differed between groups, we ran an exploratory chi-square test and found that it did (χ²(1) = 5.65, p = 0.017, φ = 0.21). Among the remaining 55 CI users and 47 NH controls, there was a highly significant difference in p(McGurk) (t(81.0) = −4.9, p = 4 × 10⁻⁵, d = 0.24). Following the same trend, SIFI susceptibility was also significantly lower for CI users (t(124.5) = −2.5, p = 0.014, d = 0.31), with mean SI values of 0.39 for CI users and 0.53 for NH controls (Figure 7b).
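The chi-square comparison of non-perceivers can be reproduced from the counts given above (8 non-perceivers and 55 perceivers in the CI group; 20 and 47 in the NH group). The sketch below is a plain Python illustration rather than the SPSS analysis; the function name is hypothetical, and we assume a Pearson chi-square without continuity correction.

```python
import math


def chi_square_2x2(a, b, c, d):
    """Pearson chi-square (no continuity correction) for the table [[a, b], [c, d]].

    Returns (chi2, p, phi). With 1 degree of freedom, the upper-tail
    probability of chi2 equals erfc(sqrt(chi2 / 2)).
    """
    n = a + b + c + d
    observed = ((a, b), (c, d))
    row_totals = (a + b, c + d)
    col_totals = (a + c, b + d)
    chi2 = 0.0
    for i in range(2):
        for j in range(2):
            expected = row_totals[i] * col_totals[j] / n
            chi2 += (observed[i][j] - expected) ** 2 / expected
    p = math.erfc(math.sqrt(chi2 / 2))
    phi = math.sqrt(chi2 / n)  # effect size for a 2 x 2 table
    return chi2, p, phi


if __name__ == "__main__":
    # Rows: CI, NH. Columns: non-perceivers, perceivers.
    chi2, p, phi = chi_square_2x2(8, 55, 20, 47)
    print(round(chi2, 2), round(p, 3), round(phi, 2))  # 5.65 0.017 0.21
```

Under these assumptions the calculation matches the reported χ²(1) = 5.65, p = 0.017, and φ = 0.21.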

Derived metrics of the probability of McGurk perception and SIFI susceptibility index. (a) The p(McGurk) metric corrects for inaccurate “da” percepts in control conditions (i.e., which only contain “ba” and “ga”). (b) Susceptibility index collapses across all incongruent conditions (i.e., 2 to 4 beeps per flash) to quantify the average increase in perceived flashes per added beep.

Relationship between SIFI and McGurk. The correlation between susceptibility index (SI) and probability of McGurk perception is not significant for CI users (a), but does have a significant negative relationship among NH controls (b). Shaded areas correspond to 95% confidence intervals.

Correlation Between Illusion Tasks

Next, we asked whether these two illusions are related to one another in these cohorts. We found no correlation between the illusions for the 87% of CI users who experienced the McGurk effect (rs = 0.041, p = 0.77; Figure 8a); however, we did find a negative, albeit weak, correlation among the 70% of NH controls who perceived the McGurk illusion (rs = −0.317, p = 0.030; Figure 8b).

Testing for Additional Explained Variability in Clinical Measures

Lastly, we asked whether these AV illusion metrics had any predictive value for explaining variability in clinical speech scores within the CI group (Figure 2). In a stepwise regression model with CNC scores as the dependent variable, we entered the following four uncorrelated clinical and experimental measures as independent variables: duration of hearing loss, duration of deafness, p(McGurk), and SI. The only variable that was a significant predictor of CNC scores was the duration of hearing loss, explaining 13% of variability (R² = 0.130, F(1,40) = 5.97, p = 0.019). All other variables were excluded from the model (Table 5).
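As a quick consistency check on the reported model, the F statistic of a regression can be recovered from R² and the degrees of freedom. The helper below is a hypothetical illustration of that standard relationship and is not part of the original analysis.

```python
def f_from_r2(r2, df_model, df_resid):
    """F statistic implied by R^2: (R^2 / df_model) / ((1 - R^2) / df_resid)."""
    if not 0.0 <= r2 < 1.0:
        raise ValueError("r2 must be in [0, 1)")
    if df_model <= 0 or df_resid <= 0:
        raise ValueError("degrees of freedom must be positive")
    return (r2 / df_model) / ((1.0 - r2) / df_resid)


if __name__ == "__main__":
    # Values from the text: R^2 = 0.130 with F(1, 40).
    print(round(f_from_r2(0.130, 1, 40), 2))  # 5.98
```

The result of about 5.98 agrees with the reported F(1,40) = 5.97 to within the rounding of R².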

Predictors of CNC Variability.

In a stepwise linear regression with CNC speech scores as the dependent variable, illusion metrics did not significantly contribute to the model beyond the 13% of variability predicted by duration of hearing loss.

Discussion

This study tested the McGurk illusion in a large cohort of CI users and is, to our knowledge, the first study that investigates the SIFI in this population. A key finding is that CI users perceived both of these AV illusions less often than NH controls (Figure 7). Additionally, we replicated the same general trend as others have reported in the McGurk task: that CI users display a bias toward the visual speech syllable (Figure 5). Importantly, our results also illustrate the need to correct for mistaking unisensory syllables as “da” (Figure 4). That is, in this study, like several others (Huyse et al., 2013; Rouger et al., 2008; Tremblay et al., 2010), raw data of “da” responses (i.e., without any error corrections) were the same between groups (Figure 5c). It was only in comparing the p(McGurk) measure of individuals who perceived the illusion at least once that we saw the group averages significantly diverge (Figure 7). Based on these findings, we recommend that any further investigations of this illusion in CI users take similar measures to disambiguate “da” responses and fused percepts to avoid overestimating the magnitude of the McGurk effect.

Similarly, in the SIFI task, CI users were susceptible to perceiving the illusion; however, the magnitude of the effect was lower than for controls. More specifically, we saw a significant between-group difference in a susceptibility index that collapses across all incongruent conditions into a single metric. To our knowledge, the lower CI group average in this metric is a novel finding, which is noteworthy given that relatively little is known about low-level AV stimulus detection in CI users (Stevenson et al., 2017). Based on our prior work, we do know that CI users’ temporal judgments of synchrony for these same flash-beep stimuli are indistinguishable from those of controls in a simultaneity judgment experiment (Butera et al., 2018). This suggests that low-level AV temporal function is likely intact in adult CI users, so any broader issues may be subtle. Although work in other clinical populations has related reduced SIFI perception to broader impairments in integration (Stevenson et al., 2014), additional work is needed to better characterize any further implications and mechanisms in CI users.

A broad interpretation of the present study is that CI users perceptually “weight” visual information more highly, which has a consequent impact on both the McGurk and SIFI illusions. That is, in incongruent tasks where illusory percepts are more heavily dependent upon sound biasing the visual cue, CI users are more likely to have strictly visual percepts. This visual bias translates to fewer illusory flashes in SIFI and a striking contrast in the higher frequency of visual “ga” percepts than auditory “ba” percepts for incongruent AV trials. In fact, CI users reported the auditory percept in just 2.5% of incongruent AV trials (Table 3), which represents merely 31 “ba” responses over 1,240 total trials. Though there appears to be lower p(McGurk) among CI users who perceived the illusion at least once, this difference in fusion rates is subtle compared with the broader difference in how each group allocates unisensory responses either overwhelmingly toward the visual component (for CI users) or the auditory component (for NH controls).

A similar pattern of more visually biased McGurk responses can also be simulated in NH listeners by vocoding stimuli to emulate CI processors (Desai et al., 2008). Similarly, adding a visual distractor reduces McGurk percepts (Tiippana et al., 2004), as does adding noise to reduce the auditory and/or video salience (Fixmer & Hawkins, 1998; Hirst et al., 2018). For CI users, it is likely that a combination of reduced auditory salience and increased visual attention contributes to the greater weighting of visual information.

Our findings support a visual speech bias in CI users, and further work is needed to understand the clinical impacts of this sensory weighting. Though prior studies have suggested that more proficient CI users experience greater fusion (and less visual bias) (Huyse et al., 2013; Tremblay et al., 2010), we did not find that McGurk fusion explained any more variability in CNC scores than other known measures (in our case, the total duration of hearing loss; Table 5). A major caveat to consider in interpreting these findings is that our outcome measure, which is standard in clinical practice, is auditory only. As a result, it is perhaps unsurprising that an AV illusion like the McGurk effect may relate more directly to AV speech measures than to auditory-only ones (i.e., CNC scores). However, as others have reported in NH samples, correlations between McGurk results and AV speech measures are weak (Van Engen et al., 2017), and it is likely that many studies are underpowered to detect such relationships (Magnotti et al., 2020). Larger studies may therefore be necessary to draw the presumed connection between McGurk susceptibility and other AV speech measures, particularly those involving natural, congruent speech.

Though the stimuli in the McGurk and SIFI tasks are dissimilar, perceiving both of these AV illusions may have shared mechanisms since both require the viewer to reconcile whether incongruent auditory and visual cues do in fact originate from the same source (Körding et al., 2007). When comparing the illusion magnitudes between these two experiments, we found a negative correlation between the SI and p(McGurk) metrics in the NH group (rs = −0.317, p = 0.030). However, we did not see a direct relationship between these measures in CI users as we had anticipated (Figure 1b). In a slightly younger and smaller NH sample, Stevenson et al. (2012) reported a stronger correlation between p(McGurk) and SIFI (R² = 0.42, p = 0.003) that was also negative. In contrast, Tremblay et al. (2007) found no correlation between SIFI and McGurk tasks in a younger sample of 38 typical listeners between 5 and 19 years of age. While it is logical that differences in low-level AV illusions indexed by SIFI could have cascading effects on speech integration, these two tasks do not appear to have a direct relationship in CI users. Further work to model the process of cue combination in each of these tasks may provide additional context for how subject-specific parameters like sensory noise may correspond between McGurk and SIFI experiments (Magnotti et al., 2013; Magnotti & Beauchamp, 2015).

It should be noted that the illusion of multiple flashes can be elicited in other modalities as well (Violentyev et al., 2005). In an fMRI study of deaf individuals, a visual-tactile illusion analogous to the SIFI revealed larger visual responses in Heschl's gyrus and a greater magnitude of the illusion experienced in deafness (Karns et al., 2012). Although it is beyond the scope of the present study, future work could investigate possible underlying mechanisms. For instance, given what is already known about the mechanisms behind the SIFI (Cecere et al., 2015; Kerlin & Shapiro, 2015; Watkins et al., 2006), it would be interesting to test whether visually derived alpha oscillations play a similar role in predicting the likelihood of experiencing the illusion in CI users or whether other, crossmodal mechanisms are at play (Stropahl & Debener, 2017).

One caveat to our study is that McGurk perception is highly stimulus-specific, and in our case, we only tested responses to a single female speaker. As a result, it is possible that these results may not generalize more broadly to other syllable combinations, male speakers, languages, etc. The primary advantage for interpreting our findings, however, is that we tested a large sample of clinically diverse CI users, so CI perception of this particular stimulus is well-characterized. In future studies, applying a noisy encoding of disparity (NED) model to quantify results would be ideal for investigating the effect in a stimulus-independent manner (Magnotti & Beauchamp, 2015; Stropahl et al., 2017). Such work may also provide more context for our curious findings that CI users have lower lipreading proficiency with the syllable “ga,” as well as lower identification of the congruent, AV presentation of “ga” (Figure 5). In the past, our lab has recorded and assayed several other McGurk stimuli and has consistently found the present stimulus to elicit the largest magnitude of the McGurk effect (unpublished observation). This may be due to a high ambiguity of the visual component in this particular recording, which could have disproportionately obfuscated the speech content for proficient lip readers like CI users. Thus, CI users may have performed more poorly in these control conditions for the same reason that this is a good McGurk stimulus: the articulation of “ga” (as well as the speaker's pre-articulatory movements) is particularly ambiguous and more like “da” than in other speakers/recordings. Again, the methods described by Magnotti and Beauchamp (2015) would address this issue more conclusively. Another potential approach is to expand the stimulus set to include additional response options into which non-fusion percepts may fall. Otherwise, it is possible that listeners were forced to choose “da” percepts on some trials as the closest match given the limited response options, which may not have been the case with a larger stimulus bank (Rouger et al., 2008).

We should note that, as in all McGurk experiments, our stimuli were specifically selected for the high contrast between their unisensory reliabilities (i.e., the high visual ambiguity of “ga” and the low ambiguity for “ba”). For example, both CI and NH groups display V > A accuracy in identifying “ba” (Figures 4 and 5). In most practical circumstances, this would not be the case. For instance, most individuals correctly identify only around 20% of monosyllabic words through lipreading alone. With current surgical techniques and modern CI processors, users can typically expect auditory-only word recognition accuracy higher than 20% (Blamey et al., 2013). Thus, these stimuli highlight an unnatural circumstance wherein lipreading provides better sensory estimates than the auditory information, even for NH controls.

Finally, it is not entirely clear what these results mean for CI outcomes given the lack of a relationship with CNC scores. Are more visually biased CI users less proficient with their implants? Are they worse “multisensory integrators” on broader (and more practical) AV speech tasks or simply less apt to perceive illusions? Because it is more likely that these AV illusions would relate to AV speech measures than to the auditory-only ones tested in clinic (and reported here), future work comparing illusion perception to a real-world estimate of one's success in the integration of conversational, AV speech would provide more insight. Until we know how McGurk biases relate to natural speech integration of words or sentences, it is unclear what utility administering McGurk testing at a broader scale might have. Either way, better identifying individual differences in multisensory integration is an important next step, particularly for the development of new AV remediation strategies.

Footnotes

Acknowledgments

We would like to thank the volunteers who participated in this study, Brannon Mangus and Amelia Schuster for their assistance with recruitment, and Dan Ashmead for statistical guidance.

Declaration of Conflicting Interests

R.H.G. was a member of the Audiology Advisory Board for Advanced Bionics and Cochlear Americas and clinical advisory board for Frequency Therapeutics at the time of publication. No competing interests are declared for any other authors.

Funding

This work was supported in part by the Eunice Kennedy Shriver National Institute of Child Health and Human Development (EKS NICHD) award No. U54HD083211 (M.T.W.), a Discovery grant No. RGPIN-2017-04656 (R.A.S.) from the Natural Sciences and Engineering Research Council of Canada (NSERC), a Social Sciences and Humanities Research Council (SSHRC) Insight Grant No. 435-2017-0936 (R.A.S.), the University of Western Ontario Faculty Development Research Fund (R.A.S.), the National Institute of Mental Health (NIMH) grant No. T32 MH064913 (I.M.B.), and the National Institute on Deafness and Other Communication Disorders (NIDCD) award No. 5F31DC015956 (I.M.B.). Its contents are solely the responsibility of the authors and do not necessarily represent the official views of any of these funding sources.