Abstract

The auditory system allows the estimation of the distance to sound-emitting objects using multiple spatial cues. In virtual acoustics over headphones, a prerequisite to render auditory distance impression is sound externalization, which denotes the perception of synthesized stimuli outside of the head. Prior studies have found that listeners with mild-to-moderate hearing loss are able to perceive auditory distance and are sensitive to externalization. However, this ability may be degraded by certain factors, such as non-linear amplification in hearing aids or the use of a remote wireless microphone. In this study, 10 normal-hearing and 20 moderate-to-profound hearing-impaired listeners were instructed to estimate the distance of stimuli processed with different methods yielding various perceived auditory distances in the vicinity of the listeners. Two different configurations of non-linear amplification were implemented, and a novel feature aiming to restore a sense of distance in wireless microphone systems was tested. The results showed that the hearing-impaired listeners, even those with a profound hearing loss, were able to discriminate nearby and far sounds that were equalized in level. Their perception of auditory distance was however more contracted than in normal-hearing listeners. Non-linear amplification was found to distort the original spatial cues, but no adverse effect on the ratings of auditory distance was evident. Finally, it was shown that the novel feature was successful in allowing the hearing-impaired participants to perceive externalized sounds with wireless microphone systems.

Introduction

The physical distance to sound-emitting objects that are outside the field of view can be estimated with auditory perception. The acoustic cues related to auditory distance perception (ADP) have been reviewed by Zahorik, Brungart, and Bronkhorst (2005); Georganti, May, van de Par, and Mourjopoulos (2013); and Kolarik, Moore, Zahorik, Cirstea, and Pardhan (2016). In brief, ADP relies on multiple cues: direct-to-reverberant ratio (DRR), intensity, spectral information, and interaural differences. The DRR quantifies the amount of acoustic reflections occurring in a given environment and typically decreases with distance, as the contribution of reverberation becomes stronger. Intensity also tends to be lower for distant sounds than for nearby sounds. Spectral cues are of limited help for estimating distances shorter than 15 m, where the absorption of high frequencies by the air is not perceivable. In the near field (distances shorter than about 1 m), the interaural level difference (ILD) has been shown to rise rapidly as distance decreases. At larger distances, however, the diffraction of sound around the head becomes distance-independent, making both ILD and interaural time difference (ITD) ineffective for ADP.

The estimation of physical distance based on ADP follows a compressive psychophysical function: distances less than 1 m are commonly overestimated, while larger distances tend to be underestimated (Zahorik et al., 2005). In contrast, the evaluation of distance with vision is very accurate up to 15 m (Fukusima, Loomis, & Da Silva, 1997). By analogy with the ventriloquism effect, in which vision influences the localization of auditory objects based on acoustic cues, Gardner (1968) described the “proximity-image” effect for distance judgment. This effect relates to the fact that the perceived distance of an auditory object can be affected by a visual object located at a different distance (Calcagno, Abregu, Eguía, & Vergara, 2012).

Virtual acoustics over headphones can be used to recreate the ADP of simulated auditory objects (Bronkhorst & Houtgast, 1999; Grimm, Heeren, & Hohmann, 2015; Zahorik, 2002). A prerequisite for that is externalization, which denotes the perception of sounds outside of the head (Kolarik et al., 2016). In the context of binaural audio synthesis with head-related impulse responses (HRIRs), externalization has been shown to depend on auditory and visual factors (Durlach et al., 1992; Hartmann & Wittenberg, 1996). The rendering of room-related cues and especially reverberation, as captured by binaural room impulse responses (BRIRs), substantially contributes to sound externalization (Begault, Wenzel, & Anderson, 2001; Völk, Heinemann, & Fastl, 2008). It has been found that early reflections up to 80 ms were sufficient to restore externalization, while late reverberation provided limited benefit (Catic, Santurette, & Dau, 2015; Völk, 2009). Bronkhorst and Houtgast (1999) likewise showed that the ADP of virtual sources increased with the number of generated early reflections. However, divergence between the auditory and visual impressions may reduce the improvement in externalization gained by adding reflections to the audio signal (Gil-Carvajal, Cubick, Santurette, & Dau, 2016; Udesen, Piechowiak, & Gran, 2015; Werner, Klein, Mayenfels, & Brandenburg, 2016).

The perception of externalization and auditory distance in hearing-impaired (HI) listeners has received limited attention so far. Akeroyd, Gatehouse, and Blaschke (2007) investigated the difference of perception of the DRR and intensity between normal-hearing (NH) and mild-to-moderate presbyacusic listeners. They evaluated the performance in distance discrimination in two experimental conditions: (a) DRR and intensity (denoted as DRR + Intensity) and (b) constant intensity (denoted as DRR-only). Their results suggest that HI listeners encounter difficulties in using the DRR to estimate distance. Ohl, Laugesen, Buchholz, and Dau (2010) compared the externalization scores obtained with NH and mild-to-moderate HI listeners for binaural stimuli over headphones. The experiment took place in a reverberant room and listeners were able to see the loudspeakers supposed to emit the sound. The results showed that HI listeners were less sensitive to changes in externalization than NH listeners. Boyd, Whitmer, Soraghan, and Akeroyd (2012) explored the perception of externalization in NH and HI listeners with a mild-to-moderate hearing loss. Using binaural synthesis of speech sentences in a reverberant environment (visual cues available), they found that HI listeners reported a contracted sense of externalization, with a lower range of estimated distances. Cubick, Santurette, Dau, and Laugesen (2014) evaluated the performance in ADP of 10 NH listeners and 3 mild-to-moderate HI listeners. Performance was assessed with various bandwidths from 1 to 15 kHz, and speech signals were spatialized with increasing distances from 0.4 to 7 m in a reverberant room. The authors reported that distance estimation was robust across bandwidths in NH listeners, while no general conclusion could be drawn for the HI listeners. To our knowledge, no published study has investigated the perception of externalization and auditory distance in severe-to-profound HI listeners so far.

Only a few of the HI listeners involved in the aforementioned studies were regular users of hearing aids (HAs). Most current HAs include non-linear amplification strategies, such as wide dynamic range compression (WDRC), to enhance audibility and mitigate the abnormal perception of loudness experienced by HI subjects. Although WDRC preserves the ITD, it is known to distort the other spatial cues, yielding a reduction of the original DRR, ILD, and interaural coherence (IC; Hassager, Wiinberg, & Dau, 2017; Keidser et al., 2006). Nonetheless, it has been shown that HI listeners may get accustomed to those distortions, since their performance in sound localization is not much affected in the horizontal plane (Courtois, Lissek, Estoppey, Oesch, & Gigandet, 2018a; Keidser et al., 2006; Korhonen, Lau, Kuk, Keenan, & Schumacher, 2015). Akeroyd (2010) measured the psychometric functions of ADP in mild-to-profound HI listeners, who were all experienced users of HAs. No significant effect of WDRC was found on the perception of distance. Acclimatization to non-linear amplification strategies was suggested as one possible factor that might have accounted for this finding.

In the study reported by Wiggins and Seeber (2012), NH listeners experienced audio stimuli being processed with and without WDRC. The participants were asked to rate four different spatial attributes, including sound externalization. WDRC had no general effect on externalization perception of speech stimuli, but degradations were found in certain individual results. Catic, Santurette, Buchholz, Gran, and Dau (2013) studied the contribution of ILD fluctuations on externalization perception. They provided NH listeners with multiple stimuli in which the natural fluctuations of ILD were preserved or compressed. The compression of ILD fluctuations, as a result of WDRC, was shown to be detrimental for sound externalization. Hassager et al. (2017) investigated the effect of WDRC processing on the perceived sound externalization on NH and mild-to-severe HI listeners, including three HA users. The results indicate that WDRC induced a degradation of externalization in both NH and HI listeners relative to linear amplification, when the compression followed the spatialization processing. An ideal configuration where WDRC was applied before spatialization could preserve the perception of externalization. Contrary to the aforementioned study by Akeroyd (2010), the listeners were not used to non-linear amplification in the study conducted by Hassager et al., which may account for the different conclusions drawn by these two studies.

The perception of auditory distance and externalization with HAs is known to play a major role in the judgment of sound naturalness, an attribute that is crucial for satisfaction with hearing instruments (Akeroyd, 2014; Gatehouse & Noble, 2004; Kochkin, 2010). Wireless microphone systems that work with HAs are a typical application where sound naturalness is missing. In those systems, the speech signal that is captured by the remote microphone located in the vicinity of the speaker (e.g., body-worn device, table microphone) is transmitted wirelessly and reproduced identically to the left and right HAs of the user. This diotic rendering bypasses the spatial cues that are required for sound localization as well as for distance estimation (Courtois, Marmaroli, Lissek, Oesch, & Balande, 2015; Selby, Weisser, & MacDonald, 2017). Over the past few years, new solutions have been proposed to localize the speaker and spatialize the voice accordingly in the HAs (see, e.g., Courtois et al., 2015; Farmani, Pedersen, Tan, & Jensen, 2017). In Courtois, Lissek, Estoppey, Oesch, and Gigandet (2018b), a panel of HI listeners evaluated our “spatial hearing restoration feature” (SHRF), which implements a binaural synthesis technique based on generic HRIRs, that is, without room-related information. The majority of users appreciated the processing, but certain listeners pointed out a persistent lack of naturalness due to sound internalization. This led to the development of a novel feature including early reflections, referred to as the SHRF + ER and evaluated in this article. By the same token, Kates, Arehart, Muralimanohar, and Sommerfeldt (2018) proposed a technique combining a structural binaural model with a simple virtual image model to generate early reflections in remote microphone systems. This additional simulated reverberation was successful in enhancing externalization.

This study had three main objectives. The first was to investigate the perception of auditory distance of indoor and nearby sound sources in HI listeners having hearing losses more severe than has been tested in most previous studies. The second goal was to assess the effect of WDRC on ADP with listeners having long-term experience of HAs and non-linear amplification with stimuli that were equalized in level. The final goal of this study was to evaluate the ability of the novel SHRF + ER algorithm to restore a sense of externalization and auditory distance with wireless microphone systems, as an attempt to enhance the naturalness of sound delivered by such systems.

Methods

Setup

The experiments took place in a reverberant classroom (RT60 = 530 ms, volume = 177 m³), which is a typical environment where wireless microphone systems are used. Three loudspeakers (Genelec 1029 A) were mounted at 67 cm (Loudspeaker 1), 113 cm (Loudspeaker 2), and 200 cm (Loudspeaker 3) from the listeners' position, as depicted in Figure 1. They were located at an azimuth of 30° on the right side of the listeners and arranged at slightly increasing heights, so that the closest loudspeaker did not hide the farther ones.

Setup of the experiments in a reverberant classroom. The loudspeakers were arranged in slightly increasing heights so that all of them were visible by the listeners.

The listeners sat in the center of the room. They were asked to look straight ahead, and their heads were immobilized by a chin rest, which was used for all experiments. Measurements of individual BRIRs and headphone-to-ear impulse responses (HPIRs) were carried out using in-the-ear binaural microphones (The Sound Professionals MS-TFB-2) prior to the experiments. The BRIRs of the three loudspeakers were thereby obtained for every participant. During the training, the three pairs of BRIRs were used to generate stimuli spatialized at Loudspeakers 1 to 3. During the experiments, only the pairs of BRIRs corresponding to Loudspeaker 3 were used. The loudspeakers only served as visual references. A probe microphone measurement unit (Aurical FreeFit) was used to measure the real ear aided response (REAR) at the left and right ears of the HI listeners, using a speech-shaped noise stimulus played at 65 dB SPL through the integrated loudspeaker of the unit.

During the experiments, the stimuli were delivered through a soundcard (M-Audio M-Track Eight) and a pair of open headphones (Audeze LCD2C), with a dedicated amplifier (Lake People HPA RS 02 Reference Series). This configuration made it possible to reach reproduction levels higher than 130 dB SPL with low distortion. A limiter (Samson S-Com plus) was inserted at the input of the amplifier to ensure that the output level never exceeded 132 dB SPL at any frequency, according to the regulations of the Food and Drug Administration (2011).

Stimuli

The stimuli were 10-s excerpts of male speech obtained by concatenating multiple sentences of the French HINT database (Collège National d'Audioprothèse, 2006). They were processed with five different methods:

Reference: The speech sequence was filtered by the individual 740-ms BRIRs measured with the furthest loudspeaker (Loudspeaker 3).

Anchor: The speech sequence was left unprocessed and reproduced diotically.

ER60: The speech sequence was filtered by a truncated version of the individual BRIRs corresponding to Loudspeaker 3. The original BRIRs were truncated to 60 ms and a Hann-shaped falling ramp was applied to the last 1 ms. This way, only the contributions of the direct sound and the early reflections were included.

SHRF: The speech sequence was processed by the method implemented in the SHRF. It is based on a minimum-phase representation of 128-sample HRIRs at 22.05 kHz, measured on a KEMAR (G.R.A.S.) in an anechoic chamber. The pair of spatial filters corresponding to 30° with respect to the listeners was selected. A pure delay of 210 μs was inserted between the left and right channels to simulate the ITD. More detail about that feature can be found in Courtois, Marmaroli, Lissek, Oesch, and Balande (2016).

SHRF + ER: The binaural stimuli obtained from the SHRF were mixed with the output of a proprietary algorithm that attempts to extract the early reflections present in the acoustic signals captured by the left and right HA microphones. BRIR measurements of a pair of HAs (Phonak Bolero™ Q) worn by a KEMAR at the listener’s position were conducted beforehand in the classroom, in order to generate the left and right HA signals corresponding to a sound played at Loudspeaker 3. These signals were used as inputs to the algorithm.
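The ER60 truncation described above can be sketched as follows. This is only an illustration under stated assumptions: the function name `truncate_brir`, the 44.1-kHz sampling rate, and the placeholder impulse response are hypothetical and do not come from the study's processing chain.

```python
import numpy as np

def truncate_brir(brir, fs, keep_ms=60.0, ramp_ms=1.0):
    """Keep the first keep_ms of a single-ear BRIR and fade out the
    last ramp_ms with the falling half of a Hann window."""
    n_keep = int(round(keep_ms * 1e-3 * fs))
    n_ramp = int(round(ramp_ms * 1e-3 * fs))
    out = np.array(brir[:n_keep], dtype=float)  # truncated copy
    # Falling half-Hann ramp: 1 at its first sample, 0 at its last.
    ramp = 0.5 * (1.0 + np.cos(np.pi * np.arange(n_ramp) / (n_ramp - 1)))
    out[-n_ramp:] *= ramp
    return out

# Placeholder response (all ones), NOT measured data; fs is an assumption.
fs = 44100
brir = np.ones(int(0.74 * fs))
er60 = truncate_brir(brir, fs)
```

With these assumed values, the truncated response contains 2646 samples, of which only the last 44 are attenuated by the ramp.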

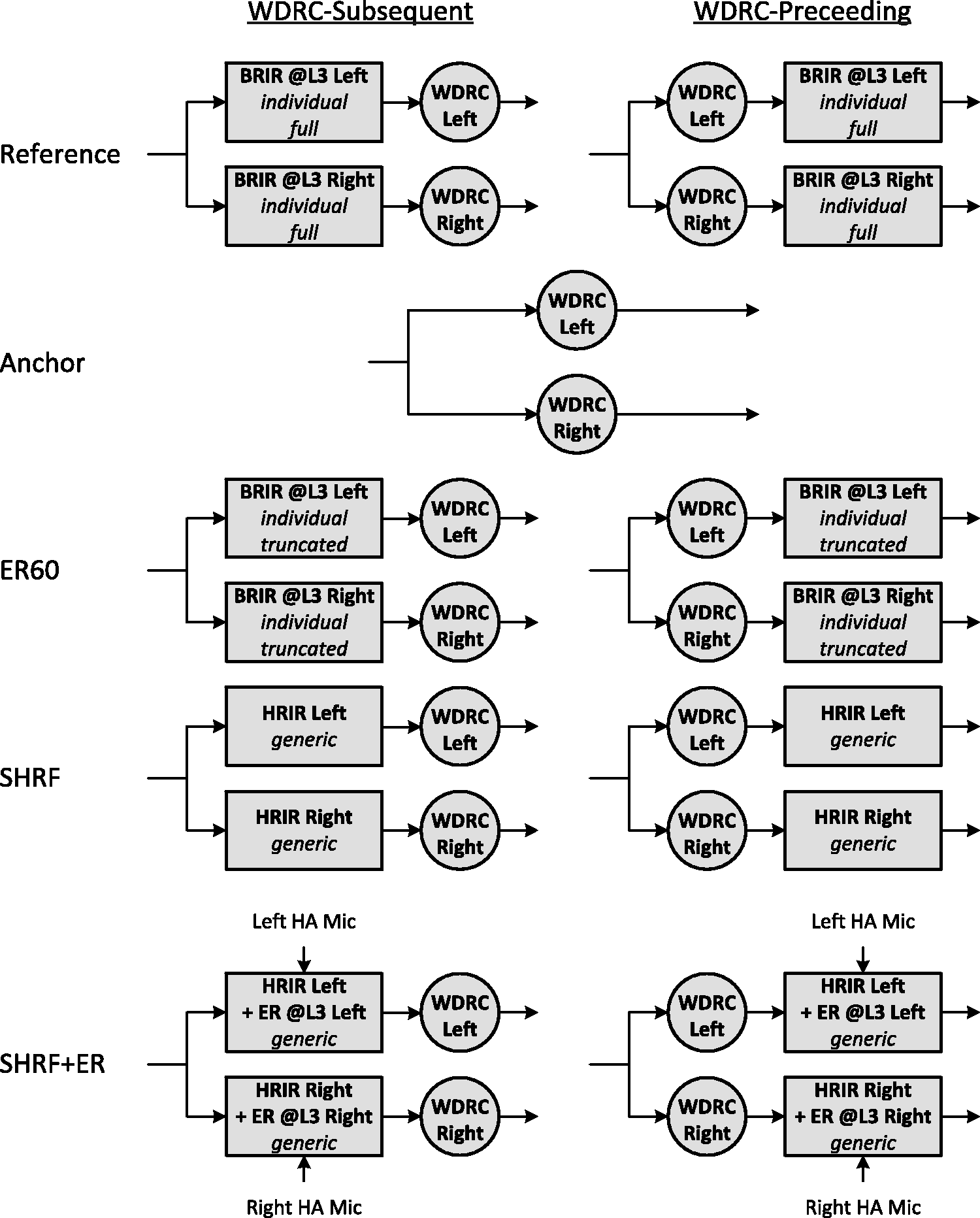

The HI listeners did not wear their HAs during the experiments. Instead, their individual WDRC parameters were exported from the fitting software (Phonak Target™, Sonova, Stäfa, CH) and imported into Matlab™ (The Mathworks, Natick, MA) to simulate the WDRC processing. The HA fittings of every listener could significantly differ from the prescribed manufacturer fittings due to the individual fine tuning performed by the audiologist over the years. In Experiment 1 (denoted as WDRC-Subsequent condition), the WDRC was acting after the binaural synthesis. This configuration corresponds to a realistic scenario of HA use, in which audibility is optimized at the cost of spatial cue distortions. In Experiment 2 (denoted as WDRC-Preceding condition), the WDRC was applied first and followed by the auralization. Although this configuration is ideal for accurate spatial reproduction, it is unrealistic (the dry signal is not available in practice) and may impair the audibility and listening comfort of the HI listeners. In both experiments, the WDRC system was acting independently in the left and right channels. The block diagrams of the generation of the five stimuli in the WDRC-Subsequent and WDRC-Preceding conditions are shown in Figure 2. For NH listeners, a single experiment was conducted with no WDRC included in the audio chain.
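A simplified calculation shows why compression applied independently at each ear after spatialization tends to shrink the ILD. It assumes an idealized static, single-band compressor with the same threshold $T$ and compression ratio $\mathrm{CR}$ at both ears, and input levels (in dB) above threshold. The simulated HA processing is multiband, has attack and release time constants, and may be fitted asymmetrically, so this is an illustration rather than a description of it.

```latex
\begin{align}
L_{\text{out}} &= T + \frac{L_{\text{in}} - T}{\mathrm{CR}},
  \qquad L_{\text{in}} > T, \\
\mathrm{ILD}_{\text{out}}
  &= L_{\text{out,left}} - L_{\text{out,right}}
   = \frac{L_{\text{in,left}} - L_{\text{in,right}}}{\mathrm{CR}}
   = \frac{\mathrm{ILD}_{\text{in}}}{\mathrm{CR}}.
\end{align}
```

Under these assumptions, a compression ratio of 2 would turn a 6-dB ILD into a 3-dB ILD. When the compressor instead acts on the dry signal before spatialization, the interaural differences imposed by the spatial filters are applied afterward and are left untouched.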

Block diagrams of the five stimuli in the WDRC-Subsequent (left panel) and WDRC-Preceding (right panel) conditions. The diotic stimulus was identical in both conditions. The Reference and ER60 stimuli were spatialized with the individual BRIRs of the participants corresponding to Loudspeaker 3. The SHRF and SHRF+ER stimuli were processed with generic anechoic HRIRs measured on a KEMAR. The left and right audio signals simulating the HA microphone signals and used as inputs of the SHRF+ER algorithm were generated with prior generic BRIRs measured in the classroom on a KEMAR wearing a pair of behind-the-ear HAs, when the sound was played through Loudspeaker 3. ER60 = first 60-ms early reflections; BRIR = binaural room impulse response; HA = hearing aid; HRIR = head-related impulse response; L3 = Loudspeaker 3; SHRF = spatial hearing restoration feature; SHRF+ER = SHRF plus early reflections; WDRC = wide dynamic range compression.

The root mean square values averaged over the left and right channels of the five stimuli were equalized, in order to limit judgments of distance resulting from intensity differences between the stimuli. The stimuli were played at 65 dB SPL to the NH listeners. For the HI listeners, the reproduction level was set according to their individual REARs, taking as a reference the level measured at 1 kHz. Reproduction levels from 67 to 120 dB SPL were delivered. All stimuli were low-pass filtered at 6.5 kHz to simulate the effective bandwidth of HAs and wireless microphone systems, and individual headphone equalization was applied.
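The level equalization can be illustrated with a short sketch. It assumes each stimulus is stored as an array of shape (samples, 2) and that a single broadband gain is applied to both channels, which leaves the interaural level difference unchanged. The function names, the target value, and the random test signals are hypothetical.

```python
import numpy as np

def channel_averaged_rms(stereo):
    """RMS of each channel (columns), averaged over left and right."""
    return float(np.mean(np.sqrt(np.mean(np.square(stereo), axis=0))))

def equalize_rms(stimuli, target_rms):
    """Scale each (n_samples, 2) stimulus by one gain so that its
    channel-averaged RMS equals target_rms."""
    return [s * (target_rms / channel_averaged_rms(s)) for s in stimuli]

# Synthetic noise at three different levels, for illustration only.
rng = np.random.default_rng(0)
stimuli = [rng.standard_normal((1000, 2)) * g for g in (0.1, 1.0, 3.0)]
equalized = equalize_rms(stimuli, target_rms=0.05)
```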

Procedure

The experiments were conducted during a single session of approximately 45 min for NH participants and 90 min for HI participants. The experiments were preceded by a training procedure, in which the listeners were able to freely listen to a speech sequence spatialized as if it was played from Loudspeaker 1, 2, or 3. They were not aware that the sounds were actually reproduced through the headphones and were asked to confirm that their auditory impression matched the physical locations of the loudspeakers. The listeners were encouraged to repeat and compare the stimuli as long as they wished to get used to the acoustic properties of close and far sounds.

The procedure conducted in the experiments was based on a modified multiple stimulus with hidden reference and anchor (MuSHRA) test (International Telecommunications Union, 2001). The hidden reference was the stimulus spatialized at Loudspeaker 3, whereas the diotic stimulus was used as the hidden anchor. The listeners were provided with a graphical user interface showing five “play” buttons and five sliders representing a continuous scale between 0 and 100 with five displayed steps: (0) “In the center of my head,” (20) “At the boundary of my head,” (40) “At Loudspeaker 1,” (60) “At Loudspeaker 2,” and (80) “At Loudspeaker 3.” The range between 80 and 100 was marked as “Further than Loudspeaker 3.” The listeners were instructed to answer the question “How far do you perceive each stimulus from your position?” by comparing and rating the five stimuli. They were informed that the stimuli could be manipulated in such a way that sounds may be perceived between loudspeakers, and they were therefore encouraged to use the entire scale of the slider. The listeners were instructed to ignore other perceptual attributes and to concentrate on distance only. The task was repeated over several runs until one of the two following stop conditions was reached: Either the differences between the reported scores of distance (scale from 0 to 100) were lower than 20 over three successive runs (denoted as success stop condition) or a maximum number of six successive runs was achieved (denoted as default stop condition).
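The two stop conditions can be expressed as a small decision function. The sketch below assumes one possible reading of the criterion: for every stimulus, the spread (maximum minus minimum) of its ratings over the last three runs must be below 20. The function name and the data layout are hypothetical, and whether the initial training run counts toward the three successive runs is not specified here.

```python
def stop_condition(runs, tolerance=20.0, n_stable=3, max_runs=6):
    """Return 'success', 'default', or None (another run is needed).

    runs: list of runs, each a list of the five ratings (0-100),
    always given in the same stimulus order.
    """
    if len(runs) >= n_stable:
        recent = runs[-n_stable:]
        # zip(*recent) groups the ratings of each stimulus across runs.
        spreads = [max(r) - min(r) for r in zip(*recent)]
        if all(s < tolerance for s in spreads):
            return "success"
    if len(runs) >= max_runs:
        return "default"
    return None

# Made-up ratings, for illustration only.
stable = [[80, 5, 60, 30, 50], [75, 10, 55, 35, 45], [78, 8, 58, 28, 52]]
unstable = [[80, 0, 50, 50, 50], [40, 0, 50, 50, 50]] * 3
```

In this example, `stable` satisfies the success condition after three runs, whereas `unstable` only stops because the sixth run has been reached.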

Subjects

In this study, 10 NH and 20 HI listeners participated. The HI listeners presented a congenital or prelingual moderate-to-profound hearing loss, as defined by the World Health Organization (2016). An additional category “Profound + ” was introduced for the listeners having a pure-tone average (PTA, over 0.5–4 kHz) at the best ear (BE) higher than 100 dB HL. The HI listeners were all regular users of bilateral Phonak behind-the-ear HAs commercialized after 2012 and were all patients of the same audiologist, who took part in this research, for more than 10 years. Their hearing loss was symmetric with PTAs that did not differ by more than 15 dB HL between the left and right ears, but could be fitted with asymmetrical HA settings due to the fine tuning performed by the audiologist. Fourteen HI listeners were current or past users of wireless microphone systems.

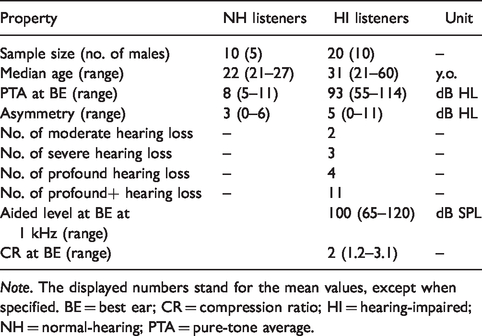

The audiograms at the BE of all NH (blue curves) and HI (orange-to-red curves) listeners are reported in Figure 3. The thick blue and red lines represent the average audiogram of the NH and HI participants, respectively. The black thick dotted line corresponds to the maximum output level of the audiometer used in the study. A hearing threshold equal to the maximum output level plus 5 dB was assigned to any frequency at which the listeners reported no response, and these frequencies were considered as dead regions (Moore, 2007a). Twelve HI participants presented at least one dead region at their best or worst ears. Table 1 reports statistics related to the two groups of listeners. All participants provided written informed consent and were paid for their participation.

Audiograms (best ear) of the NH (blue lines) and HI (orange-to-red lines) listeners. The thick blue dotted line represents the average audiogram in the NH group, while the thick red dotted line is the average audiogram in the HI group. The black thick dotted line corresponds to the maximum output level of the audiometer. The error bars represent the standard error.

Statistics Related to the Two Groups of Listeners.

Note. The displayed numbers stand for the mean values, except when specified. BE = best ear; CR = compression ratio; HI = hearing-impaired; NH = normal-hearing; PTA = pure-tone average.

Binaural Cues

In this study, the use of intensity as a cue for distance estimation was substantially limited because all the stimuli were level-equalized. In addition, it was practically not possible to calculate the actual DRR experienced by the HI participants because we could not access the details of the WDRC implementation (time–frequency gains, compression thresholds, time constants, etc.). This would be necessary to investigate the effect of the DRR on the rating of distance, as it was performed by Hassager et al. (2017). Therefore, the analysis of the distance cues presented to the listeners focused exclusively on the binaural cues, in order to look for any correlation between the WDRC parameters, the reported ADP and the binaural cues.

The short-term ITD, ILD, and IC were estimated a posteriori on the stimuli delivered to each participant. The cues were averaged over 46-ms frames with speech content only (silent parts were discarded so as not to bias the estimations). The ITD was computed in the time domain as the delay maximizing the short-term low-pass filtered (Fc = 1 kHz) cross-correlation between the left and right channels. The ILD and IC were both calculated in the short-time Fourier transform domain. The ILD was computed as the ratio between the left and right smoothed powers between 1.5 and 4 kHz. An exponential smoother (first-order infinite-impulse response filter) with a time constant of 205 ms (sample-based) at 44.1 kHz was used. The standard deviation of the ILD was also determined, as it has been shown that the ILD fluctuations are related to the perception of externalization (Catic et al., 2013). The magnitude squared IC was computed as suggested by Vesa (2009), with the same time constant as for the ILD.
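A per-frame version of the ITD and ILD estimates can be sketched as below. Several simplifications are assumptions of this sketch and not the study's implementation: a brick-wall low-pass filter applied in the frequency domain, a lag search limited to ±1 ms, no exponential smoothing, no speech-activity detection, and no IC estimate. The function names and the synthetic test frame are hypothetical. At 44.1 kHz, a 2048-sample frame lasts about 46 ms.

```python
import numpy as np

def frame_itd(left, right, fs, max_lag_s=1e-3, fc=1000.0):
    """ITD (s) of one frame: lag maximizing the cross-correlation of the
    low-passed channels. Positive means the right channel lags the left."""
    n = len(left)
    nfft = 2 * n                                 # zero-pad to avoid wrap-around
    freqs = np.fft.rfftfreq(nfft, 1.0 / fs)
    lowpass = freqs <= fc                        # brick-wall low-pass (assumption)
    spec_l = np.fft.rfft(left, nfft) * lowpass
    spec_r = np.fft.rfft(right, nfft) * lowpass
    # xcorr[k] = sum_n right[n + k] * left[n]
    xcorr = np.fft.irfft(spec_r * np.conj(spec_l), nfft)
    max_lag = int(round(max_lag_s * fs))
    lags = np.arange(-max_lag, max_lag + 1)
    best = lags[np.argmax(xcorr[lags])]          # negative lags index from the end
    return best / fs

def frame_ild(left, right, fs, band=(1500.0, 4000.0)):
    """ILD (dB) of one frame: left-to-right power ratio within the band."""
    freqs = np.fft.rfftfreq(len(left), 1.0 / fs)
    in_band = (freqs >= band[0]) & (freqs <= band[1])
    p_left = np.sum(np.abs(np.fft.rfft(left)[in_band]) ** 2)
    p_right = np.sum(np.abs(np.fft.rfft(right)[in_band]) ** 2)
    return 10.0 * np.log10(p_left / p_right)

# Synthetic frame: the right channel is the left one delayed by 9 samples
# and halved in amplitude (illustration only, not study data).
fs, n, d = 44100, 2048, 9
t = np.arange(1024) / fs
pulse = np.hanning(1024) * (np.sin(2 * np.pi * 500 * t) + np.sin(2 * np.pi * 2500 * t))
left, right = np.zeros(n), np.zeros(n)
left[100:1124] = pulse
right[100 + d:1124 + d] = 0.5 * pulse
itd = frame_itd(left, right, fs)
ild = frame_ild(left, right, fs)
```

For this synthetic frame, the estimated lag is 9 samples (about 204 μs) and the ILD is about 6 dB. In a full analysis, these frame values would then be smoothed and averaged over the speech-active frames only.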

Statistical Analysis

The statistical analysis was performed using SPSS Statistics™ (IBM, Armonk, NY) on data split into four categories: audiological data (PTA at BE, PTA in the low and high frequencies, asymmetry of hearing loss, slope of hearing loss, etc.), HA-related data (compression ratios [CRs], asymmetry of CRs, REAR, etc.), collected ADP, and binaural cues. The data related to the reported auditory distance were analyzed with non-parametric statistics only, because data from MuSHRA-type tests are known to violate most of the assumptions associated with parametric analysis (Mendonça & Delikaris-Manias, 2018). The non-ADP-related data were analyzed with parametric statistics if the sample size was greater than 10 and once normality was checked with visual inspections combined with Shapiro–Wilk tests. The significance level was set to .05.

The first run of both experiments was considered as a training run and was thus excluded from the collected data. Ten listeners (five NH and five HI) reached the success stop condition. For the other listeners (default stop condition), the three runs minimizing the variance of the distance ratings of the Reference and Anchor were selected as effective runs for the analysis, that is, three of the five runs were retained. The listeners who perceived the Reference as being closer than the Anchor were excluded from the analysis, except for the correlation analysis. This concerned two participants with a Profound + hearing loss in the WDRC-Subsequent condition and an additional participant (Profound + ) in the WDRC-Preceding condition. Stimuli were considered as externalized if the associated ADP score was higher than 25.
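The selection of effective runs can be illustrated with an exhaustive search over the ten possible subsets of three runs among five. The cost used below, the sum of the variances of the Reference and Anchor ratings, is an assumption about how the two variances were combined. The function name and the ratings are hypothetical.

```python
from itertools import combinations
from statistics import pvariance

def select_runs(reference, anchor, n_keep=3):
    """Indices of the n_keep runs that minimize
    var(Reference ratings) + var(Anchor ratings)."""
    def cost(idx):
        return (pvariance([reference[i] for i in idx])
                + pvariance([anchor[i] for i in idx]))
    return min(combinations(range(len(reference)), n_keep), key=cost)

# Made-up ratings for the five retained runs of one listener.
reference = [80, 78, 40, 82, 60]
anchor = [5, 8, 30, 6, 20]
kept = select_runs(reference, anchor)
```

With these made-up ratings, the runs at indices 0, 1, and 3 are retained, since the other two runs contain the outlying ratings.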

Results

Perception of Auditory Distance

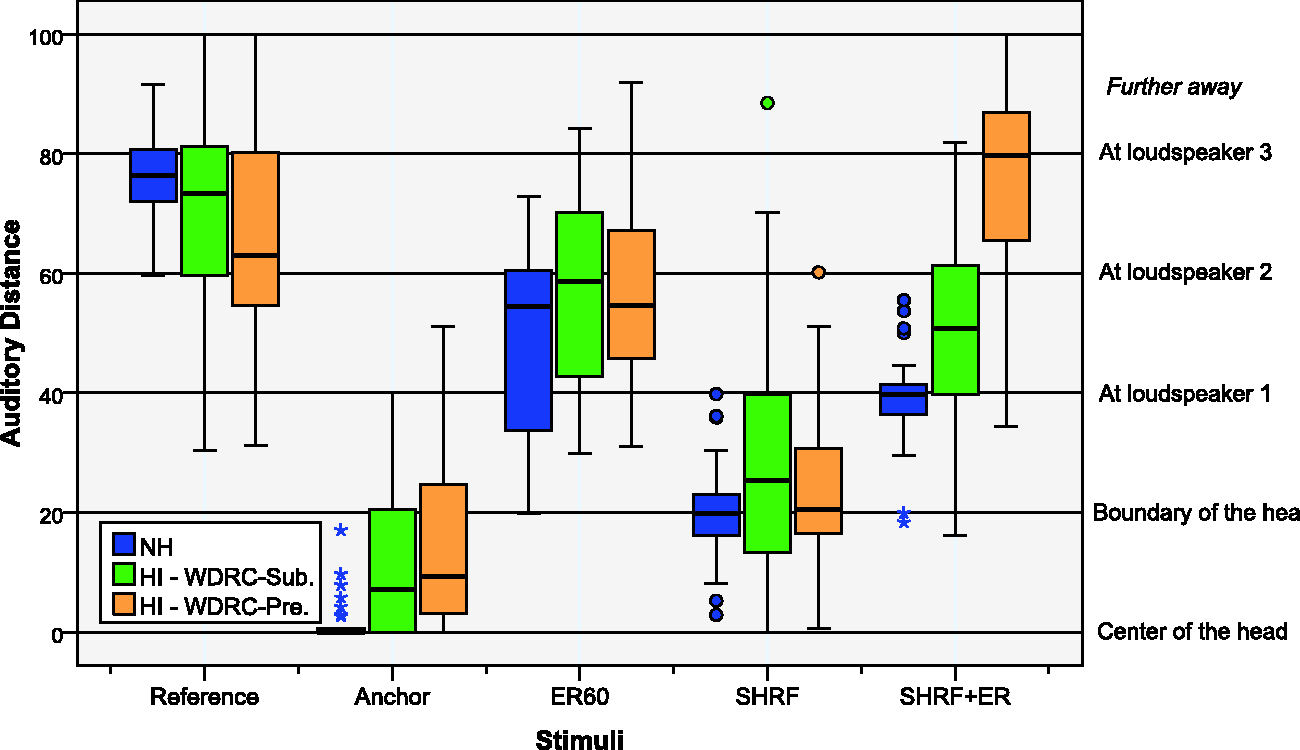

Figure 4 shows the distributions of the perceived auditory distance of the five stimuli, as reported by the NH (blue boxes) and HI listeners in the WDRC-Subsequent (green boxes) and WDRC-Preceding (orange boxes) conditions.

Distribution of the reported auditory distance of the five stimuli by the NH (blue boxes) and HI listeners in the WDRC-Subsequent (green boxes) and WDRC-Preceding (orange boxes) conditions. The dark line in the middle of the boxes represents the median, while the bottom and top lines correspond to the 25th and 75th percentiles, respectively. The T-bars are the whiskers (corresponding to the minimum and maximum values within 1.5 times the interquartile range). The points are outliers and the stars indicate extreme outliers (values more than three times the height of the box). ER60 = first 60-ms early reflections; HI = hearing-impaired; NH = normal-hearing; SHRF = spatial hearing restoration feature; SHRF + ER = SHRF plus early reflections.

In the NH group, significant differences of ADP were found between the five stimuli from a nonparametric Friedman test among repeated measures (

Results of the Post Hoc Dunn–Bonferroni Tests Performed to Compare the ADP of the Five Stimuli in the NH Group.

Note. Bonferroni adjustment for multiple comparisons is applied on the p values. The significant effects are in red (

In the WDRC-Subsequent condition (HI group), a nonparametric Friedman test among repeated measures showed significant differences between the five stimuli (

Results of the Post Hoc Dunn–Bonferroni Tests Performed to Compare the ADP of the Five Stimuli in the HI Group in the WDRC-Subsequent Condition.

Note. Bonferroni adjustment for multiple comparisons is applied on the p values. The significant effects are in red (

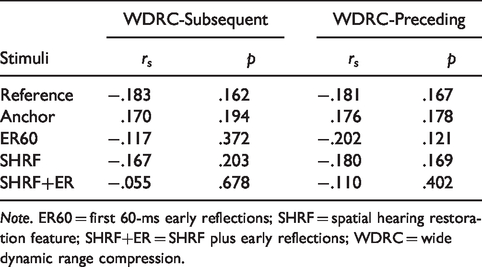

Table 4 reports the Spearman's correlation coefficients, and the associated p values, between the PTA at the BE of the HI listeners and the perceived auditory distance for the five stimuli. Figure 5 depicts the ADP of the Reference (left panel) and the Anchor (right panel) as a function of the PTA at the BE, in the WDRC-Subsequent condition. A significant correlation was found between the ADP of the Anchor and the PTA (rs = .515, corresponding to a moderate correlation according to the classification proposed by Mukaka, 2012). This indicates that HI listeners with stronger hearing loss tended to perceive the diotic stimulus further away. A low but significant correlation was also found between the ADP of the Reference and the PTA (rs = −.354, p = .005), revealing a tendency for listeners with more pronounced hearing loss to rate the Reference closer than Loudspeaker 3. No correlation was found between the PTA and the ADP of the three other stimuli in the WDRC-Subsequent condition.

Spearman's Correlation Coefficients, and Associated p Values, Between the PTA at the BE of the HI Listeners and the ADP of the Five Stimuli in the WDRC-Subsequent and the WDRC-Preceding Conditions.

Note. The significant correlations are in red (

ADP of the Reference (left panel) and Anchor (right panel) stimuli as reported by the HI listeners in the WDRC-Subsequent condition, as a function of their PTA. BE = best ear; PTA = pure-tone average.

In the WDRC-Preceding condition (HI group), a non-parametric Friedman test among repeated measures revealed significant differences between the five stimuli (

Results of the Post Hoc Dunn–Bonferroni Tests Performed to Compare the ADP of the Five Stimuli in the HI Group in the WDRC-Preceding Condition.

Note. Bonferroni adjustment for multiple comparisons is applied on the p values. The significant effects are in red (

A significant moderate Spearman's correlation (rs = .648) was found between the PTA and the ADP of the Anchor in the WDRC-Preceding condition (Table 4). However, no correlation was found between the PTA and the reported distance of the Reference, suggesting that the degree of hearing loss did not have an influence on the ADP of the Reference when the WDRC acted before the auralization. Nor was any correlation found between the PTA and the perceived distance of the three other stimuli.

Analysis of the Binaural Cues

The left panel of Figure 6 shows the distribution of the ILD, as computed within the stimuli of the NH (blue boxes) and HI listeners in the WDRC-Subsequent (green boxes) and WDRC-Preceding (orange boxes) conditions. The ILDs experienced with the SHRF (5.4 dB) and SHRF + ER (4.5 dB) stimuli were identical for all NH listeners and imposed by the corresponding algorithms. No significant difference was found between the ILD distributions of the NH and HI listeners for the Reference and ER60 stimuli, either in the WDRC-Subsequent condition (non-parametric Mann–Whitney U test, Reference: U = 68, p = .159, ER60: U = 69, p = .173) or in the WDRC-Preceding condition (Reference: U = 58, p = .065, ER60: U = 61, p = .086).

Distribution of the ILD (left panel) and IC (right panel) of the five stimuli experienced by the NH (blue boxes) and HI listeners in the WDRC-Subsequent (green boxes) and WDRC-Preceding (orange boxes) conditions. The ILD and IC are obtained for each stimulus by averaging their short-term observations on frames of 46 ms. ER60 = first 60-ms early reflections; HI = hearing-impaired; IC = interaural coherence; ILD = interaural level difference; NH = normal-hearing; SHRF = spatial hearing restoration feature; SHRF+ER = SHRF plus early reflections.
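As an illustration of the frame-based averaging described in this caption, the sketch below computes a broadband ILD and IC on consecutive 46-ms frames and averages them over the stimulus. Several choices are assumptions made for the example and are not taken from the study: non-overlapping rectangular frames, a broadband (not band-wise) analysis, the ILD expressed as left re. right, and the IC defined as the maximum absolute normalized cross-correlation within lags of ±1 ms.

```python
import math
from statistics import mean


def energy(frame):
    """Sum of squared samples of one frame."""
    return sum(s * s for s in frame)


def frame_ild_db(left, right):
    """ILD of one frame in dB (left ear re. right ear; sign is an assumption)."""
    return 10.0 * math.log10(energy(left) / energy(right))


def frame_ic(left, right, max_lag):
    """IC of one frame: max |normalized cross-correlation| over +/- max_lag."""
    norm = math.sqrt(energy(left) * energy(right))
    n = len(left)
    best = 0.0
    for lag in range(-max_lag, max_lag + 1):
        # Only use index pairs that stay inside the frame for this lag.
        acc = sum(left[i] * right[i + lag]
                  for i in range(max(0, -lag), min(n, n - lag)))
        best = max(best, abs(acc) / norm)
    return best


def short_term_cues(left, right, fs, frame_s=0.046, max_lag_s=0.001):
    """Average the frame-wise ILD and IC over a binaural signal."""
    frame_len = int(round(frame_s * fs))
    max_lag = int(round(max_lag_s * fs))
    ilds, ics = [], []
    for start in range(0, len(left) - frame_len + 1, frame_len):
        l_frame = left[start:start + frame_len]
        r_frame = right[start:start + frame_len]
        if energy(l_frame) == 0.0 or energy(r_frame) == 0.0:
            continue  # skip silent frames, where both cues are undefined
        ilds.append(frame_ild_db(l_frame, r_frame))
        ics.append(frame_ic(l_frame, r_frame, max_lag))
    return mean(ilds), mean(ics)


# Toy binaural signal: the right ear is the left ear attenuated by half.
fs = 8000  # hypothetical sample rate, kept low so the pure-Python loop is fast
left = [math.sin(2 * math.pi * 500 * n / fs) for n in range(fs)]
right = [0.5 * s for s in left]
ild, ic = short_term_cues(left, right, fs)
```

In this toy case the two ear signals are scaled copies of each other, so the IC is at its maximum and the ILD equals the imposed level difference; reverberation or independent compression at the two ears would lower the IC.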

In the HI group, the comparison between the WDRC-Subsequent and WDRC-Preceding conditions (paired-sample t tests) revealed a significant reduction of the ILD when the WDRC followed the auralization processing, for the Reference: t(19) = −3.154, p = .005; ER60: t(19) = −2.672, p = .015; SHRF: t(19) = −8.247,

Tables 6 and 7 report the Spearman's correlation coefficients, and their associated p values, between the perceived auditory distance for the five stimuli and, respectively, the absolute value and the standard deviation of the ILD. The discrepancies of the Anchor-related ILD distributions observed between the NH and HI listeners could be related to the individual and asymmetric WDRC fittings of the HI participants. It was hypothesized that the further distances reported by the HI listeners with respect to the NH listeners were due to the nonzero ILDs found in the Anchor. However, there was no correlation between the ADP of the Anchor and the associated absolute ILD values, arguing against this hypothesis. More generally, Tables 6 and 7 suggest that the ILD had a weak effect on the ADP of the listeners, since all correlations between the reported ADP and the computed ILD were negligible.

Spearman's Correlation Coefficients, and Associated p Values, Between the ADP and the Absolute Value of the ILD Experienced by the HI Listeners for the Five Stimuli in the WDRC-Subsequent and the WDRC-Preceding Conditions.

Note. ER60 = first 60-ms early reflections; SHRF = spatial hearing restoration feature; SHRF+ER = SHRF plus early reflections; WDRC = wide dynamic range compression.

Spearman's Correlation Coefficients, and Associated p Values, Between the ADP and the Standard Deviation of the ILD Experienced by the HI Listeners for the Five Stimuli in the WDRC-Subsequent and the WDRC-Preceding Conditions.

Note. ER60 = first 60-ms early reflections; SHRF = spatial hearing restoration feature; SHRF+ER = SHRF plus early reflections; WDRC = wide dynamic range compression.

The right panel of Figure 6 depicts the distributions of the computed IC for the NH (blue boxes) and HI listeners in the WDRC-Subsequent (green boxes) and WDRC-Preceding (orange boxes) conditions. All NH listeners experienced IC values equal to 1 (Anchor), 0.74 (SHRF stimulus), and 0.65 (SHRF + ER stimulus). The corresponding average ICs observed in the HI group were 0.95, 0.57, and 0.52, respectively (WDRC-Subsequent condition). HI listeners also experienced significantly lower ICs than NH listeners with the Reference (WDRC-Subsequent condition: U = 4,

Table 8 reports the Spearman's correlation coefficients, and their associated p values, between the IC and the perceived auditory distance for the five stimuli. Contrary to the ILD, three significant correlations were found between the IC and the ADP, although two of them were negligible (< 0.3). In the WDRC-Subsequent condition, there was a low negative correlation between the ADP of the SHRF + ER stimulus and the associated IC values: listeners exposed to lower IC tended to perceive the SHRF + ER stimulus as further away.

Spearman's Correlation Coefficients, and Associated p Values, Between the ADP and the IC Experienced by the HI Listeners for the Five Stimuli in the WDRC-Subsequent and the WDRC-Preceding Conditions.

Note. The significant correlations are in red (

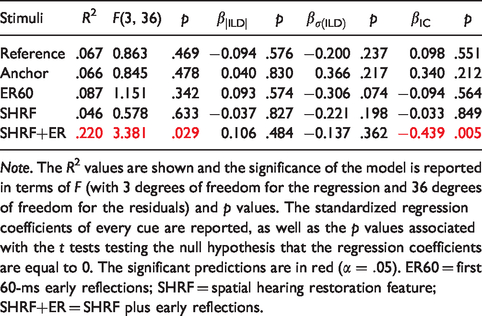

No difference in ITD was found between the NH and HI listeners or between the WDRC-Subsequent and WDRC-Preceding conditions. No correlation was found between the ITD of the various stimuli and the associated ADP either. Multiple linear regressions were carried out to investigate the relationship between the ADP of the five stimuli and the values of the corresponding binaural cues (absolute value and standard deviation of ILD, and IC) over both WDRC-Subsequent and WDRC-Preceding conditions. Table 9 reports the results of this analysis and provides the coefficients of determination, the significance of the regression, as well as the standardized regression coefficients corresponding to every cue and their associated significance. As there is no general consensus on whether the unstandardized or the standardized regression coefficients should be reported, the standardized regression coefficients were chosen because the ILD, standard deviation of ILD, and IC do not have the same unit and scale, and the standardized coefficients are therefore easier to interpret (Nieminen, Lehtiniemi, Vähäkangas, Huusko, & Rautio, 2013).
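To make the notion of standardized regression coefficients concrete, the sketch below z-scores every predictor and the response, then solves the resulting normal equations. For standardized variables these reduce to R_xx β = r_xy, where R_xx is the correlation matrix of the predictors and r_xy the vector of predictor-response correlations, and the coefficient of determination is R² = β · r_xy. The function names and the toy values are assumptions made for illustration and do not reproduce the analysis or the data of this study.

```python
from statistics import mean, stdev


def zscore(values):
    """Center to zero mean and scale to unit sample standard deviation."""
    m, s = mean(values), stdev(values)
    return [(v - m) / s for v in values]


def solve_linear(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    aug = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        tail = sum(aug[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (aug[r][n] - tail) / aug[r][r]
    return x


def standardized_regression(predictors, response):
    """Return (standardized coefficients, R^2) for a list of predictor columns."""
    zx = [zscore(col) for col in predictors]
    zy = zscore(response)
    n = len(response)

    def corr(u, v):
        # Pearson correlation of two already z-scored sequences.
        return sum(p * q for p, q in zip(u, v)) / (n - 1)

    r_xx = [[corr(u, v) for v in zx] for u in zx]
    r_xy = [corr(u, zy) for u in zx]
    betas = solve_linear(r_xx, r_xy)
    r_squared = sum(b * r for b, r in zip(betas, r_xy))
    return betas, r_squared


# Toy example with two uncorrelated predictors, NOT data from the study.
x1 = [1.0, -1.0, 1.0, -1.0]
x2 = [1.0, 1.0, -1.0, -1.0]
y = [3 * a + 4 * b for a, b in zip(x1, x2)]
betas, r_squared = standardized_regression([x1, x2], y)
```

Since every variable is expressed in units of its own standard deviation, the coefficients of cues measured in decibels (ILD) and of a dimensionless cue (IC) become directly comparable, which is the motivation given in the text.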

Results of the Multiple Linear Regression Between the ADP of the Five Stimuli and the Associated Absolute Value of ILD, Standard Deviation of ILD (

Note. The R2 values are shown and the significance of the model is reported in terms of F (with 3 degrees of freedom for the regression and 36 degrees of freedom for the residuals) and p values. The standardized regression coefficients of every cue are reported, as well as the p values associated with the t tests testing the null hypothesis that the regression coefficients are equal to 0. The significant predictions are in red (

For the Reference, Anchor, ER60, and SHRF stimuli, less than 9% of the variance in the ADP judgments could be explained by the combination of the ILD and IC cues, which represents rather small effect sizes according to the classification reported by Sullivan and Feinn (2012). For the SHRF + ER, the combination of the three cues accounted for 22% of the variance in ADP judgments, corresponding to a medium effect size. The reported standardized regression coefficients suggest that the IC is the strongest predictor. An additional linear regression was carried out to investigate the relationship between the ADP of the SHRF + ER stimulus and the IC. A significant regression equation was found,

Hearing Aid Fittings

In this study, the HI participants experienced their individual HA fittings in terms of amplification (same reproduction level through the headphones as with their HAs) and compression (similar WDRC parameters). An a posteriori analysis of the HA fittings revealed some significant correlations between the PTAs of the HI listeners and the aided level measured at 1 kHz at their left and right ears (Pearson correlations at the left ear: r = .749,

Figure 7 depicts the CRs of the WDRC as a function of the PTAs at the best (dark circles) and worst (light crosses) ears. No significant correlation was found between the CRs and PTAs (Spearman's correlations at the BE: rs = .363 [low], p = .116, and at the worst ear: rs = .296 [negligible], p = .204), but the figure shows that more aggressive compression was only given to listeners with higher PTAs. Concurrently, it was found that HI listeners with stronger hearing loss experienced significantly lower IC in the WDRC-Subsequent condition (Reference: rs = −.570 [moderate], p = .009). Lower IC values were also associated with higher CRs in this configuration (Reference: rs = −.509 [moderate], p = .022). This suggests that the heterogeneity of ADP of the Reference reported within the HI group might be related to the fitted amount of compression, when the WDRC followed the auralization processing. Nonetheless, no direct correlation could be found between the perceived distance of the Reference and the CR at the BE (rs = .014 [negligible], p = .918).

Compression ratios of the fitted WDRC as a function of the PTA at the best (left panel) and worst (right panel) ears of every HI participant. PTA = pure-tone average.

More surprisingly, there was no correlation between the difference in PTAs between the left and right ears and the asymmetry of the WDRC settings (ratio of the CRs at both ears; rs = −.126, p = .597). There was no correlation between the asymmetry of the hearing loss and the difference in aided level measured at the left and right ears either (rs = −.071, p = .765).

Discussion

ADP and Hearing Impairment

The first objective of this study was to investigate the perception of auditory distance in moderate-to-highly profound HI listeners using speech stimuli that were equalized in level. Individualized virtual acoustics over headphones was used in a reverberant environment, with matching auditory and visual stimulation to maximize the realism of the binaural synthesis (Gil-Carvajal et al., 2016). A large majority of the NH and HI participants orally reported a convincing auditory illusion and were surprised to learn after the experiments that no stimulus had actually been played through the loudspeakers. Due to the presumable occurrence of a “proximity image” effect (Gardner, 1968), the estimated auditory distance of certain stimuli might have been influenced by the physical distance of the three loudspeakers. To limit this effect, the listeners were made aware that the stimuli would be processed in such a way that they could appear at any distance, and all of the participants rated certain stimuli between the loudspeakers. The wide distributions of the reported ADP suggest that the “proximity image” effect was rather weak.

The results of the NH listeners are in accordance with the expectations: Diotic stimuli were perceived in the center of the head, while the distance of the stimuli spatialized 2 m away (Loudspeaker 3) was accurately estimated. The stimuli convolved with a 60-ms truncated version of the individual BRIRs of Loudspeaker 3 were mainly perceived between Loudspeaker 1 (0.66 m) and Loudspeaker 2 (1.33 m), which confirms that early reflections are sufficient to provide sound externalization (Begault et al., 2001; Bronkhorst & Houtgast, 1999; Catic et al., 2015; Völk, 2009). Listeners from both NH and HI groups orally reported that the distance of closer sounds (perceived in the head or at Loudspeaker 1) was easier to estimate than further ones (perceived beyond Loudspeaker 2).
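The effect of truncating a room impulse response on the direct-to-reverberant ratio can be illustrated with a short sketch. It keeps the first 60 ms of a toy impulse response and compares the DRR before and after truncation. The hard (unwindowed) truncation measured from the start of the response, the 2.5-ms direct-sound window, and the synthetic exponentially decaying tail are all assumptions made for this example; the processing actually applied to the individual BRIRs is not described in this section.

```python
import math


def truncate_ir(ir, fs, keep_s=0.060):
    """Keep only the first keep_s seconds of an impulse response."""
    return ir[: int(round(keep_s * fs))]


def drr_db(ir, fs, direct_window_s=0.0025):
    """DRR in dB: energy up to shortly after the main peak vs. the rest."""
    peak = max(range(len(ir)), key=lambda i: abs(ir[i]))
    split = peak + int(round(direct_window_s * fs))
    direct = sum(s * s for s in ir[:split])
    reverberant = sum(s * s for s in ir[split:])
    return 10.0 * math.log10(direct / reverberant)


# Synthetic impulse response (hypothetical, not a measured BRIR):
# a unit direct sound followed by a decaying, sign-alternating tail.
fs = 8000
ir = [0.0] * (fs // 2)
ir[0] = 1.0
for n in range(40, len(ir)):
    ir[n] = 0.05 * (-1) ** n * math.exp(-n / (0.1 * fs))

ir_60 = truncate_ir(ir, fs)
drr_full = drr_db(ir, fs)
drr_truncated = drr_db(ir_60, fs)
```

Discarding the late part of the response removes reverberant energy while leaving the direct sound untouched, so the truncated response has the higher DRR, consistent with a source perceived as closer than the full Reference rendering.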

Most of the HI participants were able to discriminate closer and further sounds. The results from Ohl et al. (2010) and Boyd et al. (2012) showed that mild-to-moderate HI listeners experience a contracted perception of distance, which was also found in this study in moderate-to-highly profound HI listeners, especially when audibility was not optimized. Contrary to the NH listeners, the HI participants generally perceived the Reference stimulus closer than Loudspeaker 3, while the diotic stimulus was hardly ever rated in the center of the head and could even be externalized. The externalization of the diotic rendering was more pronounced in the listeners with higher PTA, suggesting that the perception of auditory distance is more compressed with increasing hearing loss.

In this study, the various stimuli were level-equalized, which made the distance estimation based on intensity more difficult. Although the NH listeners rated the stimuli convolved with the truncated BRIRs significantly closer than the Reference, such a difference could not be found in the HI group. In the WDRC-Subsequent condition, five (moderate-to-highly profound) listeners repeatedly perceived the ER60 further than or similar to the Reference, and this was the case for 10 listeners in the WDRC-Preceding condition, even though the original DRR was preserved in that condition. This supports the observation of Akeroyd et al. (2007), who suggested that certain HI listeners had limited access to the DRR cue to judge auditory distance. On the other hand, it is worth mentioning that six HI participants spontaneously reported using the amount of echo perceived in the stimuli to estimate the associated distance. Boyd et al. (2012) hypothesized that HI listeners rely more on the ITD and DRR than NH listeners for the perception of distance. The present results rather suggest that the accurate preservation of the DRR does not bring a significant benefit for estimating auditory distance, when the ITD is kept unchanged by the amplification strategy.

Effect of WDRC on ADP

The majority of current HAs incorporate non-linear amplification strategies, among which WDRC is one of the most common. WDRC is highly relevant for severe-to-profound HI subjects, who present an auditory dynamic range that is substantially reduced compared to NH listeners (Moore, 2007b). Since this processing essentially decreases the difference in level between weak and loud sounds, a degradation of the performance in auditory distance estimation can be expected. Furthermore, WDRC is known to distort various spatial cues related to ADP, such as the DRR, ILD, and IC. However, this study does not show any detrimental effect of WDRC on the perception of auditory distance. This is in agreement with the conclusions drawn by Akeroyd (2010) on presbyacusic listeners. Comparing the results obtained in the NH group with those of the HI listeners in the WDRC-Subsequent and WDRC-Preceding conditions, it appears that certain listeners have a less compressed perception of auditory distance when WDRC acts after the spatialization. Indeed, these listeners tend to perceive the stimulus auralized at Loudspeaker 3 with a better accuracy when audibility is optimized, despite the subsequent spatial cue distortions. In addition, a significant correlation was found between the PTA of the HI participants and the ADP of the Reference in the WDRC-Subsequent condition. Such a correlation did not exist in the WDRC-Preceding condition, where most of the listeners underestimated the distance of the Reference. This suggests that the participants with lower hearing loss benefited more from WDRC when estimating distance based on auditory cues.
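The way compression reduces the level difference between weak and loud sounds can be shown with a static input/output curve. The sketch below is a simplified illustration only: the compression threshold, the compression ratio, and the gain are hypothetical values, and the attack and release dynamics of a real WDRC system as well as its multiband structure are ignored.

```python
def wdrc_output_level(input_db, threshold_db=45.0, ratio=2.0, gain_db=20.0):
    """Static WDRC curve: output level in dB for a given input level in dB.

    Below the threshold the gain is linear; above it, every `ratio` dB of
    input increase yields only 1 dB of output increase.
    """
    if input_db <= threshold_db:
        return input_db + gain_db
    return threshold_db + gain_db + (input_db - threshold_db) / ratio


# Two hypothetical input levels, 30 dB apart, both above the threshold.
weak_in, loud_in = 50.0, 80.0
weak_out = wdrc_output_level(weak_in)
loud_out = wdrc_output_level(loud_in)
input_difference = loud_in - weak_in      # 30 dB at the input
output_difference = loud_out - weak_out   # 15 dB after 2:1 compression
```

The same mechanism applies to the two ears when they are compressed independently: the ear receiving the higher level gets less gain, which is one way in which WDRC can reduce the ILD.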

Hassager et al. (2017) proposed a “spatially ideal compression,” in which the non-linear amplification acted before the spatialization processing. This scheme was shown to preserve the spatial perception (azimuth, distance, width) of HI listeners in the same way as linear amplification. In this study, the WDRC-Preceding condition aimed at mimicking this configuration, by inserting WDRC before auralizing the stimuli. This failed to improve the performance in ADP of the HI participants. On the contrary, it seems that the optimization of audibility was more beneficial than the preservation of the distance-related cues for certain listeners. Nonetheless, it is important to point out the main differences between this study and that of Hassager et al. First, the HI listeners involved in this study had moderate-to-highly profound hearing loss, while Hassager et al. conducted their experiments on mild-to-moderate HI listeners. It is likely that the optimization of audibility is more crucial for listeners with a stronger degree of hearing loss, who may have had difficulty hearing the stimuli in the WDRC-Preceding condition. Indeed, eight listeners reported their impression that the stimuli were further and less loud in the second experiment, although the same equalization of level was achieved in both conditions. Second, the HI listeners of this study had long-term experience with the WDRC fittings they experienced during the experiments. In addition, all of them presented a congenital or prelingual hearing loss, that is, they had limited, if any, experience of normal hearing, contrary to the subjects tested by Hassager et al. Finally, there is a procedural difference between the two studies. Hassager et al. provided listeners with successive stimuli and asked them to sketch the associated locations. In this study, the listeners were presented with a set of five concurrent stimuli that they could compare and were asked to focus on the distance attribute only. As emphasized by Akeroyd (2010), ADP evaluated on a relative basis may lead to different results than could be obtained with an absolute rating paradigm.

In accordance with the observations reported by, for example, Keidser et al. (2006), Catic et al. (2013), and Hassager et al. (2017), the WDRC distorted the ILD and IC of the processed stimuli and maintained the original ITD. WDRC was found to yield a strong reduction of the ILD, but there was no evidence that it decreased the natural ILD fluctuations in the stimuli including reverberation, which could explain why the distortions of ILD had no significant influence on the perception of distance (Catic et al., 2013). It is well established that the auditory system can adapt to distorted spatial cues for sound localization (Keidser et al., 2006; Korhonen et al., 2015; Mendonça, 2014), and it seems that a similar process takes place for auditory distance estimation. The IC values computed in the stimuli of the HI group were lower than those of the NH group, regardless of the position of the WDRC in the audio chain. However, significant correlations between the IC, the CR, and the ADP were only found in the WDRC-Subsequent condition, suggesting that the perceptual effect of WDRC on the IC is more pronounced when WDRC is applied after the auralization processing. ADP, externalization, and IC are known to be interrelated (Kendall, 1995; Kim et al., 2010). Grimm et al. (2015) reported that the DRR and the IC follow a similar decrease when the distance from the sound source increases, because reverberation tends to decrease the resemblance of the signals at both ears (Vesa, 2009). Catic et al. (2015) observed a strong correlation between the short-term IC of multiple stimuli and the corresponding externalization ratings. In this study, a weak negative correlation was found between the short-term IC and the ADP of the SHRF + ER stimulus, that is, further distances were associated with lower IC values, which is in agreement with the results reported by Catic et al. (2015) and Grimm et al. (2015). Furthermore, IC was found to account for a moderate amount of the variance in the ADP judgments of the SHRF + ER stimulus. Whitmer, Seeber, and Akeroyd (2014) reported a reduced sensitivity to the IC cue in presbyacusic listeners, which makes it uncertain whether the HI listeners were actually sensitive to this change of IC in the present experiments. This question clearly requires further research.

Effect of the SHRF + ER Feature on ADP

We previously introduced the SHRF as a solution to restore spatial hearing with remote microphone systems that currently reproduce speech signals in a diotic way (Courtois et al., 2015, 2018a). Despite the benefits in sound localization brought by the feature, the SHRF was reported to lack naturalness, which is most probably related to the persistent internalization of the rendered voice (Courtois et al., 2018b). The externalization rates associated with the diotic stimulus show that the speech reproduced by current wireless microphone systems is localized inside the head for a large majority of listeners, including those with a long-term experience with these systems. In the NH group, the SHRF did not improve externalization. In the HI group, half of the reported ratings of the feature were externalized, suggesting that anechoic spatialization is enough to reach externalization in certain HI participants. However, the associated distances were often short and remained in the vicinity of the listeners' head.

It is perhaps unlikely that moving from generic to customized spatial filters would enhance the perception of externalization and distance, since the auditory system is able to adapt to other spatial mappings (Mendonça, 2014). Furthermore, it is uncertain whether the use of individual BRIRs provides listeners with a better externalization (Begault et al., 2001). Alternatively, Kates et al. (2018) showed that the introduction of artificial early reflections in the auralized remote microphone signal was successful in improving the externalization perceived by NH and HI listeners. The new SHRF + ER algorithm is a real-time approach that adds early reflections from the signal picked up by the HA microphones. This study shows that this approach achieved externalization rates higher than 90% in NH and HI listeners. No significant difference was found between the perception of the SHRF + ER stimuli and the optimal early reflections coming from the truncated version of the individual BRIRs.

It must be clarified here that the SHRF + ER feature was designed to operate in a real HA, that is, by processing the signals before the WDRC processing. In the WDRC-Preceding condition though, the input signals were modified by the WDRC beforehand, which resulted in a singular behavior of the algorithm that tended to excessively amplify the extracted early reflections with certain individual fittings. This most probably accounts for the large perceived distances associated with the SHRF + ER stimulus in the WDRC-Preceding condition.

Study Limitations

This study investigated the perception of distance of indoor and nearby sound sources. This was achieved by providing listeners with a diotic stimulus (distance of 0 m), a stimulus spatialized 2 m away from the listeners, and three stimuli processed to be perceived in between, with various degrees of externalization. All stimuli were equalized in level. Although the listeners trained themselves with stimuli spatialized at the physical location of the three loudspeakers prior to the experiments, they were instructed that the stimuli could originate from any distance during the experiments. It was out of the scope of this study to assess the ability of listeners to estimate the distance of real sound sources located between 0 and 2 m, which would require further attention. In addition, the impact of the initial acclimatization phase could not be specifically evaluated since no participant performed the experiment without experiencing the training procedure first. Given that this training phase provided listeners with a reference visual-spatial frame in which they mapped their ADP during the experiments, it is likely that it had a substantial effect on the rating of distance. Nevertheless, special care was taken so that this mapping approached their regular visual-spatial frame by using their individual BRIRs and amplification fittings.

The analysis of the binaural cues, including the ITD, the ILD values and their fluctuations, and the IC, generally failed to explain the judgments of distance reported by the HI participants. Given the fact that the intensity of the various stimuli was equalized, the DRR appears to be the major remaining acoustic cue that could account for the collected ADP. Due to their individual fittings of compression, the HI participants experienced different DRRs, which could not be calculated in practice. Thus, this research only provides limited information on the acoustic cues used by the HI listeners to estimate the distance to auditory objects.

Unlike our previous study that investigated the perception of the SHRF on aided HI listeners (Courtois et al., 2018a), the participants were not tested with their fitted HAs in the current protocol. The individual WDRC fittings of the HI participants were simulated to generate customized binaural stimuli that were reproduced over headphones. Despite the low-pass filtering at 6.5 kHz that attempted to match the effective bandwidth of hearing instruments, it is clear that the sound rendered through headphones considerably differs from what can be delivered by HAs. Twelve HI listeners out of 20 incidentally reported preferring the auditory sensation provided by their HAs and mentioned that the sound was less comfortable and too detailed with the headphones. The difference in acoustic coupling between headphones and ear molds was also pointed out by several participants, who mentioned their preference to receive the sound directly in the ear, as achieved by HAs. On the whole, only three HI listeners reported a similar sensation between headphones and HAs in this study. This may limit the generalization of the results to real HAs.

Since listeners were not wearing their HAs during the experiments, recordings of HA microphone signals were conducted on a KEMAR beforehand and served as inputs of the SHRF + ER algorithm. Thus, all the spatial processing achieved by the SHRF + ER feature was based on generic spatial cues only. In real applications, however, the generic HRIRs used for the spatialization of the remote microphone signal will be mixed with individual spatial information from the extracted early reflections of the HA microphone signals. This might result in a different perception of distance, which could not be evaluated in this study.

Conclusion

This article studied the question of the perception of auditory distance by moderate-to-profound HI listeners. These listeners were all regular users of hearing instruments fitted with non-linear amplification (WDRC), which is known to distort the cues used by the auditory system to estimate the distance to sound-emitting objects. The potential effect of WDRC was investigated with multiple level-equalized stimuli and listening configurations. In parallel, the efficiency of a new feature aiming to restore a sense of externalization and distance perception in wireless microphone systems was assessed. We found that:

A large majority of HI listeners, even those who presented a profound hearing loss, were generally able to discriminate between sounds associated with closer or further distances. However, HI listeners showed a more contracted perception of distance than the NH participants: They tended to underestimate the distance to the furthest sounds while they also frequently externalized stimuli that were delivered diotically. There was a trend for listeners with stronger hearing loss to exhibit a more pronounced contraction of ADP.

There was no evidence that WDRC adversely affects the perception of auditory distance and externalization. On the contrary, certain listeners were shown to perform better in the condition where the stimuli were processed similarly as in their HAs, despite the resultant spatial cue distortions. The results suggest that it is more important for the perception of auditory distance to optimize the audibility of the speech signals using WDRC than to accurately render the distance-related cues in HI listeners with a severe-to-profound hearing loss. This is most probably due to the ability of the auditory system to adapt to a distorted spatial reproduction over time.

It is possible for HI listeners to externalize the audio speech provided by wireless microphone systems if early reflections are added to the direct sound. Current systems reproduce the voice picked up by the remote microphone in the HAs in a diotic way, preventing users from localizing the speaker(s) based on their auditory perception. The novel spatialization feature processes the original speech signal to restore a sense of localization and distance perception. Although sound externalization was reached only 15% of the time with the current diotic reproduction, this rate rose up to 90% when the spatial hearing restoration feature including early reflections was activated.

Footnotes

Acknowledgments

The authors are grateful to all participants for their interest and participation in this study. The authors thank Dr. Peter Derleth, Dr. Simon Laurent, and Dr. Olaf Strelcyk for their fruitful and valuable comments and corrections on the manuscript, as well as Dr. Matthias Keller and Dr. Nicholas Herbert for their support for the statistical analysis. The authors also thank the reviewers and the associate editor for their fruitful and valuable comments and corrections.

Author Contributions

G. C., V. G., and E. G. conceived and designed the protocol. P. E. and G. C. recruited the participants. G. C., V. G., and P. E. conducted the experiments. G. C. performed the statistical analysis and wrote the manuscript. V. G., E. G., and H. L. revised the manuscript.

Declaration of Conflicting Interests

The authors declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: G. C. is a part-time employee of Sonova AG. V. G. and H. L. have no conflict of interest to declare. P. E. is a private audiologist, premium reseller of Phonak hearing instruments (a Sonova brand). E. G. is an employee of Sonova AG.

Ethical Approval

The protocol of this study has been approved by the Cantonal Office of Public Health of the Canton de Vaud (CER-VD), Switzerland and by the National Authorisation and Supervisory Authority for Drugs and Medical Products in Switzerland (Swissmedic). This study is registered under the identifier NCT03512951.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was partly funded by the Swiss Innovation Promotion Agency (Innosuisse) with grant number 25760.1 PFLS-LS and partly by Sonova AG, Stäfa, Switzerland.