Abstract

Dramatic results from recent animal experiments show that noise exposure can cause a selective loss of high-threshold auditory nerve fibers without affecting absolute sensitivity permanently. This cochlear neuropathy has been described as hidden hearing loss, as it is not thought to be detectable using standard measures of audiometric threshold. It is possible that hidden hearing loss is a common condition in humans and may underlie some of the perceptual deficits experienced by people with clinically normal hearing. There is some evidence that a history of noise exposure is associated with difficulties in speech discrimination and temporal processing, even in the absence of any audiometric loss. There is also evidence that the tinnitus experienced by listeners with clinically normal hearing is associated with cochlear neuropathy, as measured using Wave I of the auditory brainstem response. To date, however, there has been no direct link made between noise exposure, cochlear neuropathy, and perceptual difficulties. Animal experiments also reveal that the aging process itself, in the absence of significant noise exposure, is associated with loss of auditory nerve fibers. Evidence from human temporal bone studies and auditory brainstem response measures suggests that this form of hidden loss is common in humans and may have perceptual consequences, in particular, regarding the coding of the temporal aspects of sounds. Hidden hearing loss is potentially a major health issue, and investigations are ongoing to identify the causes and consequences of this troubling condition.

Introduction

It has been widely assumed that the main cause of sensorineural hearing loss is damage or dysfunction of the hair cells in the cochlea (Moore, 2007). The outer hair cells (OHCs), which effectively amplify the motion of the basilar membrane (BM), are more susceptible to damage than the inner hair cells (IHCs), which transduce the motion of the BM into electrical activity. Dysfunction or loss of IHCs leads to a loss of sensitivity and in extreme cases is associated with dead regions—regions of the cochlea with few functioning IHCs and for which information about BM vibration is not transmitted to the brain (Moore, Huss, Vickers, Glasberg, & Alcántara, 2000). Dysfunction or loss of OHCs leads to a loss of sensitivity, a reduction in frequency selectivity, and an abnormally steep growth in loudness with level.

Hearing ability is usually assessed in the clinic using pure-tone audiometry, which measures the smallest detectable levels of pure tones at several frequencies, typically in the range 0.125 to 8 kHz. Hence, this measure reflects loss of sensitivity to weak sounds caused in any way and does not distinguish between IHC and OHC dysfunction, although up to 80% IHC loss may occur without affecting audiometric thresholds (Lobarinas, Salvi, & Ding, 2013). However, it is becoming increasingly apparent that the audiogram is not predictive of some types of auditory deficit. In particular, hearing ability for sounds that are above threshold may be compromised even when the audiogram is normal, that is, for listeners with clinically normal hearing. The present review will focus on cochlear neuropathy due to noise exposure and aging as a potential mechanism for this type of loss.

In this review, we first describe some of the early studies investigating hearing disability in the presence of a normal audiogram. We then discuss the results of animal experiments showing that noise exposure can cause substantial cochlear neuropathy without affecting sensitivity to weak sounds. This type of selective neural loss, which has been described recently as hidden hearing loss (Schaette & McAlpine, 2011), may be the physiological basis for many of the cases of hearing disability with a normal audiogram. This is supported by evidence from human studies that noise exposure may cause perceptual difficulties without affecting the audiogram. The review will also consider the relation between hidden hearing loss and tinnitus, and the relation between aging and hidden loss.

Hearing Difficulties With a Normal Audiogram

It has been known for many years that some listeners with normal audiometric thresholds report difficulties in understanding speech in noisy environments (for a review, see Zhao & Stephens, 2007). For example, in a large UK survey, 26% of adults reported great difficulty hearing speech in noise, while only 16% had abnormal sensitivity (audiometric thresholds ≥25 dB hearing level [HL] averaged between 0.5 and 4 kHz; Davis, 1989). This condition has been given several names, including obscure auditory dysfunction (Saunders & Haggard, 1989) and King-Kopetzky syndrome (KKS; Hinchcliffe, 1992) after the authors of the early reports of this condition (P. F. King, 1954; Kopetzky, 1948). It has been argued that this condition should be regarded as an aspect of auditory processing disorder (APD; British Society of Audiology APD Special Interest Group, 2011), although APD can be associated with central processing disorders. As we shall see, peripheral mechanisms may account for many cases in which listeners experience difficulty hearing speech in background noise.

An issue here is that a "normal audiogram" is not equivalent to "no threshold elevation." Thresholds categorized as normal can cover a 30 dB range, so many listeners with clinically normal hearing could well have significant hair cell damage, in addition to any dysfunction not picked up by the audiogram. Ideally, test and control groups should be audiogram matched, having equal mean thresholds either at each individual frequency, or averaged across a set of frequencies. However, this is not always stated in the experimental methodologies, implying that test and control groups may have differed in their degree of threshold elevation. This is worth bearing in mind, as even slight OHC dysfunction, for example, could significantly affect suprathreshold hearing ability. In the descriptions of research findings related to noise exposure, those studies that have matched audiograms between groups will be distinguished from those that have not. Furthermore, some of the studies using matched audiograms did not compare hearing thresholds for frequencies above 4 kHz. It is possible that a selective hair cell loss at very high frequencies contributed to the observed deficits.
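The point that two listeners can both be classed as normal while differing substantially in threshold can be illustrated with a minimal sketch. It applies the 25 dB HL criterion quoted above from Davis (1989) to a pure-tone average. The frequency set (0.5, 1, 2, and 4 kHz), the function names, and the two example audiograms are assumptions made for illustration and are not data from any of the studies reviewed.

```python
from statistics import mean

# Assumed frequencies (kHz) for the 0.5 to 4 kHz average; the exact set used
# in the survey is not given in the text.
PTA_FREQS_KHZ = (0.5, 1.0, 2.0, 4.0)
CRITERION_DB_HL = 25.0  # averaged threshold at or above this counts as abnormal


def pure_tone_average(audiogram, freqs=PTA_FREQS_KHZ):
    """Mean threshold in dB HL across the chosen audiometric frequencies."""
    return mean(audiogram[f] for f in freqs)


def is_clinically_normal(audiogram):
    """True if the pure-tone average falls below the 25 dB HL criterion."""
    return pure_tone_average(audiogram) < CRITERION_DB_HL


# Hypothetical listeners (thresholds in dB HL, keyed by frequency in kHz).
listener_a = {0.5: 0.0, 1.0: 0.0, 2.0: 5.0, 4.0: 5.0}
listener_b = {0.5: 15.0, 1.0: 20.0, 2.0: 20.0, 4.0: 20.0}

for name, audiogram in (("A", listener_a), ("B", listener_b)):
    print(name, pure_tone_average(audiogram), is_clinically_normal(audiogram))
# A 2.5 True
# B 18.75 True
```

Both hypothetical listeners pass the criterion, yet their averages differ by more than 16 dB, which is why audiogram matching between test and control groups matters.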

Even an audiogram with no apparent threshold elevation may miss subtle deficits in hair cell function. KKS patients tend to have a greater number of narrow notches in the audiogram in the region of 500 to 3000 Hz than do controls (Zhao & Stephens, 1999), but this is revealed only when the audiogram is measured with a high frequency resolution. Zhao and Stephens (2006) reported a decrease in distortion-product otoacoustic emission levels in KKS patients, suggesting mild OHC dysfunction, even for a subset of patients with audiometric thresholds similar to controls up to 8 kHz. These studies illustrate further that caution has to be applied before interpreting a normal audiogram as indicative of normal hair cell function.

Animal Studies of Noise-Induced Cochlear Neuropathy

Our understanding of one of the possible physiological bases of this condition took a leap forward after seminal discoveries were made using a mouse model. Kujawa and Liberman (2009) showed that mice exposed to a 100 dB SPL 8- to 16-kHz noise for just 2 hours lost up to half of their IHC/auditory nerve (AN) synapses in high-frequency regions permanently (probably due to excitotoxicity at AMPA glutamate receptors), despite full recovery of their sensitivity to quiet sounds. The neurons concerned subsequently degenerated. Several weeks after exposure, the amplitude of Wave I of the electrophysiological auditory brainstem response (ABR, see Figure 1), a measure of AN function, was normal at low sound levels but reduced at medium to high sound levels. This suggests that the damage affects AN fibers with high thresholds, the medium- and low-spontaneous rate (SR) fibers that are thought to encode acoustic information at medium to high levels and in background noise (Young & Barta, 1986). The role of high-threshold fibers in noise-induced cochlear neuropathy was confirmed in a study on guinea pigs (Furman, Kujawa, & Liberman, 2013). Noise exposure at 106 dB SPL (4–8 kHz) for 2 hours resulted in a reduction in suprathreshold ABR amplitude, despite full recovery of ABR thresholds at 10 days postexposure. Sampling of AN fiber single-unit responses revealed a reduction in the proportion of low- and medium-SR fibers relative to high-SR fibers.

Figure 1. An illustration of typical stimuli and recorded waveforms for two electrophysiological measures of auditory neural coding: the auditory brainstem response (ABR) and the frequency-following response (FFR).

The noise exposures used in the rodent studies, while substantial, are not atypical of the levels experienced by some humans on a regular basis. For example, in a recent study of temporary threshold shift, a noise level of 98 dBA Leq was recorded in Manchester nightclubs (Howgate & Plack, 2011). Considerable neuropathy has been observed in mice exposed to just 84 dB SPL for a week (Maison, Usubuchi, & Liberman, 2013). Hence, it is quite possible that many people with normal audiograms reporting deficits in speech intelligibility are suffering from noise-induced cochlear neuropathy of the type observed in the animal models.

Relation Between Noise Exposure and Perceptual Deficits in Humans With Normal Audiograms

It is well documented that noise exposure can lead to a temporary or permanent reduction in sensitivity through damage to the OHCs and IHCs in the cochlea (Borg, Canlon, & Engstrom, 1995). However, there is, to date, little direct evidence for a noise-induced neuropathy in humans similar to that observed in the animal studies. Indirectly, loss of high-threshold AN fibers might be associated with a reduction in the fidelity with which sounds are encoded at medium-to-high levels and might be particularly detrimental at low signal-to-noise ratios (Kujawa & Liberman, 2009). Although the literature is not extensive, there is some evidence that listeners with a history of noise exposure, but with near-normal threshold sensitivity, show deficits in complex discrimination tasks.

Alvord (1983) reported a significant deficit in word identification in background noise for 10 audiometrically normal males with a history of occupational noise exposure (including jet mechanics, firing range instructors, and helicopter crew members), compared with an audiometrically normal group of three females and seven males without a history of noise exposure. The stimuli were high-frequency word lists presented at 60 dB HL, and the mean difference in correct identification between groups was 10%. However, the groups were not audiometrically matched: The noise-exposed group had a mean absolute threshold at 4 kHz almost 10 dB greater than that for the control group. In addition, members of the noise-exposed group were slightly older on average (41 years vs. 36 years for the control group).

Kujala et al. (2004) investigated the effects of occupational noise exposure on the detection of deviant syllables and nonspeech sounds in a sequence of standard syllables. The noise-exposed group consisted of eight shipyard workers and two day-care center workers (nine males), and they were compared with a control group of 10 listeners (nine males) who had been working in quiet or moderate-noise-level occupations. The groups did not differ significantly in their audiograms up to 8 kHz and had the same mean age. However, there was a significant difference between the groups in their hit rate for detecting deviant sounds in the presence of background noise.

Stone, Moore, and Greenish (2008) measured the discrimination of noise bursts with different envelope statistics at low sensation levels (close to absolute threshold), a task dependent on the accuracy of the representation of the temporal fluctuations of the sounds. Two groups were compared: a control group of 10 listeners (five males) without a history of noise exposure, and an experimental group of six listeners (five males) who attended high-noise events (nightclubs and playing in rock bands). The control group was on average older than the noise-exposed group (29 years vs. 22 years) but had similar absolute thresholds from 2 to 4 kHz. Listeners were required to discriminate a narrowband Gaussian noise from a narrowband low-noise noise that had minimal envelope fluctuations. Both noises were centered on 2, 3, or 4 kHz. It was reasoned that the performance of this task at low sensation levels would be affected by noise-induced IHC dysfunction, and indeed performance was worse for the high-noise group as the level approached 12 dB sensation level (SL). Although these results were interpreted in terms of subclinical IHC dysfunction, they were published before the animal studies on noise-induced cochlear neuropathy. The findings of Stone et al. could possibly reflect cochlear neuropathy, although the fact that the difference between groups was seen only at low sensation levels appears inconsistent with a selective loss of high-threshold, low-SR nerve fibers.

Recently, Kumar, Ameenudin, and Sangamanatha (2012) compared a group of 28 noise-exposed train drivers (aged from 30 to 60 years) with 90 age-matched controls, on several psychophysical tasks with stimuli presented at 80 dB SL. The noise-exposed group showed deficits in amplitude modulation detection (60- and 200-Hz modulation frequencies), a duration pattern test (identifying the order of short and long tones in a sequence), and speech recognition in background babble. The results suggest that noise exposure causes a deficit in the temporal encoding of sounds, which relies on the ability of groups of neurons to accurately phase lock to the envelope or fine structure of sounds. However, the article only reports that the train drivers had hearing sensitivity within 25 dB HL from 250 Hz to 8 kHz. It is possible that there was a difference in absolute thresholds between the groups, perhaps reflecting hair-cell damage, which could have accounted for some of the deficits.

It is noteworthy that there is no clear evidence that listeners with KKS, that is, reporting hearing difficulties with a normal audiogram, have a history of greater noise exposure than do controls (Stephens, Zhao, & Kennedy, 2003). So, while a group selected on the basis of noise exposure may have hearing difficulties, it is not clear that the converse is true. It is likely that KKS reflects a more diverse set of pathologies than just noise-induced cochlear neuropathy.

Although these findings are broadly consistent with the expected effects of noise-induced cochlear neuropathy, the evidence is patchy, and as yet there has been no direct link made between noise exposure and cochlear neuropathy in humans with normal hearing thresholds. There is evidence from animal models that long-term noise exposure at levels well below those causing cochlear trauma can cause changes to the cortical tonotopic map and that these changes may adversely affect sound discrimination (for a review, see Gourevitch, Edeline, Occelli, & Eggermont, 2014). So some of the effects of noise exposure may be the result of central plasticity, rather than purely cochlear effects. Perhaps the best evidence to date that hidden cochlear neuropathy has perceptual effects in humans comes from studies of the relation between AN function and tinnitus.

Hidden Hearing Loss and Tinnitus

A study by Schaette and McAlpine (2011) provides evidence that the tinnitus (perception of phantom sound in the absence of external sound) experienced by some individuals with normal hearing sensitivity has a basis in AN damage. The patient and control groups had near-identical audiograms up to 12 kHz, were all female, and had similar mean ages. Schaette and McAlpine found that Wave I of the ABR was reduced at high sound levels in the tinnitus group (Figure 2), similar to the results from noise-exposed mice (Kujawa & Liberman, 2009). The amplitude of Wave V of the ABR (reflecting neural function in the upper brainstem) did not differ between groups.

Figure 2. The results of the study of Schaette and McAlpine (2011), showing the amplitudes of Wave I and Wave V of the ABR at two different click levels, for a group of tinnitus patients (triangles) and a group of audiogram-matched controls without tinnitus (circles).

Following Schaette and Kempter (2006), Schaette and McAlpine (2011) suggested that reduced neural output from the cochlea leads to a compensatory increase in neural gain in the auditory brainstem. The gain could lead to tinnitus due to an amplification of the spontaneous activity of auditory neurons. This is consistent with the findings of Munro and Blount (2009) regarding the effects of temporary monaural deprivation on central gain and on tinnitus (Schaette, Turtle, & Munro, 2012). Further work suggests that the gain is also evident in Wave III of the ABR, that is, at the level of the cochlear nucleus (Gu, Herrmann, Levine, & Melcher, 2012). Consistent with these findings in humans, recent work using the mouse model has revealed that noise exposure producing temporary threshold shift but permanent cochlear neuropathy produces a reduction in ABR Wave I amplitude at suprathreshold stimulation levels but no reduction in Waves II to V (Hickox & Liberman, 2014). Indeed, there was evidence for an enhancement of Wave V.

The central gain hypothesis is consistent with the observation that tinnitus and hyperacusis (diminished sound level tolerance) are comorbid conditions (Baguley, 2003), both of which can occur in listeners with clinically normal hearing sensitivity, and which are associated with increased activity in inferior colliculus (in the case of hyperacusis) and auditory cortex (Gu, Halpin, Nam, Levine, & Melcher, 2010). Noise-exposed mice with cochlear neuropathy show hypersensitivity to sound, suggestive of a link between AN damage and hyperacusis (Hickox & Liberman, 2014), although the increased sensitivity was not observed at the highest levels tested (100 dB SPL and greater), which is not consistent with hyperacusis in humans.

The results of Schaette and McAlpine (2011) suggest that some individuals with normal hearing have a cochlear neuropathy linked to a significant perceptual dysfunction. The cause of the neuropathy in the tinnitus group is unclear, although it is tempting to speculate that it may have been noise exposure. Interestingly, it has been shown that people with tinnitus and normal hearing sensitivity have deficits in tone detection in noise (Weisz, Hartmann, Dohrmann, Schlee, & Norena, 2006) and intensity discrimination (Epp, Hots, Verhey, & Schaette, 2012). These might be further manifestations of cochlear neuropathy.

Hidden Hearing Loss and Temporal Coding Deficits

One of the most remarkable aspects of audition is the ability of auditory neurons to encode the fine-grained temporal characteristics of sounds. Neurons in the caudal portion of the auditory pathway encode the temporal features of sounds by their tendency to synchronize their firing, or phase lock, to features in the temporal fine structure and temporal envelope. Such precise temporal coding, with a resolution of less than a millisecond, is fundamental to the way the auditory system represents and processes sounds and is thought to be particularly important for auditory localization, pitch perception, and speech perception in noise (Moore, 2008).

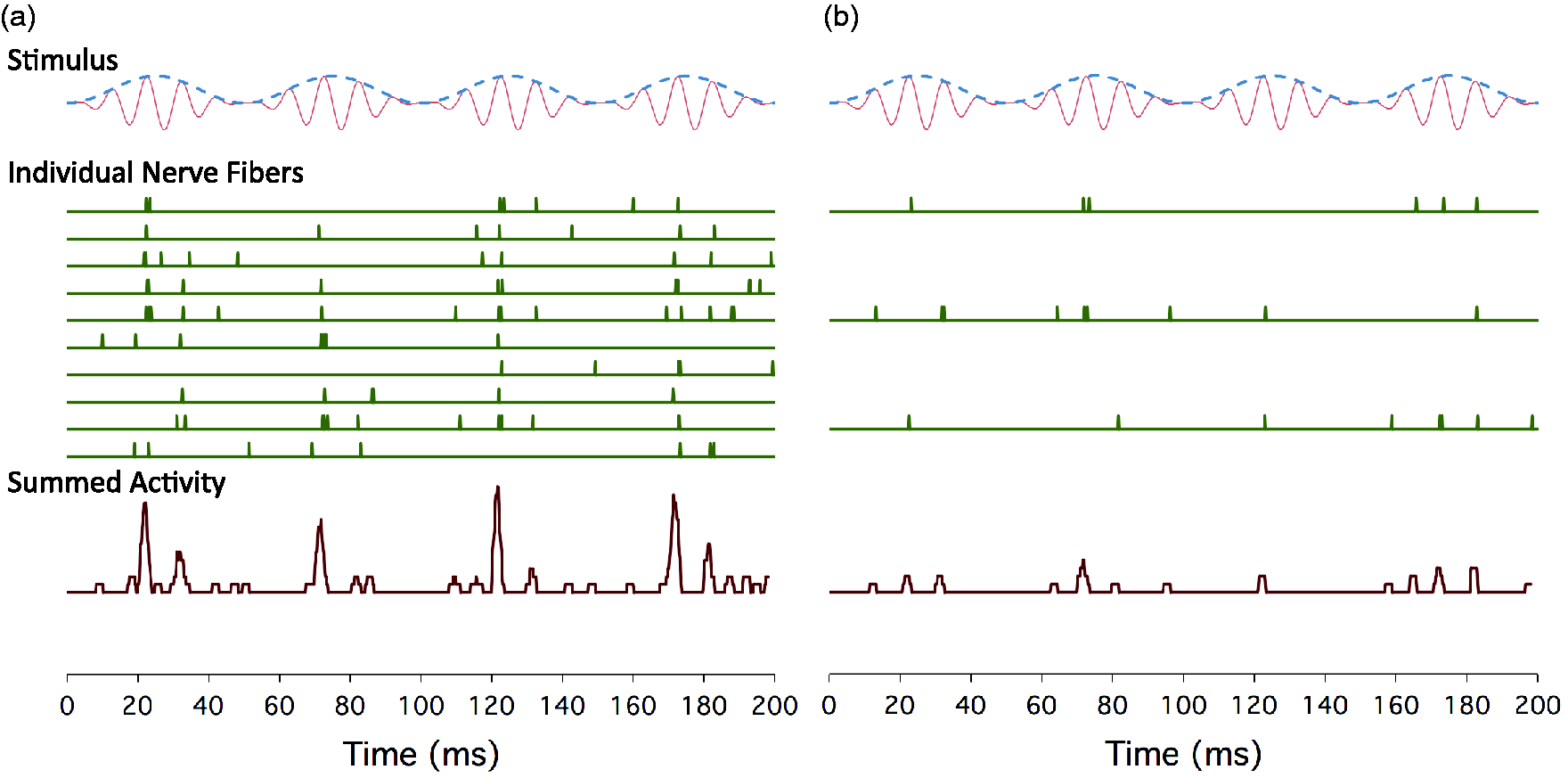

In a review article, Bharadwaj, Verhulst, Shaheen, Liberman, and Shinn-Cunningham (2014) argue that loss of high-threshold AN fibers may affect both fine-structure and envelope coding (Figure 3) and is likely to be especially detrimental to envelope coding at medium-to-high levels. At these levels, the low-threshold, high-SR fibers are saturated and synchronize poorly to envelope fluctuations (Joris & Yin, 1992). The frequency-following response (FFR) is an electrophysiological measure of sustained neural temporal coding, reflecting the combined phase-locked activity of neurons in the rostral brainstem (Krishnan, 2006, see Figure 1). The FFR, at least at low frequencies, is not thought to reflect AN activity directly, but it may reflect the degradation in central temporal coding resulting from cochlear neuropathy. Ruggles, Bharadwaj, and Shinn-Cunningham (2011) reported that the envelope FFR is related to performance on an auditory selective attention task dependent on temporal cues, for listeners with normal audiometric thresholds. With respect to temporal fine structure, Marmel et al. (2013) reported that the strength of the FFR to a 660-Hz pure tone is correlated with behavioral frequency discrimination for that tone, even after the effects of hearing threshold and age are controlled. Finally, there is evidence that neural temporal coding, as reflected by the FFR, is related to speech perception in noise in young adults (Song, Skoe, Banai, & Kraus, 2011).
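The dependence of envelope coding on the number of surviving fibers can be explored with a deliberately simplified sketch. It is not the simulation of Bharadwaj et al. (2014) and it is not a physiological AN model: each fiber is treated as an inhomogeneous Poisson process whose rate follows a sinusoidal envelope, with no refractoriness, saturation, or distinction between SR classes. All names and parameter values (mean rate, modulation depth, modulation frequency, duration) are assumptions chosen for illustration.

```python
import cmath
import math
import random


def simulate_fiber(rng, mean_rate, mod_depth, mod_freq, duration):
    """Spike times (s) from an inhomogeneous Poisson process (thinning method).

    Instantaneous rate: mean_rate * (1 + mod_depth * sin(2*pi*mod_freq*t)).
    """
    peak_rate = mean_rate * (1.0 + mod_depth)
    spikes = []
    t = rng.expovariate(peak_rate)
    while t < duration:
        rate = mean_rate * (1.0 + mod_depth * math.sin(2 * math.pi * mod_freq * t))
        if rng.random() < rate / peak_rate:  # keep candidate with prob rate/peak
            spikes.append(t)
        t += rng.expovariate(peak_rate)
    return spikes


def pooled_synchrony(n_fibers, seed=0, mean_rate=50.0, mod_depth=0.8,
                     mod_freq=100.0, duration=0.5):
    """Return (vector_strength, synchrony_snr) for spikes pooled across fibers."""
    rng = random.Random(seed)
    spikes = []
    for _ in range(n_fibers):
        spikes.extend(simulate_fiber(rng, mean_rate, mod_depth, mod_freq, duration))
    n = len(spikes)
    if n == 0:
        return 0.0, 0.0
    # Sum of unit phasors at each spike's phase within the modulation cycle.
    resultant = sum(cmath.exp(2j * math.pi * mod_freq * t) for t in spikes)
    vector_strength = abs(resultant) / n       # degree of phase locking
    synchrony_snr = abs(resultant) / math.sqrt(n)  # locked sum vs. chance level
    return vector_strength, synchrony_snr


# Compare an intact population with populations that have lost fibers.
for n_fibers in (100, 50, 25):
    vs, snr = pooled_synchrony(n_fibers)
    print(n_fibers, round(vs, 2), round(snr, 1))
```

Under these assumptions, the vector strength of the pooled response stays roughly constant as fibers are removed, but the synchronized component falls relative to the chance level expected from unsynchronized spikes, roughly in proportion to the square root of the number of pooled spikes. The sketch therefore captures only the effect of reduced fiber count, not the selective loss of high-threshold fibers discussed above.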

Figure 3. A simulation of phase-locked auditory nerve activity in response to an amplitude modulated pure tone, illustrating the effects of loss of auditory nerve fibers on temporal coding.

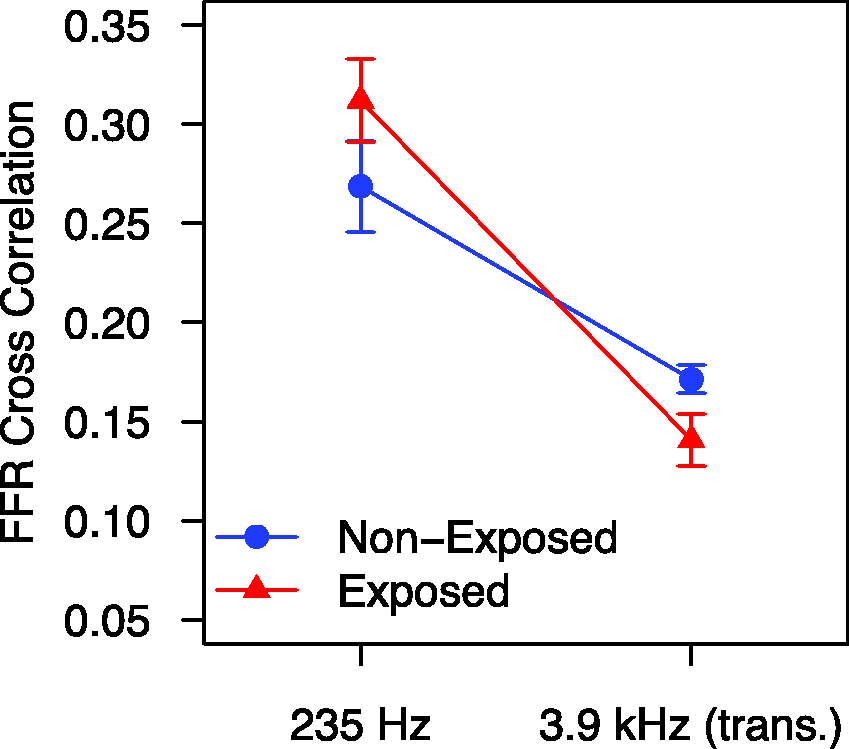

Preliminary data suggest that the FFR may be sensitive to the effects of noise exposure (Barker, Hopkins, Baker, & Plack, 2014). The FFR was recorded to a 235-Hz pure tone and to a 3.9-kHz pure tone amplitude modulated by a half-wave rectified 235-Hz pure tone (this stimulus is referred to as a transposed tone). Two groups of 15 young, audiometrically normal females were tested, audiogram-matched up to 4 kHz. One group had a history of nightclub attendance, and the other had little history of recreational noise exposure. It was reasoned that the effects of noise exposure would be greatest for the high-frequency transposed tone, and, indeed, there was a significant reduction in FFR strength at 235 Hz for the transposed tone in the noise-exposed group (Figure 4). The ratio of FFR strength between the transposed tone and pure tone was also significantly less for the noise-exposed group.

Figure 4. Unpublished results from the conference presentation of Barker et al. (2014), showing FFR synchrony to a 235-Hz pure tone and to a 235-Hz tone transposed to 3.9 kHz (i.e., a 3.9-kHz pure-tone carrier amplitude modulated at 235 Hz), for groups of listeners with (triangles) and without (circles) a history of recreational noise exposure.
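For readers unfamiliar with transposed tones, the stimulus described above (a 3.9-kHz carrier multiplied by a half-wave rectified 235-Hz sinusoid) can be sketched as follows. The sample rate, duration, and function name are assumptions, and this is not the stimulus-generation code of Barker et al. (2014). Published implementations typically low-pass filter the rectified modulator before multiplication; that step is omitted here for brevity.

```python
import math


def transposed_tone(carrier_hz=3900.0, mod_hz=235.0, duration_s=0.1,
                    sample_rate_hz=48000):
    """Carrier multiplied by a half-wave rectified low-frequency sinusoid.

    Returns a list of samples in the range -1 to 1. The sample rate and
    duration defaults are illustrative assumptions.
    """
    n_samples = int(round(duration_s * sample_rate_hz))
    samples = []
    for i in range(n_samples):
        t = i / sample_rate_hz
        # Half-wave rectification: negative half-cycles of the modulator are zeroed.
        modulator = max(0.0, math.sin(2 * math.pi * mod_hz * t))
        carrier = math.sin(2 * math.pi * carrier_hz * t)
        samples.append(modulator * carrier)
    return samples


stimulus = transposed_tone()
print(len(stimulus))  # 4800 samples for 0.1 s at 48 kHz
```

The resulting envelope repeats at 235 Hz, so the temporal pattern normally carried by a low-frequency tone is presented to a high-frequency cochlear region, where the effects of noise exposure were expected to be greatest.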

Hidden Hearing Loss Due to Aging

Age is related to hearing deficits, even in the absence of significant elevation in audiometric thresholds. Speech intelligibility in background noise declines with age even when there is no significant increase in audiometric thresholds (Dubno, Dirks, & Morgan, 1984; Rajan & Cainer, 2008). In particular, psychophysical and electrophysiological studies show that neural coding of both temporal fine structure and temporal envelope declines with age in humans (Clinard & Tremblay, 2013; Clinard, Tremblay, & Krishnan, 2010; He, Mills, Ahlstrom, & Dubno, 2008; Hopkins & Moore, 2011; A. King, Hopkins, & Plack, 2014; Marmel et al., 2013, Figure 5(a); Moore, Vickers, & Mehta, 2012). These temporal coding deficits can occur independently from an increase in audiometric threshold (Clinard & Tremblay, 2013; A. King et al., 2014; Marmel et al., 2013). As described earlier, there is evidence that neural temporal coding is related to speech perception in noise in young adults (Song, Skoe, Banai, & Kraus, 2011), although it remains unclear how this relation is affected by age. There is some evidence that middle-aged listeners rely more heavily on temporal fine structure (rather than temporal envelope) information, which may lead to a speech perception impairment in reverberant environments (Ruggles, Bharadwaj, & Shinn-Cunningham, 2012).

Figure 5. The results of the study of Marmel et al. (2013).

These effects of age could be the result, at least in part, of an accumulation of noise-induced cochlear neuropathy over the lifetime. Indeed, there is evidence that noise exposure exacerbates the effects of age (Gates, Schmid, Kujawa, Nam, & D'Agostino, 2000), perhaps due in part to an accumulation of AN damage (Kujawa & Liberman, 2006). However, results using a mouse model suggest that aging per se, in the absence of significant noise exposure, is associated with a loss of IHC synaptic ribbons and a reduction in ABR Wave I amplitude at high levels (Sergeyenko, Lall, Liberman, & Kujawa, 2013), perhaps reflecting high-threshold fiber loss. Similar to the noise exposure studies, the results show that such a loss occurs before a loss of threshold sensitivity or a reduction in hair cell counts. Consistent with the animal findings, a recent postmortem investigation of human temporal bones from individuals without hair cell loss revealed that the number of spiral ganglion cells (SGCs) declines at a rate of about 100 per year on average (Makary, Shin, Kujawa, Liberman, & Merchant, 2011). By the time an individual has reached 91–100 years of age, about a third of the SGCs present at birth have been lost on average. Also consistent with the mouse model, in the human temporal bone study, neural loss preceded changes in the pure-tone audiogram (audiometric changes were only apparent for ages of 60 years and above). The study found that there was considerable variability in the number of SGCs lost by each age of mortality. Some individuals who died in their teens had substantially lower numbers of SGCs than the mean for young individuals, whereas others in their 70s retained an almost complete set. Such variability may in part be due to varying environmental factors, including noise exposure (Makary et al., 2011).

Aging has been shown to diminish the amplitudes of Waves I, III, and V of the ABR, and this result holds even when the effects of absolute threshold and noise exposure history are controlled statistically (Konrad-Martin et al., 2012). This result is consistent with an age-related loss of AN fibers, although there does not seem to be a recovery of Waves III and V amplitudes that might be predicted by the central gain hypothesis (Schaette & McAlpine, 2011). Konrad-Martin et al. (2012) suggest that aging may also be associated with a loss of fibers central to the AN, as the age-related reduction in Wave III was still apparent when Wave I amplitude was controlled.

Loss of AN fibers might account for the age-related deficit in temporal coding in humans, as the information carried by the AN to the brain is related to the number of active fibers (Figure 3). However, another possibility is that the precision of synchrony across fibers is affected (He et al., 2008; Konrad-Martin et al., 2012; Ross, Fujioka, Tremblay, & Picton, 2007), perhaps due to a reduction in the speed of transmission of action potentials in individual fibers. This could account in part for the reduction in ABR amplitudes (Konrad-Martin et al., 2012). Aging is associated with the degeneration of myelin sheaths, both in the central nervous system (Peters, 2002, 2009) and in the human and mouse AN (Xing et al., 2012). Electrophysiological measures suggest that aging is associated with an increase in response latency in the AN and brainstem (Konrad-Martin et al., 2012; Marmel et al., 2013, Figure 5(b); Rupa & Dayal, 1993). Another factor that may underlie some age-related perceptual deficits is the age-related decline in the function of the medial olivocochlear efferent system, which can occur prior to OHC degeneration (Jacobson, Kim, Romney, Zhu, & Frisina, 2003).

Summary and Future Directions

To summarize the current state of knowledge, there is good evidence from animal studies that noise exposure can cause a dramatic loss of AN fibers without affecting hearing sensitivity. There is some evidence from human studies that noise exposure may be associated with deficits in suprathreshold discrimination tasks and neural temporal coding (consistent with AN damage), in the absence of a reduction in hearing sensitivity. Finally, there is evidence that tinnitus is associated with cochlear neuropathy, in the absence of a reduction in hearing sensitivity. However, to date, no direct link has been made between the physiological results and the perceptual deficits (Figure 6). We are still waiting for the seminal study that links AN dysfunction with perceptual deficits in a group of noise-exposed listeners with normal audiograms.

Figure 6. Hypothetical causal links between noise-induced loss of AN fibers (cochlear neuropathy), deficits in the neural coding of temporal and intensity fluctuations, deficits in laboratory-based psychoacoustic tasks (lab. tasks), and deficits in real world hearing ability.

Several laboratories are currently investigating neural function in noise-exposed listeners using electrophysiological techniques such as the ABR and FFR. Within the next few years, it is likely that we will be able to provide concrete evidence for or against the hypothesis that noise-induced cochlear neuropathy is a major cause of suprathreshold hearing difficulties in humans.

With respect to aging, the pattern is similar, with good evidence from animal and human studies for a loss of AN fibers with age, and evidence from human studies that aging per se, in the absence of threshold elevation, is associated with deficits in neural temporal coding and speech discrimination in noise. However, there is little evidence so far for a direct link between cochlear neuropathy and perceptual deficits.

Of course, it is likely that listeners with an audiometric hearing loss due to noise exposure or aging also have a hidden cochlear neuropathy. Determining the prevalence of this condition, and how the neuropathy contributes to the perceptual deficits, is an important issue. Understanding the relative contributions of IHC damage, OHC damage, and neuropathy to the perceptual deficits will require a very carefully controlled study and represents a considerable challenge for future investigations.

Finally, if hidden loss proves to be a major contributor to hearing dysfunction, we will need a diagnostic test with sufficient sensitivity and specificity to diagnose the condition in the clinic. The current results, such as they are, show effects at the group level, but we really need a test that can identify the condition reliably in individual patients. In the short term, such a test would allow us to identify at-risk individuals, perhaps before any elevation in audiometric thresholds becomes apparent. This could be used to provide health-care advice, particularly regarding exposure to noise, and could become part of the regular health surveillance in workplaces with a high level of background noise. Long-term treatments for hidden loss, such as neurotrophins to reconnect damaged nerve terminals, or even stem-cell therapies to replace lost fibers (Chen et al., 2012), may become available. There is also the possibility that the condition may be managed using hearing aids with directional microphones and other signal-processing strategies to improve speech discrimination in noise.

The arrival of a diagnostic test would of course make the term hidden hearing loss redundant. But for now, this is an apt descriptor of this intriguing, and somewhat troubling, condition, which may be a significant cause of hearing difficulties for many millions of people worldwide.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Medical Research Council (MR/L003589/1) and Action on Hearing Loss.

Acknowledgments

The authors thank the Editor in Chief, an anonymous reviewer, and Brian Moore for constructive comments on an earlier version of this manuscript.