Abstract

This study assesses the potential use of artificial intelligence-programmed managers in the workplace through two experiments that manipulated source cues and time cues. Data were collected before the Novel Coronavirus pandemic and then 3 years after the pandemic’s outbreak when many businesses had returned to normal operations and ChatGPT had been released. Results held across the two experiments. Neither time nor source automation cues had an impact on the affective impressions participants formed of the simulated email exchange. Attention check data further suggest that time cues may no longer be a relevant predictor of impression formation in workplace communication.

With the rise of artificial intelligence (AI) and machine learning, experts (e.g., Chamorro-Premuzic, 2016; Kruse, 2018) have suggested that robot managers have the potential to improve organizational performance and business operations. AI-managed automation can be used for mundane and repetitive tasks, allowing companies to prioritize tasks that need close human oversight (Abdullahi, 2024). This gives businesses more flexibility in employee management and could drastically alter how organizations interact with their employees (Getchell et al., 2022). Automated managerial communication falls under the umbrella of artificial intelligence, which refers to the “computational systems that involve algorithms, machine learning methods, natural language processing, and other techniques that operate on behalf of an individual to improve a communication outcome” (Hancock et al., 2020, p. 25). Automated messages can be delivered via texts, emails, and internal-organizational web platforms. Such communication can streamline work processes, contribute to employee efficiency, and deliver personalized communication to employees (DesRochers, 2024).

As it relates to managerial communication, AI’s potential has mostly been suggested and theorized to date. There is limited research on how AI has impacted managerial communication and organizational communication (Getchell et al., 2022). Furthermore, the impact of automated communication on employee-organization relationships remains unclear. To address an empirical gap regarding the message receiver’s role orientation in AI-based situations, we report the findings from the same experiment conducted twice, in which email messages to employees were manipulated to vary two message cues. Specifically, we ran a 2 (message timing) × 2 (source characteristic) experiment with similar groups at two different points in time, once in 2019 before the outbreak of the Novel Coronavirus (COVID-19) and once in 2023, after many organizations had returned to standard business operations at the endemic phase of the Coronavirus and ChatGPT had been released to the public. Participants in the two study phases were asked to respond as employees in a fictional scenario to messages that were sent either during traditional work hours or at a time outside of traditional work hours (very early morning) and to messages that were sent either with an indicator that the message was automatically sent or without that indicator of automation. After viewing these messages, participants were then asked to respond as employees to questions about their views on the quality of the employee-organization relationship in this scenario.

We combined the findings from these two experiments into one report for two replication-related reasons. First, the Coronavirus pandemic disrupted many traditional organizational routines, notably when people work and how they utilize information communication technologies for work purposes at home (Iogansen et al., 2024; Pennington et al., 2022). Based on our review of the literature after conducting the original experiment in 2019, we surmised that employees would be naturally mindful of time-shifts in work because of the pandemic. Second, as we will report in our Experiment 2 rationale, some characteristics of our experiment surfaced in 2022 with the arrival of ChatGPT. In conducting the experiment a second time, we wanted to assess the extent to which both time and system/source cues could have been activated in our automated-managerial communication scenario.

Literature Review

Theorizing informed by the computer-mediated communication (CMC) literature has suggested that AI-related tools have the potential to support and mediate business communication in numerous contexts (Hancock et al., 2020). AI-assisted communication can help with five areas of employee management: facilitating payroll management, onboarding new employees, training new employees, helping open new forms of collaboration, and streamlining scheduling (Tyson & Wilson, 2025). It stands to reason that, whatever the level of deployment of AI tools in their organizations at this point, employees are coming to grips with and adjusting to new forms of automated communication at work.

A number of studies have addressed AI’s potential for external and internal business communication. For example, research suggests that AI can foster closer relationships between consumers and brands (Bergner et al., 2023). If used ethically, AI can also support local journalism, giving AI a potential impact on information distribution in managed contexts (Ahmad et al., 2023; Forja-Pena et al., 2024). Research has also found that AI helps with organizational listening (Men et al., 2022), AI-powered chatbots can provide students with a rich level of engagement with coursework (Littell & Peterson, 2024), and employee uncertainty about AI negatively predicts job performance (Matsunaga, 2021). Yet even as scholars have made notable contributions to the literature on business communication, there is a need to understand the specific ways in which AI and automated messages impact impression formation in the workplace.

AI systems generate distinct perceptual cues about the content of the messages that should influence how one responds to these messages (Hancock et al., 2020; Lew & Walther, 2022; Sundar, 2020). Scholars need to further investigate the specific attributes of the functions and operations in these systems that can shape users’ expectations, perceptions, and experiences with these tools (Sundar, 2020). As a grounding point in research in this area, AI-mediated communication can be characterized by five elements: the magnitude of the technology’s impact on the message, the media type in which the AI system operates, the optimization goal for AI’s impact on the message, the relative autonomy of the AI tool’s communication, and the role that the AI tool is enacting (such as message receiver or message sender) (Hancock et al., 2020). The last element, source role, is the first condition that we examine in our experiments. Even with notable theoretical contributions about how source cues in AI environments may impact users (see helpful reviews by Hancock et al., 2020; Sundar, 2020), our review of extant research suggests that key aspects of AI’s ability or inability to trigger heuristic-informed responses among users in the workplace are overlooked. How source-automated communication compares to nonautomated communication also warrants investigation.

In addition to source cues, we were also interested in research from the CMC literature (e.g., Cox et al., 2020; Kalman & Rafaeli, 2011) that highlights the importance of the timed delivery of messages to receivers in mediated contexts. This area of research falls under the category of chronemics, which are structural features of online systems that can include timestamps in messages when they are sent (Walther & Tidewell, 1995). Such features serve as contextual cues that impact the exchange and intimacy of information, particularly when the messages are text-only as with email (Walther & Tidewell, 1995). Chronemic cues are vital in impression formation, particularly in shaping how the deliverer of information is perceived (Kalman et al., 2013). Notably, Walther and Tidewell (1995) found that the timing of responses to messages can impact the perceived intimacy of those messages. More recently, scholars have established several clear patterns when it comes to the timing of messages in CMC contexts: Pauses in between communication can negatively impact trust in dyadic exchanges (Kalman et al., 2013); employees prioritize their email responses based on how quickly a response is believed to be expected of them (Cox et al., 2020); and organizational norms develop around the timing of emails and this can predict employee turnover intentions (Byun & Kirsch, 2021).

To the latter point on norms, employees typically respond first to those emails in which the sender expects a response (Byun & Kirsch, 2021). From this, we can infer that a manager’s email communication should rise in priority for either a response or a response-related action. This raises questions about how a manager’s email sent during a traditional nonwork hour (very early morning) might prompt different responses among employees, and affective events theory provides a useful lens for such consideration.

Importance of managerial communication

Through the lens of affective events theory (AET; Weiss & Cropanzano, 1996), we consider a cluster of five interrelated outcomes that could stem from chronemic cue-influenced and automated/nonautomated managerial communication. AET suggests that work-related events can lead to immediate emotional responses by employees and can subsequently impact job outcomes such as job satisfaction and organizational commitment (Mignonac & Herrbach, 2004; Scott & Judge, 2006). Employees’ responses to managerial communication can be positive or negative and long-lasting or short-term (Houkes et al., 2003; Luo & Chea, 2018; Weiss & Cropanzano, 1996).

Outside of the AET framework, research supports the idea that perceptions of managerial communication can shape both employee satisfaction (Dasgupta et al., 2013; Erben et al., 2019) and employee commitment to the organization (Dasgupta et al., 2013; Raina & Roebuck, 2014). AET provides a rationale for how this might occur: An individual makes an immediate assessment (for good or bad) of a precipitating event and then an emotional response follows (Li et al., 2022; Weiss & Cropanzano, 1996). While a direct reaction to a triggering exchange, employees’ affective responses can also have implications for longer-term consequences such as employee commitment (Houkes et al., 2003). From AET, it would appear that both employee satisfaction and employee commitment could be shaped by direct communication from one’s immediate supervisor. These two were the first outcomes we decided on for our experiments.

We were interested in three additional responses to managerial communication that are related: dominance, affection, and affect. While not framed by AET, an experiment by Walther and Tidewell (1995) has relevance to this discussion on employees’ affective responses to their managers’ communication. This early experiment found that the timing of managerial messages predicted the degree to which an employee would perceive the messages as conveying warmth and affection and the degree to which an employee would see the messages as seeking to assert dominance in the relationship. The two outcomes of affection and dominance are important because they are the most basic for describing the interpersonal interactions between two or more individuals (Walther & Tidewell, 1995). If a message from a supervisor is perceived positively, it should appear both warm and less dominant. Finally, we were interested in a construct that relates to affection: affect. As a state, this can range from positive to negative (Watson et al., 1988). Widely used in the literature as a proxy for mood or emotion, affect can fluctuate over time or in response to specific emotional events (Tran, 2020).

While research has pointed to the relationships between time and source cues and related outcomes in various supervisor contexts, this has not extended to the realm of automated managerial communication. To address this gap, we sought to answer the following research questions in our experiments:

Research Question 1: How do source cues (automated or not) in managerial communication impact perceptions of the message and its receiver?

Research Question 2: How do chronemic cues (work hours or not) in managerial communication impact perceptions of the message and its receiver?

Study 1 Method

A 2 (time) × 2 (source), between-subjects online experiment was conducted in Fall 2019 involving participants at a midsized university in the Midwestern United States. Participants read two emails from a manager to an employee (Appendix A). In each condition, the first email was the same, and it included a request made of the participant as an employee in the simulated scenario. The second email was a follow-up email making the request again. It included a time stamp suggesting that it was sent either during work hours or during nonwork hours (time manipulation), and also included a signature line saying that the email was automated or not (source manipulation). After reading one of these four pairs of emails, participants responded to several measures examining how they think the employee would perceive the message and what they think the nature of the relationship between the manager and the employee is. The condition breakdown was nearly evenly distributed in the final used sample (work hours without automated message: n = 26; work hours and automated message: n = 24; after-hours without automated message, n = 24; after-hours and automated message, n = 25).

Participants

A total of 101 participants were recruited through the authors’ on-campus Listserv and through social media. Removal of two incomplete responses left 99 for analysis. These participants ranged in age from 18 to 33 years (M = 19.20, SD = 2.42), with one participant not reporting their age. Most reported their ethnicity as Caucasian (87, 87.9%) and were roughly evenly split in reported gender (46 male, 46.5%; 52 female, 52.5%; 1 person did not respond). A majority of participants reported that they were not currently working (57, 57.6%).

Measures

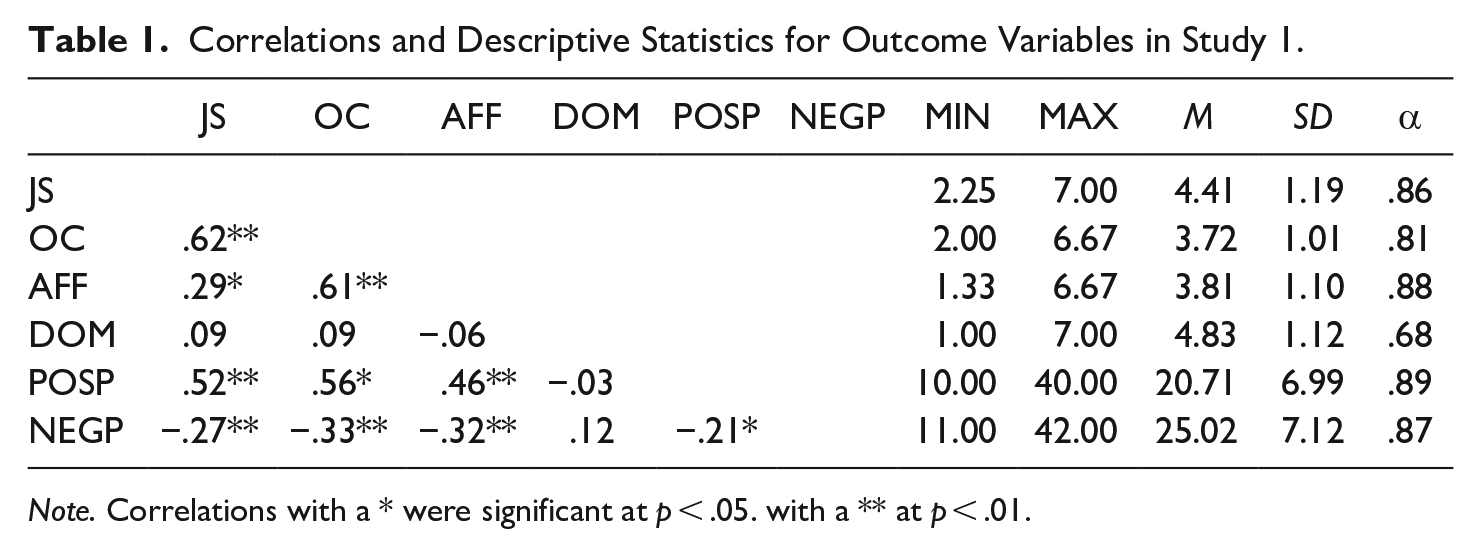

Participants were asked to respond to a variety of measures after reading two emails they received. Items were recoded as necessary so that higher scores meant higher levels of the variable measured. See Table 1 for correlations and descriptive statistics of each variable.

Table 1. Correlations and Descriptive Statistics for Outcome Variables in Study 1.

Note. Correlations with a * were significant at p < .05; those with a ** were significant at p < .01.

Job satisfaction

To measure participants’ perception of how satisfied the employee receiving the emails would be, Eisenberger et al.’s (1997) measure of job satisfaction was adapted. Using a 7-point response set (1 = strongly agree; 7 = strongly disagree), this adaptation consisted of six Likert-type items (e.g., “If a good friend of mine told me that they were interested in this job, the employee would recommend it.”). This scale had acceptable reliability (α = .86).
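The recoding and reliability steps described for these scales can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' analysis script: the function names `reverse_code` and `cronbach_alpha` and the small response matrix are hypothetical, and the alpha formula is the standard one based on item variances and the variance of the summed scale.

```python
from statistics import variance

def reverse_code(score, scale_min=1, scale_max=7):
    """Flip a Likert response so that 1 <-> 7, 2 <-> 6, and so on."""
    return scale_min + scale_max - score

def cronbach_alpha(responses):
    """Cronbach's alpha for a list of respondents, each a list of item scores."""
    k = len(responses[0])                                   # number of items
    item_vars = [variance(col) for col in zip(*responses)]  # variance of each item
    total_var = variance([sum(row) for row in responses])   # variance of summed scores
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)

# Hypothetical raw responses on a response set where 1 = strongly agree
# and 7 = strongly disagree (made-up numbers, for illustration only).
raw = [
    [2, 1, 2],
    [3, 3, 4],
    [6, 5, 6],
    [5, 6, 6],
    [1, 2, 1],
]

# Recode so that higher scores mean higher levels of the variable.
recoded = [[reverse_code(x) for x in row] for row in raw]
alpha = cronbach_alpha(recoded)
print(round(alpha, 2))
```

Reverse-coding every item leaves alpha unchanged; recoding matters for reliability only when some items are worded in the opposite direction from the rest.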

Organizational commitment

Organizational commitment (perceived) of the employee receiving the messages was measured with an adapted version of Allen and Meyer’s (1990) affective organizational commitment scale. Using a 7-point scale (1 = strongly agree; 7 = strongly disagree), this adaptation consisted of six Likert-type items (e.g., “This employee would be very happy to spend the rest of their career with this organization.”). This scale had acceptable reliability (α = .81).

Affection

How much affection participants suggested message recipients would feel about the message received was measured by adapting the perceived affection part of Burgoon and Hale’s (1987) relational communication questionnaire (see Walther & Tidewell, 1995). Using a 7-point response set (1 = strongly agree; 7 = strongly disagree), this adaptation consisted of nine Likert-type items (e.g., “The manager communicated coldness rather than warmness.”). This scale had acceptable reliability (α = .88).

Dominance

The perceived dominance part of Burgoon and Hale’s (1987) relational communication questionnaire was adapted to measure how dominant participants thought message receivers would perceive the messages to be. Using a 7-point response set (1 = strongly agree; 7 = strongly disagree), this adaptation consisted of six Likert-type items. This full scale had an unacceptably low reliability (α = .45). In the end, a scale was created using two of the six items (“The manager tried to control the interaction.”; “The manager had the upper hand in the conversation with the employee.”). This two-item scale had a reliability of α = .68.

Affect

Finally, participants rated how the employee felt when reading the second email using the PANAS (Watson et al., 1988). This measure assesses both positive and negative affect, with higher scores on the positive measure meaning more positive affect, and lower scores on the negative measure meaning less negative affect. Each affect is measured by responding to 10 words (e.g., interested; positive affect, and distressed; negative affect) using a 5-point response set to suggest how much of that affect the person would feel (1 = very slightly or not at all; 5 = extremely) and adding scores. Positive affect had an acceptable reliability (α = .89).
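The summed scoring described above can be sketched as follows. The word lists are the 20 PANAS adjectives as they are commonly published; the function name `score_panas` and the example ratings are hypothetical and are not taken from the study materials.

```python
# PANAS adjectives as commonly listed (10 positive, 10 negative).
POSITIVE = ["interested", "excited", "strong", "enthusiastic", "proud",
            "alert", "inspired", "determined", "attentive", "active"]
NEGATIVE = ["distressed", "upset", "guilty", "scared", "hostile",
            "irritable", "ashamed", "nervous", "jittery", "afraid"]

def score_panas(ratings):
    """Return (positive_affect, negative_affect) as sums of 1-5 ratings.

    Each subscale therefore ranges from 10 to 50.
    """
    for word in POSITIVE + NEGATIVE:
        if not 1 <= ratings[word] <= 5:
            raise ValueError(f"rating for {word!r} must be between 1 and 5")
    positive = sum(ratings[word] for word in POSITIVE)
    negative = sum(ratings[word] for word in NEGATIVE)
    return positive, negative

# Hypothetical participant: moderate positive affect, minimal negative affect.
example = {word: 3 for word in POSITIVE}
example.update({word: 1 for word in NEGATIVE})
pa, na = score_panas(example)
print(pa, na)
```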

Study 1 Results

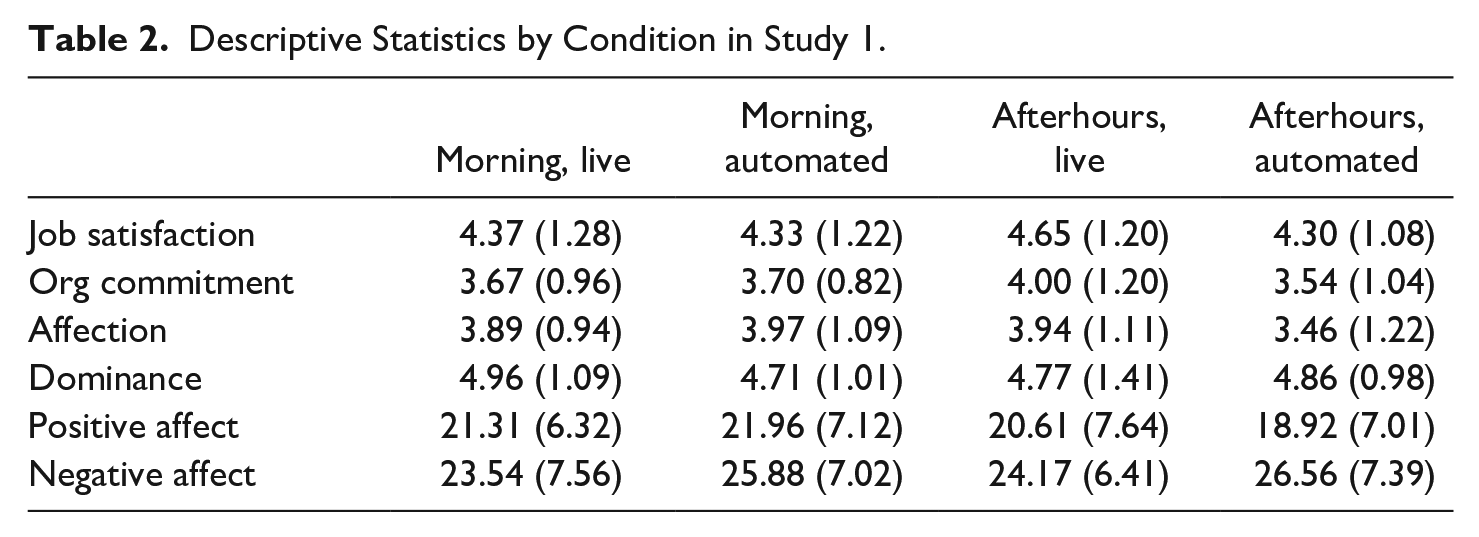

To look for differences based upon the source and time cues, 2 × 2 analyses of variance (ANOVAs) were run for each dependent variable. Overall, there were no statistically significant differences found, and thus, there were no main effects of time or source for any of these dependent variables. Nor were there interaction effects found (see Table 2).

Table 2. Descriptive Statistics by Condition in Study 1.

The ANOVA for job satisfaction showed no differences for time, F(1, 94) = 0.27, p = .608, η2 = .00, or source, F(1, 94) = 0.86, p = .440, η2 = .01, nor the interaction between time and source, F(1, 94) = 0.60, p = .522, η2 = .00. There were also no differences for time, F(1, 93) = 0.18, p = .671, η2 = .00, or source, F(1, 93) = 1.08, p = .301, η2 = .01, nor the interaction between time and source, F(1, 93) = 1.41, p = .239, η2 = .01, on organizational commitment.

The ANOVA run for perceived affection also found no differences for time, F(1, 95) = 1.13, p = .290, η2 = .01, or source, F(1, 95) = 0.80, p = .372, η2 = .01, nor the interaction between time and source, F(1, 95) = 1.63, p = .205, η2 = .02. There were also no differences for time, F(1, 95) = 0.01, p = .932, η2 = .00, or source, F(1, 95) = 0.13, p = .720, η2 = .00, nor the interaction between time and source, F(1, 95) = 0.56, p = .455, η2 = .01, on perceived dominance.

Finally, the ANOVA run for positive affect found no differences for time, F(1, 93) = 1.72, p = .193, η2 = .02, or source, F(1, 93) = 0.133, p = .716, η2 = .00, nor the interaction between time and source, F(1, 93) = 0.67, p = .414, η2 = .01. There were also no differences for time, F(1, 95) = 0.21, p = .648, η2 = .00, or source, F(1, 95) = 2.723, p = .102, η2 = .03, nor the interaction between time and source, F(1, 95) = 0.00, p = .984, η2 = .00, on negative affect.
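For readers who want to see how the F and effect-size values in a 2 × 2 between-subjects ANOVA are built, the following is a minimal sketch. It assumes equal cell sizes, which the study did not have (unequal cells would normally be handled with Type II or Type III sums of squares in statistical software), and it assumes the reported η2 values are partial eta-squared. The function names and the toy data are hypothetical.

```python
from statistics import mean

def two_by_two_anova(cells):
    """Balanced 2 x 2 between-subjects ANOVA.

    cells maps (time_level, source_level) -> list of scores.
    Assumes every cell has the same number of scores.
    """
    n = len(next(iter(cells.values())))
    if any(len(scores) != n for scores in cells.values()):
        raise ValueError("this sketch only handles equal cell sizes")
    times = sorted({t for t, _ in cells})
    sources = sorted({s for _, s in cells})

    grand = mean(x for scores in cells.values() for x in scores)
    cell_mean = {key: mean(scores) for key, scores in cells.items()}
    time_mean = {t: mean(cell_mean[(t, s)] for s in sources) for t in times}
    source_mean = {s: mean(cell_mean[(t, s)] for t in times) for s in sources}

    # Sums of squares for the two main effects and their interaction.
    ss = {
        "time": n * len(sources) * sum((time_mean[t] - grand) ** 2 for t in times),
        "source": n * len(times) * sum((source_mean[s] - grand) ** 2 for s in sources),
        "time x source": n * sum(
            (cell_mean[(t, s)] - time_mean[t] - source_mean[s] + grand) ** 2
            for t in times for s in sources
        ),
    }
    # Within-cell (error) sum of squares and its degrees of freedom.
    ss_error = sum((x - cell_mean[key]) ** 2
                   for key, scores in cells.items() for x in scores)
    df_error = len(cells) * (n - 1)

    results = {}
    for effect, ss_effect in ss.items():
        df_effect = 1  # every effect in a 2 x 2 design has one degree of freedom
        results[effect] = {
            "F": (ss_effect / df_effect) / (ss_error / df_error),
            "df": (df_effect, df_error),
            "partial_eta_sq": ss_effect / (ss_effect + ss_error),
        }
    return results

def partial_eta_sq_from_f(f_value, df_effect, df_error):
    """Recover partial eta-squared from a reported F and its degrees of freedom."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)

# Toy data (made up): a source difference but no time effect or interaction.
toy = {
    ("work", "manual"): [4, 5, 6],
    ("work", "automated"): [5, 6, 7],
    ("after", "manual"): [4, 5, 6],
    ("after", "automated"): [5, 6, 7],
}
results = two_by_two_anova(toy)
for effect, stats in results.items():
    print(effect, stats)
```

As a consistency check on the conversion, `partial_eta_sq_from_f(1.63, 1, 95)` rounds to .02, which matches the η2 reported above for the time × source interaction on perceived affection.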

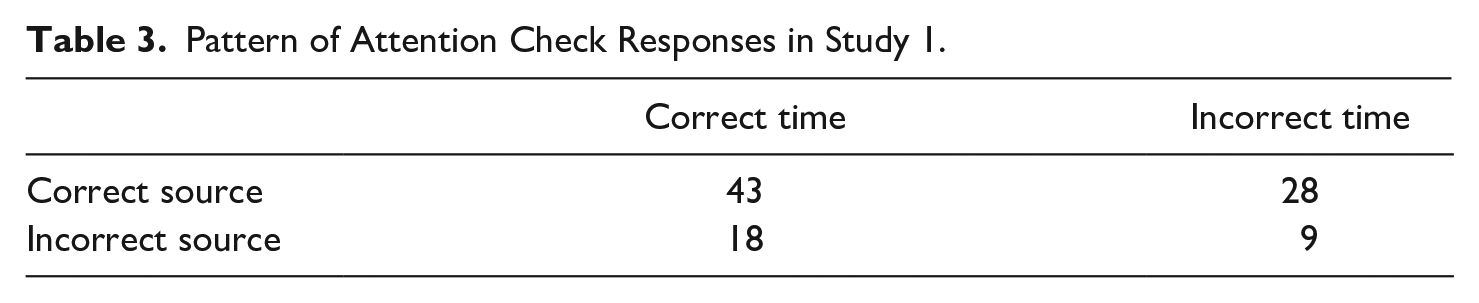

As part of the study, participants completed a simple attention check after responding to the other measures and before answering demographic questions. This attention check consisted of two questions: one asking participants to report the time that the second email was sent (by choosing either in the morning or in the evening) and a second asking them who sent the email (either the supervisor or an automated service). The results can be found in Table 3. The overall pattern suggests that participants may not have paid a great deal of attention to the pieces of information manipulated in this study, as only 43 participants (43.4%) answered both questions correctly.

Table 3. Pattern of Attention Check Responses in Study 1.

Study 1 Discussion

This experiment was designed as a partial replication of Walther and Tidewell (1995), with additional testing for effects of source/automation cues. The study found no differences based on timing or source of the second email for perceived job satisfaction and organizational commitment of the purported employee, no differences on perceived dominance and affection in the relationship between employer and employee, and no differences in perceptions of how the employee would feel when reading the second email. What these findings might mean, as well as directions for future research, are discussed below.

On one hand, the lack of statistically significant findings may give some readers pause, suggesting that the current study was a “failure,” as there exists a well-documented publication bias against nonsignificant findings (Levine et al., 2009). However, nonsignificant findings can have important value, including in replication studies (McEwan et al., 2018), as they can suggest that something has changed (among other possibilities). In line with this, perhaps most surprising is the lack of differences for time cues, as Walther and Tidewell (1995) previously found such differences. It is possible that people in the age range of the participants in this study no longer pay attention to time cues such as these. This possibility is given some credence when examining the pattern of attention checks conducted in this study, as over one-third of participants (37.8%) reported the wrong time for the follow-up email they saw when choosing between two options (the correct time or one incorrect time). This seems very high, given the ability to guess correctly at random half of the time, and is something that can be examined in future research (as well as in the follow-up replication discussed below).
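One way to put this error rate in context is to ask how likely the observed number of correct answers would be if every participant had simply guessed between the two options. The sketch below computes that exact binomial tail probability. The counts are an assumption, not reported data: 37.8% is consistent with 37 wrong answers out of 98 responses, and the function name `binom_upper_tail` is hypothetical.

```python
from math import comb

def binom_upper_tail(k, n, p=0.5):
    """P(X >= k) for X ~ Binomial(n, p), computed exactly."""
    return sum(comb(n, i) * p**i * (1 - p)**(n - i) for i in range(k, n + 1))

# Assumed counts (inferred from the reported 37.8%, not taken from the data file).
n_responses = 98
n_wrong = 37
n_correct = n_responses - n_wrong

# Probability of at least this many correct answers under pure guessing.
p_value = binom_upper_tail(n_correct, n_responses)
print(f"{n_correct}/{n_responses} correct, one-sided p under guessing = {p_value:.4f}")
```

A small tail probability would indicate accuracy above chance, which is a separate question from whether accuracy is as high as one would expect if the cue had been closely attended to.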

The lack of significant findings for source cues is also interesting. In this study, seeing a follow-up that was automated (scheduled to be sent) rather than “live-sent” did not impact participants’ impressions, regardless of when the email was sent. One possibility is that this is another piece of information that people are not paying attention to. Although the pattern is not as stark as that seen for time cues, over one-quarter of participants (27.6%) still selected the wrong source from between two options. It could be that this lack of attention is driving the lack of findings, but the fact that this piece of information does not seem to be attended to is itself an interesting finding, as is the lack of attention seemingly paid to time cues. Participants may have lacked familiarity with this kind of cue in general, and thus, may not have been looking for it. If this is the case, then future research using this kind of information may see larger effects.

Study 2 Rationale

After the initial data were collected in 2019, two critical factors changed that warranted a follow-up examination of time and source cues from our first experiment. First, the world experienced unprecedented disruption from the COVID-19 pandemic. This had crucial implications for work communication in general and the shifting of traditional work routines through telework. In pushing large percentages of the workforce in many countries to work-from-home arrangements, the COVID-19 pandemic changed where employees work (Pennington et al., 2022). Additionally, the pandemic altered when employees work as many individuals engaged in remote work outside of “peak” business hours (Iogansen et al., 2024). Organizational technology use during the pandemic distorted employees’ boundaries between work and home, and many longstanding concerns with remote work were magnified by work-related technology use at home (Pennington et al., 2022).

Second, a key tool that embodied some characteristics of our 2019 experimental condition was released to the general public in November 2022: ChatGPT. This tool is an AI chatbot that uses a large language model and does a better job of sounding “human” than previous similar types of technologies (Roumeliotis & Tselikas, 2023). Its release led to incredibly swift adoption; it has been called the fastest adopted computer software app ever, with over 100 million users in its first two months. By the summer of 2023, many employees had taken it upon themselves to use ChatGPT, and organizations had not initially developed firm policies for its usage in the workplace (Brooks, 2023). ChatGPT’s arrival gave the public a much-discussed version of AI. Its entry into the public consciousness on such a scale may also suggest that automated cues have become more noticeable when people form impressions of online messages. Anecdotally, there are stories of people forming (largely negative) impressions based on inclusion of information suggesting that a message has been at least in part automated (Cerullo, 2023).

Based on the pioneering work by Walther and Tidewell (1995), we expected some effect of time cues on impressions in our original experiment from 2019. However, our findings (specifically with the original manipulation checks) unexpectedly suggested that in the context of automated managerial communication, users largely ignored key message cues on time and source. The pandemic’s disruption of many work-home location and temporal boundaries and ChatGPT’s arrival presented us with another opportunity to examine the potential effects of message time and automation cues on people’s perceptions. In our second study, we revisited the same two research questions that framed our first experiment:

Research Question 1: How do source cues (automated or not) in managerial communication impact perceptions of the message and its receiver?

Research Question 2: How do chronemic cues (work hours or not) in managerial communication impact perceptions of the message and its receiver?

Study 2 Method

The method employed in Study 1 was used again in our replication. The only change was to the year in the timestamp in the emails that participants saw (2023 rather than 2019, to reflect a current message). The data were collected in Spring 2023, after the release of ChatGPT.

Participants

A total of 107 participants were recruited to participate in the second experiment. Removal of nine incomplete responses left 98 for analysis in Study 2. The condition breakdown was nearly even in the final sample (work hours without automated message: n = 23; work hours and automated message: n = 25; after-hours without automated message, n = 23; after-hours and automated message, n = 27). Participants ranged in age from 18 to 32 years (M = 19.01, SD = 1.56), with two not reporting. Most reported their ethnicity as Caucasian (n = 74, 75.8%), with more self-reported males (n = 58, 59.2%) than females (n = 38, 38.8%). Most participants worked at least part-time (66, 67.3%).

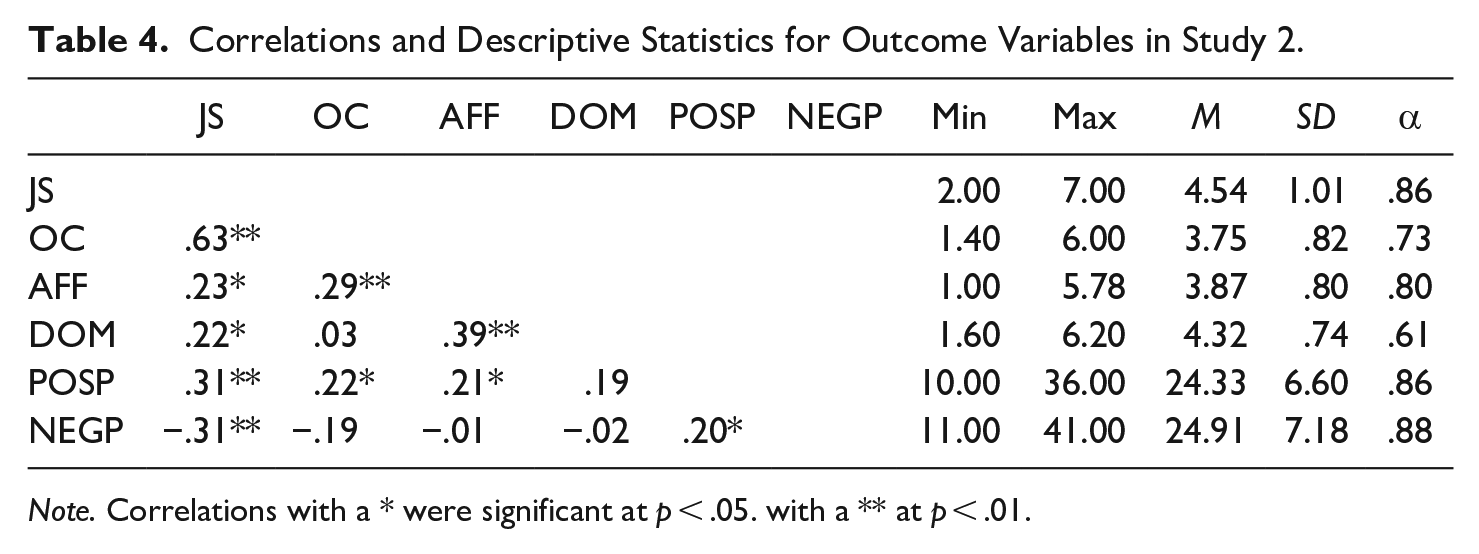

Measures

The measures used in Study 1 were again used in Study 2. The same scales were created as in Study 1, except that one item (“This employee probably feels as if this organization’s problems are their own”) was removed from the organizational commitment measure and one item (“The manager didn’t attempt to influence the employee”) was removed from the dominance measure. See Table 4 for descriptive statistics of each variable in Study 2.

Table 4. Correlations and Descriptive Statistics for Outcome Variables in Study 2.

Note. Correlations with a * were significant at p < .05; those with a ** were significant at p < .01.

Study 2 Results

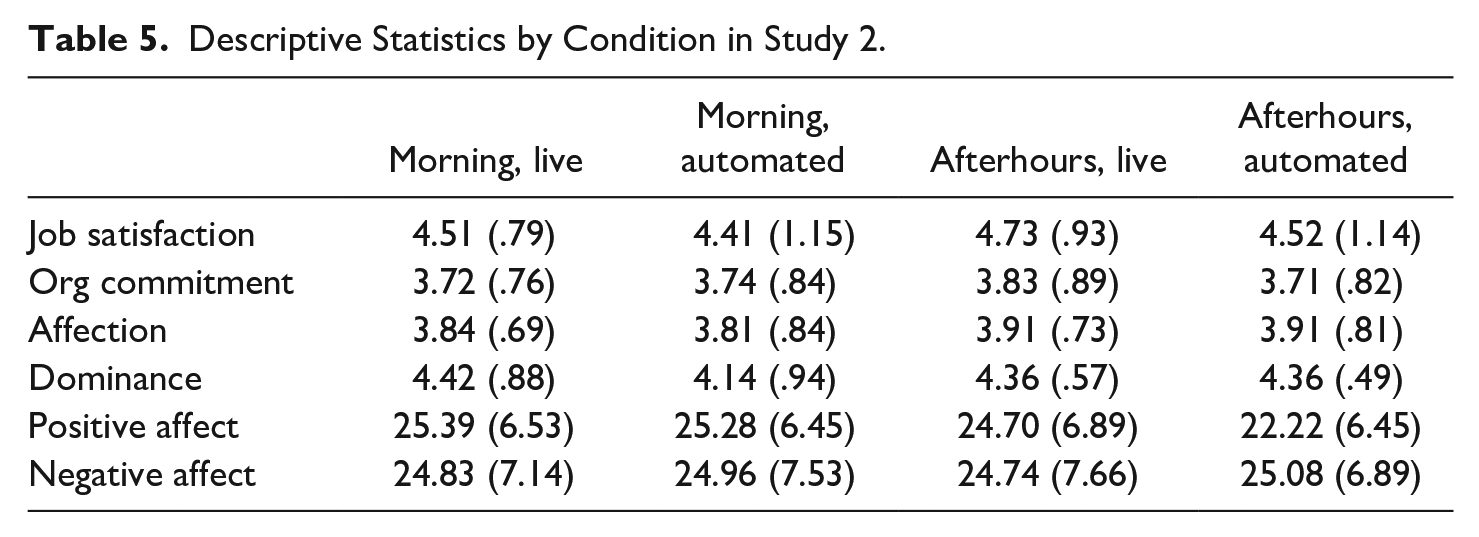

To analyze the data collected in this replication study, 2 × 2 ANOVAs were again run for each dependent variable based upon the source and time cues. See Table 5 for means and standard deviations across conditions in Study 2.

Table 5. Descriptive Statistics by Condition in Study 2.

The ANOVA for job satisfaction showed no differences for time, F(1, 94) = 0.62, p = .433, η2 = .01, or source, F(1, 94) = 0.56, p = .455, η2 = .01, nor the interaction between time and source, F(1, 94) = 0.07, p = .793, η2 = .00. There were also no differences for time, F(1, 94) = 0.05, p = .832, η2 = .00, or source, F(1, 94) = 0.08, p = .783, η2 = .00, nor the interaction between time and source, F(1, 94) = 0.17, p = .683, η2 = .00, on organizational commitment.

The ANOVA run for perceived affection also found no differences for time, F(1, 92) = 0.29, p = .592, η2 = .00, or source, F(1, 92) = 0.01, p = .913, η2 = .00, nor the interaction between time and source, F(1, 92) = 0.01, p = .930, η2 = .00. There were also no differences for time, F(1, 92) = 0.26, p = .611, η2 = .00, or source, F(1, 92) = 0.79, p = .377, η2 = .01, nor the interaction between time and source, F(1, 92) = 0.83, p = .365, η2 = .01, on perceived dominance.

Finally, the ANOVA run for positive affect found no differences for time, F(1, 94) = 1.99, p = .162, η2 = .02, or source, F(1, 94) = 0.94, p = .334, η2 = .01, nor the interaction between time and source, F(1, 94) = 0.79, p = .377, η2 = .01. There were also no differences for time, F(1, 92) = 0.00, p = .992, η2 = .00, or source, F(1, 92) = 0.03, p = .875, η2 = .00, nor the interaction between time and source, F(1, 92) = 0.01, p = .945, η2 = .00, on negative affect.

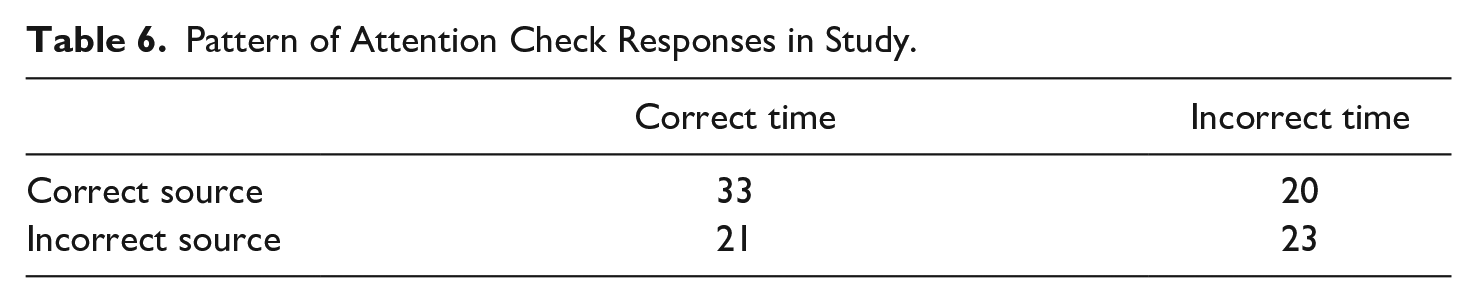

Overall, the findings in Study 2 were consistent with those of Study 1: There were no statistically significant findings. Participants were again asked to complete the same attention check as in Study 1. The results can be seen in Table 6. As before, participants did not seem to attend to these cues, with only 33 participants (33.7%) answering both attention check questions correctly this time.

Table 6. Pattern of Attention Check Responses in Study 2.

General Discussion

The current research was designed to examine the impact of time and source automation cues on people’s impressions and collected data examining these at two times: initially in 2019 and again in 2023, after major contextual changes were thought to potentially have made time and source cues more relevant to workers. In sum, there were no effects found for time, source, nor the interaction between these two on any of the five dependent variables analyzed in the data that were collected in 2019 and in 2023.

As reviewed in our Study 1 discussion section, this lack of significant findings has critical implications for management and theorizing about managerial communication. One possible reason for the lack of significant findings in Study 1 is that people were not paying attention to these cues. A simple attention check in the first experiment from 2019 suggested that over half of participants failed to correctly identify the source (27.6%), the timing (37.8%), or both. This suggests that timing cues were not as meaningful to people in 2019 as they were in 1995 when Walther and Tidewell conducted their pioneering study on chronemics and cues. In our study, a large number of people did not even seem to notice or register these cues. Motivated by pandemic-shifted work routines (Pennington et al., 2022) and the potential impact of ChatGPT on individuals’ mental models for automated managerial communication, we conducted the experiment a second time. If anything, in 2023, these pieces of information were attended to even less, as even more participants (44.3%) missed the attention check for time in Study 2, and almost twice as many (45.4%) missed the attention check for source automation in Study 2 compared to Study 1 nearly 4 years earlier. It appears people (especially of the age range in our experiments) do not care about this information in email communication with their managers. This would be a change for time cues, as previous research (Walther & Tidewell, 1995) found that they did have an impact on some of the impressions studied in the current research. More research is likely needed to address this possibility.

Another explanation for the absence of any significant relationship between our source manipulation in the two experiments and relational outcomes could come from expectation. It is common for AI systems to not disclose AI’s involvement in communication (Hancock et al., 2020). At this stage of AI deployment, partners in AI communication situations presumably are expecting messages to come from fellow humans (Hancock et al., 2020). Our results suggest that even when presented with language indicating that a managerial “email was generated automatically,” users are not at all likely to assume the sender was part of the AI system. Participants in our two studies were likely not detecting a machine heuristic (Sundar, 2020), that is, some specific cue that a message was coming from a nonhuman source. With theorists suggesting AI systems need some sort of disclosure mechanism to indicate automation in communication (Hancock et al., 2020), our experiments reveal how difficult it is for AI system users to discern whether a message is automated or comes directly from a human manager. This warrants future study.

One more important issue to note is the challenge of measuring dominance. Although we employed a previously established measure (Burgoon & Hale, 1987) that has been used successfully in similar research in the past (Walther & Tidewell, 1995) as well as in more recent, unrelated research (e.g., Burgoon et al., 2022), changes were required to make this measure usable in our research. This was especially true in Study 1, where 4 of the 69 items needed to be removed in order to achieve acceptable reliability. Future research may want to consider using a different scale for measuring dominance.

Another potential limitation of this research is the sample used in each experiment. Participants were drawn primarily from the authors’ university Listserv and, thus, were mostly current university students. Therefore, the results may not directly generalize to current full-time employees’ perceptions of automated responses from managers. However, this sample is still important for a variety of reasons. First, current students will (hopefully) be entering the workforce more fully soon. Therefore, their expectations and experiences with AI and automated responses at work are important to explore, as the prevalence of these technologies will likely continue to increase in the workplaces they inhabit. Second, past studies on perceptions of technology use in the workplace have shown a lack of difference between college student samples and working adult samples (e.g., Westerman & Westerman, 2013). Finally, many of the students at the university where these data were collected do work, including a sizable number who work full time. Employment data were not collected in the current study, however, and so these last two points cannot be tested here. Future research should continue examining working adults’ expectations and impressions of various AI technologies in the workplace.

Conclusion

Evidence from two experiments in our study tells an unexpected story: Young people (who will soon be entering the workforce as full-time employees) do not seem inclined to notice time and source cues in email communication with their managers, let alone feel much in response to task requests from their managers over email. Layering a second experiment against our first enabled us to look definitively at chronemics and potential impacts of automated managerial communication on employees. It appears, then, that the entire environment around employee-manager communication (such as face-to-face meetings, information overload, and peer communication) would be key in shaping affective work dynamics, not just email exchanges with managers. While our sample (students) is limited from a generalizability standpoint, the data do point to a muted or restrained connection between source cues in emails in professional business communication contexts and employee outcomes. From our experiments, we assert that automated emails and other forms of AI will be minimally disruptive to workplace routines in this respect. That is, the null differences in one experimental condition run at two different times (the human/automated message) suggest employees are not distinguishing between human and nonhuman sources of managerial communication. This bolsters the case for AI in the workplace given that employees are, when it comes to their affective responses, unfazed by their interactions with nonhuman managers. It is not that employees are tuning out nonhuman bosses; rather, the emotional responses to automated and human-sent messages do not differ in ways that are meaningful to employees. Moreover, evidence here suggests that even though much has been made about the pandemic disrupting employees’ sense of time, the disregard for time cues existed even before the Coronavirus shifted when employees do their jobs. Managers should recognize this in their communication with staff.
While some research had previously revealed the disruptive nature of automated and unconventional work hours on employees, time and source cues in managers’ communication are not as powerful as originally thought.

Appendix A

Email #1

To: (name redacted)

From: Leslie Marks

Time: 08:35am, Sept. 9, 2019

Subject line: Need that report this week

Hello (name redacted). Just wanted to send you a reminder that I need to get that project report from you by the end of this week. As you know, this is a very important report for the next stage of the project. Please let me/your supervisor know if you have any questions about it.

Best,

Email #2

To: (name redacted)

From: Leslie Marks

Time:

Subject line: Need that report this week (follow-up)

Hi again. Just wanted to send you another reminder to say that we need to get that project report from you this week. It’s a key project for us, so we appreciate you finishing the report. If you have any questions, you can follow-up with

Best,

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.