Abstract

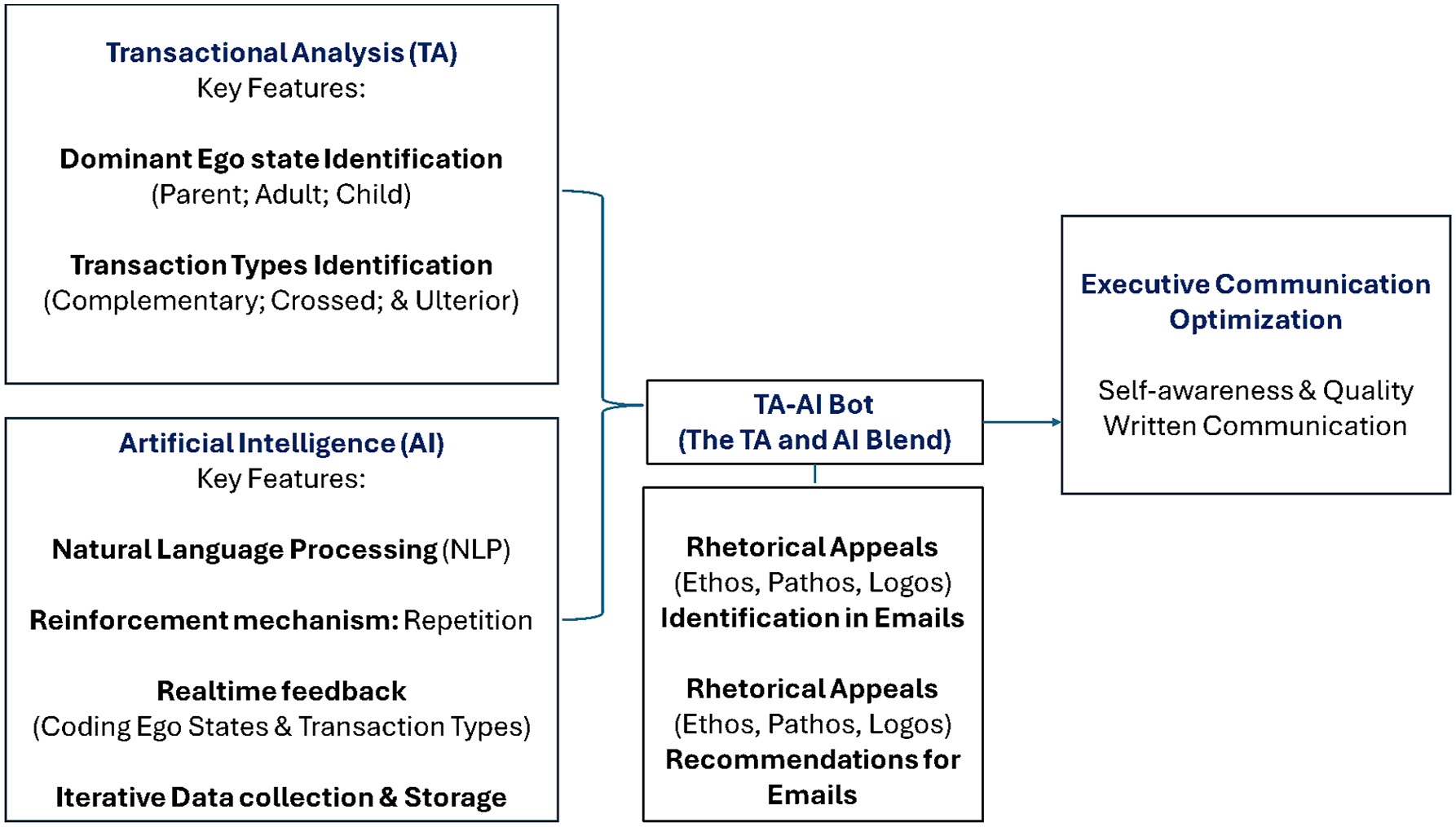

This article presents “The Cure for Talking,” a pioneering conceptual framework that blends Transactional Analysis (TA) with Artificial Intelligence (AI), to produce a TA-AI Bot designed to optimize executive communication. Here, the medium of interest is written emails. The TA-AI Bot aims to change behavior through the reinforcement mechanism of repetition. The feedback system of the TA-AI Bot is designed to enhance users’ self-awareness and communication quality, that is, identification and shifting of ego states to approximate better communication; and recognition of rhetorical appeals that typify their exchanges with others. Validation of “The Cure for Talking” will require iterative research.

Keywords

Grammarly for Business (2024) posits that AI should be embraced as a catalyst for effective business communication, calling for “better” rather than “more” communication. A 2024 State of Business Communication Grammarly Report volunteers these statistics: employees spend approximately 88% of their work week communicating and 19 hours of the work week on writing activities. While 84% of leaders diversified their communication channels, it is noted that an exponential rise in workplace communication fatigues employees and subverts the quality of their interactions. Accordingly, 51% of workers endure increased stress, 41% low productivity, 31% strained relationships, and 26% missed deadlines. Opportunities of Gen AI use in the workplace are noted: 80% of employees report that it improves their work quality, and 73% report that it helps them avoid miscommunication; Gen AI saves professionals 1 day per week in productivity; and organizations with 1000 workers may save $16.5 million a year in productivity via Gen AI (Grammarly for Business, 2024). However, while 58% of employees would like their employers to be receptive to Gen AI, 65% of leaders have expressed concerns about Gen AI security.

Executive Communication Effectiveness

In this article, the medium of interest regarding executive communication is written emails. Lansat (2018) reports a Harvard Business Review study that tracked CEOs’ time management regarding email usage. Reading and responding to emails stole 24% of the leaders’ time, interrupting their workflow and prolonging their workday. Plummer (2019, p. 1) of Harvard Business Review asserts that “The average professional spends 28% of the workday reading and answering email, according to a McKinsey analysis.” While the former authors problematize email usage among leaders, Biggs et al. (2024) call for the prioritization of written executive communication.

“An often overlooked aspect of executive communication involves writing . . . leaders must have solid communication skills even in writing to lead effectively” (Biggs et al., 2024, p. 202). Biggs et al. (2024) blame “ghost writing” in executive culture for poor executive communication. However, the conceptual contribution advanced in this article, namely, the TA-AI Bot, will replace “ghost writers” by compelling executives to reflect on their written communication choices. The salience and weight of written executive communication is echoed by the fact that emails can easily be distributed across the organization, implying that executives must be deliberate and diligent when writing, to avoid audience confusion when they are not present to clarify their messages. Phan (2024) concurs, highlighting that clarity, conciseness, audience awareness, and professional tone are essential written business communication competencies (Emerson, 2024). Professional relationship management and complex idea articulation are part of “polished professional communication” (Phan, 2024, p. 4). Miscommunication, confusion, and inefficient processes may stem from poor executive communication, as employees struggle to align their behavior to commands from the executive suite (Biggs et al., 2024). Phan (2024) proposes a conceptual framework for teaching business writing through social media, arguing that the digital age necessitates “effective written communication” as a critical skill for business professionals. Similarly, Biggs et al. (2024) flag social media as a tool used by leaders to communicate with the public and promote the organization. Phan (2024) argues that social media provides business students a chance to practice written communication skills in real time, preparing them for modern business environments, typified by nuanced and fast-paced communication. The conceptual contribution proposed (TA-AI Bot) presents a different way of enhancing written executive communication. 
It offers executives who may not have completed a business communication course the opportunity to gain self-insight (real-time feedback) and enhance their written communication with diverse audiences (i.e., people of different Ego states who use rhetorical appeals differently).

Emerson (2024) opines that emotional intelligence underpins communication and urges executives to reread and check the tone of their messages before sending them, and to wait 2 days for their emotions to regulate before replying to emotionally charged messages. These recommendations underscore the importance of executives’ self-insight (Ego state awareness), self-preservation (e.g., emotional regulation and protection of credibility), and influence (choice of rhetorical appeals for effective communication with audiences). Emerson (2024) concludes that executive communication will remain challenging, replete with misunderstandings, miscommunication, and inconvenient messages.

Sadun et al. (2022), Vassilakopoulou et al. (2023), Southworth et al. (2023), Rusmiyanto et al. (2023), and Coman and Cardon (2024) have suggested we turn to AI to improve executive communication. However, no one has provided a multifaceted framework that blends AI with psychology (Transactional Analysis) while incorporating rhetorical appeals, to optimize executive communication. The proposed conceptual contribution (TA-AI Bot) unites fragments of Emerson’s (2024) recommendations to provide a coherent executive communication optimization tool.

A burning question at the heart of the conceptual approach proposed in this article is, What is the potential of AI to improve the self-awareness and communication of executives? Attendant to this inquiry are research gaps posed by Vassilakopoulou et al. (2023) and Coman and Cardon (2024) and statements by Southworth et al. (2023), Sadun et al. (2022), and Rusmiyanto et al. (2023) that validate the exigence of this conceptual contribution. An opportunity exists, as a result of the rapid developments in AI, to leverage AI for executive communication improvement.

Speed, capability, and scalability are the strengths associated with chatbots, while humans are thought to outperform AI in empathy, critical analyses, and judgment (Vassilakopoulou et al., 2023). However, it is argued that without the TA-AI Bot intervention, executives may miss out on gaining self-awareness regarding habitual yet unnoticed communication patterns (default Ego state and transaction types) that affect their interactions with others and potentially forgo an understanding of how the rhetorical triangle can be applied to enhance their email communication. It is in this way that the TA-AI Bot can be viewed as an empowerment tool that facilitates skilled communication. It should be included among those that are shaping the world (Southworth et al., 2023). Moll (2021) deals with the “humanization” of virtual settings alongside social identity negotiation in a digital context. Users of the TA-AI Bot would “negotiate” their social identities by vacillating between their default ways of communicating and their “enhanced” modes of communication.

Literature Review

Business Communication in a Global Context

Getchell and Lentz (2019, as cited in Andrews, 2023, pp. 1–2) share a detailed account of business communication: “transactional, problem-solving communication that involves creating and disseminating work-related messages through appropriate channels, while being sensitive to the needs of the audience, the context, and culture in which the message is conveyed and the impression that the sender makes on the audience.” Communication is collaborative and extends beyond the sender-audience dyad to include multiple interactants, different conversation directions, and power dynamics (Coman & Cardon, 2024). Transactional analysis aligns with this perspective on communication. Gallagher (2017) introduces “algorithmic audience” as a concept for teaching web-writing; similarly, Moll (2021) highlights a need to grasp how educators and enterprises should catalyze successful dialogue. The author equates the complexity of face-to-face interaction with virtual conversations. While Moll (2021) suggests that social presence tends to be concentrated in visual forms of media, like videos, rather than typed emails, outcomes of the study indicated the latter to be true too.

Andrews (2023) advocates for a design-thinking approach to break the “formulaic” cast of the dyad. However, the current article makes a starkly different conceptual contribution of how blending Transactional Analysis and AI can improve executive communication. Andrews (2023) maintains that the post-COVID reimagined workplace (a somewhat comfortable domesticated office) is a space for design-thinking–enhanced communication. Interactants are expected to be reflective and iterative. It must be noted that while Andrews (2023) lauds a shift from “transactional to relational” business communication, the subversion of a “transactional” element in conversation, as conveyed by the author, does not diminish the conceptual contribution of the Transactional Analysis and AI blend as a relational communication enhancement strategy. Moll (2021, p. 3) highlights a “digital space of inter-cultural exchange” as the context in which digital interactions between business program students occur. In this digital context, participants’ reports of their strengths and weaknesses took different forms; strengths were sharply conveyed with little embellishment, while weaknesses were minimized and hesitantly volunteered (Moll, 2021). Thus, impression management seems core to individuals’ self-projection and interactions. Moll (2021) suggests that participants’ “hypervigilance” in social settings remains the same whether the context is virtual or in-person. The theme of impression management resonates with the TA-AI Bot conceptual contribution.

Transactional Analysis

Transactional analysis (TA) is a psychoanalytic theory and therapeutic method developed by Eric Berne (Harris, 1969). Transaction analysts track people’s ego states and communication patterns to enhance their communication quality. Transactions denote communication between people; in conversation, a “transaction stimulus” evokes a “transaction response” (Solomon, 2003). Transaction types include complementary, crossed, and ulterior (Hollins Martin, 2011). Voice tone, body language, and facial expressions accompany transactions. There are 3 Ego states that characterize the theory (Parent, Adult, and Child). “Ego states are coherent systems of thought and feeling manifested by corresponding patterns of behavior” (Vos & van Rijn, 2021, p. 164). Ego states and communication response patterns stem from childhood experiences (parenting style that may produce an anxious child). Adult-adult transactions are viewed as the gold standard of healthy interactions; they put conversations on the right track, may help individuals gain a sense of control, and produce good relationships with others (Berne, 1957). TA interrogates how people give and take strokes (adopting a victim mentality or asking people inappropriate questions), with the aim to change unhealthy stroking patterns (Harris, 1969). Strokes can be positive or negative (Vos & van Rijn, 2021). Individuals attribute meaning to life events that affect them, through Life scripts. Life scripts are developed in childhood and impact people’s perception of the world (e.g. fearful, fearless, apathetic). However, the novel business communication enhancement approach (TA-AI Bot) introduced in this article and subsequent research and development of the bot will not account for Life scripts because they are highly individualistic and tricky to interpret.

TA is a complex theory with applicability in a variety of domains. However, the TA-AI Bot will be limited in its design because it needs training underpinned by NLP (Southworth et al., 2023). The author has intentionally omitted excessive complexity to arrive at a functional AI tool that can demonstrate efficacy in Ego state identification and corrective prompts. As the TA-AI Bot and its related research mature, further complexity may be introduced.

Hartman and McCambridge (2011) propose style-typing and style-flexing as solutions to enhance millennials’ communication. These solutions are loosely presented. Communication style-typing requires an individual to identify subjective communication preferences against those of others. Style-flexing requires individuals to shift their communication preferences to meet the other on the same “wavelength.” However, the author’s proposed intervention introduces a structured approach to communication optimization. The approach can be visually depicted (refer to Figure 1), and owing to its blend with AI, the TA-AI Bot will have the advantage of an iterative database of users’ natural language transactions. It is a measurable attempt to optimize communication of business executives. The TA-AI Bot takes a clear and intentional leap forward. “Style-typing” as described by these authors is operationalized by the TA-AI Bot.

Figure 1. The Cure for Talking framework: blending transactional analysis and artificial intelligence (TA-AI Bot) for executive communication optimization.

Artificial Intelligence and Executive Communication

According to IBM (https://www.ibm.com/topics/chatbots), AI “chatbots are chatbots that employ a variety of AI technologies, from machine learning—comprised of algorithms, features, and data sets—that optimize responses over time, to natural language processing (NLP). . . . Deep learning capabilities enable AI chatbots to become more accurate over time.” Southworth et al. (2023) anticipate an AI-ready workforce equipped with 21st-century competencies, like “communication skills.” Framed as “Competencies 2.0,” Hickman and Dvorak (2019) outline 7 expectations for leadership behavior, for example, relationship building, inspiring others, clear communication, critical thinking, and establishing accountability. In alignment with Southworth et al. (2023), the TA-AI Bot aims to enable competent and effective executive communication.

NLP, combines computational linguistics—rule-based modeling of human language—with statistical and machine learning models to enable computers and digital devices to recognize, understand and generate text and speech. . . . NLP lies at the heart of applications and devices that can . . . assess the intent or sentiment of text or speech. (https://www.ibm.com/topics/natural-language-processing)

The IBM website highlights the value of NLP for business efficiency and employee productivity. Reinforcement learning (RL) denotes a subfield of machine learning through which AI-based systems can operate in dynamic contexts. “Reinforcement learning enables a computer agent to learn behaviors based on the feedback received for its past actions” (Kanade, 2022, p. 1).

Affordances

Gibson (1977) writes about unoccupied niches. The TA-AI Bot is a novel unoccupied niche. One may say that “awareness of what things afford and the concomitant ability to select, find, and extract relevant affordances from the environment” (Demir, 2015, p. 127) represents a person’s agency. Vassilakopoulou et al. (2023), Scarlett and Zeilinger (2019), and Zhang (2008) opine that affordances are central to innovation. The potency of the TA-AI Bot is augmented via Affordance Theory in that it will employ emerging AI technology to create “communication affordances” for executives (APA Dictionary of Psychology, 2018a). Evans et al. (2017, p. 36) assert that affordances are “possibilities for action . . . the ‘multifaceted relational structure’ (Faraj & Azad, 2012, p. 254) between an object/technology and the user that enables or constrains potential behavioral outcomes in a particular context. This relational view helps explain why there is no singular theory of affordances, as they emerge in the mutuality between those using technologies, the material features of those technologies, and the situated nature of use.” Markus and Silver (2008) maintain that the action possibilities are reserved for certain “user groups.”

Scarlett and Zeilinger (2019) highlight Schrock’s (2015) “communicative affordances” as the meeting point between an individual’s subjective perception of the utility of technology and the objective qualities of the technology. This interface produces “altered communication” and behavioral patterns. The affordances of the TA-AI Bot for executives are the following: self-understanding (dominant Ego states and communication patterns identification), self-improvement (corrected transactions and communication quality, including the application of rhetorical appeals), and social impact within the organization and externally (teamwork, stakeholder management, persuasion, negotiation, networking, and conflict resolution). Scarlett and Zeilinger (2019) argue that technology users’ inability to comprehend the complex back end of computations does not prevent them from identifying affordances of the technology. Efficient, responsive, and independent technologies are designed to offload the burden of maintaining “complex representations of the world” from users, so that they can flexibly and timeously respond to dynamic environments. Thus, TA-AI Bot users would perceive its utility and stand to reap its benefits without necessarily understanding the technicalities of the system.

Conceptual Framework for Blending AI and TA

Anna O (APA Dictionary of Psychology, 2023) is credited with coining the term “Talking Cure,” a reference to psychoanalytic intervention, where the therapeutic impact is delivered through clients “talking out” their troubles with a therapist. Bertha Pappenheim (1859–1936), also known as “Anna O,” was an Austrian feminist and social worker, under the therapeutic care of Josef Breuer, Sigmund Freud’s colleague. She was treated for hysteria (APA Dictionary of Psychology, 2018b). The Talking Cure is a well-known catchphrase, which has somewhat lost its sting according to the American Psychological Association’s website. However, in this article the author twists and revives the phrase to denote a novel, urgent, and relevant psychologically rooted intervention that aims to tackle poor communication at the executive level. The author is a registered Industrial/Organizational Psychologist and proposes “The Cure for Talking” (i.e., TA-AI Bot), a communication correction tool produced by a blend of Artificial Intelligence (AI) and Transactional Analysis. The TA-AI Bot is an original concept, which the author will develop into a product to be marketed.

Vassilakopoulou et al. (2023, p. 12) highlight the following research gap: “chatbots are still unable to hold long conversations, to understand which direction a conversation is going, or to match the ability of humans to express empathy in stressful situations (Syvänen & Valentini, 2020).” Interestingly, the proposed conceptual contribution tackles the gap, by ideating a communication enhancement tool (TA-AI Bot) that focuses on the direction and dynamics of conversations. Accordingly, “conversation direction” can be operationalized as an individual’s “movement between” Ego states (Vos & van Rijn, 2021) and transaction types (Complementary transactions; Crossed transactions; Ulterior transactions). Coman and Cardon (2024) raise the issue of conversation direction, also predicting the mainstreaming of AI-assisted business communication. They ask, “Can AI generate emails that adequately capture uniquely human considerations such as tone and style?” (Coman & Cardon, 2024, p. 2). Their question is at the heart of the conceptual contribution prescribed, namely, how blending AI and Transactional Analysis can enhance executive communication. Coman and Cardon (2024) recommend that future studies should investigate whether participants would edit messages before sending them. Following this recommendation, data will be collected from executives regarding their acceptance and rejection of the TA-AI Bot prompts. Southworth et al. (2023, p. 2) highlight the urgency of the TA-AI Bot, asserting that “AI is not something that will happen in the future but rather something that we are living through today.”
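
As an illustration only, the operationalization of “conversation direction” described above could be sketched in Python as follows. The sketch assumes that each email turn has already been coded with the Ego state the writer speaks from and the Ego state the writer addresses in the reader; the names Ego, Message, transaction_type, and direction are hypothetical and do not describe an existing TA-AI Bot implementation. Ulterior transactions are deliberately left out, because they depend on a hidden psychological-level message that a single pair of labels cannot capture.

```python
from dataclasses import dataclass
from enum import Enum


class Ego(Enum):
    PARENT = "Parent"
    ADULT = "Adult"
    CHILD = "Child"


@dataclass(frozen=True)
class Message:
    """One email turn with hypothetical, pre-assigned Ego state codes."""
    source: Ego  # Ego state the writer speaks from
    target: Ego  # Ego state the writer addresses in the reader


def transaction_type(stimulus: Message, response: Message) -> str:
    """Label a stimulus/response pair as complementary or crossed.

    Complementary: the response comes from the Ego state that was
    addressed and is aimed back at the state that sent the stimulus.
    Any other pairing is treated as crossed (assumption of this sketch).
    """
    if response.source == stimulus.target and response.target == stimulus.source:
        return "complementary"
    return "crossed"


def direction(turns: list[Message]) -> list[tuple[str, str]]:
    """Movement between Ego states across consecutive turns of one writer."""
    return [(a.source.value, b.source.value) for a, b in zip(turns, turns[1:])]
```

Under these assumptions, an Adult-to-Adult stimulus answered from the Parent state toward the Child state would be labelled crossed, and a writer whose turns move from Child to Adult would show a single ("Child", "Adult") step in the direction list.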

The Cure for Talking Framework

This section explains how the Cure for Talking Framework (Figure 1) moves communication beyond superficial fixing to a substantial way to enhance communication. MS Outlook’s email prompt, “start your reply with . . .” is a superficial attempt at “fixing” communication rather than a substantial remedy of the root cause of problematic transactions. Note: In emails (e.g. Outlook), there’s a recommendation of how a sender could respond to an email. However, the author distinguishes these prompts from the transactions of interest in the proposed novel approach. Transactions denote communication between people. In conversation, a “Transaction stimulus” evokes a “Transaction response.” In the conversation (email content), the transactions must involve people on both ends of the conversation, using their own original voice/natural language (not relying on a system-generated response as is recommended in Microsoft Outlook). Focusing on original/authentic responses (response patterns) is the starting point of coding for the Ego states (i.e., Parent, Adult, and Child) and rhetorical appeals, which are the characteristics (ethos, pathos and logos) of an argument that render it persuasive (Lutzke & Henggeler, 2009).

Ego states govern how individuals receive, perceive, and respond to transaction stimuli from others. Characteristics of the 3 Ego states are as follows: Adult ego state—A communication pattern that requires effort to activate; in which thoughts, emotions, and behavior are situated in the present and are direct responses to the here and now. Parent ego state—A default condescending communication pattern, in which thoughts, emotions, and behavior are copied from parents or authoritative figures. Child ego state—A default reactive communication pattern, in which thoughts, emotions, and behavior are replayed from childhood.

The Bot Offloads Communicative Labor from the User to Itself

Communicative norms in the age of digital automation are unique, rapid, and outpace humans’ cerebral and physical capacity. In this context, digital discourse is led by automated machines, which monopolize space once dominated by humans (Reeves, 2016). “One of the most obvious social cognates of this automation is the emergence of new general norms of cultural production and consumption . . . artificial intelligence generates a new way of being-with-technology that remakes our expectations of ourselves and of one another” (Reeves, 2016, p. 158). Regarding the automation of oral discourse, Reeves (2016) asks whether computerized assistants can know a person better than human assistants. Similarly, Reyman (2017, p. 118) echoes “the standard technological quandary where we are either masters of technology or by technology mastered.” Reeves (2016) suggests that computerized assistants create an understanding of our habits, characteristics, shortcomings, biases, and vulnerabilities that are algorithmically complicated. Among the advantages of communicative agents are cost-effectiveness and the ability to translate languages, forecast traffic disruptions, and answer pop culture trivia. “Flesh-and-blood humans” are being excluded from the communicative culture and outpaced by the intelligence and processing speed of virtual assistants. While Hu (2019) acknowledges AI as a double-edged sword (e.g., employment and deskilling threat, and human developmental opportunity), Hu (2019, p. 1144) maintains that “in the age of AI, human beings need not only the ability to communicate with people, but also the ability to communicate with robots.” This perspective is shared by Gallagher (2017).

The Writer’s Perspective

While the TA-AI Bot has elements of “the technological sublime” (i.e., digital optimism), as James Carey would have it, it is not immune to the socially destructive aspects of automated communicative labor. The writer intends to optimize the communicative efficiency of TA-AI Bot users by incorporating the advantages of automated communicative labor. It then follows that the proposed conceptual contribution may threaten the role of human transactional analysts. Kennedy (2017) recognizes this emotional threat by asserting that advances in artificial intelligence and automation produce anxiety. “We worry about robots . . . about ourselves and our humanness” (Kennedy, 2017, p. 3). Furthermore, the author raises contemporary questions regarding precarious employment, privacy, the authenticity of creative labor, and the danger of automated warfare. Kennedy (2017) argues that cultural anxieties (hope, confusion, and fear) are rooted in history, reflect unreasonable expectations (perpetually energized and ununionized workforces), and deify automated entities. Like the argument about automated emotional labor displacing humans’ therapeutic capacity, the TA-AI Bot stands to exclude and outpace (relying on big data collection and the assignment of intricate algorithmic identities to users) the therapeutic role of Transactional Analysts. Advantages of the TA-AI Bot are efficiency and accuracy, self-insight (immediate feedback), and convenience (perpetual accessibility to users). The psychological value of the TA-AI Bot (an intelligent communicative agent) and its utility regarding rhetorical considerations is recognized.

The Personable Design of the TA-AI Bot

Unlike Microsoft Outlook email response suggestions that are superficial and may be considered as “creating” a false sense of contentment, satisfaction, or rapport between users, the TA-AI Bot deals directly with users’ natural language messages. By attempting to interact with the essence of a user’s perspective (thoughts, emotions, and ideas) as depicted in their own words (written email content), it uplifts the intrinsic value of the human condition. The TA-AI Bot becomes an “active listener/observer” of users’ communication habits, characteristics and biases, to supply the user with immediate feedback (e.g., self-insight that may be obscured) at a convenient time (given that business leaders may have unpredictable schedules and would benefit from the TA-AI Bot, which is accessible around the clock), the desired outcome being users feeling seen and heard by a warm and accurate computerized agent.

The Difference Between Learning and Behavior Change

Learning denotes a cognitive information-acquisition process (problem-solving skills or mastering complex ideas), encompassing reflection, comprehension, and new knowledge synthesis (Bandura, 1977). Learning tends to precede behavior change, but the latter does not rely on the former, because some behaviors are altered by external stimuli or reinforcement (Deci & Ryan, 2013). Learning is assessed through changes in cognitive abilities (Anderson, 2000), while behavior change is assessed through direct observations of modified actions (Fogg, 2009). Introspection and self-knowledge may not trigger behavior change (Noon, 2018). However, executives who are apathetic about improving their communication may be inspired to do so by colleagues who adopt the Cure for Talking.

The TA-AI Bot Aims to Change Executives’ Behavior Rather Than Teach Them

The TA-AI Bot is meant to change behavior. Behavior change involves observable differences in actions or responses to external stimuli; this is achieved using reinforcement or incentive-based systems (Skinner, 1965). Social cognitive theory (Bandura, 1986) emphasizes the role of external factors and reinforcement in altering behavior. Accordingly, prompts from the TA-AI Bot represent “external stimuli” intended to catalyze users’ behavior change (i.e., improved communication). Behavior change indicators include “frequency of specific actions,” “adoption of new habits,” or “the persistence of behavior over time” (Fogg, 2009). Accordingly, the TA-AI Bot aims to measure users’ behavior change through tracking their adoption or rejection of the prompts and positive shifts in Ego states that indicate improved communication.
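
The measurement idea in this paragraph can be illustrated with a minimal Python sketch. It assumes a hypothetical log in which every prompt shown to a user is stored with the week, the Ego state coded for the draft, and whether the user accepted the suggestion; the record layout (PromptEvent) and both function names are illustrative assumptions, not a specification of the TA-AI Bot.

```python
from dataclasses import dataclass


@dataclass
class PromptEvent:
    """One bot prompt shown to a user (hypothetical record layout)."""
    week: int        # week in which the email was drafted
    ego_state: str   # coded state of the draft: "Parent", "Adult" or "Child"
    accepted: bool   # True if the user adopted the suggested revision


def acceptance_rate(events: list[PromptEvent]) -> float:
    """Share of prompts the user accepted (0.0 when there are no events)."""
    if not events:
        return 0.0
    return sum(e.accepted for e in events) / len(events)


def adult_share_by_week(events: list[PromptEvent]) -> dict[int, float]:
    """Fraction of drafts coded as Adult in each week, keyed by week number."""
    totals: dict[int, int] = {}
    adults: dict[int, int] = {}
    for e in events:
        totals[e.week] = totals.get(e.week, 0) + 1
        adults[e.week] = adults.get(e.week, 0) + (e.ego_state == "Adult")
    return {week: adults[week] / totals[week] for week in sorted(totals)}
```

In this sketch, a rising Adult share across weeks would stand in for the “positive shifts in Ego states” mentioned above, while the acceptance rate would capture adoption or rejection of the prompts. Whether these two indicators are sufficient is an open question for the iterative research the article calls for.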

Discussion

The benefits and challenges of blending AI and Transactional Analysis follow.

The Benefits of Blending AI and TA

The affordances of the TA-AI Bot for Executives must be noted. While the context of use is primarily business email communication, the relevance (communication optimization by, inter alia, applying rhetorical appeals) of the TA-AI Bot extends to the following circumstances: negotiating with stakeholders, networking events and conferences (delivering keynote addresses and interacting with unknown audiences), persuasively handling group dynamics during meetings and teamwork, conveying leadership credibility through communication style, organizational strategy development, and articulation.

How the bot takes on the role of collaborator to change behavioral responses to certain stimuli or rhetorical situations

Reeves (2016) asserts that critical scholars of new media and digital rhetoric will be challenged by the displacement of the discoursing human subject (in economic and cultural life) at the hands of machines. However, the author claims that communicative labor will be preserved through new forms of human expression and creativity. Reeves (2016) asserts that critical scholars of media, rhetoric, and communication should focus on the mechanism by which the automation of oral and written discourses affects communicative culture and society. Tantamount to the mechanization of industrial labor, Reeves (2016, p. 152) contends that “human communication is being monitored, analyzed, mechanized, automated, and gradually removed from the work of cultural production.” Given that the proposed TA-AI Bot employs a mechanized approach to communication enhancement, including the analysis and tracking (data storage) of communication patterns between users, one might argue that the TA-AI Bot would contribute to communicative culture erasure.

For Greene (n.d., as cited in Reeves, 2016), communicative labor exists in surplus and is the embodiment of cooperation and creativity; it constitutes power that cannot be captured, constrained, or controlled by capital. However, Reeves (2016) alludes to the social threat of automated communicative labor, maintaining that uncontrolled automated communication strips communicative practice of the values of “deliberative struggle” and “social reciprocity.” The authentic voice of individuals/groups becomes subverted and constrained, preventing clarity, understanding, and efficient collaboration. The opposite side of the automated communication coin holds that automation purifies the communication process by eliminating the “human factor,” that is, unpredictability and error. In this regard, the fallible human is easily swayed by emotions that are in direct opposition to the mathematical rigor and precision that characterize machines (Ellul, as cited in Reeves, 2016). The proposed TA-AI Bot does not demonize the human factor; instead, it interacts with the user’s own “voice” (which may be colored by emotion, unpredictability, and error) by coding Ego states and suggesting improvements to the written discourse (email messages). The user has the option of choosing to accept the recommendations of the TA-AI Bot or not. Hence, the voice and independence of the user are respected. “Social reciprocity” is preserved in the automated communication context of the TA-AI Bot, since the starting point of users’ communication (written emails) stems from the self in natural response to the other.

How users of the TA-AI Bot reflect on the reasons for their writing and communication choices

Behavior change depends on deliberate interventions and tends to be reinforced through repetition, rewards, or consequences (Michie et al., 2011). The TA-AI Bot is a deliberate intervention, informed by the observation that communication is central to the success of business leaders and their organizations; it will work via an algorithm, and algorithms are typically developed for specific purposes (Gallagher, 2017). In “Writing for Algorithmic Audiences,” Gallagher (2017, p. 28) asserts that “audiences can influence the writer by influencing the language of a text; and . . . participatory audiences play a role in the creation of writing and content.” Therefore, one can conclude that, as an algorithmic audience, the TA-AI Bot stands to influence users’ writing (i.e., business emails). The reinforcement mechanism underpinning the TA-AI Bot will be “repetition”: the same format will be used each time to encourage users to refine their communication via prompts from the TA-AI Bot. Accordingly, users will be made aware of their current Ego state (as encoded in their written communication) and its disadvantages. These “disadvantages” may be addressed through recommendations based on the rhetorical triangle (Lutzke & Henggeler, 2009): for instance, guiding users to temper the “emotional” tone (pathos) of their messages for maximum impact (e.g., assertiveness vs arrogance), guiding executives to reformulate their messages to convey their credibility (ethos), or suggesting changes (e.g., crafting argumentatively strong messages with matching data) to enhance users’ persuasiveness (logos). Users have a choice to accept or reject the prompts; they will also be “rewarded” with a summary of personal data that reflects positive shifts in their Ego states over time (e.g., from Rebellious Child to Adult). This reward should help users perceive the utility and positive impact of the TA-AI Bot in their email communication.
It is in this way that users may reflect on why they make the writing and communication choices they make. Zuboff’s (1985) description of firm competitiveness as a product of “informating” captures the strategic value of the TA-AI Bot as a communication enhancement tool informed by data collection. Zuboff (1985) outlines the duality that characterizes information technology. First, technology can be used to automate operations (minimizing human error and maximizing efficiency); interestingly, the author highlights the “deskilling” of humans as a function of this automation. Second, technology can create information (Zuboff, 1985). Regarding the latter, Zuboff (1985) advances the term “informate” to denote applications that are designed to automate but that simultaneously produce information about the processes through which organizations achieve their effectiveness.
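
The repetition-and-reward loop described in this section can be illustrated with a minimal sketch. It is an assumption-laden illustration rather than the TA-AI Bot’s actual design: the record fields, the function names, and the sample log are all hypothetical, and the Ego state labels are taken as given (no classifier is implemented here). The sketch only shows how accepted or rejected prompts could be logged and summarized as a shift toward the Adult Ego state over time.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class PromptRecord:
    """One prompt shown to a user (hypothetical structure)."""
    week: int        # week in which the email draft was written
    ego_state: str   # label assigned to the draft, e.g. "Rebellious Child" or "Adult"
    appeal: str      # rhetorical appeal targeted by the prompt: "ethos", "pathos", or "logos"
    accepted: bool   # whether the user accepted the recommendation


def adult_share_by_week(records):
    """Return, per week, the fraction of drafts coded as the Adult Ego state."""
    totals = Counter()
    adult = Counter()
    for record in records:
        totals[record.week] += 1
        if record.ego_state == "Adult":
            adult[record.week] += 1
    return {week: adult[week] / totals[week] for week in sorted(totals)}


def acceptance_rate(records):
    """Return the fraction of prompts the user chose to accept."""
    if not records:
        return 0.0
    return sum(record.accepted for record in records) / len(records)


# Illustrative log only; these values are invented for the example.
log = [
    PromptRecord(1, "Rebellious Child", "pathos", False),
    PromptRecord(1, "Rebellious Child", "pathos", True),
    PromptRecord(2, "Adult", "logos", True),
    PromptRecord(2, "Rebellious Child", "ethos", True),
]
summary = adult_share_by_week(log)
rate = acceptance_rate(log)
```

In this sketch the user’s rejections are stored alongside acceptances, so the summary reflects the user’s own choices rather than overriding them.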

How users are prompted to reflect, engage and buy into the TA-AI Bot’s output

The human is meant to reflect on the prompts suggested by the TA-AI Bot. Users are not forced to accept the recommendation; however, their choice will be recorded as an indicator of their interest or potential disinterest in revising their messages before sending them to the targeted recipient. The design of the Bot respects the user’s choice and voice (i.e., agency) and, by extension, acknowledges that algorithms can be wrong. Reyman (2017) dissects the theme of algorithmic glitches, ethics, and accountability. Algorithms have rhetorical agency, that is, they can speak and be heard, they connect, respond, and effect change (Ingraham, 2014; Gallagher, 2017; Reyman, 2017). Therefore, algorithmic glitches and errors must be acknowledged as “hidden assumptions or underlying biases” that gain momentum owing to users’ continued use of and blind trust in their output (Reyman, 2017). Reyman (2017) warns digital rhetoric scholars neither to circumscribe their idea of algorithms as tools created to behave in ways predetermined by humans nor to ascribe undue power to algorithms over “uncritical” human users. According to Ingraham, “algorithms . . . are constrained by the rules that determine their parameters for operating” (2014, p. 62).

Reeves’s (2016) comment on the displacement of humans by machines is supported by the latter’s capacity to produce “customized and privatized” textual experiences. Users receive messages based on their “algorithmic identities.” Leaders’ communication patterns and Ego states may be considered “algorithmic identities,” and the “radically customized and privatized textual experiences” (Reeves, 2016, p. 157) would be the TA-AI prompts and adoption of the TA-AI Bot’s recommendations. Computerized agents (e.g., Chatbots) give users algorithmic identities that are activated by customized communication.

Users discover the fallibility of algorithms through “reflecting” on their experiences of algorithmic glitches

Algorithms can elicit emotions. Reyman (2017) argues that algorithmic agency receives attention when humans discover the fallibility of algorithms via reflection. Gallagher (2017) and Reyman (2017) agree that malfunctioning algorithms can produce unexpected associations, generate racist and stereotyped content, or produce “creepy” (highly accurate) suggestions that make users wary of being “watched.” However, unexpected outcomes may also come from well-functioning algorithms. Regarding ethics and accountability, Reyman (2017) alludes to the question, “Who is to blame for algorithmic glitches?” Should the human designers of algorithms shoulder responsibility for offensive rhetoric, or the algorithms (with embodied agency) themselves? Attendant to this question is, “Do algorithms operate independently?” Others may assert that algorithms are designed and trained by people, thus removing the “agency” of the computational processes, and assigning blame solely to humans. Reyman (2017) cites the ethical issue surrounding Microsoft’s Twitter chatbot, Tay.ai, which was “intercepted” by malicious human users who trained the bot using offensive content. This example illustrates that human designers, users, and the algorithms themselves may be held accountable for the ethical implications tied to unexpected outcomes from artificial intelligence. Reyman (2017, p. 121) refers to this scenario as “the distributed nature of agency in digital rhetoric.”

Rhetorical actancy as an example of how users “engage” with the machine (TA-AI Bot)

According to Reyman (2017), rhetoric scholars have historically confined agential roles to human agents, denying algorithms such capacity. A reformulated perspective called “fragmentation of agency” (Geisler, 2004, as cited in Reyman, 2017) holds that agency is distributed across nonhuman and human agents. Rhetorical ecologies highlight that communicative acts are dynamic, fluid, and operate within and across networks. Agency is gradually being recognized as a shared factor among rhetors, technologies, audiences, and spaces across social and cultural networks. Zuboff (1985) asserts that technology is not neutral and holds valence; however, the spectrum of technological advancements gives humans a range of choices and chances to assert their agency. Reyman (2017) asserts that agency in digital contexts can be found within the contiguous human-machine relationship. This “dance” between the actants (human and material entities) is referred to as “rhetorical actancy,” which catalyzes persuasion and change (Reyman, 2017).

When users “buy into” the machine’s output (the technological unconscious in algorithmic authority)

According to Beck (2018), a composite of persuasive technologies and surveillance capitalism draws unsuspecting end users into immersive experiences (products or services) that can be difficult to escape because their agency is subverted. Beck (2018) argues that surveillance evades end users through “enmeshment,” where they become consumed by algorithmically generated content that they enjoy or find attractive. In keeping with Reyman (2017), Beck (2018) positions algorithms as “opaque” and “invisible” because the act of observation is decoupled from a rhetorical event that promotes engagement between the rhetor (observer) and audience (prisoner). This intensifies the surveillance mechanism inherent in algorithms. Reyman (2017, p. 113) claims Internet users are cocreators of the knowledge economy; however, their influence ranks below that of the algorithms that create the invisible structure: “through trending topics, filtered content, and customized searches, the knowledge economy of the social web is largely constructed through algorithms that operate quietly behind the scenes.” Gallagher (2017, p. 31) refers to this as “the black box.” Moreover, users become immersed in the virtual world (i.e., medium disappearance) because of the “aesthetic of computing technology” (Beck, 2018, p. 298), which lessens their cognitive awareness of their surroundings (hardware and software included). Based on this, Reyman (2017, p. 114) calls for “a rhetorical study of algorithms,” asserting that persuasion and meaning-making online are products of hidden technological systems that evade users. This inconspicuous automated sorting and filtering of content has been termed “the technological unconscious in algorithmic authority” (Reyman, 2017).

Challenges Associated With Deploying the TA-AI Bot

Storage and surveillance of business communication

The naivety of Internet users as explained by Reyman (2017) is captured by James Carey’s description of digital optimism as “the technological sublime” (Reeves, 2016). Accordingly, trusting users delegate their decision making, thoughts, and activity choices to algorithms. For Ingraham (2014), this outcome occurs because algorithms are “digital rhetorics” with the power to persuade the audience on what can be regarded as truth, knowledge, and material reality in daily life. Ingraham (2014, p. 62) highlights the rhetorical nature of algorithms: “An algorithm’s design thus makes rhetorical choices that privilege the importance of some information or desired outcomes over others.” Reyman (2017) argues that the behavior of naïve users is misguided and their “tunnel vision” creates an echo chamber (Pariser’s 2012 “filter bubble”) that shuts out contradictory information and fresh insights.

Algorithmic surveillance capitalism

While personal and public data collection forms part of algorithms, the observation and tracking of users’ online activity has been likened to Jeremy Bentham’s panopticon and Orwell’s big brother, where users are imprisoned. Accordingly, “the observed never know whether and when they are being watched” or “they know that they are constantly under surveillance by big brother” (Beck, 2018, p. 298). Beck (2018, p. 298) cautions that “observation” is not benign, but a method of “taking data to engineer the conditions the audience will live with for future rhetorical events.” This amounts to end-user entrapment. Beck (2018, p. 299) calls for a cost-benefit analysis of surveillance capitalism, that is, “manufacturing desire to generate demand for products and services.” Surveillance capitalism is a cocktail of “impulse and enticement” served to end users to solicit prolonged use of products and services that use algorithms to mine data for profit. The TA-AI Bot will be developed into a product to enhance business communication. In this sense, one might perceive it as a data mining tool for profit that capitalizes on the “business” conversations between users that get filtered by the Bot. However, South Africa has the Protection of Personal Information Act, 2013 (POPIA), a privacy law that safeguards the integrity and sensitivity of private information. This implies that the threat posed by the surveillance and storage of business communication is mitigated to some extent. Beck (2018) argues that the producers of technology hold power. The definition of algorithms adopted by programmers assumes that they are neutral and unbiased. This perspective overlooks the fact that programmers influence end users “through a guided design” and, thus, cannot be viewed as innocuous (Ingraham, 2014). Beck (2018) suggests that ignoring this power imbalance creates a slippery slope of unprecedented amounts of surveillance data collection from unsuspecting end users.
Highlighting the theme of power, Kennedy (2017) asks “who benefits (from artificial intelligence and automation)?” Thereafter, Kennedy (2017) concludes that AI development and deployment must be ethical and egalitarian. To protect themselves against surveillance, consumers are advised to “obfuscate,” that is, generate “noise” in a data collection pool to complicate the process of harvesting their real data. Obfuscation is recommended by Finn Brunton and Helen Nissenbaum. Hu (2019) highlights another protective measure, viz., Aoun’s “robot-proof” educational model to arm graduates against robots. Aoun advances “humanics,” a composite of data, technological and human literacy, and cognitive reasoning ability (e.g., critical and systems thinking), aimed at catalyzing students’ creativity and flexibility toward their coexistence with robots (Hu, 2019).

Ideologies become robust and more influential when persuasive technologies recede from immediate attention. Zuboff (as cited in Beck, 2018, p. 298) unpacks this scenario of surveillance capitalism as “constituted by unexpected and often illegible mechanisms of extraction, commodification, and control that effectively exile persons from their own behavior while producing new markets of behavioral prediction and modification.” Regarding power imbalance, persuasive computer algorithms, when paired with programmers, take on the role of co-rhetors in the rhetorical event. Programmers then systematically monopolize the behavior of the population by stripping its privacy. “Digital rhetoricians need to turn to developing studies to uncover the relations of algorithms and implicate the persuasive influence computer code has upon people” (Beck, 2018, p. 300).

The deskilling of executives

Reeves (2016) argues that social obligations, human connection, and values are being disrupted by “artificial conversation partners.” “Human-replacing technologies” have social consequences, according to Turkle (n.d., as cited in Reeves, 2016). Deification of machines robs users of the patience required to deal with the social dynamics of human relationships. In the current article, attrition in social skills represents the deskilling of executives. Reeves (2016, p. 155) asserts that “many are coming to fantasize about robots that could serve as friends who would always listen to us, who would never become angry, who would never disappoint.” However, this is idealistic as it paints “perfect” communicative conditions that protect individuals’ psyche and emotions, at the expense of cultivating resilience and realism. Removing a person from reality is unhelpful; critical conversations are part of business communication (Emerson, 2024).

Ethical measures the writer will take to ensure responsible usage of executives’ behavioral data

Phishing and web application attacks, data tampering, sensitive information spoofing, DDoS (Distributed Denial of Service), repudiation, and elevation of privilege are some of the risks that undermine chatbot security (DLabs.AI, 2024). End-to-end encryption, identity authentication and verification, and data anonymization are methods of securing the behavioral data (Amos, 2022). Organizations can add scanning features to chatbots to detect malware and thwart cyberattacks. Kotha (2023) and Eshed (2024) recommend data minimization (only collecting data that are necessary for the TA-AI Bot’s functionality), frequent security audits, and penetration testing to locate and correct system vulnerabilities. Compliance with data protection regulations (POPIA) is advised.
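
Two of the measures named above, data minimization and the masking of user identity, can be illustrated with a minimal sketch. It rests on several assumptions: the field names, the allow-list, and the key are hypothetical, and keyed hashing is a form of pseudonymization rather than full anonymization, since anyone holding the key can recompute the mapping. Encryption in transit, authentication, and security audits are outside the scope of this sketch.

```python
import hashlib
import hmac

# Hypothetical allow-list: only the fields the Bot's feedback summary would need.
ALLOWED_FIELDS = {"ego_state", "appeal", "accepted"}


def pseudonymize(user_id: str, secret_key: bytes) -> str:
    """Replace a user identifier with a keyed SHA-256 digest (HMAC)."""
    return hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize(record: dict, secret_key: bytes) -> dict:
    """Keep only allow-listed fields and swap the raw identifier for a pseudonym."""
    kept = {key: value for key, value in record.items() if key in ALLOWED_FIELDS}
    kept["user"] = pseudonymize(record["user_id"], secret_key)
    return kept


# Illustrative record only; the email body is deliberately not retained.
raw = {
    "user_id": "exec@example.com",
    "email_body": "Full text of the draft email",
    "ego_state": "Adult",
    "appeal": "logos",
    "accepted": True,
}
stored = minimize(raw, b"demo-key-not-for-production")
```

Under these assumptions, the stored record carries the coded Ego state and the user’s choice but neither the email text nor the raw identifier.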

Conclusion

This article makes a conceptual contribution called “The Cure for Talking,” a pioneering approach that blends TA with AI to produce a TA-AI Bot (chatbot) designed to optimize executives’ self-awareness and communication quality. The benefits of the Cure for Talking include the affordances it offers executives; the TA-AI Bot’s design, which respects users’ voice and independence; and the opportunity for users to reflect on their writing and communication choices.

Footnotes

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.