Abstract

This article reports findings from a cross-institutional study of business students’ perceptions of, and experiences with, an automated writing assistant program based on traditional artificial intelligence. Using a mixed-methods approach, we report students’ responses to Grammarly’s suggested revisions and provide insight into their confidence and correctness in workplace written communication. The study concludes with a discussion of the implications of this work for business communication education and research, as well as possibilities for the future.

Keywords

Introduction

For decades, people have sought shortcuts to help them improve their writing. When companies incorporated spelling and grammar checkers into their word processing programs in the 1980s and 1990s, many users were ecstatic at the prospect of writing without fear of error. However, those tools were an imperfect solution to a longstanding problem because the spelling and grammar checkers had accuracy issues (Sahu et al., 2020). Users still needed a basic understanding of grammar, punctuation, and spelling rules to select an appropriate response when prompted by the program (Vernon, 2000). These checkers are now more advanced and can assist with tone, conciseness, and clarity. Advances in AI have moved writing assistance beyond spelling and grammar checking.

Advances in traditional and generative artificial intelligence (AI) can support different types of writing assistance. If used well, AI-based writing tools “hold significant potential for improving the writing skills of students in a meaningful and targeted manner” (Calma et al., 2022, p. 10). Coman and Cardon (2024) note that grammatical correctness is an attribute of professionalism and that AI can help students’ writing demonstrate it. However, instructors remain responsible for improving their students’ writing abilities (Calma et al., 2022). They are in the ideal position to teach the opportunities and challenges of using these tools properly and ethically in educational and business settings.

The growing importance and popularity of traditional and generative AI-based writing tools suggest that business communication instructors’ approaches to students’ use of these tools will play a key role in equipping students with the knowledge and skills necessary to construct professionally written business messages and content. DeJeu (2024) calls for instructors to teach students how to use the available tools effectively while also thinking critically about them and understanding their limitations. Although traditional AI-assisted writing software has existed for quite some time, gaps remain in our understanding of how students use these tools. First, how do students use AI writing assistants to write and communicate? Second, how do students consider and respond to revisions suggested by a writing assistant? Third, what perceptions do students hold about writing assistants and their ability to instill confidence and correctness in their written communication? Fourth, how does a writing assistant prepare students for workplace writing? By seeking student input on how they use AI writing assistance, this study helps faculty and researchers understand students’ AI tool usage, their confidence when using an AI tool, and their views of their future as communicators. Understanding these views can help faculty adjust instruction as the writing process changes to incorporate widespread AI tool usage. To address these questions, we begin by reviewing the literature on AI’s historical and current uses in writing studies, user responses to and perceptions of AI writing assistants, and the potential impacts of AI writing assistants on workplace writing.

Literature Review

AI is not new. To establish a contextual timeline for traditional AI and its use in writing assistants, we summarize the key individuals and their programs that eventually led to modern applications of AI-assisted writing technologies. Scholars often credit Alan Turing and John McCarthy as two of the “fathers” of artificial intelligence (Li & Du, 2017). Each of these scientists contributed significant concepts to humanity’s quest to create “a thinking machine.” For example, Turing is credited with the first application of AI in designing the Bombe machine, which cracked the Enigma machine communications code used by the Germans in World War II (Singh, 2022). After the war, Turing (1950) posed the question, “Can machines think?” and then posited three possible strategies to create artificial intelligence: (1) programming; (2) ab initio machine learning; or (3) logic, probabilities, learning, and background knowledge (Muggleton, 2014).

In addition to Turing’s contributions, the American computer scientist John McCarthy coined the term “artificial intelligence” in 1956 for the first AI conference, held at Dartmouth College in Hanover, New Hampshire (Singh, 2022). That same year, McCarthy also developed the

Although it has received considerable media attention recently, ChatGPT is not the first AI-driven chatbot. The first chatbot, ELIZA, was created in 1964 at MIT (Singh, 2022). In the decades that followed, AI advanced significantly, and by the late 1970s it had made headway into new applications such as expert systems and AI-powered computer-assisted instruction (Tennyson & Ferrara, 1987), which are precursors of today’s AI writing assistants.

Using Automated or AI-Based Writing Assistants

Two subfields of AI exist: traditional and generative. Traditional AI uses programmed rules to perform specific tasks, while generative AI produces new content and can create deepfakes, chat responses, and artificial data (Trotta, 2023). In the realm of writing, traditional AI has been used in various ways; however, AI-based and automated writing assistants, automated writing evaluation (AWE), and AI-based writing tutors have been the most beneficial for students and teachers. These natural language processing (NLP) technologies have also changed how writing is taught (McCarthy et al., 2019). AWE tools, such as Turnitin’s Gradescope, allow teachers to evaluate and provide feedback on students’ writing without the time constraints of manually reading and marking their work. Additionally, students who receive AWE feedback often produce improved writing assignments (McCarthy et al., 2019).

Newer writing applications incorporate generative AI, whose primary focus is authoring rather than editing. For example, Grammarly has introduced generative AI tools that help students overcome the blank page problem by generating ideas in response to prompts such as “brainstorm topics for my assignment” or “build a research plan for my paper.” Feedback prompts, explanations, and auto-citations are also provided to help students improve their critical thinking and communication skills (Lucariello, 2023, para. 3).

As technology advances, AI writing assistants are becoming increasingly important to the writing process, especially in business communication courses, where high-quality writing is essential and often carries high-stakes consequences (Joglekar et al., 2022). With the aid of AI-powered writing assistants, students can focus on the content of their writing assignments, while instructors can grade papers more efficiently and provide formative feedback to help students improve their writing skills (Getchell et al., 2022; Yannakoudakis & Fawthrop, 1983). AI-assisted writing software has the potential to enhance writing performance and outcomes, and both students and educators must understand the significance of this technology (Holmes & Tuomi, 2022) and its implications for student writing and teaching approaches.

Controlled-language spelling and grammar checkers, which help students improve their grades and business professionals enhance their communications, are not new, but writing programs such as Grammarly.com are innovative because they use AI to improve more than spelling and grammar. Grammarly provides real-time feedback by explaining errors and suggesting edits (Barrot, 2023). Grammarly does not change the writing automatically; users must accept its suggestions. While the free version of Grammarly.com offers basic spelling and grammar suggestions, the premium, subscription-based version adds features such as reorganizing sentences for clarity, improving formatting for readability, and suggesting tone changes. These improvements, released in 2020, extend beyond the word level and “look at a convoluted sentence and suggest rewrites to make the sentence more readable” (Lardinois, 2020, p. 2). As writing software becomes more widely used, its pedagogical significance should be considered, both for its impact on teaching writing and for rethinking approaches to student learning (Cardon et al., 2023).

Responding to Revision Suggestions

From the educator’s standpoint, AI writing assistants such as Grammarly can be helpful because they allow students to find many of their own errors, which may reduce punitive marking of mechanical errors and allow instructors to focus on content (Toncic, 2020). Still, AI writing assistants are not foolproof. For example, John and Woll (2020) found that online grammar checkers (including Grammarly) were more accurate than the grammar checker in Microsoft Word, but these online programs were limited in the completeness of their feedback on student writing. As writing software improves, the programs appear to offer fewer inaccurate suggestions, but John and Woll (2020) recommend that educators pair online grammar checkers with educational activities that target selected errors. If John and Woll (2020) are correct, rethinking approaches to student learning might include activities that ask students to compare the AI software’s suggestions against textbook and course instruction or other reputable linguistic sources.

From the student’s standpoint, AI writing assistants can be helpful for developing writing and self-evaluation skills. Calma et al. (2022) submitted graduate students’ group writing to Grammarly to analyze common mistakes. They found that when students used Grammarly, they made fewer mistakes, which allowed instructors to focus on other components of submissions. In turn, “instructors can then use the mistakes detected to focus on the further development of writing skills” (p. 7) rather than simply marking grammatical mistakes. The researchers also found that instructors could better direct students toward self-evaluation. Likewise, O’Neill and Russell (2019) found that students who received instructors’ advice along with their Grammarly feedback were generally “very positive” about the program. However, participants noted that Grammarly’s feedback was not always accurate. The results indicated that students were hesitant to make changes beyond instructor recommendations, suggesting that they had not relied too heavily on Grammarly. By contrast, students who scored low on the International English Language Testing System (IELTS) were more satisfied with Grammarly.

Non-Native Language Use

Several studies have considered users whose first language is not English. Wang’s (2022) study of Chinese students taking an English writing course found positive feedback from students using an automated writing evaluation program. Students commented that the program’s feedback was detailed, understandable, and timely enough to help improve their writing. Although these programs can help students build self-confidence, they may also shift the instructor’s role in the learning process from correcting errors to fostering a “learner-friendly social engagement context” (p. 94).

ESL learners may need specific guidance in any case. John and Woll (2020) assessed three grammar checkers’ performance on ESL learners’ writing: Grammarly, Visual Writing Tutor, and the grammar-checking tool in Microsoft Word. They concluded that these grammar checkers had poor overall coverage. However, Grammarly and Visual Writing Tutor performed far better than the grammar-checking tool in Microsoft Word. John and Woll (2020) specified that although these tools detect errors better in some categories than in others, they can identify specific errors in ESL student writing, which makes them useful mainly for form-focused instruction in certain types of ESL classes.

Barrot (2023) found similarly positive results for the use of Grammarly in ESL classrooms. This experimental study found a significant improvement in writing accuracy for students who used Grammarly during the course. Students indicated that Grammarly’s written feedback helped explain their errors and engaged them in the learning process. Barrot cautioned, “however, it seems that those with lower proficiency levels were likely to adopt the suggestions outright without processing them” (p. 600). This caution suggests that Grammarly may be best suited to students with advanced abilities and that students with less-advanced abilities may need more direct instruction on how to critically assess an AI writing assistant’s suggestions.

Even an early review of spelling and grammar checkers used by students writing in a nonnative language found promise. Olsen and Williams (2004) believed the checkers showed promise on two fronts: (a) students who were proficient in the nonnative language used these tools to assist with more complex aspects of writing in that language, and (b) novice students who struggled to write correct sentences in the nonnative language used them to write simple, straightforward English as correctly as possible. Overall, students who received traditional feedback from their instructors were satisfied, but students who received Grammarly feedback together with instructor feedback were more satisfied. This suggests that a multifaceted approach to feedback (AI tool plus instructor) provides better instruction than a single method of feedback.

Perceiving Confidence and Correctness

O’Neill and Russell (2019) assessed university students’ perceptions of Grammarly in relation to instructor-provided feedback, student visa status, and course delivery mode. In the study, instructors uploaded students’ assignments into Grammarly Premium and then downloaded the Grammarly report with feedback on spelling, grammar, punctuation, sentence structure, style, and word choice. Using these reports, students identified whether the marked errors were accurate. The exercise encouraged students to consider their writing choices instead of automatically accepting the program’s suggestions. Grammarly appears to help students build their writing self-efficacy, especially when they use the tone detection tool. Winans (2021) noted that students who were more confident in their ability to write messages were more likely to ask their instructors for help. This suggests that instructors may want to adapt traditional grammar and editing exercises to include analysis of AI writing assistant feedback. Such exercises may also reveal discrepancies between students’ perceptions of their writing and instructors’ evaluations of it.

One disadvantage Winans (2021) reported was that Grammarly did not provide guidance on how to change a message’s tone. This finding suggests that Grammarly is adequate for drafting but that students remain responsible for final editing. Calma et al. (2022) proposed using Grammarly strategically to support writing by embedding it in assessment design and instructing students to self-evaluate. In this manner, “Grammarly as an instructional tool can play an important role in supporting instructors to integrate writing development into their teaching and assessment practice” (Calma et al., 2022, p. 8). Embedding Grammarly in this way may give instructors more time to focus on deeper writing concerns, such as audience, tone, and message strategy, rather than grammatical and usage errors.

Preparing Students for Workplace Writing

In the 1982

Before the advent of computerized writing assistants or the AI inherent in spelling and grammar checkers, instructors taught students to write and proofread their documents, looking for errors in grammar, spelling, punctuation, and word choice. They stressed the importance of an error-free document and how errors would reflect poorly on both the employer and the employee who created it (Moyer, 1977). The mantra “proofread, proofread, proofread” could be heard in every business communication classroom.

Once AI-based writing assistants, such as spelling and grammar checkers, entered classrooms and workplaces, instructors did not shift their focus away from teaching grammar, spelling, and punctuation rules. Instead, they stressed the rules so that when these tools flagged errors in students’ work, students could discern whether to accept a recommended change or leave the text, word, or punctuation as is.

In today’s classrooms, writing instruction includes AI-based writing evaluation systems, including spelling and grammar-checking tools. These systems provide students with immediate, personalized feedback, helping them identify areas for improvement in their writing (McCarthy et al., 2019). Instructors still teach the basics of grammar and punctuation and press for proofreading; however, they also encourage students to use available tools. Grammar and spelling checkers can be invaluable, but only to the extent that students have the critical thinking skills to evaluate their suggestions. Employers expect effective writing from employees, but even the most promising technologies, including traditional and generative AI, are only as good as the people using them. As Cardon et al. (2023) explained, students should be able to articulate the strengths and weaknesses of AI tools and understand how AI applies to their school and work tasks. Business communication instructors are in the ideal position to teach the opportunities and challenges of proper and ethical use in educational and business settings. With this in mind, we sought to understand how students use and perceive a popular traditional, nongenerative AI-based writing assistant by exploring the following research questions:

Research Question 1: How do students use an AI writing assistant?

Research Question 2: In what ways do students respond to suggested revisions from an AI writing assistant?

Research Question 3: How do students perceive an AI writing assistant as helping them with their writing regarding confidence and correctness?

Research Question 4: How has using an AI writing assistant prepared or not prepared students for writing in the workplace?

Methodology

This section outlines the research design, participant recruitment, and data collection used in our study. We aimed to investigate the use and impact of nongenerative AI writing assistants, focusing specifically on Grammarly because of its widespread use in business schools and workplaces. The methodology is divided into three key areas: participants and their demographics, the focus on AI writing assistants, and data collection procedures and measures.

Participants

Participants were recruited from four universities (three southern, one midwestern) and asked to complete an anonymous Qualtrics survey. The institutional review boards of all four universities approved data collection. At each university, a local contact distributed the survey by email. Recipients were told that there was no compensation for completing the survey and that it would take 15 to 30 minutes to complete.

Of 105 participants, 18 did not complete the quantitative measures in the survey, and 10 reported that they had not used Grammarly in the past six months. Those participants were removed from the analysis for Research Questions 1 through 3, leaving 77 participants whose responses were assessed. Of the 77 participants, 66.23% (51) identified as female, 31.17% (24) as male, and 2.60% (2) chose

Most respondents (76.62%; 59) were undergraduates: 10 freshmen, 10 sophomores, 18 juniors, and 21 seniors. Eighteen graduate students (17 master’s and 1 doctoral) also completed the survey. Most participants (89.61%; 69) were business majors, while 3.9% (3) were business minors and 6.49% (5) were nonbusiness majors enrolled in the business school. The majority (84.42%; 65) had completed or were currently taking a business communication or writing course. Eight participants (10.4%) were on a visa, meaning they were international students. Additionally, 15 (19.48%) reported being non-native English speakers, and 37 (48.1%) spoke more than one language. Forty-three (55.8%) used Grammarly Premium, 32 (41.6%) used the free version, and two were unsure which version they used. Demographic data were collected to examine whether any particular group used an AI writing assistant differently.
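To make the arithmetic behind these figures easy to check, the short Python sketch below recomputes several of the reported percentages from the counts given in this section. It is an illustrative addition rather than part of the original analysis; the variable and function names are our own, and it assumes that every percentage uses the analytic sample of 77 as its denominator and is rounded to two decimals (the text reports some values to one decimal).

```python
# Illustrative sketch: recompute reported sample percentages from raw counts.
# Assumption: all percentages share the analytic sample (N = 77) as denominator.

RECRUITED = 105
INCOMPLETE = 18       # did not complete the quantitative measures
NO_RECENT_USE = 10    # had not used Grammarly in the past six months
N = RECRUITED - INCOMPLETE - NO_RECENT_USE

# Counts as reported in the Participants section (labels are our own).
COUNTS = {
    "female": 51,
    "male": 24,
    "undergraduate": 10 + 10 + 18 + 21,  # freshmen, sophomores, juniors, seniors
    "graduate": 17 + 1,                  # master's, doctoral
    "business major": 69,
    "business minor": 3,
    "nonbusiness major": 5,
    "business communication course": 65,
    "visa (international)": 8,
    "non-native English speaker": 15,
    "multilingual": 37,
    "Grammarly Premium": 43,
    "Grammarly free": 32,
}


def pct(count: int, n: int = N) -> float:
    """Return count as a percentage of n, rounded to two decimals."""
    return round(100 * count / n, 2)


if __name__ == "__main__":
    print(f"Analytic sample: {N}")
    for label, count in COUNTS.items():
        print(f"{label:32s} {count:3d}  {pct(count):6.2f}%")
```

Running the script prints one row per category; for example, the female share comes out to 66.23% and the undergraduate share to 76.62%, matching the values reported above.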

AI Writing Assistant Focus

The survey was developed to investigate our research questions about nongenerative AI writing assistants. Although many AI writing assistants are available today, we limited the survey to Grammarly because of its prevalence in business schools and the workplace and our familiarity with its features: “People at 96% of the Fortune 500 already rely on Grammarly” (Business Wire, 2024). As a traditional AI-based writing assistant, Grammarly suggests revisions but does not generate text for students, which makes it more relevant to writing-focused courses.

Data Collection

The survey was distributed in early 2023, before Grammarly introduced generative AI features. It consisted of four core sections: demographics, writing skills and confidence, Grammarly usage, and open-ended questions about AI writing assistants in the workplace.

In the writing confidence section, participants were asked to rate their skill level in business, academic, and personal writing and how well they wrote, spoke, and read English. Additionally, they completed the 16-item Self-Efficacy for Writing Scale (SEWS; Bruning et al., 2013) to assess writing confidence. Students read statements and indicated their confidence in performing each action on a scale from 0 (no confidence) to 100 (complete confidence). The SEWS is a three-factor scale measuring (1) conventions (α = .87; five items, e.g., “I can write grammatically correct sentences”), (2) self-regulation (α = .89; six items, e.g., “I can control my frustration when I write”), and (3) ideation (α = .89; five items, e.g., “I can put my ideas into writing”). The scale’s overall reliability was α = .93. Overall, students indicated a strong level of confidence in their writing on the SEWS scale (
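For readers less familiar with the reliability values reported for the SEWS, the conventional definition of Cronbach’s α is given below. We assume, as is standard practice, that the reported α values are Cronbach’s alpha; the source does not state the formula. Here $k$ is the number of items in a subscale (e.g., $k = 5$ for conventions), $\sigma^2_{Y_i}$ is the variance of responses to item $i$, and $\sigma^2_X$ is the variance of the summed subscale score.

$$
\alpha = \frac{k}{k-1}\left(1 - \frac{\sum_{i=1}^{k} \sigma^2_{Y_i}}{\sigma^2_X}\right)
$$

Values closer to 1 indicate that the items vary together, which is why α values of .87 to .93 are typically read as good internal consistency.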

Next, participants reported information about their Grammarly usage. These questions, whose results appear in the Results section, covered several topics. Participants were asked for what purposes (e.g., academic, work, personal) they used Grammarly, how it affected their writing skills, how satisfied they were with Grammarly’s corrections, how they used Grammarly in the writing process, what they did with Grammarly’s suggestions, and how they planned to use Grammarly after graduation.

Additionally, the survey included an open-ended question asking participants to explain how Grammarly has or has not prepared them for writing in the workplace. Qualitative responses provide a richer understanding than closed-ended quantitative items alone. Because of survey attrition, only 39 participants completed the open-ended question, and only those responses were assessed for Research Question 4.

Results

This section presents the findings from our survey, organized by the research questions. We begin with a quantitative analysis of the first three research questions. Following this, we provide a qualitative analysis of the fourth research question to identify themes from open-ended responses. Each subsection details the specific findings related to how students use AI writing assistants (Research Question 1), their responses to suggested revisions (Research Question 2), their perceptions of the impact on their writing confidence and correctness (Research Question 3), and their preparation for workplace writing (Research Question 4).

Data Analysis

Researchers analyzed the first three research questions primarily through descriptive statistics. They also ran analyses of variance to test for demographic differences in certain variables. Because the study used a survey design, researchers could explore relationships between variables but could not make causal claims.

Researchers qualitatively analyzed the fourth research question using a modified version of constant comparative analysis (Charmaz, 2006; Glaser & Strauss, 1967). First, they thoroughly examined the 39 open-ended responses and noted the content of each answer. Of that sample, five answers were removed from analysis because they did not answer the question or said “not applicable.” With the 34 relevant responses, researchers conducted open coding to determine how using an AI writing assistant affected students’ preparation for writing in the workplace. They analyzed the data and assigned initial categories to the responses, continually moving between the data and the categories. They then compared categories, combining similar ideas and separating distinct ones. Through this iterative process, they identified distinct themes and subcategories in the data. Every response was associated with at least one category within a broader theme.

Research Question 1: How do students use an AI writing assistant?

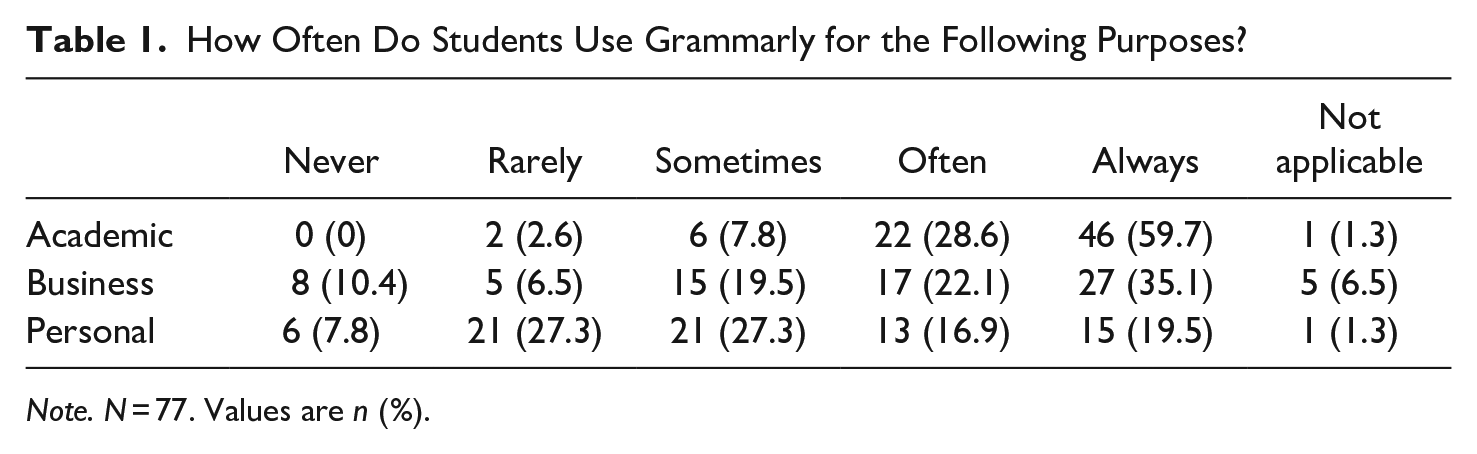

On a 5-point scale (1 =

How Often Do Students Use Grammarly for the Following Purposes?

When given a list of possible reasons for using an AI writing assistant, students most often selected that it was helpful (68.8%) and that it made proofreading easier (67.5%). Other reasons were that their school offered it for free (44.2%), that it improved grades on writing assignments (41.6%), and that they wanted to improve their English writing (40.3%). Students also indicated that they had used it in high school and liked it (31.2%), that an instructor had suggested it (20.8%), and that a class required it (15.6%). There were no significant differences in Grammarly use across demographic variables (e.g., international status, Grammarly version used, or gender).

Students reported how they most often used Grammarly. The largest group (31; 40.3%) used Grammarly throughout the writing process (e.g., while writing and proofreading). Other students reported using Grammarly most often after completing a final draft (22; 28.6%) or a rough draft (16; 20.8%). A small contingent (5; 6.5%) used Grammarly while writing a rough draft. A few students reported using it only when worried about their writing (2; 2.6%) or when required by a class (1; 1.3%). These usage patterns suggest that users across all demographics found Grammarly helpful throughout the writing process but were most likely to use it for consequential writing, such as academic assignments.

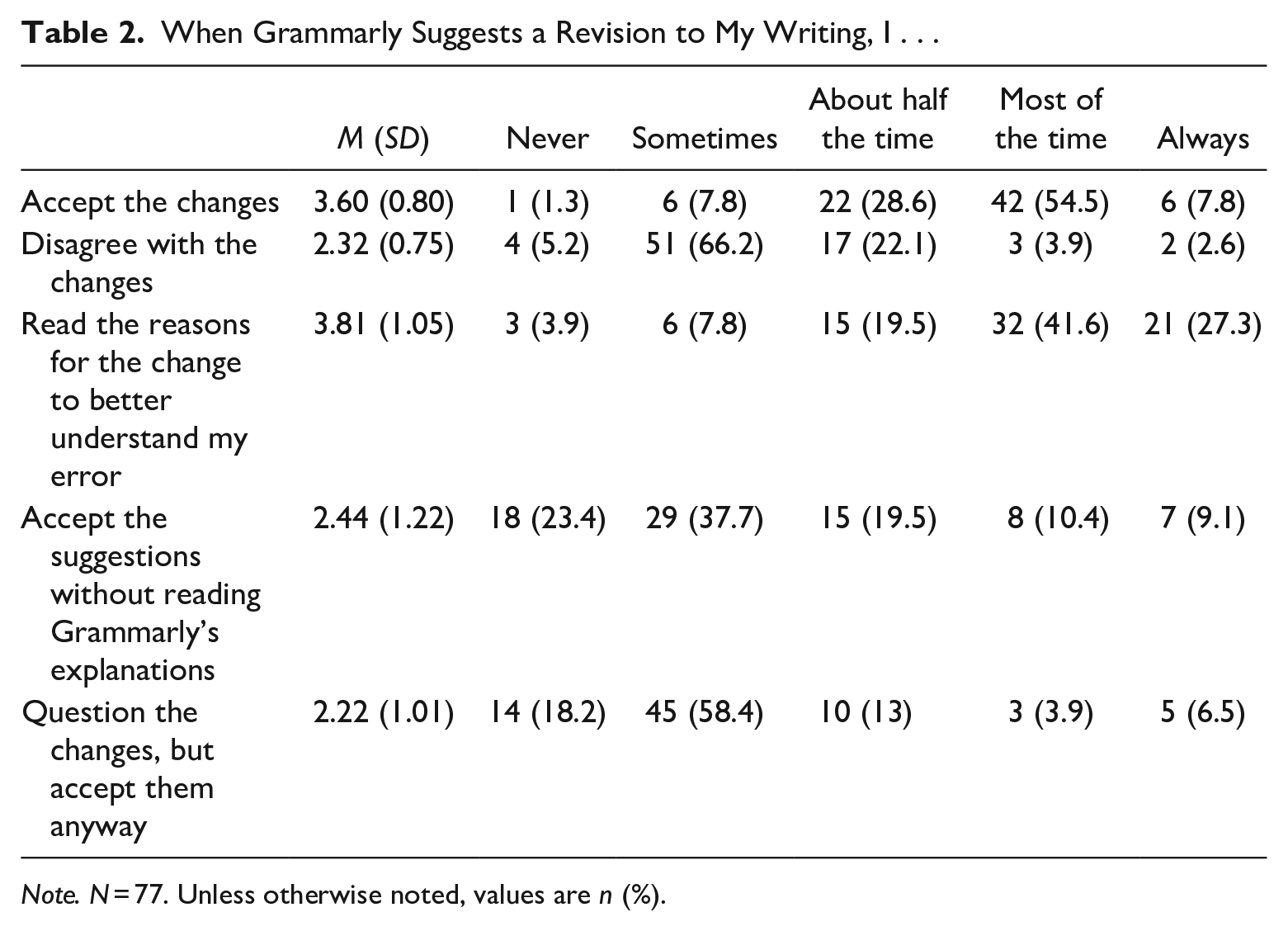

Research Question 2: In what ways do students respond to suggested revisions from an AI writing assistant?

Students reported their reactions to suggested writing revisions on a 5-point scale (1 =

When Grammarly Suggests a Revision to My Writing, I . . .

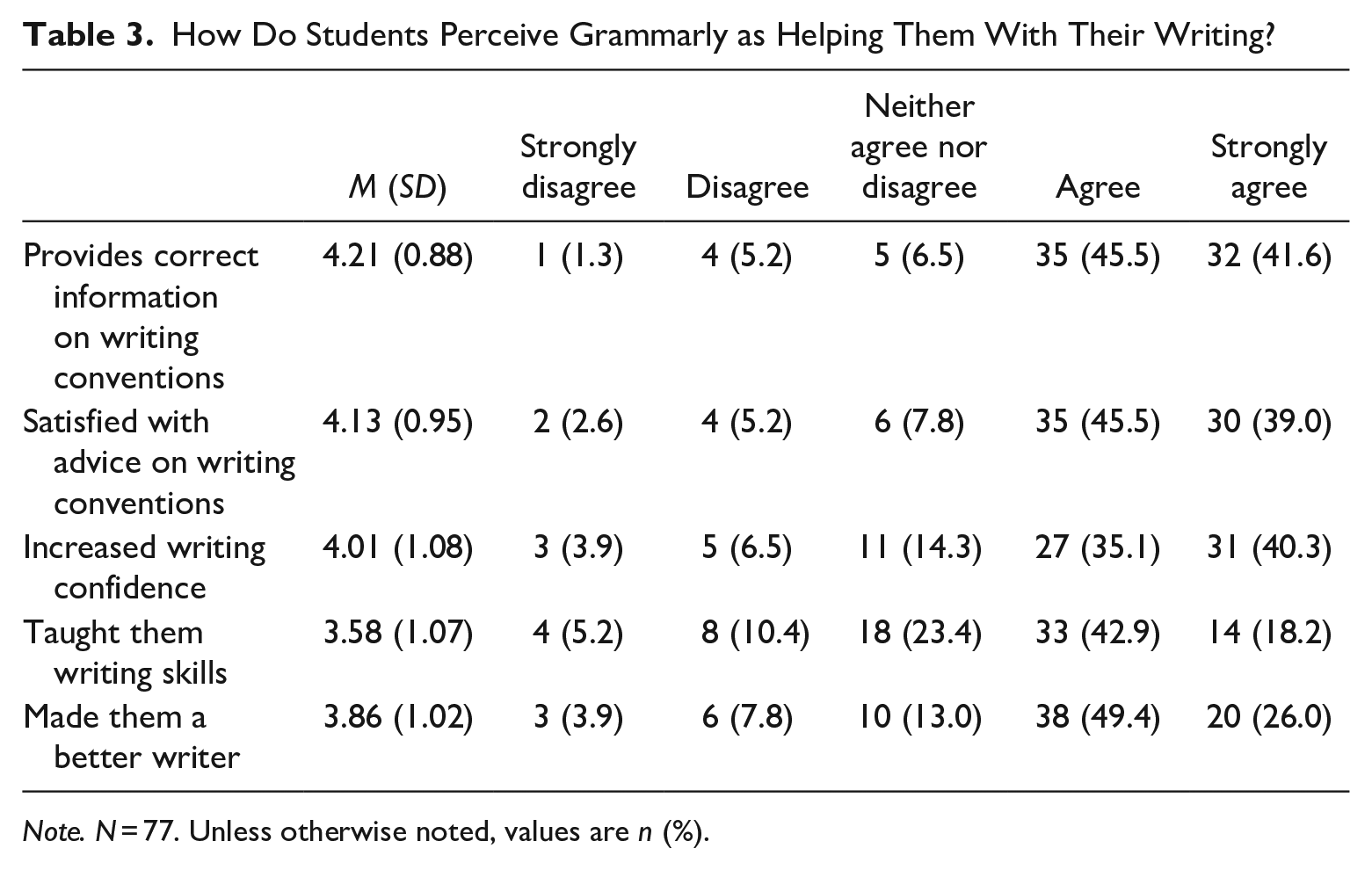

Research Question 3: How do students perceive an AI writing assistant as helping them with their writing regarding confidence and correctness?

Students were asked on a 5-point scale (1 =

How Do Students Perceive Grammarly as Helping Them With Their Writing?

When responses were filtered based on how students used Grammarly, students (

Research Question 4: How has using an AI writing assistant prepared or not prepared students for writing in the workplace?

Students were asked on a 5-point scale (1 =

For Research Question 4, participants were asked to explain how Grammarly “has or has not prepared you for writing for the workplace.” Of all surveyed participants, 34 provided a written answer that could be analyzed. Multiple themes emerged regarding perceptions of Grammarly’s impact on writing. Some respondents explained that they did not see Grammarly affecting their communication. Within this sentiment, respondents considered Grammarly not impactful because (a) it made incorrect suggestions, (b) it acted only as a proofreader, or (c) they did not need it because they were confident in their writing skills. Beyond these findings, we developed the following themes from the qualitative analysis.

No Impact on Future Communication (Incorrect Suggestions)

A minority of respondents reported that Grammarly did not impact their writing or prepare them for writing in the workplace. Of that group, most indicated they did not use Grammarly often because they did not find it helpful for major changes. For instance, one respondent said, “Grammarly often suggests unnecessary or unfit changes to my paper, which is why I always check the explanations and never blindly accept them. In other words, sometimes Grammerly [

Returning to the idea of “major” changes, the respondent quoted earlier in this section calls the changes “small,” implying they are minor or insignificant. Another person wrote, “I have enough knowledge of grammar myself to distinguish between helpful suggestions and non-helpful ones. It hasn’t necessarily improved my writing in any way.” As in the previous quote, this user frames grammatical changes as a binary: small or big, helpful or unhelpful. Viewing AI writing assistant suggestions as merely “small” or “unhelpful” is associated with a perception that Grammarly does not aid one’s writing. This suggests that some students regard writing issues as minor.

No Impact on Future Communication (Mere Proofreader)

Among responses stating that Grammarly had not prepared them for the workplace, a common theme was viewing the writing assistant as a mere proofreader. For instance, one respondent said, “Grammarly has not prepared me for writing in the workplace. If I was having to draft reports for my job, I would simply use Grammarly to clean up an already finished draft before I sent it off to my boss or presented it.” While this student found Grammarly helpful, he or she would use it only for proofreading at the end of the writing process. Not all students used Grammarly at the end of the writing process. Another student used the platform while writing but said, “I don’t think it has or has not prepared me. I use it as a way to essentially proofread what I type. Right now, for example, I am typing this and it has corrected two small spelling mistakes. Things like that are why I use it.” This suggests that faculty should consider the role of AI writing assistance during the writing process and whether and how to teach its use.

No Impact on Future Communication (Confidence)

Both among those who perceived Grammarly as not affecting their workplace writing and among those who found it useful, a common sentiment was confidence in their writing abilities. For example, one respondent said, “Grammarly has not had much of an impact on my writing abilities, apart from growing my confidence. I use it to double check in case I missed something, but it has not helped improve my skills.” Others indicated that they were already confident in their writing and did not rely on Grammarly; they relied on proofreading instead. Similarly, another student explained, “It really hasn’t [impacted writing]. Add [

Of the respondents who viewed Grammarly as impactful or helpful, their answers exhibited the following themes: proofreader, awareness of issues, adjustments, and increased confidence.

Proofreader

Like the students who saw Grammarly as less impactful, those who viewed it as helpful in future workplace communication noted the proofreading functionality. As one student said, “It is just auto-correct on steroids. Every office should have it.” Students who viewed Grammarly as positively impacting their writing discussed how the writing assistant feedback made them more aware of their writing by alerting them to common mistakes. For instance, one respondent said, “It helps show me mistakes in my writing that I may not have seen without using Grammarly.”

Awareness of Issues

Students reported a better understanding of common mistakes they made in their writing. They reported gaining awareness regarding punctuation, grammar, tone, clarity, passive voice, and structure. As one student said, it “[makes] me more observant with my writing.” For example, students explained that “Grammarly has shown me that there’s places to improve in my writing and the different types of tone and how they affect the way my writing is read based on the context of what I’m writing,” “it has helped me to become more impartial when presenting facts through my writing,” and “helped me maintain a direct tone for business emails.” Other students noted that using a writing assistant showed them “the importance of active writing and how to use a variety of vocabulary.” Another explained, “It’s made me rethink my voice in writing many times, and I’ve become a better writer because of it.” Absent from these comments is the idea that writing errors are “small” or “minor”; instead, these students connect core professional writing concepts to successful workplace and academic writing.

Ability to Make Adjustments in Writing

Students reported that feedback in these areas helped them understand their mistakes and make adjustments. One illustrated this by saying, “I now look for these mistakes during the writing process in an attempt to avoid them.” Another noted how it assists in “fine-tuning my writing.” This ability to learn from the platform and think critically about their writing appears to have increased students’ confidence. For instance, respondents talked about understanding how to make adjustments based on the feedback and becoming more observant. One student felt that “Grammarly has prepared me for the workplace by repeatedly reminding me what errors I tend to make and why these are errors. It puts me in the mindset of the kind of writer I want to be, and makes me feel extremely confident in my work.” These comments reflect a pattern of using Grammarly throughout the writing process rather than as a proofreader for “small” issues, as was seen among those with a negative sentiment. The repeated feedback encourages some users to write in ways that avoid errors from the start. Overall, these comments reflect growth in students’ awareness of writing issues through use of the AI writing assistant.

Discussion

In this section, we interpret the findings from our study and the implications for faculty. We explore several key areas: students’ usage patterns of AI writing assistants, their responses to suggested revisions, their perceptions of the impact on their writing skills, and their preparation for workplace writing. Each subsection provides insights into how these tools are integrated into students’ writing processes. Next, we offer recommendations for faculty on how to adapt their teaching strategies to incorporate AI writing assistants effectively. Finally, this section ends with future research directions for this area of study.

For faculty considering the future of business communication and business writing education, the study underscores the importance of teaching the basics of writing and applying traditional concepts when using a new tool. Students need core knowledge of effective and appropriate communication to select, deploy, and use an AI writing assistant well. In their responses, students sometimes named writing concepts taught in a business communication course. For example, one student wrote that Grammarly has “made me rethink my voice in writing many times, and I’ve become a better writer because of it. I used to use a lot of passive voice without even knowing, and Grammarly’s suggestions made me realize it.” Several students noted that the immediate feedback and explanations helped solidify their knowledge.

The repeated, frequent, and immediate feedback also appeared to help students. One student wrote, “Grammarly has prepared me for the workplace by repeatedly reminding me what errors I tend to make and why these are errors. It puts me in the mindset of the kind of writer I want to be, and makes me feel extremely confident in my work.” These students seemed to benefit from “hands-on” learning in which grammatical corrections were immediately available during the writing process.

How Do Students Use AI Writing Assistants and What Can Faculty Do?

Students reported using an AI writing assistant primarily for academic work, which aligns with their lower confidence in academic writing. Notably, students reported higher confidence in personal writing than in academic writing, and they saw themselves as average academic writers. Students also used Grammarly for workplace writing, but we do not have details about their workplaces or types of employment. Students said they would be likely to use Grammarly after graduation if their employer provided access.

While some writing advice places revision and editing later in the writing process, the majority of students reported using AI writing assistants throughout the writing process. Some students did report using Grammarly during what some business communication faculty call the “third step” of the three-step writing process. Murray (1972) introduced teaching writing as prewriting, writing, and rewriting (p. 4). Murray defined rewriting as “reconsidering subject, form, and audience. It is researching, rethinking, redesigning, rewriting, and finally, line-by-line editing” (1972, p. 4). Many business communication instructors follow the outline of this three-step writing process. For example, Schwom and Snyder (2018) use an ACE model (Analyze, Compose, Evaluate) in their textbook, and Guffey and Loewy (2018) present a 3 × 3 writing process (prewriting, writing, and revision). Under this model, using Grammarly would belong to rewriting or, as many faculty members call it, revising. However, students seemed to use Grammarly for line-by-line editing during the writing stage. Faculty teaching a “3 × 3” or three-step writing process should address how and when to respond to AI writing assistant feedback.

This research builds on past research about writing instruction. Faculty could consider how to adjust instruction to account for AI writing tools when deciding how, when, or whether to teach grammar. Students saw Grammarly as a tool that made proofreading easier and improved grades. Some instructors whose students have access to a tool like Grammarly may choose to spend more time on substantive revision, addressing qualities such as audience orientation or high-level organization. Faculty may consider having students draft without an AI writing tool, revise with the tool, and reflect on the writing process. Some students may still need substantial instruction in editing. However, students entering environments with access to AI writing tools may need less emphasis on proofreading and more instruction on how to use AI writing tools effectively and appropriately.

In What Ways Do Students Consider Suggested Revisions From an AI Writing Assistant, and How Can Faculty Adjust to Those Considerations?

Given how students reported responding to AI writing assistant suggestions, faculty could use an AI writing assistant as a starting point for discussing grammar. As stated earlier, most students sometimes disagreed with Grammarly’s suggestions. Faculty could ask students to provide samples of what they perceive as Grammarly’s “mistakes” to foster a conversation about writing conventions and AI writing assistance. This approach may provide an interactive way to teach correct grammar.

Importantly, students reported reading the feedback about their writing. Anecdotally, the authors of this article have certainly experienced students not reading or even viewing the writing feedback they provided when grading in their business communication courses. If students read instant feedback during the writing process, as they reported, then faculty should consider when and how students receive feedback on writing. For example, one student stated, “I used to use a lot of passive voice without even knowing, and Grammarly’s suggestions made me realize it.” The student may have learned about passive voice before, but the instant feedback prompted improvement and deepened the learning process. If students do read instant writing feedback, there may be significant market potential for future AI writing assistance and assessment tools.

When considering generative AI critical literacy, the findings showed that only a minority of students accepted suggestions uncritically. Instead, students reported engaging with the reasoning the AI provided for its suggestions. In short, they read the feedback, and they thought about it. They did not assume the AI “knew better.” For faculty considering how to teach about AI tools, this finding provides a starting point for understanding how their students view and use AI.

Students do not always accept correct suggestions, however. At one of the researchers’ universities, Grammarly prompts student, staff, and faculty users to follow the university style guide and include the article “The” when writing the university name. Since the rule’s implementation, Grammarly has suggested this change more than 22,000 times, and users accepted only 52% of those suggestions. In this case, Grammarly offers correct advice, but users decline to apply it nearly half the time. Further intervention, such as explaining the style rule, is needed to achieve compliance.

How Do Students Perceive an AI Writing Assistant as Helping Them With Their Writing?

Students saw using an AI writing assistant like Grammarly as making them better writers. As noted earlier, students saw Grammarly as teaching them writing skills. One might expect students to report help limited to commas or semicolons, but they often indicated that they received help not only with proofreading but also with revising. In the written comments, one student said, “Grammarly helps my writing be more concise, helps with spelling errors, and makes me aware of smaller issues with my writing.” Similarly, a student said, “It has helped me with proofreading and looking for clarity in my writing.” For schools considering adopting an AI writing assistant, this result suggests student satisfaction with the tool.

How Has Using an AI Writing Assistant Prepared or Not Prepared Students for Writing in the Workplace?

Given what students reported about their preparation for writing in the workplace, faculty may want to address the misalignment between student confidence and writing ability. As shown in the results, student writing in the open-ended survey questions did not consistently demonstrate accuracy. Faculty could discuss the severity of errors or add course content illustrating how a “small” grammatical error can have a “major” adverse impact. Further, faculty should be careful about calling writing errors “small” or “minor,” as students may come to believe that errors ranging from misspellings to typos are insignificant. Even with access to Grammarly, these students may resist reviewing suggested changes or grading feedback if they do not believe writing errors matter.

Most importantly, using an AI writing assistant appeared to promote learning, including self-correction and self-analysis. As discussed in the results, almost 60% of respondents reported “always” using Grammarly for academic writing. Students likely used Grammarly most frequently for academic work because, as students, most of their writing was academic. Rather than accepting AI-generated suggestions automatically, most students considered the nature of each suggestion first, and they reported that the immediate feedback offered by the tool improved their understanding and learning.

Teaching Implications

For educators determining how and when to introduce AI writing assistance like Grammarly, the study showed that using an AI writing assistant promoted learning, including self-correction and self-analysis, among the surveyed students. Students primarily used Grammarly for academic work, likely because most of their writing was academic. Rather than accepting AI-generated suggestions automatically, most students considered the nature of each suggestion first, and they found that the immediate feedback offered by an AI-powered tool improved their understanding and learning.

Teaching writing and critical thinking is more important than ever. Teaching the foundational skills of effective communication remains necessary so students can use an AI writing assistant critically. Consider how one student responded to Grammarly’s suggestions. The respondent wrote, “Some recommendations are incorrect, or do not fit well with the writing content. But it does help find obvious errors. Also its suggestions are sometime [

If students are allowed to use Grammarly, faculty must discuss with them the expectations for how and when to use it. Several students noted the potential for a tool like Grammarly in the workplace. One student wrote, “This will help me to feel confident when typing important documents in the workforce.” Further, faculty must have deep and honest conversations to help students think through how and in what instances using AI can be helpful, discouraged, or detrimental. One respondent raised concerns about inappropriate tool use. The student wrote, “My major concern is data security. The main reason I avoid using Grammarly at work is because what I type is stored somewhere and someone may have access to it. Most documents I produce at work are confidential, or internal use only. Grammarly may be useful for school but probably not so for business, unless the workplace endorses the tool.” In addition, business communication faculty should be aware of new policies that ban AI writing assistance in schools and workplaces (Moore & Lookadoo, 2024). Universities are concerned about academic integrity and are beginning to create policies on the proper use of AI, but only 18% of universities currently ban generative AI (Robert, 2024). A 2024 study by Cisco Systems, Inc. (2024) reported that many companies limit generative AI use because of security concerns. We encourage faculty to be active in campus or business school decisions about AI-related policies. Banning all AI use would also ban traditional uses of Grammarly and the traditional grammar checkers that have long been allowed.

Faculty must teach students to use AI tools rather than be used by them. The students’ comments about privacy and their critical reflection on AI output demonstrated critical thinking. Faculty may want to add AI writing assistance to traditional lectures on editing and revising, and they may also want to include data protection, ethics, and user privacy topics, given student concerns. Critical thinking about communication technology, and about how tool use can influence employee or customer perceptions, gives students a learning opportunity beyond the classroom.

Finally, AI-assisted writing has a place in business settings, and instructors should recognize that “these tools will transcend traditional business intelligence and will transform the nature of many roles in organizations” (Gruetzemacher, 2022, para. 13). Instructors should prepare students for the proper use of AI and generative AI writing tools in their careers.

Future Research

Several opportunities for further research into AI writing assistance and AI writing tools are evident. Foremost, researchers could examine user perceptions and definitions of AI. Because generative and traditional AI have both been referred to simply as “AI,” the authors note that some confusion may arise when framing traditional AI tools like spellcheck as “AI.” This study focused solely on traditional nongenerative AI writing assistants. Future studies might investigate the same writing skills and markers of confidence using generative AI tools, or compare traditional and generative AI writing assistants for their effects on student perceptions of writing skills and feelings of confidence.

Since student confidence levels did not match the skills demonstrated in the written comments, future studies could examine student confidence in relation to demonstrated writing skills, with and without an AI writing assistant. For example, students used language like “minor” to describe errors; future studies could explore the connections between perceiving grammatical and writing errors as “minor” or “small” and students’ confidence in their writing abilities. Future research projects could assess writing skills and knowledge to understand the connection between confidence and ability when using an AI writing assistant. Further, future research could examine what students think the terms “editing,” “proofreading,” and “revising” mean. While the researchers agreed on how to define these terms, we suspect students may define them differently from faculty.

Finally, the study captured student perceptions at a critical moment for AI-based writing assistance. Surveyed students used a version of Grammarly with traditional AI but without generative AI, because Grammarly released additional generative AI features after we distributed the survey. Even since then, not all educational users of Grammarly have been given access to the generative AI tools, because administrators can decide whether to provide them. Future research must consider the expanded role of generative AI when it is incorporated into a traditional AI writing assistance tool, particularly given the inclusion of generative AI in Word through Microsoft 365 Copilot. Understanding how traditional and generative AI affect writer and communicator confidence will be essential as generative AI becomes more widely used.

Conclusion

AI-powered writing assistants like Grammarly have been found to help identify grammatical mistakes and enhance students’ writing quality. Although our data cannot establish a correlation, we found that students felt confident in mastering writing conventions when using an AI writing assistant. Some students assume they no longer need proofreading assistance when doing nonacademic writing. Overall, the study suggests that individuals can benefit from combining technological tools with traditional writing strategies to improve their writing skills. These students used an AI tool critically as part of their learning process. AI-powered writing assistants will continue to improve with generative features, like those included in Grammarly’s generative AI tools. Therefore, business communication instructors should stay current with new technologies and consider how they can enhance their students’ writing abilities. Business communication and professional writing educators can use AI writing tools to enhance teaching and improve access to immediate grammatical feedback. Those educators must also account for student overconfidence and for students’ differing perceptions of business and academic writing. AI and generative AI tools are not mere shortcuts; they are increasingly integral parts of the writing process.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.