Abstract

Objectives:

The number of systematic reviews and meta-analyses published in the sports medicine literature has increased significantly over the last decade. An important part of conducting a systematic review or meta-analysis is evaluating the methodologic quality and bias of the included studies. Several methodologic quality assessment tools have been developed for the evaluation of primary scientific research; however, these tools may not be relevant or specific to observational sports medicine research, a discipline that carries numerous unique nuances and biases. The objective of this effort was to develop a tool, the “Sport Publication Observational Research Tool (SPORT)”, to assess the methodologic quality of observational clinical sports medicine research.

Methods:

The SPORT Score was developed through a modified Delphi process. The Delphi panel included members of the Herodicus Society and The FORUM, two collegial groups of orthopaedic sports medicine experts from across the world. All active members of the Herodicus Society (n=117) and The FORUM (n=80) were invited to participate. Four rounds of questions were developed to build consensus regarding the content and scoring system of the novel tool. Based on an a priori power analysis, 55 observational clinical sports medicine research studies were randomly selected by an independent librarian and scored twice by 4 reviewers of varying levels of training. Two-way random-effects intraclass correlation coefficients (ICC) were calculated for interrater reliability (using average measures for agreement) and intrarater reliability (using single measures for consistency) for SPORT subscores and total score among reviewers. The distribution and percentiles of the total SPORT Score across the 55 studies were assessed. The newly developed SPORT Score was also compared with another commonly utilized methodologic quality score, the methodologic index for non-randomized studies (MINORS).
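The two ICC forms named above can be illustrated with a minimal sketch. It assumes the standard ANOVA-based definitions for a two-way model (average-measures absolute agreement and single-measures consistency); the abstract does not state which software was used, and the function names and the small ratings table below are hypothetical illustrations, not study data.

```python
def two_way_mean_squares(ratings):
    """Return (MSR, MSC, MSE) for a table of n subjects (rows) by k raters (columns)."""
    n = len(ratings)
    k = len(ratings[0])
    grand = sum(sum(row) for row in ratings) / (n * k)
    row_means = [sum(row) / k for row in ratings]
    col_means = [sum(row[j] for row in ratings) / n for j in range(k)]
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)   # between subjects
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)   # between raters
    ss_total = sum((x - grand) ** 2 for row in ratings for x in row)
    ss_error = ss_total - ss_rows - ss_cols                  # residual
    msr = ss_rows / (n - 1)
    msc = ss_cols / (k - 1)
    mse = ss_error / ((n - 1) * (k - 1))
    return msr, msc, mse


def icc_agreement_average(ratings):
    """Two-way model, average measures, absolute agreement."""
    n = len(ratings)
    msr, msc, mse = two_way_mean_squares(ratings)
    return (msr - mse) / (msr + (msc - mse) / n)


def icc_consistency_single(ratings):
    """Two-way model, single measures, consistency."""
    k = len(ratings[0])
    msr, _, mse = two_way_mean_squares(ratings)
    return (msr - mse) / (msr + (k - 1) * mse)


# Hypothetical example: 6 studies (rows) scored by 4 raters (columns).
example = [
    [9, 2, 5, 8],
    [6, 1, 3, 2],
    [8, 4, 6, 8],
    [7, 1, 2, 6],
    [10, 5, 6, 9],
    [6, 2, 4, 7],
]
interrater = icc_agreement_average(example)
consistency = icc_consistency_single(example)
print(f"average-measures agreement ICC: {interrater:.2f}")
print(f"single-measures consistency ICC: {consistency:.2f}")
```

For this illustrative table the two coefficients come out at about 0.62 and 0.71. For an intrarater estimate, the columns would instead hold one reviewer's first and second scoring of each study.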

Results:

Forty (40/117; 34.2%) Herodicus Society members and 11 (11/80; 13.8%) FORUM members agreed to participate in the Delphi process. One participant dropped out after Round 2. Therefore, the completion rates for Rounds 1 through 4 were 100%, 100%, 98.0%, and 98.0%, respectively. The majority (n=47; 94%) of participants had completed a sports medicine fellowship and were still practicing full-time (n=43; 86%) in academic centers (n=35; 70%). The vast majority had experience taking care of collegiate (n=47; 94%) and professional (n=39; 78%) athletes. Almost all (n=49; 98%) served as reviewers for journals and more than half (n=32; 64%) served on an editorial board. Half (n=25; 50%) had authored over 100 peer-reviewed publications. Additionally, nearly half (n=23; 46%) had received National Institutes of Health (NIH) funding and almost all (n=47; 94%) had received other non-NIH funding for research. In the final round, the SPORT Score received 94% consensus approval. The tool had 4 major categories comprising 19 subscores that covered factors related to bias and methodologic quality, such as “study design”, “sampling methods”, “selection criteria”, “power analysis”, and “generalizability”.
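The participation and completion percentages reported above can be checked with a few lines of arithmetic. This sketch assumes the Round 3 and Round 4 completion rates are computed as 50 of the 51 panelists who agreed to participate, which the text implies but does not state outright; the helper name `pct` is hypothetical.

```python
def pct(part, whole):
    """Return part/whole as a percentage rounded to one decimal place."""
    return round(100 * part / whole, 1)


herodicus_rate = pct(40, 117)   # Herodicus Society members who agreed
forum_rate = pct(11, 80)        # FORUM members who agreed
panel_size = 40 + 11            # all panelists entering Round 1

# Assumption: one dropout after Round 2 leaves panel_size - 1 completers.
late_round_completion = pct(panel_size - 1, panel_size)

print(herodicus_rate, forum_rate, late_round_completion)
```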

Mean time to complete a SPORT Score for each study was 6 minutes and 19 seconds (379.4 ± 173.4 seconds). However, time to completion varied significantly by level of training (F(3,213) = 62.7; p<0.001): the “medical student” reviewer was slower at scoring studies (9 minutes and 33 seconds [573.2 ± 188.4 seconds]) than the “junior resident” (5 minutes and 8 seconds [308.2 ± 107.9 seconds]), “chief resident” (4 minutes and 26 seconds [266.4 ± 72.2 seconds]), and “fellow/early career attending” (6 minutes and 8 seconds [368.1 ± 111.1 seconds]).

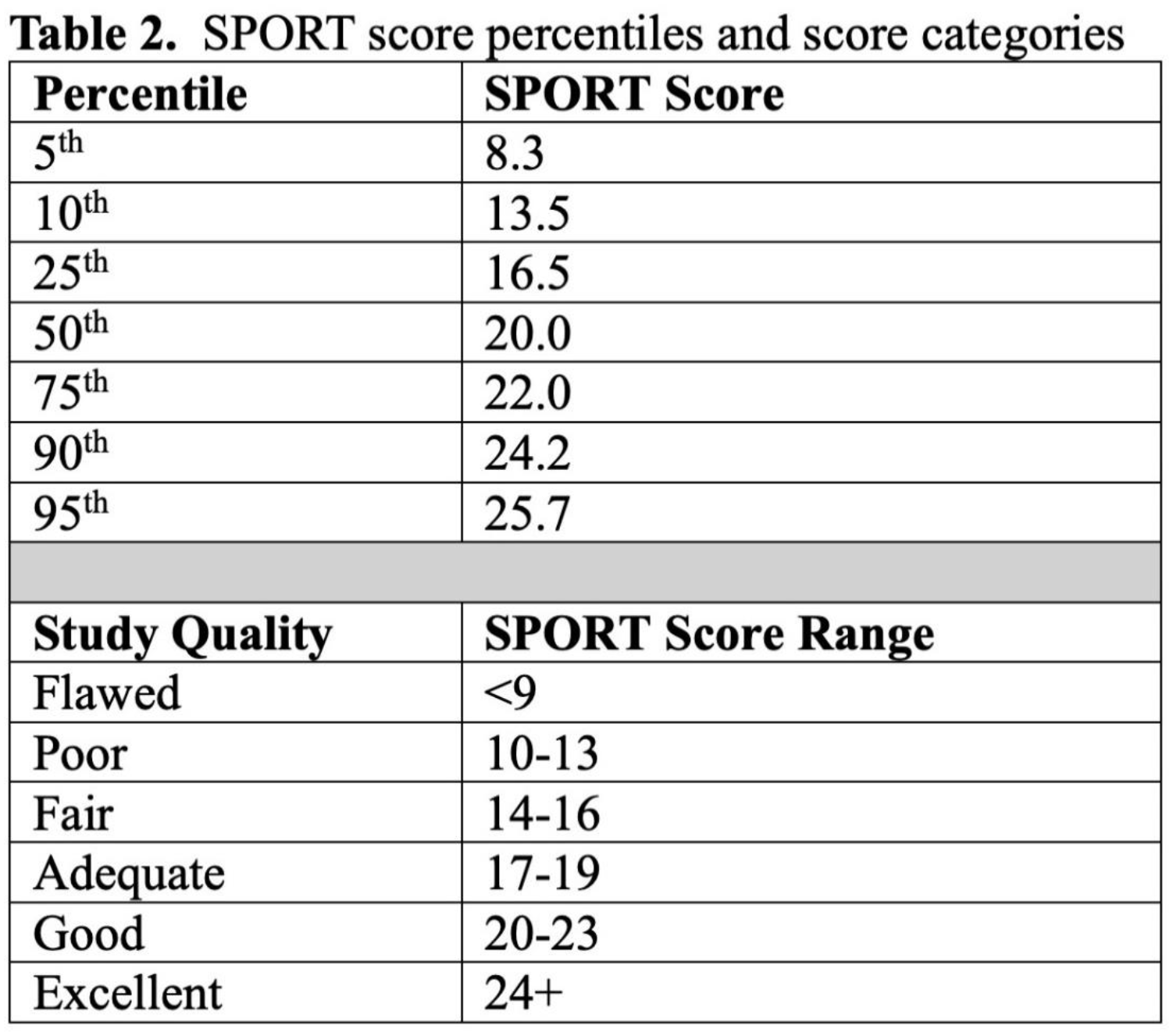

One subscore, regarding “peer review”, demonstrated unacceptable interrater reliability and was thus removed. The remaining 18 subscores had ICCs ranging from 0.599 to 0.955 for interrater reliability and from 0.530 to 1.000 for intrarater reliability. Total SPORT Score ICC was 0.967 for interrater reliability and 0.966 for intrarater reliability, demonstrating almost perfect agreement for both. The final SPORT Score is presented in Table 1.
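As a reading aid for the ICC values above, the sketch below maps a coefficient to a descriptive label. It assumes the widely used Landis and Koch bands, which match the “almost perfect” wording here; the abstract does not name the interpretation scale or the cutoff used to call a subscore unacceptable, so the thresholds and the function name `agreement_band` are assumptions.

```python
def agreement_band(coefficient):
    """Label a reliability coefficient using Landis and Koch style bands (assumed scale)."""
    if coefficient < 0.0:
        return "poor"
    if coefficient <= 0.20:
        return "slight"
    if coefficient <= 0.40:
        return "fair"
    if coefficient <= 0.60:
        return "moderate"
    if coefficient <= 0.80:
        return "substantial"
    return "almost perfect"


# ICC values quoted in the text: total-score ICCs and the subscore range limits.
for value in (0.967, 0.966, 0.599, 0.955, 0.530, 1.000):
    print(f"{value:.3f} -> {agreement_band(value)}")
```

Under these assumed bands, the lowest subscore ICCs would read as moderate, while both total-score ICCs fall in the top band.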

Conclusions:

An objective tool to assess the methodological quality of observational clinical sports medicine research was successfully developed through a 4-round Delphi process involving numerous experts in the field. The tool demonstrated near-perfect interrater and intrarater agreement and correlated moderately with a previously developed quality assessment tool. This tool can help researchers assess the quality of observational clinical sports medicine research studies, particularly those included in systematic reviews and meta-analyses.