Abstract

Background:

A critical component of conducting systematic reviews or meta-analyses is assessing the methodological quality and bias of included studies. Several methodological quality assessment tools have been developed; however, these tools may not be relevant to observational sports medicine research, which carries numerous unique nuances and biases.

Purpose:

To develop the Sport Publication Observational Research Tool (SPORT), which evaluates and scores the methodological quality of observational sports medicine research.

Study Design:

Consensus statement.

Methods:

SPORT was developed through a modified Delphi approach involving members from the Herodicus Society and The FORUM. All active members were invited to participate in the process aimed at building consensus on SPORT content and scoring. After finalizing SPORT, a power analysis led to the independent selection of 55 observational clinical sports medicine studies, which were scored twice by 4 reviewers of varying training levels. Interrater and intrarater reliability for SPORT was assessed using intraclass correlation coefficients (ICCs). The distribution and percentiles for total SPORT score across the 55 studies were calculated. SPORT was also compared with the methodological index for non-randomized studies (MINORS), a commonly utilized quality assessment tool.

Results:

A total of 51 members participated and achieved 100%, 100%, 98.0%, and 98.0% completion rates for rounds 1 through 4, respectively. The final SPORT included 19 subscores related to methodological quality and bias and achieved 94% consensus approval. Mean SPORT completion time was 6 minutes and 19 seconds per study, which varied significantly by reviewer training level. The subscore "peer review" demonstrated unacceptable reliability and was removed. The remaining 18 subscores exhibited ICC ranges of 0.599 to 0.955 for interrater reliability and 0.530 to 0.936 for intrarater reliability. Total SPORT score demonstrated excellent agreement for both interrater (ICC, 0.967) and intrarater (ICC, 0.966) reliability. Median SPORT score across the 55 studies was 20.0, and the distribution was skewed toward lower scores. There was a moderate, significant correlation between SPORT and MINORS (r[53] = 0.575; P < .001).

Conclusion:

An objective tool to assess the methodological quality of observational sports medicine research (SPORT) was successfully developed through a modified Delphi approach with numerous content experts in the field. This tool may be useful in assessing the methodological quality of primary observational sports medicine studies included in systematic reviews and meta-analyses.

Most medical journals now require authors to follow PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) guidelines when performing a systematic review or meta-analysis to standardize the review process and improve the overall quality of these publications.20,22 A vital component suggested by PRISMA is the evaluation of bias, which can be accomplished by utilizing different methodological quality assessment tools. While there has been a significant increase in the number of systematic reviews and meta-analyses published within the sports medicine literature over the past decade, the methodological quality of studies included in these reviews has declined.12,23

A number of methodological quality assessment tools have been developed for evaluating bias in systematic reviews and meta-analyses.14,30 The most commonly utilized tools include A Measurement Tool to Assess Systematic Reviews (AMSTAR), 24 AMSTAR 2, 26 Overview Quality Assessment Questionnaire,18,19 and the ROBIS (Risk of Bias in Systematic Reviews) tool. 29 Additional tools have been developed to assess the methodological quality of individual studies included in these reviews such as the methodological index for non-randomized studies (MINORS). 27 Although these tools often demonstrate high interrater reliability and validity,16,25,27 notable limitations include difficulty in their use, 17 vague wording of questions and grading systems,2,13 and nonstandardized interpretation of score reporting.2,13

Within sports medicine research, the methodological acquisition of data and primary outcomes measured are often unique. For instance, while league- or institution-sponsored electronic health database records exist, there has been a recent trend among unaffiliated independent researchers to utilize online injury reports (available to the general public) to compile a defined cohort of athletes with a specific injury of interest. 9 Cohorts that utilize publicly available data have also been based on a specific surgery, sport, league, or player position. The methodological approach of utilizing public data for purposes of scientific inquiry in sports medicine has been heavily critiqued, as these studies only capture between 66% and 70% of all actual injuries, which can introduce significant bias to conclusions garnered from these studies.7,8 Additionally, outcome measures are often specific to a certain sport and, occasionally, to certain positions or roles within a sport. 15 Unfortunately, such performance-based outcomes have not been validated as a true measure of a treatment's benefit or effect despite their acceptance in the peer-reviewed literature, which may also introduce bias.

Applying the aforementioned generic quality assessment tools to evaluate observational clinical sports medicine research may not capture the nuances of this literature and further compound or fail to quantify the inherent biases of these studies. 28 Therefore, the purpose of the current study is to develop and validate a novel methodological quality assessment tool for observational clinical research studies in sports medicine, the Sport Publication Observational Research Tool (SPORT).

Methods

Study Design

A modified Delphi process was utilized to develop the SPORT Score. 21 The Delphi method requires identification of an anonymous panel of experts, who provide their opinion regarding the topic in question. At least 2 rounds of questions pertinent to the subject matter are asked with feedback between rounds. Frequency distributions are then used to develop consensus of opinion. 6 The experts who participated in the current modified Delphi process were recruited from the Herodicus Society and The FORUM – 2 collegial groups of orthopaedic sports medicine experts. These groups were chosen given their members’ clinical experience with athletes and team care, established record of high-quality clinical sports medicine research, and involvement with journal editorial boards and/or participation as reviewers for sports medicine journals. Soliciting participation from these 2 sports medicine societies was intentional to enhance the diversity among participants as 96% of the Herodicus Society members are male, while 100% of The FORUM members are female.

Questionnaires were created and completed using the REDCap (Research Electronic Data Capture; Vanderbilt University) web-based tool. 4 For each round of questioning, a REDCap survey was sent electronically to each participant and responses were returned and imported into an Excel (Microsoft) spreadsheet. All data were deidentified and anonymous. Departmental and institutional review board approval was granted.

Round 1 of the Delphi Process

The first round of questioning was open-ended and designed to solicit general information about the topic of controversy. The first and senior authors (A.W.K., M.J.M.) initially contacted the experts via email on September 29, 2023, inviting them to participate in the study. The following open-ended question was asked first:

Please list ALL components of primary observational clinical sports medicine research that you think are MOST IMPORTANT to the methodological quality of a study. Please exclude randomized controlled trials.

The group of experts was given 2 weeks to respond to the invitation. A second email was sent 1 week before the 2-week deadline if there was no response to the first invitation.

Round 2 of the Delphi Process

The second round of questioning was designed to organize and assess the importance of the items collected in round 1. The primary study team reviewed the responses from round 1. Duplicates were combined and general features were coalesced into recurring factors or themes. These factors were sent back to the experts for feedback and consensus for eventual inclusion in SPORT. The experts were asked to “include,” “include with changes,” or “omit” each factor and to provide feedback to the following open-ended statement:

The factors listed below from round 1 were thought to affect the methodological quality of primary observational clinical sports medicine research. In this round, we are asking you to decide whether each factor should be included in the final quality score. In later rounds, we will have you develop subscores for each included factor that generates (majority) agreement for inclusion. Please be selective and only include factors you deem to be

Free-text comments were also allowed. Participants were given 3 weeks to complete this round, and a reminder email was sent 2 weeks after the initial invitation.

Round 3 of the Delphi Process

Factors from round 2 that reached >80% consensus were adjusted based on prior comments and presented to the participants in the third round of the Delphi process. The primary study team developed a scoring system for each factor, and the Delphi study group was asked to select “include outright,” “include with grammatical/style changes,” “needs major changes,” or “omit” for each factor and its corresponding scoring system. The participants were asked to respond to the following open-ended statement:

Please provide general feedback for each of the following agreed-upon factors (>80% agreement) that affect the quality of primary observational clinical sports medicine research and the synergized corresponding scoring systems.

Free-text comments were also allowed. Participants were given 4 weeks to complete this round, and reminder emails were sent 2 weeks after the initial round 3 invitation.
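The >80% consensus filter applied between rounds 2 and 3 can be illustrated with a minimal sketch. It assumes that both “include” and “include with changes” votes count as agreement for inclusion, which the text does not state explicitly; the function names, factor labels, and vote counts are hypothetical.

```python
from collections import Counter

# Factors needed strictly more than 80% agreement to advance to round 3.
CONSENSUS_THRESHOLD = 0.80

# Assumption: these two responses both count as a vote for inclusion.
INCLUSION_VOTES = ("include", "include with changes")


def agreement_for_inclusion(votes):
    """Return the fraction of votes favoring inclusion of one factor."""
    counts = Counter(votes)
    in_favor = sum(counts[v] for v in INCLUSION_VOTES)
    return in_favor / len(votes)


def factors_reaching_consensus(ballots, threshold=CONSENSUS_THRESHOLD):
    """Return the factor names whose agreement strictly exceeds the threshold."""
    return [
        factor
        for factor, votes in ballots.items()
        if agreement_for_inclusion(votes) > threshold
    ]


# Hypothetical ballots from 51 panelists for two placeholder factors.
example_ballots = {
    "Factor A": ["include"] * 45 + ["include with changes"] * 4 + ["omit"] * 2,
    "Factor B": ["include"] * 20 + ["omit"] * 31,
}
retained = factors_reaching_consensus(example_ballots)
```

Because the comparison is strict, a factor with exactly 80% agreement would not advance under this sketch.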

Round 4 of the Delphi Process

The primary study team reviewed responses and revised the scoring systems for each factor based on comments from round 3. Afterward, the tool was preliminarily tested by the primary study team and again revised for brevity and ease of use. The adjusted proposed SPORT was sent back to the group for general review. The participants were asked to provide feedback to the following open-ended statement:

Please indicate your APPROVAL/DISAPPROVAL and provide ANY FINAL FEEDBACK regarding the devised methodological quality assessment tool for primary observational clinical studies in sports medicine.

At this point, demographic data including practice type, location, sports team coverage, and academic experience were collected for each Delphi study group member, as well. Participants were contacted via email and telephone if they had not completed the survey after 2 weeks.

SPORT Testing

After final adjustments, 4 members of the primary study team (A.W.K., P.M.I., A.A.H., and M.N.C.) evaluated and tested SPORT on previously published sports medicine clinical studies. These individuals represented varying levels of orthopaedic training including a medical student (A.A.H.), junior resident (M.N.C.), chief resident (A.W.K.), and fellow/early career attending surgeon (P.M.I.). To detect an intraclass correlation coefficient (ICC) of 0.21 (“fair” agreement) with a power of 0.90 and an alpha of .05, the 4 raters needed to score 55 studies. A list of studies was randomly selected by an independent medical librarian, who was not involved in this study, utilizing the following query in PubMed for observational research in sports medicine:

("Sports Medicine"[Medical Subject Headings (MeSH)] OR “sports medicine”[tiab] OR “sport medicine”[tiab])

The list of studies was returned to the authors. Starting from those most recently published, studies were excluded if they were not observational clinical sports medicine research studies. These included basic science/laboratory studies, editorials, reviews, and commentaries. Studies that were not in the realm of clinical sports medicine were also excluded. Studies were excluded until 55 were obtained.

Time to completion for each study was tallied for each reviewer and compared via a 1-way analysis of variance and post hoc analysis. Each rater then rescored the studies 4 to 6 weeks later. Two-way random-effects ICCs were calculated for interrater reliability (using mean measures for agreement) and intrarater reliability (using single measures for consistency) for SPORT subscores and total scores among the reviewers. Subscores that did not reach an adequate level of agreement (<0.50) were omitted. 11 The median SPORT score for each study among reviewers was then utilized to plot a distribution of total SPORT scores across all studies, and percentiles were delineated.
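The two ICC forms named above can be sketched from the mean squares of a two-way layout (subjects in rows, raters or scoring sessions in columns). This is an illustrative pure-Python sketch using the standard two-way formulas, not the SPSS procedure used in the study; the function names are hypothetical, and the ratings are a widely reproduced textbook example (6 subjects, 4 judges), not SPORT data.

```python
def mean_squares(ratings):
    """Return (MSR, MSC, MSE) for an n-subjects x k-raters table of scores."""
    n = len(ratings)
    k = len(ratings[0])
    grand = sum(sum(row) for row in ratings) / (n * k)
    row_means = [sum(row) / k for row in ratings]
    col_means = [sum(row[j] for row in ratings) / n for j in range(k)]
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)   # between subjects
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)   # between raters
    ss_total = sum((x - grand) ** 2 for row in ratings for x in row)
    ss_error = ss_total - ss_rows - ss_cols                  # residual
    return (
        ss_rows / (n - 1),
        ss_cols / (k - 1),
        ss_error / ((n - 1) * (k - 1)),
    )


def icc_agreement_mean(ratings):
    """Two-way random effects, absolute agreement, mean of k raters."""
    n = len(ratings)
    msr, msc, mse = mean_squares(ratings)
    return (msr - mse) / (msr + (msc - mse) / n)


def icc_consistency_single(ratings):
    """Two-way model, consistency, single measurement."""
    k = len(ratings[0])
    msr, _, mse = mean_squares(ratings)
    return (msr - mse) / (msr + (k - 1) * mse)


# Textbook illustration only: rows are subjects, columns are judges.
example_ratings = [
    [9, 2, 5, 8],
    [6, 1, 3, 2],
    [8, 4, 6, 8],
    [7, 1, 2, 6],
    [10, 5, 6, 9],
    [6, 2, 4, 7],
]
interrater_style = icc_agreement_mean(example_ratings)      # about 0.62
intrarater_style = icc_consistency_single(example_ratings)  # about 0.71
```

For an intrarater analysis, the columns would presumably hold one rater's first and second scoring sessions rather than different raters; that layout is an assumption here.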

All 250 systematic reviews published in 2022-2023 in 3 high-impact sports medicine journals (American Journal of Sports Medicine, British Journal of Sports Medicine, and Arthroscopy) were independently analyzed to determine the most commonly employed tools. A variety of methodological quality tools were used, the most frequent of which were the Cochrane risk-of-bias tool 5 (n = 50; 20.0%), MINORS 27 (n = 40; 16.1%), and the Grading of Recommendations Assessment, Development and Evaluation 3 (n = 25; 10.0%). There were 39 (15.7%) studies that did not utilize a tool. The 55 previously selected studies identified from the independent literature review were also scored with the other numerically based tool (MINORS), and these scores were compared with SPORT scores via Pearson correlation coefficients. All analyses were conducted at the 95% CI level with the statistical software program SPSS Version 27.0 (IBM SPSS Statistics).

Results

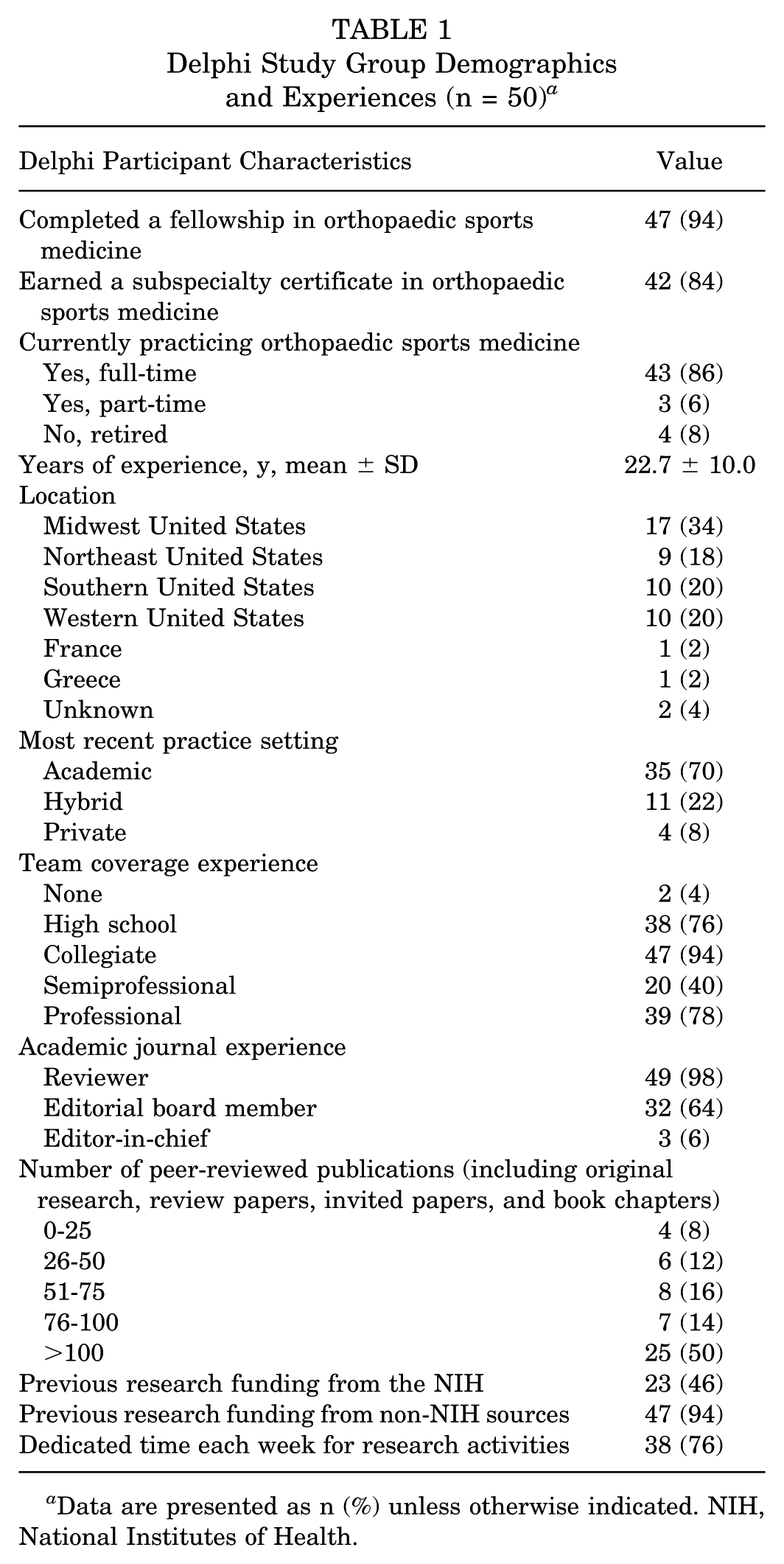

There were 11 FORUM (11/80; 13.8%) and 40 Herodicus Society (40/117; 34.2%) members who agreed to participate in the study. One of the 51 participants dropped out after round 2. Therefore, the completion rates for rounds 1 through 4 were 100%, 100%, 98.0%, and 98.0%, respectively. Delphi study group demographics and experiences for those who completed all 4 rounds (n = 50) are delineated in Table 1.

Delphi Study Group Demographics and Experiences (n = 50) a

Data are presented as n (%) unless otherwise indicated. NIH, National Institutes of Health.

In round 1, there were 37 words or phrases listed by participants, which were coalesced and organized into meaningful factors. In round 2, the Delphi study group voted on whether to ultimately include or omit these factors in the scoring tool. Both the factors and the percentage agreement for inclusion are delineated in Appendix Table A1. In round 3, some factors were combined or adjusted for brevity based on feedback, and a scoring system was developed by the primary study team for each factor that met >80% agreement. These were sent to the Delphi study group for feedback, asking for input on the themes and devised scoring criteria, which are denoted in Appendix Table A2. At this point, feedback was incorporated by the primary study team and scoring systems were revised. The revised tool was then user-tested on 5 recent sports medicine studies selected by the first author (A.W.K.). Adjustments were again made for clarity and brevity, and the tool was sent back to the Delphi study group for final approval (Appendix Table A3). The tool received 94.0% overall approval in round 4. Comments and feedback were again incorporated, resulting in the revised SPORT score in Appendix Table A4.

Time to Completion

Across the 4 members of the primary study team, mean time to complete a SPORT score for each of the 55 studies was 6 minutes, 19 seconds (379.4 ± 173.4 seconds). Time to completion varied significantly by level of training in that the “medical student” reviewer was significantly slower (9 minutes, 33 seconds [573.2 ± 188.4 seconds]) than the “junior resident” (5 minutes, 8 seconds [308.2 ± 107.9 seconds]), “chief resident” (4 minutes, 27 seconds [266.4 ± 72.2 seconds]), and “fellow/early career attending surgeon” (6 minutes, 8 seconds [368.1 ± 111.1 seconds]) at scoring each study (F[3,213] = 62.7; P < .001) (Figure 1).
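The F statistic reported above comes from a 1-way analysis of variance across the 4 reviewers. As a rough illustration of how such a statistic is formed, the sketch below computes F and its degrees of freedom from grouped timings. The function name and the timings are hypothetical, and no post hoc test or P value is computed.

```python
def one_way_anova(groups):
    """Return (F, df_between, df_within) for a list of groups of observations."""
    all_values = [v for group in groups for v in group]
    grand_mean = sum(all_values) / len(all_values)

    # Variation of group means around the grand mean, weighted by group size.
    ss_between = sum(
        len(group) * (sum(group) / len(group) - grand_mean) ** 2
        for group in groups
    )
    # Variation of observations around their own group mean.
    ss_within = sum(
        (v - sum(group) / len(group)) ** 2
        for group in groups
        for v in group
    )

    df_between = len(groups) - 1
    df_within = len(all_values) - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Hypothetical completion times in seconds for 3 reviewers, 3 studies each.
example_times = [
    [540, 580, 600],
    [300, 310, 320],
    [260, 270, 275],
]
f_stat, df_between, df_within = one_way_anova(example_times)
```

With 4 reviewers, the between-groups degrees of freedom are 3, matching the first value in F[3,213]; the second value depends on how many timed observations were available.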

Density plot of total Sport Publication Observational Research Tool (SPORT) scores across the 55 studies evaluated.

Inter- and Intrarater Reliability

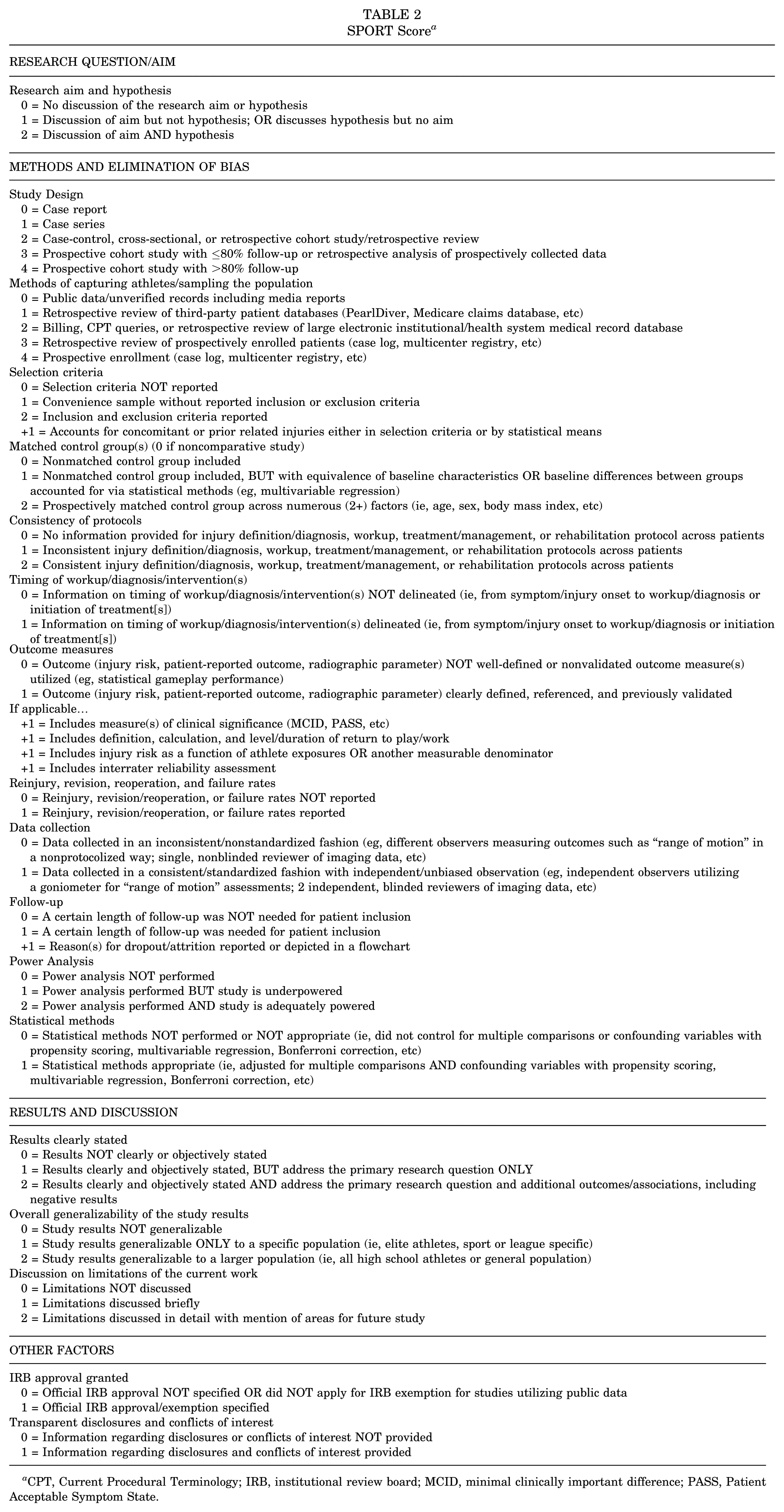

One subscore, regarding “peer review,” demonstrated unacceptable interrater reliability and was thus removed. This resulted in 18 subscores with a total of 38 possible points. The 18 subscores had ICC ranges of 0.599 to 0.955 for interrater and 0.530 to 0.936 for intrarater reliability. Total SPORT Score ICC was 0.967 for interrater reliability and 0.966 for intrarater reliability, demonstrating excellent agreement for both. The finalized SPORT score is presented below in Table 2. A printout version can be found in the Appendix Table A4, and an online scoring calculator was also created at www.sportscoreonline.com.

SPORT Score a

CPT, Current Procedural Terminology; IRB, institutional review board; MCID, minimal clinically important difference; PASS, Patient Acceptable Symptom State.

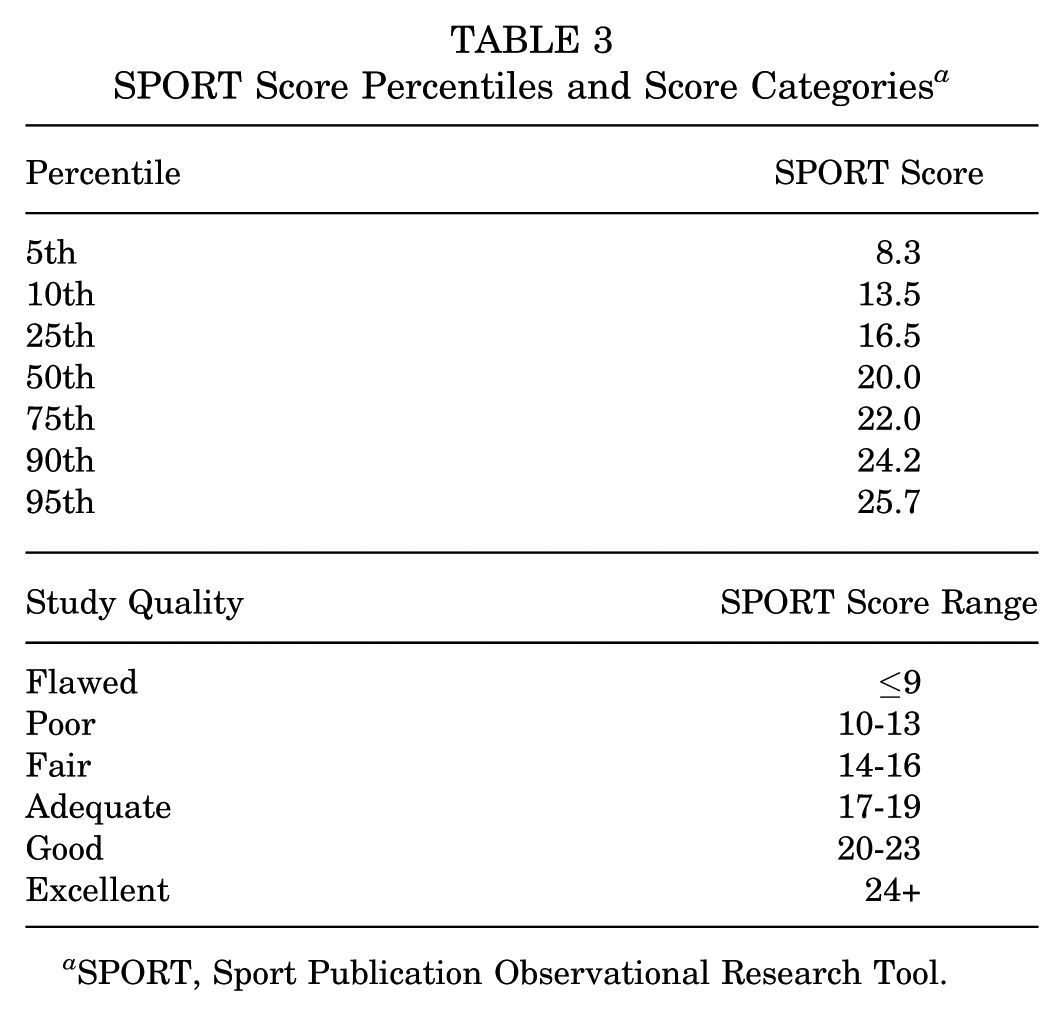

Distribution and Quartiles of Scores

The median total SPORT score across the 55 studies was 20.0 (IQR, 5.5; range, 7.5-26.5). Scores followed a skewed distribution toward lower scores (W = 0.924; P = .002; n = 55) (Figure 1). Percentiles and suggested scoring categories are shown in Table 3.
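The median, IQR, and range reported above are standard descriptive summaries. The sketch below shows one way to compute them with the Python standard library. The scores are hypothetical, and the quantile method ("inclusive") is an assumption; statistical packages such as SPSS may use a different interpolation rule and return slightly different quartiles.

```python
import statistics


def summarize_scores(scores):
    """Return the median, IQR, and range of a list of total scores."""
    # quantiles(n=4) returns the three quartile cut points: Q1, median, Q3.
    q1, median, q3 = statistics.quantiles(scores, n=4, method="inclusive")
    return {
        "median": median,
        "iqr": q3 - q1,
        "range": (min(scores), max(scores)),
    }


# Hypothetical total scores for 7 studies (not the study data).
example_scores = [7.5, 15, 18, 20, 22, 24, 26.5]
summary = summarize_scores(example_scores)
```

The same call with `n=100` would give percentile cut points of the kind used to define score categories.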

SPORT Score Percentiles and Score Categories a

SPORT, Sport Publication Observational Research Tool.

Comparison With Other Bias Scores

There was a moderate, significant correlation between SPORT scores and MINORS for the 55 studies evaluated (r[53] = 0.575; P < .001).
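For readers less familiar with the notation, r[53] denotes a Pearson correlation with n − 2 = 53 degrees of freedom for the 55 studies. The sketch below computes a Pearson coefficient from paired scores and the t statistic implied by a given r and n. The function names and the paired scores are hypothetical, and the P value itself is not computed.

```python
import math


def pearson_r(x, y):
    """Return the Pearson correlation coefficient for paired observations."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def t_from_r(r, n):
    """Return (t, df) for testing a correlation r observed in n pairs."""
    df = n - 2
    t = r * math.sqrt(df / (1 - r * r))
    return t, df


# Hypothetical paired tool scores for 5 studies (not the study data).
tool_a = [12.0, 18.5, 20.0, 23.5, 26.0]
tool_b = [8, 11, 10, 14, 15]
r_example = pearson_r(tool_a, tool_b)

# The reported correlation: r = 0.575 across 55 studies gives df = 53.
t_reported, df_reported = t_from_r(0.575, 55)
```
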

Discussion

The purpose of this study was to develop and validate a methodological quality assessment tool for observational clinical sports medicine research studies. We successfully created the SPORT score through a modified Delphi approach with a diverse group of experts in the field. The tool is not overly burdensome. After elimination of the “peer review” subscore, all remaining subscores were in the range of “moderate” to “near perfect” agreement, and the tool as a whole demonstrated excellent inter- and intrarater reliability among users of varying levels of training and expertise. Additionally, it showed moderate, but not perfect, correlation with the previously established MINORS tool. This is the first tool developed to specifically evaluate the methodological quality and bias of primary sports medicine observational clinical research studies. The tool can be used to evaluate individual studies. Additionally, it can be utilized to assess studies included within a systematic review or meta-analysis, from which an overall SPORT score can be calculated, helping quantify the overall quality of studies included in the review.

The Delphi group included 50 experts, with a mean of 22 years of clinical sports medicine experience and significant backgrounds in research and medical publishing. Almost all (98%) served as peer reviewers and 98% received extramural funding for research. A lesser, but still large, majority served on editorial boards (64%) and have committed dedicated time to research endeavors (76%). While only 2 orthopaedic sports medicine societies were sampled, members of the Delphi group had a deep understanding of the nuances and limitations of clinical sports medicine research. They were able to provide unique perspectives and feedback after each round of questions, ultimately leading to overall consensus for the tool. For instance, regarding the devised subscore of “methods of capturing athletes/sampling the population,” the category “public data/unverified records including media reports” was given a score of 0, reflecting an appropriate score for the use of data that are uncorroborated by patient-specific medical records or a league-sanctioned medical database. This issue is somewhat unique to the sports medicine literature as evidenced by the exponential increase in publicly obtained data studies pertaining to clinical sports medicine topics.7-9

The time to completion for a SPORT score was, on average, 6 minutes, 19 seconds (379.4 ± 173.4 seconds) per study across the 4 reviewers. We deliberately chose individuals with varying levels of training given that these types of individuals, including trainees, often contribute to the execution of a systematic review and assessments of bias. The time to completion for a SPORT score is faster than previously published times for other bias scores including the Risk of Bias Assessment Tool for Nonrandomized Studies (9.5 minutes) and MINORS (10.45 minutes). 10 Reliability was high for total score both among (ICC, 0.967) and within (ICC, 0.966) reviewers. Again, having reviewers of differing levels of experience was intentional and demonstrated reliability among medical students through early career attending surgeons. The distribution of scores followed a nonnormal distribution (skewed toward lower scores), which suggests that there are more very poorly done studies than very well–conducted studies. This is somewhat consistent with previous bibliometric analyses of the sports medicine literature, where across 4 sports medicine journals (excluding randomized controlled trials), the most frequently published studies were observational descriptive studies (35.7%), single case studies (34.3%), case-control studies (14.6%), and last, cohort studies (11.6%). 1 Qualitative categories were developed based on percentiles to give reviewers reference standards. The SPORT was only moderately correlated with a commonly utilized methodological index (MINORS) suggesting that SPORT can classify studies similarly to MINORS despite subtle differences between the 2 tools. There is no current “gold standard” to assess the methodological quality of observational sports medicine studies.

Limitations

SPORT can be used to evaluate sports medicine studies included in systematic reviews as well as primary clinical observational research studies in isolation as a benchmark for overall methodological quality. While the strengths of this tool are numerous, there are some limitations that should be discussed. First, scoring systems and the weighting of subscores were initiated by the primary research team and agreed upon by the Delphi group after feedback and consensus. It is unclear if a similar scoring system would have been developed by different study and Delphi groups. Second, our Delphi group included experts primarily from North America and Europe. Feedback from experts in other parts of the world, with their unique backgrounds caring for different athlete populations or sports, may have resulted in a different quality score. Third, SPORT was validated within a study group of individuals who were training or had previously trained at a single institution. It is unclear if similar reliability would be achieved among other users. Fourth, this tool was developed in English and may not demonstrate similar ease of use or reliability among non-English-speaking users. Fifth, even though scored studies were randomly selected by an independent medical librarian who attempted to capture a wide variety of studies across different journals, the selected studies may not be entirely representative of the sports medicine literature base. Sixth, SPORT was not developed to score randomized trials. Seventh, we compared SPORT with only 1 legacy tool (MINORS). External validation studies are needed with different raters, languages, research studies, and legacy methodological quality scores. Last, as scientific methodology continues to improve and the utilization of artificial intelligence becomes more common, this tool may need to be revised to incorporate these advances in the future.

Conclusion

An objective tool to assess the methodological quality of observational sports medicine research—the SPORT score—was successfully developed through a modified Delphi approach with numerous content experts in the field. This tool may be useful in assessing the methodological quality of primary observational sports medicine studies included in systematic reviews and meta-analyses.

Authors

Andrew W. Kuhn, MD (Department of Orthopaedic Surgery, Washington University in St. Louis, St. Louis, Missouri, USA); Paul M. Inclan, MD (Department of Orthopaedic Surgery, Washington University in St. Louis, St. Louis, Missouri, USA; Department of Orthopaedic Surgery, University of Arkansas for Medical Science, Little Rock, Arkansas, USA); Ameer A. Haider, BS (School of Medicine, Washington University in St. Louis, St. Louis, Missouri, USA); Michele N. Christy, MD (Department of Orthopaedic Surgery, Washington University in St. Louis, St. Louis, Missouri, USA); Warren R. Dunn, MD (Marshfield Clinic, Weston, Wisconsin, USA); Laura Alberton, MD (Scripps Health, La Jolla, California, USA); Christina Allen, MD (Yale School of Medicine, New Haven, Connecticut, USA); Kyle Anderson, MD (Anderson Sports Medicine, Bingham Farms, Michigan, USA); James Andrews, MD (Andrews Sports Medicine & Orthopaedic Center; American Sports Medicine Institute, Birmingham, Alabama, USA); Fred Azar, MD (Campbell Clinic, Memphis, Tennessee, USA); Geoffrey Baer, MD, PhD (University of Wisconsin School of Medicine and Public Health, Madison, Wisconsin, USA); Michael Banffy, MD (Cedars-Sinai, Los Angeles, California, USA); Asheesh Bedi, MD (University of Michigan, Ann Arbor, Michigan, USA); Stephen Brockmeier, MD (University of Virginia, Charlottesville, Virginia, USA); Robert Brophy, MD (Washington University in St. Louis, St. Louis, Missouri, USA); Charles Bush-Joseph, MD (Midwest Orthopaedics at Rush, Chicago, Illinois, USA); James Carey, MD, MPH (University of Pennsylvania Perelman School of Medicine, Philadelphia, Pennsylvania, USA); Thomas Carter, MD (Banner—University Orthopedic and Sports Medicine Institute, Phoenix, Arizona, USA); Steven Cohen, MD (Rothman Orthopaedic Institute, Philadelphia, Pennsylvania, USA); Lutul Farrow, MD (Cleveland Clinic, Cleveland, Ohio, USA); David Flanigan, MD (The Ohio State University Wexner Medical Center, Columbus, Ohio, USA); Corinna Franklin, MD (Yale School of Medicine, New Haven, Connecticut, USA); Sharon Hame, MD (University of California, Los Angeles, David Geffen School of Medicine, Los Angeles, California, USA); Terzidis Ioannis, MD, PhD (Thessaloniki Minimally Invasive Surgery (The-MIS) Orthopaedic Centre, Thessaloniki, Greece); Sheeba Joseph, MD (Michigan State University, East Lansing, Michigan, USA); Keith Kenter, MD (University of Missouri, Columbia, Missouri, USA); Jason Koh, MD, MBA (Northshore University Health System, Skokie, Illinois, USA); Aaron Krych, MD (Mayo Clinic, Rochester, Minnesota, USA); Lance LeClere, MD (Vanderbilt University, Nashville, Tennessee, USA); Cassandra Lee, MD (University of California, Davis, Sacramento, California, USA); Bruce Levy, MD (Orlando Health Jewett Orthopedic Institute, Orlando, Florida, USA); David McAllister, MD (University of California, Los Angeles, David Geffen School of Medicine, Los Angeles, California, USA); Michael Medvecky, MD (Yale School of Medicine, New Haven, Connecticut, USA); Christina Morganti, MD (Anne Arundel Medical Center Orthopedics, Annapolis, Maryland, USA); Volker Musahl, MD (University of Pittsburgh Medical Center, Pittsburgh, Pennsylvania, USA); Bradley Nelson, MD (University of Minnesota, Minneapolis, Minnesota, USA); Frank Noyes, MD (Noyes Knee Institute; Cincinnati SportsMedicine Research and Education Foundation, Cincinnati, Ohio, USA); Brett Owens, MD (Warren Alpert School of Medicine, Brown University, Providence, Rhode Island, USA); Lee Pace, MD (Children’s Health Andrews Institute for Orthopaedics and Sports Medicine, Plano, Texas, USA); Lonnie Paulos, MD (Holy Cross Hospital–Jordan Valley, Cottonwood Heights, Utah, USA); Lauren Redler, MD (Columbia University, New York, New York, USA); Scott Rodeo, MD (Hospital for Special Surgery, New York, New York, USA); Marc Safran, MD (Stanford University, Redwood City, California, USA); Felix Savoie, MD (Tulane School of Medicine, New Orleans, Louisiana, USA); Donald Shelbourne, MD (Shelbourne Knee Center, Indianapolis, Indiana, USA); Seth Sherman, MD (Stanford University, Redwood City, California, USA); Ken Singer, MD (Slocum Center for Orthopedics & Sports Medicine, Eugene, Oregon, USA); Matthew Smith, MD (Washington University in St. Louis, St. Louis, Missouri, USA); Bertrand Sonnery-Cottet, MD, PhD (Centre Orthopédique Santy, Lyon, France); Andrea Spiker, MD (University of Wisconsin School of Medicine and Public Health, Madison, Wisconsin, USA); Michael Stuart, MD (Mayo Clinic, Rochester, Minnesota, USA); Russell Warren, MD (Hospital for Special Surgery, New York, New York, USA); Jocelyn Wittstein, MD (Duke University, Durham, North Carolina, USA); Michelle Wolcott, MD (University of Colorado, Denver, Colorado, USA); Rick Wright, MD (Vanderbilt University, Nashville, Tennessee, USA); and Matthew J. Matava, MD (Department of Orthopaedic Surgery, Washington University in St. Louis, St. Louis, Missouri, USA).

Appendix

Revised Scoring System for Each Theme Thought to Affect Methodological Quality of Observational Sports Medicine Research a

| Subscore | Points | Criterion |
| --- | --- | --- |
| RESEARCH QUESTION/AIM | | |
| Research aim and hypothesis | 0 | No discussion of the research aim or hypothesis |
| | 1 | Discussion of aim but not hypothesis; OR discusses hypothesis but no aim |
| | 2 | Discussion of aim AND hypothesis |
| METHODS AND ELIMINATION OF BIAS | | |
| Study design | 0 | Case report |
| | 1 | Case series |
| | 2 | Case-control, cross-sectional, or retrospective cohort study/retrospective review |
| | 3 | Prospective cohort study with ≤80% follow-up or retrospective analysis of prospectively collected data |
| | 4 | Prospective cohort study with >80% follow-up |
| Methods of capturing athletes/sampling the population | 0 | Public data/unverified records including media reports |
| | 1 | Retrospective review of third-party patient databases (PearlDiver, Medicare claims database, etc) |
| | 2 | Billing, CPT queries, or retrospective review of large electronic institutional/health system medical record database |
| | 3 | Retrospective review of prospectively enrolled patients (ie, case log, multicenter registry, etc) |
| | 4 | Prospective enrollment (ie, case log, multicenter registry, etc) |
| Selection criteria | 0 | Selection criteria NOT reported |
| | 1 | Convenience sample without reported inclusion or exclusion criteria |
| | 2 | Inclusion and exclusion criteria reported |
| | +1 | Accounts for concomitant or prior related injuries either in selection criteria or by statistical means |
| Matched control group(s) (0 if noncomparative study) | 0 | Nonmatched control group included |
| | 1 | Nonmatched control group included, BUT with equivalence of baseline characteristics OR baseline differences between groups accounted for via statistical methods (eg, multivariable regression) |
| | 2 | Prospectively matched control group across numerous (2+) factors (ie, age, sex, body mass index, etc) |
| Consistency of protocols | 0 | No information provided for injury definition/diagnosis, workup, treatment/management, or rehabilitation protocol across patients |
| | 1 | Inconsistent injury definition/diagnosis, workup, treatment/management, or rehabilitation protocols across patients |
| | 2 | Consistent injury definition/diagnosis, workup, treatment/management, or rehabilitation protocols across patients |
| Timing of workup/diagnosis/intervention(s) | 0 | Information on timing of workup/diagnosis/intervention(s) NOT delineated (ie, from symptom/injury onset to workup/diagnosis or initiation of treatment[s]) |
| | 1 | Information on timing of workup/diagnosis/intervention(s) delineated (ie, from symptom/injury onset to workup/diagnosis or initiation of treatment[s]) |
| Outcome measures | 0 | Outcome (injury risk, patient-reported outcome, radiographic parameter) NOT well-defined or nonvalidated outcome measure(s) utilized (eg, statistical gameplay performance) |
| | 1 | Outcome (injury risk, patient-reported outcome, radiographic parameter) clearly defined, referenced, and previously validated |
| | | If applicable… |
| | +1 | Includes measure(s) of clinical significance (MCID, PASS, etc) |
| | +1 | Includes definition, calculation, and level/duration of return to play/work |
| | +1 | Includes injury risk as a function of athlete exposures OR another measurable denominator |
| | +1 | Includes interrater reliability assessment |
| Reinjury, revision, reoperation, and failure rates | 0 | Reinjury, revision/reoperation, or failure rates NOT reported |
| | 1 | Reinjury, revision/reoperation, or failure rates reported |
| Data collection | 0 | Data collected in an inconsistent/nonstandardized fashion (eg, different observers measuring outcomes such as “range of motion” in a nonprotocolized way; single, nonblinded reviewer of imaging data, etc) |
| | 1 | Data collected in a consistent/standardized fashion with independent/unbiased observation (eg, independent observers utilizing a goniometer for range of motion assessments; 2 independent, blinded reviewers of imaging data, etc) |
| Follow-up | 0 | A certain length of follow-up was NOT needed for patient inclusion |
| | 1 | A certain length of follow-up was needed for patient inclusion |
| | +1 | Reason(s) for dropout/attrition reported or depicted in a flowchart |
| Power analysis | 0 | Power analysis NOT performed |
| | 1 | Power analysis performed BUT study is underpowered |
| | 2 | Power analysis performed AND study is adequately powered |
| Statistical methods | 0 | Statistical methods NOT performed or NOT appropriate (ie, did not control for multiple comparisons or confounding variables with propensity scoring, multivariable regression, Bonferroni correction, etc) |
| | 1 | Statistical methods appropriate (ie, adjusted for multiple comparisons AND confounding variables with propensity scoring, multivariable regression, Bonferroni correction, etc) |

| RESULTS AND DISCUSSION |

| Results clearly stated 0 = Results NOT clearly or objectively stated 1 = Results clearly and objectively stated, but address the primary research question ONLY 2 = Results clearly and objectively stated and address the primary research question and additional outcomes/associations, including negative results |

| Overall generalizability of the study results 0 = Study results are NOT generalizable 1 = Study results are generalizable ONLY to a specific population (ie, elite athletes, sport or league specific) 2 = Study results are generalizable to a larger population (ie, all high school athletes or general population) |

| Discussion on limitations of the current work 0 = Limitations NOT discussed 1 = Limitations discussed briefly 2 = Limitations discussed in detail with mention of areas for future study |

| OTHER FACTORS |

| IRB approval granted 0 = Official IRB approval NOT specified OR did NOT apply for IRB exemption for studies utilizing public data 1 = Official IRB approval/exemption specified |

| Transparent disclosures and conflicts of interest 0 = Information regarding disclosures or conflicts of interest NOT provided 1 = Information regarding disclosures and conflicts of interest provided |

| Blinded peer review prior to publication 0 = Not peer reviewed OR underwent unblinded peer review OR published to preprint server 1 = Underwent blinded peer review |

CPT, Current Procedural Terminology; IRB, institutional review board; MCID, minimal clinically important difference; PASS, Patient Acceptable Symptom State.
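The rows above combine a base option (0 up to the highest listed value) with optional "+1" items, so a total is the sum of base and bonus points across rows. The following is a minimal sketch of that tally in Python, not an official implementation. It covers only the rows shown in this part of the table, and the row keys, the `Subscore` class, and both function names are hypothetical. It also assumes that "+1" items are added independently of the base option chosen, and it leaves out the "blinded peer review" row because that subscore was removed after reliability testing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Subscore:
    base_max: int       # highest base option listed for the row
    bonus_max: int = 0  # number of optional "+1" items listed for the row


# Hypothetical keys; only rows visible in this part of the table are listed.
RUBRIC = {
    "study_design": Subscore(4),
    "sampling_method": Subscore(4),
    "selection_criteria": Subscore(2, 1),
    "matched_control": Subscore(2),
    "protocol_consistency": Subscore(2),
    "timing": Subscore(1),
    "outcome_measures": Subscore(1, 4),
    "reinjury_rates": Subscore(1),
    "data_collection": Subscore(1),
    "follow_up": Subscore(1, 1),
    "power_analysis": Subscore(2),
    "statistical_methods": Subscore(1),
    "results_clarity": Subscore(2),
    "generalizability": Subscore(2),
    "limitations": Subscore(2),
    "irb_approval": Subscore(1),
    "disclosures": Subscore(1),
}


def score_rows(ratings):
    """Sum base and bonus points; ratings maps row key -> (base, bonus)."""
    total = 0
    for name, (base, bonus) in ratings.items():
        row = RUBRIC[name]  # raises KeyError for a row not listed above
        if not 0 <= base <= row.base_max:
            raise ValueError(f"{name}: base {base} outside 0..{row.base_max}")
        if not 0 <= bonus <= row.bonus_max:
            raise ValueError(f"{name}: bonus {bonus} outside 0..{row.bonus_max}")
        total += base + bonus
    return total


def max_for_listed_rows():
    """Highest attainable sum over the rows listed in RUBRIC only."""
    return sum(r.base_max + r.bonus_max for r in RUBRIC.values())


if __name__ == "__main__":
    # A retrospective cohort (2), a validated outcome with two "+1" items (1 + 2),
    # and required follow-up with attrition reported (1 + 1).
    example = {
        "study_design": (2, 0),
        "outcome_measures": (1, 2),
        "follow_up": (1, 1),
    }
    print(score_rows(example))  # prints 7
```

Range checks are kept separate for base and bonus points so that a data-entry error (for example, a base value of 2 on a 0-to-1 row) is flagged rather than silently inflating the total.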

Acknowledgements

The authors would like to thank Michele Johnson and Elizabeth Vance from the Herodicus Society for their help in the coordination of this study. They also thank Angela Hardi, clinical librarian at the Bernard Becker Medical Library, for her assistance with study selection for tool validation.

Final revision submitted July 26, 2025; accepted August 15, 2025.

One or more of the authors has declared the following potential conflict of interest or source of funding: L.A. has served as president of The FORUM. C.A. is the chair of the AOSSM Team Physician and Athlete Advocacy Committee. K.A. receives royalties from Arthrex Inc. F.A. has received consulting fees from Pacira. G.B. is a consultant for ConMed Linvatec. A.B. is a consultant for and receives royalties from Arthrex Inc. S.B. is a consultant for Arthrex and Exactech; receives royalties from Arthrex, Exactech, and Zimmer Biomet; and receives an editorial stipend from AOSSM Publishing for serving as editor-in-chief of The Video Journal of Sports Medicine. R.B. is a committee member of the American Academy of Orthopaedic Surgeons (AAOS), AOSSM, and American Orthopaedic Association (AOA); has equity in Tulavi and Movement Health Science; and receives consulting fees from Syneos/Anika. J.C. has served as a consultant for Joint Restoration, Arthrex, and Bioventus. T.C. receives royalties from Arthrex; is a consultant for Arthrex and Active Implants; has received research support from Arthrex, JRF, RTI Biologics, and Phoenix Kinetics; is a member of the Board of Trustees of Arthroscopy Journal; is a development committee member of Arthroscopy Association of North America (AANA); and owns stock in Icarus. D.F. is a consultant for Vericel, Moximed, Smith & Nephew, Conmed, DePuy Synthes, and Anika. S.H. has received consulting fees, honoraria, and hospitality payments from Smith & Nephew. K.K. is an unpaid consultant for Biopoly LLC; receives honoraria for serving as an associate editor for The Orthopaedic Journal of Sports Medicine; has received travel fees from the American Board of Orthopaedic Surgery (ABOS), AOA, and AOSSM; is the associate dean of clinical affairs/Chief Medical Officer of Western Michigan University Homer Stryker MD School of Medicine; and is a committee member of AOA, American Shoulder and Elbow Surgeons, ABOS, and AOSSM. J.K. has received consulting fees from Smith & Nephew, Marrow Access Technologies, Acuitive, Pfizer, and Pacira/Flexion; has received honoraria from Smith & Nephew; has patents in Marrow Access Technologies; and serves in a leadership role in International Patellofemoral Study Group; Patellofemoral Foundation; AAOS; International Society of Arthroscopy, Knee Surgery, and Orthopaedic Sports Medicine; and Marrow Access Technologies. A.K. has received IP royalties and consulting fees from Arthrex Inc and is an editorial or governing board member of The American Journal of Sports Medicine and Springer and a board or committee member of AANA and International Cartilage Regeneration & Joint Preservation Society. L.L. has received research support from Ossio. B.L. has received IP royalties, consultation fees, and travel and lodging from Arthrex Inc; has received speaking fees from Arthrex Inc and Smith & Nephew; and has stock options with COVR Medical LLC. D.M. is a consultant for MTF Biologics and Zimmer Biomet. M.M. is a speaker for Smith & Nephew and DePuy Synthes. C.M. has been employed by the University of Wisconsin School of Medicine–Madison and has served as a board member of The FORUM. B.O. has received a grant from National Institutes of Health (NIH) and Department of Defense; royalties or licenses from Conmed; consulting fees from Mitek, Vericel, and Conmed; and equipment, materials, drugs, medical writing, gifts, or other services from Arthrex and Mitek and owns stock or stock options from Vivorte. L. Pace has received a grant, consulting fees, and research support from Arthrex and JRF Ortho; has received honoraria from Arthrex and Vericel; has received support for attending meetings or travel from Arthrex; serves as a board or committee member of AOSSM and Pediatric Research in Sports Medicine Society; and owns stock or stock options in OutcomeMD. S.R. has received consulting fees from Teladoc Health and Don Joy/Enovis and is an associate editor for Translational Biology and The American Journal of Sports Medicine. M. Safran has received consulting fees from Medacta, Therapeutics, Smith & Nephew, and Organogenesis; receives royalties or license from DJO, Stryker, Elsevier, Lippincott, Vive Health; Subchondral Solutions; Marrow Access Technologies, Smith & Nephew, Top Shelf, and Medacta; has received nonconsulting fees from Smith & Nephew; has received honoraria from Medacta; has received a gift from Smith & Nephew; and has received fellowship and research support from Smith & Nephew. F.S. receives royalties and honoraria from Linvatec Corp., EXACTECH Inc., and Zimmer Biomet Holdings, Inc. M. Smith has received support for education from Elite Orthopedics and Arthrex and travel fees from Medica Business Device. B.S.-C. has received consulting fees and royalties from Arthrex. A.S. is a paid consultant for Stryker Sports Medicine; is an associate editor of The American Journal of Sports Medicine; and is an editorial board member of The Video Journal of Sports Medicine, Arthroscopy Journal, and Journal of Hip Preservation Surgery. M. Stuart is a consultant for and has received royalties from Arthrex. J.W. has received grants from NIH, has received consulting fees from Miach and Geistlich, has received speaking fees from Arthrex and Vericel, has received travel fees from Arthrex, has served on the advisory board of ViewFi Health and as chair of the membership committee of AOSSM, and owns stock options in ViewFi Health. R. Wright receives royalties from Responsive Arthroscopy and has stock or stock options in Hyalex and Just Cause Apparel. M.J.M. is a consultant for Arthrex Inc, Ostesys, and Breg Inc and has received honoraria from Elite Orthopedics. AOSSM checks author disclosures against the Open Payments Database (OPD). AOSSM has not conducted an independent investigation on the OPD and disclaims any liability or responsibility relating thereto.

Ethical approval for this study was obtained from Washington University in St. Louis (No. 202308119).