Abstract

Background:

Videos uploaded to YouTube do not go through a review process, and therefore, videos related to medial meniscal ramp lesions may have little educational value.

Purpose:

To assess the educational quality of YouTube videos regarding ramp lesions of the meniscus.

Study Design:

Cross-sectional study.

Methods:

A standard search was performed on the YouTube website using the following terms: “ramp lesion,” “posterior meniscal detachment,” “ramp,” “meniscocapsular,” and “meniscotibial detachment,” and the 100 most-viewed videos for each term were screened for inclusion. The video duration, publication data, and number of likes and views were retrieved, and the videos were categorized based on video source (health professionals, orthopaedic company, private user), the type of information (anatomy, biomechanics, clinical examination, overview, radiologic, surgical technique), and video content (education, patient support, patient experience/testimony). The content of the videos was evaluated using the DISCERN instrument (score range, 16-80), the Journal of the American Medical Association (JAMA) benchmark criteria (score range, 0-4), and the Global Quality Score (GQS; score range, 1-5).

Results:

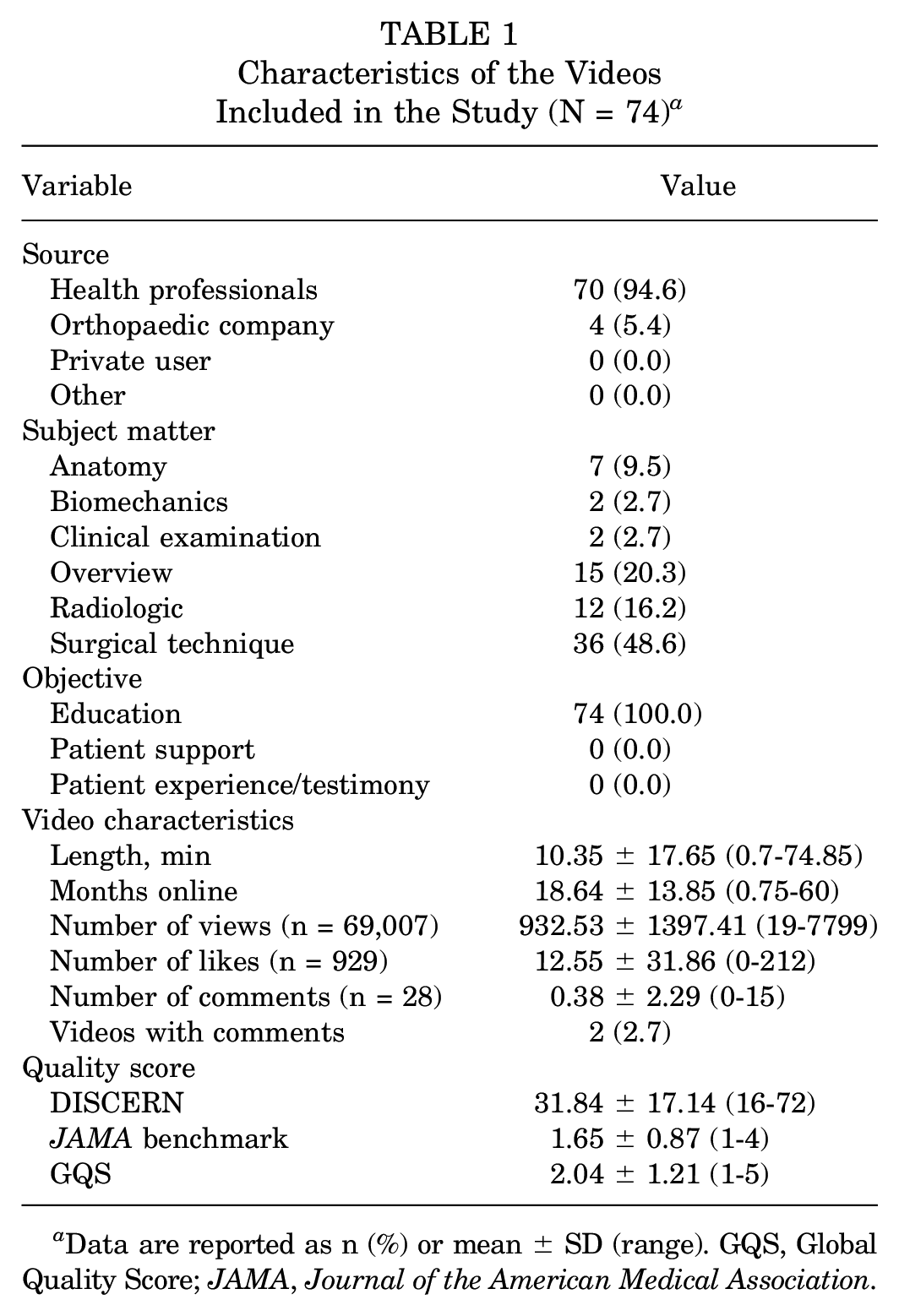

A total of 74 videos were included. Of these videos, 70 (94.6%) were published by health professionals, while the remaining 4 (5.4%) were published by orthopaedic companies. The largest proportion of videos concerned surgical technique (n = 36; 48.6%), and all had an educational aim (n = 74; 100%). The mean length of the videos was 10.35 ± 17.65 minutes, and the mean online period was 18.64 ± 13.85 months. The mean DISCERN score, JAMA benchmark score, and GQS were 31.84 ± 17.14 (range, 16-72), 1.65 ± 0.87 (range, 1-4), and 2.04 ± 1.21 (range, 1-5), respectively. Videos providing an overview of ramp lesions had the highest DISCERN and JAMA benchmark scores, while biomechanics videos had the highest GQS. Surgical technique videos were the lowest-scoring category on all 3 measures.

Conclusion:

The educational content of YouTube regarding medial meniscal ramp lesions showed low quality and validity based on DISCERN score, JAMA benchmark score, and GQS.

A specific meniscal tear affecting the peripheral attachment of the posterior horn of the medial meniscus, later defined as a ramp lesion, was described in 1988 in association with an anterior cruciate ligament (ACL) rupture. 14

Traditionally, the ramp lesion was defined as a longitudinal lesion <2.5 cm in length of the peripheral attachment of the posterior horn of the medial meniscus at the level of the meniscocapsular junction. 18 However, more recent studies have shown that ramp lesions are related to a tear in the attachment of the meniscotibial ligament at the level of the posterior horn of the medial meniscus.6,24,26

Ramp injuries affect knee function by increasing anterior tibial translation and dynamic rotational laxity, leading to excessive rotational mobility of the knee.2,8,12

In recent years, patients have increasingly searched online for orthopaedic information. 3 One of the main sources used is YouTube (https://www.youtube.com), which allows patients to watch videos of different orthopaedic procedures. It has also been reported that information acquired online can influence patients’ treatment decisions. 20 Several studies have evaluated the reliability and quality of the information provided by YouTube videos regarding ACL injury and reconstruction, patellofemoral instability, and rotator cuff pathology, finding the videos to be of low quality and to provide poor information.4,15,28

The purpose of this study was to evaluate the reliability and quality of the educational content of YouTube videos concerning meniscal ramp injuries. The hypothesis was that YouTube videos regarding ramp lesions would be of low quality and provide poor information.

Methods

The current study was determined to be exempt from institutional review board approval. The terms “ramp lesion,” “posterior meniscal detachment,” “ramp,” “meniscocapsular,” and “meniscotibial detachment” were used as keywords for separate searches on YouTube on January 9, 2023, and the 100 most-viewed videos were obtained for each term. Off-topic, non-English, duplicate, and difficult-to-understand videos were excluded from the study. At the end of the process, 74 videos were included for qualitative and quantitative analysis. A flowchart of the video selection process is presented in Figure 1.

Figure 1. Flowchart of the videos included in this study.
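The selection steps described above (one search per keyword, the 100 most-viewed results per term, then removal of duplicates and of off-topic, non-English, or difficult-to-understand videos) can be sketched as a small filtering routine. This is an illustrative sketch only: the study does not describe any tooling, the `Video` record and `select_videos` function are hypothetical, and the on-topic and comprehensibility flags stand in for the reviewers' manual judgments.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Video:
    """Hypothetical record for one search result; all fields are assumptions."""
    video_id: str
    views: int
    language: str          # e.g. "en"
    on_topic: bool         # reviewer judgment, recorded manually
    understandable: bool   # reviewer judgment, recorded manually


def select_videos(results_by_term: dict[str, list[Video]], top_n: int = 100) -> list[Video]:
    """Keep the top_n most-viewed results per term, skip videos already seen
    under an earlier term, then apply the exclusion criteria."""
    seen: set[str] = set()
    included: list[Video] = []
    for videos in results_by_term.values():
        most_viewed = sorted(videos, key=lambda v: v.views, reverse=True)[:top_n]
        for video in most_viewed:
            if video.video_id in seen:
                continue  # duplicate: already screened under another term
            seen.add(video.video_id)
            if video.language == "en" and video.on_topic and video.understandable:
                included.append(video)
    return included


if __name__ == "__main__":
    # Invented example data, not the study's search results.
    a = Video("a", 500, "en", True, True)
    b = Video("b", 300, "en", False, True)   # off-topic
    c = Video("c", 200, "fr", True, True)    # non-English
    d = Video("d", 100, "en", True, True)
    results = {"ramp lesion": [a, b], "ramp": [a, c, d]}
    print([v.video_id for v in select_videos(results)])  # ['a', 'd']
```

Deduplicating before applying the exclusion flags mirrors the order in which a reviewer would naturally screen a merged list; in practice, the relevance and comprehensibility decisions remain human judgments that the code only records.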

The duration of the videos, publication data, and the number of likes and views were retrieved. Furthermore, videos were categorized based on video source (health professionals, orthopaedic company, private user), the type of information (anatomy, biomechanics, clinical examination, overview, radiologic, surgical technique), and video content (education, patient support, patient experience/testimony).

The quality and reliability of the video content were assessed using the DISCERN instrument, Journal of the American Medical Association (JAMA) benchmark criteria, and Global Quality Score (GQS) by 2 experienced knee surgeons (R.D. and A.C.).7,10,22,23,27,29,30 Discrepancies regarding eligibility were resolved in consultation with the senior author (B.S.-C.).

Assessment Tools of Video Reliability, Validity, and Quality

DISCERN Instrument

The DISCERN Instrument (http://www.discern.org.uk) is an assessment scale developed for patients and providers to assess the reliability and quality of information. 7 The tool, which consists of 16 items, is divided into 3 parts: items 1 through 8 measure the reliability of the information, items 9 through 15 measure the quality of the information, and item 16 is an overall quality rating. DISCERN uses a 5-point Likert scale. For evaluating the first 15 items, responses range from “no” (1 point) to “yes” (5 points). For item 16, responses range from “serious or extensive shortcomings” (1 point) to “potentially important but not serious shortcomings” (3 points) and “minimal shortcomings” (5 points). The DISCERN scoring is usually provided as the sum of all 16 items (range, 16-80), broken down as very poor (16-26), poor (27-38), fair (39-50), good (51-62), and excellent (63-80) (Appendix Table A1). 17
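The scoring rules above can be expressed as a short helper. This is a minimal sketch, assuming one rater's 16 item ratings are available as a list of integers; the function names are hypothetical, and the study does not state how the 2 raters' scores were combined.

```python
# Sketch of DISCERN scoring as described in the text: 16 items rated 1-5;
# items 1-8 = reliability, items 9-15 = quality of information, item 16 = overall.

DISCERN_BANDS = [
    (16, 26, "very poor"),
    (27, 38, "poor"),
    (39, 50, "fair"),
    (51, 62, "good"),
    (63, 80, "excellent"),
]


def discern_scores(ratings: list[int]) -> dict[str, int]:
    """Validate 16 item ratings and return the section subtotals and the total."""
    if len(ratings) != 16:
        raise ValueError(f"expected 16 item ratings, got {len(ratings)}")
    if any(not 1 <= r <= 5 for r in ratings):
        raise ValueError("each DISCERN item must be rated from 1 to 5")
    return {
        "reliability": sum(ratings[:8]),   # items 1-8
        "quality": sum(ratings[8:15]),     # items 9-15
        "overall": ratings[15],            # item 16
        "total": sum(ratings),             # range 16-80
    }


def discern_band(total: int) -> str:
    """Map a DISCERN total onto the very poor ... excellent bands."""
    for low, high, label in DISCERN_BANDS:
        if low <= total <= high:
            return label
    raise ValueError(f"DISCERN total {total} is outside 16-80")


if __name__ == "__main__":
    # Invented ratings for one video, not study data.
    scores = discern_scores([3] * 8 + [2] * 7 + [1])
    print(scores["total"], discern_band(scores["total"]))  # 39 fair
```

For reference, the study's mean DISCERN score of 31.84 lies within the 27-38 "poor" band.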

JAMA Benchmark Criteria

The JAMA benchmark criteria instrument is one of the leading tools used to evaluate medical information obtained from online sources. 29 It includes 4 criteria (authorship, attribution, disclosure, and currency), each worth 1 point, for a maximum total score of 4 points. In the JAMA evaluation, a score of 0 to 1 point represents insufficient information, 2 to 3 points represent partially sufficient information, and 4 points represent completely sufficient information (Appendix Table A2). 30
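The JAMA benchmark score is a simple count of criteria met, followed by the interpretation cutoffs given above. The sketch below assumes each criterion has already been judged as met or not met for a video; the `JamaBenchmark` class and `jama_interpretation` function are hypothetical names used for illustration.

```python
from dataclasses import dataclass


@dataclass
class JamaBenchmark:
    """One video's JAMA benchmark assessment; each criterion met earns 1 point."""
    authorship: bool
    attribution: bool
    disclosure: bool
    currency: bool

    def score(self) -> int:
        # bool is a subclass of int in Python, so each True counts as 1.
        return sum([self.authorship, self.attribution, self.disclosure, self.currency])


def jama_interpretation(score: int) -> str:
    """Interpret a 0-4 JAMA score using the cutoffs given in the text."""
    if score in (0, 1):
        return "insufficient"
    if score in (2, 3):
        return "partially sufficient"
    if score == 4:
        return "completely sufficient"
    raise ValueError(f"JAMA score {score} is outside 0-4")


if __name__ == "__main__":
    video = JamaBenchmark(authorship=True, attribution=False, disclosure=False, currency=True)
    print(video.score(), jama_interpretation(video.score()))  # 2 partially sufficient
```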

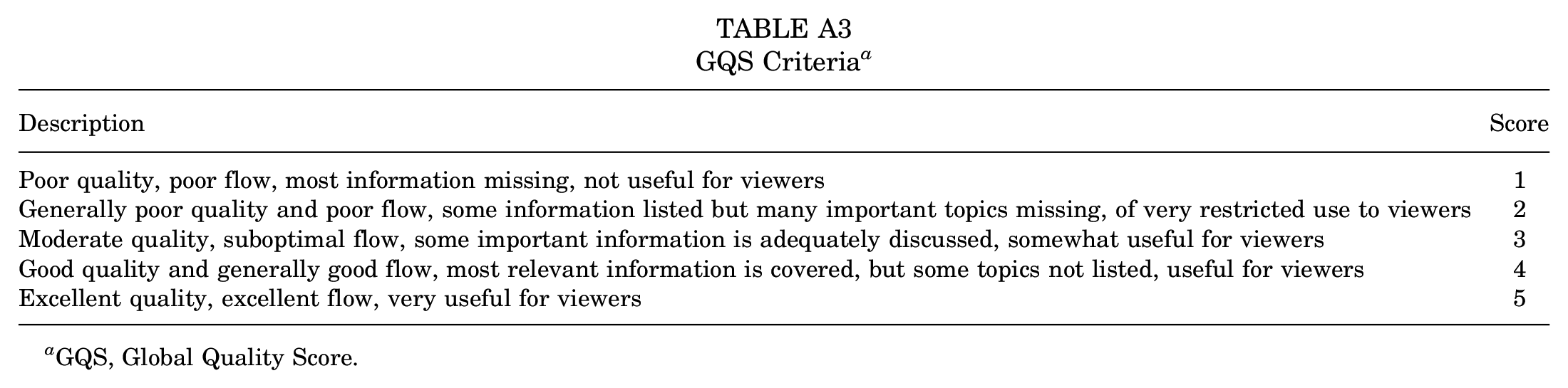

Global Quality Score

The GQS is a scoring system that can be used to assess a video in terms of its instructive aspects for viewers.10,22 It allows us to evaluate the quality, flow, and ease of use of the information presented in online videos. In the evaluation of GQS, a score of 1 indicates that the video has the poorest quality and is not at all useful for viewers, while a score of 5 indicates that the video has excellent quality and is very useful for viewers (Appendix Table A3).10,22

Statistical Analysis

Descriptive statistics were used to describe video sources, video content, video information, and video characteristics as well as the video reliability, validity, and quality scores (ie, DISCERN, JAMA benchmark, and GQS). Categorical variables are shown as absolute frequencies with percentages. The normality of continuous variables was tested, and variables are presented as the means and standard deviations. Correlations between quantitative variables were estimated and tested using the Spearman correlation test. One-way analysis of variance was used to assess whether the video reliability, validity, and quality scores differed by video information. To assess whether scores varied between types of information, pairwise comparisons were performed using Bonferroni adjustment for multiple comparisons. A 2-tailed P value <.05 was considered to indicate statistical significance. All statistical tests were performed with Stata 14 (StataCorp) and R (R Foundation for Statistical Computing, https://www.R-project.org/).
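The analyses were run in Stata 14 and R. Purely as an illustration of what those procedures compute, the sketch below implements the three calculations named above in plain Python: Spearman's rho (the Pearson correlation of average ranks), the one-way analysis of variance F statistic, and the Bonferroni adjustment of pairwise P values. It computes test statistics only; P values would come from the t and F distributions in a statistics package. All function names are hypothetical.

```python
import math


def average_ranks(values: list[float]) -> list[float]:
    """Rank values from 1..n, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # 0-based positions i..j -> 1-based ranks
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def spearman_rho(x: list[float], y: list[float]) -> float:
    """Spearman correlation = Pearson correlation computed on the ranks."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    sxy = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sxx = sum((a - mx) ** 2 for a in rx)
    syy = sum((b - my) ** 2 for b in ry)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant variable")
    return sxy / math.sqrt(sxx * syy)


def one_way_anova_f(groups: list[list[float]]) -> tuple[float, int, int]:
    """Return (F, df_between, df_within) for k independent groups."""
    values = [v for g in groups for v in g]
    n, k = len(values), len(groups)
    grand_mean = sum(values) / n
    means = [sum(g) / len(g) for g in groups]
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    ss_within = sum((v - m) ** 2 for g, m in zip(groups, means) for v in g)
    df_between, df_within = k - 1, n - k
    return (ss_between / df_between) / (ss_within / df_within), df_between, df_within


def bonferroni(p_values: list[float]) -> list[float]:
    """Multiply each P value by the number of comparisons, capping at 1."""
    m = len(p_values)
    return [min(1.0, p * m) for p in p_values]
```

If every pair of the 6 information categories is compared, there are `math.comb(6, 2)` = 15 comparisons, so each unadjusted P value would be multiplied by 15 before being compared with the .05 threshold. Average ranks are used so that tied observations, common with ordinal scores such as the JAMA benchmark and GQS, are handled consistently.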

Results

A total of 74 videos were included in the analysis. Of these videos, 70 (94.6%) were published by health professionals, while the remaining 4 (5.4%) were published by orthopaedic companies. The largest proportion of videos concerned surgical technique (n = 36; 48.6%), and all the videos (n = 74; 100%) had an educational aim. The mean length of the videos was 10.35 ± 17.65 minutes, and the mean online period was 18.64 ± 13.85 months. The mean DISCERN score, JAMA benchmark score, and GQS were 31.84 ± 17.14, 1.65 ± 0.87, and 2.04 ± 1.21, respectively. Detailed results are reported in Table 1.

Table 1. Characteristics of the Videos Included in the Study (N = 74) a

Data are reported as n (%) or mean ± SD (range). GQS, Global Quality Score; JAMA, Journal of the American Medical Association.

Correlations Between Video Quality and Characteristics

All the quality scores were correlated directly with video length (P < .05), and the JAMA score and GQS were also correlated positively with the number of likes and comments (P < .05). Detailed results are reported in Table 2.

Table 2. Correlations Among Video Quality Scores and Characteristics a

Boldface P values indicate statistical significance (P < .05). GQS, Global Quality Score; JAMA, Journal of the American Medical Association.

Correlations Among Variables

Positive correlations were found between number of likes and comments and video length; between period online and number of views; and between number of views and likes, comments, and duration (P < .05). Details are reported in Table 3.

Table 3. Correlations Among Variables of Videos Included in the Study (N = 74) a

Boldface P values indicate statistical significance (P < .05).

Spearman correlation coefficient.

Score by Video Content

Videos providing an overview of ramp lesions had the highest DISCERN and JAMA benchmark scores, while biomechanics videos had the highest GQS. Surgical technique videos were the lowest-scoring category on all 3 measures. Details are reported in Table 4.

Table 4. Video Quality Based on Content a

Data are reported as mean ± SD. Boldface P values indicate statistically significant difference between groups compared (P < .05). GQS, Global Quality Score; JAMA, Journal of the American Medical Association.

Bonferroni adjustment was used for multiple comparisons.

For the between-group effect of video content.

Discussion

The main finding of this study was that YouTube videos regarding ramp lesions were of low quality and validity based on the DISCERN, JAMA benchmark, and GQS criteria. These findings are in accord with other studies that have analyzed the quality and popularity of YouTube videos related to orthopaedic conditions in different subspecialties.1,4,10,11,13,16,19,21 In all the cited studies, content was rated low with regard to disease information, reliability, accuracy, and specific educational content, even though, surprisingly, most of the videos were uploaded to the platform by physicians. Likewise, in the current study, 70 of 74 videos were uploaded by health professionals. YouTube has no editorial review process for uploaded videos, and any user can upload any video of their choice; it is therefore plausible that this lack of restrictions allows inaccurate content to be published, even by health professionals.

Patients are increasingly going online to research potential diagnoses before visiting orthopaedic clinics, and most physicians encounter patients who arrive at the consultation having already researched their condition online.19,20

This has a significant impact on the doctor-patient interaction: 38% of doctors think that patients who arrive prepared hinder the effectiveness of the appointment. Because patients consult resources such as YouTube and these may influence their decision-making, physicians should be aware of the quality of the content on such platforms.19,20

The lack of attention previously given to ramp lesions was probably caused by an underestimation of their incidence due to a high rate of missed diagnoses, a lack of understanding of their biomechanical effects, and an intuitive belief that these lesions might heal spontaneously.25,26 The recent interest in these injuries reflects increasing recognition of their importance and an emerging understanding of their association with posteromedial knee instability. 24

Furthermore, a recent study reported differences in psychological outcomes between patients undergoing ACL reconstruction with all-inside meniscal ramp repair and those undergoing isolated ACL reconstruction, underscoring the importance of education for patients being treated for a ramp lesion. 9

The current study suggests that, although ramp lesions are still a niche topic, they are of interest to a relatively large audience: the 74 videos retrieved were viewed a total of 69,007 times. On average, each video was viewed 932.5 times over a mean online period of 18.6 months. Despite the popularity of ramp injury videos, the average DISCERN score was 31.84, the average JAMA benchmark score was 1.65, and the average GQS was 2.04. These results imply that many viewers are exposed to videos that provide unreliable and low-quality information.
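The per-video average follows directly from the totals reported in the preceding paragraph:

$$
\bar{v} = \frac{69{,}007 \text{ views}}{74 \text{ videos}} \approx 932.5 \text{ views per video}
$$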

In the present study, the videos were classified into 6 categories: anatomy, biomechanics, clinical examination, overview, radiologic, and surgical technique. The distribution of DISCERN scores, which assess the reliability and quality of information, was heterogeneous among categories. The category with the best DISCERN score was “overview” (mean, 58.53), which scored significantly higher than all other categories except “anatomy” and “biomechanics.” Similarly, the JAMA benchmark scores, which evaluate medical information obtained from online sources, were best for the “overview” category (mean, 2.73), significantly better than those of all other categories except “biomechanics” and “clinical examination.” According to the GQS, a tool assessing the instructive aspects of videos for viewers, the “biomechanics” category was the most valuable, with a mean of 3.5 points. Videos on biomechanics also received relatively high DISCERN and JAMA benchmark scores compared with the other categories, although these did not differ significantly from the others. However, of the 74 videos, only 15 (20.3%) belonged to the “overview” category, and only 2 belonged to the “biomechanics” category. Surprisingly, the surgical technique videos, which represented almost half of the sample (48.6%), had the lowest DISCERN score, JAMA benchmark score, and GQS.

Correlation analysis showed notable associations between the outcomes: the DISCERN score, JAMA benchmark score, and GQS were all positively correlated with video length, and both the JAMA benchmark score and GQS were positively correlated with the number of likes and comments. In addition, video length was positively correlated with interactions such as likes and views. One interpretation is that longer videos allow content creators to explain the condition in greater detail, which may make them more reliable, valid, and of higher quality and, in turn, more appreciated by the general audience, composed of both physicians and nonphysicians. Similar to the current study findings, Celik et al 5 and Yüce et al 28 also reported a positive correlation between video quality and video duration. This finding appears to run counter to the prevalence of medical content in YouTube’s “Shorts” format. These videos are less than a minute long, vertically oriented, and often include background music and on-screen captions; they are generally presented in a feed through which viewers can scroll endlessly. Similar short-form videos are found on other platforms, such as Instagram “reels” and TikTok.

Limitations

This study has several limitations. First, our keyword searches on YouTube may not have retrieved all the videos on the platform that address the topic, because ramp lesions are still the subject of study and have been referred to by various names over time. However, we took care to use different keywords, and we believe these likely covered all the names used to describe this entity. YouTube’s search results may also vary with factors such as geographic location or user characteristics. Some surgical videos may have been omitted because the platform requires viewers to be registered users of legal age to watch them. Non-English videos were excluded from the analysis, further reducing the generalizability of our results. Finally, there are no validated tools to assess the quality of health information in videos, and the reliability, validity, and quality assessment tools used in this study (DISCERN, JAMA benchmark, and GQS) have not been validated for this purpose. In particular, the JAMA benchmark criteria were first published in 1997 and have the attendant limitations of an older tool 22; therefore, 2 other, more recent scales (DISCERN and GQS) were also used.10,11 However, these tools are widely used in studies that evaluate online resources.

Conclusion

The educational content of YouTube regarding medial meniscal ramp lesions showed low quality and validity based on the DISCERN, JAMA benchmark, and GQS criteria.

Footnotes

Appendix

Table A3. GQS Criteria a

| Description | Score |
|---|---|
| Poor quality, poor flow, most information missing, not useful for viewers | 1 |
| Generally poor quality and poor flow, some information listed but many important topics missing, of very restricted use to viewers | 2 |
| Moderate quality, suboptimal flow, some important information is adequately discussed, somewhat useful for viewers | 3 |
| Good quality and generally good flow, most relevant information is covered, but some topics not listed, useful for viewers | 4 |
| Excellent quality, excellent flow, very useful for viewers | 5 |

GQS, Global Quality Score.

Acknowledgements

Raw data are available upon request to the corresponding author.

Final revision submitted June 30, 2023; accepted July 31, 2023.

One or more of the authors has declared the following potential conflict of interest or source of funding: Research support was received from the Italian Ministry of Health (Ricerca Corrente). B.S.-C. has received consulting fees from Arthrex and royalties from Arthrex. AOSSM checks author disclosures against the Open Payments Database (OPD). AOSSM has not conducted an independent investigation on the OPD and disclaims any liability or responsibility relating thereto.

Ethical approval for this study was waived by IRCCS Ospedale Galeazzi-Sant’Ambrogio.