Abstract

Purpose:

Despite several studies, no classification for proximal humeral fractures (PHFs) is free from variability in interpretation, and the reliability of all of them has been questioned. In the present study, we investigated the ‘absolute diagnostic reliability’ of the most commonly used PHF classifications on a single anterior-posterior shoulder X-ray image.

Methods:

Six orthopaedic surgeons, with varying levels of experience in shoulder pathology, evaluated radiographs from 30 proximal humeral fractures, according to the ‘absolute reliability’ criteria. Each of the observers rated each fracture according to Neer, Müller/AO and Codman-Hertel’s classification systems.

Results:

The overall inter-observer agreement (κ) was 0.297 (95% CI 0.280 to 0.314) for the Neer classification system, 0.206 (95% CI 0.193 to 0.218) for the Müller/AO classification system, and 0.315 (95% CI 0.334 to 0.368) for the Codman-Hertel classification system. We found a loss of agreement in Neer’s classification as the study progressed, low agreement in the AO classification, and the best degree of agreement, with more stable values across evaluations, for the Codman-Hertel classification, which reached moderate agreement among the six evaluators in the second evaluation.

Conclusion:

The Neer, AO, and Codman-Hertel classification systems for PHF are difficult for orthopaedic surgeons of varying levels of experience to interpret on a single radiographic projection, and substantial agreement is therefore not obtained.

Introduction

Proximal humeral fractures (PHFs) comprise 6% of all fractures in adults, with an overall incidence of 73 fractures per 100,000 inhabitants. Their incidence has increased significantly over recent decades (PHFs have tripled over the previous 30 years) and is expected to continue rising as the population ages. 1–7 Although randomized trials, 8 observational studies, 9 and systematic reviews 10–12 of PHFs have been published, it remains difficult to interpret their results, to perform prognostic studies, and to obtain consensus on treatment recommendations when concise definitions and a standard ‘fracture language’ are lacking. 9,13,14 Published results for treatment and evidence-based recommendations are inconclusive. 10–12 The most widely used PHF classifications are the system created by Charles Neer II in 1970, 15 updated in 2002; 16 the AO/OTA classification, based on the Müller classification from 1990 17 and updated in 2007; 18 and the Codman-Hertel binary fracture description system, 19 updated after the findings of low reliability reported in 1993 by Siebenrock and Gerber. 20

Neer’s classification system defines PHFs based on the number of fracture fragments (parts) and their displacement, which is defined as a separation of more than 1 cm or an angulation greater than or equal to 45 degrees. 16 The Müller/AO classification system was created to standardize fracture description using defined terms. Each bone segment is classified into three types (A, B, C), each of which is subdivided into three groups, and each group into three subgroups, giving 27 classification subgroups. Type A fractures are extra-articular and unifocal, type B fractures are partially intra-articular and bifocal, and type C fractures have an intra-articular fracture line. The Müller/AO classification system is more complicated than Neer’s classification. 21 The Codman-Lego system was developed by Hertel et al. in 2004 19 and graphically represents the four parts of the proximal humerus (head, greater and lesser tuberosities, and diaphysis). The absence of a union between any of the four parts represents a fracture line, making 12 different patterns possible, labelled with the numbers from 1 to 12. Thinking in terms of fracture planes rather than fracture fragments represented a paradigm shift, based on the studies of humeral head vascularization by Hertel et al., 19 and this system has shown the highest agreement rates. However, it does not differentiate between varus and valgus displacement, which is crucial for the reduction and fixation of this type of fracture. 19
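As a reading aid, the rules described above can be written down as a minimal Python sketch. It encodes only what the text states: the Neer displacement threshold as worded here, the three-level Müller/AO hierarchy, and the 12 numbered Codman-Hertel patterns. The function names and the `A1.1`-style labels are hypothetical conventions chosen for illustration, not an official coding scheme.

```python
from itertools import product
from typing import List


def neer_displaced(separation_cm: float, angulation_deg: float) -> bool:
    """Displacement as worded in the text: more than 1 cm of separation,
    or an angulation of 45 degrees or more."""
    return separation_cm > 1.0 or angulation_deg >= 45.0


def ao_subgroups() -> List[str]:
    """Enumerate type (A, B, C) x group (1-3) x subgroup (1-3).
    The 'A1.1' label format is an illustrative assumption."""
    return [f"{t}{g}.{s}" for t, g, s in product("ABC", (1, 2, 3), (1, 2, 3))]


# Codman-Hertel patterns are simply labelled 1 to 12 in the text.
HERTEL_PATTERNS = list(range(1, 13))

print(neer_displaced(0.5, 30.0))   # neither threshold reached
print(len(ao_subgroups()))         # 3 x 3 x 3 subgroups
print(len(HERTEL_PATTERNS))
```

The sketch makes the difference in granularity explicit: an observer chooses among 27 labels for Müller/AO but only 12 for Codman-Hertel.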

Over the past decades, the reliability of the different classification systems has been questioned. Multiple studies with different imaging modalities (X-ray, computed tomography (CT) scan, and 3D reconstructions) have reported low agreement among observers when attempting to classify PHFs. 21–27 To the best of our knowledge, no study of the ‘absolute reliability’ of the PHF classifications with X-ray images has been published. ‘Absolute reliability’ is considered to be the analysis of a minimum of 30 cases by at least six blinded observers, with a minimum of three to five separate evaluations by each observer, spaced at least 2 weeks apart. 28,29 Our study aimed to evaluate the absolute diagnostic reliability of the most commonly used classifications for PHFs (Neer, AO, and Hertel) on a single anterior-posterior (AP) shoulder X-ray image among orthopaedic surgeons with different levels of experience. We hypothesized that there is significant variability in the reliability of the classifications described, with better results among more experienced evaluators.

Materials and methods

We prospectively analysed standard AP projection X-ray studies of patients between 50 and 80 years of age with PHF archived on the Picture Archiving and Communication System (PACS) of a secondary-level hospital. It was calculated (significance level of 5% and a power of 80%) that a sample size of 30 cases would be sufficient to detect a minimum variability of 10% between the groups of evaluators. X-rays of patients treated for PHF in a consecutive series over 1 year were evaluated. Some patients were treated nonoperatively and others surgically. Out of 45 cases, the 15 images of worst quality were discarded. We also excluded pathological fractures and previous fractures in the same location. Each of the 30 selected radiographs was assigned an ID number, and any identifying marks were removed (Figure 1). The images were randomly arranged for evaluation. No other projection or CT scan images were provided to the evaluators, because the study’s primary objective was to assess the absolute reliability of the three classifications studied on a single anterior-posterior radiograph. The study was approved by the Institutional Review Board and the Ethical Committee.

Figure 1. Example of the X-ray images provided to observers. An ID number was assigned to each X-ray, and any identifying marks were removed. As an example, this fracture was classified by most evaluators as IVA/IVB Neer, B1 AO, and 3/7 Hertel.

We designed an ‘absolute’ reliability study, according to Hopkins’ criteria (a minimum of 30 cases assessed by a minimum of six assessors, with a minimum of three assessments and with a minimum interval between each assessment of 2 weeks). 29 Three evaluations of the 30 AP shoulder X-rays were performed, each separated by 1 month, with each of the six evaluators rating them according to the three systems (Neer, AO, and Codman-Hertel), independently and blindly. In each re-evaluation, the order of the X-rays was changed to preserve blinding.
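The design described above can be sketched in a few lines of Python. This is an illustration only, not the procedure actually used: the seed, the function names, and the approximation of 1 month as 4 weeks are assumptions introduced here. It checks the stated minimums, reshuffles the case order for each round, and counts the ratings the design produces.

```python
import random

N_CASES, N_OBSERVERS, N_ROUNDS = 30, 6, 3
SYSTEMS = ("Neer", "AO", "Codman-Hertel")
INTERVAL_WEEKS = 4  # assumption: "1 month" approximated as 4 weeks


def meets_stated_minimums(n_cases, n_observers, n_rounds, interval_weeks):
    """Minimums as quoted in the text: 30 cases, 6 assessors,
    3 assessments, 2 weeks between assessments."""
    return (n_cases >= 30 and n_observers >= 6
            and n_rounds >= 3 and interval_weeks >= 2)


def presentation_orders(n_cases, n_rounds, seed=0):
    """Return one independently shuffled list of case IDs per round.
    The fixed seed is only there to make the sketch reproducible."""
    rng = random.Random(seed)
    case_ids = list(range(1, n_cases + 1))
    orders = []
    for _ in range(n_rounds):
        order = case_ids[:]      # copy so each round starts from the full set
        rng.shuffle(order)
        orders.append(order)
    return orders


orders = presentation_orders(N_CASES, N_ROUNDS)
total_ratings = N_CASES * N_OBSERVERS * N_ROUNDS * len(SYSTEMS)

print(meets_stated_minimums(N_CASES, N_OBSERVERS, N_ROUNDS, INTERVAL_WEEKS))
print(total_ratings)  # 30 * 6 * 3 * 3 = 1620
```

The product of cases, observers, rounds, and classification systems gives 1620 ratings, the total number of observations reported in the Results.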

All six evaluators were orthopaedic surgeons with varying levels of training and experience in shoulder pathology. The first group consisted of two shoulder surgery specialists with more than 10 years of experience (observers 1 and 2). The second group was made up of two orthopaedic consultants with over 10 years of experience who were not exclusively dedicated to shoulder pathology (observers 3 and 4). The third group was made up of two orthopaedic surgery resident physicians (postgraduate year-2) (observers 5 and 6). The selection of a minimum of six evaluators was a methodological requirement to analyse ‘absolute reliability’. The experience profile followed the conventional criterion whereby a senior surgeon is one with 10 or more years of experience. In a homogenization session before the start of the study, the criteria for the Neer, AO, and Codman-Hertel classifications and the differences between them were thoroughly reviewed with all six evaluators. The necessary documentation was provided, and the evaluators could practise with example cases different from those in the study.

Statistical analysis was performed using the Statistical Package for the Social Sciences (SPSS), version 25 for Windows (SPSS, Inc., Chicago, Illinois, USA). We used kappa statistics to determine interrater reliability. Given the limitations of Cohen’s kappa (agreement measurement limited to two observers), we also calculated Fleiss’ kappa (κ) to determine the level of agreement among the observers for variables measured on a categorical scale. 30 We report the 95% confidence interval for Fleiss’ kappa. We assessed the level of agreement among observers according to the criteria of Landis and Koch (<0 indicates no agreement, 0.00 to 0.20 indicates slight agreement, 0.21 to 0.40 indicates fair agreement, 0.41 to 0.60 indicates moderate agreement, 0.61 to 0.80 indicates substantial agreement, and 0.81 to 1.0 indicates almost perfect or perfect agreement). 31
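For readers unfamiliar with the two statistics, the following is a minimal pure-Python sketch of the standard textbook formulas for Cohen’s kappa (two sets of ratings) and Fleiss’ kappa (several raters per case). It is not the SPSS procedure used in the study, it does not compute confidence intervals, and the toy ratings are invented purely for illustration.

```python
from collections import Counter


def cohen_kappa(ratings_a, ratings_b):
    """Cohen's kappa for two rating sequences over the same cases."""
    n = len(ratings_a)
    if n == 0 or n != len(ratings_b):
        raise ValueError("need two non-empty sequences of equal length")
    # Observed agreement: share of cases with identical labels.
    p_o = sum(a == b for a, b in zip(ratings_a, ratings_b)) / n
    # Chance agreement from each rater's marginal label frequencies.
    freq_a, freq_b = Counter(ratings_a), Counter(ratings_b)
    p_e = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if p_e == 1.0:
        raise ValueError("kappa is undefined when chance agreement is 1")
    return (p_o - p_e) / (1.0 - p_e)


def fleiss_kappa(ratings_per_case):
    """Fleiss' kappa; each inner list holds one label per rater."""
    n_cases = len(ratings_per_case)
    n_raters = len(ratings_per_case[0])
    if n_raters < 2:
        raise ValueError("need at least two raters per case")
    totals = Counter()
    p_bar = 0.0
    for labels in ratings_per_case:
        if len(labels) != n_raters:
            raise ValueError("every case needs the same number of raters")
        counts = Counter(labels)
        totals.update(counts)
        # Proportion of agreeing rater pairs for this case.
        agreeing = sum(c * c for c in counts.values()) - n_raters
        p_bar += agreeing / (n_raters * (n_raters - 1))
    p_bar /= n_cases
    # Chance agreement from the overall share of each category.
    p_e = sum((t / (n_cases * n_raters)) ** 2 for t in totals.values())
    if p_e == 1.0:
        raise ValueError("kappa is undefined when chance agreement is 1")
    return (p_bar - p_e) / (1.0 - p_e)


# Toy data (hypothetical): one observer on two occasions, then three raters.
first = ["II", "III", "II", "IV"]
second = ["II", "III", "III", "IV"]
print(f"{cohen_kappa(first, second):.3f}")   # 0.636

panel = [["A", "A", "A"], ["A", "B", "B"], ["B", "B", "B"]]
print(f"{fleiss_kappa(panel):.3f}")          # 0.550
```

In the study’s terms, `cohen_kappa` corresponds to comparing one observer’s ratings between two evaluations, and `fleiss_kappa` to comparing all six observers within one evaluation.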

Results

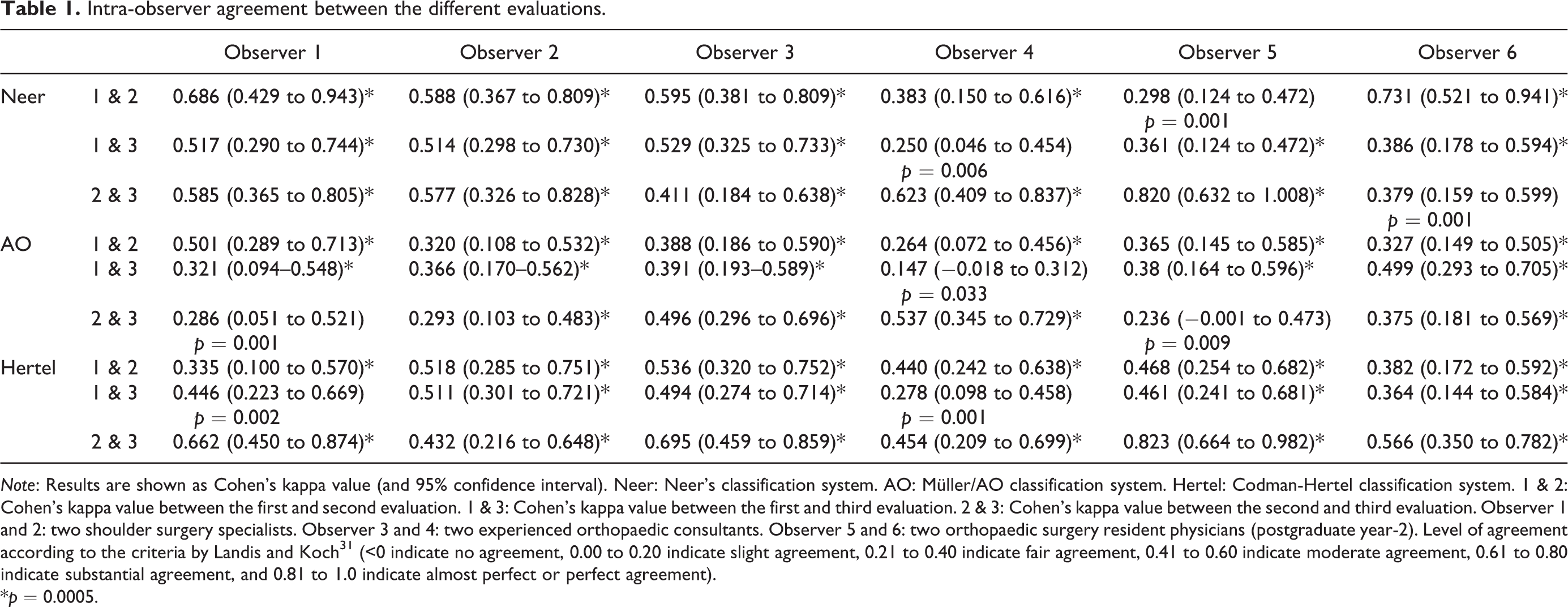

We recorded a total of 1620 observations among the six evaluators. The intra-observer agreement between the different evaluations is shown in Table 1.

Table 1. Intra-observer agreement between the different evaluations.

Note: Results are shown as Cohen’s kappa value (and 95% confidence interval). Neer: Neer’s classification system. AO: Müller/AO classification system. Hertel: Codman-Hertel classification system. 1 & 2: Cohen’s kappa value between the first and second evaluation. 1 & 3: Cohen’s kappa value between the first and third evaluation. 2 & 3: Cohen’s kappa value between the second and third evaluation. Observer 1 and 2: two shoulder surgery specialists. Observer 3 and 4: two experienced orthopaedic consultants. Observer 5 and 6: two orthopaedic surgery resident physicians (postgraduate year-2). Level of agreement according to the criteria by Landis and Koch 31 (<0 indicate no agreement, 0.00 to 0.20 indicate slight agreement, 0.21 to 0.40 indicate fair agreement, 0.41 to 0.60 indicate moderate agreement, 0.61 to 0.80 indicate substantial agreement, and 0.81 to 1.0 indicate almost perfect or perfect agreement).

*p = 0.0005.

The intra-observer agreement was erratic. However, it tended to decrease as the time between evaluations increased for Neer’s classification and, conversely, tended to improve for the Codman-Hertel classification. The overall inter-observer agreement (Fleiss’ kappa) was 0.297 (95% CI 0.280 to 0.314) for the Neer classification system, 0.206 (95% CI 0.193 to 0.218) for the Müller/AO classification system, and 0.315 (95% CI 0.334 to 0.368) for the Codman-Hertel classification system. In addition, when analysing the agreement among the six evaluators in each of the three evaluations with the Fleiss’ kappa statistic, we found a loss of agreement in Neer’s classification as the study progressed (0.383 for the first evaluation, 0.282 for the second, and 0.163 for the third), low agreement in the AO classification (0.196, 0.2, and 0.179), and the best degree of agreement, with more stable values across evaluations, for the Codman-Hertel classification (0.239, 0.451, and 0.336), which reached moderate agreement 30 among the six evaluators in the second evaluation. The differences in the level of agreement between the more expert observers (observers 1 and 2) and the six evaluators as a whole in the three evaluations are shown in Table 2. Table 3 shows the inter-observer agreement subdivided by fracture type.
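To make the interpretation of these per-evaluation values explicit, the short Python sketch below maps each reported Fleiss’ kappa onto the Landis and Koch bands quoted in the Methods. The function name is hypothetical, and treating each upper bound as inclusive (so that 0.20 counts as slight) is an assumption, since the published bands leave small gaps between 0.20 and 0.21 and so on.

```python
def landis_koch(kappa):
    """Return the Landis and Koch agreement band for a kappa value.
    Upper bounds are treated as inclusive (an assumption)."""
    if kappa < 0:
        return "no agreement"
    if kappa <= 0.20:
        return "slight"
    if kappa <= 0.40:
        return "fair"
    if kappa <= 0.60:
        return "moderate"
    if kappa <= 0.80:
        return "substantial"
    return "almost perfect or perfect"


# Fleiss' kappa among the six evaluators in evaluations 1, 2, and 3,
# as reported in the text.
PER_EVALUATION = {
    "Neer": (0.383, 0.282, 0.163),
    "AO": (0.196, 0.2, 0.179),
    "Codman-Hertel": (0.239, 0.451, 0.336),
}

for system, kappas in PER_EVALUATION.items():
    print(system, [landis_koch(k) for k in kappas])
# Neer ['fair', 'fair', 'slight']
# AO ['slight', 'slight', 'slight']
# Codman-Hertel ['fair', 'moderate', 'fair']
```

Only the second Codman-Hertel evaluation reaches the moderate band; no system reaches substantial agreement in any evaluation.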

Table 2. Inter-observer agreement among the six evaluators and among the most expert observers in the three different evaluations.

Note: Results are shown as the Fleiss’ kappa statistic (κ) (and 95% confidence interval). Neer: Neer’s classification system. AO: Müller/AO classification system. Hertel: Codman-Hertel classification system. Level of agreement according to the criteria by Landis and Koch 31 (<0 indicate no agreement, 0.00 to 0.20 indicate slight agreement, 0.21 to 0.40 indicate fair agreement, 0.41 to 0.60 indicate moderate agreement, 0.61 to 0.80 indicate substantial agreement, and 0.81 to 1.0 indicate almost perfect or perfect agreement).

Table 3. Inter-observer agreement subdivided by fracture type.

Note: Level of agreement according to the criteria by Landis and Koch 31 (<0 indicate no agreement, 0.00 to 0.20 indicate slight agreement, 0.21 to 0.40 indicate fair agreement, 0.41 to 0.60 indicate moderate agreement, 0.61 to 0.80 indicate substantial agreement, and 0.81 to 1.0 indicate almost perfect or perfect agreement). In no case was the fracture classified as Codman-Hertel type 11.

Discussion

The extreme variability and complexity of PHFs hinder an unambiguous definition of fracture patterns. There is broad consensus on the difficulty of categorizing PHFs according to the different classification systems and on the low reliability between and among observers with various imaging modalities. 20–27,32 The most important contribution of our study to this topic is the methodological application of the ‘absolute reliability’ criteria proposed by Hopkins. 29 To the best of our knowledge, no study of the absolute reliability of the PHF classifications with X-ray images has been previously published.

Majed et al. 21 evaluated several classification systems (Neer, AO, Codman-Hertel, and a prototype classification system by Resch et al. 33) with three-dimensional printed models. They hypothesized that current PHF classification systems, regardless of imaging method, are not sufficiently reliable to aid clinical management of these injuries. The κ coefficients for inter-observer reliability in that study (four independent senior observers, experts in proximal humeral fracture management) were 0.33 for the Neer, 0.11 for the AO, and 0.44 for the Codman-Hertel classification system. Sukthankar et al. 34 assessed the intra-observer and inter-observer reliability of Codman’s description by Hertel et al. 19 and compared it with the AO and Neer systems. PHFs were examined with anteroposterior, lateral, and axillary radiographs. The authors concluded that the Codman-Hertel classification system provided a more reliable description of proximal humeral fractures than the Neer and AO systems, and they argued that this is due to the descriptive approach of Codman’s system, which better defines the varieties of PHFs. In addition, they claimed that the reliability of these systems can be improved by training in radiographic interpretation and correct measurement of fragment displacement. 34 Gracitelli et al. 24 aimed to evaluate the inter-observer and intra-observer reliability of different radiographic parameters, classifications, and surgical indications in PHFs among 10 orthopaedic surgeons with different levels of experience, who evaluated radiographs in three views from 40 PHFs. They concluded that the pathomorphological classification 33 had higher reliability (κ = 0.504) than the Neer classification (κ = 0.298) and was the factor that most influenced the surgical decision. The results were also influenced by the observer’s experience.

In the study published by LaMartina et al., 11 three experienced shoulder surgeons agreed unanimously on treatment in only 51% of 274 cases. Furthermore, among the cases in which unanimous agreement was reached, only 63.5% of the patients underwent the selected treatment. The authors concluded that there will always be some degree of uncertainty in treating displaced PHFs, that surgical decision making is difficult, and that it may be prudent to involve experienced shoulder surgeons in deciding the best management for patients with displaced PHFs. 11

In our analysis, the highest degree of agreement among different observers occurred when classifying PHFs using Codman’s system. In our study, the intra-observer agreement for the Neer and AO classifications decreased with increasing time from the start of the study (brief review of the classification systems and criteria homogenization session). We also observed this loss of agreement when we analysed the inter-observer variability. In contrast, this tendency to lose intra-observer and inter-observer agreement was not as noticeable with Hertel’s classification. The decline in agreement may be due to a progressive loss of attention or interest among observers. The level of agreement was higher among more expert observers, similar to that published in other studies, 24,32 except when the classification system used was that of Codman-Hertel. This finding has two possible interpretations. On the one hand, the Codman-Hertel classification may be the one that best reproduces fracture patterns, regardless of the experience of the observer. On the other hand, because this classification is less used in usual clinical practice, the training received before the evaluations may have left all observers with a similar level of familiarity with it, so that experience had less influence on the outcome. It is essential to consider other fracture-related characteristics (not assessed by the Neer, AO, or Codman-Hertel classifications) that may influence functional outcomes 24: medial metaphyseal comminution, 35 displacements in the coronal and sagittal planes, and bone loss on impaction. 10,21,36

The importance of having a system for classifying PHFs with low inter-observer variability goes beyond the academic realm. As indicated by LaMartina et al., 11 successful management of PHFs requires deciding between nonoperative and surgical treatment, deciding on the optimal surgical option for each case, and the technical ability to perform this surgical treatment. Without an unambiguous fracture language, these objectives are difficult to achieve. Regardless of the imaging system used, the causes of the excessive inter-observer variability remain unknown. They will need to be identified in order to reduce it and improve reliability.

There are some limitations to our study. Firstly, the originality of our study lies in the method applied, since the variability in the interpretation of the different classifications has been widely published and is not new. Secondly, we lack a ‘gold standard’ against which to compare the answers given by each of our evaluators and thus determine their accuracy (sensitivity and specificity), so our study is limited to assessing the diagnostic reliability of the three classification systems analysed. Thirdly, all the observers come from the same hospital, which reduces the variability of the evaluation and its external validity. 24 Fourthly, we exclusively used X-ray images (and a single standard anterior-posterior projection), which could decrease the intra-observer and inter-observer reliability. Although not without controversy, it is common to supplement the X-ray information with 2D or 3D computed tomography (CT) images. 37–39 In a recent comparison of the agreement of Neer’s classification system among plain radiographs alone (AP and outlet view), CT images, and 3D-reconstructed images, Torrens et al. 40 concluded that the different imaging techniques do not improve the agreement or concordance of Neer’s classification system. Furthermore, CT images are not routinely used in all hospital settings, so using only X-rays in the study may help increase external validity. Moreover, the study aimed to determine whether a single AP radiographic image was sufficient for adequate diagnostic agreement between different observers. These limitations notwithstanding, the authors believe that the study’s outcomes are valuable because there are no published studies, to our knowledge, of the ‘absolute reliability’ of the PHF classifications with X-ray images.

Conclusions

The Neer, AO, and Codman-Hertel classification systems for PHF are difficult for orthopaedic surgeons of varying levels of experience to interpret, and substantial agreement is therefore not obtained. According to our results, the system with the least variability in classification was that of Codman-Hertel.

Footnotes

Acknowledgement

The authors thank Manme Olvera (FIBAO Hospital Torrecárdenas) for invaluable assistance with the statistical analysis.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.