Abstract

Digital media and citizen journalism have escalated the infiltration of fake news that attempts to create a post-truth society (Lazer et al., 2018). The COVID-19 pandemic has seen a surge of misinformation leading to anti-mask, anti-vaccine and anti-5G protests on a global scale. Although the term ‘misinformation’ has been generalized in media and scholarly work, there is a fundamental difference between how misinformation impacts society and how more strategically planned disinformation attacks do. In this study we explore the ideological constructs that lead citizens to accept or reject disinformation during the heightened period of the COVID-19 global health crisis. Our analysis follows two specific disinformation campaigns, evaluated through social network analysis of Twitter data in addition to qualitative insights generated from tweets and in-depth interviews.

Introduction

The outbreak of the SARS-CoV-2 novel coronavirus (COVID-19) has unveiled the potential for enormous socio-economic unrest resulting from the large-scale circulation of fake news. The term ‘fake news’ has been in mainstream global use since the 2016 US presidential election (Allcott & Gentzkow, 2017), yet its origin can be traced back to a Harper’s Magazine article, ‘Fake News and the Public’, published in 1925 (Wang et al., 2019). While scientists and health professionals are busy taming the pathological consequences of the disease, the World Health Organization (WHO) has emphasized the equal importance of containing the circulation of misinformation in order to prevent serious socio-economic consequences (Tangcharoensathien et al., 2020).

The WHO described the phenomenon as an ‘infodemic’, a pandemic of misinformation spread (Tangcharoensathien et al., 2020). The European Commission president, Ursula von der Leyen, dedicated a message to the world stating that humanity is fighting not only against the virus but also against the surge of misinformation attempting to create large-scale social, political and economic unrest.

Plurality of voice is essential in a democracy, but fake news carries an insidious ability to provoke societies by distorting reality (Hartley & Vu, 2020). Citizen journalism, low barriers to entry and the free flow of information have accelerated the dark art of media and news manipulation in the digital age (Tandoc Jr. et al., 2018). The COVID-19 situation has created a ‘perfect storm’ for conspiracy theorists to jump on the bandwagon once again (Tangcharoensathien et al., 2020).

Through electronic word of mouth (Kapoor et al., 2013), various false statements were spread, such as ‘garlic can cure COVID-19 overnight’, ‘hold your breath for 10 seconds to check if you have the virus’, ‘drinking piping hot water removes COVID-19 viral load’, and ‘5G network activation has led to COVID viral RNA mutation’. At a time when reliable information was vital to public health, misinformation spread across social media faster than reliable facts. Evidence also suggests that mainstream media unwittingly played a key role in the dissemination of misinformation while trying to debate selected fake news (Lazer et al., 2018).

In this research, we establish the ideological distinctions between the notions of misinformation and disinformation. Mainstream research in this area has oversimplified the concept of fake news, resulting in a surge of studies trying to interpret people’s exposure to ‘misinformation’ (not disinformation) along with their consumption, resistance and propagation behaviour (Shahi et al., 2021). Exploration of this theoretical avenue has become increasingly popular during the COVID-19 global pandemic, as targeted manipulation of information has become a prime weapon for social destabilization during a global humanitarian crisis.

Modern fake news is becoming increasingly sophisticated in blurring the boundaries between conspiracy and reality. In our endeavour to understand and classify the nature and characteristics of fake news, we argue that the foundations of fake news are multifaceted and, therefore, that its propagation and consumption characteristics should be understood at a more fundamental level. To set the stage for a theoretical and empirical debate, in this article we clarify the conceptual difference between misinformation and disinformation (Bennett & Livingston, 2018), and the role of disinformation in destabilizing socio-political orders during a global health crisis (Van Bavel et al., 2020). We present two case-specific examples of COVID-19 disinformation campaigns (anti-5G and anti-vaccine) that were successful in initiating wider ideological movements across the world. Our discussion aims to stimulate critical and systematic heuristic research into the topic area, combining linguistic data science, advanced machine-learning-based natural language processing (NLP) and, most importantly, consumers’ lived experience of the phenomenon (Schau et al., 2009). We further aim to support systematic future research in the area by highlighting the wealth of data resources and collaborative research support available from renowned public and private disinformation observatories and fact-checking organizations. These organizations are key to developing a global collaboration network, involving governments, universities, humanitarian agencies and technology companies, in the fight against organized disinformation attacks.

Review of Literature

COVID-19: Surge of Misinformation or Disinformation?

Fake news is a form of promotion that actively (or proactively) masquerades as verifiably trustworthy information. It promotes an illusion, often displacing people’s worldview by questioning the very foundations of their ideological and moral constructs (Lewandowsky & Cook, 2020; Wang et al., 2019). Yet the term has often been generalized, in both public and scholarly discourse, and described one-dimensionally as a piece of misleading information, that is, misinformation. Little consideration has gone into understanding the surge of disinformation, which is etymologically and fundamentally different from the concept of misinformation.

The definition of fake news is inconclusive and inadequate due to the fine line between subjective interpretation of information and misinformation (Cinelli et al., 2020). Over the past decade, the term has received popular attention from government, public bodies, industry and academia, yet arguably, it is extremely troublesome to provide any definitional rigour to the idea (Wang et al., 2019). Overgeneralization of the term means it can be conceptualized as ‘fabricated information’ (Lewandowsky et al., 2017), but fundamentally, misinformation represents a piece of information that is inadvertently false and circulated without a clear cynical intention. In contrast, disinformation is strategically manipulated and circulated with the clear purpose of causing socio-political unrest and disruption. Disinformation is often amalgamated with semi-authentic news to enhance its aura of authenticity (Bennett & Livingston, 2018).

The historic roots of disinformation can be traced back to World War I political and ideological propaganda (Lazer et al., 2018), while one study reported a series of articles about life on the moon, the ‘Great Moon Hoax’, published in the New York Sun in 1835 (Allcott & Gentzkow, 2017). A recent study conducted by a group of economists at the University of Baltimore concluded that businesses and society suffer annual losses of $78 billion as a result of this growing problem (Pew Research Center, 2019). It is believed that medium to large organizations unwillingly pay around $9.5 billion a year to manage their reputation against targeted attacks online, while the socio-political costs of disinformation remain uncharted territory, especially within the public health sphere (Pew Research Center, 2019).

In addition to sophisticated production value, engineered manipulation of news circulation by technological firms like Cambridge Analytica has left society highly vulnerable to manipulation and destabilization. According to Tangcharoensathien et al. (2020), fake news is hijacking our minds and society. Despite technological advancements, the COVID-19 ‘infodemic’ has shown that we find ourselves in the middle of a losing battle.

Circulation of fake news during health crises is often motivated by the desire to suppress or distort key official messages critical to recovery and response (Shahi et al., 2021). A combination of misinformation and disinformation has dominated the social media space since December 2019, when the virus became a worldwide news sensation. Constant manifestation and impersonation of key health authorities on social media helped organized disinformation attacks reach wider audiences (Smith et al., 2020). While misinformation was largely circulated in an attempt to suppress genuine health advice, disinformation is much more detrimental, as it introduces strategically manipulated ideas to alter people’s perception of reality and their behaviour (Hartley & Khuong, 2020).

Disinformation in the public health space encourages uncertainty and risky citizen behaviour (Kharod & Simmons, 2020). Amidst the rise of the COVID-19 crisis, selective politicization of key messages has fuelled multiple ideological propaganda campaigns (Hartley & Khuong, 2020). These campaigns are not merely an idle topic; rather, they have deep socio-political implications, manifested in the form of the anti-mask, anti-5G and anti-vaccine movements, among others. According to the European Disinformation Observatory, the nature of disinformation has evolved through the COVID-19 crisis; the ‘globalization of fake news’ continues to become more sophisticated and adapted to local contexts, creating periodic ‘disinformation storms’ (Tangcharoensathien et al., 2020).

Disinformation, Ideology and Activism

The role of ideology in shaping consumer activism and resistance has been well documented in advertising and communications research (Brinson et al., 2019; Kozinets & Handelman, 2004). However, there is a lack of clear systematic insight into how disinformation redefines people’s ideological stigmas through socio-culturally constructed ‘alternative facts’ (Allcott & Gentzkow, 2017). Our inspiration for this debate comes from research widely documenting the role of social media as ‘echo chambers’, where people often engage to revalidate their perceived or inherent (dis)beliefs while rejecting opposing views. Disinformation campaigns exploit this mechanism by targeting wider ideological crises, often empowering people to exercise their ideological resistance against the state, government, corporations, society or fellow citizens. In other words, disinformation is the modern makeover of conspiracy theories. Typically, conspiracy theories have little to no scientific or factual basis, yet this does not stop them from influencing society at a large scale (Brainard & Hunter, 2020). Research has shown that approximately 60% of the British public believes in at least one conspiracy theory, while the numbers are even higher in the United States and beyond (Addley, 2018). Vulnerable people are more attracted to the alluring power of conspiratorial thinking, as it acts as a coping mechanism in handling uncertainty. In addition, the simple inquisitive nature of conspiratorial messages often empowers people in disputing mainstream politics.

In our endeavour to unpack the analogy and narratives of COVID-19 disinformation at a more fundamental level, we present topic-specific insights into two selected campaigns: (a) the 5G conspiracy and (b) ‘Plandemic’, an anti-vaccine conspiracy that finds its ideological roots in the anti-technocratic, anti-state and anti-corporate supremacy movement. These two campaigns were selected as the primary context for this study because of their distinctly disinformation-based characteristics, aimed at destabilizing social order and scientific truth during a time of crisis. They also received considerable academic and media interest during a heightened period, making them an important and interesting topic for investigation.

Misinformation and Disinformation Research During COVID-19 Pandemic

The circulation of disinformation during public health crises such as Ebola, Zika and monkeypox has laid the foundations for research into mis-disinformation throughout the past decade (Sell et al., 2020). The COVID-19 pandemic presents a further unfortunate but important opportunity to study and characterize fake news within the public health space. While mainstream media, like the BBC and Cable News Network (CNN), played a key role in raising awareness of COVID-19 misinformation, a number of social science, computer science and epidemiological studies have attempted to raise awareness amongst the academic and scholarly community (Cinelli et al., 2020; Van Bavel et al., 2020). Scholarly development in understanding the role of misinformation (generalized) during COVID-19 has presented various perspectives, including sentiment classification of misinformation and hate speech (Boon-Itt & Skunkan, 2020; Islam et al., 2020), characteristics of misinformation diffusion through social networks (Roozenbeek et al., 2020), comparative analysis of fake news dissemination across popular social media platforms (Cinelli et al., 2020), the role of bots and automated accounts in creating organized disability (Al-Rawi & Shukla, 2020), and people’s susceptibility to misinformation. Studies have also examined misinformation networks utilizing social network analysis (Ahmed, Vidal-Alaball, et al., 2020; Ahmed, Seguí, et al., 2020).

Despite offering early academic interventions, the early stage of COVID-19 mis-disinformation research is limited by data quality and the application of sentiment classification algorithms with limited accuracy and performance, e.g., limited algorithmic capability to classify sentences into pre-assigned categories: joy, anger, surprise etc.

Rapid scientific progress is essential during a time of crisis, but it is also essential to honour academic integrity and robustness in order to develop a true representation of a critical social phenomenon such as mis-disinformation circulation. Concerns have been raised amongst research communities about the rapid publication of COVID-19 research literature through expedited review (Dinis-Oliveira, 2020). The trend is obvious in misinformation research, where the concept was widely generalized, devalued and used to fit specific research purposes. Automated text classification studies have widely generalized the categories of fake news classification into generic sentiments and bag-of-words models that borrow older classification analogies with poor model accuracy (Stieglitz et al., 2018). There is strong evidence that computational sentiment analysis fails to interpret informal and unstructured social media comments that are implicit or ironic in nature (Smith et al., 2020). In addition, NLP methods are not designed to interpret visual, image-based information, which is becoming more prevalent online. These are potential grounds for fundamental research bias. Forcing recent information through old classification analogies also restricts new insights. Previous research in the field that adopted a robust longitudinal approach was more successful (Bode & Vraga, 2018).

The next wave of studies in this area appeared to be theoretically and methodologically better informed. For example, Islam et al. (2020) present leading insight into the measurement of attitudes and behaviour relating to people’s misinformation sharing, in the light of affordance and cognitive load theory (Gibson & Carmichael, 1966). Their conceptual model demonstrates that a lack of personal attributes and social recognition could often become a motive for individual self-promotion through sharing misinformation. Although this study has a psychological foundation in the concept of ideology, the authors did not expand on this construct extensively. Also, owing to the nature of the selected foundational theories, the study focuses primarily on psychological and motivational factors of misinformation sharing rather than behavioural aspects.

In contrast, Choudrie et al. (2021) took a demographic focused exploratory approach to older adult misinformation consumption and sharing behaviour. Due to the nature of the demographic and their vulnerability to misinformation exposure, the study provides a unique perspective into machine-learning classifier-based understanding of misinformation content. Additionally, the authors also unravelled how linguistic nuances are often ignored in consumption and dissemination of misinformation. Despite presenting an exploratory insight into behavioural traits associated with misinformation led digital divides, the authors paid little attention towards exploring the role of ideological predisposition in developing one’s perception of truth in factual information. More statistically grounded studies that attempted to measure people’s susceptibility to fake news (Roozenbeek et al., 2020) oversimplified the context by conceptualizing a ‘monological belief system’, ignoring the extent of reach and impact capability offered by individual fake news. Table 1 summarizes key theoretical and conceptual developments in this topic area.

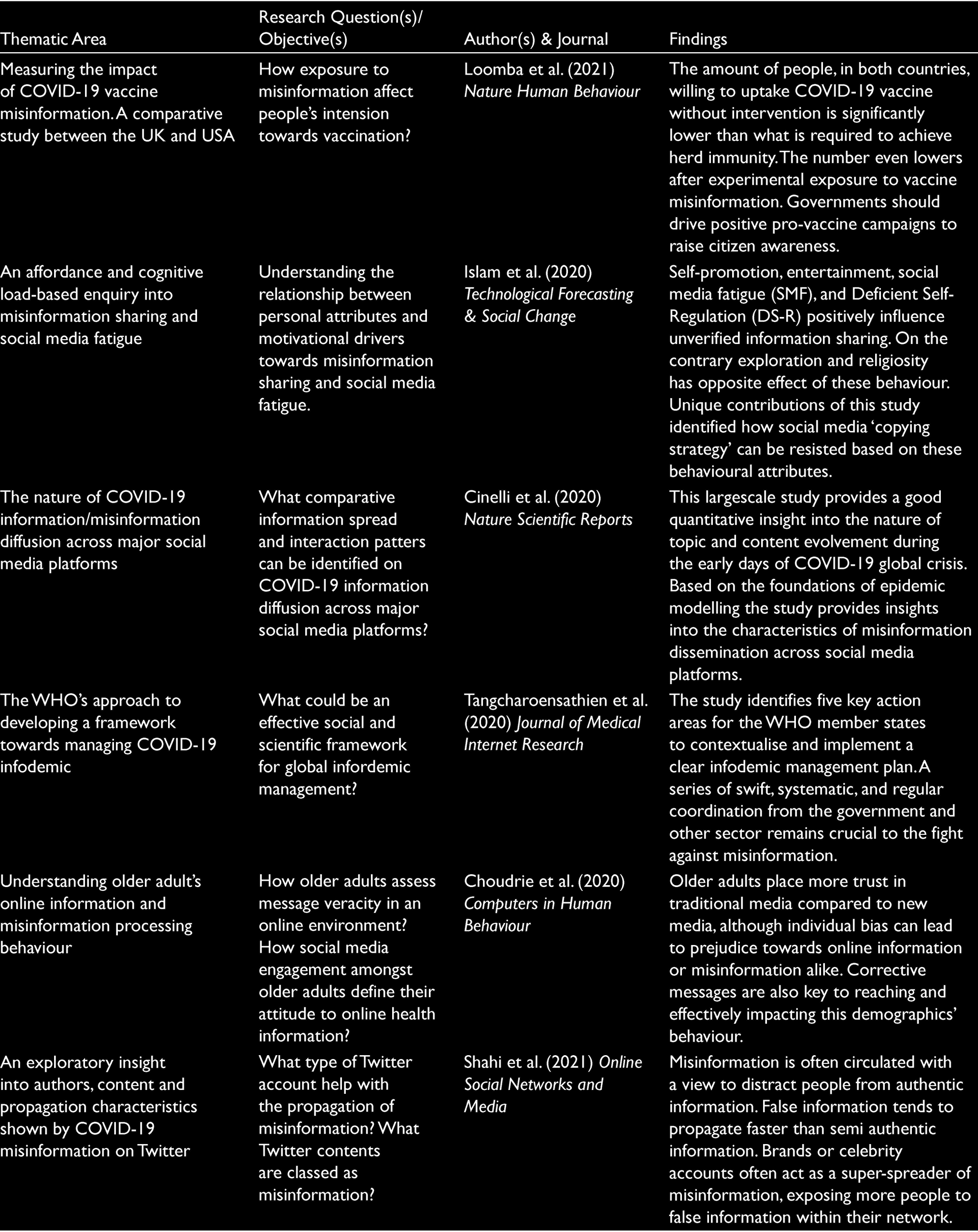

Table 1. Theoretical and Conceptual Development in COVID-19 Misinformation Research.

After careful evaluation of the literature and conceptual development in this area, it seems appropriate to claim that little insight has been offered into why the concept of misinformation is fundamentally different from disinformation. As a result, there is no clear academic insight into the role of disinformation in destabilizing one’s perception of reality and truth. Such conceptual development has gained momentum in marketing and consumer behaviour research, where scholars have shown how deeply grounded ideological constructs can shape one’s brand acceptance or resistance behaviour (Luedicke et al., 2010). These studies have shown how one group of people accepts the Hummer as a symbol of American technocracy, while others show more antagonistic behaviour due to their ideological roots in the green movement and naturalism, which sees the Hummer as a destroyer of the natural environment (Luedicke et al., 2010).

Similar work can be noted on the emergence of iconic organic product consumption, which is deeply rooted in popular culture (Prothero, 2019). Because disinformation compels people’s ideological attribution and interpretation to manifest beyond the cultural and conceptual boundaries of the conventional world (Hartley & Khuong, 2020), theoretical and conceptual oversights in this area need to be addressed by investigating the myriad moral and ideological displacements resulting from the phenomenon. Our research provides further insight into the characteristics of different online communities and their disinformation consumption and propagation behaviour using the principles of Social Network Analysis (SNA) (Wasserman & Faust, 1994; Yang et al., 2016). SNA applied to social media data differs from text analysis in that it can provide insight into relationships between users and uncover important amplifiers and communities.

Methods

Data was collected in two stages, with two different analytical frameworks in mind. As described below, Twitter data was collected using NodeXL, which has access to the Twitter Search Application Programming Interface (API), while a combination of convenience and snowball sampling was used to recruit participants for the interviews. All the interview participants were residents of the United Kingdom, and they were recruited through personal contacts or via social media networks. Participants were selected based on their online information consumption and sharing behaviour, in addition to having existing prejudice against vaccines and 5G networks. During the first stage of analysis, social media data was analyzed separately from the interview data, although at a later stage, qualitative findings were triangulated using axial and thematic coding techniques (Corbin & Strauss, 2014). In order to understand topic-specific disinformation propagation network dynamics, we further applied SNA techniques to understand the role of individual communities and agents in the process. More specific information on our data retrieval process is given below.

Twitter Data Retrieval and Analysis

NodeXL (release code +1.0.1.428) was used to retrieve data and perform SNA. Our SNA network visualizations are based on the interactions between users. Each circle represents a Twitter user, and lines between users indicate relationships such as retweet, reply and/or quote-tweet. More specifically, community vertices segmentation within the network map was generated using the Clauset-Newman-Moore algorithm (Clauset et al., 2004), while the map layout was developed using the Harel-Koren Fast Multiscale layout algorithm (Koren & Harel, 2003). NVivo 12 was utilized to perform initial topic level thematic analysis to generate preliminary insights.
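The community segmentation step can be illustrated with a minimal sketch. The code below is not NodeXL's implementation: it is a naive, pure-Python version of the greedy modularity-merging idea behind the Clauset-Newman-Moore algorithm, without the efficient data structures of the published method. The function names and the six-user sample of interactions are hypothetical and serve only to show how retweets, replies and mentions become edges and how groups emerge from them.

```python
from collections import defaultdict
from itertools import combinations


def build_edges(interactions):
    """Collapse (source, target, kind) records into undirected user-user edges."""
    edges = set()
    for source, target, _kind in interactions:
        if source != target:  # ignore self-mentions
            edges.add(frozenset((source, target)))
    return edges


def greedy_modularity_groups(edges):
    """Repeatedly merge the two groups with the largest positive modularity gain."""
    m = len(edges)
    degree = defaultdict(int)
    for edge in edges:
        for user in edge:
            degree[user] += 1
    groups = {user: {user} for user in degree}  # every user starts alone

    while True:
        best_gain, best_pair = 0.0, None
        for a, b in combinations(sorted(groups), 2):
            # Count edges with exactly one end in each of the two groups.
            links = sum(
                1 for edge in edges
                if len(edge & groups[a]) == 1 and len(edge & groups[b]) == 1
            )
            if links == 0:
                continue
            deg_a = sum(degree[u] for u in groups[a])
            deg_b = sum(degree[u] for u in groups[b])
            # Change in modularity Q if groups a and b were merged.
            gain = links / m - (deg_a * deg_b) / (2 * m * m)
            if gain > best_gain + 1e-12:
                best_gain, best_pair = gain, (a, b)
        if best_pair is None:  # no merge improves modularity any further
            return sorted(sorted(g) for g in groups.values())
        a, b = best_pair
        groups[a] |= groups.pop(b)


# Hypothetical sample: two tightly knit triads joined by a single mention.
sample_interactions = [
    ("a", "b", "retweet"), ("b", "c", "reply"), ("a", "c", "mention"),
    ("c", "a", "retweet"),  # repeated pair, collapsed into one edge
    ("c", "d", "mention"),
    ("d", "e", "retweet"), ("e", "f", "reply"), ("d", "f", "mention"),
    ("f", "f", "reply"),    # self-reply, ignored
]
groups = greedy_modularity_groups(build_edges(sample_interactions))
print(groups)
```

On this toy data the sketch separates the two triads into distinct groups, which mirrors how clusters of users who mostly interact with one another end up in the same community on the network map.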

Twitter Dataset for Plandemic (Anti-Vaccine)

The tweets in the network were posted over the four-day, 20-hour, eight-minute period from 1 May to 6 May 2020. There were 6,037 retweets, 3,050 replies, 11,508 mentions in retweets, 8,882 mentions and 1,466 individual tweets. The total number of tweets within the network was 30,943 and the total number of users was 6,990. The keyword ‘plandemic’ was used to retrieve data related to this conspiracy, which would pick up all mentions of this word, including ‘#plandemic’.
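As a quick consistency check, the reported interaction counts can be tallied against the reported network total. The short sketch below uses only the figures stated above; the variable names are illustrative.

```python
# Interaction counts as reported for the 'plandemic' network (1-6 May 2020).
plandemic_counts = {
    "retweets": 6037,
    "replies": 3050,
    "mentions_in_retweets": 11508,
    "mentions": 8882,
    "individual_tweets": 1466,
}

total = sum(plandemic_counts.values())

# Share of each interaction type, as a percentage of the network total.
shares = {kind: round(100 * n / total, 1) for kind, n in plandemic_counts.items()}

print(total)  # 30943, matching the reported network total
```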

Twitter Dataset for 5GCoronavirus

The data set used in this article consists of 6,556 Twitter users. The tweets in the network were taken from 27 March to 4 April 2020. The network was based on a total of 10,140 tweets, which are composed of 1,938 mentions, 4,003 retweets, 759 mentions in retweets, 1,110 replies and 2,328 individual tweets. The keyword ‘5GCoronavirus’ was used to retrieve data on this conspiracy because it formed the ‘#5GCoronavirus’ hashtag, which was the most popular during this time.

Interviews

To enhance the nuance of our analysis, we conducted 15 in-depth interviews with people believing in conspiracy and anti-conspiracy online messages. In order to achieve an in-depth understanding of the subjective meanings captured through ‘consumers’ lived experience’ of COVID-19 disinformation campaigns, we used the long interview and analysis techniques used by Fournier (1998), stemming from modified life-story techniques (Denzin, 1978). The thematic analysis of the interviews was triangulated with the social media analysis to derive pervasive meanings (Fournier, 1998). Respondent names presented in this article were anonymized following ethics protocols.

Results

Disinformation and Anti 5G Movement

Disinformation theories do not always stem from genuinely held false consensus. During a crisis, people seek a greater amount of information, and conspiracy theories fill the vacuum through rhetorical means that often help people escape the inconvenience of reality. One of the most controversial and effective disinformation campaigns during the COVID-19 pandemic was the 5G conspiracy. 5G is an advanced telecommunication technology allowing faster communications through high-frequency airwaves. As the timeline for activation of 5G technology in Wuhan coincided with the spread of the virus, it provided relatively easy logical ground for the conspiracy to take off. Conspiracy theorists added further suspense and ammunition to the cause by releasing fake videos of the ‘mystery mass death of birds’ in Wuhan. In the absence of a clear scientific explanation, the planned, strategic assembly of makeshift scientific authenticity can destabilize even the most sceptical minds.

An increasing number of activist groups across the United States, the United Kingdom and Australia started to protest en masse against the activation of 5G networks, citing it as the real cause of the pandemic. The situation became more volatile when 20 5G masts were set ablaze in the United Kingdom over the Easter weekend. Further police investigation revealed that the suspected protesters and activists were largely influenced by two controversial ideas: that 5G suppresses one’s immune system, and that the virus uses radio waves to communicate and select victims.

Arson attacks on 5G masts were also reported in Canada, while in Australian cities people marched against 5G and vaccines. A study reviewing over 500 parliamentary committee submissions from the public in Australia, calling for an enquiry into 5G, showed the power of organized disinformation in reaching highest government levels (Jensen, 2020).

Findings from our research show that people subscribed to the widespread 5G conspiracy because it reinvigorated their inherent anti-technocrat and state-supremacy ideology. For an ideology to be successful, its material association is paramount in exercising self-belief. From time to time, such ideological exercises develop into organized socio-political movements, e.g. the anti-brand movement (anti-corporate ideology), the LGBT movement (gender equality) and the Black Lives Matter movement (race equality). The anti-5G movement was a manifestation of perceived or inherent technological fear in the form of ‘radiophobia’ (Tuters & Knight, 2020). As Tuters and Knight (2020) discussed, public fear of microwaves started back in the 1970s, while the introduction of 2G technology saw similar backlashes in the 1990s. The 2020 5G movement found better organized ground as conspiracy theorists took opportunistic advantage of the fear of the unknown to gain wider support. As one of our respondents described,

I regularly watch science documentaries and videos, and I can tell you that there is no clear evidence that 5G is not harmful. (Samuel, interviewee)

The association of the 5G conspiracy with radio wave transmission stems from several anti-technocratic and anti-state sentiments grounded in the mythical notion of a ‘mind control experiment’. The 5G conspiracy is deeply rooted in the conspiratorial sentiments surrounding the 1990s US military programme HAARP (Tuters & Knight, 2020). A large high-frequency radio transmitter installed in Alaska to study the ionosphere was designated as a weather and mind-control device by early conspiracy theorists. The plot has been reframed into the 5G narrative, victimizing state and technology in the wake of a global health crisis. Government-imposed warnings and restrictions in the form of lockdowns and mandatory masks were further amalgamated into the storyline to question personal freedom and democracy.

Total lockdown so you won’t riot while #5GCOVID fibre of death is laid. Believed it’ll be done in 12 [weeks] especially in London. Construction workers amongst those allowed to work. #WhereisOurHumanity. (Anonymized Tweet).

Scientific evidence takes time to develop and disseminate, while during a time of crisis, people seek fast information and simplistic logic to make sense of surrounding complexities. As widespread social crisis breeds uncertainty, disinformation and conspiracy theories empower people by transforming them into independent thinkers and free agents of an alternative reality. Conspiracy theorists take early opportunities to fabricate ideological jigsaws, creating a false sense of heroism and empowerment amongst citizens by (re)interpreting, (re)fabricating and (re)circulating disinformation.

I remember back in March-April, nobody knew what was going on. All I knew that there would be a nationwide lock…. I had never heard anything like that in my life before. (Doreen, interviewee)

As Doreen mentioned, there was no clear official explanation of the origin of the virus and the disease during the early days. While the scientific community was busy establishing empirical truth, the lack of early communication from government bodies provided the perfect ammunition for people to perceive the pandemic as a government failure. On this note, we documented a number of frequent anti-government social media movements trending since April 2020, for example, #WhereisBorisJohnson, #BorishasFailedtheNation, #TrumpHasNoPlan and #TrumpLiesAboutCoronavirus. Studies from the Edelman Trust Barometer suggested that in Western democracies, when state-driven scientific knowledge and explanation become scarce, distrust in government and science grows rapidly, along with a prevailing sense of inequality and scepticism.

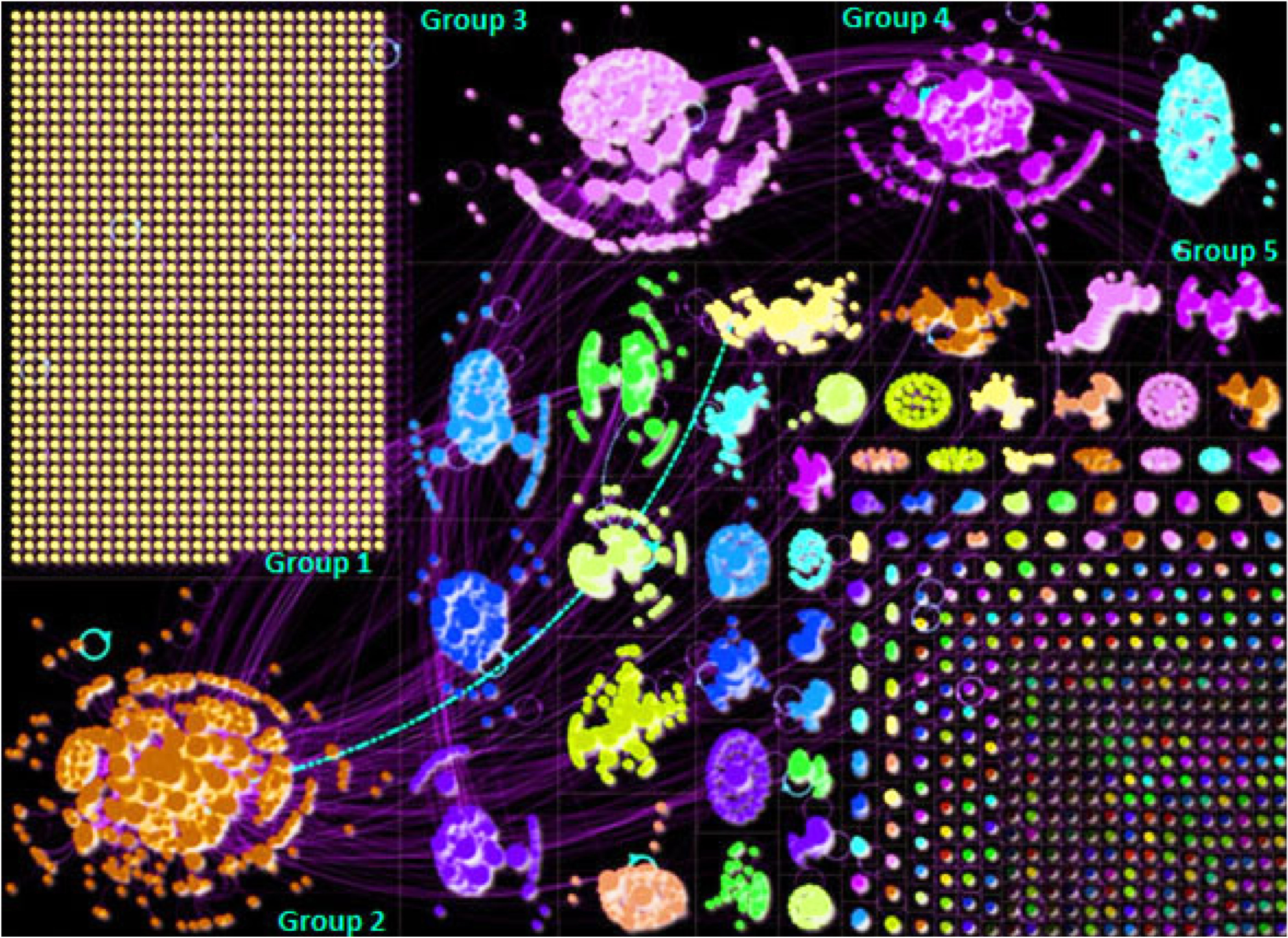

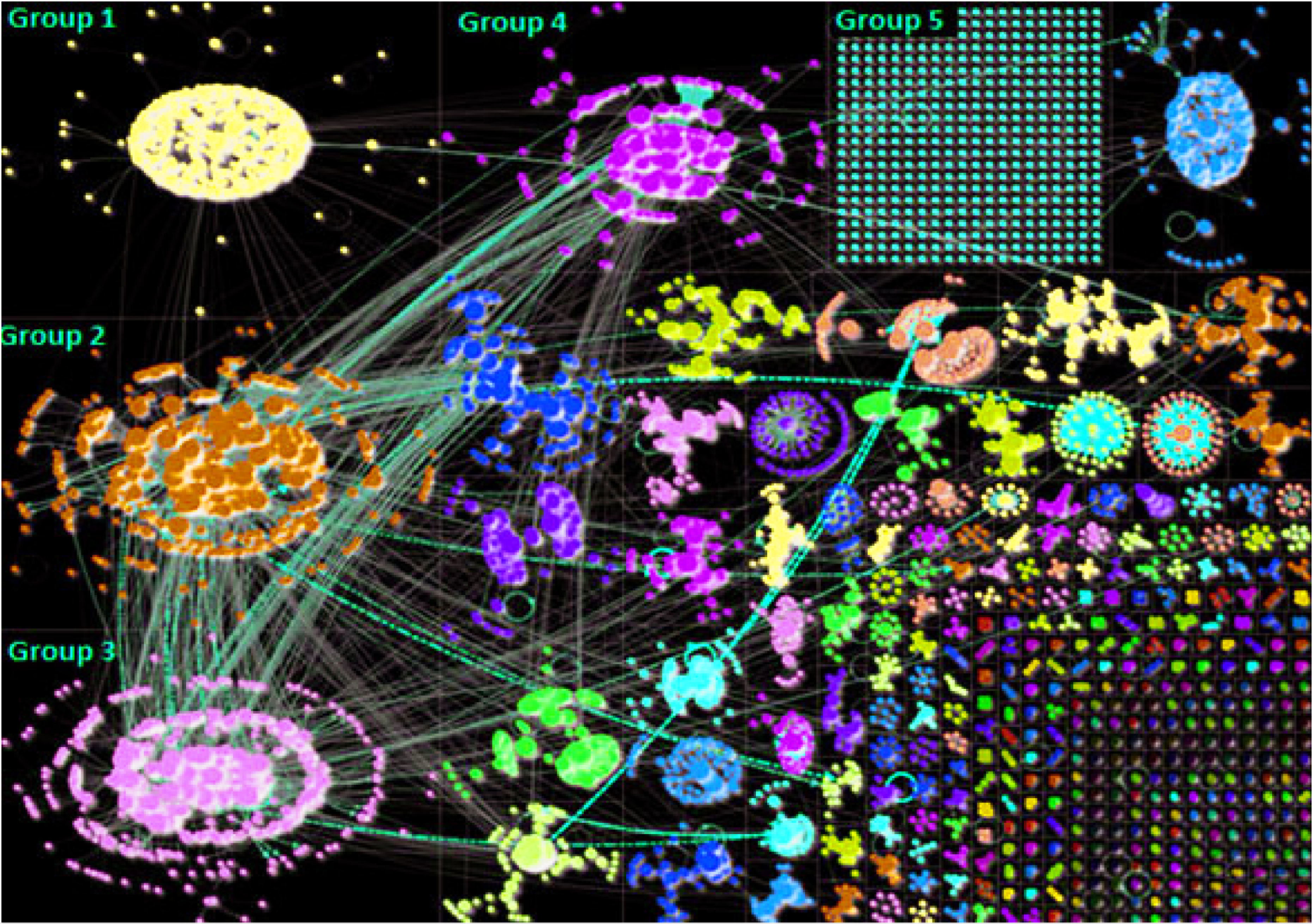

The agents of disinformation circulation in this case could be readily characterized by their confrontational attitude towards scientific evidence, while personal freedom and democratic rights were hypocritically challenged to drive moral protagonism. Results from our Twitter SNA analysis are presented in Figure 1.

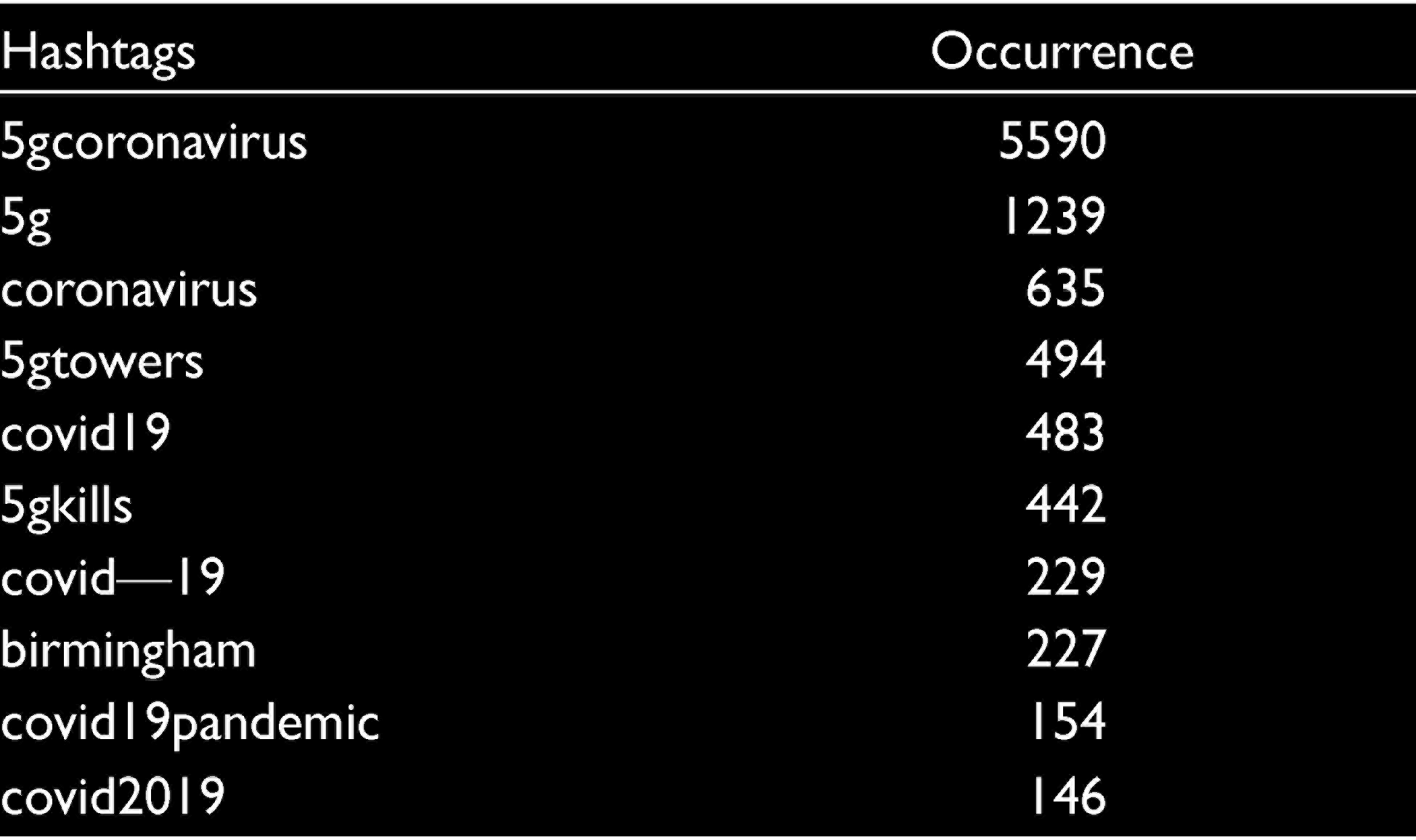

Our network analysis on the topic shows that the largest cluster (Group 1) was formed by isolated users. These people shared their opinion without propagating the agenda within the network. A wide range of hashtags was used across the group; however, association with hashtags like ‘#5Gkills’, amongst others, may have inadvertently exposed their followers to conspiratorial thoughts. In Group 4, ‘#depopulationagenda’ was significantly popular, while Group 6 saw a lot of discussion on ‘satanicsystem’. In comparison, Group 2 attracted a lot of discussion on ‘5gdangers’ that linked to external websites hosting further conspiratorial content. Research has noted how celebrity influencers can appeal to the public (Gauns et al., 2018), and one avenue to raise awareness could be through the use of such influencers. A full overview of the top hashtags can be found in Table 2.

Table 2. Top Hashtags within 5G Disinformation Network.

Plandemic and Anti-Vaccine Conspiracy

Anti-science scepticism often lacks wider audience reach due to mainstream media censorship. As online media remains an open platform for widely accessible content, these media spaces are periodically utilized to plant and propagate seeds of socio-political antagonism. A viral video documentary, ‘Plandemic’, tops this category, with sophisticated production and a meteoric spread that baffled even the most qualified scientists and journalists. The documentary was believed to be produced by a conspiracist named Mikki Willis, featuring a former scientist, Dr Judy Mikovits. In a short and sophisticated documentary format, the video conveyed ‘research-based evidence’ of the harm of wearing masks and vaccine administration. Mikovits claimed that her research efforts were suppressed by government and big pharma companies, that vaccines weaken immune systems, and that wearing masks self-activates viruses. She further claimed that the outbreak was a conspiracy led by Bill Gates, WHO and big pharma companies.

Anti-vaccine sentiments are not a new phenomenon. Prior to the COVID-19 outbreak, WHO flagged ‘vaccine hesitancy’ as one of the top threats to global health. Behavioural science literature has already identified a number of drivers for such ideological developments, including complacency, ignorance, inconvenience, risk perception, disbelief and lack of confidence (Betsch et al., 2015). Exposure to anti-vaccine information is also shown to negatively impact people’s intention; research suggests even a few minutes of exposure to online content can have a lasting impact on someone’s inner consensus.

A multiple mediation analysis carried out to understand the effect of anti-vaccine conspiracy suggested the disillusion of powerlessness and being controlled by government and health authoritarian regimes act as significant mediators for vaccine resistance (Betsch et al., 2015). Ideological foundations for these beliefs are not newly founded; instead, resistance towards the most commonly implemented vaccination programmes like polio is also well founded.

Anti-vaccine sentiments largely target different parts of the social and political spectrum. Contrary to previous anti-vaccine campaigns, COVID-19 conspiratorial messages largely relied on collective sensemaking within online and social media space. Here it is important to remember the role of culture and context in shaping anti-vaccine narratives across the world. For the purpose of this research, our data focused specifically on Western countries. A thematic analysis of social media and interview data revealed three key themes across anti-vaccine ideology: (a) morality, safety and efficacy; (b) personal freedom and political governance antagonism; and (c) religious belief.

Much like the 5G conspiracy, anti-vaccine proponents relied on the adaptation of old narratives. In this context, the issue of safety and efficacy was repeatedly justified using false scientific narratives. Due to the lack of early scientific transparency and the speed of COVID-19 vaccine development, conspiratorial thoughts primarily developed on people’s limited scientific knowledge and fabricated pseudo-scientific logic.

Reminder: the Pfizer vaccine uses mRNA technology which has never been tested or approved before. It tampers with your DNA. 75% of vaccine trial volunteers have experienced side effects. (Anonymized tweet)

Safety narratives were fortified by linking them to old conspiratorial thoughts surrounding autism and the MMR vaccine. Negative words such as ‘GMO’, ‘aluminium’, and ‘thalidomide’ were infused into anti-vaccine messages in order to provoke ‘impure’, ‘non-biological’ consensus. As one of the respondents, an animal cruelty activist, described:

I am a vegan and to me vaccines are a way to inject toxins into my body. Healthy eating and living are the doorway to strong immunity. (Sharon, interviewee)

The discourse of animal cruelty falsely encourages Sharon’s moral aversion against vaccines. Disinformation efforts adding more ‘unnaturalistic’ elements to vaccine composition only add to more uncertainty. Anti-vaccine proponents were often found to establish their authenticity before provoking anti-authoritarian thoughts.

Having genuine concerns about a rushed vaccine that was produced by a company primarily concerned with making a profit does not make you an anti-vaxxer conspiracy theorist. It means you’re being sensible. (Anonymized tweet)

Besides safety and efficacy, COVID-19 anti-vaccine sentiments were built on anti-corporate and anti-government motives. As corporate bodies were set to capitalize on public funds by selling vaccines as commodities, anti-capitalist sentiments were reinvigorated by targeting government and entrepreneurial activists. Bill Gates was a victim of such an organized disinformation attack. The conspiratorial advertising against him claimed that mass vaccination is a means to microchip the entire world population. In this framework, fictional transhumanism and anti-capitalism mythology were woven together to create an ideological construct actively countering science and pragmatism. The notion of vaccine nationalism was further stipulated in the form of ‘Sputnik V’ to infiltrate the World War and Cold War analogies subliminally resting in people’s minds.

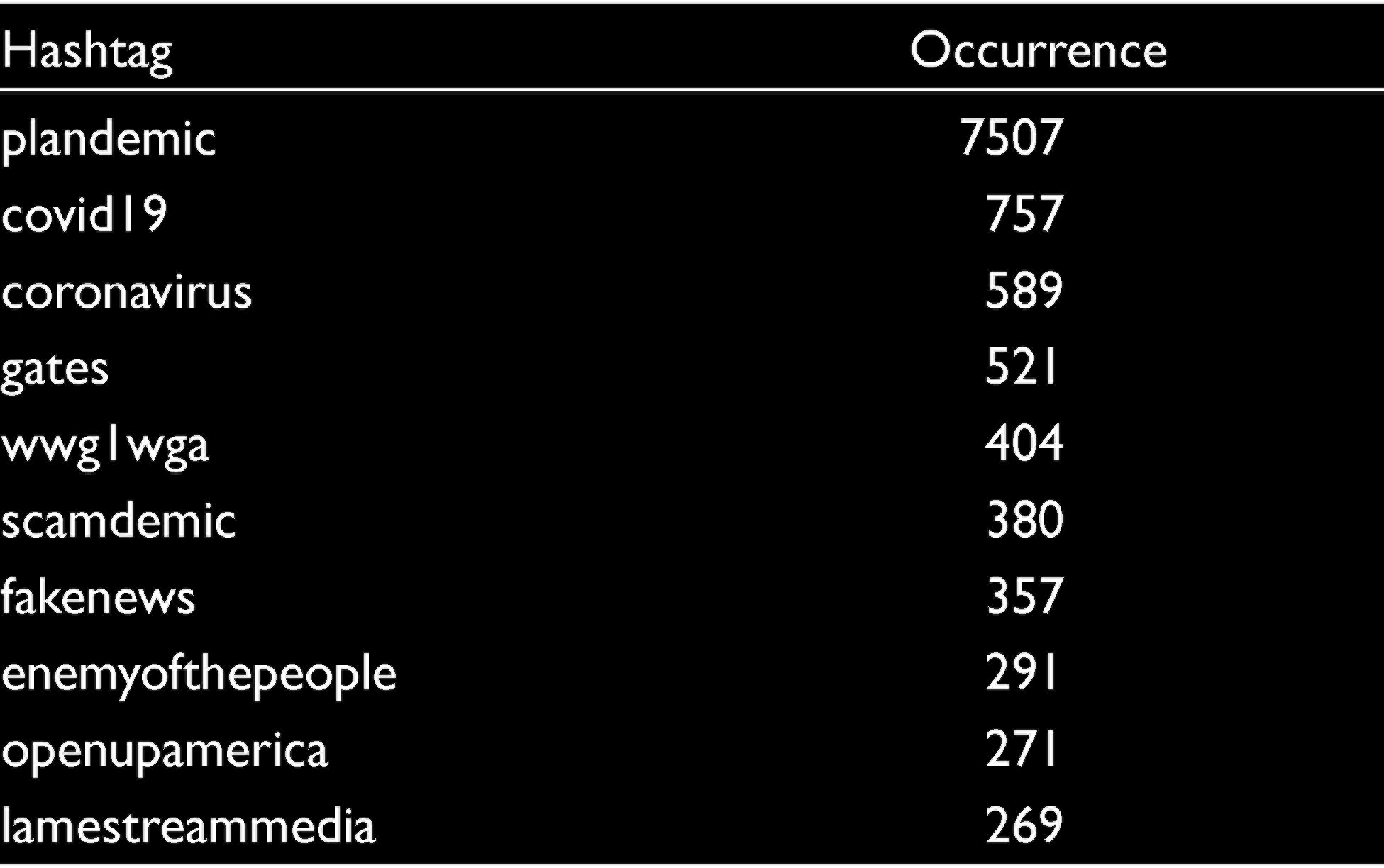

The documentary video domain link ‘plandemicmovie.com’ was frequently shared and became the centre of discussion amongst Groups 2, 3 and 4 (Figure 2). Amongst other interesting observations, Group 3 appeared to actively promote transhuman conspiracies using ‘#billgatesbioterrorist’. The anti-vaccine and anti-mask agenda dominated almost every intra- and inter-group communication, while conspiracies against the US government surfaced using ‘#potus’. Researchers and analysts were able to trace the origin of the video to a right-wing Facebook page named QAnon with 25,000 followers. However, the video reached millions of potential audiences, often led by opinion leaders and micro-celebrities, before it was taken down. Surprisingly, a small number of social media users within the network were able to identify the nature of the campaign and tried to warn others using ‘#fakenews’. A full overview of the top hashtags can be found in Table 3.

Table 3. Top Hashtags within Plandemic Disinformation Network.

Figures 1 and 2 are useful in highlighting the interactions of Twitter users (such as retweets, tweets, mentions, quotes and replies). They highlight that Twitter users can form virtual crowds online and spread misinformation. Clusters were formed based on the frequency of relationships between users (i.e., users who mentioned each other frequently). Users would also communicate across groups because each group was discussing a particular aspect of the conspiracy. The different groups have an impact on fake news being disseminated because each community may pick up on a different strand and/or news story related to a particular conspiracy that begins to attract an audience. Moreover, in both Figures 1 and 2, interactions between groups were also taking place where users in one community were also engaging with content across other groups within the network.
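The grouping idea described above, clusters formed from frequent interactions with occasional links across groups, can be sketched in a few lines. This is only an illustration and not the clustering procedure used to produce Figures 1 and 2: the user names, the `min_weight` threshold and the use of connected components as "groups" are all assumptions made for the example.

```python
from collections import Counter, defaultdict

# Hypothetical (author, mentioned_user) pairs; real input would be
# extracted from retweets, mentions, quotes and replies.
mentions = [
    ("ann", "bob"), ("bob", "ann"), ("ann", "bob"),
    ("cat", "dan"), ("dan", "cat"), ("cat", "dan"),
    ("bob", "cat"),  # a single interaction across groups
]

def build_weights(pairs):
    """Count interactions per undirected pair of users."""
    weights = Counter()
    for a, b in pairs:
        if a != b:
            weights[frozenset((a, b))] += 1
    return weights

def cluster(pairs, min_weight=2):
    """Group users connected by at least `min_weight` interactions
    (assumed threshold; groups are connected components)."""
    adj = defaultdict(set)
    for pair, w in build_weights(pairs).items():
        if w >= min_weight:
            a, b = tuple(pair)
            adj[a].add(b)
            adj[b].add(a)
    users = {u for pair in pairs for u in pair}
    groups, seen = [], set()
    for u in sorted(users):
        if u in seen:
            continue
        stack, comp = [u], set()
        while stack:  # depth-first walk over strong ties
            n = stack.pop()
            if n in comp:
                continue
            comp.add(n)
            stack.extend(adj[n] - comp)
        seen |= comp
        groups.append(sorted(comp))
    return groups

def cross_group_edges(pairs, groups):
    """Count interactions whose two users sit in different groups."""
    label = {u: i for i, g in enumerate(groups) for u in g}
    return sum(1 for a, b in pairs if label[a] != label[b])

groups = cluster(mentions)
print(groups)
print(cross_group_edges(mentions, groups))
```

In this toy data, the frequent pairs form two groups and the single `bob` to `cat` mention is counted as a cross-group interaction, which mirrors the between-community engagement noted in both figures.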

Discussion

Theoretical Implications

This study set out to provoke organized and progressive research investigations into the impact of disinformation, especially during times of socio-economic crisis. The COVID-19 global pandemic provided us with the opportunity to study two important case-based narratives of moral and ideological movement mobilized by disinformation rhetoric. We contribute to this emerging stream of the literature by showing that disinformation research needs deeper behavioural evaluation than existing generic misinformation research. In our endeavour, we integrate the emerging stream of consumption ideology research (Varman & Belk, 2009) with the COVID-19 information crisis and communication research stream (Staszkiewicz et al., 2020), demonstrating that, on a fundamental level, widespread disinformation appears to evoke historic conspiracies with a contemporary makeover. Both the 5G and Plandemic conspiracies stemmed from underlying ideological insecurities or disbeliefs that have resided in society for a very long time (Holt & Thompson, 2004). Narratives for these disinformation-led moral antagonisms are often drawn from anti-science, anti-technocratic and anti-state sentiments fabricated into a pseudo reality (Luedicke et al., 2010).

Our study adds a new stream of thought by showing that misinformation spreads on a lighter note, while disinformation destabilizes society by transforming consumers into active agents of interpretation and propagation. Disinformation supplies false moral validation, vindicating science and reality. Disinformation composers are preachers of socio-political extremism, where consumers play the role of passive-aggressive dupes. Our SNA demonstrates that consumers can act either in groups or as lone agents in multiplying the impact of disinformation during a time of crisis. In Islam et al.’s (2020) words, such behaviour often stems from typical personal attributes such as novelty seeking. Such behaviour can be controlled by following Lewandowsky and Cook’s (2020) suggested ‘prebunking’ and ‘debunking’ strategies for cognitively empowering citizens against mass manipulation, although the process appears to be more difficult than previously thought. More aggressive mass media promotion of truth and fact dissemination, along with celebrity, government organization and humanitarian agency-based opinion leadership, is essential in breaking the social networks and chains of disinformation propagation (Francisco et al., 2021). A number of recent studies have advocated such an approach, but our study demonstrates what type of strategic messages need to be crafted in order to counteract deeply rooted ideological sentiments within disinformation campaigns.

We believe that besides media and linguistic analysis, it is also important for scientific research to build on complex mathematical simulation models. One such example is Brainerd and Hunter’s (2020) agent-based model, which can statistically calculate the level (CFR) and degree (R0) of misinformation spread within a population under three different levels of intervention. While their model is developed and perfected for the COVID-19 pandemic, findings from their previous disease outbreak studies suggest that ‘immunizing’ even 20% of a social network population through education can significantly break the chain of circulation, preventing serious socio-economic consequences. Such mathematical modelling and simulations, accompanied by the social disinformation network structures unveiled by our study, can help to identify the key links within these complex networks; these selected individuals could then be monitored or deactivated to curtail mass disinformation movements before they gain widespread social momentum.
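To make the idea of ‘immunizing’ part of a network concrete, the following is a minimal sketch of an agent-based spread simulation. It is not the cited agent-based model: the network shape, the sharing probability `p_share`, the population size and the simple cascade rule are all assumptions chosen for illustration, and the output should not be read as reproducing the 20% finding.

```python
import random

def make_network(n, k=4, shortcuts=50, rng=None):
    """Ring of n agents, each tied to its k nearest neighbours,
    plus a few random long-range ties (assumed topology)."""
    rng = rng or random.Random(0)
    adj = {i: set() for i in range(n)}
    for i in range(n):
        for step in range(1, k // 2 + 1):
            j = (i + step) % n
            adj[i].add(j)
            adj[j].add(i)
    for _ in range(shortcuts):
        a, b = rng.sample(range(n), 2)
        adj[a].add(b)
        adj[b].add(a)
    return adj

def simulate(n=500, p_share=0.3, immune_frac=0.0, seed=0):
    """Return the fraction of agents that end up sharing the item.
    `immune_frac` (must be below 1) is the share of agents assumed
    to be 'immunized' through education and who never share."""
    rng = random.Random(seed)
    adj = make_network(n, rng=rng)
    immune = set(rng.sample(range(n), int(immune_frac * n)))
    start = rng.choice([i for i in range(n) if i not in immune])
    shared, frontier = {start}, [start]
    while frontier:
        nxt = []
        for u in frontier:
            for v in sorted(adj[u]):
                if v in shared or v in immune:
                    continue
                # Each exposure converts a contact with probability p_share.
                if rng.random() < p_share:
                    shared.add(v)
                    nxt.append(v)
        frontier = nxt
    return len(shared) / n

def mean_reach(immune_frac, runs=20):
    """Average reach over several random seeds."""
    return sum(simulate(immune_frac=immune_frac, seed=s)
               for s in range(runs)) / runs

print(mean_reach(0.0), mean_reach(0.2))
```

Comparing the two printed averages gives a rough sense of how removing a fraction of potential sharers changes reach in this toy setting; a study-grade model would need calibrated parameters and an empirically observed network such as those in Figures 1 and 2.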

Managerial and Policy led Implications

Through this article, we call for the development of a collaborative academic network for sharing knowledge and expertise in developing a progressive and heuristic knowledge framework.

Due to the sheer volume of mis-disinformation circulating in the public domain, it is important to develop and apply sophisticated identification and analytical tools based on advanced NLP-based machine learning techniques, for example, reinforcement learning (RL). While it is possible to develop custom-built language processing engines focused on mis-disinformation linguistics, developing the groundwork for such technologically sophisticated tools requires immense knowledge, experience, manpower and datasets. It is more convenient and accurate to adapt off-the-shelf language processing engines (IBM Watson, Google Cloud NLP, Amazon Comprehend, Stanford CoreNLP) that have been professionally developed and perfected over years by technological giants.

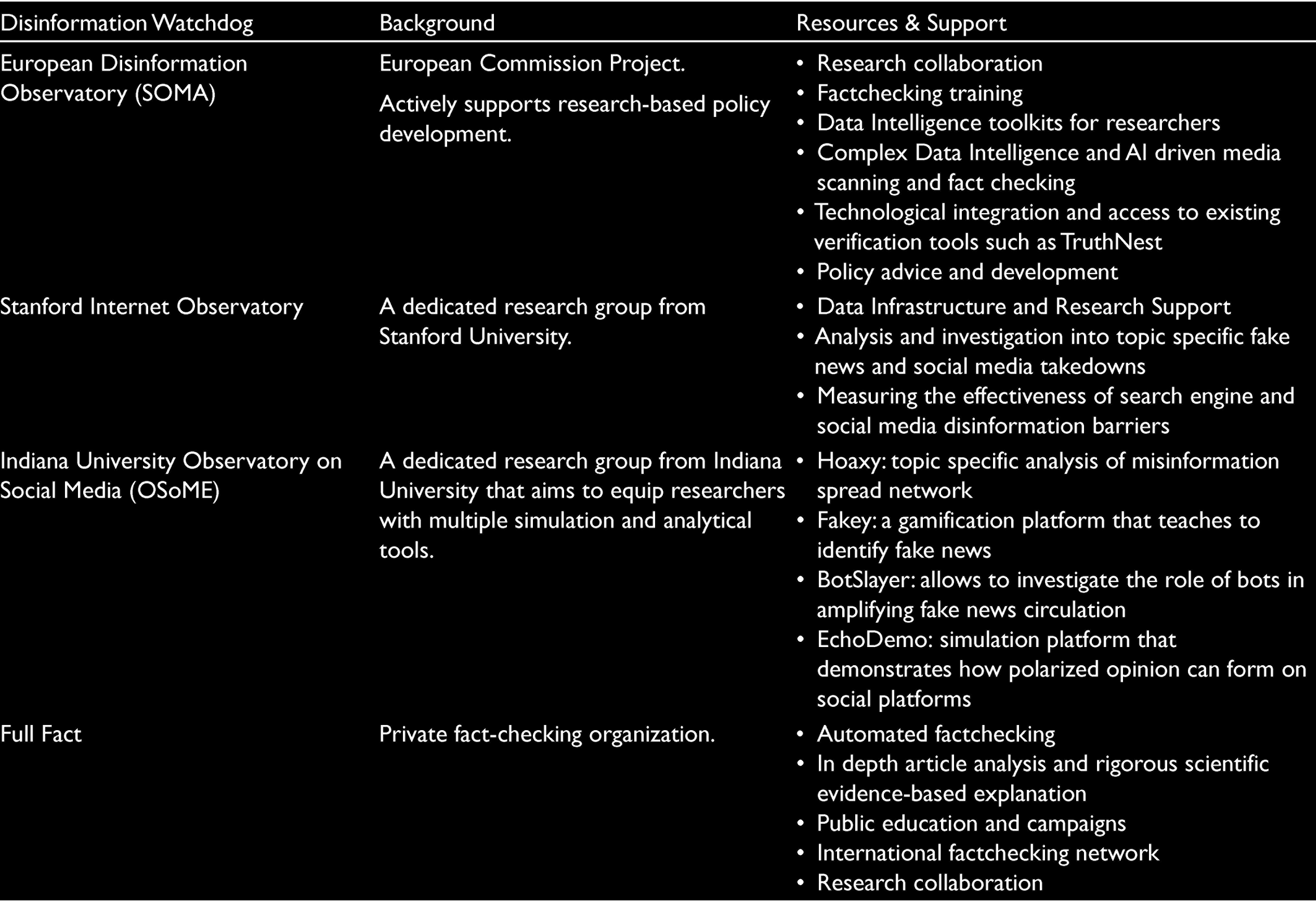

In addition to machine and deep learning algorithms, trained human interventions are also important in understanding the narratives and trajectory of misinformation when developing preventative measures and policies. Key government, public, private and academic institutions are collaborating to develop disinformation observatories (Table 4) by developing sophisticated algorithm-based fact-checking tools. These tools are capable of monitoring media space while actively educating researchers and citizens; for example, the European Disinformation Observatory (SOMA) provides access to important fact-checking platforms such as TruthNest and Truly Media. The Observatory on Social Media (OSoME) provides access to a number of simulation, gamification, fact-checking and network-mapping tools for researchers and journalists to learn, apply and develop knowledge in the subject area. Besides sophisticated technological tools, disinformation watchdogs also host a wealth of research and supportive resources in the form of appropriate data repositories, data intelligence tool kits, research data exchange solutions and algorithm development advice, in addition to valuable research and media training. In addition, authentic historic news repositories such as Aylien host more than 140 million aggregated news items from over 30,000 licensed media feeds in up to 14 languages, which can aid future research in this area.

Table 4. List of Disinformation Watchdogs.

The Stanford Internet Observatory also provides thought-provoking analytical insights into the effectiveness of search engines and social media in preventing topic-specific surges of mis-disinformation. Research ideas built on progressive knowledge from these institutional efforts can make true advancements in the field rather than overcrowding academic journal space with another ‘me too’ research idea. A number of these disinformation watchdogs provide priority-based, policy-focused research agendas that are paramount to tackling future disinformation attacks.

Government, public, private and social media agencies have received their biggest wake-up call on the necessity of taking tighter action in the wake of the COVID-19 infodemic. For the first time, humanitarian agencies such as the WHO have taken decisive action in educating people while accelerating the development of a global disinformation observatory network. Future research in this area needs to establish a more robust distinction between the characteristics of misinformation and disinformation; in this way, these two areas could become subcategories of academic and scientific development within mainstream fake news research. More collaboration is required between social, computer and linguistic science in developing a sophisticated fake news classifier that uses recurrent neural network (RNN) and reinforcement learning (RL) frameworks rather than the less accurate bag-of-words model. More longitudinal studies are required to identify trends and patterns of disinformation circulation, while triangulation of consumer narratives is indispensable in establishing the ideological impact of disinformation on everyday citizens.
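One commonly cited limitation of the bag-of-words representation is that it discards word order, which sequence-based models such as RNNs retain. The short sketch below illustrates only that point; the two sentences are invented for the example, the tokenizer is a deliberately crude assumption, and nothing here measures classifier accuracy.

```python
import re
from collections import Counter

def tokens(text):
    """Lower-case word sequence (very simple assumed tokenizer)."""
    return re.findall(r"[a-z0-9']+", text.lower())

def bag_of_words(text):
    """Word counts only; the order of words is thrown away."""
    return Counter(tokens(text))

# Hypothetical sentences with opposite meanings but identical words.
a = "the vaccine is safe, not dangerous"
b = "the vaccine is dangerous, not safe"

same_bag = bag_of_words(a) == bag_of_words(b)
same_sequence = tokens(a) == tokens(b)
print(same_bag, same_sequence)
```

A classifier fed only the word counts cannot tell these two statements apart, whereas a model that reads the token sequence at least receives the information needed to do so.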

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.