Abstract

In judged sports, such as rhythmic gymnastics, figure skating, and baton twirling, inter-judge variability in scoring (the degree to which judges differ in their scoring of the same athlete) is a common concern. This study quantitatively examined inter-judge variability using freestyle scores from the World Baton Twirling Championships held in 2018 and 2022. Data were collected from the preliminary rounds of the senior and junior women's divisions, with scores assigned by seven judges. Welch's analyses of variance were performed to assess the effect of athlete ranking group (high, middle, low) on inter-judge variability for two scoring axes: technical merit (TM) and artistic expression (AE). These analyses were conducted separately for each competition year (2018 and 2022) and division (senior and junior). In the senior division in 2018, inter-judge variability for both TM and AE was significantly lower in the high-ranking group than in the other ranking groups. In the junior division in 2018, inter-judge variability for both TM and AE was significantly lower in the high-ranking group than in the low-ranking group. No significant effect of ranking group was observed in 2022. These findings are interpreted in terms of the interaction between the competitive structure of baton twirling and the cognitive processes involved in judging.

Introduction

In judged sports, such as rhythmic gymnastics, figure skating, and baton twirling, both the scoring system and judges’ evaluation processes are widely recognised as persistent concerns (Flessas et al., 2015; Huang and Foote, 2011; Osório, 2020; Premelč et al., 2019). With the expansion of international media coverage, what was once regarded as a matter for judges and athletes alone has evolved into a broader societal issue (Collins, 2010). Sports such as figure skating have introduced revised scoring systems to mitigate the impact of scoring bias on final rankings (Cheng and Gonzalez, 2022). The first author, who has extensive experience as a member of judging panels, including as a judge's chair at international baton twirling competitions, has personally observed similar issues in baton twirling. Among the various challenges related to evaluation, the World Baton Twirling Federation (WBTF) has identified inter-judge variability, that is, the degree to which judges differ in their scoring of the same athlete, as a major concern. To address this issue, the WBTF implemented several countermeasures, including rule revisions and enhanced judge education programs (World Baton Twirling Federation, 2020). However, the numerical target for acceptable variability in these efforts has largely been determined through subjective considerations based on long-standing experience rather than objective, data-driven metrics, which is a common tendency in human decision making (Ariely et al., 2003; Plessner and Haar, 2006). Accordingly, this study aimed to quantify inter-judge variability in scoring and to examine a factor that may influence it. By providing an objective framework for assessing scoring variability, the findings may contribute to the development of empirically grounded assessment protocols and training initiatives. Furthermore, a deeper understanding of the cognitive processes underlying judgments in judged sports may advance performance research in sports sciences.

In evaluations of baton twirling, field observations indicate that mid-ranked athletes’ scores fluctuate more than those of top-ranked athletes. These observations are consistent with previous research. For example, in studies on rhythmic gymnastics, scoring variability was particularly high for athletes competing at intermediate performance levels (Leandro et al., 2017). Similarly, research on wine contests has shown a high degree of variability in judges’ scoring of wines that are awarded gold medals, whereas lower-quality wines tend to receive more consistent evaluations (Hodgson, 2008). However, such variability-related behaviors have not yet been objectively verified among baton twirling judges. Such patterns may also be influenced by cognitive processes uniquely tied to the specific characteristics of competition (Carvalho et al., 2023; Findlay and Ste-Marie, 2004; Ste-Marie, 1999).

The purpose of this study was to quantitatively clarify the effect of ranking group on inter-judge score variability in international baton twirling competitions. In addition, on the basis of the obtained findings, the potential background factors underlying this variability are discussed from the perspectives of competition characteristics and cognitive processes.

Methods

Data acquisition

This study analysed the scores assigned by judges at the World Baton Twirling Championships organised by the WBTF. Data were acquired from publicly available live-streaming broadcasts of the championships. The purpose and methodology of the study were explained to the WBTF president, and written consent was obtained for the use of anonymised data. The Institutional Review Board of Waseda University determined that the study was exempt from ethical review (2025-HN003).

Data selection criteria

Freestyle scores from the WBTF World Baton Twirling Championships, held biennially, were used as the dataset for this study. Specifically, scores from the 2018 and 2022 championships were selected (the 2020 championship was cancelled due to the COVID-19 pandemic). These years were selected because both competitions employed the same scoring system and because the rule known as “Overall Degree of Excellence,” which constrains judges’ scoring ranges to within one point, was not in effect, whereas it was applied in other years. The absence of this rule makes the data suitable for investigating inter-judge variability.

Among all the freestyle events, the preliminary rounds in the senior (Sr: 18 years or older, n = 64) and junior (Jr: under 18 years of age, n = 62) women's divisions were selected because of their larger sample sizes. The scores used in this study were the original, unadjusted judge scores recorded before any deductions or modifications were made. Both world championships were judged by qualified international judges who had been selected through a formal examination. The demographic characteristics of the judges are summarised in Table 1. Each panel comprised seven judges, and the composition of the panel differed by year and by division. Six judges served on more than one occasion; therefore, the total number of individual judges was 20.

Table 1. Demographics of judges.

Note: Years of experience are calculated as of 2022.

In baton twirling competitions, performance is evaluated on two scoring axes: technical merit (TM) and artistic expression (AE). TM assesses the variety and difficulty level of the techniques incorporated into a performance and determines the corresponding developmental stage, while AE evaluates the athletic artistry of a performance in relation to musical interpretation. Both axes employ a build-up scoring method with a maximum score of 10, recorded to one decimal place. For example, in TM evaluation, the seven judges may assign raw scores such as 7.8, 8.1, 8.0, 7.9, 8.2, 7.7, and 8.0 to a single performance; AE is evaluated in the same manner. According to the WBTF Judges Manual (World Baton Twirling Federation, 2020), the developmental stages are classified into the following discrete ranges: fair (0.00–2.00), average (2.01–4.59), good (4.60–7.00), excellent (7.01–9.00), and superior (9.01–10.00). Each stage is supplemented with performance examples that visually illustrate the corresponding evaluation standards.
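As an illustration only, the stage ranges quoted above can be written as a small lookup. The function name `developmental_stage` and the use of inclusive upper bounds are assumptions made for this sketch; they are not taken from the WBTF Judges Manual.

```python
# Stage ranges as quoted in the text; each entry is (stage, inclusive upper bound).
STAGES = [
    ("fair", 2.00),
    ("average", 4.59),
    ("good", 7.00),
    ("excellent", 9.00),
    ("superior", 10.00),
]


def developmental_stage(score: float) -> str:
    """Return the developmental stage for a score between 0 and 10 (hypothetical helper)."""
    if not 0.0 <= score <= 10.0:
        raise ValueError("score must lie between 0 and 10")
    for name, upper in STAGES:
        if score <= upper:
            return name
    raise AssertionError("unreachable: 10.00 is the last upper bound")


# The illustrative TM scores from the text all fall within a single stage.
example_scores = [7.8, 8.1, 8.0, 7.9, 8.2, 7.7, 8.0]
stages = [developmental_stage(s) for s in example_scores]
print(sorted(set(stages)))
```

Because scores are recorded to one decimal place, a score of 4.5 maps to "average" and 4.6 to "good" under these bounds.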

Statistical analysis

In this study, inter-judge variability, measured as the sample variance of the seven judges’ scores for a single athlete, was used as the dependent variable. As a preliminary check prior to calculating the sample variance, the range of the seven judges’ scores for each athlete was examined. The range was defined as the difference between the maximum and minimum scores and served as a simple descriptive indicator of potential extreme dispersion in the raw data. For most performances, the score range was 2 points or lower, although larger ranges were generally observed in 2022 than in 2018, particularly in the Sr division, where several performances showed ranges greater than 2 points, with a maximum of 2.8 points. Importantly, the value of 2 points was not used as a formal criterion to define outliers but only as a descriptive reference to summarise the observed dispersion. Because larger ranges reflect lower inter-judge agreement, which is central to the research question of the present study, cases with large ranges were retained in subsequent analyses.
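To make the two quantities concrete, the following sketch computes the range and the sample variance (n − 1 denominator) for the illustrative TM scores given earlier in the text. The variable names are chosen for this example only.

```python
from statistics import variance

# Illustrative TM scores from seven judges for one performance (example from the text).
tm_scores = [7.8, 8.1, 8.0, 7.9, 8.2, 7.7, 8.0]

# Range: difference between the maximum and minimum scores.
score_range = max(tm_scores) - min(tm_scores)

# Inter-judge variability: sample variance with an n - 1 denominator.
inter_judge_variance = variance(tm_scores)

print(f"range = {score_range:.1f}")                      # range = 0.5
print(f"sample variance = {inter_judge_variance:.4f}")  # sample variance = 0.0295
```

For this performance the range is well below the 2-point descriptive reference.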

The sample variance was log-transformed, outliers were removed using the interquartile range (IQR) method, and one-way Welch ANOVAs were conducted separately by year (2018 and 2022), division (Sr and Jr), and scoring axis (TM and AE), with ranking group specified as the sole factor. The normality of the dependent variable was visually assessed using Q–Q plots (see Supplementary Material), which indicated no substantial deviations from normality. Post-hoc pairwise Games–Howell comparisons were conducted when the main effect was significant.
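The pipeline described above can be sketched with the Python standard library. This is not the authors' code, and several details are assumptions: the natural logarithm, Tukey's 1.5 × IQR fences applied within each group, and inclusive quartiles. The Welch and Games–Howell statistics follow their standard textbook forms. The p values are omitted; they would be taken from the F distribution and the studentized range distribution, which the standard library does not provide.

```python
import math
from statistics import mean, quantiles, variance


def log_variance(judge_scores):
    """Natural log of the sample variance of one athlete's judge scores (log base assumed)."""
    return math.log(variance(judge_scores))


def drop_iqr_outliers(values, k=1.5):
    """Keep values inside [Q1 - k*IQR, Q3 + k*IQR] (Tukey fences; assumed rule)."""
    q1, _, q3 = quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    return [v for v in values if q1 - k * iqr <= v <= q3 + k * iqr]


def welch_anova(groups):
    """Return (F, df1, df2) for Welch's one-way ANOVA."""
    k = len(groups)
    n = [len(g) for g in groups]
    m = [mean(g) for g in groups]
    w = [ni / variance(g) for ni, g in zip(n, groups)]  # precision weights
    w_sum = sum(w)
    grand = sum(wi * mi for wi, mi in zip(w, m)) / w_sum
    between = sum(wi * (mi - grand) ** 2 for wi, mi in zip(w, m)) / (k - 1)
    lam = sum((1 - wi / w_sum) ** 2 / (ni - 1) for wi, ni in zip(w, n))
    f_stat = between / (1 + 2 * (k - 2) * lam / (k ** 2 - 1))
    return f_stat, k - 1, (k ** 2 - 1) / (3 * lam)


def games_howell(a, b):
    """Return (q, df): studentized-range statistic and Welch-Satterthwaite df."""
    va, vb = variance(a) / len(a), variance(b) / len(b)
    q = abs(mean(a) - mean(b)) / math.sqrt((va + vb) / 2)
    df = (va + vb) ** 2 / (va ** 2 / (len(a) - 1) + vb ** 2 / (len(b) - 1))
    return q, df


# Hypothetical log-variance values for three ranking groups (not study data).
groups = {
    "low": [-2.1, -2.6, -1.9, -2.4, -2.2, -2.8],
    "middle": [-2.3, -2.0, -2.7, -2.5, -1.8, -2.2],
    "high": [-3.4, -3.1, -3.8, -3.3, -3.6, -3.0],
}
cleaned = {name: drop_iqr_outliers(vals) for name, vals in groups.items()}
f_stat, df1, df2 = welch_anova(list(cleaned.values()))
q, df = games_howell(cleaned["low"], cleaned["high"])
print(f"Welch F({df1}, {df2:.2f}) = {f_stat:.3f}")
print(f"Games-Howell low vs high: q = {q:.3f}, df = {df:.2f}")
```

The fractional second degrees of freedom reported in the Results, such as F(2, 17.64), are characteristic of the Welch correction, which does not assume equal variances across ranking groups.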

The ranking group factor was categorised into three levels based on the competitor rankings: top third (high-ranking group), middle third (middle-ranking group), and bottom third (low-ranking group) (Table 2). In the actual competition, athletes’ final rankings are determined by discarding the highest and lowest scores among the seven judges and subtracting any applicable penalties. However, because the present study focuses on variability among the seven judges, research-specific rankings were determined by summing the mean TM score and the mean AE score across all seven judges, and athletes were then grouped based on these rankings. This classification approach is considered appropriate as it approximately corresponds to the advancement structure in the freestyle individual event at the WBTF World Baton Twirling Championships, where 20 athletes progress to semifinals and 10 advance to finals.
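The research-specific ranking described above can be sketched as follows. The split rule is an assumption for illustration: group sizes are made as equal as possible, with any remainder assigned to the higher groups. The actual group sizes are those reported in Table 2. The athlete identifiers and scores are hypothetical.

```python
from statistics import mean


def research_score(tm_scores, ae_scores):
    """Mean TM score plus mean AE score across all judges."""
    return mean(tm_scores) + mean(ae_scores)


def assign_ranking_groups(score_by_athlete):
    """Split athletes into high/middle/low thirds by descending score.

    Assumption: sizes are as equal as possible, with any remainder going
    to the higher groups; tied athletes keep their input order.
    """
    ranked = sorted(score_by_athlete, key=score_by_athlete.get, reverse=True)
    base, extra = divmod(len(ranked), 3)
    groups, start = {}, 0
    for i, label in enumerate(("high", "middle", "low")):
        size = base + (1 if i < extra else 0)
        for athlete in ranked[start:start + size]:
            groups[athlete] = label
        start += size
    return groups


# Hypothetical raw scores (TM list, AE list) from seven judges; not study data.
raw = {
    "A01": ([8.9, 9.0, 8.8, 9.1, 8.9, 9.0, 8.8], [8.7, 8.8, 8.9, 8.8, 8.6, 8.9, 8.8]),
    "A02": ([7.8, 8.1, 8.0, 7.9, 8.2, 7.7, 8.0], [7.9, 8.0, 7.6, 8.1, 7.8, 7.7, 8.0]),
    "A03": ([6.5, 7.2, 6.9, 7.4, 6.3, 7.0, 6.8], [6.8, 7.1, 6.4, 7.3, 6.6, 7.0, 6.5]),
    "A04": ([6.1, 6.9, 6.4, 7.0, 5.9, 6.6, 6.2], [6.0, 6.7, 6.3, 6.9, 5.8, 6.5, 6.4]),
    "A05": ([5.2, 6.0, 5.5, 6.3, 4.9, 5.8, 5.4], [5.0, 5.9, 5.6, 6.1, 5.1, 5.7, 5.3]),
    "A06": ([4.8, 5.6, 5.1, 5.9, 4.6, 5.3, 5.0], [4.7, 5.5, 5.2, 5.8, 4.9, 5.4, 5.1]),
}
scores = {name: research_score(tm, ae) for name, (tm, ae) in raw.items()}
groups = assign_ranking_groups(scores)
for name in sorted(scores, key=scores.get, reverse=True):
    print(name, round(scores[name], 2), groups[name])
```

Unlike the official ranking, this score keeps all seven judges' marks, so the grouping and the variance are derived from the same set of scores.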

Table 2. Number of baton twirlers in the high-, middle-, and low-ranking groups within each division for each year. Numbers in parentheses represent the score ranges.

Results

Figure 1 presents the inter-judge variance, log-transformed to reduce skewness, by ranking group in the Sr division for 2018 and 2022. Prior to statistical analysis, outliers were identified and removed using the IQR method. For TM, a one-way Welch ANOVA revealed a significant main effect of ranking group in 2018 (F(2, 17.64) = 9.365, p = 0.0017, ηp² = 0.473), whereas no significant main effect was observed in 2022 (F(2, 16.56) = 0.949, p = 0.407, ηp² = 0.050) (Figure 1(a) and (b)). For AE, a significant main effect was also found in 2018 (F(2, 16.37) = 14.74, p = 0.0002, ηp² = 0.512), but not in 2022 (F(2, 17.51) = 0.510, p = 0.609, ηp² = 0.030) (Figure 1(c) and (d)).

Figure 1. Log-transformed inter-judge variance of scores by ranking group in the Sr division, after IQR-based outlier removal. TM variance in 2018 (a) and 2022 (b). AE variance in 2018 (c) and 2022 (d). L, low; M, middle; H, high.

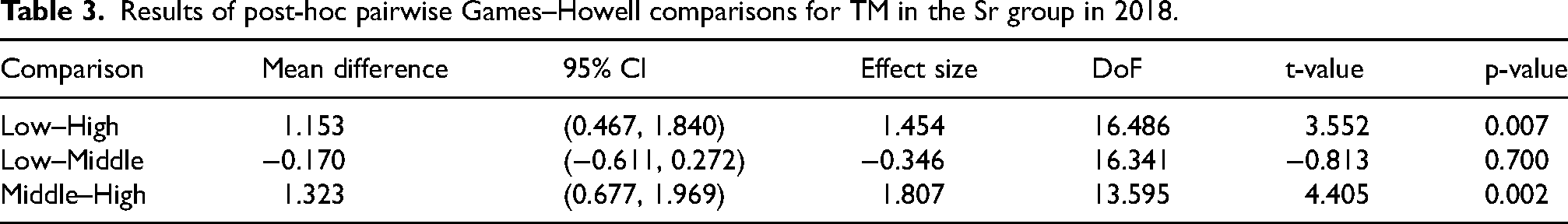

For conditions showing a significant main effect in the Sr division, post-hoc pairwise comparisons were conducted using the Games–Howell test. The results for TM (Table 3) and AE (Table 4) showed the same pattern: significant differences between the low- and high-ranking groups and between the middle- and high-ranking groups, but no significant difference between the low- and middle-ranking groups.

Table 3. Results of post-hoc pairwise Games–Howell comparisons for TM in the Sr division in 2018.

Table 4. Results of post-hoc pairwise Games–Howell comparisons for AE in the Sr division in 2018.

Figure 2 shows boxplots of the corresponding results for the Jr division, after IQR-based outlier removal. A one-way Welch ANOVA revealed a significant main effect of ranking group on TM in 2018 (F(2, 19.74) = 6.084, p = 0.0087, ηp² = 0.274), whereas no significant main effect was observed in 2022 (F(2, 15.73) = 0.305, p = 0.741, ηp² = 0.020) (Figure 2(a) and (b)). For AE, a significant main effect was also found in 2018 (F(2, 19.83) = 5.013, p = 0.017, ηp² = 0.239), but not in 2022 (F(2, 14.28) = 0.070, p = 0.932, ηp² = 0.0084) (Figure 2(c) and (d)).

Figure 2. Log-transformed inter-judge variance of scores by ranking group in the Jr division, after IQR-based outlier removal. TM variance in 2018 (a) and 2022 (b). AE variance in 2018 (c) and 2022 (d). L, low; M, middle; H, high.

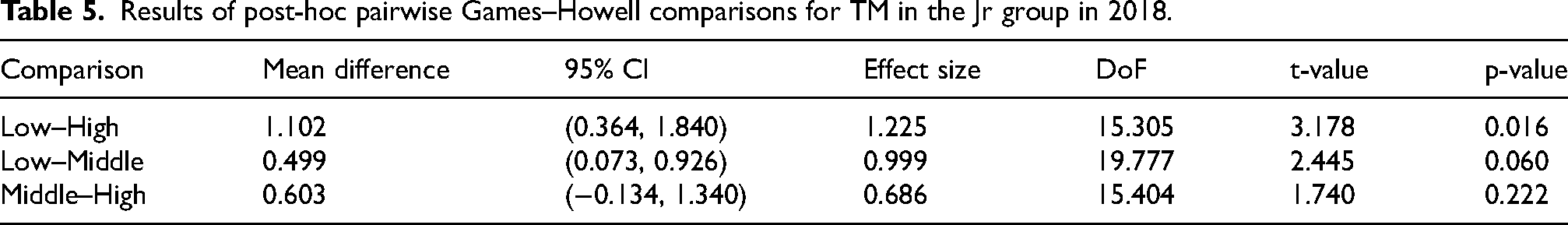

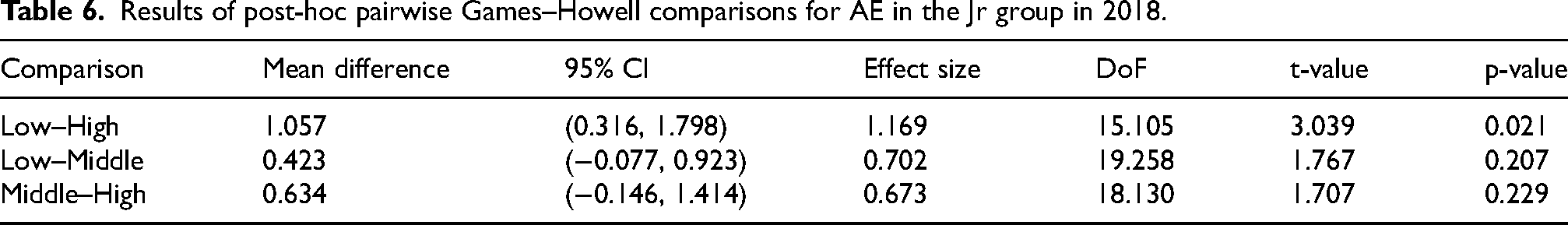

For conditions showing a significant main effect in the Jr division, post-hoc pairwise comparisons were conducted using the Games–Howell test. The results for TM (Table 5) and AE (Table 6) each showed a significant difference between the low- and high-ranking groups, whereas no significant differences were observed for the other pairwise comparisons.

Table 5. Results of post-hoc pairwise Games–Howell comparisons for TM in the Jr division in 2018.

Table 6. Results of post-hoc pairwise Games–Howell comparisons for AE in the Jr division in 2018.

Discussion

This study aimed to clarify the effect of ranking group on inter-judge score variability in international baton twirling competitions. Inter-judge variability was quantified using the sample variance of judges’ scores, and one-way Welch ANOVA was applied to examine differences across ranking groups. The analyses were conducted separately by year, division, and scoring axis. This discussion addresses the significant main effects observed and offers implications for judging systems.

Similarity between TM and AE

As shown in Figures 1 and 2, TM and AE exhibit similar overall patterns. This similarity may reflect certain characteristics of baton twirling competitions. In baton twirling, TM and AE scores tend to show similar trends because AE is generally dependent on TM, as TM provides the technical foundation for AE. When technique and skills are insufficiently developed, it may become difficult to fully express artistic intentions, no matter how strong those intentions may be. In contrast, TM evaluates the accuracy of baton and body manipulation (i.e., whether skills are successfully executed), as well as the difficulty of and variation in techniques. These evaluations can be made without information related to musical interpretation or narrative progression; for example, scores can be determined even if the athlete performs with a neutral facial expression. Accordingly, the observed similarity between TM and AE scores in the present study may be explained by AE's considerable dependence on TM.

Effect of ranking group

In the Sr division in 2018, inter-judge variability for both TM and AE was significantly lower in the high-ranking group than in the other ranking groups. In the Jr division in 2018, inter-judge variability for both TM and AE was significantly lower in the high-ranking group than in the low-ranking group. These results suggest that different cognitive processes may be involved in evaluating athletes in different ranking groups. This possibility is explored by considering the judges’ introspective experiences in light of cognitive models.

The lower inter-judge variability observed for the high-ranking group aligns with the judges’ introspective reports during evaluation: scoring for high-ranking athletes tends to be decisively clear, whereas scoring for middle-ranking athletes often involves hesitation. This hesitation stems from the difficulty of determining the most appropriate developmental stage corresponding to an athlete's techniques and skills. The first author, who served as a judge's chair at international competitions organised by the WBTF, observed this introspective pattern among judges. For instance, in TM evaluation, when assessing the combined execution of a thumb toss and a grand jeté, judges must classify 12 evaluation items that capture multiple dimensions of the technique into five developmental stages and further categorize each stage into upper, middle, and lower subgroups. A similar process applies to AE, which consists of seven evaluation items divided into five developmental stages, each further stratified into three subranges. High-ranking athletes consistently performed at an advanced level across all evaluation items, enabling the judges to rely on intuitive judgments. In contrast, middle-ranking athletes exhibited greater variability in evaluation combinations, making instantaneous judgments more cognitively demanding. This introspective account by judges is consistent with previous research on rhythmic gymnastics, in which scoring variability was particularly high for athletes competing at intermediate performance levels (Leandro et al., 2017). Additionally, findings from clinical examinations indicate that examiner bias tends to be more pronounced for borderline candidates than for those who clearly pass or fail (Shulruf et al., 2018). These parallels suggest that an increased cognitive load contributes to higher inter-judge variability when evaluating athletes in the middle performance range.

The cognitive processes involved in evaluating the high- and middle-ranking groups may reflect two distinct reasoning systems: a heuristic system and an analytic system (De Neys, 2006, 2022; Kahneman, 2011). The heuristic system is an automatic and rapid process that enables intuitive evaluation, whereas the analytic system is a slower and resource-demanding process. Although both systems operate simultaneously to some extent (De Neys, 2022), judges’ introspective reports suggest that evaluations of high-ranking athletes rely primarily on the heuristic system, whereas evaluations of middle-ranking athletes rely more on the analytic system. Because these cognitive processes can be tested experimentally (De Neys, 2006), this interpretation should be examined in future research.

Furthermore, if this explanation were correct, one would expect the judges’ scores for the low-ranking group to converge similarly to those of the high-ranking group. However, this pattern was not observed in the present study. This discrepancy may be attributed to methodological factors, such as the criteria used to define ranking groups or the inherently high skill level of competitors in international championships. These factors also warrant further investigation in future studies.

Potential effect of differences in judge preparation

In the present study, significant main effects were observed only in the 2018 championships, in both the Sr and Jr divisions, whereas no significant effects were found in 2022. This pattern may be related to differences in the extent of judge training between the two competition years. Under normal circumstances, judge training is typically conducted systematically over several months prior to the championships, allowing for a shared understanding of how evaluation criteria are interpreted and applied. However, due to the COVID-19 pandemic, judge training for the 2022 championships became more limited. When such training is limited, the consistency of judges’ evaluations may be reduced, and individual interpretations may play a larger role in scoring. Therefore, inter-judge variability may become more diffusely distributed, rather than being associated with specific ranking groups. Under these conditions, systematic differences in variability between ranking groups may be attenuated and, therefore, less likely to be detected as statistically significant effects. Accordingly, the absence of significant ranking-group effects in the 2022 championships does not necessarily indicate that such effects were absent; rather, it may reflect a situation in which variability in the evaluation process increased and became less structured, making these effects more difficult to detect. However, other contextual factors, such as changes in athletes or competition venues, may also have influenced the results.

Implications for judging systems

The WBTF has made concerted efforts to reduce inter-judge score variability; however, the underlying evaluation criteria have traditionally been based on subjective indicators. In this context, the present findings, such as the similarity between the TM and AE patterns and the smaller inter-judge variability observed in the high-ranking group in 2018, provide objective patterns that align with judges’ practical understanding of the sport and illustrate the potential value of integrating objective indicators into the judging process. The present findings may therefore serve as a useful resource for fostering constructive communication among judges from diverse linguistic and cultural backgrounds, with the aim of minimising inter-judge variability.

Supplemental Material

Supplemental material, sj-docx-1-san-10.1177_22150218261436207 for Inter-judge variability in scoring at the world baton twirling championships by Tomoko Natsuda, Yuto Kurihara, Takahide Etani, Taketoshi Sugisawa and Akito Miura in Journal of Sports Analytics

Footnotes

Acknowledgements

ChatGPT (versions 4.5 and 5.2; OpenAI) was used to improve the readability of the English text. The text was subsequently reviewed and the authors assume full responsibility for the final content.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by JSPS KAKENHI (Grant Number 25H01237).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.