Abstract

Student success remains a central imperative for higher education institutions globally, yet traditional advising approaches struggle with the twin challenges of scale and specificity. This paper proposes a framework distinguished by its two-dimensional conception of scale: vertical, spanning the institutional role-player hierarchy from executives to students, and horizontal, extending personalised reach across large student populations through data-driven automation. Central to the framework is a correlation-based needs assessment that foregrounds student voice by systematically linking self-reported challenges with academic outcomes to inform the selection and tailoring of interventions. Grounded in Improvement Science methodology, the framework creates closed-loop systems in which effectiveness is continuously evaluated and refined through iterative cycles. Drawing on Student Integration Theory, Expectancy-Value Theory, and Self-Determination Theory, we articulate how the framework addresses the conditions for student persistence while respecting student agency. Contributions include an explicit theorisation of two-dimensional advising scale that builds on and extends existing advising frameworks, a novel integration of Improvement Science with automated advising systems, and the use of motivation and persistence theory as evaluative lenses for assessing platform design choices.

Keywords

The Case for Advising at Scale

Despite decades of sustained scholarly attention and substantial institutional investment, student attrition remains a persistent challenge across higher education systems worldwide. While access to higher education has expanded dramatically, particularly in developing contexts undergoing post-colonial transformation, completion rates have not kept pace with enrolment growth. The gap between those who enter and those who successfully exit with qualifications represents not only individual disappointment but significant wastage of scarce societal resources and perpetuation of existing inequalities (Scott et al., 2007; Tinto, 2012).

Vincent Tinto, whose foundational work has shaped student retention research for half a century, recently observed that “though much has been learned about student success, much more remains to be learned” and called for renewed attention to the conditions that enable rather than merely predict success (Tinto, 2025:7). This phenomenological turn, emphasising student experience over institutional metrics, challenges researchers and practitioners to reconsider both what we measure and how we intervene. Students are not passive recipients of institutional support but active agents navigating complex academic and social environments, and their subjective experience of belonging, competence, and purpose fundamentally shapes their persistence decisions.

Contemporary higher education institutions find themselves caught between competing imperatives. On one hand, there is recognition that student success requires personalised attention to individual circumstances, challenges, and aspirations (Kuh et al., 2010). On the other, resource constraints, particularly acute in developing contexts, preclude the kind of intensive one-on-one advising that such personalisation seems to demand. Student-to-advisor ratios in many institutions render meaningful individual engagement impossible through traditional models. The question becomes: how might institutions provide meaningful, contextualised support to large and diverse student populations without proportional increases in advising capacity?

Established advising frameworks, including the Council for the Advancement of Standards (CAS) Standards for Academic Advising Programs and proactive advising models (Rust & Willey, 2025), have laid important groundwork by articulating role differentiation and structured outreach approaches. However, these frameworks were developed primarily within resource-rich, individualised advising contexts and do not explicitly theorise scale as a multi-dimensional challenge. The framework developed here builds on these foundations while addressing the specific constraints of large, diverse, and resource-constrained institutional environments, in which both the institutional role-player hierarchy and population reach must be explicitly designed for.

This paper proposes a framework that addresses this challenge through what we term “Advising at Scale”: an approach distinguished by its conceptualisation of scale as operating along two distinct dimensions. The first dimension is vertical, spanning what we call the institutional role-player hierarchy: the layered structure of actors who influence student success, from executive leadership through program management, academic staff, and support services to students themselves. Each tier in this hierarchy has distinct information needs and distinct capacities to act. Yet conventional approaches to student success data tend to concentrate insights at the executive level, leaving those with direct student contact (lecturers, tutors, and advisors) without the actionable intelligence they need.

The second dimension is horizontal, concerning the reach of advising across student populations. Traditional advising models scale linearly: more students require more advisors in roughly proportional relationship. Our framework breaks this constraint through data-driven automation that enables personalised advising messages to reach large populations at low marginal cost. Crucially, this is not generic mass communication but rather individualised advice generated through scripts that incorporate each student’s specific academic data, progress status, and identified needs.

The framework is further distinguished by its integration of Improvement Science methodology (Bryk et al., 2015; Lewis, 2015). Rather than deploying interventions and hoping for the best outcomes, the framework creates closed feedback loops where engagement with advising outputs is tracked and correlated with outcomes. This enables continuous refinement of both the interventions themselves and the metrics used to evaluate them, a departure from the episodic, summative evaluation that characterises much institutional research.

We position this framework as a deliberate departure from conventional institutional research (IR) practice. Traditional IR tends to operate at institution-wide scales with significant temporal lag (annual or semester-level reporting), producing aggregate statistics that serve accountability and planning functions but offer limited actionable guidance for those working directly with students. Our framework inverts several of these conventions: it operates continuously rather than periodically, targets program-specific rather than institution-wide scales, distributes insights across all role-player tiers rather than concentrating them at executive levels, and integrates discipline-specific teaching and learning interventions rather than generic support services. The diagram below illustrates the multi-tiered advising at scale framework.

The framework we present is grounded in theory but informed by implementation. We have developed and deployed an instantiation of these ideas within a South African research university context, and the system interfaces illustrated in this paper reflect that implementation. However, our purpose here is not to describe a particular software system but to articulate the theoretical architecture that guided its design, an architecture we believe has broader applicability beyond our specific context. The implementation serves as proof of concept and source of illustrative examples, while the contribution lies in the conceptual framework itself.

This paper proceeds as follows. Section 2 establishes the theoretical foundations, drawing on student integration theory, motivation theories, and data-driven decision-making literature. Section 3 presents the framework in detail, elaborating each dimension of scale and the improvement science loop that connects them. Section 4 articulates the theoretical contributions, while Section 5 discusses practical implications for implementation. Section 6 addresses limitations and critical considerations, Section 7 outlines future research directions, and Section 8 concludes.

Theoretical Foundations

The Advising at Scale framework draws on multiple theoretical traditions that, while developed independently, converge on complementary insights about the conditions necessary for student success. This section reviews these foundations and identifies the theoretical gaps the framework addresses.

Tinto’s Student Integration Theory and Recent Extensions

Vincent Tinto’s Student Integration Model (1975, 1993) has dominated student retention research for five decades. The model posits that students enter higher education with various background characteristics and initial commitments, which are then modified through experiences of academic and social integration. Students who successfully integrate into the academic system (through grade performance and intellectual development) and the social system (through peer relationships and faculty interactions) develop stronger institutional commitment and are more likely to persist.

Tinto’s recent work (2025) extends this foundation in important ways. He emphasises the phenomenological dimension of integration: it is not merely objective participation in academic and social activities that matters, but students’ subjective experience of those interactions. A student may attend classes and submit assignments yet feel fundamentally alienated from the academic enterprise. Conversely, meaningful connection with even a single faculty member or peer group can sustain persistence through difficult periods.

Tinto’s foundational model has been subject to substantial critique and productive extension. Museus’s (2014) Culturally Engaging Campus Environments (CECE) model shifts the analytical emphasis from student assimilation into existing campus culture to institutional responsibility for culturally responsive practice, a reorientation with direct implications for how advising is framed and targeted. Harper (2012) and Bensimon (2005) have similarly challenged deficit-oriented framings, repositioning persistent equity gaps as evidence of institutional failure rather than individual shortcoming. Cabrera et al.’s (1993) empirical work extended the model to incorporate financial considerations alongside academic and social integration. Collectively, these contributions reinforce the framework’s dual emphasis on institutional transformation and student agency, and its deliberate avoidance of deficit language in favour of enabling-conditions framing.

This phenomenological turn has significant implications for how we conceptualise advising. Generic support services, however well-intentioned, may fail to create the experience of genuine care and attention that students need. The question becomes how to create authentic experiences of support at scale, a challenge our framework addresses through personalisation that incorporates specific knowledge of each student’s situation.

Tinto also emphasises the classroom as the primary locus of student experience, particularly for commuter students and those without strong residential community ties. Most students spend the majority of their institutional time in classes; if integration is to occur, it must occur there. This insight motivates our framework’s emphasis on the lecturer tier and discipline-specific teaching and learning interventions, rather than relying solely on centralised support services that students may never access.

Motivation Theories: Expectancy-Value and Self-Determination

While Tinto’s work explains persistence in terms of integration, motivation theories illuminate the psychological mechanisms through which students engage with academic work. Two frameworks are particularly relevant: Expectancy-Value Theory and Self-Determination Theory.

Expectancy-Value Theory (Eccles & Wigfield, 2020; Wigfield & Eccles, 2000) proposes that achievement-related choices are driven by two factors: expectancy (Can I succeed at this task?) and value (Why should I try?). Expectancy beliefs concern students’ confidence in their ability to perform successfully; distinct from actual ability, these beliefs shape effort investment and persistence. Value comprises four components: attainment value (importance to identity), intrinsic value (enjoyment), utility value (relevance to goals), and cost (what must be sacrificed).

Research on “wise interventions” demonstrates that relatively brief, well-targeted interventions can shift expectancy and value beliefs with lasting effects on achievement (Harackiewicz & Priniski, 2018; Walton & Wilson, 2018; Yeager & Walton, 2011). Utility-value interventions, for example, help students connect course content to personal interests and goals, increasing both engagement and performance. These findings suggest that advising need not be extensive to be effective. Instead, well-timed, contextually appropriate messages can catalyse significant shifts in student motivation.

More recent work has examined how utility-value interventions function differentially across student populations, with particular effectiveness for first-generation and underrepresented students (Harackiewicz & Priniski, 2018). This equity dimension is directly relevant to the framework’s needs assessment approach: identifying which value components are most salient for specific student sub-groups enables more precisely targeted, equity-conscious advising messages, rather than applying generic motivational content across heterogeneous cohorts. In this sense, EVT functions here not merely as a design rationale but as an evaluative lens for assessing whether the framework’s personalisation mechanisms are likely to benefit all students equitably.

Self-Determination Theory (Ryan & Deci, 2000, 2017) identifies three basic psychological needs whose satisfaction supports intrinsic motivation and wellbeing: autonomy (sense of volition and choice), competence (sense of effectiveness), and relatedness (sense of connection with others). Learning environments that support these needs foster more autonomous forms of motivation, deeper engagement, and better outcomes than controlling environments that undermine them.

Crucially, Self-Determination Theory (SDT) differentiates between interventions that are controlling and those that are autonomy-supportive. Advising perceived as surveillance or coercion risks undermining the very motivation it intends to foster. Alzahrani et al. (2023) demonstrate that instructor support can simultaneously fulfil multiple basic psychological needs; however, the quality and manner of such support are as consequential as its mere provision. This insight informs our framework’s emphasis on “advising the advisor” rather than relying on automated interventions devoid of human judgment. In this view, technology augments the advisor’s capacity to deliver autonomy-supportive guidance while preserving the irreplaceable relational dimension of human advising. Evaluated through the SDT lens, the framework’s design choices (personalisation over mass messaging, human-in-the-loop oversight, and opt-out provisions) can be assessed for their likely impact on students’ experience of autonomy.

Data-Driven Decision-Making in Education

The expansion of institutional data systems and learning management platforms has created unprecedented opportunities for data-informed educational practice. Learning analytics, defined as “the measurement, collection, analysis and reporting of data about learners and their contexts” (Siemens & Gašević, 2012), promises to transform how institutions understand and support student success (Ferguson, 2012).

Early warning systems represent one prominent application, using predictive models to identify students at risk of failure or dropout and trigger support interventions (Arnold & Pistilli, 2012; Jayaprakash et al., 2014). These systems have demonstrated value in some contexts while raising important questions about accuracy, equity, and the nature of the interventions triggered (Kizilcec & Lee, 2020).

While much of the existing learning analytics literature concentrates on the student as the primary unit of analysis and intervention, comparatively fewer applications explore how analytics might empower other institutional actors. Lecturers may leverage analytics to refine pedagogical practice, program coordinators to detect and address curriculum bottlenecks, and support staff to coordinate interventions across diverse student cohorts. Our framework advances this broader perspective by extending learning analytics beyond student-facing applications to encompass the full hierarchy of role-players within the institution.

Mandinach and Gummer (2016) emphasise that effective data use requires not merely access to data but data literacy: the capacity to interpret, contextualise, and act on information appropriately. This observation motivates the framework’s emphasis on role-appropriate advising: different stakeholders require different information presented in different ways to support effective action. Raw data dumps do not constitute useful advising; insights must be translated into actionable guidance for specific decision contexts.

Critical Perspectives on Student Success

Critical scholarship has interrogated prevailing conceptions of student success on several important grounds (Rowland et al., 2021). Central to these critiques is the question: whose definition of success is privileged? Conventional metrics such as graduation rates or time-to-completion often reflect institutional accountability imperatives, while obscuring the diversity of student aspirations and pathways. Moreover, the deficit framing inherent in “at-risk” identification situates challenges within individual students, rather than interrogating the institutional structures and practices that generate barriers to achievement (Dhunpath & Vithal, 2012).

These perspectives inform our framework in three key ways. First, we foreground student voice by systematically polling learners to articulate their needs, rather than presuming institutional knowledge of their challenges. Second, we deliberately frame the enterprise in terms of student success rather than risk identification, thereby shifting emphasis toward enabling conditions for achievement rather than merely flagging vulnerability. Third, we distribute responsibility across the institution: when students encounter difficulties, the primary question becomes what institutional conditions require transformation, rather than what deficits reside within the students themselves.

Improvement Science in Educational Contexts

Improvement Science originated in healthcare quality improvement and has been increasingly applied to educational settings (Bryk et al., 2015; Lewis, 2015). The core methodology involves rapid iterative cycles of Plan-Do-Study-Act (PDSA): identify a problem, develop a theory of improvement, test changes on a small scale, study the results, and either adopt, adapt, or abandon the change before the next cycle.

Several features of Improvement Science are particularly relevant to student success work. First, it emphasises practical measurement by identifying indicators that can be tracked in real time to assess whether changes are actually improvements. Second, it acknowledges variation: what works in one context may not work in another, and understanding local conditions is essential. Third, it is explicitly iterative, building knowledge through accumulated cycles rather than seeking definitive answers from single studies.

Despite growing interest in Improvement Science for education, we identify a gap in its integration with automated advising systems. The framework we propose addresses this gap, creating automated feedback loops where engagement with advising outputs is tracked and incorporated into subsequent improvement cycles.
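A minimal sketch of automated "Study" and "Act" steps, assuming the system records whether each recipient engaged with an advising output and whether they later passed. The `CycleResult` fields, the `study_and_act` function, and the adopt/abandon thresholds are hypothetical illustrations, not the framework's actual decision rules. Because students choose whether to engage, the comparison is observational: in keeping with Improvement Science practice, its output is a prompt for the next cycle rather than a causal estimate.

```python
from dataclasses import dataclass


@dataclass
class CycleResult:
    """Tracked outcomes for one advising output in one PDSA cycle."""
    engaged_n: int             # recipients who opened or acted on the output
    engaged_passed: int
    not_engaged_n: int         # recipients who did not engage
    not_engaged_passed: int


def study_and_act(result, adopt_gap=0.10, abandon_gap=0.0):
    """'Study' and 'Act' steps of one PDSA cycle (thresholds are illustrative).

    Compares pass rates of engaged and non-engaged students. Engagement is
    not randomly assigned, so the gap is an association, not a causal effect.
    """
    if result.engaged_n == 0 or result.not_engaged_n == 0:
        raise ValueError("both groups need at least one student")
    engaged_rate = result.engaged_passed / result.engaged_n
    other_rate = result.not_engaged_passed / result.not_engaged_n
    gap = engaged_rate - other_rate
    if gap >= adopt_gap:
        decision = "adopt"      # keep the intervention as designed
    elif gap <= abandon_gap:
        decision = "abandon"    # no observed benefit: replace it next cycle
    else:
        decision = "adapt"      # some benefit: revise message or timing, retest
    return gap, decision


gap, decision = study_and_act(CycleResult(120, 96, 200, 130))
print(f"gap = {gap:.2f}, decision = {decision}")
```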

Theoretical Gap and Framework Positioning

The theoretical traditions reviewed above offer complementary insights but have developed largely in isolation. Integration theory explains persistence in terms of academic and social connection but offers limited guidance on how to create those connections at scale. Motivation theories illuminate psychological mechanisms but are typically operationalised through researcher-designed interventions rather than ongoing institutional practice. Learning analytics provides technical infrastructure but often lacks theoretical grounding in what students actually need. Critical perspectives offer important correctives but can struggle to translate critique into constructive alternatives. Our framework synthesises these traditions, positioning automated advising systems as infrastructure for delivering theoretically grounded, student-informed interventions at scale while maintaining human judgment at the centre of the advising relationship. The Improvement Science methodology provides the feedback architecture for continuous refinement.

Each theoretical tradition informs specific framework components and, crucially, serves as an evaluative lens for assessing whether specific design choices are likely to achieve their intended effects. Tinto’s emphasis on the classroom as the primary locus of integration motivates our prioritisation of the lecturer tier (Tier 3) and discipline-specific interventions over centralised support services. His phenomenological turn, emphasising subjective experience of care, shapes our commitment to personalisation that demonstrates specific knowledge of each student’s situation, and can be used to assess whether automated messages genuinely convey authentic care. The contributions of Museus, Harper, Bensimon, and Cabrera et al. reinforce the framework’s orientation toward institutional transformation rather than student deficit remediation. Expectancy-Value Theory informs the design of advising messages, which explicitly address expectancy beliefs (“you can succeed”) and utility value (“here is why this matters for your goals”), while also providing criteria for evaluating whether messages are likely to be differentially effective across student sub-groups. Self-Determination Theory’s distinction between controlling and autonomy-supportive interventions underlies our “advising the advisor” philosophy and provides a framework for evaluating whether automation enhances or undermines the quality of the advising relationship. Critical perspectives on deficit framing motivate our correlation-based needs assessment, which centres student voice in problem identification. Finally, Improvement Science provides the PDSA architecture that enables continuous refinement based on engagement evidence.

The Advising at Scale Framework

This section presents the framework in detail, elaborating each dimension of scale and the improvement science loop that integrates them.

Conceptual Overview: Two Dimensions of Scale

The framework is distinguished by its conceptualisation of “scale” as operating along two dimensions: a vertical dimension spanning institutional role-player hierarchies, and a horizontal dimension enabling personalised reach across large populations. Traditional approaches to scaling student support assume a single dimension: reaching more students requires more advisors. This assumption leads either to unsustainable resource demands or to diluted, generic support that fails to address individual needs. Our framework reconceptualises scale by distinguishing who is advised (vertical dimension) from how many are reached (horizontal dimension).

This two-dimensional conceptualisation is absent as an explicit construct from mainstream advising frameworks, which have tended to treat scale as a single-axis challenge of population reach. Organisational theory offers a complementary perspective: coordinating interventions across institutional tiers requires explicit vertical design because authority, information needs, and decision-making capacity differ qualitatively across levels, not merely quantitatively. Horizontal scale, by contrast, is a logistical challenge of reach and personalisation, solvable through data infrastructure rather than hierarchy. Conflating these two dimensions leads either to interventions that reach many students superficially (horizontal without vertical) or to interventions that engage senior role-players without filtering insights to those with direct student contact (vertical without horizontal). The framework addresses both simultaneously, and each dimension requires different design principles, different success metrics, and different implementation strategies.

The vertical dimension recognises that students are not the only, or even the primary, targets of effective advising. Those who work with students daily (lecturers, tutors, advisors) can amplify the impact of insights they receive across all students they encounter. Program coordinators can address structural issues affecting entire cohorts. Executives can direct resources toward programs requiring support. Advising the full role-player hierarchy creates multiplicative rather than merely additive effects.

The horizontal dimension addresses how personalised advising can reach large populations without proportional resource increase. The key mechanism is data injection: advising messages are generated through scripts that incorporate individual student data, producing personalised output for each recipient. The marginal cost of reaching an additional student is minimal once the advising logic is established. Crucially, this is not impersonal automation but rather human-designed advice delivered through automated channels.
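The data-injection mechanism can be sketched with Python's standard `string.Template`: an advisor writes the message once, and a script fills in each student's fields. The template wording, the `RESOURCE_LINES` mapping, the record fields, and `render_messages` are all hypothetical; this illustrates the general pattern rather than the deployed system's scripts.

```python
from string import Template

# Hypothetical advisor-authored template: the wording is written by a person;
# only the $placeholders are filled in from each student's record.
TEMPLATE = Template(
    "Dear $first_name,\n"
    "Your current average in $course is $average%. To reach $target% overall, "
    "you need about $required% on the remaining assessments. $resource_line"
)

# Hypothetical mapping from an identified need to a tailored suggestion.
RESOURCE_LINES = {
    "time_management": "The study planner can help you map out the weeks ahead.",
    "concept_gaps": "The practice questions for this course focus on the topics you found hardest.",
}


def render_messages(students):
    """Return {student_id: personalised message} for every student record."""
    messages = {}
    for s in students:
        resource_line = RESOURCE_LINES.get(s["need"], "")  # unknown need: omit the line
        text = TEMPLATE.substitute(
            first_name=s["first_name"],
            course=s["course"],
            average=s["average"],
            target=s["target"],
            required=s["required"],
            resource_line=resource_line,
        )
        messages[s["student_id"]] = text.rstrip()  # drop trailing space if no resource line
    return messages


students = [
    {"student_id": "s001", "first_name": "Thandi", "course": "MATH1001",
     "average": 48, "target": 50, "required": 53, "need": "concept_gaps"},
    {"student_id": "s002", "first_name": "Pieter", "course": "MATH1001",
     "average": 61, "target": 75, "required": 86, "need": "time_management"},
]
for student_id, message in render_messages(students).items():
    print(f"{student_id}:\n{message}\n")
```

Keeping the wording in a human-authored template and the specifics in the records is what allows the advice to remain human-designed while the cost of one more recipient stays close to zero.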

Dimension 1, Vertical Scale: The Role-Player Hierarchy

The vertical dimension spans five tiers, each with distinct information needs, decision contexts, and capacities for action.

Tier 1: Executive Level (Institution Scale)

Role-players at this tier include Vice-Chancellors, Deputy Vice-Chancellors, Executive Deans, and institutional planning units. Their decisions concern resource allocation, strategic priorities, and accountability to external stakeholders. They require information at program or faculty level that enables comparison across units and identification of where attention is most needed. Relevant metrics include minimum-time graduation rates (proportion completing in regulation time), dropout and stop-out rates, failure event frequencies, and time-to-completion distributions. The framework provides these metrics with appropriate contextualisation; absolute numbers mean little without an understanding of student intake profiles and historical trends.
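The Tier 1 indicators named above could be derived from cohort records along these lines. This is a sketch under assumptions: the record fields and `program_metrics` are hypothetical, each record is taken to be one student from a single entering cohort, and stop-out and the full time-to-completion distribution are omitted for brevity. As the paragraph notes, the resulting rates still need to be read against intake profiles and historical trends.

```python
from collections import defaultdict


def program_metrics(records):
    """Per-program Tier 1 indicators from one entering cohort's records.

    Each record is a dict with hypothetical fields:
      program, outcome ("graduated" | "dropped_out" | "continuing"),
      years_enrolled, regulation_years, failures (count of failed courses).
    """
    by_program = defaultdict(list)
    for record in records:
        by_program[record["program"]].append(record)

    metrics = {}
    for program, rows in by_program.items():
        n = len(rows)
        # Minimum-time graduation: graduated within the regulation period.
        min_time = sum(
            1 for r in rows
            if r["outcome"] == "graduated" and r["years_enrolled"] <= r["regulation_years"]
        )
        dropped = sum(1 for r in rows if r["outcome"] == "dropped_out")
        metrics[program] = {
            "cohort_size": n,
            "min_time_grad_rate": min_time / n,
            "dropout_rate": dropped / n,
            "failure_events": sum(r["failures"] for r in rows),
        }
    return metrics


cohort = [
    {"program": "BSc", "outcome": "graduated", "years_enrolled": 3, "regulation_years": 3, "failures": 0},
    {"program": "BSc", "outcome": "graduated", "years_enrolled": 4, "regulation_years": 3, "failures": 2},
    {"program": "BSc", "outcome": "dropped_out", "years_enrolled": 1, "regulation_years": 3, "failures": 3},
    {"program": "BSc", "outcome": "continuing", "years_enrolled": 4, "regulation_years": 3, "failures": 1},
    {"program": "BA", "outcome": "graduated", "years_enrolled": 3, "regulation_years": 3, "failures": 1},
    {"program": "BA", "outcome": "graduated", "years_enrolled": 3, "regulation_years": 3, "failures": 0},
]
for program, m in program_metrics(cohort).items():
    print(program, m)
```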

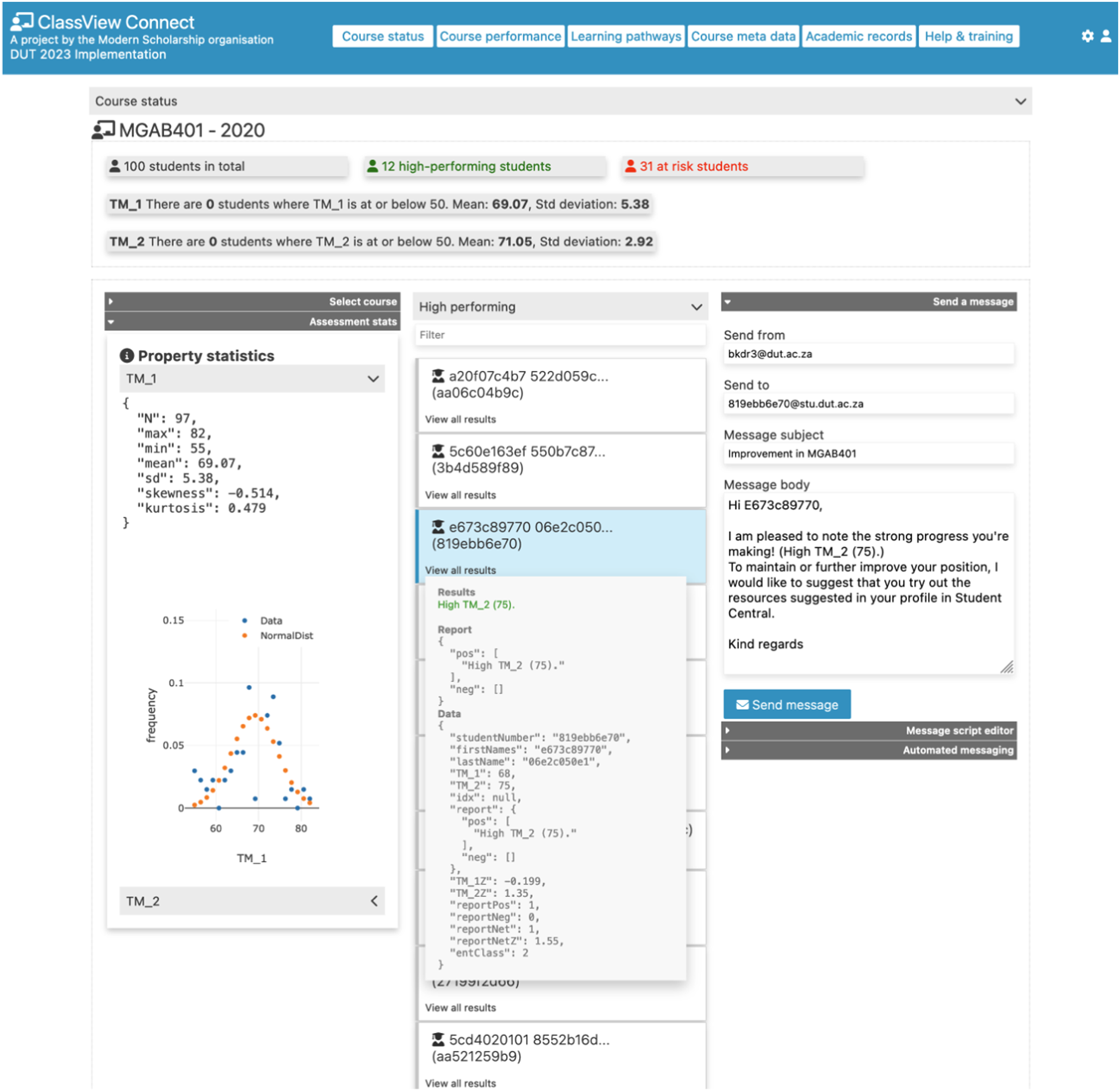

Advising outputs for this tier include identification of programs showing concerning trends, resource allocation recommendations, and progress indicators for strategic initiatives. Figure 1 illustrates the Executive Insight interface showing program-level performance metrics.

Figure 1: The Executive Insight interface displaying program-level performance metrics. Executives can sort programs by minimum-time graduation rate, dropout rate, or failure events, with automated commentary highlighting programs requiring attention.
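The sort-and-comment behaviour described for Figure 1 might work roughly as follows. The median-relative rule, the 10-point `margin`, the metric names, and `rank_and_comment` are illustrative assumptions, not the Executive Insight logic itself. The `higher_is_better` flag matters because a low graduation rate and a high dropout rate both signal concern.

```python
from statistics import median


def rank_and_comment(metrics, key, higher_is_better, margin=0.10):
    """Sort programs worst-first on one metric and comment on clear outliers.

    metrics: {program: {metric_name: value}}; margin is an illustrative
    threshold (in proportion points) relative to the median across programs.
    """
    mid = median([m[key] for m in metrics.values()])
    # Worst first: ascending when higher is better, descending otherwise.
    ranked = sorted(metrics.items(), key=lambda item: item[1][key],
                    reverse=not higher_is_better)
    comments = []
    for program, m in ranked:
        value = m[key]
        worse = value < mid - margin if higher_is_better else value > mid + margin
        if worse:
            comments.append(
                f"{program}: {key} is {value:.0%} against a median of {mid:.0%}; "
                "this program may warrant attention."
            )
    return ranked, comments


# Hypothetical program-level figures.
metrics = {
    "BSc A": {"min_time_grad_rate": 0.55, "dropout_rate": 0.15},
    "BSc B": {"min_time_grad_rate": 0.30, "dropout_rate": 0.35},
    "BA C": {"min_time_grad_rate": 0.50, "dropout_rate": 0.20},
}
ranked, comments = rank_and_comment(metrics, "dropout_rate", higher_is_better=False)
print([program for program, _ in ranked])
print(*comments, sep="\n")
```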

Tier 2: Program Management Level (Faculty/Discipline Scale)

Program coordinators, Heads of Department, and Faculty Teaching & Learning committees operate at this tier. They have direct responsibility for curriculum design, course sequencing, and program-level student support. Their decisions shape the structural conditions within which students and lecturers work.

Information needs centre on course-level performance patterns, progression bottlenecks, and curriculum pathway analysis. Which courses have unusual failure rates? Where do students get stuck in their progression? Are there courses that students repeatedly fail before eventually passing? The framework provides gatekeeper course identification (courses where failure disproportionately predicts dropout) and curriculum pathway analysis showing how students actually move through programs compared with prescribed sequences. Figure 2 illustrates the progression pathway visualisation.

Figure 2: Network visualisation of student progression pathways through a program. Nodes represent course combination states; edges show transitions between states. Node colour indicates outcome (green = graduation, red = dropout, blue = continuing). This view enables program managers to identify common pathways and bottleneck points.
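One plausible way to operationalise "courses where failure disproportionately predicts dropout" is to compare the dropout rate of students who ever failed a course with that of students who took it without failing. The sketch assumes attempt-level records and a dropout flag per student; the data, `gatekeeper_courses`, and the `min_failed` guard are hypothetical. The ratio is descriptive: a high value marks a course worth examining, not proof that the course causes dropout.

```python
from collections import defaultdict


def gatekeeper_courses(attempts, dropped_out, min_failed=5):
    """Rank courses by how strongly failing them is associated with dropout.

    attempts:    iterable of (student_id, course, passed) tuples, one per attempt.
    dropped_out: {student_id: True/False}.
    Returns (course, dropout rate if ever failed, dropout rate if never failed,
    ratio) tuples, highest ratio first.
    """
    took, failed = defaultdict(set), defaultdict(set)
    for student, course, passed in attempts:
        took[course].add(student)
        if not passed:
            failed[course].add(student)  # "ever failed", even if later passed

    rows = []
    for course, students in took.items():
        ever_failed = failed[course]
        never_failed = students - ever_failed
        if len(ever_failed) < min_failed or not never_failed:
            continue  # too few students in a group for a meaningful comparison
        rate_failed = sum(dropped_out[s] for s in ever_failed) / len(ever_failed)
        rate_passed = sum(dropped_out[s] for s in never_failed) / len(never_failed)
        if rate_passed:
            ratio = rate_failed / rate_passed
        else:
            ratio = float("inf") if rate_failed else 1.0  # no dropout among passers
        rows.append((course, rate_failed, rate_passed, ratio))
    return sorted(rows, key=lambda row: row[3], reverse=True)


attempts = [
    ("s1", "MATH", False), ("s2", "MATH", False), ("s3", "MATH", True),
    ("s4", "MATH", True), ("s5", "MATH", True), ("s6", "MATH", True),
    ("s1", "STATS", True), ("s2", "STATS", False), ("s3", "STATS", False),
    ("s4", "STATS", True), ("s5", "STATS", True), ("s6", "STATS", True),
]
dropped_out = {"s1": True, "s2": True, "s3": False, "s4": False, "s5": True, "s6": False}
for row in gatekeeper_courses(attempts, dropped_out, min_failed=2):
    print(row)
```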

Tier 3: Course/Module Level (Classroom Scale)

Lecturers, tutors, and course coordinators occupy this tier. They have direct, regular contact with students and the greatest capacity to shape students’ day-to-day academic experience. Following Tinto’s emphasis on the classroom as the primary locus of integration, this tier is crucial for student success yet often underserved by institutional data systems. Information needs include assessment performance distributions, LMS engagement patterns, and identification of students showing early warning signs within specific courses. Lecturers need to know not just class averages but which students are struggling and what patterns characterise their engagement. The framework provides class-level dashboards showing performance distributions, automated identification of at-risk students based on configurable criteria, and tools for personalised communication.
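Configurable identification criteria could be represented as simple rules that each carry a human-readable reason, so a lecturer sees why a student was flagged. The field names, thresholds, and `flag_students` are hypothetical defaults for illustration; the point of the sketch is that the criteria are data a course team can change, not fixed code.

```python
import operator

# Hypothetical default criteria; each course team would configure its own.
# Each rule: (field, comparison, threshold, reason shown to the lecturer).
DEFAULT_CRITERIA = [
    ("assessment_avg", operator.lt, 50, "assessment average below 50%"),
    ("days_since_lms_login", operator.gt, 14, "no LMS activity for more than 14 days"),
    ("missed_submissions", operator.ge, 2, "two or more missed submissions"),
]


def flag_students(students, criteria=DEFAULT_CRITERIA):
    """Return {student_id: [reasons]} for students meeting any criterion.

    Fields missing from a record are skipped rather than treated as a match.
    """
    flagged = {}
    for s in students:
        reasons = [
            reason
            for field, compare, threshold, reason in criteria
            if field in s and compare(s[field], threshold)
        ]
        if reasons:
            flagged[s["student_id"]] = reasons
    return flagged


students = [
    {"student_id": "a", "assessment_avg": 45, "days_since_lms_login": 3, "missed_submissions": 0},
    {"student_id": "b", "assessment_avg": 72, "days_since_lms_login": 20, "missed_submissions": 2},
    {"student_id": "c", "assessment_avg": 65, "days_since_lms_login": 1},
]
print(flag_students(students))
```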

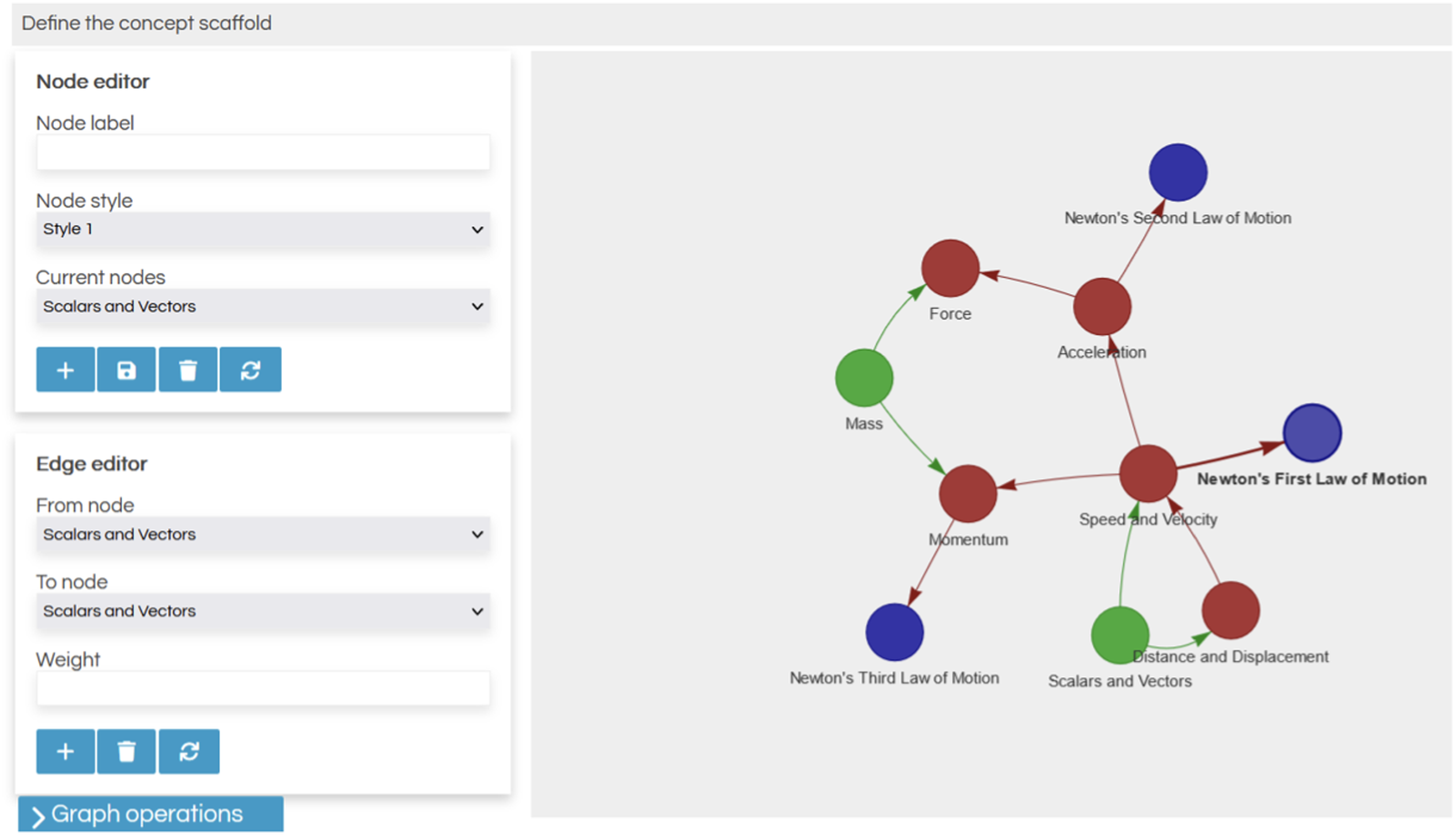

Critically, the framework also supports discipline-specific teaching and learning interventions through concept scaffolding. Course content is mapped as a graph of prerequisite relationships, enabling identification of foundational concepts that warrant additional attention and automated generation of practice questions targeting areas of weakness. Figure 3 shows the ClassView Connect interface for lecturers, and Figure 4 illustrates the student-facing learning interface that delivers these scaffolded interventions.

Figure 3: The ClassView Connect interface showing a lecturer’s view of course performance. The interface displays assessment statistics, distribution visualisations, student performance categories (high-performing, at-risk), and integrated messaging functionality for personalised outreach.

Figure 4: The concept scaffolding interface presenting students with structured learning pathways. Students progress through sequenced content (readings, videos, practice questions) with metacognitive prompts assessing their understanding. This discipline-specific intervention addresses foundational gaps within the teaching context rather than through generic support services.
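The prerequisite graph lends itself to a small sketch using Python's standard `graphlib`. The concept map, `foundational_ranking` (which counts how many concepts depend on each one, directly or indirectly), and `remediation_path` (which orders a student's unmastered prerequisites so foundations come first) are hypothetical illustrations of how such a graph could be used; practice-question generation is not shown.

```python
from graphlib import TopologicalSorter  # Python 3.9+

# Hypothetical concept map for an introductory calculus course:
# each concept maps to the set of concepts it directly builds on.
PREREQS = {
    "fractions": set(),
    "algebraic_manipulation": {"fractions"},
    "functions": {"algebraic_manipulation"},
    "limits": {"functions"},
    "derivatives": {"limits", "algebraic_manipulation"},
}


def all_prereqs(concept, prereqs):
    """Every direct and indirect prerequisite of a concept."""
    seen, stack = set(), list(prereqs.get(concept, ()))
    while stack:
        c = stack.pop()
        if c not in seen:
            seen.add(c)
            stack.extend(prereqs.get(c, ()))
    return seen


def foundational_ranking(prereqs):
    """Concepts ordered by how many others depend on them, most foundational first."""
    counts = {c: 0 for c in prereqs}
    for concept in prereqs:
        for p in all_prereqs(concept, prereqs):
            counts[p] = counts.get(p, 0) + 1
    return sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))


def remediation_path(weak_concept, mastered, prereqs):
    """Unmastered prerequisites of a weak concept (and the concept), foundations first."""
    needed = (all_prereqs(weak_concept, prereqs) | {weak_concept}) - set(mastered)
    subgraph = {c: prereqs.get(c, set()) & needed for c in needed}
    return list(TopologicalSorter(subgraph).static_order())


print(foundational_ranking(PREREQS))
print(remediation_path("derivatives", mastered={"fractions"}, prereqs=PREREQS))
```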

Tier 4: Support/Counselling Level (Cross-Cutting)

Student advisors, counsellors, academic development staff, and peer mentors work across programs and courses, providing individualised support to students who seek help or are referred. Their work requires holistic understanding of each student’s situation: academic, personal, and historical. Information needs include comprehensive student profiles aggregating academic records, advising history, and service utilisation (CAS, 2019). When a student presents for support, the advisor needs immediate access to relevant background: What courses are they taking? How are they performing? Have they engaged with support services before? What strategies have been tried?

The framework provides casefile management that maintains complete advising history across multiple advisors and service providers. Each interaction is logged, enabling continuity even as students move between services. Figure 5 illustrates the student progression advising view that counsellors access.

Figure 5: Counsellor view of individual student progression. The interface shows degree classification projections, required performance in remaining assessments to achieve target outcomes, and course-by-course breakdown enabling targeted advising conversations.
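A casefile shared across services can be modelled as a log of interactions that is only appended to and is read back per student in date order. The sketch assumes hypothetical `Interaction` fields and a hypothetical `Casefile` class; a real system would add access control and persistent storage, both omitted here.

```python
from dataclasses import dataclass, field
from datetime import datetime


@dataclass(frozen=True)
class Interaction:
    """One logged advising contact (field names are hypothetical)."""
    student_id: str
    when: datetime
    staff: str
    service: str            # e.g. "advising", "counselling", "peer mentoring"
    summary: str
    strategies: tuple = ()  # strategies suggested or tried in this contact


@dataclass
class Casefile:
    """Shared log across advisors and services; the class offers no edit or delete."""
    entries: list = field(default_factory=list)

    def log(self, interaction):
        self.entries.append(interaction)

    def history(self, student_id):
        """All contacts for one student, oldest first, whoever logged them."""
        return sorted(
            (e for e in self.entries if e.student_id == student_id),
            key=lambda e: e.when,
        )

    def strategies_tried(self, student_id):
        """Distinct strategies across the student's history, in first-tried order."""
        tried = []
        for e in self.history(student_id):
            for strategy in e.strategies:
                if strategy not in tried:
                    tried.append(strategy)
        return tried


casefile = Casefile()
casefile.log(Interaction("s001", datetime(2024, 5, 2), "Counsellor B", "counselling",
                         "Follow-up on exam anxiety", ("breathing exercises",)))
casefile.log(Interaction("s001", datetime(2024, 3, 14), "Advisor A", "advising",
                         "Reviewed MATH1001 progress", ("study planner", "tutorial attendance")))
casefile.log(Interaction("s002", datetime(2024, 4, 1), "Advisor A", "advising", "Course change query"))
for e in casefile.history("s001"):
    print(e.when.date(), e.service, e.staff, e.summary)
print(casefile.strategies_tried("s001"))
```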

Tier 5: Student Level (Individual Scale)

Students themselves constitute the final tier, not merely as recipients of institutional intervention but as active agents in their own success. Information asymmetry often leaves students uncertain about where they stand, what they need to achieve their goals, and what resources are available to support them. The framework gives students transparent visibility of their academic position: credit-weighted averages, degree classification projections, class standing, and specific targets for remaining assessments. Figure 6 shows the student-facing academic records interface.

Figure 6. Student Central interface displaying academic history across years, course results, assessment performance, and registration status. Students can access their records, study planners, and career planning tools through this unified interface.

Beyond academic records, the framework supports student self-regulation through study planning tools, career pathway exploration, and personalised resource recommendations based on identified needs.

Dimension 2: Horizontal Scale — Population Reach Through Automation

The vertical dimension establishes what each role-player needs; the horizontal dimension addresses how to deliver it at scale.

The Challenge of Scale in Resource-Constrained Contexts

Traditional one-on-one advising models encounter inherent scalability limitations. Suppose effective advising requires approximately 30 minutes per student per semester and an advisor can conduct 20 sessions per week. The theoretical maximum is then about 800 sessions annually (20 sessions across roughly 40 working weeks). With two semesters per year and one session per student per semester, this corresponds to a caseload of roughly 400 students. Even that figure assumes no administrative overhead, no follow-up, and no complex cases requiring extended engagement. In practice, actual capacity is considerably lower.
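The capacity estimate can be made explicit with a few lines of arithmetic. This is an illustrative sketch only. The default of 40 working weeks is implied by the figures in the text (800 ÷ 20 = 40) and is not a reported parameter. The default of two sessions per student per year follows from one session per semester in a two-semester year, which is also an assumption.

```python
def advising_capacity(minutes_per_session: int = 30,
                      sessions_per_week: int = 20,
                      working_weeks: int = 40,
                      sessions_per_student_per_year: int = 2) -> dict[str, float]:
    """Idealised upper bound for one advisor: no overhead, follow-up,
    or extended cases. All defaults are assumptions for illustration."""
    sessions_per_year = sessions_per_week * working_weeks
    return {
        # Direct contact time implied by the session count.
        "advising_hours_per_week": sessions_per_week * minutes_per_session / 60,
        "sessions_per_year": sessions_per_year,
        # Distinct students who can each receive their required sessions.
        "student_caseload": sessions_per_year / sessions_per_student_per_year,
    }
```

Under these assumptions, one advisor provides about 10 hours of contact per week and roughly 800 sessions a year. That is enough for about 400 students if each is seen once per semester. Setting `sessions_per_student_per_year=1` recovers a caseload of 800.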

Compounding this challenge, the students most in need of support are often the least likely to seek it. Help-seeking behaviour presupposes recognition of need, awareness of available resources, and confidence that engagement will be beneficial rather than stigmatising (Newman, 2002). Underperforming students may actively avoid advisor contact out of fear of judgment or shame regarding their struggles. Generic mass communication strategies fail to close this gap, as students frequently disregard messages perceived as impersonal or irrelevant. The central challenge, therefore, lies in delivering personalised and contextually meaningful advising to all students, including those who would not self-refer, while maintaining sustainable resource levels.

Personalisation Through Data Injection

The framework addresses this challenge through automated advising that generates personalised messages by injecting individual student data into message templates. Consider this exemplar message alerting students to academic difficulty: Dear [STUDENT_NAME], your current credit-weighted average of [CW_AVG]% places you at risk of not achieving the [TARGET_CLASS] degree classification you indicated as your goal. To reach [TARGET_CLASS], you would need to average [REQUIRED_AVG]% in your remaining [REMAINING_CREDITS] credits. I would encourage you to…
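The exemplar depends on two steps: calculating each student's requirement and injecting the values into a template. The paper does not give the calculation. A natural reading is that the required average R on the remaining credits satisfies (A·C_done + R·C_rem) / (C_done + C_rem) = T. Here A is the current credit-weighted average over C_done completed credits, T is the target average, and C_rem is the number of remaining credits. The sketch below assumes that formula and uses Python's standard `string.Template`. The dictionary keys, the student values, and the class label are hypothetical. The template also omits the exemplar's open-ended closing recommendation.

```python
from string import Template

# Hypothetical template mirroring the exemplar; $-placeholders stand in for
# the bracketed tokens ([STUDENT_NAME], [CW_AVG], ...).
AT_RISK_TEMPLATE = Template(
    "Dear $student_name, your current credit-weighted average of $cw_avg% "
    "places you at risk of not achieving the $target_class degree "
    "classification you indicated as your goal. To reach $target_class, "
    "you would need to average $required_avg% in your remaining "
    "$remaining_credits credits."
)


def required_average(cw_avg: float, completed_credits: float,
                     target_avg: float, remaining_credits: float) -> float:
    """Average needed on the remaining credits so that the overall
    credit-weighted average reaches target_avg (assumed formula)."""
    total = completed_credits + remaining_credits
    return (target_avg * total - cw_avg * completed_credits) / remaining_credits


def render_message(student: dict) -> str:
    """Inject one student's record into the template."""
    req = required_average(student["cw_avg"], student["completed_credits"],
                           student["target_avg"], student["remaining_credits"])
    return AT_RISK_TEMPLATE.substitute(
        student_name=student["name"],
        cw_avg=f"{student['cw_avg']:.1f}",
        target_class=student["target_class"],
        required_avg=f"{req:.1f}",
        remaining_credits=student["remaining_credits"],
    )
```

Consider a hypothetical student with a 62.0% average over 288 credits, a 65% target, and 96 credits remaining. That student would be told they need 74.0%. A result above 100% signals an unreachable target, which the conditional logic described next would need to handle differently.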

The same underlying logic produces different messages for each student, incorporating their name, actual performance, stated goals, and calculated requirements. From the student’s perspective, this is personalised communication demonstrating specific knowledge of their situation. From the institutional perspective, this is scalable: generating 10,000 personalised messages requires no more effort than generating 10, once the template logic is established.

Personalisation extends beyond simple variable substitution. The framework can incorporate conditional logic (different messages for different situations), reference specific courses where the student is struggling, and recommend resources aligned with identified needs. The automated messages can be less intimidating for struggling students precisely because they demonstrate concrete knowledge: the system knows their actual marks, not just generic concern.
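Conditional logic of this kind can be sketched as a small decision function that chooses a message variant. Course-specific pointers and resource recommendations are then appended to it. The variant wording, the 10-point gap threshold, and the course code used in the usage note are all hypothetical. The sketch illustrates the idea of situation-dependent templates and is not the framework's actual rules.

```python
VARIANTS = {
    "on_track": "You are currently on track for your stated goal.",
    "within_reach": "Your goal remains within reach with a modest improvement.",
    "at_risk": "Reaching your goal will require a substantial improvement.",
    "unreachable": ("Your stated goal may no longer be attainable, "
                    "so it may help to discuss your options."),
}


def select_variant(cw_avg: float, target_avg: float, required_avg: float,
                   gap_threshold: float = 10.0) -> str:
    """Pick a message variant; the gap threshold is a hypothetical choice."""
    if cw_avg >= target_avg:
        return "on_track"
    if required_avg > 100:
        return "unreachable"
    if required_avg - cw_avg > gap_threshold:
        return "at_risk"
    return "within_reach"


def compose(cw_avg: float, target_avg: float, required_avg: float,
            weak_courses: list[str], resources: dict[str, str]) -> str:
    """Combine the variant text with course-specific pointers and resources."""
    parts = [VARIANTS[select_variant(cw_avg, target_avg, required_avg)]]
    for course in weak_courses:
        parts.append(f"Your recent marks in {course} suggest it needs attention.")
        if course in resources:
            parts.append(f"You may find {resources[course]} helpful.")
    return " ".join(parts)
```

For example, `compose(62, 65, 74, ["CHEM1001"], {"CHEM1001": "the stoichiometry workshop"})` selects the `at_risk` variant, because the required average is 12 points above the current one. It then adds a pointer to the hypothetical course and its linked resource.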

The “Advising the Advisor” Philosophy

Automation in the framework is positioned as augmentation rather than replacement of human judgment. We conceptualise this as “advising the advisor,” where technology handles routine information brokering, freeing human advisors to focus on complex cases requiring empathy, contextual understanding, and professional judgment.

Consider a lecturer seeking to support struggling students. Without the framework, they must manually examine gradebooks, identify concerning patterns, compose individual messages, and track responses, all time-consuming work that competes with teaching, research, and administrative responsibilities. With the framework, at-risk students are automatically identified, suggested message templates are provided, and the lecturer’s role shifts to reviewing recommendations, adding personal touches, and engaging directly with students who need more than automated outreach can provide.
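The paper does not specify how at-risk students are identified. The simplest rule consistent with "examining gradebooks" and "identifying concerning patterns" combines a mark threshold with missing submissions. The sketch below assumes exactly that. The 50% threshold and the use of `None` for a missing submission are hypothetical choices, and a deployed system would likely use richer signals.

```python
from typing import Optional


def flag_at_risk(gradebook: dict[str, list[Optional[float]]],
                 threshold: float = 50.0) -> list[str]:
    """Return students whose mean mark on submitted work is below
    `threshold`, or who have at least one missing submission (None).
    Both criteria are illustrative assumptions."""
    flagged = []
    for student, marks in gradebook.items():
        submitted = [m for m in marks if m is not None]
        has_missing = len(submitted) < len(marks)
        below = bool(submitted) and sum(submitted) / len(submitted) < threshold
        if has_missing or below:
            flagged.append(student)
    return sorted(flagged)
```

The flagged list would then feed the suggested-template step. In that step the lecturer reviews the recommendations instead of assembling them by hand.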

This philosophy addresses a common concern about educational technology: that automation will depersonalise education and eliminate the human relationships that matter for student success. Our framework takes the opposite position: by automating routine information processing, we create space for more meaningful human interaction, not less. Evaluated through the SDT lens articulated in Section 2, this design choice is explicitly intended to preserve the autonomy-supportive character of advising. Technology operates to reduce administrative burden, not to substitute for the relational dimension of the advising encounter.

Multi-Channel Delivery

The framework delivers advising through multiple channels appropriate to different contexts: embedded prompts within learning management systems, email communications for formal advising, dashboard interfaces for self-service exploration, and API access enabling integration with institutional systems. The technical architecture follows API-first principles, enabling institutions to customise delivery channels to their specific contexts and existing infrastructure.
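An API-first, multi-channel design can be sketched as a common channel interface plus a dispatcher. The dispatcher delivers each message through the first channel available in a student's preference order. The `Channel` protocol, the `RecordingChannel` stand-in, and the channel names are hypothetical. A real deployment would wrap the institution's own email, LMS, and messaging services behind such an interface.

```python
from typing import Protocol


class Channel(Protocol):
    """Minimal interface every delivery channel is assumed to expose."""
    name: str

    def send(self, student_id: str, message: str) -> None: ...


class RecordingChannel:
    """Stand-in channel that records messages instead of sending them."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.sent: list[tuple[str, str]] = []

    def send(self, student_id: str, message: str) -> None:
        self.sent.append((student_id, message))


def dispatch(message: str, student_id: str, preferences: list[str],
             channels: dict[str, Channel]) -> str:
    """Deliver via the first configured channel in preference order and
    return its name; raise if none of the preferred channels exists."""
    for pref in preferences:
        channel = channels.get(pref)
        if channel is not None:
            channel.send(student_id, message)
            return channel.name
    raise LookupError(f"no configured channel for {student_id}")
```

Keeping delivery behind one interface allows institutions to add or remove channels without changing message generation. For example, an SMS or printed-letter channel could serve students without reliable internet access.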

The Improvement Science Loop: Closing the Feedback Cycle

The two dimensions of scale establish the architecture of advising; the Improvement Science loop provides the methodology for continuous refinement.

Integration With PDSA Methodology

The framework operationalises Plan-Do-Study-Act cycles as follows:

Plan: Each implementation begins with metric definition at each institutional scale and identification of priority areas through data analysis. What indicators will we track? What does the data suggest needs attention? What theoretically grounded interventions might address identified needs? Critically, problem identification incorporates student voice through systematic polling. Students are surveyed across multiple domains (teaching and learning quality, financial circumstances, home environment, motivation, support service awareness, technology access, time management, and sense of belonging), and their responses are correlated with academic performance. This correlation-based needs assessment identifies which factors are most strongly associated with success in specific program contexts. Intervention selection can therefore rest on evidence rather than on assumptions about what students need.

Do: Selected interventions are deployed through the framework’s automated and human-mediated channels. Automated messages are sent, support services are triggered, resources are made available, and lecturers are prompted to engage with identified students.

Study: The framework tracks engagement with deployed interventions. Did students open advising messages? Did they access recommended resources? Did triggered referrals result in service utilisation? These engagement metrics are correlated with subsequent outcomes, providing estimates of intervention effectiveness. Importantly, we do not present this as rigorous causal inference. Students who choose to engage may differ systematically from those who do not, and observed associations may therefore reflect selection effects rather than the direct impact of interventions. Nevertheless, engagement tracking offers a valuable signal for continuous improvement, even in the absence of clean causal identification. Interventions that fail to capture student attention cannot be effective, irrespective of their other merits; systematic tracking enables the timely detection of such shortcomings.
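The simplest version of correlating engagement with outcomes compares mean outcomes between students who engaged with an intervention and those who did not. The sketch below computes this using Python's `statistics.mean`. The record layout (an "opened message" flag paired with a later mark) is an assumption. The resulting gap is a descriptive association and is subject to exactly the selection effects noted above.

```python
from statistics import mean


def engagement_gap(records: list[tuple[bool, float]]) -> dict[str, float]:
    """Compare later outcomes for engaged vs non-engaged students.

    Each record is (engaged, outcome). The gap is descriptive only:
    students self-select into engagement, so it may reflect selection
    effects rather than the impact of the intervention."""
    engaged = [score for opened, score in records if opened]
    not_engaged = [score for opened, score in records if not opened]
    if not engaged or not not_engaged:
        raise ValueError("need at least one student in each group")
    return {
        "engagement_rate": len(engaged) / len(records),
        "mean_engaged": mean(engaged),
        "mean_not_engaged": mean(not_engaged),
        "gap": mean(engaged) - mean(not_engaged),
    }
```

A low `engagement_rate` is the most actionable signal here: it shows directly that an intervention is not reaching students, whatever the outcome gap.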

Act: Based on study findings, interventions are refined, metrics are revised if needed, and successful approaches are scaled while ineffective ones are discontinued. The cycle then repeats, building cumulative knowledge about what works in specific institutional contexts.

Continuous vs. Episodic Evaluation

Traditional institutional research typically operates on annual or semester cycles, yielding retrospective accounts of student cohort outcomes. This delayed temporal structure constrains responsiveness: by the time challenges are identified, the affected students may already have disengaged or departed. In contrast, the framework enables continuous monitoring with substantially shorter feedback loops. Early-semester engagement patterns can inform timely, mid-semester interventions, while assessment results can prompt immediate outreach. This transition from retrospective reporting to prospective, actionable intelligence marks a fundamental reconfiguration of how institutions engage with student success data.

Program-Level Specificity: A Deliberate Departure

The framework explicitly targets program-level implementation rather than institution-wide generalisation. This represents a deliberate departure from conventional IR practice and warrants explicit justification.

The Case Against Institution-wide Aggregation

Institution-wide metrics serve important accountability functions but offer limited guidance for action. Knowing that 65% of students graduate within six years tells us something about institutional performance but nothing about what to do differently. The factors influencing success vary dramatically across disciplines, programs, and student populations. Aggregate trends obscure these variations, potentially leading to generic interventions that address no one's actual needs. Moreover, accountability for student success is diffuse at the institutional level but concentrated at the program level. The lecturer teaching first-year chemistry can do something about first-year chemistry outcomes; she cannot directly address institution-wide graduation rates. Actionable insights must be delivered to actors with the capacity to act.

The Case for Program-Level Implementation

Program-level implementation ensures that insight is closely aligned with the capacity for action. Staff operating at this level maintain direct contact with students and are therefore well positioned to address identified needs through curriculum adjustments, pedagogical modifications, and targeted forms of support. Because analysis occurs within a relatively homogeneous context, program-specific factors can be more readily discerned. Correlation-based needs assessments thus generate findings tailored to particular disciplines: what proves critical for engineering students may differ markedly from the priorities of education students. This approach enables interventions that are discipline-sensitive and pedagogically integrated, in ways that generic support services cannot achieve. For example, if students within a given program encounter difficulties with foundational mathematics, the appropriate response is mathematics-focused support embedded within program teaching, rather than reliance on generic study skills workshops.

The Correlation-Based Needs Assessment

The needs assessment methodology warrants detailed description. Students are polled across multiple domains using validated instruments where available and locally developed items where needed. Domains include:
• Teaching and learning quality: Perceived course organisation, instructor clarity, assessment fairness, feedback quality
• Financial circumstances: Degree of financial stress, impact on academic engagement
• Home environment: Study space availability, family responsibilities, commute burden
• Motivation: Intrinsic interest, goal clarity, self-efficacy
• Support services: Awareness, utilisation, perceived helpfulness
• Technology access: Device availability, connectivity reliability, digital literacy
• Time management: Self-regulation capacity, competing demands
• Belonging: Social integration, sense of institutional fit

Students are invited, but not required, to provide their student numbers, enabling linkage with academic records. Typically around 75% of respondents do so, which yields sufficient linked data for meaningful analysis. Responses are then correlated with academic performance outcomes: typically the credit-weighted average or, where cohorts share common courses, performance in those focal courses. Rank-order correlation coefficients identify which factors are most strongly associated with success. These rankings inform intervention selection: limited resources should target the factors with the strongest demonstrated association with outcomes rather than factors assumed to matter.
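The rank-order step can be illustrated with Spearman's rho, computed as the Pearson correlation of rank vectors in which tied values receive the mean of their ranks. The sketch assumes that linkage has already been done. Each domain's scores and the outcome list must therefore be aligned by student, covering only those who supplied a student number. The domain names in the usage note are hypothetical, and the framework may use a library implementation (for example `scipy.stats.spearmanr`) instead of this hand-written one.

```python
def average_ranks(values: list[float]) -> list[float]:
    """Rank values 1..n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1  # extend the run of tied values
        avg = (i + j) / 2 + 1  # mean of 1-based positions i..j
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def spearman(x: list[float], y: list[float]) -> float:
    """Spearman's rho as the Pearson correlation of tie-averaged ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sum((a - mx) ** 2 for a in rx) ** 0.5
    sy = sum((b - my) ** 2 for b in ry) ** 0.5
    if sx == 0 or sy == 0:
        return 0.0  # rho is undefined without variation; treated as no association
    return cov / (sx * sy)


def rank_domains(responses: dict[str, list[float]],
                 outcomes: list[float]) -> list[tuple[str, float]]:
    """Order survey domains by strength of rank association with outcomes."""
    rhos = {d: spearman(scores, outcomes) for d, scores in responses.items()}
    return sorted(rhos.items(), key=lambda kv: abs(kv[1]), reverse=True)
```

Sorting by absolute value keeps strong negative associations near the top. A high financial-stress score that tracks low marks is as informative for intervention selection as a positive association.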

Theoretical Contributions

The framework makes several contributions to student success scholarship.

Contribution to Student Success Literature

First, we offer an explicit theorisation of two-dimensional advising scale that extends existing advising frameworks. Models such as the CAS Standards for Academic Advising Programs and proactive advising frameworks (Rust & Willey, 2025) have implicitly operated across both institutional tiers and student populations. However, they have not distinguished horizontal scale (population reach) from vertical scale (role-player hierarchy) as conceptually distinct dimensions requiring fundamentally different mechanisms and design choices. By making this distinction explicit and theoretically grounded, the framework enables more precise diagnostic thinking about where advising capacity is constrained and what type of solution is most appropriate.

Second, the framework bridges macro-level retention theories and micro-level intervention practices. Tinto’s work explains why integration matters for persistence but offers limited guidance on how institutions might foster integration at scale. Motivation theories illuminate psychological mechanisms but are typically operationalised in researcher-controlled studies rather than ongoing institutional practice. Our framework translates these theoretical insights into implementable institutional architecture, with each theoretical tradition serving both a generative function (motivating design choices) and an evaluative function (providing criteria for assessing likely effectiveness).

Third, by foregrounding student voice through systematic needs assessment, the framework operationalises the phenomenological turn in retention research. Rather than assuming institutional knowledge of student needs, it creates mechanisms for students to identify their own challenges and for that identification to shape intervention selection. This approach is consistent with the equity-oriented critiques of student integration theory (Tinto, 1975, 1993) advanced by Museus (2014), Harper (2012), and Bensimon (2005). These critiques call for institutions to centre student experience rather than impose institutional assumptions about what students lack.

Contribution to Learning Analytics (LA) Discourse

The framework extends learning analytics beyond student-facing applications to encompass the full institutional role-player hierarchy. Most LA implementations focus on dashboards for students or early warning systems that trigger support referrals. This framework positions analytics as infrastructure serving all institutional actors, each receiving role-appropriate insights for their specific decision contexts. The integration of engagement tracking with outcome analysis creates closed-loop systems rarely seen in LA implementations. Rather than deploying dashboards and hoping they help, the framework provides mechanisms to evaluate whether and how analytics-informed advising affects outcomes. This contributes to addressing the “so what?” question that plagues much learning analytics work.

Contribution to Improvement Science in Higher Education

We contribute a novel integration of Improvement Science with automated advising systems. PDSA methodology has been applied in educational contexts, but applications typically involve human-intensive improvement teams working through deliberate cycles. The framework automates aspects of the improvement loop (engagement tracking, outcome correlation, and feedback generation), enabling more rapid iteration than purely human-driven approaches allow. The framework also demonstrates the application of Improvement Science across multiple institutional scales simultaneously. Rather than improvement projects targeting single courses or programs, the architecture enables coordinated improvement across the full role-player hierarchy.

Reframing Scale: Beyond “More Students”

Perhaps the most fundamental contribution is reconceptualising what “scale” means in student success work. The conventional framing assumes scale equals reach: more students served. This framework suggests a richer conception: scale also involves spanning institutional levels (vertical dimension) and creating sustainable mechanisms for personalisation (horizontal dimension through data injection). The reconceptualisation opens new possibilities for resource-constrained institutions. Rather than viewing scale as requiring proportional resource increase, institutions can consider how technology infrastructure enables reaching more students and more role-players without linear growth in advising staff.

Practical Implications

Having described the framework’s architecture in Section 3, we now consider what it means for different institutional actors. While Section 3 detailed what the framework provides, this section examines how stakeholders might use these capabilities to transform their practice.

Implications for Institutional Leadership

For executive leadership, the framework offers a shift from retrospective accountability reporting to prospective, actionable intelligence. Rather than learning at year-end that graduation rates declined, executives can monitor leading indicators in real time and direct attention to emerging concerns before they become entrenched problems. The program-level focus provides natural units for resource allocation decisions. Executive dashboards can identify programs requiring support without homogenising diverse contexts into misleading aggregates. This enables more targeted investment than institution-wide initiatives allow.

Implications for Program Management

Program coordinators gain tools for evidence-informed curriculum development. Gatekeeper course identification highlights where structural interventions may be needed. Progression pathway analysis reveals how students actually navigate programs versus prescribed sequences, potentially identifying problematic prerequisite structures or course scheduling conflicts. The correlation-based needs assessment provides program-specific evidence of what students need, enabling resource allocation aligned with demonstrated priorities rather than generic assumptions. This evidence base supports cases for additional resources where needed and accountability for effective use of existing resources.

Implications for Teaching Staff

Lecturers receive actionable intelligence about their own students without requiring manual data analysis. Automated identification of at-risk students, combined with suggested messaging templates, can substantially reduce the effort required to implement proactive outreach. The shift from reactive engagement (waiting for students to seek help) to proactive engagement (reaching out before problems become crises) can transform the advising relationship. Concept scaffolding tools support discipline-specific teaching interventions. Rather than referring struggling students to generic support services, lecturers can address foundational gaps within their own teaching, maintaining disciplinary coherence and pedagogical authority.

Implications for Student Support Services

Student advisors gain comprehensive casefile management that maintains continuity across multiple service providers and extended time periods. When a student presents for support, the advisor has immediate access to relevant history rather than starting from scratch each time. Triggered referrals create warm handoffs, because the support service knows why the student is coming and what concerns prompted the referral. This enables more efficient initial interactions and demonstrates institutional coordination rather than fragmented services. Engagement tracking provides evidence of intervention effectiveness, supporting continuous improvement of advising practice and accountability for resources invested in support services.

Implications for Students

Students gain transparency about their academic standing that is often surprisingly absent. Many students lack clear understanding of how they are performing relative to requirements or what they would need to achieve to reach their goals. The framework provides this information directly, supporting student agency and self-regulation. Proactive outreach ensures that students receive support offers regardless of whether they would have sought help themselves. For students who avoid advisors due to stigma, shame, or simple unawareness, automated personalised messages can bridge the gap. The personalisation, demonstrating specific knowledge of the student’s situation, signals authentic institutional care rather than generic mass communication.

Implementation Considerations

Successful implementation requires more than technology deployment. Several considerations shape likely success.

Technical infrastructure: the framework requires integration with student information systems, learning management systems, and communication platforms. API-based architecture enables flexibility but requires technical capacity to implement and maintain.

Data quality: advising is only as good as the underlying data. Incomplete records, delayed grade entry, or inconsistent identifier use undermine the framework's value. Data governance practices must ensure timely, accurate, and complete information.

Staff capacity building: technology provides tools; people must use them. Staff development in data literacy, interpretation of analytics outputs, and effective use of advising tools is essential. Without such development, sophisticated systems may sit unused.

Organisational culture: the framework implies shifts in how institutions relate to student success data: from accountability reporting to actionable intelligence, from executive focus to distributed insight, and from episodic to continuous evaluation. These shifts require cultural change alongside technical implementation.

Phased implementation: given these complexities, phased approaches are advisable. Pilot implementations in willing programs can demonstrate value, refine processes, and build institutional knowledge before broader rollout.

Limitations and Critical Considerations

The framework has important limitations and raises critical concerns that warrant honest acknowledgment.

Correlation vs. Causation

The correlation-based needs assessment identifies associations between student-reported factors and academic performance, but such associations do not constitute evidence of causation. Correlated factors may represent symptoms rather than drivers of success: for instance, students who report high motivation may excel not because motivation itself directly causes achievement, but because underlying conditions foster both motivation and performance. This limitation underscores the need for humility in selecting interventions. Correlation offers a valuable signal, yet it cannot provide definitive guidance. Effective intervention design requires the integration of theoretical reasoning with empirical patterns, followed by rigorous evaluation to determine whether the interventions genuinely produce the intended outcomes.

Ethical Considerations

The framework inevitably raises significant ethical considerations that cannot be overlooked (Slade & Prinsloo, 2013). Intensive forms of student monitoring risk sliding from supportive engagement into surveillance, fostering environments in which students feel observed rather than genuinely cared for. The boundary between constructive nudging and manipulative control is often ambiguous, underscoring the importance of safeguarding student consent and agency. Learners must be fully informed about what data is collected, how it is utilised, and the choices available to them regarding participation. Opt-out provisions should constitute authentic alternatives, not nominal options that carry implicit penalties. Furthermore, algorithmic systems may perpetuate or even exacerbate existing inequities: when historical data reflects biased practices or structural inequalities, models trained on such data risk reproducing injustice rather than correcting it. Equity audits are therefore essential to evaluate whether automated advising generates differential impacts across student populations and to ensure that technological interventions advance, rather than undermine, fairness.
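A first-pass equity audit might compare how often automated advising flags or messages students in different groups, summarised as the ratio of the highest to the lowest group rate. The sketch below assumes records of (group, was_flagged) pairs; the group labels are hypothetical. Unequal flag rates do not by themselves demonstrate bias, because they may reflect genuine differences in need. A fuller audit would also compare outcomes after flagging, for example by applying the engagement and outcome comparisons separately within each group.

```python
from collections import Counter


def flag_rates_by_group(records: list[tuple[str, bool]]) -> dict[str, float]:
    """Share of students in each group whom the system flagged."""
    totals: Counter = Counter()
    flagged: Counter = Counter()
    for group, was_flagged in records:
        totals[group] += 1
        flagged[group] += int(was_flagged)
    return {g: flagged[g] / totals[g] for g in totals}


def max_rate_ratio(rates: dict[str, float]) -> float:
    """Highest group rate divided by lowest; 1.0 indicates parity."""
    lo, hi = min(rates.values()), max(rates.values())
    if hi == 0:
        return 1.0  # no group flagged at all: trivially equal
    return float("inf") if lo == 0 else hi / lo
```

Tracking this ratio across PDSA cycles would show whether refinements to risk identification narrow or widen differences between groups.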

Staff implications also warrant consideration. If technology automates aspects of advising work, what happens to advising staff? The framework positions automation as augmentation, but implementation choices could instead pursue replacement, with consequences for employment and for the human relationships that matter for student success.

Finally, the framework’s reliance on digital channels raises equity concerns related to the digital divide. Students without reliable internet access, adequate devices, or strong digital literacy may be disadvantaged by interventions delivered primarily through technological platforms. In contexts where technology access is unevenly distributed, often correlating with existing socioeconomic disparities, the framework risks benefiting already-advantaged students while underserving those facing the greatest barriers. Implementation must consider multi-channel delivery that does not assume universal digital access.

Contextual Specificity and Transferability

The framework was developed and implemented within a specific institutional context: a South African research university with particular characteristics, student populations, and resource configurations. While we have articulated the framework in generalised terms, its effectiveness in our context does not guarantee transferability to others. Institutions with different technological infrastructures, data governance maturity, staff cultures, or student demographics may find that significant adaptation is required. We have outlined implementation considerations in Section 5, but these represent necessary rather than sufficient conditions for success. Institutions considering adoption should expect iterative refinement rather than simple replication.

Institutional Looseness and Coordination Challenges

The framework assumes a degree of institutional coherence that does not always characterise academic organisations. Universities frequently operate as loosely coupled systems (Weick, 1976), wherein faculties, departments, and student support units maintain substantial operational autonomy. Coordinating multi-tier advising across these units requires not only technical integration but sustained inter-unit political negotiation, which implementation experience suggests is often the primary constraint rather than any technical or conceptual barrier. The program-level focus of the framework mitigates this challenge to some degree: program staff are more tightly coupled than institution-wide actors, but the vertical dimension remains aspirational in contexts where executives and lecturers inhabit largely separate spheres of influence and accountability. Prospective implementations would therefore benefit from conducting an institutional coupling audit prior to designing the vertical tier structure, identifying which tiers are accessible and which would require dedicated change management to activate.

Effectiveness Evidence

We have presented a framework grounded in theory and implemented in practice, but rigorous outcome evidence remains limited. The Improvement Science orientation emphasises continuous refinement based on local evidence rather than definitive proof from controlled trials. This is both strength (enabling contextual adaptation) and limitation (lacking the certainty that randomised evaluation might provide). Claims about framework effectiveness must therefore be appropriately hedged. We offer a promising approach supported by theoretical reasoning and preliminary implementation experience, not a proven intervention with established effect sizes.

Future Research Directions

The framework opens several directions for future research.

Empirical Validation Studies

Rigorous evaluation of framework effectiveness is a priority. Quasi-experimental designs comparing outcomes before and after implementation, or across implementing and non-implementing programs, could provide stronger evidence than currently available. Longitudinal tracking through full degree trajectories would enable assessment of completion effects, not just intermediate outcomes. Multi-site studies would assess generalisability across institutional types (teaching-focused vs. research-intensive, well-resourced vs. constrained) and disciplinary contexts.

Equity-Focused Research

Investigation of differential effects across student populations is essential. Do all students benefit equally from automated advising, or do some populations benefit more (or less) than others? Algorithmic audit studies should examine whether risk identification or intervention triggering operates equitably or reproduces existing disparities.

Qualitative research on student experience of automated advising would illuminate how students perceive and respond to data-driven outreach. Is it experienced as caring or creepy? Helpful or intrusive? Such research should centre voices of students from historically marginalised groups whose perspectives may differ from majority populations.

Implementation Research

Understanding barriers and enablers of successful implementation would inform broader adoption, including identifying which institutional conditions, change management approaches, and data governance practices support effective use. Comparative analysis across implementations could identify more and less effective strategies, enabling future adopters to learn from accumulated experience. Particular attention should be given to the loosely coupled character of academic organisations (Weick, 1976), which poses distinctive coordination challenges that merit dedicated investigation in future implementation studies.

Theoretical Extensions

The framework could be enriched through integration with additional theoretical perspectives. Nudge theory (Thaler & Sunstein, 2008) provides a conceptual vocabulary for understanding how choice architecture shapes student behaviour. Gamification theory (Deterding et al., 2011) addresses the badges, leaderboards, and achievement systems the framework incorporates. Community of Practice theory (Wenger, 1998) might illuminate how the multi-tier system creates interconnected communities with shared concern for student success. Extension to additional contexts (postgraduate education, professional programs, and online and hybrid learning) could test the framework's scope. It could also identify the adaptations needed in contexts that differ from the undergraduate residential model implicit in much student success research.

Concluding Observations

Advancing student success in contemporary higher education requires a decisive departure from approaches that have consistently failed to resolve persistent challenges. Traditional advising models premised on linear scaling, in which increased student numbers necessitate proportionally more advisors, prove unsustainable in resource-constrained environments. Likewise, institutional research that produces aggregate, retrospective reports for executive leadership offers limited utility, as it rarely translates into actionable guidance for those positioned to intervene directly. Generic support services that rely on student self-referral are similarly inadequate, overlooking precisely those learners who most require assistance yet are least likely to seek it. Addressing these limitations demands frameworks that build responsiveness, personalisation, and institutional accountability into the very fabric of student success strategies.

The Advising at Scale framework proposed in this paper offers an alternative architecture. It reconceptualises scale as operating along two dimensions, vertical (spanning institutional role-player hierarchies) and horizontal (enabling personalised reach through automation), and in doing so creates possibilities for extending advising capacity without a proportional increase in resources. By integrating Improvement Science methodology, it establishes feedback loops that enable continuous refinement based on evidence of effectiveness. By foregrounding student voice through correlation-based needs assessment, it ensures that interventions address what students actually need rather than institutional assumptions about their deficits. Crucially, the theoretical traditions informing the framework (Student Integration Theory as extended by Museus, Harper, Bensimon, and Cabrera et al.; Expectancy-Value Theory; and Self-Determination Theory) function not merely as design rationales but as evaluative lenses through which the framework’s effectiveness and equity implications can be continuously assessed.

The framework does not promise easy solutions to difficult problems. Implementation requires technical infrastructure, staff development, organisational culture change, and sustained attention over time. The loosely coupled character of academic institutions poses real coordination challenges that technical design alone cannot resolve. Ethical considerations demand ongoing vigilance. Effectiveness claims must be tested through rigorous evaluation. But for institutions genuinely committed to student success, and willing to invest in the systems and practices that this commitment requires, the framework offers a coherent approach grounded in theory, enabled by technology, and oriented toward the continuous improvement that complex challenges demand. We invite scholarly engagement with these ideas, collaborative refinement through implementation in diverse contexts, and the shared pursuit of educational environments in which all students have the opportunity to succeed.

Footnotes

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Declaration of Generative AI and AI-Assisted Technologies in the Writing Process

During the preparation of this article, the authors made use of generative AI and AI-assisted technologies as follows:
• Claude AI was employed to support the identification of sources for the literature review.
• Manus AI was utilized to enhance the readability of the article and assist with language editing.

Following the use of these tools, the authors carefully reviewed, revised, and edited all content as required. The authors accept full responsibility for the accuracy, integrity, and originality of the final manuscript.