Abstract

Study Design

Literature review.

Objective

The Transparent Reporting of a multivariable prediction model for Individual Prognosis or Diagnosis (TRIPOD) statement was developed to improve the transparency of reporting of predictive models, which is a prerequisite for judging their generalizability. This study systematically evaluated the quality of predictive models related to spine procedures and assessed their compliance with the TRIPOD guidelines.

Methods

A systematic search was conducted on PubMed to identify original research articles published between January 1st, 2018, and February 1st, 2023, that reported prediction models in the top six spine journals ranked by Scimago Journal Ranking (SJR): Journal of Bone and Joint Surgery, Spine, Journal of Orthopaedic Trauma, Journal of Neurosurgery: Spine, Neurosurgery, and Neurosurgical Focus. We assessed each article's adherence to the TRIPOD criteria using a standardized checklist.

Results

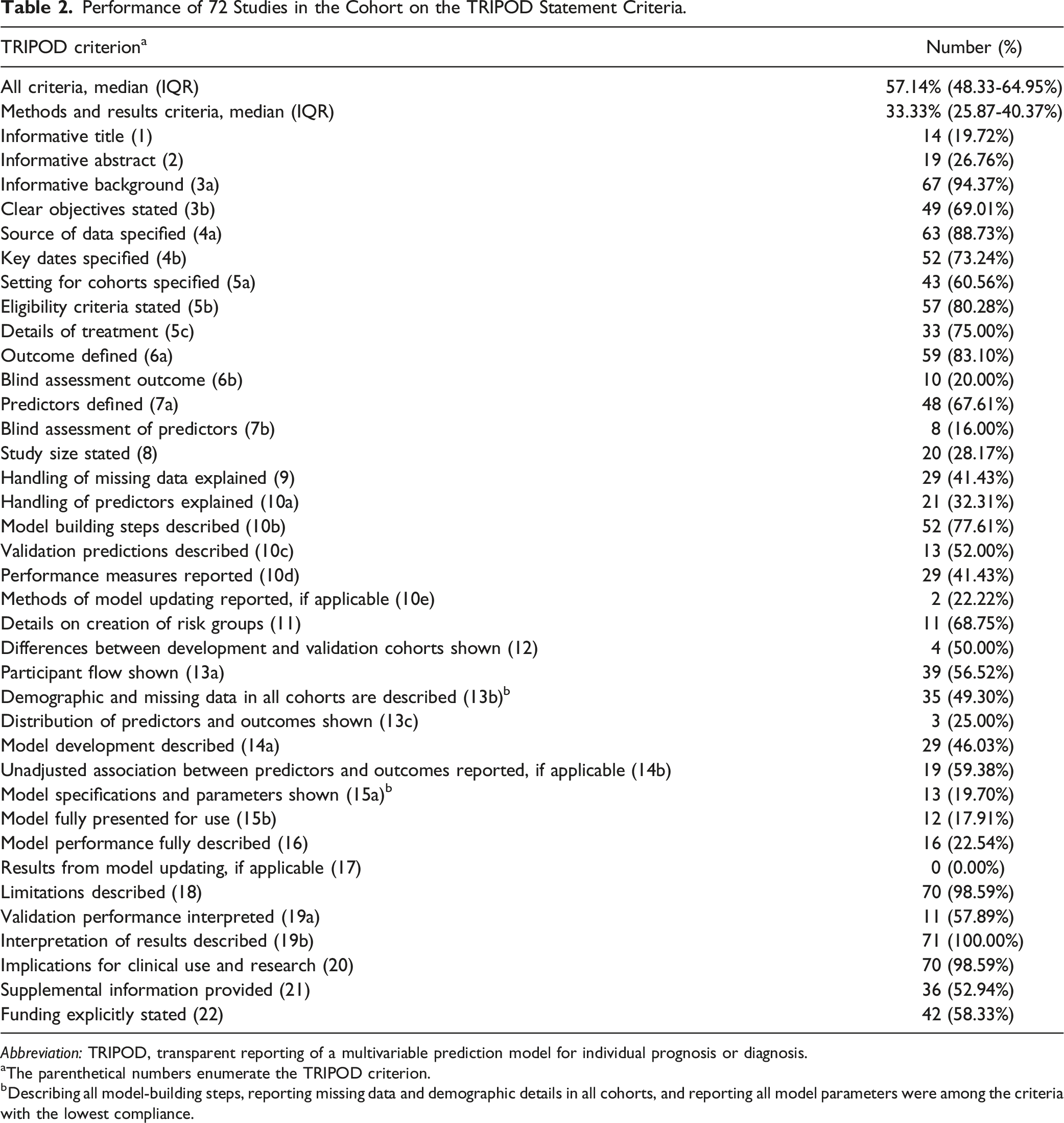

Seventy-two articles were included and analyzed with the TRIPOD checklist. Median compliance with the TRIPOD criteria was 57.14% (IQR: 48.33-64.95%). Compliance varied significantly across journals (P < 0.05). Among the TRIPOD criteria, the lowest compliance was observed for blinding the assessment of predictors (n = 8, 16.00%), fully presenting the model for use (n = 12, 17.91%), and providing sufficient information to allow external validation of results (n = 13, 19.70%).

Conclusions

Published machine learning models predicting outcomes in spine surgery often do not meet the guidelines for development, validation, and reporting established by TRIPOD. This lack of compliance may indicate that these models have not been adequately validated externally, and may limit their adoption into routine clinical practice in spine surgery.

Keywords

Introduction

The United States devotes substantial healthcare expenditure to managing spine pathologies.1 As healthcare costs continue to rise, there is an increasing need to promote value-based care and improve healthcare resource utilization.2-4 Artificial intelligence (AI) has already been leveraged to improve the quality and value of care provided for adult spinal deformity, and it holds promise as a tool to maximize the value of care across the field of spine surgery.5,6 AI has demonstrated the ability to outperform conventional statistical techniques,7 leading to a rise in predictive models within spine surgery.8-10

Despite the rapid growth of AI prediction models, recent literature has drawn attention to inadequacies in how these models are developed and validated.11,12 The 22-item Transparent Reporting of a multivariable prediction model for Individual Prognosis or Diagnosis (TRIPOD) checklist was designed to increase the transparency of reporting of predictive models.13 To our knowledge, adherence to reporting guidelines among predictive models for spine surgery remains unexplored. This study critically appraises the development and validation of predictive models in spine surgery using the TRIPOD checklist.

Methods

Search Strategy

A PubMed search string was constructed to identify articles published in the top six orthopedic and neurosurgery journals based on their Scimago Journal Ranking (SJR): The Journal of Bone and Joint Surgery, Spine, Journal of Neurosurgery, Journal of Orthopaedic Trauma, Neurosurgery, and Neurosurgical Focus. Articles published between January 1st, 2018, and February 1st, 2023, that developed or validated a predictive model for spine surgery were included (Supplemental Table 1). Articles that did not report outcomes specific to spine surgery were excluded.

The present study was conducted in accordance with the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) reporting guidelines and was exempt from institutional review board review because it did not involve human participants.

Appraisal of Included Publications, Data Synthesis, and Analysis

The Enhancing the Quality and Transparency of Health Research (EQUATOR) Network aims to promote research transparency by emphasizing reporting guidelines, including the TRIPOD statement. The TRIPOD checklist consists of 22 items (37 including sub-items), assessed across the manuscript domains that describe the development or validation of a predictive model. Articles included in this review were broadly categorized by whether they performed development and internal validation (DIV), external validation (EV) of a pre-existing model, development and external validation (DEV), or incremental value (IV) analysis, i.e., updating of a pre-existing model.
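A minimal sketch of the four-way study categorization, assuming each article is coded with three yes/no flags. The flag names (`develops_new_model`, `external_validation`, `updates_existing_model`) and the precedence order in the hypothetical `classify_study` function are illustrative assumptions, not the coding rules used by the reviewers.

```python
from enum import Enum


class StudyType(Enum):
    """Study categories used in this review (labels taken from the text above)."""
    DIV = "development and internal validation"
    EV = "external validation of a pre-existing model"
    DEV = "development and external validation"
    IV = "incremental value (updating a pre-existing model)"


def classify_study(develops_new_model: bool,
                   external_validation: bool,
                   updates_existing_model: bool) -> StudyType:
    """Assign one category from three hypothetical yes/no flags.

    Assumption: updating a pre-existing model takes precedence over the
    other flags; this ordering is illustrative only.
    """
    if updates_existing_model:
        return StudyType.IV
    if develops_new_model and external_validation:
        return StudyType.DEV
    if develops_new_model:
        return StudyType.DIV
    if external_validation:
        return StudyType.EV
    raise ValueError("study neither develops, validates, nor updates a model")


print(classify_study(True, True, False))  # StudyType.DEV
```

Encoding the categories as an enum keeps the four labels fixed, so a typo in a category name fails loudly instead of creating a fifth group.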

The overall TRIPOD adherence score was calculated as the number of checklist items that were adhered to divided by the number of applicable checklist items. Items not applicable to a particular model were excluded from calculations.
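The scoring rule above (items adhered to, divided by applicable items) can be sketched as follows, assuming each article's ratings are stored as a mapping from TRIPOD item number to `True` (adhered), `False` (not adhered), or `None` (not applicable). The function name `adherence_score` and the example ratings are hypothetical.

```python
from typing import Mapping, Optional

# Rating convention: True = adhered, False = not adhered, None = not applicable.
Rating = Optional[bool]


def adherence_score(ratings: Mapping[str, Rating]) -> float:
    """Percentage of applicable TRIPOD items that an article adhered to."""
    # Not-applicable items are dropped from both numerator and denominator.
    applicable = [r for r in ratings.values() if r is not None]
    if not applicable:
        raise ValueError("no applicable checklist items")
    # True counts as 1 and False as 0, so sum() gives the number of items adhered to.
    return 100 * sum(applicable) / len(applicable)


# Hypothetical ratings for one article; item 17 (model updating) does not apply.
example = {"1": True, "2": False, "3a": True, "17": None}
print(round(adherence_score(example), 2))  # 66.67
```

Because item 17 is excluded, the example scores 2 of 3 applicable items rather than 2 of 4.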

Two authors (J.R. and A.P.) assessed each article's adherence to the reporting guidelines of the TRIPOD checklist. Conflicts were resolved by a third author (S.K.).
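One way to represent the two-reviewer rule with third-author adjudication is sketched below, purely as an illustration of the procedure described above. It assumes both reviewers rate the same set of items; `reconcile` and the `adjudicate` callback (standing in for the third author) are hypothetical names.

```python
from typing import Callable, Dict, Optional

Rating = Optional[bool]  # True = adhered, False = not adhered, None = not applicable


def reconcile(
    reviewer_a: Dict[str, Rating],
    reviewer_b: Dict[str, Rating],
    adjudicate: Callable[[str, Rating, Rating], Rating],
) -> Dict[str, Rating]:
    """Keep ratings both reviewers agree on; pass conflicts to a third reviewer."""
    if reviewer_a.keys() != reviewer_b.keys():
        raise ValueError("reviewers rated different sets of items")
    final: Dict[str, Rating] = {}
    for item, a in reviewer_a.items():
        b = reviewer_b[item]
        # Agreement is accepted as-is; only disagreements reach the adjudicator.
        final[item] = a if a == b else adjudicate(item, a, b)
    return final


# Hypothetical ratings: the reviewers disagree only on item 2.
first = {"1": True, "2": False}
second = {"1": True, "2": True}
print(reconcile(first, second, lambda item, a, b: True))
# {'1': True, '2': True}
```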

Results were reported as frequencies and percentages of the total. Student's t-tests and analysis of variance (ANOVA) were used to compare continuous variables. Analyses were performed with R software version 4.1.1 (R Foundation for Statistical Computing, Vienna, Austria).
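A sketch of the summary statistics and a group comparison, using only the Python standard library and hypothetical adherence scores. `statistics.quantiles(..., method="inclusive")` uses the same interpolation as R's default `quantile()` (type 7), so quartiles should match R for the same data. The hypothetical `one_way_anova_f` returns only the F statistic; the P value would come from the F distribution with (k - 1, n - k) degrees of freedom, for example via R's `aov()` or SciPy's `scipy.stats.f_oneway`.

```python
import statistics
from typing import Dict, List, Tuple


def median_iqr(scores: List[float]) -> Tuple[float, float, float]:
    """Return (median, Q1, Q3) of a list of adherence scores.

    method="inclusive" reproduces R's default quantile type 7.
    """
    q1, _, q3 = statistics.quantiles(scores, n=4, method="inclusive")
    return statistics.median(scores), q1, q3


def one_way_anova_f(groups: Dict[str, List[float]]) -> float:
    """F statistic of a one-way ANOVA comparing mean scores across groups."""
    all_scores = [x for g in groups.values() for x in g]
    grand_mean = statistics.fmean(all_scores)
    k, n = len(groups), len(all_scores)
    # Between-group sum of squares: spread of group means around the grand mean.
    ss_between = sum(
        len(g) * (statistics.fmean(g) - grand_mean) ** 2 for g in groups.values()
    )
    # Within-group sum of squares: spread of scores around their own group mean.
    ss_within = sum(
        (x - statistics.fmean(g)) ** 2 for g in groups.values() for x in g
    )
    return (ss_between / (k - 1)) / (ss_within / (n - k))


# Hypothetical adherence scores (%) for articles in two journals.
scores_by_journal = {
    "Journal A": [50.0, 60.0, 70.0],
    "Journal B": [40.0, 45.0, 50.0],
}
pooled = [s for g in scores_by_journal.values() for s in g]
print(median_iqr(pooled))                  # (50.0, 46.25, 57.5)
print(one_way_anova_f(scores_by_journal))  # 5.4
```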

Results

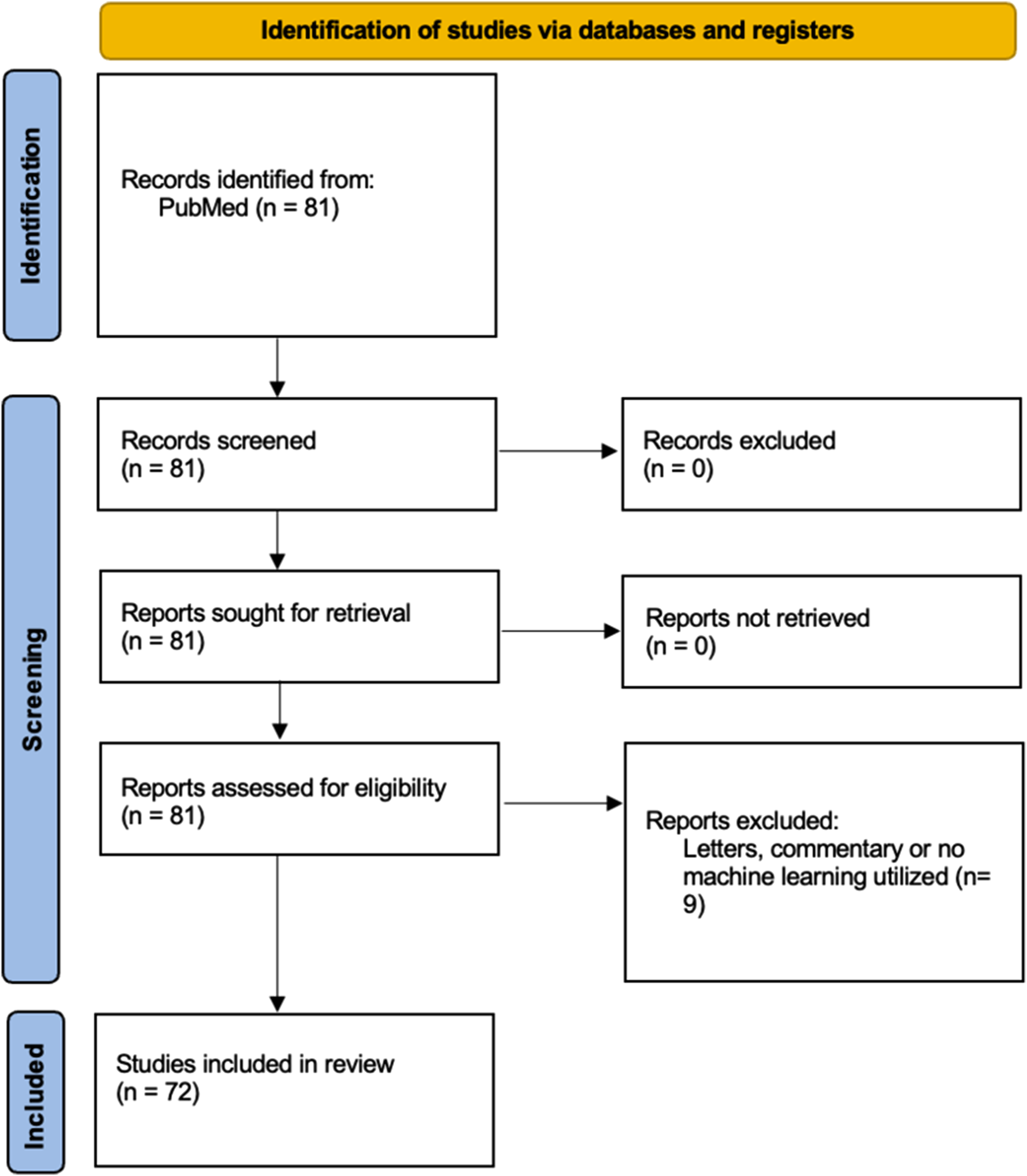

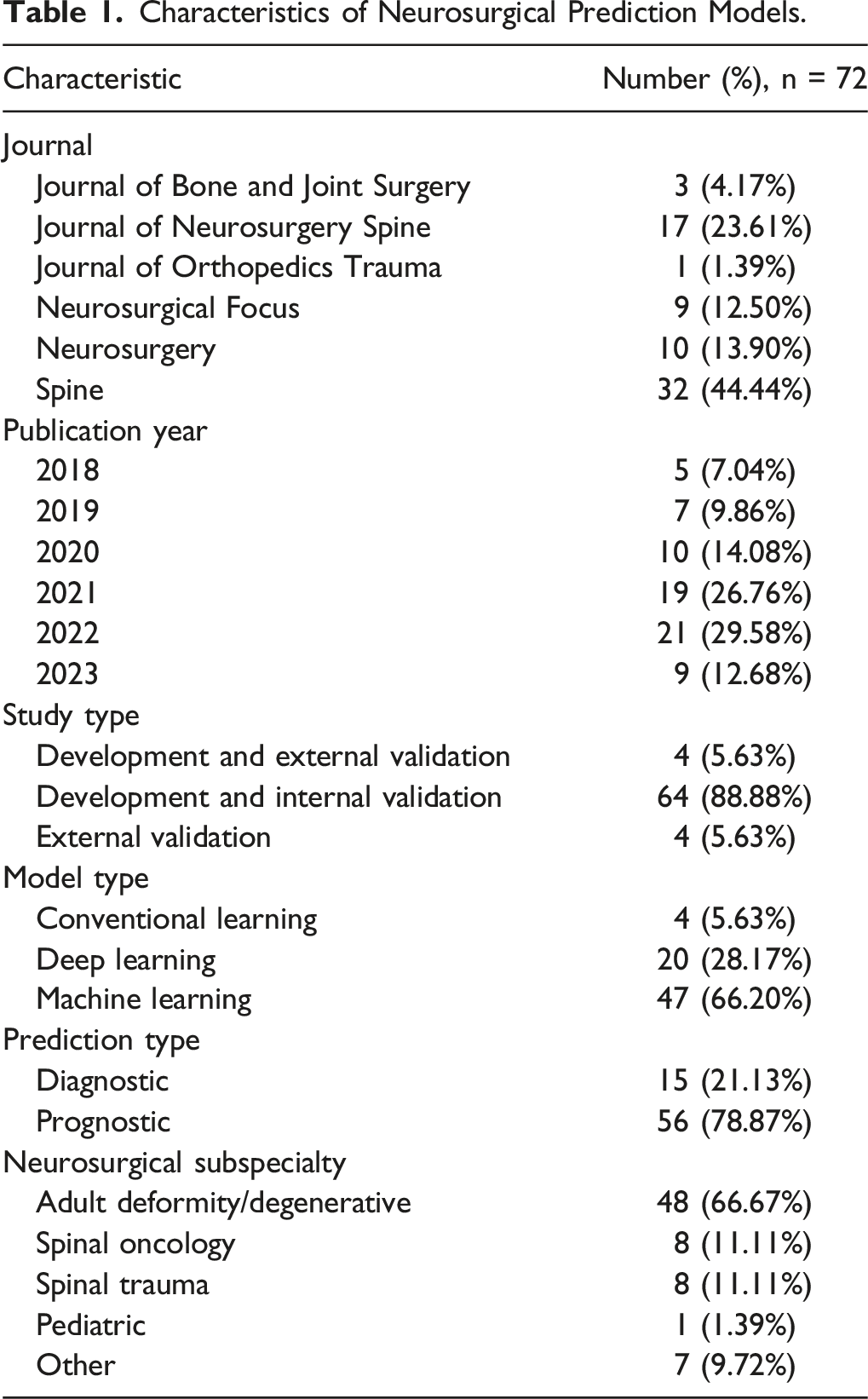

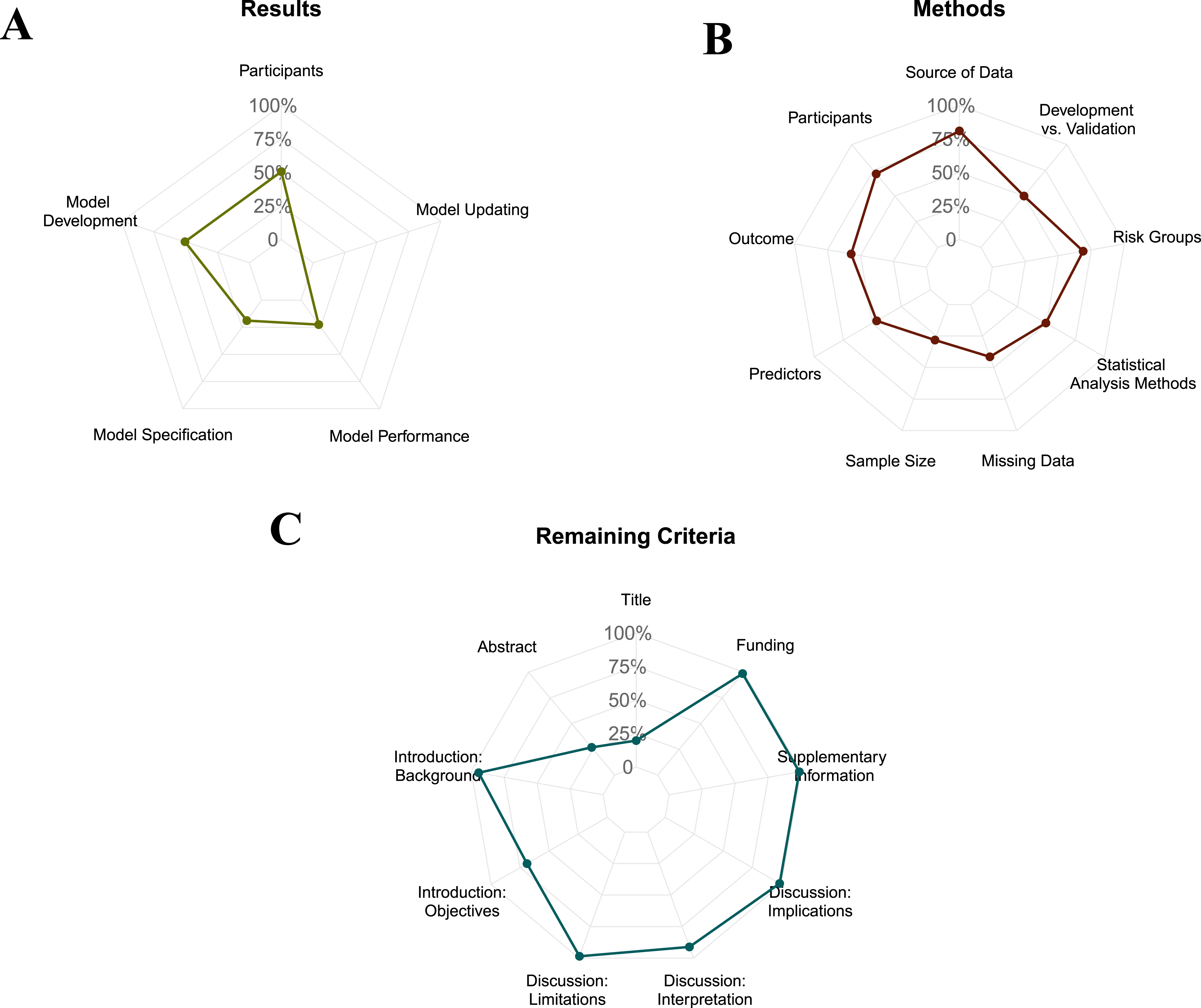

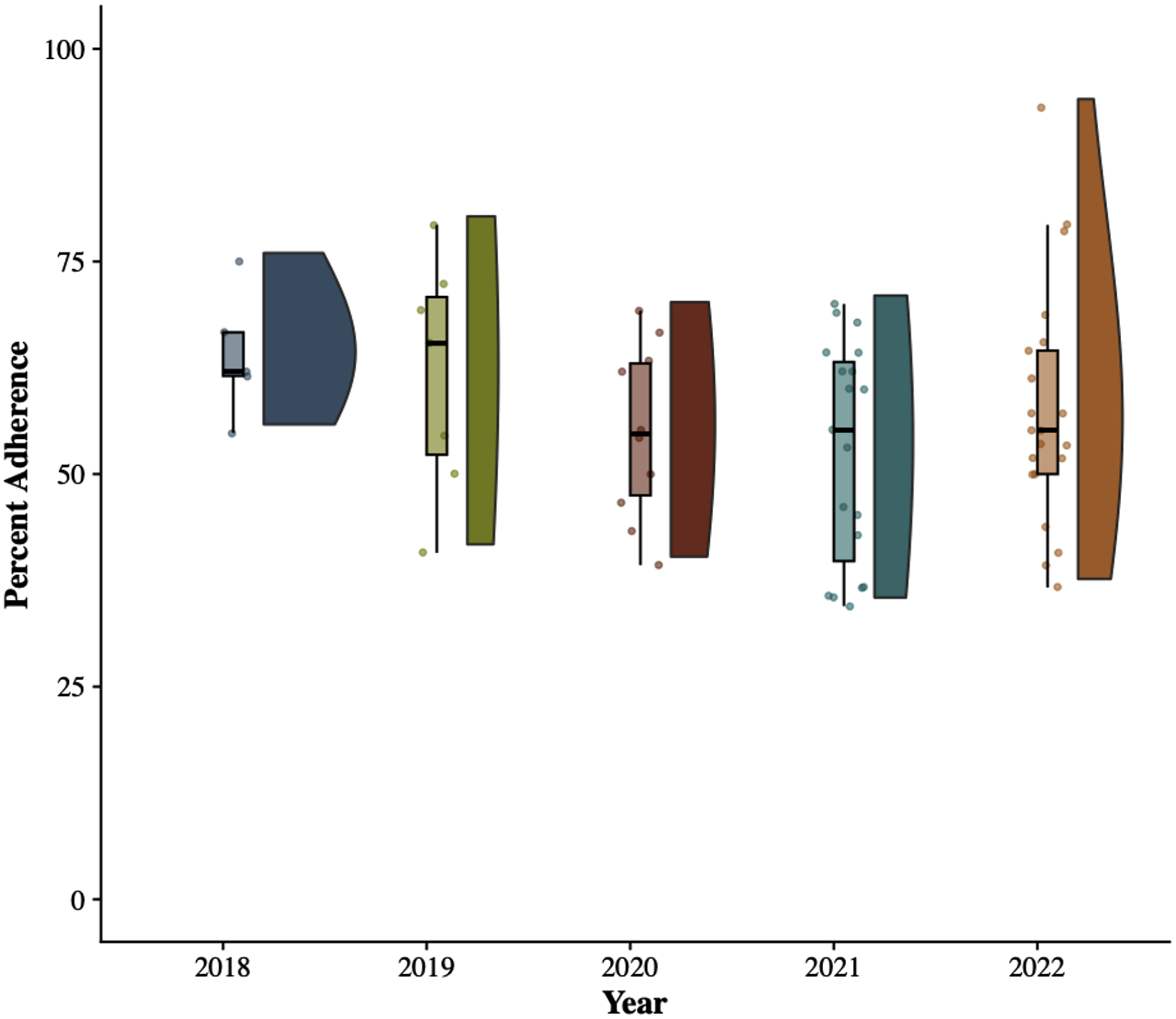

Our literature search identified 81 articles. After excluding articles that did not use machine learning models or were not specific to spine surgery, 72 articles met the inclusion criteria (Figure 1). Of these 72 articles, 44.4% were published in the journal Spine, and 88.7% performed development and internal validation of a predictive model (Table 1). Degenerative/deformity surgery was the most common subspecialty (n = 48). Figure 2 shows article compliance across the TRIPOD domains as radar graphs. Figure 3 shows median TRIPOD criterion performance by year and neurosurgery subspecialty (Table 2).

Figure 1. Article selection paradigm.

Table 1. Characteristics of Neurosurgical Prediction Models.

Figure 2. Radar diagrams of percent adherence to the TRIPOD criteria: a) results, b) methods, and c) all other metrics.

Figure 3. Median TRIPOD criterion performance by year and neurosurgery subspecialty.

Table 2. Performance of 72 Studies in the Cohort on the TRIPOD Statement Criteria.

Abbreviation: TRIPOD, transparent reporting of a multivariable prediction model for individual prognosis or diagnosis.

ᵃThe parenthetical numbers enumerate the TRIPOD criterion.

ᵇDescribing all model-building steps, reporting missing data and demographic details in all cohorts, and reporting all model parameters were among the criteria with the lowest compliance.

Adherence Across Subspecialties

The median TRIPOD compliance across all predictive spine surgery models was 57.14% (IQR: 48.33-64.95%). Compliance of individual studies ranged from 34.48% to 93.10%. Compliance varied across subspecialties, with pediatric spine surgery exhibiting the highest median compliance (66.66%) and spinal trauma the lowest (54.25%). There was no significant difference in median compliance across subspecialties (P > 0.05).

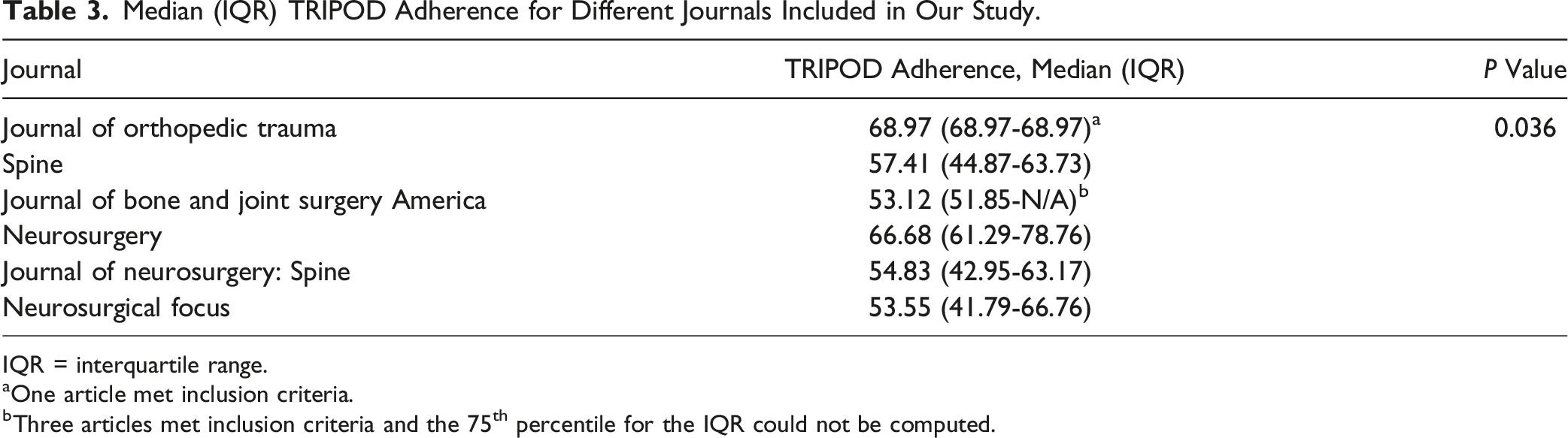

Adherence Across Journals

Median (IQR) TRIPOD Adherence for Different Journals Included in Our Study.

IQR = interquartile range.

ᵃOne article met inclusion criteria.

ᵇThree articles met inclusion criteria, and the 75th percentile for the IQR could not be computed.

Adherence to Individual TRIPOD Criterion

As graded according to the TRIPOD checklist, 80.18% of articles lacked an informative title, and 73.24% did not adequately summarize their study in the abstract. A rationale for the study was described in 94.3% of articles, and 69.01% stated whether the study developed or validated a predictive model. Most articles (>70%) reported adequate information on their study design and period. About 80% of articles lacked adequate information on the blinded assessment of predictors and outcomes used in their predictive model. Sample size calculations and the handling of missing data were reported less frequently (28.2% and 41.3%, respectively). Compliance was low for TRIPOD items related to model parameters (19.7%) and performance measures (22.4%). None of the articles in our study updated a pre-existing model (item 17). Over 98% of the articles adhered to reporting guidelines on clinical implications and relevant limitations of their predictive model.
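Item-level percentages like those above differ from the per-article score in their denominator. A minimal sketch, assuming the same `True`/`False`/`None` rating convention and that articles to which an item does not apply are left out of that item's denominator; under this assumption, item-level percentages need not be multiples of 1/72. The function `item_compliance` and the ratings are hypothetical.

```python
from typing import Dict, List, Optional

Rating = Optional[bool]  # True = adhered, False = not adhered, None = not applicable


def item_compliance(articles: List[Dict[str, Rating]]) -> Dict[str, float]:
    """Percentage of articles adhering to each TRIPOD item, among articles where it applies."""
    adhered: Dict[str, int] = {}
    applicable: Dict[str, int] = {}
    for ratings in articles:
        for item, rating in ratings.items():
            if rating is None:
                continue  # not applicable: excluded from this item's denominator
            applicable[item] = applicable.get(item, 0) + 1
            adhered[item] = adhered.get(item, 0) + int(rating)
    return {item: 100 * adhered[item] / applicable[item] for item in applicable}


# Hypothetical ratings for three articles; item 10b does not apply to the first.
articles = [
    {"1": True, "10b": None},
    {"1": False, "10b": True},
    {"1": False, "10b": False},
]
print({item: round(pct, 2) for item, pct in item_compliance(articles).items()})
# {'1': 33.33, '10b': 50.0}
```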

Information about the availability of supplementary resources and about sources of funding was provided in 52.94% and 58.33% of studies, respectively. Reporting of funding sources varied across journals (P < 0.001), with articles published in the journal Spine exhibiting the highest adherence (81.25%) to item 22 of the checklist.

Discussion

This study critically evaluated the quality of AI predictive models in spine surgery published in the top six neurosurgery and orthopedic journals between 2018 and 2023. Our findings demonstrate inadequate adherence to the TRIPOD guidelines. These shortcomings must be addressed before AI models can be relied upon to improve healthcare outcomes.

Our median compliance of 57.14% is comparable to that reported by studies evaluating TRIPOD adherence of predictive models in general surgery and orthopedic surgery.11,14 However, it is substantially lower than that found by a recent study appraising the quality of predictive models across five major neurosurgical journals.15 We also observed lower compliance among ML models than among conventional statistical models such as logistic regression, possibly because the terminology of the TRIPOD statement was written for regression models. To overcome this drawback, the EQUATOR Network aims to develop guidelines specific to AI predictive models.16

TRIPOD guidelines require the title to state explicitly whether the study developed, validated, or added incremental value to a predictive model. Our results revealed suboptimal compliance with the items governing the title and abstract, which could compromise readership and the retrieval of articles during database searches. About 80% of articles lacked adequate information on the blinded assessment of predictors and outcomes used in their predictive model. Without blinding, bias may enter the evaluation of characteristics that are assessed subjectively, such as the grading of radiographic parameters.17

Median compliance with items in the methods and results sections was 33.33%. Incomplete reporting of how participants were selected limits the assessment of a model's generalizability and, in turn, its clinical utility. Reporting of sample size calculations and the handling of missing data was observed in 28.17% and 41.43% of studies, respectively. Despite adequate performance on the development dataset, models developed using smaller cohorts may lack generalizability.18 A lack of information about how missing data were handled raises concern about bias introduced during participant selection.19

Over half of the predictive models in our review lacked adequate demographic information or information on the number of participants with missing data (item 13b). Results from models that do not adhere to reporting guidelines for demographic characteristics must be interpreted and used cautiously, because race and sex have been identified as independent predictors of unfavorable outcomes such as increased length of stay, readmissions, and postoperative complications after spine surgery.20,21 Few models (19.7%) reported model-building parameters in enough detail to allow external validation, which compromises the ability to assess their performance in independent cohorts.

Satisfactory compliance (>95%) with items related to rationale, limitations, and clinical implications (3a, 18, 19b) suggests adequate literature review among spine surgery publications, which may reflect dedicated outcomes research groups and mentorship within the field. We observed significant differences across journals in overall compliance with TRIPOD criteria and in the reporting of funding sources, which could reflect differing journal requirements at manuscript submission. Because previous research has demonstrated increased compliance with reporting guidelines after checklist submission became mandatory,22 we hypothesize that a similar strategy would improve adherence to TRIPOD guidelines within spine surgery.

Clinical Implications and Future Directions

Low adherence to reporting guidelines for the development and validation of predictive models in spine surgery could limit their use in clinical practice. For instance, many predictive models lacked adequate information on demographic data and statistical analyses, even though outcomes in spine surgery are affected by demographic characteristics.23,24 Additionally, many models lacked external validation. Clinical prediction models require external validation to demonstrate their predictive ability across datasets and thus their generalizability. Future efforts could promote better reporting practices, and transparency in clinical research, by requiring checklists at manuscript submission.

Limitations

Our systematic review is limited by its inclusion of only articles published in the top six journals according to their SJR ranking; thus, our results may not be generalizable to other journals. We also acknowledge that the TRIPOD criteria were not specifically developed for ML models, and we aim to assess the compliance of studies with the TRIPOD-AI checklist in a future study. Journals included in our study may have had varying requirements for reporting guidelines before peer review. We were also unable to determine whether low compliance was due to inadequate journal standards or to authors' lack of familiarity with reporting guidelines.

Conclusion

Despite an increase in the development of AI predictive models in spine surgery, inadequate adherence to reporting guidelines hinders their use in clinical practice. Emphasizing the submission of a checklist based on TRIPOD guidelines could improve the quality of predictive models.

Supplemental Material

Supplemental Material - An Appraisal of the Quality of Development and Reporting of Predictive Models in Spine Surgery

Supplemental Material for An Appraisal of the Quality of Development and Reporting of Predictive Models in Spine Surgery by Syed I. Khalid, Joanna M. Roy, Elie Massaad, Kyle Thomson, Pranav Mirpuri, Aashka Patel, Ankit I. Mehta, Ali Kiapour and John H. Shin in Global Spine Journal.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Data Availability Statement

The datasets used in this study are available upon reasonable request from the corresponding author.

Supplemental Material

Supplemental material for this article is available online.

References
