Abstract

Study Design

Cross-sectional survey.

Objectives

A cornerstone of classification systems is good reliability amongst different groups of classification users. Thus, the aim of this international validation study was to assess the reliability of the new AO Spine DGOU Osteoporotic Fracture Classification (OF classification) stratified by surgical specialty, work-setting, work-experience, and trauma center level.

Methods

320 spine surgeons were asked to rate 27 cases according to the OF classification at 2 time points, 4 weeks apart (assessment 1 and 2) in this online-webinar based validation process. The kappa statistic (κ) was calculated to assess the inter-observer reliability and the intra-rater reproducibility.

Results

A total of 7798 (90.3%) ratings were recorded in assessment 1 and 6621 (76.6%) ratings in assessment 2. Global inter-rater reliability was moderate in both assessments (κ = 0.57; κ = 0.58). Participants with a work-experience of >20 years showed the highest inter-rater agreement in both assessments globally (κ = 0.65; κ = 0.67). Participants from a level-1 trauma center showed the highest agreement (κ = 0.58), whereas participants working at a tertiary trauma center showed higher grade of agreement in the second assessment (κ = 0.66). Participants working in academia showed the highest agreement in assessment 2 (κ = 0.6). Surgeons with academic background and surgeons employed by a hospital showed substantial intra-rater agreement in the second assessment.

Conclusions

The AO Spine-DGOU Osteoporotic Fracture Classification showed moderate to substantial inter-rater agreement as well as intra-rater reproducibility regardless of work-setting, surgical experience, level of trauma center and surgical specialty.

Keywords

Background

Osteoporosis represents an increasing public health concern worldwide in the elderly population. 1 Due to the potential morbidity and mortality, osteoporotic vertebral fractures (OVFs) are of increasing concern as they represent the most common osteoporotic fractures due to low bone density and fragility.2,3 With the rising incidence and prevalence of OVFs, it is crucial to implement evidence-based treatment algorithms in order to establish standardized care strategies for these patients.4,5 These treatment algorithms are mainly based on fracture classifications to determine fracture morphology precisely, which also facilitates uniform communication between caregivers and guide in determining optimal treatment strategies. AO (Arbeitsgemeinschaft für Osteosynthesefragen) Spine developed and introduced a new classification system for thoracolumbar fractures in 2013, which was quickly accepted and validated with high reproducibility internationally.6,7 However, this classification was not developed specifically for patients with osteoporotic changes. A distinct differentiation between high-energy vertebral fractures and OVFs is crucial, since OVFs are being managed differently due to their unique prognosis and characteristics. 8 The Spine Section of the German Society for Orthopaedics and Trauma (DGOU), who have formed the working group “Osteoporotic Fractures”, proposed a new classification for osteoporotic thoraco-lumbar spine fractures. 3 Further, the final AO Spine-DGOU Osteoporotic Fracture (OF) classification system was developed after several consensus meetings with members of the AO Spine Knowledge Forum Trauma. This classification (OF) consists of 5 groups: OF 1, no deformation, only bone edema assessed with MRI, OF 2, deformation of 1 endplate with no or minor involvement of posterior wall, OF 3, deformation of 1 endplate with substantial involvement of posterior wall, OF 4, deformation of both endplates with or without involvement of posterior wall, and OF 5, fracture with anterior or posterior tension band failure. (Appendix 1).

Based on this classification system, treatment recommendations were proposed by Blattert et al and subsequently validated in a large multi-center study from Germany. The study showed high accordance in treatment strategies of OVFs with favorable outcomes with the proposed treatment recommendations.8,9 A cornerstone of classification systems is good reliability amongst different groups of classification users (eg, different levels of surgical experience). 10 Studies on the reliability of subaxial and thoracolumbar AO Spine classifications have shown that the spine surgeon`s level of experience and subspecialty did not have substantial influence on the classification and intra-observer reliability of these classifications.11,12 However, data on the reliability amongst different surgeons` levels of experience for the AO Spine-DGOU OF classification is lacking. Thus, the aim of this international validation was to assess the reliability of the AO Spine-DGOU OF classification amongst spine surgeons with different levels of experience and subspecialties as well as to assess the effect of the level of trauma center spine surgeons are working at on the reliability of this classification.

Material and Methods

Development of Classification System

The methodology behind the creation of the AO Spine-DGOU OF classification has been described in detail in the publication of the Spine Section of the German Society for Orthopaedics and Trauma (DGOU). 3

Validation Process

The present international validation followed strictly the previously published methodology and recommendation of Lambrechts et al. 13 In total, 320 spine surgeons participated in this online-webinar based validation. All participants were invited in advance to watch a video on the correct application and classification. Additionally, the official poster of the AO Spine-DGOU OF classification for illustration was available. Before the start of the validation, all participants were given the opportunity to ask questions to the instructor. After a short introduction, the participants were given the opportunity to practice the handling in the context of training cases. Subsequently, the 27 cases were presented by means of a key-image and subsequently by means of CT and (if available or relevant) MRI in sagittal, coronal and axial slices. The participants were then able to give their rating for the respective case in RedCap (REDCap Software – Version 6.5.2 - © 2023 Vanderbilt University).

The second rating took place with rearranged cases 4 weeks after the initial assessment to avoid recall bias.

Gold-Standard-Committee

Members of the Osteoporotic Fracture working group of the DGOU provided the gold-standard classification for each injury on radiological imaging. Prior to evaluation of the injuries by the study participants, the Gold Standard Committee reached unanimous agreement on the classification of each presented case.

Statistical Analysis

The kappa statistic (κ) was calculated to assess the reliability of the classification system among different observers (inter-rater agreement) and the reproducibility for the same observer on separate occasions (intra-rater reproducibility). 14 The Fleiss kappa statistic measures the agreement of multiple raters who rate multiple subjects, with the rating based on multiple categories. The coefficients were interpreted utilizing the Landis and Koch grading system, which defines κ ≤ 0.2 as slight reliability (agreement/reproducibility), 0.2 < κ ≤ 0.4 as fair reliability, 0.4 < κ ≤ 0.6 as moderate reliability, 0.6 < κ ≤ 0.8 as substantial reliability, and κ > 0.8 as excellent reliability. 15 Inter-rater and intra-rater agreement was calculated for the different classification groups (OF 1-5) and overall, for the classification itself. Furthermore, agreement with the gold-standard (accuracy) was calculated overall and for each OF-subtype. Results were stratified by the raters` specialty, work-experience in years (subgroups: <5 years; 5-10 years; 11-20 years; >20 years), work-setting and level of trauma center. Differences between the groups were calculated using the chi-square test. Statistical significance was set at P < 0.05.

Results

Participants and Ratings

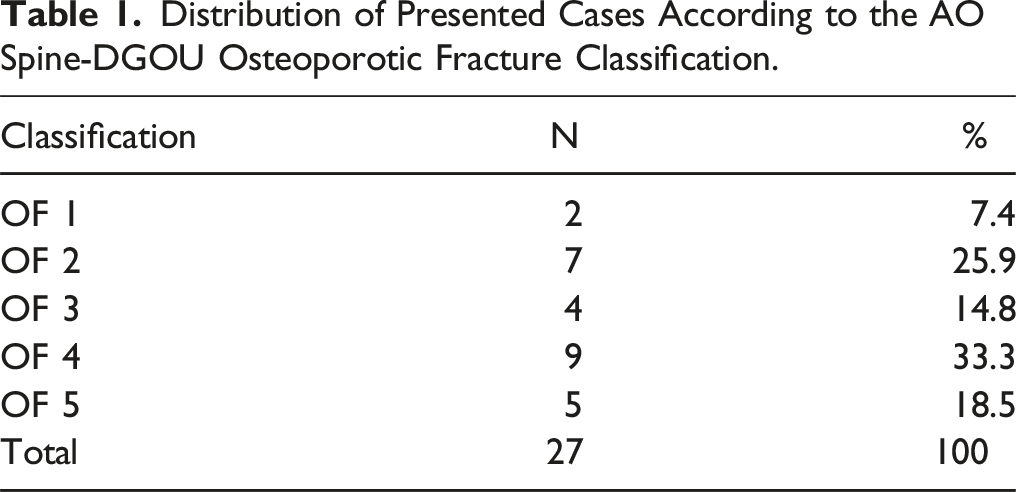

Distribution of Presented Cases According to the AO Spine-DGOU Osteoporotic Fracture Classification.

Out of 17280 possible ratings (8640 ratings in each validation time point), 7798 (90.3%) ratings were recorded in the first validation round and 6621 (76.6%) ratings were recorded 4 weeks later.

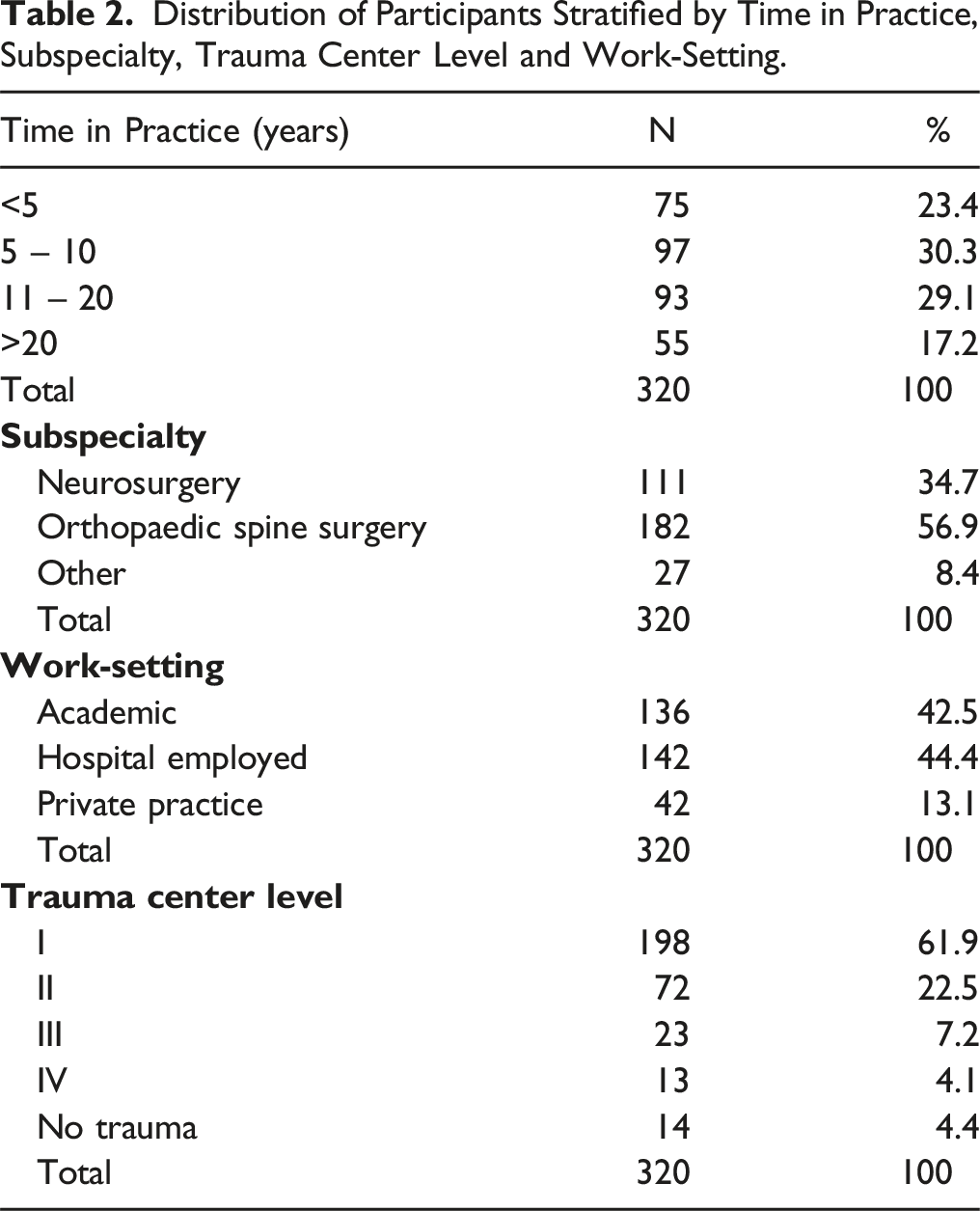

Most of the Participants Reported a Work Experience of 5 to 10 years (n = 97, 30.3%).

Orthopaedic spine surgery was the most frequently reported subspecialty amongst the participants (n = 182, 56.9%) and most of the study participants were hospital employed (n = 142, 44.4%).

Distribution of Participants Stratified by Time in Practice, Subspecialty, Trauma Center Level and Work-Setting.

Agreement With Gold-Standard

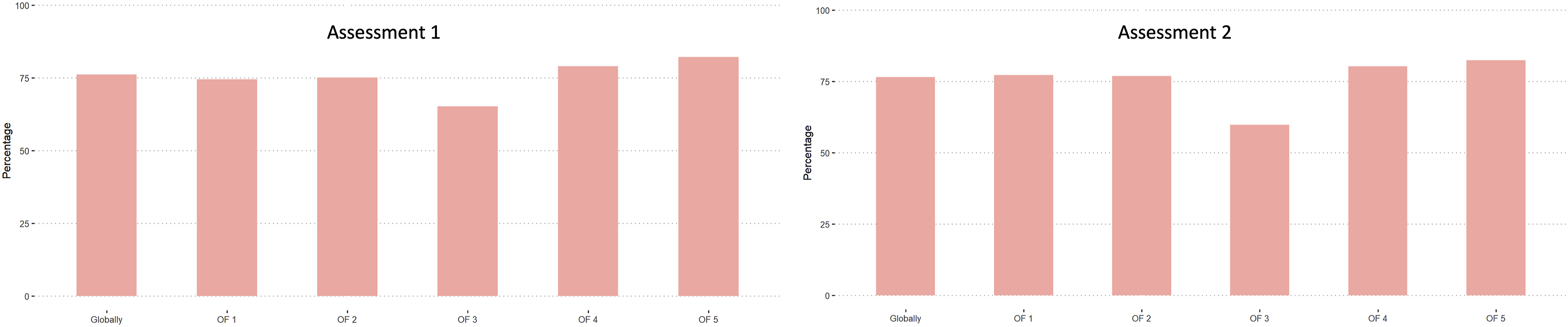

Amongst all participants (unstratified), the evaluation showed relatively high agreement with the gold-standard in both, first and second assessment. (Figure 1). Agreement with gold-standard amongst all participants stratified by OF-classification.

Regarding time in practice, the highest global agreement with the gold-standard was achieved in the group practicing more than 20 years in both, assessment 1 and assessment 2. OF 3 fractures showed the lowest agreement with the gold-standard amongst all time in practice groups.

Overall, the highest agreement with the gold-standard was achieved by neurosurgeons in assessment 1 (76.9%) and by orthopaedic spine surgeons (77.5%) in assessment 2. The least agreement with the gold-standard amongst the different subspecialties was found in OF 3 fractures. Generally, the agreement with gold-standard increased from the first to the second assessment for neurosurgeons and orthopaedic spine surgeons but decreased for the “other” group.

Surgeons from level-1 trauma centers achieved the highest agreement globally in assessment 1 (76.6%), whereas level-3 centers achieved the best agreement with the gold-standard in the second assessment (81.5%). Also in this sub analysis, fractures classified as OF 3 were rated the poorest.

Hospital employed participants achieved the best agreement with the gold-standard globally in the first assessment (77.3%), whereas surgeons from an academic work environment had the best agreement with the gold-standard in the second assessment (76.9%).

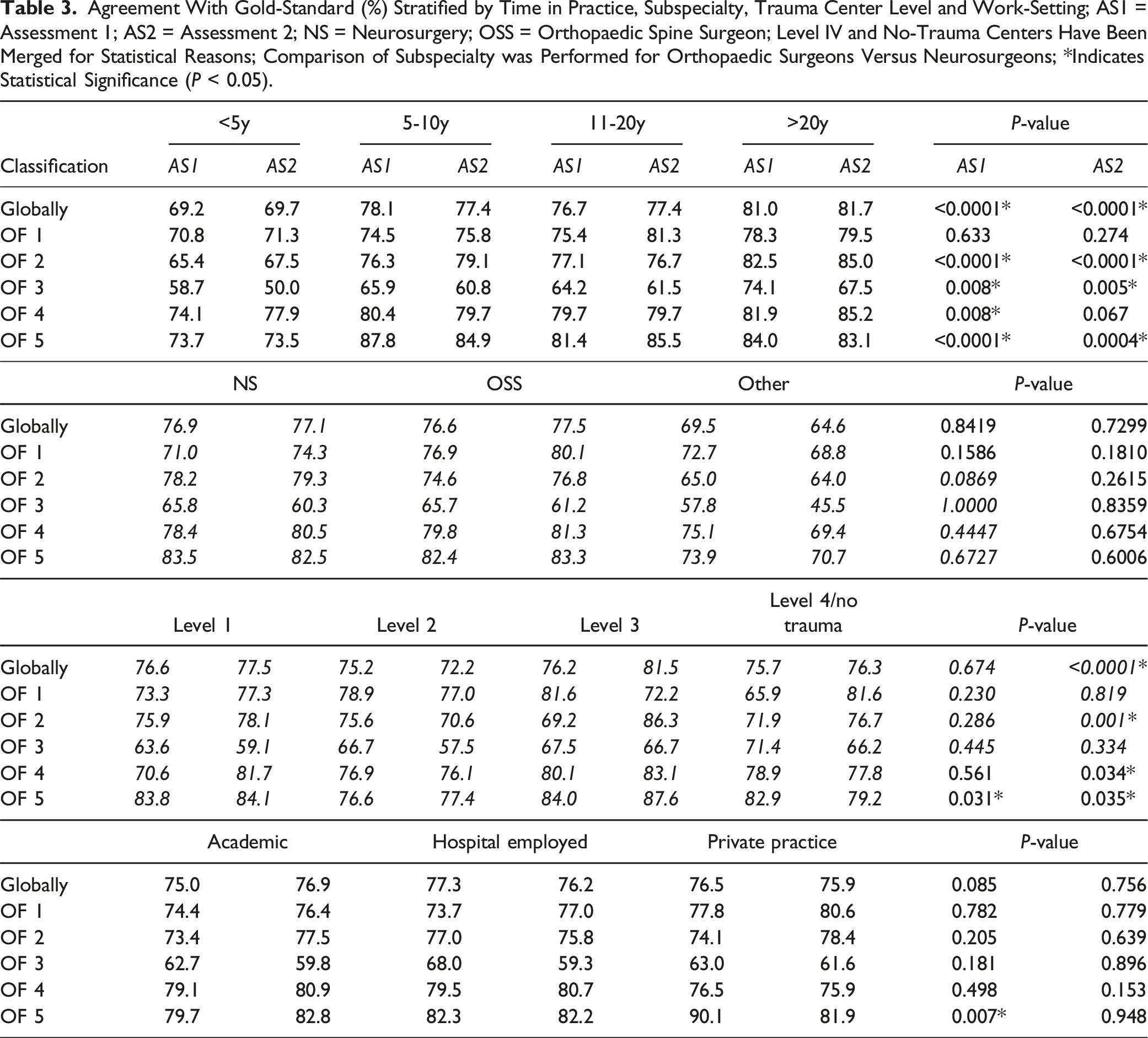

Agreement With Gold-Standard (%) Stratified by Time in Practice, Subspecialty, Trauma Center Level and Work-Setting; AS1 = Assessment 1; AS2 = Assessment 2; NS = Neurosurgery; OSS = Orthopaedic Spine Surgeon; Level IV and No-Trauma Centers Have Been Merged for Statistical Reasons; Comparison of Subspecialty was Performed for Orthopaedic Surgeons Versus Neurosurgeons; *Indicates Statistical Significance (P < 0.05).

Inter-Rater Agreement

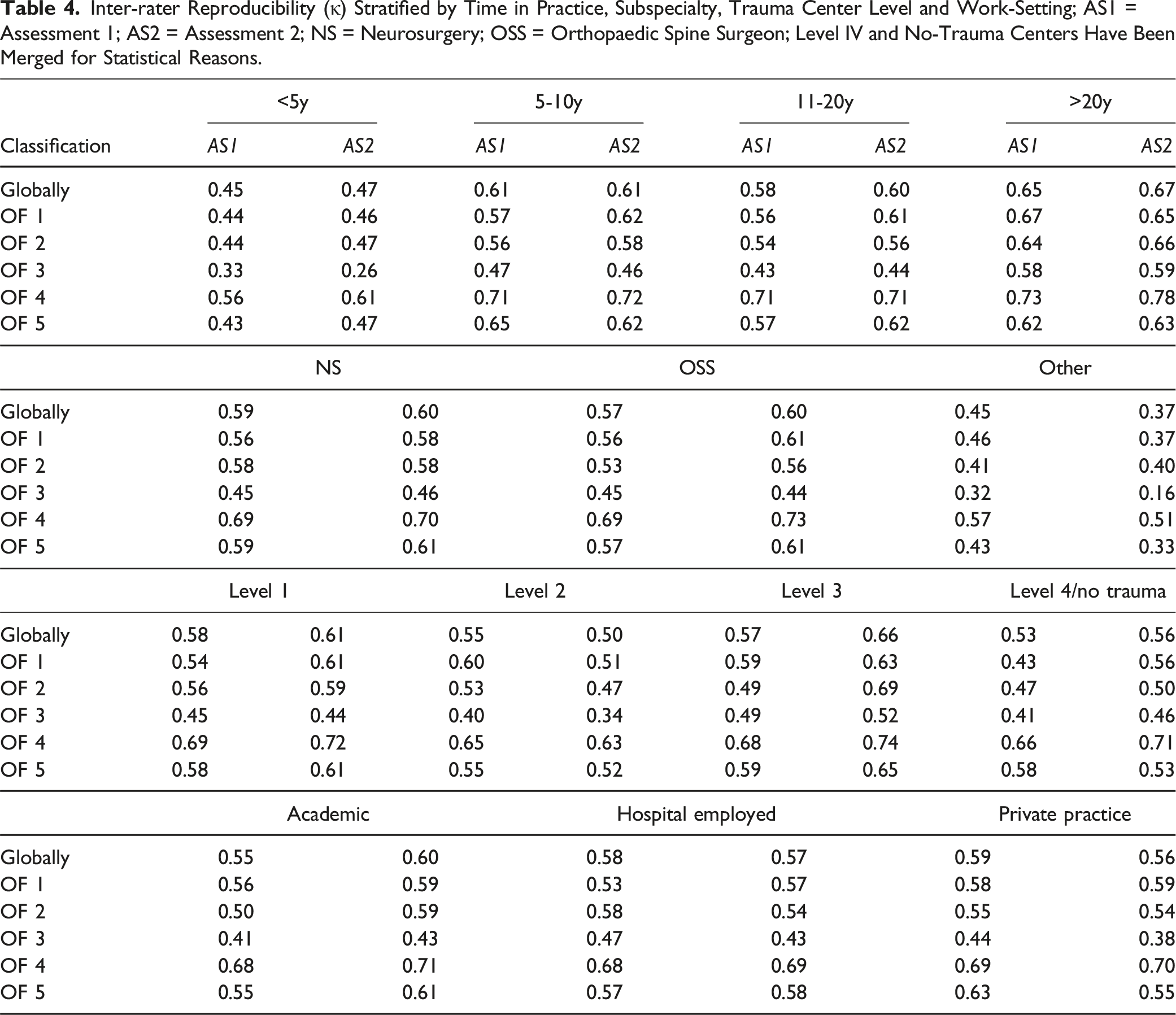

Amongst all participants, the global inter-rater reliability was moderate in both, first and second assessment (κ = 0.57; κ = 0.58). The best inter-rater reliability globally was achieved for fracture subtypes OF 4 in both assessments (κ = 0.68; κ = 0.70), whilst the worst reliability was found in OF 3 fractures in both assessments (κ = 0.44; κ = 0.42).

The subgroup with a work-experience of more than 20 years showed the highest inter-rater agreement amongst all participations in both assessments globally (κ = 0.65; κ = 0.67). In most of the fracture types, there was a general improvement between the assessments amongst all work experience groups, however, only the more experienced surgeons showed improvement in OF 3 fracture types.

Neurosurgeons had the best global inter-rater agreement in the first assessment (κ = 0.59) whereas orthopaedic spine surgeons showed a higher agreement in assessment 2 (κ = 0.60). The “other” group showed general decrease in reliability amongst all fracture types in the second assessment compared to the first, whereas neurosurgeons and orthopaedic spine surgeons showed general improvement.

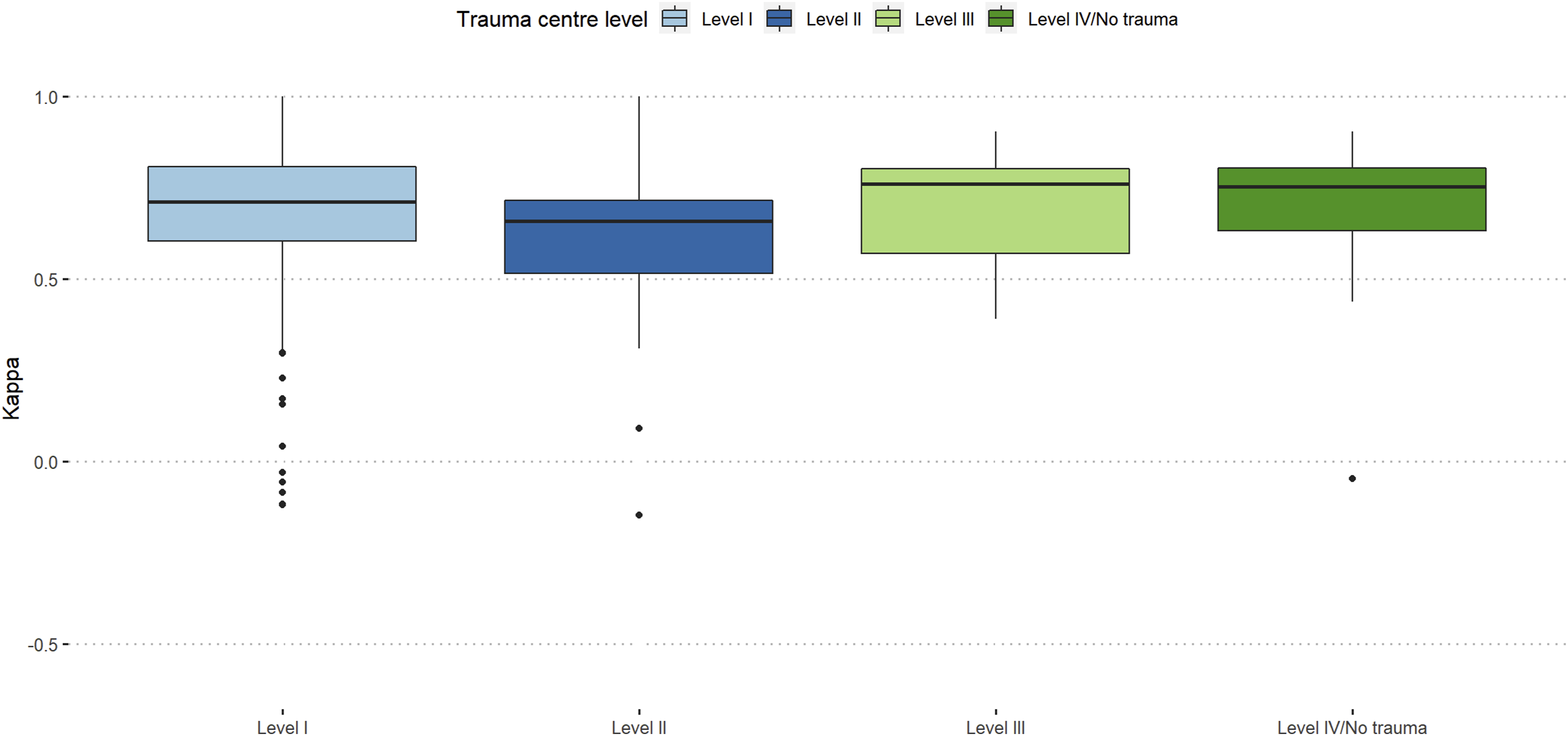

In the first assessment, participants working at a level-I trauma center showed the highest agreement (κ = 0.58), whereas participants working at a tertiary trauma center showed higher grade of agreement in the second assessment (κ = 0.66). There was a general improvement amongst trauma levels except for OF 3 fracture types amongst level-I and -II centers and level-III as well as level-IV/no trauma centers showed the highest increase of agreement compared to the first assessment.

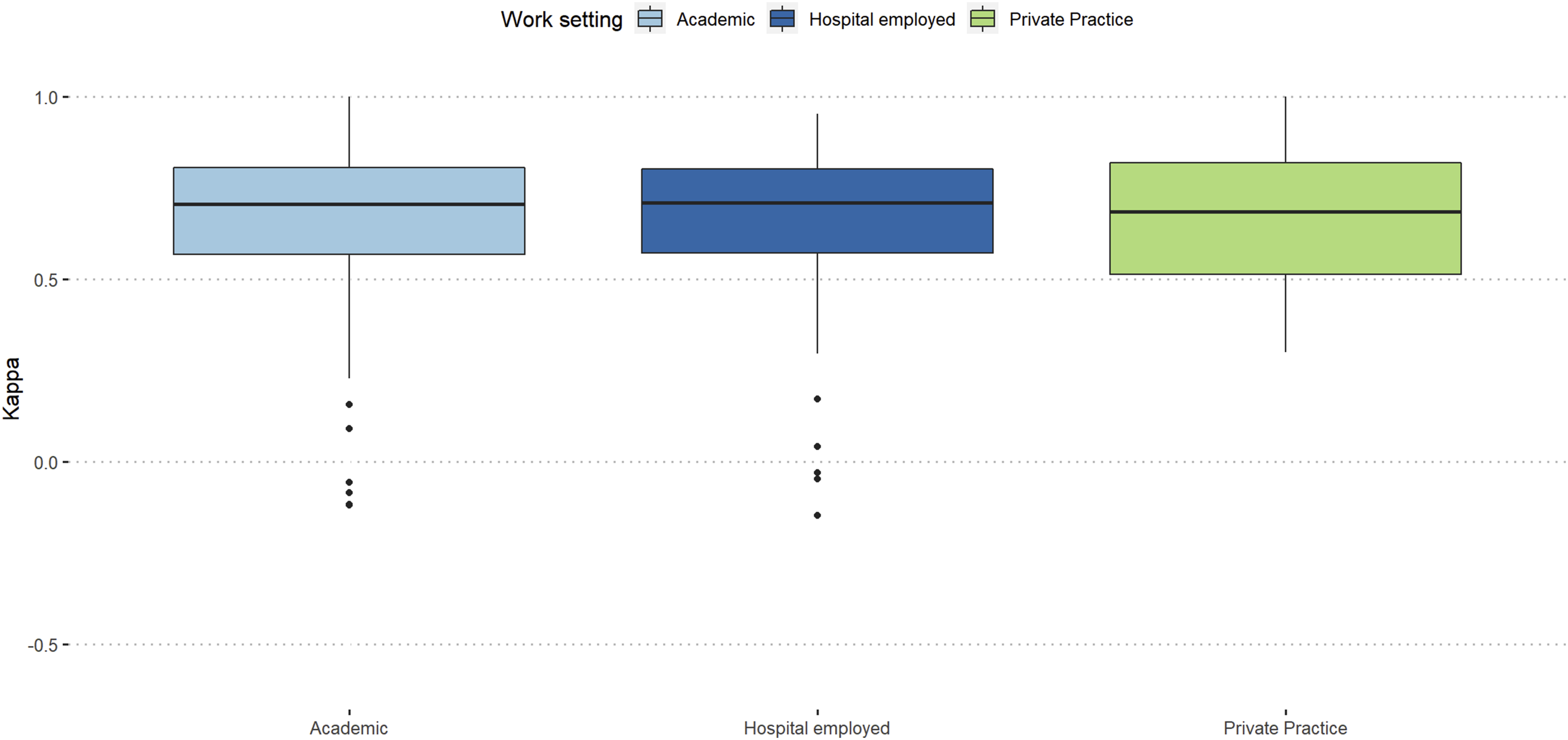

Participants working in a private practice showed the highest agreement in the first assessment (κ = 0.59), while participants working in academia showed the highest agreement in the second assessment (κ = 0.6). Surgeons from private practices showed the highest decrease in agreement between the assessments compared to surgeons with academic background and surgeons employed by a hospital.

Inter-rater Reproducibility (κ) Stratified by Time in Practice, Subspecialty, Trauma Center Level and Work-Setting; AS1 = Assessment 1; AS2 = Assessment 2; NS = Neurosurgery; OSS = Orthopaedic Spine Surgeon; Level IV and No-Trauma Centers Have Been Merged for Statistical Reasons.

Intra-Rater Reproducibility

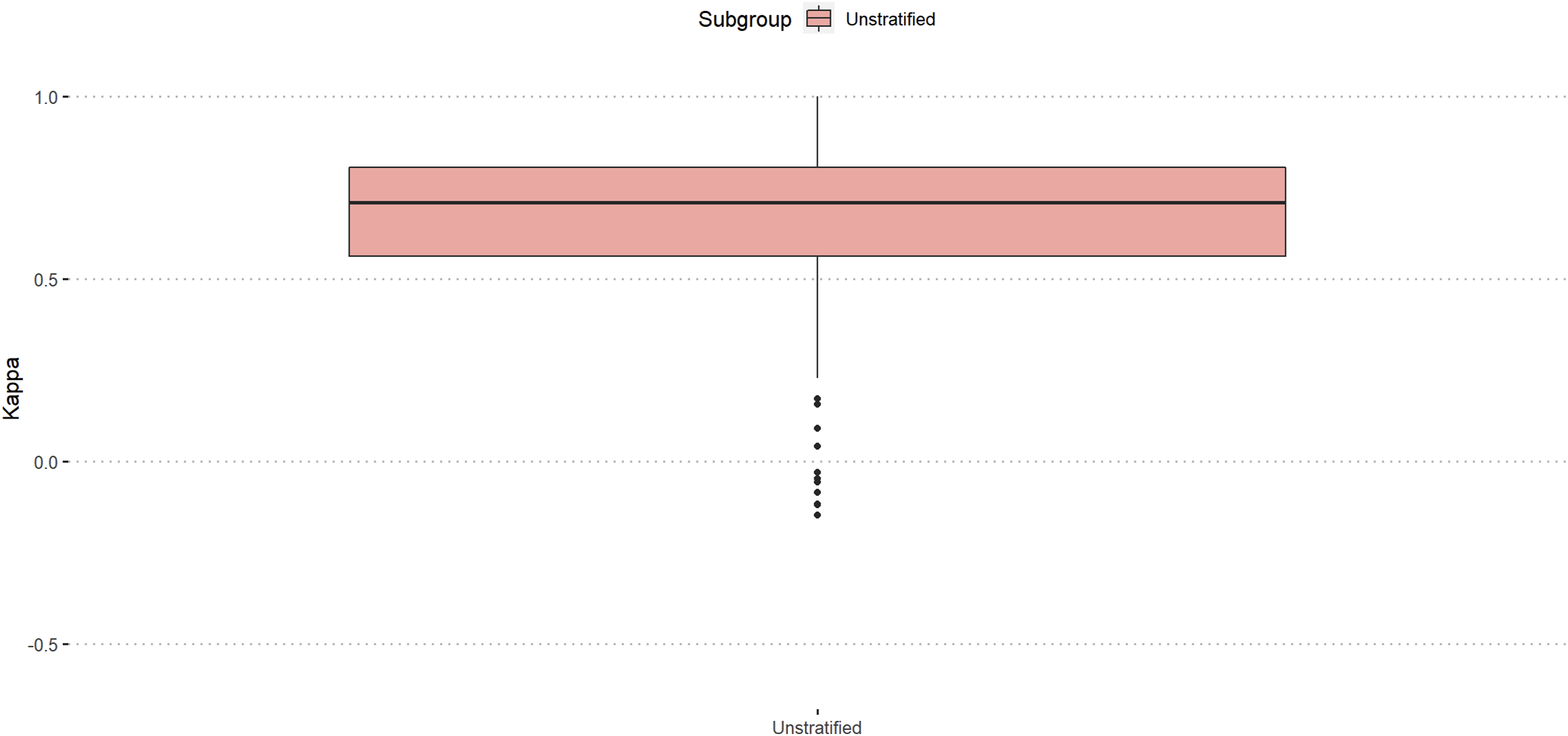

Amongst all participants (n = 234), the median intra-rater reliability was substantial (κ = 071). (Figure 2). Intra-rater reliability amongst all participants, unstratified.

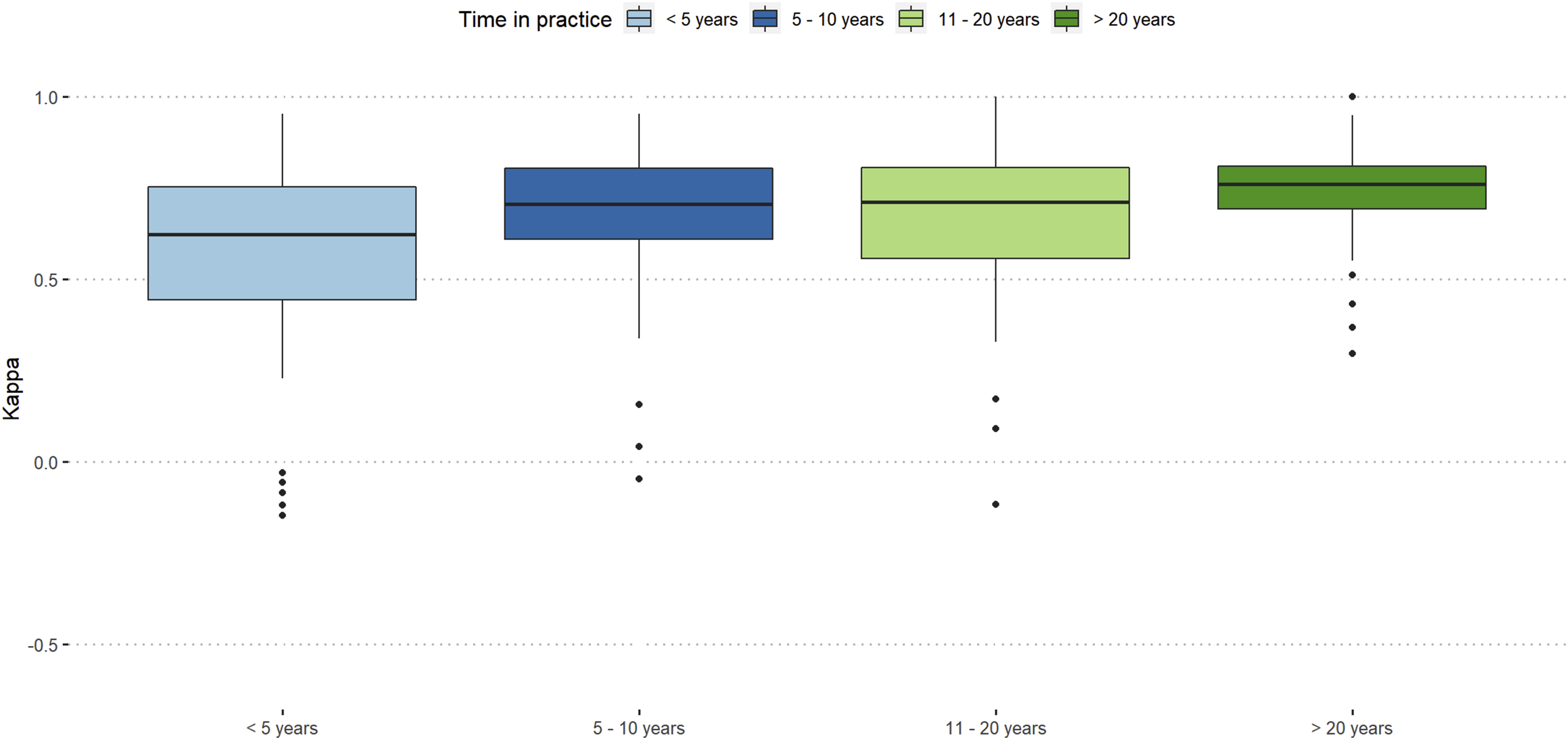

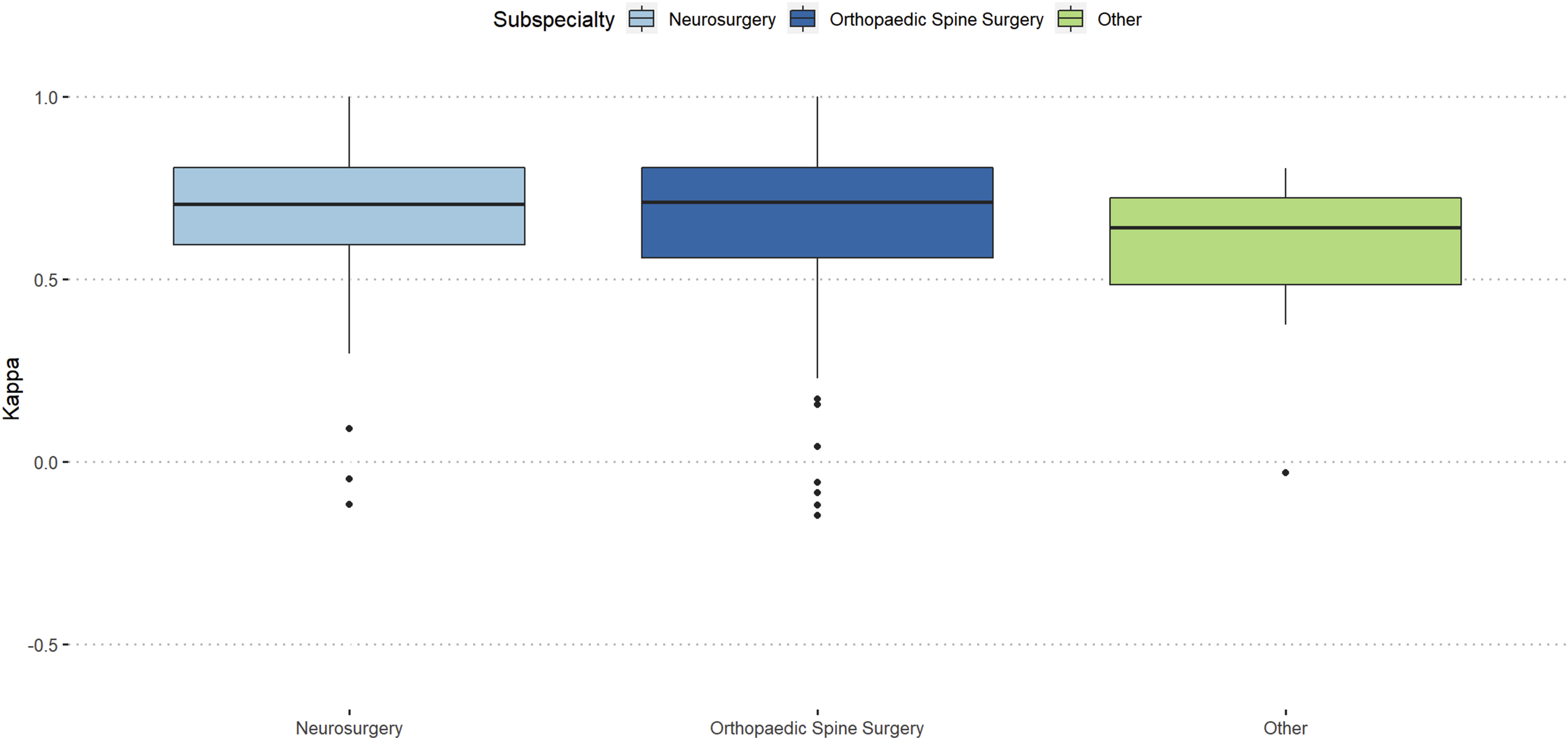

Participants with more than 20 years of work-experience showed almost excellent reproducibility (κ = 0.76) and orthopaedic spine surgeons showed slightly better intra-rater reproducibility than neurosurgeons (κ = 0.71 vs κ = 0.7). (Figures 3 and 4). Intra-rater reliability stratified by work-experience. Intra-rater reliability stratified by surgical specialty.

Participants working at level-3 trauma centers showed the best intra-rater reproducibility (κ = 0.76), and participants from academic background and hospital employed surgeons showed similar intra-rater reproducibility (κ = 0.71). (Figures 5 and 6). Intra-rater reliability stratified by trauma center level. Intra-rater reliability stratified work-setting.

Discussion

The aim of this international validation of the AO Spine-DGOU OF classification was to assess the effect of surgeon`s subspecialty, work-experience, work-setting, and level of trauma center on the reliability and reproducibility of this classification. We assessed several interesting findings.

Comparison With the Gold-Standard

In general, we found a relatively good global agreement with the gold-standard, which was slightly inferior compared to evaluations of other AO Spine classifications.16,17 This phenomenon might be explained due to the relative novelty of the OF-classification. OF-3 fractures were rated the poorest and OF-4 fractures were rated best amongst the participating raters. This might be due to the borderline-nature of OF-3 fractures, which can be either over-interpreted as OF-4 or under-interpreted as OF-2. This has been shown in previous studies assessing only fair agreement in OF-3 fractures.18,19 However, this observation is crucial for refining and enhancing classification systems in future studies and specifically re-defining the OF-3 fracture. More experienced surgeons were significantly more in agreement with the gold-standard of the OF-classification in this evaluation compared to less experienced surgeons, which is similar to the evaluation of the AO Spine Upper Cervical Spine Injury Classification System and the AO Spine sacral classification system but contrary to the evaluation of the AO Spine Thoracolumbar Spine Injury Classification System.11,12,20 The better agreement with the gold-standard in more experienced surgeons might be due to better radiological knowledge which is needed for the OF-classification and favors work-experience. This finding suggests that the implementation of early training of this classification might have a positive effect on rating success. Raters from level-3 trauma centers had a significant learning curve in between the 2 assessments and showed similar agreement with the gold-standard as level-1 trauma centers in the first assessment and even better agreement compared to level-1 centers in the second assessment. The better agreement in raters from level-1 centers might be explained due to general higher case load, however, since the OF classification is imaging based, the better agreement in level-3 centers might be due to the scarcity of available radiologists and that spine surgeons from these centers might be better trained in analyzing acquired imaging without a radiologist at site. A previous study on the reliability of the upper cervical spine injury classification has shown significantly higher agreement with the classification gold-standard in level-1 and level-2/3 trauma centers compared to level-4/no trauma centers, which we were not able to assess in this evaluation. 21 Overall, there was no significant difference regarding work-setting in this evaluation on the agreement with gold-standard, which is inconsistent with evaluation on other AO Spine classifications. 21 Further, we did not assess significant differences between the spine subspecialties regarding overall agreement with the gold-standard, which is consistent with the evaluation on the AO Spine upper cervical spine classification and the AO Spine sacral classification.12,20

Inter-Rater Agreement

Amongst all participants, the global inter-rater reliability was moderate in both assessment (first:κ = 0.57; second:κ = 0.58). The same could be shown within 2 previous studies assessing the inter-rater agreement of this classification amongst 6 surgeons in each study.18,22 Furthermore, we found that our findings are comparable to the previous international validation study of the AO Spine thoracolumbar injury classification system (κ = 0.56). 23

Amongst all participants and subgroups, fractures classified as OF-3 according to the OF classification showed moderate inter-rater agreement in both assessment (κ = 0.44; κ = 0.42), whereas OF-4 fractures produced substantial agreement (κ = 0.68; κ = 0.70) which is a similar finding as the comparison with the gold-standard. Globally, participants with less than 5 years of work-experience showed moderate inter-rater agreement in both assessments (κ = 0.45; κ = 0.47), whereas all other participants showed substantial inter-rater agreement. The best performance was observed within in the group of participants with more than 20 years experience. These findings are similar to an assessment of the AO Spine upper cervical spine classification, however, in this previous assessment, participants with more than 20 years of work experience were not able to reach the threshold of substantial inter-rater agreement at the second assessment. 12 Furthermore, our assessment showed inferior agreements compared to the AO Spine sacral classification, where throughout all experience groups, an excellent agreement was achieved. 20 In an assessment of the AO Spine thoracolumbar injury classification system, participants with more than 20 years of experience showed the poorest inter-rater agreement compared to participants with least experience, which is contrary to our findings. 11 Both, neurosurgeons and orthopaedic spine surgeons showed substantial inter-rater agreement in the second assessment (κ = 0.60), whereas other surgeons showed only fair agreement (κ = 0.37), which is a similar finding to the assessment of the AO Spine upper cervical injury classification. 12 This seems logical, since other specialties such as general surgeons are less frequently in contact with spinal injuries and therefore are in less need of classifying these injuries. However, proper training of non-spine specialties might facilitate a common language between several specialties. Interestingly, only level-1 and level-3 trauma centers showed substantial inter-rater agreement (κ = 0.61 and κ = 0.66) in the second assessment, which is partly in line with an assessment of the AO Spine upper cervical injury classification (κ = 0.66 and κ = 0.58). 21 It must be noted that this previous assessment analyzed level-2 and -3 trauma centers jointly and therefore, this data can be misleading. The reason for the better inter-rater agreement in the level-3 center group might be the same as described for the comparison to gold-standard. In this assessment, participants from an academic background showed the best inter-rater agreement in the second assessment (κ = 0.60), which is in keeping with the assessment of the AO Spine upper cervical injury classification. 21 These findings might be explained by the increased contact with scientific research and therefore classification of participants with an academic background as well as increased contact with acute trauma patients and higher patient load in general.

Intra-Rater Reliability

We assessed a substantial overall intra-rater agreement amongst all participants (κ = 0.71), which reflects the practicality of the OF classification.

Participants with a surgical experience of more than 20 years showed almost excellent reliability (κ = 0.76), which is contrary to the validation of the AO Spine upper cervical injury classification system, where the best intra-rater reliability was achieved by participants with surgical experience of less than 5 years, and also contrary to the validation of the AO Spine thoracolumbar injury classification system, where participants with an experience of 11-20 years achieved the highest reliability.11,12

Similar to the comparison to gold-standard and inter-rater agreement in this study, participants from level-3 trauma centers showed the highest intra-rater reliability (κ = 0.76) which is contrary to other AO Spine classification systems. 21

In our assessment, participants with academic background and participants who were hospital employed, showed the same reliability (κ = 0.71), which is a similar findings compared to the validation of the AO Spine upper cervical injury classification. 21

Orthopaedic spine surgeons showed a slightly better intra-rater reliability (κ = 0.71) than neurosurgeons (κ = 0.70) and others (κ = 0.64), which is in keeping with other previously validated AO Spine classifications.12,17

Limitations

This study has certain limitations. Firstly, there might be a varying degree of familiarity with the OF-classification amongst the raters which results in a classification knowledge-inequality and could result in a potential bias. Furthermore, the sole use of key-images (in some cases) or vice versa might have an impact on the rater`s response. It is likely that some of the reviewers would change their response while assessing a complete imaging file rather than solely key images. Another potential limitation is the inequal frequency of different fracture subtypes and hence, the data on rare subtypes should be interpreted with caution. Additionality, some of the presented images showed multiple fractures which might have caused confusion regarding the fracture to rate, decreasing the reliability rate. However, it was mentioned beforehand, that the most apparent fracture was to rate in order to reduce bias. Furthermore, the members of the AO Spine Knowledge Forum Trauma might have varying degrees of knowledge on interpreting different imaging modalities and familiarity with the presented classification since not all members were involved in the development process, which could result in an inhomogeneous group of raters and could have a negative effect on the inter-rater agreement. Standardized training on imaging and of the OF-classification could limit these potential biases. Further international validation with a larger, more diverse group of surgeons is needed.

Conclusions

Longer work experience was associated with higher inter-rater agreement and intra-rater reliability. This finding may suggest implementing training of the assessed classification in the early stages of the surgical career. Surgical subspecialties other than orthopaedic spine surgeons and neurosurgeons showed inferior ratings, which highlights the necessity of teaching this new classification amongst other none-spine specialties. Level-1 and Level-3 trauma centers showed good results in both, inter-rater agreement, and intra-rater reproducibility. Overall, the AO Spine-DGOU Osteoporotic Fracture Classification showed moderate to substantial inter-rater agreement as well as intra-rater reproducibility regardless of work-setting, surgical experience, level of trauma center and surgical specialty.

Supplemental Material

Supplemental Material - Validation of the AOSpine-DGOU Osteoporotic Fracture Classification – Effect of Surgical Experience, Surgical Specialty, Work-Setting and Trauma Center Level on Reliability and Reproducibility

Supplemental Material for Validation of the AOSpine-DGOU Osteoporotic Fracture Classification – Effect of Surgical Experience, Surgical Specialty, Work-Setting and Trauma Center Level on Reliability and Reproducibility by Julian Scherer, Sebastian Frederick Bigdon, Gaston Camino-Willhuber, Ulrich Spiegl, Andrei Fernandes Joaquim, Harvinder Singh Chhabra, Marcel Dvorak, Gregory Schroeder, Mohammad El-Sharkawi, Richard Bransford, Lorin Michael Benneker, Klaus John Schnake, and on behalf of the AO Spine-DGOU international validation Group in Global Spine Journal

Footnotes

Acknowledgments

The authors of the manuscript would like to thank Olesja Hazenbiller for her assistance in developing the methodology and providing support during the validation. The authors of the manuscript would like to thank Dr Stefan Bentink, Director Data Science and Diagnostics from Staburo GmbH for his assistance with the statistical analysis. We also would like to extend our gratitude to the Osteoporotic Fracture working group of the German Society for Orthopaedics and Trauma (DGOU) for their first draft of the classification system.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was organized and funded by AO Spine through the AO Spine Knowledge Forum Trauma, a focused group of international Trauma experts. AO Spine is a clinical division of the AO Foundation, which is an independent medically-guided not-for-profit organization. Study support was provided directly through AO Network Clinical Research.

AO Spine-DGOU International Validation Group

Maximo-Alberto Diez-Ullloa, Marco Burkhard, Mahmoud, Haribabu Subramaniam, Alsammak Wael, Panneerselvam Keerthivasan, Luis Maria Romero-Munoz, Sokol Trungu, Sebastian F Bigdon, Chee Huan Pan, Banerjee Purnajyoti, Kumar Sharma, Mantu Jain, Ehab Shiban, Risto Todorov, Adetunji Toluse, Juliano Chaves, Maria Celeste Zamora, Satish Muthu, Roberto Berebichez-Fridman, Miltiadis Georgiopoulos, Eduardo Laos, Waeel Hamouda, Hüseyin Sina Coşkun, Margetis Konstantinos, Jaime Segura, Sung-Joo Yuh, Davide Bizzoca, Adesh Shrivastava, Valentin Neuhaus, Nasser Khan, Mengistu G Mengesha, Elena Virginia Colombo, Jagadish Thapa, Michal Ziga, Pragnesh Bhatt, Rohan Gala, Robert Huang, Benito Dario, Arshad Ayesha, Ateef Ataulla Khan, Manjul Tripathi, Luís Marques, Ataollah Shahbandi, Harsh Deora, Luigi Pansini, Arne Mehrkens, Paloma Bas, Federico Sartor, Juan Lourido, Bishnu Dev Sharma, Marcello Barbato, Ricky Rasschaert, Inan Uzunoglu, Mahmoud Alkharsawi, Sergio Zylbersztejn, Dimitrios Ntourantonis, Ikwutah Emmanuel, Parmenion Tsitsopoulos, Selim Ayhan, Francisco Verdu-Lopez, Konstantinos Gousias, Luis Miguel Duchén, Woon Tak Yuh, Nader Hejrati, Peramunugamage Dilanka, Falethu Sukati, Omar Elwardany, Alexander Durst, Rafid Al-Mahfoudh, Eugen Cezar Popescu, Yasunori Sorimachi, Manoj Khatri, André Barros, Konstantinos Sidiropoulos, Reuben Soh, Jose-Carlos Sauri-Barraza, Sayid omar Mohamed, Murray Echt, Andrea Redaelli, Guilherme Godoy, Stipe Corluka, Senan Faruk, Paulo Pereira, David Dalton, Paul Thorpe, Anubhav Amin, Prasad Shetty Ajoy, Tarun Suri, Ratko Yurac, Petr Vachata, Lukas Dominik Burger, Wongthawat Liawrungrueang, Ahmed Hassan, Abduljabbar Alhammoud, Vijay Kumar Loya, Leon-Gordian Koepke, Madhivanan Karthigeyan, Martin Nikolaus Stienen, Bernhard Ullrich, Dave Anthony Dizon, Ahmad Nigad, Mark Murerwa, Erkin Sönmez, Nicolas Lauper, Salvatore Russo, Peter Ferlic, Mohammed Riaz, Michele Parolin, Lady Lozano Cari, Etxeberria Igone, Dilip Gopalakrishnan, Joost Rutges, Richard A Lindtner, Joseph Asuquo, Vito Fiorenza, Peter Loughenbury, Pumibal Wetpiriyakul, Jarkko Halme, Emiliano Vialle, Mario Ganau, Derek Cawley, Juan Gabriel Limpin De Leon, Michel Triffaux, Eran Steinberg, Sanjay Hajare Swapnil, Alvin Pun, Peter Obid, Lincoln Liu, Monsef Jad Bou, Babak Shariati, Nilay Chhasatia, Mohammed Imran, Akash Mohapatra, Sultan Alkalbani, Claudio Lamartina, Omar Marroquin-Herrera, Ralph T. Schär, Giorgio Lofrese, Muñoz M Juan, Shaffaf Kareem, Shah Waliullah, Mateusz M. Weclewicz, Kim-Soon Oh, Mohamed A.R. Soliman, Madhan Jeyaraman, Arulkumar Nallakumarasamy, Antonio Krieger, Stefano Carnesecchi, Charles A Carazzo, Alessandro Ramieri, Pradeep Kafle, Kiran Gurung, Fergus McCabe, Irfan Shujah, Rakesh Kumar, Konstantinos Paterakis, Stacey Darwish, Alecio Barcelos, Kyaw Linn Linn, James Bernatz, Zdenek Klezl, Shukla Devansh, FERAS QUMQUMJI, Elma Suljagic-Ljubovic, Hector Aceituno, Ramy Sherif, Daniel Cruz, Barry Kweh, Kamil Krzysztof Siedlecki, Ugochukwu Uchendu, Javier Altamirano, Najib El Tecle, Takeshi Aoyama, Martin Tejeda, Ravi Sreenivasan, Amitava Biswas, Juan A Uribe, Gumar Davletgaleev, Julian Scherer, Joshua Decruz, PS Khoh, Ivan Marintschev, Chumpon Jetjumnong, Stavridis Stavros I, Paula Valerie Ter Wengel, Pathinathan Kalaventhan, Manuel Tavares de Matos, Jeasson Javier Pérez Rios, Pasquale Donnarumma, Udit Agrawal, Girinivasan Chellamuthu, Yu-Mi Ryang, Christogeorgos Paris, Dan Schneider, Daniel Sauer, Nicola Montemurro, Aravind Ravichandran, Mohammad Elbaroody, Gerardo Zambito-Brondo, Ousmane Toumany Cherif Haidara, Hossein Elgafy, Lykourgos Kollintzas, Koopong Siribumrungwong, Hitesh Dabasia, Pedro Kalo, Sergey Mlyavykh, Philipp Schleicher, Oscar Garcia-Casas, Mike Achor, Nikolaos Gkantsinikoudis, Darko Perovic, Ahmed Ezzat, Giuseppe Francesco Papalia, Zion Hwang, Michael S Hisey, Pedro Luis Bazán, Lukas Grassner, Federico Landriel, Youheng Ou Yang, Zahid Rehan, Hugo Marcelo Wirz, Samy Samer, Victor Martin-Gorgojo, Alon Grundshtein, Dana Niry, Santiago Hem, Thami Benzakour, Raju Reddycherla Naga, Hansdieter Neumann.

ORCID iDs

Data Availability Statement

Data is available upon reasonable request towards the corresponding author.

Supplemental Material

Supplemental material for this article is available online.

References

Supplementary Material

Please find the following supplemental material available below.

For Open Access articles published under a Creative Commons License, all supplemental material carries the same license as the article it is associated with.

For non-Open Access articles published, all supplemental material carries a non-exclusive license, and permission requests for re-use of supplemental material or any part of supplemental material shall be sent directly to the copyright owner as specified in the copyright notice associated with the article.