Abstract

Study Design

Systematic review.

Objective

Artificial intelligence (AI) and deep learning (DL) models have recently emerged as tools to improve fracture detection, mainly through imaging modalities such as computed tomography (CT) and radiographs. This systematic review evaluates the diagnostic performance of AI and DL models in detecting cervical spine fractures and assesses their potential role in clinical practice.

Methods

A systematic search of PubMed/Medline, Embase, Scopus, and Web of Science was conducted for studies published between January 2000 and July 2024. Studies that evaluated AI models for cervical spine fracture detection were included. Diagnostic performance metrics were extracted and included sensitivity, specificity, accuracy, and area under the curve. The PROBAST tool assessed bias, and PRISMA criteria were used for study selection and reporting.

Results

Eleven studies published between 2021 and 2024 were included in the review. AI models demonstrated variable performance, with sensitivity ranging from 54.9% to 100% and specificity from 72% to 98.6%. Models applied to CT imaging generally outperformed those applied to radiographs, with convolutional neural networks (CNN) and advanced architectures such as MobileNetV2 and Vision Transformer (ViT) achieving the highest accuracy. However, most studies lacked external validation, raising concerns about the generalizability of their findings.

Conclusions

AI and DL models show significant potential in improving fracture detection, particularly in CT imaging. While these models offer high diagnostic accuracy, further validation and refinement are necessary before they can be widely integrated into clinical practice. AI should complement, rather than replace, human expertise in diagnostic workflows.

Keywords

Introduction

Cervical spine fractures are critical injuries that can result in significant morbidity and mortality.1,2 Accurate and timely detection of these fractures is essential in clinical practice to prevent complications such as spinal cord injury, paralysis, or death.3 Radiological imaging, particularly computed tomography (CT) and radiographs, plays a pivotal role in diagnosing cervical spine fractures. However, the complexity of cervical spine anatomy, coupled with subtle fracture patterns, can make detection challenging, even for experienced radiologists.4 In recent years, artificial intelligence (AI) and deep learning (DL) have emerged as promising tools to enhance diagnostic accuracy and reduce human error in medical imaging.5,6

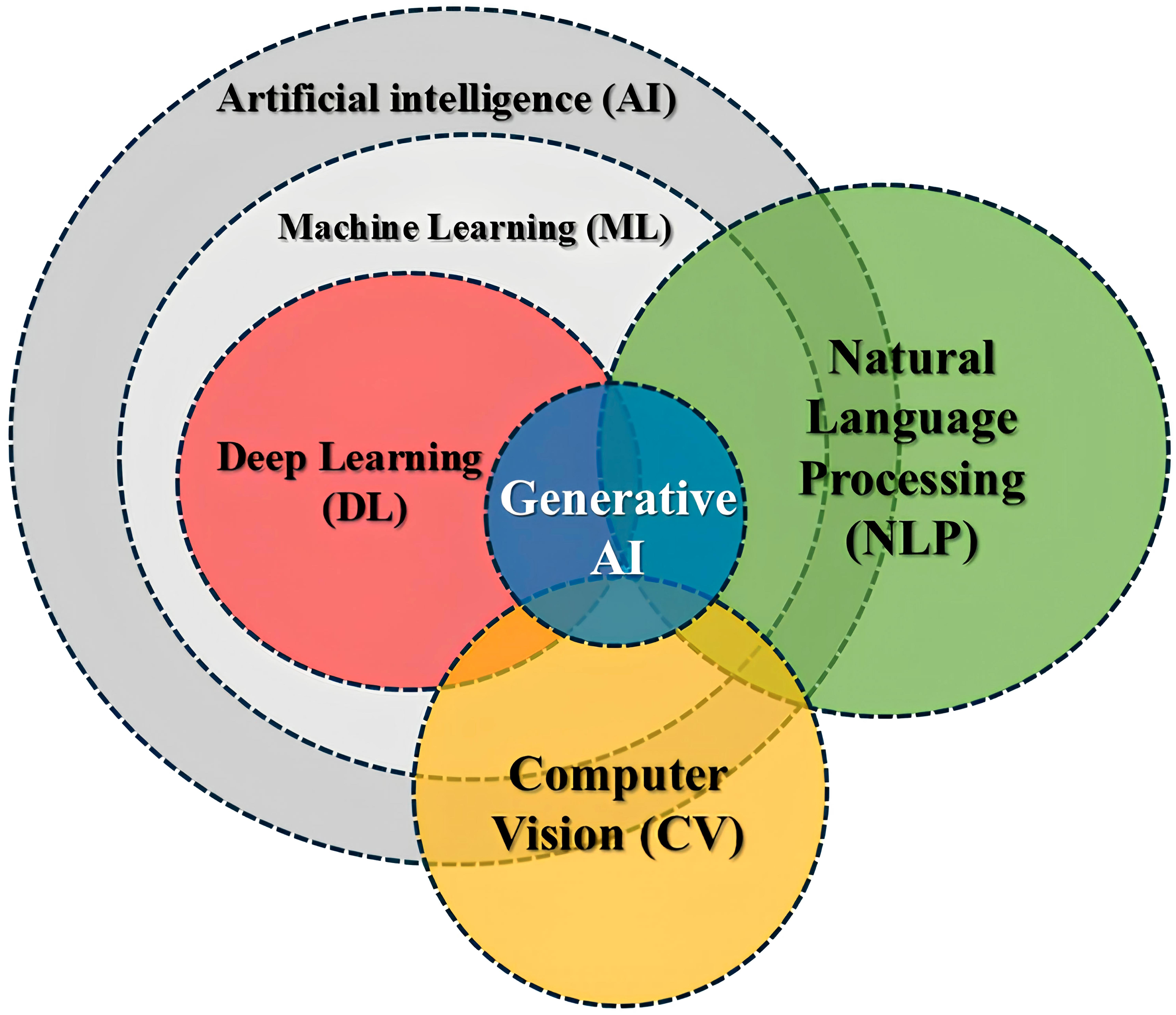

Deep learning, a subset of machine learning, involves training convolutional neural networks (CNNs) and other advanced models to automatically learn features from large datasets (Figure 1). These models have demonstrated remarkable success in image classification tasks across various medical fields, including radiology.7 The application of AI models, specifically CNNs, in cervical spine fracture detection has shown potential to improve diagnostic accuracy, streamline workflows, and assist in clinical decision-making. Despite these advancements, there remains variability in the performance of AI models across different datasets, imaging modalities, and clinical settings, highlighting the need for systematic evaluation.8,9

Figure 1. Synergistic relationships: AI is the overarching field, ML provides foundational learning algorithms within AI, DL enhances ML with deep neural networks, NLP and CV apply ML/DL to language and vision, and generative AI uses DL to create new information.

This systematic review aims to comprehensively analyse the current literature on using AI and deep learning models for cervical spine fracture detection. By evaluating these models’ diagnostic accuracy, object detection performance, and clinical applicability, we seek to elucidate their strengths, limitations, and potential for integration into routine clinical practice. Additionally, we will address the challenges associated with adopting AI in this domain, including the need for external validation, standardized evaluation metrics, and larger datasets to ensure robust model performance across diverse patient populations.

Material and Methods

Literature Search Strategy

A systematic search of four databases (PubMed/Medline, Embase, Scopus, and Web of Science) was conducted to identify studies applying AI and DL models to the detection of cervical spine fractures. The search strategy combined Medical Subject Headings (MeSH) terms and keywords, such as “artificial intelligence,” “deep learning,” “cervical spine fracture,” “fracture detection,” and “diagnostic accuracy.” Boolean operators (AND, OR) were applied to refine the search, and reference lists of relevant articles were manually screened for additional studies. The search covered January 2000 to July 2024 and adhered to the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) guideline.10
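As an illustration of how a Boolean strategy of this kind is composed, the sketch below joins synonyms with OR inside each concept group and joins the groups with AND. The grouping of the keywords into three concepts and the helper name `build_query` are assumptions made for illustration only; the exact search strings used in this review are not reproduced here, and real database syntax (field tags, MeSH qualifiers) differs between platforms.

```python
def build_query(concept_groups):
    """Join synonyms with OR inside each group, then join groups with AND."""
    blocks = []
    for terms in concept_groups:
        quoted = [f'"{term}"' for term in terms]
        blocks.append("(" + " OR ".join(quoted) + ")")
    return " AND ".join(blocks)


# Hypothetical grouping of the keywords named in the search strategy.
ai_terms = ["artificial intelligence", "deep learning"]
fracture_terms = ["cervical spine fracture", "fracture detection"]
outcome_terms = ["diagnostic accuracy"]

query = build_query([ai_terms, fracture_terms, outcome_terms])
print(query)
```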

Study Selection

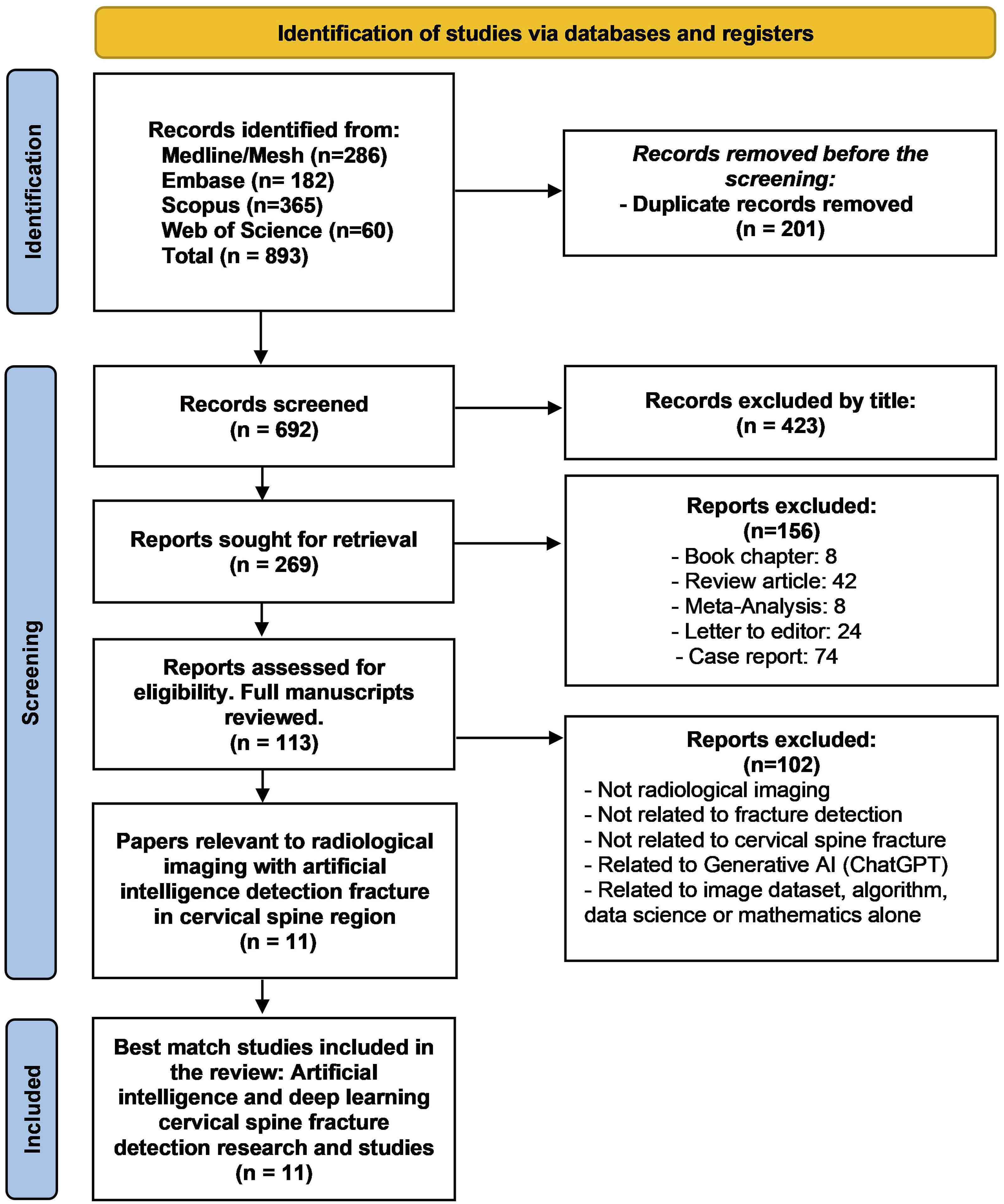

The initial search yielded 893 records (Medline/Mesh: 286, Embase: 182, Scopus: 365, Web of Science: 60). After removing 201 duplicate records, 692 studies were screened based on titles and abstracts. A total of 423 records were excluded, leaving 269 reports for further evaluation. These reports were reviewed for eligibility, and 113 full-text articles were assessed.

Studies were included if they met the following criteria: the population consisted of patients with suspected or confirmed cervical spine fractures; the intervention was an AI or DL model for cervical spine fracture detection; the comparator was radiologists or expert labels; the outcome was diagnostic accuracy metrics; and the study design was a diagnostic study (prospective, retrospective, or randomised controlled trial) evaluating the performance of AI models. Studies were excluded if they did not involve cervical spine fracture detection, discussed generative AI models (such as ChatGPT) unrelated to radiology, or focused solely on image dataset algorithms, data science, or mathematical models. Case reports, reviews, meta-analyses, studies without available abstracts or full texts, and non-English language articles were also excluded.

Two reviewers conducted the study selection process independently, with discrepancies resolved through discussion and, if necessary, by consultation with a third reviewer. The study selection process is visualized in the PRISMA flow diagram.
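The record counts reported in this review can be checked with simple arithmetic. The short sketch below recomputes each stage of the flow from the figures given in the text (the counts of 102 excluded and 11 included full-text articles are those reported in the Results); the variable names are illustrative. The text does not break down the step from 269 reports to 113 full-text articles, so the script only reports that difference without attributing a reason to it.

```python
# Record counts as reported in the text of this review.
per_database = {"Medline": 286, "Embase": 182, "Scopus": 365, "Web of Science": 60}

identified = sum(per_database.values())
duplicates_removed = 201
screened = identified - duplicates_removed

excluded_on_title_abstract = 423
reports_remaining = screened - excluded_on_title_abstract

full_text_assessed = 113
full_text_excluded = 102
included = full_text_assessed - full_text_excluded

# Difference between the two reported stages; not itemised in the text.
not_itemised = reports_remaining - full_text_assessed

print("identified:", identified)
print("screened:", screened)
print("reports remaining:", reports_remaining)
print("reports not itemised before full-text assessment:", not_itemised)
print("included:", included)
```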

Data Extraction

Data were extracted by two independent reviewers using a standardized form. The extracted data included study characteristics (author, year, country of origin, sample size, and population demographics), imaging modality (CT or plain radiograph), AI model type (such as CNN, YOLO, and Vision Transformer [ViT]), and performance metrics (sensitivity, specificity, accuracy, recall, F-Score, predictability, and AUC). Any discrepancies between reviewers were resolved through discussion or by consulting a third reviewer.

Risk of Bias Assessment

The quality of included studies was assessed using the Prediction Model Risk of Bias Assessment Tool (PROBAST).11,12 The PROBAST tool evaluated the risk of bias across four domains: participants, predictors, outcomes, and analysis. Each study was rated as having low, high, or unclear risk of bias for each domain. The risk of bias assessment was conducted independently by two reviewers, with discrepancies resolved through discussion or, when necessary, by involving a third reviewer.
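The per-domain ratings can be combined into an overall judgement for each study. The sketch below follows the commonly described PROBAST convention (any high-risk domain gives an overall high rating; otherwise any unclear domain gives an overall unclear rating; otherwise the study is rated low). The function name and the example ratings are hypothetical and are not taken from the assessments in this review.

```python
DOMAINS = ("participants", "predictors", "outcome", "analysis")


def overall_risk(ratings):
    """Combine per-domain ratings ('low', 'high', 'unclear') into one rating."""
    values = [ratings[domain] for domain in DOMAINS]
    if "high" in values:
        return "high"
    if "unclear" in values:
        return "unclear"
    return "low"


# Hypothetical study: one high-risk domain dominates the overall rating.
example_study = {
    "participants": "high",
    "predictors": "low",
    "outcome": "unclear",
    "analysis": "low",
}
print(overall_risk(example_study))
```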

Results

Study Selection

The literature search yielded a total of 893 records from four databases: Medline/Mesh (286), Embase (182), Scopus (365), and Web of Science (60). After removing 201 duplicate records, 692 studies remained for screening. Title and abstract screening excluded 423 articles due to irrelevance, leaving 269 studies for full-text review. Following the application of inclusion and exclusion criteria, 113 full manuscripts were further assessed for eligibility, and 102 studies were excluded for reasons such as a focus on non-radiological imaging, lack of relevance to cervical spine fractures, or dealing with generative AI models unrelated to radiological diagnosis. A total of 11 studies were included in this systematic review. The selection process is shown in the PRISMA flow diagram (Figure 2).

Figure 2. Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) flow diagram for this systematic review.

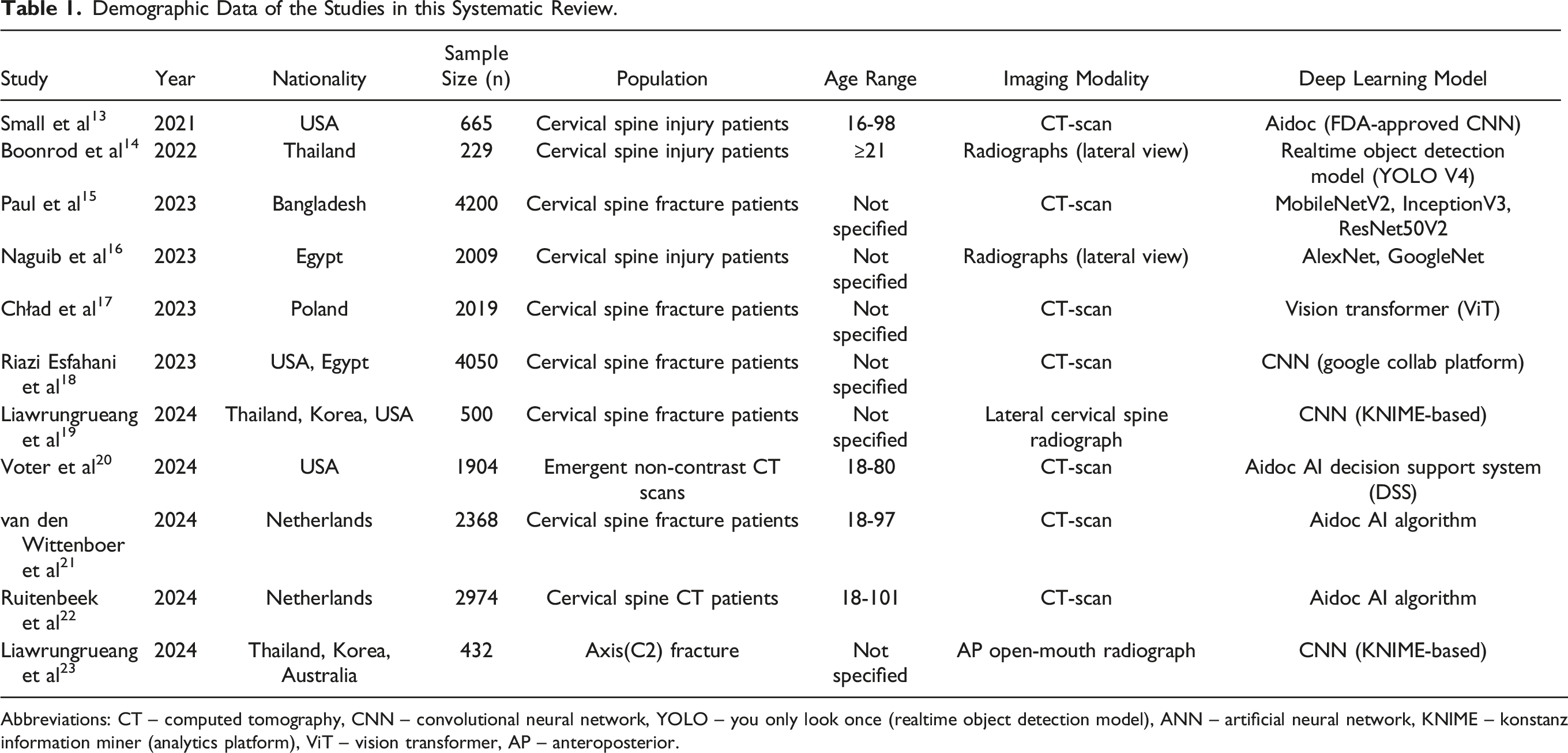

Study Characteristics

Demographic Data of the Studies in this Systematic Review.

Abbreviations: CT – computed tomography, CNN – convolutional neural network, YOLO – You Only Look Once (real-time object detection model), ANN – artificial neural network, KNIME – Konstanz Information Miner (analytics platform), ViT – vision transformer, AP – anteroposterior.

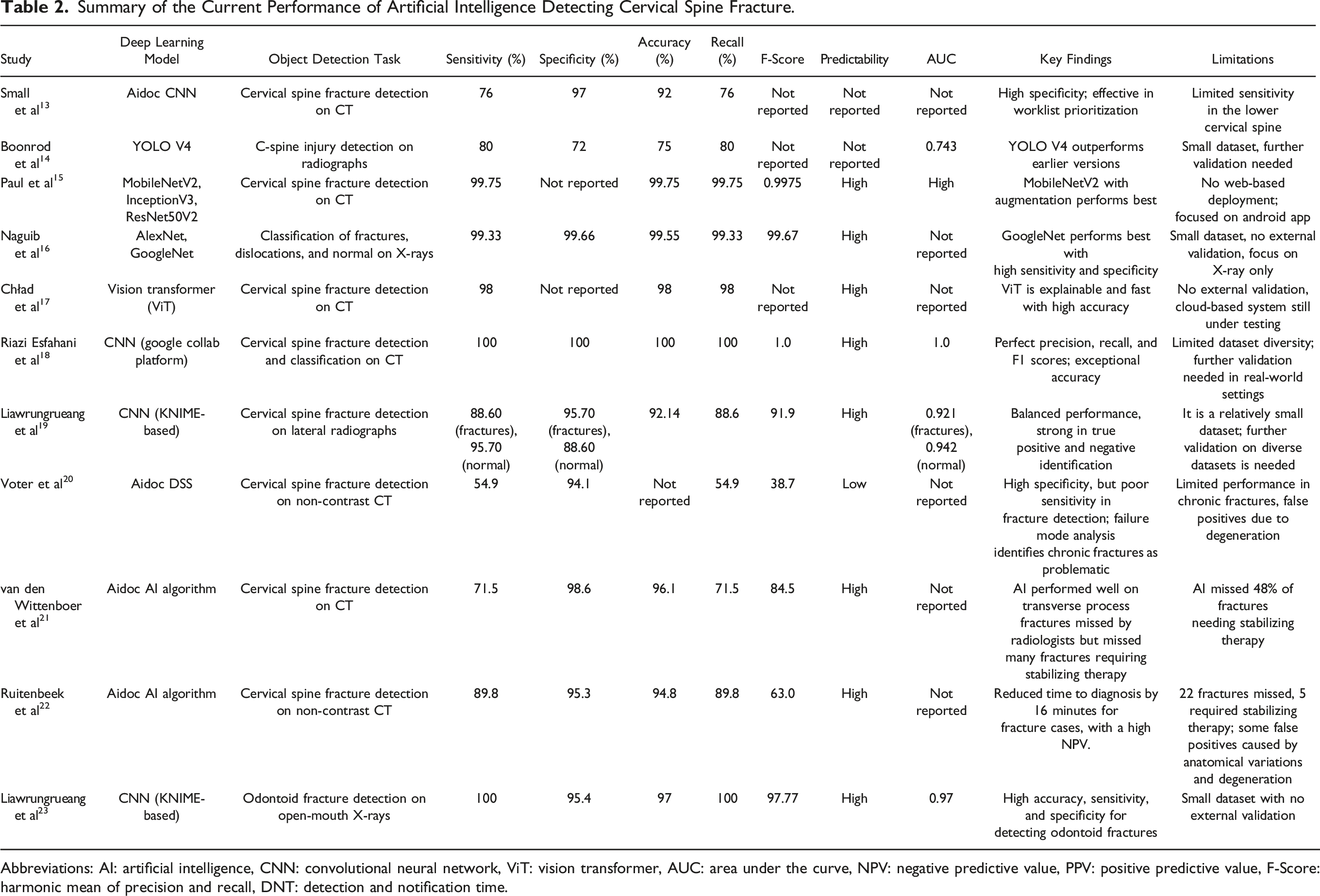

Performance of AI Models

Summary of the Current Performance of Artificial Intelligence Detecting Cervical Spine Fracture.

Abbreviations: AI: artificial intelligence, CNN: convolutional neural network, ViT: vision transformer, AUC: area under the curve, NPV: negative predictive value, PPV: positive predictive value, F-Score: harmonic mean of precision and recall, DNT: detection and notification time.

Sensitivity

Sensitivity, a measure of the model’s ability to correctly identify fractures, ranged widely from 54.9% to 100%. Aidoc DSS showed the lowest sensitivity, underperforming in identifying fractures on non-contrast CT scans, particularly in chronic cases and fractures with complex anatomical variations. Conversely, CNN-based models for CT imaging and odontoid fracture detection achieved 100% sensitivity, showcasing their ability to identify all positive cases without missing fractures. Advanced architectures like GoogleNet and MobileNetV2 consistently reported sensitivity values above 98%, demonstrating their robustness for detecting a variety of cervical spine injuries.

Specificity

Specificity, which measures the ability to correctly identify non-fracture cases, was generally high but varied across studies, ranging from 72% (YOLO V4) to 98.6% (Aidoc Medical AI). Aidoc Medical AI excelled in avoiding false positives, particularly for CT-based fracture detection, while YOLO V4, used on radiographs, faced challenges with specificity due to limited dataset diversity and radiographic artifacts.

Accuracy

Overall accuracy values ranged from 75% to 100%, with models like YOLO V4 at the lower end, reflecting its limited performance on radiographs, and CNN-based approaches achieving perfect scores on CT imaging. Models like MobileNetV2 and Vision Transformers consistently delivered accuracy above 98%, demonstrating their capability to generalize effectively across diverse datasets and imaging scenarios.
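Sensitivity, specificity, and accuracy are all derived from the same confusion matrix of true and false positives and negatives. The following minimal sketch shows the standard definitions; the counts are hypothetical and do not correspond to any study in this review.

```python
def confusion_metrics(tp, fp, tn, fn):
    """Return sensitivity, specificity, and accuracy from confusion-matrix counts."""
    sensitivity = tp / (tp + fn)          # fractures correctly flagged
    specificity = tn / (tn + fp)          # non-fractures correctly cleared
    accuracy = (tp + tn) / (tp + fp + tn + fn)
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "accuracy": accuracy,
    }


# Hypothetical test set: 50 scans with a fracture, 200 without.
metrics = confusion_metrics(tp=45, fp=10, tn=190, fn=5)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

Because accuracy pools both classes, it is dominated by the larger class when fractures are rare, which is one reason sensitivity and specificity are reported separately.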

Recall

Recall, synonymous with sensitivity in this context, varied similarly across studies. High recall values were evident in top-performing models like GoogleNet, MobileNetV2, and CNNs trained for specific fracture detection tasks, indicating their reliability in minimizing false negatives.

F-Score

F-Score, the harmonic mean of precision and recall, offered a balanced perspective on model performance. CNN-based approaches for odontoid fracture detection achieved F-Scores as high as 97.77, reflecting their ability to maintain strong performance across both precision and recall metrics. This is critical for clinical applications where both true positive identification and minimizing false positives are essential.
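To make the harmonic mean concrete, the sketch below computes precision, recall, and the F-Score (F1) from hypothetical counts that are not drawn from any included study. It also computes the arithmetic mean for comparison, since the harmonic mean is pulled towards the weaker of the two components.

```python
def f_score(tp, fp, fn):
    """Return (precision, recall, F1) from true/false positive and false negative counts."""
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Hypothetical counts for illustration only.
precision, recall, f1 = f_score(tp=45, fp=10, fn=5)
arithmetic_mean = (precision + recall) / 2

print(f"precision={precision:.3f} recall={recall:.3f}")
print(f"F1={f1:.3f} arithmetic mean={arithmetic_mean:.3f}")
```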

Predictability

Most studies reported high predictability, indicating consistent model performance across datasets. Models like MobileNetV2 and GoogleNet demonstrated strong generalizability, crucial for deployment in clinical settings where robustness across diverse patient populations is required.

AUC

AUC values, which measure the overall discriminative ability of the models, ranged up to 1.0 in CNN-based studies, highlighting their capacity to differentiate between fracture and non-fracture cases effectively. This metric underscores the potential of AI tools to support clinical decision-making by reducing diagnostic uncertainty.
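AUC can be read as the probability that a randomly chosen fracture case receives a higher model score than a randomly chosen non-fracture case, with ties counted as half. The sketch below computes it directly from that pairwise definition; the scores are hypothetical, and the function name `pairwise_auc` is illustrative rather than taken from any included study.

```python
def pairwise_auc(fracture_scores, normal_scores):
    """AUC as the fraction of (fracture, normal) pairs ranked correctly; ties count 0.5."""
    wins = 0.0
    for pos in fracture_scores:
        for neg in normal_scores:
            if pos > neg:
                wins += 1.0
            elif pos == neg:
                wins += 0.5
    return wins / (len(fracture_scores) * len(normal_scores))


# Hypothetical model scores (higher means "more likely fracture").
overlapping = pairwise_auc([0.9, 0.8, 0.4], [0.7, 0.3, 0.2, 0.1])
separated = pairwise_auc([0.9, 0.8], [0.2, 0.1])

print(f"overlapping scores: AUC={overlapping:.3f}")
print(f"fully separated scores: AUC={separated:.3f}")
```

An AUC of 1.0, as reported in some CNN-based studies, corresponds to the fully separated case, in which every fracture case scores above every non-fracture case in the test set.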

CT vs Radiographs

AI models applied to CT scans consistently outperformed those applied to radiographs, with higher accuracy, sensitivity, and specificity. The detailed anatomical imaging provided by CT likely contributed to these better outcomes. This was particularly evident in studies like Riazi Esfahani et al 18 and Paul et al, 15 where CT-based models achieved near-perfect diagnostic performance.

Model Variability

The review found significant variability in the performance of different AI models. While some models, such as MobileNetV2 and ViT, demonstrated strong performance metrics, others, such as YOLO V4, had lower specificity and struggled with the complexity of detecting fractures in lateral radiographs. This variability suggests that the choice of model architecture and training dataset greatly influences performance.

Training Dataset Size and Quality

Studies with larger datasets, such as those by Paul et al 15 with 4200 patients and Riazi Esfahani et al 18 with 4050 patients, reported better performance metrics across all evaluation criteria. In contrast, studies with smaller sample sizes, such as Boonrod et al 14 with 229 participants, showed reduced performance, emphasizing the importance of large, diverse datasets for training AI models.
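Sample size also affects how precisely a metric is estimated. As a hedged illustration, the sketch below computes a Wilson score interval for the same observed sensitivity at two hypothetical numbers of fracture cases. The numbers are invented for illustration, and the included studies did not necessarily report intervals of this kind.

```python
import math


def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a proportion (z=1.96 gives roughly 95% coverage)."""
    p = successes / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return centre - half_width, centre + half_width


# Same observed sensitivity (90%) with few versus many fracture cases.
small = wilson_interval(successes=18, n=20)
large = wilson_interval(successes=360, n=400)

print(f"n=20:  {small[0]:.3f} to {small[1]:.3f}")
print(f"n=400: {large[0]:.3f} to {large[1]:.3f}")
```

The interval for the smaller sample is several times wider, so an identical headline sensitivity carries much less certainty when it rests on few fracture cases.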

Clinical Implications

Several studies, including Voter et al 20 and van den Wittenboer et al, 21 highlighted the potential for AI models to assist radiologists in fracture detection, particularly in high-volume trauma settings. These models can prioritize urgent cases and reduce diagnostic delays. However, concerns remain about the clinical deployment of these models, especially those with lower sensitivity.

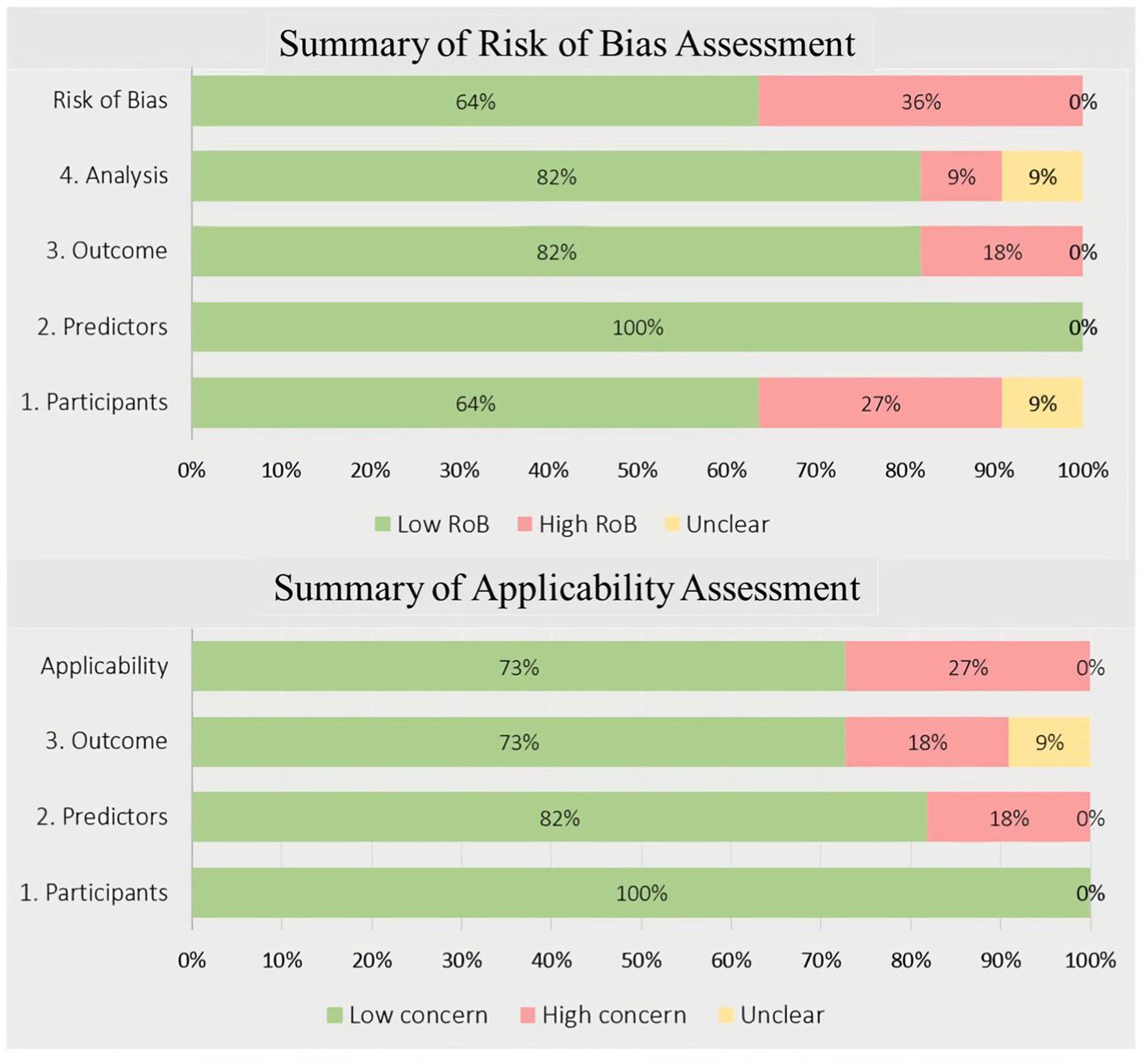

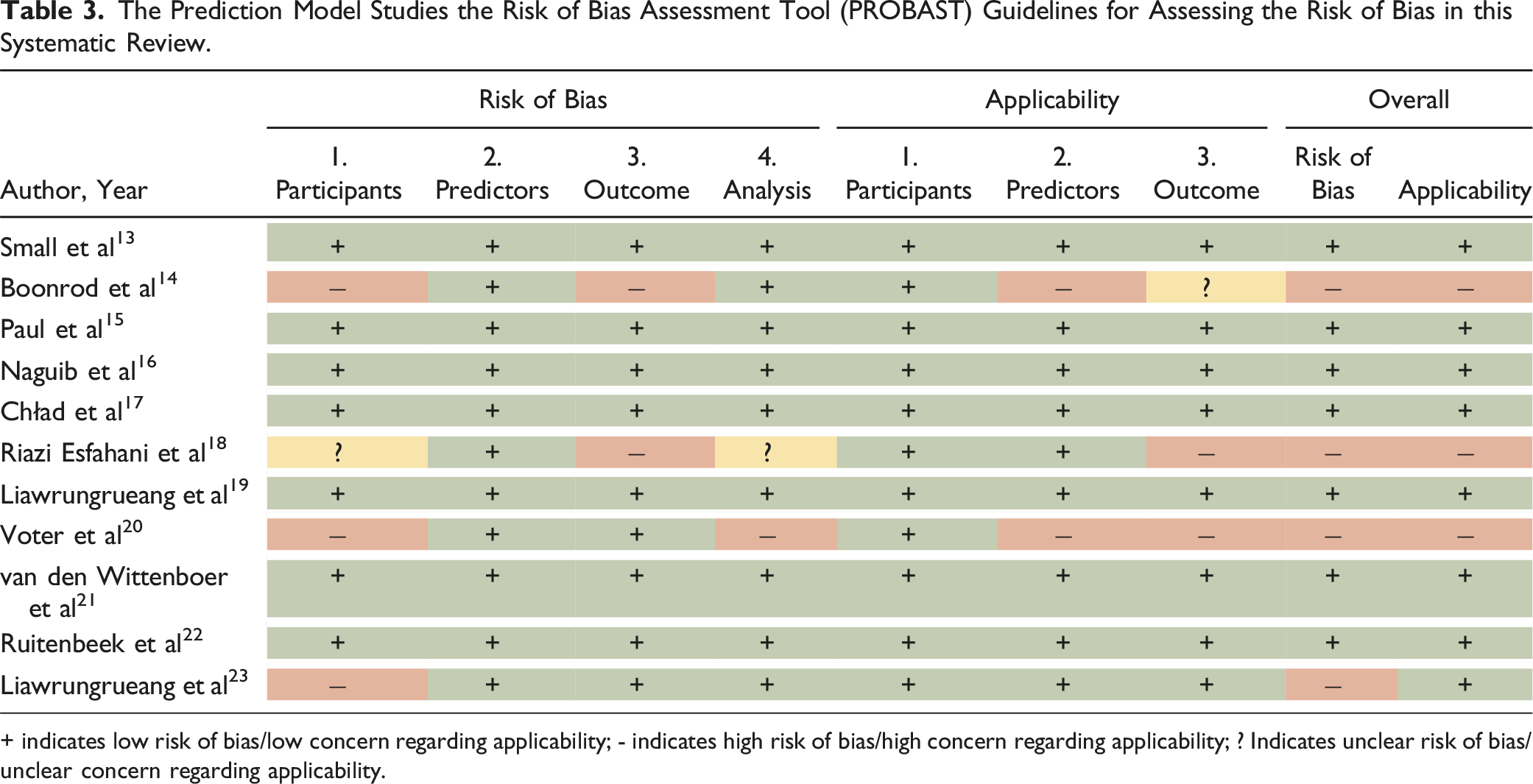

Risk of Bias

The risk of bias was assessed using the PROBAST tool.11,12 Most studies were rated as having a low risk of bias. However, three studies (Boonrod et al, 14 Voter et al, 20 and Riazi Esfahani et al 18) exhibited a higher risk of bias in the participant selection and predictor domains. These studies used small datasets or datasets lacking diversity, which may limit the generalizability of their findings. Boonrod et al 14 relied heavily on a single-site dataset, while Voter et al 20 focused on emergent CT scans, which may not fully represent the broader patient population. Results from the PROBAST assessment are presented in Figure 3 and Table 3.

Figure 3. Summary of the risk of bias assessment and applicability using the Prediction Model Risk of Bias Assessment Tool (PROBAST).

Table 3. The Prediction Model Risk of Bias Assessment Tool (PROBAST) Guidelines for Assessing the Risk of Bias in this Systematic Review. + indicates low risk of bias/low concern regarding applicability; - indicates high risk of bias/high concern regarding applicability; ? indicates unclear risk of bias/unclear concern regarding applicability.

Discussion

This systematic review assesses the diagnostic performance of AI and DL models in detecting cervical spine fractures based on 11 studies published between 2021 and 2024. These studies demonstrated significant variation in diagnostic outcomes, with sensitivity ranging from 54.9% to 100% and specificity between 72% and 98.6%. The review highlights that AI models applied to CT imaging generally performed better than those applied to radiographs, likely due to the superior anatomical detail provided by CT imaging. The superior performance of CNN and more advanced models like MobileNetV2 24 and ViT 25 demonstrates that AI has the potential to significantly improve diagnostic accuracy and efficiency in clinical settings. Studies with larger datasets, such as Paul et al 15 and Riazi Esfahani et al, 18 reported near-perfect accuracy and sensitivity, highlighting the importance of comprehensive and diverse training datasets in optimizing model performance. However, variability in model outcomes across studies indicates the need for further refinement, particularly in terms of external validation and standardized evaluation metrics, to ensure the generalizability and reliability of these models in clinical practice.

AI integration into clinical workflows offers several potential advantages. In particular, the application of AI in radiology can augment diagnostic processes by providing automated decision support, improving diagnostic speed, and reducing human error. One of the most immediate and impactful ways AI can be integrated into clinical practice is through worklist prioritisation. AI models, such as the Aidoc AI Decision Support System (DSS), 26 can be embedded in radiology information systems (RIS) and picture archiving and communication systems (PACS) to triage cases based on the likelihood of fractures. 27 This approach allows urgent cases to be flagged for immediate review, improving the efficiency of radiological workflows, especially in high-volume trauma centers where quick identification of fractures is critical. Another key area for AI integration is real-time decision support. AI models can provide instant feedback to radiologists by highlighting regions of interest (ROIs) or suspicious areas on imaging scans. This serves as a second check, helping radiologists focus on potential fracture sites. This assistance is particularly valuable in complex cases, where subtle fractures may be easily overlooked, or in high-stress environments such as emergency departments, where rapid decision-making is paramount. Integrating AI directly into PACS would minimize workflow disruptions by allowing radiologists to interact with AI-driven insights without needing to switch between different systems.
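The worklist prioritisation described above can be sketched as a priority queue ordered by the model's estimated fracture probability. This is a minimal illustration under stated assumptions, not a description of how Aidoc or any commercial system works: the study identifiers, the probabilities, and the function name `prioritise` are hypothetical, and ties are broken by arrival order.

```python
import heapq


def prioritise(studies):
    """Order study IDs from highest to lowest fracture probability.

    `studies` is a list of (study_id, probability) in arrival order.
    Ties keep arrival order, so earlier scans are read first.
    """
    heap = [(-prob, arrival, study_id)
            for arrival, (study_id, prob) in enumerate(studies)]
    heapq.heapify(heap)
    ordered = []
    while heap:
        _, _, study_id = heapq.heappop(heap)
        ordered.append(study_id)
    return ordered


# Hypothetical incoming CT studies with model-estimated fracture probabilities.
incoming = [("CT-001", 0.12), ("CT-002", 0.91), ("CT-003", 0.47), ("CT-004", 0.91)]
reading_order = prioritise(incoming)
print(reading_order)
```

In this design every study still reaches the radiologist; the model only changes the reading order, which is consistent with AI complementing rather than replacing human review.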

AI can also play an essential role in training and education. Radiology trainees and early-career clinicians could benefit from AI-assisted tools that provide instant feedback on image interpretation. AI models could help trainees learn to identify subtle fracture patterns, especially in less commonly encountered cases. This could be particularly valuable in low-resource settings or hospitals with limited access to advanced imaging modalities like CT or MRI, where clinicians might rely more heavily on radiographs. 28 AI can help bridge the gap between experienced and less experienced radiologists by continuously learning from diverse datasets and improving over time as more data are fed into these models. Another promising area for AI integration is interdisciplinary use. AI can assist radiologists and other clinicians involved in trauma care, such as emergency room physicians, trauma surgeons, and orthopaedic specialists. For instance, non-radiologists could use AI models as preliminary screening tools to identify patients at high risk of cervical spine fractures in emergency situations. This is particularly valuable in settings where access to radiologists may be delayed, such as rural or under-resourced hospitals. By providing AI-driven preliminary assessments, these models could assist in triaging patients and guiding immediate clinical decision-making before radiological confirmation is available.

Study design is critical for optimizing AI performance in fracture detection. Prospective, multi-center studies using large, diverse datasets are essential for ensuring that AI models can generalize across varied patient populations and clinical settings. Research on AI-based fracture detection should therefore focus on larger, more diverse datasets, particularly those from multi-center studies, to improve reliability. The findings also underline the importance of CT scans, which consistently demonstrate superior performance compared to radiographs in fracture detection. The detailed anatomical information provided by CT imaging allows for more accurate and reliable fracture identification, making it the preferred modality for AI-based fracture detection in cervical spine trauma.

For AI to be fully integrated into routine clinical practice, there are important considerations regarding regulatory approval and ethical deployment. AI systems that influence clinical decision-making must undergo rigorous validation and be approved by regulatory agencies such as the U.S. Food and Drug Administration (FDA) or the European Medicines Agency (EMA). 29 The development and deployment of AI systems must be transparent, ensuring that they are explainable to clinicians and that their decision-making processes are interpretable. One concern with AI is the “black box” problem, where the underlying reasoning behind AI outputs is unclear. 30 Clinicians must be able to trust and understand the outputs provided by AI models to incorporate them into their practice confidently. In addition to these considerations, AI models should be continuously monitored and updated to ensure ongoing accuracy and relevance. Models trained on historical datasets may become outdated as new diagnostic criteria, imaging techniques, or population health trends emerge. Establishing a feedback loop that allows AI systems to learn from newly acquired data can improve the long-term effectiveness of these tools. Furthermore, AI integration should include mechanisms for real-time performance monitoring, ensuring that these systems maintain high diagnostic accuracy as they encounter new and diverse patient populations.
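One simple form that such performance monitoring could take is a rolling check of sensitivity over the most recent radiologist-confirmed fracture cases. The sketch below is an assumption-laden illustration: the class name `SensitivityMonitor`, the window size, and the alert threshold are all hypothetical choices and are not recommendations from the included studies.

```python
from collections import deque


class SensitivityMonitor:
    """Track model sensitivity over the last `window` confirmed fracture cases."""

    def __init__(self, window=50, threshold=0.90):
        self.outcomes = deque(maxlen=window)  # True if the model flagged the fracture
        self.threshold = threshold

    def record(self, model_flagged):
        """Record one confirmed fracture case and whether the model detected it."""
        self.outcomes.append(bool(model_flagged))

    def sensitivity(self):
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def needs_review(self):
        """True when rolling sensitivity has dropped below the threshold."""
        current = self.sensitivity()
        return current is not None and current < self.threshold


# Hypothetical stream: four detected fractures followed by two missed ones.
monitor = SensitivityMonitor(window=5, threshold=0.80)
for flagged in [True, True, True, True, False]:
    monitor.record(flagged)
before = monitor.needs_review()

monitor.record(False)  # oldest case leaves the window
after = monitor.needs_review()
print(before, after)
```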

Despite these promising developments, AI models are not ready to fully replace radiologists. The variability in model performance, particularly in cases where sensitivity is suboptimal, such as the Aidoc AI Decision Support System’s (DSS) lower sensitivity for detecting chronic fractures, highlights the need for further refinement before these models can be deployed confidently in routine clinical practice. 26 Several limitations were identified in the studies included in this review. Most studies were retrospective, which may introduce selection bias and limit the real-world applicability of AI models. Additionally, a lack of external validation was noted in several studies, such as Boonrod et al 14 and Liawrungrueang et al, 23 raising concerns about the generalizability of these models to broader patient populations. Furthermore, many included studies relied on single-centre datasets, which may not adequately represent the diversity of imaging conditions in everyday clinical practice.

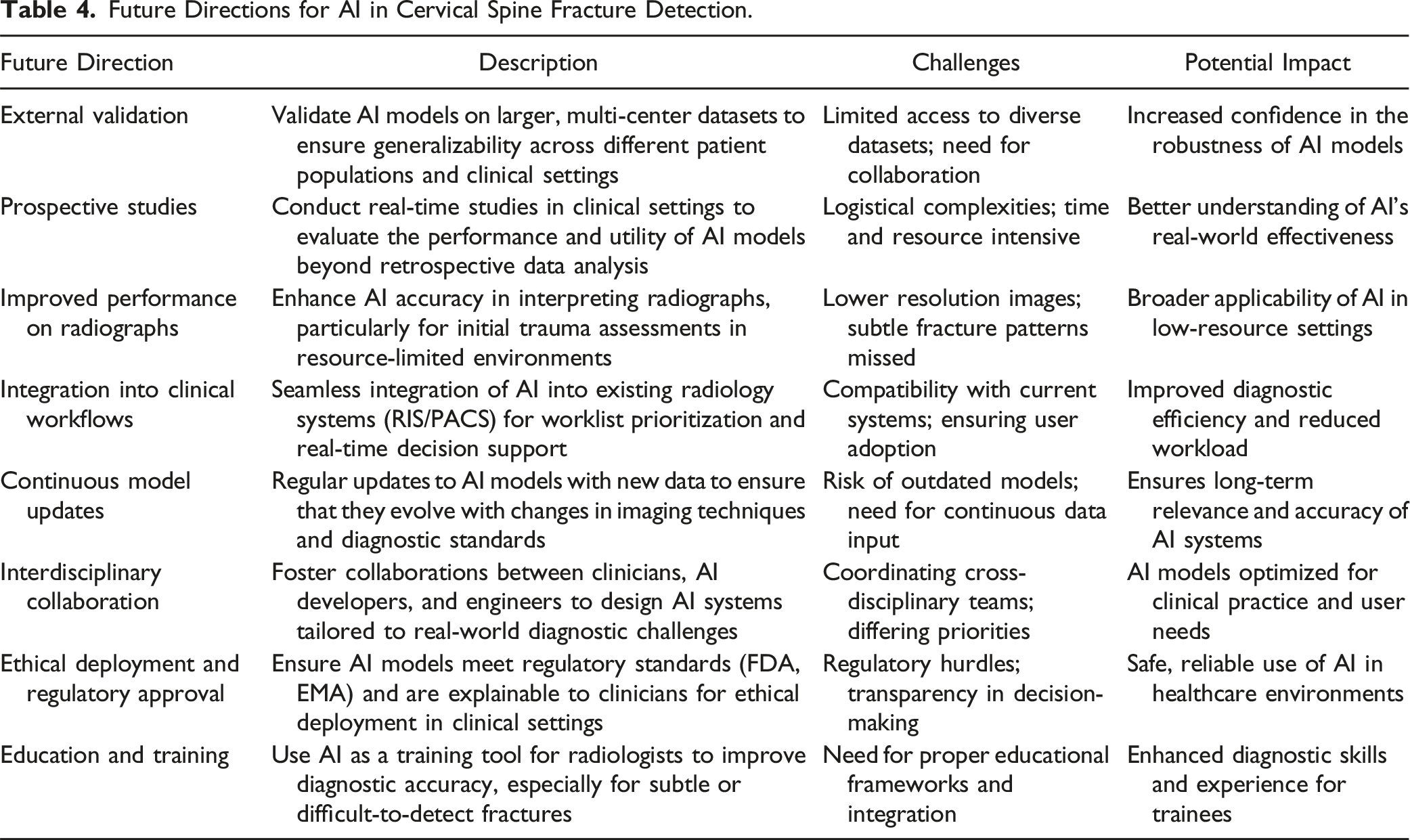

Future Directions for AI in Cervical Spine Fracture Detection.

Conclusion

This systematic review demonstrates that AI and deep learning models, particularly CNN and advanced architectures like MobileNetV2 and ViT, show significant potential in detecting cervical spine fractures, especially in CT imaging. These models can enhance diagnostic accuracy and streamline clinical workflows, offering valuable support to radiologists. However, challenges remain, including variability in performance across imaging modalities and a lack of external validation in many studies. Future work must focus on refining AI models, validating them in diverse clinical settings, and ensuring they complement human expertise. With further development, AI has the potential to play a critical role in improving the detection of cervical spine fractures.

Footnotes

Acknowledgments

The authors would like to thank the Thailand Science Research and Innovation Fund (Fundamental Fund 2025, Grant No. 5025/2567) and the School of Medicine, University of Phayao.

Author Contributions

Conceptualization: W Liawrungrueang, Data curation: W Liawrungrueang, W Cholamjiak, A Promsri, P Sarasombath, Formal analysis: W Liawrungrueang, P Sarasombath, Funding acquisition: W Liawrungrueang, Methodology: W Liawrungrueang, Project administration: W Liawrungrueang, Visualization: W Liawrungrueang, W Cholamjiak, A Promsri, V Kotheeranurak, K Jitpakdee, S Sunpaweravong, P Sarasombath, Writing - original draft: W Liawrungrueang, P Sarasombath, Writing - review & editing: W Liawrungrueang, P Sarasombath.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

IRB Statement

The study was conducted according to the guidelines of the Declaration of Helsinki and approved by the Institutional Review Board of Phayao University Hospital (Approval No. HREC-UP-HSST 1.1/046/67).