Abstract

This is an important critical reassessment of the proliferating publication trend of “systematic reviews,” seen not just in spine surgery but across all medical specialties. With the advent of the “evidence-based medicine” era, the traditional hierarchy of evidence, which had historically placed prospective randomized clinical trials at the very pinnacle of the evidence pyramid, was rearranged in favor of “well-performed” systematic reviews (SRs) and meta-analyses (MAs). 1 Because they pool and synthesize the results of many studies, these undertakings can offer a statistically more powerful overview of the literature while potentially reducing the bias almost invariably introduced in single studies. 2,3 Undeniably, SRs and MAs have become essential foundations for guidelines (eg, National Institute for Health and Care Excellence [NICE] guidelines), and they are the “go-to” first resource for government agencies and granting bodies alike. For future authors, such studies have also become a welcome entry point for a deeper dive into their own research projects.

By now, the dramatic proliferation of SRs and MAs has become a well-reported phenomenon, and a problem, in the scientific publication world. 4 As Fontelo and Liu 4 reported, the United States has shown a linear rise in medical SRs, crossing the 1000-publication threshold in 1996 and, by 2015, contributing just shy of 10 000 such studies per year to the peer-reviewed literature, for a total of >82 000 publications. In contrast, the People’s Republic of China (PRC) has shown a sudden rise since 2010 and is now second only to the United States in the number of SR publications, with 21 000 publications as of 2015. In the arena of MAs, the PRC now leads the world in annual output with almost 4000 per year (15 345 total), ahead of the United States with just below 2500 per year (16 581 total). Again, the PRC publication profile took a sudden, sharp upward turn after 2009 and has continued to climb steeply since then. 4

There are several well-recognized reasons for the appeal of SRs and MAs. If done well, they hold the potential to provide a “state of the art” overarching assessment of the body of literature on a given topic. Well-done or unique SRs and MAs promise a ready bounty of citations, especially if published early on a novel or hot-button topic. Frankly speaking, they also made it possible to publish, even in major scientific journals, from a connected desktop workstation by searching various freely accessible search engines, without having to involve institutional review boards or contend with the increasingly prohibitive cost and rigmarole of de novo clinical research. Creating a new and well-recognized publication in a matter of days suddenly became a reality.

The sudden proliferation of SRs and MAs has, not unexpectedly, led to inconsistent quality, with journal editors not necessarily applying existing quality standards to submissions. A telling quote from the authors of this EBSJ study captures this deficiency: “most authors (at least in the spine literature as of 2018) seemed to equate systematic reviews with systematic literature searching.”

Looking back, the need to raise the quality of SRs and MAs was recognized early. The PRISMA statement grew out of a collaborative effort called QUOROM (QUality Of Reporting Of Meta-analyses) begun in 1996. 5 The change to the name PRISMA (Preferred Reporting Items for Systematic reviews and Meta-Analyses) arose from a wish to extend this straightforward checklist tool to SRs in addition to MAs, and PRISMA adopted the definitions used by the respected Cochrane Collaboration. 6

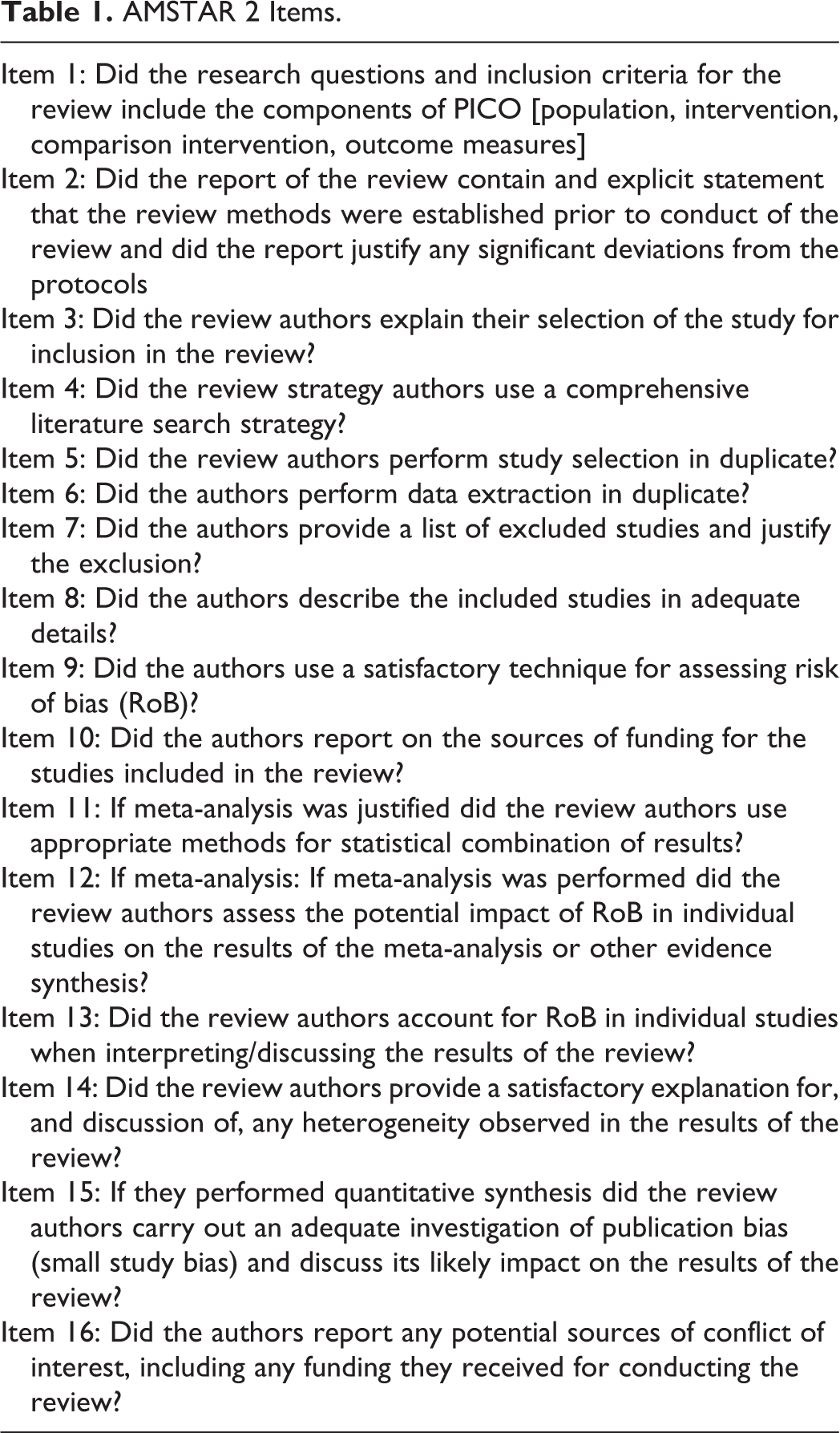

While the intent of the PRISMA group was to give authors a checklist tool for creating a quality SR or MA, the AMSTAR (A MeaSurement Tool to Assess systematic Reviews) instrument published in 2007 and its AMSTAR 2 revision published in 2017 were designed as a more user-friendly “critical appraisal tool” for SRs, with AMSTAR 2 extending to SRs that include nonrandomized studies (Table 1). 7 Although the two tools overlap somewhat, they are meant to be complementary and are thus applied sequentially to critically assess the quality of SRs and MAs. For instance, Kelly et al 8 sequentially applied the PRISMA and AMSTAR 2 tools to so-called rapid reviews (a more recent form of “accelerated evidence synthesis”) published throughout 2016 and found poor compliance with both, although the published reviews complied better with PRISMA guidelines than with AMSTAR items.

AMSTAR 2 Items.

In psychiatry, a larger study compared AMSTAR 2 with the original AMSTAR and another rating tool, ROBIS (Risk Of Bias In Systematic reviews), in SRs of psychological and pharmacologic treatments for depression. It found moderate interrater reliability for AMSTAR 2 and high concordance between AMSTAR 2 and ROBIS, but not between AMSTAR 2 and AMSTAR itself; overall, however, validity was similar across all rating tools. 9

As the science of rating SRs and MAs is still evolving, the authors of the present EBSJ study performed a thorough assessment of spine-related SRs from a single publication year (2018), applying the AMSTAR 2 criteria to evaluate how well these SRs complied with this new rating tool and to inform the wider spine community about the tool and its intent.

The authors did not wish to belittle the efforts of the authors or of the 4 historically leading spine journals and their editorial staff, but rather hoped to raise quality awareness among future authors and reviewers of SRs and MAs. The findings, with 93% of the assessed reviews rated “critically low,” will hopefully lead to improved adherence to PRISMA standards from the outset and to greater attention to the quality standards formulated in the AMSTAR 2 guidelines. Moreover, the evolving field of evaluation tools for SRs and MAs opens a new area of investigation for a new generation of spine researchers.