Abstract

The auditory alarms used in oil and chemical processing control rooms are often based on practices and knowledge that are twenty to thirty years out of date, and therefore do not embody the significant progress made more recently in this area. Because control rooms share similar alarm problems with other domains, best practice from those domains (aviation, transport, and healthcare) can be brought to bear in improving and updating control room alarms. This paper describes the processes of designing, benchmarking, and testing a series of sets of auditory alarms intended for use in control rooms by the oil and chemical processing industry. In particular, the work shows how important the localizability of alarms can be in practice, and how improved localizability can be designed into auditory alarms.

Background

On March 23rd 2005, a cloud of natural gas and petroleum exploded at the BP Texas City oil refinery in Texas City, TX. The incident killed 15 people and injured 180 (Holmstrom et al, 2005). BP’s report of the investigation attributed the accident to a direct cause, ‘heavier-than-air hydrocarbons combusting after coming into contact with an ignition source, probably a running vehicle engine…’. Nevertheless, the sequence of events which led to the explosion was similar to that in other accidents (such as that at Three Mile Island), wherein each event is not of itself catastrophic, but the cumulative effect of those individual events leads to an outcome which is far more serious (Reason, 2000).

The findings of the investigation into the accident highlighted a number of safety issues relevant across the industry and among these, not surprisingly, were a series of human factors elements. A consortium, the Center for Operator Performance (COP), was subsequently formed in order to address those issues and to improve ergonomics and human factors (and therefore safety and performance) across the oil and chemical processing industries. The COP seeks to commission projects in those areas identified as problematic as a result of the enquiry into the incident. Unsurprisingly, one of the areas highlighted was that of the auditory alarms, which were found to have contributed to the accident. Unlike Three Mile Island (Rogovin, 1980), which saw a plethora of uninterpretable and confusing alarms, in the Texas City accident there was a notable absence of an emergency alarm. In both cases, auditory alarms were therefore a problem, albeit for different reasons. Thus auditory alarms are one of the HFE areas the COP wishes to investigate and improve upon.

In 2018 the COP commissioned a project to investigate and improve control room auditory alarms. Our experience of working in other work domains suggested to us that we were likely to find problems similar to those found elsewhere, which typically include: alarms being added piecemeal so that there is little strategic and meaningful mapping of alarms to hazards; alarms that are too loud, or too quiet; alarms that are easily confused with one another; alarms that are not urgency-mapped to their hazards; alarms that are difficult for older listeners to hear; and alarms of poor acoustic quality, meaning that they are either too loud or inaudible, depending on the noise conditions, as well as being hard to localize (that is, hard for the user to locate precisely where the alarm is coming from). All of these issues can be addressed through good design and good HFE follow-up.

There is considerable auditory cognitive psychology and human factors research that can be brought to bear in addressing auditory alarm improvement. For example, we know a lot about urgency, difference, and other cognitive aspects of auditory alarm design and subsequent performance (e.g. Collett et al 2020; Edworthy et al 2011; Edworthy et al 1991; McDougall et al, 2020). There is also an important set of acoustic and psychoacoustic issues which need to be considered during the design of auditory alarms, relating to audibility (which will always be a problem in areas where the background noise varies, as it does in most work environments) and localizability. For both of these topics there is established science (Alton Everest and Pohlmann, 2015; Blauert, 1997). The hearer of the sounds is not necessarily aware of these elements, or of how they might limit or affect the nature of the resultant sound. Part of the challenge of any auditory alarm design project where an end-user is waiting to use the final deliverables is therefore to guide the selection of sounds so that it is ergonomically appropriate, rather than based purely on aesthetics.

There is no agreed set of criteria via which an auditory alarm or set of alarms can be evaluated, though there are many potential measures that are of value. We therefore favor a set of benchmarking tests including aspects such as learnability, difference, urgency, audibility, and localizability. This approach was used in the development, testing, and simulation of auditory alarms now supporting a global medical device standard (Bennett et al 2019; Edworthy et al 2017; Edworthy et al 2018; McNeer et al, 2018). With a broad set of measures, the user is able to decide which of them is important for their application. In this paper we showcase the alarms developed for the project, and also record the process of benchmarking. HF & E professionals may find those tests useful when working in similar areas.

Phase 1

In Phase 1 of the project we visited two control rooms, where we recorded many of the auditory alarms currently in use and measured the loudness, and the variability in loudness, in those rooms. We spoke to personnel (both managers and operators) about the alarms and the behavior surrounding them, and looked at alarm philosophies (documents which set out how the many hundreds of potential alarm situations are mapped to two, three, or sometimes four priorities). We also asked operators which issues they felt were important in relation to their current alarms, and which issues might be addressed if new alarms were to be designed and implemented. In addition, we conducted a survey, which was sent to all members of COP (to individual plants). It asked about potential issues with alarms and what improvements might be possible, and requested information such as the size of the control room and the number of consoles within it, as well as recordings of the alarms currently in use. We subsequently reported on the findings from this work (Edworthy et al, 2022). In summary, Phase 1 indicated that auditory alarms tended to be added on a piecemeal basis and were typically not urgency-mapped, that there were concerns about operators not hearing alarms (particularly when away from their consoles), and that the other usual issues found when looking at how auditory alarms are traditionally used in the workplace were present. In short, the alarms and their implementation were typically far from ergonomic best practice, although there were many elements of good thinking and practice to be found.

Phase 2

There are two main components to Phase 2 of the project. In the first part we carried out a lengthy, iterative process of design in order to develop a final suite of 12 sets of 4 auditory alarms. Each set consisted of a High, Medium, and Low priority alarm and an Information sound. We started off by developing 20 sets, from which the 12 were later selected. The initial 20 sets were exposed to lengthy benchmarking, testing, and modification.

Alarm design

The design of the initial 20 sets of 4 sounds is described in a previous paper (Edworthy et al, 2022). Each set consisted of a High, Medium, and Low Priority sound as well as an Information sound. All members of the research team took part in the design process. The main objectives in the design process were: to imbue the sounds with acoustic features which would make them relatively easy to localize, which was achieved by ensuring that every sound had several harmonic components in its structure (typically at least 10); to ensure that the four sounds within each set were appropriately urgency-mapped (so that the High priority was more urgent than the Medium priority, which was more urgent than the Low priority, which was more urgent than the Information sound); and to ensure that within and between the sets the sounds were appropriately different in their design so that they could be individually recognized. These design goals were later confirmed through the benchmarking.
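The harmonic-richness goal can be illustrated with a minimal synthesis sketch. It is not the design method used in the project: the fundamental frequency, the equal weighting of the harmonics, the duration, and the sample rate are all assumptions chosen only to show what a tone with at least 10 harmonic components looks like numerically.

```python
import math

def harmonic_tone(f0_hz, n_harmonics=10, duration_s=0.2, sample_rate=16000):
    """Return (harmonic frequencies, samples) for an equal-amplitude harmonic complex.

    All parameter values are illustrative assumptions, not the project's settings.
    """
    nyquist = sample_rate / 2
    # Keep only harmonics below the Nyquist frequency to avoid aliasing.
    freqs = [f0_hz * k for k in range(1, n_harmonics + 1) if f0_hz * k < nyquist]
    n_samples = round(duration_s * sample_rate)
    samples = []
    for n in range(n_samples):
        t = n / sample_rate
        value = sum(math.sin(2 * math.pi * f * t) for f in freqs)
        # Divide by the number of partials so every sample stays within [-1, 1].
        samples.append(value / len(freqs))
    return freqs, samples

# Hypothetical 400 Hz fundamental: harmonics at 400, 800, ..., 4000 Hz.
freqs, samples = harmonic_tone(400.0)
```

A real alarm would also need an amplitude envelope, a pulse pattern, and harmonic weights tuned by ear and by measurement; none of those are modeled here.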

On-line Benchmarking

A computer program was used to collect data on each of the 20 sets of alarms. Data from at least 12 participants was collected for all 20 sets. The participants were primarily students at the University of Plymouth, but some of the participants were recruited from the COP and were therefore oil and chemical industry personnel. As the data collected is nomothetic rather than idiographic in nature, students were considered an appropriate participant pool. The tests were as follows:

Urgency

Each sound within a set was compared with every other and the participant selected the more urgent. The higher the score, the more appropriately urgency-ordered were the designs.
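One way such a paired-comparison score could be computed is sketched below. The exact scoring rule used by the benchmarking program is not given here, so the rule shown (the percentage of the six pairs in which the participant's choice agrees with the intended priority order) is an assumption, as are all the names in the code.

```python
from itertools import combinations

# Intended urgency order within a set (higher rank = more urgent).
PRIORITY_RANK = {"High": 3, "Medium": 2, "Low": 1, "Information": 0}

def intended_more_urgent(a, b):
    """Return whichever of the two sounds is designed to be more urgent."""
    return a if PRIORITY_RANK[a] > PRIORITY_RANK[b] else b

def urgency_score(choices):
    """Percentage of pairs where the chosen sound matches the intended order.

    `choices` maps frozenset({a, b}) to the sound the participant judged more urgent.
    """
    pairs = list(combinations(PRIORITY_RANK, 2))  # 4 sounds -> 6 pairs
    agree = sum(
        1 for a, b in pairs
        if choices[frozenset((a, b))] == intended_more_urgent(a, b)
    )
    return 100.0 * agree / len(pairs)

# Made-up participant: every pair as intended, except Low vs Information.
choices = {frozenset(p): intended_more_urgent(*p)
           for p in combinations(PRIORITY_RANK, 2)}
choices[frozenset(("Low", "Information"))] = "Information"
score = urgency_score(choices)  # 5 of 6 pairs agree
```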

Difference

Sounds within sets were presented in pairs. Participants were required to indicate the difference between the sounds on a 1 (very similar) to 10 (very dissimilar) scale.

Learning

Participants were played each of the four sounds in the set being tested four times, for learning purposes. They were then presented with each of the sounds four times and were asked to name the sound. The higher the score the more accurate were the responses.
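The test phase described above amounts to 16 naming trials per set (four sounds, four presentations each). The sketch below shows one possible way to build the trial list and score it as a percentage correct. The randomized presentation order, the seed, and the function names are assumptions, and the responses are made up for illustration.

```python
import random

SOUNDS = ["High", "Medium", "Low", "Information"]

def make_test_trials(repeats=4, seed=0):
    """Return a shuffled list in which each sound appears `repeats` times."""
    rng = random.Random(seed)  # fixed seed only so the sketch is reproducible
    trials = SOUNDS * repeats
    rng.shuffle(trials)
    return trials

def learning_score(trials, responses):
    """Percentage of trials in which the participant named the sound correctly."""
    correct = sum(1 for played, named in zip(trials, responses) if played == named)
    return 100.0 * correct / len(trials)

trials = make_test_trials()
# Made-up participant: perfect except for a single naming error on the first trial.
responses = list(trials)
responses[0] = "Low" if trials[0] != "Low" else "Medium"
score = learning_score(trials, responses)  # 15 of 16 correct
```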

All 20 sets performed at almost ceiling level, and higher than a set of current alarms in use which was included for comparison. The data was used to help select the final 12 sets (though other factors were taken into account as well, such as acceptability and liking, which did not always correlate with good performance on the benchmarking measures) and for identifying areas where the design could be improved.

On-site testing Part 1

The first stage in moving from a completely online set of tests to something more control-room based was a series of tests carried out on a small number of participants in a training facility. The training facility was a simulated control room and the participants were all individuals with extensive experience of the oil industry, particularly within the control room.

Throughout the process of benchmarking and presenting the results of the design and benchmarking process at regular COP meetings, we received a lot of informal feedback about the sounds and how operators might respond to them. While the ‘likeability’ of the sounds is not a key issue in terms of their performance as alarms, they have to be acceptable enough for operators to at least consider the potential implementation of the sounds. We used this informal feedback on preferences for some of the sounds over others, performance in the initial benchmarking tests, plus our own observations about the sounds, to select four sets of sounds for further and extensive testing. The sets selected for further testing were Sets 4, 6, 10, and 18. In practice, few plants would require more than four different sets of alarms. The on-site testing we carried out on these sets was as follows. Eight participants were tested.

Urgency, difference, and learnability online tests

The participants each performed the three online tests using the same program as for the benchmarking tests. All four sounds in each set were tested. At the end of the tests, participants were asked to rate both the absolute urgency and the appropriateness of each of the alarms in the sets.

Localizability test

Participants sat in the middle of a set-up of 8 small loudspeakers set 3 m away from them (similar to Edworthy et al 2017, 2018). Only the High priority (HP) alarms were tested. Each was presented twice from each of the eight speakers. Participants were asked to indicate which of the eight speakers they felt the sound had emanated from. Four participants heard the sounds in 50 dB(A) noise, and four heard them in quiet. The sounds were presented at 70 dB(A).
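Responses from a speaker-identification test of this kind can be summarized both as a percentage correct and as an angular error. The sketch below assumes the eight speakers are equally spaced on a circle (45° apart) and numbered 0 to 7 in order; the actual arrangement is not specified here, and the trial data are made up.

```python
N_SPEAKERS = 8  # assumed to be equally spaced around the listener

def angular_error_deg(target, response, n=N_SPEAKERS):
    """Smallest angle between target and response speakers on the circle."""
    step = 360 / n
    diff = abs(target - response) % n
    return min(diff, n - diff) * step  # wrap around, e.g. speaker 7 is next to 0

def localization_summary(trials):
    """Return (percent correct, mean angular error in degrees).

    `trials` is a list of (target_speaker, chosen_speaker) pairs.
    """
    correct = sum(1 for target, chosen in trials if target == chosen)
    mean_error = sum(angular_error_deg(t, c) for t, c in trials) / len(trials)
    return 100.0 * correct / len(trials), mean_error

# Made-up trials: two correct, two off by one neighboring speaker.
percent_correct, mean_error = localization_summary([(0, 0), (1, 1), (2, 3), (7, 0)])
```

Reporting the angular error alongside the percentage correct distinguishes near misses (an adjacent speaker) from front-back confusions, which a percentage alone would hide.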

Audibility test

Participants heard each of the 4 HP sounds at distances of 1, 3, 5, 7, 9, and 11 meters from a single loudspeaker, at levels of 80, 70, 60, and 50 dB, and were asked to indicate how audible the sound was on a 1-5 scale (1 = inaudible, 5 = very audible/too loud). Four participants heard the sounds in quiet, and four heard them in 50 dB(A) noise.
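As a rough guide to why distance matters in such a test, the level of a point source in a free field falls by about 6 dB per doubling of distance. The sketch below applies that textbook relationship to the tested distances. It assumes the presentation level is referenced to 1 m, which is not stated here, and it ignores room reflections, so real control-room levels would generally fall off less steeply.

```python
import math

def free_field_level(level_at_ref_db, distance_m, ref_distance_m=1.0):
    """Free-field estimate: level drops by 20*log10(d / d_ref) dB with distance.

    Assumes a point source and no reflections; the 1 m reference is an assumption.
    """
    return level_at_ref_db - 20.0 * math.log10(distance_m / ref_distance_m)

DISTANCES_M = [1, 3, 5, 7, 9, 11]

# Estimated levels for a sound that measures 70 dB at the assumed 1 m reference.
levels = {d: round(free_field_level(70.0, d), 1) for d in DISTANCES_M}
```

Under these assumptions a 70 dB alarm would be close to the 50 dB(A) noise level by 11 m, which is consistent with treating the furthest distance as the most demanding condition.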

Results

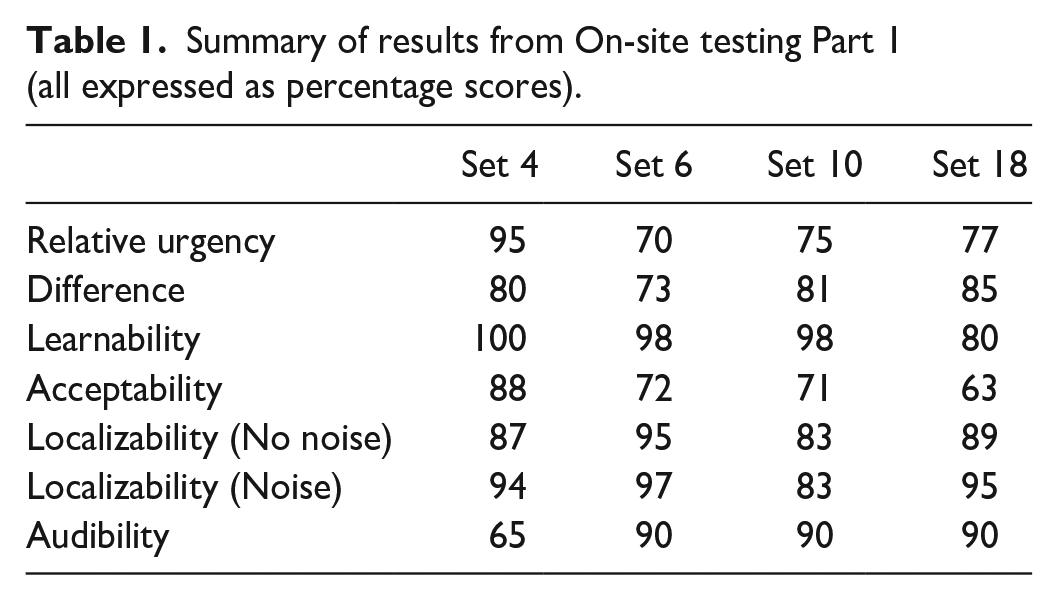

All results are expressed as percentages, converted from the raw measures taken. The audibility measure is the mean score (with the score ‘5’ being 100%) when hearing the 70 dB version of the alarm from 11 meters. This was chosen as being a meaningful measure when selecting alarms for use once the sounds had been delivered to COP, as it was the furthest distance tested and the most likely level at which alarms and speakers will be set so as to be neither too loud nor too quiet (see Table 1).

Table 1. Summary of results from On-site testing Part 1 (all expressed as percentage scores)
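The conversion of audibility ratings to a percentage can be sketched as follows. The text states only that a score of 5 corresponds to 100%; the linear scaling used here (mean rating divided by 5) is an assumption, and the ratings are made up.

```python
def audibility_percent(ratings, max_rating=5):
    """Convert 1-5 audibility ratings to a percentage of the maximum rating.

    Assumes a simple linear scaling in which a mean rating of 5 gives 100%.
    """
    mean_rating = sum(ratings) / len(ratings)
    return 100.0 * mean_rating / max_rating

# Made-up ratings from four participants for one alarm at 70 dB and 11 meters.
example_percent = audibility_percent([4, 5, 4, 3])  # mean rating 4.0
```

Note that under this scaling the lowest possible rating (1 = inaudible) maps to 20% rather than 0%, which is worth keeping in mind when comparing audibility with the other percentage scores.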

Brief summary of results

The data obtained had two purposes. The first was to check the performance of the sounds and to use it to make further improvements on them, and the second was to grow a database on sound performance for the eventual users of the sound, so that they might select sounds and sound sets suitable for their environment. The sounds performed well on most of the measures, and the differences indicate the relative strengths and weaknesses of the different sounds and sound sets. Some of the sounds were subject to further development in order to improve on lower scores. For example, the relative urgency of Set 6 was improved by having larger differences in pitch and speed across the three different levels of urgency, and Set 18 was adapted in order to sound more appealing and appropriate. These changes were taken forward into the On-site testing Part 2.

On-site testing Part 2

Localizability of the alarm sounds when the operator is away from the console is a major concern for both managers and operators. Some of the alarms currently in use are very hard to localize, and there are instances where the operator cannot even tell whether or not it is their own alarm sounding when they are seated at the console. There are a number of reasons for this. Some of the alarm sounds are very poor acoustically, relying on one or two harmonics (which are often overly loud) which means that they will be very hard to localize as localizability improves with more, and broader band, harmonics (Blauert, 1997; Vaillancourt et al, 2013). Additionally, speakers are often to be found in unergonomic locations relative to the operator, for example tucked in the back of the console furniture, which serves to muffle and misdirect the sound.

Operators are keen to be able to localize their console when they are elsewhere in the control room, or even outside of the control room, so that they can quickly return should an emergency occur. One solution is to use earpieces, so that the alarm is delivered straight to the operator’s ear wherever they might be, rather than to make demands of the alarm signal itself, but it seems that operators do not want to do this. Hence improving the localizability of the new alarm sounds relative to the old was a key challenge. We designed the alarms so that they consisted of many harmonics with a good spread across the acoustic spectrum, which would predictably be easier to localize than most of the existing alarms. We tested localizability of the HP alarms in Sets 4, 6, 10, and 18 in a control room.

Procedure

A large (80’ x 80’) control room with 15 consoles spread over 5 pods of unequal size was used. The procedure took place over two days. On Day 1 the two experimenters, one sedentary and the other mobile, sat in the middle of the room. On Day 2 they sat to one side of the room. Day 2 was particularly noisy as some of the operators were assembling and then testing a running machine nearby. The sedentary experimenter sat at a table with the participant and with a plan of the room on the table, while the mobile experimenter had the task of walking around the room and placing the speaker at a range of locations unseen by the participant. At least six locations spread around the room were chosen, with additional locations being selected if it was felt necessary (for example, if the participant had difficulty localizing the alarm sounds).

During each trial, the mobile experimenter targeted one of the locations and placed a Bluetooth speaker (in this case a JBL Flip 5 speaker) at the location. The target was always a specific console, and the speaker was always placed on the floor. The participant would not see the mobile experimenter doing this, and the mobile experimenter would take a circuitous route back from the chosen location so as to not give the location away. Once the mobile experimenter had returned to the desk with the sedentary experimenter and the participant, the sedentary experimenter would trigger the alarm sound from a laptop. The participant was asked to point and describe the location of the alarm, or to indicate on the plan where the sound had come from. The participant was asked to identify the precise console of origin or, if unable to do so, the pod from which the alarm had sounded. The participant was allowed to move their head, and to stand and move around (but not so much as to be able to see the speaker) if they so wished.

The alarms were tested on five participants, in at least six locations each. A few of the other alarms were additionally tested because we were interested in the performance of some of the more unusual designs (for example, a sound which moved across two speakers, and one with an echo). These sounds were given such design characteristics for ease of identification, but their potential effects on localizability were somewhat unpredictable.

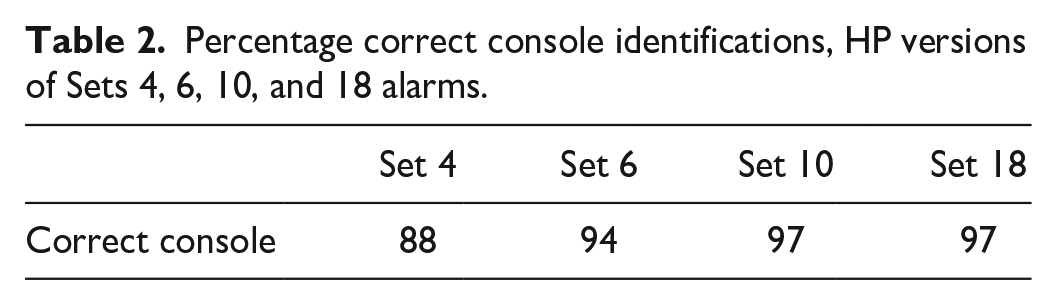

Results

Almost all of the alarms were identified accurately to the precise console of origin, with very few errors. Those errors which did occur tended to be in the same direction as the actual location, but in front of or beyond that location. In a very few cases only the pod, and not the actual console, could be identified. Table 2 shows the percentage correct responses in terms of console identified correctly.

Table 2. Percentage correct console identifications, HP versions of Sets 4, 6, 10, and 18 alarms.
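Because participants could answer at either console or pod level, responses can be scored at both levels. The sketch below is one way to do this. The pod sizes and numbering are a hypothetical layout (the paper states only that 15 consoles were spread over 5 pods of unequal size), and the trials are made up, not the study's data.

```python
# Hypothetical layout: 15 consoles numbered 1-15, grouped into 5 unequal pods.
POD_SIZES = {"A": 2, "B": 3, "C": 4, "D": 3, "E": 3}

POD_OF_CONSOLE = {}
console_id = 1
for pod, size in POD_SIZES.items():
    for _ in range(size):
        POD_OF_CONSOLE[console_id] = pod
        console_id += 1

def score_trials(trials):
    """Return (percent console-correct, percent pod-correct).

    Each trial is (target_console, response), where the response is either a
    console number (int) or, if only the pod could be identified, a pod letter.
    """
    console_hits = 0
    pod_hits = 0
    for target, response in trials:
        if response == target:
            console_hits += 1
        response_pod = response if isinstance(response, str) else POD_OF_CONSOLE[response]
        if response_pod == POD_OF_CONSOLE[target]:
            pod_hits += 1
    n = len(trials)
    return 100.0 * console_hits / n, 100.0 * pod_hits / n

# Made-up trials: three exact, one pod-only, one wrong pod, one wrong console in the right pod.
console_pct, pod_pct = score_trials(
    [(1, 1), (4, 4), (7, "C"), (10, 9), (14, 14), (15, 13)]
)
```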

We also tested a participant with known and substantial hearing loss, who had issues in identifying their own console’s alarm both when away from the console and when at it. This participant performed at almost 100% accuracy, similar to other participants, aside from being a little slower and needing to hear the alarm more than once.

Deliverables

The COP are keen to have deliverables from the project which will be of use to those making decisions about upgrading and updating the alarm sounds in their control rooms in the future, now that the project is complete. A series of technical reports have described the data and the findings, as well as the development and design of the alarm sound sets and their subsequent testing and refinement. The alarm sounds themselves are being delivered as 12 sets of 4 sounds, each with a High, Medium, and Low Priority alarm sound and an Information sound. The COP will be making these sets available to their members, and one or two sites have already started using some of the sets.

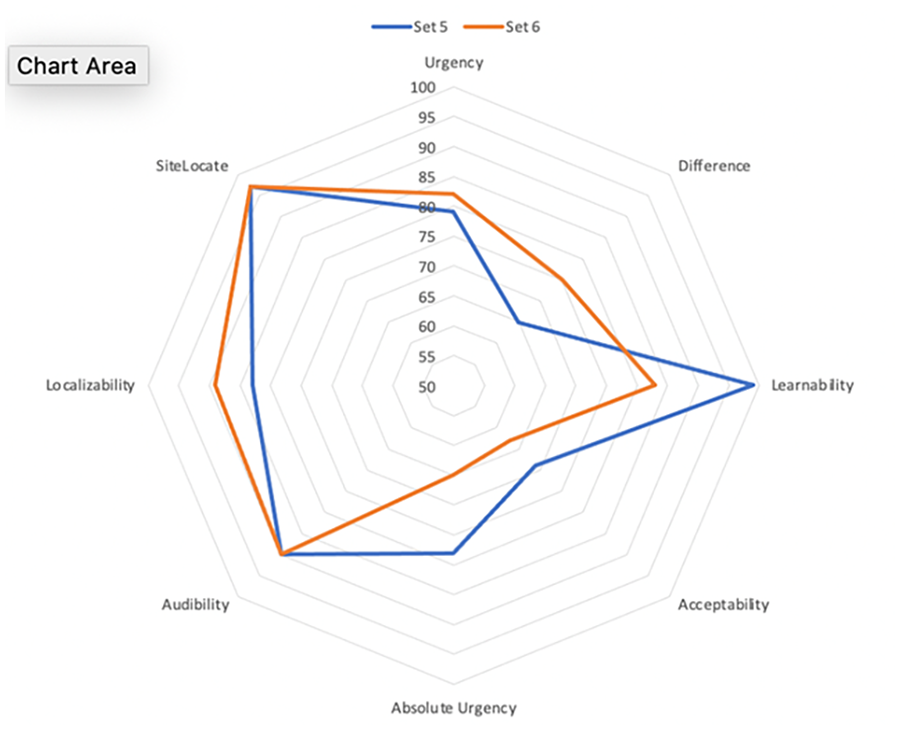

Additional deliverables focus on guidance and practical information for decision-makers about how to select alarms for the specific control room in question, and on providing the information needed to make those decisions in an ergonomic and understandable form. A user guide has been developed which accompanies the technical report and the provision of the sounds themselves. This user guide provides information as to how the individual metrics (urgency, difference, learnability, acceptability, absolute urgency, audibility, localizability, and on-site localizability) were derived, as well as giving their values for each alarm set. The collated information for each set of alarms or, in the case of some of the metrics, for individual alarms, is shown as radar charts in the user guide. Figure 1 shows sample radar charts for Sets 10 and 18, now renumbered so that the alarm sets range from 1-12 rather than the original 1-20.

Figure 1. Radar charts for Sets 10, 18 (now 5, 6).

Practical Takeaways

Sets of benchmarked and site-tested auditory alarms are now available for use in oil and chemical processing control rooms.

These alarm sets have improved urgency mapping, differentiability, and learnability and are based on best practice.

The localizability of the alarms is a key issue, and the high priority versions of the most favored alarm sets have been demonstrated to be almost 100% localizable, to the correct console, in a large control room.

Easy-to-use guidance is available for end-users to interpret each of the tested variables, to enable effective selection of alarms and alarm sets.

Acknowledgements

This project was supported by the Center for Operator Performance, Dayton, OH, USA.